Abstract

Testbeds are widely used in experiential learning, providing practical assessments and bridging classroom material with real-world applications. However, manually managing and provisioning student lab environments consumes significant preparation time for instructors. The growing demand for advanced technical skills, such as network administration and cybersecurity, is leading to larger class sizes. This stresses testbed resources and necessitates continuous design updates. To address these challenges, we designed an efficient Environment Management Platform (EMP). The EMP is composed of a set of 4 Command Line Interface scripts and a Web Interface for secure administration and bulk user operations. Based on our testing, the EMP significantly reduces setup time for student virtualized lab environments. Through a cybersecurity learning environment case study, we found that setup is completed in 15 s for each student, a 12.8-fold reduction compared to manual provisioning. When considering a class of 20 students, the EMP realizes a substantial saving of 62 min in system configuration time. Additionally, the software-based management and provisioning process ensures the accurate realization of lab environments, eliminating the errors commonly associated with manual configuration. This platform is applicable to many educational domains that rely on virtual machines for experiential learning.

Keywords:

cybersecurity; education; experiential learning; automation; testbed; cyber range; virtualization

1. Introduction

Since the late 1990s and early 2000s, testbeds and cyber ranges have been popular training platforms in academic and commercial applications [1,2,3,4,5,6,7,8,9,10,11,12,13,14,15]. One such platform, the Real-Time Immersive Network Simulation Environment for Network Security Exercises (RINSE), was developed to support large-scale security exercises for situations involving Denial of Service (DoS) attacks [16]. Since RINSE, testbeds have advanced, offering dynamic exercises and adaptive learning. The KYPO platform exemplifies these achievements, providing a unique learning experience based on the student’s learning needs [10,11,12]. In addition to educational applications, testbeds are a valuable tool in data collection and model training. An early example is the Lincoln Adaptable Real-Time Information Assurance Testbed (LARIAT), which evaluated intrusion detection models in 2002 [17]. Today, the application of testbeds has expanded to Industrial Control Systems (ICS) and the Internet of Things (IoT) [18,19,20,21,22,23,24,25]. Both fields rely on cyber-physical systems, a unique blend of physical processes and the digital world. As a result, organizations are using cyber ranges to retrain engineers and IT staff to adapt to the new paradigms and cybersecurity threats.

Cyber threats and threat actors continually evolve and adapt to the ever-changing technology landscape, placing a heavy burden on defense infrastructure and amplifying the demand for skilled personnel. The International Information System Security Certification Consortium (ISC)² reports a global shortfall of roughly 4 million cybersecurity professionals despite a 9% increase in the cybersecurity workforce in 2022 [26]. This gap appears unlikely to diminish, as the U.S. Bureau of Labor Statistics forecasts a 32% growth in cybersecurity roles, such as cybersecurity analysts, over the next decade [27]. Simultaneously, the escalating demand for cybersecurity expertise is fueling enrollment in cybersecurity degree programs, necessitating an expansion of resources for these educational initiatives. These resources include cyber ranges, testbeds, and practice environments providing critical hands-on experience. Increased enrollment leads to a greater demand for the provisioning and management of network resources. To tackle these challenges, we have explored automation solutions for the provisioning and management of environment resources.

This paper outlines the Environment Management Platform (EMP), a system designed to streamline the process of provisioning, maintaining, and cleaning up student learning environments in a virtualized cyber range environment. The EMP includes both a Command Line Interface (CLI) and a Web Interface (WI), allowing instructors to define, deploy, and manage complex cybersecurity lab setups with minimal overhead. To guide the development process, we set two research objectives. Our first research objective was to design an automation framework that could accommodate a diverse set of educational use cases. Many existing provisioning platforms are built around narrow sets of configuration files or rigid deployment assumptions. Our goal was to abstract away this complexity, resulting in four core operations: Template, Clone, Revert, and Purge. These four phases encapsulate a key lifecycle in managing the student lab environment. The Template operation prepares a virtual machine for cloning by saving it in an optimized format, minimizing usage of disk space while ensuring consistency when generating multiple instances. The Clone operation automatically generates individualized virtual machines for students, including user accounts, IP addresses, and isolated network configurations. The Revert operation enables instructors to reset lab machines to their original snapshot, encouraging experimentation and simplifying recovery from errors. Lastly, the Purge operation efficiently cleans up virtual machines, users, and associated resources, preparing the platform for reuse in future exercises and classes. These tools were engineered for both individual and bulk operations, allowing instructors to rapidly deploy environments.

Our second major objective was to empirically evaluate the operational performance of the EMP and assess its potential to improve educational delivery at scale. Using classroom and cybersecurity boot camp deployments, we benchmarked key operations, such as VM cloning, snapshot restoration, and environment cleanup, against performing these operations manually. The results demonstrated that our automation solution significantly outperforms manual workflows across all measured tasks, achieving up to a 12.84-fold speedup in cloning and a 100-fold improvement in snapshot restoration. In high-enrollment scenarios, these gains translate into several hours of instructor time saved per session, along with near elimination of setup errors due to misconfiguration.

The novelty of the EMP lies in its ability to unify system provisioning, user access control, and network isolation into a modular, instructor-facing toolchain that operates at scale with minimal administrative overhead. Rather than developing a new virtualization backend, EMP focuses on innovating the workflow that bridges infrastructure automation and educational usability. Unlike more complex cyber range platforms that require extensive orchestration layers, large infrastructure footprints, or deep technical knowledge, EMP is designed to be deployed and operated by instructors without prior systems administration experience. EMP’s key contribution is the design of a streamlined, script-driven process for bulk virtual machine provisioning that abstracts away the complexity of student isolation, network bridging, and storage templating. The system provides both a command-line interface and a web-based front end, enabling instructors to perform common actions such as deploying full lab environments, resetting student workspaces, or restoring configurations from snapshots with a single action. These features are delivered through an open-source, reproducible platform that can be adapted for different course structures or scaled to support dozens of students with little additional overhead.

The remainder of this paper is organized as follows. Section 2 outlines the broader testbed development lifecycle and situates our work within existing research on education cyber range deployment systems. Section 3 provides a detailed architectural overview of the Environment Management Platform, including descriptions and rationale for each of the core components: Template, Clone, Revert, and Purge. Section 4 describes the software tools and technologies used to implement the EMP along with the underlying virtualization infrastructure and integration with the Proxmox REST API. Section 5 presents a case study from an undergraduate cybersecurity course and boot camp, where the EMP was deployed in an academic setting. Section 6 presents and discusses the timing measurements obtained for the EMP operations. Finally, Section 7 concludes with a summary of our findings, the educational impact of the platform, and directions for future research and development.

2. Cyber Range Development Cycle

To deploy a successful cyber range, six components need to be developed: (1) Scenario, (2) Monitoring, (3) Learning, (4) Environment, (5) Teaming, and (6) Management [1]. Scenarios refer to the challenges students will face in the cyber range. Within a cybersecurity setting, potential challenges include deploying defenses, identifying cyberattacks, and responding to cyber incidents. Recently, research has focused on adaptive learning, which deploys challenges according to the skill level and learning of the student. For example, the Alpaca project introduced an AI engine to create vulnerability lattices, or sequences of vulnerabilities, for students to address [28].

Next, monitoring in cybersecurity environments refers specifically to collecting and analyzing network traffic generated by student machines to observe their activity during exercises. This is distinct from infrastructure monitoring and is instead aimed at tracking how students interact with the cyber range, such as conducting scans, deploying services, or launching simulated attacks. Packet capture tools like tcpdump and Wireshark are commonly used to monitor this traffic. The collected data is aggregated and displayed to the instructor through a dashboard, providing visibility into student actions, enabling assessment of learning objectives, and helping to identify misconfigurations or unintended behavior in real time. Learning pertains to tools that assess student progress as they tackle challenges within the environment. Assessments range from multiple-choice and short-answer questions to hidden flags within the network. Advanced cyber ranges incorporate scoring systems that monitor the network for desired configuration changes. In addition to individual experiences, cyber ranges may be used during team engagements. Team exercises in cybersecurity frequently feature an offense–defense style, where one group launches attacks (offense or red team) against the other (defense or blue team). Research into these assessments is grouped under Teaming.

Environments concern the software and hardware that underpin the cyber range infrastructure. Nowadays, virtualization and cloud platforms are the most commonly deployed technologies. Examples of hypervisor software include VMware ESXi and the Proxmox VE [29,30,31,32,33]. For our case study, we deployed our cyber range with Proxmox VE 7.4-17. OpenStack is an alternative to VMware and Proxmox, used in the popular KYPO cyber range and a cyber range developed by Luchian et al. [10,11,12,34]. While KYPO serves as a comprehensive solution for cybersecurity training at scale, it introduces significant infrastructure complexity, requiring tight integration of multiple subsystems and dedicated support for orchestration, monitoring, and scoring. In contrast, the EMP was designed with a narrower but highly practical focus: to streamline and automate the provisioning, management, and reset of virtualized lab environments for instructors and students. The EMP avoids dependency on complex orchestration components by exposing a simple CLI and Web Interface that interact directly with the underlying virtualization infrastructure. Whereas KYPO addresses learning analytics, the EMP addresses the preparatory and operational burdens faced by educators running hands-on cybersecurity labs. As such, the two platforms serve complementary purposes, with EMP providing lightweight, fast deployment capabilities and KYPO offering a more structured training experience.

Lastly, research into management tools explores roles, interfaces, command and control, and resources for cyber ranges. Among the six categories, our research aligns most closely with this category, and we will benchmark our work against other studies in this category. In most cyber ranges, infrastructure supports API calls for creating users, provisioning virtual machines, and configuring network interfaces. When interacting with these APIs, researchers may use programming languages, such as Python and Ruby, or management software, such as Ansible, Chef, Puppet, and SaltStack [35]. When interacting with these tools, administrators supply their configuration requirements via a file, typically written in a markup or data serialization language, such as XML [36,37,38], YAML [7], or JSON [39]. For instance, the Cyber Range Instantiation System (CyRIS) employs YAML files to detail the composition and content of the desired cyber range [7]. Alternatively, developers may define their own description language when using programming languages to interact with the cyber range API. The Virtual Cyber Security Testing Capability (VCSTC) adopted a novel description language for specifying the required environment and challenge machines [40]. In our implementation, users interact with the cyber range via the command line or through a configuration file.

In addition to exploring management infrastructure, several studies examined the performance of their management tools. Herold et al. designed a management tool, INSALATA, for replicating existing network infrastructure [41]. During operation, INSALATA scans and observes the network and creates a copy or updates an existing copy based on its observations. During their testing, they found that the tool recreated a virtualized cyber range in 45 min and 32 s. Yasuda et al. developed Alfons, a mimetic network environment construction system for deploying networks for cybersecurity experiments [31]. When evaluating the tool, they found they could create 21 instances of 5 servers in 6754 s. Lastly, White et al. used Netbed’s batch system to deploy 20 servers, with an average performance of 4.3 min for provisioning [42]. In comparison, our tool provisions a 6-machine network in roughly 15 s, a vast improvement over existing environment management tools.

Overall, this work improves on cyber range management literature by introducing the Environment Management Platform. Interaction with the EMP is accomplished through a set of four Command Line Interface scripts and a Web Interface, streamlining the process of selecting VMs to manage when compared to existing methods, such as through markup languages (XML, YAML, and JSON). Additionally, the EMP provisions student environments faster compared to its contemporaries while also offering an expanded toolkit, such as the ability to template VMs, revert to a snapshot, and purge VMs.

3. Environment Management Platform Architecture

The EMP is an interface between the instructor and a cyber range or Virtual Environment (VE). The platform offers two methods of interaction: Command Line Interface Scripts and a Web Interface. Compared to management through pre-existing cyber range interfaces or CLI, the EMP CLI dramatically reduces the number of steps for operations. The EMP CLI also offers bulk user creation and management. Additionally, the EMP WI provides a streamlined interface based on a defined lab environment configuration.

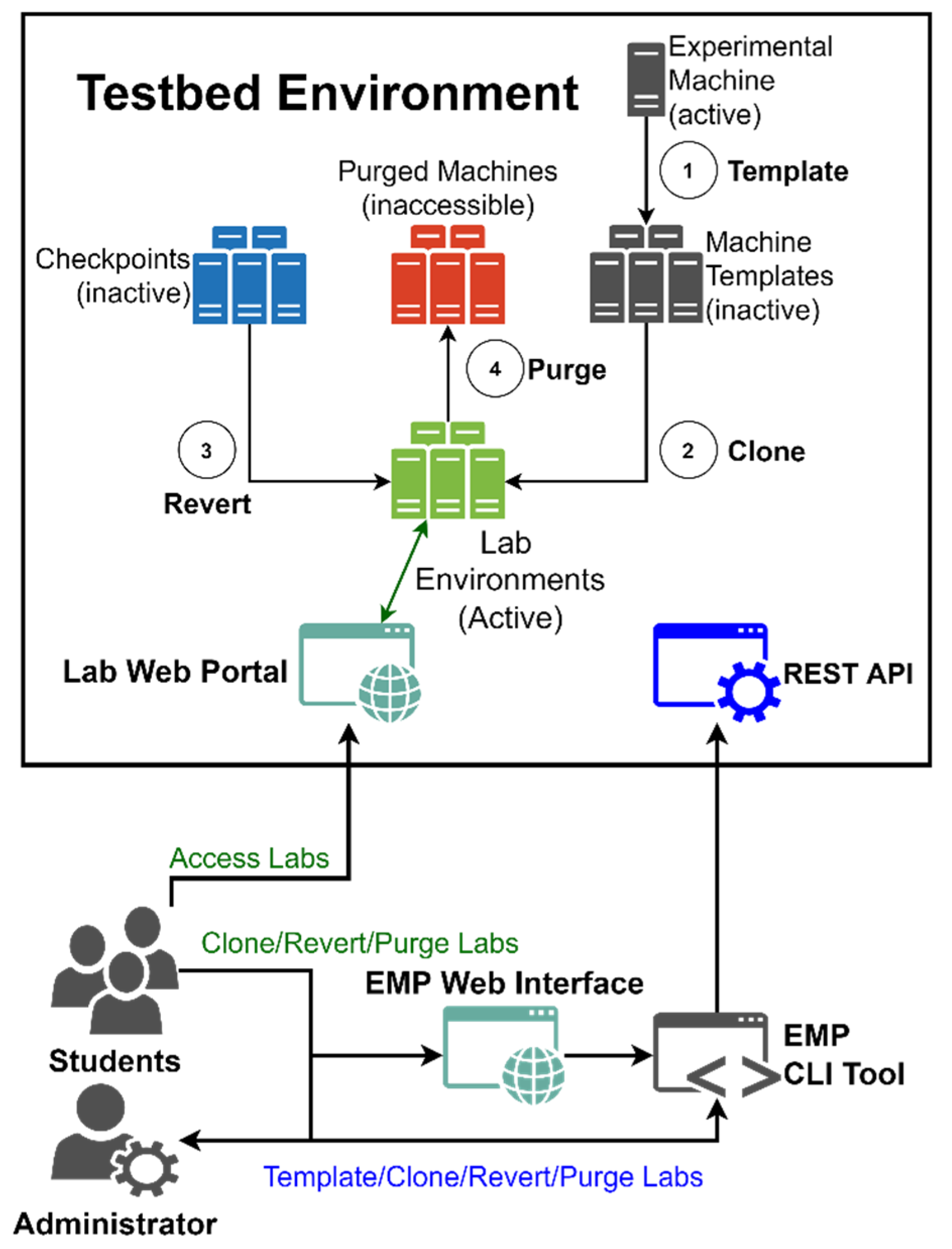

Instructors interact with the EMP through a Command Line Interface (CLI) script or Web Interface (WI). The CLI script handles secure administration of the cyber range for single-user and bulk-user operations. Alternatively, the WI offers a user-friendly interface for working with pre-defined environment configurations. A diagram of the EMP is presented in Figure 1. The instructor creates and updates virtual machines for class exercises when preparing for an upcoming semester. After finalizing the VM design, the instructor will generate a template via the Template command. Templates represent virtual machine hardware and storage configurations and are copied faster than an active VM. Next, student lab environments are spawned through the Clone command, copying the desired VM templates. If an initial VM snapshot is created, it may be accessed via the Revert command. Lastly, the Purge command deletes the assigned VMs when the semester ends.

Figure 1.

Design flow of the Environment Management Platform. Administrators interact with the cyber range environment through a Command Line Interface Script, which allows them to (1) generate virtual machine templates, (2) clone virtual machines, (3) revert virtual machines to a snapshot, and (4) purge virtual machines. Students have limited access to these functions (2, 3, and 4) through a Web Interface. Operations are executed through commands to the REST API exposed by the cyber range environment. Students access virtual machines via a lab web portal.

The core features of the EMP are Template (Section 3.1), Clone (Section 3.2), Revert (Section 3.3), Purge (Section 3.4), and the Web Interface (Section 3.5), which we will now explore in more detail.

3.1. Template Script

The Template script generates a virtual machine template based on an existing VM. Within the cyber range, a template represents the saved state and configuration of a VM and serves as the basis for creating a copy. This is an essential step during cyber range deployment and maintenance, as making a copy of a template is faster than copying an active VM. For example, cloning a template may take seconds compared to hours when cloning an active VM. Existing solutions offer mechanisms for converting VMs into templates on a per-machine basis. Our implementation improves this by allowing the instructor to select a set of VMs.

An additional concern for our Template function was to allow for the removal of network devices from the templated VM. Each network device must attach to a unique Linux Bridge to isolate student lab environments. Since existing network devices are copied during the cloning process, templates should only retain their own devices if destined for the original network. When designing the initial environment and challenges, the instructor typically tests Internet and intermachine connectivity, requiring pre-existing network devices. Under these circumstances, the instructor must remove the network device before generating their templates. To address this issue, the Template script can remove all network devices before conversion with the -r flag.
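As an illustrative sketch of the behavior described above (not the EMP source code), the following shows how a Template-style command line might accept a set of VM IDs together with the -r flag, and how the network devices of a VM could be identified before conversion. The positional argument `vmids`, the long option `--remove-network`, and the helper names are hypothetical; the `net0`, `net1`, ... key naming follows the convention used in Proxmox VE virtual machine configurations.

```python
import argparse
import re

# Network devices appear in a VM configuration under keys such as net0, net1, ...
NET_KEY = re.compile(r"^net\d+$")


def network_device_keys(vm_config):
    """Return the network device keys found in a VM configuration mapping."""
    return sorted(key for key in vm_config if NET_KEY.match(key))


def build_parser():
    """Build a hypothetical command-line parser for a Template-style script."""
    parser = argparse.ArgumentParser(description="Convert VMs into templates")
    # Hypothetical positional argument: one or more VM IDs to convert.
    parser.add_argument("vmids", nargs="+", type=int,
                        help="IDs of the VMs to convert into templates")
    # The -r flag is described in the text; the long name is an assumption.
    parser.add_argument("-r", "--remove-network", action="store_true",
                        help="remove all network devices before conversion")
    return parser


# Example: select two VMs and request removal of their network devices.
args = build_parser().parse_args(["-r", "101", "102"])
sample_config = {
    "name": "web-server",
    "net0": "virtio=AA:BB:CC:DD:EE:01,bridge=vmbr0",
    "net1": "virtio=AA:BB:CC:DD:EE:02,bridge=vmbr1",
    "scsi0": "local-lvm:vm-101-disk-0,size=16G",
}
if args.remove_network:
    print(network_device_keys(sample_config))
```

In an actual deployment, the keys returned by `network_device_keys` would be removed through the cyber range API before the VM is converted into a template.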

3.2. Clone Script

Our Clone script represents the most significant deviation from existing cyber range management solutions. Existing clone operations only create identical copies of VMs or templates. We expanded this functionality by adding the ability to create users and manage the network configuration of the target machines. Additionally, our Clone script was designed to streamline both the system administration and instructor-specific VM configuration tasks typically required to prepare student lab environments. Recognizing that these responsibilities often span across administrative roles, the Clone function is purposefully structured to serve both the system administrator, who manages infrastructure setup and access control, and the instructor, who prepares the VM environments for educational use. Arguments for this feature include the student’s username, the machines to be cloned, whether a new Linux Bridge should be created, and IP addressing. Clone also allows an instructor to take an initial snapshot after completing the provisioning process. An itemized list of core arguments is presented in Table 1.

Table 1.

Virtual machine features.

Basic features of the Clone script include clone-begin-id, clone-type, snapshot, and start-clone. During maintenance, we found it was important to ensure that all machines related to a particular environment appeared next to each other. Clone-begin-id indicates the starting ID for the first cloned VM. Each subsequent clone is given an ID incremented by 1. In this way, new user environments are created with unique IDs and appear together when viewed in the pool of VMs. Next, clone-type determines whether the clone will be full or linked. Full clones are complete copies of the template disk, which is a lengthy and intensive operation. In most cases, we recommend using a linked clone, which maintains a list of changes compared to the template disk. Snapshot creates an initial snapshot after provisioning the VM. This is a helpful feature if a student needs to correct a mistake or would like to restart an exercise. The instructor may revert to the snapshot in the cyber range or through the Revert script. Lastly, start-clone designates whether a VM should start on cyber range restart and immediately after being cloned.
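The ID assignment and clone-type selection described above can be sketched as follows. This is a minimal illustration rather than the EMP implementation: the function name and the shape of the returned records are hypothetical, and the `newid` and `full` field names are chosen to mirror the parameters of the Proxmox VE clone endpoint.

```python
def plan_clones(template_ids, clone_begin_id, clone_type="linked"):
    """Assign sequential IDs to the clones of the given templates.

    The first clone receives clone_begin_id and each following clone
    receives the previous ID plus one, so that the VMs of one student
    environment appear next to each other in the VM pool.
    """
    if clone_type not in ("linked", "full"):
        raise ValueError("clone_type must be 'linked' or 'full'")
    return [
        {
            "template": template_id,
            "newid": clone_begin_id + offset,
            # 1 requests a full copy of the disk, 0 a linked clone.
            "full": int(clone_type == "full"),
        }
        for offset, template_id in enumerate(template_ids)
    ]


# Example: three templates cloned as linked clones starting at ID 200.
for entry in plan_clones([9000, 9001, 9002], clone_begin_id=200):
    print(entry)
```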

User-centric features include user, password, group, and role, presented in Table 1. When provisioning student environments, the instructor can provide a username and password associated with each student. If a password is not provided, the script will automatically generate a random string to be assigned to the provided user. Additionally, context such as full name and email may be included to provide documentation regarding the user. Groups allow an administrator to organize students, such as by class, to understand which environment configuration should be assigned to the user. The role flag enables the instructor to assign a role to their students. Depending on class requirements, the instructor may wish to provide students with basic or elevated privileges for their environments. For instance, students may need to adjust the network interfaces or may want to take snapshots. These elevated permissions are associated with the administrator role, but new roles with custom permissions can be created.
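The fallback to a randomly generated password can be illustrated with Python's `secrets` module. This is a sketch under stated assumptions: the function name, the default length of 16 characters, and the alphanumeric alphabet are hypothetical choices, not details taken from the EMP.

```python
import secrets
import string

# Hypothetical alphabet: upper- and lower-case letters plus digits.
ALPHABET = string.ascii_letters + string.digits


def resolve_password(password=None, length=16):
    """Return the supplied password, or a random string if none was given."""
    if password:
        return password
    # secrets draws from a cryptographically secure random source.
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


# Example: one student with a supplied password, one without.
print(resolve_password("S3cure-Example"))
print(len(resolve_password()))
```

Using `secrets` rather than `random` is a deliberate choice here, since the generated string protects access to a student environment.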

Network administration is accomplished via the create-bridge, bridge-subnet, and add-bridged-vms options. As noted in our Template script discussion, student lab environments are isolated through assigned Linux Bridges. A new bridge is generated and indicated as belonging to the specified user by setting create-bridge. Depending on the cyber range, a subnet range can be assigned to the newly generated bridge through bridge-subnet, although this is primarily for documentation purposes. VMs can be assigned to the student’s bridge through the add-bridged-vms option, in which the instructor indicates the VM templates that should have a new network interface. These three options allow us to create an internal network for each student lab environment.

We supported three methods, as shown in Table 1, for IP address assignment: (1) cloud-init-static, (2) dhcp-static, and (3) dynamic DHCP assignment through dhcp-begin and dhcp-end. These methods are specific to two technologies in our implementation: Qemu-Guest-Agent and pfSense’s DHCP services. Cloud-init-static and dhcp-static are practical tools for assigning IP addresses to machines on the same network bridge as the pfSense firewall. Static IP addresses are beneficial for ensuring consistent addressing for student environments. Due to the limitations of the Qemu-Guest-Agent, cloud-init-static may only be used with a Unix-based OS. However, cloud-init-static is advantageous when the VM and firewall reside on different network bridges. For circumstances where a dynamic address is acceptable, dhcp-begin and dhcp-end allow the instructor to identify a range of addresses supported by the firewall.
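As a hedged illustration of how a dhcp-begin and dhcp-end pair might be checked before it is sent to the firewall, the sketch below validates the range against a bridge subnet using Python's `ipaddress` module. The function name and the validation rules are assumptions; the text states that bridge-subnet is primarily used for documentation, so this check is an optional extra rather than documented EMP behavior.

```python
import ipaddress


def dhcp_pool_size(bridge_subnet, dhcp_begin, dhcp_end):
    """Validate a DHCP range against a subnet and return its size."""
    network = ipaddress.ip_network(bridge_subnet)
    begin = ipaddress.ip_address(dhcp_begin)
    end = ipaddress.ip_address(dhcp_end)
    if begin not in network or end not in network:
        raise ValueError("DHCP range must lie inside the bridge subnet")
    if begin > end:
        raise ValueError("dhcp-begin must not be greater than dhcp-end")
    # Both endpoints are included in the pool.
    return int(end) - int(begin) + 1


# Example: a pool of 51 addresses inside a /24 student network.
print(dhcp_pool_size("10.0.5.0/24", "10.0.5.100", "10.0.5.150"))
```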

3.3. Revert Script

During course administration, we found it was beneficial to have a simple mechanism for resetting student machines to their initial states. In instances where students made mistakes, it was easy for them to revert to a clean state, encouraging experimentation and exploration. Similarly, students could restart exercises for extra practice. Using the Revert script requires an existing snapshot created through the Clone script or the cyber range interface.
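A bulk revert can be sketched as a loop over the VMs of one student environment. The example below is illustrative only: the function names are hypothetical, the `post` callable stands in for whichever HTTP client issues the request, and the path layout follows the snapshot rollback endpoint of the Proxmox VE REST API (relative to the API base URL).

```python
def rollback_path(node, vmid, snapname):
    """Build the REST path that rolls a VM back to a named snapshot."""
    return f"/nodes/{node}/qemu/{vmid}/snapshot/{snapname}/rollback"


def revert_environment(post, node, vmids, snapname):
    """Request a rollback for every VM in an environment.

    `post` is any callable that sends a POST request to the given path;
    it is injected so the logic can be exercised without a live server.
    """
    paths = [rollback_path(node, vmid, snapname) for vmid in vmids]
    for path in paths:
        post(path)
    return paths


# Example: record the requests instead of sending them.
sent = []
revert_environment(sent.append, node="pve", vmids=[200, 201], snapname="initial")
print(sent)
```

Injecting the request function keeps the sketch independent of any particular client library and makes a dry run straightforward.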

3.4. Purge Script

Lastly, Purge is used to clean the cyber range after learning has been completed. This can be applied to individual student environments or all student environments. Additionally, the tool can remove selected users, network bridges, and virtual machines from the environment.

3.5. Web Interface

The Web Interface is intended for fast deployment of pre-configured network architectures. Unlike the EMP CLI, students directly use the WI to clone, revert, or purge their lab environment. User management is controlled through a sign-up portal with a registration key. Before an activity, the instructor may generate an account for each student. After creating user accounts, the registration page may be turned off. Alternatively, if the number of participants is not known, the registration key is provided to the students.

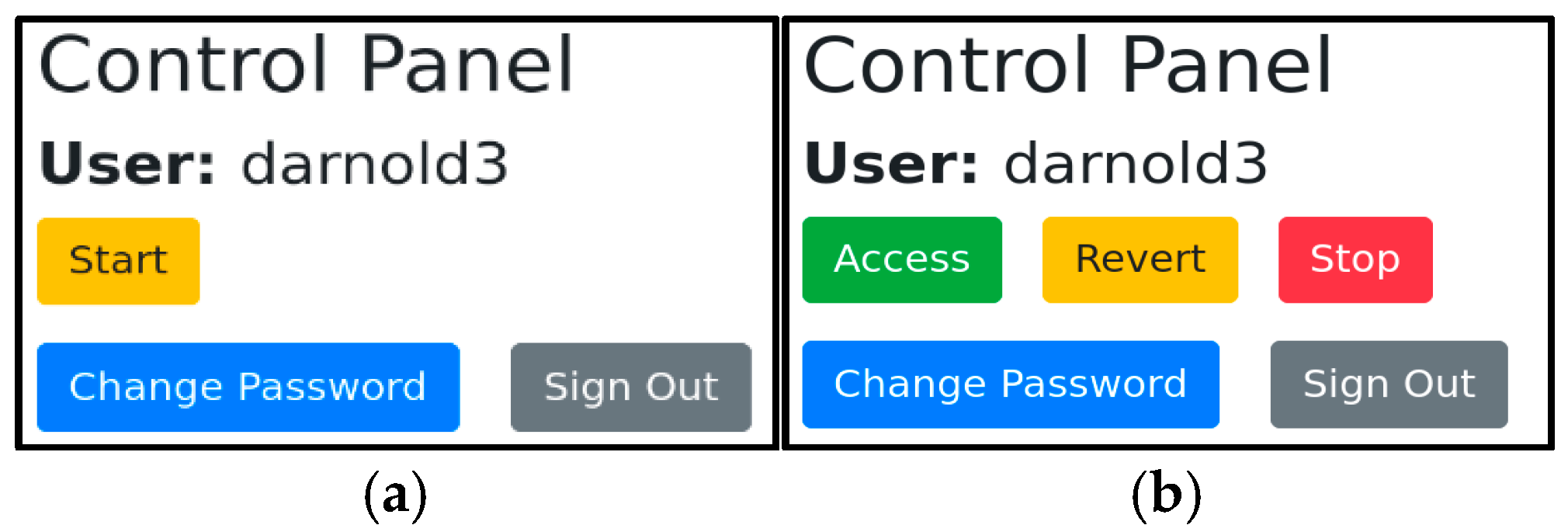

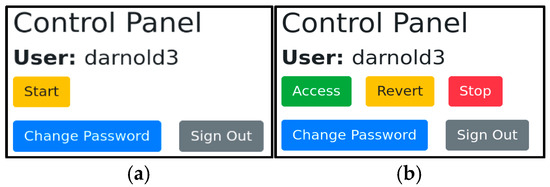

Upon logging in, students are met with the option to start their lab environment, as shown in Figure 2a. The pre-defined environment is configured through an INI file, such as config.ini. This allows an administrator to customize the environment without changing the underlying source code of the platform. All options available to the CLI Template, Clone, Revert, and Purge scripts are customizable through this file. This includes the IDs of VMs, firewall configurations, and user management. After starting their environment, the student has three options: Access, Revert, and Stop, as shown in Figure 2b. Access redirects students to the cyber range environment. Revert is identical to the EMP CLI Revert script, rolling the lab environment back to the initial snapshot. Stop purges the environment, similarly to the EMP CLI Purge script. We encourage students to use this option after completing lab activities to reduce resource consumption.
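To make the role of the INI file concrete, the sketch below parses a small configuration with Python's `configparser`. It is an illustration only: the section names, key names, and values are hypothetical and are not taken from the EMP's config.ini, and the Web Interface itself reads its configuration from PHP rather than from Python.

```python
import configparser

# Hypothetical configuration; the real config.ini may use different names.
SAMPLE_CONFIG = """
[clone]
template_ids = 9000, 9001, 9002
clone_type = linked
snapshot = yes

[network]
create_bridge = yes
"""


def load_lab_config(text):
    """Parse an INI document into a plain dictionary of lab settings."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    return {
        "template_ids": [
            int(value) for value in parser.get("clone", "template_ids").split(",")
        ],
        "clone_type": parser.get("clone", "clone_type"),
        "snapshot": parser.getboolean("clone", "snapshot"),
        "create_bridge": parser.getboolean("network", "create_bridge"),
    }


print(load_lab_config(SAMPLE_CONFIG))
```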

Figure 2.

The User Web Interface after logging in as the user “darnold3”. Panel (a) represents the portal after initially signing in. Students are presented with the “Start” option, which provisions their network environment. Panel (b) represents the portal after provisioning the environment. “Access” redirects the students to the cyber range, “Revert” returns the VMs to a previous snapshot, and “Stop” purges the VMs from the cyber range.

A test page is provided to verify the validity of a configuration file with a temporary user. To simplify management, each user account is assigned a unique numeric ID. In conjunction with wildcards for firewall configuration, we can assign each instance of the lab environment to a unique IP address.
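One way to picture the combination of numeric user IDs and wildcards is a simple substitution into an address pattern. The sketch below assumes a hypothetical pattern syntax in which `*` marks the octet replaced by the user's ID; the actual wildcard format used by the EMP is not specified in the text.

```python
import ipaddress


def address_for_user(pattern, user_id):
    """Substitute a user's numeric ID into a wildcard address pattern."""
    if not 1 <= user_id <= 254:
        raise ValueError("user_id must fit in a single usable octet")
    address = pattern.replace("*", str(user_id))
    # Raises ValueError if the substituted string is not a valid address.
    ipaddress.ip_address(address)
    return address


# Example: user 7 receives a gateway address on their own subnet.
print(address_for_user("10.0.*.1", 7))
```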

4. Platform Development Technologies

After defining the design flow of the EMP, we developed the Command Line Interface Script and Web Interface for a sample cyber range environment. For our implementation, we selected the Proxmox Virtual Environment (VE) as the core infrastructure for student lab environments [43]. Proxmox VE exposes a REST API for most management functionality. Along with the cyber range infrastructure, a pfSense firewall was introduced to handle DHCP IP assignments for student virtual machines. The inclusion of the firewall provides instructors with a mechanism for controlling traffic between the student lab environment and the outside Internet.

4.1. Command Line Interface

When interacting with our environment, the instructor has four Python scripts: (1) Template, (2) Clone, (3) Revert, and (4) Purge. The functionality of each script matches the design objectives described in Section 3. Python 3 was selected because the publicly accessible Proxmoxer Python library provides an easy-to-use method for accessing the Proxmox VE API [44]. If a different programming language is desired, client libraries are available for Ruby and Perl to interact with the Proxmox VE API. Proxmoxer uses the Python requests library to make HTTP requests, and calls are written in Python-style dotted notation instead of forward-slash HTTP notation.
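The relationship between dotted notation and forward-slash notation can be illustrated with a toy class. This is not Proxmoxer's implementation; it is a minimal sketch, with a hypothetical class name, of how attribute access and calls can be translated into a REST path.

```python
class Resource:
    """Toy path builder that turns dotted access into a REST path."""

    def __init__(self, path=""):
        self._path = path

    def __getattr__(self, name):
        # Invoked only for attributes that are not found normally.
        if name.startswith("_"):
            raise AttributeError(name)
        return Resource(f"{self._path}/{name}")

    def __call__(self, resource_id):
        # Calling a resource appends an identifier such as a node or VM ID.
        return Resource(f"{self._path}/{resource_id}")

    def __str__(self):
        return self._path


# Dotted notation on the left corresponds to the slash notation printed.
api = Resource()
print(api.nodes("pve").qemu(100).config)
```

A real client would add HTTP verbs, authentication, and response parsing on top of this path-building step.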

Many systems administration and automation tools leverage these client libraries for Proxmox integration. Ansible, a tool for remote host management, utilizes the Python client library to manage Proxmox. Similarly, OpenStack is a widely adopted alternative for managing virtualized environments at scale. Both platforms provide comprehensive support for managing virtual machines, storage, and networking, and both expose robust APIs for integration with external tools and automation frameworks.

The choice of Proxmox in our Environment Management Platform (EMP) was driven by its lightweight deployment model, ease of administration, and native support for KVM and LXC virtualization. Proxmox VE is particularly well-suited for educational institutions that require a self-contained and cost-effective solution for managing classroom-scale cyber ranges. In contrast, OpenStack is a more complex and modular platform, typically deployed in large-scale or enterprise cloud environments. While OpenStack offers greater flexibility and extensibility, its higher administrative overhead and steeper learning curve may pose barriers for smaller teams or academic use cases.

Furthermore, Proxmox’s REST API provides direct access to key virtualization functions with minimal configuration, making it ideal for tight integration with the EMP’s Python-based automation scripts. OpenStack, while powerful, often requires orchestration through additional services (e.g., Nova, Neutron, Keystone), which adds to the setup and operational complexity. For educational deployments that prioritize rapid provisioning, user isolation, and instructor-led control, Proxmox presents a pragmatic and scalable option.

4.2. Web Interface

For our Web Interface, the instructor interacts with a PHP web page to activate the 4 Python scripts according to a pre-defined student lab environment from a configuration file. PHP offers several advantages for application development, particularly in small- to mid-scale systems that do not require complex web frameworks. As a server-side scripting language, PHP handles back-end logic and dynamically generates HTML content that is then rendered by the client’s browser. In our implementation, PHP is used to coordinate the execution of Python scripts and to manage session handling and user interaction. The client-side execution, such as rendering the interface, handling user input, and initiating actions, is handled using HTML, JavaScript, and Bootstrap CSS, which are embedded within the PHP-generated pages. This integration allows for a streamlined development process while maintaining clear separation between client-side and server-side responsibilities.

The PHP web interface is fully configurable through a PHP INI file config.ini. All options available in the CLI Clone, Template, Revert, and Purge scripts are additionally customizable through this file. This includes the IDs of virtual machine templates to be cloned, firewall configurations, and user management decisions. The configuration can be easily tested on a test page: test.php. This page allows for creating an environment for a test user, reverting and destroying their environment, and providing helpful debugging information for each action. Unique IP addresses are assigned based on the user’s numeric ID.

To assist with installation and deployment, setup scripts are available that walk administrators through the process [45]. The administrator is prompted for the information required to tailor the CLI to the desired environment specifications.

We recognize that combining PHP and Python in web-based systems can introduce potential security risks, particularly related to input validation, command injection, and improper handling of user data. In our implementation, we mitigate these concerns by strictly validating all user input on both the client and server sides. The PHP front end uses sanitization and input filtering functions to prevent injection attacks and restrict unexpected data types. Additionally, communication between the PHP interface and the Python scripts is tightly controlled, with inputs passed only through pre-defined and validated parameters. Wherever possible, system-level commands are executed with restricted permissions, and user actions are sandboxed to minimize the risk of unauthorized access. Future versions of the platform may incorporate web application firewalls (WAFs) and container-based isolation to further strengthen the system’s security posture.
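The idea of passing only pre-defined and validated parameters to the scripts can be sketched on the Python side as an allow-list check followed by construction of an argument list. The names below are hypothetical: the username pattern, the set of allowed actions, the script file names, and the `--user` option are assumptions made for illustration and do not describe the EMP's actual interface.

```python
import re

# Hypothetical rule: lowercase letter first, then letters or digits.
USERNAME = re.compile(r"[a-z][a-z0-9]{2,31}")
# Hypothetical set of actions the web front end is allowed to trigger.
ALLOWED_ACTIONS = {"clone", "revert", "purge"}


def build_command(action, username):
    """Validate the inputs and return an argument list for a subprocess."""
    if action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not allowed: {action!r}")
    if not USERNAME.fullmatch(username):
        raise ValueError(f"invalid username: {username!r}")
    # An argument list (rather than a shell string) means the username is
    # never interpreted by a shell, which blocks command injection.
    return ["python3", f"{action}.py", "--user", username]


print(build_command("revert", "darnold3"))
```

The resulting list would be handed to a process launcher without a shell, for example `subprocess.run(command, check=True)`, so that metacharacters in user input are never evaluated.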

5. A Cybersecurity Case Study

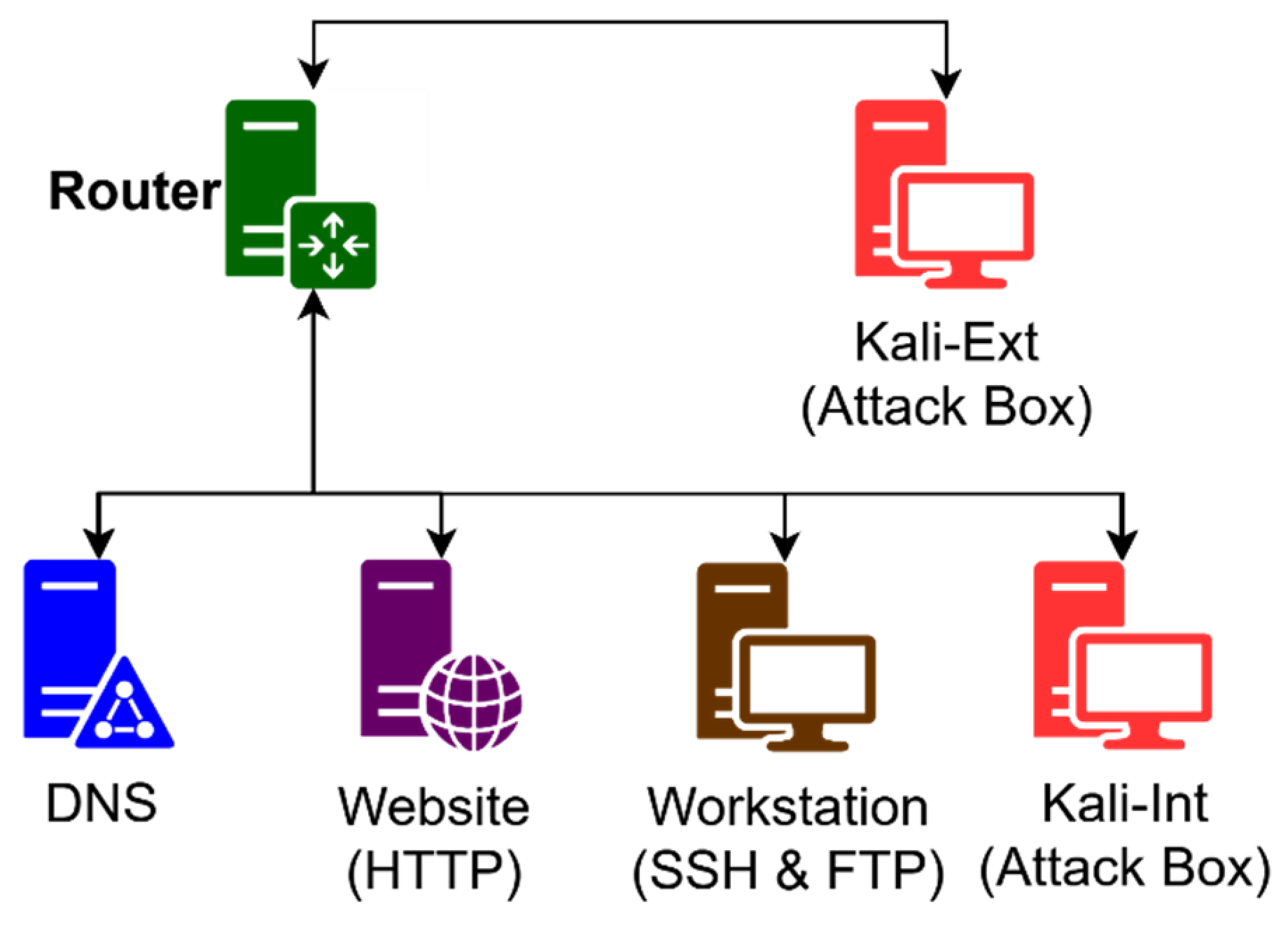

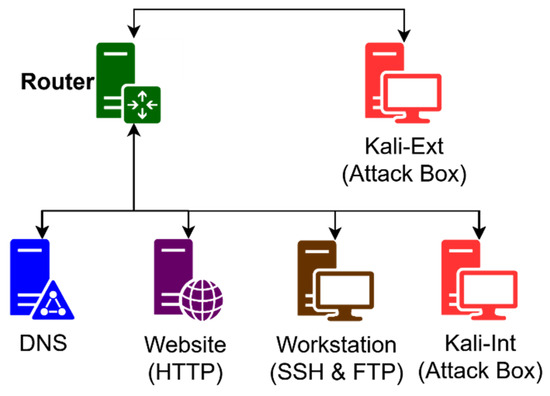

The EMP was developed to streamline the management of cybersecurity activities within the Department of Electrical and Computer Engineering at the Illinois Institute of Technology. In ECE 222, Introduction to Cybersecurity Engineering, experiential learning is crucial in accelerating students’ understanding of ethical hacking, firewall management, intrusion detection systems, and cyber forensics. To support this, student laboratory environments are equipped with 11 virtual machines, providing a comprehensive platform for hands-on learning. The EMP also supports our cybersecurity boot camps, which cover fundamental cybersecurity concepts, including computer networking and essential network services such as Secure Shell (SSH), File Transfer Protocol (FTP), and Domain Name System (DNS). During these boot camps, students engage with 6 VMs from a diverse range of Linux distributions, whose specifications are listed in Table 2. The network infrastructure follows a 2-LAN approach, as shown in Figure 3.

Table 2.

Cybersecurity case study virtual machine configurations.

Figure 3.

Network diagram for our cybersecurity case study. The student lab is composed of 6 virtual machines of different Linux distributions. A 2-LAN approach is adopted. Students will learn how to install network services and implement network-based and host-based firewalls.

Before deploying the EMP, preparing for these activities was time-consuming and labor-intensive. For each student, we had to create a user account, clone the necessary VMs, configure the appropriate permissions, and set up the networking for each VM. For activities with high student counts, such as our cybersecurity boot camps with up to 50 participants, the EMP was essential for quick and efficient management. Its effectiveness in this scenario made it ideal for us to evaluate the platform.

6. Results and Discussions

After preparing our cyber range environment, we took timing measurements for the Template, Clone, Revert, and Purge scripts. We obtained these measurements by prefixing each command with the Linux ‘time’ command, which reports the wall-clock duration of a command from start to exit. Our scripts return only after all configuration changes to Proxmox and pfSense have been finalized. Consequently, the execution time of each command measures the full time needed to perform the operation. Each command was executed ten times, and the average of these timings is presented in Table 3. Times are based on an environment of 6 VMs with an additional network bridge. The speed of HTTPS API requests to Proxmox VE and of connections to the pfSense firewall depends on the network latency between the remote manager and these devices.
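The repeated-measurement procedure can be approximated with a small Python harness. Note that this substitutes Python's `time.perf_counter` for the Linux ‘time’ command used in our measurements; the function name `average_runtime` and the placeholder command are assumptions for illustration only.

```python
import statistics
import subprocess
import sys
import time


def average_runtime(command, runs=10):
    """Run command `runs` times and return the mean wall-clock time in seconds."""
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        # check=True aborts the measurement if the command fails.
        subprocess.run(command, check=True)
        durations.append(time.perf_counter() - start)
    return statistics.mean(durations)


if __name__ == "__main__":
    # Placeholder command; a real measurement would invoke a management script.
    placeholder = [sys.executable, "-c", "pass"]
    print(f"mean over 10 runs: {average_runtime(placeholder):.3f} s")
```

Averaging over several runs dampens variation from network latency, but it does not remove it; reporting the spread alongside the mean would make the comparison more robust.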

Table 3.

Timing evaluations of the EMP.

We completed a similar time evaluation of the manual provisioning process. Times associated with manual provisioning are rough estimates, as they were measured with a stopwatch. Additionally, due to the number of steps, these times may vary significantly between users, depending on their proficiency with the cyber range environment. Timing results for manual provisioning are also presented in Table 3.

Based on these results, the EMP significantly reduces preparation time. Among our scripts, the largest absolute reduction was observed with the Clone script, which saved an average of 186 s, or around 3 min, per student environment. This was expected, as manual cloning is a multi-step process that involves (1) creating a user, (2) creating a new network bridge, (3) cloning the desired VMs, (4) re-assigning network interfaces, (5) adding a new snapshot, and (6) assigning VMs to student accounts. Additionally, our experiment did not include static IP assignments, which would further increase the manual provisioning times. During our case study, we provisioned 55 student environments. Using the EMP, this took 14 min and 26 s, whereas manual provisioning would have taken roughly 185 min and 24 s. The Clone script therefore saved at least ~171 min, a little under 3 h of preparation. In terms of the speed-up achieved by the EMP, VM templating was 5 times faster, VM cloning was 12.8 times faster, restoring from a snapshot was 100 times faster, and purging VMs was 6.5 times faster.
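The arithmetic behind these figures can be checked directly. The short Python sketch below uses only the totals quoted above; the variable names are ours.

```python
# Totals reported for provisioning 55 student environments.
EMP_TOTAL_S = 14 * 60 + 26       # 14 min 26 s with the EMP
MANUAL_TOTAL_S = 185 * 60 + 24   # roughly 185 min 24 s manually
CLONE_SAVING_S = 186             # average saving per student environment


def to_minutes(seconds: float) -> float:
    return seconds / 60


saved_55_min = to_minutes(MANUAL_TOTAL_S - EMP_TOTAL_S)   # about 171 min
saved_20_min = to_minutes(CLONE_SAVING_S * 20)            # 62 min for a class of 20
clone_speedup = MANUAL_TOTAL_S / EMP_TOTAL_S              # about 12.8x

if __name__ == "__main__":
    print(f"saved for 55 students: {saved_55_min:.1f} min")
    print(f"saved for 20 students: {saved_20_min:.1f} min")
    print(f"clone speed-up: {clone_speedup:.1f}x")
```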

In addition to reducing our preparation time, the EMP is crucial in reducing errors during the provisioning process. When collecting timing data for manual provisioning, we frequently had to restart attempts due to mistakes in naming VMs, assigning network bridges, and assigning the VMs to the student accounts. With the EMP, we can be confident that environments are provisioned quickly and match our environment definitions exactly.

When comparing the performance of the Environment Management Platform (EMP) to other environment management systems cited in the literature, our results demonstrate a substantial improvement in provisioning speed. For instance, INSALATA, as described by Herold et al., required approximately 45 min and 32 s to replicate a virtualized cyber range [41]. Similarly, Alfons, developed by Yasuda et al., deployed 21 instances of five-server environments in 6754 s (roughly 5.4 min per five-server instance) [31]. Netbed’s batch system, as used by White et al., achieved provisioning of 20 servers with an average time of 4.3 min per instance [42]. In contrast, the EMP provisions a six-virtual-machine student environment in approximately 15 s, translating to roughly 2.5 s per VM, more than an order of magnitude reduction in deployment time. While direct replication of other systems’ test scenarios (e.g., INSALATA, Alfons, or Netbed) is ideal for precise benchmarking, their published implementations often lack full configuration details such as VM images, network topology, and provisioning triggers. As a result, our comparisons are based on published deployment times and system descriptions. To approximate equivalence, our scenario used six Ubuntu 20.04 VMs configured with isolated bridges and initialized from snapshot templates. We acknowledge that these scenarios may differ in technical detail and therefore interpret our results as indicative of provisioning efficiency rather than strict one-to-one performance superiority. Future work will aim to replicate these environments more closely for direct benchmarking.
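To make the normalization explicit, the following Python sketch recomputes the per-unit times from the published figures quoted above. The units differ between systems (a whole range, a five-server deployment, a single instance), so the resulting ratios are indicative only; the dictionary labels are ours.

```python
EMP_ENV_S = 15    # one six-VM student environment
EMP_VMS = 6

# Published figures, converted to seconds per reported unit.
reported_s = {
    "INSALATA (whole range)": 45 * 60 + 32,
    "Alfons (per five-server deployment)": 6754 / 21,
    "Netbed (per instance)": 4.3 * 60,
}

emp_per_vm_s = EMP_ENV_S / EMP_VMS
# Ratio of each published time to one EMP environment deployment.
ratios = {name: secs / EMP_ENV_S for name, secs in reported_s.items()}

if __name__ == "__main__":
    print(f"EMP: {emp_per_vm_s:.1f} s per VM")
    for name, secs in reported_s.items():
        print(f"{name}: {secs / 60:.1f} min, {ratios[name]:.0f}x one EMP environment")
```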

This dramatic performance gain is attributable to several key design choices in the EMP. First, our tool leverages lightweight templating and linked cloning techniques that minimize disk I/O and storage overhead, unlike the full VM replication approaches used by some prior systems. Second, the platform is tightly integrated with Proxmox VE’s REST API, allowing for efficient, script-driven orchestration of virtual machines, user accounts, and network bridges. Lastly, the EMP’s command structure and configuration model are optimized for bulk operations, allowing instructors to deploy dozens of lab environments with consistent parameters in a matter of minutes. These advantages position the EMP as a highly effective solution for educational settings that require rapid and repeatable provisioning of virtualized cybersecurity testbeds.
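As a rough illustration of script-driven linked cloning, the sketch below builds the request path and parameters for the Proxmox VE clone endpoint (`POST /nodes/{node}/qemu/{vmid}/clone`) for each template in a lab environment. It only constructs the requests and does not contact a server. The function name, the VM naming scheme, and the sequential ID allocation are assumptions for illustration, not the EMP's actual implementation; `newid`, `name`, and `full` are parameters of the Proxmox VE API, where `full=0` requests a linked clone when the source is a template.

```python
from typing import Dict, List, Tuple

CloneRequest = Tuple[str, Dict[str, object]]


def linked_clone_requests(
    node: str, template_ids: List[int], first_new_id: int, username: str
) -> List[CloneRequest]:
    """Build one (path, params) pair per template for a student's environment."""
    requests = []
    for offset, template_id in enumerate(template_ids):
        path = f"/api2/json/nodes/{node}/qemu/{template_id}/clone"
        params = {
            "newid": first_new_id + offset,          # assumed sequential VM IDs
            "name": f"{username}-vm{offset + 1}",    # assumed naming scheme
            "full": 0,                               # linked clone, not a full copy
        }
        requests.append((path, params))
    return requests


if __name__ == "__main__":
    for path, params in linked_clone_requests("pve", [9001, 9002], 2000, "student01"):
        print("POST", path, params)
```

Keeping request construction separate from transport makes the bulk operation easy to dry-run before any change is made to the hypervisor.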

7. Conclusions

In this paper, we presented the design flow for an Environment Management Platform. This platform provides command line and web tools for efficiently creating VM templates, cloning student lab environments, and cleaning up the environment after learning concludes. During testing, we observed significant improvements in management times: VM templating was 5 times faster, VM cloning was 12.8 times faster, restoring from a snapshot was 100 times faster, and purging VMs was 6.5 times faster. On a per-student basis, this resulted in time savings of approximately 3 min (186 s), amounting to a total savings of roughly 62 min for a 20-student session. In addition, the platform was crucial in reducing errors during the management and provisioning process. To support broader adoption and reproducibility, we have released the EMP source code and documentation under an open-source license. The source code is publicly available at https://github.com/RedefiningReality/Proxmox-Remote-Management (accessed on 13 July 2023).

While the EMP is an efficient platform for managing a diverse range of curricula, it has several limitations and opportunities for improvement. Firstly, the platform is limited to provisioning virtual machines, which can be resource-intensive and cost-ineffective depending on the lesson objective. For shorter lessons, incorporating the ability to provision lightweight Docker containers would offer greater flexibility and optimize resource usage. Additionally, the EMP functions solely as a management interface. Integrating other components of the cyber range development pipeline (Scenario, Monitoring, Learning, Environment, Teaming, and Management) would enhance its functionality. For instance, integrating monitoring technology would significantly aid in evaluating student performance, providing a more comprehensive educational experience.

Author Contributions

D.A., J.F. and J.S. conceived the concept of the paper. D.A. and J.F., as graduate researchers, implemented the goals and objectives. J.S., as the faculty advisor, oversaw and guided the overall direction of this work. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

The original contributions and results presented in this study are included in the article.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Yamin, M.M.; Katt, B.; Gkioulos, V. Cyber ranges and security testbeds: Scenarios, functions, tools, and architecture. Comput. Secur. 2020, 88, 101636–101694.

- Ukwandu, E.; Farah, M.A.B.; Hindy, H.; Brosset, D.; Kavallieros, D.; Atkinson, R.; Tachtatzis, C.; Bures, M.; Andonovic, I.; Bellekens, X. A Review of Cyber-Ranges and Test-Beds: Current and Future Trends. Sensors 2020, 20, 7148.

- Chouliaras, N.; Kittes, G.; Kantzavelou, I.; Maglaras, L.; Pantziou, G.; Ferrag, M.A. Cyber Ranges and Testbeds for Education, Training, and Research. Appl. Sci. 2021, 11, 1809.

- Priyadarshini, I. Features and Architecture of the Modern Cyber Range: A Qualitative Analysis and Survey. Master’s Thesis, University of Delaware, Newark, DE, USA, 2018.

- Vekaria, K.B.; Calyam, P.; Wang, S.; Payyavula, R.; Rockey, M.; Ahmed, N. Cyber Range for Research-Inspired Learning of “Attack Defense by Pretense” Principle and Practice. IEEE Trans. Learn. Technol. 2021, 14, 322–337.

- Willems, C.; Klingbeil, T.; Radvilavicius, L.; Cenys, A.; Meinel, C. A Distributed Virtual Laboratory Architecture for Cybersecurity Training. In Proceedings of the 2011 International Conference for Internet Technology and Secured Transactions, Abu Dhabi, United Arab Emirates, 11–14 December 2011.

- Pham, C.; Tang, D.; Chinen, K.-I.; Beuran, R. CyRIS: A Cyber Range Instantiation System for Facilitating Security Training. In Proceedings of the 7th Symposium on Information and Communication Technology, Ho Chi Minh City, Vietnam, 8–9 December 2016.

- Xu, L.; Huang, D.; Tsai, W.-T. Cloud-Based Virtual Laboratory for Network Security Education. IEEE Trans. Educ. 2014, 57, 145–150.

- Kalyanam, R.; Yang, B. Try-CybSI: An Extensible Cybersecurity Learning and Demonstration Platform. In Proceedings of the 18th Annual Conference on Information Technology, Rochester, NY, USA, 4–7 October 2017.

- Vykopal, J.; Vizvary, M.; Oslejsek, R.; Celeda, P.; Tovarnak, D. Lessons learned from complex hands-on defence exercises in a cyber range. In Proceedings of the 2017 IEEE Frontiers in Education Conference, Indianapolis, IN, USA, 18–21 October 2017.

- Vykopal, J.; Seda, P.; Svabensky, V.; Celeda, P. Smart Environment for Adaptive Learning of Cybersecurity Skills. IEEE Trans. Learn. Technol. 2023, 16, 443–456.

- Vykopal, J.; Celeda, P.; Seda, P.; Svabensky, V.; Tovarnak, D. Scalable Learning Environments for Teaching Cybersecurity Hands-on. In Proceedings of the 2021 IEEE Frontiers in Education Conferences (FIE), Lincoln, NE, USA, 13–16 October 2021.

- Salah, K.; Hammoud, M.; Zeadally, S. Teaching Cybersecurity Using the Cloud. IEEE Trans. Learn. Technol. 2015, 8, 383–392.

- Siaterlis, C.; Garcia, A.P.; Genge, B. On the Use of Emulab Testbeds for Scientifically Rigorous Experiments. IEEE Commun. Surv. Tutor. 2013, 15, 929–942.

- Sommestad, T. Experimentation on operational cyber security in CRATE. In Proceedings of the NATO STO-MP-IST-133 Special Meeting, Munich, Germany, 15–16 October 2015.

- Greenspan, R.; Laracy, J.R.; Zaman, A. Real-Time Immersive Network Simulation Environment (RINSE); University of Illinois at Urbana-Champaign: Champaign, IL, USA, 2004.

- Rossey, L.M.; Cunningham, R.K.; Fried, D.J.; Rabek, J.C.; Lippmann, R.P.; Haines, J.W.; Zissman, M.A. LARIAT: Lincoln Adaptable Real-time Information Assurance Testbed. In Proceedings of the IEEE Aerospace Conference, Big Sky, MT, USA, 9–16 March 2002.

- Cruz, T.; Simoes, P. Down the Rabbit Hole: Fostering Active Learning through Guided Exploration of a SCADA Cyber Range. Appl. Sci. 2021, 11, 9509.

- Coppolino, L.; D’Antonio, S.; Formicola, V.; Giuliano, V.; Mazzeo, G. ICSrange: A Simulation-based Cyber Range Platform for Industrial Control Systems. arXiv 2020, arXiv:1909.01910.

- Gunathilaka, P.; Mashima, D.; Chen, B. SoftGrid: A Software-based Smart Grid Testbed for Evaluating Substation Cybersecurity Solutions. In Proceedings of the 2nd ACM Workshop on Cyber-Physical Systems Security and Privacy, Xi’an, China, 30 May 2016.

- Hammad, E.; Ezeme, M.; Farraj, A. Implementation and development of an offline co-simulation testbed for studies of power systems cyber security and control verification. Int. J. Electr. Power Energy Syst. 2019, 104, 817–826.

- Nock, O.; Starkey, J.; Angelopoulos, C.M. Addressing the Security Gap in IoT: Towards an IoT Cyber Range. Sensors 2020, 20, 5439.

- Balto, K.E.; Yamin, M.M.; Shalaginov, A.; Katt, B. Hybrid IoT Cyber Range. Sensors 2023, 23, 3071.

- Waraga, O.A.; Bettayeb, M.; Nasir, Q.; Talib, M.A. Design and Implementation of Automated IoT Security Testbed. Comput. Secur. 2020, 88, 101648–101675.

- Lee, S.; Lee, S.; Yoo, H.; Kwon, S.; Shon, T. Design and Implementation of cybersecurity testbed for industrial IoT systems. J. Supercomput. 2018, 74, 4506–4520.

- ISC2. How the Economy, Skills Gap and Artificial Intelligence are Challenging the Global Cybersecurity Workforce; ISC2: Alexandria, VA, USA, 2023.

- Hellmann, K. See Yourself in Cybersecurity. U.S. Department of Labor Blog, 22 September 2023. Available online: https://blog.dol.gov/2023/09/22/see-yourself-in-cybersecurity#:~:text=As%20of%20August%202022%2C%20there,cyber%20talent%20is%20in%20demand (accessed on 1 February 2024).

- Eckroth, J.; Chen, K.; Gatewood, H.; Belna, B. Alpaca: Building Dynamic Cyber Ranges with Procedurally-Generated Vulnerability Lattices. In Proceedings of the 2019 ACM Southeast Conference, Kennesaw, GA, USA, 18–20 April 2019.

- Rursch, J.A.; Jacobson, D. When a testbed does more than testing: The Internet-Scale Event Attack and Generation Environment (ISEAGE)—Providing learning and synthesizing experiences for cyber security students. In Proceedings of the 2013 IEEE Frontiers in Education Conference (FIE), Oklahoma City, OK, USA, 23–26 October 2013.

- Rursch, J.A.; Jacobson, D. This IS child’s play: Creating a “playground” (computer network testbed) for high school students to learn, practice, and compete in cyber defense competitions. In Proceedings of the 2013 IEEE Frontiers in Education Conference, Oklahoma City, OK, USA, 23–26 October 2013.

- Yasuda, S.; Miura, R.; Ohta, S.; Takano, Y.; Miyachi, T. Alfons: A Mimetic Network Environment Construction System. In Proceedings of the Testbeds and Research Infrastructures for the Development of Networks and Communities: 11th International Conference (TRIDENTCOM 2017), Dalian, China, 28–29 September 2017.

- Urias, V.; Leeuwen, B.V.; Richardson, B. Supervisory Command and Data Acquisition (SCADA) system Cyber Security Analysis using a Live, Virtual, and Constructive (LVC) Testbed. In Proceedings of the Milcom 2012 IEEE Military Communications Conference, Orlando, FL, USA, 29 October–1 November 2012.

- Pfrang, S.; Kippe, J.; Meier, D.; Haas, C. Design and Architecture of an Industrial IT Security Lab. In Proceedings of the Testbeds and Research Infrastructures for the Development of Networks and Communities: 11th International Conference (TRIDENTCOM 2017), Dalian, China, 28–29 September 2017.

- Luchian, E.; Filip, C.; Rus, A.B.; Ivanciu, I.-A.; Dobrota, V. Automation of the Infrastructure and Services for an OpenStack Deployment Using Chef Tool. In Proceedings of the 15th RoEduNet Conference: Networking in Education and Research, Bucharest, Romania, 7–9 September 2016.

- Kostromin, R.O. Survey of software configuration management tools of nodes in heterogeneous distributed computing environment. In Proceedings of the ICCS-DE, Online, 18–21 September 2020.

- Bergin, D.L. Cyber-attack and defense simulation framework. J. Def. Model. Simul. 2015, 12, 383–392.

- Chadha, R.; Bowen, T.; Chiang, C.-Y.J.; Gottlieb, Y.M.; Poylisher, A.; Sapello, A.; Serban, C.; Sugrim, S.; Walther, G.; Marvel, L.M.; Newcomb, E.A.; et al. CyberVAN: A Cyber Security Virtual Assured Network Testbed. In Proceedings of the MILCOM 2016-2016 IEEE Military Communications Conference, Baltimore, MD, USA, 1–3 November 2016.

- Reed, T.; Nauer, K.; Silva, A. Instrumenting Competition-Based Exercises to Evaluate Cyber Defender Situation Awareness. In Proceedings of the Foundations of Augmented Cognition: 7th International Conference, Las Vegas, NV, USA, 21–26 July 2013.

- Alvarenga, I.D.; Duarte, O.C.M.B. RIO: A Denial of Service Experimentation Platform in a Future Internet Testbed. In Proceedings of the 7th International Conference on the Network of the Future (NOF), Rio de Janeiro, Brazil, 16–18 November 2016.

- Shu, G.; Chen, D.; Liu, Z.; Li, N.; Sang, L.; Lee, D. VCSTC: Virtual Cyber Security Testing Capability—An Application Oriented Paradigm for Network Infrastructure Protection. In Proceedings of the Testing of Software and Communication Systems: 20th IFIP TC 6/WG 6.1 International Conference, Tokyo, Japan, 10–13 June 2008.

- Herold, N.; Wachs, M.; Dorfhuber, M.; Rudolf, C.; Liebald, S.; Carle, G. Achieving Reproducible Network Environments with INSALATA. In Proceedings of the Security of Networks and Services in an All-Connected World: 11th IFIP WG 6.6 International Conference on Autonomous Infrastructure Management and Security, Zürich, Switzerland, 10–13 July 2017.

- White, B.; Lepreau, J.; Stoller, L.; Ricci, R.; Guruprasad, M.N.; Hibler, M.; Barb, C.; Joglekar, A. An Integrated Experimental Environment for Distributed Systems and Networks. ACM SIGOPS Oper. Syst. Rev. 2002, 36, 255–270.

- Proxmox Server Solutions GmbH, Proxmox. 2023. Available online: https://www.proxmox.com/en/ (accessed on 1 September 2024).

- Welcome to Proxmoxer. 22 March 2022. Available online: https://proxmoxer.github.io/docs/2.0/ (accessed on 1 September 2024).

- Ford, J. Proxmox-Remote-Management. Available online: https://github.com/RedefiningReality/Proxmox-Remote-Management (accessed on 13 July 2023).

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).