Modeling Word Learning and Processing with Recurrent Neural Networks

Abstract

1. Introduction

2. Related Work

3. Materials and Methods

3.1. The Data

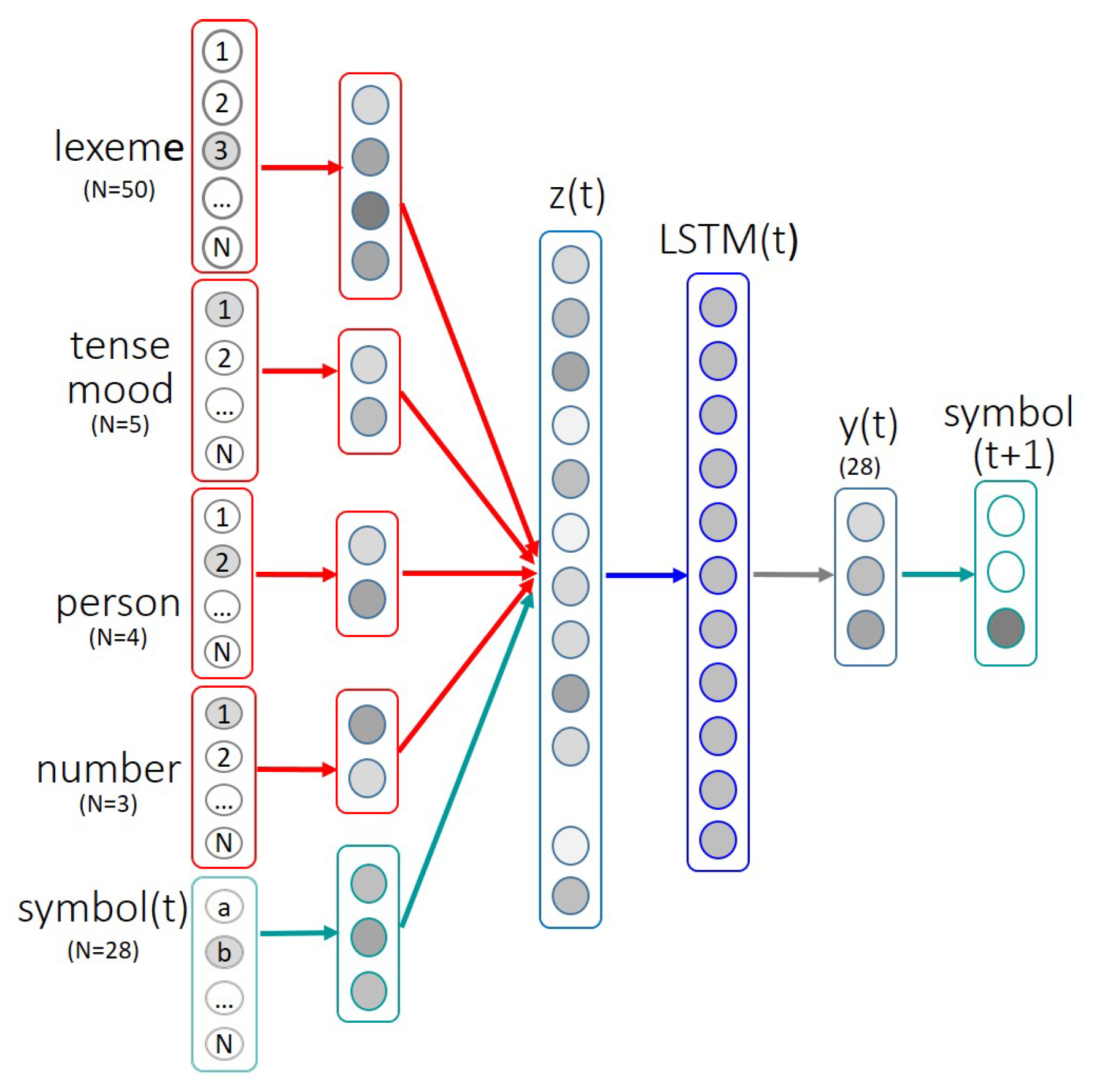

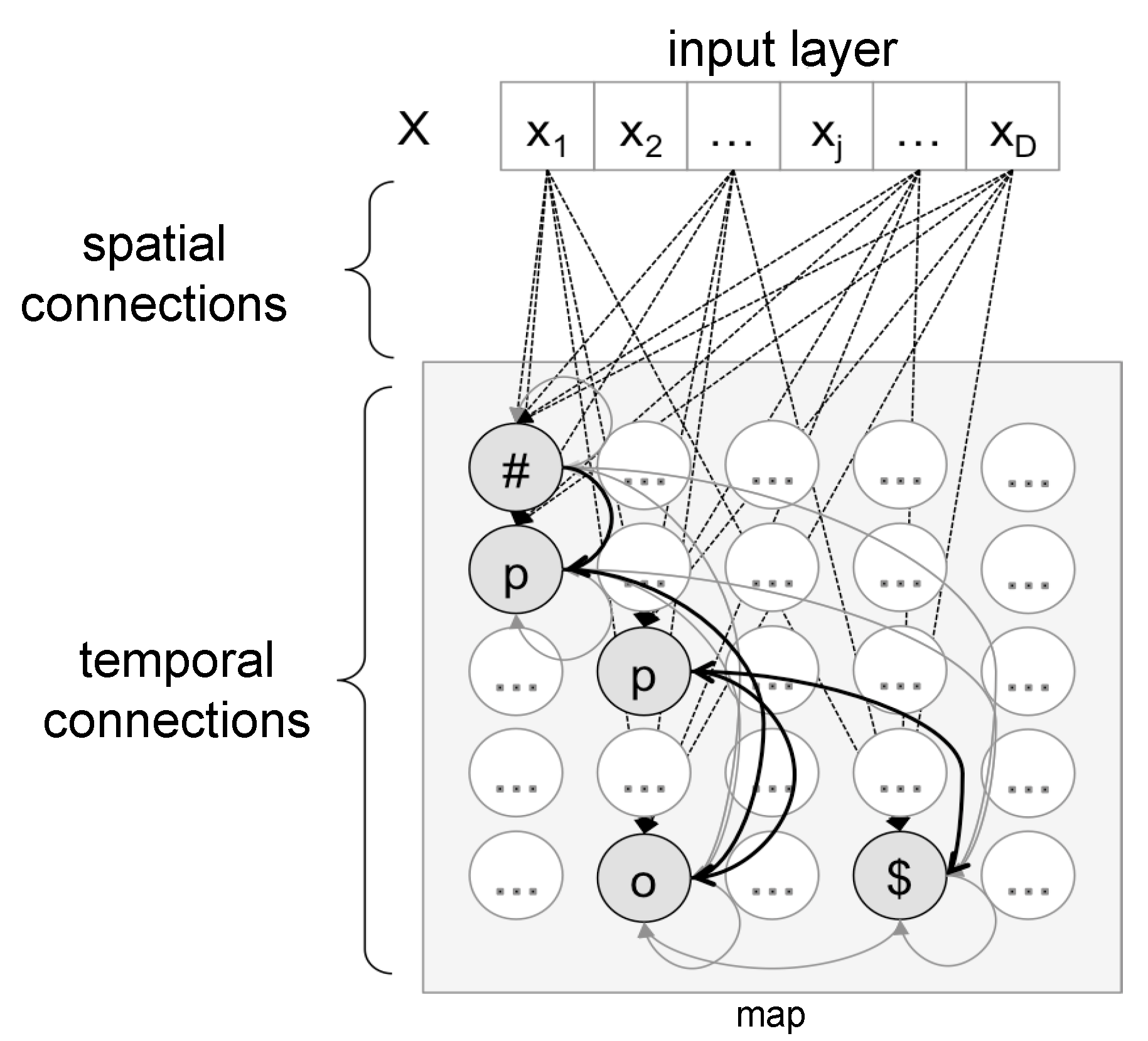

3.2. The Neural Networks

3.3. Training Protocol

4. Results

4.1. Training and Test Accuracy

4.2. Prediction Scores

4.3. Modeling Serial Processing

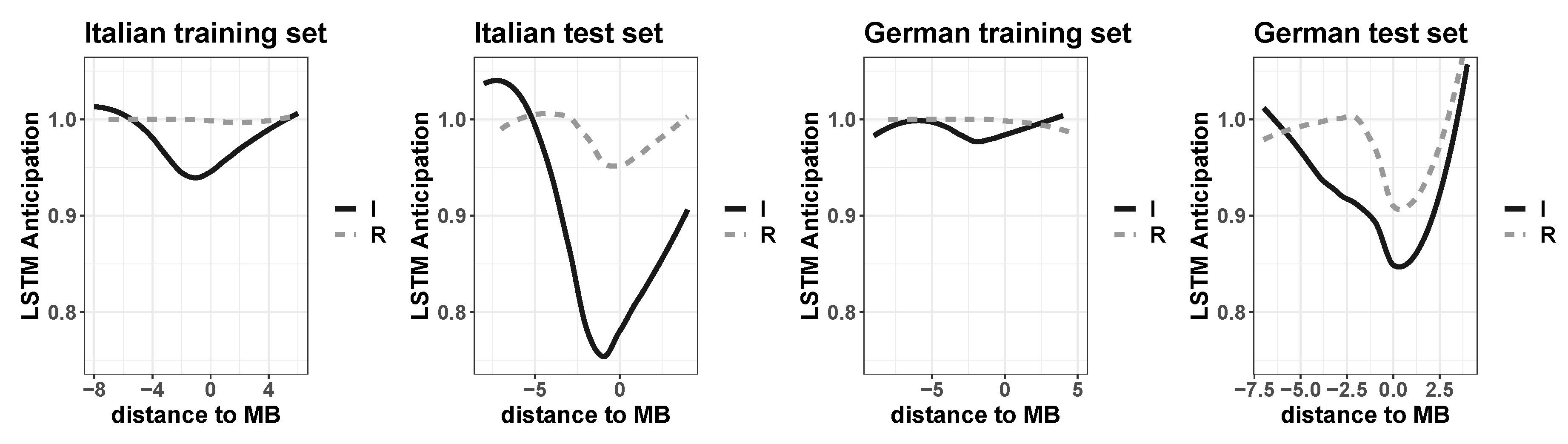

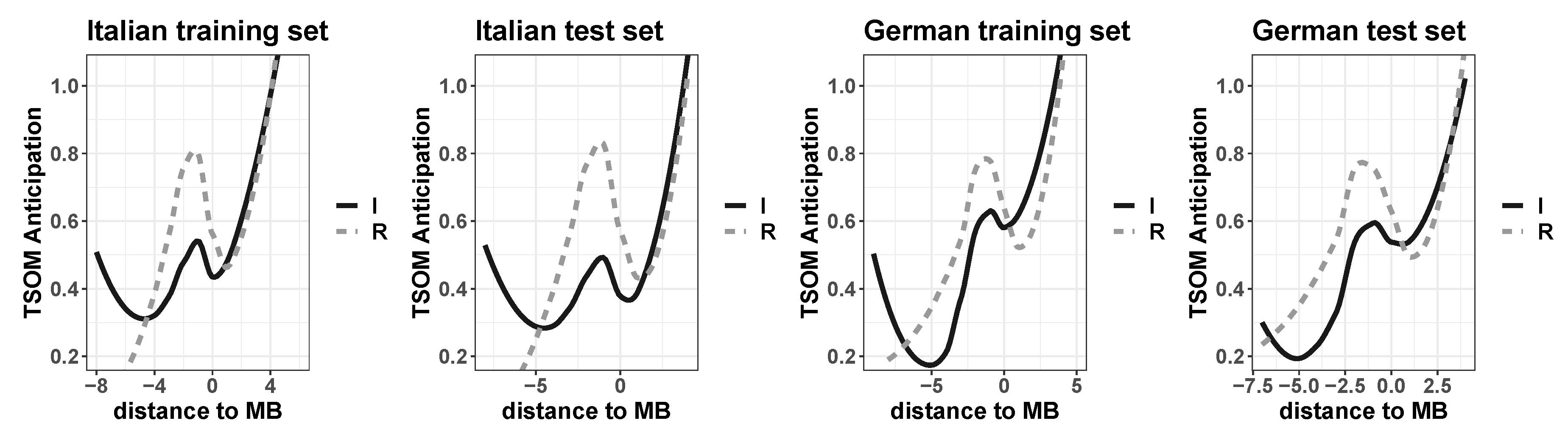

4.3.1. Prediction (1): Structural Effects

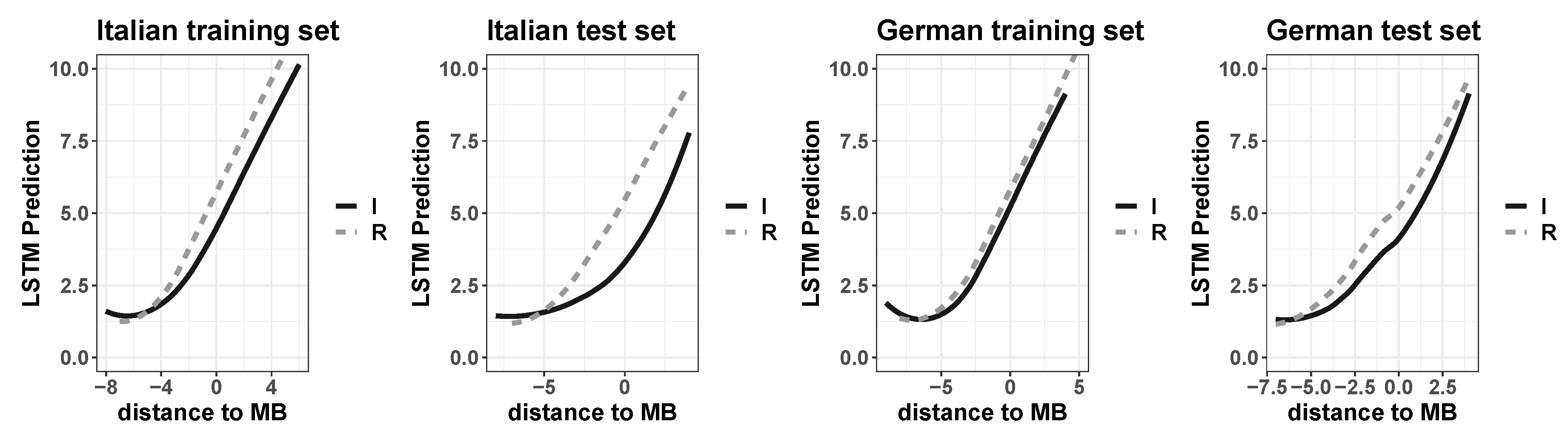

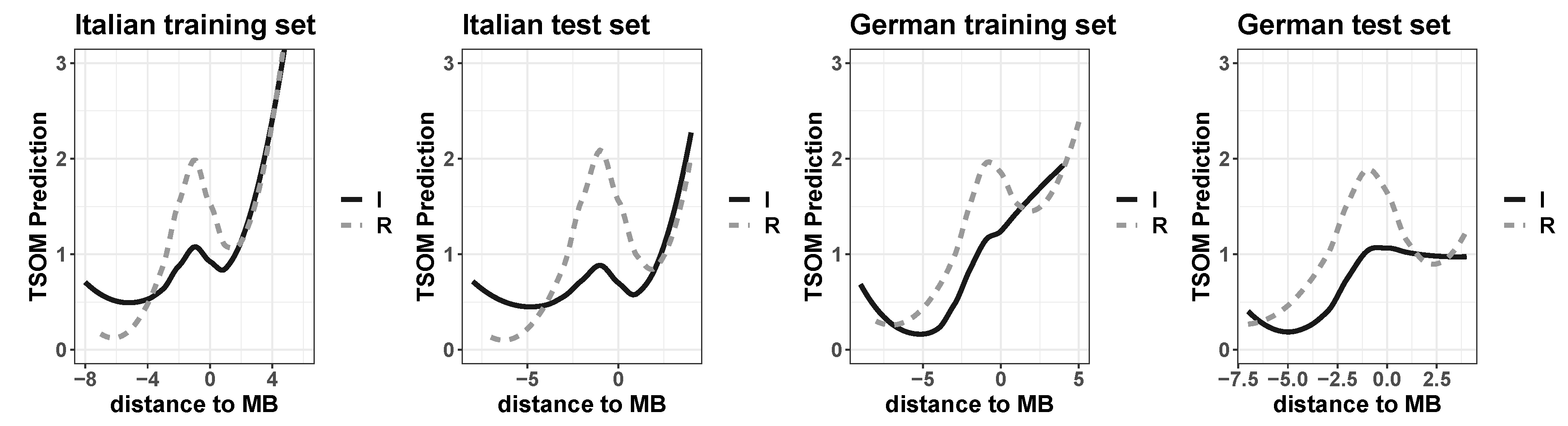

4.3.2. Prediction (2): Serial Processing Effects

5. Discussion

6. Conclusions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| RNN | Recurrent Neural Network |
| LSTM | Long Short-Term Memory |
| TSOM | Temporal Self-Organizing Map |
| BMU | Best Matching Unit |
| MB | Morpheme Boundary |

Sample Availability: Datasets are available from the author.

| Language | Word Types | Regular/Irregular Paradigms | Number of Characters | Maximum Length of Forms |
|---|---|---|---|---|
| Italian | 750 | 23/27 | 22 | 14 |
| German | 750 | 16/34 | 28 | 13 |

| LSTMs | Training (LSTM) | Test (LSTM) | TSOMs | Training (TSOM) | Test (TSOM) |
|---|---|---|---|---|---|
| Italian: 512-blocks | 93.55 (1.16) | 68.73 (5.54) | Italian: 42 × 42 nodes | 99.92 (0.13) | 95.62 (1.66) |
| German: 256-blocks | 97.25 (0.65) | 74.54 (6.06) | German: 40 × 40 nodes | 99.88 (0.11) | 100 (0) |

© 2020 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Marzi, C. Modeling Word Learning and Processing with Recurrent Neural Networks. Information 2020, 11, 320. https://doi.org/10.3390/info11060320
