1. Introduction

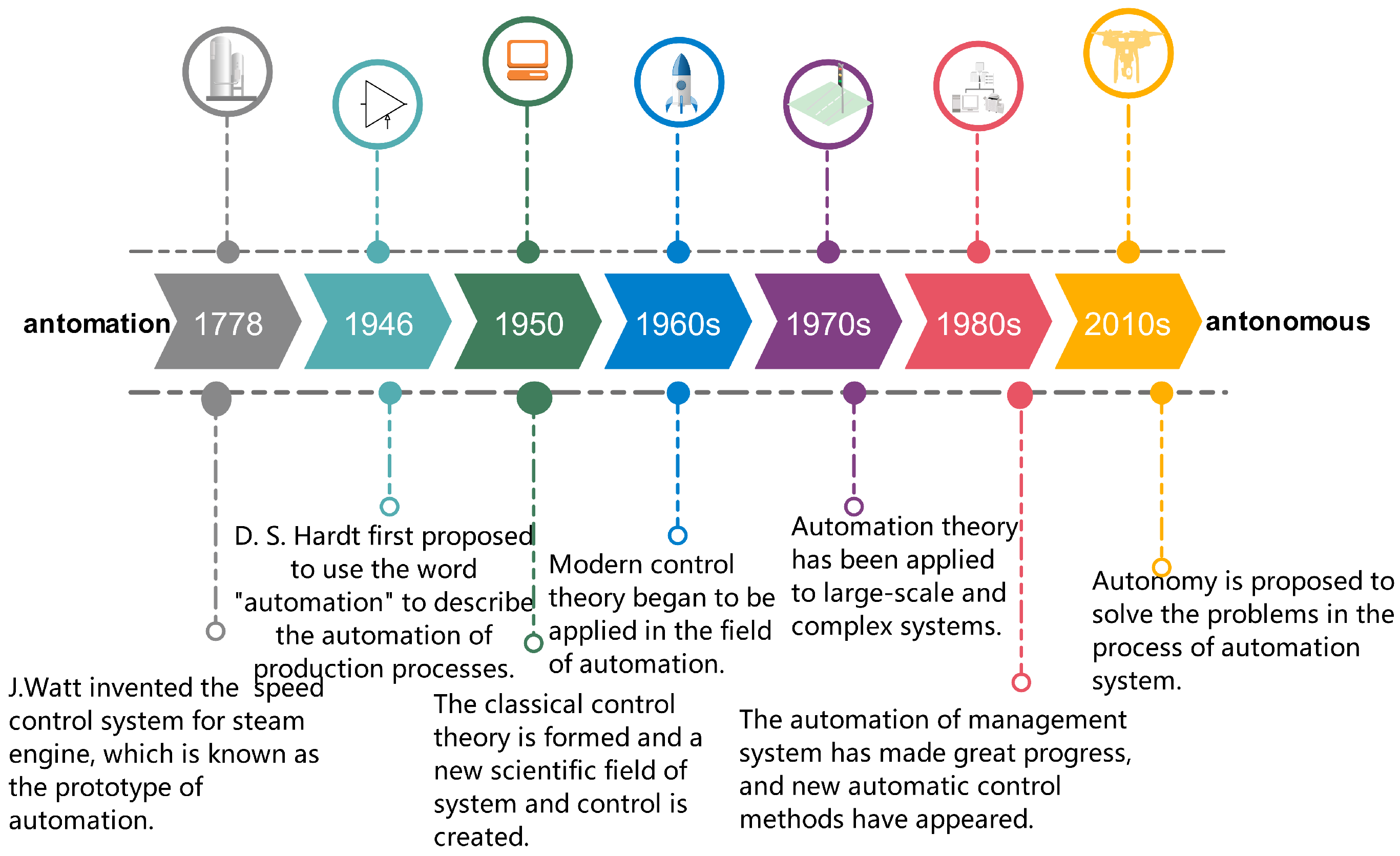

Industrial development and efficiency requirements have strongly promoted the development of automation technology [1]. In 1946, D.S. Hardt, a mechanical engineer at the Ford company in the United States, was the first to use the term automation to describe the automatic operation steps of the industrial production process. After the 1950s, automatic control was regarded as an effective technique for improving social production efficiency. It was adopted in a growing range of industries, including industrial manufacturing, metallurgy, and other continuous production processes.

Meanwhile, business management systems and other industry classifications were formed. In the early 1960s, the multiple-input multiple-output (MIMO) optimal control problem was studied intensively and posed a challenge to automation systems. To address it, modern control theory, with state-space methods and dynamic programming at its core, was proposed and widely used in engineering, particularly in aerospace. At the same time, related topics were developed, including adaptive and stochastic control, system identification, differential games, and distributed parameter systems. In the 1970s, attention turned to large-scale and complex systems, such as large-scale ecological environment protection systems, transportation management systems, and industrial control systems. Moreover, the aim of system control shifted from controller optimization and accurate management of systems to forecasting and state design of systems, drawing on operations research, information theory, and system integration theory. In the 1980s, with the rapid development of communication and computer technology, the automation of management systems made significant progress. New automatic control methods, such as human-machine systems and expert systems, were proposed. These methods improved the level of management automation and promoted the development of management systems.

In the 21st century, with the development of information technology, a single technology could hardly fulfill system requirements. Therefore, information and communication technology (ICT), which includes sensor, computer, intelligent, communication, and control technologies, was developed to solve system automation problems. However, due to the increasing complexity of system functions and the diversification of system decision-making, automation technology could not fully meet production needs. The demand for autonomy became increasingly pressing, on the one hand to reduce the dependence on human interaction and, on the other hand, to improve the ability of individuals and systems to complete tasks independently. The development process of the automation system is shown in Figure 1.

Afterward, the Defense Science Board of the United States issued a key guidance document on the status of autonomy in Department of Defense unmanned systems, with the explicit goal of improving systems’ autonomy capability [2]. The document highlights the importance of autonomous capability for systems, especially unmanned systems. The above analysis shows that autonomy has become a research hotspot in advancing the automation, and even the intelligence, of individuals and systems.

At present, research on autonomous systems mainly covers perception [3], planning [4], decision-making [5], control [6], human-computer interaction [7], and so on. Perception is the key to realizing the autonomy of individuals and systems. Only through perception can a system interact with its environment and achieve its task objectives. To date, studies on perception mainly cover navigation perception [8], task awareness [9], system state awareness [10], and execution operation perception [11]. To achieve system goals, the current or future actions or states of individuals and systems must be planned based on perception information so that navigation safety is ensured. Planning refers to the process of calculating the sequence, or partial sequence, of actions that changes the current state into the expected state and achieves the task under the premise of safety [12]. The purpose of decision-making is to make cost-efficient decisions on future actions based on existing information acquired from perception and experience. Machine learning has become one of the most effective decision-making methods for intelligent autonomous systems. Generally speaking, obtaining information from data is more effective than manual knowledge engineering. By finding reliable patterns in large amounts of specific data, autonomous systems achieve higher accuracy and robustness than manual software engineering allows [13], and the system can automatically adapt to a new environment according to its actual operating state [14]. Human-computer interaction is an active topic in research on system autonomy. It mainly addresses how humans interact and cooperate with machines, computers, or other supporting techniques [15]. Investigating the relationship between humans and computer systems can help to improve system performance, reduce platform design and operation cost, improve the adaptability of existing systems to new environments and new tasks, and speed up task execution [16].

System architecture is a discipline that describes a system’s internal structure and the relationships among its elements, and that guides system design and construction. On the one hand, an autonomous system architecture mainly comprises the perception module, planning module, function execution module, and information feedback module. On the other hand, the hierarchical and logical relationships among these modules need to be defined. Generally, for such a system architecture [17], several architectures should be considered and designed, including logical, physical, hierarchical, functional, computing, network, and software architecture. The architecture of autonomous systems should also define the logical basis, organizational framework foundation, and related model prototype of the system’s internal structure. The purpose of architecture design is to support the development of intelligence and the realization of autonomy. Through the design of architecture, the perception ability of the system can be enhanced, the effectiveness and accuracy of the autonomous object design can be improved, and the system’s ability to act towards its established goals can be strengthened. Besides, architecture design can enhance the self-adaptive and self-learning ability of the system. Therefore, research on the architecture of autonomous systems is one of the primary issues of autonomy research. As systems gradually change from automation to autonomy, their architecture is also changing significantly. Accordingly, this paper classifies autonomous system architectures and analyzes the critical technologies they contain.

The remainder of this article is organized as follows. Autonomy and autonomous systems are defined in Section 2. In Section 3, we comprehensively review the typical architectures of autonomous systems and their current organizational forms, and we give recommendations for constructing an autonomous system architecture. In Section 4, taking waterway transportation as an example, we present the construction process of an architecture for autonomous waterborne transportation systems according to our suggestions. Finally, the key technical challenges of autonomous systems are discussed.

2. Autonomy and Autonomous System

Automation is the automatic control and operation of an apparatus, process, or system by mechanical or electronic devices that take the place of human labor. The most notable feature of autonomy is the transfer of decision-making power from the critical node of the system to a trusted object [18]. The trusted object can act upon its decisions, within its permissions, without outside intervention. The most significant difference between an automated and an autonomous system is whether the system can self-learn and self-evolve. A system that acts according to established rules, even without any error, is an automated system, not an autonomous system. To achieve its goal, an autonomous system must be able to accurately perceive and judge the environment and its own state, understand the task, reason about itself and the situation, and formulate and select among different courses of action independently. Autonomy can be seen as an extension of automation, that is, automation with intelligence and higher capabilities. Autonomy is defined differently in different fields, as summarized in Table 1.

An autonomous system refers to the system that can deal with the non-programmed or non-present situation and has the ability of particular self-management and self-guidance [

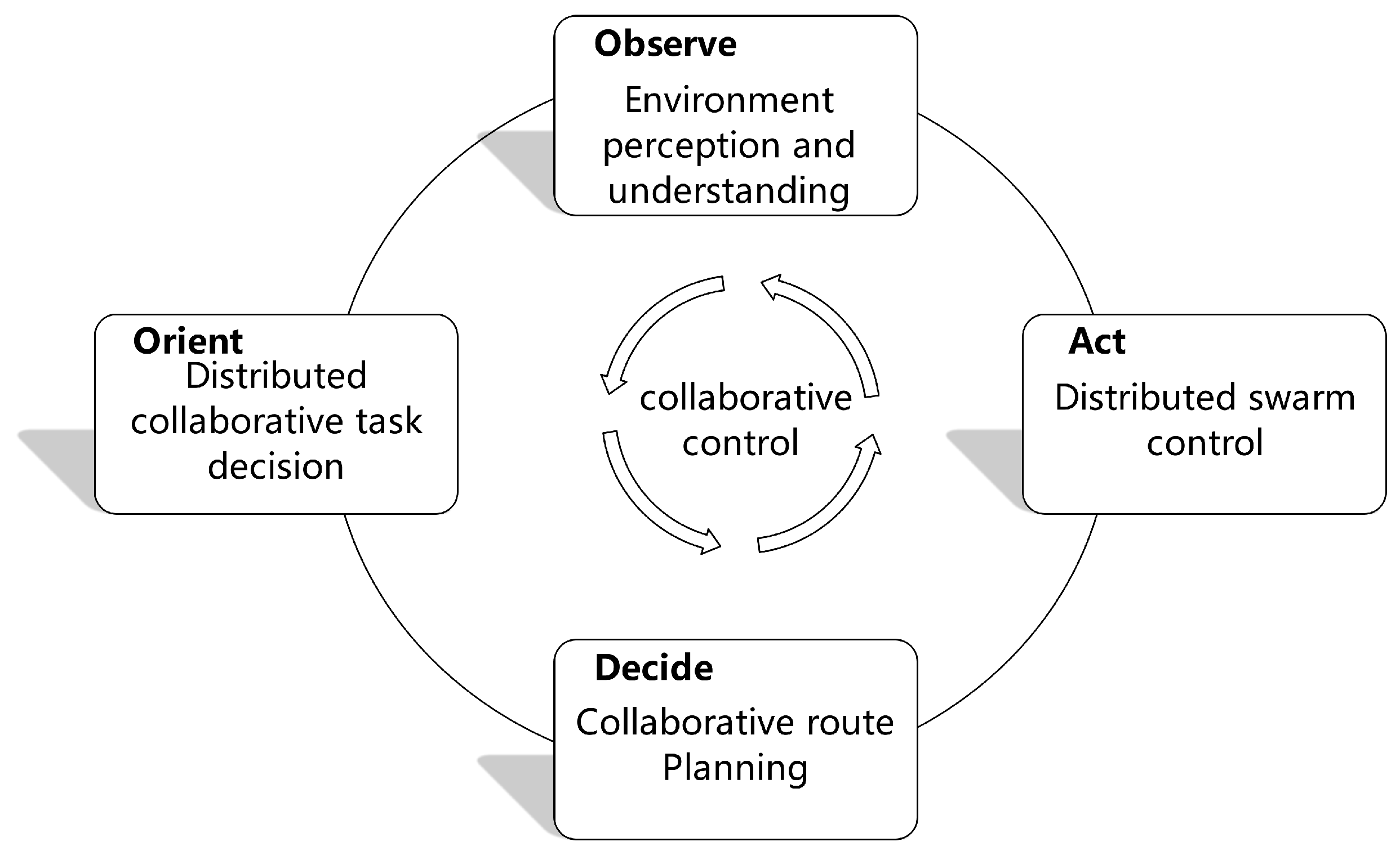

23]. The concept of the autonomous system develops alongside the development of artificial intelligence and cognitive technology. Due to the information technology and artificial intelligence technology constantly evolving, we can delegate tasks to the credit system. Autonomy is information-driven, even knowledge-driven. According to the task demands, the system can independently complete the dynamic process of perception, judgment, decision, and action. It can deal with non-present situations and the variation of the task. Meanwhile, it can also have certain fault tolerance. Autonomy has gradually changed the whole world through data collection, data analysis, network search, recommendation engine, prediction, and other applications. Comparatively, human is not good at handling a large amount of data quickly, but it is an easy piece for an autonomous system. Automated systems play a crucial role in future autonomous functionality. While operating within a predetermined scope, advanced automated systems can have the appearance of autonomy.

Compared with automated systems, autonomous systems can perceive and deal with more complex and changeable environments and complete more diverse actions and tasks independently. Generally speaking, autonomy means that the system is equipped with multi-source sensors and with software capable of processing complex tasks, so that for a certain period it can complete the established tasks and objectives independently, with no or only limited communication with the outside world and without outside intervention. Besides, it can learn and evolve in an unknown environment, continuously strengthening its ability to complete the task and maintaining excellent performance. Autonomy can be considered the evolution of automation towards intelligence and higher mobility.

Currently, no fully autonomous system exists, but scholars worldwide have described the goals of autonomous systems. For example, the Unmanned Systems Integrated Roadmap 2013–2038 [24] describes an autonomous combat system that includes Unmanned Aerial Vehicles and Unmanned Ground Vehicles and that can improve the army’s autonomous combat capability through various types of unmanned equipment; the Unmanned Systems Integrated Roadmap 2017–2042 [25] describes an unmanned autonomous combat system with the participation of ground commanders.

Depending on the demands placed on the system, different levels of autonomy may be required in different situations. The level of an autonomous system is closely related to its function. To a certain extent, improving the ability of the system also promotes the development of its autonomy. Taking the military combat system as an example, the autonomy of the system can be divided into five levels [26] according to its capabilities in task execution, environment perception, data interaction, and decision scheme generation, as shown in Table 2.

On the one hand, enhancing the autonomy of the system thoroughly changes its operation mode and improves its ability to perform tasks as well as its safety and reliability [27]. Because the decision-making subject and model change, the response time and performance of system decisions can be improved. On the other hand, the personnel burden can be reduced and operation cost saved. What is more, with increased autonomy the system can run uninterruptedly in a limited-communication environment and shows fault tolerance and resistance to interference, and its self-evolution and self-learning abilities are enhanced at the same time. However, in the foreseeable future, the increasing complexity of system hardware and software will also increase the possibility of faults, vulnerabilities, and even overall failure. Therefore, human interaction is still needed to support autonomous system development.

3. Architecture of Automation System and Autonomous System

3.1. Composition of Architecture

From the view of system modeling, system architecture design covers several related significant models, including system logic model, system function model, task model, organizational structure model, etc. Among these models, the system logic model is determined mainly by accounting for the internal relationship among the system’s physical elements. The system architecture is a comprehensive and essential base to model and implement autonomous systems.

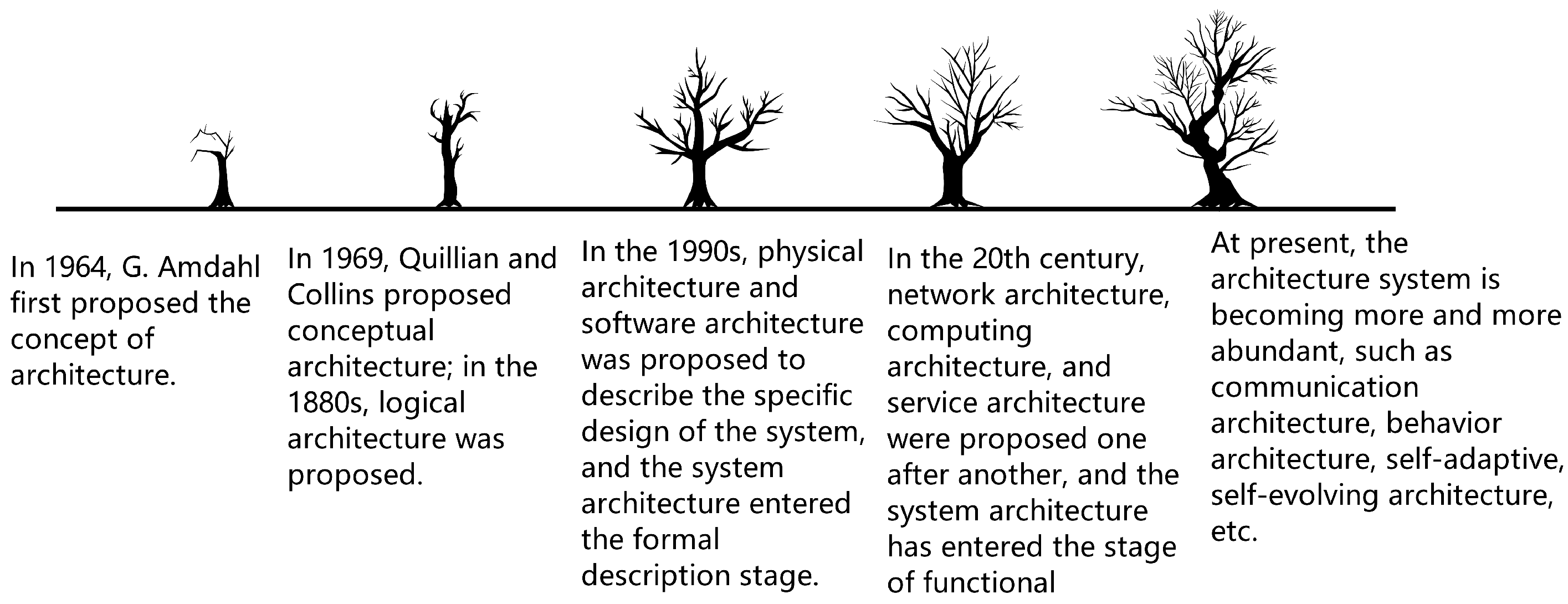

G. M. Amdahl, who was the first scholar, proposed the concept of Architecture in 1964 [

28]. Afterward, researchers paid increasing interest and attention to proposing a unified and commonly-approved explanation for architecture that promised the relevant theoretical foundation to a high degree. In the past half-century, architecture discipline, such as its related connotations, has made great progress [

29]. The architecture of autonomous systems can be seen as the effective integration of conceptual [

30], physical structure [

31], logical structure, software and hardware structure, organizational structure, functional structure, network structure, computing structure, and some other related structures [

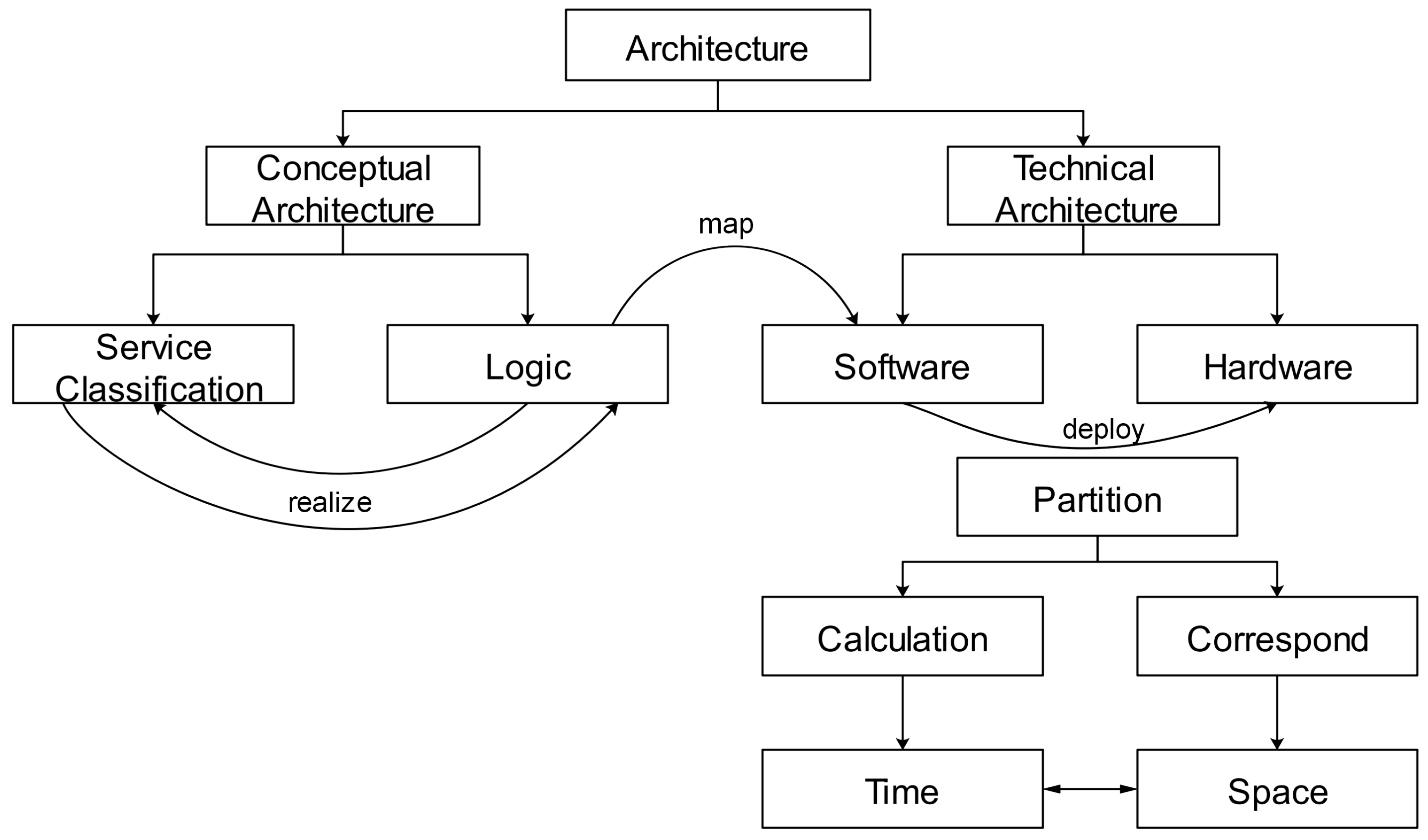

32]. In general, the system architecture contains a comprehensive logical conceptual structure and a system technical structure that can be varied flexibly according to the technology domain.

Furthermore, the comprehensive logical conceptual structure comprises a services classification structure and a logical structure. The logical structure is the basis for implementing the services classification structure, and the services classification structure is the concrete manifestation of the logical structure. The technical structure can be divided into software structure and hardware structure, and the hardware structure is the foundation on which the software structure is deployed. Architecture design is a collection of system-related concepts used to describe the system composition, function, logic, and other aspects of the design. The physical structure mainly describes the overall design of the system and the operation mode used to achieve its objectives, including the various software and hardware developed for the system. The software system is mainly responsible for deploying the system software that implements the required functions. Notably, the efficient design of the software system involves both suitable hardware and software.

Special attention should be paid to several key aspects of the physical structure, including the elements of physical construction and the network form of the system, to ensure the reliability, robustness, and diversity of the tasks related to the system [33]. The organizational structure [34] focuses on the organizational relationships among the system components, including the organizational forms of the user layer, system layer, and element layer. The core of the functional architecture [35] is the functional elements of the system and the intrinsic logic of function realization, which bridge the system and the user interaction. The main concern of the logical architecture [36] is the internal correlations among the constituent objects or elements of the system; in a waterway transportation system, for example, these are ships, ports, waterways, information flow, user interfaces, databases, and so on. The logical architecture focuses on the logical relationships within the system and on how the system functions under these relationships [37]. Furthermore, the logical architecture tends towards a “hierarchical” structure [38]. The hierarchical structure of a system typically consists of a physical logic layer, a business function layer, and a network data layer, the so-called classic three-layer architecture [39], which is illustrated in Figure 2. The development process of system architecture is presented in Figure 3.

In summary, building an architecture for an autonomous system is essential, especially for designing the overall structure and realizing the expected functions of the system, which in turn enhances the system’s autonomy and its ability to fulfill tasks [40]. An autonomous system needs four kinds of abilities: environment perception and self-perception, planning, self-execution, and a self-learning ability that enables self-adaptation and self-evolution.

3.2. Representative Architecture of Automation System

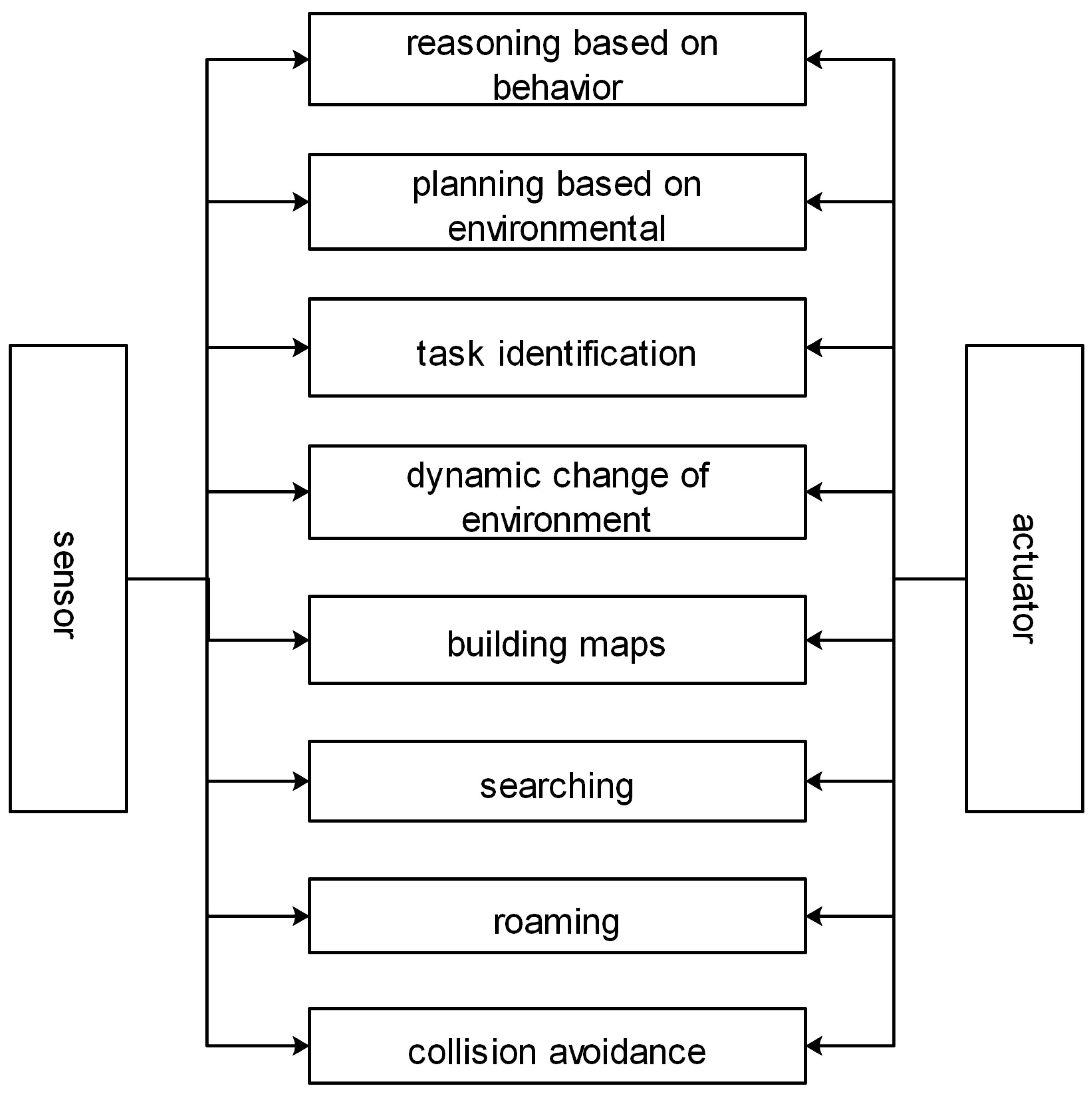

A robot system is a standard and simple automation system. As a representative form of the automation system, robot architecture is generally composed of a perception subsystem, a planning subsystem, and an execution subsystem [41]. According to how the three subsystems are combined, robot architectures can be divided into knowledge-based architecture (also known as horizontal, decentralized architecture) [42], behavior-based architecture (also known as vertical decomposition type) [43], and hybrid architecture combining knowledge and behavior [44].

At present, knowledge-based architecture is accepted as the predominant architecture of robot systems. This kind of architecture is inspired by human cognition. Human cognition usually involves the following processes: perceiving surrounding information; modeling the body through its kinematic and dynamic equations; planning the tasks; executing the instructions; and repeating from perception. The execution of each module is monitored and supervised, and the environment model needs to be accurate. The knowledge-based architecture connects the modules in the order suggested by human cognition.

An illustration of the knowledge-based architecture is presented in Figure 4. In this architecture, each module can work and be developed independently, and the system implementation is straightforward.

The construction of knowledge-based architecture follows a typical top-down method, in which every system action is generated by passing through a series of processes, from perception to execution.

The perception module also plays a significant role in the system. The perception module can construct the global environment information independently and infer the relationship of each element object in the global environment information. However, the sensed data will not affect the action execution directly.

The planning module is an essential component of this architecture and determines the motion of the robot. Given the objectives and system constraints, the planner issues the next action instructions according to the perceived information, and the whole task is completed with the cooperation of the other modules.

In this structure, every module is essential, and the system completes the whole task only through the cooperation of all modules. If the global environment information is missing, the planning module cannot plan the action instructions completely and correctly. The establishment of environmental information depends on the hardware conditions of the system. When computing power is weak, there is an unavoidable delay in the control loop, which reduces the real-time performance and flexibility of the system.

Due to the serial structure of the knowledge-based architecture, it has some obvious shortcomings. The first is a lack of system reliability: if one of the modules fails, the whole system collapses. Besides, real-time performance is poor, since information must pass through a series of processes before an action is produced, so the system cannot respond to a rapidly changing environment.
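The chained perception, planning, and execution modules described above can be illustrated with a minimal sketch. The function names, the 0.5 m threshold, and the toy world model are assumptions made for illustration only; they are not taken from any of the cited systems. The sketch shows why a failure in one module stops the whole chain.

```python
from typing import Dict, List, Optional


def perceive(raw_scan: Optional[List[float]]) -> Dict[str, float]:
    """Build a toy global world model from raw range readings (hypothetical)."""
    if raw_scan is None:
        raise RuntimeError("perception failed: no sensor data")
    return {"nearest_obstacle_m": min(raw_scan)}


def plan(world_model: Dict[str, float]) -> str:
    """Choose the next action from the world model only, never from raw data."""
    return "stop" if world_model["nearest_obstacle_m"] < 0.5 else "forward"


def execute(action: str) -> str:
    """Stand-in for the actuator interface."""
    return f"executed:{action}"


def sense_plan_act(raw_scan: Optional[List[float]]) -> str:
    """Run the modules strictly in series: perception -> planning -> execution."""
    world_model = perceive(raw_scan)   # a failure here aborts the whole cycle
    action = plan(world_model)
    return execute(action)
```

Calling `sense_plan_act([2.0, 1.5])` passes through all three stages, while `sense_plan_act(None)` raises in the perception stage and no action is produced at all, which mirrors the reliability problem of the serial structure.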

In 1986, Professor Rodney A. Brooks, from Australia, proposed the behavior-based architecture, also known as the subsumption architecture [45]. Compared with knowledge-based architecture, behavior-based architecture builds the system in a parallel way.

Each level of the behavior-based architecture contains its own perception module and execution module. Depending on the layer, the perception and planning modules are activated according to real-time demand. The system plans different levels of behavior capability for different tasks, and these capabilities are superimposed level by level in the functional structure. In general, complex behaviors and simple behaviors influence each other, but the influence is limited. Whether low-level or high-level, each level has independent behavior and can independently control the intelligent robot to generate the corresponding motion.

Behavior-based architecture highlights the behavior control structure from perception to action and is a typical parallel architecture. Each behavior includes a series of capabilities such as perception, modeling, planning, and execution. A typical behavior-based architecture is shown in Figure 5.

In this architecture, the basic behaviors are relatively simple and fixed, so they need fewer physical resources and can respond to rapidly changing environment and task information. The whole system can also be very flexible in achieving complex tasks. Each action is completed by a specific layer, and each level contains the complete path of perception, planning, and execution.

What is more, all levels are arranged in an entirely parallel structure. The advantage of the parallel structure is that, even if one module fails, the other control loops can still work normally and perform the given actions. Therefore, the behavior-based architecture can improve the system’s survivability and enhance the system’s ability to complete the task.
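A minimal sketch of the parallel, layered organization follows. Each behavior is a complete loop from sensor data to a command, and a simple fixed-priority arbiter picks the first layer that produces a command. This arbitration rule, the behavior names, and the thresholds are simplifying assumptions for illustration; they are not the suppression and inhibition mechanism of the original behavior-based architecture in [45].

```python
from typing import Callable, Dict, List, Optional

Sensors = Dict[str, float]
Behavior = Callable[[Sensors], Optional[str]]


def avoid_obstacle(s: Sensors) -> Optional[str]:
    """Reflex layer: reacts only when something is close in front."""
    return "turn_left" if s["front_range_m"] < 0.5 else None


def seek_goal(s: Sensors) -> Optional[str]:
    """Task layer: turns toward the goal when the bearing error is large."""
    return "turn_toward_goal" if abs(s["goal_bearing_deg"]) > 10 else None


def wander(s: Sensors) -> Optional[str]:
    """Default layer: always has something to do."""
    return "forward"


def broken_layer(s: Sensors) -> Optional[str]:
    """Simulates a layer whose module has failed."""
    raise RuntimeError("sensor driver crashed")


def arbitrate(layers: List[Behavior], sensors: Sensors) -> str:
    """Return the command of the first layer (highest priority first) that
    produces one; a failing layer is skipped instead of stopping the robot."""
    for behavior in layers:
        try:
            command = behavior(sensors)
        except Exception:
            continue  # other control loops keep working
        if command is not None:
            return command
    return "stop"
```

With `layers = [broken_layer, avoid_obstacle, seek_goal, wander]`, the arbiter still returns a valid command for every sensor reading, which illustrates the survivability argument made above.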

Both knowledge-based architecture and behavior-based architecture have limitations. A system built on knowledge-based architecture is relatively lacking in real-time performance and task diversity, while the behavior-based architecture improves real-time performance and action response but is challenging to implement. Combining the advantages of the two architectures seems to be a good solution. Thus, some scholars proposed a hybrid architecture [46]. The goal of hybrid architecture design is to combine the simple hierarchical structure of knowledge-based architecture with the fast response of behavior-based architecture [47].

A typical hybrid architecture is CASIA-I, proposed by researchers from the Chinese Academy of Sciences, on which an indoor mobile robot was designed. It integrates the two architectures, and its structure includes a human-computer interaction layer, a task planning layer, a map database, and a behavior control layer.

Hybrid architecture is becoming a significant development trend in the architecture of autonomous individuals, but many problems remain to be solved. The first is coordination among different levels, especially between knowledge-based behavior and reactive behavior. The second is how to adapt the hybrid architecture to a dynamic environment. The third is how to supervise the individual’s execution, find problems in time, and improve the individual’s performance.
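The coordination problem between deliberative (knowledge-based) and reactive behavior can be made concrete with a small sketch. It assumes one very simple coordination rule: a reactive check may veto the next planned step, and the plan is kept so that it can resume afterwards. The grid planner, the function names, and the 0.5 m threshold are hypothetical and are not a description of CASIA-I or of any cited system.

```python
from typing import List, Tuple

Cell = Tuple[int, int]


def plan_route(start: Cell, goal: Cell) -> List[Cell]:
    """Deliberative layer: toy grid route, moving along x first, then along y."""
    x, y = start
    route: List[Cell] = []
    while x != goal[0]:
        x += 1 if goal[0] > x else -1
        route.append((x, y))
    while y != goal[1]:
        y += 1 if goal[1] > y else -1
        route.append((x, y))
    return route


def reactive_override(front_range_m: float) -> bool:
    """Reactive layer: true when an obstacle is too close to keep moving."""
    return front_range_m < 0.5


def hybrid_step(route: List[Cell], front_range_m: float) -> Tuple[str, List[Cell]]:
    """One control cycle: the reactive layer can veto the planned step,
    in which case the remaining route is preserved unchanged."""
    if reactive_override(front_range_m):
        return "stop", route
    if not route:
        return "idle", route
    return f"move_to:{route[0]}", route[1:]
```

A more capable design would replan when the veto persists; that choice, like the veto rule itself, is one of the open coordination questions listed above rather than a settled answer.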

When scenes and robots change rapidly, intelligent robots cannot perceive the scene in time due to the limitations of sensors and computing power. When the system’s hardware cannot meet the computational requirements, real-time performance is seriously affected. To solve this problem, Dr. Kuffner from Carnegie Mellon University proposed the concept of “cloud robotics” in 2010 [48]. A cloud robot combines cloud computing with an intelligent robot by moving the robot’s computation to the cloud [49]. When the intelligent robot needs to process information, it connects to the corresponding cloud server, which processes the data, and the results are sent back to the robot through the network [50].

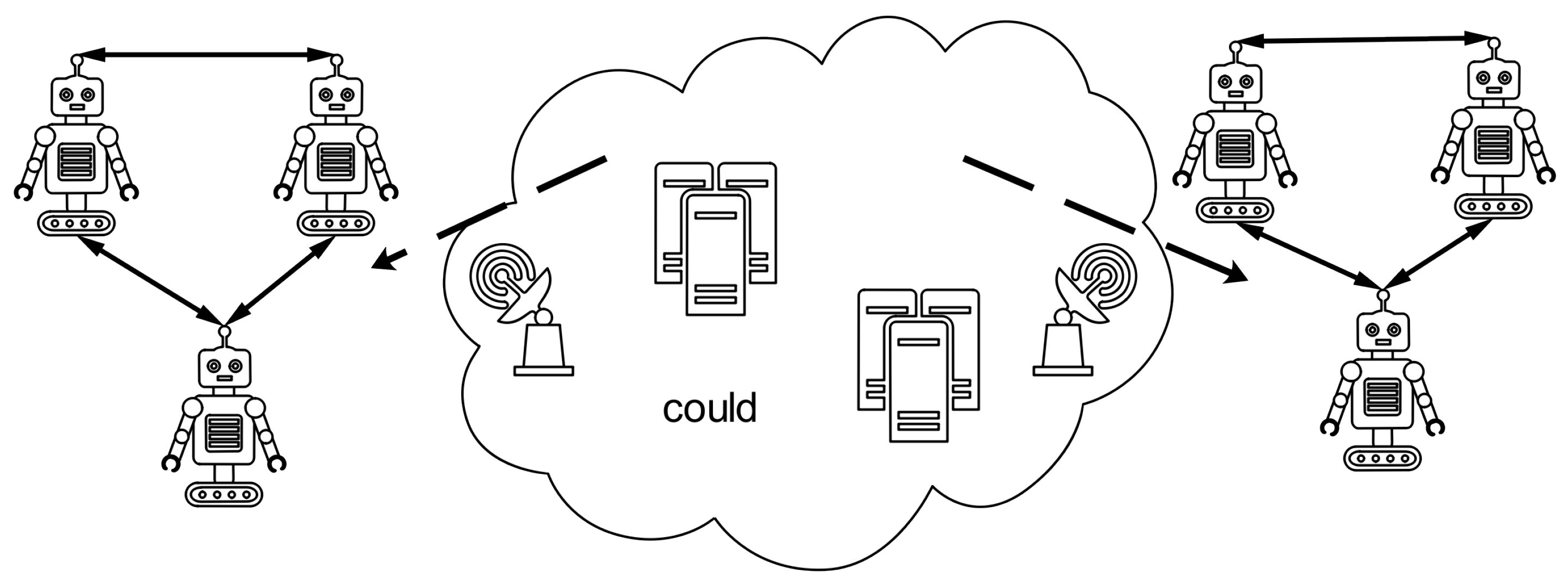

Compared with local robots, the cloud robot moves complex perception and information processing tasks to the cloud service, which lowers the requirements on the robot’s local software and hardware. At the same time, by connecting to the cloud, it can also receive data from other intelligent robots, realize information sharing, and enhance its learning ability through information interaction. Through the cloud connection, information sharing between intelligent robots becomes faster and more convenient. The cloud robot architecture is shown in Figure 6.
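The offloading pattern can be sketched as follows. The robot hands heavy processing to a cloud service and receives the result over the network. The local fallback used when the network is unavailable is an added assumption of this sketch, not something the cited works prescribe, and the cloud service is replaced by stand-in functions so that the example is self-contained.

```python
from typing import Callable, List, Tuple


def local_detect(ranges: List[float]) -> str:
    """Cheap on-board fallback: a coarse obstacle check (hypothetical)."""
    return "obstacle" if min(ranges) < 0.5 else "clear"


def process_scan(ranges: List[float],
                 cloud_call: Callable[[List[float]], str]) -> Tuple[str, str]:
    """Try the cloud first; fall back to on-board processing if the
    connection fails. Returns (where it ran, result)."""
    try:
        return "cloud", cloud_call(ranges)
    except (ConnectionError, TimeoutError):
        return "local", local_detect(ranges)


def fake_cloud_ok(ranges: List[float]) -> str:
    """Stand-in for a remote service that builds a richer result."""
    return f"map_with_{len(ranges)}_points"


def fake_cloud_down(ranges: List[float]) -> str:
    """Stand-in for an unreachable remote service."""
    raise ConnectionError("network unreachable")
```

Passing the remote call in as a parameter keeps the robot-side logic independent of any particular cloud platform, which is why no real network library appears in the sketch.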

The four structural characteristics of the automation system are summarized in Table 3.

3.3. Transformation of Architecture from Automation System to Autonomous System

The autonomous system refers to a system that can deal with non-programmed or non-preset situations and has self-management and self-guidance ability. The Office of the Chief Scientist of the U.S. Air Force has issued a document planning the future development of autonomous systems [51] and clarifying their role in future systems. The system’s autonomy means that it retains self-management ability in complex, changing environments and non-preset situations. Compared with traditional automation systems or semi-autonomous systems, the autonomous system can learn and adapt by itself in the face of a more complex and time-varying environment and has broader application prospects, for example in the aerospace [52], military [53], and mechanical engineering [54] fields.

In an autonomous system, autonomy refers to the application of advanced software algorithms, a variety of heterogeneous sensors, and other hardware facilities so that the system can interact with individuals and the environment through limited communication for a long time. Once the necessary task instructions are issued, the system completes the task and adjusts itself in an unknown environment independently, without other external intervention. A fully autonomous system cannot be realized at the present stage, and the vast majority of systems require people to participate in decision-making and supervision.

The transformation from automation to autonomy is therefore still at a relatively low level. In existing research, most systems are semi-autonomous to some extent. In the future, full autonomy is the ultimate goal of the control field. With the development of autonomous systems, the emphasis of human operators moves from low-level decision-making to high-level tasks, including overall task arrangement and coordination. These systems may come to contain more autonomous functionalities, with humans participating in fewer and fewer decisions during regular operation while the decisions they still make carry more and more weight, and the system will gradually transition to full autonomy. The extension from the classical control system to the autonomous control system is shown in Figure 7.

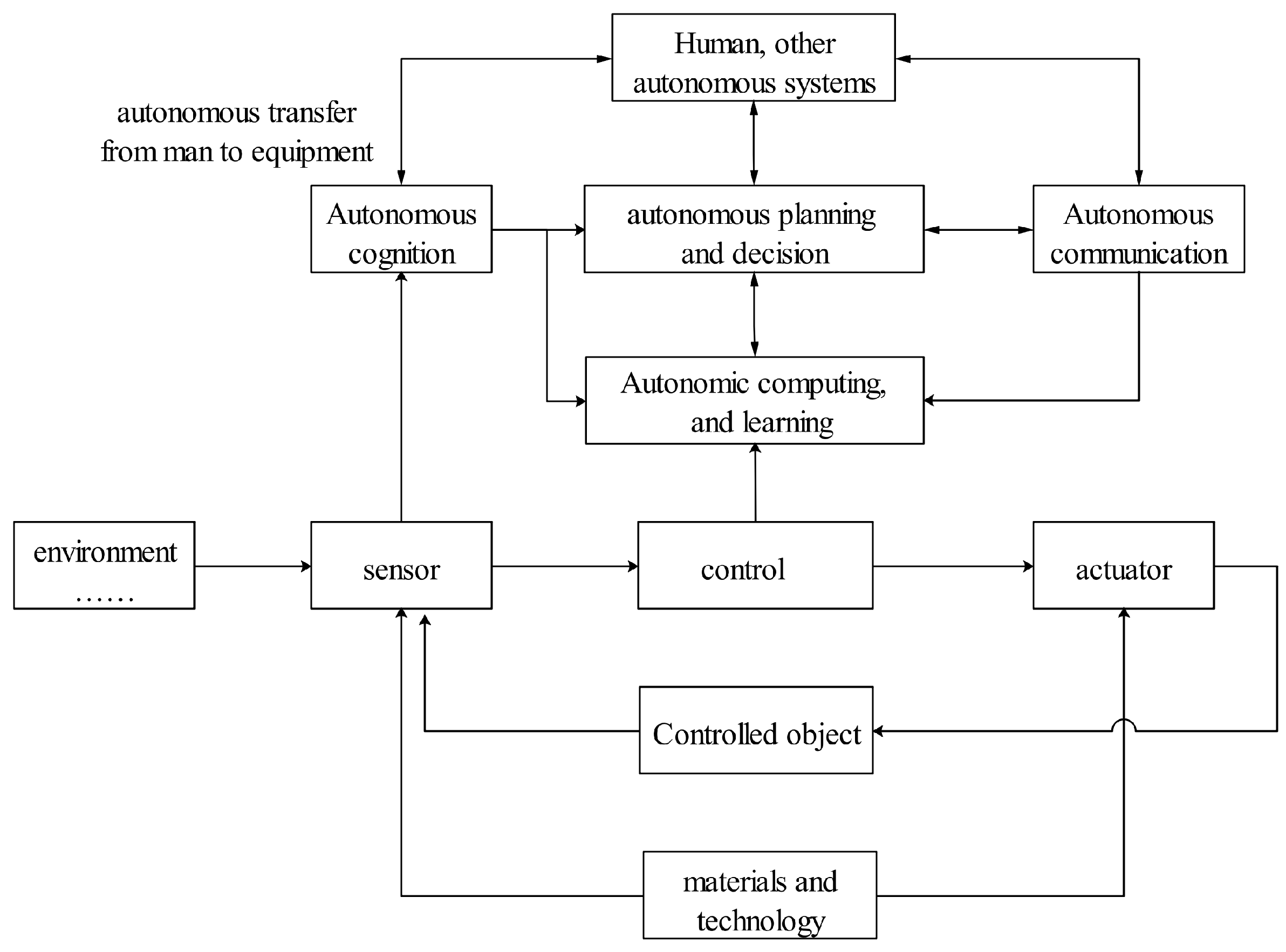

The classic semi-autonomous system [55] is a closed-loop system composed of sensors, decision-makers, controllers, actuators, and controlled objects under specific environmental conditions. A large number of intelligent algorithms are used in the system and combined with emerging capabilities such as perception, planning, decision-making, computing, and communication to greatly enhance and expand its autonomous ability [56]. The semi-autonomous system can also interact with systems of different autonomy levels, including human beings, and even realize the transfer of intelligence from human to equipment.
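The closed loop of sensor, decision-maker, controller, actuator, and controlled object can be sketched with a one-dimensional toy plant. Everything concrete here is an assumption for illustration: the ideal sensor, the proportional controller with gain 0.5, the actuator limit, and the rule that a human setpoint, when present, takes precedence over the autonomous one.

```python
from typing import Optional


def sensor(true_position: float) -> float:
    """Ideal measurement of the controlled object's position."""
    return true_position


def decision_maker(autonomous_target: float,
                   human_target: Optional[float]) -> float:
    """Semi-autonomy: a human supervisor can override the system's own target."""
    return human_target if human_target is not None else autonomous_target


def controller(measured: float, setpoint: float, gain: float = 0.5) -> float:
    """Proportional control law."""
    return gain * (setpoint - measured)


def actuator(command: float, limit: float = 1.0) -> float:
    """Saturate the command to what the hardware can deliver per step."""
    return max(-limit, min(limit, command))


def run_loop(position: float, autonomous_target: float,
             human_target: Optional[float] = None, steps: int = 20) -> float:
    """Close the loop: sense, decide, control, actuate, update the plant."""
    for _ in range(steps):
        measured = sensor(position)
        setpoint = decision_maker(autonomous_target, human_target)
        position += actuator(controller(measured, setpoint))
    return position
```

Without a human input the loop settles near the autonomous target; with `human_target=1.0` it settles near 1.0 instead, which is one simple way to picture human participation in decision-making within an otherwise automatic loop.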

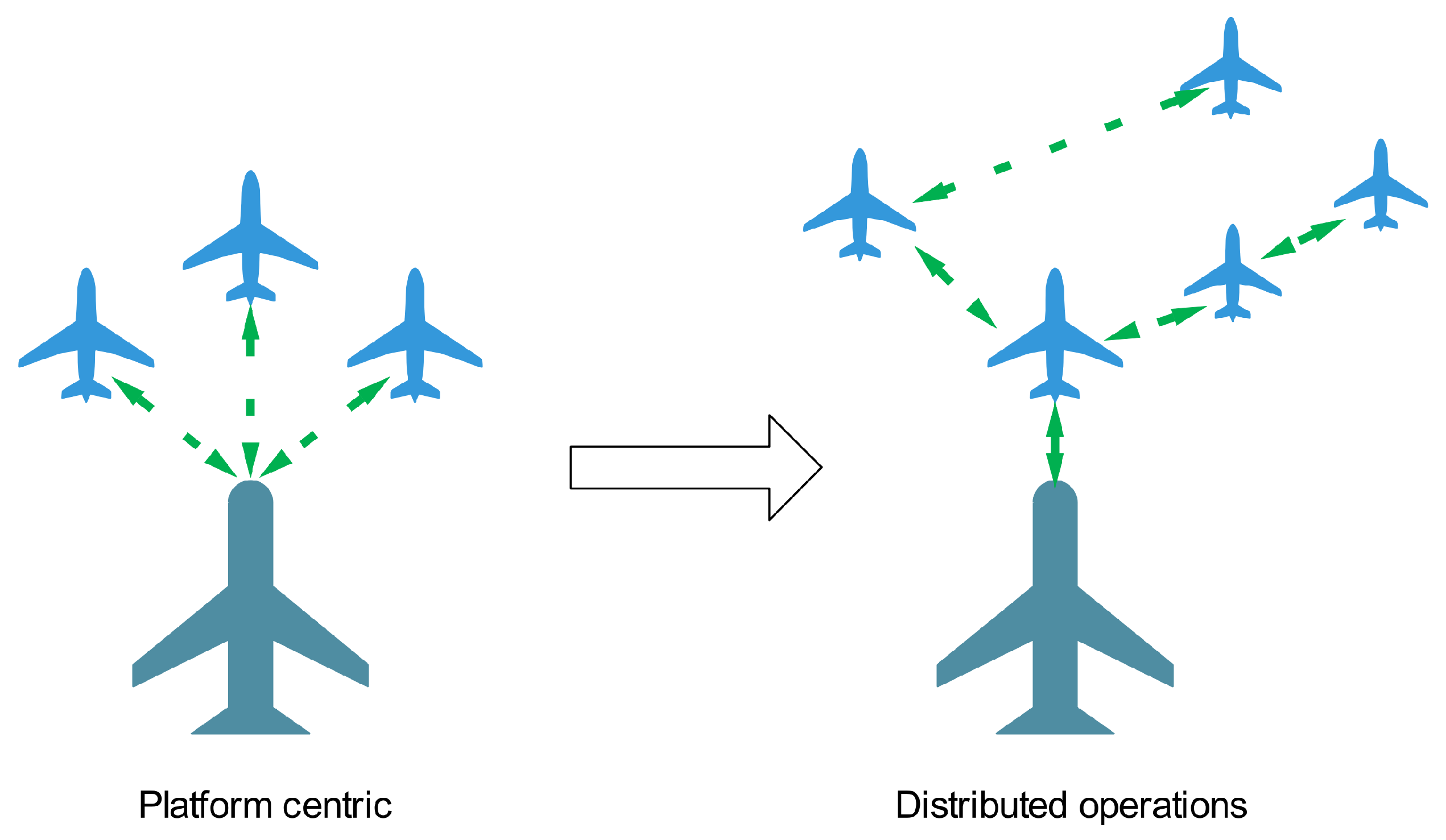

At present, the military field [57] is where unmanned systems are most widely used, and it is also the field closest to the application of autonomous systems. DARPA, together with ABC and Hopkins University, studied the common operating system (COS) of the joint unmanned air combat system [58]. Different types of UAVs (Unmanned Aerial Vehicles), UAV ground control stations, and communication satellites are integrated into an organic whole that can effectively share information among members and carry out planning and decision-making at both the system level and the platform level, resulting in networked operation across multiple platforms. The system framework is shown in Figure 8.

In August 2018, The U.S. Department of Defense released the unmanned system integrated roadmap 2017–2042, which clarifies the importance of the unmanned autonomous system for future system operations. Well-known research institutions have developed a large number of UAV systems, including Roland University of Hungary, U.S. naval graduate school, DARPA (Defense Advanced Research Projects Agency) [

59], etc. In 2014, the Vicsek team of Roland University in Hungary [

60] had realized the autonomous system flight of 10 quadrotors in an outdoor environment [

61], which was reported by nature [

62]. The most important feature of the architecture is that it uses the birds’ behavior mechanism in the task decision-making level and realizes autonomous decision-making through information interaction with neighboring individuals. Timothy Chung’s team from the U.S. Navy Postgraduate School realized the swarm flight of 50 fixed-wing aircraft in 2015 [

63], using a wireless ad hoc network for information interaction. In the architecture, centralized control is adopted, and a ground control base station is used to control all UAVs simultaneously. In the task execution stage, the control power of the UAV is gradually transferred to the UAV itself so that the UAV can fly and make decisions independently [

64]. DARPA “Gremlins” project [

65] is committed to the development of an efficient and low-cost distributed air combat concept. A large number of small UAVs are launched and recovered by large transport aircraft in the target airspace to carry out reconnaissance and attack missions. In 2015, DARPA released the project “Cooperative Operation in Denied Environment” [

66] and built a modular system software architecture. Compared with existing technologies, the software architecture can cope with bandwidth limitations and communication interruptions and is compatible with existing technical standards, which improves the autonomy and cooperative operation capability of the UAV system. DARPA’s “System of Systems Integration Technology and Experimentation” project [

67] designed an open distributed system architecture (

Figure 9) to perform relevant UAV system tasks. The system provides a new design idea of interchangeable components and platforms, so that it can be upgraded quickly by replacing modular components. DARPA implemented the “Offensive Swarm-Enabled Tactics” (OFFSET) project in 2016. The purpose of the project is to develop a rapid generation tool for swarm tactics, evaluate the effectiveness of the generated tactics, and finally push the optimal tactics to the UAV system [

68]. To achieve these objectives, developers built a swarm tactics development ecosystem supporting an open system architecture. The system has an advanced human-swarm interface and a real-time networked virtual environment, which can assist participants in designing swarm tactics that combine collaborative behaviors and swarming algorithms [

69]. Based on the research background of UAV system wide-area search and strike integration mission, the U.S. Air Force Research Laboratory and Air Force Institute of Technology proposed a hierarchical and distributed architecture of UAV system [

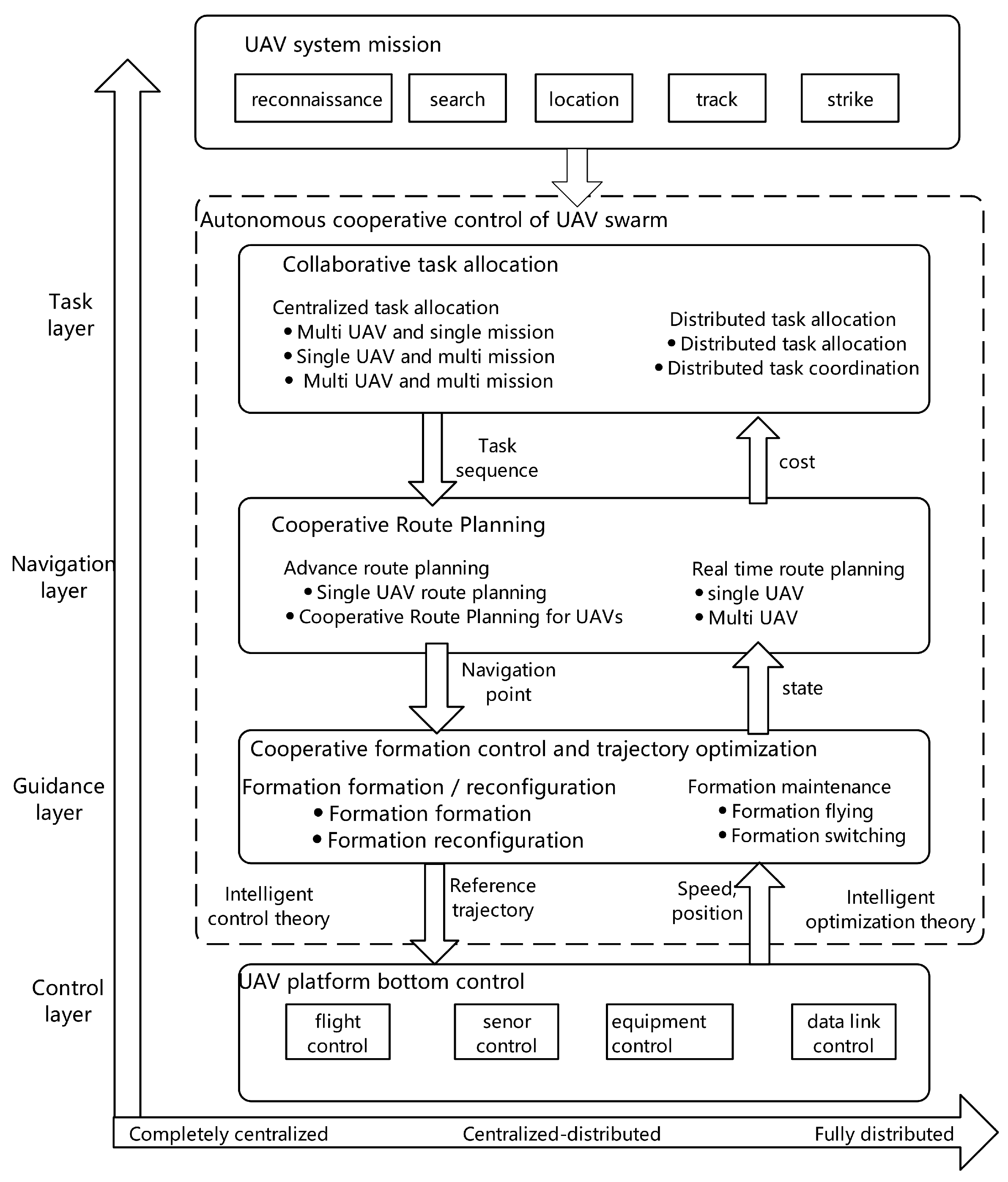

70], which decomposed the problem into four levels: task allocation, task coordination, path planning, and path optimization, significantly reducing the online computing load and realizing the multi-level Coordination and optimization [

71].
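The four-level decomposition described above (task allocation, task coordination, path planning, path optimization) can be illustrated with a minimal Python sketch. It is not the cited architecture: the function names, the greedy nearest-UAV allocation, the nearest-first ordering, and the trivial duplicate-removal "optimization" are all assumptions chosen only to show how each level consumes the output of the level above it.

```python
from math import dist

def allocate(uavs, targets):
    """Level 1, task allocation: assign each target to the nearest UAV (greedy assumption)."""
    plan = {name: [] for name in uavs}
    for t in targets:
        nearest = min(uavs, key=lambda name: dist(uavs[name], t))
        plan[nearest].append(t)
    return plan

def coordinate(start, tasks):
    """Level 2, task coordination: order one UAV's tasks nearest-first."""
    ordered, pos, remaining = [], start, list(tasks)
    while remaining:
        nxt = min(remaining, key=lambda t: dist(pos, t))
        remaining.remove(nxt)
        ordered.append(nxt)
        pos = nxt
    return ordered

def plan_path(start, ordered):
    """Level 3, path planning: straight legs from the start through the ordered tasks."""
    return [start, *ordered]

def optimize_path(path):
    """Level 4, path optimization: placeholder that drops consecutive duplicate waypoints."""
    out = [path[0]]
    for p in path[1:]:
        if p != out[-1]:
            out.append(p)
    return out

def solve(uavs, targets):
    """Run the four levels in sequence; each level solves a smaller sub-problem."""
    routes = {}
    for name, tasks in allocate(uavs, targets).items():
        ordered = coordinate(uavs[name], tasks)
        routes[name] = optimize_path(plan_path(uavs[name], ordered))
    return routes

# Hypothetical example data: two UAVs and three targets on a plane.
uavs = {"uav1": (0.0, 0.0), "uav2": (10.0, 0.0)}
targets = [(1.0, 1.0), (9.0, 1.0), (2.0, 2.0)]
routes = solve(uavs, targets)
```

Because each level only sees the result of the previous one, the per-UAV ordering and path steps work on a few points at a time, which is the sense in which a hierarchical decomposition reduces the online computing load.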

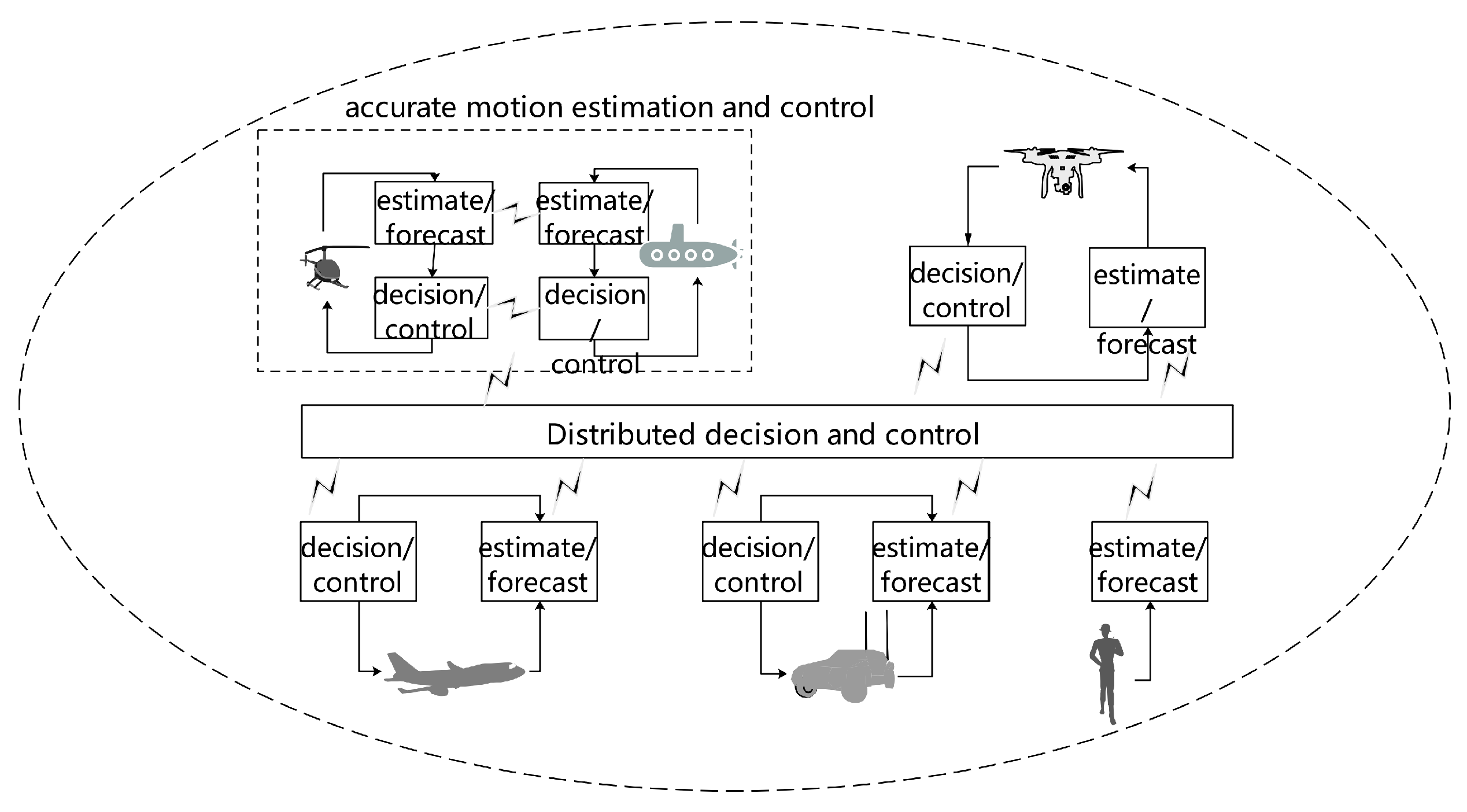

The EC-SAFEMOBIL (Estimation and Control for Safe Wireless High Mobility Cooperative Industrial Systems) project [

72], funded by the European Commission’s ICT (Information and Communication Technologies) program, combines UAVs, ground vehicles, and personnel into a collaborative team. A distributed autonomous decision-making framework for collaborative monitoring and tracking was designed and verified in disaster management and civil safety scenarios [

73]. The system framework is shown in

Figure 10.

Aerospace is an area where autonomous control systems are widely used [

74]. In Reference [

75], a system framework based on information flow and knowledge flow is proposed to address the strict real-time requirements, high reliability demands, and uncertainty of space scenarios. The information flow is transformed into the knowledge flow, and the planning, decision-making, and control modules control the aircraft using the knowledge flow as input. In addition, the human-machine interface is connected with the dynamic knowledge base to realize the dynamic update and control of knowledge.

In the field of engineering construction and production, for an autonomous platform that explores and navigates tunnel networks, Tlig et al. [

76] proposed a system with two modes: an exploration/investigation mode and an autonomous navigation mode. In exploration/investigation mode, the remote management platform moves through the tunnel network, collects data, and links it to the 2D/3D map of the environment. In navigation mode, the supervisor assigns high-level tasks on the previously acquired survey map. Then, the motion planner transforms each task into a set of continuous navigation actions. The tracking subsystem provides real-time situation awareness and control capability. Mathilde M proposed a high-level autonomous system named Has learned [

77], which includes multi-strategy learning functions integrated through the four stages of an incremental learning process. Like a human operator, the system learns reactive operational rules to recover from and eliminate accidents or abnormal situations, and it learns predictive rules that are applied to nuclear power plant monitoring [

78].

Chinese institutions, such as Beijing University of Aeronautics and Astronautics [

79], National University of Defense Technology [

80], and China Electronics Science and Technology Research Institute [

81], have carried out many studies on UAV swarm system design, collaborative perception, swarm control, etc. According to the mission requirements of aerial refueling, the team of Beijing University of Aeronautics and Astronautics designed a multi-aircraft docking system based on bionic visual perception and verified its effectiveness in field experiments. According to the characteristics of the UAV swarm environment and tasks, the team of the Unmanned System Research Institute of the National University of Defense Technology proposed an adaptive distributed architecture and carried out swarm flight tests with 21 UAVs in 2017. In June of the same year, the China Academy of Electronic Sciences, Tsinghua University, and other institutions jointly realized the swarm flight of 119 fixed-wing UAVs, demonstrating intensive takeoff, air assembly, adaptive grouping, and swarm operation.

To sum up, the importance of improving autonomous capability has been widely recognized in various fields, such as the military, engineering construction and production [

82], aerospace [

83], and so on. At the same time, there is no fixed framework for constructing an autonomous system; the framework should be improved step by step according to the functional requirements, the autonomous capability of the objects in the system, and the current technology level. An autonomous system in use also faces the problems of system situation awareness [

84], system reliability [

85], man-machine cooperation [

86], and so on. To better explain the process of autonomous system construction, this article summarizes autonomous systems in different fields using the construction of UAV cluster systems as the example. Two typical architectures of UAVs are shown in

Figure 11 and

Figure 12.

In recent years, cluster system architecture has developed vigorously and has produced fruitful research results. At present, the most commonly used research approaches to system architecture are top-down and bottom-up. Top-down research solves the problem hierarchically and progressively, decomposing cluster coordination into task allocation, trajectory planning, and formation control. Each level of sub-problem is solved separately, which effectively reduces the difficulty of the overall problem, and this approach has become the mainstream method. Bottom-up research is mainly based on self-organization, emphasizing the perception, judgment, decision-making, and dynamic response of individuals to the environment, as well as rule-based behavioral coordination between individuals. The characteristics of the two structures are summarized in

Table 4.
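As an illustration of the bottom-up idea, in which group behavior emerges from rule-based coordination between neighboring individuals, the following Python sketch applies three commonly used flocking rules (cohesion, alignment, separation) using only local neighbor information. The function name `flock_step`, the weights, and the radii are illustrative assumptions and are not taken from any of the cited systems.

```python
from math import dist

def flock_step(positions, velocities, radius=5.0, min_gap=1.0,
               w_cohesion=0.05, w_align=0.1, w_separate=0.2):
    """One synchronous update in 2D; each agent only uses neighbors within `radius`."""
    new_velocities = []
    for i, (p, v) in enumerate(zip(positions, velocities)):
        nbrs = [j for j, q in enumerate(positions)
                if j != i and dist(p, q) <= radius]
        vx, vy = v
        if nbrs:
            n = len(nbrs)
            # Cohesion: steer toward the centroid of the neighbors.
            cx = sum(positions[j][0] for j in nbrs) / n
            cy = sum(positions[j][1] for j in nbrs) / n
            # Alignment: steer toward the neighbors' mean velocity.
            ax = sum(velocities[j][0] for j in nbrs) / n
            ay = sum(velocities[j][1] for j in nbrs) / n
            vx += w_cohesion * (cx - p[0]) + w_align * (ax - v[0])
            vy += w_cohesion * (cy - p[1]) + w_align * (ay - v[1])
            # Separation: push away from neighbors closer than `min_gap`.
            for j in nbrs:
                if dist(p, positions[j]) < min_gap:
                    vx += w_separate * (p[0] - positions[j][0])
                    vy += w_separate * (p[1] - positions[j][1])
        new_velocities.append((vx, vy))
    new_positions = [(p[0] + v[0], p[1] + v[1])
                     for p, v in zip(positions, new_velocities)]
    return new_positions, new_velocities

# Hypothetical example: two nearby agents and one isolated agent.
positions = [(0.0, 0.0), (4.0, 0.0), (100.0, 100.0)]
velocities = [(0.0, 0.0), (0.0, 0.0), (1.0, 0.0)]
new_positions, new_velocities = flock_step(positions, velocities)
```

No agent holds a global plan here: the two nearby agents drift toward each other purely through the local rules, while the isolated agent keeps its own velocity. A top-down design would instead compute allocation, trajectories, and formation at separate levels.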

3.4. Recommendations for Building Autonomous System Architecture

In our view, when we try to build the typical inclusive architecture of the autonomous system, robotics, cybernetics, cognitive science, software, and hardware should be comprehensively considered. The typical architecture describes the autonomous behavior of the system from radically different viewpoints. Building a suitable and reasonable architecture has significant contributions to the development of proficient and trustworthy autonomous systems and provides the foundation for more rapid and efficient development (via cross-appropriation of validated concepts and modules) of conceptually well-founded future autonomous systems. We give some recommendations for building autonomous system architecture through the classification and summary of the above automation system and semi-autonomous system architecture as listed below.

Recommendation a: Extensibility. Build a common autonomous architecture that can subsume the multiple frameworks currently used by different participants in the system. On the one hand, the architecture should provide “end-to-end” functionality, including at least a sensory ability to identify and recognize the key information of its environment; a cognitive ability to make assessments, plans, and decisions to achieve desired goals; and a motor ability to act on its environment if called upon. On the other hand, system functions in the architecture should be structured so that they can be expanded flexibly and should support human-teammate interaction as needed. Moreover, the architecture can be deliberately engineering-focused and tied to applied scenarios. Alternatively, it could take a looser, nonfunctional approach provided by, for example, rule-based systems [

87], artificial neural networks [

88], and so on. Whatever the form, common architectures should deal with the task assigned, the relationships between different agents, and the logical and cognitive approaches used. A key metric of an architecture’s utility will be its capability of bridging the conceptual and functional gaps across disparate agents working in the autonomous system.

Recommendation b: Evolvability. While developing one or more autonomous system architectures and defining the component functions underlying them, we should pursue enabling technologies that provide the needed functionality at the different autonomy levels. Those technologies include the basic perception, decision-making, and control functions and also enable effective human-computer interaction, learning, adaptation, and knowledge-base management. Because technology maturity and autonomy levels differ, common architectures should be developed in parallel for systems with different levels of autonomy, spanning basic research, exploratory development, and early prototyping. Through parallel development, the functionalities demanded by autonomous system architectures can be developed faster and more efficiently. To support the evolution of components and architectures over time, and to serve the entire system through common usage of proficient and validated components, specific technologies can be used, such as artificial intelligence [

89], digital twins [

90], and so on.

Recommendation c: Collaborability. According to the current development trend, an autonomous system will not be a system without people; people will play an essential supervisory role in the system. Therefore, we should pay great attention to the design of human functions in the architecture. To design an architecture that better supports human-autonomous system collaboration and coordination, it is first important to understand the respective capabilities and limitations of each. The constraints of each autonomous system component also need to be understood. A taxonomy designed to guide human and autonomous system coordination can help evaluate the relevant tradeoffs in terms of agility (ability to respond effectively to new inputs within a short time), directability (ability of an external party to influence the operation of an autonomous system), observability (level to which the actual state of the autonomous system can be determined), and transparency (ability to provide information about why and how the autonomous system is in its current state) of the autonomous system [

91]. An alternative task-centered design is recommended in the common architecture design that focuses on the application’s mission or goal rather than distinct system user properties. Application-focused use cases, scenario vignettes, and task analyses can help guide architecture building that provides the needed mechanisms for human-autonomous system teams to work together and adapt to meet the dynamic requirements of the future system.

4. Architecture of Autonomous Waterborne Transportation System

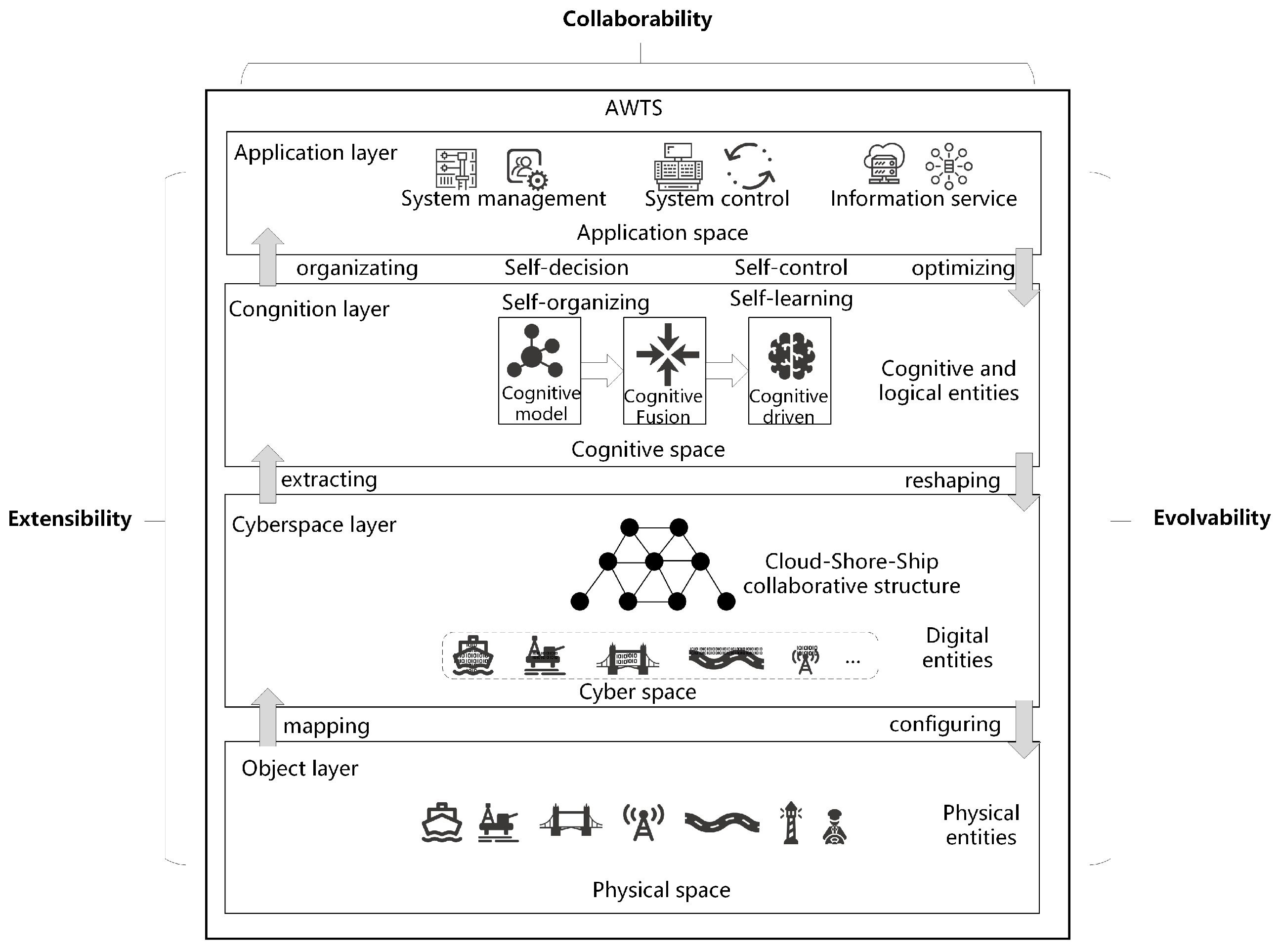

This article takes the architecture of the autonomous waterborne transportation system (AWTS) as an example to illustrate the process of building a system architecture.

Waterborne transportation is a strategic and fundamental industry in the world economy. It is also the primary transportation mode for international commodities and is therefore of great significance to global economic development. As the elements of the transportation system become richer, especially with the emergence of intelligent transportation tools and assisting facilities and equipment, the requirements for the organization and management of waterway transportation, for the perception and analysis of the traffic environment, and for self-learning and self-regulation abilities are rising. In existing systems, applications are isolated from the underlying data, so they are difficult to integrate and coordinate. The existing organization cannot fully meet these demands, so it is necessary to redesign the architecture to enhance the autonomous ability of the transportation system.

An autonomous waterborne transportation system is a waterborne transportation service system composed of the physical entities, virtual entities, and logical entities involved in waterway transportation, arranged in certain organizational forms. The system has the capabilities of self-perception, self-organization, self-determination, self-control, self-adaptation, and self-evolution to varying degrees. In AWTS, physical, virtual, and logical entities stand in a pedigree-derived relationship to one another. Taking the ship as an example, the ship in the transportation system is the physical entity; the corresponding virtual entity is the digital ship constructed by digital technology, together with the information transmitted in it; and the logical entities are the different cognitive roles of the ship in specific traffic behaviors or traffic scenarios.

According to the suggestions given in

Section 3, the construction of the architecture starts from the goal. Once the goal is clear, the specific functions under the goal can be determined. Only when the goal and functional requirements of the whole system are precise can the other content of the system be determined. The architecture of AWTS is defined as four layers: object layer, cyberspace layer, cognition layer, and application layer. The specific framework is shown in

Figure 13.

The object layer describes the physical entities in AWTS, including people, vessels, navigation facilities, the external environment, and other traffic participants. It is the set of physical entities involved in AWTS and is the carrier through which the whole system realizes its functions. The separate entities of the object layer transmit information, receive instructions, and interact with one another through the cyberspace layer.

The cyberspace layer is the abstraction of information in AWTS. It includes some physical entities (such as various sensors, communication facilities, and other hardware) and virtual entities (observable and descriptive information flows) that realize the mapping from physical space to information space in AWTS. The cyberspace layer realizes the interaction between various objects, and it is also the bridge for transmitting decision-making and control instructions. To ensure the stability of its communication network, the cyberspace layer can optimize the network architecture of the information space according to information from the cognition layer.

The cognition layer realizes the mapping from information space to cognition space in AWTS. According to traffic needs, participants obtain the required data and information from the cyberspace layer and organize them in the cognition layer to form knowledge supporting traffic perception, decision-making, and system control. The cognition layer realizes the logical expression of virtual entities in cognition space, turns virtual entities into logical entities with particular cognitive abilities in AWTS, and establishes cognitive relations between different logical entities. The cognition layer is the key to realizing autonomy in AWTS. It takes the information transferred in the cyberspace layer as input, constructs the knowledge base through a learning mechanism, evolves the knowledge base through a self-renewal mechanism, and finally outputs knowledge to support autonomous decision-making and control in AWTS.

The application layer extracts the constraints of the system from the cognition layer, decomposes and plans the tasks of the system and of physical objects according to the requested task, and realizes traffic optimization by controlling the behavior of the objects. The application layer is also the interactive interface between AWTS and external systems (logistics, energy, regulatory systems, etc.). In this layer, the system responds to the needs of external systems and provides services, including transportation services and transportation security services. The application layer realizes system control and system functions using the knowledge provided by the cognition layer. In AWTS, the object layer, the cyberspace layer, and the cognition layer are the basis for the realization of the system functions, and the application layer is the decision-making and interface center through which the system realizes its various functions.
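To make the division of responsibilities among the four layers more concrete, the following Python sketch passes one round of data through them. Everything in it is an illustrative assumption rather than part of AWTS itself: the class and method names, the single vessel attribute (speed in knots), and the one speed-limit rule standing in for the knowledge base.

```python
from dataclasses import dataclass, field

@dataclass
class ObjectLayer:
    """Physical entities; here only vessels, each with a speed in knots."""
    vessels: dict  # vessel id -> speed in knots

    def sense(self):
        return [{"id": vid, "speed_kn": s} for vid, s in self.vessels.items()]

    def actuate(self, commands):
        for vid, speed in commands.items():
            self.vessels[vid] = speed

@dataclass
class CyberspaceLayer:
    """Maps physical space to information space and relays instructions."""
    records: dict = field(default_factory=dict)

    def ingest(self, observations):
        for obs in observations:
            self.records[obs["id"]] = obs
        return self.records

    def dispatch(self, commands, objects):
        objects.actuate(commands)

@dataclass
class CognitionLayer:
    """Turns information into knowledge; a single rule stands in for the knowledge base."""
    speed_limit_kn: float = 12.0

    def assess(self, records):
        return {vid: rec["speed_kn"] > self.speed_limit_kn
                for vid, rec in records.items()}

@dataclass
class ApplicationLayer:
    """Decides control actions from the knowledge supplied by the cognition layer."""
    def decide(self, knowledge, limit):
        return {vid: limit for vid, over in knowledge.items() if over}

def run_cycle(objects, cyber, cognition, app):
    records = cyber.ingest(objects.sense())       # object -> cyberspace
    knowledge = cognition.assess(records)         # cyberspace -> cognition
    commands = app.decide(knowledge, cognition.speed_limit_kn)
    cyber.dispatch(commands, objects)             # instructions travel back via cyberspace
    return commands

# Hypothetical example: vessel "A" exceeds the assumed limit, vessel "B" does not.
objects = ObjectLayer({"A": 15.0, "B": 8.0})
commands = run_cycle(objects, CyberspaceLayer(), CognitionLayer(), ApplicationLayer())
```

In this toy cycle the application layer never touches a vessel directly; commands reach the object layer only through the cyberspace layer, mirroring its role as the bridge for control instructions.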

The extensibility of the AWTS is embodied in its ability to provide different functional services to ships and transportation facilities of different autonomy levels. The AWTS can provide a series of “end-to-end” functions covering perception, cognition, decision-making, and control. With the cloud-shore-ship organizational structure, the system can effectively perceive the environment in which the physical objects of waterborne transportation are located; recognize and organize the knowledge in different domains according to different application requirements; and make decisions and take actions based on the results of system cognition. The cognition layer contains not only machine-perceived knowledge but also knowledge from the system decision-makers. The corresponding cognitive model can effectively support the interaction between humans and machines in dealing with structured and unstructured knowledge. By digitizing and networking all physical objects in the system and organizing knowledge according to specific tasks in the application space, the functional gap between physical entities of different autonomy levels in the transportation system can be effectively bridged.

The evolvability of the AWTS is reflected in the system’s self-learning and self-evolving capabilities. The system perceives the feedback effects of decisions and actions in real time so that the knowledge organization of cognitive and logical entities, the network organization of digital entities, and the configuration of physical entities can be optimized and reconstructed. The cognitive space, cyberspace, and physical space are reorganized according to the functional requirements of the application space. In addition, the AWTS should include physical entities of different levels of autonomy. In the architecture designed in this article, such entities can quickly be mapped from physical space to digital and information space through the designed universal digital entity, so that all traffic participants in the system are digitized and networked through digital twin technology. This is conducive to the organization and expression of domain knowledge according to the needs of the application space. The resulting organization-decision-action-feedback-optimization cycle supports the upgrade and evolution of the entire system and improves its operating efficiency.

The collaborability of the AWTS is embodied in the collaboration between humans and autonomous systems and the collaboration among traffic participants of different autonomy levels. In the specific implementation process, the system application is the center, and the resources, data and information, and application and management of the entire system are processed collaboratively. System resources are distributed across the cognitive space, cyberspace, and physical space, and their coordinated deployment is completed through extraction, organization, and mapping. The operation of the entire system can be regarded as information generation, information processing, and information feedback. Information can be self-perceived by physical objects and can also be input by external systems through the application space. It includes not only perception and intermediate data but also the cognitive information of system decision-makers. This information is uniformly organized and processed in the cognitive space to support the functional applications of the application space. System application and management collaboration means that, for every application and management function in the system, system decision-makers and autonomous systems jointly coordinate system resources and system information through decision-making and execution, according to the tasks and goals at hand.

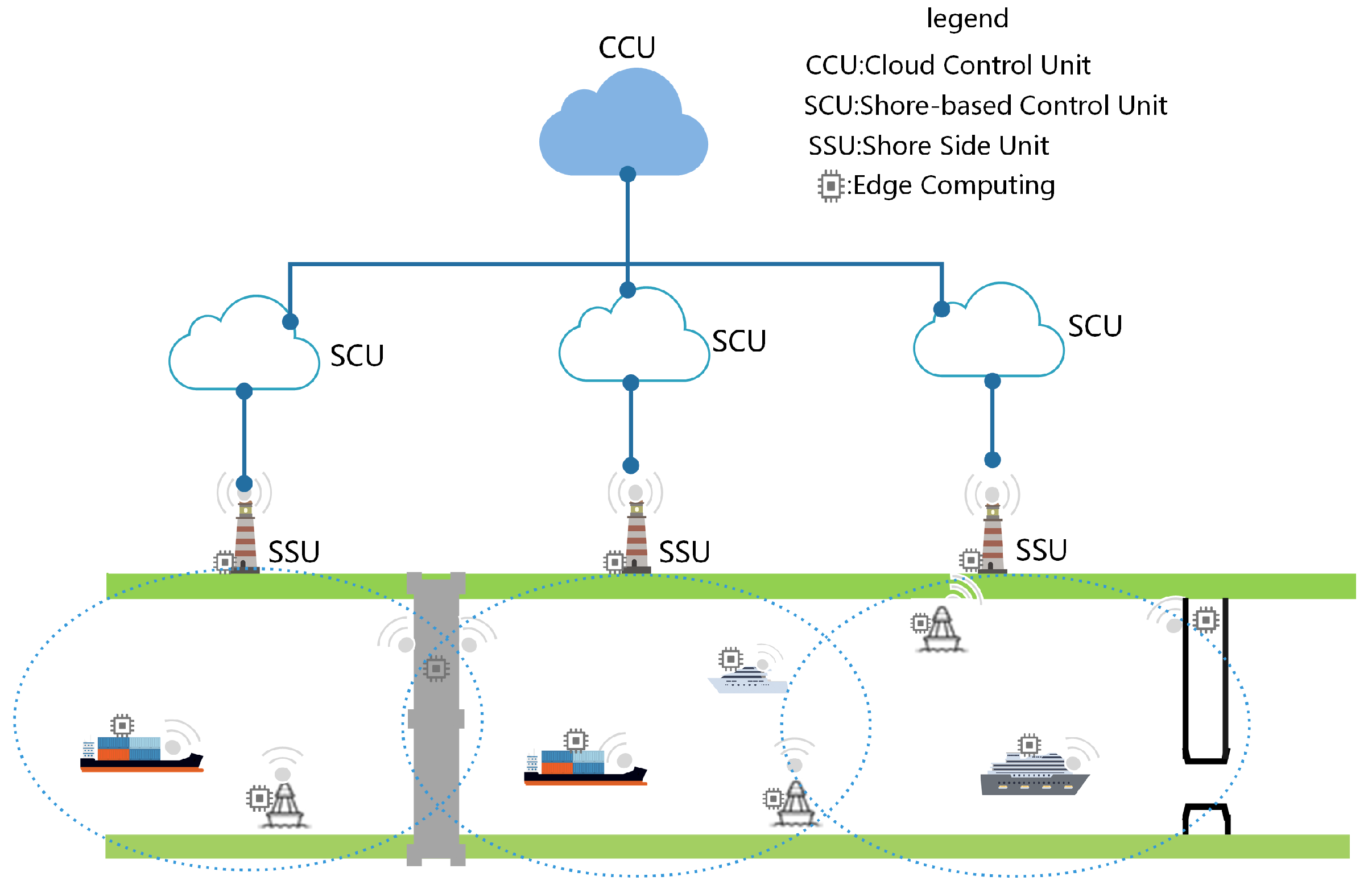

Specifically, AWTS provides a new way of organizing and managing transportation. The channel is divided into multiple segment units according to geographical segments to support extensive ship-ship and ship-shore interaction. Each segment is under the unified traffic management of an intelligent terminal, a shore-based intelligent management and control facility with the functions of perception, communication, calculation, decision control, and information service. It can provide navigation and environment information for ships and other transportation facilities in the segment. At the same time, it uploads the ships’ perception information and status information to the cloud for the system to make decisions. The cloud carries out overall decision-making and control of the system and exchanges information with external systems. The organizational structure of AWTS is shown in

Figure 14.

Take an autonomously navigating ship sailing from port A to port B as an example. The CCU obtains navigation tasks through information interaction with external logistics systems. After obtaining the task, the CCU plans and decomposes it and then distributes the decomposed tasks to the intelligent terminal of each SSU. After the task distribution is completed, the intelligent terminal of port A issues navigation instructions to the ship. At the same time, the ship joins the segment’s communication network, and its ship-shore (Vehicle-to-Infrastructure, V2I) and ship-ship (Vehicle-to-Vehicle, V2V) interaction information, together with its perception information, is uploaded to the intelligent terminal. The terminal is responsible for managing all in-transit information in the segment under its jurisdiction, forms a local dynamic data link (LDD) for the segment, and pushes the processed data to the CCU and the ship as required. When sailing into the next segment, the ship exits the network of the previous segment and joins the communication network of the new intelligent terminal, which incorporates the ship into its local network and manages it. In this way, the ship finally arrives at port B and completes the sailing task.
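The segment-by-segment handover in this example can be sketched in a few lines of Python. The sketch is only a toy model under stated assumptions: `IntelligentTerminal`, `decompose`, and `sail` are hypothetical names, the CCU is reduced to a function that splits a route into one sub-task per segment, and the LDD is reduced to a list of uploaded reports.

```python
class IntelligentTerminal:
    """Shore-based terminal managing the ships currently in one segment."""
    def __init__(self, segment):
        self.segment = segment
        self.ships = set()   # ships registered in this segment's local network
        self.ldd = []        # stand-in for the local dynamic data of the segment

    def join(self, ship):
        self.ships.add(ship)

    def leave(self, ship):
        self.ships.discard(ship)

    def upload(self, ship, report):
        # Only ships in the local network may upload interaction/perception data.
        if ship not in self.ships:
            raise ValueError(f"{ship} is not registered in segment {self.segment}")
        self.ldd.append((ship, report))

def decompose(route):
    """CCU step (assumed): one sub-task per segment along the route."""
    return [{"segment": seg, "order": i} for i, seg in enumerate(route)]

def sail(ship, route, terminals):
    """Move the ship through the segments, handing over between terminals."""
    visited, previous = [], None
    for task in decompose(route):
        terminal = terminals[task["segment"]]
        if previous is not None:
            previous.leave(ship)          # exit the previous segment's network
        terminal.join(ship)               # join the new segment's network
        terminal.upload(ship, f"status in {terminal.segment}")
        visited.append(terminal.segment)
        previous = terminal
    return visited

# Hypothetical route with one intermediate segment between port A and port B.
route = ["port_A", "segment_1", "port_B"]
terminals = {name: IntelligentTerminal(name) for name in route}
visited = sail("ship_1", route, terminals)
```

At any moment the ship belongs to exactly one terminal's network, so each terminal only has to manage the traffic inside its own segment while the cloud keeps the overall task view.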

5. Conclusions and Prospect

As the level of autonomy improves, the uncertainty of the actual environment must be addressed first in order to achieve different functions. On the other hand, because the hardware and software components in the system are becoming more complex, and because the system may encounter complex situations that were not considered in advance, the likelihood of component failure or even overall system failure will increase. Therefore, the operation of the autonomous system still needs human participation in high-level decision-making, and the autonomous system can be realized through human-computer integration. Based on the above characteristics, we propose that more attention should be paid to extensibility, evolvability, and collaborability when constructing the autonomous system architecture.

In the actual construction of the autonomous system, different levels of autonomous systems should be dynamically selected according to the level of system performance, task requirements, and system reliability level. In most cases, the highest control of the autonomous system is still mastered by humans. Full autonomy is the ultimate goal in the field of system control. However, autonomous systems still need to cooperate with human operators to complete system tasks. The role of the human in the system is still irreplaceable for a long time in the future. Therefore, this manuscript proposes the following key technical issues and challenges in the construction of autonomous system architecture:

5.1. Ubiquitous Cognitive Mechanism Design Based on Extensibility

In an autonomous system, it is necessary to perform extensive cognitive modeling of tasks and dynamic environments in real time and to effectively organize and express information from different knowledge domains so that it can be understood by both humans and machines. As the complexity of an autonomous system grows, its stability is relatively weakened and its vulnerability increases. Therefore, most or even all autonomous systems will need humans and the autonomous system to complete tasks together. The autonomous system can improve the data processing speed and keep running for a long time. The essence of interactive cognition is negotiation and learning. With the help of an autonomous system, personnel conduct command and control and acquire situation information, while the machine, under human decision, can solve unknown problems not considered in system design. In the future, autonomous systems should be able to understand the situation. Such a system should have a computer model that describes the current situation, integrate multi-source sensor information for situation understanding, planning, and decision-making, and classify situations so that each has corresponding cognitive matching, planning, and action. It should have the cognitive ability to reorder dynamic targets and coordinate multiple targets. By constructing a multi-model cognitive library, it should be able to reason about unmatched environmental patterns.

5.2. Self-Learning and Evolution Mechanism Design Based on Evolvability

With the continuous improvement of self-determination ability, the system’s demand for human intervention will gradually decline. The system will also be highly integrated with artificial intelligence technology and have the ability of self-learning. An autonomous system based on artificial intelligence learning algorithms can cope with more changes in environment, task, function, or hardware, which leads to better solutions under various task conditions and environments and results in a higher level of autonomy. In the future, the autonomous system should have the ability of high-level situation understanding and learning. The system should establish a suitable computer model for the current environment and tasks, independently match patterns, plans, and actions, and have the ability of active system learning, scenario optimization, and system model self-evolution. At the same time, the system needs to understand, learn, and reason in order to provide stable and reliable solutions for dynamic and complex scenarios. Because of the complexity of the autonomous system, a self-monitoring network should be considered in the system design to increase network flexibility and improve the perception ability of the autonomous system. Autonomous systems based on learning algorithms can take more contextual elements into account (such as system environment, specific tasks, and dynamic risks) to provide robust solutions for responding to multiple scenarios. For an autonomous system to self-organize and adapt to the environment, it must be able to understand, learn, and reason. Ways to realize this learning ability can be drawn from human cognitive science and biology.

5.3. Human-Machine Integration Mechanism Design Based on Collaborability

In future autonomous systems, the system and its users will each be able to judge how far to trust the other. Therefore, it is necessary to ensure that the cognitions of the system and of personnel are highly consistent, which helps to increase the system’s understanding ability. When designing an autonomous system, a reliable trust mechanism should be established, and the timing and degree of human intervention should be made clear. The trust mechanism should also be evaluated so that it can be adjusted dynamically according to the situation. In the design of human-machine collaboration architecture, special attention should be paid to the design of safety mechanisms in the communication network. A good safety design should be adopted to avoid completely uncontrollable situations. Given the complexity of autonomous systems, it is challenging to detect network viruses or malicious software in their software. Further improving the environmental awareness of autonomous systems and improving their self-monitoring will help cope with cybersecurity challenges. In addition, enhancing network resilience is extremely important and needs to be considered from the beginning of system design. Network resilience should be regarded as a fundamental principle for the creation and development of autonomous systems.