1. Introduction

Marine robotics is an exciting yet complex field in which to work. Due to the harsh environment, experimentation involves more complex logistics than in other robotics fields. This includes not only the dependency on weather conditions, but also transportation costs, robot deployment at sea, the need for a support vessel, and so on. These factors create a barrier for many high-potential research groups that may not have the resources or the ability to go out and test their robots at sea. This barrier is especially high for student teams and for new players in the marine robotics domain. At the same time, several world-class institutions do have the necessary equipment and research infrastructure, such as underwater sensor networks, Autonomous Surface Vessels (ASVs), Autonomous Underwater Vehicles (AUVs), research vessels, and so on. These are often underused and can, therefore, be shared with partners that have difficulty accessing this type of infrastructure, allowing them to fully realize their potential. Sharing can also attract new research teams that may not have all the skills necessary to develop, deploy, and operate marine robots (e.g., mechanical construction). Moreover, by sharing infrastructure, the community grows, new partnerships are established or reinforced, and ideas are cross-fertilized, all of which advances the state of the art.

Several projects and institutions around the world have, in different ways, shared infrastructure or provided access or funding to support other research or student teams over the years. This support has come, for instance, in the form of equipment loans, competitive access through competitions or open calls, and grants for joint experiments through open calls and cascade funding. Specific examples of infrastructure sharing or funding with third parties can be found in Section 2.

The remainder of the article is organized as follows: Section 2 presents the related research. Section 3 presents the infrastructure and methods used, while Section 4 presents the experimental results of different trials and applications. Section 5 discusses the results, including the issues and limitations found. Finally, Section 6 concludes the article.

2. Background

In Europe, a series of EU projects starting with Eurofleets, continuing with Eurofleets2 and, most recently, Eurofleets+ [1], has been a long-standing enabler of young scientists by providing opportunities on board research vessels and, to a lesser extent, by sharing AUVs and ROVs. At multi-domain robotics competitions such as ERL Emergency [2] or euRathlon [3], student teams with little or no experience in the maritime field were loaned commercial AUVs free of charge (following a call for applications). These free loans were instrumental in attracting new teams to the field and in helping teams that would be unable to develop their own vehicles. These teams were given access to state-of-the-art hardware and could spend more time focusing on software development instead of solving mechanical or electronic issues. More information on these teams and their achievements is available in [2]. More recently, an evolution of ERL Emergency, the Robotics for Asset Maintenance and Inspection (RAMI) [4] challenge, organized as part of the METRICS project [5], has extended competitions to the virtual domain. In this case, real data collected during previous competitions at sea are shared with teams participating in a virtual competition, which takes place in preparation for the physical competition. The purpose of sharing data sets collected in real-world conditions is not only to help teams from the field that may not have easy access to the sea for their preparation, but also to attract new teams from the artificial intelligence (AI) and machine learning field. Sharing this type of data can be just as valuable as sharing robots and infrastructure. Results of both the physical and the virtual challenge can be found in [4].

Similarly, in the U.S., the RobotX competition [6], funded by the Office of Naval Research (ONR), loans large Autonomous Surface Vehicles (ASVs) to student teams at no cost. This type of large vehicle is difficult for students not only to develop, but also to test, due to the cost and logistics. Thus, providing the vehicles at no cost is not enough to prepare teams, as they may be unable to deploy the ASVs until the competition. Considering this difficulty and wishing to help teams accomplish more tasks, RobotX has run a virtual competition [7] since 2019 (before the COVID-19 pandemic) as a way of preparing teams for the physical competition.

Other robotics challenges adopt a mixed approach of providing both hardware and funding to teams. This was the case, for instance, for the DARPA Robotics Challenge (DRC) [8]. In the DRC, teams were divided into different tracks with different levels of funding (some were not funded). Depending on their performance in a virtual challenge, teams could obtain more funding, and the best teams also received a standard robot to compete in the challenge.

Providing funding and standard platforms through different phases, starting with a simulation phase, was also the approach taken by the European Robotics Challenges project [9,10]. As in the DRC, some of the teams (a third) received a robot to perform in the second and third phases, as well as increasing levels of funding. While the second phase included benchmark tests, the third phase focused on real experiments at end-user sites.

As part of the EU’s Robotics for Inspection and Maintenance (RIMA) project [11], teams also receive funding to carry out real experiments in operational environments. The difference is that, in this case, funding is provided specifically for cross-border experiments involving European small- and medium-sized enterprises (SMEs) through open calls for proposals. The funding is provided for the development and testing of robotic systems. Moreover, RIMA aims to help SMEs get to market, and thus, it provides technical and economic viability validation as well as business and mentoring support services.

Another EU project that provided access to infrastructure and funding was the recently completed Marine Robotics Research Infrastructure Network (EUMarineRobots) [12,13] project. The main objective was to open up the most important national and regional research infrastructures (RIs) for marine robotics to all researchers to optimize their use. To achieve this goal, a series of Transnational Access (TNA) actions were carried out. Sixty-one proposals from 68 applicants from all over the world were granted access. Full details regarding the TNA statistics are available in [14]. Further information on all activities related to the project can be found on the website [13].

In the case of EUMarineRobots, third parties are granted both temporary access to infrastructure and travel funds to be able to execute the TNA actions. The TNA is also open to SMEs, but the focus of EUMarineRobots differs from that of RIMA: it offers opportunities for SMEs, universities, and research centers that may have difficulty accessing costly infrastructure such as research vessels.

Within the EUMarineRobots project, the Laboratory for Underwater Systems and Technologies (LABUST) [15] of the Faculty of Electrical Engineering and Computing, University of Zagreb, has offered its infrastructure to partners around the globe, building on previous experience in the framework of the EU project Road-, Air-, and Water-based Future Internet Experimentation (RAWFIE) [16]. In that project, LABUST ASVs [17] were made available for third-party experiments as part of the RAWFIE federated test beds.

As shown, different models exist for infrastructure sharing and support to teams. These models have co-existed for over a decade. However, the full potential for sharing infrastructures was not completely exploited until the COVID-19 pandemic introduced lockdowns and harsh restrictions. Indeed, with the COVID-19 pandemic, it became nearly impossible to have other research actors using infrastructure in situ, and many projects/institutions adapted to the new reality by offering remote access to their infrastructure.

In that context, remote access became more popular. However, the roots of remote trials in marine robotics were laid over two decades ago. For instance, in [18], Internet-based remote teleoperation of an ROV was achieved in Arctic and Antarctic environments (with a remote control center in Italy). This work is especially impressive given the bandwidths available in 2003 (and the use of satellite communications). Although subject to limitations and limited testing time, this pioneering work proved the possibility of remote teleoperation of ROVs. Moreover, it allowed the public and other scientific teams to test the ROV, a step in the direction of infrastructure sharing.

Over the years, other works have similarly piloted ROVs from a remote location. For instance, in the DexROV project [19], an onshore remote control center was used to reduce the burden and cost of offshore operation. Similar to [18], a satellite connection was used for the communication between offshore and onshore. Additionally, it provided visual and haptic feedback to the user controlling the ROV. Indeed, haptic feedback has been used in other remote (or teleoperated) operations such as the ones described in [20,21], together with Virtual Reality (VR), for better human–robot interaction. Our own work with haptic and VR feedback in the context of diver–robot interaction can be found in [22].

An interesting approach to remote access was presented in [23], where remote access was exemplified in a hardware-in-the-loop simulator. However, the overall aim of that work is to use a Virtual Control Cabin to control a work-class ROV remotely.

More recently, in joint work with Huazhong University of Science and Technology, our lab has performed cloud-based control of multiple unmanned surface vehicles [24]. In this case, the work focuses on surface vehicles (instead of ROVs, as in most remote access examples), and it includes scenarios with multiple vehicles, which increases the complexity.

Another very recent example is the one presented in the context of the Blue-RoSES project [25]. This work follows previous pioneering work from the same above-mentioned research groups, but has the particularity of enabling not only professionals, but also non-experts to pilot an ROV remotely. Like most examples, it focuses on ROVs.

Finally, a recent work [26] presented a remote control room that gives access to a wide range of assets, subsea infrastructure, surface or underwater vehicles, and assets at remote experimentation sites. This is a great example of a remote control center that can be used to control different assets instead of being focused only on ROVs (as is most of the state of the art). The authors further elaborated on how to leverage virtual experimentation and digital twins to complement the remote access to the physical infrastructure.

It was in this context that LABUST completed the construction of a new laboratory (including a new pool). Given the ongoing pandemic (at the time of construction), the infrastructure was prepared for remote trials, allowing any partner in the world to easily collaborate and test algorithms and devices without the need for physical presence. The infrastructure was initially shared remotely within the context of EUMarineRobots, as many of the TNA actions had to be transformed into remote trials. However, the remote-access infrastructure was also used for educational purposes in the context of the Marine Robots for better Sea Knowledge awareness (MASK) Erasmus+ project [27] and in the context of an unmanned ship project. Three examples (one per project) are included in Section 4.

3. Materials and Methods

To increase its capabilities, LABUST invested in a new laboratory infrastructure at its home institution, the Faculty of Electrical Engineering and Computing, University of Zagreb. The construction of this infrastructure partially coincided with the COVID-19 pandemic restrictions that made joint experiments, equipment sharing, and field trials nearly impossible. Thus, special attention was paid to preparing the infrastructure for the new needs of remote access so as to enable anyone in the world, including students, scientists, researchers, and even the general public, to exploit opportunities for scientific progress. In the following, the physical infrastructure, the positioning system, the ceiling and underwater cameras for situational awareness, and the network infrastructure are described.

3.1. Physical Infrastructure

The whole infrastructure has an area of

, including

allotted for a workshop and offices and

for the pool area. The pool has an area of

and includes a crane for vehicle deployment, as shown in Figure 1. The pool size of

enables the testing of small surface platforms such as the H2OmniX [28], as well as micro-unmanned aerial vehicles (UAVs), while the 3 m of depth allows testing with similarly sized AUVs or ROVs. The pool bottom and sides are currently gray, but may be covered with natural underwater photos for visual applications.

For monitoring underwater and surface activities, the pool is equipped with four underwater cameras and three ceiling cameras covering the complete pool. The cameras are supplied by SwimPro [29], which provides infrastructure for detailed swim analysis in sports. In addition, the Pozyx Ultra-Wideband (UWB) system is used for real-time localization.

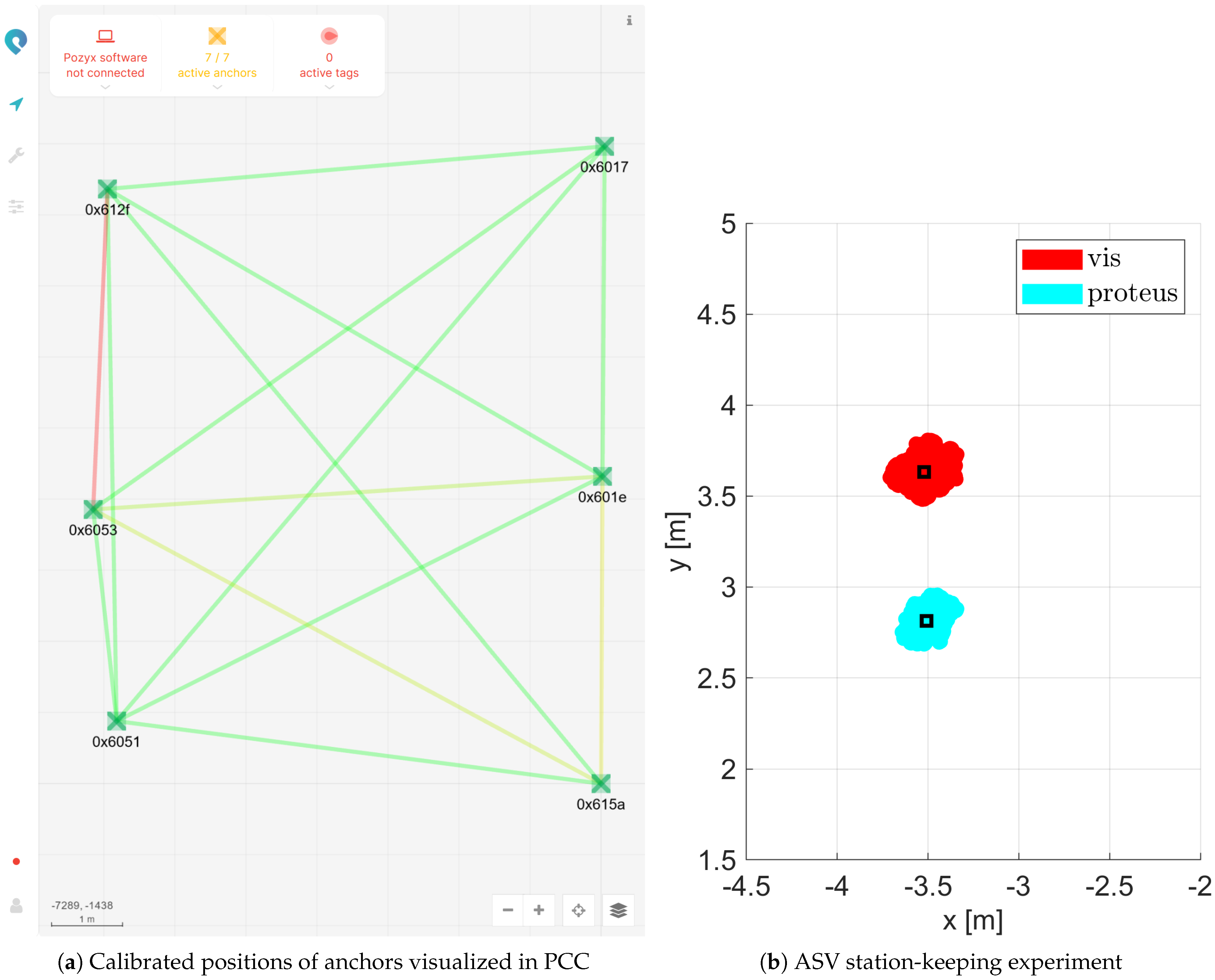

3.2. Robot Localization Using Pozyx

Considering the indoor environment, the Global Navigation Satellite System (GNSS) is not available. The Pozyx localization system provides an alternative positioning system for indoor robots, in this case Autonomous Surface Vessels (ASVs) and micro-UAVs. The system consists of UWB modules that serve as anchors or tags: the anchors are placed in fixed positions, while the tags are attached to the robots. Any two Pozyx modules can estimate the range between them using the two-way travel time (TWTT) technique. Localization is based on the tags measuring distances to known positions (anchors) and estimating their own position relative to them. Due to the nature of the range-measuring procedure, only one range measurement can be made at a time. To be localized, a tag therefore needs to measure the range to several anchors in a round-robin fashion. This introduces a synchronization problem when more than one tag has to be localized, which is a sensible requirement. The Pozyx Creator Controller (PCC) solves this problem by acting as a centralized tag (master) that queries the other tags to initiate ranging with the anchors. Location estimation is executed either on the tag itself or in the PCC. The manufacturer indicates that localizing in the PCC yields better precision and a faster localization rate. In that case, the estimated ranges are aggregated in the PCC, and the estimated positions are distributed via the MQTT network protocol. The location estimate is also available on the tag’s serial interface, but MQTT is a better option for our requirements.
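To make the range-based principle concrete, the following is a minimal sketch of 2D multilateration from ranges to fixed anchors. It is not the algorithm used inside the Pozyx tags or the PCC, which is not described here; the function name `multilaterate_2d` and the anchor coordinates in the usage note are hypothetical. The sketch linearizes the range equations by subtracting the first one and solves the resulting least-squares problem.

```python
def multilaterate_2d(anchors, ranges):
    """Estimate (x, y) from ranges to at least three anchors at known positions.

    Illustrative only: subtracting the first range equation from the others
    gives a linear system, which is solved via its 2x2 normal equations.
    """
    if len(anchors) != len(ranges) or len(anchors) < 3:
        raise ValueError("need at least three anchors with one range each")
    (x0, y0), r0 = anchors[0], ranges[0]
    s_aa = s_ab = s_bb = s_ac = s_bc = 0.0
    for (xi, yi), ri in zip(anchors[1:], ranges[1:]):
        # Row of the linearized system: a * x + b * y = c
        a = 2.0 * (xi - x0)
        b = 2.0 * (yi - y0)
        c = r0**2 - ri**2 + xi**2 + yi**2 - x0**2 - y0**2
        s_aa += a * a
        s_ab += a * b
        s_bb += b * b
        s_ac += a * c
        s_bc += b * c
    det = s_aa * s_bb - s_ab**2
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear; the position is ambiguous")
    x = (s_ac * s_bb - s_ab * s_bc) / det
    y = (s_aa * s_bc - s_ab * s_ac) / det
    return x, y
```

With hypothetical anchors at `[(0, 0), (10, 0), (10, 5), (0, 5)]` and exact ranges to a tag at (3, 2), the function returns that position up to rounding. With noisy ranges, it returns the least-squares estimate of the linearized system, which a nonlinear refinement could improve further.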

We chose the PCC as a centralized localization provider that redistributes the estimated position via MQTT. The benefits of this approach include: flexibility in tag placement (tags can be battery-powered and attached to robots or other devices); no electrical integration is required; the robot can access the locations of all tags, facilitating multi-agent collaboration. While the benefits are plentiful, a noticeable delay in the localization reaction time was observed, which may affect high-speed applications.

The LABUST pool facility has six Pozyx anchors placed in fixed locations on the walls. For the localization of the robots, a Pozyx tag is placed externally on the vehicle. The calibration of the anchor positions can be performed automatically with the PCC and/or manually. The initial calibration was carried out with the PCC. Subsequently, the anchor positions were corrected with a laser measuring device using the spatial properties of the room. The manual correction process can become tedious, but the calibration needs to be performed only once as long as the anchor placement does not change. To preserve the robot’s ability to estimate its heading using a magnetometer, it is necessary to align the Pozyx coordinate frame with magnetic or true north.

In addition, the Pozyx system can also be used to mimic GNSS localization indoors. In this case, points surveyed by GNSS outdoors under a clear sky are used to calibrate the Pozyx system. Assuming an Earth-centered, Earth-fixed (ECEF) coordinate system at the center of the fixed points, it is possible to generate a transformation of the Pozyx measurements directly into geographic coordinates, which can be converted to other formats as needed. The calibration details will be covered in Section 4.1.
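As an illustration of the idea of turning local pool coordinates into geographic ones, the sketch below converts east/north offsets (in meters) from a surveyed origin into latitude and longitude. It is a simplification and not the calibration of Section 4.1: it assumes that the local axes are already aligned with east and north, ignores height, and uses a small-area approximation on the WGS84 ellipsoid, which is adequate over the extent of a pool. The function name and the origin used in the usage note are hypothetical.

```python
import math

WGS84_A = 6378137.0                    # semi-major axis [m]
WGS84_F = 1.0 / 298.257223563          # flattening
WGS84_E2 = WGS84_F * (2.0 - WGS84_F)   # first eccentricity squared


def local_to_geodetic(east, north, lat0_deg, lon0_deg):
    """Convert small east/north offsets [m] from an origin to (lat, lon) in degrees.

    Assumes the local x/y axes point east/north and that the offsets are small
    (tens of meters), so the ellipsoid is treated as locally flat.
    """
    lat0 = math.radians(lat0_deg)
    sin_lat = math.sin(lat0)
    w = math.sqrt(1.0 - WGS84_E2 * sin_lat * sin_lat)
    prime_vertical = WGS84_A / w                      # east-west radius of curvature
    meridional = WGS84_A * (1.0 - WGS84_E2) / w**3    # north-south radius of curvature
    dlat = north / meridional
    dlon = east / (prime_vertical * math.cos(lat0))
    return lat0_deg + math.degrees(dlat), lon0_deg + math.degrees(dlon)
```

For example, `local_to_geodetic(10.0, 0.0, 45.80, 15.97)` moves a placeholder origin 10 m to the east and leaves the latitude unchanged. If the Pozyx frame were rotated with respect to north, the offsets would first have to be rotated by the corresponding yaw angle.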

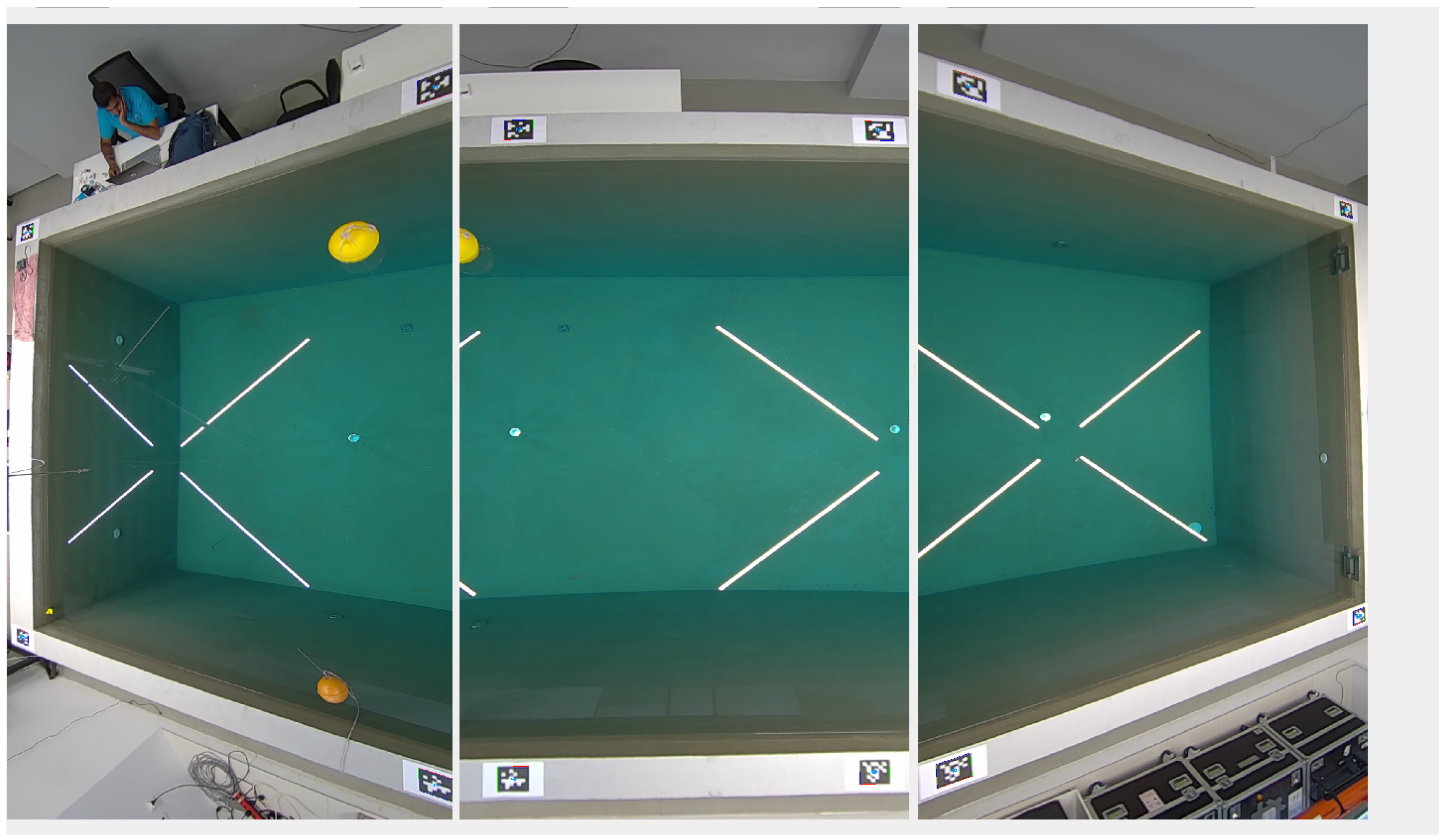

3.3. Visual Robot Localization for Surface Vehicles

Reliable information regarding the robot position is essential to test navigation algorithms in the pool area. Thus, using the three ceiling cameras, a visual robot localization system was developed for the pool, as shown in Figure 2. The cameras are SwimPro IP Bullet cameras (SwimPro, Newcastle, Australia) with Full-HD resolution. The entire pool (

) is covered with a 10% overlap using these cameras, which are mounted 3 m above the pool. They are also IP67-rated, which is necessary because of their closeness to the water surface and occasional water splashes. Intrinsic camera calibration is extremely important in this particular case, as a significant fisheye effect was present in the camera streams. Without removing it, visual robot localization is impossible to perform. For the localization itself, we used fiducial markers, which can be easily placed on top of the robot to ensure good camera visibility. AprilTag, in particular, has proven to be an excellent tool for robot localization tasks. Therefore, the ROS package AprilTag_ros (footnote 1) was used to detect robots on the pool’s surface [30,31]. This algorithm provides simple, robust, and accurate detection of AprilTags.

Overall, the entire procedure of ceiling camera system localization on the pool can be separated into 3 main steps:

Intrinsic camera calibration;

Extrinsic camera calibration;

Localization and tracking algorithm.

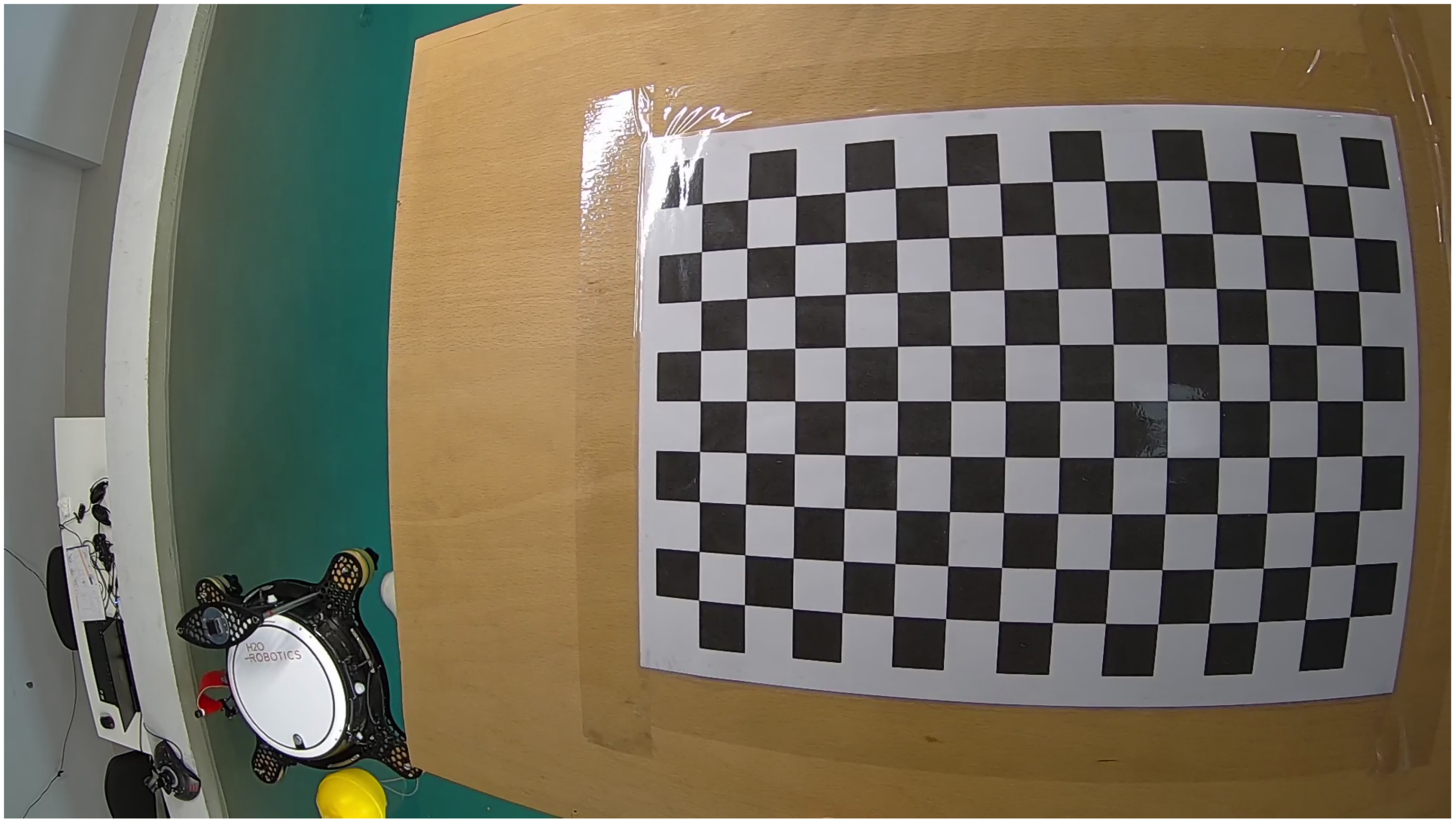

To solve the localization problem, we first had to remove the significant radial distortion in the camera stream. The OpenCV framework (footnote 2) provides widely used tools for intrinsic and extrinsic camera calibration, which are well documented and simple to use. The result of the intrinsic calibration should include the camera matrix and the distortion coefficients, which in the standard OpenCV form are:

$$
K = \begin{bmatrix} f_x & 0 & c_x \\ 0 & f_y & c_y \\ 0 & 0 & 1 \end{bmatrix}, \qquad D = (k_1, k_2, p_1, p_2, k_3),
$$

where f represents the focal lengths and c the optical centers of the camera. The distortion coefficients can be separated into two groups, k for the radial distortion and p for the tangential distortion. Intrinsic calibration using OpenCV is performed with many varied chessboard images taken at different distances and angles of the chessboard. The minimal requirement for the number of detectable points on the chessboard is 12, and to obtain a good fit on regular paper sizes, the width-to-height ratio should be close to 1.4142:1. Finally, one can obtain better accuracy with a higher number of squares, but at the same time, the squares should be big enough for easy detection. In our scenario, the chessboard was printed on A3 paper, and it had 15 × 10 squares. The biggest challenge in the calibration procedure was the small overlap in the camera frames, and therefore, during the calibration procedure, we had to make sure that not much of the image was lost. For this reason, we found it vital to have many diverse images with different angles and positions of the chessboard. The significant fisheye effect and the calibration procedure with the chosen chessboard are shown in Figure 3.
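To illustrate what the radial (k) and tangential (p) coefficients do, the sketch below applies the standard OpenCV-style distortion model to a point in normalized image coordinates and inverts it by fixed-point iteration. This is an illustration of the model only, not the calibration code used for the pool cameras, and the coefficient values in the usage note are made up.

```python
def distort(x, y, k1, k2, p1, p2, k3=0.0):
    """Apply radial (k1, k2, k3) and tangential (p1, p2) distortion.

    x and y are normalized image coordinates, i.e. ((u - cx) / fx, (v - cy) / fy).
    """
    r2 = x * x + y * y
    radial = 1.0 + k1 * r2 + k2 * r2**2 + k3 * r2**3
    xd = x * radial + 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
    yd = y * radial + p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
    return xd, yd


def undistort(xd, yd, k1, k2, p1, p2, k3=0.0, iterations=20):
    """Invert the distortion model by fixed-point iteration.

    Starts from the distorted point and repeatedly removes the distortion
    predicted at the current estimate; suitable for moderate distortion.
    """
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        radial = 1.0 + k1 * r2 + k2 * r2**2 + k3 * r2**3
        dx = 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
        dy = p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
        x = (xd - dx) / radial
        y = (yd - dy) / radial
    return x, y
```

With a negative `k1` (barrel distortion, as produced by wide-angle lenses), `distort` pulls points toward the image center, and `undistort` recovers the original point. In practice, the coefficients would come from the chessboard calibration described above rather than being chosen by hand.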

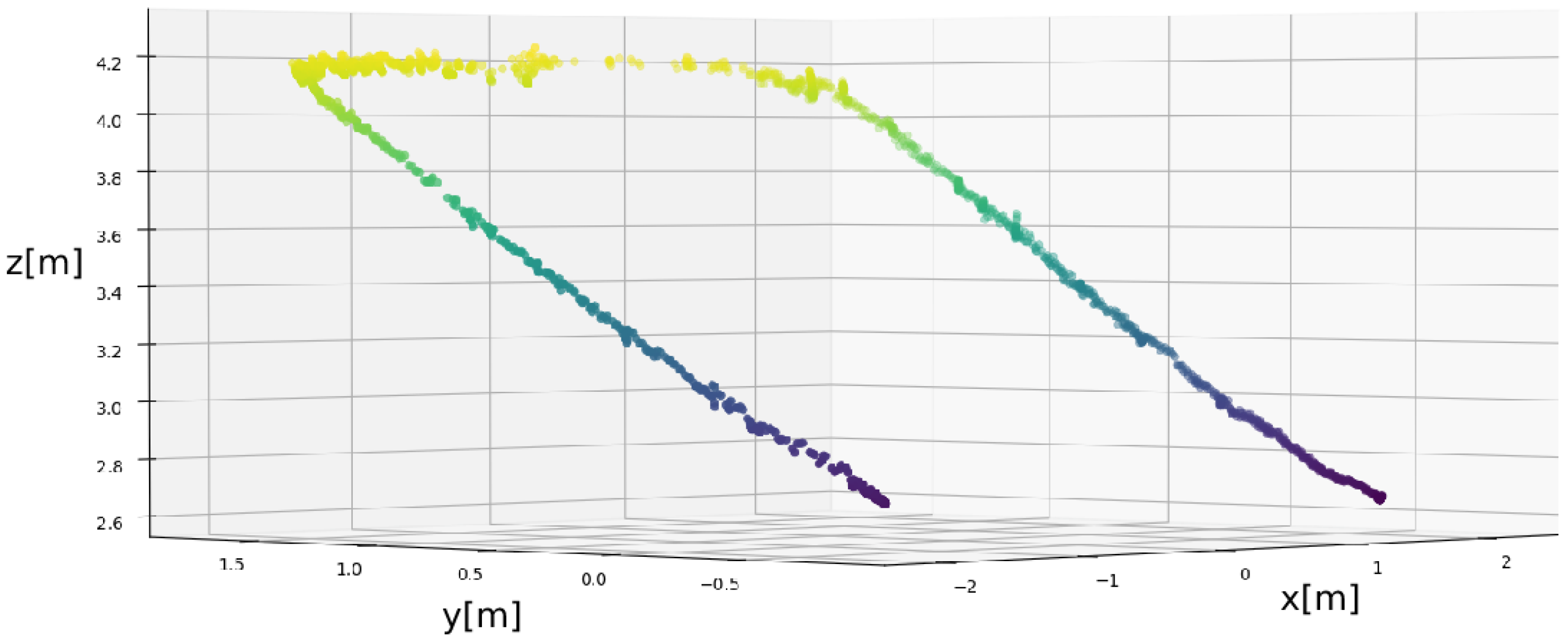

In the second step, the goal of the extrinsic camera calibration was to find the position and orientation for each of the 3 cameras in relation to one corner of the pool. This issue could be easily solved using the OpenCV default algorithm for calibration if we had a satisfactory overlap between camera streams. The transformation from camera to camera could be calculated using multiple images of the chessboard that is visible from both cameras, and the final transformation to the one corner of the pool could be achieved from a single AprilTag. Still, our overlap was very small, so we decided to implement a new method, which included 4 main parts:

Plane estimation for the z-axis position in the camera frame;

Line estimation for the x and y position in the camera frame;

AprilTag localization algorithm;

Manually defining the translations of the cameras to the corner of the pool.

The camera frame was positioned so that the z-axis points forward, the y-axis down, and the x-axis right. The pool coordinate frame was selected so that z-axis points up, the x-axis represents the length of the pool, and the y-axis represents the width of the pool. The first part of the algorithm was to estimate the orientation of the z-axis of the pool frame in the camera frame. This is defined by the normal vector of the plane, which is aligned with the pool’s Surface. To estimate this plane, we used the RANSAC algorithm [

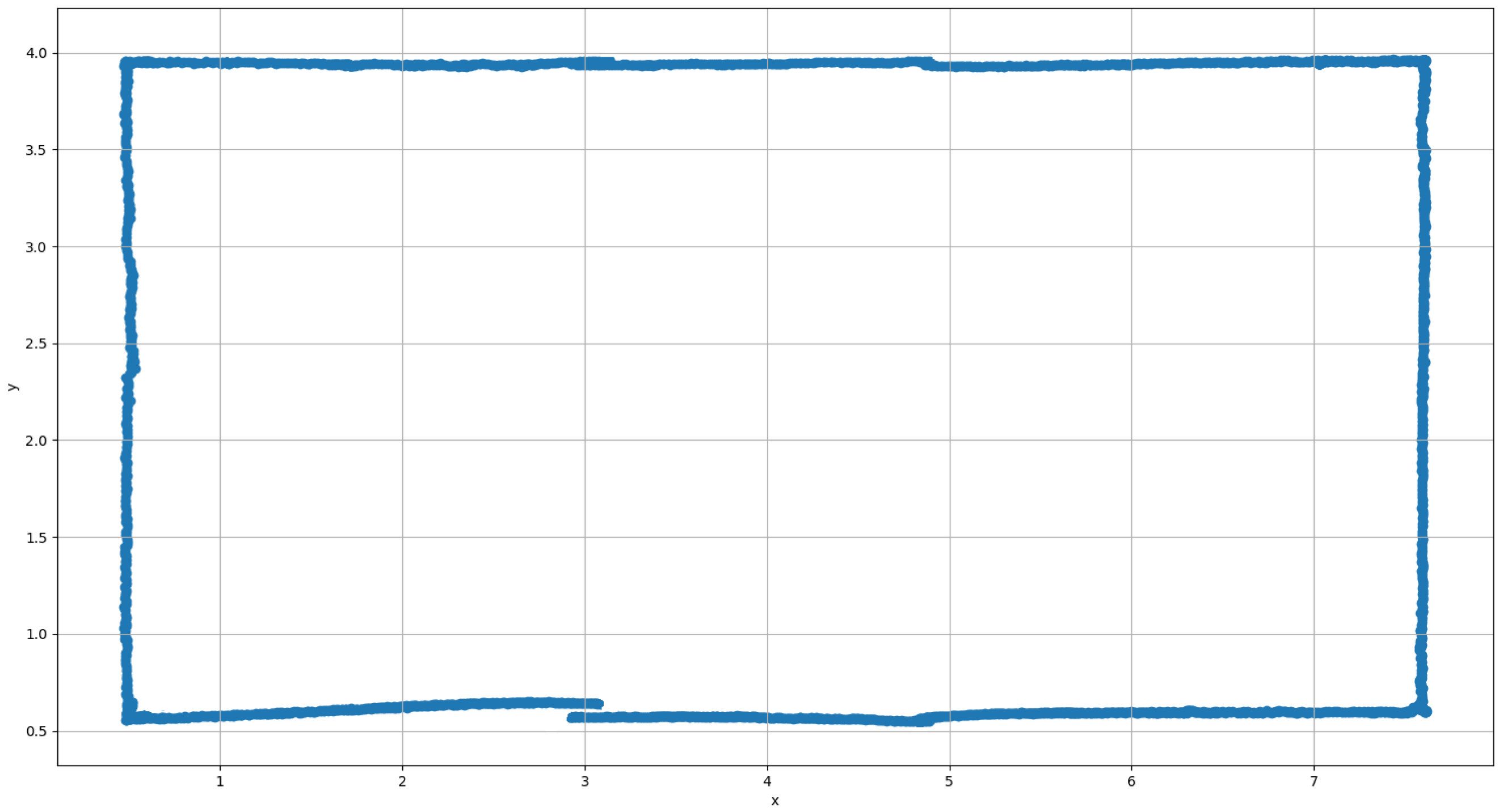

32] with multiple positions of the AprilTag as the input. The points were positioned approximately at the pool’s Surface. It was very important to have a wide area covered with a significant number of points to obtain a satisfactory estimation of the plane. We decided to slide the AprilTag on the corner of the pool and used every position of the AprilTag detection algorithm. This resulted in more than 300 points, as shown in

Figure 4. From the image, it is easy to see that we can estimate the camera rotation using these points. As described previously, we used RANSAC to obtain the z-axis of the pool frame in the camera frame.

To estimate the orientation of the x-axis and y-axis, we modeled one side of the pool as a line. Sliding one AprilTag parallel to the side of the pool resulted in a single line in the camera coordinate frame, which represents the position and orientation of the y-axis. Then, using the cross-product of the two known axis vectors, we were able to obtain the orientation of the remaining x-axis. The procedure was conducted for all 3 cameras separately. At this point, the orientation of every camera in the predefined pool frame was known. Still, the translations between the cameras had to be calculated by using AprilTags at predefined locations. We chose one corner of the pool as the origin of the coordinate system and used a single AprilTag to determine the transformation of the first camera to that coordinate system. For the other 2 cameras, we placed the AprilTag in the frame of that camera and measured the translations to the pool’s coordinate frame. Using the AprilTag localization algorithm, we were able to obtain the translation of that camera to the coordinate frame of the pool. Future work will include implementing this localization system on the underwater cameras.

3.4. Top-View Visualization

In addition to localization, the ceiling cameras are also used to visualize the pool area. A single view of the entire pool is created by stitching together the three camera streams. To perform the stitching, the perspective had to be changed because the two corner cameras are at an angle, as shown in Figure 2. This pool view provides a clear representation of the robot’s position in the pool and is, therefore, suitable for online streaming of the pool area.

To obtain a suitable single-frame view of the pool, we used the AprilTag ROS package. This package can easily give us an accurate position and orientation of each AprilTag visible in the image. By placing AprilTags in the corners of each camera frame, with the middle tags visible in the frames of two neighboring cameras, as shown in Figure 5, we were able to change the perspective of each camera frame and concatenate the images using the AprilTag positions. At this stage, using an AprilTag detection algorithm directly on this single stitched image may seem like a simple and good approach to the localization problem. However, the process of stream concatenation could introduce a significant delay, which would present an issue for the localization algorithm. Another significant issue is that even a slight misalignment of the frames can result in a failed detection of the AprilTag when transitioning from one frame to another. Furthermore, this scenario could happen very often because objects below the plane of concatenation appear twice and the ones above are partly or fully lost.

Therefore, localization could only be performed on the plane of concatenation, as illustrated in Figure 6.
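A change of perspective that maps four tag positions in a camera image onto their desired positions in the top view can be described by a planar homography. The sketch below estimates one from exactly four point correspondences in pure Python. It is an assumption that the stitching can be modeled this way, since the text does not detail the implementation; the function names and pixel coordinates are illustrative only.

```python
def solve_linear(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(map(float, row)) + [float(rhs)] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def homography_from_4_points(src, dst):
    """Homography H (3x3, with H[2][2] = 1) mapping four src points to dst points."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def apply_homography(h, x, y):
    """Map a point through H, including the perspective division."""
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)
```

A homography of this kind is exact only for points on the plane defined by the tags. This is consistent with the observation above that objects above or below the plane of concatenation are duplicated or lost at the seams.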

Due to the issues described previously, the stitched stream of the pool is used only for visualization purposes (shown in Figure 7), and it should run on a separate thread from the localization algorithm so as not to introduce delays.

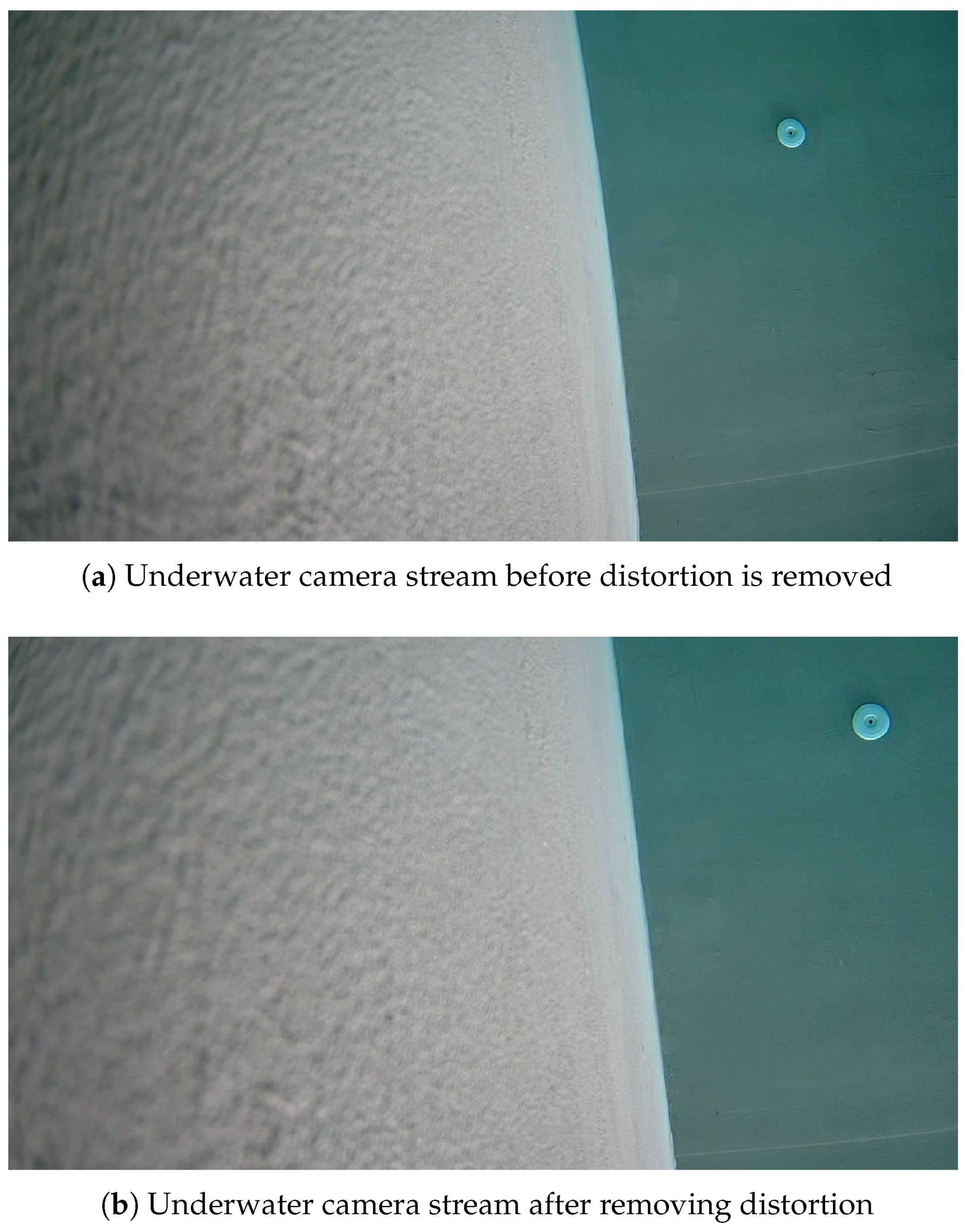

3.5. Robot Localization for Underwater Vehicles

Similar to the ceiling camera system, an underwater camera localization system is currently under development using the 4 cameras (one in each corner). A setup similar to that of the ceiling camera calibration can be used, with a few differences. The first is that the intrinsic calibration procedure changes, as the cameras are in the water and refraction occurs. There are typically two solutions: performing the calibration in situ (underwater) or running the calibration procedure in dry conditions and compensating for the refraction in the water afterwards. Our choice was to use the Pinax model for underwater camera calibration [33]. This work provides a simple solution with in-air calibration and post-rectification. The solution is applicable to cameras sealed in a housing with flat glass, with a small distance between the camera and the glass (<1 cm) and the optical axis of the camera perpendicular to the glass surface. After running the calibration procedure, we obtained a reasonable result (shown in Section 4). However, for extrinsic camera calibration, we used the OpenCV-based algorithm (as for the ceiling cameras), which required using a chessboard in the water. Therefore, with minimal additional effort, intrinsic calibration can also be performed in the water, which can be considered a more accurate approach because it does not depend on the water refraction index. For the extrinsic camera calibration, we used the ROS package camera_calibration (footnote 3), which has a Graphical User Interface (GUI) for the calibration procedure of a stereo camera. This tool can provide the transformation matrix between cameras, as well as perform the intrinsic calibration of both cameras. In our case, this procedure needs to be performed for all 4 cameras in the pool setup. One additional requirement for this procedure in our setup was that it be easily repeatable, because the cameras are modular and can be moved around the pool. The underwater camera calibration and localization procedures are currently being tested and will be completed in future work.

3.6. Network Infrastructure and Remote Access

For mission control and vehicle communication, and for interoperability with external partners, the Robot Operating System (ROS) [34] suite was used. ROS, a de facto standard in current robotic systems, uses a publisher–subscriber communication model for exchanging messages between different robots in a distributed system. The distributed system includes devices connected to the local network either by Ethernet cable or using WiFi. As detailed below, a virtual private network (VPN) is configured so that external devices can connect to the internal network via the Internet. To provide real-time feedback and improve the situational awareness of external users, the cameras described in Section 3.1 use a static IP and publish the current video stream to ROS topics using the ROS gscam tool (footnote 4). Depending on the use case, a vehicle Command and Control Center (CCC) can be instantiated. This was used in the experiment described in Section 4.3.

The CCC used in the experiments described in Section 4.3 (the first remote trials using the infrastructure) was an HTTP server (implemented in Python). It served as the endpoint for remote control of the mission execution. A useful feature is that the server API can be easily extended and configured with mission-specific tasks. For data exchange, the HTTP server uses the JSON message format. HTTP POST request handlers on the server implement command executors. Monitoring and supervision parameters can be obtained remotely using HTTP GET requests. While the JSON format is human-readable and programming-language-agnostic, the fairly large data overhead in each HTTP message is a drawback. Moreover, data streaming is not supported, so the user cannot remotely stream ROS topic data via the CCC. For future work, a gRPC (footnote 5) server that offers streaming capabilities and ensures the order and delivery of messages is being investigated.
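The request pattern described above (POST handlers as command executors, GET requests for monitoring, JSON payloads) can be sketched with the Python standard library. This is not the actual CCC implementation: the endpoint paths, state fields, and function names are hypothetical and serve only to show how such an API can be extended by adding entries to a command table.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

# Hypothetical mission state; a real CCC would forward commands to the vehicle.
STATE = {"mission": "idle", "speed": 0.0}
STATE_LOCK = threading.Lock()


def cmd_start(params):
    STATE["mission"] = "running"
    STATE["speed"] = float(params.get("speed", 0.5))


def cmd_stop(params):
    STATE["mission"] = "idle"
    STATE["speed"] = 0.0


# Extending the API with a mission-specific task means adding one entry here.
COMMANDS = {"/command/start": cmd_start, "/command/stop": cmd_stop}


class CCCHandler(BaseHTTPRequestHandler):
    def _send_json(self, code, payload):
        body = json.dumps(payload).encode("utf-8")
        self.send_response(code)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def do_GET(self):
        # Monitoring: return the current state as JSON.
        if self.path == "/status":
            with STATE_LOCK:
                self._send_json(200, dict(STATE))
        else:
            self._send_json(404, {"error": "unknown resource"})

    def do_POST(self):
        # Command execution: look up the executor registered for this path.
        executor = COMMANDS.get(self.path)
        if executor is None:
            self._send_json(404, {"error": "unknown command"})
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            params = json.loads(self.rfile.read(length) or b"{}")
        except json.JSONDecodeError:
            params = None
        if not isinstance(params, dict):
            self._send_json(400, {"error": "body must be a JSON object"})
            return
        with STATE_LOCK:
            executor(params)
            self._send_json(200, dict(STATE))

    def log_message(self, fmt, *args):
        pass  # keep the sketch quiet


def make_server(host="127.0.0.1", port=0):
    """Create the server; port 0 lets the operating system pick a free port."""
    return ThreadingHTTPServer((host, port), CCCHandler)
```

Calling `make_server().serve_forever()` would start the sketch on the loopback interface. As in the setup described here, such a server would be reached through a VPN rather than exposed directly to the Internet.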

While it would be possible to expose the HTTP server directly, a more secure approach, using a VPN, is used. This allows safer use of the HTTP approach and is also a more direct approach. Two VPNs were used, OpenVPN [35] (in bridging mode) and WireGuard [36]. Both VPNs are installed on the poolside router, while the remote clients depend on the application. For fairs, demonstrations, and trials where OSI Layer 2 remote access is required, a dedicated Teltonika 4G router with an OpenVPN client (TAP) is used to bridge the local and remote networks. Bridge mode makes little sense if only OSI Layer 3 traffic is required, so the simpler WireGuard VPN was added. Namely, for the MASK project mentioned in Section 4.4, the router has a dedicated subnet for WireGuard clients, and students installed a client on their phones together with the ROV driving application.

A secondary task of the aforementioned Teltonika 4G router is to enable remote access to the infrastructure during trials. While the OpenVPN bridge connection to the poolside network enables bidirectional access, the WireGuard server is configured on the remote router, which enables direct (not relayed) online access to the underlying network from any location outside the poolside network. An alternative approach is to connect to the poolside network via any VPN implementation and exploit the Teltonika-poolside bridge connection, which, however, introduces additional latency and reduces throughput.

5. Discussion

The infrastructure described in Section 3 and the results presented in Section 4 are discussed here both in general terms (comparing our work to the state of the art) and, in particular, with regard to the challenges and limitations found, in dedicated subsections.

5.1. Comparison to State-of-the-Art

When comparing our infrastructure and our work with others found in the literature reviewed in Section 2, we can note that most works on remote control for marine robotics focus on ROVs [18,19,20,21,23,25,40]. There are works that include a fleet of ASVs (our joint work [24]) and a multitude of different heterogeneous vehicles [26]. In our work, similar to [26], we showed the possibilities of our infrastructure to support experiments with divers, AUVs, ASVs, and ROVs. This wide scope and flexibility are not commonly found in the literature (besides the excellent example of [26]). On the other hand, some of the above-mentioned works, due to their narrow focus only on ROVs (or, in some cases, only one specific ROV model), present control centers with better latency and human–robot interfaces than our infrastructure. The potential improvements regarding network access are discussed in the next subsection.

Another major difference, and the most distinctive part of our work, is that most works focus on describing the infrastructure of their remote control centers and on experiments at sea (with minimal infrastructure). In contrast, our paper describes the physical and network infrastructure that allows remote experiments in a pool (with a similar concept at sea also included in Section 4). In particular, we detail the above-water and underwater camera setup and the indoor positioning system, which allow good tracking of vehicles in the controlled environment of a pool. On the one hand, this is not applicable to real trials at sea, which remain invaluable; on the other hand, it is extremely important to use pools for the testing and validation of marine robots before experiments at sea. Well-equipped pools such as ours can provide excellent conditions for repeatability tests and benchmarking of robots. Moreover, this infrastructure proved essential during the COVID-19 pandemic restrictions, which prevented both third parties and us from traveling to the seaside for trials. The feedback from the installed cameras was critical to the successful remote experiments described in Section 4.

Finally, one thing our work has in common with some of the work found in the literature [21,40] is the use of Virtual Reality (VR) as a way of either complementing or enhancing remote experiments. Although not detailed in this paper, we used VR infrastructure in [22], which can function as a preparatory step for remote experiments in the pool.

5.2. Challenges and Limitations

Some challenges with the methodology still need to be fully addressed in future work, and some limitations of the technology, identified during real remote experiments, are currently being addressed. First, regarding Pozyx, as already mentioned, the flexibility of placing the tags (wireless installation, battery-powered) is both an advantage and a disadvantage: one can try different setups until the best is found, but there is a noticeable delay in localization due to the wireless setup.

Another challenge is related to the positioning of the cameras, as explained in Section 3.4. Due to the physical configuration of the cameras, localization could only be performed on the plane of concatenation, and the stitched stream of the pool was used for visualization only.
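Localization restricted to a single plane is commonly expressed as a planar homography that maps image pixels to metric coordinates on that plane. The sketch below shows only that mapping step; the matrix `H` is a made-up example, and the source does not state that this exact formulation is the one used for the pool cameras.

```python
def pixel_to_plane(H, u, v):
    """Map image pixel (u, v) to plane coordinates (x, y) using a 3x3 homography H."""
    x = H[0][0] * u + H[0][1] * v + H[0][2]
    y = H[1][0] * u + H[1][1] * v + H[1][2]
    w = H[2][0] * u + H[2][1] * v + H[2][2]
    if abs(w) < 1e-12:
        raise ValueError("pixel maps to a point at infinity")
    return x / w, y / w


# Hypothetical homography: 0.01 m per pixel, origin shifted by (-2 m, -1 m).
H = [
    [0.01, 0.0, -2.0],
    [0.0, 0.01, -1.0],
    [0.0, 0.0, 1.0],
]
x_m, y_m = pixel_to_plane(H, 400, 300)
```

Such a mapping is valid only for points that actually lie on the reference plane, which is why objects above or below it cannot be localized this way.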

Regarding underwater cameras, intrinsic calibration is challenging because it requires either in-water calibration or dry calibration with correction. The first method may be more logistically challenging, while the second is potentially less accurate. This is an area of ongoing improvement as regards localization (for general visual feedback, the current solution works well enough).
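One common first-order form of the dry-calibration correction, given here only as an assumption since the source does not specify which correction is meant, applies to cameras behind a flat port: the focal lengths obtained in air are scaled by the refractive index of water, while the principal point is left unchanged. The function name and the sample intrinsics below are hypothetical.

```python
WATER_REFRACTIVE_INDEX = 1.333  # approximate value for fresh water


def correct_dry_intrinsics(fx, fy, cx, cy, n=WATER_REFRACTIVE_INDEX):
    """Scale dry-calibrated focal lengths for in-water use behind a flat port.

    First-order approximation only: lens distortion also changes under water
    and is not handled here.
    """
    return fx * n, fy * n, cx, cy


# Hypothetical dry calibration result, in pixels.
fx_w, fy_w, cx_w, cy_w = correct_dry_intrinsics(1000.0, 1000.0, 640.0, 360.0)
```

Because distortion is ignored, a correction of this kind illustrates why the dry approach is expected to be less accurate than calibrating in the water.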

In terms of network access, the choice of a VPN over simpler port forwarding not only provides security features, but also reduces configuration complexity for providers and users [41]. The downside is that, depending on the location and connection options, VPN connections can be blocked (e.g., by carrier-grade NAT solutions). Sometimes, this can be avoided with support from the service provider, but for regular data SIM cards, the options are often limited. Potential alternatives, also considered as future work for the described network, are VPN services such as ZeroTier (footnote 7), which enable operation in situations where a simple VPN fails. For ZeroTier specifically, the overall configuration is also simplified for both providers and users. Providers can manage their virtual network over the web, and users only need to know the network ID. This makes it extremely simple to include users, as opposed to a VPN configuration that contains many more details about the network and requires more knowledge to set up. Some other benefits for marine systems are also described in [26]. Additionally, as mentioned, one practical issue we found when providing remote access to high schools was that the eduroam network at their schools would block the VPN ports.
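When a restrictive network is suspected, a quick reachability check from the user side can help tell a blocked port apart from a misconfigured client. The following minimal sketch tests a TCP port only; WireGuard runs over UDP, so this applies to TCP-based setups such as OpenVPN over TCP, and the host name shown in the usage line is a placeholder.

```python
import socket


def tcp_port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name resolution failures.
        return False


# Usage (hypothetical host and port):
# tcp_port_open("vpn.example.org", 443)
```

A `False` result does not identify the cause by itself, but running the same check from a different network (for example, a mobile hotspot) indicates whether the local network is doing the blocking.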

Another practical issue was found during the intrinsic calibration of the ceiling cameras, as these are not easy to reach to obtain good chessboard images. This led to an imperfect calibration, but nonetheless, the impact was minimal as the maximum error (only one camera was affected) was less than 5 cm.

Similarly, in the diver–robot experiments, a small practical issue occurred that was unrelated to our infrastructure. Given that the experiments took place in New Zealand and Croatia, the large time zone difference (12 h) created some issues because, on the New Zealand side, the working hours of the public pool considerably restricted the time available for the joint experiments. This required good planning and several short sessions instead of one longer session, and it is something to take into consideration when planning remote trials with partners in distant time zones.

Finally, latency can still be improved in the case of the ceiling cameras installed above the pool. As mentioned, for remote ROV piloting with the high schools, there was a 2 s delay on the camera feed. On the one hand, this introduced complexity, but on the other hand, the students experienced a real-life effect that is typical of larger teleoperated ROV systems. In contrast, for the remote control of ASVs from the pool to a sea location, the latency was low enough for smooth control.