3.1. Vb-Based Sampling the New AUV Pose

For each particle, according to the UKF method, the state vector of the

i-th particle is augmented with a control input. Assuming that the mean of the control noise is zero, the augmented state is formulated as:

here,

is the augmented vector for the state,

and

denote the previous state and covariance.

denotes the process noise.

,

,

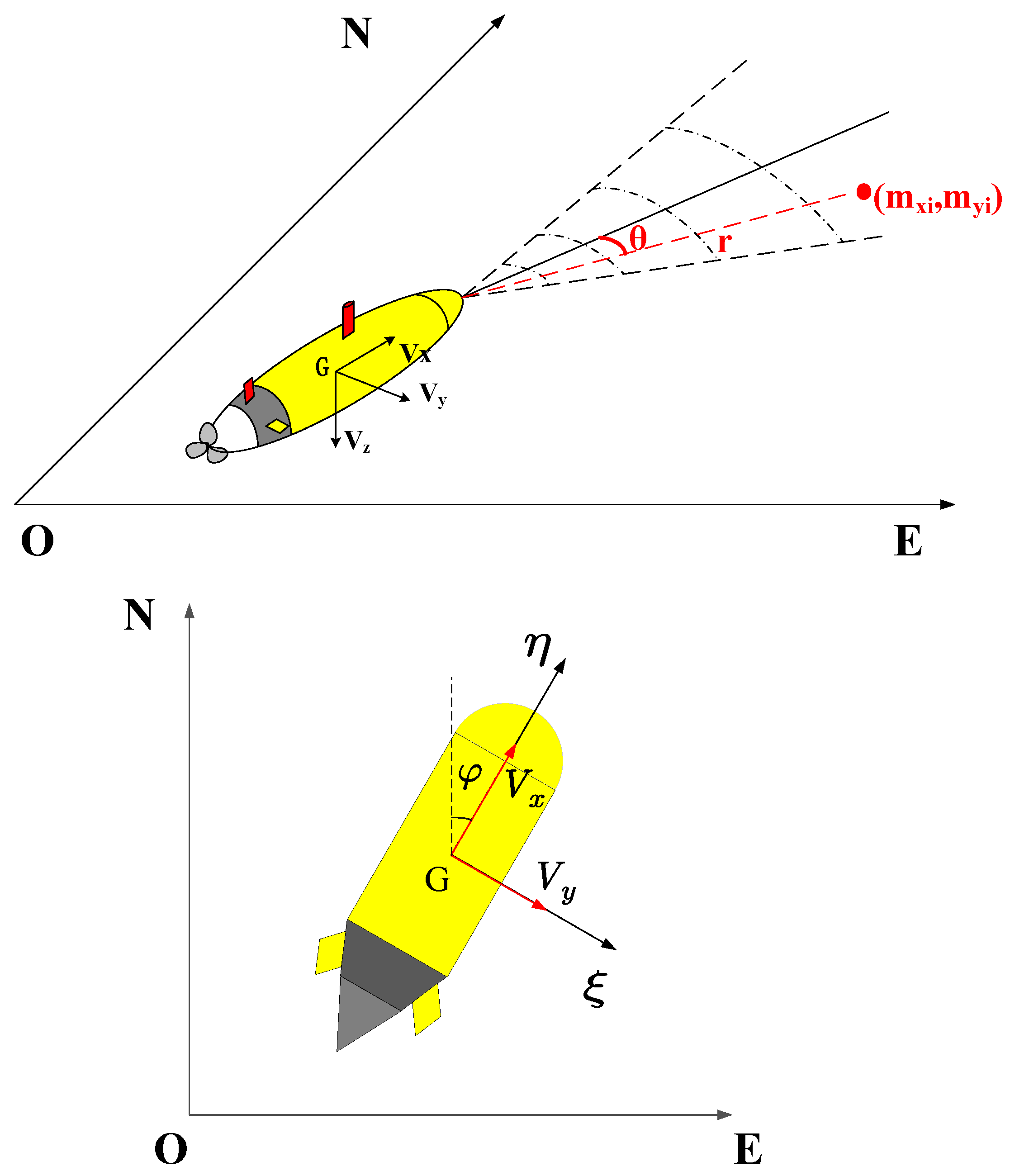

respectively represent the X-axis position, Y-axis position and yaw of the AUV.

VB-AUFastSLAM extracts sigma points from the Gaussian and passes these points through the nonlinear function. A symmetric set of 2

n + 1 sigma points

for the augmented state vector can be given by:

where the

is computed by

.

is a small number to avoid sampling nonlocal effects (

is appropriate).

is used to determine the distance between the sigma points and the mean which is not critical though, so a good default choice is

.

j represents the

j-th column of matrix

. Each

contains the state and control noise components given by:

The weights corresponding to the state and the covariance can be calculated as:

is the parameter used to introduce higher-order information items of the posterior probability distribution. For the Gaussian prior, the optimal choice is .

The set of sigma points are transformed by the motion model characterized by a nonlinear function as follows:

is the transformed sigma point of the AUV state. The prediction state and the prediction state covariance matrix are calculated based on the weights as:

When external observations are available, the statistical characteristic of the time-varying observations noise covariance are estimated to improve the performance of algorithm. Since the Inverse-Gamma distribution is the conjugate prior distribution of the variance of the Gaussian distribution, in Bayesian analysis, the Inverse-Gamma model is usually chosen to model the variance of the Gaussian distribution [

29].

and

represent the hyper-parameters of Inverse-Gamma distribution, respectively. The Inverse-Gamma distribution is shown as Equation (

13).

We introduce the VB method to approximate the observation noise. Based on VB, the joint posterior estimation of the system state

and the observation noise covariance

can be expressed as:

where

and

are the approximate probability distributions of the state vector and observation noise parameters, respectively. The VB approximation can be formed by minimizing the Kullback–Leibler (KL) divergence between the true distribution and the approximation:

When minimizing the KL divergence, fixing

or

respectively, the following can be obtained:

As the above equations are coupled, it cannot be solved directly, after computing the expectations separately, we can obtain:

where

denotes the expected value with respect to the approximating distribution

. As a function of

, this is a quadratic implying that

is a Gaussian distribution, both the mean and variance can be obtained by standard matrix operations.

Similarly, the expectation of

can be calculated as follows:

where

. From the above equation, it can be seen that

is the product of the Inverse-Gamma distribution. If we assume that

is d-dimensional, then

can be expressed as:

To ensure that the joint predicted probability distribution of

at time

k keeps the same form as the posterior probability distribution at time

, a forgetting factor

is introduced to reflect the degree of fluctuation of the noise. The prior of the observation noise parameters can be expressed as:

The exact observation noise posterior distribution is obtained cyclically and iteratively during the observation update. It should be emphasized that the loop iteration of the observation noise using the VB method is performed on the covariance matrix, so the observation noise still satisfies the Gaussian white noise distribution, i.e., .

As feature points are observed and matched with existing features after data association, the following stage will be employed to iterative update the

and the covariance

of the robot. From the observation model, the predicted observation of the sigma points can be calculated as:

The predicted observation and cross-covariance matrix can be obtained as:

The mean and covariance of the state vector are calculated as:

What needs to be noted here that the observation noise

at this time has been modeled as an Inverse-Gamma distribution. Furthermore

denotes the temporary value during the filtering loop, when the loop ends, save the final estimated noise of this observation:

where

i represents the

i-th particle and

j represents the observed noise for the

j-th feature. The noise of each observed feature is estimated and

will be used in the subsequent mapping stage. Moreover, the update of the noise hyper-parameters can be expressed as:

3.2. Feature State Estimation by VB-UKF

Based on

known in

Section 3.1, feature state estimation in VB-AUFastSLAM can be divided into two parts: the feature update stage and feature augmentation stage.

(1) Feature augmentation

Since the path at time

k is known, the observation noise can be used as the initial feature covariance matrix, so that in the mapping stage of the VB-AUFastSLAM, current observation

and the observation noise covariance matrix are chosen as the initial inputs, we can obtain the sigma point of the observation as:

where

, in the feature update stage, it is usually set

. After nonlinear transformation, the mean and covariance of the new features can be obtained as follows:

(2) Feature update

Feature points will be updated after feature matching is completed. We convert the feature points position to sigma points as:

According to the

at time

k and the nonlinear observation model

, the predicted observations can be obtained:

is the cross-covariance between state and observation in the feature update stage, which is used to compute the Kalman gain

:

Similar to the path estimation stage, when calculating the innovation covariance

, the observation noise

needs to be estimated by the VB method. The difference is that the initial observation noise

in the feature update stage is already obtained from the path estimation stage, so the observation noise requires fewer cycles than the path estimation stage. After loop iteration, the position and covariance of the feature can be obtained: