A Meta-Analysis of Relationships between Measures of Wisconsin Card Sorting and Intelligence

Abstract

1. Introduction

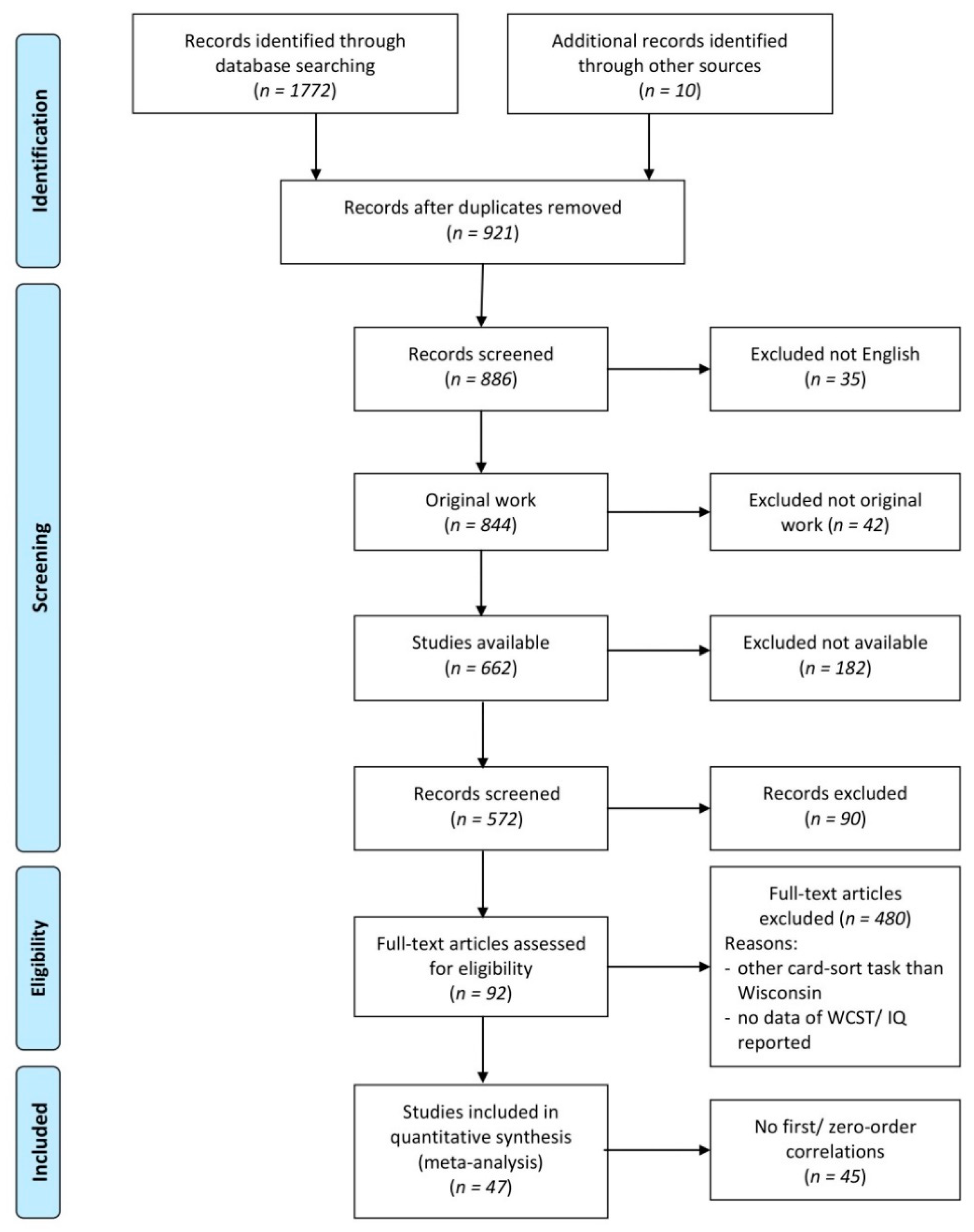

2. Materials and Methods

2.1. Search Strategy

2.2. Data Extraction and Coding

2.2.1. WCST Scores

- the number of categories completed (runs of 6 or 10 consecutive correct matches to the criterion sorting category; the required run length depends on the test version)

- the frequency of perseverative errors or responses (persisting in responding to an incorrect stimulus characteristic)

- the frequency of non-perseverative errors (errors not classified as perseverative)

- the frequency of failures to maintain set (e.g., five or more consecutive correct matches followed by at least one error before the category is completed), and

- the frequency of total errors.
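
To make these definitions concrete, the sketch below derives three of the listed scores (categories completed, total errors, and failures to maintain set) from a trial-by-trial record of correct and incorrect matches. It is a simplified, hypothetical scorer written for illustration, not the scoring procedure of any published WCST manual. The function name `score_responses` and its parameters `run_length` (6 or 10, depending on the test version) and `fms_threshold` (5, following the example above) are assumptions. Perseverative and non-perseverative errors are deliberately not scored, because classifying them requires tracking which sorting rule each response followed.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class WcstCounts:
    categories_completed: int
    total_errors: int
    failures_to_maintain_set: int


def score_responses(correct: Sequence[bool], run_length: int = 10,
                    fms_threshold: int = 5) -> WcstCounts:
    """Hypothetical, simplified WCST scorer (not a manual's official rules).

    correct: one boolean per card, True if the card matched the current criterion.
    run_length: consecutive correct matches needed to complete a category.
    fms_threshold: minimum run that, if broken by an error, counts as a
        failure to maintain set.
    """
    if not 0 < fms_threshold < run_length:
        raise ValueError("fms_threshold must be positive and below run_length")
    categories = errors = fms = 0
    streak = 0  # consecutive correct matches toward the current category
    for is_correct in correct:
        if is_correct:
            streak += 1
            if streak == run_length:
                # Category completed: the criterion changes and the run restarts.
                categories += 1
                streak = 0
        else:
            errors += 1
            if streak >= fms_threshold:
                # A long correct run broken before completion: failure to maintain set.
                fms += 1
            streak = 0
    return WcstCounts(categories, errors, fms)


if __name__ == "__main__":
    record = [True] * 10 + [True] * 5 + [False] * 3 + [True] * 10
    print(score_responses(record))
```

For the example record (one completed run of 10, a run of 5 broken by three errors, then another run of 10), the sketch counts two categories, three errors, and one failure to maintain set. Total errors would then be the sum of perseverative and non-perseverative errors once a rule-tracking classifier is added.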

2.2.2. IQ Domains

2.3. Correlation Coefficients

2.4. Basic Meta-Analysis

2.5. Moderator Analyses

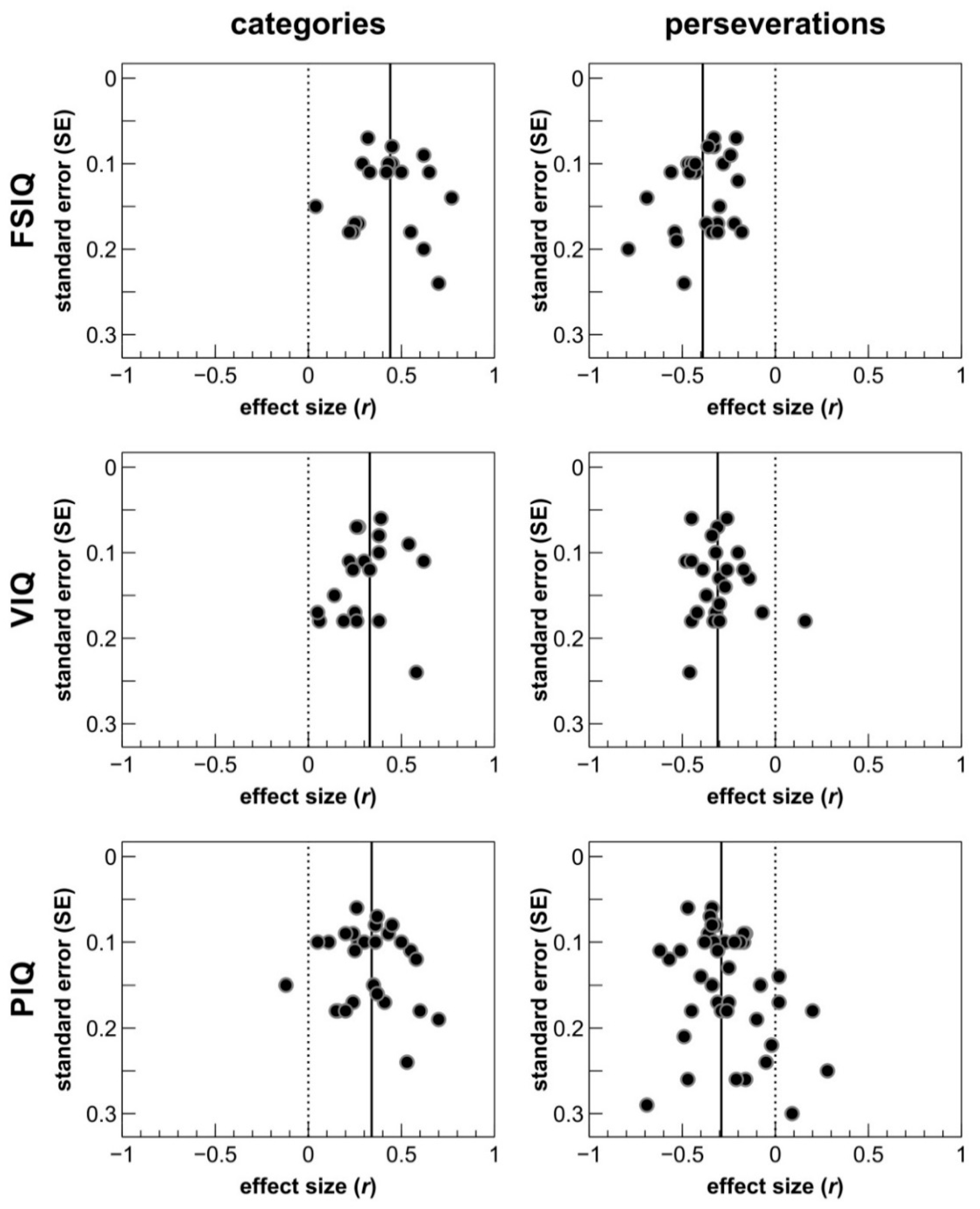

2.6. Publication Bias Analysis

2.7. Partial Correlations

3. Results

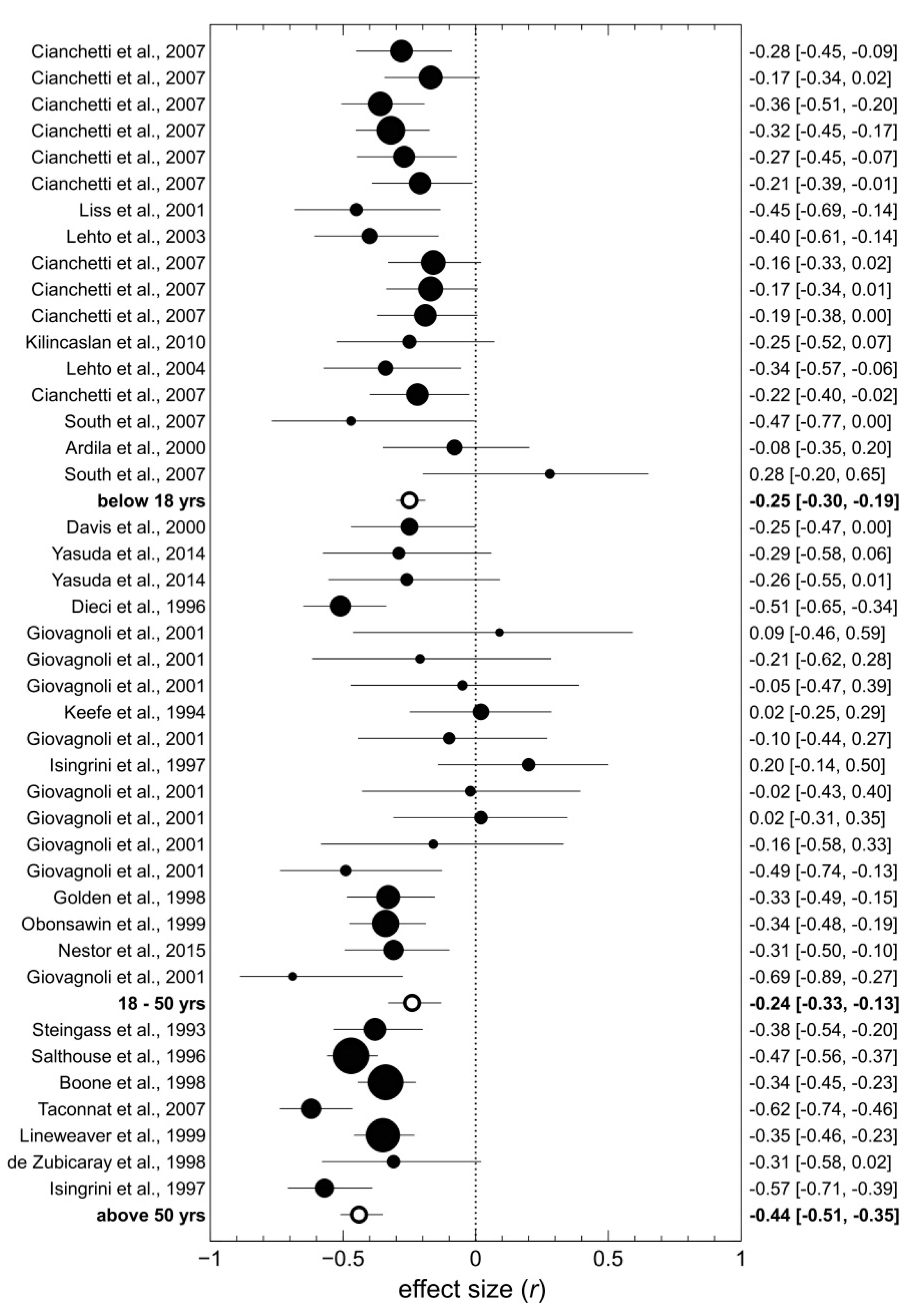

3.1. Moderator Analyses

3.2. Partial Correlation Analyses

4. Discussion

4.1. Discriminant Validity of the WCST

4.2. Differential Associations between WCST Scores and IQ Domains

4.3. The Role of Moderator Variables

4.4. Limitations of the Present Meta-Analysis and Suggestions for Future Studies

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Appendix A

| Study | Year | Population | N | Type of Reliability | Test-Retest Interval | WCST Version | WCST Measure | Coefficient | Type of Correlation |
|---|---|---|---|---|---|---|---|---|---|
| Basso et al. [167] | 1999 | Non-clinical | 50 | Test-retest | 12 months | Heaton et al. 1993 | CAT; TE; PE, P; FMS | 0.54; 0.50; 0.52, 0.50; −0.02 | 6; 6; 6; 6 |
| Bird et al. [85] | 2004 | Non-clinical | 90 | Test-retest | 1 month | Nelson 1976 | TE; PE | 0.34; 0.38 | 1; 1 |
| Bowden et al. [168] | 1998 | Non-clinical | 75 | Test-retest (‘alternate’ forms) | Same day | Heaton et al. 1981 | CAT; TE; PE, P; NPE | 0.60; 0.51; 0.32, 0.30; 0.43 | 2; 2; 2; 2 |
| de Zubicaray et al. [91] | 1998 | Non-clinical | 36 | Test-retest | 7.5 months | Nelson 1976 | CAT; TE; PE; NPE; FMS | 0.28; 0.36; 0.27; 0.38; 0.49 | 2; 2; 2; 2; 2 |
| Greve et al. [164] | 2002 | TBI | 34 | Test-retest | 66 weeks | Heaton et al. 1993 | CAT; TE; PE, P; NPE; FMS | 0.53; 0.82; 0.80, 0.78; 0.50; 0.26 | 3; 2; 2; 2; 3 |
| Heaton et al. [38] | 1993 | Non-clinical | 46 | Test-retest | 33 days | Heaton et al. 1993 | TE; PE, P; NPE | 0.71; 0.52, 0.53; 0.72 | 5; 5; 5 |
| Ingram et al. [169] | 1998 | Sleep apnoea patients | 29 | Test-retest | 12 days | Computerized WCST | CAT; TE; PE, P; FMS | 0.70; 0.79; 0.83, 0.79; 0.50 | 2; 2; 2; 2 |
| Lineweaver et al. [108] | 1999 | Non-clinical | 142 | Test-retest | 24 months | Nelson 1976 | CAT; PE; NPE | 0.56; 0.64; 0.46 | 1; 1; 1 |
| Ozonoff [169] | 1995 | Autistic children | 17 | Test-retest | 30 months | Standard WCST | TE; P | 0.94; 0.93 | 5; 5 |
| | | Learning disabled children and adolescents | 17 | Test-retest | 30 months | Standard WCST | TE; P | 0.90; 0.94 | 5; 5 |
| Paolo et al. [170] | 1996 | Non-clinical | 87 | Test-retest | 12 months | Heaton et al. 1981 | CAT; TE; PE, P; NPE; FMS | 0.65; 0.66; 0.65, 0.63; 0.55; 0.13 | 3; 3; 3; 3 |
| Steinmetz et al. [171] | 2010 | Non-clinical | 22 | Test-retest | Same day | Heaton et al. 1993 | TE; PE; FMS | 0.68; 0.72; 0.16 | 6; 6; 6 |
| Tate et al. [165] | 1998 | Non-clinical | 20 | Test-retest | 8 months | Heaton et al. 1993 | CAT; TE; PE, P; NPE; FMS | 0.88; 0.79; 0.72, 0.68; 0.74; −0.04 | 2; 4; 4; 4; 4 |
| | | TBI | 23 | Test-retest | 10 months | Heaton et al. 1993 | CAT; TE; PE, P; NPE; FMS | 0.29; 0.39; 0.34, 0.33; 0.32; −0.32 | 2; 4; 4; 4; 4 |

| Overall and Sample-Specific | WCST Measure | Cumulative N | Unweighted Average | Weighted Average * |
|---|---|---|---|---|
| All available samples (14) | CAT; TE; PE, P; NPE; FMS | 496; 546; 688; 463; 301 | 0.56; 0.65; 0.61; 0.51; 0.15 | 0.55; 0.58; 0.56; 0.50; 0.16 |
| Non-clinical samples (9) | CAT; TE; PE, P; NPE; FMS | 410; 426; 568; 406; 215 | 0.59; 0.57; 0.52; 0.55; 0.14 | 0.58; 0.53; 0.52; 0.51; 0.14 |
| Clinical samples (5) | CAT; TE; PE, P; NPE; FMS | 86; 120; 120; 57; 86 | 0.51; 0.77; 0.76; 0.41; 0.15 | 0.52; 0.76; 0.77; 0.43; 0.19 |

| Test Version (excl. cWCST) | WCST Measure | Cumulative N | Unweighted Average | Weighted Average * |
|---|---|---|---|---|
| Heaton et al. version (10 samples) | CAT; TE; PE, P; NPE; FMS | 345; 391; 391; 285; 236 | 0.58; 0.69; 0.64; 0.54; 0.03 | 0.59; 0.65; 0.57; 0.53; 0.06 |
| Nelson version (3 samples) | CAT; TE; PE, P; NPE; FMS | 178; 126; 268; 178; 36 | 0.42; 0.35; 0.43; 0.42; 0.49 | 0.50; 0.35; 0.50; 0.44; 0.49 |
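
The unweighted and weighted averages can be recomputed from the sample-level coefficients in the reliability table above. The footnote marked by the asterisk is not part of this excerpt, so the sketch below assumes that the weighted average is a mean of the raw coefficients weighted by sample size N. Under that assumption it reproduces the clinical FMS entries (cumulative N = 86; unweighted 0.15; weighted 0.19) from the Greve et al., Ingram et al., and Tate et al. (TBI) samples. The function names are hypothetical.

```python
from typing import Sequence


def unweighted_mean(coefficients: Sequence[float]) -> float:
    """Simple arithmetic mean of the reliability coefficients."""
    if not coefficients:
        raise ValueError("need at least one coefficient")
    return sum(coefficients) / len(coefficients)


def n_weighted_mean(coefficients: Sequence[float], sample_sizes: Sequence[int]) -> float:
    """Mean of the raw coefficients weighted by sample size N (assumed weighting)."""
    if not coefficients or len(coefficients) != len(sample_sizes):
        raise ValueError("coefficients and sample sizes must be non-empty and equally long")
    total_n = sum(sample_sizes)
    return sum(r * n for r, n in zip(coefficients, sample_sizes)) / total_n


# FMS coefficients and sample sizes of the clinical samples, from the reliability table.
fms_clinical = {
    "Greve et al.": (0.26, 34),
    "Ingram et al.": (0.50, 29),
    "Tate et al. (TBI)": (-0.32, 23),
}
coefs = [r for r, _ in fms_clinical.values()]
ns = [n for _, n in fms_clinical.values()]

if __name__ == "__main__":
    print(f"Cumulative N: {sum(ns)}")
    print(f"Unweighted average: {unweighted_mean(coefs):.2f}")
    print(f"N-weighted average: {n_weighted_mean(coefs, ns):.2f}")
```

The same weighting also reproduces the clinical TE entries (0.77 unweighted, 0.76 weighted) from the five clinical samples. Averaging raw coefficients rather than Fisher-z-transformed values is part of the assumption; a Fisher-z average of the clinical FMS coefficients would not round to 0.19.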

| Observed Correlation | IQ Reliability | WCST Reliability 0.10 | WCST Reliability 0.20 | WCST Reliability 0.30 | WCST Reliability 0.40 | WCST Reliability 0.50 | WCST Reliability 0.60 |
|---|---|---|---|---|---|---|---|
| 0.05 | 0.90 | 0.167 | 0.118 | 0.096 | 0.083 | 0.075 | 0.068 |
| | 0.95 | 0.162 | 0.115 | 0.094 | 0.081 | 0.073 | 0.066 |
| | 0.99 | 0.159 | 0.112 | 0.092 | 0.079 | 0.071 | 0.065 |
| 0.10 | 0.90 | 0.333 | 0.236 | 0.192 | 0.167 | 0.149 | 0.136 |
| | 0.95 | 0.324 | 0.229 | 0.187 | 0.162 | 0.145 | 0.132 |
| | 0.99 | 0.318 | 0.225 | 0.183 | 0.159 | 0.142 | 0.130 |
| 0.15 | 0.90 | 0.500 | 0.354 | 0.289 | 0.250 | 0.224 | 0.204 |
| | 0.95 | 0.487 | 0.344 | 0.281 | 0.243 | 0.218 | 0.199 |
| | 0.99 | 0.477 | 0.337 | 0.275 | 0.238 | 0.213 | 0.195 |
| 0.20 | 0.90 | 0.667 | 0.471 | 0.385 | 0.333 | 0.298 | 0.272 |
| | 0.95 | 0.649 | 0.459 | 0.375 | 0.324 | 0.290 | 0.265 |
| | 0.99 | 0.636 | 0.449 | 0.367 | 0.318 | 0.284 | 0.259 |
| 0.25 | 0.90 | 0.833 | 0.589 | 0.481 | 0.417 | 0.373 | 0.340 |
| | 0.95 | 0.811 | 0.574 | 0.468 | 0.406 | 0.363 | 0.331 |
| | 0.99 | 0.795 | 0.562 | 0.459 | 0.397 | 0.355 | 0.324 |
| 0.30 | 0.90 | 1.00 | 0.707 | 0.577 | 0.500 | 0.447 | 0.408 |
| | 0.95 | 0.973 | 0.688 | 0.562 | 0.487 | 0.435 | 0.397 |
| | 0.99 | 0.953 | 0.674 | 0.550 | 0.477 | 0.426 | 0.389 |
| 0.35 | 0.90 | 1.00 | 0.825 | 0.674 | 0.583 | 0.522 | 0.476 |
| | 0.95 | 1.00 | 0.803 | 0.656 | 0.568 | 0.508 | 0.464 |
| | 0.99 | 1.00 | 0.787 | 0.642 | 0.556 | 0.497 | 0.454 |
| 0.40 | 0.90 | 1.00 | 0.943 | 0.770 | 0.667 | 0.596 | 0.544 |
| | 0.95 | 1.00 | 0.918 | 0.749 | 0.649 | 0.580 | 0.530 |
| | 0.99 | 1.00 | 0.899 | 0.734 | 0.636 | 0.569 | 0.519 |
| 0.45 | 0.90 | 1.00 | 1.00 | 0.866 | 0.750 | 0.671 | 0.612 |
| | 0.95 | 1.00 | 1.00 | 0.843 | 0.730 | 0.653 | 0.596 |
| | 0.99 | 1.00 | 1.00 | 0.826 | 0.715 | 0.640 | 0.584 |
| 0.50 | 0.90 | 1.00 | 1.00 | 0.962 | 0.833 | 0.745 | 0.680 |
| | 0.95 | 1.00 | 1.00 | 0.937 | 0.811 | 0.725 | 0.662 |
| | 0.99 | 1.00 | 1.00 | 0.917 | 0.795 | 0.711 | 0.649 |
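
The tabulated values are consistent with Spearman's correction for attenuation, in which the observed correlation r is divided by the square root of the product of the two reliabilities, r_c = r / √(r_IQ · r_WCST), with values above 1.00 reported as 1.00 (for example, 0.05 / √(0.90 × 0.10) = 0.05 / 0.30 ≈ 0.167). The sketch below regenerates the grid under that reading. The function names and the truncation to the range [−1, 1] are assumptions about how the table was produced.

```python
import math
from typing import List, Sequence, Tuple


def disattenuate(r_obs: float, rel_iq: float, rel_wcst: float) -> float:
    """Spearman's correction for attenuation, truncated to the range [-1, 1]."""
    if not (0.0 < rel_iq <= 1.0 and 0.0 < rel_wcst <= 1.0):
        raise ValueError("reliabilities must lie in (0, 1]")
    corrected = r_obs / math.sqrt(rel_iq * rel_wcst)
    # Low reliabilities can push the corrected value past 1; report such cells as 1.00.
    return max(-1.0, min(1.0, corrected))


def correction_table(
    observed: Sequence[float],
    iq_rels: Sequence[float],
    wcst_rels: Sequence[float],
) -> List[Tuple[float, float, List[float]]]:
    """One row per (observed r, IQ reliability) pair, one cell per WCST reliability."""
    rows = []
    for r in observed:
        for rel_iq in iq_rels:
            cells = [round(disattenuate(r, rel_iq, rel_w), 3) for rel_w in wcst_rels]
            rows.append((r, rel_iq, cells))
    return rows


if __name__ == "__main__":
    observed = [round(0.05 * k, 2) for k in range(1, 11)]  # 0.05, 0.10, ..., 0.50
    iq_rels = [0.90, 0.95, 0.99]
    wcst_rels = [0.10, 0.20, 0.30, 0.40, 0.50, 0.60]
    for r, rel_iq, cells in correction_table(observed, iq_rels, wcst_rels):
        print(f"| {r:.2f} | {rel_iq:.2f} | " + " | ".join(f"{c:.3f}" for c in cells) + " |")
```

Each printed row corresponds to one row of the grid above, with truncated cells shown as 1.000 rather than 1.00.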

References

- Fuster, J. The Prefrontal Cortex, 5th ed.; Academic Press: New York, NY, USA, 2015.

- Miller, B.L.; Cummings, J.L. The Human Frontal Lobes: Functions and Disorders, 3rd ed.; The Guilford Press: New York, NY, USA, 2018.

- Passingham, R.E.; Wise, S.P. The Neurobiology of the Prefrontal Cortex; Oxford University Press: Oxford, UK, 2012.

- Stuss, D.T.; Knight, R.T. Principles of Frontal Lobe Function; Oxford University Press: Oxford, UK, 2013.

- Szczepanski, S.M.; Knight, R.T. Insights into Human Behavior from Lesions to the Prefrontal Cortex. Neuron 2014, 83, 1002–1018.

- Grant, D.A.; Berg, E. A behavioral analysis of degree of reinforcement and ease of shifting to new responses in a Weigl-type card-sorting problem. J. Exp. Psychol. 1948, 38, 404–411.

- Berg, E.A. A Simple Objective Technique for Measuring Flexibility in Thinking. J. Gen. Psychol. 1948, 39, 15–22.

- Grange, J.; Houghton, G. Task Switching and Cognitive Control; Oxford University Press: New York, NY, USA, 2014.

- Kopp, B.; Lange, F.; Steinke, A. The Reliability of the Wisconsin Card Sorting Test in Clinical Practice. Assessment 2019, 1–16.

- Lange, F.; Seer, C.; Müller, D.; Kopp, B. Cognitive caching promotes flexibility in task switching: Evidence from event-related potentials. Sci. Rep. 2015, 5, 1–12.

- Lange, F.; Brückner, C.; Knebel, A.; Seer, C.; Kopp, B. Executive dysfunction in Parkinson’s disease: A meta-analysis on the Wisconsin Card Sorting Test literature. Neurosci. Biobehav. Rev. 2018, 93, 38–56.

- Mitrushina, M.; Boone, K.B.; Razani, J.; D’Elia, L.F. Handbook of Normative Data for Neuropsychological Assessment, 2nd ed.; Oxford University Press: New York, NY, USA, 2005.

- Sherman, E.; Tan, J.; Hrabok, M. A Compendium of Neuropsychological Tests. Fundamentals of Neuropsychological Assessment and Test Reviews for Clinical Practice, 4th ed.; Oxford University Press: Oxford, UK, 2020.

- Teuber, H.L.; Battersby, W.S.; Bender, M.B. Performance of complex visual tasks after cerebral lesions. J. Nerv. Ment. Dis. 1951, 114, 413–429.

- Milner, B. Effects of Different Brain Lesions on Card Sorting. Arch. Neurol. 1963, 9, 90–100.

- Scoville, W.B.; Milner, B. Loss of recent memory after bilateral hippocampal lesions. J. Neurol. Neurosurg. Psychiatry 1957, 20, 11–21.

- Wechsler, D. The Measurement of Adult Intelligence; Williams and Wilkins: Baltimore, MD, USA, 1939.

- Luria, A.R. Two kinds of motor perseveration in massive injury of the frontal lobes. Brain 1965, 88, 1–10.

- Pribram, K.H. The Primate Frontal Cortex—Executive of the Brain. In Psychophysiology of the Frontal Lobes; Pribram, K.H., Luria, A.R., Eds.; Academic Press: New York, NY, USA, 1973; pp. 293–314.

- Teuber, H.L. Unity and diversity of frontal lobe functions. Acta Neurobiol. Exp. (Wars). 1972, 32, 615–656.

- Demakis, G.J. A meta-analytic review of the sensitivity of the Wisconsin Card Sorting Test to frontal and lateralized frontal brain damage. Neuropsychology 2003, 17, 255–264.

- Lange, F.; Seer, C.; Salchow, C.; Dengler, R.; Dressler, D.; Kopp, B. Meta-analytical and electrophysiological evidence for executive dysfunction in primary dystonia. Cortex 2016, 82, 133–146.

- Lange, F.; Seer, C.; Müller-Vahl, K.; Kopp, B. Cognitive flexibility and its electrophysiological correlates in Gilles de la Tourette syndrome. Dev. Cogn. Neurosci. 2017, 27, 78–90.

- Lange, F.; Kip, A.; Klein, T.; Müller, D.; Seer, C.; Kopp, B. Effects of rule uncertainty on cognitive flexibility in a card-sorting paradigm. Acta Psychol. (Amst). 2018, 190, 53–64.

- Nyhus, E.; Barceló, F. The Wisconsin Card Sorting Test and the cognitive assessment of prefrontal executive functions: A critical update. Brain Cogn. 2009, 71, 437–451.

- Lezak, M.D.; Howieson, D.B.; Bigler, E.D.; Tranel, D. Neuropsychological Assessment, 5th ed.; Oxford University Press: Oxford, UK, 2012.

- Banich, M.T. Executive Function: The Search for an Integrated Account. Curr. Dir. Psychol. Sci. 2009, 18, 89–94.

- Diamond, A. Executive Functions. Annu. Rev. Psychol. 2013, 64, 135–168.

- Miller, E.K.; Cohen, J.D. An Integrative Theory of Prefrontal Cortex Function. Annu. Rev. Neurosci. 2001, 24, 167–202.

- Suchy, Y. Executive Functioning: Overview, Assessment, and Research Issues for Non-Neuropsychologists. Ann. Behav. Med. 2009, 37, 106–116.

- Friedman, N.P.; Miyake, A.; Young, S.E.; DeFries, J.C.; Corley, R.P.; Hewitt, J.K. Individual differences in executive functions are almost entirely genetic in origin. J. Exp. Psychol. Gen. 2008, 137, 201–225.

- Friedman, N.P.; Miyake, A.; Altamirano, L.J.; Corley, R.P.; Young, S.E.; Rhea, S.A.; Hewitt, J.K. Stability and change in executive function abilities from late adolescence to early adulthood: A longitudinal twin study. Dev. Psychol. 2016, 52, 326–340.

- Miyake, A.; Friedman, N.P. The Nature and Organization of Individual Differences in Executive Functions. Curr. Dir. Psychol. Sci. 2012, 21, 8–14.

- Friedman, N.P.; Miyake, A. Unity and diversity of executive functions: Individual differences as a window on cognitive structure. Cortex 2017, 86, 186–204.

- Miyake, A.; Friedman, N.P.; Emerson, M.J.; Witzki, A.H.; Howerter, A.; Wager, T.D. The Unity and Diversity of Executive Functions and Their Contributions to Complex “Frontal Lobe” Tasks: A Latent Variable Analysis. Cogn. Psychol. 2000, 41, 49–100.

- Nelson, H.E. A Modified Card Sorting Test Sensitive to Frontal Lobe Defects. Cortex 1976, 12, 313–324.

- Heaton, R.K. Wisconsin Card Sorting Test (WCST); Psychological Assessment Resources: Odessa, FL, USA, 1981.

- Heaton, R.K.; Chelune, G.; Talley, J.L.; Kay, G.G.; Curtis, G. Wisconsin Card Sorting Test Manual: Revised and Expanded; Psychological Assessment Resources: Odessa, FL, USA, 1993.

- Schretlen, D.J. Modified Wisconsin Card Sorting Test (m-WCST); Psychological Assessment Resources: Lutz, FL, USA, 2010.

- Wechsler, D. Measurement of Adult Intelligence, 3rd ed.; Williams and Wilkins: Baltimore, MD, USA, 1944.

- Deary, I.J. Intelligence. Annu. Rev. Psychol. 2012, 63, 453–482.

- Spearman, C. “General intelligence,” objectively determined and measured. Am. J. Psychol. 1904, 15, 201–292.

- Duncan, J. How Intelligence Happens; Yale University Press: London, UK, 2010.

- Jensen, A.R. The G Factor: The Science of Mental Ability; Praeger: New York, NY, USA, 1998.

- Cattell, R.B. Some theoretical issues in adult intelligence testing. Psychol. Bull. 1941, 38, 592.

- Horn, J.L.; Cattell, R.B. Refinement and test of the theory of fluid and crystallized general intelligences. J. Educ. Psychol. 1966, 57, 253–270.

- Carroll, J.B. Human Cognitive Abilities: A Survey of Factor-Analytic Studies; Oxford University Press: New York, NY, USA, 1993.

- McGrew, K.S. CHC theory and the human cognitive abilities project: Standing on the shoulders of the giants of psychometric intelligence research. Intelligence 2009, 37, 1–10.

- Schneider, W.J.; McGrew, K.S. The Cattell–Horn–Carroll Model of Intelligence. In Contemporary Intellectual Assessment: Theories, Tests, and Issues; Flanagan, D.P., Harrison, P.L., Eds.; Guilford Press: New York, NY, USA, 2012; pp. 99–144.

- Wechsler, D. Wechsler Adult Intelligence Scale: Manual; The Psychological Corporation: New York, NY, USA, 1955.

- Wechsler, D. Wechsler Adult Intelligence Scale–Revised; The Psychological Corporation: New York, NY, USA, 1981.

- Wechsler, D. Wechsler Adult Intelligence Scale (WAIS-III): Administration and Scoring Manual; The Psychological Corporation: San Antonio, TX, USA, 1997.

- Wechsler, D. Wechsler Adult Intelligence Scale (WAIS–IV); The Psychological Corporation: San Antonio, TX, USA, 2008.

- Wechsler, D. Wechsler Intelligence Scale for Children (WISC): Manual; The Psychological Corporation: New York, NY, USA, 1949.

- Wechsler, D. Manual of the Wechsler Intelligence Scale for Children–Revised; The Psychological Corporation: New York, NY, USA, 1974.

- Wechsler, D. WISC-III Wechsler Intelligence Scale for Children: Manual; The Psychological Corporation: San Antonio, TX, USA, 1991.

- Wechsler, D. Wechsler Intelligence Scale for Children (WISC-IV); The Psychological Corporation: San Antonio, TX, USA, 2003.

- Jewsbury, P.A.; Bowden, S.C.; Duff, K. The Cattell–Horn–Carroll Model of Cognition for Clinical Assessment. J. Psychoeduc. Assess. 2017, 35, 547–567.

- Nelson, H.E. The National Adult Reading Test (NART): Test Manual; NFER-Nelson: Windsor, UK, 1982.

- Crawford, J.R.; Parker, D.M.; Stewart, L.E.; Besson, J.A.O.; De Lacey, G. Prediction of WAIS IQ with the National Adult Reading Test: Cross-validation and extension. Br. J. Clin. Psychol. 1989, 28, 267–273.

- Raven, J.C.; Court, J.; Raven, J. Manual for Raven’s Progressive Matrices; H. K. Lewis: London, UK, 1976.

- Cattell, R.B.; Cattell, A.K. Measuring Intelligence with the Culture Fair Tests; Institute for Personality and Ability Testing: Champaign, IL, USA, 1973.

- Floyd, R.G.; Bergeron, R.; Hamilton, G.; Parra, G.R. How do executive functions fit with the Cattell-Horn-Carroll model? Some evidence from a joint factor analysis of the Delis-Kaplan Executive Function System and the Woodcock-Johnson III tests of cognitive abilities. Psychol. Sch. 2010, 47, 721–738.

- Hoelzle, J.B. Neuropsychological Assessment and the Cattell-Horn-Carroll (CHC) Cognitive Abilities Model; University of Toledo: Toledo, OH, USA, 2008.

- Jewsbury, P.A.; Bowden, S.C.; Strauss, M.E. Confirmatory factor analysis of executive function models: A Cattell-Horn-Carroll based reanalysis integrating the switching, inhibition, and updating model of executive function with the Cattell–Horn–Carroll model. J. Exp. Psychol. Gen. 2016, 145, 220–245.

- Roberds, E.L. Evaluating the Relationship Between CHC Factors and Executive Functioning, Ball State University. Available online: http://cardinalscholar.bsu.edu/handle/123456789/199524 (accessed on 2 May 2015).

- Salthouse, T.A. Relations Between Cognitive Abilities and Measures of Executive Functioning. Neuropsychology 2005, 19, 532–545.

- van Aken, L.; Kessels, R.P.C.; Wingbermühle, E.; Wiltink, M.; van der Heijden, P.T.; Egger, J.I.M. Exploring the incorporation of executive functions in intelligence testing: Factor analysis of the WAIS-III and traditional tasks of executive functioning. Int. J. Appl. Psychol. 2014, 4, 73–80.

- van Aken, L.; Kessels, R.P.C.; Wingbermühle, E.; van der Veld, W.M.; Egger, J.I.M. Fluid intelligence and executive functioning more alike than different? Acta Neuropsychiatr. 2016, 28, 31–37.

- van Aken, L.; van der Heijden, P.T.; van der Veld, W.M.; Hermans, L.; Kessels, R.P.C.; Egger, J.I.M. Representation of the Cattell–Horn–Carroll Theory of Cognitive Abilities in the Factor Structure of the Dutch-Language Version of the WAIS-IV. Assessment 2017, 24, 458–466.

- van Aken, L.; van der Heijden, P.T.; Oomens, W.; Kessels, R.P.C.; Egger, J.I.M. Predictive Value of Traditional Measures of Executive Function on Broad Abilities of the Cattell–Horn–Carroll Theory of Cognitive Abilities. Assessment 2017, 26, 1375–1385.

- Roca, M.; Parr, A.; Thompson, R.; Woolgar, A.; Torralva, T.; Antoun, N.; Manes, F.; Duncan, J. Executive function and fluid intelligence after frontal lobe lesions. Brain 2010, 133, 234–247.

- Cronbach, L.J. Essentials of Psychological Testing, 3rd ed.; Harper & Row: New York, NY, USA, 1970.

- Jewsbury, P.A.; Bowden, S.C. Construct Validity has a Critical Role in Evidence-Based Neuropsychological Assessment. In Neuropsychological Assessment in the Age of Evidence-Based Practice; Oxford University Press: New York, NY, USA, 2017; pp. 33–63.

- Schönbrodt, F.D.; Perugini, M. At what sample size do correlations stabilize? J. Res. Pers. 2013, 47, 609–612.

- Field, A.P.; Gillett, R. How to do a meta-analysis. Br. J. Math. Stat. Psychol. 2010, 63, 665–694.

- Strauss, E.; Sherman, E.M.S.; Spreen, O. A Compendium of Neuropsychological Tests: Administration, Norms, and Commentary; Oxford University Press: New York, NY, USA, 2006.

- Lehrl, S. Mehrfach-Wortschatz-Intelligenztest (MWT-B): Handbuch; Straube: Erlangen, Germany, 1977.

- Ammons, R.B.; Ammons, C.H. The Quick Test (QT): Provisional Manual. Psychol. Rep. 1962, 11, 111–118.

- Zachary, R.A.; Shipley, W.C. Shipley Institute of Living Scale: Revised Manual, 4th ed.; Western Psychological Services: Los Angeles, CA, USA, 1986.

- Thorndike, R.L. The Stanford-Binet Intelligence Scale: Guide for Administering and Scoring; The Riverside Publishing Company: Chicago, IL, USA, 1986.

- Thurstone, L.; Thurstone, T. SRA Primary Mental Abilities Test; Science Research Associates: Chicago, IL, USA, 1949.

- Kaufman, A.S.; Kaufman, N.L. Kaufman Brief Intelligence Test: Manual; American Guidance Service: Circle Pines, MN, USA, 1990.

- Ardila, A.; Pineda, D.; Rosselli, M. Correlation Between Intelligence Test Scores and Executive Function Measures. Arch. Clin. Neuropsychol. 2000, 15, 31–36.

- Bird, C.M.; Papadopoulou, K.; Ricciardelli, P.; Rossor, M.N.; Cipolotti, L. Monitoring cognitive changes: Psychometric properties of six cognitive tests. Br. J. Clin. Psychol. 2004, 43, 197–210.

- Boone, K.B.; Pontón, M.O.; Gorsuch, R.L.; González, J.J.; Miller, B.L. Factor Analysis of Four Measures of Prefrontal Lobe Functioning. Arch. Clin. Neuropsychol. 1998, 13, 585–595.

- Chien, C.C.; Huang, S.F.; Lung, F.W. Maximally efficient two-stage screening: Determining intellectual disability in Taiwanese military conscripts. J. Multidiscip. Healthc. 2009, 2, 39–44.

- Cianchetti, C.; Corona, S.; Foscoliano, M.; Contu, D.; Sannio-Fancello, G. Modified Wisconsin Card Sorting Test (MCST, MWCST): Normative Data in Children 4–13 Years Old, According to Classical and New Types of Scoring. Clin. Neuropsychol. 2007, 21, 456–478.

- Crawford, J.R.; Bryan, J.; Luszcz, M.A.; Obonsawin, M.C.; Stewart, L. The Executive Decline Hypothesis of Cognitive Aging: Do Executive Deficits Qualify as Differential Deficits and Do They Mediate Age-Related Memory Decline? Aging, Neuropsychol. Cogn. 2000, 7, 9–31.

- Davis, R.N.; Nolen-Hoeksema, S. Cognitive Inflexibility Among Ruminators and Nonruminators. Cognit. Ther. Res. 2000, 24, 699–711.

- de Zubicaray, G.I.; Smith, G.A.; Chalk, J.B.; Semple, J. The Modified Card Sorting Test: Test-retest stability and relationships with demographic variables in a healthy older adult sample. Br. Psychol. Soc. 1998, 37, 457–466.

- Dieci, M.; Vita, A.; Silenzi, C.; Caputo, A.; Comazzi, M.; Ferrari, L.; Ghiringhelli, L.; Mezzetti, M.; Tenconi, F.; Invernizzi, G. Non-selective impairment of Wisconsin Card Sorting Test performance in patients with schizophrenia. Schizophr. Res. 1997, 25, 33–42.

- Dolan, M.; Millington, J.; Park, I. Personality and Neuropsychological Function in Violent, Sexual and Arson Offenders. Med. Sci. Law 2002, 42, 34–43.

- Evans, L.D.; Kouros, C.D.; Samanez-Larkin, S.; Garber, J. Concurrent and Short-Term Prospective Relations among Neurocognitive Functioning, Coping, and Depressive Symptoms in Youth. J. Clin. Child Adolesc. Psychol. 2016, 45, 6–20.

- Giovagnoli, A.R. Relation of sorting impairment to hippocampal damage in temporal lobe epilepsy. Neuropsychologia 2001, 39, 140–150.

- Golden, C.J.; Kushner, T.; Lee, B.; McMorrow, M.A. Searching for the meaning of the Category Test and the Wisconsin Card Sort Test: A Comparative analysis. Int. J. Neurosci. 1998, 93, 141–150.

- Han, G.; Helm, J.; Iucha, C.; Zahn-Waxler, C.; Hastings, P.D.; Klimes-Dougan, B. Are Executive Functioning Deficits Concurrently and Predictively Associated with Depressive and Anxiety Symptoms in Adolescents? J. Clin. Child Adolesc. Psychol. 2016, 45, 44–58.

- Heinrichs, R.W. Variables associated with Wisconsin Card Sorting Test performance in neuropsychiatric patients referred for assessment. Neuropsychiatry, Neuropsychol. Behav. Neurol. 1990, 3, 107–112.

- Ilonen, T.; Taiminen, T.; Lauerma, H.; Karlsson, H.; Helenius, H.Y.M.; Tuimala, P.; Leinonen, K.-M.; Wallenius, E.; Salokangas, R.K.R. Impaired Wisconsin Card Sorting Test performance in first-episode schizophrenia: Resource or motivation deficit? Compr. Psychiatry 2000, 41, 385–391.

- Isingrini, M.; Vazou, F. Relation between Fluid Intelligence and Frontal Lobe Functioning in Older Adults. Int. J. Aging Hum. Dev. 1997, 45, 99–109.

- Keefe, R.S.E.; Silverman, J.M.; Lees Roitman, S.E.; Harvey, P.D.; Duncan, M.A.; Alroy, D.; Siever, L.J.; Davis, K.L.; Mohs, R.C. Performance of nonpsychotic relatives of schizophrenic patients on cognitive tests. Psychiatry Res. 1994, 53, 1–12.

- Kilincaslan, A.; Motavalli Mukkaddes, N.; Kücükyazici, G.S.; Gürvit, H. Assessment of executive/attentional performance in Asperger’s disorder. Turkish J. Psychiatry 2010, 21, 289–299.

- Lee, S.J.; Lee, H.-K.; Kweon, Y.-S.; Lee, C.T.; Lee, K.-U. The Impact of Executive Function on Emotion Recognition and Emotion Experience in Patients with Schizophrenia. Psychiatry Investig. 2009, 6, 156–162.

- Lehto, J.E.; Elorinne, E. Gambling as an Executive Function Task. Appl. Neuropsychol. 2003, 10, 234–238.

- Lehto, J.E. A Test for Children’s Goal-Directed Behavior: A Pilot Study. Percept. Mot. Skills 2004, 98, 223–236.

- LeMonda, B.C.; Holtzer, R.; Goldman, S. Relationship between executive functions and motor stereotypies in children with autistic disorder. Res. Autism Spectr. Disord. 2012, 6, 1099–1106.

- Lichtenstein, J.D.; Erdodi, L.A.; Rai, J.K.; Mazur-Mosiewicz, A.; Flaro, L. Wisconsin Card Sorting Test embedded validity indicators developed for adults can be extended to children. Child Neuropsychol. 2018, 24, 247–260.

- Lineweaver, T.T.; Bondi, M.W.; Thomas, R.G.; Salmon, D.P. A Normative Study of Nelson’s (1976) Modified Version of the Wisconsin Card Sorting Test in Healthy Older Adults. Clin. Neuropsychol. 1999, 13, 328–347.

- Liss, M.; Fein, D.; Allen, D.; Dunn, M.; Feinstein, C.; Morris, R.; Waterhouse, L.; Rapin, I. Executive Functioning in High-functioning Children with Autism. J. Child Psychol. Psychiatry 2001, 42, 261–270.

- Lucey, J.V.; Burness, C.E.; Costa, D.C.; Gacinovic, S.; Pilowsky, L.S.; Ell, P.J.; Marks, I.M.; Kerwin, R.W. Wisconsin Card Sorting Task (WCST) errors and cerebral blood flow in obsessive-compulsive disorder (OCD). Br. J. Med. Psychol. 1997, 70, 403–411.

- Minshew, N.J.; Meyer, J.; Goldstein, G. Abstract reasoning in autism: A dissociation between concept formation and concept identification. Neuropsychology 2002, 16, 327–334.

- Mullane, J.C.; Corkum, P.V. The Relationship Between Working Memory, Inhibition, and Performance on the Wisconsin Card Sorting Test in Children With and Without ADHD. J. Psychoeduc. Assess. 2007, 25, 211–221.

- Nestor, P.G.; Nakamura, M.; Niznikiewicz, M.; Levitt, J.J.; Newell, D.T.; Shenton, M.E.; McCarley, R.W. Attentional Control and Intelligence: MRI Orbital Frontal Gray Matter and Neuropsychological Correlates. Behav. Neurol. 2015, 2015, 1–8.

- Obonsawin, M.C.; Crawford, J.R.; Page, J.; Chalmers, P.; Low, G.; Marsh, P. Performance on the Modified Card Sorting Test by normal, healthy individuals: Relationship to general intellectual ability and demographic variables. Br. J. Clin. Psychol. 1999, 38, 27–41.

- Obonsawin, M.; Crawford, J.R.; Page, J.; Chalmers, P.; Cochrane, R.; Low, G. Performance on tests of frontal lobe function reflect general intellectual ability. Neuropsychologia 2002, 40, 970–977.

- Owashi, T.; Iwanami, A.; Nakagome, K.; Higuchi, T.; Kamijima, K. Thought Disorder and Executive Dysfunction in Patients with Schizophrenia. Int. J. Neurosci. 2009, 119, 105–123.

- Perry, W.; Braff, D.L. A multimethod approach to assessing perseverations in schizophrenia patients. Schizophr. Res. 1998, 33, 69–77.

- Roca, M.; Manes, F.; Chade, A.; Gleichgerrcht, E.; Gershanik, O.; Arévalo, G.G.; Torralva, T.; Duncan, J. The relationship between executive functions and fluid intelligence in Parkinson’s disease. Psychol. Med. 2012, 42, 2445–2452.

- Rossell, S.L.; Coakes, J.; Shapleske, J.; Woodruff, P.W.R.; David, A.S. Insight: Its relationship with cognitive function, brain volume and symptoms in schizophrenia. Psychol. Med. 2003, 33, 111–119.

- Salthouse, T.A.; Fristoe, N.; Rhee, S.H. How localized are age-related effects on neuropsychological measures? Neuropsychology 1996, 10, 272–285.

- Schiebener, J.; García-Arias, M.; García-Villamisar, D.; Cabanyes-Truffino, J.; Brand, M. Developmental changes in decision making under risk: The role of executive functions and reasoning abilities in 8- to 19-year-old decision makers. Child Neuropsychol. 2015, 21, 759–778.

- Shura, R.D.; Miskey, H.M.; Rowland, J.A.; Yoash-Gantz, R.E.; Denning, J.H. Embedded Performance Validity Measures with Postdeployment Veterans: Cross-Validation and Efficiency with Multiple Measures. Appl. Neuropsychol. Adult 2016, 23, 94–104.

- South, M.; Ozonoff, S.; McMahon, W.M. The relationship between executive functioning, central coherence, and repetitive behaviors in the high-functioning autism spectrum. Autism 2007, 11, 437–451.

- Steingass, H.-P.; Sartory, G.; Canavan, A.G.M. Chronic alcoholism and cognitive function: General decline or patterned impairment? Pers. Individ. Dif. 1994, 17, 97–109.

- Sweeney, J.A.; Keilp, J.G.; Haas, G.L.; Hill, J.; Weiden, P.J. Relationships between medication treatments and neuropsychological test performance in schizophrenia. Psychiatry Res. 1991, 37, 297–308.

- Syngelaki, E.M.; Moore, S.C.; Savage, J.C.; Fairchild, G.; Van Goozen, S.H.M. Executive Functioning and Risky Decision Making in Young Male Offenders. Crim. Justice Behav. 2009, 36, 1213–1227.

- Taconnat, L.; Clarys, D.; Vanneste, S.; Bouazzaoui, B.; Isingrini, M. Aging and strategic retrieval in a cued-recall test: The role of executive functions and fluid intelligence. Brain Cogn. 2007, 64, 1–6.

- Whiteside, D.M.; Kealey, T.; Semla, M.; Luu, H.; Rice, L.; Basso, M.R.; Roper, B. Verbal Fluency: Language or Executive Function Measure? Appl. Neuropsychol. Adult 2016, 23, 29–34.

- Yasuda, Y. Cognitive inflexibility in Japanese adolescents and adults with autism spectrum disorders. World J. Psychiatry 2014, 4, 42.

- Hedges, L.V.; Vevea, J.L. Fixed-and random-effects models in meta-anylsis. Psychol. Methods 1998, 3, 486–504. [Google Scholar] [CrossRef]

- Higgins, J.P.T.; Thompson, S.G.; Deeks, J.J.; Altman, D.G. Measuring inconsistency in meta-analyses. BMJ 2003, 327, 557–560.

- Wan, X.; Wang, W.; Liu, J.; Tong, T. Estimating the sample mean and standard deviation from the sample size, median, range and/or interquartile range. BMC Med. Res. Methodol. 2014, 14, 135.

- Carter, E.C.; McCullough, M.E. Publication bias and the limited strength model of self-control: Has the evidence for ego depletion been overestimated? Front. Psychol. 2014, 5, 1–11.

- VassarStats: Website for Statistical Computation. Available online: http://vassarstats.net/ (accessed on 16 April 2018).

- Cohen, J. A power primer. Psychol. Bull. 1992, 112, 155–159.

- Friedman, N.P.; Miyake, A.; Robinson, J.L.; Hewitt, J.K. Developmental trajectories in toddlers’ self-restraint predict individual differences in executive functions 14 years later: A behavioral genetic analysis. Dev. Psychol. 2011, 47, 1410–1430.

- Spearman, C. The proof and measurement of association between two things. Am. J. Psychol. 1904, 15, 72–101.

- Hunter, J.E.; Schmidt, F.L. Cumulative research knowledge and social policy formulation: The critical role of meta-analysis. Psychol. Public Policy Law 1996, 2, 324–347.

- Nunnally, J.C.; Bernstein, I.H. Psychometric Theory, 3rd ed.; McGraw-Hill: New York, NY, USA, 1994.

- Tranel, D.; Manzel, K.; Anderson, S.W. Is the Prefrontal Cortex Important For Fluid Intelligence? A Neuropsychological Study Using Matrix Reasoning. Clin. Neuropsychol. 2008, 22, 242–261.

- Nenty, H.J.; Dinero, T.E. A Cross-Cultural Analysis of the Fairness of the Cattell Culture Fair Intelligence Test Using the Rasch Model. Appl. Psychol. Meas. 1981, 5, 355–368.

- Burke, H.R. Raven’s Progressive Matrices: Validity, Reliability, and Norms. J. Psychol. 1972, 82, 253–257.

- Duncan, J.; Seitz, R.J.; Kolodny, J.; Bor, D.; Herzog, H.; Ahmed, A.; Newell, F.N.; Emslie, H. A Neural Basis for General Intelligence. Science 2000, 289, 457–460.

- Gläscher, J.; Tranel, D.; Paul, L.K.; Rudrauf, D.; Rorden, C.; Hornaday, A.; Grabowski, T.; Damasio, H.; Adolphs, R. Lesion Mapping of Cognitive Abilities Linked to Intelligence. Neuron 2009, 61, 681–691.

- Gläscher, J.; Rudrauf, D.; Colom, R.; Paul, L.K.; Tranel, D.; Damasio, H.; Adolphs, R. Distributed neural system for general intelligence revealed by lesion mapping. Proc. Natl. Acad. Sci. 2010, 107, 4705–4709.

- Basten, U.; Hilger, K.; Fiebach, C.J. Where smart brains are different: A quantitative meta-analysis of functional and structural brain imaging studies on intelligence. Intelligence 2015, 51, 10–27.

- Deary, I.J.; Penke, L.; Johnson, W. The neuroscience of human intelligence differences. Nat. Rev. Neurosci. 2010, 11, 201–211.

- Euler, M.J. Intelligence and uncertainty: Implications of hierarchical predictive processing for the neuroscience of cognitive ability. Neurosci. Biobehav. Rev. 2018, 94, 93–112.

- Lange, F.; Seer, C.; Kopp, B. Cognitive flexibility in neurological disorders: Cognitive components and event-related potentials. Neurosci. Biobehav. Rev. 2017, 83, 496–507.

- Christoff, K.; Prabhakaran, V.; Dorfman, J.; Zhao, Z.; Kroger, J.K.; Holyoak, K.J.; Gabrieli, J.D.E. Rostrolateral Prefrontal Cortex Involvement in Relational Integration during Reasoning. Neuroimage 2001, 14, 1136–1149.

- Kroger, J.K.; Sabb, F.W.; Fales, C.L.; Bookheimer, S.Y.; Cohen, M.S.; Holyoak, K.J. Recruitment of Anterior Dorsolateral Prefrontal Cortex in Human Reasoning: A Parametric Study of Relational Complexity. Cereb. Cortex 2002, 12, 477–485.

- Morrison, R.G.; Krawczyk, D.C.; Holyoak, K.J.; Hummel, J.E.; Chow, T.W.; Miller, B.L.; Knowlton, B.J. A Neurocomputational Model of Analogical Reasoning and its Breakdown in Frontotemporal Lobar Degeneration. J. Cogn. Neurosci. 2004, 16, 260–271.

- Waltz, J.A.; Knowlton, B.J.; Holyoak, K.J.; Boone, K.B.; Mishkin, F.S.; de Menezes Santos, M.; Thomas, C.R.; Miller, B.L. A System for Relational Reasoning in Human Prefrontal Cortex. Psychol. Sci. 1999, 10, 119–125.

- Waltz, J.A.; Knowlton, B.J.; Holyoak, K.J.; Boone, K.B.; Back-Madruga, C.; McPherson, S.; Masterman, D.; Chow, T.; Cummings, J.L.; Miller, B.L. Relational Integration and Executive Function in Alzheimer’s Disease. Neuropsychology 2004, 18, 296–305.

- Schmidt, F.L.; Hunter, J.E. Methods of Meta-Analysis: Correcting Error and Bias in Research Findings, 3rd ed.; Sage Publications: London, UK, 2015.

- Chapman, L.J.; Chapman, J.P. Problems in the measurement of cognitive deficits. Psychol. Bull. 1973, 79, 380–385.

- Chapman, L.J.; Chapman, J.P. The measurement of differential deficit. J. Psychiatr. Res. 1978, 14, 303–311.

- Greve, K.W.; Ingram, F.; Bianchini, K.J.; Stanford, M.S. Latent Structure of the Wisconsin Card Sorting Test in a Clinical Sample. Arch. Clin. Neuropsychol. 1998, 13, 597–609.

- Greve, K.W.; Stickle, T.R.; Love, J.M.; Bianchini, K.J.; Stanford, M.S. Latent structure of the Wisconsin Card Sorting Test: A confirmatory factor analytic study. Arch. Clin. Neuropsychol. 2005, 20, 355–364.

- Paolo, A.M.; Tröster, A.I.; Axelrod, B.N.; Koller, W.C. Construct validity of the WCST in normal elderly and persons with Parkinson’s disease. Arch. Clin. Neuropsychol. 1995, 10, 463–473.

- Sánchez-Cubillo, I.; Periáñez, J.A.; Adrover-Roig, D.; Rodríguez-Sánchez, J.M.; Ríos-Lago, M.; Tirapu, J.; Barceló, F. Construct validity of the Trail Making Test: Role of task-switching, working memory, inhibition/interference control, and visuomotor abilities. J. Int. Neuropsychol. Soc. 2009, 15, 438–450.

- Bowden, S.C. Neuropsychological Assessment in the Age of Evidence-Based Practice; Oxford University Press: New York, NY, USA, 2017.

- Chelune, G.J. Evidence-Based Practices in Neuropsychology. In Neuropsychological Assessment in the Age of Evidence-Based Practice; Bowden, S.C., Ed.; Oxford University Press: New York, NY, USA, 2017; pp. 155–181.

- Greve, K.W.; Love, J.M.; Sherwin, E.; Mathias, C.W.; Houston, R.J.; Brennan, A. Temporal Stability of the Wisconsin Card Sorting Test in a Chronic Traumatic Brain Injury Sample. Assessment 2002, 9, 271–277.

- Tate, R.L.; Perdices, M.; Maggiotto, S. Stability of the Wisconsin Card Sorting Test and the Determination of Reliability of Change in Scores. Clin. Neuropsychol. 1998, 12, 348–357.

- Charter, R.A. Sample Size Requirements for Precise Estimates of Reliability, Generalizability, and Validity Coefficients. J. Clin. Exp. Neuropsychol. 1999, 21, 559–566.

- Basso, M.R.; Bornstein, R.A.; Lang, J.M. Practice Effects on Commonly Used Measures of Executive Function Across Twelve Months. Clin. Neuropsychol. 1999, 13, 283–292.

- Bowden, S.C.; Fowler, K.S.; Bell, R.C.; Whelan, G.; Clifford, C.C.; Ritter, A.J.; Long, C.M. The Reliability and Internal Validity of the Wisconsin Card Sorting Test. Neuropsychol. Rehabil. 1998, 8, 243–254.

- Ingram, F.; Greve, K.W.; Ingram, P.T.F.; Soukup, V.M. Temporal stability of the Wisconsin Card Sorting Test in an untreated patient sample. Br. J. Clin. Psychol. 1999, 38, 209–211.

- Paolo, A.M.; Axelrod, B.N.; Tröster, A.I. Test-Retest Stability of the Wisconsin Card Sorting Test. Assessment 1996, 3, 137–143.

- Steinmetz, J.-P.; Brunner, M.; Loarer, E.; Houssemand, C. Incomplete psychometric equivalence of scores obtained on the manual and the computer version of the Wisconsin Card Sorting Test? Psychol. Assess. 2010, 22, 199–202.

| First Author | Year | N | Sample | % Fem | Age (M) | Age (SD) | WCST Version | FSIQ | VIQ | PIQ | Cor |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Ardila [84] | 2000 | 50 | children (healthy) | 0.0 | 14.4 | 1.0 | Heaton, 1981 | WISC-R | WISC-R | WISC-R | |
| Bird [85] | 2004 | 90 | adults (healthy) | 62.2 | 57.0 | 8.3 | Nelson, 1976 | NART | mix | ||
| Boone [86] | 1998 | 250 | adults (healthy and patients, psy & neuro) | 46.0 | 55.5 | 15.5 | Heaton, 1981 | WAIS-R | WAIS-R | ||
| Chien [87] | 2009 | 99 | adults (healthy) | 0.0 | 20.2 | 0.6 | Heaton, 1993 (c) | WAIS-R | |||
| Cianchetti [88] | 2007 | 101 | children (healthy) | 52.5 | 4.0 | 0.0 | Nelson, 1976 | RPM | P | ||
| Cianchetti | 2007 | 113 | children (healthy) | 50.4 | 5.0 | 0.0 | Nelson, 1976 | RPM | P | ||
| Cianchetti | 2007 | 119 | children (healthy) | 47.1 | 6.0 | 0.0 | Nelson, 1976 | RPM | P | ||
| Cianchetti | 2007 | 161 | children (healthy) | 52.8 | 7.0 | 0.0 | Nelson, 1976 | RPM | P | ||
| Cianchetti | 2007 | 94 | children (healthy) | 52.1 | 8.0 | 0.0 | Nelson, 1976 | RPM | P | ||
| Cianchetti | 2007 | 98 | children (healthy) | 50.0 | 9.0 | 0.0 | Nelson, 1976 | RPM | P | ||
| Cianchetti | 2007 | 119 | children (healthy) | 50.4 | 10.0 | 0.0 | Nelson, 1976 | RPM | P | ||
| Cianchetti | 2007 | 122 | children (healthy) | 48.4 | 11.0 | 0.0 | Nelson, 1976 | RPM | P | ||
| Cianchetti | 2007 | 100 | children (healthy) | 50.0 | 12.0 | 0.0 | Nelson, 1976 | RPM | P | ||
| Cianchetti | 2007 | 99 | children (healthy) | 48.5 | 13.0 | 0.0 | Nelson, 1976 | RPM | P | ||
| Crawford [89] | 2000 | 123 | adults (healthy) | 61.0 | 39.4 | 13.4 | Nelson, 1976 | WAIS-R | WAIS-R | P | |
| Crawford | 1999 | 90 | adults (healthy) | 55.6 | 72.8 | 6.5 | Nelson, 1976 | WAIS-R (s) | P | ||
| Davis [90] | 2000 | 62 | adults (healthy) | 51.6 | 20.3 | 1.5 | Heaton et al., 1993 | WAIS-III (s) | PMA (s) | P | |
| de Zubicaray [91] | 1998 | 36 | adults (healthy) | 66.7 | 70.1 | 5.6 | Nelson, 1976 | WAIS-R | WAIS-R | WAIS-R | S |
| Dieci [92] | 1997 | 88 | adults (healthy and patients, psy) | 28.4 | 27.3 | 7.0 | Heaton, 1981 | WAIS-R | WAIS-R | WAIS-R | S |
| Dolan [93] | 2002 | 60 | adults (patients, psy) | 0.0 | 29.8 | 6.6 | Heaton, 1981 | NART | S | ||
| Evans [94] | 2016 | 192 | children (healthy) | 52.1 | 12.4 | 1.8 | Heaton et al., 1993 | WASI (s) | P | ||
| Giovagnoli [95] | 2001 | 26 | adults (patients, neuro) | 36.8 | 10.9 | Nelson, 1976 | RPM | S | |||
| Giovagnoli | 2001 | 21 | adults (patients, neuro) | 33.3 | 11.2 | Nelson, 1976 | RPM | S | |||
| Giovagnoli | 2001 | 18 | adults (patients, neuro) | 36.6 | 13.4 | Nelson, 1976 | RPM | S | |||
| Giovagnoli | 2001 | 15 | adults (patients, neuro) | 41.4 | 9.8 | Nelson, 1976 | RPM | S | |||
| Giovagnoli | 2001 | 14 | adults (patients, neuro) | 30.7 | 8.8 | Nelson, 1976 | RPM | S | |||
| Giovagnoli | 2001 | 18 | adults (patients, neuro) | 32.6 | 12.2 | Nelson, 1976 | RPM | S | |||
| Giovagnoli | 2001 | 30 | adults (patients, neuro) | 35.2 | 14.3 | Nelson, 1976 | RPM | S | |||
| Giovagnoli | 2001 | 23 | adults (patients, neuro) | 35.6 | 13.4 | Nelson, 1976 | RPM | S | |||
| Giovagnoli | 2001 | 36 | adults (healthy) | 36.1 | 10.7 | Nelson, 1976 | RPM | S | |||
| Golden [96] | 1998 | 112 | adults (patients, neuro) | 48.2 | 37.4 | 13.3 | Heaton, 1981 | WAIS-R | WAIS-R | WAIS-R | P |
| Han [97] | 2016 | 180 | adolescents (healthy and patients, psy) | 49.5 | 13.7 | 1.5 | Heaton, 1981 (c) | K-BIT (s) | |||
| Heinrichs [98] | 1990 | 56 | adults (patients, neuro) | 30.4 | 43.8 | 13.6 | Heaton, 1981 | WAIS-R | P | ||
| Ilonen [99] | 2000 | 27 | adults (patients, psy) | 63.0 | 33.0 | 13.6 | Heaton et al., 1993 | WAIS-R | S | ||
| Isingrini [100] | 1997 | 35 | adults (healthy) | 57.1 | 35.5 | 7.6 | Nelson, 1976 | WAIS (s) | CM | ||
| Isingrini | 1997 | 72 | adults (healthy) | 48.6 | 80.6 | 8.6 | Nelson, 1976 | WAIS (s) | CM | ||
| Keefe [101] | 1994 | 54 | adults (healthy and patients, psy) | 59.3 | 34.8 | 10.5 | Heaton, 1981 | WAIS-R (s,v) | WAIS-R (s) | P | |
| Kilincaslan [102] | 2010 | 39 | children (healthy and patients, psy) | 15.4 | 12.2 | 2.7 | Heaton, 1993 (c) | WISC-R | WISC-R | WISC-R | |
| Lee [103] | 2009 | 39 | adults (patients, psy) | 51.3 | 32.4 | 7.2 | Heaton, 1993 (c) | WAIS-R (s) | P | ||
| Lee | 2009 | 33 | adults (healthy) | 57.6 | 29.0 | 8.9 | Heaton, 1993 (c) | WAIS-R (s) | P | ||
| Lehto [104] | 2003 | 51 | children (healthy) | 41.2 | 9.2 | 0.3 | Heaton, 1981 | RPM | P | ||
| Lehto | 2003 | 40 | adults (healthy) | 62.5 | 30.1 | 9.6 | Heaton, 1981 | RPM | P | ||
| Lehto [105] | 2004 | 46 | children (healthy) | 43.5 | 12.5 | 0.3 | Heaton, 1981 | RPM | P | ||
| LeMonda [106] | 2012 | 44 | children (patients, psy) | 22.7 | 8.1 | 1.0 | Heaton et al., 1993 | WISC-R (s)/SB4(s) | |||
| Lichtenstein [107] | 2018 | 226 | adults and adolescents (healthy and patients, psy) | 35.4 | 13.6 | 2.6 | Heaton et al., 1993 | WISC-III & WISC-IV | P | ||
| Lineweaver [108] | 1999 | 229 | adults (healthy) | 57.6 | 69.1 | 8.6 | Nelson, 1976 | WAIS-R (s) | WAIS-R (s) | WAIS-R (s) | S |
| Liss [109] | 2001 | 21 | children (patients, psy) | 14.3 | 9.2 | 0.3 | Heaton et al., 1993 | n/a | n/a | n/a | P |
| Liss | 2001 | 34 | children (patients, psy) | 29.4 | 9.1 | 0.1 | Heaton et al., 1993 | n/a | n/a | n/a | P |
| Lucey [110] | 1997 | 38 | adults (healthy and patients, psy) | 47.4 | 38.0 | 11.5 | Heaton, 1981 | NART | P | ||
| Minshew [111] | 2002 | 90 | adults and adolescents (patients, psy) | 21.4 | 9.7 | Heaton et al., 1993 | WAIS-R | ||||
| Minshew | 2002 | 107 | adults and adolescents (healthy) | 21.2 | 9.8 | Heaton et al., 1993 | WAIS-R | ||||
| Mullane [112] | 2007 | 30 | children (healthy and patients, psy) | 26.7 | 8.8 | 1.2 | Heaton et al., 1993 | WISC-III (s) | P | ||
| Nestor [113] | 2015 | 81 | adults (healthy) | 40.8 | 9.1 | Heaton, 1981 | WAIS-III | WAIS-III | WAIS-III | P | |
| Obonsawin [114] | 1999 | 146 | adults and adolescents (healthy) | 47.3 | 40.3 | 14.0 | Nelson, 1976 | WAIS-R | WAIS-R | WAIS-R | K |
| Obonsawin [115] | 2002 | 123 | adults (healthy) | 38.2 | 40.3 | 14.0 | Nelson, 1976 | WAIS-R | P | ||
| Owashi [116] | 2009 | 27 | adults (patients, psy) | 55.6 | 41.5 | 10.1 | Heaton, 1993 (c) | WAIS-R (s) | S | ||
| Perry [117] | 1998 | 71 | adults (patients, psy) | 60.6 | 34.2 | 8.7 | Heaton, 1981 | WAIS-R (s,v) | P | ||
| Roca [118] | 2012 | 31 | adults (healthy and patients, neuro) | 60.6 | 8.0 | Nelson, 1976 | RCPM | ||||
| Roca [72] | 2010 | 74 | adults (healthy and patients, neuro) | 49.9 | 12.6 | Nelson, 1976 | CFT (s) | P | |||
| Rossell [119] | 2003 | 78 | adults (patients, psy) | 0.0 | 33.7 | 8.5 | Heaton, 1981 | NART | P | ||
| Salthouse [120] | 1996 | 259 | adults (healthy) | 63.3 | 51.4 | 18.4 | Heaton et al., 1993 | WAIS-R (s)/SA | P | ||
| Schiebener [121] | 2015 | 112 | children (healthy) | 52.7 | 13.6 | 3.4 | Nelson, 1976 (c) | RPM | P | ||
| Shura [122] | 2016 | 205 | adults (patients, psy & neuro) | 10.8 | 34.9 | 9.1 | Heaton, 1981 (c) | WAIS-III (s) | P | ||
| South [123] | 2007 | 19 | children (patients, psy) | 26.3 | 14.9 | 2.7 | Grant, 1948 | n/a | n/a | n/a | P |
| South | 2007 | 18 | children (healthy) | 38.9 | 14.1 | 2.9 | Grant, 1948 | n/a | n/a | n/a | P |
| Steingass [124] | 1994 | 101 | adults (patients, psy) | 21.9 | 50.5 | 8.1 | Nelson, 1976 | WAIS (s) | WAIS (s)/MWT-B (v) | WAIS (s) | P |
| Sweeney [125] | 1991 | 44 | adults (patients, psy) | 40.9 | 28.5 | 8.6 | Heaton, 1981 | AQT (v) | |||
| Syngelaki [126] | 2009 | 70 | adults and adolescents (healthy and patients, psy) | 0.0 | 16.3 | 1.5 | Heaton, 2005 (c) | WASI (s) | P | ||
| Taconnat [127] | 2007 | 81 | adults (healthy) | 51.9 | 66.0 | 8.2 | Heaton et al., 1993 | CFT | P | ||
| Whiteside [128] | 2016 | 304 | adults (patients, neuro) | 54.9 | 45.1 | 13.4 | Heaton et al., 1993 | WAIS-III (s,v) | P | ||
| Yasuda [129] | 2014 | 33 | adults and adolescents (patients, psy) | 39.4 | 26.1 | 11.5 | Kashima et al., 1987 (c) | WAIS-III | WAIS-III | WAIS-III | P |
| Yasuda | 2014 | 33 | adults and adolescents (healthy) | 39.4 | 26.8 | 9.6 | Kashima et al., 1987 (c) | WAIS-III | WAIS-III | WAIS-III | P |

| IQ Domain | Statistic | Categories | Perseverations | NPE | FMS | TE |
|---|---|---|---|---|---|---|
| FSIQ | Number of samples (k) | 20 | 25 | 6 | 6 | 11 |
| | Significant correlations (%) | 70 | 76 | 50 | 0 | 64 |
| | Total N | 1533 | 2049 | 664 | 553 | 710 |
| | Average effect size r | 0.44 | −0.39 | −0.29 | −0.05 | −0.42 |
| | [95% CI] | [0.36, 0.51] | [−0.45, −0.33] | [−0.46, −0.11] | [−0.14, 0.03] | [−0.51, −0.31] |
| | Q | 63.31 * | 50.41 * | 26.56 * | 1.34 | 22.02 * |
| | I² | 68.41 | 48.42 | 73.64 | 0 | 45.50 |
| | τ (Begg & Mazumdar) | −0.08 | −0.26 | 0.07 | −0.07 | 0.15 |
| | p (Begg & Mazumdar) | 0.626 | 0.076 | 0.851 | 0.851 | 0.529 |
| VIQ | Number of samples (k) | 19 | 24 | 6 | 4 | 11 |
| | Significant effects (%) | 74 | 71 | 67 | 0 | 64 |
| | Total N | 1755 | 2071 | 546 | 260 | 871 |
| | Average effect size r | 0.33 | −0.31 | −0.30 | −0.02 | −0.37 |
| | [95% CI] | [0.26, 0.39] | [−0.36, −0.26] | [−0.44, −0.16] | [−0.15, 0.10] | [−0.45, −0.29] |
| | Q | 37.08 * | 30.99 | 14.33 * | 3.03 | 17.01 |
| | I² | 46.06 | 19.33 | 51.15 | 0 | 29.45 |
| | τ (Begg & Mazumdar) | −0.20 | −0.01 | −0.20 | 1 | 0.18 |
| | p (Begg & Mazumdar) | 0.234 | 0.941 | 0.573 | − | 0.435 |
| PIQ | Number of samples (k) | 28 | 42 | 17 | 14 | 22 |
| | Significant effects (%) | 75 | 52 | 53 | 14 | 73 |
| | Total N | 2506 | 3256 | 1784 | 1386 | 2015 |
| | Average effect size r | 0.34 | −0.29 | −0.19 | −0.08 | −0.36 |
| | [95% CI] | [0.27, 0.39] | [−0.34, −0.24] | [−0.27, −0.11] | [−0.13, −0.02] | [−0.42, −0.29] |
| | Q | 69.75 * | 88.87 * | 44.76 * | 14.70 | 62.07 * |
| | I² | 58.42 | 51.61 | 59.79 | 0 | 62.95 |
| | τ (Begg & Mazumdar) | 0.05 | 0.17 | 0.14 | −0.11 | −0.11 |
| | p (Begg & Mazumdar) | 0.693 | 0.121 | 0.433 | 0.584 | 0.498 |
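The table above summarizes, for each IQ domain and WCST measure, a pooled correlation with its 95% CI, Cochran's Q, I², and the Begg and Mazumdar rank correlation for publication bias. The following is a minimal Python sketch of how such quantities can be computed from per-sample correlations and sample sizes. It assumes Fisher r-to-z transformation, a method-of-moments (DerSimonian-Laird) estimate of the between-sample variance, and a normal 1.96 critical value; the function names `pool_correlations` and `begg_mazumdar` are hypothetical, the authors' exact estimator and software are not stated in this section, and the sketch is not expected to reproduce the tabled values exactly.

```python
import math


def pool_correlations(rs, ns):
    """Random-effects pooling of correlations on the Fisher z scale.

    Assumption: tau^2 is estimated with the method-of-moments
    (DerSimonian-Laird) estimator; the original analysis may differ.
    Returns the pooled r, its 95% CI, Cochran's Q and I^2 (percent).
    """
    if len(rs) != len(ns) or len(rs) < 2:
        raise ValueError("need at least two (r, n) pairs")
    z = [math.atanh(r) for r in rs]            # Fisher r-to-z
    v = [1.0 / (n - 3) for n in ns]            # sampling variance of z
    w = [1.0 / vi for vi in v]                 # fixed-effect weights
    sw = sum(w)
    z_fe = sum(wi * zi for wi, zi in zip(w, z)) / sw
    q = sum(wi * (zi - z_fe) ** 2 for wi, zi in zip(w, z))  # Cochran's Q
    df = len(rs) - 1
    c = sw - sum(wi * wi for wi in w) / sw
    tau2 = max(0.0, (q - df) / c)              # between-sample variance
    w_re = [1.0 / (vi + tau2) for vi in v]     # random-effects weights
    sw_re = sum(w_re)
    z_re = sum(wi * zi for wi, zi in zip(w_re, z)) / sw_re
    se = math.sqrt(1.0 / sw_re)
    i2 = 100.0 * max(0.0, (q - df) / q) if q > 0 else 0.0  # I^2 = (Q - df)/Q
    return {
        "r": math.tanh(z_re),                  # back-transform to r
        "ci": (math.tanh(z_re - 1.96 * se), math.tanh(z_re + 1.96 * se)),
        "Q": q,
        "I2": i2,
    }


def begg_mazumdar(rs, ns):
    """Begg and Mazumdar rank correlation test on the Fisher z scale.

    Kendall's tau (no tie correction) between standardized effects and
    their variances; two-sided p from the normal approximation without
    continuity correction.
    """
    k = len(rs)
    if k < 3 or k != len(ns):
        raise ValueError("need at least three (r, n) pairs")
    z = [math.atanh(r) for r in rs]
    v = [1.0 / (n - 3) for n in ns]
    sw = sum(1.0 / vi for vi in v)
    z_bar = sum(zi / vi for zi, vi in zip(z, v)) / sw
    # Standardize: the variance of (z_i - z_bar) is v_i - 1 / sum(1 / v_j).
    t_star = [(zi - z_bar) / math.sqrt(vi - 1.0 / sw) for zi, vi in zip(z, v)]
    s = 0                                      # concordant minus discordant
    for i in range(k):
        for j in range(i + 1, k):
            prod = (t_star[i] - t_star[j]) * (v[i] - v[j])
            s += (prod > 0) - (prod < 0)
    tau = s / (k * (k - 1) / 2)
    var_s = k * (k - 1) * (2 * k + 5) / 18.0
    p = math.erfc(abs(s) / math.sqrt(2.0 * var_s))
    return tau, p
```

For example, `pool_correlations([0.44, 0.30, 0.52], [120, 80, 60])` returns a dict with keys `r`, `ci`, `Q` and `I2`; these inputs are made up for illustration. Pooling on the z scale and back-transforming keeps the CI inside [−1, 1] and makes the sampling variance depend only on n.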

| Moderator | Categories | | | | | Perseverations | | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| Continuous moderators | β | 95% CI | df | t | p | β | 95% CI | df | t | p |
| FSIQ | | | | | | | | | | |
| Mean age | 0.00 | [−0.09, 0.11] | 17 | 0.15 | 0.880 | 0.00 | [−0.08, 0.07] | 22 | −0.21 | 0.837 |
| SD age | 0.07 | [−0.05, 0.18] | 17 | 1.15 | 0.268 | −0.04 | [−0.11, 0.04] | 22 | −0.93 | 0.362 |
| Percent female | 0.01 | [−0.09, 0.12] | 14 | 0.27 | 0.795 | 0.01 | [−0.06, 0.09] | 19 | 0.35 | 0.733 |
| VIQ | | | | | | | | | | |
| Mean age | −0.02 | [−0.09, 0.06] | 16 | −0.49 | 0.633 | 0.00 | [−0.06, 0.06] | 21 | 0.64 | 0.949 |
| SD age | 0.06 | [−0.03, 0.15] | 16 | 1.35 | 0.195 | −0.03 | [−0.10, 0.04] | 21 | −0.73 | 0.471 |
| Percent female | 0.01 | [−0.06, 0.08] | 15 | 0.34 | 0.742 | 0.05 | [−0.04, 0.07] | 20 | 0.57 | 0.573 |
| PIQ | | | | | | | | | | |
| Mean age | 0.04 | [−0.01, 0.10] | 25 | 1.60 | 0.122 | −0.07 * | [−0.11, −0.02] | 39 | −3.05 | 0.004 |
| SD age | 0.04 | [−0.02, 0.11] | 25 | 1.33 | 0.197 | −0.03 | [−0.08, 0.01] | 39 | −1.38 | 0.174 |
| Percent female | 0.00 | [−0.09, 0.10] | 23 | 0.06 | 0.951 | −0.01 | [−0.10, 0.08] | 29 | −0.25 | 0.801 |
| Categorical moderators | χ² | df | p | | | χ² | df | p | | |
| FSIQ | | | | | | | | | | |
| Age group | 1.14 | 2 | 0.566 | | | 2.89 | 2 | 0.236 | | |
| Clinical status | 2.60 | 1 | 0.107 | | | 4.76 * | 1 | 0.029 | | |
| WCST version | 0.01 | 1 | 0.911 | | | 1.16 | 1 | 0.282 | | |
| WCST administration | 2.71 | 1 | 0.100 | | | 2.33 | 1 | 0.127 | | |
| IQ test type | 0.33 | 1 | 0.567 | | | 2.93 | 1 | 0.087 | | |
| VIQ | | | | | | | | | | |
| Age group | 1.81 | 2 | 0.405 | | | 0.48 | 2 | 0.787 | | |
| Clinical status | 0.26 | 1 | 0.609 | | | 0.00 | 1 | 0.972 | | |
| WCST version | 0.30 | 1 | 0.586 | | | 1.37 | 1 | 0.242 | | |
| WCST administration | 2.78 | 1 | 0.095 | | | 0.83 | 1 | 0.363 | | |
| IQ test type | 0.25 | 1 | 0.617 | | | 0.02 | 1 | 0.888 | | |
| PIQ | | | | | | | | | | |
| Age group | 2.893 | 2 | 0.235 | | | 13.77 * | 2 | 0.001 | | |
| Clinical status | 3.431 | 1 | 0.064 | | | 0.18 | 1 | 0.669 | | |
| WCST version | 0.41 | 1 | 0.522 | | | 3.78 | 1 | 0.052 | | |
| WCST administration | 0.42 | 1 | 0.518 | | | 0.06 | 1 | 0.810 | | |
| IQ test type | 0.03 | 1 | 0.858 | | | 2.55 | 1 | 0.110 | | |
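The categorical-moderator rows above report χ² statistics with 1 or 2 degrees of freedom. Assuming these are between-subgroup heterogeneity statistics (Q-between) referred to a χ² distribution, which this section does not state explicitly, the following Python sketch shows how such a statistic and its p-value can be computed. The helper names `chi2_sf` and `q_between` are hypothetical, and subgroup means use fixed-effect weights within subgroups as a simplification; the authors may have used a mixed-effects model instead.

```python
import math


def chi2_sf(x, df):
    """Upper-tail p-value of a chi-square statistic for df = 1 or 2,
    the only df values that occur in the categorical-moderator rows."""
    if df == 1:
        return math.erfc(math.sqrt(x / 2.0))   # two-sided normal tail
    if df == 2:
        return math.exp(-x / 2.0)              # exponential tail
    raise ValueError("this sketch only covers df = 1 or 2")


def q_between(groups):
    """Between-subgroup heterogeneity Q for correlations.

    groups: list of subgroups, each a list of (r, n) pairs. Each subgroup
    mean is an inverse-variance (n - 3) weighted Fisher z average, i.e. a
    fixed-effect mean within the subgroup (a simplifying assumption).
    Returns (Q_between, df).
    """
    means, weights = [], []
    for g in groups:
        w = [n - 3 for _, n in g]
        z = [math.atanh(r) for r, _ in g]
        sw = sum(w)
        means.append(sum(wi * zi for wi, zi in zip(w, z)) / sw)
        weights.append(sw)                      # inverse variance of the mean
    grand = sum(W * m for W, m in zip(weights, means)) / sum(weights)
    q = sum(W * (m - grand) ** 2 for W, m in zip(weights, means))
    return q, len(groups) - 1
```

As a consistency check, `chi2_sf(4.76, 1)` is about 0.029 and `chi2_sf(13.77, 2)` is about 0.001, in line with the reported p values for clinical status (FSIQ, Perseverations) and age group (PIQ, Perseverations).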

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Kopp, B.; Maldonado, N.; Scheffels, J.F.; Hendel, M.; Lange, F. A Meta-Analysis of Relationships between Measures of Wisconsin Card Sorting and Intelligence. Brain Sci. 2019, 9, 349. https://doi.org/10.3390/brainsci9120349

Kopp B, Maldonado N, Scheffels JF, Hendel M, Lange F. A Meta-Analysis of Relationships between Measures of Wisconsin Card Sorting and Intelligence. Brain Sciences. 2019; 9(12):349. https://doi.org/10.3390/brainsci9120349

Chicago/Turabian Style: Kopp, Bruno, Natasha Maldonado, Jannik F. Scheffels, Merle Hendel, and Florian Lange. 2019. "A Meta-Analysis of Relationships between Measures of Wisconsin Card Sorting and Intelligence" Brain Sciences 9, no. 12: 349. https://doi.org/10.3390/brainsci9120349

APA Style: Kopp, B., Maldonado, N., Scheffels, J. F., Hendel, M., & Lange, F. (2019). A Meta-Analysis of Relationships between Measures of Wisconsin Card Sorting and Intelligence. Brain Sciences, 9(12), 349. https://doi.org/10.3390/brainsci9120349