1. Introduction

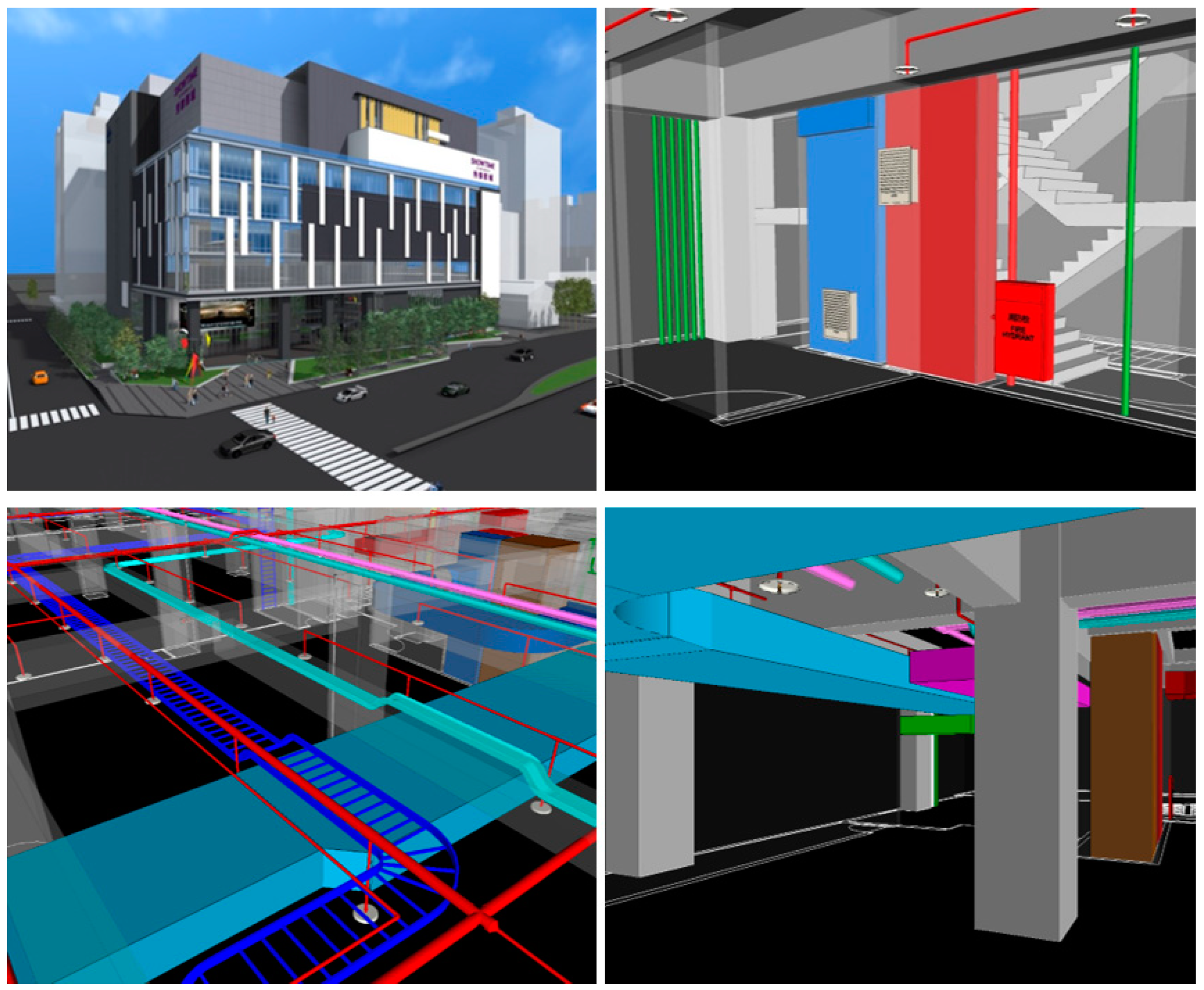

Design conflicts are errors in which building components spatially overlap when various types of engineering drawings are compiled. Because engineering drawings are generally produced by compiling the designs of engineers from different professions, design conflicts between components of different systems are common [1,2]. Minor design conflicts often result in rework and increased project costs. In severe cases, design changes may be required, resulting in cost overruns, schedule delays, and compromised structural safety. As previous studies have pointed out, unresolved design conflicts can severely affect project success [3].

In recent years, the emergence of BIM software has enabled the easy detection of design conflicts; conflict checking has become one of the important functions of BIM software. Since resolving design conflicts is critical to the success of a project, many countries have mandated all public projects to execute clash detection. In the United Kingdom, for example, the design team must perform clash detection once every week or every two weeks to ensure that the engineering design receives complete coordination and is free of conflicts, thereby reducing the probability of change orders [

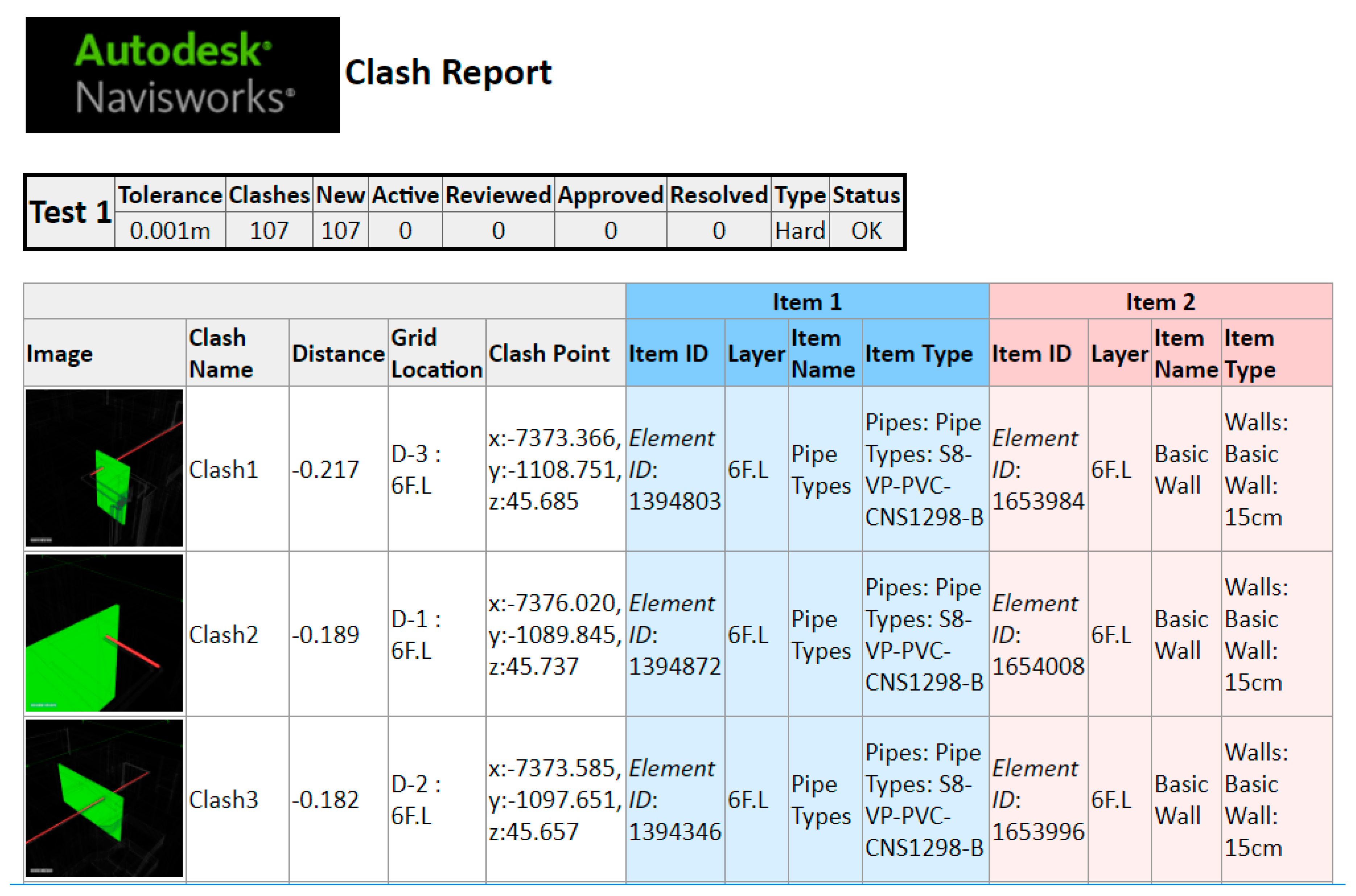

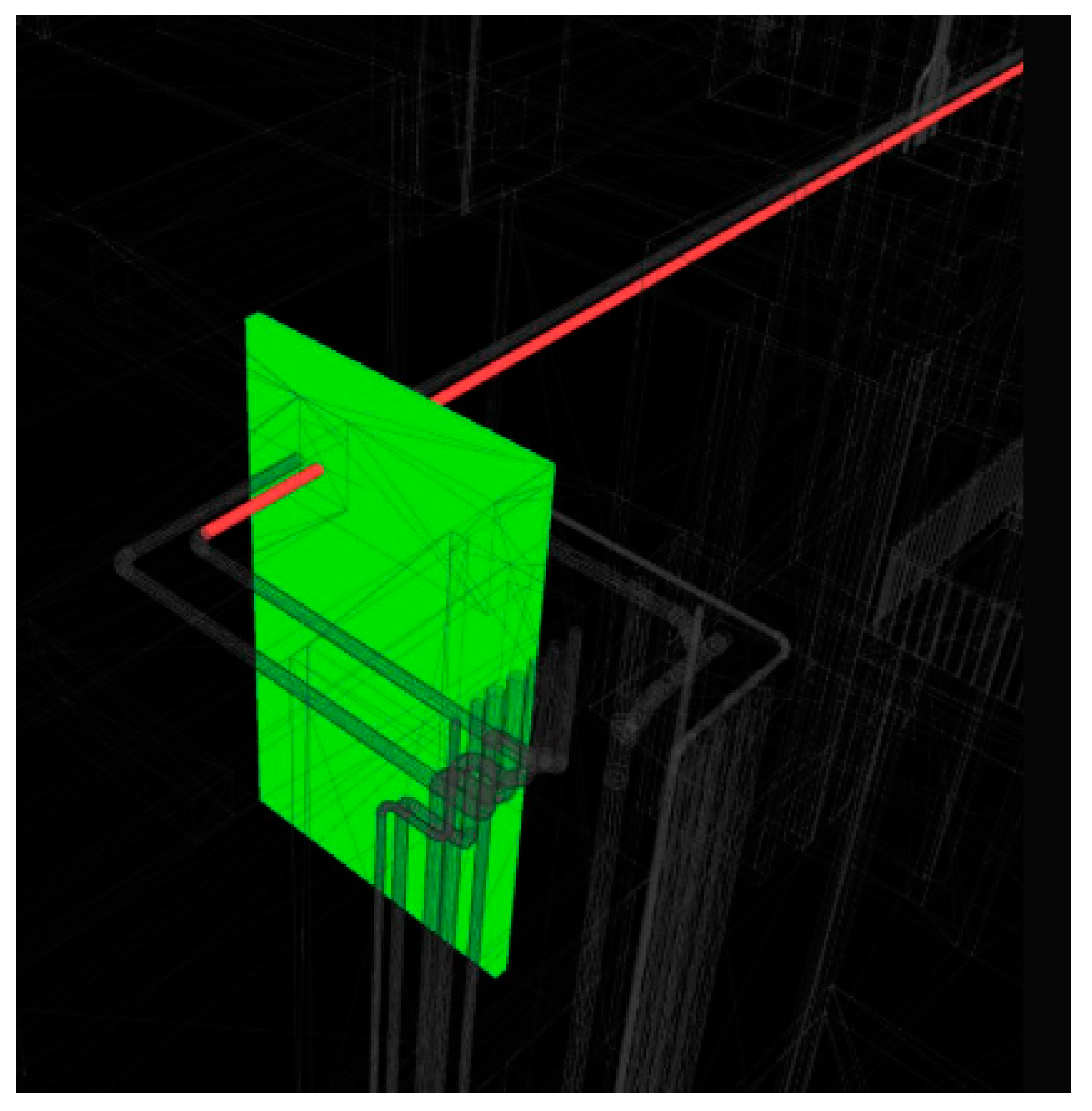

4]. However, the clash detection algorithms of most BIM software are simple; as long as two building components are spatially overlapping, in contact, or within a given distance, they will be identified as a conflict/clash and listed in the detection report. Therefore, even for small projects, the number of clashes detected using BIM software can be enormous [

5,

6,

7]. As many studies discovered, 50% or more of the clashes detected from BIM software are found to be “irrelevant clashes”; that is, these conflicts will not have a substantial impact on the projects, or they can be directly resolved by site engineers during the construction phase [

7]. However, the clash detection report of BIM software does not identify these irrelevant clashes. Ideally, every single clash in a detection report should be evaluated by engineers to decide whether the resolution is needed. This is an extremely time-consuming job. According to our interviews with senior project managers, many projects in Taiwan cannot afford the high incurred costs. Thus, their BIM managers merely selectively review a few clashes or even neglect the entire report. It is why many studies have pointed out that unless filtering those irrelevant clashes is automated, the clash detection report with an overwhelming number of clashes will become trivial and meaningless [

6,

7,

8].

Scholars have proposed methods to resolve this issue from three aspects: clash avoidance, clash detection improvement, and clash filtering [6,7]. Clash avoidance starts from the modeling method and emphasizes collaboration or strengthened coordination to reduce the occurrence of clashes. This method, however, increases the burden on the design staff [7]. Collaboration is also difficult to implement among project participants without direct contractual relationships [6,9]. By contrast, other scholars hold that the clash detection algorithms in BIM software can be improved to increase detection accuracy, thereby reducing the number of irrelevant clashes [5]. However, as some studies have pointed out, refining the clash detection algorithm cannot effectively prevent irrelevant clashes caused by human errors [7,10]. Recent studies suggest an alternative: directly identifying irrelevant clashes and filtering them out of the clash detection report generated by BIM software. This method is broadly divided into two approaches. One applies rules to identify the dependency relationships of conflicting/clashing components, thereby filtering out irrelevant clashes [7]. However, constructing the dependency relationships between components and the query algorithms is often time-consuming and labor-intensive, because the rules must be acquired and maintained. Jiang et al. proposed a rule-based knowledge system to automate the code-checking process for green construction [11]. They found that domain knowledge is usually dispersed and fragmented, so rule acquisition requires human experts from many professional fields. Knowledge representation and acquisition is therefore often a complex and time-consuming task [12]. The other approach uses machine learning, training classifiers on historical data to filter out irrelevant clashes [6]. However, researchers applying machine learning to complex problems usually observe that favorable classification performance requires a larger training dataset that supports a more complex model with more features [13]. Identifying and labeling a large number of clashes requires considerable and expensive manpower; therefore, the prediction accuracy of machine learning is often insufficient until a sufficiently large number of cases has been collected [7]. The industry urgently needs more cost-effective solutions to this issue.

In the field of machine learning, many studies have applied a combination of two or more sophisticated methods to specific domains and obtained better results than with individual methods. A hybrid method was developed for discretizing continuous attributes to enhance the accuracy of the naïve Bayesian classifier [14]. An algorithm based on support vector machine (SVM), 2D fast Fourier transform (FFT), and hybrid fuzzy c-mean techniques was proposed to recognize and visualize cracking in structures [15]. This hybrid ML algorithm recognizes cracks with higher accuracy than the traditional SVM. Another hybrid computational model based on genetic algorithm (GA) and support vector regression (SVR) was developed to predict bridge scour depth near piers and abutments [16]. The proposed hybrid model achieved error rates 80% better than those obtained using other methods, such as regression tree, chi-squared automatic interaction detector, artificial neural network, and ensemble models. These studies demonstrate the benefit of using a hybrid method.

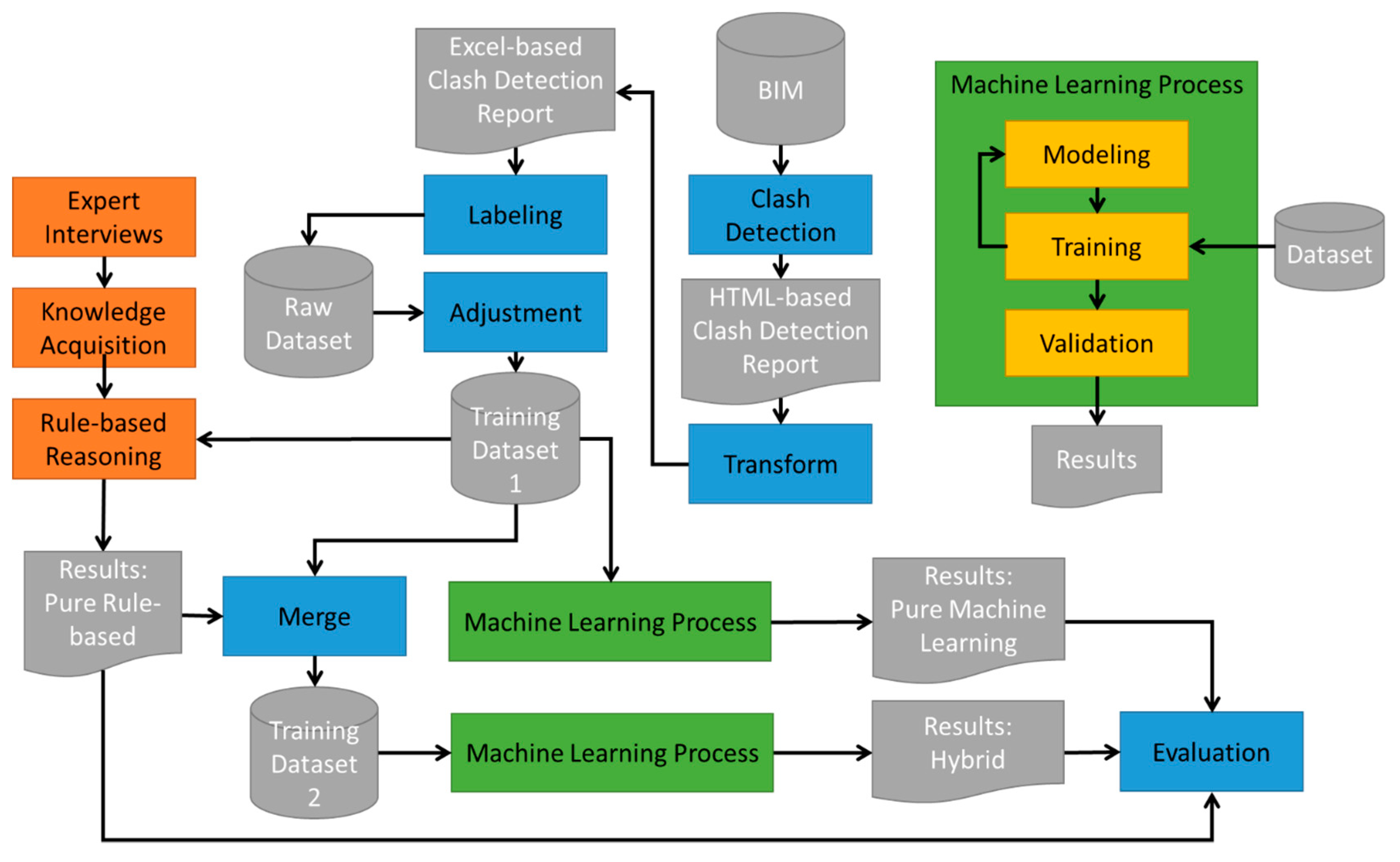

This study combines rule-based reasoning and supervised machine learning to develop an algorithm that automatically filters out irrelevant clashes from BIM-generated clash detection reports. The main purpose is to explore whether the proposed hybrid method can enhance the predictive accuracy of machine learning algorithms without a large number of training cases and without increasing the development manpower. Unlike most rule-based systems, which require an exhaustive knowledge acquisition process, the rule-based reasoning in this study is not intended to produce accurate results by itself, because doing so would require a great deal of knowledge acquisition effort. Instead, we first obtain a preliminary classification of clashes by applying a simple rule set acquired from the same experts who label the data for the subsequent machine learning process. We then incorporate these preliminary results into the machine learning process to see whether they help improve the prediction accuracy.

2. Related Work

In most construction projects, structural, mechanical, electrical, and plumbing (MEP) engineers develop their designs based on the architectural model. This base model is constantly updated as the design work progresses. Without adequate synchronization of all updates among these design teams, so-called “design clashes” occur: conflicts in which building components spatially overlap when various types of engineering drawings are compiled. As some scholars have pointed out, most clashes can be avoided if a collaboration environment exists between design teams [7]. However, in a participatory action research study in the United Kingdom, researchers introduced a collaboration environment in a large multi-floor construction project, where software assisted the engineers in avoiding design clashes, and more than 400 clashes were still found between the structural model and the MEP model [4]. Their study pointed out that collaboration can indeed reduce design conflicts, but clash detection remains a necessary operation.

In the era of 2D drawings, design conflicts were not easily detected at the design phase and instead persisted until construction, leading to rework or even change orders. Clashes have been regarded as one of the major factors causing cost overruns and project delays. The emergence of BIM software has made design conflict/clash detection easy, and conflict checking has become one of the essential functions of BIM software. However, Helm et al. [5] found that the clash detection algorithms in most BIM software are relatively simple: as long as two building components spatially overlap, touch, or lie within a given distance of each other, they are recognized as conflicts and listed in the detection report. Identifying and resolving the detected clashes is a time-consuming and laborious task [4,6,7]. The clashes detected by BIM software can be roughly divided into three categories: (1) errors, which are clashes that will affect the project and must be resolved, such as structural components penetrated by pipes; (2) deliberate clashes, which are intentional clashes originating from the designer, such as pipes and conduits penetrating slabs; and (3) pseudo clashes, which are permissible clashes that appear to be errors. As Wang and Leite [17] discovered, the proportion of deliberate and pseudo clashes, which are also known as “irrelevant clashes”, in a particular project was up to 50% [10]. Among the cases considered in our study, this proportion was even higher. Scholars have termed these conflicts, which do not have substantial impacts on the project, “irrelevant clashes” [6,7]. These irrelevant clashes can be discovered in subsequent project stages and easily handled by the site engineers themselves; therefore, there is no need to resolve them during clash detection. However, the clash detection report of BIM software does not distinguish these irrelevant clashes, so BIM managers must identify them manually. As pointed out by Hu et al. [7], in practice many projects can have millions of clashes, so automating the filtering of irrelevant clashes is an important and urgently needed function [4,6,7].
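The three categories above, and the grouping of deliberate and pseudo clashes as “irrelevant clashes”, can be written down as a small data model. The following is only an illustrative sketch: the names `ClashType`, `is_irrelevant`, and `filter_report` are assumptions made for this example and do not come from any BIM software or from the cited studies.

```python
from enum import Enum


class ClashType(Enum):
    """Three categories of detected clashes described in the text."""
    ERROR = "error"            # must be resolved, e.g. a structural component penetrated by a pipe
    DELIBERATE = "deliberate"  # intended by the designer, e.g. a pipe passing through a slab
    PSEUDO = "pseudo"          # permissible clash that only appears to be an error


def is_irrelevant(clash_type: ClashType) -> bool:
    """Deliberate and pseudo clashes together form the 'irrelevant clashes'."""
    return clash_type in (ClashType.DELIBERATE, ClashType.PSEUDO)


def filter_report(labeled_clashes):
    """Keep the ids of clashes that still need resolution.

    `labeled_clashes` is a hypothetical list of (clash_id, ClashType) pairs.
    """
    return [cid for cid, ctype in labeled_clashes if not is_irrelevant(ctype)]
```

Under this model, filtering a report amounts to predicting a `ClashType` for each clash and keeping only the errors.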

Existing methods of reducing irrelevant clashes can be roughly divided into three aspects: clash avoidance, clash detection improvement, and clash filtering [7]. Clash avoidance starts from the modeling method during the design phase and emphasizes collaboration and coordination among the design teams to avoid clashes from the beginning. Mehrbod et al. [18] established a taxonomy that classifies the causes of clashes into three categories, namely, process-based, model-based, and physical design [12]. They aimed to understand the causes of design conflicts and the factors considered in conflict/clash resolution. With the aim of reducing the occurrence of clashes through automated coordination, Wang and Leite [17] constructed a semantic schema for MEP coordination that was used to represent and acquire the experience and knowledge behind coordination issues. Both Hartmann [19] and Gijezen [20] re-examined the BIM model via a more organized work breakdown structure (WBS) to reduce the number of irrelevant clashes. However, scholars believe that this approach increases the burden on the design teams [7]. Collaboration would also be difficult to implement in many projects because project participants may not have a mutual contractual relationship [6,9].

Meanwhile, some scholars hold that improving the clash detection algorithms in BIM software can increase detection accuracy, thereby reducing the number of irrelevant clashes [5]. These methods include the sphere-trees method [21], approximating polyhedra with spheres and bounding volume hierarchies [22,23], the oriented bounding boxes or OBB-trees method [24], and the ray–triangle intersection algorithm [5]. These algorithms are continually improved to increase the accuracy of clash detection. Yet, as scholars have pointed out, the refined clash detection algorithms still cannot effectively reduce irrelevant clashes [10], especially those caused by human errors [7].
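To make the simple detection criterion concrete (two components clash if they overlap, touch, or lie within a given distance), the following minimal sketch tests pairs of axis-aligned bounding boxes. It is an illustration under stated assumptions, not the algorithm of any particular BIM product or of the cited methods: the `Box` type, the `gap` helper, and the `clearance` parameter are hypothetical names, and real tools work on detailed geometry rather than boxes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box given by its min and max corners (x, y, z)."""
    lo: tuple
    hi: tuple


def gap(a: Box, b: Box) -> float:
    """Euclidean distance between two boxes; 0.0 if they overlap or touch."""
    sq = 0.0
    for i in range(3):
        # Separation along axis i (zero when the intervals overlap or touch).
        d = max(a.lo[i] - b.hi[i], b.lo[i] - a.hi[i], 0.0)
        sq += d * d
    return sq ** 0.5


def is_clash(a: Box, b: Box, clearance: float = 0.0) -> bool:
    """Report a clash when the boxes overlap, touch, or lie within `clearance`."""
    return gap(a, b) <= clearance
```

Because the test looks only at geometry, a pipe that is meant to pass through a slab is reported in exactly the same way as a pipe that cuts through a beam, which is why such reports contain many irrelevant clashes.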

The third method is to directly identify and filter out irrelevant clashes in the clash detection report of BIM software. One popular method of identification or diagnosis in a given domain is the rule-based system [25]. Rule-based systems, also known as rule-based expert systems, have been commonly used since the 1980s in many fields, such as medicine, engineering, manufacturing, and education. They have proved effective in pattern recognition, diagnosis, decision-making, control, planning, and similar tasks because their knowledge reasoning is transparent and their results are consistent [12]. Despite these advantages, rule-based systems require a considerable amount of time to acquire the knowledge needed for reasoning. A rule-based system was proposed to automate the code-checking process for green construction, but the researchers found that the domain knowledge is dispersed and fragmented and that rule acquisition requires human experts from many professional fields [11]. Hu et al. [7] applied rules to identify the dependency relationships of conflicting/clashing components, thereby constructing a component-dependent network. This network can be used to identify the central components of clashes, group repetitive clashes, and finally filter out irrelevant clashes. However, the number of irrelevant clashes filtered out depends on the components’ dependency relationships and their query algorithms. Like a rule-based knowledge base, these require a lot of effort to capture and maintain. In addition, the rules developed in their study may not fit other projects.

Another method that also is popular for complex problems and does not require too many efforts on knowledge acquisition is machine learning. Machine learning algorithms use computational methods to predict results directly from historical data without relying on predetermined rules or equations on domain knowledge. Besides, the algorithms adaptively improve their performance as the number of training cases increases [

13,

15]. Despite its ease of identifying trends and patterns without human intervention, researchers often argued that machine learning requires a sufficiently large training dataset that allows a more complex model in order to obtain favorable results [

7,

15]. In the field of identifying design clashes, Hu and Castro-Lacouture [

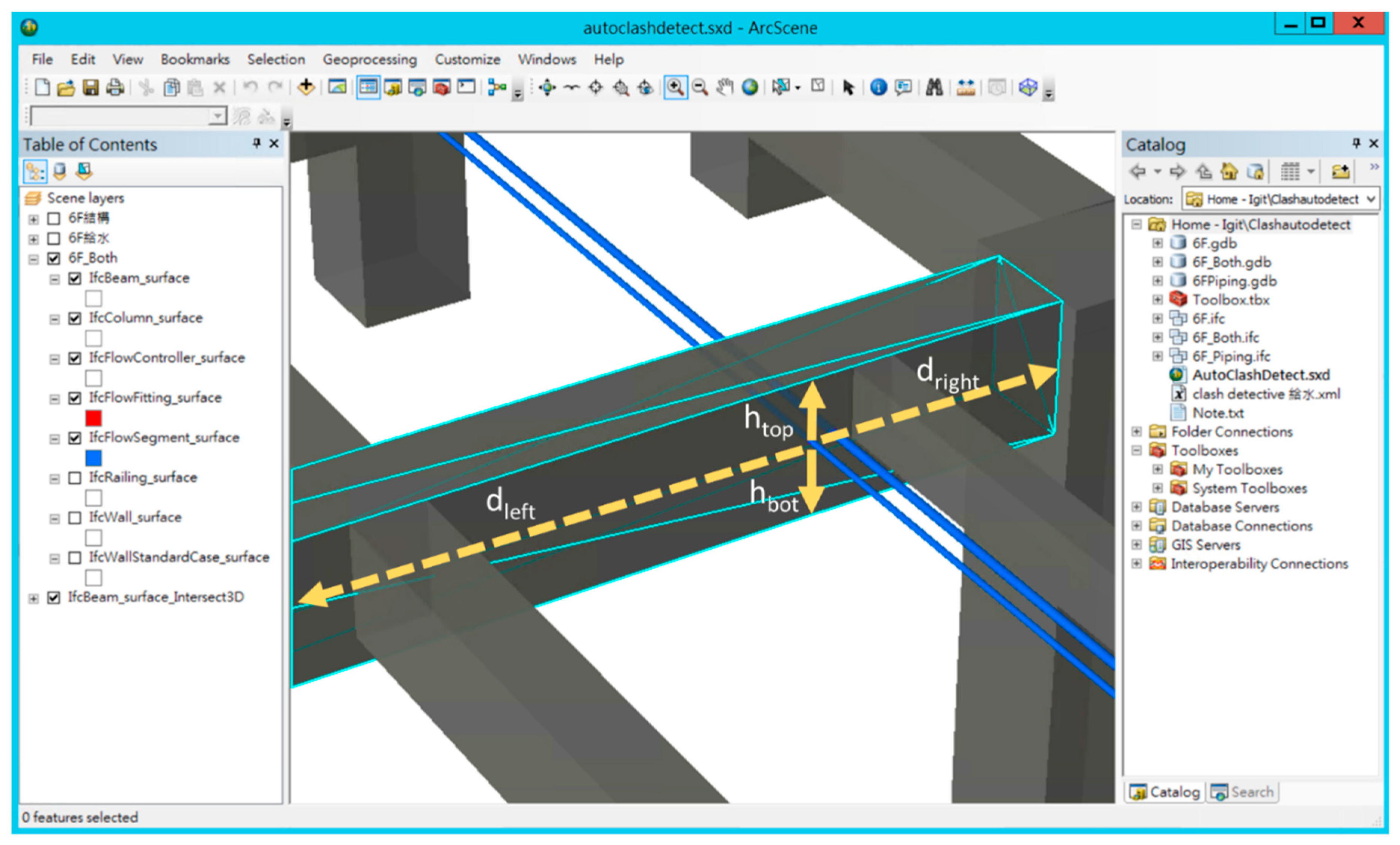

6] used a historical dataset of 204 clashes from a three-story building and implemented six different machine learning classifiers including J48-based decision trees, random forest, Jrip, binary logistic regression, naïve Bayesian, and Bayesian network to filter out irrelevant clashes. The features selected for machine learning process considered three aspects: (1) the information uncertainty level; (2) problem complexity, such as clashing objects’ size, priority, materials, type, and clashing volume, and (3) contextual flexibility, such as the location, spatial relationship, and available space [

6]. However, their method based on machine learning obtained an average prediction accuracy of 80%, but it required a great amount of labor to preprocess the training data. Some researchers then argued that before a sufficient number of training cases are collected, the prediction accuracy is insufficient [

7].

In summary, both rule-based reasoning and machine learning have their own pros and cons as solutions to domain problems. The former produces a favorable result regardless of the size of the training data but often requires a great deal of effort to acquire knowledge from human experts. The latter, by contrast, does not require human effort to prepare and formulate domain knowledge, but it needs sufficient training data to obtain a favorable result. In the field of machine learning, many studies have shown that a hybrid method combining two or more sophisticated algorithms can obtain better results in certain domains than individual methods [14,15,16]. However, most of these studies combined two or more machine learning algorithms; few have combined machine learning with rule-based systems to achieve minimal development effort and maximal predictive performance.

A large training dataset for clash detection is neither easy nor cost-effective to collect and prepare. This study therefore made the best use of the human experts hired by the research team, who both prepared the training dataset for machine learning and were interviewed to acquire their heuristic know-how for rule-based reasoning. From the perspective of clash filtering, this study first used rule-based reasoning to preliminarily determine the type of each clash. The results of this preliminary classification were then added to the machine learning dataset used for training and testing the classifiers, in order to improve the prediction accuracy with a small training dataset.
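The core idea of the hybrid method, adding the rule-based result to each training case as one more feature, can be sketched as follows. This is a minimal illustration under stated assumptions: the field names `structural_type` and `rule_prediction` and the stand-in function `preliminary_rule` are hypothetical, and the study's actual dataset schema is not shown in this section.

```python
def preliminary_rule(record: dict) -> str:
    """Stand-in for the rule-based step (a hypothetical simplification).

    Maps the structural object type of a clash to a preliminary clash type.
    """
    mapping = {"beam": "error", "column": "error", "slab": "deliberate"}
    return mapping.get(record["structural_type"], "unknown")


def add_rule_feature(records: list) -> list:
    """Return copies of the records with the rule output added as a feature."""
    return [{**r, "rule_prediction": preliminary_rule(r)} for r in records]
```

The augmented records would then be encoded and passed to whichever supervised classifier is being trained. The classifier remains free to override the rule output whenever the other features indicate a different clash type.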

4. Rule-Based Reasoning

In addition to obtaining the labeling results directly from the two hired experts, we interviewed them to acquire the knowledge they used to classify the clash types. Unlike most rule-based reasoning systems [17,27], which acquire as many rules as possible, we focus only on rules of thumb whose required facts can be found in the clash detection report. The reason is that the rule-based reasoning in this study is not meant to be a robust method for classifying clash types; instead, it serves as a catalyst to improve the prediction performance of the supervised machine learning. In addition, the clash detection report is the only reference the experts use to do their jobs. The following six rules were acquired directly from the interviews with the experts:

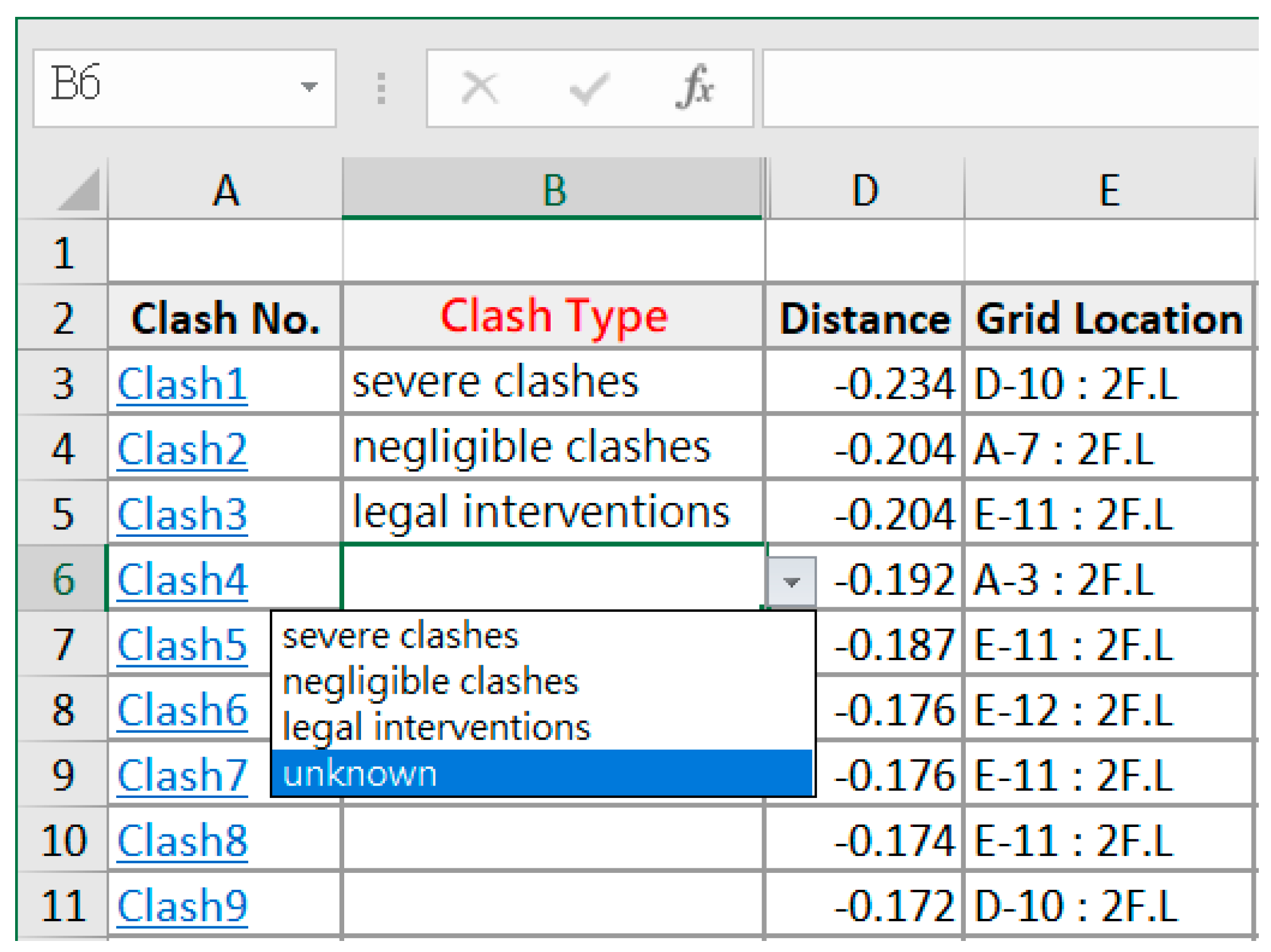

Beam Rule: If the type of clashing object from the structural model is a beam, the clash type will be an “error”.

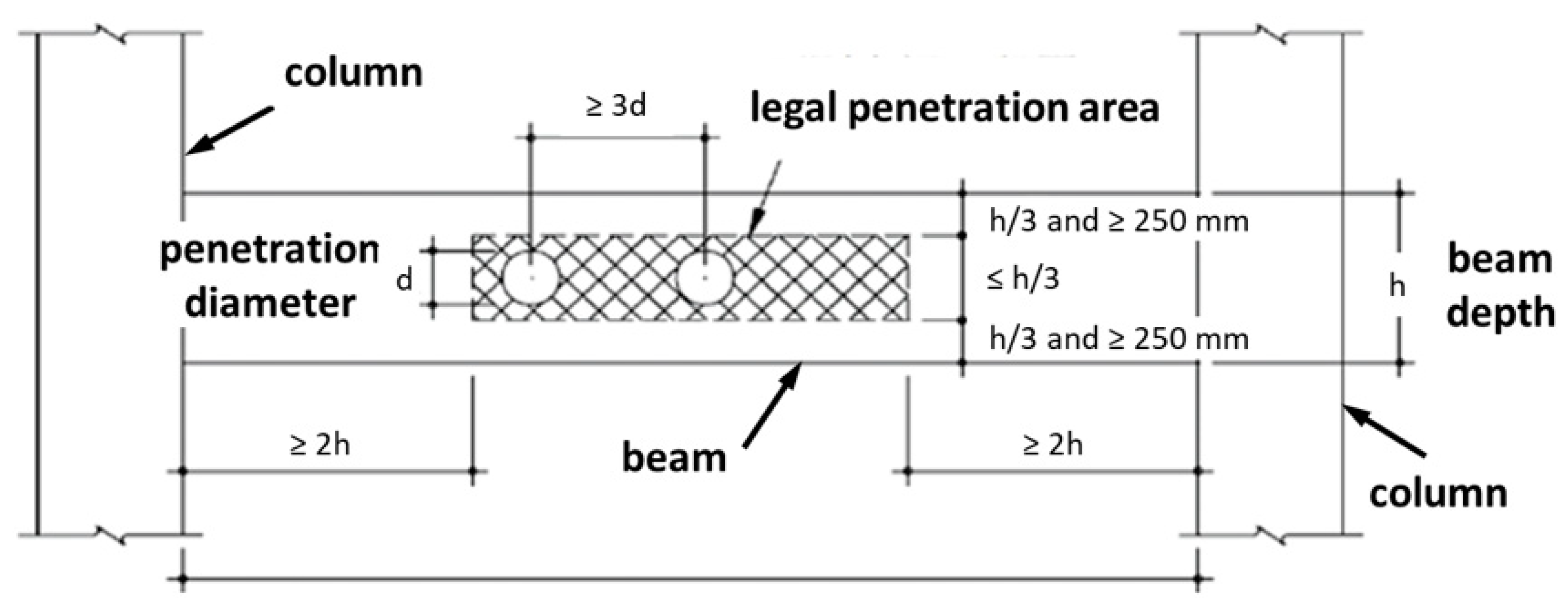

Extended Beam Rule: If the type of clashing object from the structural model is a beam and the clash position falls within the legal area defined by the specification, the clash type will be a “pseudo clash”. This rule is based on the specification we used to adjust the original labeling result mentioned in

Section 3.3.

Column Rule: If the type of clashing object from the structural model is a column, the clash type will be an “error”.

Slab Rule: If the type of clashing object from the structural model is a slab, the clash type will be a “deliberate clash”.

Wall Rule: If the type of clashing object from the structural model is a wall, the clash type can be a “deliberate clash” or an “error”.

Bearing Wall Rule: If the type of clashing object from the structural model is a wall and is not a bearing wall, the clash type will be a “deliberate clash”; otherwise, it is an “error”.

Among these rules, Rule #6 requires supporting information that the clash detection report does not provide, namely whether the clashing wall is a bearing wall. Therefore, it is excluded from our final rule repository. Instead of classifying clashing walls as “errors”, the researchers simply revised Rule #5 as follows:

7. Simplified Wall Rule: If the type of clashing structural component is a wall, the clash type will be unknown.

Then, the research team applied the Rules #1–4 and #7 stated above to perform the rule-based reasoning and obtained the clash classification result, as presented in

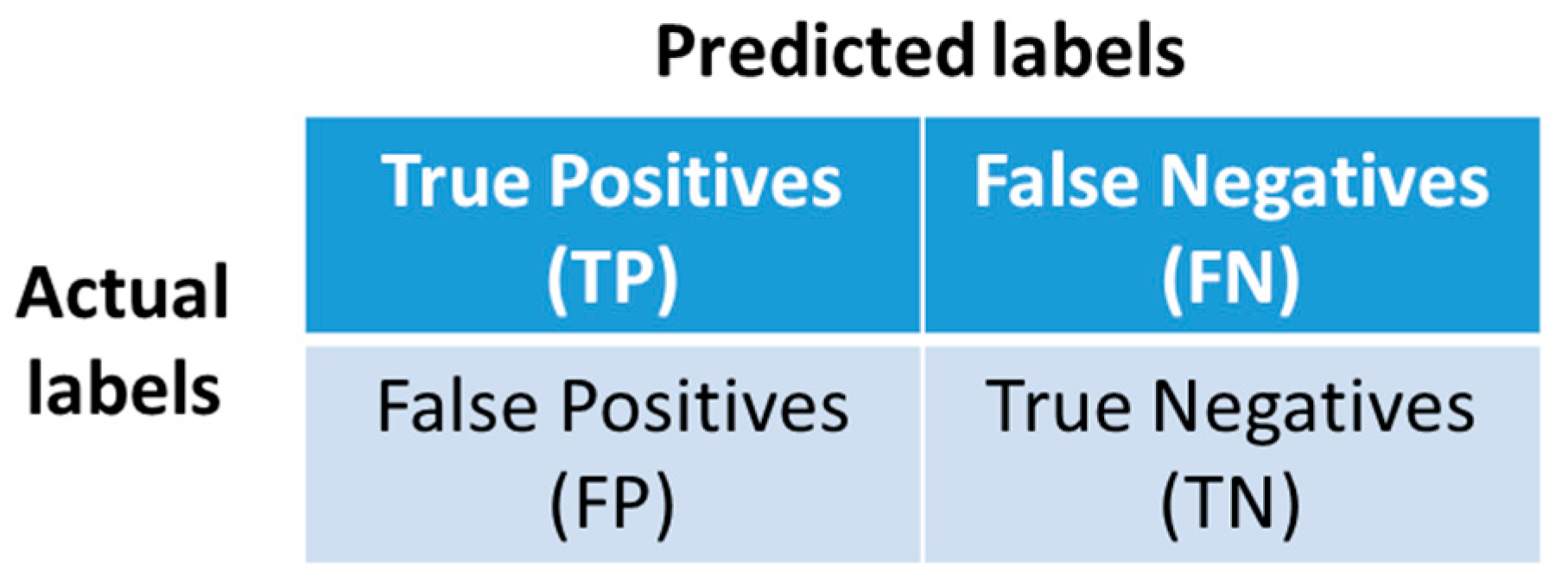

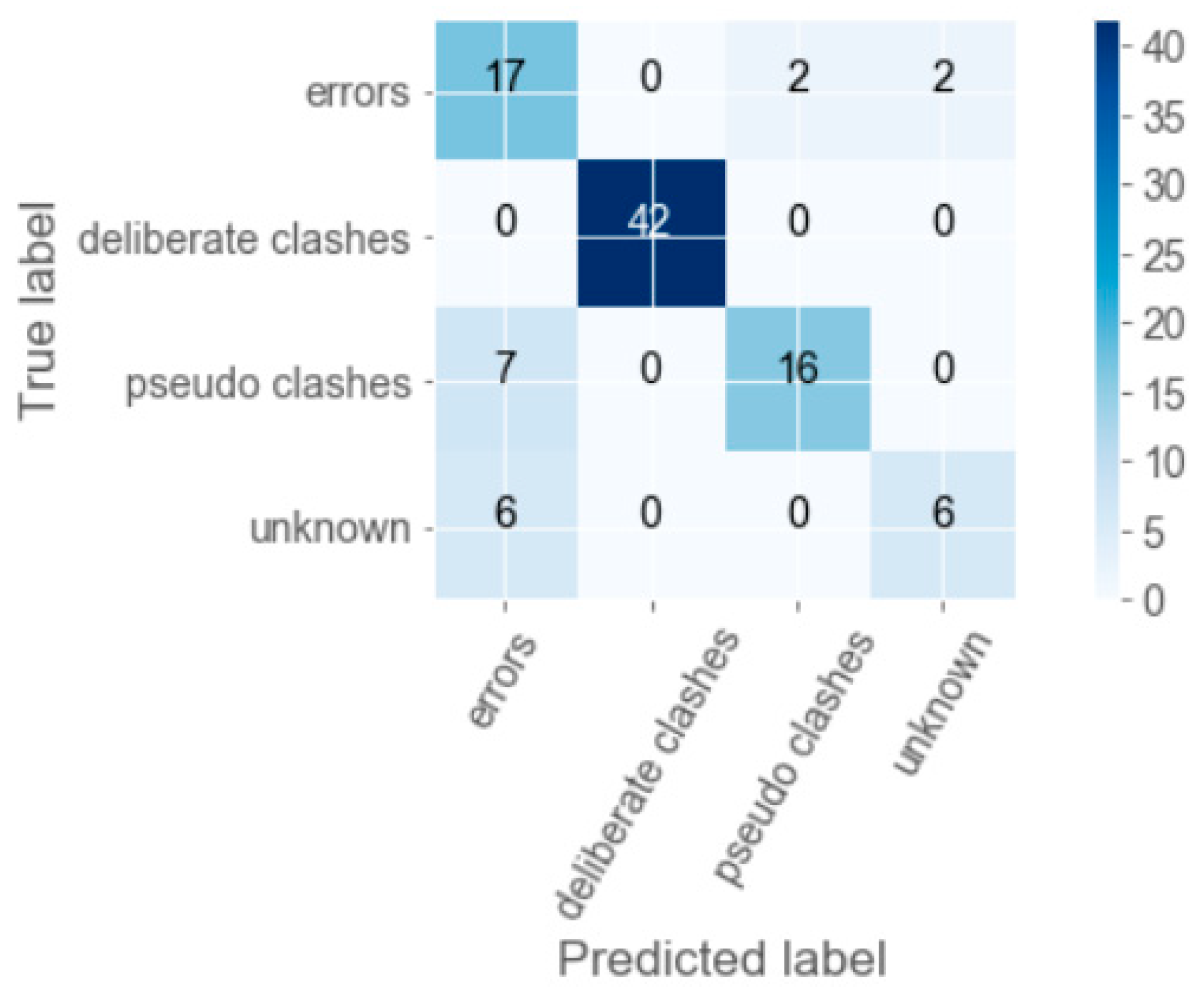

Table 3. The average accuracy rate is approximately 60% (194/326). The columns in

Table 3 represent the numbers of clash types predicted using the rules, whereas the rows represent the actual clash types specified by the two experts. For example, among the 100 true errors, the rule-based reasoning correctly predicts 98 of them, and the remaining two errors are determined as unknown.
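As a worked check of the figures quoted above, the accuracy rate is the share of all clashes whose rule-based prediction matches the expert label, and the same ratio can be taken over the true errors alone:

$$
\text{accuracy} = \frac{\text{correctly classified clashes}}{\text{all clashes}} = \frac{194}{326} \approx 0.595,
\qquad
\frac{\text{errors correctly predicted}}{\text{true errors}} = \frac{98}{100} = 0.98 .
$$

The rules therefore recover almost all true errors, and the low overall figure must come from the other clash types.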

As mentioned earlier, the aim of rule-based reasoning in this study is only to improve the outcomes of machine learning with a small training dataset. Therefore, the rules we applied do not consider deep and complex relationships among the clashing objects, and the resulting prediction accuracy (60%) is low. The predictions from rule-based reasoning are included as a feature in the machine learning process introduced in Section 5.5.
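The final rule set (Rules #1–4 and #7) is small enough to be expressed as a single function. The sketch below makes several assumptions that are not stated in the text: the Extended Beam Rule is taken to refine the Beam Rule, the boolean `in_legal_area` is a hypothetical input standing for the legal-area check against the specification, and object types not covered by any rule fall back to "unknown".

```python
def classify_by_rules(structural_type: str, in_legal_area: bool = False) -> str:
    """Apply Rules #1-4 and #7 to one clash and return a preliminary clash type.

    `in_legal_area` is a hypothetical flag: True when the clash position lies
    within the area of a beam that the specification allows to be penetrated.
    """
    kind = structural_type.lower()
    if kind == "beam":
        # Rule #2 (Extended Beam Rule) is assumed to refine Rule #1 (Beam Rule).
        return "pseudo clash" if in_legal_area else "error"
    if kind == "column":
        return "error"              # Rule #3 (Column Rule)
    if kind == "slab":
        return "deliberate clash"   # Rule #4 (Slab Rule)
    # Rule #7 (Simplified Wall Rule) yields unknown for walls; other,
    # uncovered object types also fall back to unknown (an assumption).
    return "unknown"
```

For example, `classify_by_rules("beam", in_legal_area=True)` returns `"pseudo clash"`, while `classify_by_rules("wall")` returns `"unknown"`.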

7. Conclusions

Previous studies have stated that the clash detection reports produced by most BIM software tend to contain a huge number of clashes, many of which are irrelevant or ignorable. Manually picking out the serious clashes from the long list in a clash detection report costs both time and money; thus, automatically filtering out irrelevant clashes by algorithm is a crucial need for the industry.

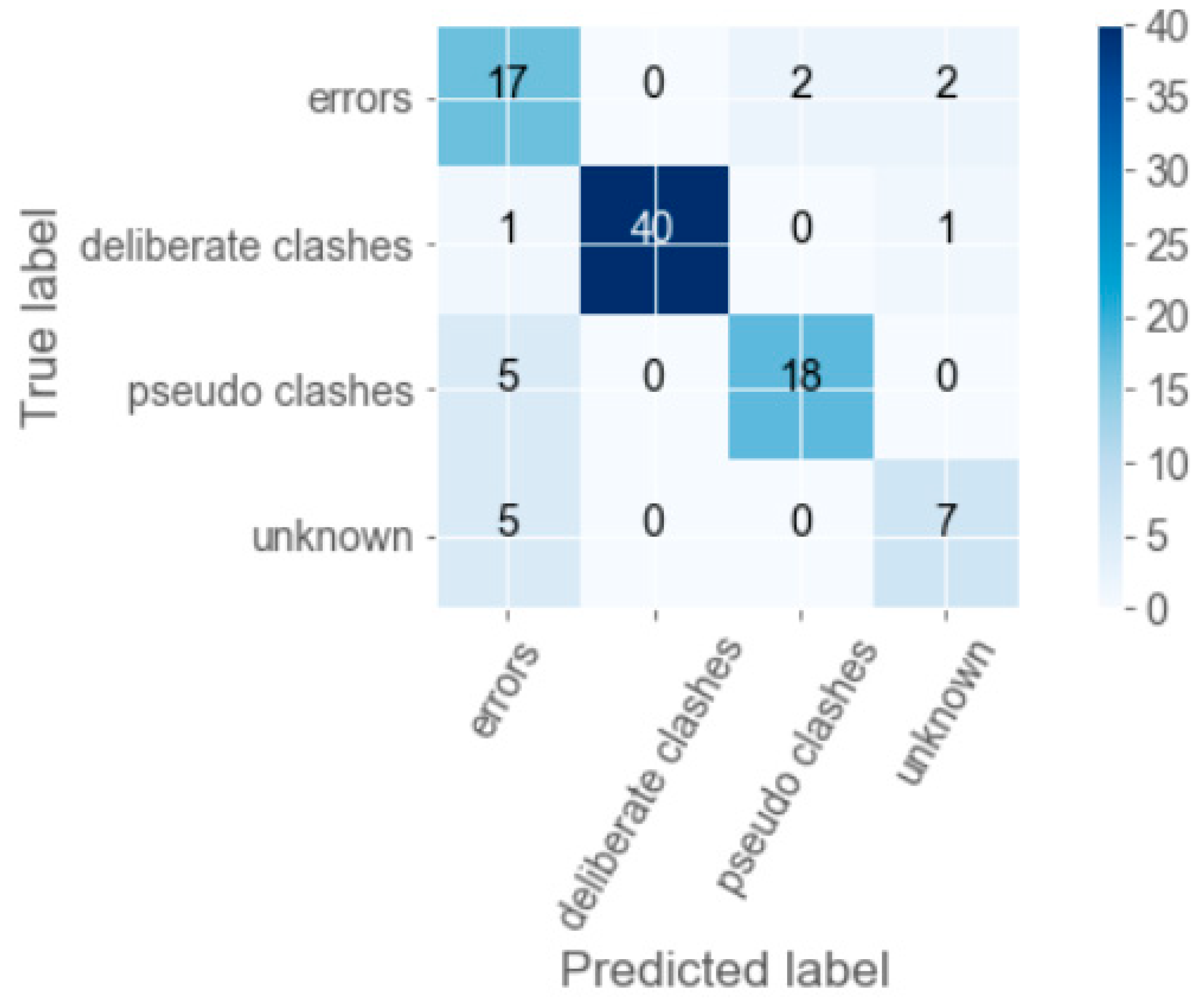

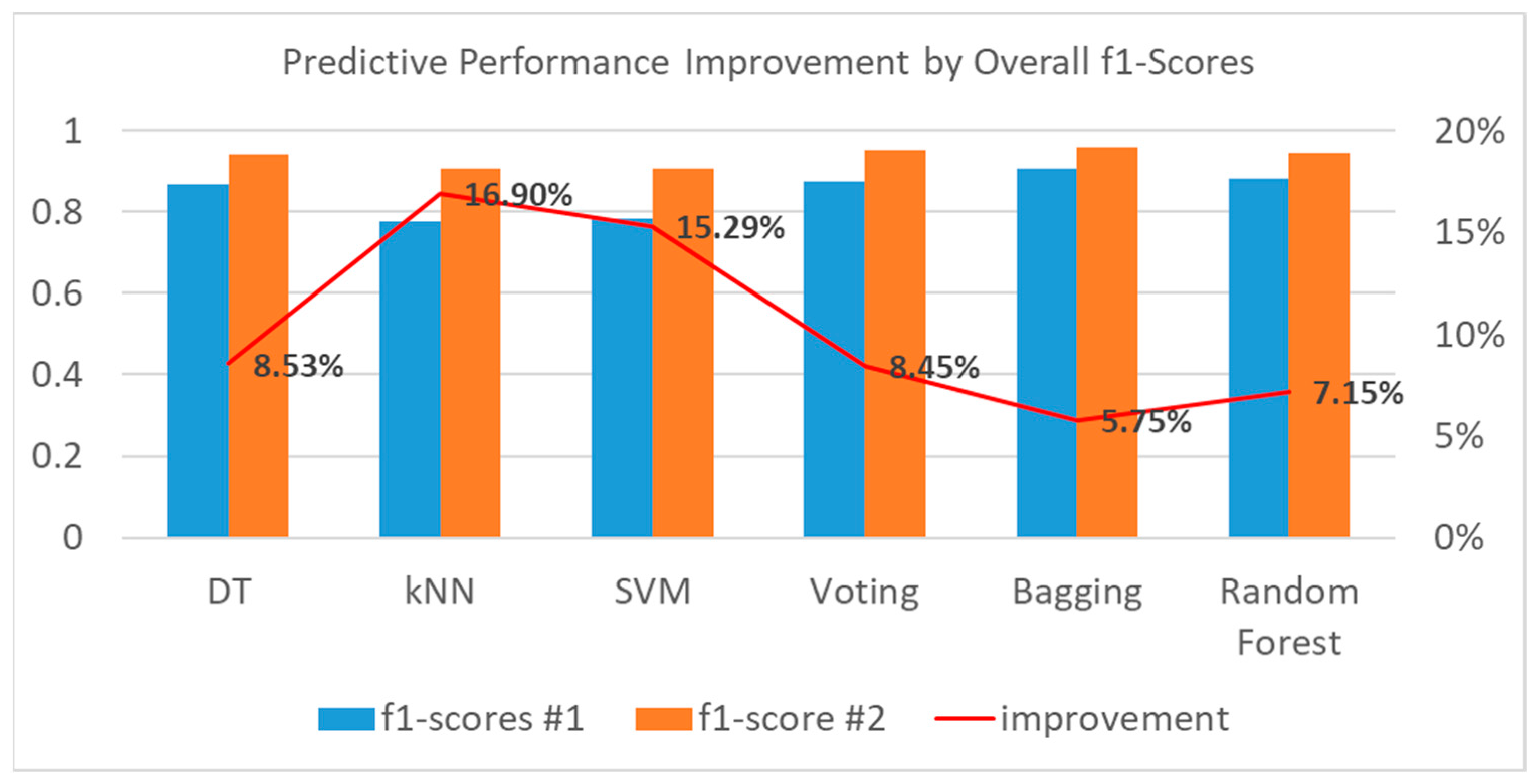

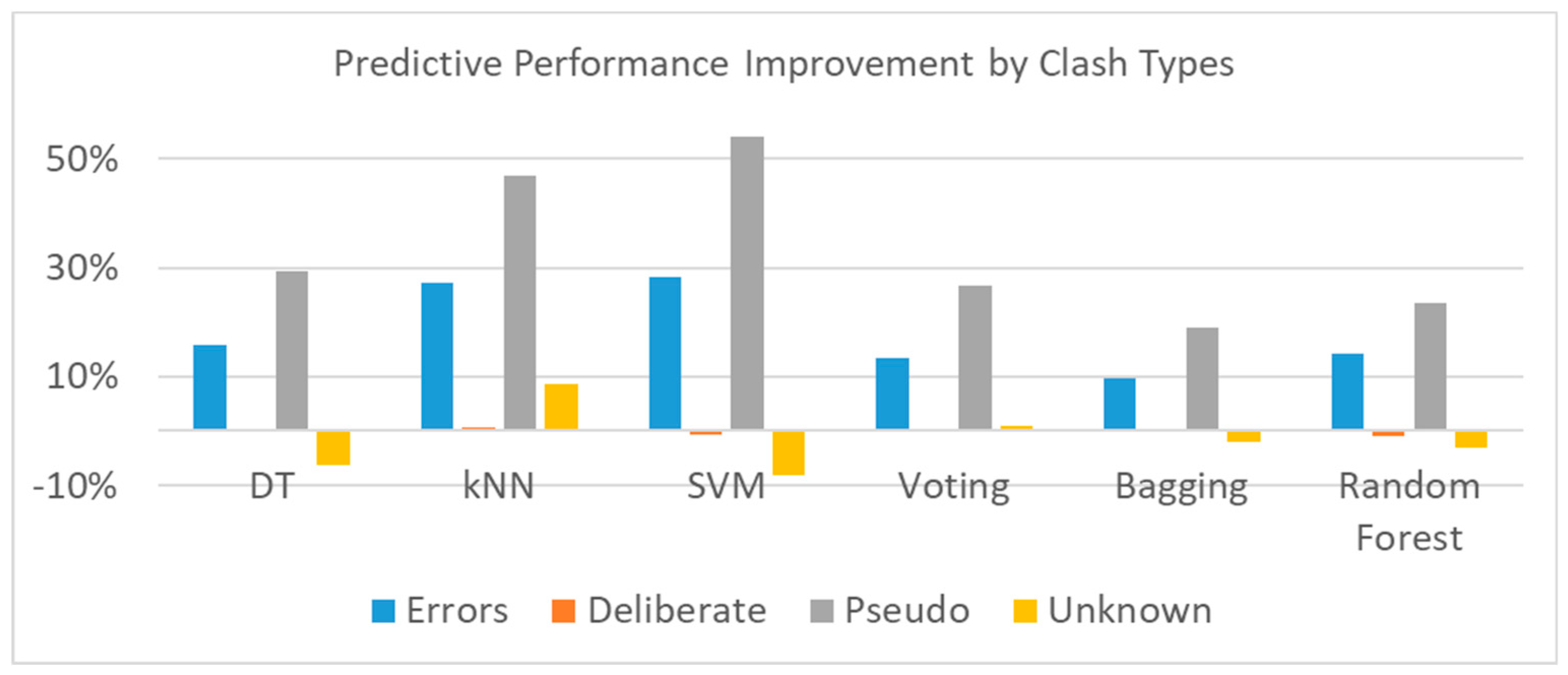

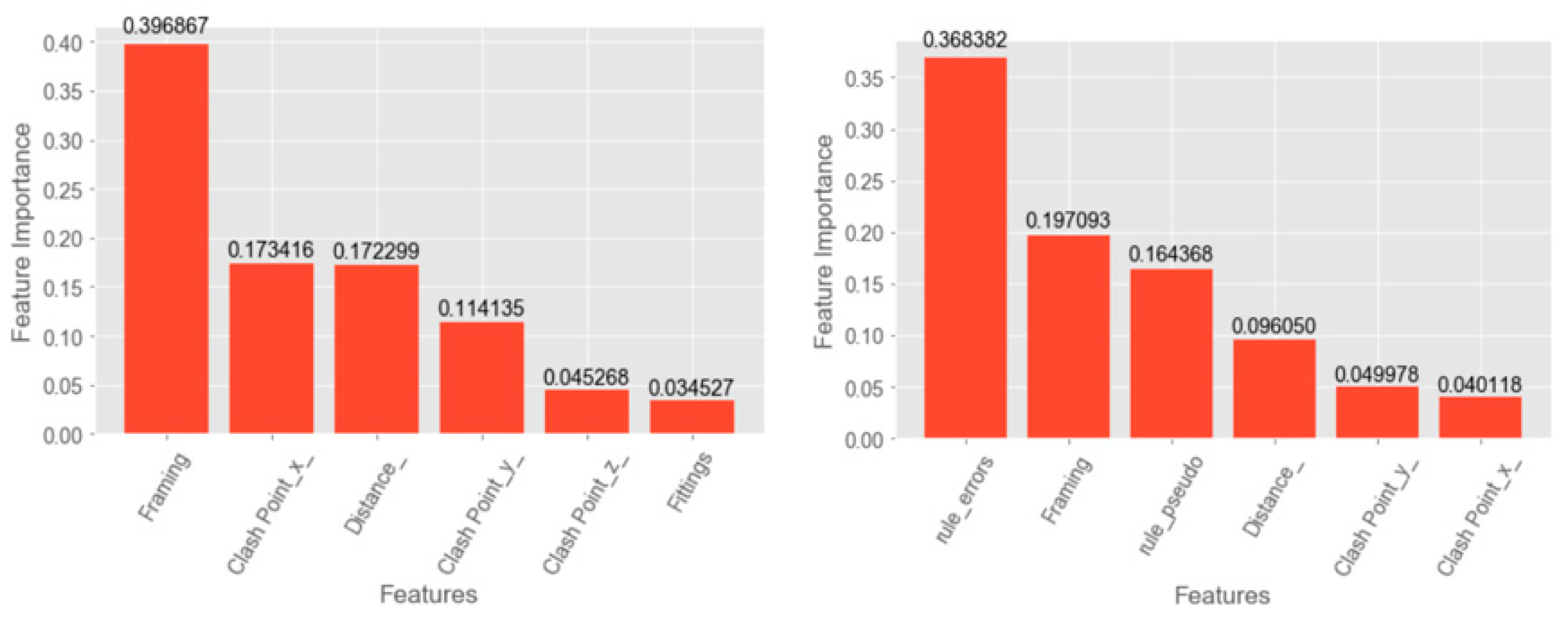

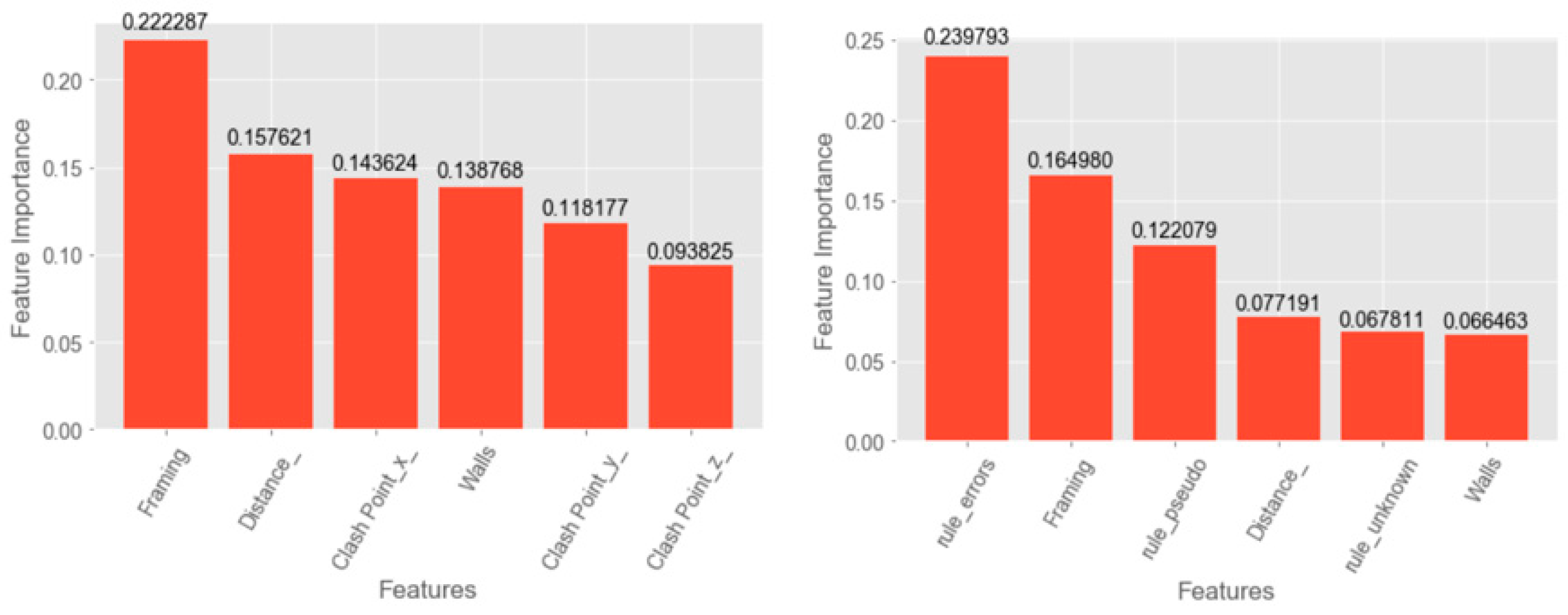

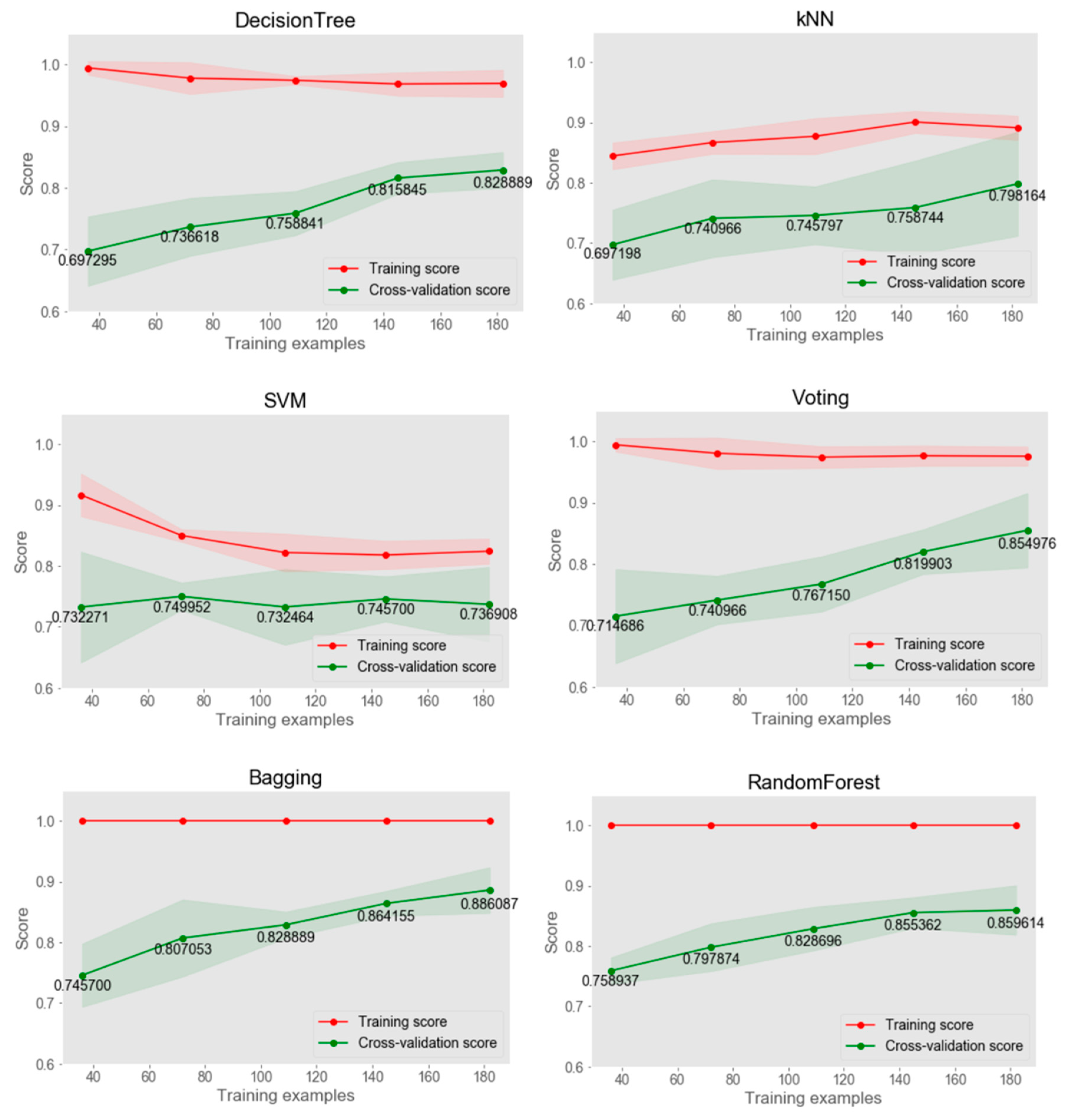

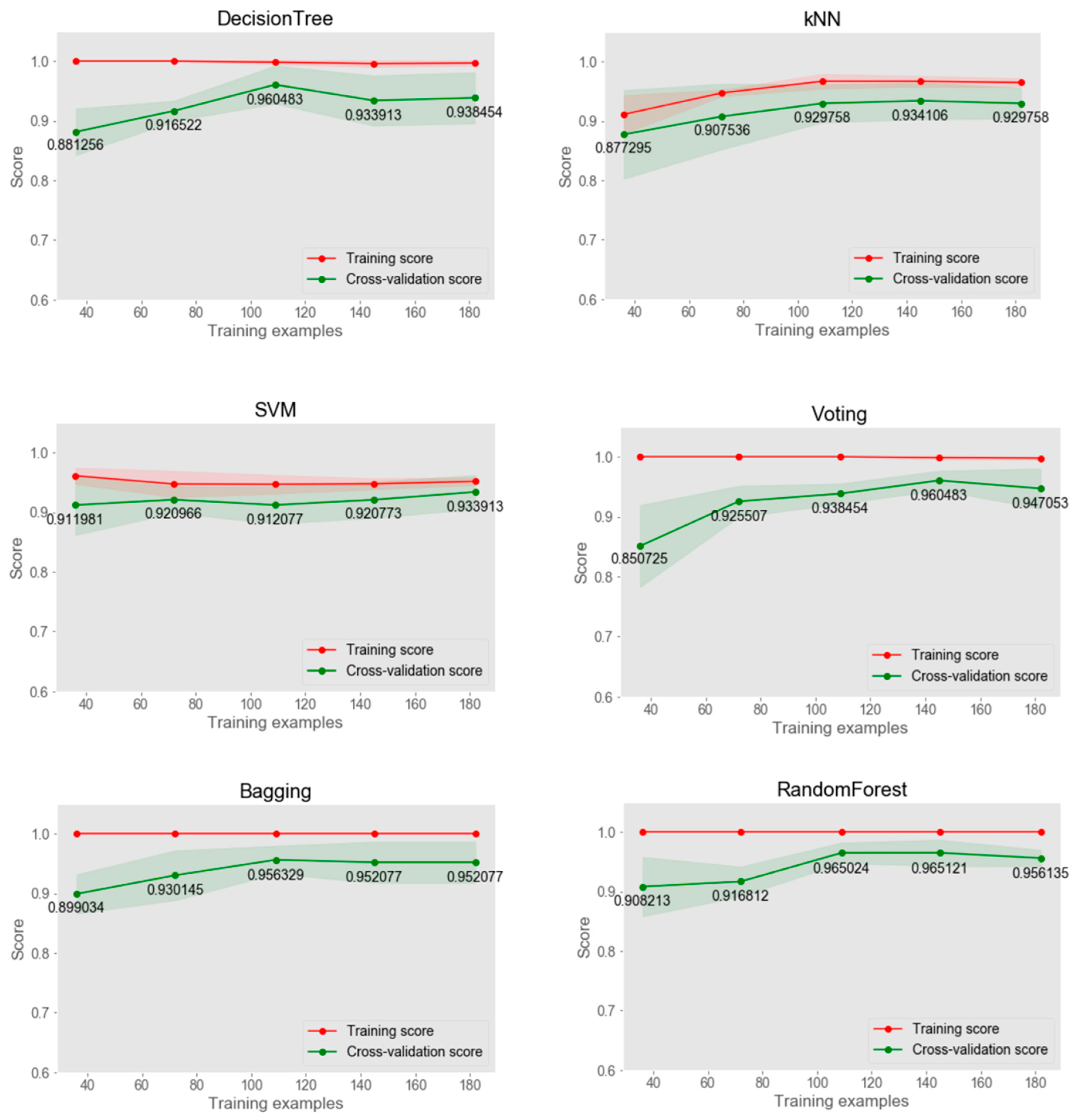

This study proposed a hybrid method that combines simple rule-based reasoning and supervised machine learning to automatically filter out irrelevant clashes from the conflicts detected by BIM software. The experimental results showed that the hybrid method raises the prediction accuracy of machine learning by 15%–17% for individual classifiers and by 6%–8.5% for both DT and ensemble learning classifiers. The proposed method overcomes the difficulties of either developing a purely rule-based system that considers complex relationships or relying on machine learning alone to filter out irrelevant clashes. The former requires a great deal of effort for knowledge acquisition, while the performance of the latter is often limited by the number of cases collected. The results indicate that, when the predictive accuracy of conventional supervised machine learning for design clash classification is unfavorable and more training cases cannot be collected in the short term, adding the predictions obtained by rule-based reasoning as a feature of the original training dataset offers an alternative way to improve prediction performance. However, the extent of improvement may depend on how well the rule-based reasoning performs. In other words, there is a trade-off between the accuracy improvement and the effort spent acquiring domain knowledge for the rule-based reasoning system.

The ultimate goal of identifying clash types is to resolve errors, or serious clashes, before the construction phase in order to avoid delays and added costs. Resolving serious clashes is the task most worthy of time and effort. Even though the average predictive accuracy obtained by the hybrid method is as high as 95%, we still analyzed the cases in which our method misclassified actual serious clashes as pseudo or deliberate clashes. In one of the 30 tests with 98 cases from testing dataset #2, the misclassification rate of serious clashes by the bagging classifier was as high as 11% (of the actual serious clashes in the testing cases). How to reduce and avoid the misclassification of serious clashes remains an important issue for future work.
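The misclassification rate of serious clashes discussed above is computed over the actual serious clashes only, not over all testing cases. A minimal sketch follows, assuming the labels are plain strings with "error" denoting a serious clash; the function name `serious_miss_rate` is hypothetical, and any prediction other than the serious label is counted as a miss.

```python
def serious_miss_rate(actual: list, predicted: list, serious: str = "error") -> float:
    """Share of actual serious clashes that were predicted as another type."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    # Predictions made for the cases whose true label is the serious type.
    preds_for_serious = [p for a, p in zip(actual, predicted) if a == serious]
    if not preds_for_serious:
        return 0.0  # no serious clashes in this test set
    missed = sum(1 for p in preds_for_serious if p != serious)
    return missed / len(preds_for_serious)
```

Reporting this rate next to overall accuracy matters because a classifier can reach high accuracy on a test set dominated by irrelevant clashes while still missing some of the clashes that most need resolution.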

Because the training data used in the machine learning process contain only clashes between structural and piping components, the current models and their predictions can only be applied to clash detection reports with a similar setting. To extend the practical value of this study, the training data need to include more MEP components, such as ducts, conduits, fire alarm devices, and lighting devices. Several individual classifiers also showed overfitting, so more training data are required for further experiments. Another limitation of this study is that labeling the dataset relied heavily on manual work. Future studies could consider applying unsupervised machine learning on top of the labeled training data to build up a larger training dataset.