Featured Application

Learning how to play the guitar is a challenging task, despite the existence of many didactic materials designed to assist autonomous learning. This paper presents an ad-hoc app based on augmented reality (AR) for self-guided guitar learning, and details an experimental study comparing the learning experiences of guitar students using this AR-based app with those of students taking traditional face-to-face lessons with a music teacher.

Abstract

Despite being one of the most commonly self-taught instruments, and despite the ready availability of significant amounts of didactic material, the guitar is a challenging instrument to learn. This paper proposes an application based on augmented reality (AR) that is designed to teach beginner students basic musical chords on the guitar, and provides details of the experimental study performed to determine whether the AR methodology produced faster results than traditional one-on-one training with a music teacher. Participants were divided into two groups of the same size. Group 1 consisted of 32 participants who used the AR app to teach themselves guitar, while Group 2, with a further 32 participants, received formal instruction from a music teacher. Results showed no significant differences in learning times by method or by gender. However, participant feedback suggested that the self-taught approach using AR has advantages worth considering. A system usability scale (SUS) questionnaire was used to measure the usability of the application, which obtained a score of 82.5. This is higher than the benchmark average of 68, above which an application is considered to offer good usability, indicating that the application satisfied the purpose for which it was created.

1. Introduction

Learning how to play a musical instrument has always been difficult, as it requires the learner to develop a demanding set of skills [1]. Currently, a music student learning an instrument is typically asked to learn how to read a score and to follow instructions from a teacher in a traditional teacher–student model [2,3]. A score is a tool consisting of abstract graphic symbols that have no intuitive relationship with the musical note (pitch and duration of a sound) that each one represents [4]. For some instruments, such as the guitar, graphic–numerical adaptations known as tablatures or tabs are used instead to simplify matters.

The guitar is arguably one of the most popular musical instruments, and is found in a vast array of musical genres, from jazz to bossa nova. However, students attempting to learn this instrument and train their ear may encounter issues that make reading music and tuning the instrument particularly challenging, e.g., structural inconsistencies (differences in guitar size or string spacing) or the notational system used for this instrument compared with other instruments (as notes are written graphically for interpretation). Such issues make it difficult for students to develop their own musical style [5]. As part of the learning process, students must memorize complex and unfamiliar finger placements in order to play chords, and it is difficult to perform these placements correctly and accurately reproduce the sound corresponding to each note [6,7]. These basic requirements illustrate how the materials commonly used in music instruction can themselves act as obstacles to learning [8].

Generally, music instruction is performed face-to-face in a one-on-one situation. A teacher will demonstrate how to perform a chord, the student will attempt to play according to the teacher’s instructions, and the teacher will then provide feedback, showing the student how to correct their mistakes [9,10,11]. Two faults have been identified with this model: firstly, students can experience boredom as a result of playing too passive a role [12,13,14], and secondly, students can feel demotivated as a result of a dynamic that involves consistently finding fault and errors in their performance. Clearly, both of these faults act as obstacles when it comes to learning a musical instrument [15].

Numerous studies have explored how musical instruments become associated with a particular gender. Historically, men and women have played different instruments, with women preferring smaller, higher-pitched instruments [16]. Women have been found to prefer the harp, flute, voice, fife/piccolo, clarinet, oboe, and violin, while men tend towards the electric guitar, bass guitar, tuba, kit drums, tabla, and trombone. The least gendered instruments have been found to be African drums, cornet, French horn, saxophone, and tenor horn [17].

Although there are exceptions, no evidence has been found of significant differences between men and women in the process of learning musical subjects. The gendered pattern of learning is relatively consistent across education levels [17].

As technology has been shown to help during basic training [18,19], several ICT-based methods have been tested on the guitar, some aimed at facilitating learning and others at making learning more attractive and effective. Correct finger positioning can be indicated using a mobile projector, mounted on the headstock of the guitar, that projects lights of different colors. This methodology helps students quickly identify the correct position of their fingers on the strings and frets in order to play the desired chord [10]. Another, more intuitive proposal replaces the projected lights with a virtual hand. In this instance, learners can clearly visualize the hand and finger positions, making it easier to understand how to place their fingers correctly on the strings and frets of the guitar [6]. Using a Bayesian classifier [20], other authors have added the ability to identify whether the chord the student is playing is the one requested. In this approach, students are shown an image of how the fingers should be positioned to play a chord, together with audio of how it should sound when played correctly [21], and a camera is then used to detect the student’s finger positions via corresponding finger markers. This combination of audio overlay and 3D visual information is well suited to guitar teaching [22].

Additionally, musical instruction using video games such as Rocksmith has been tested for both the bass guitar and the electric guitar [23]. The authors argue that the use of music games can expand the conceptualization of what is “playful” and “serious” compared with traditional teaching. Experiments have also been run using a virtual musical instrument system (based on Kinect) as an introductory method for people who do not own the real instrument [24].

Furthermore, augmented reality (AR) has been used to mitigate the limitations of the different didactic materials used to learn guitar [18,25]. This technology has been used in education for several reasons, including reduced costs and the improvement of hardware/software, the need for more user-friendly interfaces when interacting with non-expert users, and the need to work with other teaching methodologies using active practices [26]. In addition, the use of augmented reality facilitates the visualization of elements such as finger placement [27]. Examples include HoloKeys [28] and PIANO [29], which are augmented reality tools that help users learn to play a real piano [30]; the Internet of Musical Things (IoMusT), which is a research field within the Internet of Things seeking to achieve computer–human interaction with music [31]; and a piece of research entitled Malaysia Music Augmented Reality, the goal of which is to help students to learn a musical instrument at any time [32]. It is important that augmented reality systems provide information in a way that clarifies the perceptions of the original material, while forming an integral part of the entire learning workflow [33,34].

The literature on AR in musical instrument instruction spans over 14 years and includes different learning proposals. The novelty of the research detailed in this paper lies in a comparative study of an AR-based teaching methodology, using an ad-hoc AR app, against a traditional teaching methodology. The question addressed was whether the AR methodology would allow learners to acquire the basic skills needed to play the instrument more effectively than the traditional methodology. The purpose of this research was therefore to determine, using a flexible and feasible system suitable for users with minimal prior knowledge, whether beginner students learn guitar more efficiently with augmented reality than with a traditional teacher-led methodology.

2. Related Works

2.1. Studies of Augmented Reality Applied to Musical Instruments

The ARPiano [4] system illustrates that, for over a decade, attempts have been made to make AR part of the process of learning a musical instrument and to create a didactic model that is both adequate and appealing. However, the authors of ARPiano did not provide specific data on how students’ learning was affected by the use of augmented reality. To assist piano instruction, Hackl and Anthes [28] developed an app called HoloKeys for Microsoft’s HoloLens. Due to the device’s limited field of view, the authors were unable to identify in their study which models worked best for learning.

2.2. Learning Guitar with Augmented Reality

Keebler et al. [8] developed a learning system for guitar that employed the use of augmented reality, and performed a comparative study exploring how their system affected learning compared to the traditional teacher-led methodology. They performed two experiments for each methodology in order to establish the differences between the two; the first experiment was conducted in the short term, and the second experiment was conducted over a longer term.

In research by Löchtefeld, Gehring, Jung, and Krüger [10], the authors stated that a projector attached to the headstock of the guitar provided a good alternative for learning to play the guitar. The device projects colored lights onto the fret board to indicate correct finger placement.

Similarly, in a study published by Motokawa and Saito [35], the authors used a USB camera and a projector to indicate the correct finger placement for playing a chord. Unlike the aforementioned research, the way in which finger placement was displayed was through the projection of a hand with fingers. This appears to be a more intuitive system, as it is easier for students to visualize how to position their fingers on the guitar.

Kerdvibulvech and Saito [27] proposed a framework based on markers and augmented reality. Users learned to play the guitar using a projector and an app. This application was more sophisticated than those previously mentioned, as it was capable of following the guitar and superimposing the virtual images onto it. It was also capable of identifying whether the chord was being played correctly by using a Bayesian classifier.

2.3. Auditory Reality

Liarokapis [21] used ARToolkit markers that could be identified by a webcam. Once a marker was identified, an image was displayed on a computer screen, together with instructions for the user. The author reported that the system could recognize and evaluate whether a chord was being played correctly using the webcam’s microphone. The system also used a projector to indicate where the user’s fingers needed to be placed to play a chord. Arguably, combining these two methods (audio evaluation and visual guidance) in a single system made it a very suitable alternative for learning guitar. However, the study by Liarokapis only measured user satisfaction and did not record any quantitative variables that would enable an objective comparison of user learning when using AR versus traditional methods.

2.4. Guitar Chord Classification System

De Jesus Guerrero-Turrubiates et al. [36] described the use of formulae for correctly identifying chords. They considered this method better and more straightforward than previously existing ones. However, results varied depending on whether an electric or an acoustic guitar was used. Augmented reality technology has attracted research interest in many fields, and some studies particularly similar to the one described in this paper are listed in Table 1. This table reveals the areas of opportunity that served as the starting point for this research.

Table 1.

Comparison of related existing studies.

3. Materials and Methods

The main objective of this study was to investigate whether the use of an application based on augmented reality is appropriate for learning to play basic chords on the guitar without the assistance of a teacher. Using the goal question metric (GQM) template [37], the specific objective can be defined as follows: To evaluate the effects of using augmented reality on learning processes in guitar playing by comparing the time taken to learn seven basic major chords using a traditional methodology against the proposed methodology. It was decided that participants would be selected from university students, and that they should have no prior experience or knowledge of how to play the guitar.

The goal was to establish which methodology (traditional instructor-led class vs. AR-assisted class) better promoted learning of seven major chords on the guitar, based on the interpretation of symbols indicating finger placement [38].

Study participants were randomly assigned to one of two groups: one group followed the AR-assisted methodology, while the other followed the traditional teacher-led methodology. In the self-taught group, participants were instructed to learn seven chords using the ad-hoc AR application designed for this study. Technical assistance was provided to explain to participants how the app worked before commencing self-taught lessons. In the teacher-led group, participants were instructed to learn the same seven chords following instructions provided by an expert guitar player. As with traditional music lessons, training was delivered one-on-one.

In addition to comparing effects between groups, the researchers also analyzed the effect of gender on learning. Gender was included because it has been assessed in other studies [39] and because it may influence findings, given its importance in the development of cognitive abilities and skills [40,41]. As such, the two independent variables in this study were “methodology” and “gender”, and the dependent variable was the time taken by participants to perform the proposed tasks.

Upon finishing the study, each participant from the group using the AR-assisted methodology completed a system usability scale (SUS) questionnaire to establish the quality of the application and measure the usability of the app.

The following null hypotheses were defined:

- Ho1: The time taken to learn the basic musical chords on the guitar does not depend on the methodology used.

- Ho2: The gender of participants does not affect the time taken.

In contrast, the alternative hypotheses were defined as:

- Ha1: The methodology used does affect the time taken.

- Ha2: The gender of participants does affect the time taken.

3.1. Archetype and Screener

A “student” archetype was defined, and only individuals fitting this archetype were recruited for this study. To determine whether individuals fit the archetype, volunteers were asked to complete a screener created in Google Forms. Among other conditions, individuals had to be right-handed, have an interest in music and a desire to learn to play the guitar, and have no knowledge of solfeggio and no prior experience or knowledge of how to play any musical instrument. A total of 214 students were contacted, of whom 86 met the requirements (40.2%). All who met these conditions were invited to participate in the experiment.

3.2. Participants

For the purpose of this study, participant recruitment was limited to students from a single university, the University of Monterrey, as a first approximation to obtaining reliable results. Prior to commencing the study, a statistical review was performed to determine the sample size needed to guarantee reliable results.

The University of Monterrey had approximately 12,177 students in 2018, and the percentage of students matching the archetype, estimated in the previous section, was 40.2%.

Given the number of students, the percentage of archetype students, a confidence level of 95%, a margin of error of 20%, and a heterogeneity of 50%, the required sample size for the research was established as 18 participants. Although qualitative studies have indicated that just six to nine participants [42] are required to identify usability problems in an application or product during Think Aloud testing, more recent studies [43] suggest that 10 ± 2 participants are in fact required.
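A sample-size calculation of this kind is commonly done with Cochran's formula plus a finite population correction. The following is a minimal sketch under stated assumptions: the text does not give the formula it used, so the choice of Cochran's estimate, the population size (total students multiplied by the 40.2% archetype share), and the helper name `sample_size` are illustrative. With these inputs the sketch gives roughly 24 participants, so it should not be read as a re-derivation of the study's figure of 18; the result depends on which population, formula, and rounding rule are used.

```python
import math

def sample_size(population, z=1.96, p=0.5, margin=0.20):
    """Cochran's sample size with a finite population correction (illustrative).

    population -- size of the population being sampled
    z          -- z-score for the confidence level (1.96 for 95%)
    p          -- assumed heterogeneity (0.5 gives the largest sample)
    margin     -- margin of error as a proportion (0.20 for 20%)
    """
    n0 = z ** 2 * p * (1 - p) / margin ** 2      # infinite-population estimate
    return n0 / (1 + (n0 - 1) / population)     # shrink for a finite population

# Assumed population: all students times the share matching the archetype.
archetype_population = round(12177 * 0.402)      # 4895
n = sample_size(archetype_population)
print(f"{n:.2f} -> {math.ceil(n)} participants")  # 23.90 -> 24 participants
```

Rounding up with `math.ceil` is the usual convention, since a fractional participant cannot be recruited.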

A total of 64 participants with no prior knowledge of how to play the guitar were recruited for this study from degrees in Engineering and Business Studies. Participants’ ages ranged from 18 to 25 years (mean (M) = 21, standard deviation (SD) = 1.36). Two groups of 32 participants each were formed. Individuals were randomly assigned to each group, while balancing the number of men and women in each group as closely as possible: 16 men and 16 women were assigned to Group 1, and 17 men and 15 women to Group 2. Group 1 was informed they would follow the AR-assisted methodology, and Group 2 was informed they would follow the traditional teacher-led methodology.
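One way to implement a random assignment that keeps genders as balanced as possible is to shuffle each gender separately and then deal participants alternately into the two groups. The text does not describe the exact procedure used, so this is a hypothetical sketch; the function name `assign_groups` and the (id, gender) input format are assumptions. With 33 men and 31 women, dealing in this way yields two groups of 32 with gender splits of 16/16 and 17/15, the same counts as those reported for the two groups.

```python
import random

def assign_groups(participants, seed=None):
    """Randomly split (id, gender) pairs into two equal-sized groups.

    Each gender is shuffled independently, the shuffled lists are
    concatenated, and people are then dealt alternately to group 0 and
    group 1, so every gender is split as evenly as its count allows.
    """
    rng = random.Random(seed)
    by_gender = {}
    for pid, gender in participants:
        by_gender.setdefault(gender, []).append(pid)

    ordered = []
    for gender in sorted(by_gender):          # fixed stratum order
        ids = by_gender[gender]
        rng.shuffle(ids)                      # random order within the stratum
        ordered.extend((pid, gender) for pid in ids)

    groups = ([], [])
    for i, person in enumerate(ordered):      # deal like cards: 0, 1, 0, 1, ...
        groups[i % 2].append(person)
    return groups

# Hypothetical roster matching the reported totals: 33 men and 31 women.
roster = [(i, "M") for i in range(33)] + [(i, "F") for i in range(33, 64)]
ar_group, teacher_group = assign_groups(roster, seed=2019)
for name, group in (("AR", ar_group), ("teacher", teacher_group)):
    men = sum(1 for _, g in group if g == "M")
    print(name, len(group), "participants:", men, "men,", len(group) - men, "women")
```

Dealing across the concatenated strata (rather than restarting at group 0 for each gender) is what keeps the two group sizes equal when both genders have odd counts.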

3.3. Equipment

The AR app used in this study was developed using Vuforia Engine 8.1 and Unity 2019.1.1. The app was run on a 13-inch MacBook Pro (2017) with a 2.3 GHz Intel Core i5 processor, 8 GB of RAM, and a screen resolution of 2560 × 1600 pixels. For the purpose of this experiment, the computer was placed at a suitable height so that the camera could see the guitar headstock and fret board and the user could easily see the finger placements required for each chord (mirror image).

3.4. Experimental Study

3.4.1. Procedure

Participants were informed they would be set the task of learning how to perform seven basic major guitar chords (C major, D major, E major, F major, G major, A major, and B major), one after another. In both the AR-assisted and traditional teacher-led methodologies, participants performed tasks individually. All training sessions for both methodologies were recorded with a video camera. An assistant participated in each session in order to time participants, recording how long it took them to perform and learn the seven guitar chords. The assistant was also on hand to answer any questions participants might have regarding the set task.

3.4.2. Tasks

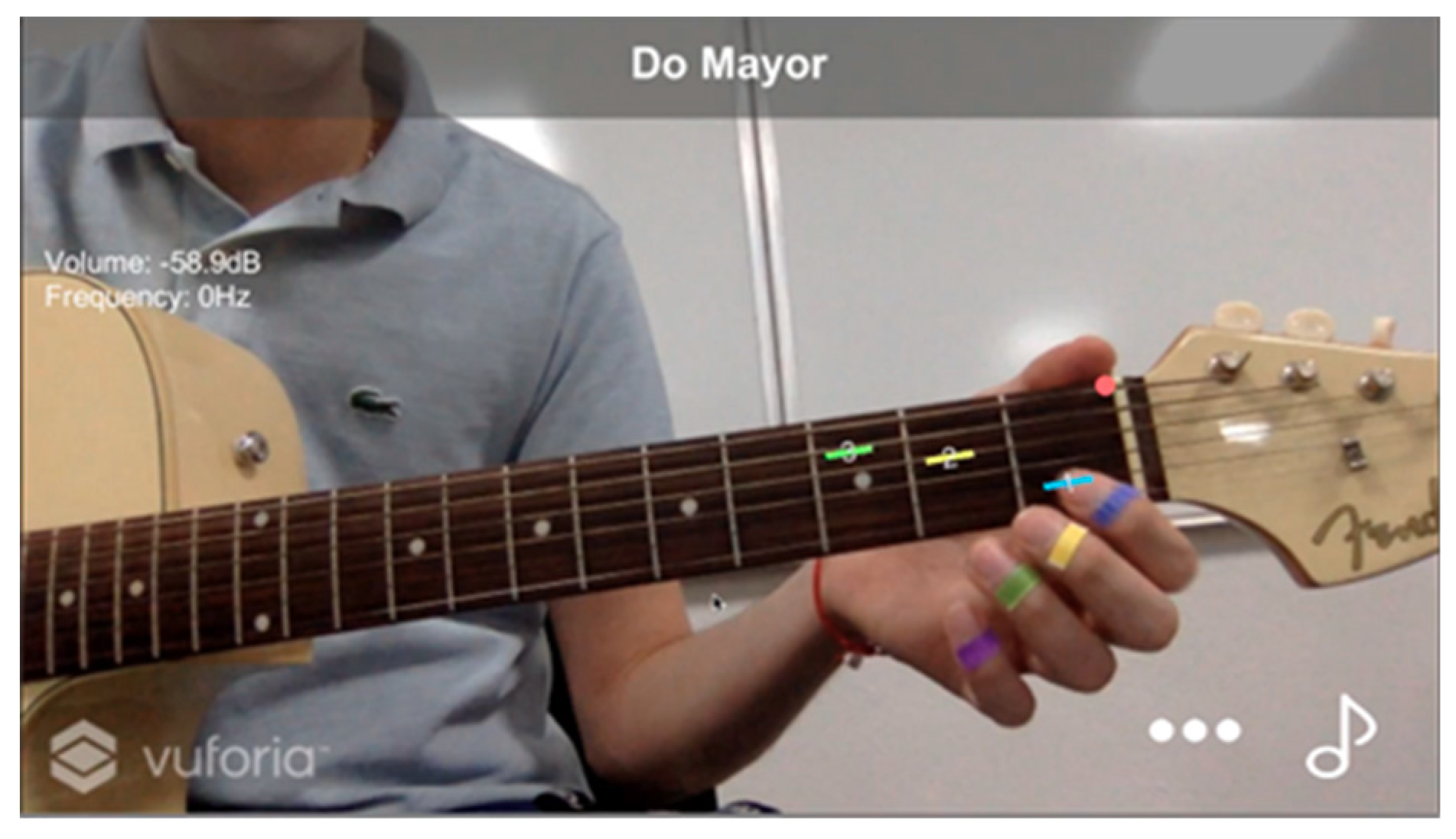

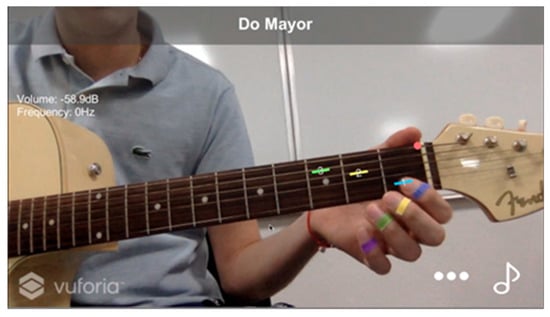

During training sessions involving AR-assisted tasks, participants wore color-coded markers on the fingers of their left hand that corresponded to the color-coded instructions provided by the ad-hoc app created for this study: blue for the index finger, yellow for the middle finger, green for the ring finger, and violet for the little finger (see Figure 1).

Figure 1.

Color-coded markers used on fingers.

The app displayed the chord that needed to be played by the user at the top of the screen. It recognized the fret board of the guitar and then displayed the necessary finger placements onscreen (mirror view). Using AR, color-coded instructions were superimposed on the neck of the guitar on the correct string and fret (see Figure 2).

Figure 2.

Example superimposed image of Augmented Reality (AR) color-coded instructions.

The assistant asked the participant to place his or her fingers on the strings as indicated by the app, ensuring that the color of the markers on their fingers matched the AR color-coded instructions onscreen. If fingers were not placed correctly, a red error marker appeared onscreen. When fingers were placed correctly, the participant could then play the chord by strumming the strings over the sound hole with the thumb of their right hand.

Using the computer’s in-built microphone, the ad-hoc application evaluated the sound frequencies emitted as the participant attempted to play the chord. If the chord was played correctly, the app allowed the user to advance to the next chord.
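The text does not say how the app turns the microphone signal into a right/wrong verdict. As a purely illustrative sketch (not the authors' implementation, which ran inside Unity), one lightweight approach is to measure the energy at each semitone frequency with the Goertzel algorithm, fold it into 12 pitch classes (a chroma vector), and accept the strum if the three strongest pitch classes form the requested major triad. All function names are hypothetical, and the test signal is a sum of pure sine waves; real guitar recordings contain harmonics and decay, so a practical version would need more robust weighting and thresholds.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

def midi_to_freq(midi):
    """Equal-tempered frequency of a MIDI note number (A4 = 69 = 440 Hz)."""
    return 440.0 * 2 ** ((midi - 69) / 12)

def goertzel_power(samples, sample_rate, freq):
    """Squared spectral magnitude of `samples` at `freq` (Goertzel algorithm)."""
    coeff = 2.0 * math.cos(2.0 * math.pi * freq / sample_rate)
    s1 = s2 = 0.0
    for x in samples:
        s1, s2 = x + coeff * s1 - s2, s1      # s[n] = x[n] + c*s[n-1] - s[n-2]
    return s1 * s1 + s2 * s2 - coeff * s1 * s2

def chroma(samples, sample_rate, low_midi=40, high_midi=76):
    """Fold the power of every semitone from E2 (40) to E5 (76) into 12 pitch classes."""
    bins = [0.0] * 12
    for midi in range(low_midi, high_midi + 1):
        bins[midi % 12] += goertzel_power(samples, sample_rate, midi_to_freq(midi))
    return bins

def major_triad(root):
    """Pitch classes of a major triad: root, major third, perfect fifth."""
    r = NOTE_NAMES.index(root)
    return {NOTE_NAMES[r], NOTE_NAMES[(r + 4) % 12], NOTE_NAMES[(r + 7) % 12]}

def is_chord_correct(samples, sample_rate, root):
    """Accept the strum if its three strongest pitch classes form the expected triad."""
    bins = chroma(samples, sample_rate)
    top3 = sorted(range(12), key=lambda i: bins[i], reverse=True)[:3]
    return {NOTE_NAMES[i] for i in top3} == major_triad(root)

def synth(midis, sample_rate=8000, seconds=0.5):
    """Test signal: a sum of pure sine waves (no harmonics, no decay)."""
    n = int(sample_rate * seconds)
    return [sum(math.sin(2 * math.pi * midi_to_freq(m) * t / sample_rate) for m in midis)
            for t in range(n)]

# Notes of an open C chord: C3, E3, G3, C4, E4.
signal = synth([48, 52, 55, 60, 64])
print(is_chord_correct(signal, 8000, "C"))  # True
print(is_chord_correct(signal, 8000, "F"))  # False
```

Goertzel is chosen here because only a few dozen known frequencies need to be checked, which avoids computing a full FFT.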

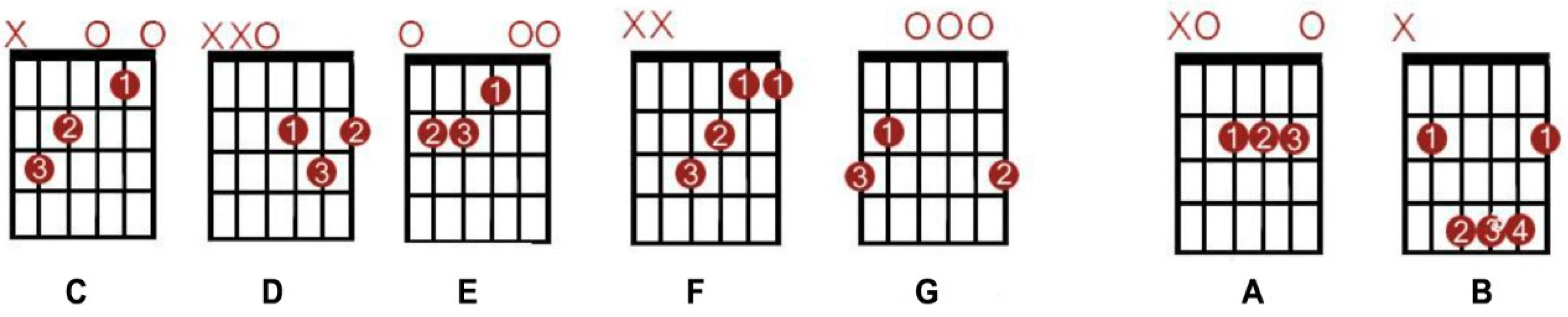

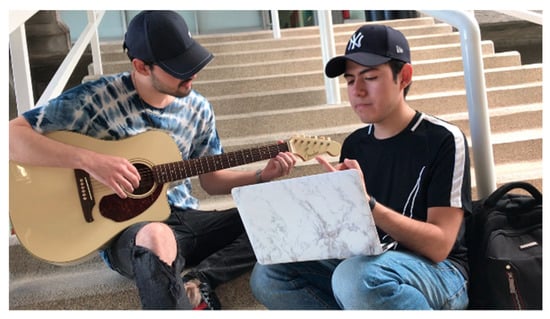

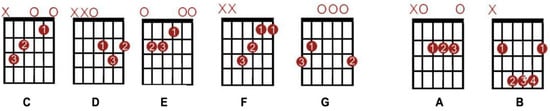

In the case of the traditional teacher-led methodology, participants received feedback from the teacher as they played the chord (Figure 3). The teacher showed the participants how to perform chords using guitar chord charts (Figure 4). Participants read these chord charts to understand finger placement and then played the chord. The teacher provided assistance as necessary.

Figure 3.

Evaluation in the traditional methodology.

Figure 4.

Chord chart of seven major chords. Numbers indicate where the fingers should be placed on the fret board of the guitar.

For both methodologies, a time limit of 2 min was set for each task, and tasks were logged as either “successful” or “unsuccessful”. The 2 min limit was set at four times the average time taken by the guitar teacher to perform the chord correctly using the AR app.
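The logging rule can be expressed in a few lines. Since the 2 min limit is four times the teacher's average, that average was about 30 s (120 / 4). The sketch below is hypothetical: the `Attempt` record, its field names, and the sample attempts are illustrative assumptions, not data from the study. Following the results section, an attempt counts as successful only if the chord was played correctly in under two minutes.

```python
from dataclasses import dataclass

TIME_LIMIT_S = 120.0                      # 2 min per task
TEACHER_MEAN_S = TIME_LIMIT_S / 4         # implied teacher average: 30 s

@dataclass
class Attempt:
    task: str                # e.g. "C major"
    elapsed_s: float         # seconds until the chord was correct or the attempt ended
    played_correctly: bool

def outcome(attempt: Attempt) -> str:
    """Successful only if the chord was correct within the time limit."""
    if attempt.played_correctly and attempt.elapsed_s < TIME_LIMIT_S:
        return "successful"
    return "unsuccessful"

def success_rate(attempts, task):
    """Share of attempts at `task` logged as successful (cf. Figure 6)."""
    relevant = [a for a in attempts if a.task == task]
    return sum(outcome(a) == "successful" for a in relevant) / len(relevant)

# Hypothetical attempts, for illustration only.
log = [Attempt("B major", 95.0, True), Attempt("B major", 120.0, False),
       Attempt("C major", 18.5, True)]
print(TEACHER_MEAN_S, success_rate(log, "B major"), success_rate(log, "C major"))  # 30.0 0.5 1.0
```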

• Task 1: Play C major chord

Participants were asked to position their fingers as instructed and press firmly: finger 1 on fret one of the second string; finger 2 on fret two of the fourth string; finger 3 on fret three of the fifth string. They were also told not to strum the sixth string to ensure the chord was played correctly.

• Task 2: Play D major chord

Participants were asked to position their fingers as instructed and press firmly: finger 1 on fret two of the third string; finger 2 on fret two of the first string; finger 3 on fret three of the second string. They were also told not to strum the fifth or sixth strings to ensure the chord was played correctly.

• Task 3: Play E major chord

Participants were asked to position their fingers as instructed and press firmly: finger 1 on fret one of the third string; finger 2 on fret two of the fifth string; finger 3 on fret two of the fourth string. They were also told to strum the sixth string to ensure the chord was played correctly.

• Task 4: Play F major chord

Participants were asked to position their fingers as instructed and press firmly: finger 1 on fret one of the first and second strings; finger 2 on fret two of the third string; finger 3 on fret three of the fourth string. They were also told not to strum the sixth string to ensure the chord was played correctly.

• Task 5: Play G major chord

Participants were asked to position their fingers as instructed and press firmly: finger 1 on fret two of the fifth string; finger 2 on fret three of the first string; finger 3 on fret three of the sixth string. They were also told to strum the sixth string to ensure the chord was played correctly.

• Task 6: Play A major chord

Participants were asked to position their fingers as instructed and press firmly: finger 1 on fret two of the fourth string; finger 2 on fret two of the third string; finger 3 on fret two of the second string. They were also told not to strum the sixth string to ensure the chord was played correctly.

• Task 7: Play B major chord

Participants were asked to position their fingers as instructed and press firmly: finger 1 barring fret two across strings one to five; finger 2 on fret four of the fourth string; finger 3 on fret four of the third string; and finger 4 on fret four of the second string. They were also told to strum the sixth string to ensure the chord was played correctly.
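The seven tasks can be encoded as data that an app compares against the tracked finger markers. The sketch below is hypothetical: the paper does not describe the app's internal data structures or tracker output, so the `CHORDS` table, the `check_placement` function, and the assumption that the tracker reports each colored marker as a set of (string, fret) positions are illustrative. Strings are numbered 1 (highest-pitched) to 6, as in the task descriptions, and the finger positions are transcribed from Tasks 1 to 7, with the B major bar covering strings one to five; strumming instructions are omitted.

```python
# Marker color worn on each left-hand finger (1 = index ... 4 = little finger).
FINGER_COLORS = {1: "blue", 2: "yellow", 3: "green", 4: "violet"}

# Finger -> (string, fret) positions, transcribed from Tasks 1-7.
CHORDS = {
    "C": {1: [(2, 1)], 2: [(4, 2)], 3: [(5, 3)]},
    "D": {1: [(3, 2)], 2: [(1, 2)], 3: [(2, 3)]},
    "E": {1: [(3, 1)], 2: [(5, 2)], 3: [(4, 2)]},
    "F": {1: [(1, 1), (2, 1)], 2: [(3, 2)], 3: [(4, 3)]},
    "G": {1: [(5, 2)], 2: [(1, 3)], 3: [(6, 3)]},
    "A": {1: [(4, 2)], 2: [(3, 2)], 3: [(2, 2)]},
    "B": {1: [(s, 2) for s in range(1, 6)],      # index finger bars fret 2
          2: [(4, 4)], 3: [(3, 4)], 4: [(2, 4)]},
}

def check_placement(chord, detected):
    """Compare tracked marker positions with the expected chord shape.

    detected -- dict: marker color -> set of (string, fret) positions.
    Returns a list of (color, missing, unexpected) tuples; an empty list
    means every finger is in place, otherwise an error marker would be shown.
    """
    errors = []
    expected_colors = set()
    for finger, positions in CHORDS[chord].items():
        color = FINGER_COLORS[finger]
        expected_colors.add(color)
        expected, found = set(positions), set(detected.get(color, ()))
        if found != expected:
            errors.append((color, sorted(expected - found), sorted(found - expected)))
    for color, positions in detected.items():   # fingers that should be lifted
        if color not in expected_colors and positions:
            errors.append((color, [], sorted(positions)))
    return errors

correct_c = {"blue": {(2, 1)}, "yellow": {(4, 2)}, "green": {(5, 3)}}
print(check_placement("C", correct_c))  # []
swapped = {"blue": {(4, 2)}, "yellow": {(2, 1)}, "green": {(5, 3)}}
print(check_placement("C", swapped))
# [('blue', [(2, 1)], [(4, 2)]), ('yellow', [(4, 2)], [(2, 1)])]
```

Keeping the chord shapes as plain data means that adding a chord only requires a new table entry, not new checking logic.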

4. Results

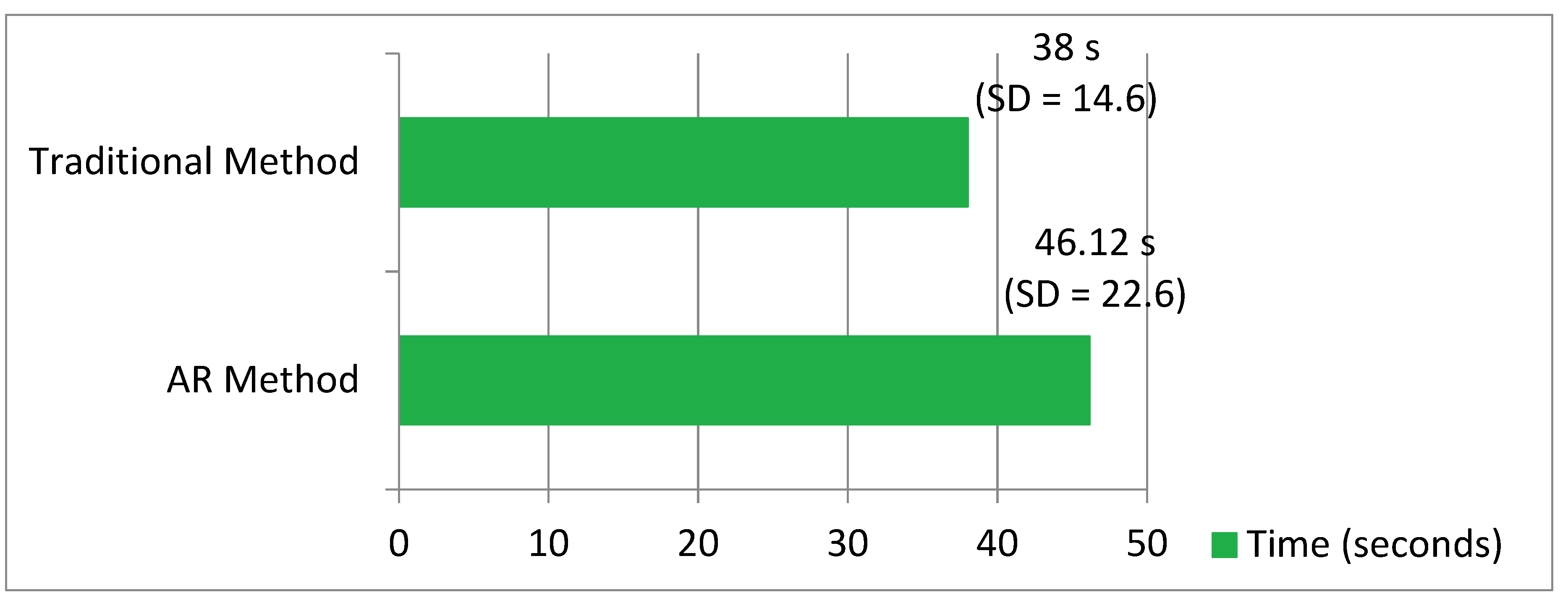

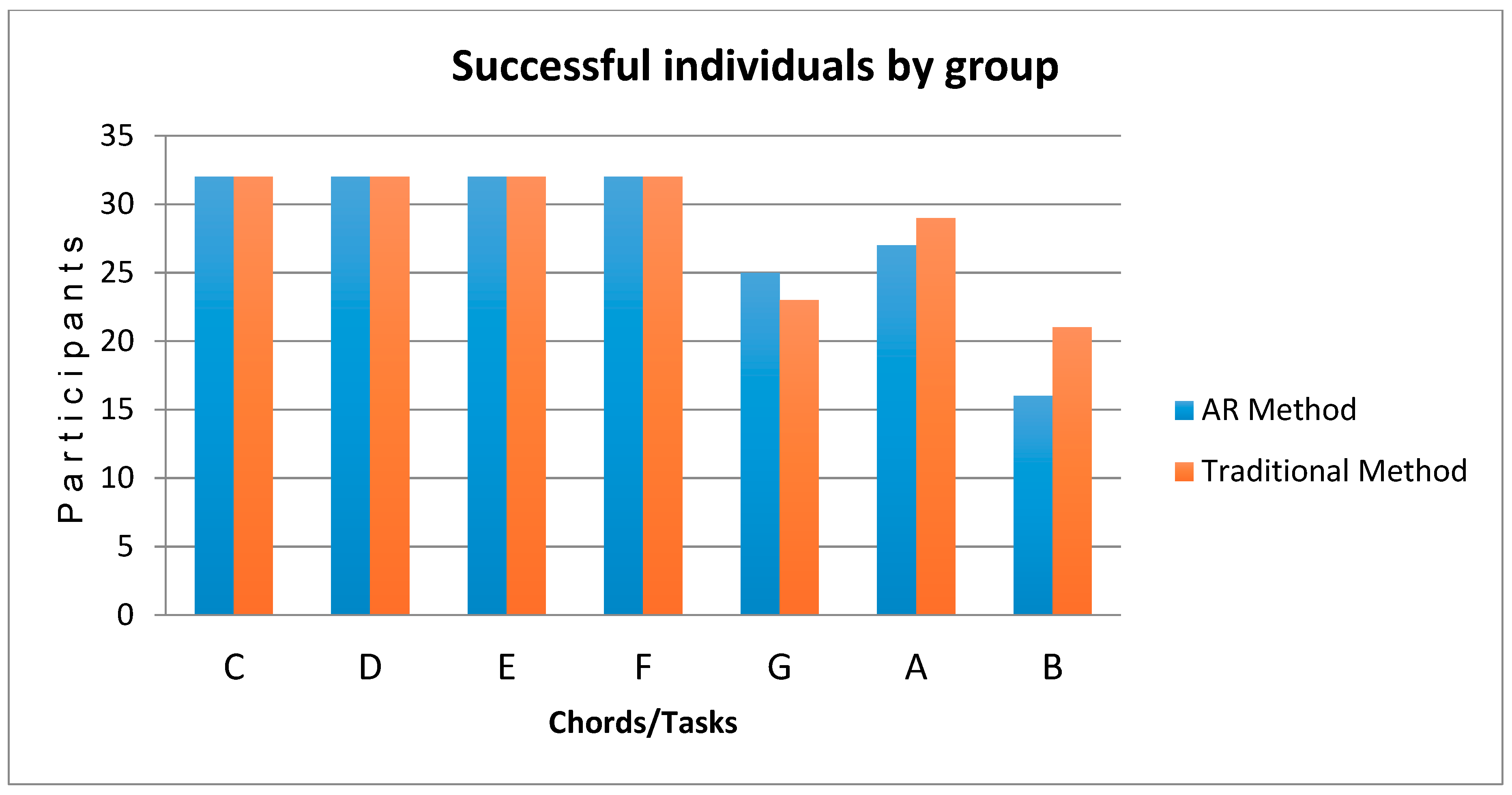

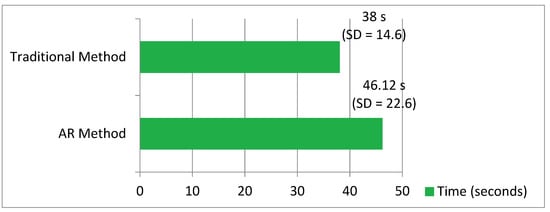

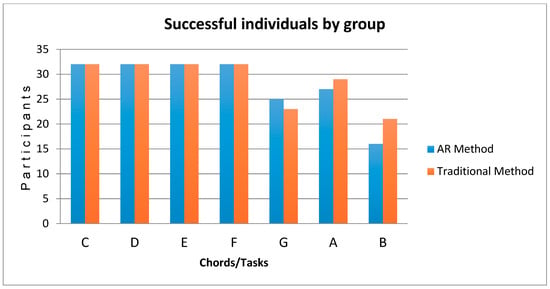

This section contains the results of the experimental study for each of the aforementioned independent variables. Figure 5 displays the mean time (in seconds) taken to perform each task under both methodologies, and Figure 6 shows the success rate for each task by learning methodology. All participants successfully played C major, D major, E major, and F major (all 32 in each group). However, participants in both groups had greater difficulty with G major, A major, and B major, whose finger placements are more complex. The B chord proved the most difficult because it is a bar chord: participants had to press five strings simultaneously across the fret with their index finger. It should be remembered that participants were complete beginners and this was their first contact with the instrument; as such, a chord was recorded as successful if it was performed correctly in under two minutes.

Figure 5.

Comparison of augmented reality (AR) methodology and traditional methodology: total mean times (seconds) for tasks.

Figure 6.

Comparison of AR methodology and traditional methodology: success rates.

4.1. Analysis of Results

Although the number of successful tasks was not an explicit variable in this study, it has been included to support the acceptance or rejection of the null hypotheses. Fisher’s F test was used to compare success rates between the two methodologies, yielding a p-value of 0.88. As this was greater than 0.05, there was no significant difference in success rates between the methodologies.

A two-way analysis of variance (ANOVA) was performed to analyze effects on task completion times, with training methodology and gender as the two independent variables. The interaction between both factors was also analyzed to determine whether their combination had any effect on the time taken to perform tasks.
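To show what a two-way ANOVA computes, the sketch below partitions the total variation into a methodology effect, a gender effect, their interaction, and within-cell error, and forms the F ratios. It is a minimal illustration under simplifying assumptions: it handles only balanced, fully crossed designs (equal numbers per cell), whereas the study's cells were 16/16 and 17/15, and the data in the usage example are hypothetical, not the study's timings. An unbalanced analysis would need software that supports it (for example Type II or III sums of squares), and p-values follow from the F distribution, e.g. `scipy.stats.f.sf(F, df_effect, df_within)`.

```python
from statistics import mean

def two_way_anova(cells):
    """Two-way ANOVA with interaction for a balanced, fully crossed design.

    cells -- dict mapping (level_of_A, level_of_B) -> list of observations;
             every combination must be present with the same number of values.
    Returns {effect: (sum_of_squares, degrees_of_freedom, F)}.
    """
    a_levels = sorted({a for a, _ in cells})
    b_levels = sorted({b for _, b in cells})
    n = len(next(iter(cells.values())))
    if len(cells) != len(a_levels) * len(b_levels) or any(len(v) != n for v in cells.values()):
        raise ValueError("this sketch only handles balanced, fully crossed designs")

    grand = mean(x for v in cells.values() for x in v)
    mean_a = {a: mean(x for b in b_levels for x in cells[(a, b)]) for a in a_levels}
    mean_b = {b: mean(x for a in a_levels for x in cells[(a, b)]) for b in b_levels}
    cell_mean = {k: mean(v) for k, v in cells.items()}

    # Sums of squares: main effects, interaction, and within-cell error.
    ss_a = n * len(b_levels) * sum((m - grand) ** 2 for m in mean_a.values())
    ss_b = n * len(a_levels) * sum((m - grand) ** 2 for m in mean_b.values())
    ss_ab = n * sum((cell_mean[(a, b)] - mean_a[a] - mean_b[b] + grand) ** 2
                    for a in a_levels for b in b_levels)
    ss_within = sum((x - cell_mean[k]) ** 2 for k, v in cells.items() for x in v)

    df_a, df_b = len(a_levels) - 1, len(b_levels) - 1
    df_ab = df_a * df_b
    df_within = len(cells) * (n - 1)
    ms_within = ss_within / df_within
    return {
        "A": (ss_a, df_a, (ss_a / df_a) / ms_within),
        "B": (ss_b, df_b, (ss_b / df_b) / ms_within),
        "A x B": (ss_ab, df_ab, (ss_ab / df_ab) / ms_within),
        "within": (ss_within, df_within, None),
    }

# Hypothetical task times in seconds (A = methodology, B = gender); not the study's data.
times = {("AR", "F"): [10, 30], ("AR", "M"): [50, 70],
         ("Teacher", "F"): [20, 40], ("Teacher", "M"): [60, 80]}
for effect, (ss, df, f) in two_way_anova(times).items():
    print(f"{effect:7} SS={ss:7.1f} df={df} F={f}")
```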

As seen in Table 2, the p-values obtained for each factor were >0.05; thus, the two null hypotheses proposed in Section 3 could not be rejected:

Table 2.

p-Value obtained by performing a two-way analysis of variance (ANOVA) analysis.

- The time taken to learn the basic musical chords on the guitar did not depend on the methodology used.

- The gender of participants did not affect the time taken.

Based on these findings, it is possible to state that there was no significant difference between the two methodologies with regard to learning times. It can also be stated that there were no differences in learning by gender within the group itself or between different methodologies.

Observational findings indicated that each methodology had its own set of advantages and disadvantages.

Table 3 shows a Student’s t-test (paired series) of the variable “time” for each task, performed to identify whether there was a significant difference in the execution time of each chord by learning method.

Table 3.

p-Values obtained from Student’s t-tests comparing the mean time of each task by methodology group.

These results break down the findings of Table 2 by task. All tasks except Task 7 (“Play B major chord”) showed no significant difference in execution time between the two learning methods; Task 7 required more time from participants in the AR methodology group.

4.2. User Feedback

Once the experimental study was completed, participants in the AR group completed the system usability scale (SUS) questionnaire to establish the usability of the ad-hoc app developed to teach guitar chords using AR technology. The questionnaire consists of 10 items rated on a five-point Likert scale (from “strongly disagree” to “strongly agree”) [44]. The app obtained a score of 82.5, which is higher than the benchmark average of 68; scores above 68 are considered to indicate good software usability [45].
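For reference, the standard SUS scoring procedure converts each participant's ten answers into a 0 to 100 score: positively worded odd-numbered items contribute the answer minus 1, negatively worded even-numbered items contribute 5 minus the answer, and the sum is multiplied by 2.5. The reported 82.5 is presumably the mean of such scores across participants. The function names and the sample responses below are hypothetical.

```python
def sus_score(responses):
    """SUS score (0-100) for one participant's ten 1-5 Likert answers."""
    if len(responses) != 10 or not all(1 <= r <= 5 for r in responses):
        raise ValueError("SUS expects ten answers between 1 and 5")
    # Indices 0, 2, ... are items 1, 3, ... (positively worded).
    total = sum(r - 1 if i % 2 == 0 else 5 - r for i, r in enumerate(responses))
    return total * 2.5

def mean_sus(questionnaires):
    """Average SUS score over several participants."""
    scores = [sus_score(q) for q in questionnaires]
    return sum(scores) / len(scores)

# Hypothetical answers from one participant.
print(sus_score([5, 2, 4, 1, 5, 2, 4, 2, 4, 2]))  # 82.5
```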

4.3. Observational Findings

Group 1 participants were surprised by how easy it was to follow the instructions provided by the ad-hoc AR app. In-app feedback messages (a red cross with “try again” and a green tick with “well done!”) proved motivating and encouraged participants to follow the instructions explaining how to play chords. They did not experience the stress associated with waiting for instructions or feedback from a human instructor, although some stated they would have preferred a human instructor to confirm whether they had played a chord correctly. Participants appeared relaxed as a result of studying autonomously. Upon completing a task they were often heard to exclaim “How easy”, “Excellent”, “Awesome”, or “Bring it on!” (referring to the next task), comments that served as self-motivation. The last task, playing the B major chord, created confusion: users did not understand that the same color superimposed across multiple strings on the same fret was an instruction to bar the fret with a single finger, and most took a while to realize that they needed to use the entire length of the index finger. Having completed all tasks, participants declared their interest in learning to play guitar with AR assistance and their wish to continue using this methodology. Some stated that this type of learning could be enhanced by contact with a music teacher to ensure their technique was correct.

Group 2 participants, following the dynamic traditionally encountered in music classes, felt comfortable with their instructor’s expectations and were able to confirm the correct execution of each task. Some participants felt a certain amount of pressure to please the teacher. Others complained to the instructor about how difficult they found certain finger placements, and in some instances the instructor provided physical assistance by moving their fingers into the correct positions. In most cases these techniques were appreciated, although some participants did experience frustration on certain tasks and wanted to finish them quickly to get respite from the stress.

5. Conclusions

This experimental study evaluated the speed at which 64 participants were able to learn to play seven basic major chords on the guitar (C major, D major, E major, F major, G major, A major, and B major) using two different methodologies. The goal was to establish which methodology proved more efficient in terms of effectiveness and time taken to complete learning.

With regard to the success rate of each methodology, both exceeded expectations, and the vast majority of chords were played successfully. The statistical analysis also found no significant difference between the two methodologies in the time taken to play chords.

More specifically, upon analyzing how long it took participants on average to complete tasks using the AR app, it was found that only the B major chord reflected a significant difference when compared against the mean times recorded for the traditional teacher-led methodology. This was due to users not understanding the onscreen instructions indicating how to perform the bar chord. Users had to figure out for themselves what the onscreen instructions meant when all the strings of the same fret were marked in blue. It took them a while to understand that they had to use the entire index finger to press on all the marked strings at the same time.

Overall, the results obtained in this study suggest that the methodology used did not affect the time taken to perform tasks, and that participants' gender made no difference either: both men and women were able to play chords successfully, with no differences in execution times.

Both training models had a positive impact on participants, as more than 85% of tasks were completed within the established timeframes. It is recommended that students be taught the C chord first, as all participants found it the easiest to play and played it much faster than any other chord.

All of this leads the authors of this study to conclude that both methods are technically feasible for learning, although participants who used the AR application were observed to be more motivated. However, the results and conclusions of this study should be interpreted with caution, as the authors' initial aim was merely to conduct a pilot study that would identify a suitable approach for a larger-scale experiment with more tasks. In particular, a major limitation is that the participants were limited to university students. Nevertheless, the findings were positive, and they should serve as an invitation to further explore these types of pedagogical methodologies and initiatives as a means of comfortably handling the new teaching styles that are currently taking hold.

Finally, the results and findings of this study open the door for developers to create applications in which AR interacts directly with the physical guitar. They also offer guidance for developing this type of application, which cannot follow common design standards [46].

Applications using visual and audio augmented reality, like the one described in this study, could provide a reasonable alternative for individuals hoping to learn a musical instrument. On the one hand, the low cost of such apps offers users savings compared with traditional classes that require a music teacher; on the other hand, they give individuals seeking an escape from external pressures the opportunity to pursue self-guided learning and autonomy. In this study, the AR-based methodology generated satisfaction among learners, as shown by the comments received from participants and the score obtained on the system usability scale (SUS) questionnaire. The usability of the app was acceptable, suggesting that AR has high value for the field of education, as learners felt comfortable interacting with the app and could learn in their free time without the need for a teacher [47].
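The SUS score mentioned above is produced by a fixed scoring rule rather than a simple average of answers. As a point of reference, the following is a minimal Python sketch of the standard SUS scoring procedure: ten items answered on a 1 to 5 scale, where odd-numbered items contribute the answer minus 1, even-numbered items contribute 5 minus the answer, and the sum is multiplied by 2.5 to give a score from 0 to 100. The function names `sus_score` and `study_sus` and the sample responses are hypothetical illustrations; this is not the authors' analysis code, and the study's raw questionnaire data are not reproduced here.

```python
from statistics import mean


def sus_score(responses: list[int]) -> float:
    """Score one respondent's SUS questionnaire using the standard rule.

    `responses` holds the ten item answers in questionnaire order,
    each an integer from 1 (strongly disagree) to 5 (strongly agree).
    """
    if len(responses) != 10:
        raise ValueError("SUS has exactly 10 items")
    if any(r not in range(1, 6) for r in responses):
        raise ValueError("each answer must be an integer from 1 to 5")

    total = 0
    for item_number, answer in enumerate(responses, start=1):
        if item_number % 2 == 1:
            # Odd items are positively worded: contribution is answer - 1.
            total += answer - 1
        else:
            # Even items are negatively worded: contribution is 5 - answer.
            total += 5 - answer
    # The raw total ranges from 0 to 40; scaling by 2.5 maps it onto 0-100.
    return total * 2.5


def study_sus(all_responses: list[list[int]]) -> float:
    """Mean SUS score across respondents (the usual single study-level figure)."""
    return mean(sus_score(r) for r in all_responses)


if __name__ == "__main__":
    # Hypothetical respondents, for illustration only.
    best_case = [5, 1, 5, 1, 5, 1, 5, 1, 5, 1]
    neutral = [3] * 10
    print(sus_score(best_case))             # 100.0
    print(sus_score(neutral))               # 50.0
    print(study_sus([best_case, neutral]))  # 75.0
```

A study-level result such as the one reported for this app is conventionally the mean of the individual scores, and it is that mean which is compared against the commonly cited average of 68.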

With regard to future research on visual and audio augmented reality, the findings of this study suggest that AR apps could be developed to teach entire songs, not just chords. It would also be interesting to continue this line of research into audio recognition and superimposed images to create similar applications that foster self-guided learning on other musical instruments. To achieve positive results, it is essential to have a well-designed learning model based on the use of augmented reality, and the tool must be intuitive and motivate learning [33].

Author Contributions

The contributions to this paper are as follows: conceptualization and software, R.F.P. and M.A.R.S.; investigation, methodology, and supervision, J.M.-G. and M.S.D.R.-G.; validation, R.F.P. and M.A.R.S.; formal analysis, J.M.-G.; writing (original draft preparation), R.F.P., M.A.R.S. and M.S.D.R.-G.; writing (review and editing), J.M.-G. and V.A.L.-C.

Funding

This research was funded by the research programs of the Universidad de Monterrey and the Universidad de La Laguna through an International Cooperation Agreement between the two institutions.

Acknowledgments

The authors would like to thank the students from the University of Monterrey (UDEM) who volunteered to be part of this study.

Conflicts of Interest

The authors declare no conflict of interest.

References

1. Schafer, R. The Soundscape: Our Sonic Environment and the Tuning of the World; Inner Traditions Bear and Company: Rochester, VT, USA, 1999.

2. Ramirez, R.; Canepa, C.; Ghisio, S.; Kolykhalova, K.; Mancini, M.; Volta, E.; Volpe, G.; Giraldo, S.; Mayor, O.; Perez, A.; et al. Enhancing Music Learning with Smart Technologies. In Proceedings of the 5th International Conference on Movement and Computing (MOCO '18), Genoa, Italy, 28–30 June 2018; pp. 1–4.

3. Alonso-Jartín, R.; Chao-Fernández, R. Creatividad en el aprendizaje instrumental lenguaje metafórico, velocidad del procesamiento cognitivo y cinestesia. Creat. Soc. Rev. Asoc. Para Creat. 2018, 28, 7–30.

4. Trujano, F.; Khan, M.; Maes, P. ARPiano Efficient Music Learning Using Augmented Reality. In Lecture Notes in Computer Science, Proceedings of the Innovative Technologies and Learning (ICITL 2018), Portoroz, Slovenia, 27–30 August 2018; Wu, T.T., Huang, Y.M., Shadiev, R., Lin, L., Starčič, A., Eds.; Springer: Cham, Switzerland, 2018; Volume 11003, pp. 3–17.

5. Harrison, E. Challenges Facing Guitar Education. Music Educ. J. 2010, 97, 50–55.

6. Motokawa, Y.; Saito, H. Support System Using Augmented Reality Display for Use in Teaching the Guitar. J. Inst. Image Inf. Telev. Eng. 2007, 61, 789–796.

7. Längler, M.; Nivala, M.; Gruber, H. Peers, parents and teachers: A case study on how popular music guitarists perceive support for expertise development from 'persons in the shadows'. Music. Sci. 2018, 22, 224–243.

8. Keebler, J.R.; Wiltshire, T.J.; Smith, D.C.; Fiore, S.M.; Bedwell, J.S. Shifting the paradigm of music instruction: Implications of embodiment stemming from an augmented reality guitar learning system. Front. Psychol. 2014, 5, 471.

9. Schön, D.A. Educating the Reflective Practitioner; Jossey-Bass: San Francisco, CA, USA, 1987.

10. Löchtefeld, M.; Krüger, A.; Gehring, S.; Jung, R. GuitAR: Supporting guitar learning through mobile projection. In Proceedings of the Conference on Human Factors in Computing Systems, Vancouver, BC, Canada, 7–12 May 2011; pp. 1447–1452.

11. Waldron, J.; Mantie, R.; Partti, H.; Tobias, E.S. A brave new world: Theory to practice in participatory culture and music learning and teaching. Music Educ. Res. 2018, 20, 289–304.

12. Gaunt, H. One-to-one tuition in a conservatoire: The perceptions of instrumental and vocal students. Psychol. Music 2010, 38, 178–208.

13. Gaunt, H. Understanding the one-to-one relationship in instrumental/vocal tuition in Higher Education: Comparing student and teacher perceptions. Br. J. Music Educ. 2011, 28, 159–179.

14. Bjøntegaard, B.J. A combination of one-to-one teaching and small group teaching in higher music education in Norway: A good model for teaching? Br. J. Music Educ. 2015, 32, 23–36.

15. Alonso-Jartín, R.; Chao-Fernández, R. Aprendiendo a enseñar un instrumento musical en edades tempranas. In Fórmulas Docentes de Vanguardia; Cantalapiedra, B., Aguilar, P., Requeijo, P., Eds.; Gedisa: Barcelona, Spain, 2018; pp. 27–35.

16. Wych, G.M.F. Gender and Instrument Associations, Stereotypes, and Stratification. Update Appl. Res. Music Educ. 2012, 30, 22–31.

17. Hallam, S.; Rogers, L.; Creech, A. Gender differences in musical instrument choice. Int. J. Music Educ. 2008, 26, 7–19.

18. Keebler, J.R.; Wiltshire, T.J.; Smith, D.C.; Fiore, S.M. Picking Up STEAM: Educational Implications for Teaching with an Augmented Reality Guitar Learning System. Comput. Vis.–ECCV 2012 2013, 8022, 170–178.

19. Chao-Fernandez, R.; Román-García, S.; Chao-Fernandez, A. Analysis of the use of ICT through Music Interactive Games as Educational Strategy. Procedia Soc. Behav. Sci. 2017, 237, 576–580.

20. Kerdvibulvech, C.; Saito, H. Vision-Based Guitarist Fingering Tracking Using a Bayesian Classifier and Particle Filters. In Advances in Image and Video Technology; Springer: Berlin/Heidelberg, Germany, 2007; pp. 625–638.

21. Liarokapis, F. Augmented reality scenarios for guitar learning. In Proceedings of the Theory and Practice of Computer Graphics 2005 (TPCG 2005), Eurographics UK Chapter Proceedings, Canterbury, UK, 14 March 2005; pp. 163–170.

22. Pedgley, O.; Norman, E.; Armstrong, R. Materials-inspired innovation for acoustic guitar design. METU J. Fac. Archit. 2009, 29, 157–174.

23. Havre, S.J.; Väkevä, L.; Christophersen, C.R.; Haugland, E. Playing to learn or learning to play? Playing Rocksmith to learn electric guitar and bass in Nordic music teacher education. Br. J. Music Educ. 2019, 36, 21–32.

24. Hakim, N.L.; Sun, S.W.; Hsu, M.H.; Shih, T.K.; Wu, S.J. Virtual guitar: Using real-time finger tracking for musical instruments. Int. J. Comput. Sci. Eng. 2019, 18, 438–450.

25. Graham, K.; Schofield, D. Rock god or game guru: Using Rocksmith to learn to play a guitar. J. Music Technol. Educ. 2018, 11, 65–82.

26. Martins, V.F.; Gomes, L.; de Guimarães, M. Challenges and possibilities of use of augmented reality in education case study in music education. In Lecture Notes in Computer Science, Proceedings of the Computational Science and Its Applications (ICCSA 2015), Banff, Canada, 22–25 June 2015; Gervasi, O., Murgante, B., Misra, S., Gavrilova, M.L., Rocha, A.M.A.C., Torre, C.M., Taniar, D., Apduhan, B.O., Eds.; Springer: Cham, Switzerland, 2015; Volume 9159, pp. 223–233.

27. Kerdvibulvech, C.; Saito, H. Guitarist Fingertip Tracking by Integrating a Bayesian Classifier into Particle Filters. Adv. Hum.-Comput. Interact. 2008, 2008, 1–10.

28. Hackl, D.; Anthes, C. HoloKeys: An augmented reality application for learning the piano. In Proceedings of the 10th Forum Media Technology and 3rd All Around Audio Symposium, St. Pölten, Austria, 29–30 November 2017; pp. 140–144.

29. Rogers, K.; Röhlig, A.; Weing, M.; Gugenheimer, J.; Könings, B.; Klepsch, M.; Schaub, F.; Rukzio, E.; Seufert, T.; Weber, M. PIANO: Faster Piano Learning with Interactive Projection. In Proceedings of the Ninth ACM International Conference on Interactive Tabletops and Surfaces (ITS '14), Dresden, Germany, 16–19 November 2014; pp. 149–158.

30. Akbari, M.; Liang, J.; Cheng, H. A real-time system for online learning-based visual transcription of piano music. Multimed. Tools Appl. 2018, 77, 25513–25535.

31. Turchet, L.; Fischione, C.; Essl, G.; Keller, D.; Barthet, M. Internet of Musical Things: Vision and Challenges. IEEE Access 2018, 6, 61994–62017.

32. Tan, K.L.; Lim, C.K. Development of traditional musical instruments using augmented reality (AR) through mobile learning. AIP Conf. Proc. 2018, 2016, 020140.

33. Patzer, B.; Smith, D.C.; Keebler, J.R. Novelty and Retention for Two Augmented Reality Learning Systems. Proc. Hum. Factors Ergon. Soc. Annu. Meet. 2014, 58, 1164–1168.

34. Del Rio Guerra, M.; Martín-Gutiérrez, J.; Vargas-Lizárraga, R.; Garza-Bernal, I. Determining which touch gestures are commonly used when visualizing physics problems in augmented reality. In Lecture Notes in Computer Science, Proceedings of the Virtual, Augmented and Mixed Reality (VAMR 2018), Las Vegas, NV, USA, 15–20 July 2018; Chen, J., Fragomeni, G., Eds.; Springer: Cham, Switzerland, 2018; Volume 10909, pp. 3–12.

35. Motokawa, Y.; Saito, H. Support system for guitar playing using augmented reality display. In Proceedings of the 2006 IEEE/ACM International Symposium on Mixed and Augmented Reality, Santa Barbara, CA, USA, 22–25 October 2006; pp. 243–244.

36. Guerrero-Turrubiates, J.D.J.; Ledesma, S.; Gonzalez-Reyna, S.E.; Avina-Cervantes, G.; Ilunga-Mbuyamba, E.; Avina-Cervantes, J.G. Guitar audio signal classification by collapsed Pitch Class Profile. In Proceedings of the 2016 IEEE International Autumn Meeting on Power, Electronics and Computing (ROPEC), Ixtapa, Mexico, 9–11 November 2016; pp. 1–6.

37. Van Solingen, R.; Basili, V.; Caldiera, G.; Rombach, H.D. Goal Question Metric (GQM) Approach. In Encyclopedia of Software Engineering; John Wiley & Sons, Inc.: Hoboken, NJ, USA, 2002.

38. Kashiwagi, Y.; Ochi, Y. A Study of Left Fingering Detection Using CNN for Guitar Learning. In Proceedings of the 2018 International Conference on Intelligent Autonomous Systems (ICoIAS), Singapore, 1–3 March 2018; pp. 14–17.

39. Silla, C.N.; Przybisz, A.L.; Rivolli, A.; Gimenez, T.; Barroso, C.; Machado, J. Girls, music and computer science. In Proceedings of the 2018 IEEE Frontiers in Education Conference (FIE), San Jose, CA, USA, 3–6 October 2018; pp. 1–6.

40. Kurtz-Costes, B.; Copping, K.E.; Rowley, S.J.; Kinlaw, C.R. Gender and age differences in awareness and endorsement of gender stereotypes about academic abilities. Eur. J. Psychol. Educ. 2014, 29, 603–618.

41. Vogel, S.A. Gender Differences in Intelligence, Language, Visual-Motor Abilities, and Academic Achievement in Students with Learning Disabilities. J. Learn. Disabil. 1990, 23, 44–52.

42. Nielsen, J. Estimating the number of subjects needed for a thinking aloud test. Int. J. Hum. Comput. Stud. 1994, 41, 385–397.

43. Hwang, W.; Salvendy, G. Number of people required for usability evaluation. Commun. ACM 2010, 53, 130–133.

44. Thomas, N. Usability Geek. How to Use the System Usability Scale (SUS) to Evaluate the Usability. Available online: https://usabilitygeek.com/how-to-use-the-system-usability-scale-sus-to-evaluate-the-usability-of-your-website/ (accessed on 28 September 2019).

45. Bangor, A.; Kortum, P.; Miller, J. Determining What Individual SUS Scores Mean: Adding an Adjective Rating Scale. J. Usabil. Stud. 2009, 4, 114–123.

46. da Silva, M.M.O.; Teixeira, J.M.X.N.; Cavalcante, P.S.; Teichrieb, V. Perspectives on how to evaluate augmented reality technology tools for education: A systematic review. J. Braz. Comput. Soc. 2019, 25, 3.

47. Kularbphettong, K.; Roonrakwit, P.; Chutrtong, J. Effectiveness of enhancing classroom by using augmented reality technology. In Advances in Intelligent Systems and Computing, Proceedings of the International Conference on Human Factors in Training, Education, and Learning Sciences, Orlando, FL, USA, 21–25 July 2018; Nazir, S., Teperi, A.M., Polak-Sopinska, A., Eds.; Springer: Cham, Switzerland, 2019; Volume 785, pp. 125–133.

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).