An Overview of Lidar Imaging Systems for Autonomous Vehicles

Abstract

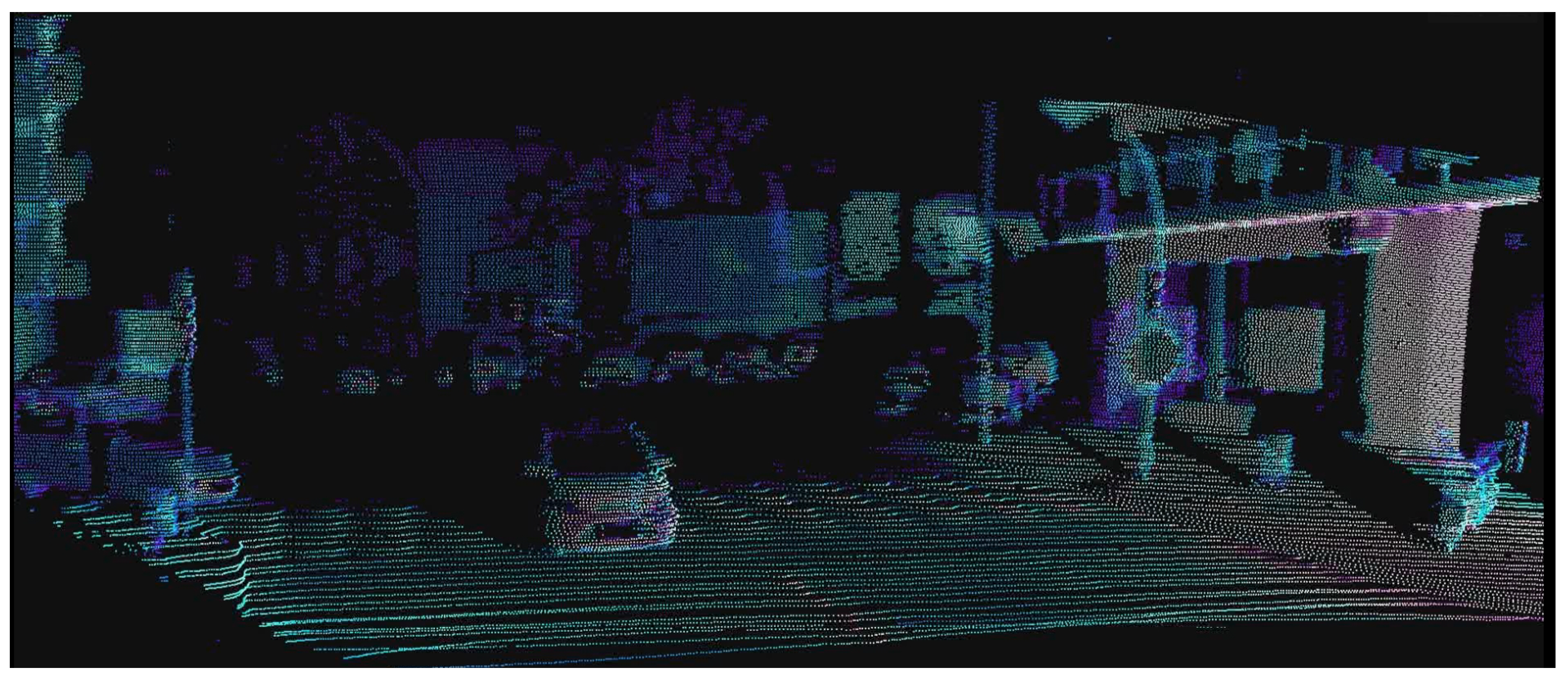

1. Introduction

2. Basics of Lidar Imaging

2.1. Measurement Principles

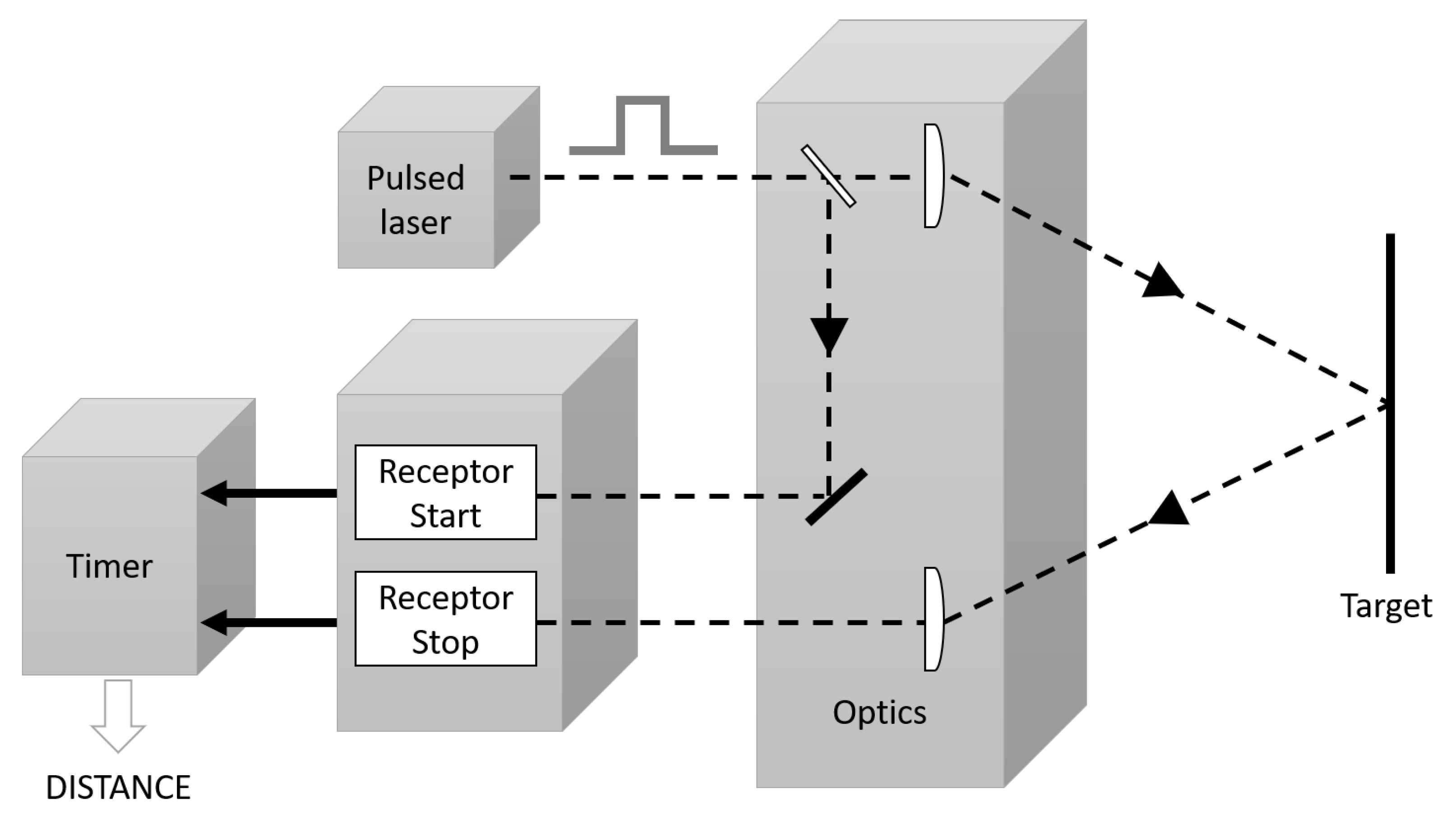

2.1.1. Pulsed Approach

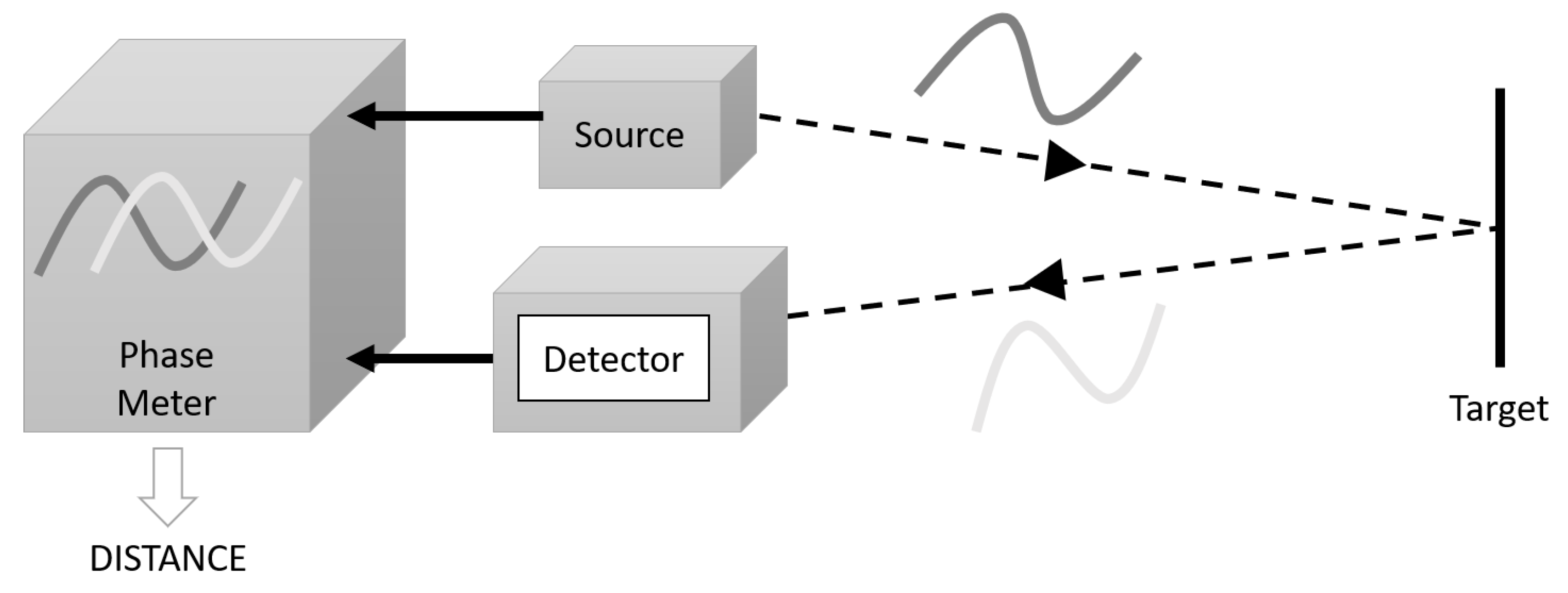

2.1.2. Continuous Wave Amplitude Modulated (AMCW) Approach

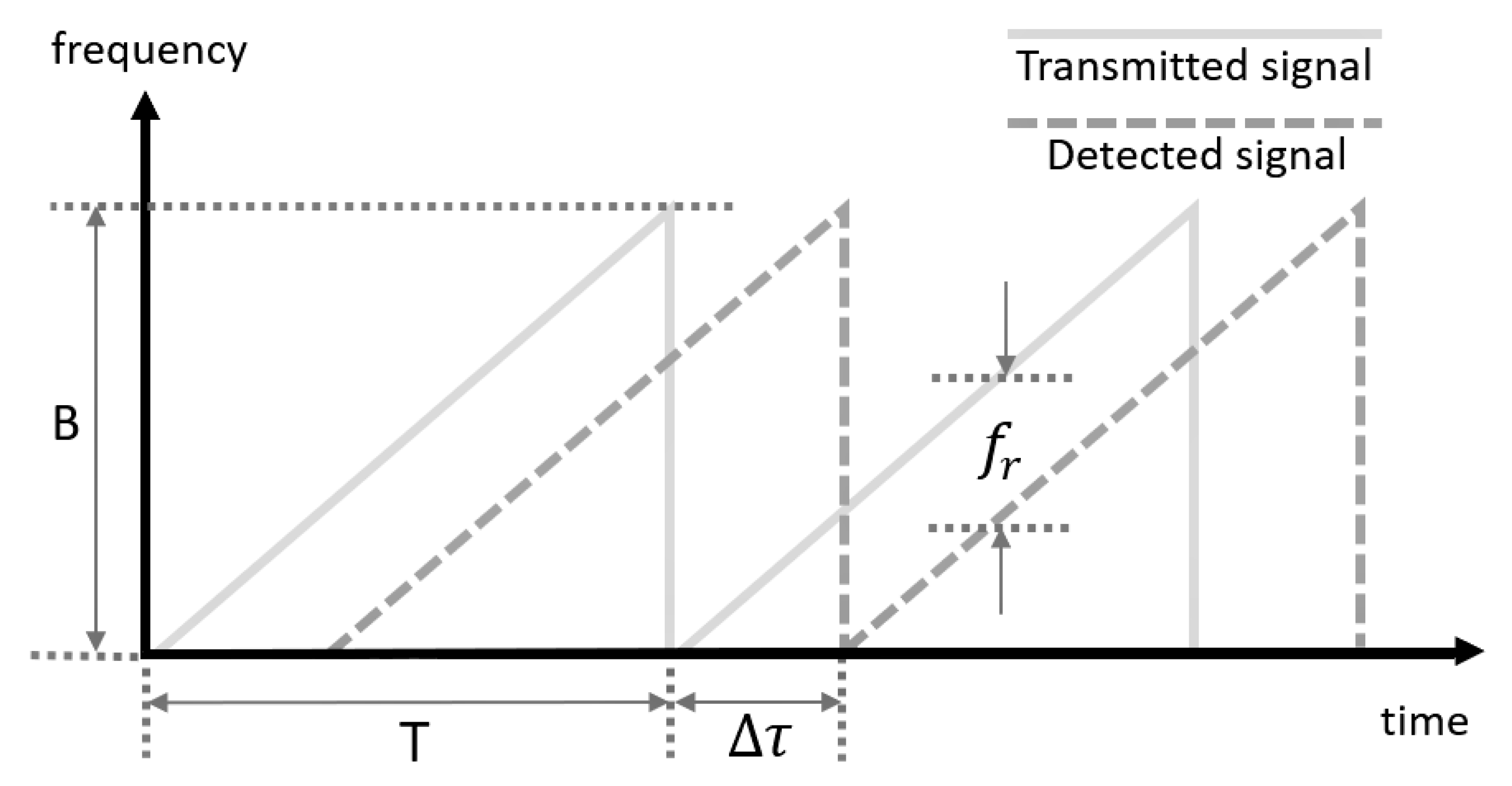

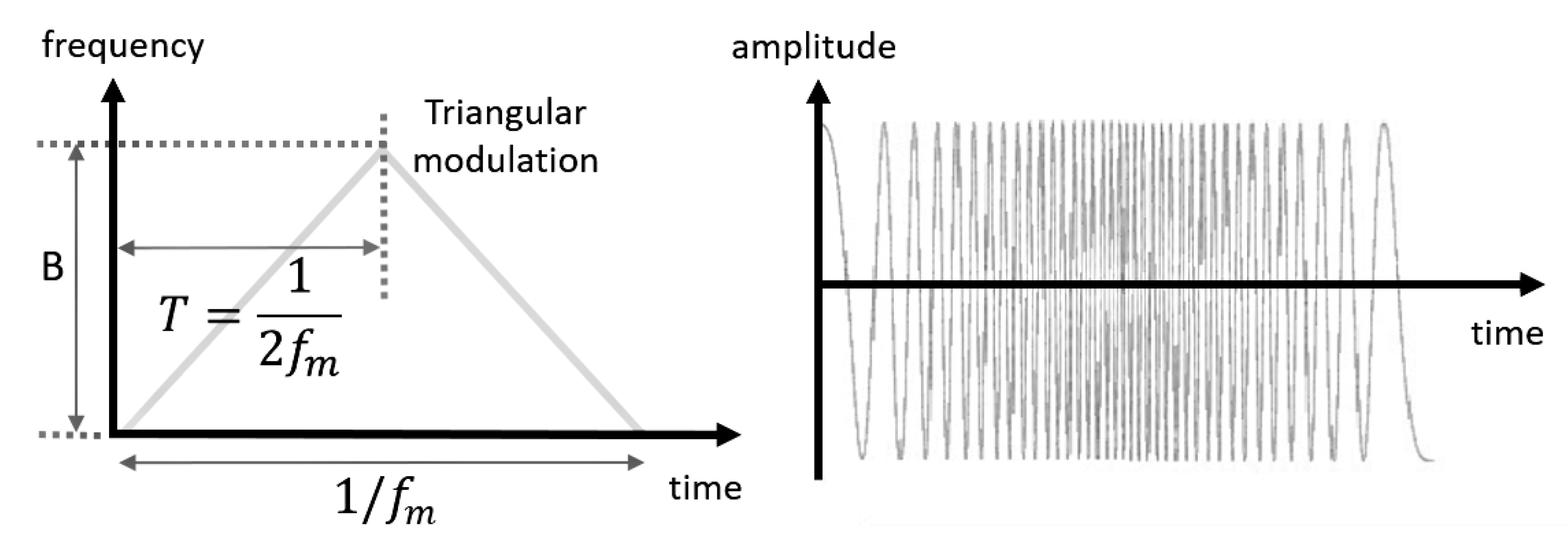

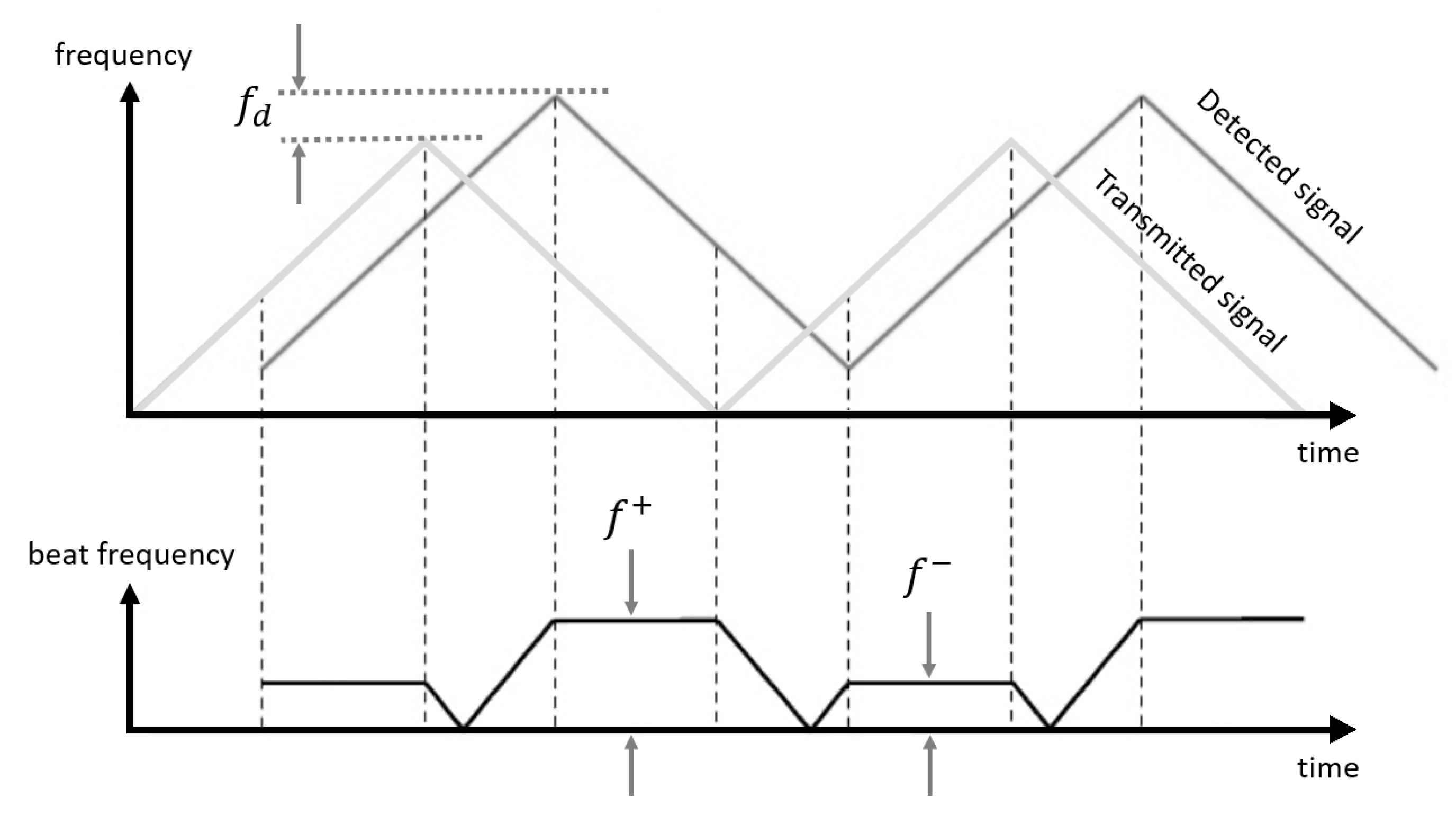

2.1.3. Continuous Wave Frequency Modulated (FMCW) Approach

2.1.4. Summary

2.2. Imaging Strategies

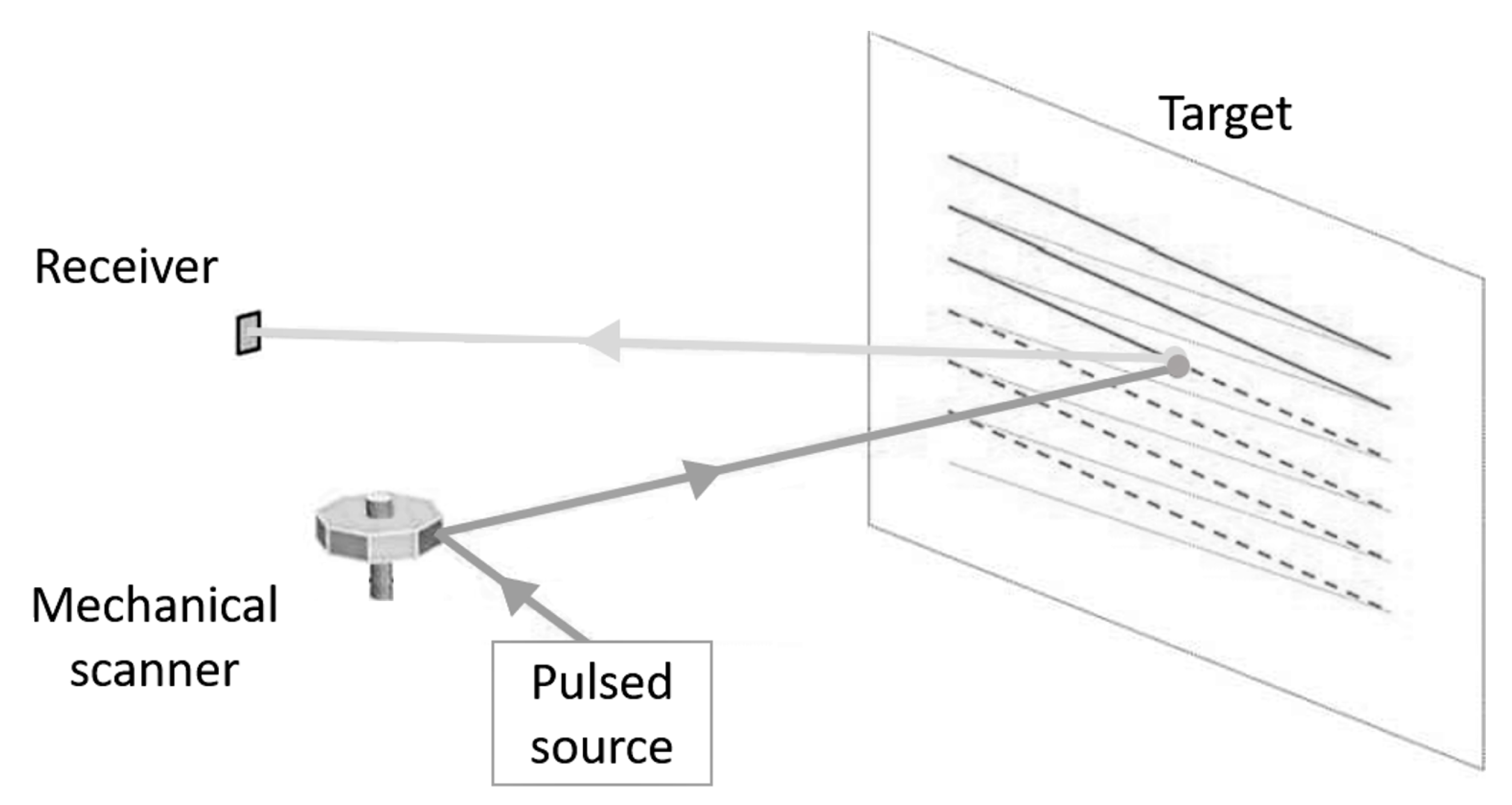

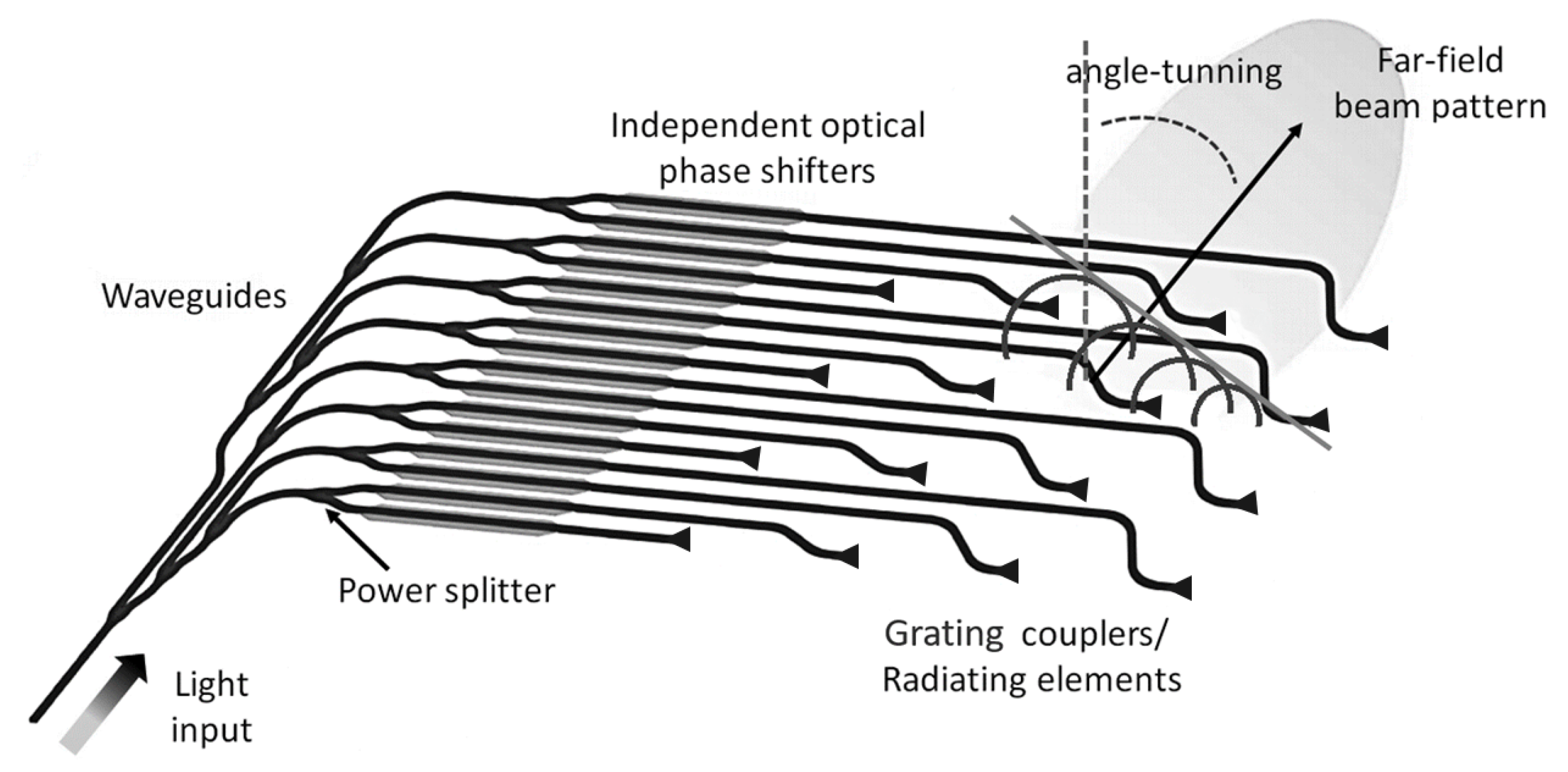

2.2.1. Scanners

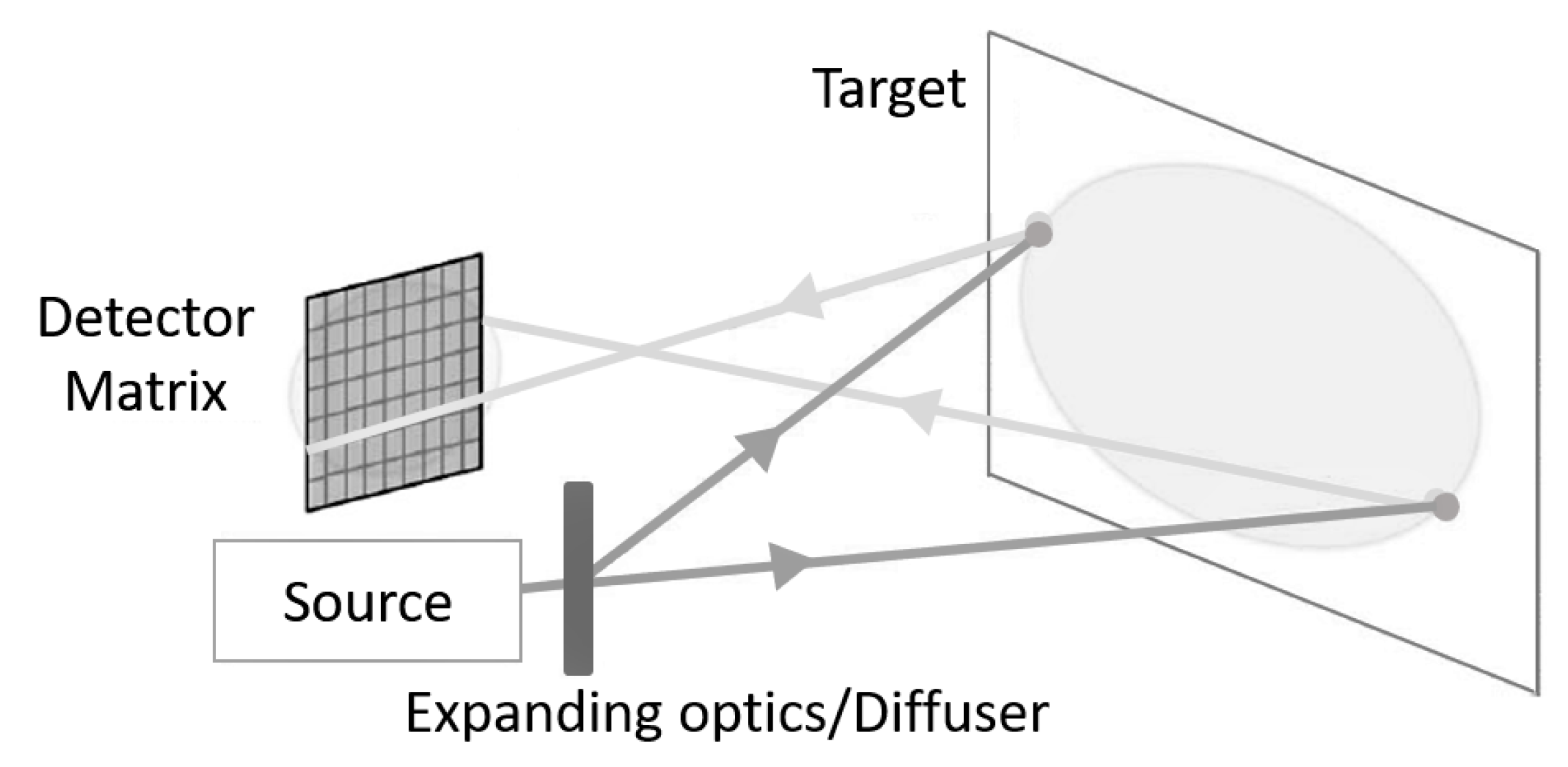

2.2.2. Detector Arrays

2.2.3. Mixed Approaches

2.2.4. Summary

3. Sources and Detectors for Lidar Imaging Systems in Autonomous Vehicles

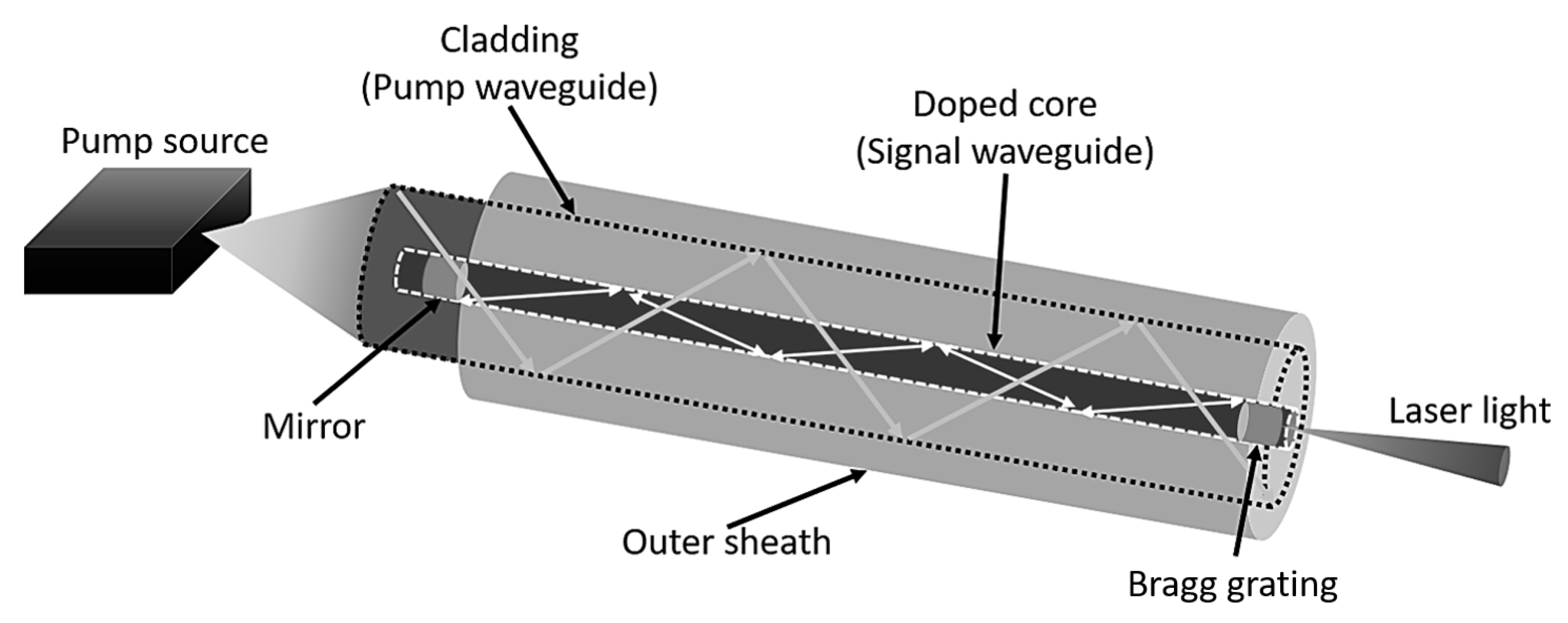

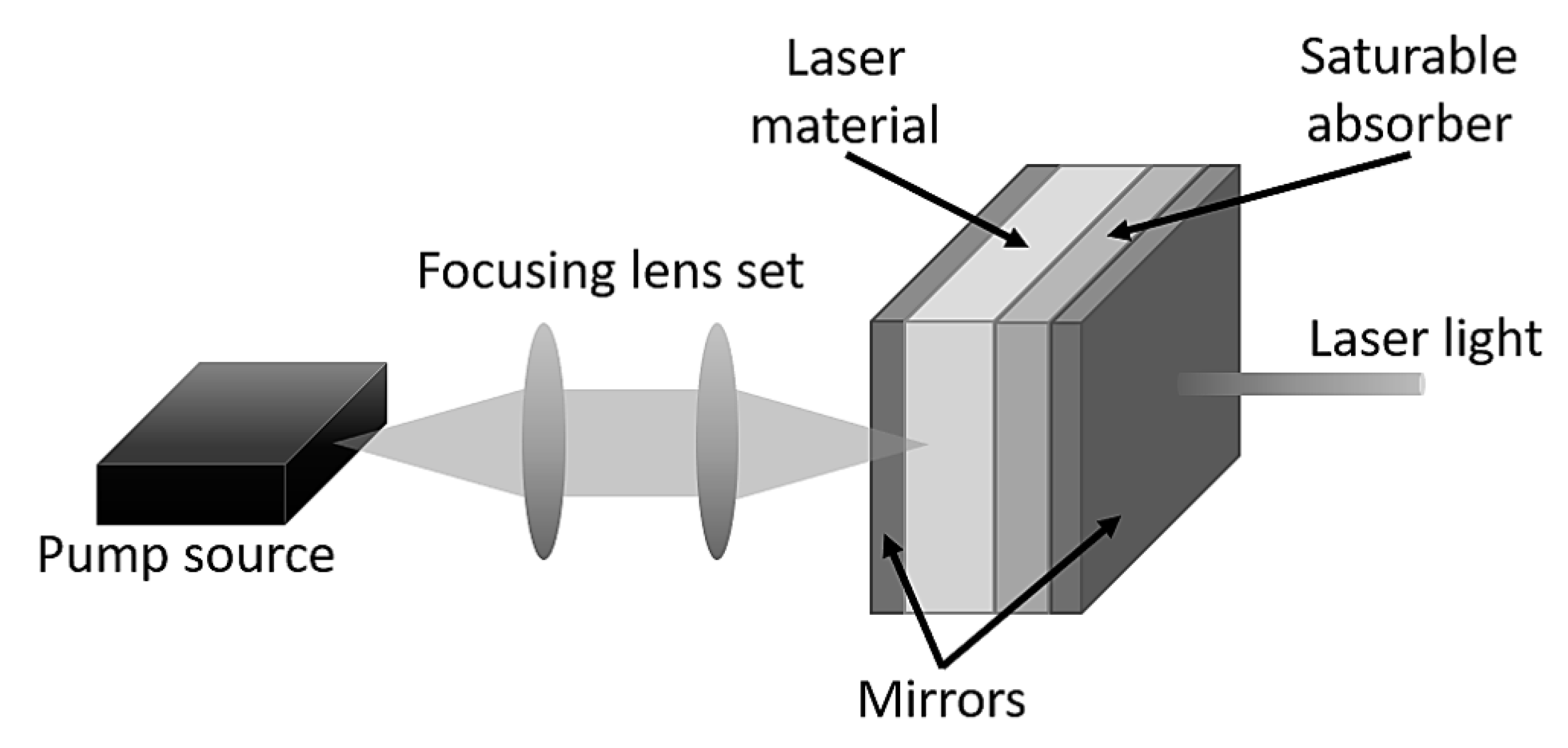

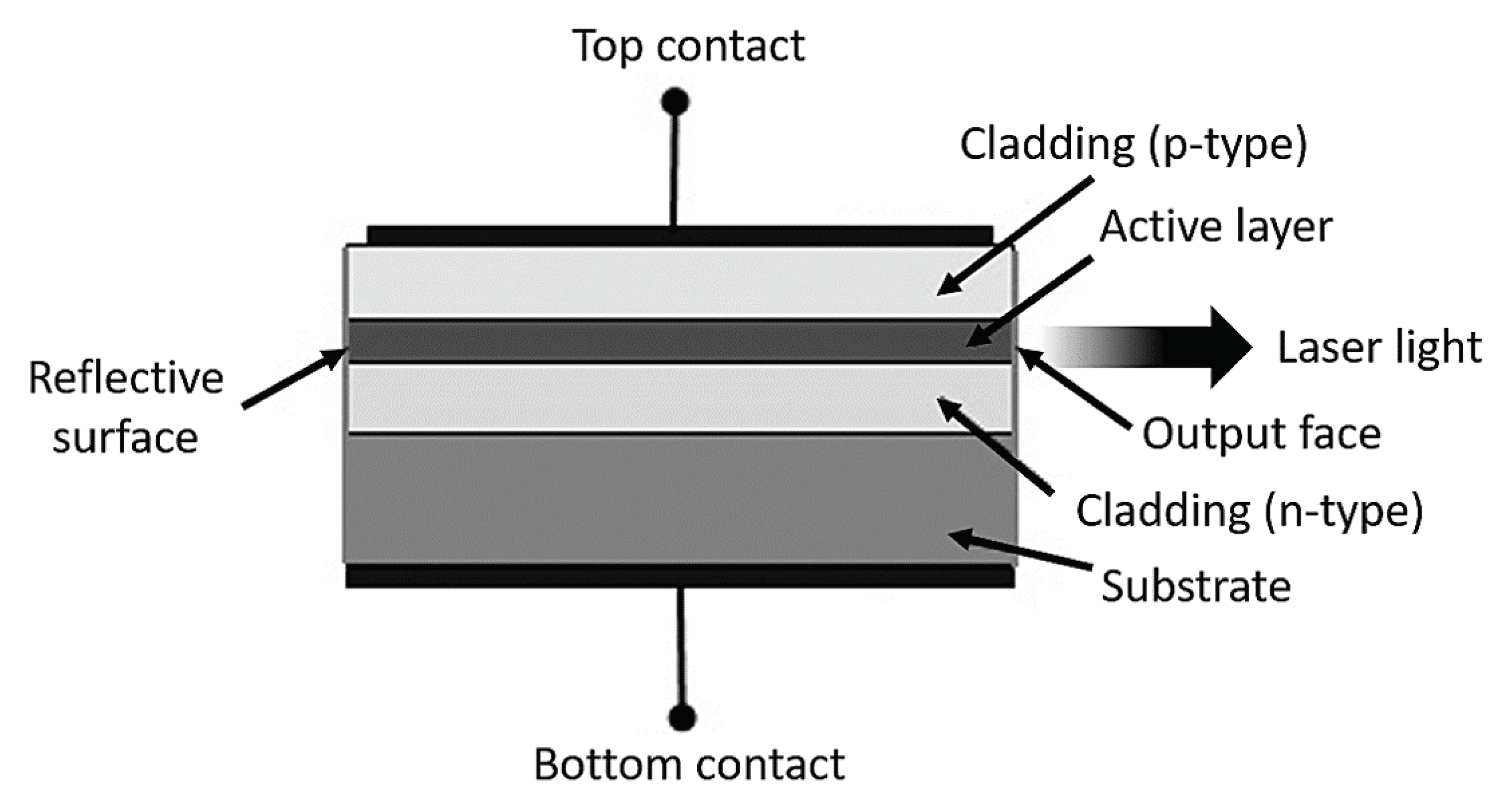

3.1. Sources

Sources in Lidar Imaging Systems

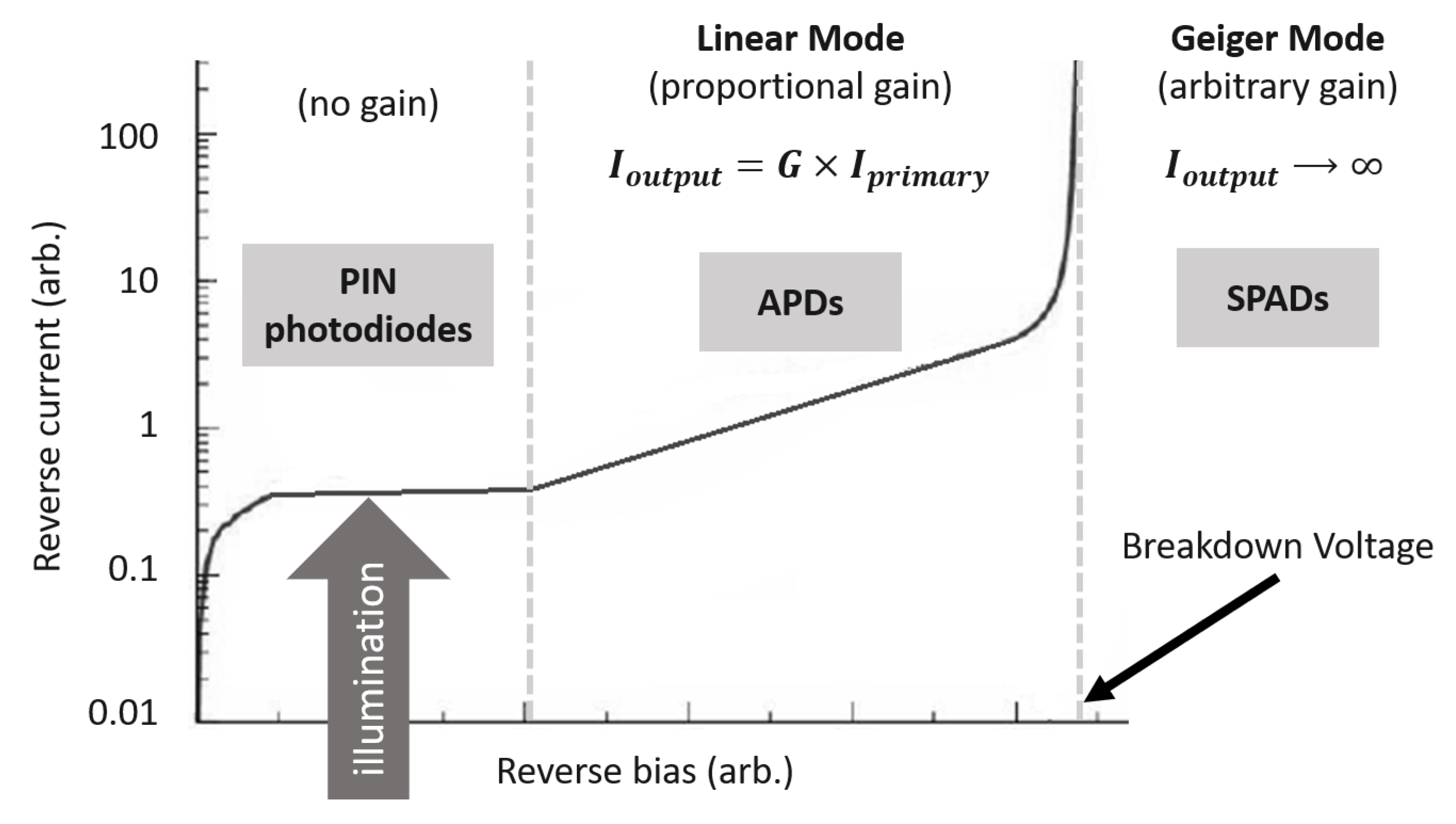

3.2. Photodetectors

3.2.1. Gain and Noise

3.2.2. Photodetectors in Lidar Imaging Systems

4. Pending Issues

4.1. Spatial Resolution

4.2. Sensor Fusion and Data Management

4.3. Sensor Distribution

4.4. Bad Weather Conditions

4.5. Mutual Interference

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| | Pulsed | AMCW | FMCW |
|---|---|---|---|
| Parameter measured | Intensity of emitted and received pulse | Phase of modulated amplitude | Relative beat of modulated frequency, and Doppler shift |
| Measurement | Direct | Indirect | Indirect |
| Detection | Incoherent | Incoherent | Coherent |
| Use | Indoor/Outdoor | Only indoor | Indoor/Outdoor |
| Main advantage | Simplicity of setup; long ambiguity range | Established commercially | Simultaneous speed and range measures |
| Main limitation | Low SNR of returned pulse | Short ambiguity distance | Coherence length/Stability in operating conditions (e.g., thermal) |
| Depth resolution (typ) | 1 cm | 1 cm | 0.1 cm |
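
The three measurement principles compared in the table above can be illustrated with their textbook range equations. The following is a minimal sketch and not taken from the article: the function names, the linear-chirp model for FMCW, and the static-target assumption (no Doppler shift) are all assumptions made for illustration.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def pulsed_range(round_trip_time_s: float) -> float:
    """Pulsed (direct) ToF: range from the measured round-trip time."""
    return C * round_trip_time_s / 2.0


def amcw_range(phase_shift_rad: float, mod_freq_hz: float) -> float:
    """AMCW (indirect): range from the phase shift of the amplitude modulation."""
    return C * phase_shift_rad / (4.0 * math.pi * mod_freq_hz)


def amcw_ambiguity_range(mod_freq_hz: float) -> float:
    """Largest unambiguous AMCW range: the phase wraps after 2*pi."""
    return C / (2.0 * mod_freq_hz)


def fmcw_range(beat_freq_hz: float, chirp_bandwidth_hz: float,
               chirp_duration_s: float) -> float:
    """FMCW with a linear chirp and a static target (assumed: no Doppler term)."""
    return C * beat_freq_hz * chirp_duration_s / (2.0 * chirp_bandwidth_hz)


if __name__ == "__main__":
    # Illustrative numbers only; they are not values from the article.
    print(f"Pulsed, 1 us round trip: {pulsed_range(1e-6):.2f} m")
    print(f"AMCW ambiguity at 10 MHz: {amcw_ambiguity_range(10e6):.2f} m")
    print(f"AMCW, phase pi at 10 MHz: {amcw_range(math.pi, 10e6):.2f} m")
```

Under these assumptions, the short ambiguity distance listed for AMCW follows directly from the modulation frequency: raising it improves phase sensitivity but shortens the unambiguous range.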

| | Mechanical Scanners | MEMS Scanners | OPAs | Flash | AMCWs |
|---|---|---|---|---|---|
| Working principle | Galvos, rotating mirrors or prisms | MEMS micromirror | Phased array of antennas | Pulsed flood illumination | Pixelated phase meters |
| Main advantage | 360° horizontal FOV | Compact and lightweight | Fully solid state | Fast frame rate | Commercial |
| Main disadvantage | Moving elements, bulky | Laser power management, linearity | Lab-only for long-range | Limited range/Blindable | Only indoor |

| | Fibre Laser | Microchip Laser | Diode Laser |
|---|---|---|---|
| Amplifying media | Doped optical fibre | Semiconductor crystal | Semiconductor PN junction |
| Peak power (typ) | >10 kW | >1 kW | 0.1 kW |
| PRR | <1 MHz | <1 MHz | ≈100 kHz |
| Pulse width | <5 ns | <5 ns | 100 ns |
| Main advantage | Pulse peak power, PRR, beam quality. Beam delivery | Pulse peak power, PRR, beam quality | Cost, compact |
| Main disadvantage | Cost | Cost, beam delivery | Max output power and PRR. Beam quality |
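
To relate the peak power, pulse width, and PRR rows above, the sketch below estimates pulse energy and average optical power. It is only an illustration: it assumes rectangular pulses, and it treats the bounds in the table (for example ">10 kW" or "<5 ns") as if they were exact operating points, which they are not. The function names and the `SOURCES` dictionary are hypothetical.

```python
def pulse_energy_j(peak_power_w: float, pulse_width_s: float) -> float:
    """Energy per pulse, assuming a rectangular pulse shape."""
    return peak_power_w * pulse_width_s


def average_power_w(peak_power_w: float, pulse_width_s: float,
                    prr_hz: float) -> float:
    """Average optical power: energy per pulse times pulses per second."""
    return pulse_energy_j(peak_power_w, pulse_width_s) * prr_hz


# Illustrative operating points read off the table bounds (assumption:
# each bound is used as if it were the actual value).
SOURCES = {
    "Fibre laser": (10e3, 5e-9, 1e6),
    "Microchip laser": (1e3, 5e-9, 1e6),
    "Diode laser": (100.0, 100e-9, 100e3),
}

if __name__ == "__main__":
    for name, (peak_w, width_s, prr_hz) in SOURCES.items():
        energy_uj = pulse_energy_j(peak_w, width_s) * 1e6
        avg_w = average_power_w(peak_w, width_s, prr_hz)
        print(f"{name}: {energy_uj:.1f} uJ per pulse, {avg_w:.1f} W average")
```

Because the table gives limits rather than operating points, these figures are order-of-magnitude estimates at best.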

| | PIN | APDs | SPADs | MPPCs | PMTs |
|---|---|---|---|---|---|
| Solid state | Yes | Yes | Yes | Yes | No |
| Gain (typ) | 1 | Linear (≈200) | Geiger (10) | Geiger (10) | Avalanche (10) |
| Main advantage | Fast | Adjustable gain by bias | Single photon detection | Single photon counting | Gain, UV detection |
| Main disadvantage | Limited for low SNR | Limited gain | Recovery time | Saturable, bias voltage dependence | Bulky, low QE, high voltage, magnetic fields |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Royo, S.; Ballesta-Garcia, M. An Overview of Lidar Imaging Systems for Autonomous Vehicles. Appl. Sci. 2019, 9, 4093. https://doi.org/10.3390/app9194093
