Machine Learning-Based Dimension Optimization for Two-Stage Precoder in Massive MIMO Systems with Limited Feedback

Abstract

1. Introduction

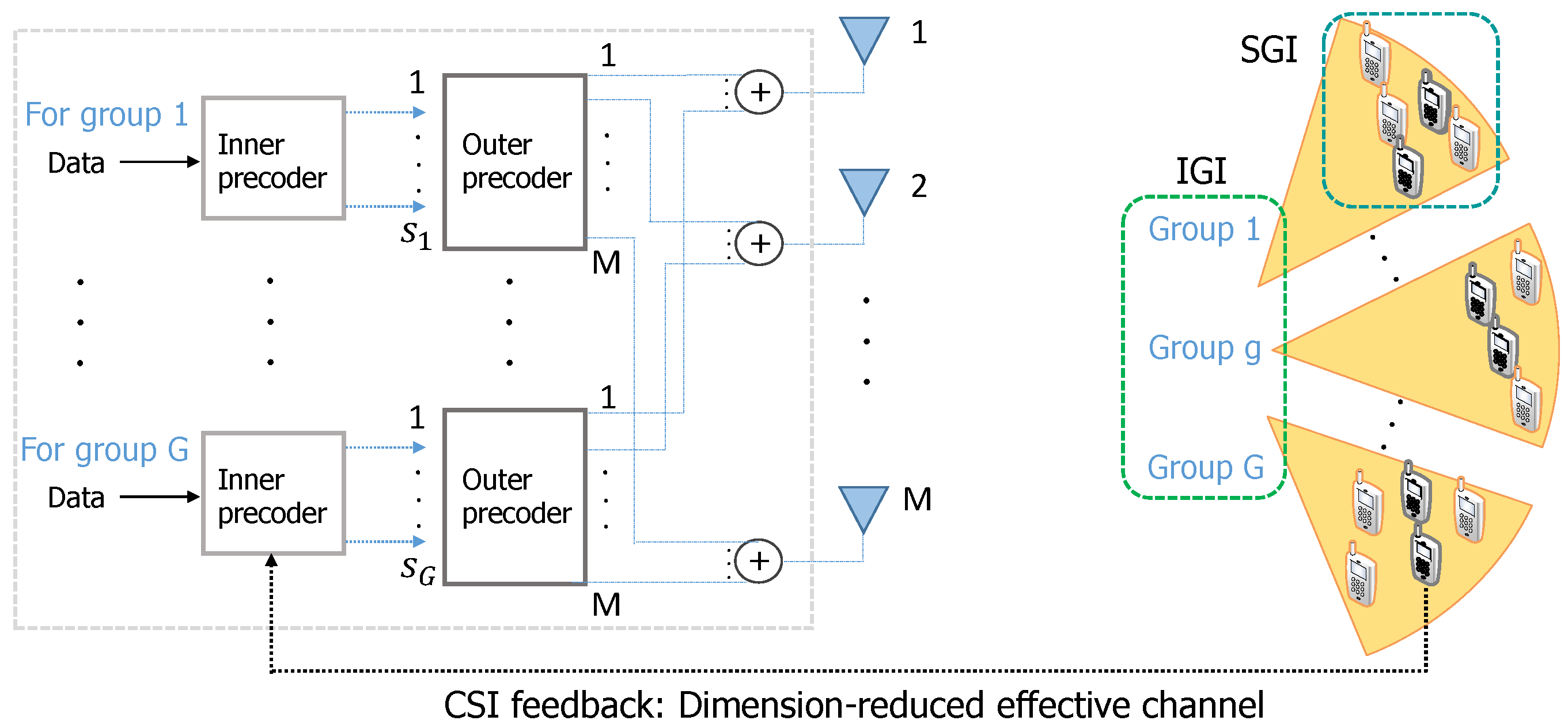

- We introduce our two-stage precoder design with limited feedback, where only the quantized channel direction information (CDI) of the dimension-reduced effective channel is fed back to the base station (BS). We first derive a lower bound on the average sum rate and then optimize the dimension of the outer precoder to maximize the average sum rate.

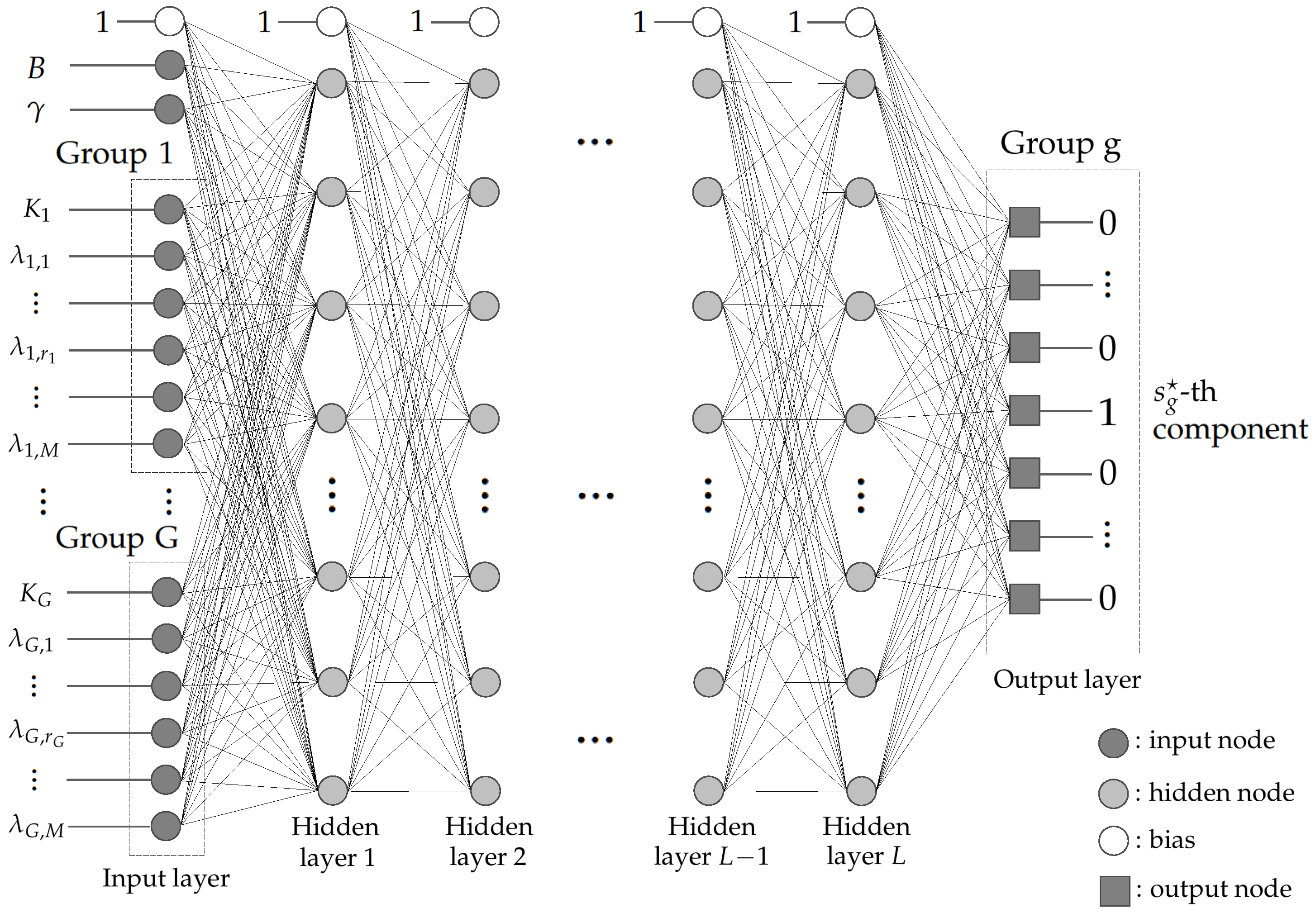

- We propose a machine learning framework for the dimension optimization based on a deep neural network (DNN); we specify the DNN architecture (the input, hidden, and output layers) as well as the training procedure. Our DNN takes the eigenvalues of the covariance matrices of the user groups as inputs and returns the structure of the outer precoder, which represents the dimensions allocated to all user groups.

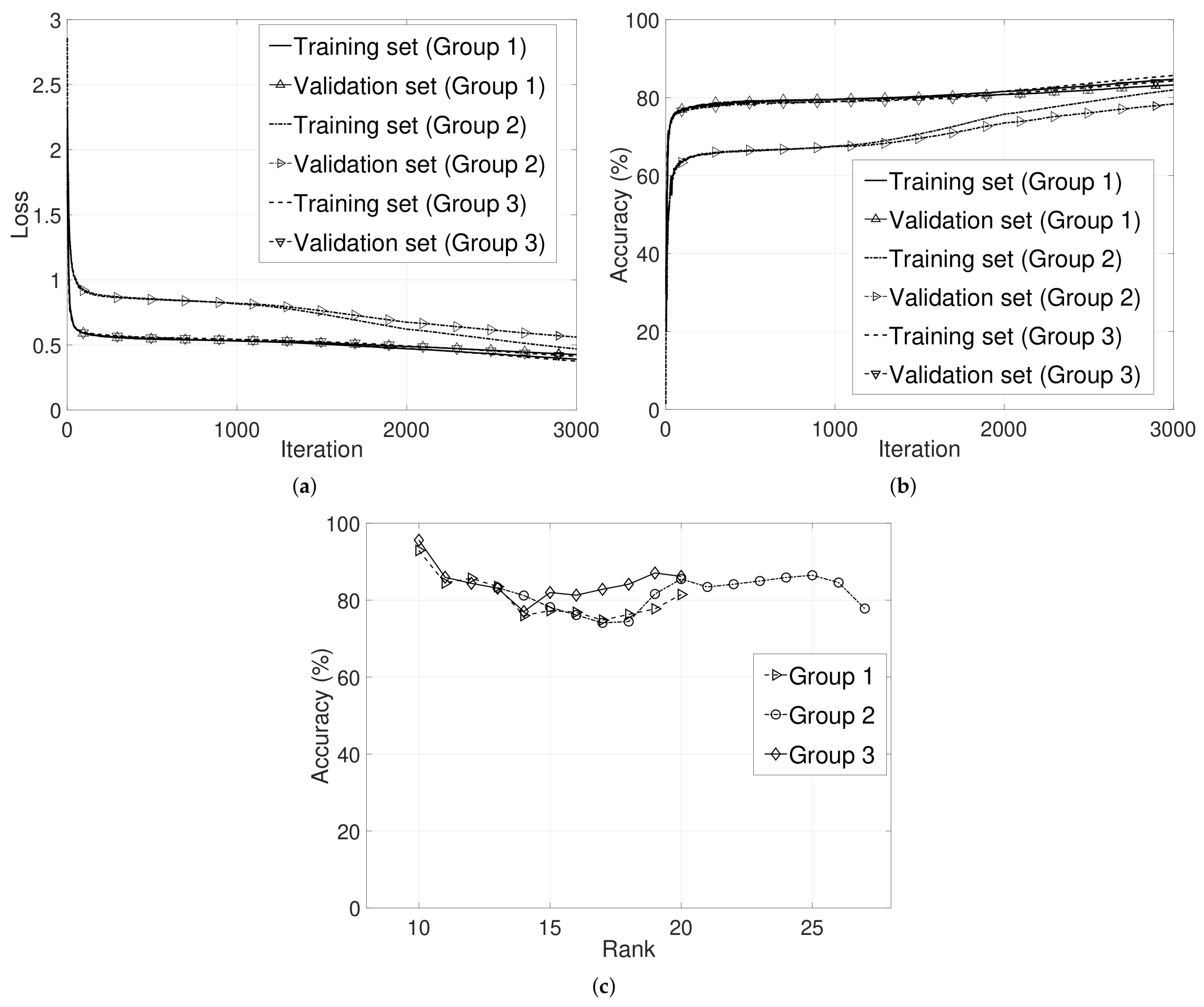

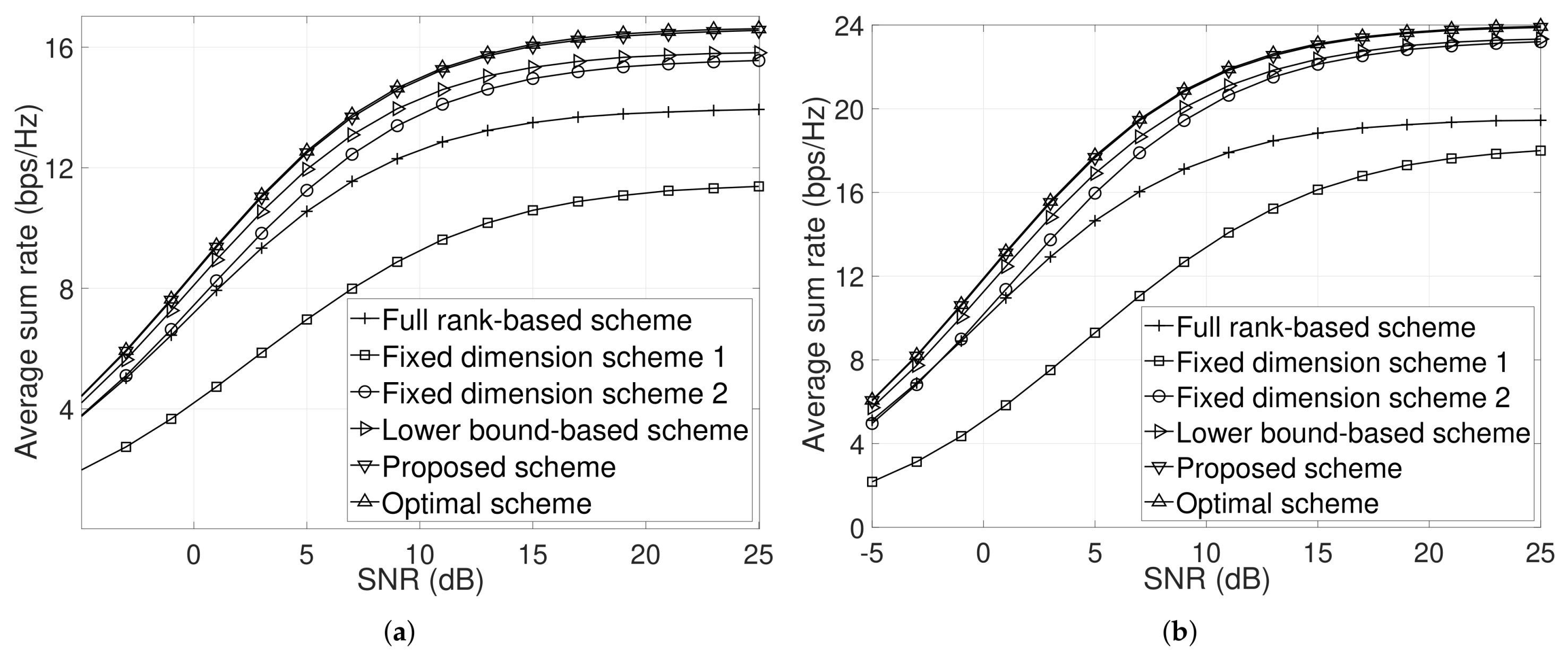

- We evaluate our DNN model and show that the proposed machine learning-based outer precoder dimension optimization improves the average sum rate and achieves near-optimal performance.
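As a rough illustration of the second contribution, the sketch below maps the covariance eigenvalues of the user groups to one dimension per group using a small feed-forward network. The layer sizes, the random (untrained) weights, the per-group softmax output, and the names `init_params` and `predict_dimensions` are assumptions made for illustration only; they are not taken from the paper, whose architecture and training procedure are covered in Section 5.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def softmax(z, axis=-1):
    # Subtract the row maximum for numerical stability.
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def init_params(n_in, n_hidden, n_out, rng):
    # Hypothetical single-hidden-layer network with small random weights.
    return {
        "W1": rng.standard_normal((n_in, n_hidden)) * 0.1,
        "b1": np.zeros(n_hidden),
        "W2": rng.standard_normal((n_hidden, n_out)) * 0.1,
        "b2": np.zeros(n_out),
    }

def predict_dimensions(eigvals, params, max_dim):
    """Map a (G, R) array of eigenvalues to one dimension in 1..max_dim per group."""
    num_groups = eigvals.shape[0]
    x = eigvals.reshape(-1)                      # stack all groups' eigenvalues
    h = relu(x @ params["W1"] + params["b1"])    # hidden layer
    logits = h @ params["W2"] + params["b2"]
    # Assumed output encoding: one softmax over candidate dimensions per group.
    probs = softmax(logits.reshape(num_groups, max_dim), axis=1)
    return probs.argmax(axis=1) + 1, probs

rng = np.random.default_rng(0)
G, R, max_dim = 3, 8, 8                          # illustrative sizes
eigvals = np.sort(rng.exponential(size=(G, R)), axis=1)[:, ::-1]
params = init_params(G * R, 32, G * max_dim, rng)
dims, probs = predict_dimensions(eigvals, params, max_dim)
print(dims)
```

With untrained weights the printed dimensions are arbitrary; the point is only the input and output shapes: `G * R` eigenvalues in, one dimension per user group out.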

2. System Model

3. Limited Feedback with Two-Stage Precoder

3.1. Two-Stage Precoder

3.2. Limited Feedback Method with a Two-Stage Precoder

3.3. Problem Formulation

4. Our Previous Work on Dimension Optimization

Algorithm 1. Finding the optimal solution of the problem

5. Machine Learning Framework for Dimension Optimization

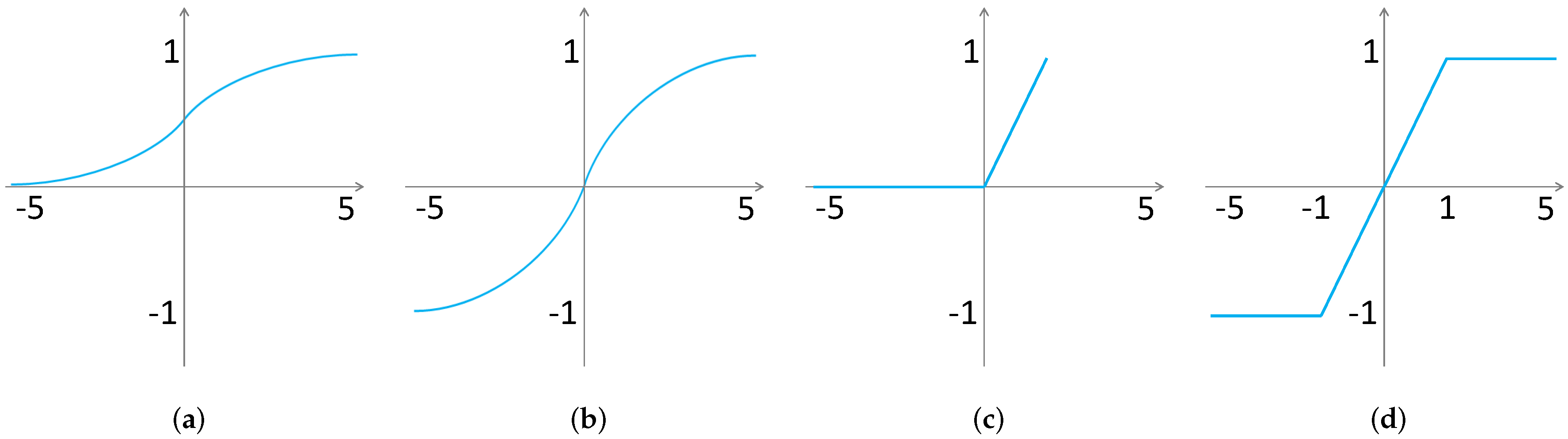

5.1. Preliminary: The General DNN Architecture

5.2. The Proposed DNN Framework

5.2.1. Input Layer

5.2.2. Output Layer

5.2.3. Hidden Layer

5.2.4. DNN Training

6. DNN Performance and Numerical Results

- Optimal scheme: The optimal dimensions of the outer precoder are obtained via brute-force numerical search.

- Full rank-based scheme: The dimension of each outer precoder is set to the rank of the corresponding covariance matrix.

- Lower bound-based scheme: The dimensions of the outer precoder are obtained by the lower bound-based analysis and the solution of problem .

- Fixed dimension scheme 1: The outer precoder dimensions are fixed to five.

- Fixed dimension scheme 2: The outer precoder dimensions are fixed to eight.
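The reference schemes listed above can be sketched as follows. The helper names, the rank tolerance, and the toy objective are hypothetical; in particular, `toy_objective` merely stands in for the average sum rate that would be evaluated for each candidate dimension tuple.

```python
import itertools
import numpy as np

def full_rank_dims(covs, tol=1e-9):
    # Full rank-based scheme: one dimension per group, equal to the
    # numerical rank of that group's covariance matrix.
    return [int(np.linalg.matrix_rank(c, tol=tol)) for c in covs]

def fixed_dims(num_groups, d):
    # Fixed dimension scheme: the same dimension for every group.
    return [d] * num_groups

def brute_force_dims(max_dims, objective):
    # Optimal scheme: exhaustive search over every dimension tuple
    # (d_1, ..., d_G) with 1 <= d_g <= max_dims[g].
    candidates = itertools.product(*(range(1, m + 1) for m in max_dims))
    return list(max(candidates, key=objective))

# Toy setup (assumption): two groups with rank-2 and rank-4 covariances,
# built as orthogonal projections so that the ranks are exact.
rng = np.random.default_rng(0)
q, _ = np.linalg.qr(rng.standard_normal((6, 6)))
covs = [q[:, :2] @ q[:, :2].T, q[:, :4] @ q[:, :4].T]

def toy_objective(dims, target=(2, 3)):
    # Placeholder for the average sum rate, peaked at `target`.
    return -sum((d - t) ** 2 for d, t in zip(dims, target))

ranks = full_rank_dims(covs)
print(ranks)                                   # [2, 4]
print(fixed_dims(len(covs), 5))                # [5, 5]
print(brute_force_dims(ranks, toy_objective))  # [2, 3]
```

The exhaustive search visits the product of all per-group ranges, so its cost grows exponentially with the number of user groups.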

7. Conclusions

Author Contributions

Funding

Conflicts of Interest

| Name | Sigmoid | tanh | ReLU | SSaLU | Softmax |
|---|---|---|---|---|---|

| Name | Mean Square Error | Cross Entropy |
|---|---|---|
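For reference, the standard textbook definitions of the functions named in the two table headers are given below. SSaLU is omitted because its definition does not survive in this text. The symbols $x$, $z_i$, $y_i$, $\hat{y}_i$, and $N$ are notation chosen here, not taken from the paper.

```latex
\begin{align*}
\text{Sigmoid:}\quad & \sigma(x) = \frac{1}{1 + e^{-x}} \\
\text{tanh:}\quad & \tanh(x) = \frac{e^{x} - e^{-x}}{e^{x} + e^{-x}} \\
\text{ReLU:}\quad & \operatorname{ReLU}(x) = \max(0, x) \\
\text{Softmax:}\quad & \operatorname{softmax}(\mathbf{z})_i = \frac{e^{z_i}}{\sum_{j} e^{z_j}} \\
\text{Mean square error:}\quad & L_{\mathrm{MSE}} = \frac{1}{N} \sum_{i=1}^{N} \left( y_i - \hat{y}_i \right)^2 \\
\text{Cross entropy:}\quad & L_{\mathrm{CE}} = -\sum_{i=1}^{N} y_i \log \hat{y}_i
\end{align*}
```

Here $y_i$ denotes a target value and $\hat{y}_i$ the corresponding network output; for the cross entropy, both are assumed to be probability vectors over $N$ classes.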

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Kang, J.; Lee, J.H.; Choi, W. Machine Learning-Based Dimension Optimization for Two-Stage Precoder in Massive MIMO Systems with Limited Feedback. Appl. Sci. 2019, 9, 2894. https://doi.org/10.3390/app9142894
