Usability Measures in Mobile-Based Augmented Reality Learning Applications: A Systematic Review

Abstract

1. Introduction

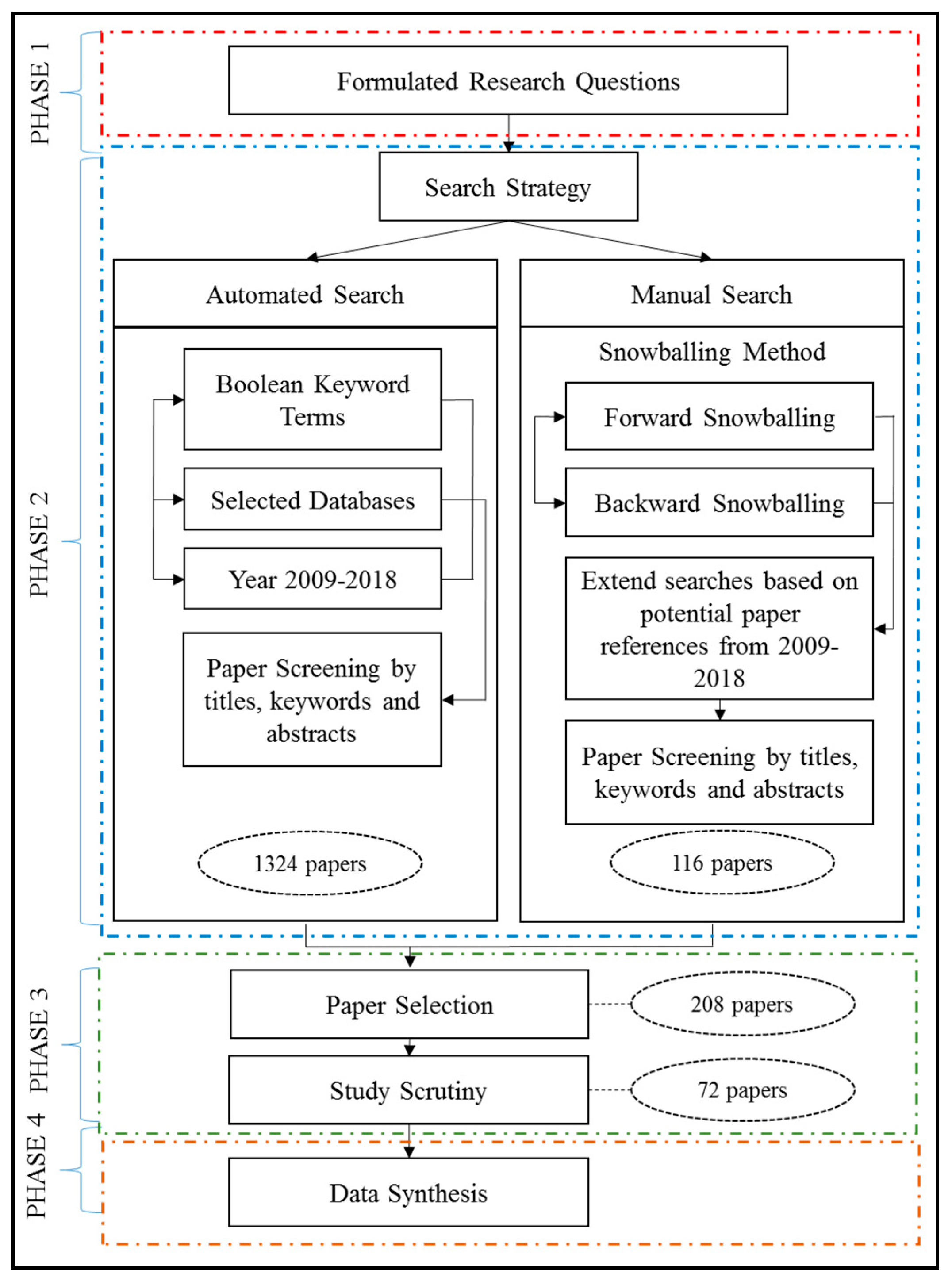

2. Research Method

2.1. Research Questions

2.2. Search Strategies

2.2.1. Automated Search

2.2.2. Manual Search

2.2.3. Literature Resources

- IEEE Xplore

- Web of Science

- ScienceDirect

- SpringerLink

- ACM Digital Library

- Google Scholar

2.2.4. Search Process

2.3. Study Selection

2.3.1. Study Scrutiny

2.4. Data Synthesis

3. Threats to Validity

- Construct validity was addressed by combining an automated search with a manual (snowballing) search from the very start of data collection, in order to mitigate foreseeable risks. To further limit this threat to validity, the main scrutiny steps and additional QAs were carried out to complement the existing RQs and the clear selection criteria.

- Internal validity was addressed by adopting the method used in [7]. To reduce bias in paper selection, an exhaustive search combining automated search and snowballing was carried out for a more inclusive selection. Every study was extracted from all major databases used in similar research areas [1,7,11] and then underwent strict selection protocols.

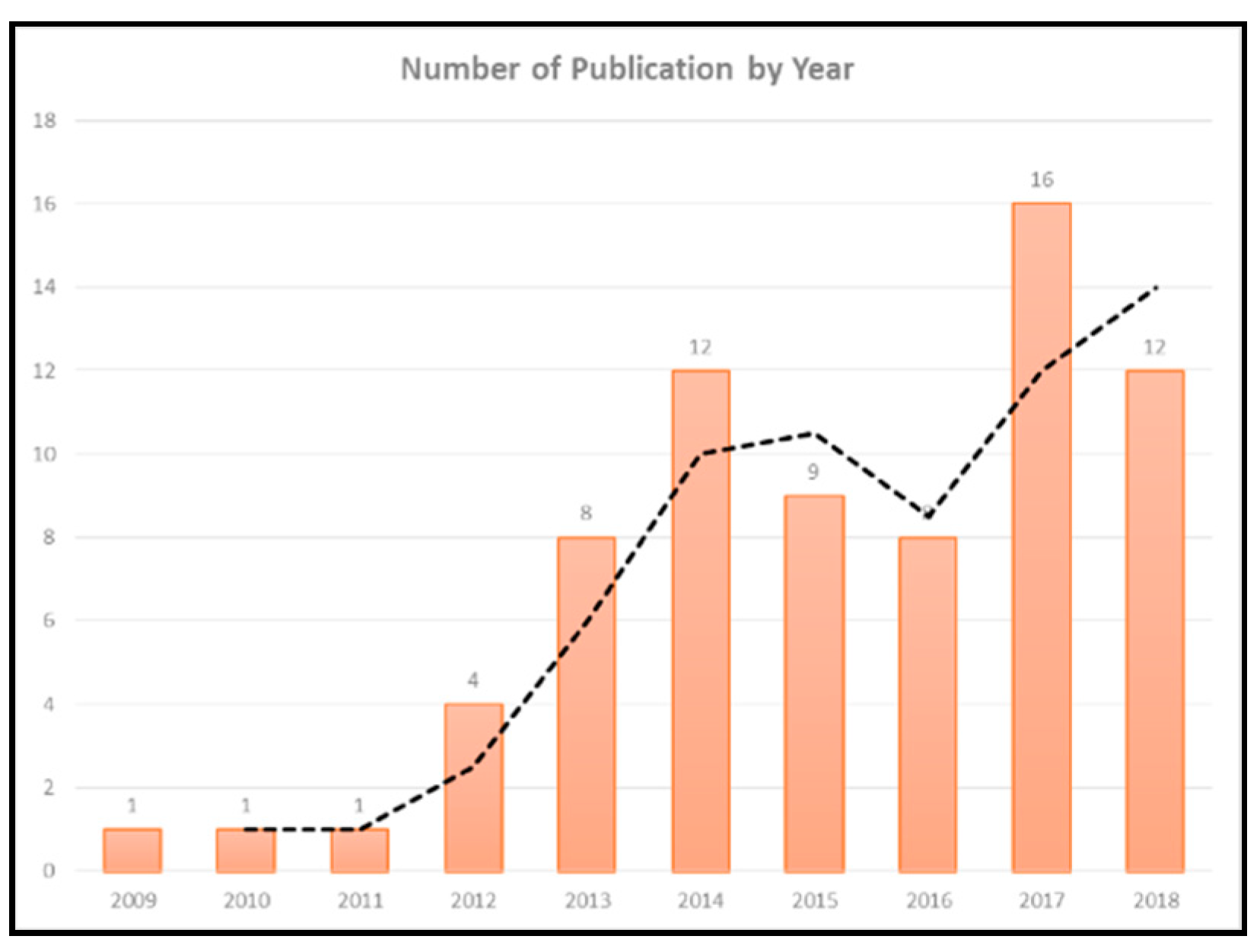

- External validity was addressed by covering a 10-year timeframe of MAR studies that include a usability evaluation, which supports the generalizability of the results. The number of papers collected per year ran parallel to the number of papers available per year, which may indicate that this SLR maintains a generalized report in line with the requirements of external validity.

- Conclusion validity was managed by following established, specific, and well-defined SLR guidelines from credible publications such as [8]. Each step of this SLR can therefore be replicated with measurable and near-identical outcomes.
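The combination of automated search and snowballing described in the bullets above can be pictured as a merge-and-filter step. The following is only a minimal sketch under stated assumptions, not the procedure the authors implemented: the `Study` record, the `normalize` and `merge_searches` helpers, and the year bounds are all hypothetical names and parameters introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Study:
    """Hypothetical record for one candidate paper."""
    title: str
    year: int
    source: str  # "automated" or "snowballing"


def normalize(title: str) -> str:
    """Lowercase and collapse whitespace so near-identical titles match."""
    return " ".join(title.lower().split())


def merge_searches(automated, snowballed, first_year, last_year):
    """Union both result sets, drop duplicates, keep studies in the timeframe.

    first_year and last_year are placeholder bounds for the review window;
    the actual window is an assumption of this sketch.
    """
    seen = set()
    merged = []
    for study in list(automated) + list(snowballed):
        key = normalize(study.title)
        if key in seen:
            continue  # already found through the other search route
        if not (first_year <= study.year <= last_year):
            continue  # outside the review window
        seen.add(key)
        merged.append(study)
    return merged
```

Calling `merge_searches(automated, snowballed, 2010, 2019)` with two lists of `Study` objects returns one de-duplicated list, which would then go through the manual scrutiny and selection criteria; the years shown are illustrative only.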

4. Results and Discussion

4.1. Detailed Information of Selected Studies

4.2. Domains, Research Types, and Contributions in Mobile Augmented Reality Based Usability Studies (RQ1)

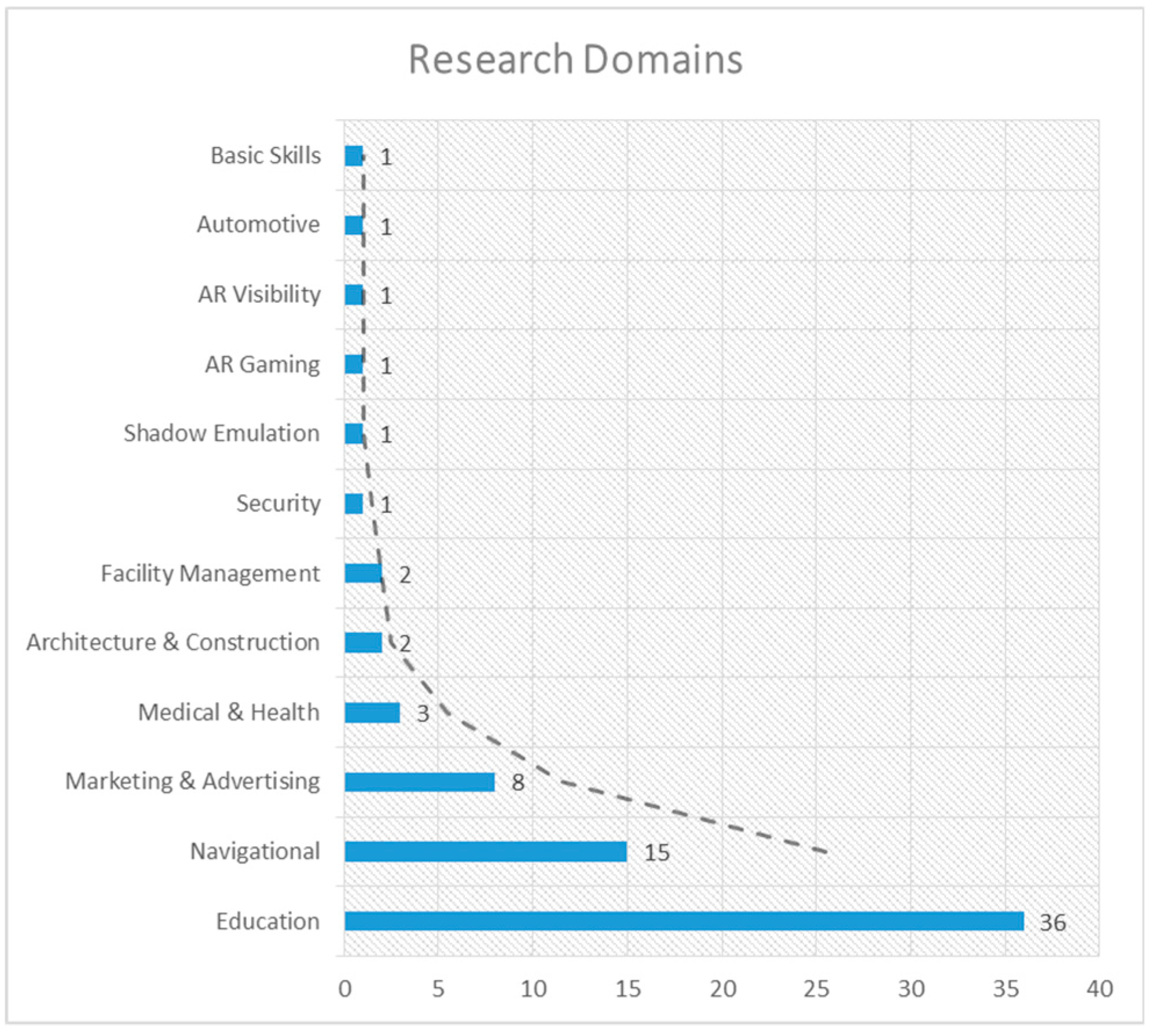

4.2.1. Research Domains

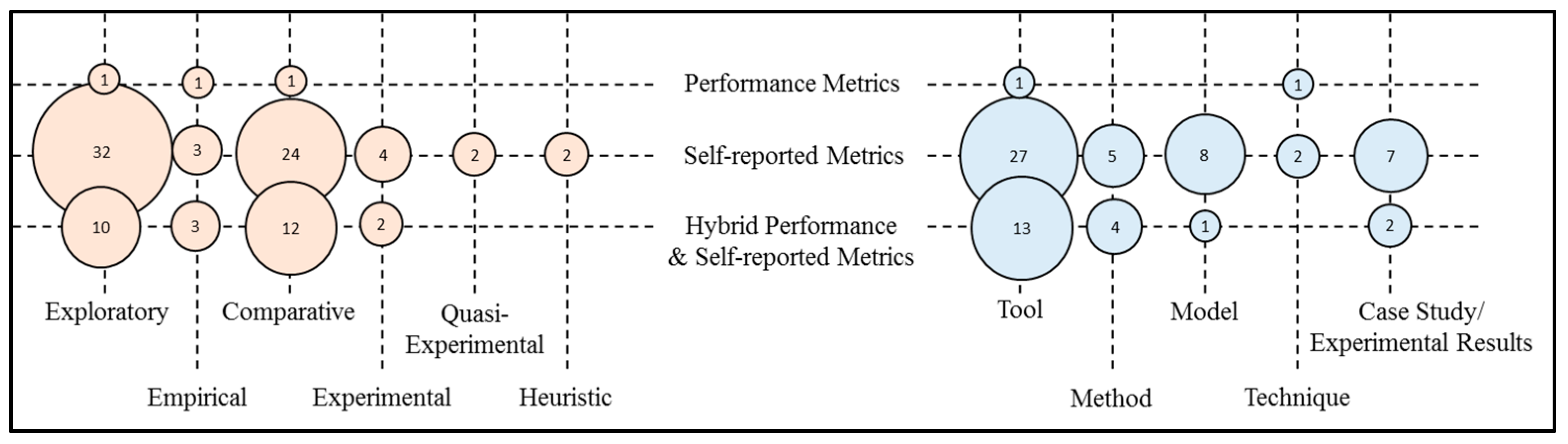

4.2.2. Research Types

4.2.3. Research Contributions

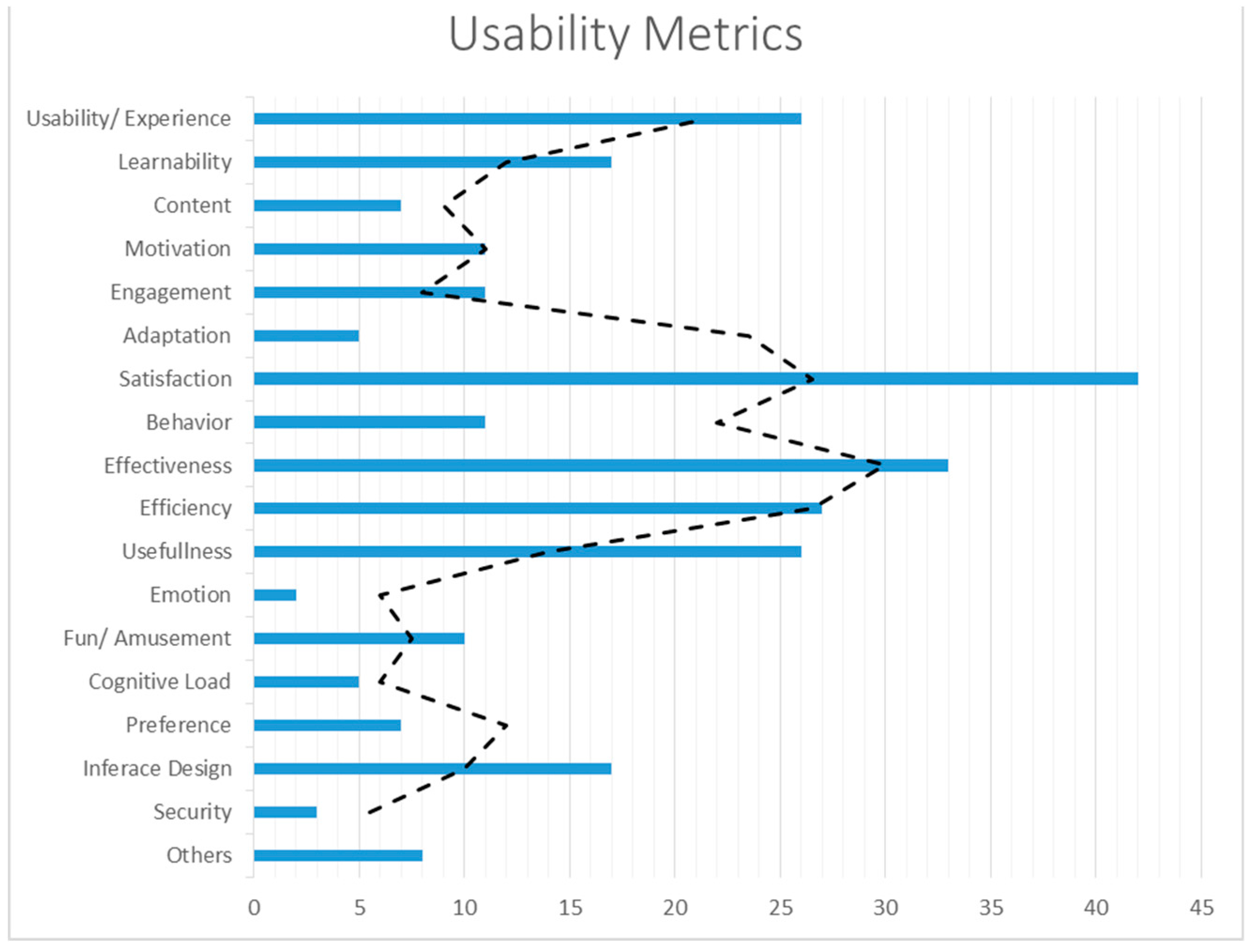

4.3. Usability Metrics (RQ2)

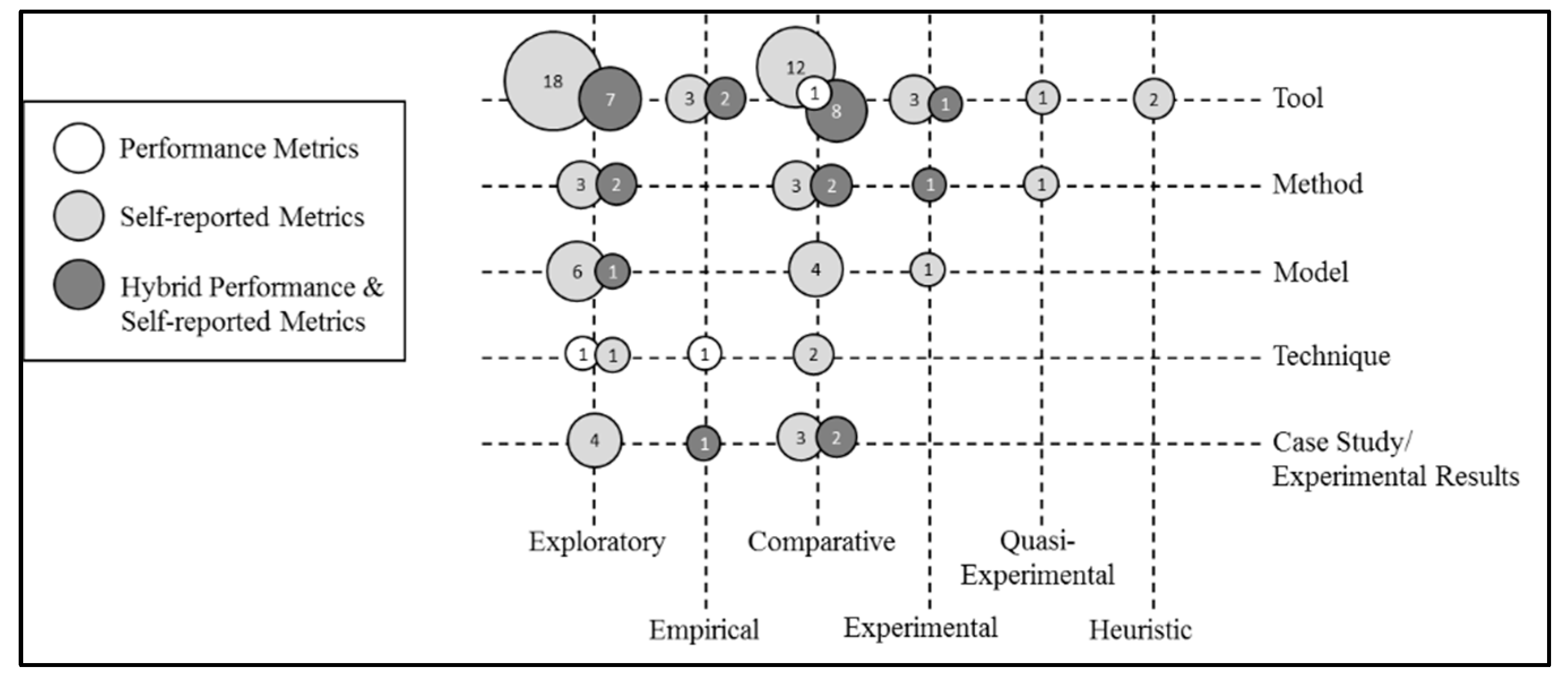

4.3.1. Performance vs. Self-Reported

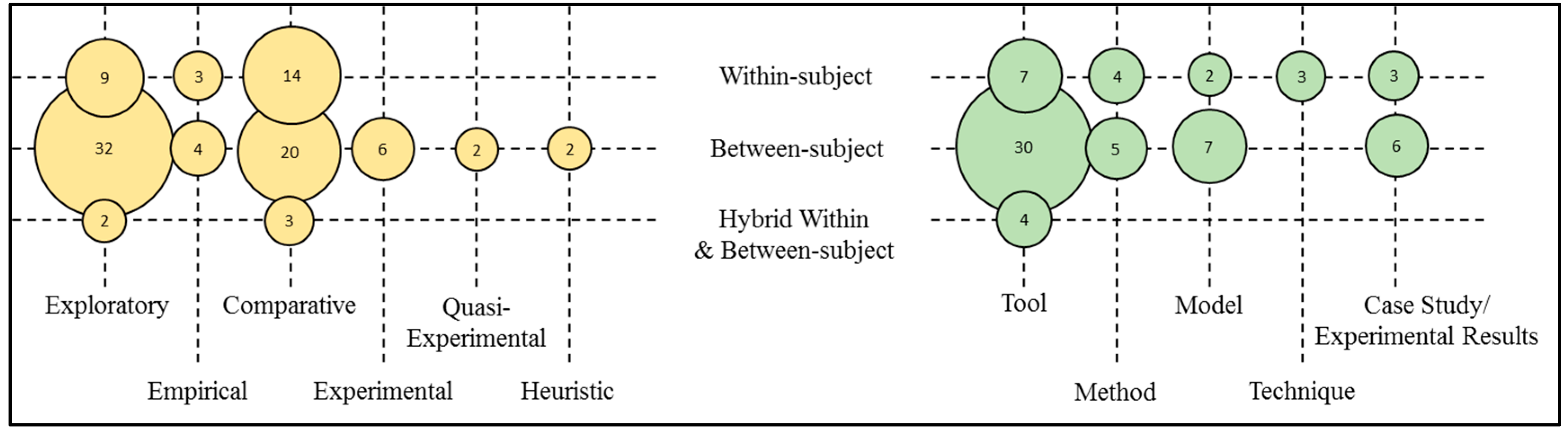

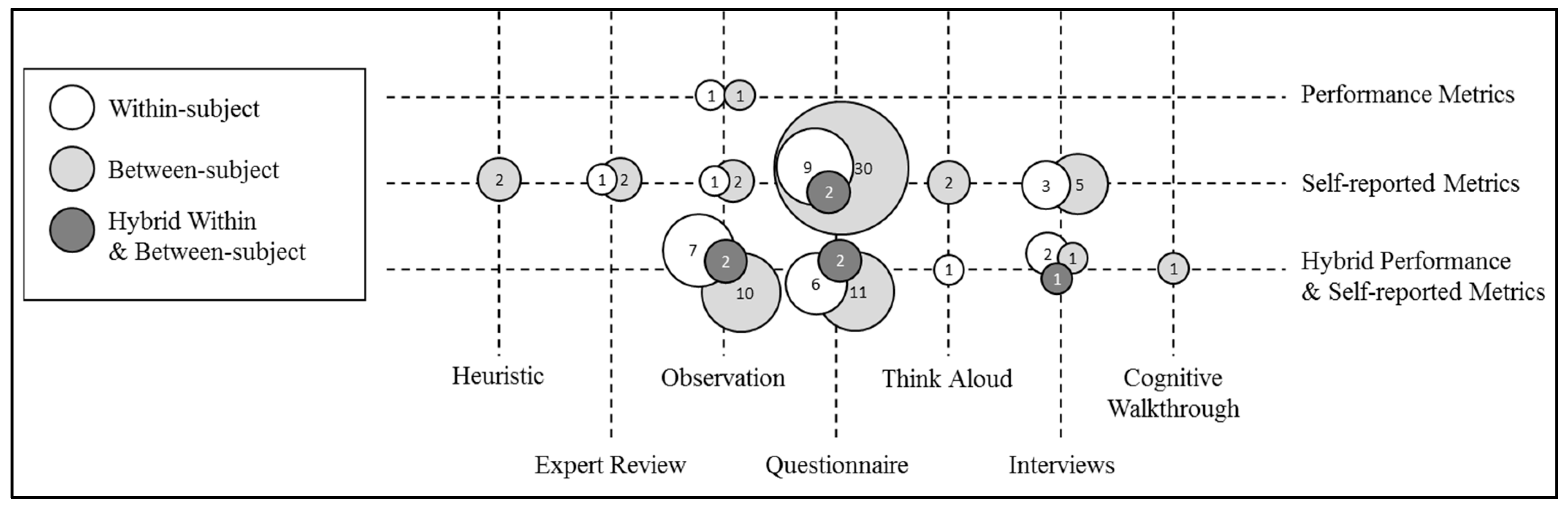

4.3.2. Within-Subjects vs. Between-Subjects

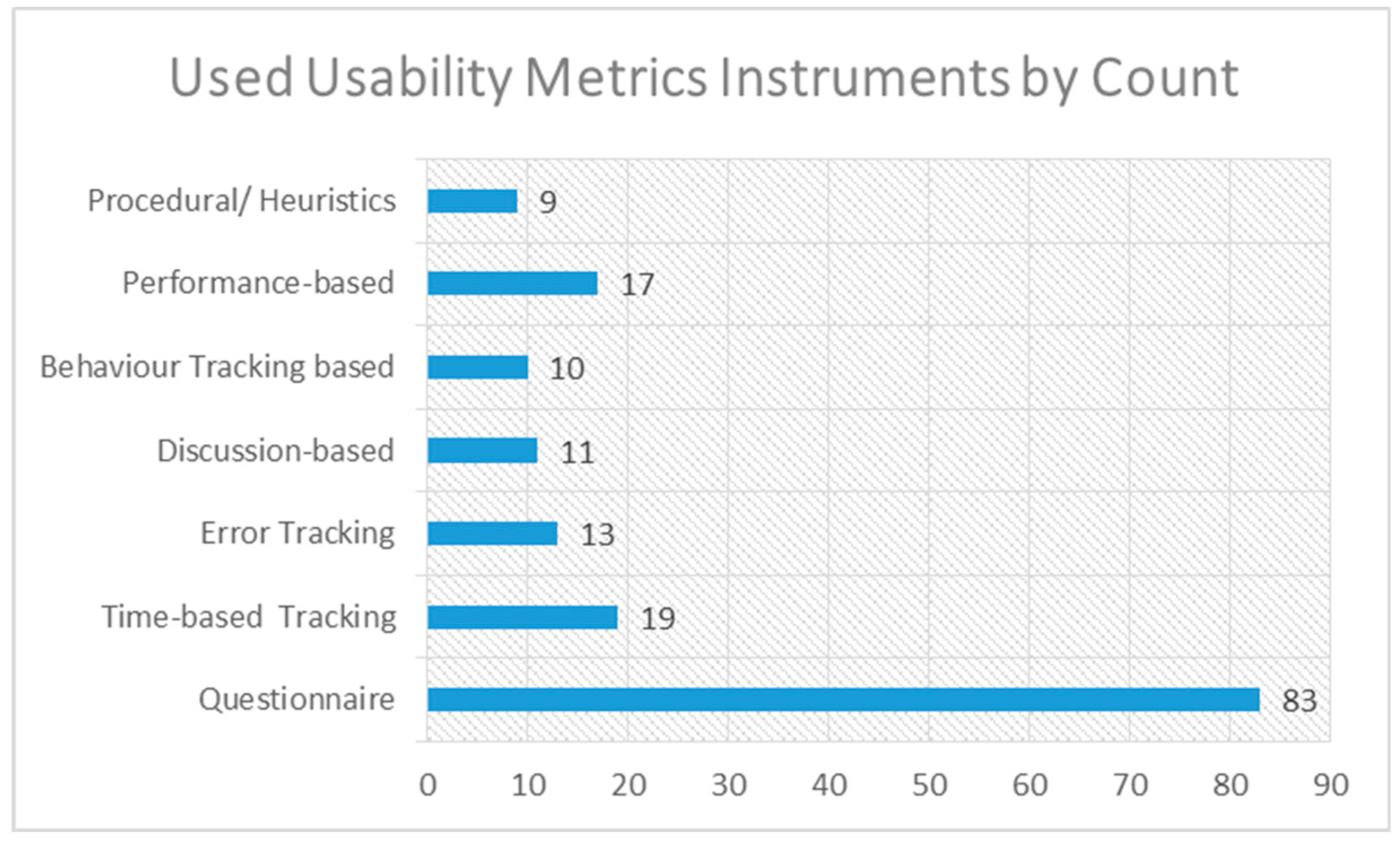

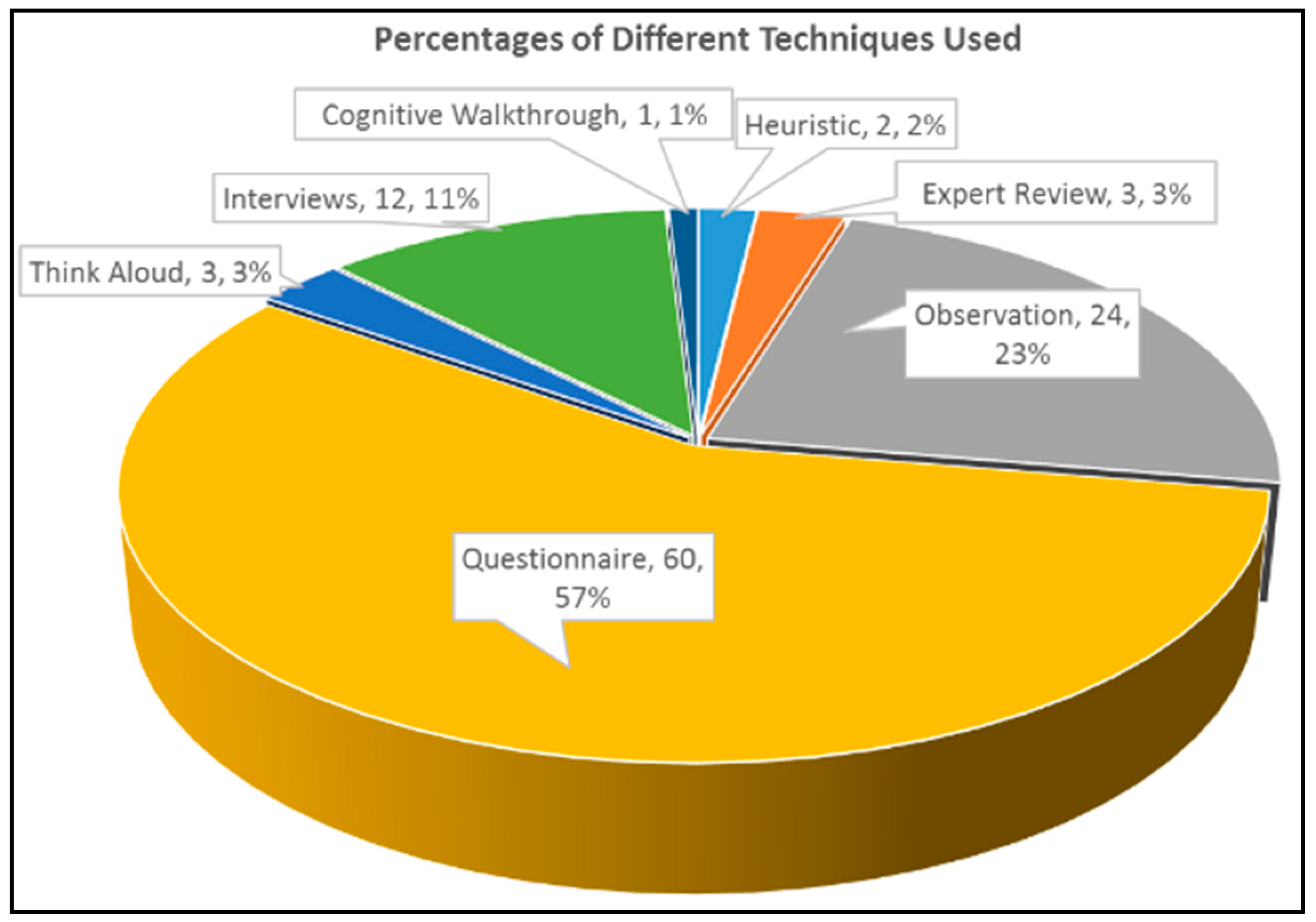

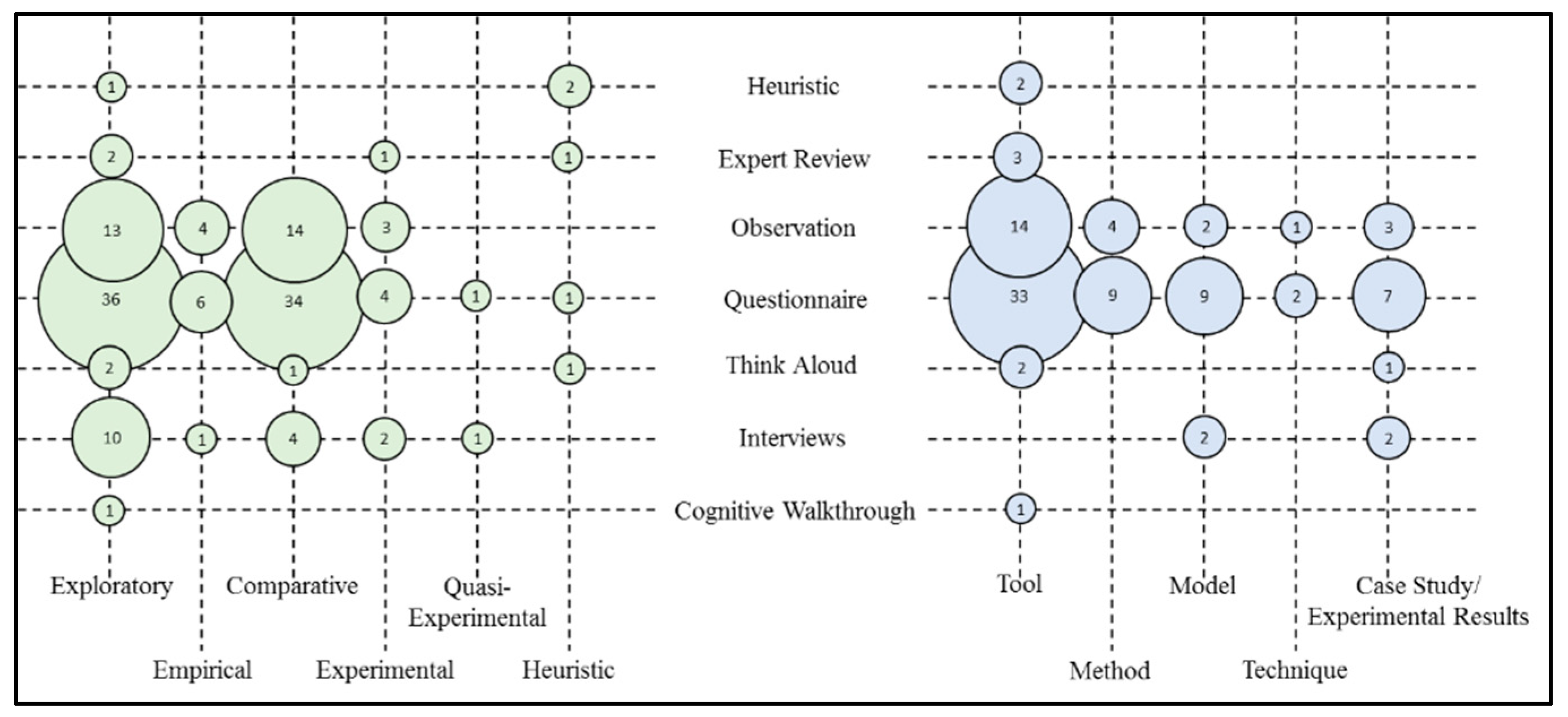

4.4. Usability Methods, Techniques, and Instruments (RQ3)

4.4.1. Open-Ended Questionnaires

4.4.2. Close-Ended Questionnaires

4.4.3. Standardized Questionnaires

4.4.4. Time-Based Tracking

4.4.5. Error Tracking

4.4.6. Discussion-Based

4.4.7. Expression Observation

4.4.8. Performance-Based Tracking

4.4.9. Procedural

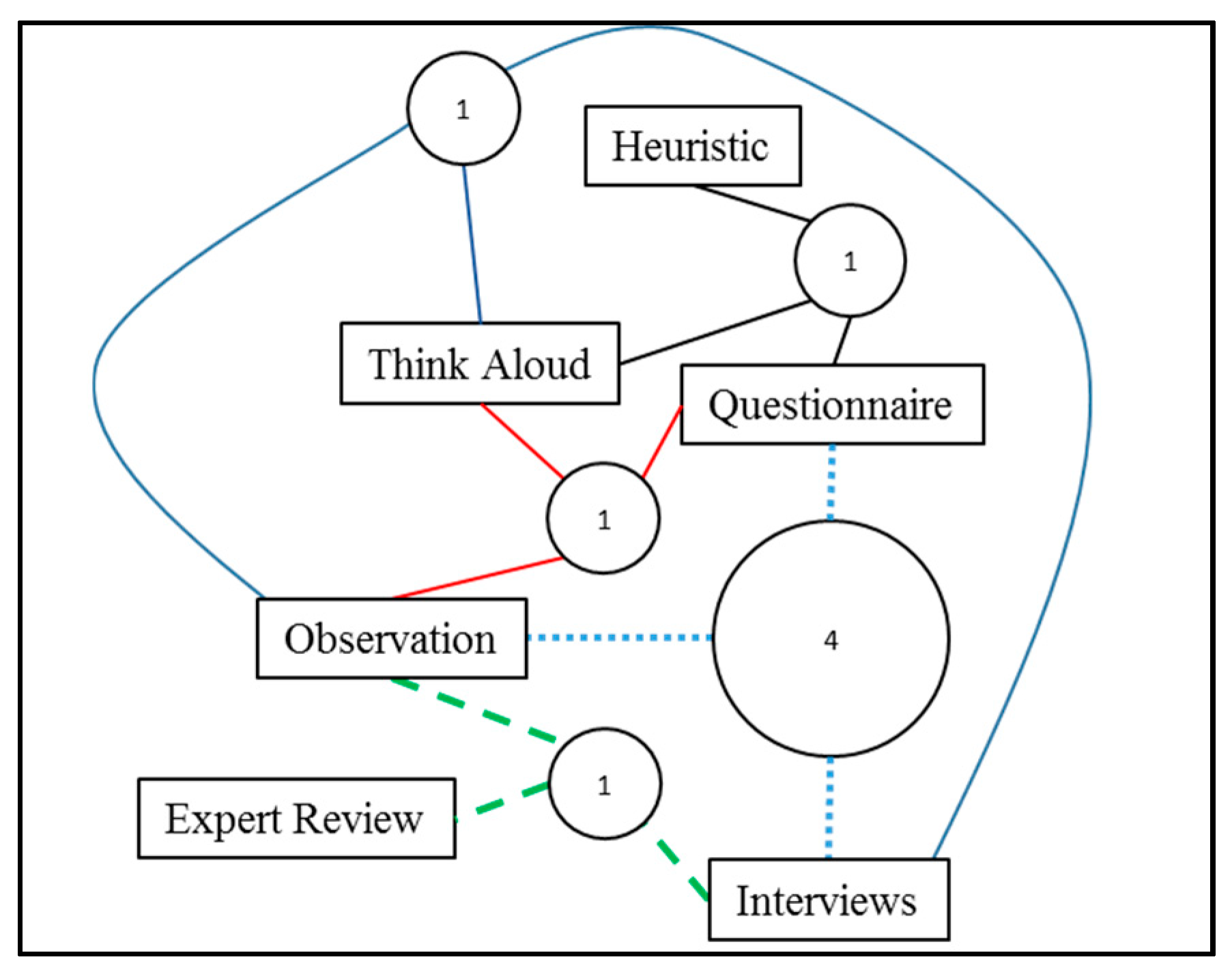

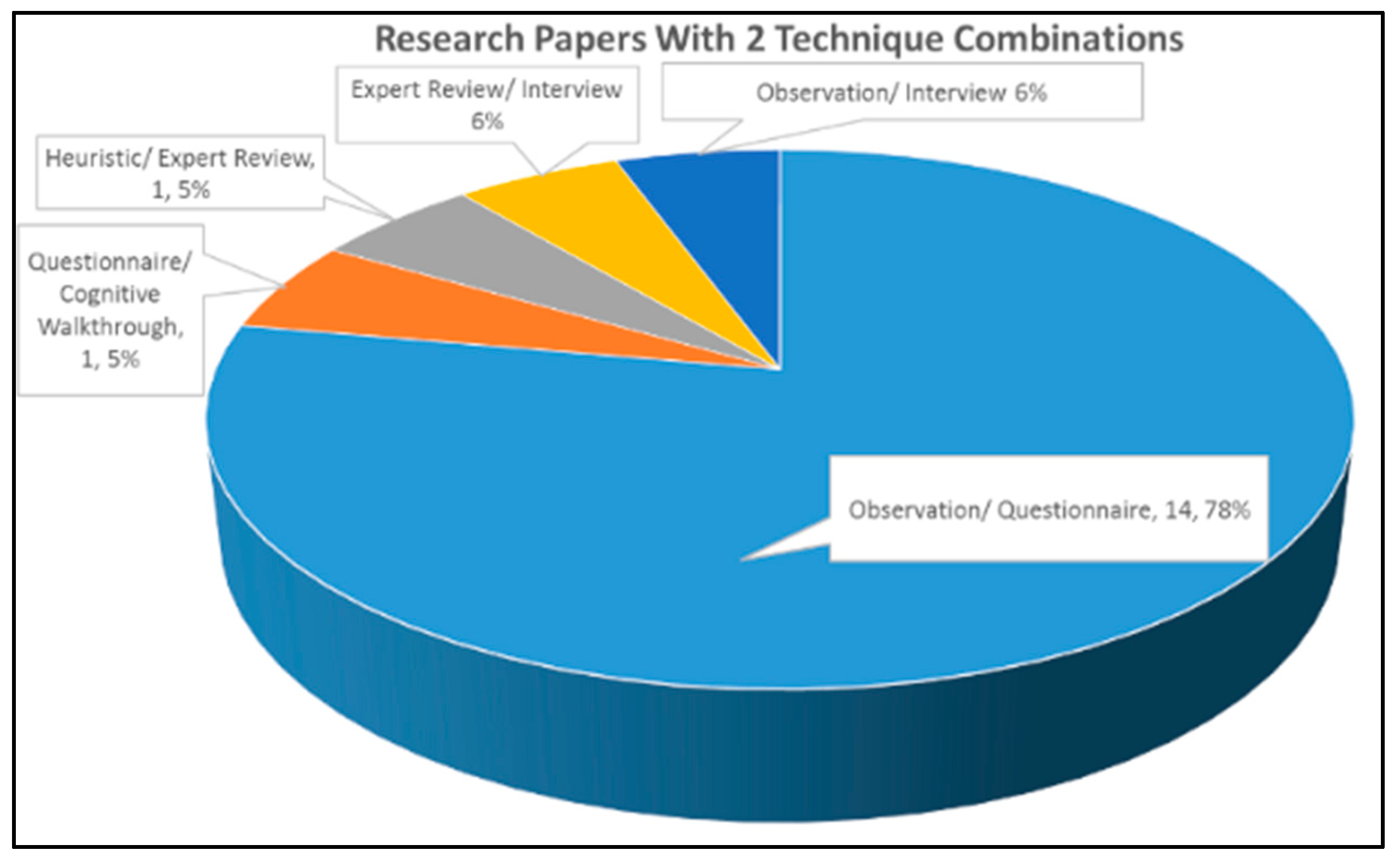

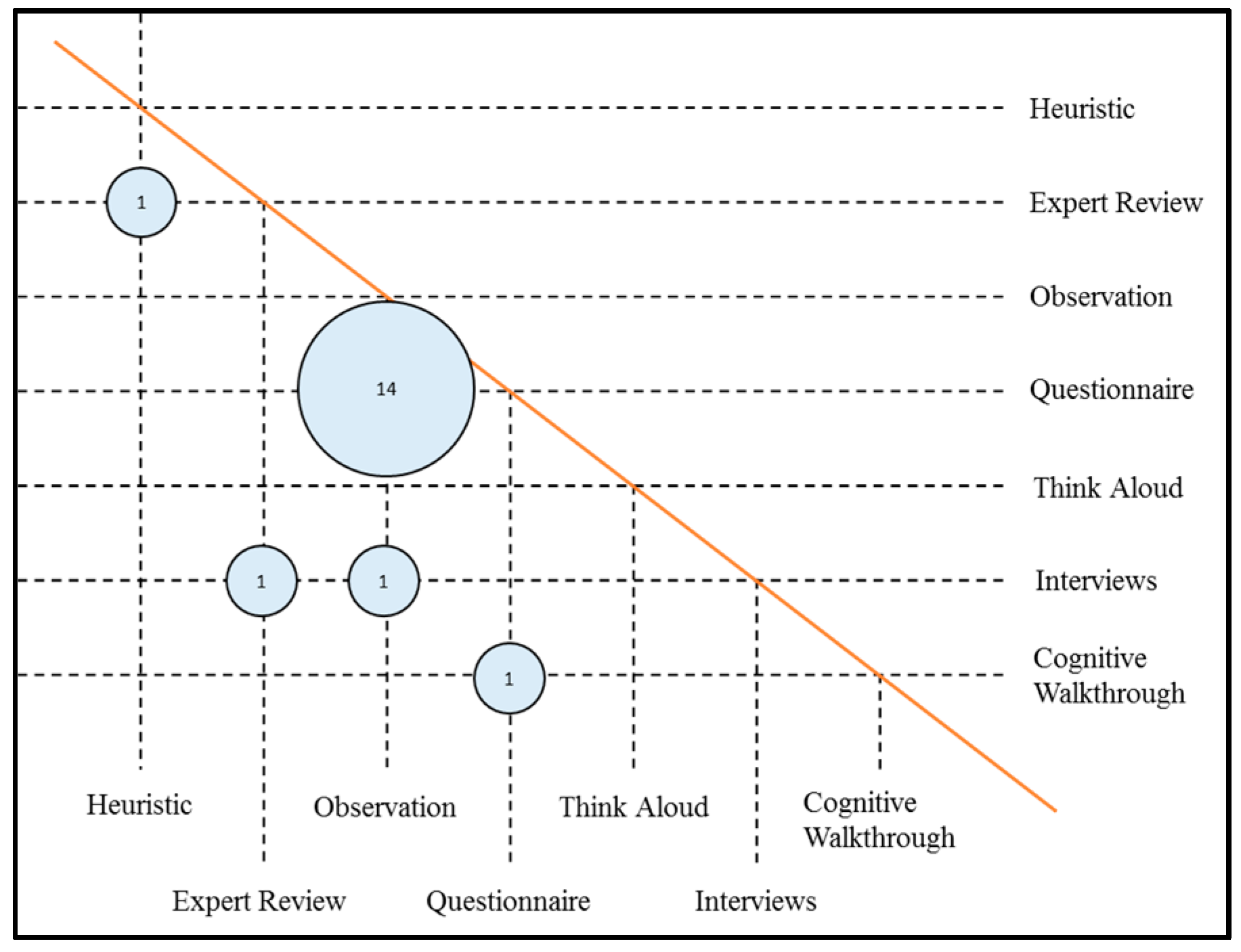

4.5. Correlational Usability Mapping (RQ4)

5. Research Findings on Identified Gaps

5.1. Educational Domains versus Others (G1)

5.2. Modes of Contributions (G2)

5.3. Standardization of Usability Metrics (G3)

5.4. Limited Quality versus Large Sample Convenience (G4)

5.5. Limitation of Hybrid Usability Methods (G5)

6. Recommendations

6.1. Potential of MAR Usability in Myriad of Domains

6.2. Implementation of Research Types

6.3. Validation of New Usability Metrics in MAR

6.4. Utilization of Performance Metrics

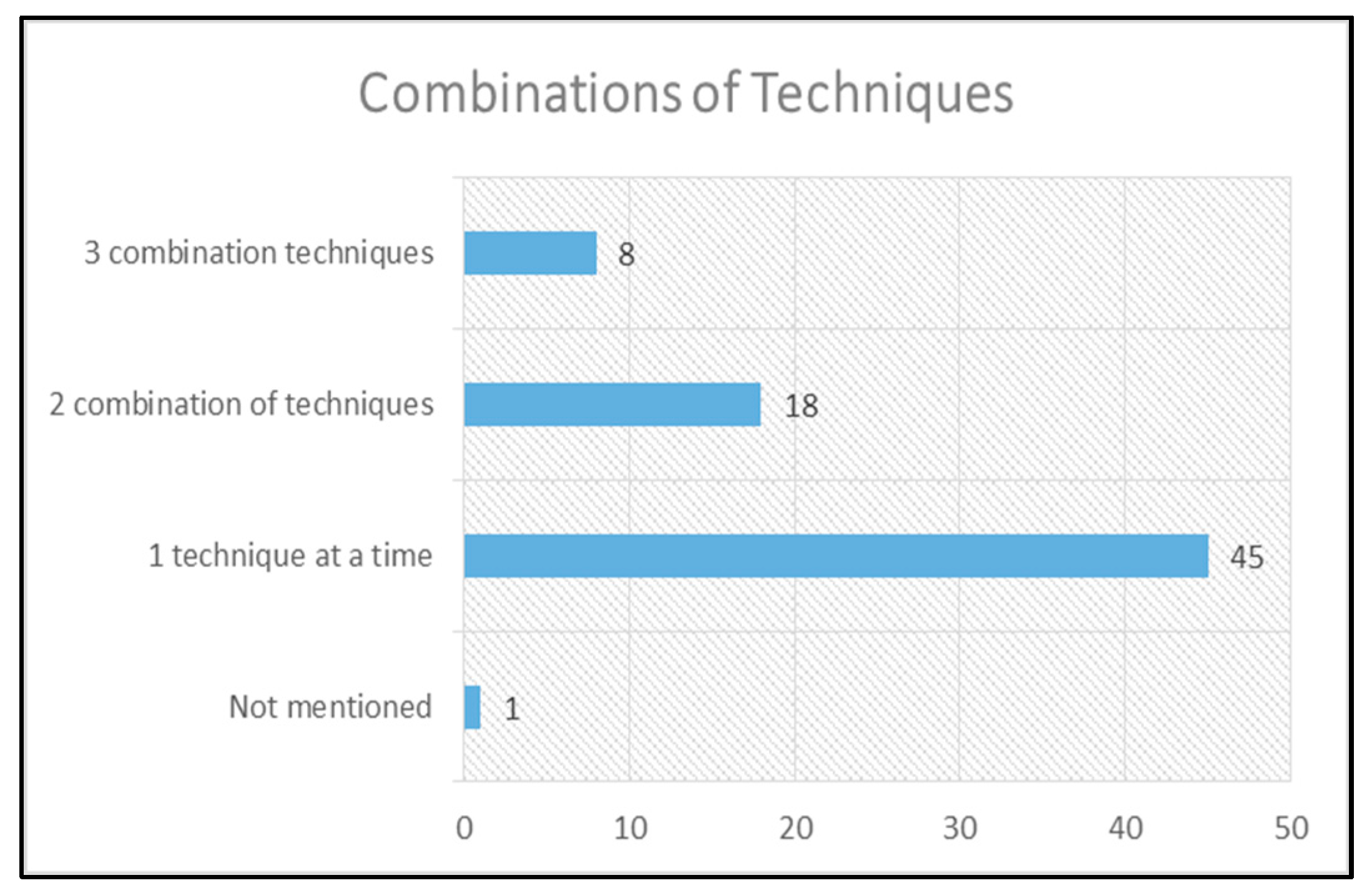

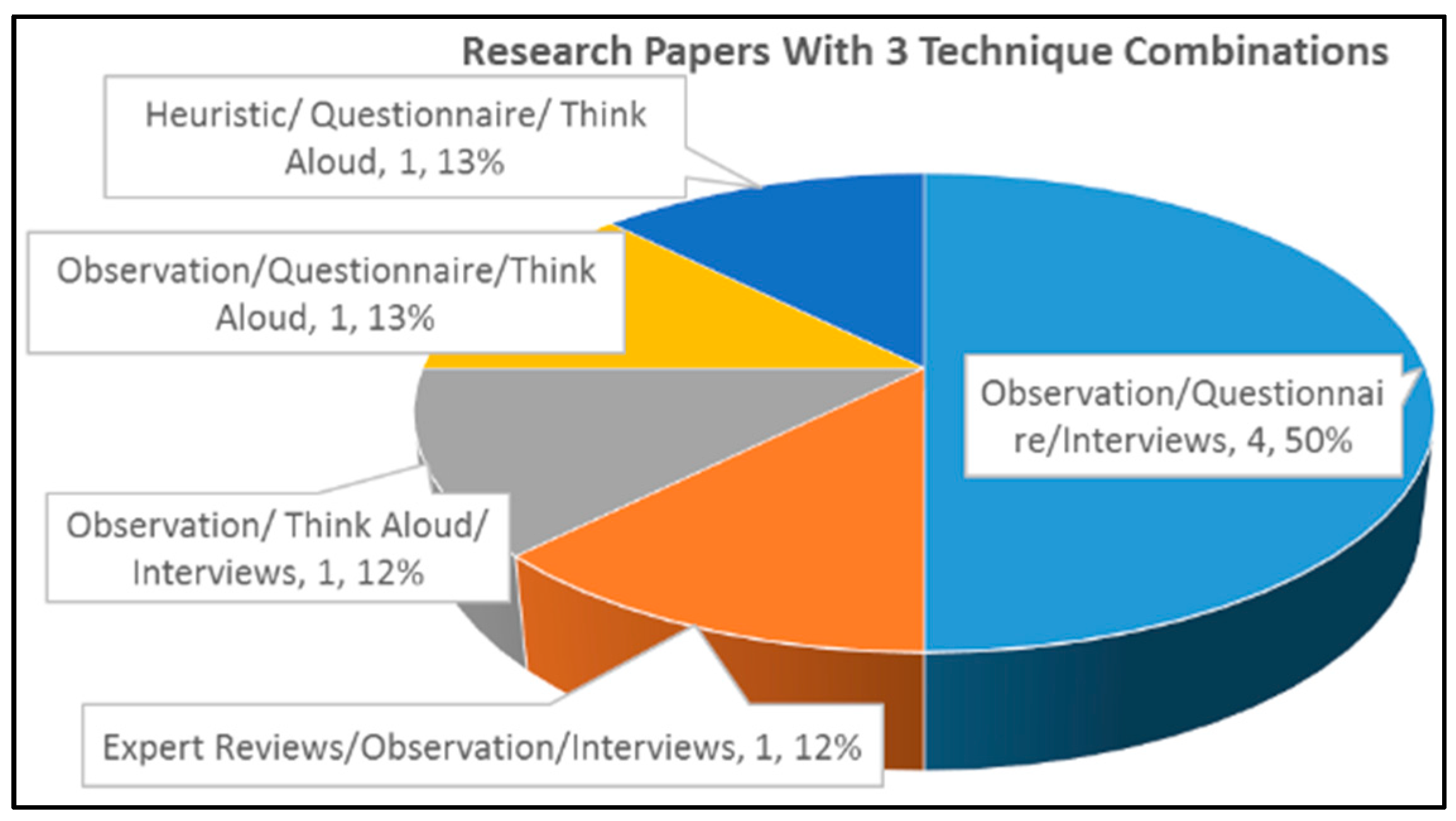

6.5. Potential of Hybrid Techniques in MAR Usability Evaluation

6.6. Correlational Research

7. Limitations

7.1. Quality of Work

7.2. Biases in Paper Selection

7.3. Data Synthesis

8. Conclusion

Author Contributions

Funding

Conflicts of Interest

References

- Santos, M.E.C.; Chen, A.; Taketomi, T.; Yamamoto, G.; Miyazaki, J.; Kato, H. Augmented reality learning experiences: Survey of prototype design and evaluation. IEEE Trans. Learn. Technol. 2014, 7, 38–56. [Google Scholar] [CrossRef]

- Albert, W.; Tullis, T. Measuring the User Experience: Collecting, Analyzing, and Presenting Usability Metrics; Newnes: Boston, MA, USA, 2013. [Google Scholar]

- Swan, J.E., II; Gabbard, J.L. Survey of User-Based Experimentation in Augmented Reality. In Proceedings of the 1st International Conference on Virtual Reality, Las Vegas, NV, USA, 22–27 July 2005. [Google Scholar]

- Arth, C.; Grasset, R.; Gruber, L.; Langlotz, T.; Mulloni, A.; Wagner, D. The History of Mobile Augmented Reality; ArXiv150501319 Cs; Cornell University: Ithaca, NY, USA, 2015. [Google Scholar]

- Nincarean, D.; Alia, M.B.; Halim, N.D.A.; Rahman, M.H.A. Mobile augmented reality: The potential for education. Procedia-Soc. Behav. Sci. 2013, 103, 657–664. [Google Scholar] [CrossRef]

- Liao, T. Future directions for mobile augmented reality research: Understanding relationships between augmented reality users, nonusers, content, devices, and industry. Mob. Media Commun. 2019, 7, 131–149. [Google Scholar] [CrossRef]

- Zhou, X.; Jin, Y.; Zhang, H.; Li, S.; Huang, X. A Map of Threats to Validity of Systematic Literature Reviews in Software Engineering. In Proceedings of the 2016 23rd Asia-Pacific Software Engineering Conference (APSEC), Hamilton, New Zealand, 6–9 December 2016; pp. 153–160. [Google Scholar]

- Kitchenham, B.; Brereton, O.P.; Budgen, D.; Turner, M.; Bailey, J.; Linkman, S. Systematic literature reviews in software engineering—A systematic literature review. Inf. Softw. Technol. 2009, 51, 7–15. [Google Scholar] [CrossRef]

- Wohlin, C. Guidelines for Snowballing in Systematic Literature Studies and a Replication in Software Engineering. In Proceedings of the 18th International Conference on Evaluation and Assessment in Software Engineering, New York, NY, USA, 13–14 May 2014; p. 38. [Google Scholar]

- Zhang, H.; Babar, M.A.; Tell, P. Identifying relevant studies in software engineering. Inf. Softw. Technol. 2011, 53, 625–637. [Google Scholar] [CrossRef]

- Achimugu, P.; Selamat, A.; Ibrahim, R.; Mahrin, M.N. A systematic literature review of software requirements prioritization research. Inf. Softw. Technol. 2014, 56, 568–585. [Google Scholar] [CrossRef]

- Liu, T.-Y. A context-aware ubiquitous learning environment for language listening and speaking. J. Comput. Assist. Learn. 2009, 25, 515–527. [Google Scholar] [CrossRef]

- Liu, P.-H.E.; Tsai, M.-K. Using augmented-reality-based mobile learning material in EFL English composition: An exploratory case study. Br. J. Educ. Technol. 2013, 44, E1–E4. [Google Scholar] [CrossRef]

- Albrecht, U.-V.; Folta-Schoofs, K.; Behrends, M.; von Jan, U. Effects of Mobile Augmented Reality Learning Compared to Textbook Learning on Medical Students: Randomized Controlled Pilot Study. J. Med. Internet Res. 2013, 15, e182. [Google Scholar] [CrossRef]

- Cocciolo, A.; Rabina, D. Does place affect user engagement and understanding? Mobile learner perceptions on the streets of New York. J. Doc. 2013, 69, 98–120. [Google Scholar] [CrossRef]

- Olsson, T.; Lagerstam, E.; Kärkkäinen, T.; Väänänen-Vainio-Mattila, K. Expected user experience of mobile augmented reality services: A user study in the context of shopping centres. Pers. Ubiquitous Comput. 2011, 17, 287–304. [Google Scholar] [CrossRef]

- Pérez-Sanagustín, M.; Hernández-Leo, D.; Santos, P.; Kloos, C.D.; Blat, J. Augmenting Reality and Formality of Informal and Non-Formal Settings to Enhance Blended Learning. IEEE Trans. Learn. Technol. 2014, 7, 118–131. [Google Scholar] [CrossRef]

- Fonseca, D.; Martí, N.; Redondo, E.; Navarro, I.; Sánchez, A. Relationship between student profile, tool use, participation, and academic performance with the use of Augmented Reality technology for visualized architecture models. Comput. Hum. Behav. 2014, 31, 434–445. [Google Scholar] [CrossRef]

- Blanco-Fernández, Y.; López-Nores, M.; Pazos-Arias, J.J.; Gil-Solla, A.; Ramos-Cabrer, M.; García-Duque, J. REENACT: A step forward in immersive learning about Human History by augmented reality, role playing and social networking. Expert Syst. Appl. 2014, 41, 4811–4828. [Google Scholar] [CrossRef]

- Shatte, A.; Holdsworth, J.; Lee, I. Mobile augmented reality based context-aware library management system. Expert Syst. Appl. 2014, 41, 2174–2185. [Google Scholar] [CrossRef]

- Escobedo, L.; Tentori, M.; Quintana, E.; Favela, J.; Garcia-Rosas, D. Using Augmented Reality to Help Children with Autism Stay Focused. IEEE Pervasive Comput. 2014, 13, 38–46. [Google Scholar] [CrossRef]

- Riera, A.S.; Redondo, E.; Fonseca, D. Geo-located teaching using handheld augmented reality: Good practices to improve the motivation and qualifications of architecture students. Univers. Access Inf. Soc. 2014, 14, 363–374. [Google Scholar] [CrossRef]

- Muñoz-Cristóbal, J.A.; Jorrín-Abellán, I.M.; Asensio-Pérez, J.I.; Martínez-Monés, A.; Prieto, L.P.; Dimitriadis, Y. Supporting Teacher Orchestration in Ubiquitous Learning Environments: A Study in Primary Education. IEEE Trans. Learn. Technol. 2015, 8, 83–97. [Google Scholar] [CrossRef]

- Ibáñez, M.B.; Di-Serio, Á.; Villarán-Molina, D.; Delgado-Kloos, C. Augmented Reality-Based Simulators as Discovery Learning Tools: An Empirical Study. IEEE Trans. Educ. 2015, 58, 208–213. [Google Scholar] [CrossRef]

- Saracchini, R.; Catalina, C.; Bordoni, L. A Mobile Augmented Reality Assistive Technology for the Elderly. Comunicar 2015, 23, 65–73. [Google Scholar] [CrossRef]

- Martín-Gutiérrez, J.; Fabiani, P.; Benesova, W.; Meneses, M.D.; Mora, C.E. Augmented reality to promote collaborative and autonomous learning in higher education. Comput. Hum. Behav. 2015, 51, 752–761. [Google Scholar] [CrossRef]

- Kourouthanassis, P.; Boletsis, C.; Bardaki, C.; Chasanidou, D. Tourists responses to mobile augmented reality travel guides: The role of emotions on adoption behaviour. Pervasive Mob. Comput. 2015, 18, 71–87. [Google Scholar] [CrossRef]

- Rodas, N.L.; Barrera, F.; Padoy, N. See It with Your Own Eyes: Markerless Mobile Augmented Reality for Radiation Awareness in the Hybrid Room. IEEE Trans. Biomed. Eng. 2017, 64, 429–440. [Google Scholar]

- Hartl, A.D.; Arth, C.; Grubert, J.; Schmalstieg, D. Efficient Verification of Holograms Using Mobile Augmented Reality. IEEE Trans. Vis. Comput. Graph. 2016, 22, 1843–1851. [Google Scholar] [CrossRef] [PubMed]

- Nagata, J.J.; Giner, J.R.G.-B.; Abad, F.M. Virtual Heritage of the Territory: Design and Implementation of Educational Resources in Augmented Reality and Mobile Pedestrian Navigation. IEEE Rev. Iberoam. Tecnol. Aprendiz. 2016, 11, 41–46. [Google Scholar] [CrossRef]

- Sekhavat, Y.A. Privacy Preserving Cloth Try-On Using Mobile Augmented Reality. IEEE Trans. Multimed. 2017, 19, 1041–1049. [Google Scholar] [CrossRef]

- Pantano, E.; Rese, A.; Baier, D. Enhancing the online decision-making process by using augmented reality: A two country comparison of youth markets. J. Retail. Consum. Serv. 2017, 38, 81–95. [Google Scholar] [CrossRef]

- Frank, J.A.; Kapila, V. Mixed-reality learning environments: Integrating mobile interfaces with laboratory test-beds. Comput. Educ. 2017, 110, 88–104. [Google Scholar] [CrossRef]

- Rese, A.; Baier, D.; Geyer-Schulz, A.; Schreiber, S. How augmented reality apps are accepted by consumers: A comparative analysis using scales and opinions. Technol. Forecast. Soc. Chang. 2017, 124, 306–319. [Google Scholar] [CrossRef]

- Dacko, S.G. Enabling smart retail settings via mobile augmented reality shopping apps. Technol. Forecast. Soc. Chang. 2017, 124, 243–256. [Google Scholar] [CrossRef]

- Lima, J.P.; Roberto, R.; Simões, F.; Almeida, M.; Figueiredo, L.; Teixeira, J.M.; Teichrieb, V. Markerless tracking system for augmented reality in the automotive industry. Expert Syst. Appl. 2017, 82, 100–114. [Google Scholar] [CrossRef]

- Turkan, Y.; Radkowski, R.; Karabulut-Ilgu, A.; Behzadan, A.H.; Chen, A. Mobile augmented reality for teaching structural analysis. Adv. Eng. Inform. 2017, 34, 90–100. [Google Scholar] [CrossRef]

- Maia, L.F.; Nolêto, C.; Lima, M.; Ferreira, C.; Marinho, C.; Viana, W.; Trinta, F. LAGARTO: A LocAtion based Games AuthoRing TOol enhanced with augmented reality features. Entertain. Comput. 2017, 22, 3–13. [Google Scholar] [CrossRef]

- Sekhavat, Y.A.; Parsons, J. The effect of tracking technique on the quality of user experience for augmented reality mobile navigation. Multimed. Tools Appl. 2018, 77, 11635–11668. [Google Scholar] [CrossRef]

- Chiu, C.-C.; Lee, L.-C. System satisfaction survey for the App to integrate search and augmented reality with geographical information technology. Microsyst. Technol. 2018, 24, 319–341. [Google Scholar] [CrossRef]

- Gimeno, J.; Portalés, C.; Coma, I.; Fernández, M.; Martínez, B. Combining traditional and indirect augmented reality for indoor crowded environments. A case study on the Casa Batlló museum. Comput. Graph. 2017, 69, 92–103. [Google Scholar] [CrossRef]

- Brito, P.Q.; Stoyanova, J. Marker versus Markerless Augmented Reality. Which Has More Impact on Users? Int. J. Hum.–Comput. Interact. 2018, 34, 819–833. [Google Scholar] [CrossRef]

- Rogado, A.B.G.; Quintana, A.M.V.; Mayo, L.L. Evaluation of the Use of Technology to Improve Safety in the Teaching Laboratory. IEEE Rev. Iberoam. Tecnol. Aprendiz. 2017, 12, 17–23. [Google Scholar]

- Ahn, E.; Lee, S.; Kim, G.J. Real-time Adjustment of Contrast Saliency for Improved Information Visibility in Mobile Augmented Reality. In Proceedings of the 21st ACM Symposium on Virtual Reality Software and Technology; ACM: New York, NY, USA, 2015; p. 199. [Google Scholar]

- Léger, É.; Drouin, S.; Collins, D.L.; Popa, T.; Kersten-Oertel, M. Quantifying attention shifts in augmented reality image-guided neurosurgery. Healthc. Technol. Lett. 2017, 4, 188–192. [Google Scholar] [CrossRef]

- Chu, M.; Matthews, J.; Love, P.E.D. Integrating mobile Building Information Modelling and Augmented Reality systems: An experimental study. Autom. Constr. 2018, 85, 305–316. [Google Scholar] [CrossRef]

- Liu, F.; Seipel, S. Precision study on augmented reality-based visual guidance for facility management tasks. Autom. Constr. 2018, 90, 79–90. [Google Scholar] [CrossRef]

- Peleg-Adler, R.; Lanir, J.; Korman, M. The effects of aging on the use of handheld augmented reality in a route planning task. Comput. Hum. Behav. 2018, 81, 52–62. [Google Scholar] [CrossRef]

- Dieck, M.C.T.; Jung, T.H.; Rauschnabel, P.A. Determining visitor engagement through augmented reality at science festivals: An experience economy perspective. Comput. Hum. Behav. 2018, 82, 44–53. [Google Scholar] [CrossRef]

- Scholz, J.; Duffy, K. We ARe at home: How augmented reality reshapes mobile marketing and consumer-brand relationships. J. Retail. Consum. Serv. 2018, 44, 11–23. [Google Scholar] [CrossRef]

- Imottesjo, H.; Kain, J.-H. The Urban CoBuilder—A mobile augmented reality tool for crowd-sourced simulation of emergent urban development patterns: Requirements, prototyping and assessment. Comput. Environ. Urban Syst. 2018, 71, 120–130. [Google Scholar] [CrossRef]

- Barreira, J.; Bessa, M.; Barbosa, L.; Magalhães, L. A Context-Aware Method for Authentically Simulating Outdoors Shadows for Mobile Augmented Reality. IEEE Trans. Vis. Comput. Graph. 2018, 24, 1223–1231. [Google Scholar] [CrossRef] [PubMed]

- Fenu, C.; Pittarello, F. Svevo tour: The design and the experimentation of an augmented reality application for engaging visitors of a literary museum. Int. J. Hum.-Comput. Stud. 2018, 114, 20–35. [Google Scholar] [CrossRef]

- Chiu, C.-C.; Lee, L.-C. Empirical study of the usability and interactivity of an augmented-reality dressing mirror. Microsyst. Technol. 2018, 24, 4399–4413. [Google Scholar] [CrossRef]

- Michel, T.; Genevès, P.; Fourati, H.; Layaïda, N. Attitude estimation for indoor navigation and augmented reality with smartphones. Pervasive Mob. Comput. 2018, 46, 96–121. [Google Scholar] [CrossRef]

- Torres-Jiménez, E.; Rus-Casas, C.; Dorado, R.; Jiménez-Torres, M. Experiences Using QR Codes for Improving the Teaching-Learning Process in Industrial Engineering Subjects. IEEE Rev. Iberoam. Tecnol. Aprendiz. 2018, 13, 56–62. [Google Scholar] [CrossRef]

- Liu, T.-Y.; Tan, T.-H.; Chu, Y.-L. QR code and augmented reality-supported mobile English learning system. In Mobile Multimedia Processing; Springer: Berlin, Germany, 2010; pp. 37–52. [Google Scholar]

- Fonseca, D.; Martí, N.; Navarro, I.; Redondo, E.; Sánchez, A. Using augmented reality and education platform in architectural visualization: Evaluation of usability and student’s level of sastisfaction. In Proceedings of the 2012 International Symposium on Computers in Education (SIIE), Andorra la Vella, Andorra, 29–31 October 2012; pp. 1–6. [Google Scholar]

- Sánchez, A.; Redondo, E.; Fonseca, D. Developing an Augmented Reality Application in the Framework of Architecture Degree. In Proceedings of the 2012 ACM Workshop on User Experience in e-Learning and Augmented Technologies in Education, New York, NY, USA, 2 November 2012; pp. 37–42. [Google Scholar]

- Santana-Mancilla, P.C.; García-Ruiz, M.A.; Acosta-Diaz, R.; Juárez, C.U. Service Oriented Architecture to Support Mexican Secondary Education through Mobile Augmented Reality. Procedia Comput. Sci. 2012, 10, 721–727. [Google Scholar] [CrossRef]

- Shirazi, A.; Behzadan, A.H. Technology-enhanced learning in construction education using mobile context-aware augmented reality visual simulation. In Proceedings of the 2013 Winter Simulations Conference (WSC), Washington, DC, USA, 8–11 December 2013; pp. 3074–3085. [Google Scholar]

- Corrêa, A.G.D.; Tahira, A.; Ribeir, J.B.; Kitamura, R.K.; Inoue, T.Y.; Ficheman, I.K. Development of an interactive book with Augmented Reality for mobile learning. In Proceedings of the 2013 8th Iberian Conference on Information Systems and Technologies (CISTI), Lisboa, Portugal, 19–22 June 2013; pp. 1–7. [Google Scholar]

- Ferrer, V.; Perdomo, A.; Rashed-Ali, H.; Fies, C.; Quarles, J. How Does Usability Impact Motivation in Augmented Reality Serious Games for Education? In Proceedings of the 2013 5th International Conference on Games and Virtual Worlds for Serious Applications (VS-GAMES), Poole, UK, 11–13 September 2013; pp. 1–8. [Google Scholar]

- Redondo, E.; Fonseca, D.; Sánchez, A.; Navarro, I. New Strategies Using Handheld Augmented Reality and Mobile Learning-teaching Methodologies, in Architecture and Building Engineering Degrees. Procedia Comput. Sci. 2013, 25, 52–61. [Google Scholar] [CrossRef]

- Fonseca, D.; Villagrasa, S.; Valls, F.; Redondo, E.; Climent, A.; Vicent, L. Motivation assessment in engineering students using hybrid technologies for 3D visualization. In Proceedings of the 2014 International Symposium on Computers in Education (SIIE), Logrono, Spain, 12–14 November 2014; pp. 111–116. [Google Scholar]

- Sánchez, A.; Redondo, E.; Fonseca, D.; Navarro, I. Academic performance assessment using Augmented Reality in engineering degree course. In Proceedings of the 2014 IEEE Frontiers in Education Conference (FIE) Proceedings, Madrid, Spain, 22–25 October 2014; pp. 1–7. [Google Scholar]

- Fonseca, D.; Villagrasa, S.; Valls, F.; Redondo, E.; Climent, A.; Vicent, L. Engineering teaching methods using hybrid technologies based on the motivation and assessment of student’s profiles. In Proceedings of the 2014 IEEE Frontiers in Education Conference (FIE) Proceedings, Madrid, Spain, 22–25 October 2014; pp. 1–8. [Google Scholar]

- Camba, J.; Contero, M.; Salvador-Herranz, G. Desktop vs. mobile: A comparative study of augmented reality systems for engineering visualizations in education. In Proceedings of the 2014 IEEE Frontiers in Education Conference (FIE) Proceedings, Madrid, Spain, 22–25 October 2014; pp. 1–8. [Google Scholar]

- Chen, W. Historical Oslo on a Handheld Device—A Mobile Augmented Reality Application. Procedia Comput. Sci. 2014, 35, 979–985. [Google Scholar] [CrossRef]

- He, J.; Ren, J.; Zhu, G.; Cai, S.; Chen, G. Mobile-Based AR Application Helps to Promote EFL Children’s Vocabulary Study. In Proceedings of the 2014 IEEE 14th International Conference on Advanced Learning Technologies, Athens, Greece, 7–10 July 2014; pp. 431–433. [Google Scholar]

- Lai, A.S.Y.; Wong, C.Y.K.; Lo, O.C.H. Applying Augmented Reality Technology to Book Publication Business. In Proceedings of the 2015 IEEE 12th International Conference on e-Business Engineering (ICEBE), Beijing, China, 23–25 October 2015; pp. 281–286. [Google Scholar]

- Rogers, K.; Frommel, J.; Breier, L.; Celik, S.; Kramer, H.; Kreidel, S.; Brich, J.; Riemer, V.; Schrader, C. Mobile Augmented Reality as an Orientation Aid: A Scavenger Hunt Prototype. In Proceedings of the 2015 International Conference on Intelligent Environments (IE), Prague, Czech Republic, 15–17 July 2015; pp. 172–175. [Google Scholar]

- Jamali, S.S.; Shiratuddin, M.F.; Wong, K.W.; Oskam, C.L. Utilising Mobile-Augmented Reality for Learning Human Anatomy. Procedia-Soc. Behav. Sci. 2015, 197, 659–668. [Google Scholar] [CrossRef]

- Majid, N.A.A.; Mohammed, H.; Sulaiman, R. Students’ Perception of Mobile Augmented Reality Applications in Learning Computer Organization. Procedia-Soc. Behav. Sci. 2015, 176, 111–116. [Google Scholar] [CrossRef]

- Zainuddin, N.; Idrus, R.M. The use of augmented reality enhanced flashcards for Arabic vocabulary acquisition. In Proceedings of the 2016 13th Learning and Technology Conference (L&T), Jeddah, Saudi Arabia, 10–11 April 2016; pp. 1–5. [Google Scholar]

- Bazzaza, M.W.; Alzubaidi, M.; Zemerly, M.J.; Weruga, L.; Ng, J. Impact of smart immersive mobile learning in language literacy education. In Proceedings of the 2016 IEEE Global Engineering Education Conference (EDUCON), Abu Dhabi, United Arab Emirates, 10–13 April 2016; pp. 443–447. [Google Scholar]

- Qassem, L.M.M.S.A.; Hawai, H.A.; Shehhi, S.A.; Zemerly, M.J.; Ng, J.W.P. AIR-EDUTECH: Augmented immersive reality (AIR) technology for high school Chemistry education. In Proceedings of the 2016 IEEE Global Engineering Education Conference (EDUCON), Abu Dhabi, United Arab Emirates, 10–13 April 2016; pp. 842–847. [Google Scholar]

- Kulpy, A.; Bekaroo, G. Fruitify: Nutritionally augmenting fruits through markerless-based augmented reality. In Proceedings of the 2017 IEEE 4th International Conference on Soft Computing Machine Intelligence (ISCMI), Mauritius, Mauritius, 23–24 November 2017; pp. 149–153. [Google Scholar]

- Chessa, M.; Solari, F. [POSTER] Walking in Augmented Reality: An Experimental Evaluation by Playing with a Virtual Hopscotch. In Proceedings of the 2017 IEEE International Symposium on Mixed and Augmented Reality (ISMAR-Adjunct), Nantes, France, 9–13 October 2017; pp. 143–148. [Google Scholar]

- Bratitsis, T.; Bardanika, P.; Ioannou, M. Science Education and Augmented Reality Content: The Case of the Water Circle. In Proceedings of the 2017 IEEE 17th International Conference on Advanced Learning Technologies (ICALT), Timisoara, Romania, 3–7 July 2017; pp. 485–489. [Google Scholar]

- Selviany, A.; Kaburuan, E.R.; Junaedi, D. User interface model for Indonesian Animal apps to kid using Augmented Reality. In Proceedings of the 2017 International Conference on Orange Technologies (ICOT), Singapore, 8–10 December 2017; pp. 134–138. [Google Scholar]

- Müller, L.; Aslan, I.; Krüßen, L. GuideMe: A Mobile Augmented Reality System to Display User Manuals for Home Appliances. In Advances in Computer Entertainment; Reidsma, D., Katayose, H., Nijholt, A., Eds.; Springer Nature: Cham, Switzerland, 2013; pp. 152–167. [Google Scholar]

- Tsai, T.-H.; Chang, H.-T.; Yu, M.-C.; Chen, H.-T.; Kuo, C.-Y.; Wu, W.-H. Design of a Mobile Augmented Reality Application: An Example of Demonstrated Usability. In Universal Access in Human-Computer Interaction. Interaction Techniques and Environments; Springer International Publishing: Cham, Switzerland, 2016; pp. 198–205. [Google Scholar]

- Master Journal List—Clarivate Analytics. Available online: http://mjl.clarivate.com/ (accessed on 17 January 2019).

- Scimago Journal & Country Rank. Available online: https://www.scimagojr.com/ (accessed on 17 January 2019).

- Bhattacherjee, A. Social Science Research: Principles, Methods, and Practices; Textbooks Collection; CreateSpace Independent Publishing Platform: Scotts Valley, CA, USA, 2012. [Google Scholar]

- Abásolo, M.J.; Abreu, J.; Almeida, P.; Silva, T. Applications and Usability of Interactive Television: 6th Iberoamerican Conference, jAUTI 2017; Aveiro, Portugal, October 12–13, 2017, Revised Selected Papers; Springer: Berlin, Germany, 2018. [Google Scholar]

- Patten, M.L. Proposing Empirical Research: A Guide to the Fundamentals; Taylor & Francis: Milton Park, Didcot, UK; Abingdon, UK, 2016. [Google Scholar]

- MacKenzie, I.S. Human-Computer Interaction: An Empirical Research Perspective; Newnes: Boston, MA, USA, 2012. [Google Scholar]

- Niessen, M.; Peschar, J. Comparative Research on Education: Overview, Strategy and Applications in Eastern and Western Europe; Elsevier: Amsterdam, The Netherlands, 2013. [Google Scholar]

- Randolph, J.J. Multidisciplinary Methods in Educational Technology Research and Development; HAMK Press/Justus Randolph: Hameenlinna, Finland, 2008. [Google Scholar]

- Purchase, H.C. Experimental Human-Computer Interaction: A Practical Guide with Visual Examples; Cambridge University Press: Cambridge, UK, 2012. [Google Scholar]

- Srinagesh, K. The Principles of Experimental Research; Elsevier: Amsterdam, The Netherlands, 2011. [Google Scholar]

- Thyer, B.A. Quasi-Experimental Research Designs; Oxford University Press: Oxford, UK, 2012. [Google Scholar]

- Thompson, C.B.; Panacek, E.A. Research study designs: Experimental and quasi-experimental. Air Med. J. 2006, 25, 242–246. [Google Scholar] [CrossRef] [PubMed]

- Moustakas, C. Heuristic Research: Design, Methodology, and Applications; SAGE Publications: Thousand Oaks, CA, USA, 1990. [Google Scholar]

- Sultan, N. Heuristic Inquiry: Researching Human Experience Holistically; SAGE Publications: Thousand Oaks, CA, USA, 2018. [Google Scholar]

- Salhi, S. Heuristic Search: The Emerging Science of Problem Solving; Springer: Berlin, Germany, 2017. [Google Scholar]

- Salehi, S.; Selamat, A.; Fujita, H. Systematic mapping study on granular computing. Knowl.-Based Syst. 2015, 80, 78–97. [Google Scholar] [CrossRef]

- ISO, W. 9241-11. Ergonomic requirements for office work with visual display terminals (VDTs). Int. Organ. Stand. 1998, 45, 1–29. [Google Scholar]

- Sauro, J.; Lewis, J.R. Quantifying the User Experience: Practical Statistics for User Research; Morgan Kaufmann: Burlington, MA, USA, 2016. [Google Scholar]

- Wilson, C. Credible Checklists and Quality Questionnaires: A User-Centered Design Method; Newnes: Boston, MA, USA, 2013. [Google Scholar]

- Portigal, S. Interviewing Users: How to Uncover Compelling Insights; Rosenfeld Media: New York, NY, USA, 2013. [Google Scholar]

- Isbister, K.; Schaffer, N. Game Usability: Advancing the Player Experience; CRC Press: Boca Raton, FL, USA, 2015. [Google Scholar]

- James, M.R., Jr.; Dale, M.; James, D.; Minsoo, K. Measurement and Evaluation in Human Performance, 5E; Human Kinetics: Champaign, IL, USA, 2015. [Google Scholar]

- Bernsen, N.O.; Dybkjær, L. Multimodal Usability; Springer Science & Business Media: Berlin, Germany, 2009. [Google Scholar]

- Cheng, J.M.-S.; Blankson, C.; Wang, E.S.-T.; Chen, L.S.-L. Consumer attitudes and interactive digital advertising. Int. J. Advert. 2009, 28, 501–525. [Google Scholar] [CrossRef]

- Lavie, T.; Tractinsky, N. Assessing dimensions of perceived visual aesthetics of web sites. Int. J. Hum.-Comput. Stud. 2004, 60, 269–298. [Google Scholar] [CrossRef]

- Westbrook, R.A.; Oliver, R.L. The Dimensionality of Consumption Emotion Patterns and Consumer Satisfaction. J. Consum. Res. 1991, 18, 84–91. [Google Scholar] [CrossRef]

- Kizony, R.; Katz, N.; Rand, D.; Weiss, P. Short Feedback Questionnaire (SFQ) to enhance client-centered participation in virtual environments. Cyberpsychol. Behav. 2006, 9, 687–688. [Google Scholar]

- Loureiro, S.M.C. The role of the rural tourism experience economy in place attachment and behavioral intentions. Int. J. Hosp. Manag. 2014, 40, 1–9. [Google Scholar] [CrossRef]

- Mehmetoglu, M.; Engen, M. Pine and Gilmore’s Concept of Experience Economy and Its Dimensions: An Empirical Examination in Tourism. J. Qual. Assur. Hosp. Tour. 2011, 12, 237–255. [Google Scholar] [CrossRef]

- Oh, H.; Fiore, A.M.; Jeoung, M. Measuring Experience Economy Concepts: Tourism Applications. J. Travel Res. 2007, 46, 119–132. [Google Scholar] [CrossRef]

- Quadri-Felitti, D.L.; Fiore, A.M. Destination loyalty: Effects of wine tourists’ experiences, memories, and satisfaction on intentions. Tour. Hosp. Res. 2013, 13, 47–62. [Google Scholar] [CrossRef]

- Nielsen, J. Usability Engineering; Morgan Kaufmann Publishers Inc.: San Francisco, CA, USA, 1993. [Google Scholar]

- Lewis, J.R. IBM Computer Usability Satisfaction Questionnaires: Psychometric Evaluation and Instructions for Use. Int. J. Hum.-Comput. Interact. 1995, 7, 57–78. [Google Scholar] [CrossRef]

- Jordan, P.W.; Thomas, B.; McClelland, I.L.; Weerdmeester, B. Usability Evaluation in Industry; CRC Press: Boca Raton, FL, USA, 1996. [Google Scholar]

- Davis, F.D. Perceived Usefulness, Perceived Ease of Use, and User Acceptance of Information Technology. MIS Q. 1989, 13, 319–340. [Google Scholar] [CrossRef]

- Pintrich, P.R.; Smith, D.A.F.; Garcia, T.; Mckeachie, W.J. Reliability and Predictive Validity of the Motivated Strategies for Learning Questionnaire (Mslq). Educ. Psychol. Meas. 1993, 53, 801–813. [Google Scholar] [CrossRef]

- Olsson, T. Concepts and subjective measures for evaluating user experience of mobile augmented reality services. In Human Factors in Augmented Reality Environments; Springer: Berlin, Germany, 2013; pp. 203–232. [Google Scholar]

- Lewis, J.R. Psychometric Evaluation of the Post-Study System Usability Questionnaire: The PSSUQ. Proc. Hum. Factors Ergon. Soc. Annu. Meet. 1992, 36, 1259–1260. [Google Scholar] [CrossRef]

- Pifarré, M.; Tomico, O. Bipolar Laddering (BLA): A Participatory Subjective Exploration Method on User Experience. In Proceedings of the 2007 Conference on Designing for User eXperiences, New York, NY, USA, 5–7 November 2007; p. 2. [Google Scholar]

- Martín-Gutiérrez, J.; Saorín, J.L.; Contero, M.; Alcañiz, M.; Pérez-López, D.C.; Ortega, M. Design and validation of an augmented book for spatial abilities development in engineering students. Comput. Graph. 2010, 34, 77–91. [Google Scholar] [CrossRef]

- O’Brien, H.L.; Toms, E.G. The development and evaluation of a survey to measure user engagement. J. Am. Soc. Inf. Sci. Technol. 2010. Available online: https://onlinelibrary.wiley.com/doi/full/10.1002/asi.21229 (accessed on 8 January 2019).

- Padda, H. QUIM: A Model for Usability/Quality in Use Measurement; LAP LAMBERT Academic Publishing: Saarbrücken, Germany, 2009. [Google Scholar]

- Venkatesh, V.; Thong, J.Y.L.; Xu, X. Consumer Acceptance and Use of Information Technology: Extending the Unified Theory of Acceptance and Use of Technology. MIS Q. 2012, 36, 157–178. [Google Scholar] [CrossRef]

- Buchanan, T.; Paine, C.; Joinson, A.N.; Reips, U.-D. Development of measures of online privacy concern and protection for use on the Internet. J. Am. Soc. Inf. Sci. Technol. 2007, 58, 157–165. [Google Scholar] [CrossRef]

- Mathwick, C.; Malhotra, N.; Rigdon, E. Experiential value: Conceptualization, measurement and application in the catalog and Internet shopping environment. J. Retail. 2001, 77, 39–56. [Google Scholar] [CrossRef]

- Cockton, G.; Lavery, D.; Woolrych, A. The Human-computer Interaction Handbook; Jacko, J.A., Sears, A., Eds.; Lawrence Erlbaum Associates Inc.: Hillsdale, NJ, USA, 2003; pp. 1118–1138.

- Abellán, I.M.J.; Stake, R.E. Does Ubiquitous Learning Call for Ubiquitous Forms of Formal Evaluation? An Evaluand oriented Responsive Evaluation Model. Ubiquitous Learn. Int. J. 2009, 1, 71–82.

- Nielsen, J. Usability Inspection Methods. In Conference Companion on Human Factors in Computing Systems; ACM: New York, NY, USA, 1994; pp. 413–414.

- Ko, S.M.; Chang, W.S.; Ji, Y.G. Usability Principles for Augmented Reality Applications in a Smartphone Environment. Int. J. Hum.–Comput. Interact. 2013, 29, 501–515.

- Gómez, R.Y.; Caballero, D.C.; Sevillano, J.-L. Heuristic Evaluation on Mobile Interfaces: A New Checklist. Sci. World J. 2014. Available online: https://www.hindawi.com/journals/tswj/2014/434326/ (accessed on 9 January 2019).

- Dix, A. Human-computer interaction; Springer: Berlin, Germany, 2009.

- Dünser, A.; Billinghurst, M. Evaluating augmented reality systems. In Handbook of Augmented Reality; Springer: Berlin, Germany, 2011; pp. 289–307.

- Lim, K.C. A Comparative Study of GUI and TUI for Computer Aided Learning; Universiti Tenaga Nasional: Selangor, Malaysia, 2011.

- Uras, S.; Ardu, D.; Paddeu, G.; Deriu, M. Do Not Judge an Interactive Book by Its Cover: A Field Research. In Proceedings of the 10th International Conference on Advances in Mobile Computing & Multimedia, New York, NY, USA, 3–5 December 2012; pp. 17–20.

| Code | Research Questions |
|---|---|
| RQ1 | What are the common domains, research types, and contributions for combined mobile augmented reality learning applications and usability studies? |
| RQ2 | What are the common usability metrics used to measure usability factors of the mobile augmented reality environment? |
| RQ3 | From the usability metrics used, what are the common methods, techniques, and instruments used in gathering usability data? |
| RQ4 | What are the correlations between these identified usability metrics, research types, contributions, methods, and techniques? |

| Inclusion Criteria | Exclusion Criteria |
|---|---|
| Include only: 1. Articles published in the English language; 2. Articles with usability methods, techniques, and metrics implemented; 3. Articles involving handheld mobile MAR learning applications. | Exclude all: 1. Articles published in languages other than English; 2. Articles that discuss only application development and do not implement usability measures; 3. Articles that present studies other than handheld mobile MAR learning applications. |

| QA No. | Quality Assessment Questions |
|---|---|
| 1 | Does the paper clearly describe the method/methods of usability used? |
| 2 | Does the paper highlight the usability evaluation process clearly? |
| 3 | Does the paper clearly present the contribution of study? |
| 4 | Does the paper clearly present the metrics used relating to types of subject study (between-subjects, within-subjects, or both)? |
| 5 | Does the paper add value to contributions towards academia, industry or community? |

| Online Databases | Articles Collected | Articles Selected after Filtering |
|---|---|---|
| IEEEXplore | 91 | 27 |
| ScienceDirect | 13 | 24 |
| Web of Science | 53 | 12 |
| SpringerLink | 38 | 8 |
| ACM Digital Library | 13 | 1 |
| Google Scholar | 121 | 0 |

| Pub. Type | Q | Impact Factor | Year | Pub. Name | Refs. |
|---|---|---|---|---|---|
| Journal | 1 | 1.313 | 2009 | Journal of Computer Assisted Learning | [12] |
| Journal | 1 | 1.394 | 2013 | British Journal of Educational Technology | [13] |
| Journal | 1 | 4.669 | 2013 | Journal of Medical Internet Research | [14] |
| Journal | 2 | 1.035 | 2013 | Journal of Documentation | [15] |
| Journal | 2 | 0.938 | 2011 | Personal and Ubiquitous Computing | [16] |
| Journal | 1 | 1.283 | 2014 | IEEE Transactions on Learning Technologies | [17] |
| Journal | 1 | 2.694 | 2014 | Computers in Human Behavior | [18] |
| Journal | 1 | 2.240 | 2014 | Expert Systems with Applications | [19,20] |
| Journal | 2 | 1.545 | 2014 | IEEE Pervasive Computing | [21] |
| Journal | 3 | 0.475 | 2014 | Universal Access in the Information Society | [22] |
| Journal | 1 | 1.129 | 2015 | IEEE Transactions on Learning Technologies | [23] |
| Journal | 1 | 1.330 | 2015 | IEEE Transactions on Education | [24] |
| Journal | 1 | 1.438 | 2015 | Comunicar | [25] |
| Journal | 1 | 2.880 | 2015 | Computers in Human Behavior | [26] |
| Journal | 1 | 1.719 | 2015 | Pervasive and Mobile Computing | [27] |
| Journal | 1 | 4.288 | 2017 | IEEE Transactions on Biomedical Engineering | [28] |
| Journal | 1 | 2.840 | 2016 | IEEE Transactions on Visualization and Computer Graphics | [29] |
| Journal | 3 | NA | 2016 | IEEE Revista Iberoamericana de Tecnologias del Aprendizaje | [30] |
| Journal | 1 | 3.977 | 2017 | IEEE Transactions on Multimedia | [31] |
| Journal | 1 | NA | 2017 | Journal of Retailing and Consumer Services | [32] |
| Journal | 1 | 4.538 | 2017 | Computers and Education | [33] |
| Journal | 1 | 3.129 | 2017 | Technological Forecasting and Social Change | [34,35] |
| Journal | 1 | 3.768 | 2017 | Expert Systems with Applications | [36] |
| Journal | 1 | 3.358 | 2017 | Advanced Engineering Informatics | [37] |
| Journal | 2 | NA | 2017 | Entertainment Computing | [38] |
| Journal | 2 | 1.541 | 2017 | Multimedia Tools and Applications | [39] |
| Journal | 2 | 1.581 | 2017 | Microsystem Technologies | [40] |
| Journal | 2 | 1.200 | 2017 | Computers & Graphics | [41] |
| Journal | 2 | NA | 2017 | International Journal of Human–Computer Interaction | [42] |
| Journal | 3 | NA | 2017 | IEEE Revista Iberoamericana de Tecnologias del Aprendizaje | [43] |
| Journal | 3 | 0.568 | 2015 | Virtual Reality | [44] |
| Journal | 3 | NA | 2017 | Healthcare Technology Letters | [45] |
| Journal | 1 | 4.032 | 2018 | Automation in Construction | [46,47] |
| Journal | 1 | 3.536 | 2018 | Computers in Human Behavior | [48,49] |
| Journal | 1 | NA | 2018 | Journal of Retailing and Consumer Services | [50] |
| Journal | 1 | 3.724 | 2018 | Computers, Environment and Urban Systems | [51] |
| Journal | 1 | 3.078 | 2018 | IEEE Transactions on Visualization and Computer Graphics | [52] |
| Journal | 1 | 2.300 | 2018 | International Journal of Human-Computer Studies | [53] |
| Journal | 2 | 1.581 | 2018 | Microsystem Technologies | [54] |
| Journal | 2 | 2.974 | 2018 | Pervasive and Mobile Computing | [55] |
| Journal | NA | NA | 2018 | Revista Iberoamericana de Tecnologias del Aprendizaje | [56] |
| Proceeding | NA | NA | 2010 | Mobile Multimedia Processing | [57] |
| Proceeding | NA | NA | 2012 | International Symposium on Computers in Education (SIIE) | [58] |
| Proceeding | NA | NA | 2012 | Proceedings of the 2012 ACM workshop on User experience in e-learning and augmented technologies in education | [59] |
| Proceeding | NA | NA | 2012 | Procedia Computer Science | [60] |
| Proceeding | NA | NA | 2013 | Winter Simulation Conference (WSC) | [61] |
| Proceeding | NA | NA | 2013 | 8th Iberian Conference on Information Systems and Technologies (CISTI) | [62] |
| Proceeding | NA | NA | 2013 | 5th International Conference on Games and Virtual Worlds for Serious Applications (VS-GAMES) | [63] |
| Proceeding | NA | NA | 2013 | Procedia Computer Science | [64] |
| Proceeding | NA | NA | 2014 | International Symposium on Computers in Education (SIIE) | [65] |
| Proceeding | NA | NA | 2014 | IEEE Frontiers in Education Conference (FIE) Proceedings | [66,67,68] |
| Proceeding | NA | NA | 2014 | Procedia Computer Science | [69] |
| Proceeding | NA | NA | 2014 | IEEE 14th International Conference on Advanced Learning Technologies | [70] |
| Proceeding | NA | NA | 2015 | IEEE 12th International Conference on e-Business Engineering | [71] |
| Proceeding | NA | NA | 2015 | International Conference on Intelligent Environments | [72] |
| Proceeding | NA | NA | 2015 | Procedia - Social and Behavioral Sciences | [73,74] |
| Proceeding | NA | NA | 2016 | 13th Learning and Technology Conference (L&T) | [75] |
| Proceeding | NA | NA | 2016 | IEEE Global Engineering Education Conference (EDUCON) | [76,77] |
| Proceeding | NA | NA | 2017 | IEEE 4th International Conference on Soft Computing & Machine Intelligence (ISCMI) | [78] |
| Proceeding | NA | NA | 2017 | IEEE International Symposium on Mixed and Augmented Reality (ISMAR-Adjunct) | [79] |
| Proceeding | NA | NA | 2017 | IEEE 17th International Conference on Advanced Learning Technologies (ICALT) | [80] |
| Proceeding | NA | NA | 2017 | International Conference on Orange Technologies (ICOT) | [81] |
| Book Chapter | NA | NA | 2013 | Advances in Computer Entertainment | [82] |
| Book Chapter | NA | NA | 2016 | Universal Access in Human–Computer Interaction. Interaction Techniques and Environments | [83] |

| Domain | Sub-domain | Freq. | Refs. |
|---|---|---|---|
| Education | Engineering | 7 | [24,26,37,61,66,67,68] |
| | Architecture | 7 | [18,22,58,59,63,64,65] |
| | Language | 6 | [12,13,57,70,75,76] |
| | Medical & Health | 2 | [14,73] |
| | History | 2 | [19,60] |
| | Sciences | 2 | [33,80] |
| | Others | 10 | [21,23,25,43,56,62,71,74,77,81] |
| Navigational | - | 15 | [15,16,17,27,30,39,40,41,48,49,53,55,69,72,79] |
| Marketing & Advertising | - | 8 | [31,32,34,35,42,50,54,83] |
| Medical & Health | - | 3 | [28,45,78] |
| Architecture & Construction | - | 2 | [46,51] |
| Facility Management | - | 2 | [20,47] |
| Security | - | 1 | [29] |
| Shadow Emulation | - | 1 | [52] |
| AR Gaming | - | 1 | [38] |
| AR Visibility | - | 1 | [44] |
| Automotive | - | 1 | [36] |
| Basic Skills | - | 1 | [82] |

| Comb. | Type | Refs. | Q1 | Q2 | Q3 | NI | P | BC |
|---|---|---|---|---|---|---|---|---|
| 1 | Exploratory | [13,16,17,21,22,23,35,41,47,49,50,51,53,57,58,60,61,62,65,67,71,72,74,75,78] | 9 | 3 | 1 | - | 12 | - |
| | Empirical | - | - | - | - | - | - | - |
| | Comparative | [20,29,34,36,38,40,42,44,45,52,55,56,59,64,66,68,70,76,77,79,82] | 5 | 4 | 2 | 1 | 8 | 1 |
| | Experimental | - | - | - | - | - | - | - |
| | Quasi-Experimental | [30] | - | - | 1 | - | - | - |
| | Heuristic | [83] | - | - | - | - | - | 1 |
| 2 | Exploratory/Empirical | [24,27,28] | 3 | - | - | - | - | - |
| | Exploratory/Comparative | [18,31,32,33,43,63,80,81] | 4 | - | 1 | - | 3 | - |
| | Exploratory/Experimental | [14,15,19,25] | 3 | 1 | - | - | - | - |
| | Exploratory/Heuristic | [69] | - | - | - | - | 1 | - |
| | Empirical/Comparative | [26,39,54] | 1 | 2 | - | - | - | - |
| | Comparative/Experimental | [46] | 1 | - | - | - | - | - |
| | Comparative/Quasi-Experimental | [12,37] | 2 | - | - | - | - | - |
| 3 | Exploratory/Empirical/Comparative | [48] | 1 | - | - | - | - | - |
| | Exploratory/Comparative/Experimental | [73] | - | - | - | - | 1 | - |

| Types of Contribution | Freq. | Refs. |
|---|---|---|
| Tool | 41 | [12,13,15,20,21,22,23,24,25,26,27,33,36,38,41,43,45,46,48,51,53,54,57,59,60,62,63,64,69,70,71,73,74,75,76,77,78,79,81,82,83] |
| Method | 10 | [14,18,29,30,37,44,47,56,58,65] |
| Model | 9 | [17,19,32,34,40,49,61,72,80] |
| Technique | 3 | [28,31,55] |
| Case Study/Experience Paper | 9 | [16,35,39,42,50,52,66,67,68] |

| Types of Metrics | Freq. | Refs. |
|---|---|---|
| Performance | 2 | [28,36] |
| Self-reported | 49 | [12,13,15,16,18,19,22,25,26,27,31,32,33,34,35,37,40,41,43,49,50,51,52,53,54,55,56,57,58,59,61,62,64,65,66,67,68,69,70,71,72,73,74,75,76,77,80,81,83] |
| Combination of Both | 20 | [14,17,20,21,23,24,29,38,39,42,44,45,46,47,48,60,63,78,79,82] |

| Types of Evaluation | Freq. | Refs. |
|---|---|---|
| Within-subjects | 19 | [21,28,29,31,40,44,45,47,48,50,51,52,54,55,56,68,80,81,82] |
| Between-subjects | 48 | [12,13,14,15,16,17,18,19,22,24,25,26,27,32,34,35,36,37,38,39,41,42,43,46,49,53,57,58,59,60,61,62,63,65,66,67,69,70,71,72,73,74,75,76,77,78,79,83] |
| Combination of Both | 4 | [20,23,33,64] |

| Metric | Interchangeable Terminologies | Refs. |
|---|---|---|
| Usability/Experience | Experience | [61] |
| | User Experience | [14,16,19] |
| | Quality of experience | [39] |
| | Interactive experience | [42] |
| | Usability | [14,23,25,33,39,40,51,54,63,69,73,78,82] |
| | Usability ratings of severity | [69] |
| | User’s perception | [52] |
| | Expectation | [16] |
| | Perception | [39] |
| | Nielsen Usability Heuristics | [83] |
| | Ko et al.’s MAR usability principles (five usability principles for AR) | [83] |
| | Usability items by Lavie and Tractinsky, with the addition of response speed and ease of control | [42] |
| Learnability | Learnability | [12,23,33,38,47,48,51,81] |
| | Learning effectiveness | [24,63] |
| | Learning improvement | [73] |
| | Increased learning efficiency | [14] |
| | Education (learning) | [49] |
| | Learning curves | [29] |
| | Comprehension | [76] |
| | Enhancement of understanding | [73] |
| | Understandability | [44] |
| Content | Knowledge | [33] |
| | Perceived informativeness | [34] |
| | Information-feedback presentation | [40,54] |
| | Quality of information | [72] |
| | Perceived understanding | [15] |
| | Context awareness | [39] |
| Motivation | Motivation | [24,63,65,67,74] |
| | View angle for stimulating interest and motivating learning | [73] |
| | Personal innovativeness | [27] |
| | Behavioral intention to use | [34,37] |
| | Effort expectancy | [27,39] |
| Engagement | Engagement | [33,45,49,50,53,60,61,67] |
| | Perceived engagement | [15] |
| | Emotional engagement of the different types of augmentations | [53] |
| | Attention (engagement) | [21] |
| Adaptation | Adaptation | [23] |
| | Comfort | [79] |
| | Eyestrain | [79] |
| | Facial expressions and body movements (Frowning, Smiling, Surprise, Concentration/Focus, Leaning close to screen) | [42] |
| | Sickness | [79] |
| Satisfaction | Satisfaction | [13,18,22,26,29,31,39,40,41,43,44,45,48,49,50,51,54,56,58,59,60,62,64,66,68,71,74,75,77,81] |
| | Perceived satisfaction | [65,70] |
| | Pleasure (satisfaction) | [27] |
| | Pleasure (is happy, angry or frustrated) | [62] |
| | Arousal (level of satisfaction) | [27] |
| | Factor of amusement (satisfaction) | [80] |
| | Satisfaction (confidence) | [38] |
| | User satisfaction | [35] |
| | Difficulty level (satisfaction) | [47] |
| | Overall satisfaction | [53] |
| | Satisfaction (exciting) | [21] |
| | Likeness | [29] |
| Behavior | Behavior | [17] |
| | Experimental behavior | [24] |
| | Behavioral Intention | [27] |
| | Attitude | [57] |
| | Perceived attitude | [32] |
| | Attitude towards using | [34,37] |
| | Appreciation | [79] |
| | Dominance | [27] |
| | Positive response | [29] |
| | Self-expressiveness | [39] |
| Effectiveness | Effectiveness | [12,18,20,22,29,30,31,33,36,37,39,44,46,47,48,52,57,58,59,64,65,66,67,71,78,81] |
| | Effectiveness (Accuracy) | [28] |
| | User Experience of the acceptable stability limit (effectiveness) | [55] |
| | Effectiveness (task completion) | [62] |
| | Accuracy (performance) | [36] |
| | Performance expectancy | [27,39] |
| | Correct tasks | [48] |
| Efficiency | Efficiency | [18,20,22,28,29,35,36,39,44,45,46,47,48,58,59,60,64,65,66,67,81,82] |
| | Efficiency (understood task) | [62] |
| | Performance (efficiency) | [38,39,43] |
| | Productivity | [81] |
| Usefulness | Usefulness | [14,21,62,72,81] |
| | Ease of Use | [21,38,41,50,51,53,62] |
| | Perceived usefulness | [20,32,34,37,57,65] |
| | Perceived ease of use | [20,32,34,37] |
| | Manipulation Check (relative ease of use) | [42] |
| | Easiness | [57,79] |
| | User Friendliness | [57] |
| Emotion | Emotion | [14] |
| | Emotional Response (Arousal) | [42] |
| Fun/Amusement | Fun | [13,50] |
| | Fun (amused) | [62] |
| | Fun (interesting, annoying, entertaining) | [42] |
| | Factor of amusement (satisfaction) | [80] |
| | Perceived enjoyment | [32,34,37] |
| | Entertainment (Enjoyment) | [49] |
| | Negative Tone: Boring (gratifying, pleasant, confusing, and disappointing) | [42] |
| Cognitive Load | Metacognitive Self-Regulation Skills | [24] |
| | Cognitive effort | [39] |
| | Task load | [82] |
| | Memories | [49] |
| | Labelling assist memorization | [73] |
| Preference | Preference | [40,44,54] |
| | Preferred methods of interaction | [68] |
| | Interest (would use again) | [62] |
| | Object manipulation | [73] |
| | Degree of interest for the content | [53] |
| Interface Design | Aesthetics | [49,53] |
| | Aesthetically appreciable interface (nice) | [62] |
| | Attractiveness (ATT) | [14] |
| | Attractiveness (triggered curiosity when the instructor was presenting the Augmented Reality technology) | [62] |
| | Interface style | [37] |
| | Presentation | [54] |
| | The realism of the 3-Dimensional images | [73] |
| | The smooth changes of images | [73] |
| | Realism | [79] |
| | Precision of 3-Dimensional images | [73] |
| | Quality of interface design | [72] |
| | Consistency | [38] |
| | Quality of interaction | [46] |
| | Simple visibility | [44] |
| | Universality | [81] |
| | Accessibility | [81] |
| Security | Trustfulness | [81] |
| | Stability | [26] |
| | Safety | [81] |
| Others | Escapism | [49] |
| | Facilitating conditions | [39] |
| | Identification (HQ-I) | [14] |
| | Novelty | [53] |
| | Pragmatic quality (PQ), hedonic | [14] |
| | Price value | [27] |
| | Social influence | [39] |
| | Stimulation (HQ-S), hedonic | [14] |

| Type | Instruments | Likert Scale | Refs. |
|---|---|---|---|
| Open-Ended | “Profile of Mood States” Questionnaire (POMS, German Variation) | - | [14] |
| | Questionnaires: Subject Content Performance | - | [76] |
| | Self-Designed Open-Ended Questionnaires | - | [12,13,17,19,20,29,38,41,45,53,56,62,72,74,75,77,79] |
| | Open-Ended Questionnaire for Descriptive Comments and Suggestions (34 Categories) | - | [33] |
| Close-Ended | Improved Satisfaction Questionnaire | 5 | [43] |
| | Self-Reported (Wide-Awake/Sleepy, Super Active/Passive, Enthusiastic/Apathetic, Jittery/Dull, Unaroused/Aroused) Questions based on [107,108,109] | 5 | [42] |
| | SFQ (Short Feedback Questionnaire) based on [110] | 5 | [48] |
| | Attrakdiff2 | 7 | [14] |
| | Established Reflective Multi-Item Construct Scales from Previous Literature Questionnaire [111,112,113,114] | 5 | [49] |
| | IMMS (Keller’s Instructional Materials Motivation Survey) | 5 | [24] |
| | ISO 9241-11 Questionnaire [100] | 5 | [18,22,59,64,66,67] |
| | Nielsen’s Heuristic Evaluation & Nielsen’s Attributes of Usability [115] | 5 | [67] |
| | Usability Satisfaction Questionnaires based on [116] | 5 | [67] |
| | The System Usability Scale (SUS) Questionnaire [117] | 5 | [26,40,48,58,78] |
| | The System Usability Scale (SUS) Questionnaire [117] (Modified) | 5 | [38] |
| | Technology Acceptance Model (TAM) [118] | 7 | [20,32,37,57,67] |
| | Technology Acceptance Model (TAM) [118] (Modified) | 7 | [34,37] |
| | The Motivated Strategies for Learning Questionnaires (MSLQ) [119] | 5 | [24] |
| | NASA TLX Questionnaire | 5 | [45] |
| | NASA TLX Questionnaire (Modified) | 21 | [82] |
| | Post-Experiment Questionnaire based on Olsson [120], designed to measure experience of MAR services | 5 | [46] |
| | Post-Study System Usability Questionnaire (PSSUQ) [121] | 7 | [72] |
| | Post-Study System Usability Questionnaire (PSSUQ) [121] (Modified) | 5 | [33] |
| | Qualitative Bipolar Laddering (BLA) Questionnaire: Test Motivation Before Use and After Use [122] | 5 | [65,67] |
| | Quality of Experience (QOE) Questionnaire | 5 | [19] |
| | Questionnaires based on [123] | 5 | [74] |
| | Questionnaire based on [124] | 5 | [53] |
| | Questionnaire based on QUIM (Quality in Use Integrated Measurement) factors (4); test data processed to determine the usability percentage level [125] | 5 | [81] |
| | Questionnaire based on the second iteration of the Unified Theory of Acceptance and Use of Technology, commonly referred to as UTAUT2 [126] | 7 | [27,39] |
| | User Perception Questionnaire based on [127] | 5 | [31] |
| | Questionnaire for User Interface and Satisfaction (QUIS Method) | 5 | [62] |
| | Self-Designed Questionnaire based on [128] | 10 | [35] |
| | Self-Designed Questionnaire (Ipsative Yes/No) | 2 | [41,56,79] |
| | Self-Designed Questionnaire by Giving 3 Separate Propositions (Not Acceptable, Acceptable, Excellent) | 3 | [55] |
| | Self-Designed Questionnaires | 4 | [71,77] |
| | Self-Designed Questionnaires | 5 | [16,41,42,54,61,63,68,69,73] |
| | Self-Designed Questionnaires | 6 | [23,52] |
| | Self-Designed Questionnaires | 7 | [44,79] |
| | Self-Designed Questionnaires | 10 | [52,60] |
| | Close-Ended Questionnaires | - | [17] |
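Several of the close-ended instruments listed above are scored with fixed rules. As an illustration only, the sketch below applies the standard scoring rule of the System Usability Scale (ten items rated 1 to 5; odd-numbered items contribute the rating minus 1, even-numbered items contribute 5 minus the rating, and the sum is multiplied by 2.5). The function name `sus_score` and the sample ratings are hypothetical and are not taken from any of the reviewed studies.

```python
from typing import Sequence


def sus_score(responses: Sequence[int]) -> float:
    """Return the 0-100 SUS score for one participant's ten 1-5 ratings."""
    if len(responses) != 10:
        raise ValueError("SUS expects exactly 10 item responses")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be on the 1-5 scale")

    total = 0
    for index, rating in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded: rating - 1.
            total += rating - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded: 5 - rating.
            total += 5 - rating
    # Scale the 0-40 raw sum to the 0-100 range.
    return total * 2.5


if __name__ == "__main__":
    # Hypothetical ratings for a single participant.
    example = [4, 2, 5, 1, 4, 2, 5, 1, 4, 2]
    print(sus_score(example))
```

Modified variants of the instrument, such as the one noted in the table, may use different items or scales, so this rule should not be assumed to apply to them.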

| Category | Technique/Instruments | Refs. |
|---|---|---|
| Time-Based Tracking | Time-on-task | [20,23,29,36,39,45,46,47,48] |
| | Interaction time-on-task | [63] |
| | Time-on-tasks for optimal configuration | [63] |
| | Task completion time | [82] |
| | Number of time-on-tasks registration | [60] |
| | Time-on-tasks for performance | [38] |
| | Response time | [28,44] |
| | Time-on-tasks across time | [48] |
| | User decision time | [29] |
| | Time-on-tasks for engagement | [21] |
| Error Tracking | Registering the number of interaction errors | [63] |
| | Number of errors for optimal configuration | [63] |
| | Error rates | [44,48,82] |
| | Reverse error registration | [36] |
| | Error counts | [39] |
| | Error registration | [28,29,46,47] |
| | Absolute pose error (APE) as evaluation metrics | [28] |
| | Relative pose error (RPE) | [28] |
| Discussion-Based | Interview | [12,16,23,51] |
| | Interview (interviews were transcribed and coded by two independent coders, who assigned a scale value from 5 = Strongly Agree to 1 = Strongly Disagree) | [15] |
| | Interview for usability (teachers only) | [70] |
| | Satisfaction interviews | [80] |
| | 3 rounds of mini-interviews per participant (face-to-face or video) | [50] |
| | Informal interview | [48] |
| | Interview (with teachers, since most students, 9 of them, cannot pronounce) | [21] |
| | Group discussion | [51] |
| Behavior Observation | Emotion tracking (happy, angry, unmotivated, determined) using video recording | [21] |
| | Facial expression (coding through video by 2 independent coders) | [42] |
| | Action and impression registration | [23] |
| | Observation on student’s communication and interactivity with peers | [14] |
| | Observation on student’s focus on or distraction from the learning material | [14] |
| | Observation on the way students dealt with the learning object (learning material) | [14] |
| | Observing interactions | [16] |
| | Engagement (switch view from mobile to non-mobile) | [45] |
| | Qualitative observation by a facilitator, general tendencies in the use of a technology | [17] |
| | Observing facial reaction | [80] |
| Performance-based Tracking | Pre-test and post-test on content understanding | [12,33,37,43] |
| | Effectiveness (task completion) | [78] |
| | Effectiveness (number of correct points) | [36] |
| | Observation: correct number of answers | [52] |
| | User Experience of the acceptable stability limit (effectiveness) | [55] |
| | Effectiveness (accuracy) | [28] |
| | Artifact Collection (observe learning process) | [23] |
| | Screen recording | [23] |
| | Observation: video recording | [24] |
| | Content multiple choice | [24] |
| | Pre-test: evaluating IT and motivational profile | [65,70] |
| | Observation of completion | [60] |
| | Frequency of positive and negative descriptive adjectives | [34] |
| Procedural/Heuristics | The laboratory experiments all followed the standard procedure in usability testing [129] | [34] |
| | Evaluand-oriented Responsive Evaluation Model (EREM) [130] | [23] |
| | Cognitive walkthrough | [78] |
| | Qualitative Bipolar Laddering (BLA) based on [122] | [67] |
| | Heuristic (Nielsen) [131] | [69] |
| | Think aloud protocol | [69] |
| | Expert Reviews were used as the Nielsen heuristic evaluation (HE) method [131] | [83] |
| | Ko et al.’s MAR usability principles (five usability principles for AR applications in a smart phone environment) [132] | [83] |
| | Gómez et al.’s mobile-specific HE checklist [133] | [83] |
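The time-based, error, and performance-based techniques listed above all reduce to simple summaries over logged task attempts. The following is a minimal sketch, assuming a hypothetical log format (`TaskAttempt`) and a hypothetical `summarize` helper; neither is drawn from the reviewed studies, and individual papers may define completion, time-on-task, or error rate differently.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class TaskAttempt:
    """One participant's attempt at one task (hypothetical log record)."""
    participant: str
    seconds: float   # time-on-task
    errors: int      # number of registered interaction errors
    completed: bool  # whether the task was finished successfully


def summarize(attempts: List[TaskAttempt]) -> Dict[str, float]:
    """Summarize effectiveness, efficiency, and error measures."""
    if not attempts:
        raise ValueError("at least one attempt is required")
    completed = [a for a in attempts if a.completed]
    return {
        # Effectiveness: share of attempts that were completed.
        "completion_rate": len(completed) / len(attempts),
        # Efficiency: mean time-on-task over all attempts, in seconds.
        "mean_time_on_task": mean(a.seconds for a in attempts),
        # Error tracking: mean number of errors per attempt.
        "errors_per_attempt": sum(a.errors for a in attempts) / len(attempts),
    }


if __name__ == "__main__":
    # Hypothetical data for two participants.
    log = [
        TaskAttempt("P1", 30.0, 0, True),
        TaskAttempt("P2", 50.0, 2, False),
    ]
    print(summarize(log))
```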

| Combination | Technique | Freq. | Refs. |
|---|---|---|---|
| Single Technique | Q | 40 | [13,18,19,22,26,27,31,32,33,34,35,37,40,41,43,49,52,53,54,55,56,57,58,59,61,62,64,65,66,67,68,71,72,73,74,75,76,77,81] |
| | Iw | 4 | [12,15,50,70] |
| | Obs | 2 | [28,36] |
| Combination of 2 techniques | Obs & Q | 14 | [14,20,24,29,38,39,42,44,45,46,47,60,63,79] |
| | Obs & Iw | 1 | [21] |
| | ER & Iw | 1 | [51] |
| | Hc & ER | 1 | [83] |
| | Q & CW | 1 | [78] |
| Combination of 3 techniques | Obs, Q & Iw | 4 | [17,23,48,80] |
| | Obs, TA & Iw | 1 | [16] |
| | Obs, ER & Iw | 1 | [25] |
| | Obs, Q & TA | 1 | [82] |
| | Hc, TA & Q | 1 | [69] |
| Unclear | - | 1 | [30] |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Lim, K.C.; Selamat, A.; Alias, R.A.; Krejcar, O.; Fujita, H. Usability Measures in Mobile-Based Augmented Reality Learning Applications: A Systematic Review. Appl. Sci. 2019, 9, 2718. https://doi.org/10.3390/app9132718
