Turbocharger Axial Turbines for High Transient Response, Part 2: Genetic Algorithm Development for Axial Turbine Optimisation

Abstract

1. Introduction

2. Genetic Algorithm Dimensioning

2.1. Population Member Chromosome Encoding

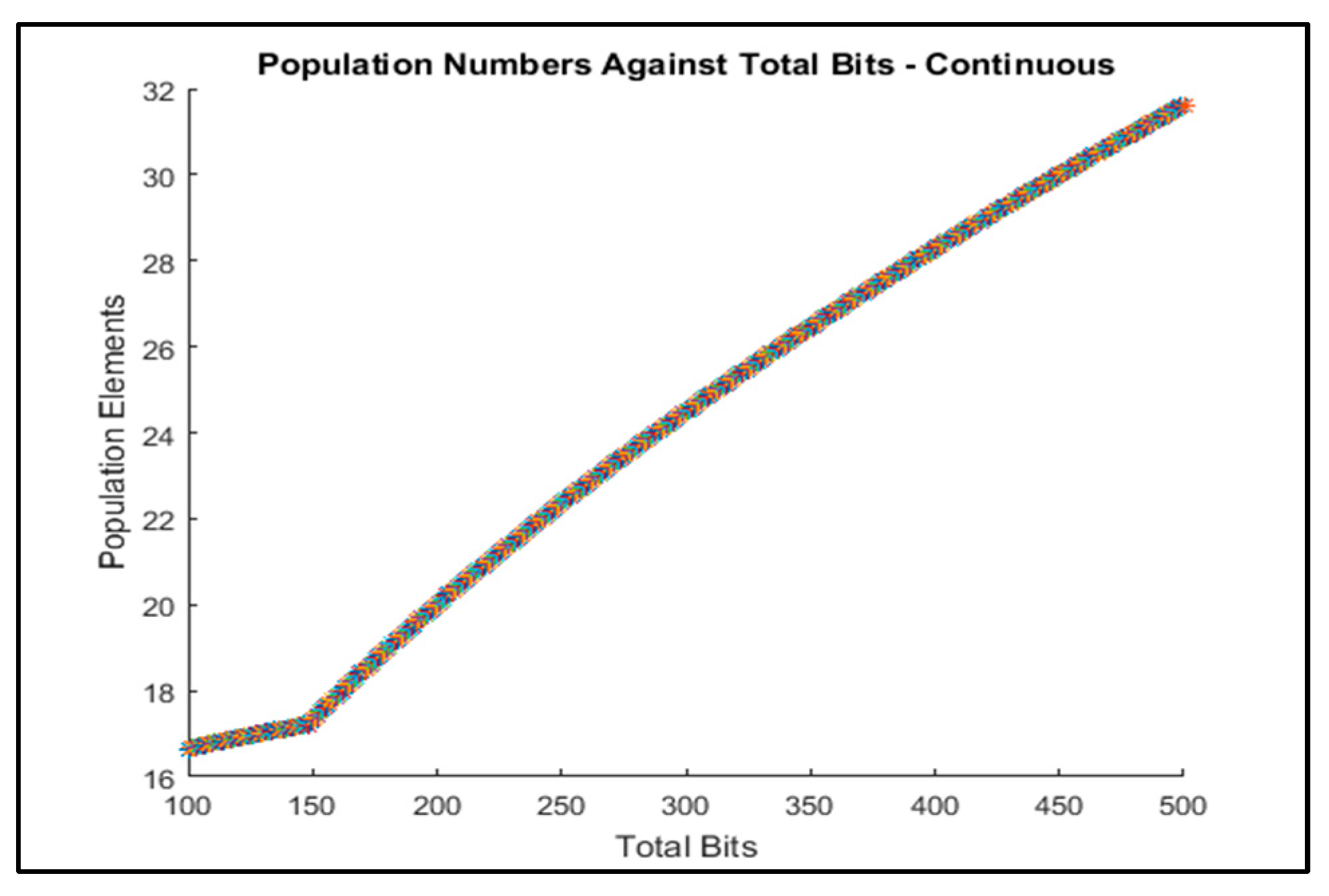

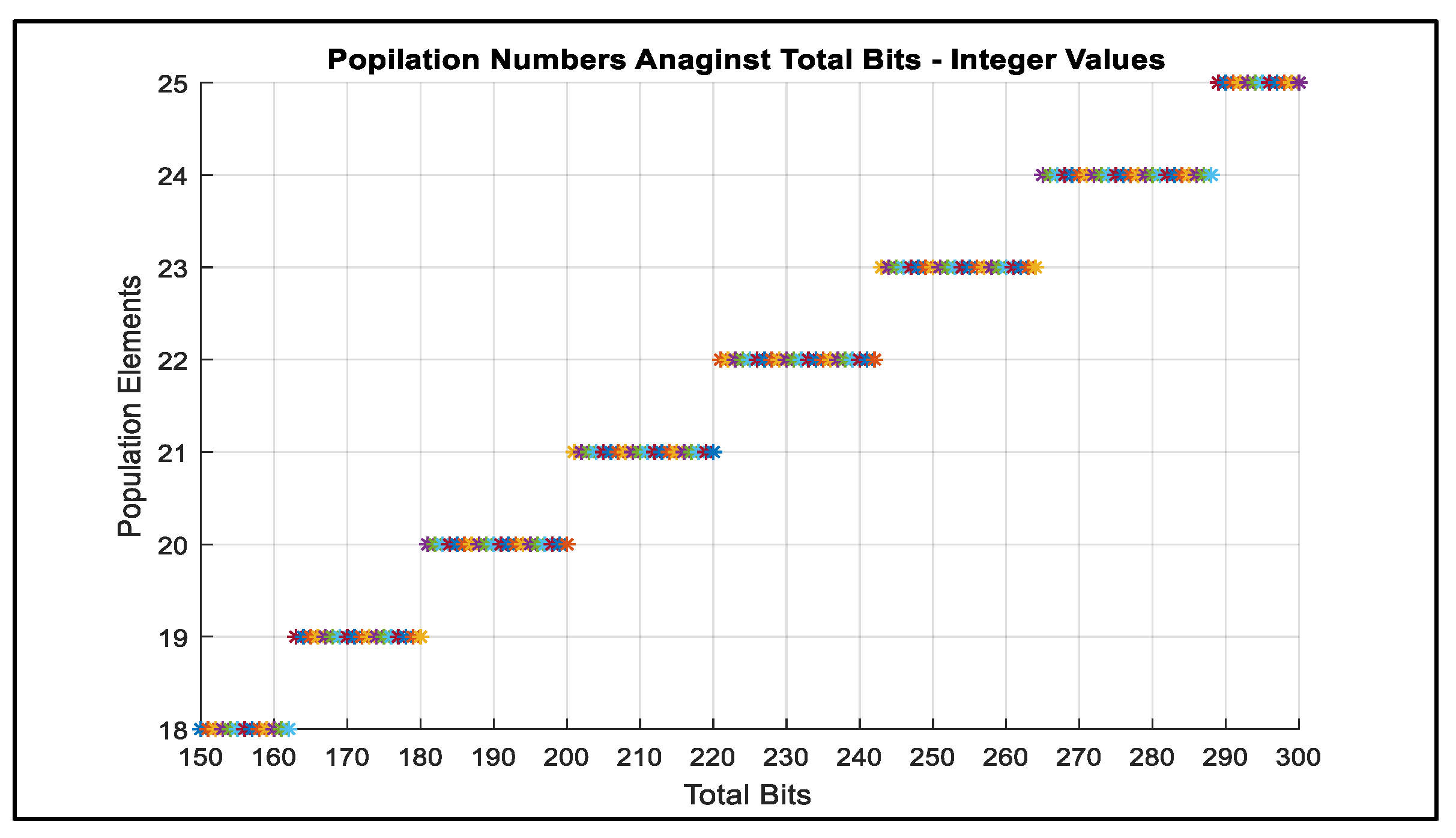

2.2. Population Size

2.3. Mutation Rate

2.4. Generation Number

2.5. Elitism

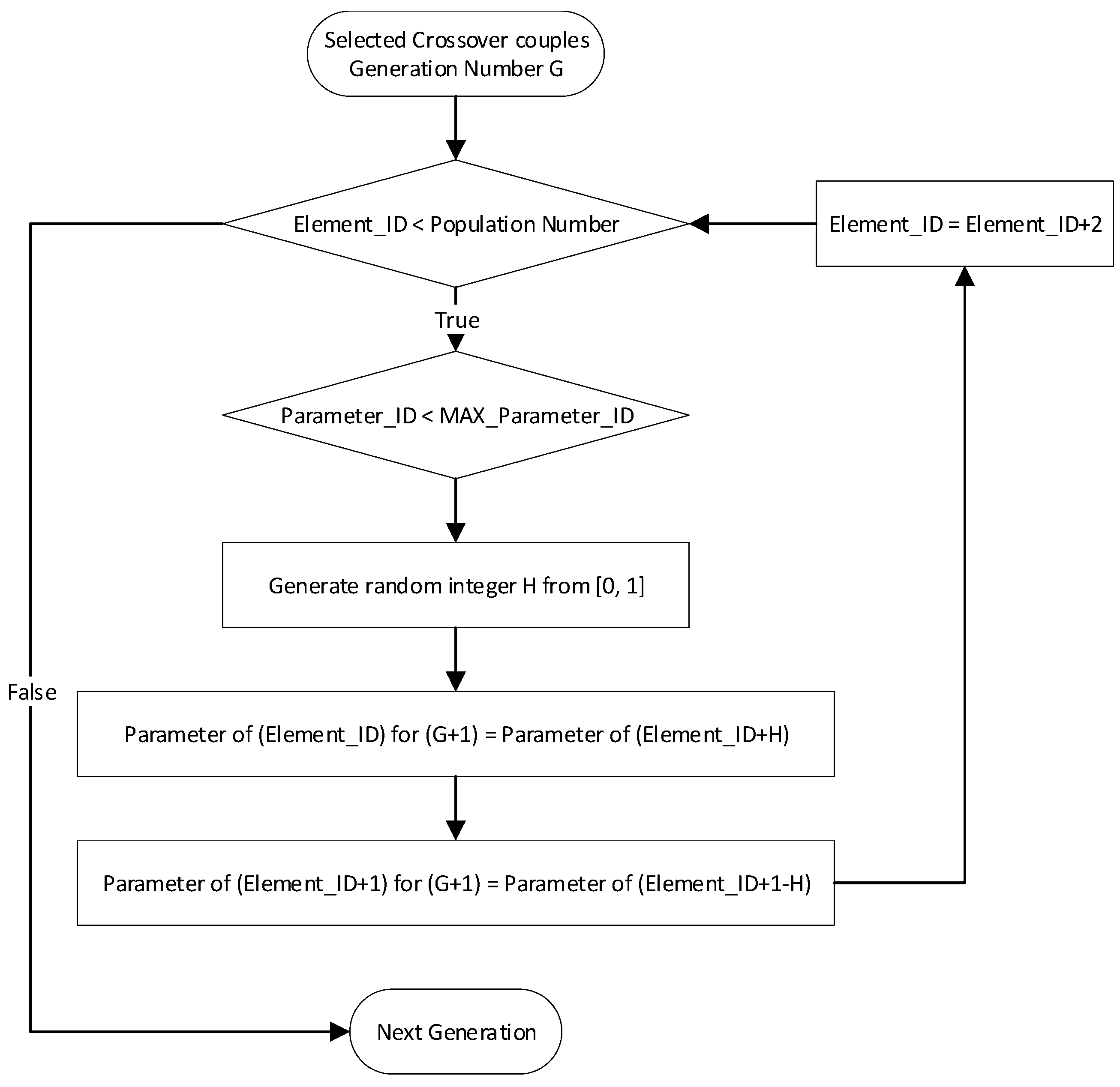

2.6. Crossover Method

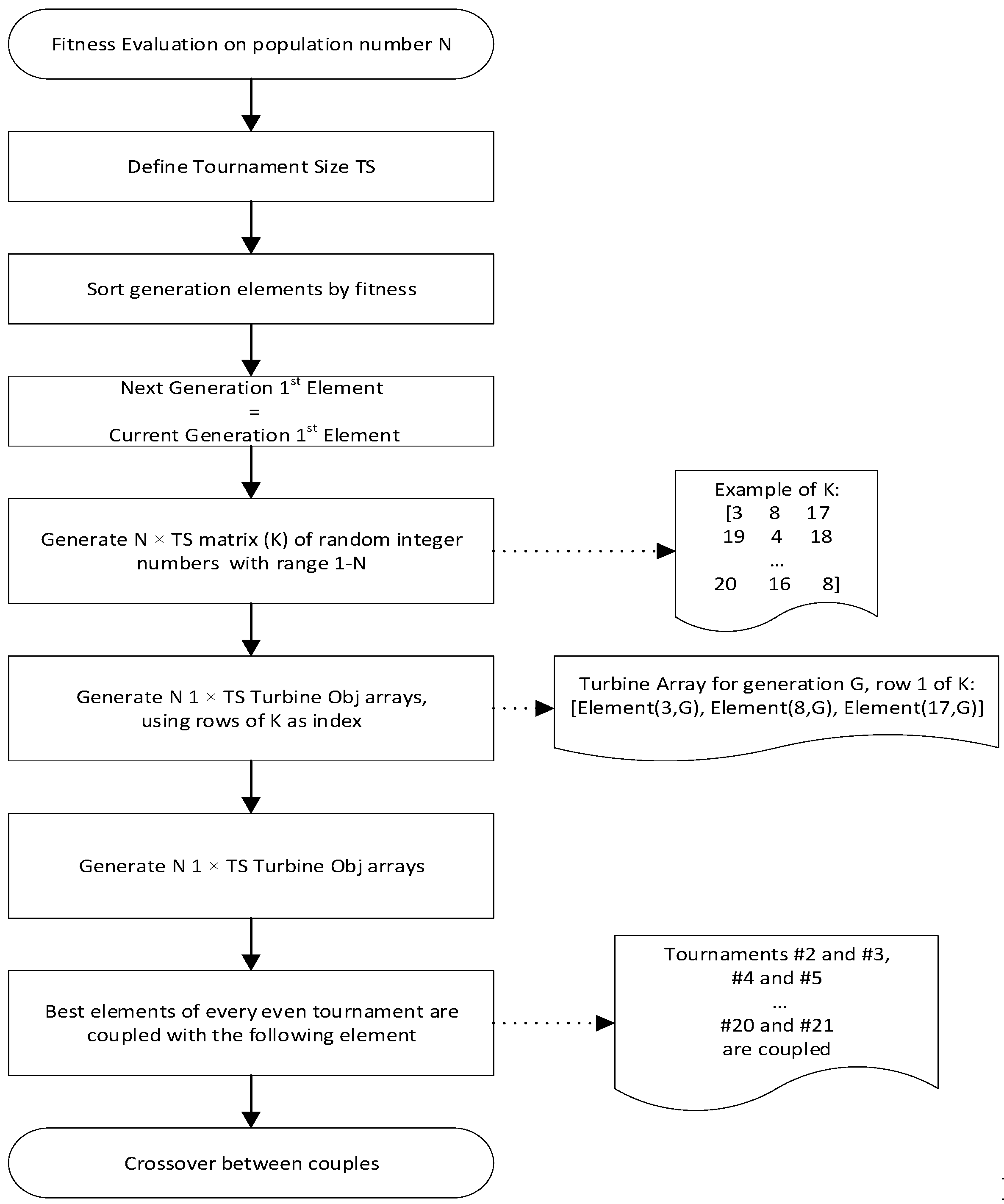

2.7. Parent Selection

2.8. Fitness Function

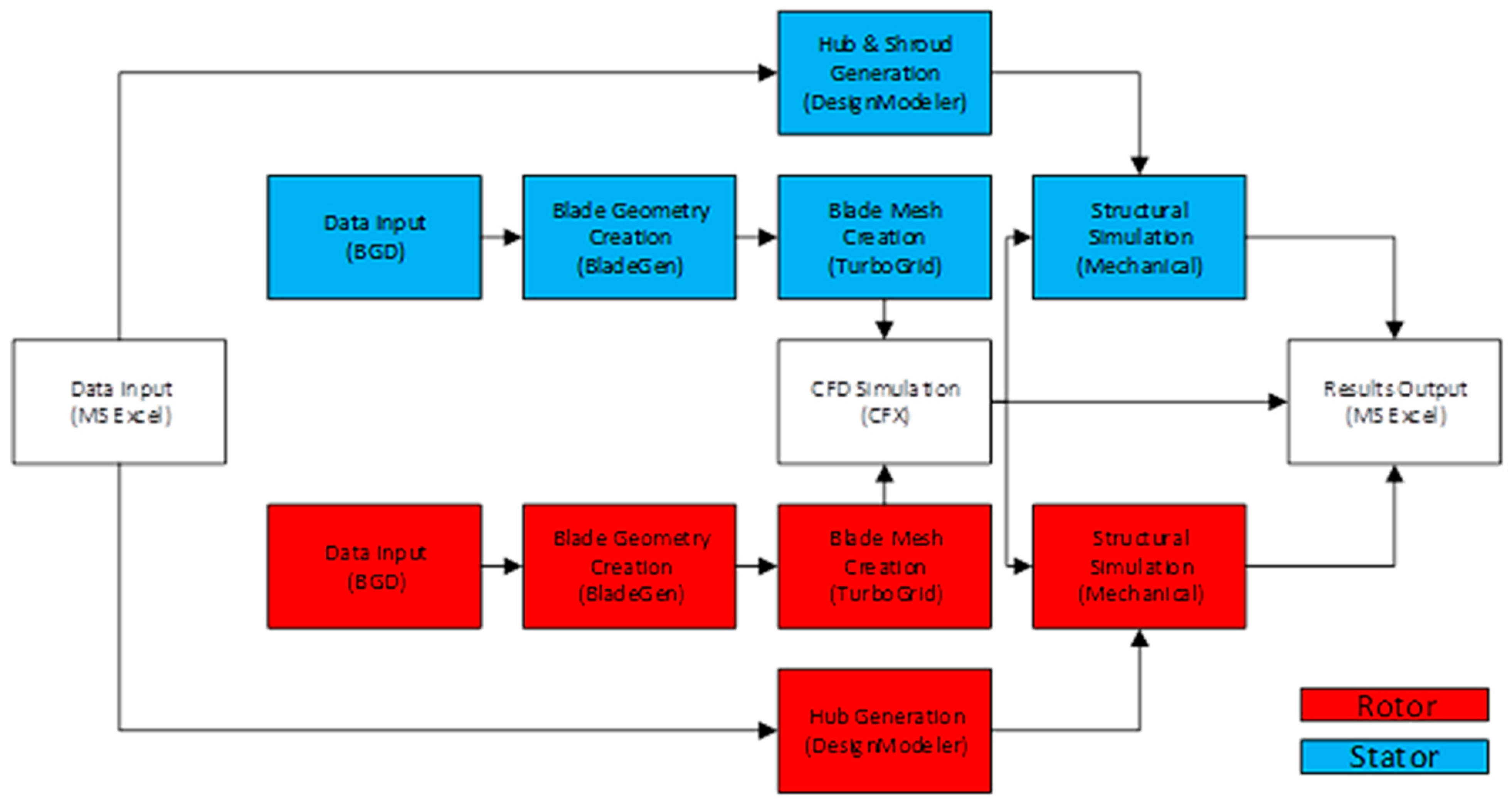

3. Numerical Modelling Setup

- Excel—Data interface tool between MATLAB and ANSYS Workbench;

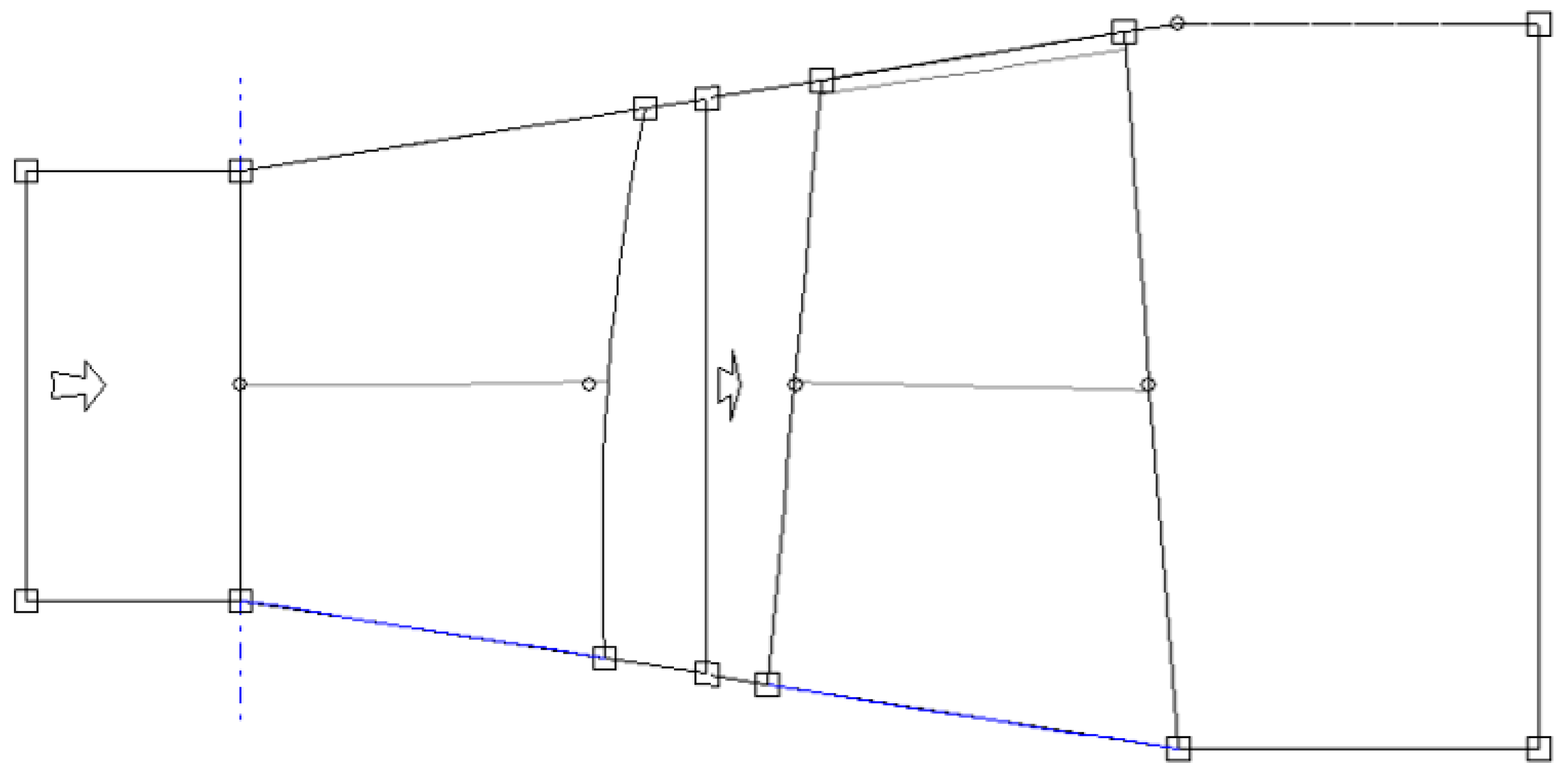

- BladeGen—Blade 3D geometry creation;

- TurboGrid—Generation of blade meshes for CFD analysis;

- CFX—Fluid analysis;

- Mechanical—Structural analysis.

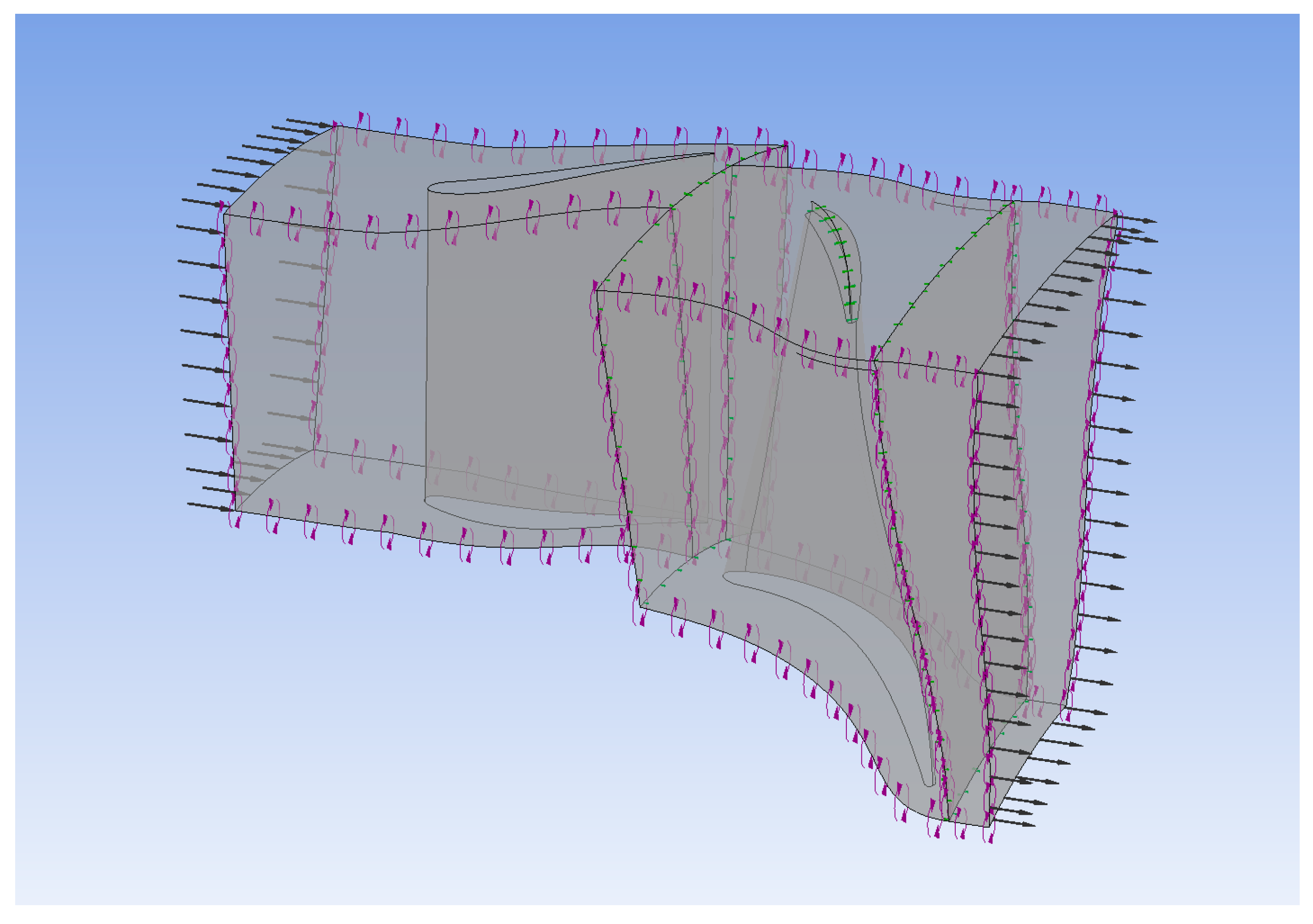

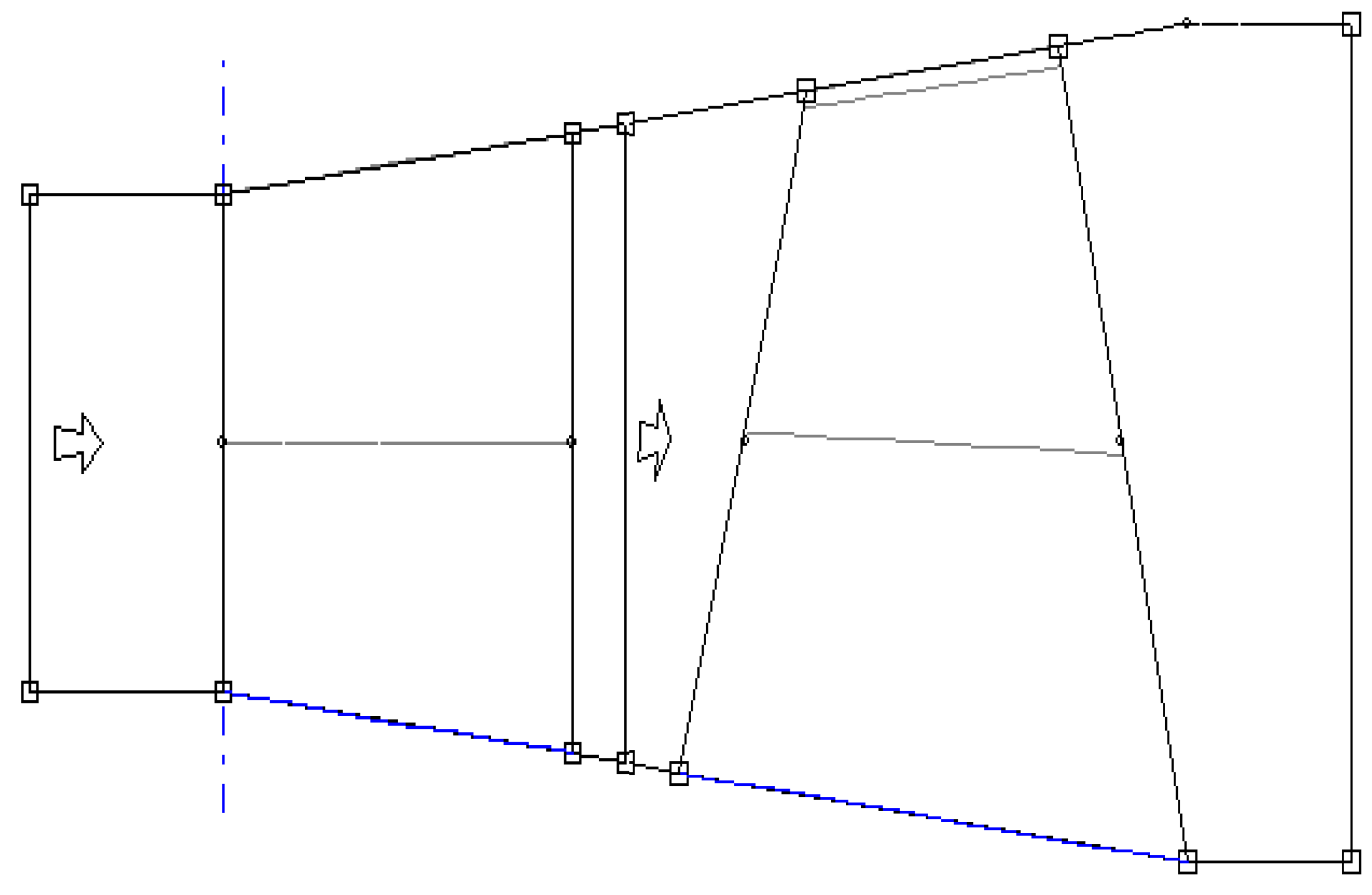

3.1. Computational Fluid Dynamics (CFD)

- The fluid is modelled as air (ideal gas).

- The k-ω shear stress transport (SST) turbulence model was chosen. It uses an automatic wall treatment that switches between a wall function and a low-Re formulation depending on the near-wall mesh resolution.

- The stator-rotor and rotor-outlet interfaces are modelled as stage (mixing-plane) interfaces.

- Interfaces between stator blades and interfaces between rotor blades are set as periodic.

- The meshes were generated in ANSYS TurboGrid and comprise a total of 267,089 nodes. A previous investigation found this resolution acceptable for accuracy.

- Stator blade average y+: 12.2053.

- Rotor blade average y+: 9.8685.

3.2. Finite Element Analysis (FEA)

- Temperature-dependent properties were implemented in the material model using the manufacturer's data [23].

- The fluid-solid interaction (C) was added to the boundary conditions of each FEA model, importing the temperatures and pressures across the blade surfaces as calculated by the CFD simulation under design point boundary conditions.

- Both blades were given a cylindrical support (B) applied to the bottom surface of the hub, as shown below. The stator was fixed in the tangential direction; the rotor was fixed in the radial and axial directions.

- A rotational velocity of 16,635 rad/s was applied to the rotor (A).

- A displacement boundary condition (A) of 0 mm in the z direction was applied to the front surface of the stator hub to simulate a connection to the volute.

- The Coriolis effect was included in the rotor analysis.
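As a quick consistency check, the rotational velocity applied to the rotor can be recovered from the rotational speed listed in the boundary conditions (158,850 rpm). The sketch below is illustrative only; the function name is an assumption and is not part of the authors' toolchain.

```python
import math


def rpm_to_rad_per_s(rpm: float) -> float:
    """Convert revolutions per minute to radians per second."""
    return rpm * 2.0 * math.pi / 60.0


# Rotational speed from the CFD boundary conditions table.
omega = rpm_to_rad_per_s(158_850)
print(f"{omega:.0f} rad/s")  # prints "16635 rad/s"
```

The result agrees with the 16,635 rad/s applied in the structural model, so the CFD and FEA setups use the same operating point.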

3.3. Algorithm Computational Cost

4. Results and Discussion

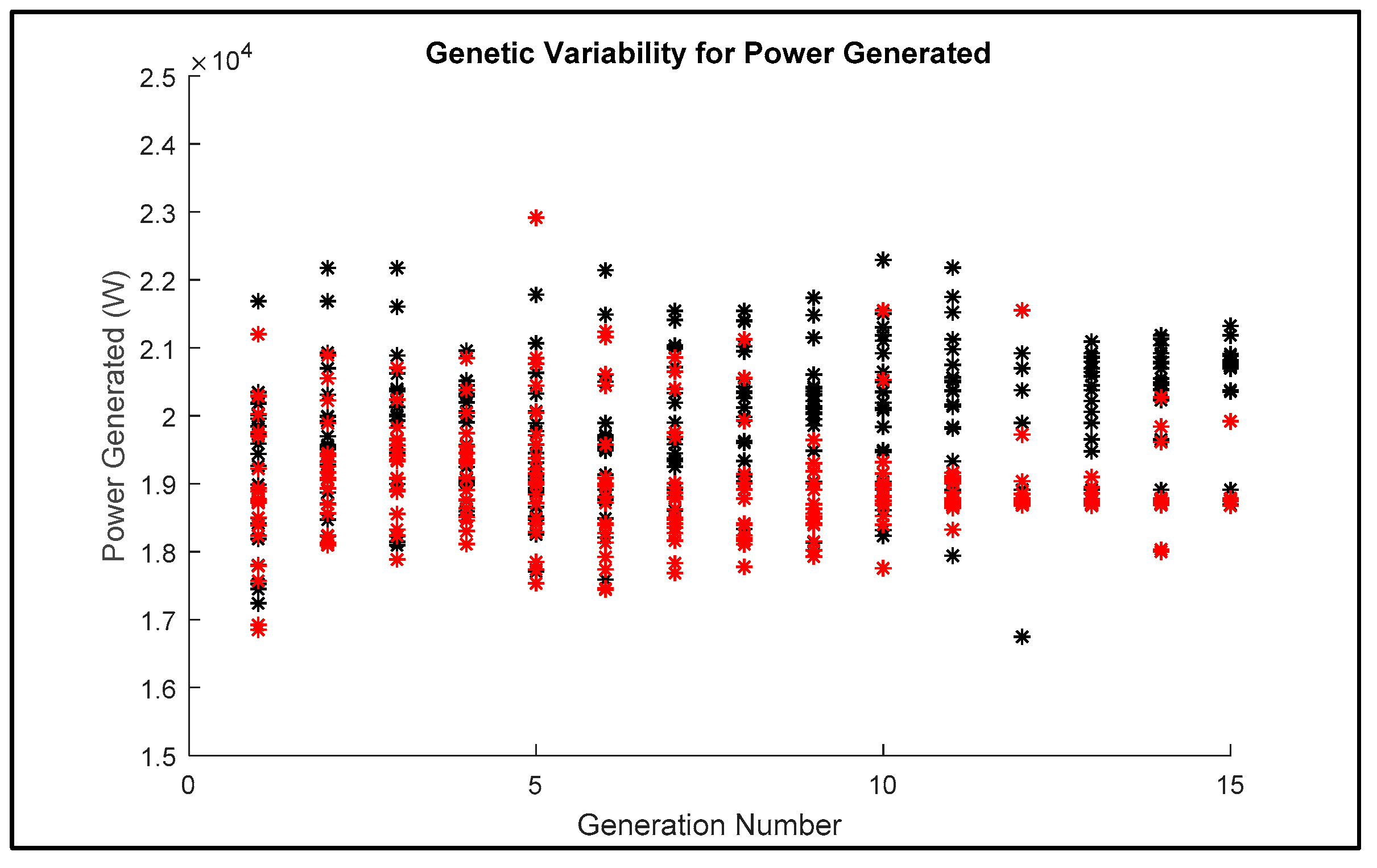

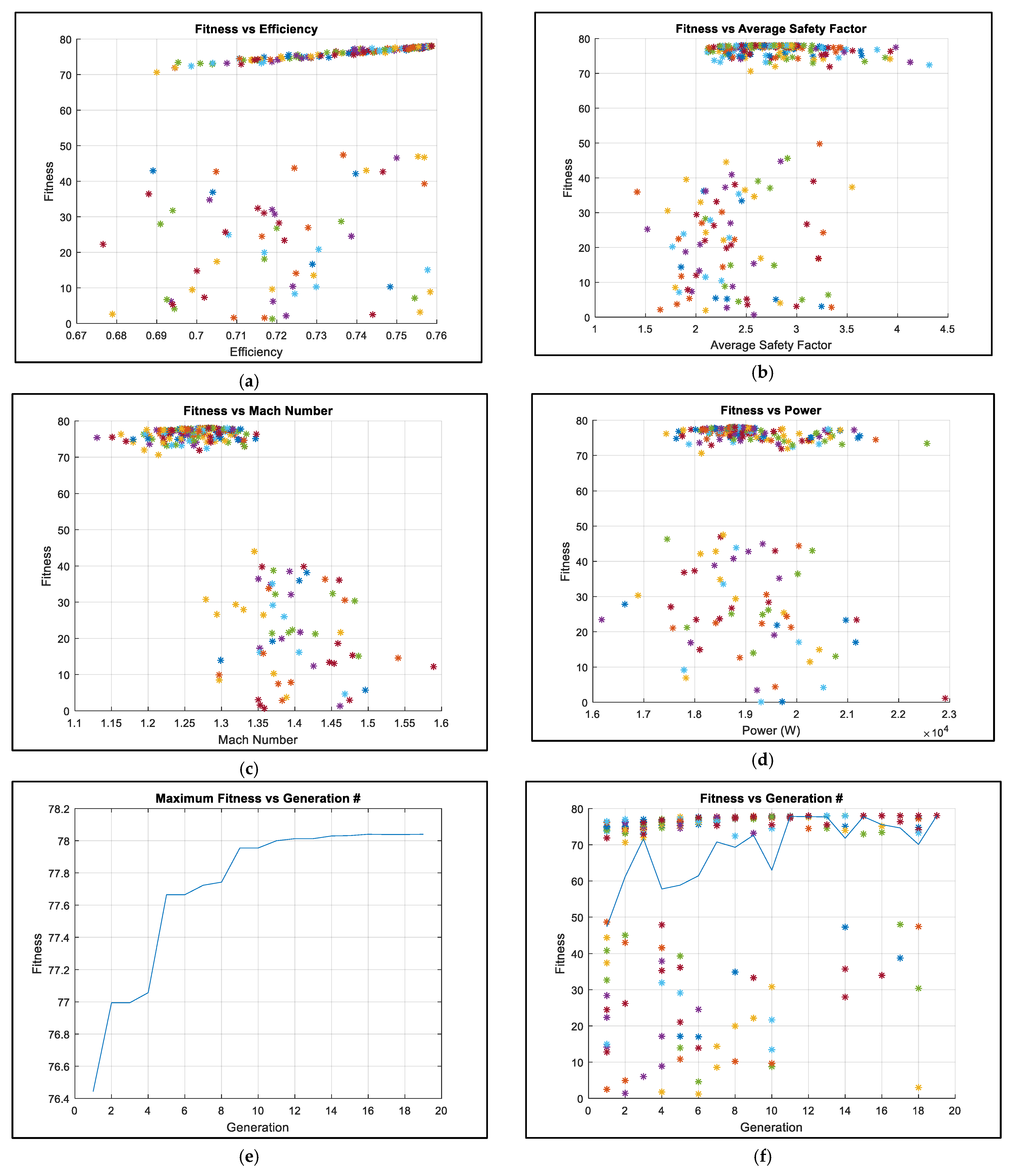

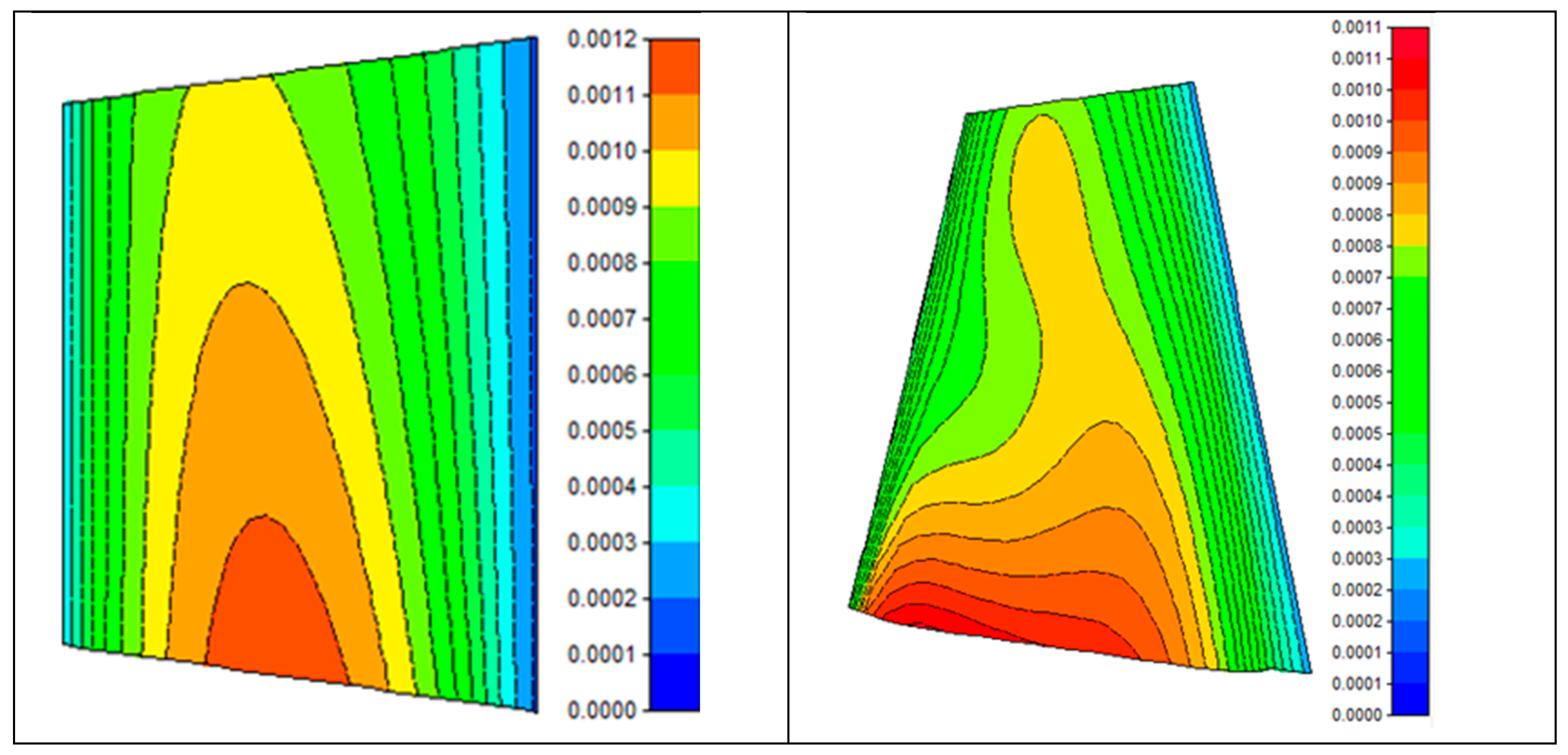

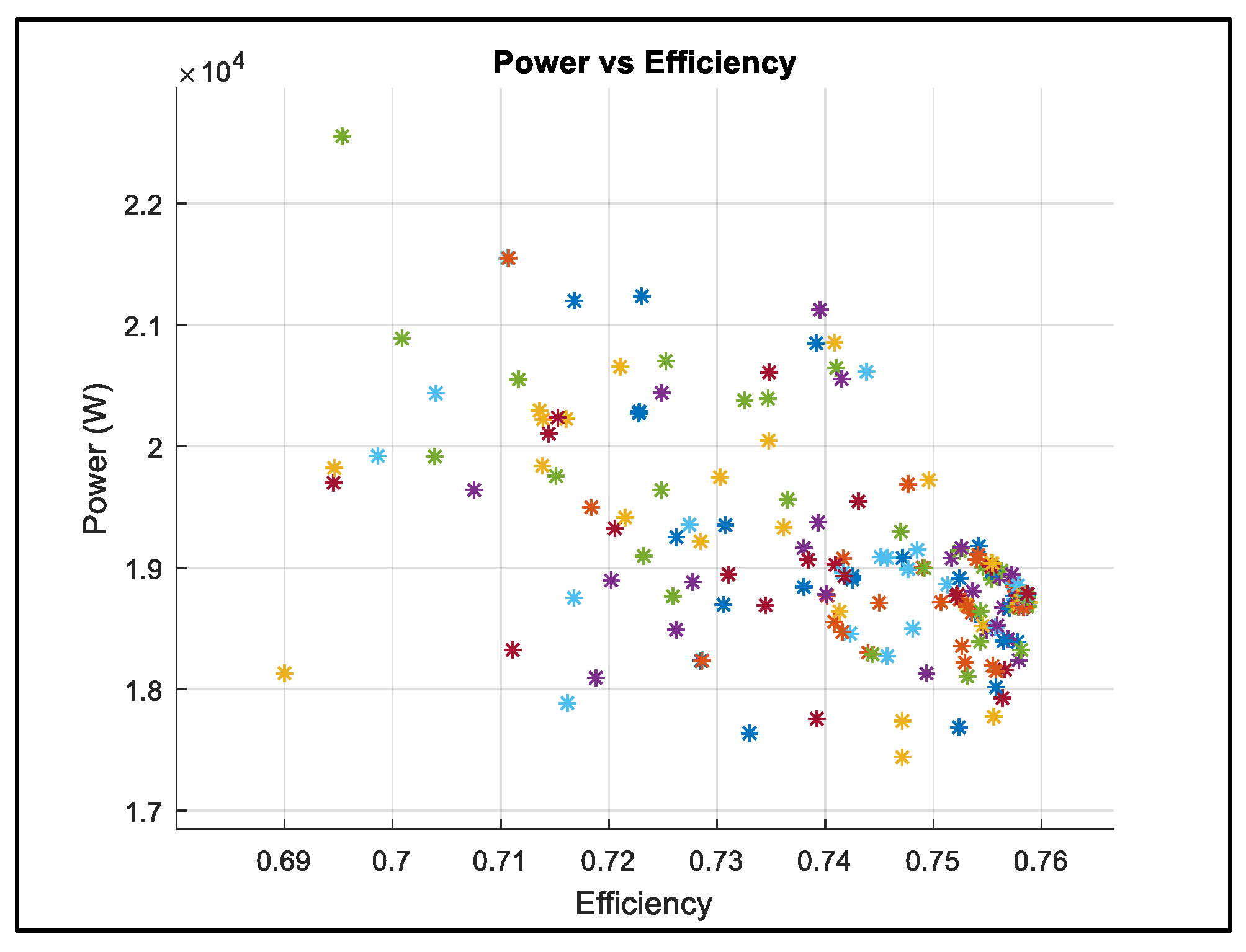

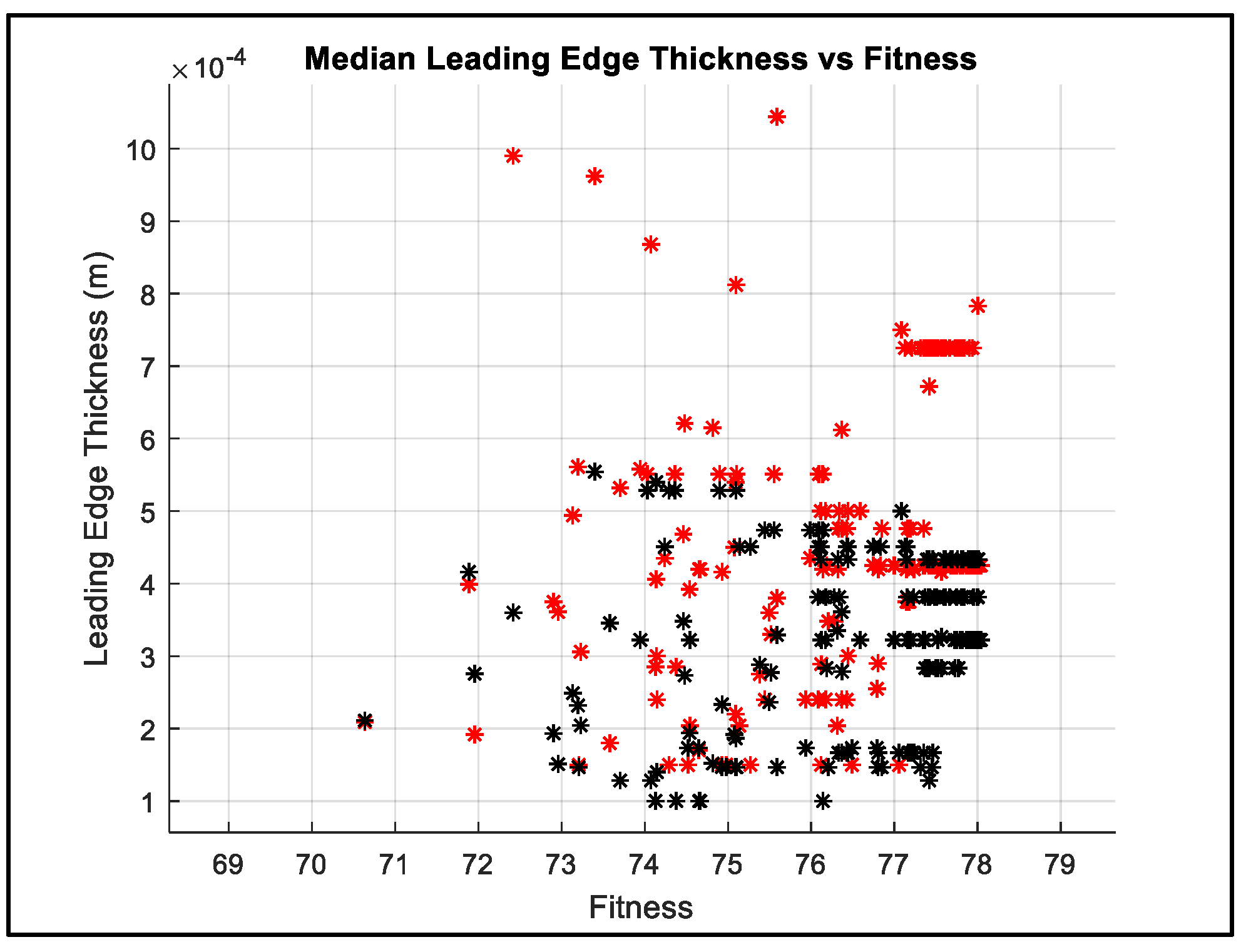

4.1. Algorithm Design Exploration

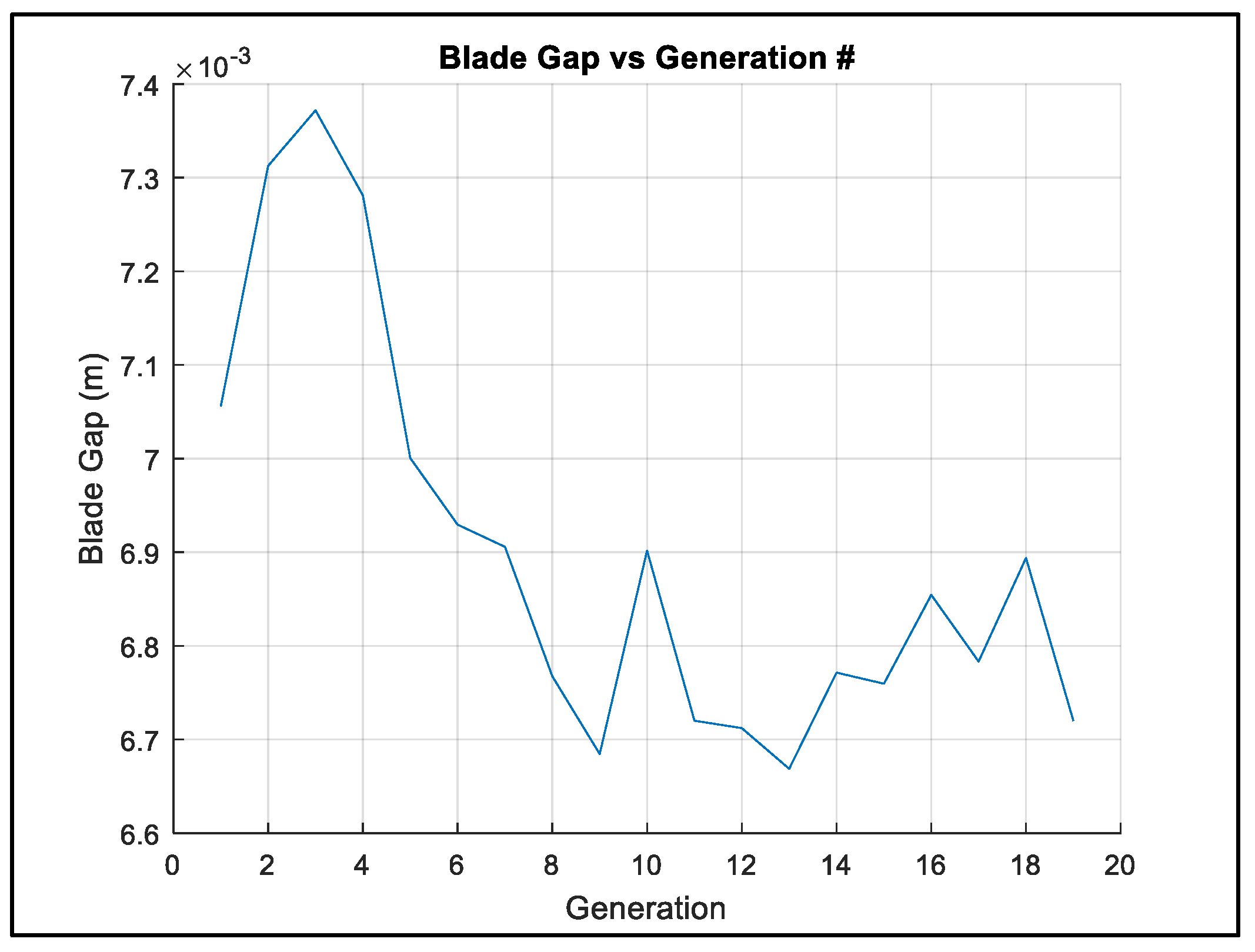

4.2. Parameter Evolutionary Behaviour

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

Nomenclature

| Quantity | Symbol |
|---|---|
| Bit size of every chromosome | |
| Number of Generations | |
| Population number | |
| Probability of a bit having the same value throughout the genetic pool | |
| Mutation Rate | |
| Chosen Tournament Size | |

Abbreviations

| Abbreviation | Definition |
|---|---|
| CFD | Computational Fluid Dynamics |
| FEA | Finite Element Analysis |
| GA | Genetic Algorithm |
| MDO | Multidisciplinary Design Optimisation |

References

1. Walsh, G.; Berchiolli, M.; Guarda, G.; Pesyridis, A. Turbocharger Axial Turbines for High Transient Response, Part 1: A Preliminary Design Methodology. Appl. Sci. 2019, 9, 838.

2. Poloni, C. Hybrid GA for multi objective aerodynamic shape optimization. In Genetic Algorithms in Engineering and Computer Science; John Wiley & Sons: New York, NY, USA, 1995; pp. 397–415.

3. Nemec, M.; Zingg, D.W.; Pulliam, T.H. Multipoint and Multi-Objective Aerodynamic Shape Optimization. AIAA J. 2004, 42, 1057–1065.

4. Sobieszczanski-Sobieski, J.; Haftka, R. Multidisciplinary aerospace design optimization: Survey of recent developments. Struct. Optim. 1997, 14, 1–23.

5. Pritchard, L. An Eleven Parameter Axial Turbine Airfoil Geometry Model. In Proceedings of the ASME 1985 International Gas Turbine Conference and Exhibit, Houston, TX, USA, 18–21 March 1985.

6. Trigg, M.; Tubby, G.; Sheard, A. Automatic Genetic Optimization Approach to Two-Dimensional Blade Profile Design for Steam Turbines. J. Turbomach. 1999, 121, 11–17.

7. Öksüz, Ö.; Akmandor, İ.S. Multi-Objective Aerodynamic Optimization of Axial Turbine Blades Using a Novel Multilevel Genetic Algorithm. J. Turbomach. 2010, 132, 1–14.

8. Fonseca, C.M.; Fleming, P.J. An Overview of Evolutionary Algorithms in Multiobjective Optimization. Evol. Comput. 1995, 3, 1–16.

9. Google, Inc. “Google Scholar”. 2019. Available online: https://scholar.google.co.uk/scholar?as_vis=1&q=genetic+algorithm+optimization&hl=en&as_sdt=1,5&as_ylo=2018 (accessed on 3 March 2019).

10. Sudholt, D. The Benefits of Population Diversity in Evolutionary Algorithms: A Survey of Rigorous Runtime Analyses; Cornell University: Ithaca, NY, USA, 2018.

11. Qu, B.; Zhu, Y.; Jiao, Y.; Wu, M.; Suganthan, P.; Liang, J. A survey on multi-objective evolutionary algorithms for the solution of the environmental/economic dispatch problems. Swarm Evol. Comput. 2017, 38, 1–11.

12. Chan, C.; Bai, H.; He, D. Blade shape optimization of the Savonius wind turbine using a genetic algorithm. Appl. Energy 2018, 213, 148–157.

13. Zhang, X.; Song, X.; Qiu, W.; Yuan, Z.; You, Y.; Deng, N. Multi-objective optimization of Tension Leg Platform using evolutionary algorithm based on surrogate model. Ocean Eng. 2018, 148, 612–631.

14. Harris Corporation Computer Systems Divisions. High Performance Super-Minicomputers; Harris Corporation: Fort Lauderdale, FL, USA, 1981.

15. Goel, S.; Cofer IV, J.I.; Singh, H. Turbine Airfoil Design Optimization. In Proceedings of the ASME 1996 International Gas Turbine and Aeroengine Congress and Exhibition, Birmingham, UK, 10–13 June 1996.

16. Cravero, C.; Dawes, W.N. Throughflow Design Using an Automatic Optimisation Strategy. In Proceedings of the ASME 1997 International Gas Turbine and Aeroengine Congress and Exhibition, Orlando, FL, USA, 2–5 June 1997.

17. Urdhwareshe, A. Object-Oriented Programming and its Concepts. Int. J. Innov. Sci. Res. 2016, 26, 1–6.

18. Gutowski, M.W. Biology, Physics, Small Worlds and Genetic Algorithms. In Leading Edge Computer Science Research; Shannon, S., Ed.; Nova Science Publishers: Hauppauge, NY, USA, 2005; pp. 165–218.

19. Alajmi, A.; Wright, J. Selecting the most efficient genetic algorithm sets in solving unconstrained building optimization problem. Int. J. Sustain. Built Environ. 2014, 3, 18–26.

20. Coley, D. Improving the Algorithm. In An Introduction to Genetic Algorithms for Scientists and Engineers; World Scientific: Singapore, 1999.

21. Chawdhry, P.K.; Roy, R.; Pant, R.K. Soft Computing in Engineering Design and Manufacturing, 1st ed.; Springer: Berlin/Heidelberg, Germany, 1998.

22. Miller, B.L.; Goldberg, D.E. Genetic Algorithms, Tournament Selection, and the Effects of Noise. Complex Syst. 1995, 9, 193–212.

23. The International Nickel Company. Alloy IN-738 Technical Data; The International Nickel Company: New York, NY, USA, 2002.

| Parameter | Constraint |
|---|---|
| Power (W) | >17,000 |
| Rotor Safety Factor | >2 |
| Stator Safety Factor | >2 |
| Global Maximum Mach Number | <1.35 |
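The four constraints above can be read as a simple feasibility test on each evaluated design. The following is a minimal sketch of such a test, not the authors' implementation: the `DesignResult` class and `violated_constraints` function are hypothetical names, and how the original algorithm treats an infeasible design (rejection, penalty, or otherwise) is not specified here. The Mach number values in the usage lines are placeholders, since no per-design Mach number is listed in the tables.

```python
from dataclasses import dataclass


@dataclass
class DesignResult:
    """Outputs of one CFD/FEA evaluation (hypothetical container)."""
    power_w: float
    rotor_safety_factor: float
    stator_safety_factor: float
    max_mach: float


def violated_constraints(r: DesignResult) -> list[str]:
    """Return the names of the constraints that the design fails."""
    checks = {
        "power": r.power_w > 17_000,
        "rotor_safety_factor": r.rotor_safety_factor > 2,
        "stator_safety_factor": r.stator_safety_factor > 2,
        "max_mach": r.max_mach < 1.35,
    }
    return [name for name, ok in checks.items() if not ok]


# Power and safety factors from the results table; max_mach is a placeholder.
preliminary = DesignResult(20_300, 1.78, 1.87, max_mach=1.2)
optimised = DesignResult(18_790, 2.38, 2.78, max_mach=1.2)

print(violated_constraints(preliminary))  # both safety factors fail
print(violated_constraints(optimised))    # no violations
```

Under these assumptions, the preliminary design fails both safety-factor constraints while the optimised design satisfies all four.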

| Boundary Conditions | Value |
|---|---|
| Inlet Mass Flow Rate | 0.127238 kg/s |
| Inlet Total Temperature | 1168.55 K |
| Inlet Turbulence Intensity | 5% |
| Outlet Static Pressure | 1.30352 bar |
| Rotational Speed | 158,850 rpm |

| Design Evaluation Phase | Time (s) |
|---|---|
| MATLAB script and Ansys Start-up | 92 |
| File Reading and Importing | 75 |
| CFD Analysis | 328 |
| FSI Analysis (including CFD results importing) | 207 |
| Total | 702 |
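The per-design timings above add up to 702 s, which makes it straightforward to estimate the wall-clock cost of a full run. The sketch below is a rough estimate under stated assumptions: designs are evaluated one after another, every member of every generation is re-evaluated, and the population size and generation count in the usage line are placeholder values, not the settings used in this work.

```python
# Phase timings in seconds, taken from the table above.
phase_times_s = {
    "MATLAB script and Ansys start-up": 92,
    "File reading and importing": 75,
    "CFD analysis": 328,
    "FSI analysis (including CFD results importing)": 207,
}

seconds_per_design = sum(phase_times_s.values())


def ga_wall_clock_hours(population_size: int, generations: int,
                        seconds_per_eval: float = seconds_per_design) -> float:
    """Serial run time in hours, assuming every design is evaluated once."""
    return population_size * generations * seconds_per_eval / 3600.0


print(seconds_per_design)           # prints 702
print(ga_wall_clock_hours(20, 10))  # placeholder sizes; prints 39.0
```

Because the CFD phase accounts for almost half of each evaluation, any reduction in evaluations per generation has a direct effect on total run time.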

| | Preliminary Design | Optimised Design | Percentage Change |
|---|---|---|---|
| Efficiency (T-s) | 73.98% | 75.87% | +2.55% |
| Power (kW) | 20.3 | 18.79 | −7.44% |
| Rotor Safety Factor | 1.78 | 2.38 | +33.71% |
| Stator Safety Factor | 1.87 | 2.78 | +48.66% |
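The percentage changes in this table are relative to the preliminary design value, which matters for the efficiency row: the +2.55% is a relative change, corresponding to a gain of 1.89 percentage points. A short sketch reproducing the column (the helper name `percent_change` is an assumption used for illustration):

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


# (preliminary, optimised) values from the table above.
results = {
    "Efficiency (T-s) [%]": (73.98, 75.87),
    "Power [kW]": (20.3, 18.79),
    "Rotor Safety Factor": (1.78, 2.38),
    "Stator Safety Factor": (1.87, 2.78),
}

changes = {name: round(percent_change(b, a), 2) for name, (b, a) in results.items()}
for name, change in changes.items():
    print(f"{name}: {change:+.2f}%")

# Absolute efficiency gain in percentage points, for comparison.
efficiency_gain_points = round(75.87 - 73.98, 2)
```

The same formula applies to the geometric parameters in the percentage change table for the stator and rotor sections.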

Preliminary Design

| Parameter | Stator Hub | Stator Mean | Stator Tip | Rotor Hub | Rotor Mean | Rotor Tip |
|---|---|---|---|---|---|---|
| Axial Chord (mm) | 6.43 | 6.43 | 6.43 | 9.36 | 6.93 | 4.63 |
| Stagger Angle (°) | 48.96 | 48.96 | 48.96 | 41.64 | 56.45 | 64.24 |
| Chord (mm) | 9.79 | 9.79 | 9.79 | 12.52 | 12.54 | 10.65 |
| LE Wedge (°) | 26 | 26 | 26 | 24 | 24 | 24 |
| TE Wedge (°) | 4 | 4 | 4 | 2 | 3 | 2 |
| LE Thickness (mm) | 0.35 | 0.35 | 0.35 | 0.62 | 0.45 | 0.38 |
| TE Thickness (mm) | 0.17 | 0.17 | 0.17 | 0.22 | 0.22 | 0.22 |

Optimised Design

| Parameter | Stator Hub | Stator Mean | Stator Tip | Rotor Hub | Rotor Mean | Rotor Tip |
|---|---|---|---|---|---|---|
| Axial Chord (mm) | 6.06 | 5.81 | 6.72 | 6.81 | 5.87 | 5.03 |
| Stagger Angle (°) | 45.39 | 46.35 | 52.3771 | 39.98 | 52.24 | 63.05 |
| Chord (mm) | 8.63 | 8.42 | 11.01 | 8.89 | 9.59 | 11.10 |
| LE Wedge (°) | 28 | 27 | 28 | 27 | 27 | 27 |
| TE Wedge (°) | 3.43 | 5.37 | 5.85 | 4.88 | 5.37 | 5.37 |
| LE Thickness (mm) | 0.39 | 0.32 | 0.23 | 0.75 | 0.43 | 0.43 |
| TE Thickness (mm) | 0.22 | 0.20 | 0.22 | 0.23 | 0.19 | 0.16 |

Percentage Change (relative to the preliminary design)

| Parameter | Stator Hub | Stator Mean | Stator Tip | Rotor Hub | Rotor Mean | Rotor Tip |
|---|---|---|---|---|---|---|
| Axial Chord | −5.75% | −9.64% | 4.51% | −27.24% | −15.30% | 8.64% |
| Stagger Angle | −7.29% | −5.33% | 6.98% | −3.99% | −7.46% | −1.85% |
| Chord | −11.85% | −13.99% | 12.46% | −28.99% | −23.52% | 4.23% |
| LE Wedge | 7.69% | 3.85% | 7.69% | 12.50% | 12.50% | 12.50% |
| TE Wedge | −14.25% | 34.25% | 46.25% | 144.00% | 79.00% | 168.50% |
| LE Thickness | 11.43% | −8.57% | −34.29% | 20.97% | −4.44% | 13.16% |
| TE Thickness | 29.41% | 17.65% | 29.41% | 4.55% | −13.64% | −27.27% |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Berchiolli, M.; Guarda, G.; Walsh, G.; Pesyridis, A. Turbocharger Axial Turbines for High Transient Response, Part 2: Genetic Algorithm Development for Axial Turbine Optimisation. Appl. Sci. 2019, 9, 2679. https://doi.org/10.3390/app9132679
