1. Introduction

A micro-expression is a brief, involuntary, external manifestation of a real emotion that can be exploited to determine the “real” behaviors and feelings of an individual [1]. Micro-expressions were first discovered by the psychologists Ekman and Friesen in 1969 [2]. Compared with ordinary facial expressions, micro-expressions have three significant characteristics: short duration (generally 1/25 to 1/3 s), low intensity, and (usually) local movement [3]. Because of these characteristics, micro-expressions are very difficult for human beings to detect [4]. Only highly trained individuals may be able to distinguish them, and even with proper training their recognition accuracy generally falls below 50% [5]. Nevertheless, the ability to detect and recognize micro-expressions is crucial in many areas, such as psychological and clinical diagnosis, police interrogation, and national security [6]. A substantial body of research [7,8,9,10,11,12,13] has therefore established computer-aided techniques to recognize micro-expressions automatically.

According to published results [8,9,10], most experiments extract features from the whole facial region, which generates many redundant features and greatly reduces recognition efficiency. Psychologists have also found that micro-expressions tend to be partial movements that do not appear in the upper and lower portions of the face simultaneously [5] and are generally concentrated near the eyes, nose, and mouth. Ekman proposed that a facial expression represents slight changes in several discrete facial motion units [5]. He also established the Facial Action Coding System (FACS) [14] to describe the relationship between facial muscle changes and emotional states. This system illustrates that facial expressions are associated with subtle changes in several action units (AUs). Compared with ordinary expressions, micro-expressions are extremely brief and involve fewer action units. For example, even without the involvement of the eyebrows and mouth, a person can express surprise merely by raising the upper eyelids. Later, the theory of necessary morphological patches (NMPs) for micro-expressions was established [15]. NMPs are the regions that are indispensable to a given micro-expression. For example, in all facial representations of disgust, the eyebrows need not be raised and the mouth need not be open, but the upper lip must be raised and a nasolabial sulcus must appear on both sides of the nose.

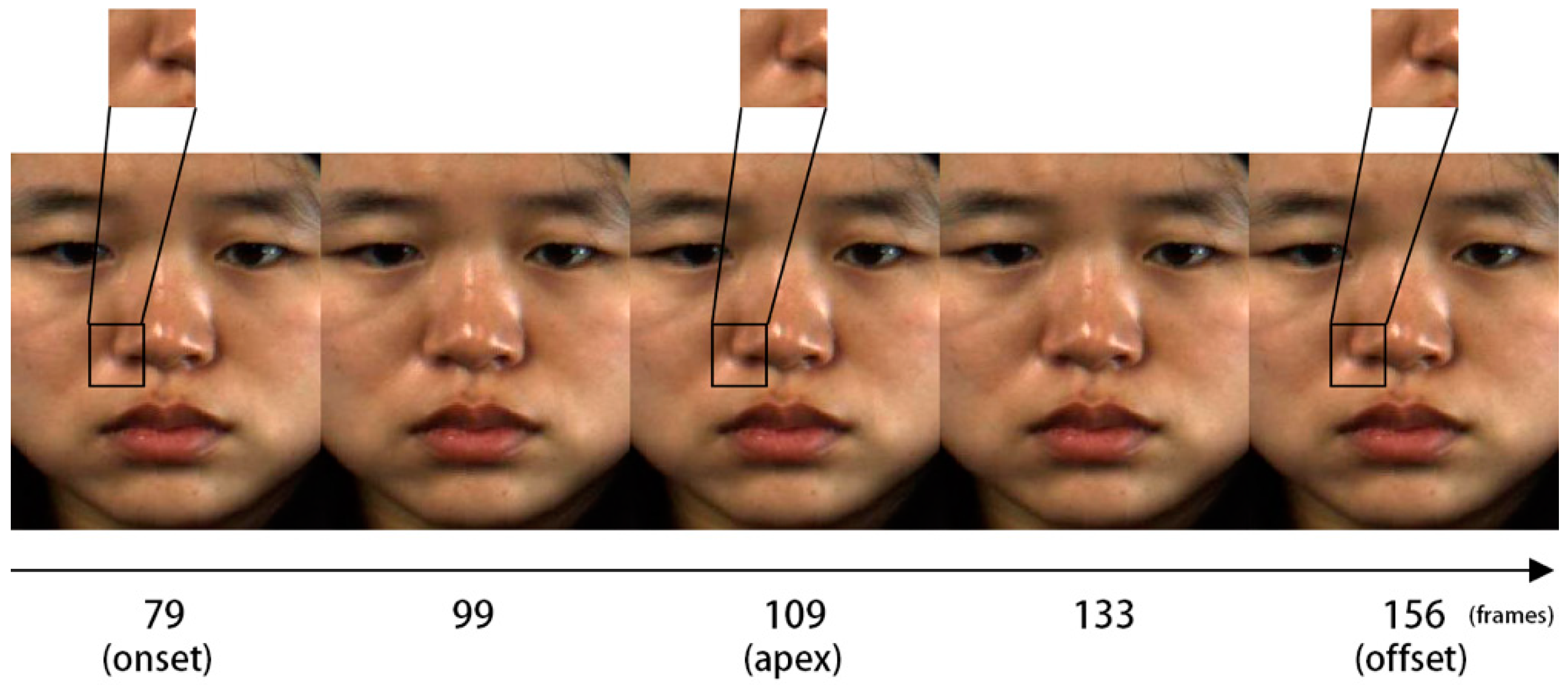

Figure 1 shows the NMPs of disgust, which are the cues used to judge whether a person is in a disgusted emotional state. Almost all emotional information of micro-expressions is concentrated in these patches; therefore, we need to separate these patches from the whole facial area.

In this paper, we aim to find the NMPs that play a key role in micro-expression recognition and to use these patches to train a classifier that identifies micro-expression sequences. Automatic facial landmark detection is the first step in this work. This technique detects the face in the video sequences and adjusts its position so that the facial region can be cut out. Face alignment is then applied to extract the facial active patches. To locate the facial active patches more accurately, we chose a 68-landmark algorithm [10]. After locating the landmarks, we calibrated the active patches of the eyebrows, eyes, nose, and mouth based on the FACS criterion and the landmarks. We then manually cut out 18 active patches, known as regions of interest (ROIs) [13], from the whole face area. Moreover, we need to extract more effective features from these active patches [16,17,18,19]. Many existing works apply optical flow [12] or Local Binary Patterns from Three Orthogonal Planes (LBP-TOP) [8] to extract features from dynamic micro-expression video sequences. The optical flow method captures the correlation between frames by computing the motion between two adjacent frames. However, the changes between adjacent frames in micro-expressions are very weak, so the algorithm does not reflect the changes in the facial active patches well. The LBP-TOP algorithm analyzes image texture in both the temporal and spatial domains. Texture is a feature that shows the spatial distribution of pixels and can convey the necessary information of a micro-expression. In addition, it can also capture the local structural information of facial images. Compared with ordinary expressions, micro-expressions call for less muscle motion to convey emotions, so the current emotional state can be evaluated by identifying only some NMPs.

To reduce the dimensionality and improve recognition efficiency, we use the Entropy-Weight method to screen the 18 active patches for the NMPs that are essential for micro-expression recognition. The concept of entropy was first introduced into information theory by Shannon; it describes the average amount of information carried by events. The entropy weight has been widely used in engineering and in social and economic fields. Its basic idea is to determine objective weights according to the variability of the indexes. In general, the smaller the information entropy of an index, the greater the variability of its values. Such an index therefore has more influence on the comprehensive evaluation, and its weight is greater. To assess the contribution of the 18 active patches to micro-expression recognition, we use the Entropy-Weight method to evaluate their weights. The Entropy-Weight method not only filters the NMPs out of the active patches but also weights these patches, which increases the discriminative ability of our algorithm. Finally, a multi-class SVM classifier is used to classify these NMPs, and the recognition rate is evaluated on the CASME II and SMIC databases.

The rest of this paper is organized as follows. The next section describes the related work on facial landmark detection, NMP selection, feature extraction, and the weighting of these necessary regions. Section 3 illustrates the particulars of the databases and discusses the experimental results in detail. Finally, Section 4 concludes the paper.

2. Methods

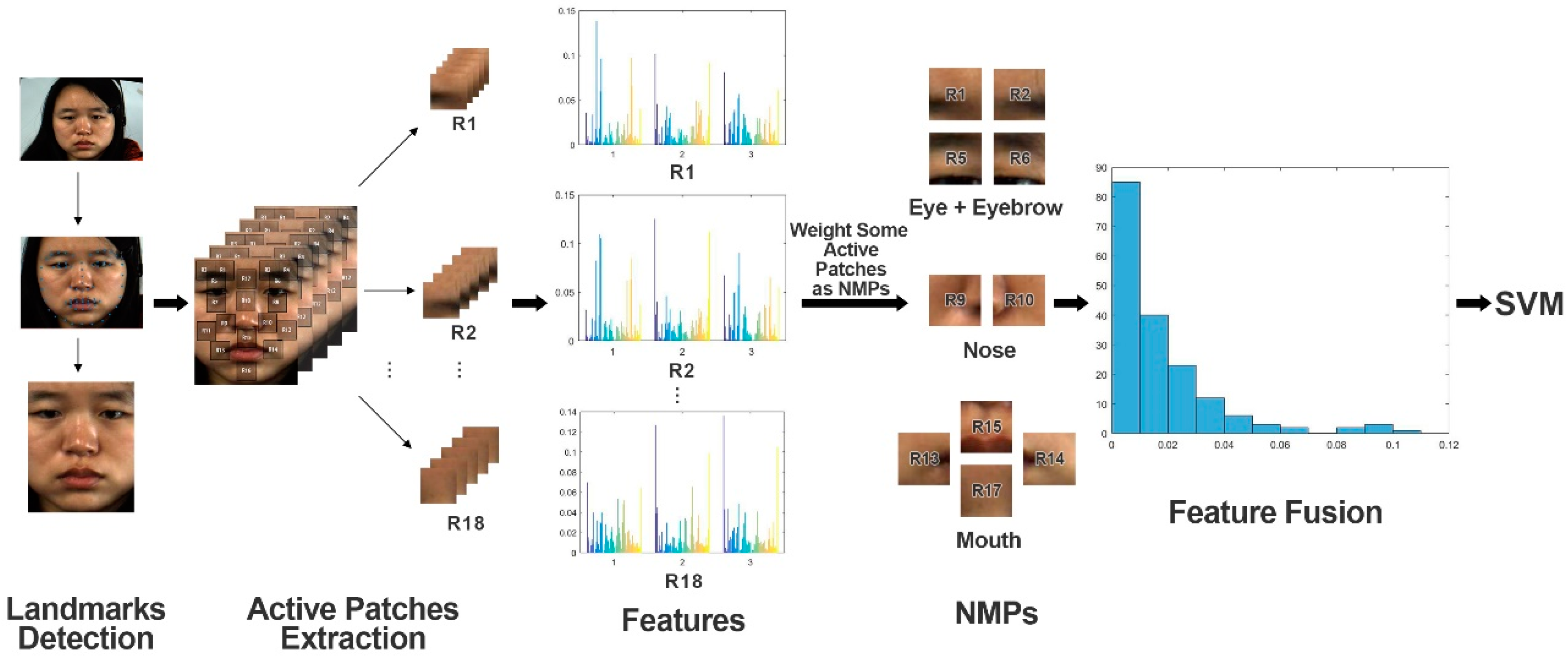

The subtle local movement of the facial muscles changes the facial expression and the relative positions of the facial landmarks. The texture information of these regions also changes as the expression changes. In this paper, we explore how different facial areas affect recognition accuracy, based on the subtle, local nature of micro-expressions. In other words, our goal is to identify the crucial facial areas corresponding to the different emotions of micro-expressions. The framework of the proposed algorithm is shown in Figure 2.

2.1. Facial Landmark Location

The goal of facial landmark detection is to accurately locate the key points of the face. The landmarks generally refer to the points around the eyes, eyebrows, nose, mouth, and face contour. Studies have shown that the active facial areas are mainly concentrated where the eyebrows meet the nasal bridge, as well as at the corners of the eyes and mouth. In this paper, we first use face detection and landmark detection to accurately locate the active facial patches. We then cut out the regions and extract the necessary features. To locate the active facial patches more precisely, a 68-landmark algorithm is used to calibrate the micro-expression sequences.

To the best of our best knowledge, there are many machine learning methods to locate 68 landmarks, such as Active Appearance Model (AAM), Active Shape Model (ASM), and deep neural network algorithm, etc. Taking into account the real time and accuracy of position, we used ASM to localize the landmarks of micro-expression images [

20]. The algorithm learns facial images, which are calibrated by using a training set, then the best matching points are searched on the test set and the landmarks of the face are located accordingly. We located facial landmarks in micro-expression images based on a previously published algorithm [

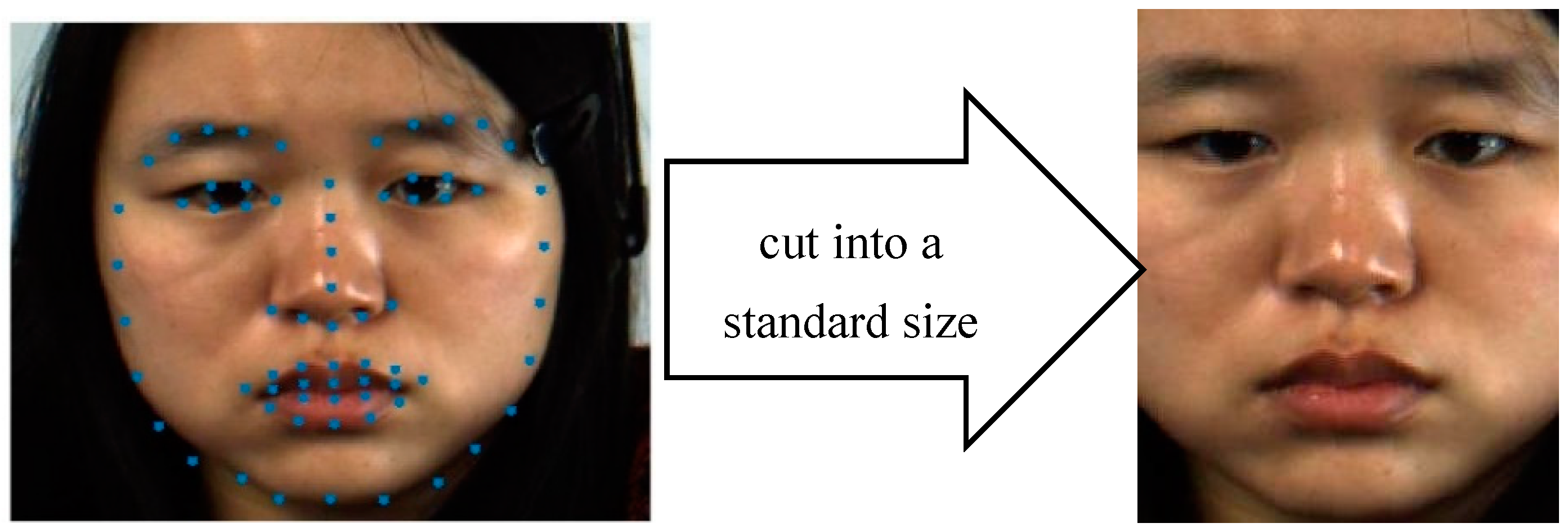

21]. The 68 landmarks we drew on a facial image are shown in

Figure 3. This method is applied in our algorithm and 68 landmarks are employed to align the active areas. These landmarks indicate the shape of eyebrows, eyes, nose, mouth, and the whole face, which are beneficial for researchers to cut the active patches.

2.2. Extraction of Facial Active Patches

Training the classifier directly on the whole face has two main drawbacks: (1) the feature dimension is too large and the training time is relatively long; (2) some regions of the face do not express emotion and contribute little to the representation of facial expressions. Hence, the features obtained from these regions are likely to introduce noise.

The face must be partitioned appropriately for micro-expression recognition to be feasible [22]. The FACS criterion quantifies the muscle movements of the face and defines 57 elementary components of expression. These elementary components are known as action units (AUs) and action descriptors (ADs). Like other facial expressions, a micro-expression is a spatial combination of AUs. Each AU describes a local movement of the micro-expression. Table 1 defines several relationships between AUs and facial movements [14].

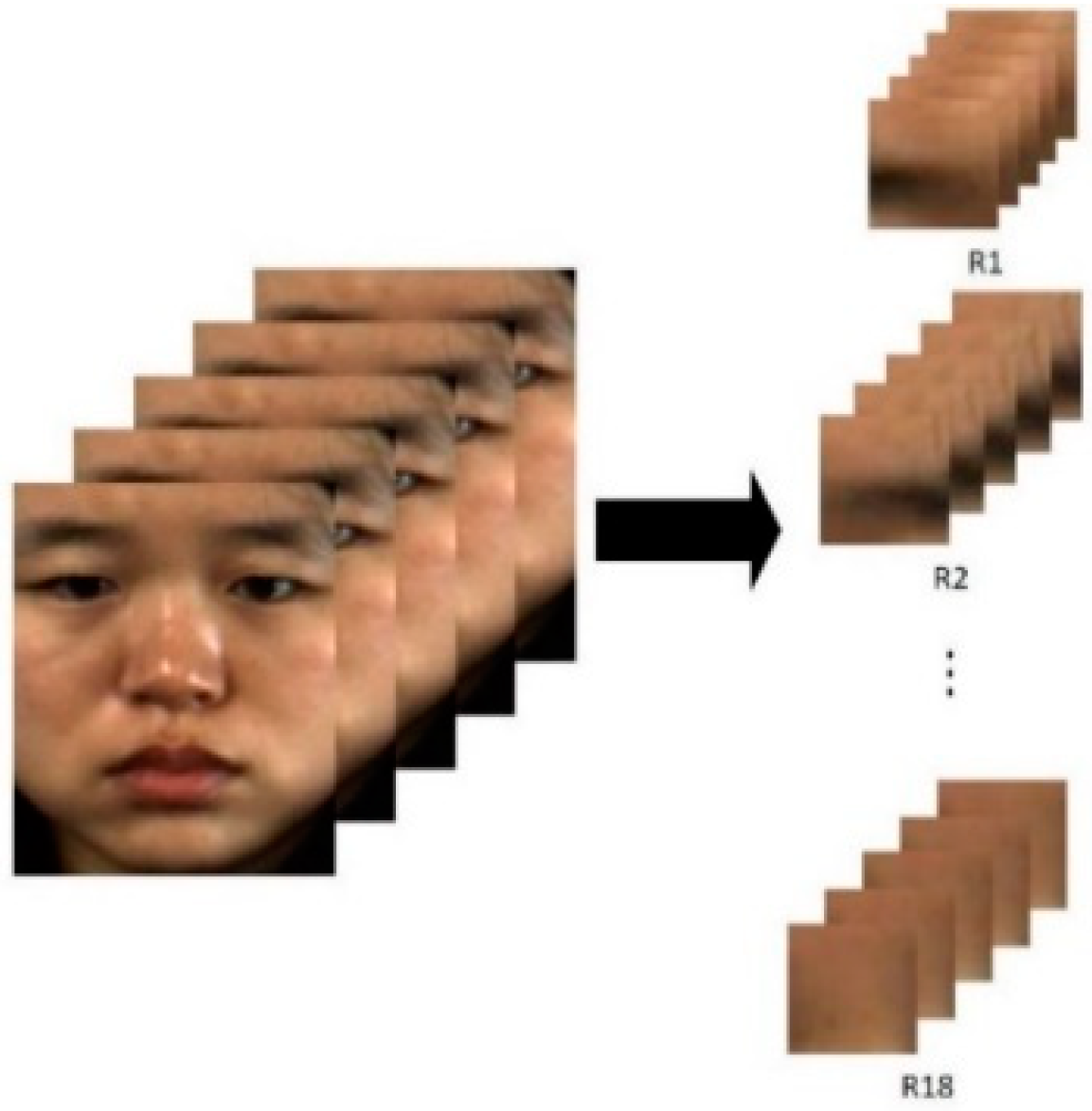

Considering that micro-expressions only involve certain local muscle movements and AUs, extracting only a few active facial patches instead of the entire image of the face is an effective approach to recognition. The eyebrows and eyes, for example, are involved in nearly all basic emotions [

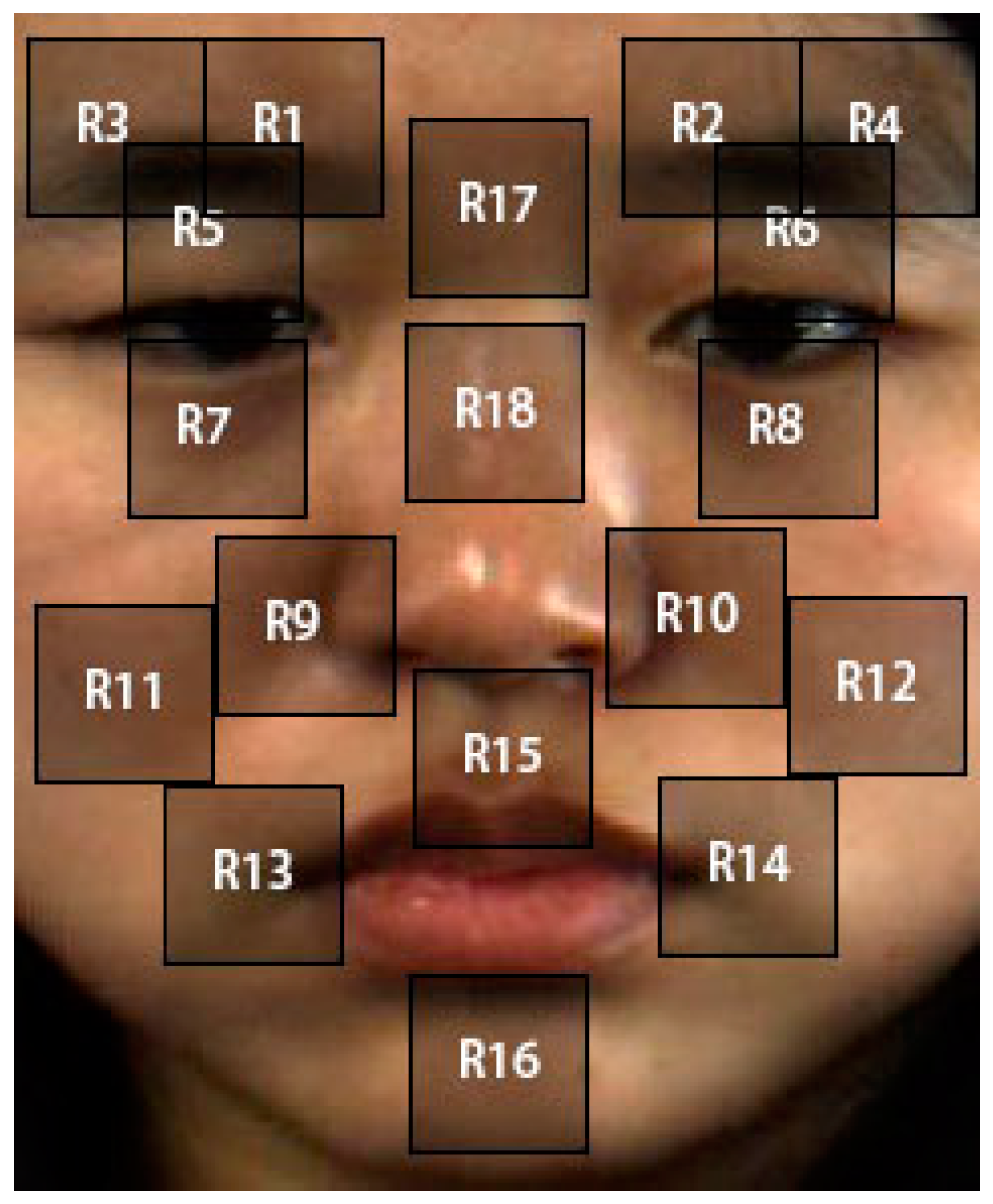

23]. The morphological characteristics of the eyes and brow are important cues of different micro-expressions. The mouth is another key discriminant area for expression recognition. Here we manually choose a frontal neutral face image as a template, and divide the image into 18 ROIs, as shown in

Figure 4.

The patches are separated according to the movements of micro-expressions. Each patch represents an active facial area of the micro-expression. We keep all patches the same size and extract the sequences of active patches for subsequent processing.
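As an illustration of the cropping step, the following minimal Python sketch cuts equally sized patches around given center points in every frame of a sequence. It is not the authors' implementation: the function names `crop_patch` and `patch_sequences`, the nested-list image representation, and the idea of taking each patch center from landmark coordinates are assumptions made for this example. The mapping from the 68 landmarks to the 18 ROIs is not specified here and is left to the caller.

```python
def crop_patch(frame, center, size):
    """Return a size x size patch of `frame` centred as closely as possible on `center`.

    `frame` is a list of rows of grey levels and `center` is an (x, y) pixel
    position (hypothetically derived from facial landmarks).
    """
    height, width = len(frame), len(frame[0])
    if size > height or size > width:
        raise ValueError("patch is larger than the frame")
    half = size // 2
    # Shift the window inwards when the centre lies too close to a border,
    # so that every patch keeps exactly the same size.
    x0 = min(max(center[0] - half, 0), width - size)
    y0 = min(max(center[1] - half, 0), height - size)
    return [row[x0:x0 + size] for row in frame[y0:y0 + size]]


def patch_sequences(frames, centers, size):
    """Collect one patch sequence per centre: result[p][t] is patch p in frame t."""
    return [[crop_patch(frame, center, size) for frame in frames]
            for center in centers]


# Toy usage: a 6 x 6 "image" whose pixel value encodes its position (10*y + x).
frame = [[10 * y + x for x in range(6)] for y in range(6)]
sequences = patch_sequences([frame, frame], [(0, 0), (3, 3)], size=3)
```

Shifting the window at the image border, rather than padding, is one simple way to keep the patch size constant; other choices are equally possible.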

2.3. Feature Extraction

Micro-expressions differ from ordinary (“macro”) expressions in their low intensity, short duration, and local movements. It is therefore unreasonable to apply ordinary expression recognition methods to micro-expression sequences. Here, we extend the classic LBP descriptor to LBP-TOP to handle dynamic textures and events across the spatial-temporal dimensions [24,25].

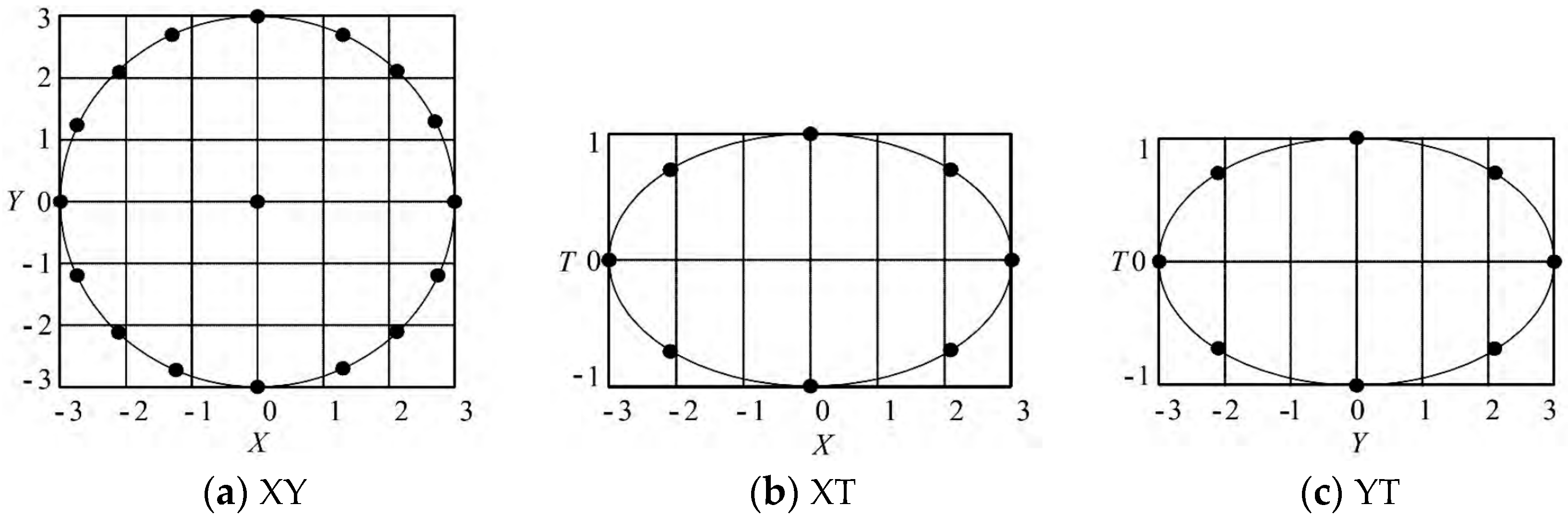

The LBP-TOP operator extends the LBP operator, first proposed by Ojala et al., to three orthogonal planes. It captures the local binary pattern of each image as well as the motion features of the spatial-temporal domain over the whole sequence. The LBP-TOP operator first divides the spatial-temporal domain into three orthogonal planes (XY, XT, and YT), then calculates the LBP values of the center pixels in each plane, and finally accumulates statistics of the expression information in the three directions.

In practical applications, the spatial and temporal feature scales differ because of the unpredictable texture orientation and the differences in image resolution and frame rate. Here, we use an elliptical structure to define the neighboring points of the center point on the three orthogonal planes, as shown in Figure 5.

The LBP codes extracted from the XY, XT, and YT planes are denoted XY-LBP, XT-LBP, and YT-LBP. The statistics of the three planes are computed over all pixels and then concatenated into a single histogram [24]. In this paper, we extract LBP-TOP features and generate feature histograms for the 18 active patch sequences. A micro-expression, however, calls on only a few facial muscles. If all 18 active patches are used to represent micro-expression sequences, the feature dimension is too large, which makes feature matching extremely complex and consumes too many system resources. Moreover, the range of movement of a micro-expression is much smaller than that of an ordinary expression, so micro-expressions can be represented by a few NMPs. In the next stage, we therefore estimate which of these 18 active patches are NMPs that are significant for micro-expressions.
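To make the histogram construction concrete, here is a simplified Python sketch of LBP-TOP for one patch sequence. It is only an illustration under stated assumptions: it uses the basic 8-neighbor, radius-1 square neighborhood on each plane instead of the elliptical sampling described above, applies no interpolation or uniform-pattern mapping, and the names `lbp_code` and `lbp_top_histogram` are hypothetical.

```python
from itertools import product

# The 8 neighbour offsets (du, dv) at radius 1 on a plane, clockwise from top-left.
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]


def lbp_code(center, neighbours):
    """Basic LBP: set bit b when the b-th neighbour is >= the centre value."""
    return sum(1 << bit for bit, value in enumerate(neighbours) if value >= center)


def lbp_top_histogram(volume):
    """Concatenated, normalised XY/XT/YT histograms (3 * 256 bins).

    `volume[t][y][x]` holds the grey level of pixel (x, y) in frame t.
    """
    n_frames, height, width = len(volume), len(volume[0]), len(volume[0][0])
    if min(n_frames, height, width) < 3:
        raise ValueError("volume must be at least 3 x 3 x 3")
    hists = [[0] * 256 for _ in range(3)]  # XY, XT, YT
    interior = product(range(1, n_frames - 1), range(1, height - 1), range(1, width - 1))
    for t, y, x in interior:
        c = volume[t][y][x]
        xy = [volume[t][y + dy][x + dx] for dy, dx in OFFSETS]  # spatial texture
        xt = [volume[t + dt][y][x + dx] for dt, dx in OFFSETS]  # horizontal motion
        yt = [volume[t + dt][y + dy][x] for dt, dy in OFFSETS]  # vertical motion
        for hist, neighbours in zip(hists, (xy, xt, yt)):
            hist[lbp_code(c, neighbours)] += 1
    n_pixels = (n_frames - 2) * (height - 2) * (width - 2)
    # Each plane's histogram sums to 1; the three are concatenated in XY, XT, YT order.
    return [count / n_pixels for hist in hists for count in hist]
```

Calling `lbp_top_histogram` once per active patch sequence would give one 768-bin histogram per patch under these assumptions.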

2.4. Learning Crucial Facial Patches

Only a few NMPs play key roles in micro-expression recognition [5], because each active patch has a different importance for micro-expression recognition. For example, the eye and mouth areas are highly distinctive when people express their emotions. Therefore, we set a different weight for each active patch so as to find the NMPs and improve the subsequent recognition accuracy.

In this paper, the Entropy-Weight method is used to calculate the weight of each active patch and to select the NMPs essential for micro-expression recognition. Information entropy can represent the information content of an image and express the richness of the image texture. When an image is divided into many sub-patches, the local information entropy partly reflects the quantity of information in each patch. We can therefore calculate the contribution of the texture feature of each patch from its local information entropy. The histogram of each patch is weighted by this information entropy, which reflects the importance of the patch.

The information entropy of a local patch indicates the information contained in its pixels. The greater the amount of information, the richer the texture information of the patch. Considering the strong ability of texture features to discriminate expression details, we use the entropy weight to express the weights of the NMPs.

The steps of determining the weight by the Entropy-Weight method are as follows:

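Suppose that there are $m$ objects to be evaluated and $n$ evaluation indexes. The original data matrix of the image is as follows:

$$X = (x_{ij})_{m \times n} \qquad (1)$$

Step 1: Standardization of the original matrix, which yields the normalized matrix

$$R = (r_{ij})_{m \times n} \qquad (2)$$

where $R$ is the evaluation matrix and $r_{ij}$ is the standard value of the $j$th evaluation index on the $i$th evaluation object.

Step 2: Calculating the proportion $p_{ij}$ of the index value of the $i$th object under the $j$th index.

$$p_{ij} = \frac{r_{ij}}{\sum_{i=1}^{m} r_{ij}} \qquad (3)$$

Step 3: Determining information entropy. In the case of $m$ objects and $n$ indexes, the information entropy of the $j$th index is defined, in the standard form of the Entropy-Weight method, as follows:

$$H_j = -k \sum_{i=1}^{m} p_{ij} \ln p_{ij} \qquad (4)$$

where $k = 1/\ln m$.

Step 4: Defining entropy weight. The entropy weight of the $j$th index is defined as follows:

$$w_j = \frac{1 - H_j}{n - \sum_{j=1}^{n} H_j} \qquad (5)$$

where $0 \le w_j \le 1$ and $\sum_{j=1}^{n} w_j = 1$.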
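The four steps above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' code: it assumes the standard Entropy-Weight formulas (proportions per column, entropy scaled by 1/ln m, weight proportional to 1 minus the entropy), it expects a matrix that is already standardized and non-negative, and the function name `entropy_weights` is hypothetical.

```python
import math


def entropy_weights(matrix):
    """Entropy weights for an m x n matrix (m objects in rows, n indexes in columns).

    `matrix[i][j]` is assumed to be the already standardized, non-negative
    value of index j on object i.
    """
    m, n = len(matrix), len(matrix[0])
    if m < 2:
        raise ValueError("at least two objects are needed (ln m must be non-zero)")
    k = 1.0 / math.log(m)
    entropies = []
    for j in range(n):
        column = [row[j] for row in matrix]
        total = sum(column)
        if total <= 0:
            raise ValueError("each index needs a positive column sum")
        entropy = 0.0
        for value in column:
            p = value / total          # proportion of object i under index j
            if p > 0:                  # 0 * ln 0 is treated as 0
                entropy -= k * p * math.log(p)
        entropies.append(entropy)
    denominator = n - sum(entropies)
    if denominator <= 1e-12:
        # Every index is (numerically) uniform: fall back to equal weights.
        return [1.0 / n] * n
    return [(1.0 - h) / denominator for h in entropies]


# Toy usage: index 0 is identical for both objects, index 1 varies.
weights = entropy_weights([[1.0, 1.0], [1.0, 3.0]])
```

In this toy case the constant index carries no information and receives a weight of essentially zero, while the varying index receives essentially all of the weight, which matches the idea that lower entropy means a larger weight.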

2.5. Multi-Class Classification

In this study, we used the Support Vector Machine (SVM) [25] as the classifier for micro-expression recognition. It projects feature vectors into a higher-dimensional space by a nonlinear mapping and finds a linear hyperplane for classification. SVM is a linear two-class model that maximizes the margin in the feature space. We evaluate it using Leave One Sample Out Cross Validation (LOSOCV) and 10-fold cross validation. Because micro-expression recognition is a multi-class problem, the two-class SVM must be extended, and there are two common methods for doing so: one-versus-rest (OVR) and one-versus-one (OVO). In this paper, we use OVO SVMs. This approach designs an SVM between every pair of classes, so for $k$ classes we need $k(k-1)/2$ SVMs. When an unknown sample is classified, it is assigned to the class that receives the largest number of votes. The advantage of this method is that it does not need to retrain all SVMs; only the classifiers related to the affected samples need to be retrained or added. In addition, we use a kernel function to map the samples from the original space to a higher-dimensional feature space in which they are linearly separable. The available kernel functions include the linear kernel, the polynomial kernel, and the Radial Basis Function (RBF) kernel. In this paper, the RBF kernel

$$K(x_i, x_j) = \exp\left(-\gamma \lVert x_i - x_j \rVert^2\right), \quad \gamma > 0,$$

is used in our classifier.
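The one-versus-one voting scheme and the RBF kernel can be illustrated with a short, dependency-free Python sketch. It does not train any SVM: `binary_decide` is a hypothetical callable that stands in for a trained pairwise classifier, and the helper names, the `gamma` parameterization of the kernel, and the toy centroid-based decision rule are all assumptions made for this example.

```python
import math
from collections import Counter
from itertools import combinations


def rbf_kernel(x, z, gamma):
    """RBF kernel exp(-gamma * ||x - z||^2) for two equal-length vectors."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, z)))


def n_pairwise_classifiers(k):
    """Number of binary classifiers needed by one-versus-one for k classes."""
    return k * (k - 1) // 2


def ovo_predict(sample, classes, binary_decide):
    """Let every pair of classes vote and return the class with the most votes.

    `binary_decide(a, b, sample)` must return either `a` or `b`; in a real
    system it would wrap the SVM trained on classes a and b.
    """
    votes = Counter()
    for a, b in combinations(classes, 2):
        votes[binary_decide(a, b, sample)] += 1
    return votes.most_common(1)[0][0]


# Toy stand-in for the pairwise SVMs: pick the class whose (made-up) centroid
# is more similar to the sample under the RBF kernel.
centroids = {"happiness": [0.0, 0.0], "disgust": [5.0, 5.0], "surprise": [0.0, 5.0]}


def toy_decide(a, b, sample, gamma=0.5):
    sim_a = rbf_kernel(sample, centroids[a], gamma)
    sim_b = rbf_kernel(sample, centroids[b], gamma)
    return a if sim_a >= sim_b else b


label = ovo_predict([4.5, 5.2], list(centroids), toy_decide)
```

With three classes the loop consults three pairwise deciders; the sample near the "disgust" centroid wins both of the pairs that involve that class and is therefore labeled "disgust".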

4. Results and Discussion

In this section, we conduct extensive experiments to evaluate the performance of the proposed micro-expression method on two widely-used micro-expression databases.

4.1. NMPs

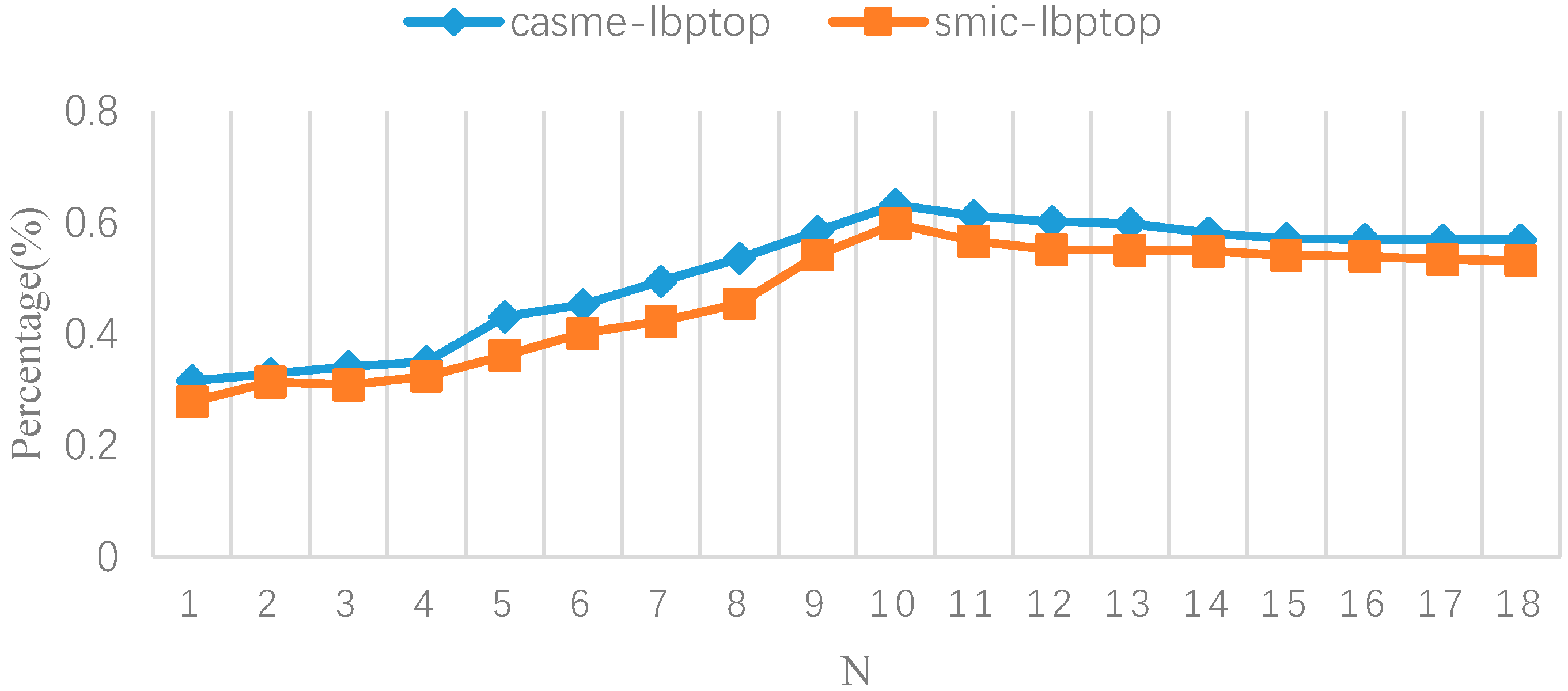

The number of facial active patches also affects the recognition rate. Figure 7 shows the relationship between the number of patches and the recognition rate. Even a single crucial patch yields a recognition rate of 31.62%, while using all 18 active patches produces a recognition rate of 56.93%. We found that the features of some unimportant patches do not play a significant role in identifying micro-expressions. Instead of applying all 18 facial active patches, we can extract a few crucial patches (NMPs) with discriminative ability for micro-expression recognition.

In the figure, N represents the number and location of NMPs. A micro-expression involves only a few facial active patches. The recognition rate is highest when the number of patches is 10. Higher values of N include extra patches that contribute less to subtle movement and micro-expression recognition, whereas lower values of N lose some important information.

The movements of micro-expressions are very weak, and most of them are concentrated at the corners of the eyes, the eyebrows, the nose, and the mouth. As a feature selection algorithm, the Entropy-Weight method can evaluate the importance of each feature for the classification problem. We compare the Entropy-Weight method with other feature selection algorithms in terms of the number of NMPs selected and the recognition accuracy. The experimental results are shown in Table 5. According to these data, the algorithms select essentially the same number of NMPs in the regions of the eyes, eyebrows, and mouth, whereas the numbers for the cheek and nose areas differ. This is because the muscle movement of micro-expressions is mainly concentrated in the eye, eyebrow, and mouth regions, and the cheek and nose regions contain very few action units. Micro-expressions are usually restrained facial movements, which are very subtle and easily overlooked. The Pearson coefficient is insensitive to these micro-expression areas, and can be misleading, because of the small correlation between the motions. The lasso model is unstable: a slight change in the data may lead to large changes in the model. The Entropy-Weight method is robust and easy to use, and the experimental results show that the NMPs it selects are largely consistent with the most representative facial muscle motion patches identified by psychologists for micro-expressions. In addition, the Entropy-Weight method assigns weights to the feature vectors, which better represents the motion characteristics of micro-expressions in the classification process.

Each micro-expression affects a few specific facial muscles. In other words, only some of the AUs are crucial for a micro-expression. In this paper, we use the Entropy-Weight method to determine the locations of the NMPs. The optimum number and locations of NMPs corresponding to different micro-expressions are shown in Table 6.

According to the derived weights, the subtle muscle movements of micro-expressions are mainly concentrated in the patches of the eyes, the eyebrows, the alar sides of the nose, and the mouth. The proposed method chooses the 10 patches with the highest weights in these regions (R1, R2, R5, R6, R9, R10, R13, R14, R15, R17) as NMPs.

4.2. Recognition Performance

First, we use the LBP-TOP descriptor to extract features from the whole face area, the 18 facial active patches, and the 10 facial NMPs, respectively. Following the original implementations [26,27], we employ

for CASME II and

for SMIC. We summarize the results in Table 7. The experimental results show that a higher recognition efficiency is obtained by using only the NMP regions, whereas using the whole face area introduces many redundant features. Because of their local character and low intensity, micro-expressions involve only a few muscle movements when people try to conceal and suppress their true emotions. Therefore, using all 18 facial active patches to identify micro-expressions also increases the dimension and complexity of the features while reducing accuracy.

Next, we calculate the information entropy to weight the active patches, and then obtain the 10 NMP regions with the greatest weights. The weights represent not only the importance of these patches for micro-expressions but also the information they convey. Furthermore, the weights of these NMPs are used to generate weighted LBP-TOP features for classifying and recognizing the micro-expressions. The results are shown in Table 8 and Table 9.
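The selection and weighting described above can be sketched as follows. This is a hedged illustration only: it assumes that one weight and one feature histogram are already available for each active patch, that the NMPs are simply the patches with the largest weights, and that weighting means multiplying each selected histogram by its patch weight before concatenation. The function names `select_nmps` and `weighted_feature` are hypothetical.

```python
def select_nmps(weights, n_keep):
    """Return the indices of the `n_keep` patches with the largest weights, in patch order."""
    ranked = sorted(range(len(weights)), key=lambda i: weights[i], reverse=True)
    return sorted(ranked[:n_keep])


def weighted_feature(histograms, weights, selected):
    """Concatenate the histograms of the selected patches, each scaled by its weight."""
    feature = []
    for i in selected:
        feature.extend(weights[i] * value for value in histograms[i])
    return feature


# Toy usage with 4 patches and 2-bin histograms (made-up numbers).
weights = [0.1, 0.4, 0.2, 0.3]
histograms = [[1, 1], [2, 0], [0, 3], [1, 2]]
selected = select_nmps(weights, n_keep=2)
feature = weighted_feature(histograms, weights, selected)
```

In the setting of this paper, `weights` would hold 18 entries and `n_keep` would be 10; the resulting vector is what would be passed to the classifier under these assumptions.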

We compare the recognition rates of the dimensionality reduction algorithms and the original method. The weighted LBP-TOP achieves the highest accuracy. Compared with traditional dimensionality reduction algorithms, the proposed algorithm has the clear advantage that it directly explains the importance of the NMPs for micro-expression recognition. The proposed method not only increases the significance of the features but also improves the robustness of the algorithm. Although traditional dimensionality reduction algorithms can reduce the feature dimension in a simple manner, they tend to lose information that is vital for recognition. Because a micro-expression is a subtle facial muscle movement, many useful features would be filtered out as redundant information if a traditional algorithm were applied.

Table 10 shows the accuracy rate of different algorithms for micro expression recognition on the CASME II [

28,

29,

30,

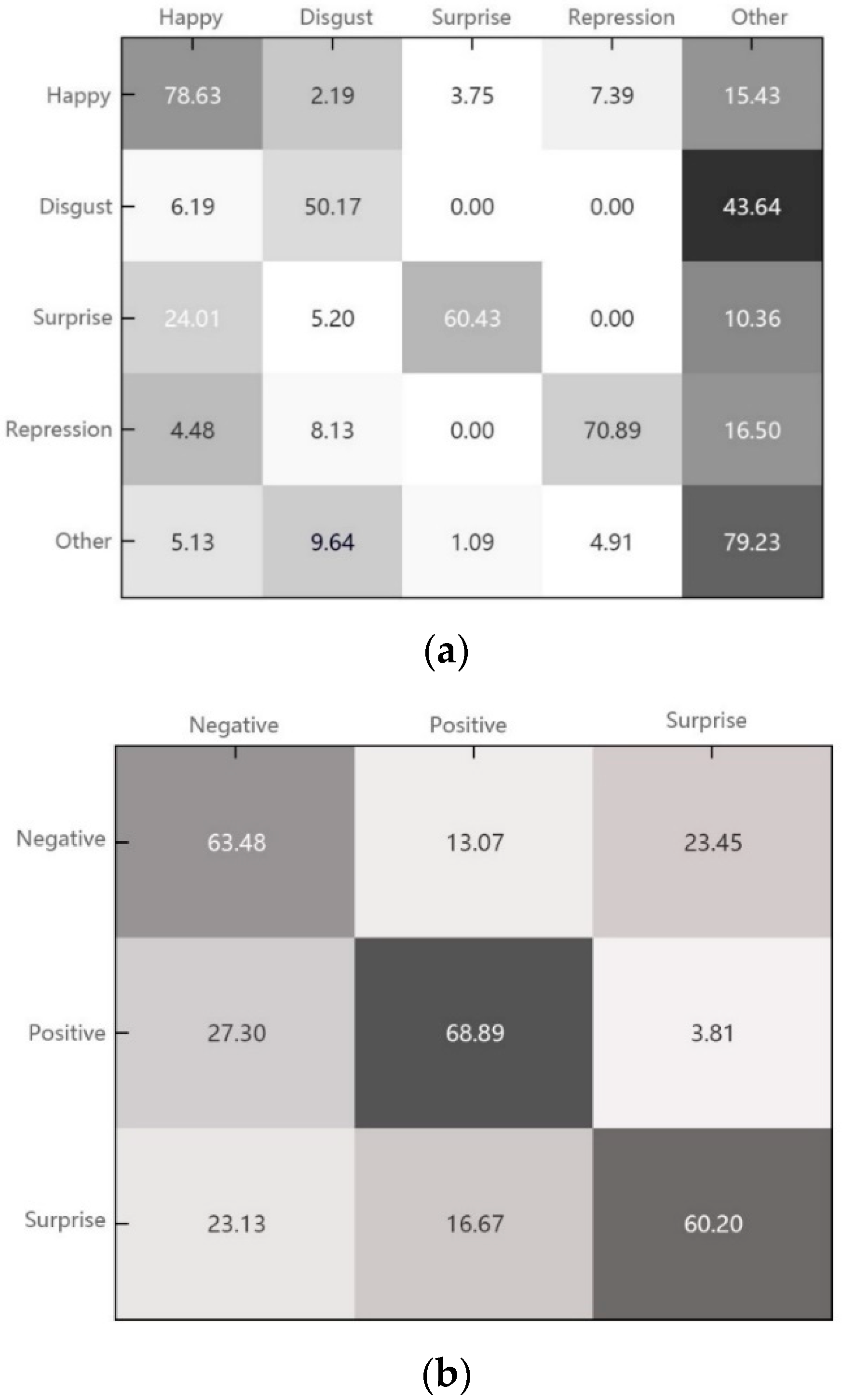

31]. Our method achieves a higher recognition rate than the others. Compared with other algorithms, our proposed method is more effective in the extractions of different feature regions for different micro-expression sequences compared with other algorithms and it also reveals the discriminative feature vectors in each NMP more accurately. We get the recognition performances of all kinds of emotion in CASME II and SMIC databases as show in

Figure 8.

From the experimental results, the emotions “surprise” and “disgust” obtain lower recognition rates because they are easily confused with other micro-expressions. This is because they are conveyed by single action units or by combinations of these units. As a result, many NMP regions can express them, which shows that these two micro-expressions lack uniqueness. Compared with other emotion types, the stimuli of these two emotions are relatively simple, especially those of “surprise”, because many emotions begin with surprise. Happiness is easily recognized by a machine because it has unique muscle movements, particularly in the mouth area. Therefore, these movements are more likely to be captured by machines or humans when people are happy. Furthermore, for SMIC, the figure also illustrates the accuracy for three expressions: negative, surprise, and positive.

Table 11 shows the accuracy of different methods for micro-expression recognition [32,33,34,35,36]. Our algorithm outperforms the others on the SMIC database; it benefits from extracting NMPs from the whole face, which effectively increases the micro-expression recognition rate.

5. Conclusions

The automatic recognition of micro-expressions has attracted close attention from many research groups in computer vision. However, most works in the literature use the whole face region as the feature to test their algorithms. Psychologists have shown that micro-expressions are emotional expressions with local characteristics. Unlike facial macro-expressions, micro-expressions are usually produced when people try to suppress their own emotions. As a result, the facial muscles contract only weakly and fewer action units are involved. This paper proposes a recognition method that exploits the local motion characteristics of micro-expressions.

In this paper, we studied the correlation between different facial regions. Based on the NMPs of micro-expressions described by psychologists, we extract the active facial patches representing the features of facial deformation. After analyzing the active areas, we apply the Entropy-Weight method to identify some active patches as NMPs. We then calculate the weighted LBP-TOP in these patches. These features reduce the dimension and improve recognition accuracy. Experiments on two public micro-expression databases demonstrate that our method achieves a high micro-expression recognition accuracy.

However, the proposed algorithm extracts the 18 facial active patches manually, which may increase the complexity of the algorithm. Moreover, the manual extraction of these patches is inevitably subjective. In future work, we intend to construct an algorithm that automatically extracts the NMP regions.