1. Introduction

A mobile manipulator is a high-dimensional robotic system with a mobile platform and a multi-degree-of-freedom manipulator. Its applications are rapidly expanding across various industrial fields such as logistics, assembly, inspection, and service robots [

1,

2,

3,

4,

5]. This hybrid structure offers high potential by enabling a single robot to perform a wide range of manipulation and locomotion tasks. Nevertheless, it also presents a structural limitation in that it is difficult for users to control intuitively.

In particular, in fields such as disaster response, remote maintenance, and human–robot collaboration, where the operator’s intent must be reflected precisely and in real time, there is a strong requirement for the mobile base and manipulator to move in a seamlessly integrated manner while minimizing the user’s cognitive load. In such cases, tele-operation technology has emerged as an effective solution.

However, most tele-operation systems currently in widespread use treat the mobile base and the manipulator as separate control targets. In other words, the operator should either input the mobile base motion and manipulator actions independently or rely on large consoles capable of providing complex multi-DOF (Degree of Freedom) inputs. Due to the reduced operability, spatial inefficiency, and device complexity, these methods are difficult to apply in real industrial and service environments and impose fundamental limitations that hinder continuous and intuitive operation.

Recently, whole-body tele-operation platforms such as Mobile ALOHA [

6] have emerged. Concurrently, research efforts demonstrating the integrated control of mobile manipulators via tele-operation and evaluating their feasibility in real-world environments have been reported [

7,

8,

9]. Mobile ALOHA [

6], specifically proposed for whole-body imitation learning of mobile manipulators, has garnered significant attention within the research community. However, for direct manual control by an operator, the system requires separate control interfaces for the mobile base and the manipulator, resulting in a complex hardware architecture. Furthermore, the ANA Avatar XPRIZE competition has fostered various tele-operation approaches for mobile dual-arm robots [

7,

8,

9]. These studies emphasized the critical role of whole-body control and attempted to achieve intuitive operation by providing immersive sensory feedback to the operator. Nevertheless, control interface structures that require separate management of the mobile base and the manipulator entail high hardware complexity and cost. Moreover, they impose significant physical and cognitive loads on the operator, leading to rapid fatigue during operation.

Even if the limitations of physical interfaces are overcome, tele-operation algorithms are still required to naturally integrate the operator’s intended motions. As a solution, whole-body control algorithms for mobile manipulators have been proposed. They can provide unified motion that integrates both locomotion and manipulation. Nevertheless, their complexity and strong dependence on high-performance computational systems impose constraints when applying them in real environments or executing simple tasks. To naturally integrate the operator’s intended motions, various whole-body control algorithms have been proposed, each offering distinct advantages in terms of reactivity and motion quality. For instance, Haviland et al. [

10] introduced a reactive WBC framework based on Quadratic Programming (QP), which excels in providing immediate responsiveness and maximizing manipulability to avoid obstacles in real time. To further enhance motion smoothness and safety, coupled trajectory optimization methods [

11] and perceptive model predictive control frameworks [

12] have been developed. These approaches allow the robot to generate globally optimal trajectories and navigate through cluttered environments by explicitly considering future time horizons and dynamic constraints. More recently, to address specific task requirements, Zhao et al. [

13] utilized Reinforcement Learning to optimize base placement for physical interaction tasks, while Yang et al. [

14] proposed the RAMPAGE framework using efficient solvers like AL-DDP to achieve highly agile and dynamic motion planning.

However, despite these individual technical merits, a critical drawback common to these state-of-the-art methods is their strong coupling of the mobile base and manipulator models. This dependency necessitates solving high-dimensional nonlinear optimization problems or processing complex learning models at every control loop. Consequently, such optimization-based algorithms inevitably require high-performance solvers and advanced computing hardware to maintain real-time stability. This heavy reliance imposes significant constraints on their practicality in harsh, cost-sensitive industrial environments where robustness and cost-effectiveness are paramount. While alternative non-optimization approaches, such as kinesthetic learning based on the Fast Marching Square method [

15], offer an intuitive interface for teaching by demonstration, they are primarily designed for trajectory reproduction rather than addressing the dynamic needs of real-time tele-operation. Therefore, there remains a pressing need for a streamlined control strategy that reduces algorithmic complexity and minimizes reliance on high-level solvers to ensure safe and widespread deployment.

Therefore, for the intuitive tele-operation of a mobile manipulator, this paper proposes an end-effector (EE)-driven whole-body tele-operation strategy to control the entire mobile base and manipulator simultaneously using only EE motion commands, while decomposing the motion to reduce the dimensionality of the mobile manipulator.

This paper focuses on the planar motion of a mobile manipulator equipped with a mobile base that has non-holonomic constraints. In other words, the objective is to control the movement of EE in the X–Y plane. This paper introduces a strategy that separates linear motion and angular motion and controls each independently. With this decomposition strategy, vertical movement along the Z-axis can be represented through linear motion and can be independently controlled. Therefore, EE height movement is not addressed in this paper and is instead reserved as future work.

Based on these assumptions, the main idea proposed in this paper is a control-authority switching strategy that allows EE to move beyond the manipulator’s work space (WS) limitations when tele-operation commands are given. When EE is located within WS, the manipulator is given control authority, and when EE must move outside WS, control authority is handed over to the mobile base. To implement this switching strategy, the following elements are introduced. First, the motion of EE is defined in a similar form to the kinematic model of a mobile base that uses linear and angular velocity inputs. Because EE’s motion is influenced by both the manipulator and the mobile base simultaneously, its motion is expressed in terms of the relationship between these two components. Next, to allow EE to move as commanded, regions are defined that determine whether the control authority is assigned to the mobile base or the manipulator. The criterion for selecting the control authority is EE’s WS.

Within each region, the motion of EE is decomposed into linear and angular components. This reduces the high dimensionality of the mobile manipulator and simplifies computation. Ultimately, EE motion commands received through tele-operation are separated into longitudinal and angular motions, each expressed as a combination of manipulator and mobile base movements. Control authority is switched depending on EE’s position. Through this mechanism, when EE is within WS, only the manipulator is moved, and when EE is required to move outside WS, the mobile base has control authority.

In addition, because switching the control authority near the boundaries of each region is expected to cause chattering, a blending algorithm is introduced so that the control authority transitions smoothly and naturally.

This paper presents a novel whole-body tele-operation framework that enables integrated control of both the mobile base and the manipulator using only the user’s EE motion commands. Considering the structural complexity, operability limitations, and WS constraints of existing tele-operation systems, this paper offers the following academic contributions.

Formulation of EE-driven whole-body tele-operation method: Departing from the conventional control paradigm that relies on separate input devices, this work systematically establishes the concept of EE-driven tele-operation, in which the entire motion of a mobile manipulator can be directly determined using only EE commands. This fundamentally simplifies the user input structure and proposes a new tele-operation approach that enables intuitive and consistent control.

Control-authority switching mechanism based on the manipulator WS: The proposed approach provides the switching strategy that automatically transitions between manipulator-centered control and mobile-base-centered control depending on whether the EE is located inside or outside the manipulator WS. This allows the system to actively overcome the geometric constraints of the WS and enables whole-body control that ensures continuous end-effector motion.

Structural simplification of high-dimensional control problems via linear–angular motion decomposition: Considering the high DOF of mobile manipulators and the non-holonomic constraints of the mobile base, this work proposes a control strategy that separates EE motion into linear and angular components. In contrast to existing whole-body control methods—such as those based on optimization (e.g., Quadratic Programming) or learning-based approaches—which often suffer from high computational costs and challenges in ensuring real-time performance due to simultaneous consideration of all DOFs, the proposed decomposition effectively reduces the control dimensionality to only the essential DOFs required for tele-operation. This enables superior computational efficiency and control stability, providing a distinct advantage in achieving intuitive and responsive tele-operation without the need for complex optimization algorithms.

Blending-based transition strategy to mitigate discontinuous control at switching boundaries: To suppress chattering that may occur in regions where the control authority switches, a blending algorithm is designed to ensure continuous transitions of control inputs. This algorithm effectively reduces control discontinuities at WS boundaries.

This paper is organized as follows.

Section 2 defines the components and variables of the overall system, and

Section 3 presents the decomposition of linear and angular motions.

Section 4 describes the control-authority switching algorithm based on EE position and the controller blending algorithm.

Section 5 analyzes the performance of the proposed algorithm through numerical simulations, and

Section 6 provides the conclusions.

2. System Description

As mentioned in the introduction, EE motion is described similarly to a mobile robot with non-holonomic constraints. To provide a clear mathematical foundation for the proposed whole-body tele-operation framework, we establish the following three primary assumptions:

Assumption 1.

This study focuses on movement within the X-Y plane. Vertical motion along the Z-axis is considered decoupled and can be controlled independently; therefore, it is reserved for future work and not addressed in this paper.

Assumption 2.

For the simplicity of kinematic derivations, the coordinate frames of the mobile base and the manipulator are assumed to be aligned. This alignment allows for a more straightforward mapping of the EE motion relative to the mobile base.

Assumption 3.

The mobile base is assumed to operate under non-holonomic constraints, where its kinematic model is governed by linear and angular velocity inputs.

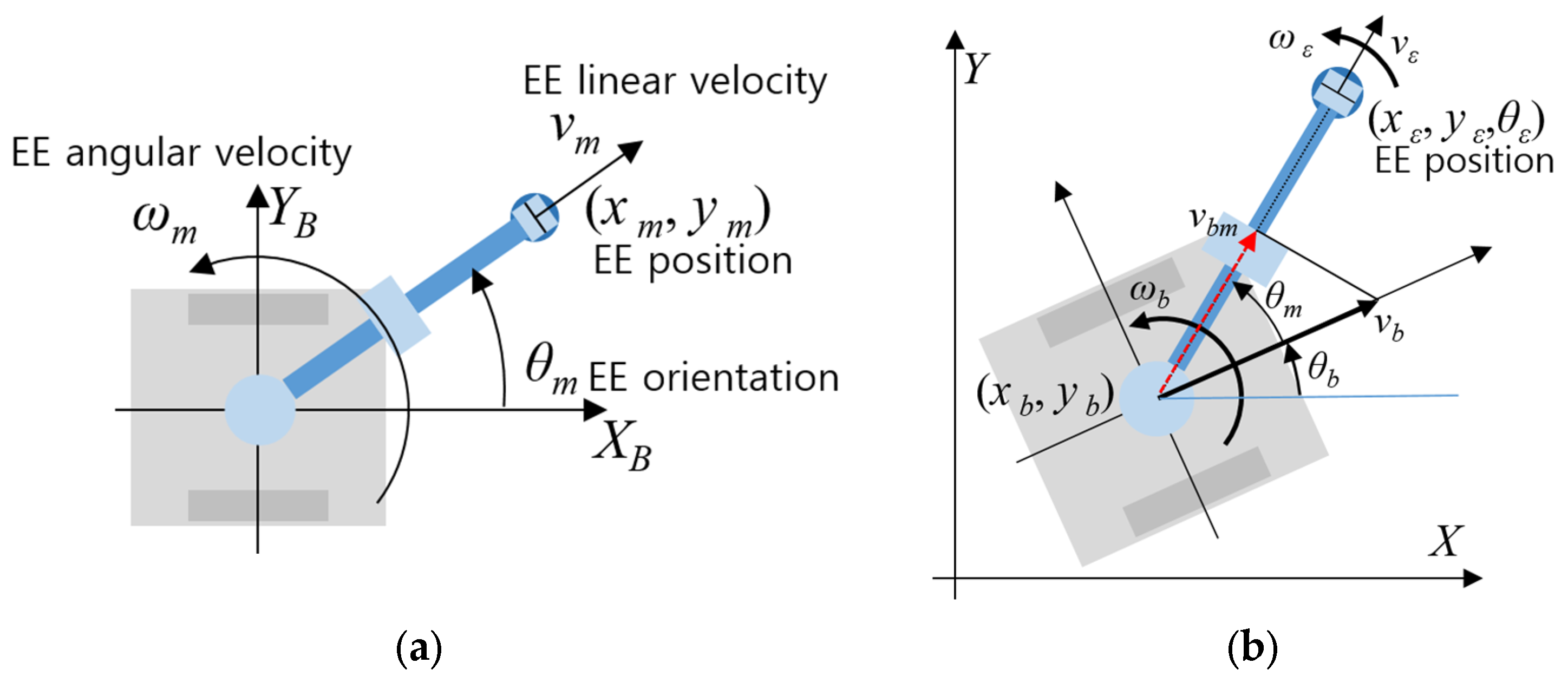

Figure 1 illustrates the motion representation of each component of the mobile manipulator based on the above assumptions.

Figure 1 illustrates the position and motion of EE with respect to both the mobile base and the world coordinate frames.

Figure 1a shows EE position and velocity information represented relative to the mobile base. Here, it is assumed that the coordinate frames of the mobile base and the manipulator are aligned. (

xm,

ym) denotes the position relative to the mobile base, and

θm represents the orientation.

vm and

ωm indicate the linear and angular velocities, respectively, and the direction of

vm corresponds to the outward direction from the origin of the mobile base.

Figure 1b shows the motion of the mobile manipulator in the world coordinate frame. (

xε,

yε) represents EE position in the world frame,

θε is its orientation, and

vε and

ωε denote its linear and angular velocities, respectively. (

xb,

yb) denotes the mobile base position in the world frame,

θb is its orientation, and

vb and

ωb are its linear and angular velocities. To account for the effect of the mobile base’s linear velocity on EE’s linear velocity,

vbm =

vb cos(

θm) is defined.

The kinematic model of the mobile base in

Figure 1b can be expressed as follows:

From (1) and

Figure 1b, the kinematic model of EE can be expressed as follows:

Here,

vε and

ωε can be expressed as follows:

Based on Assumptions 1 and 2, and

Figure 1, the velocity of EE can be simplified as a 2D vector summation. (3) represents the superposition of velocities where the base and manipulator motions are coupled within the same coordinate alignment, allowing for a straightforward linear decomposition.

4. Tele-Operation Based on Control Authority Switching

When implementing a tele-operation algorithm for EE, it is necessary to determine whether the command directs EE to remain within the manipulator’s WS or to move beyond it, in order to address WS limitations caused by the manipulator’s physical constraints. If EE is located within the WS, the manipulator alone can move it sufficiently to the desired position. However, in the case that EE is required that move outside SW, this cannot be achieved through manipulator control alone, and the mobile base must be moved instead. This section proposes a control-authority switching algorithm that assigns control to the manipulator when EE is within its reachable WS, and to the mobile base when EE should move beyond it.

Figure 4 illustrates the criteria used for defining the switching regions.

In

Figure 4,

lBE represents the extended length of the manipulator, and

ψBE denotes the angular position of the manipulator. WS is defined by the minimum and maximum longitudinal distances of the manipulator,

Lmin and

Lmax, and by the minimum and maximum angular positions,

Ψmin and

Ψmax. Considering WS defined in

Figure 4, control authority for linear motion is switched based on

Lmin and

Lmax, while control authority for angular motion is switched based on

Ψmin and

Ψmax.

4.1. Linear Motion Switching

To determine the linear velocity command

vε for EE, it is necessary to examine how the magnitude of

lBE relates to

Lmin and

Lmax.

Figure 5 can be referenced to explain the linear velocity control. As shown in

Figure 5a, when

lBE lies within WS, the manipulator is actuated; when it lies outside WS, the mobile platform should be moved instead.

Note: A simple criterion based solely on whether EE is inside or outside WS is not sufficient to achieve the desired switching between the manipulator and the mobile base. If EE moves outside WS, control authority switches to the mobile base. However, once the mobile base has the control authority, EE cannot re-enter the manipulator’s WS regardless of the input, meaning that a return switch back to the manipulator would not occur.

Figure 5b,c illustrate the behavioral characteristics according to the direction of EE’s movement. When EE is located outside WS, even if it is controlled using the tele-operation input, only the mobile platform will move, and EE cannot re-enter WS. Therefore, the criterion for selecting the control authority must be determined based on the direction of movement requested by the user.

In (10),

vε is expressed as a combination of

vb and

vm, and the values of

vb and

vm are selected depending on which component has control authority. When EE moves in the direction where

lBE decreases, as in

Figure 5b, the reference point is set to

Lmin. If EE moves closer to the base such that

lBE <

Lmin,

vb should be activated. Conversely, when the EE moves in the direction where

lBE increases, as in

Figure 5c, the reference point is set to

Lmax, and if

lBE >

Lmax,

vR should be activated so that EE can continue moving into regions unreachable by the manipulator. For the remaining case, where

Lmin ≤

lBE ≤

Lmax,

vBE is activated. Through this control authority switching strategy, the following linear-motion control commands can be generated.

Here, vc is the linear velocity command received through tele-operation, and its direction is aligned with the direction of lBE. In (12a,b), vb can be naturally obtained through vbm, therefore, for clarity, vbm is used in place of vb.

4.2. Angular Motion Switching

For controlling the rotational velocity of EE,

Ψmin and

Ψmax in

Figure 4 are used. The rotational velocity control method follows the same methodology as the linear motion switching algorithm described earlier. A conceptual diagram of the angular motion is shown in

Figure 6.

In

Figure 6,

ωc represents the rotational input provided through tele-operation. As shown in the figure, the angular motion is determined based on EE’s

ψBE. When

ψBE lies within WS, only

ωm is activated, whereas when it lies outside WS, only

ωb is selected. However, as mentioned in the linear velocity control section, using such a simple rule would prevent an end-effector located outside WS from re-entering it. Therefore, when the direction of

ωc is negative with respect to

ψBE, the control authority is switched between the manipulator and the mobile platform based on

Ψmin. Conversely, when the direction of

ωc is positive, the switching is performed based on

Ψmax. Using this control-authority switching strategy, the following control commands for angular motion can be generated.

4.3. Control Switching Blending Function

In the previous sections, the process of determining

vm and

vb, as well as

ωm and

ωb, through simple switching around the corresponding boundary values has been presented. However, when the control authority switches in this manner, chattering occurs near the boundaries, specifically when

lBE moves around

Lmin or

Lmax, and when

ψBE moves around

Ψmin or

Ψmax. Such chattering can induce undesirable oscillations in the overall system. Therefore, a blending function is introduced to suppress these effects. The blending functions for the four cases in (12a,b) and (13a,b) can be defined as follows:

Here,

α and

β are parameters that determine the width of the transition region.

Figure 7 illustrates the blending functions defined in (14) and (15).

The parameters α and β determine the width of the transition region Q; as these values increase, Q narrows, and as they decrease, Q expands. The blending and switching effects vary depending on the width of Q. Specifically, a narrower Q reduces the smoothing effect of the blending mechanism. In extreme cases, the blending functions μ(∙) and σ(∙) converge to a step function, effectively eliminating the blending transition. Conversely, while a wider Q allows for a smoother transition over a broader range, it requires careful adjustment because it may lead the manipulator to deviate significantly from its designated WS.

Using the blending functions in (14a,b) and (15a,b), the control-authority switching strategy in (12a,b) and (13a,b) can be modified as follows:

With (14a,b)–(16a,b), when EE enters WS from outside, the manipulator is activated, and when it moves outside WS boundary, the mobile platform becomes active. The proposed blending function in (16a,b) enables the two control commands to switch smoothly while simultaneously blending their contributions. Even though the tele-operation commands vc and ωc are switched according to WS as defined in (16a,b), they are directly implemented as vε and ωε.

Note: As shown in

Figure 7, a wider transition region increases the smoothing effect but may hinder proper switching. Conversely, if the transition region is too narrow, switching becomes faster but fails to adequately suppress chattering. The parameters

α and

β should therefore be selected based on user experience and the characteristics of the system.

5. Numerical Simulation Results

To evaluate the behavior and performance of the proposed end-effector–motion-driven whole-body tele-operation control algorithm, numerical simulations are conducted. For this purpose, a mobile manipulator capable of moving in a two-dimensional plane is modeled in a Python environment in

Figure 8. Also, the technical summary and parameters for the simulation are provided in

Table 1.

As shown in

Figure 8 and

Table 1, the mobile manipulator is implemented to move within a two-dimensional plane, and the user can generate (

vc,

ωc) commands, each with a maximum value of 0.4, by manipulating the circular control pad located at the bottom of the simulator.

Although EE of the actual mobile manipulator is capable of moving in three-dimensional (3D) space, this study focuses on implementing tele-operation commands for planar motion, and thus height information is not utilized. As previously noted in the Introduction, extending this to 3D spatial movement including vertical control is reserved for future research.

To validate the performance of the proposed algorithm within the simulation environment detailed in Algorithm 1, a simulation software was implemented as outlined in the following pseudo-code.

| Algorithm 1. Simulation scenarios for evaluating linear and angular velocity control performance. |

0: // Initialize parameters

1: Initialize robot parameters: L1, L2,

2: Initialize control thresholds: psi_max, psi_min, L_max, L_min, alpha, beta

3: Initialize robot state: x_base, y_base, theta1, theta2

4:

5: // Main Control Loop (execute at every time step ts)

6: if (new joystick input vc, wc is received) then

7: // 1. Calculate forward kinematics for EE local position

8: Calculate x_eff, y_eff using Forward Kinematics (theta1, theta2)

9:

10: // 2. Determine blending factors (mu) based on WS boundaries

11: if (vc >= 0) then

12: v_mu = 0.5 * tanh((x_eff − L_max) * alpha) + 0.5

13: else

14: v_mu = 1.0 − (0.5 * tanh((x_eff − L_min) * alpha) + 0.5)

15: end if

16:

17: if (wc >= 0) then

18: w_mu = 0.5 * tanh((y_eff − psi_max) * beta) + 0.5

19: else

20: w_mu = 1.0 − (0.5 * tanh((y_eff − psi_min) * beta) + 0.5)

21: end if

22:

23: // 3. Decompose velocity commands for Mobile Base

24: v_base = vc * v_mu

25: w_base = wc * w_mu

26:

27: // 4. Decompose velocity commands for Manipulator

28: v_manipulator = vc * (1 − v_mu)

29: w_manipulator = wc * (1 − w_mu)

30:

31: // 5. Update Robot State

32: Update Base State (x_base, y_base, th_base) using v_base, w_base

33: Update EE Target Position (x_end, y_end) using v_manipulator, w_manipulator

34: Calculate Joint Angles (theta1, theta2) using Inverse Kinematics (x_end, y_end)

35: Apply w_shoulder to theta1, and theta2

36: end if |

To show the performance of each motion type based on

Table 1 and

Table 2, linear and angular motions were applied independently according to the four scenarios listed in

Table 3.

As shown in

Table 1, Scenarios 1 and 2 correspond to linear motion tests, while Scenarios 3 and 4 correspond to angular motion tests. The simulation results are presented in

Figure 9. The plots on the left side of

Figure 9 show the time histories of

lBE,

ψBE,

vb,

vm,

ωb, and

ωm, whereas the plots on the right depict the relationships between

lBE and

vb,

vm, and between

ψBE and

ωb,

ωm.

A new scenario is introduced to validate the performance of the proposed algorithm under arbitrary tele-operation inputs. This scenario is specifically designed to verify whether the transition between control authorities occurs seamlessly under realistic switching conditions and to ensure that the operator’s inputs are faithfully executed by EE without distortion.

Figure 10 presents the experimental results obtained from this scenario.

Figure 10a,b show the tele-operational longitudinal and angular velocity command inputs, respectively, while

Figure 10c,d illustrate the resulting EE velocity as well as the velocities of the mobile base and the manipulator. From

Figure 10, it can be confirmed that for both linear and angular motions, the control authority transitions smoothly at the boundaries, and EE effectively reproduces the commanded tele-operation inputs. From

Figure 10c,d, it can be seen that the tele-operation inputs, which appear separately in the manipulator and mobile base outputs, are combined and reproduced accurately at EE without distortion. Since the proposed framework is designed to map the commands directly to the output based on kinematic decomposition, the tracking error is theoretically negligible in the simulation environment, except for minor numerical integration errors.

To validate the computational efficiency and real-time performance of the proposed algorithm, a comparative analysis was conducted against a standard optimization-based whole-body control method using Quadratic Programming (QP) [

10]. The QP controller was designed to minimize the following cost function

where

vref = [

vc,

wc],

q = [

vb,

wb,

,

]

T, 0 <

λ < 1 is the damping factor, and

qmax = 0.01.

Figure 11 shows the performance comparison between the proposed and QP-based algorithm.

Figure 11 compares the performance of the proposed algorithm (left column) and the QP-based algorithm (right column). Although the QP-based method successfully tracks the user’s tele-operation input, its behavior differs fundamentally from our approach. The QP-based algorithm distributes inputs to the mobile base and manipulator at every instant, resulting in transient peak inputs that strain the system. On the other hand, the proposed algorithm switches inputs based on the WS regions, preventing excessive system loads and keeping all control commands within safe limits. Moreover, the proposed algorithm is significantly more efficient in terms of computation time.

As shown in the table below, it outperforms the QP-based algorithm by being 35.4 times faster on average, 20.7 times faster in the worst case (maximum), and 37.5 times faster in the best case (minimum).

6. Conclusions

This paper proposed EE-driven whole-body tele-operation framework that enables consistent control of both the mobile base and the manipulator using only the user’s EE motion commands. The proposed framework is significant in that it systematically decomposes EE motion into linear and angular components while accounting for the high degrees of freedom of the mobile manipulator and the non-holonomic characteristics of the mobile base, and designs a switching-based whole-body control structure that automatically transitions control authority based on the manipulator WS. In addition, a smooth blending function was introduced to suppress discontinuous control and chattering at WS boundaries, enabling stable and natural control transitions.

The proposed method ensures that tele-operation inputs provided by the user are reflected continuously and intuitively in the overall motion of the mobile manipulator by clearly defining the respective contributions of the mobile base and the manipulator based on EE kinematics. Simulation results demonstrated that, for both linear and angular motions, control authority was appropriately switched according to the predefined WS boundaries (Lmin, Lmax, Ψmin, Ψmax), and that the application of the blending function effectively eliminated discontinuities in control inputs by enabling smooth transitions in velocity commands. In particular, even in situations where EE is continuously commanded to move beyond the WS, the mobile base naturally assumed control, ensuring that EE remained stably within the physical WS limits, a result that successfully fulfils the core objective of whole-body tele-operation.

In the additional simulation using arbitrary tele-operation inputs, the mobile manipulator accurately followed the user commands, and the velocity contributions of the mobile base and the manipulator were smoothly redistributed depending on the situation. These results confirm the generality and practical applicability of the proposed algorithm. They also indicate that end-effector-based tele-operation enables efficient and intuitive remote operation without the need for complex input devices or high-dimensional manipulation.

However, a limitation of this study is that the performance verification was conducted solely in a simulation environment. Therefore, applying the proposed algorithm to a physical mobile manipulator platform to validate its robustness against real-world uncertainties remains a primary objective for our future research. Beyond this physical validation, we plan to extend this framework to a full three-dimensional mobile manipulator system and further develop it toward intent-based human control, WS adaptation based on environmental perception, and bidirectional tele-operation incorporating physical interaction. Additionally, by validating improvements in stability, safety, and operability across various remote task scenarios, we aim to further expand the applicability of the proposed method as a next-generation tele-operation technology for mobile manipulators.