Semantic-Vertex-Based Topological Detection for Automatic Dimension Generation in Building Information Modeling (BIM) with Industry Foundation Classes (IFC)

Abstract

1. Introduction

- Platform dependency on geometric representation: IFC objects can be represented in either parametric or mesh-based geometric formats, and even for the same object, the coordinate system of vertex indices may differ across software platforms. Due to the diversity and complexity of IFC representation methods, different software may interpret or process identical data inconsistently, which affects the reliability of automation and dimensional computation [10].

- Lack of dimensional invariance: When an IFC object is rotated or scaled, it cannot be consistently recognized as the same object [11]. Specifically, once scale or rotational transformations occur, the vertex coordinates change, making it difficult to guarantee consistent dimensional values.

- Limited explicit dimension representation: The IFC schema provides only standardized basic dimensions of objects, whereas user-defined dimensions require manual measurement tools [12]. Although IFC viewers allow users to select surfaces or edges to measure length or area, the IFC data do not explicitly provide a logical basis for such dimensional calculations.

2. Research Objectives and Methodology

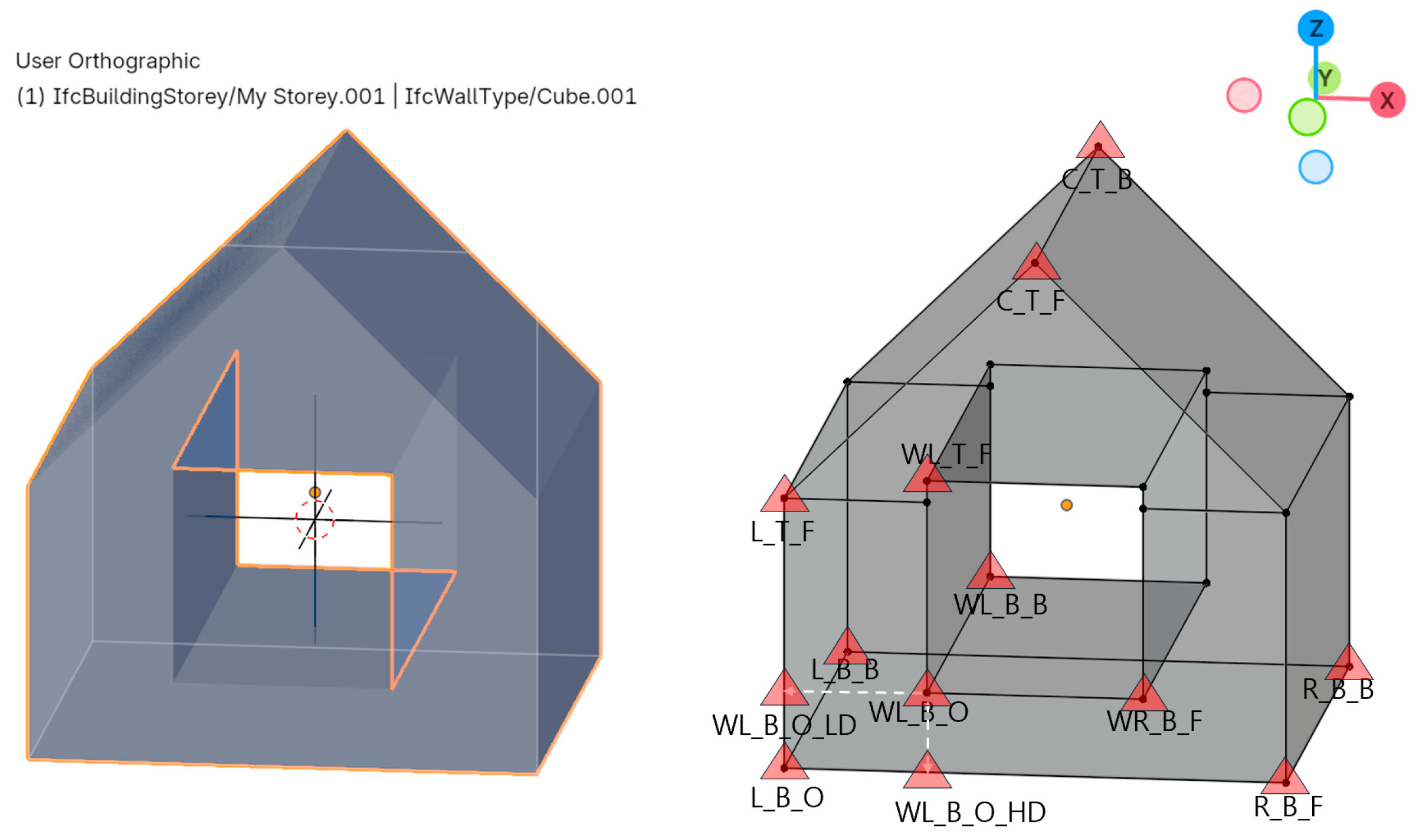

- Automatic detection of semantic vertices: Identifying semantically meaningful reference points for each object (e.g., left–bottom–origin (L_B_O), right–bottom–front (R_B_F), and left–top–front (L_T_F)), assigning unique indices and labels, and visualizing them in a 3D environment using a dimension helper mesh (DHM) method.

- Dimension generation: A system that automatically generates dimensions—such as length, width, height, and diameter—by calculating the distances between corresponding semantic vertices.

- Dimension recording and export: The generated dimensional data are stored within IFC property structures or exported into formats such as Excel or JSON to improve interoperability and workflow continuity.
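As a minimal sketch of the recording and export objective, the snippet below serializes a set of generated dimensions to JSON using only the Python standard library. The function name `dimensions_to_json`, the record layout, the object identifier, and the numeric values are illustrative assumptions and not the schema used in this study.

```python
import json


def dimensions_to_json(object_id, dimensions, unit="m"):
    """Serialize a {dimension name: value} mapping to a JSON string.

    The record layout is hypothetical; it only illustrates how generated
    dimensions could be handed to an external system.
    """
    record = {
        "object_id": object_id,
        "unit": unit,
        "dimensions": [
            {"name": name, "value": round(value, 6)}
            for name, value in sorted(dimensions.items())
        ],
    }
    return json.dumps(record, indent=2)


# Example values are made up for illustration.
payload = dimensions_to_json(
    "wall-001", {"Wall_L": 4.0, "Wall_H": 2.7, "Wall_W": 0.2}
)
print(payload)
```

Sorting by dimension name keeps the exported file stable between runs, which may make differences between model revisions easier to review.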

- (1) Concept definition stage: IFC objects were converted into topology-based objects, and Semantic Vertices were defined for 3D models.

- (2) Object data construction stage: Wall and column objects were selected as IFC samples, and then converted into mesh- and vertex-based geometric forms to construct an experimental dataset (IFC objects generated using BlenderBIM were converted into 3D objects with geometric topology).

- (3) Algorithm design stage: Using the topological information of the objects, an automatic Semantic vertex detection algorithm was designed, and a system for generating dimension helper meshes (DHM) for detected vertices was established. Next, an automated system was designed to compute major dimensions, such as length, width, and height, based on DHM pairing relationships (implemented with Blender Python).

- (4) Application and validation stage: The proposed method was applied to sample 3D objects to verify the automation, accuracy, and consistency of dimension generation. Practical applicability was also validated via case study experiments.

3. Previous Research and Status

3.1. Current Status of IFC-Based QTO in the Construction Industry

3.2. Limitations of IFC-Based QTO

4. Semantic Vertex and Dimension Generation

4.1. Semantic Vertex

4.2. Automatic Search of Semantic Vertices and Dimension Generation

- Common method: Definition of semantic vertices based on the outermost rectangular bounding box.

- First method: Vector-based vertex search from reference points.

- Second method: Axis-based scanning along the X-, Y-, and Z-axes using a rectangular bounding box (detecting tangential intersections between the scanning plane and the object surface and identifying the endpoints of these tangents as semantic vertices).

5. Vector-Rules-Based Semantic Vertex Detection

5.1. Definition of Vector-Based Exploration

5.2. Vector Rules: Purpose and Composition Principle

- Primary exploration (definition of basic semantic vertices using the outer bounding box): From all vertices of the object, the minimum and maximum values along the X-, Y-, and Z-axes are calculated to generate an axis-aligned bounding box (AABB). The eight corner points of this bounding box are used to define the first-order semantic vertices: left–bottom–origin (L_B_O), left–bottom–back (L_B_B), right–bottom–front (R_B_F), right–bottom–back (R_B_B), left–top–front (L_T_F), left–top–back (L_T_B), right–top–front (R_T_F), and right–top–back (R_T_B) (Figure 3 (2), first semantic vertex detection). Each semantic vertex identified through this process is assigned a unique index corresponding to its original vertex in the object, and it is visualized as a semantic vertex label and a DHM. The semantic vertex label serves as a visual marker, whereas the DHM provides a functional line structure for measuring distances between vertices to generate dimension lines.

- Secondary exploration (internal semantic vertex detection based on vector directions): Using the first-order bounding box as a reference, the algorithm searches for internal vertices along specific vector directions (e.g., ±X, ±Y, and ±Z). The search was conducted according to the following vector-based rules (Figure 3 (3), second stage of semantic vertex detection):

- ① Reference point: A previously defined semantic vertex (e.g., L_B_O).

- ② Search axis: A specified vector direction (e.g., X-, Y-, or Z-axes).

- ③ Primary selection condition: The scalar distance between the reference point and candidate vertices along the search axis (e.g., I_L_B_O).

- ④ Secondary selection condition: The scalar distance between a previously found semantic vertex (e.g., I_L_B_O) and new candidate vertices along the same axis.

- ⑤ Deterministic rule: When multiple candidates met the same conditions, the vertex or edge with the smallest or largest coordinate value was selected.

For example, the inner bottom reference point of an opening (I_L_B_O) is determined by selecting the vertex closest to L_B_O along the direction parallel to the X-axis (primary selection). Using this principle, detailed semantic vertices required for calculating specific dimensions, such as inner width (IW) and inner height (IH), can be automatically defined.

- Labels and DHM of semantic vertex: Each detected semantic vertex is assigned a Unique Vertex Index (UVI) that remains invariant within the object. This index encapsulates not only the spatial coordinates of the vertex but also its semantic identity. The semantic vertex label serves as a visual indicator, while the DHM is generated at each semantic vertex as an object containing positional coordinates for measuring distances between vertices.
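The primary exploration can be sketched in plain Python as follows. This is a hedged illustration rather than the implementation used in the study: the function name `aabb_semantic_vertices`, the result layout, and the choice of the nearest mesh vertex as the index holder are assumptions, and the Y-minimum face is treated as the front face in line with the label definitions.

```python
import math

# Label -> which bound to take on (X, Y, Z). Naming follows the text.
AABB_LABELS = {
    "L_B_O": ("min", "min", "min"),
    "L_B_B": ("min", "max", "min"),
    "R_B_F": ("max", "min", "min"),
    "R_B_B": ("max", "max", "min"),
    "L_T_F": ("min", "min", "max"),
    "L_T_B": ("min", "max", "max"),
    "R_T_F": ("max", "min", "max"),
    "R_T_B": ("max", "max", "max"),
}


def aabb_semantic_vertices(vertices):
    """Return the eight AABB corners with the index of the nearest mesh vertex."""
    lo = [min(v[i] for v in vertices) for i in range(3)]
    hi = [max(v[i] for v in vertices) for i in range(3)]
    result = {}
    for label, picks in AABB_LABELS.items():
        corner = tuple(lo[i] if picks[i] == "min" else hi[i] for i in range(3))
        # Assumption: the index is taken from the closest original vertex.
        nearest = min(
            range(len(vertices)), key=lambda k: math.dist(vertices[k], corner)
        )
        result[label] = {"corner": corner, "uvi": nearest}
    return result


# Hypothetical wall-sized box (4.0 x 0.2 x 2.7).
box = [
    (0, 0, 0), (4, 0, 0), (4, 0.2, 0), (0, 0.2, 0),
    (0, 0, 2.7), (4, 0, 2.7), (4, 0.2, 2.7), (0, 0.2, 2.7),
]
semantic = aabb_semantic_vertices(box)
```

For a box-shaped object every corner coincides with a mesh vertex; for a round column the corners lie off the surface, so the nearest-vertex index is only an approximation there.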

5.3. Logic of Vector-Rule-Based Detection

1. Primary detection: AABB-based semantic generation

2. Secondary detection: vector-rule semantic refinement

3. UVI and DHM registration
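The secondary, vector-rule refinement can be illustrated with the sketch below. It encodes one possible reading of the rules in Section 5.2: the inner origin is the vertex in the same front plane as the reference point with the smallest distance and strictly positive X and Z offsets, and follow-up vertices are the nearest ones along a single axis. The function names, the tolerance, and the sample coordinates are hypothetical.

```python
import math


def find_inner_origin(reference, vertices, tol=1e-9):
    """Index of the closest vertex in the reference's Y-plane with dx > 0 and dz > 0."""
    rx, ry, rz = reference
    candidates = []
    for index, (x, y, z) in enumerate(vertices):
        dx, dz = x - rx, z - rz
        if abs(y - ry) <= tol and dx > tol and dz > tol:
            # Tuple order gives a deterministic tie-break: distance, then x, then z.
            candidates.append((math.hypot(dx, dz), x, z, index))
    return min(candidates)[3] if candidates else None


def next_along_axis(reference, vertices, axis, tol=1e-9):
    """Index of the nearest vertex in the +axis direction sharing the other two coordinates."""
    others = [i for i in range(3) if i != axis]
    best = None
    for index, v in enumerate(vertices):
        if any(abs(v[i] - reference[i]) > tol for i in others):
            continue
        offset = v[axis] - reference[axis]
        if offset > tol and (best is None or offset < best[0]):
            best = (offset, index)
    return None if best is None else best[1]


# Front face of a hypothetical 4 x 3 wall with a window opening from (1, 1) to (3, 2).
front = [
    (0, 0, 0), (4, 0, 0), (4, 0, 3), (0, 0, 3),   # outer corners
    (1, 0, 1), (3, 0, 1), (3, 0, 2), (1, 0, 2),   # opening corners
]
origin_idx = find_inner_origin(front[0], front)
right_idx = next_along_axis(front[origin_idx], front, axis=0)
top_idx = next_along_axis(front[origin_idx], front, axis=2)
```

In this example the three indices point at the lower-left, lower-right, and upper-left corners of the opening, which is the pairing needed for an inner width and an inner height.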

6. Scanning Rules-Based Semantic Vertex Detection

6.1. Definition of Scanning-Based Exploration

6.2. Scanning Rules: Purpose and Composition Principle

- (1) Select scanning axis or axes of the object;

- (2) Subdivide the axis into scan intervals (geometry-based resolution), with the scanning range defined slightly larger than the object;

- (3) Collect minimum/maximum points and internal vertex candidates per interval;

- (4) Generate slicing planes based on candidate sets;

- (5) Evaluate tangency change and detect transition patterns;

- (6) Select valid tangency indicators relevant to dimension measurement;

- (7) Confirm endpoints of the final tangency lines as semantic vertices;

- (8) Generate dimension helper meshes (DHMs) at the detected semantic vertices.
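A simplified sketch of steps (1) to (4) and (7) is given below: the scan axis is divided into intervals, one slicing plane is placed at each interval midpoint, the plane is intersected with the mesh triangles, and the lowest and highest intersection points are reported. The tangency evaluation and clustering of steps (5) and (6) are deliberately omitted, and the function names and parameters are assumptions made for illustration.

```python
def slice_triangle(tri, axis, c):
    """Return the segment where the plane {axis = c} cuts a triangle, or None."""
    points = []
    for a, b in ((tri[0], tri[1]), (tri[1], tri[2]), (tri[2], tri[0])):
        da, db = a[axis] - c, b[axis] - c
        if da * db < 0:  # strict crossing; vertices exactly on the plane are ignored
            t = da / (da - db)
            points.append(tuple(a[k] + t * (b[k] - a[k]) for k in range(3)))
    return tuple(points) if len(points) == 2 else None


def scan_extents(vertices, faces, axis=0, n_intervals=4, margin=0.0, up=2):
    """Sample slicing planes along `axis` and return (plane, bottom, top) per plane."""
    lo = min(v[axis] for v in vertices) - margin
    hi = max(v[axis] for v in vertices) + margin
    step = (hi - lo) / n_intervals
    results = []
    for i in range(n_intervals):
        c = lo + (i + 0.5) * step  # plane at the interval midpoint
        points = []
        for face in faces:
            segment = slice_triangle([vertices[j] for j in face], axis, c)
            if segment:
                points.extend(segment)
        if points:
            bottom = min(points, key=lambda p: p[up])
            top = max(points, key=lambda p: p[up])
            results.append((c, bottom, top))
    return results


# Hypothetical test mesh: a 2 x 1 quad in the XZ plane, split into two triangles.
quad_vertices = [(0, 0, 0), (2, 0, 0), (2, 0, 1), (0, 0, 1)]
quad_faces = [(0, 1, 2), (0, 2, 3)]
extents = scan_extents(quad_vertices, quad_faces, axis=0, n_intervals=4)
```

Placing planes at interval midpoints reduces the chance that a plane passes exactly through a mesh vertex, a case this sketch does not handle.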

6.3. Logic of Scanning-Based Detection

1. Definition of scanning variables

2. Interval construction

3. Candidate vertex selection

4. Tangency-segment extraction

5. Detection of transition zones

6. Semantic vertex allocation

7. DHM registration

7. Fundamental Case Study of Dimension Generation

7.1. Basic Example: Wall with a Window Opening

1. Outermost rectangular bounding box

2. Second-stage vector-based search using the reference point

- ① Condition for WL_B_O: Reference point: L_B_O. The search principle is defined by . The candidate selection rule chooses that minimizes , meaning both and are minimal and non-degenerate (not equal).

- ② Condition for WR_B_F: Reference point: WL_B_O. The search principle is (minimum ). The selection rule picks, among candidates satisfying the above, the vertex with the smallest .

- ③ Condition for WL_T_F: Reference point: WL_B_O. The search principle is (minimum ). The selection rule picks, among candidates satisfying the above, the vertex with the smallest .

- ④ Condition for WL_B_O_BD: Reference point: WL_B_O. A virtual ray is projected in the −Z-axis direction, and the first intersection coordinate with the mesh defines WL_B_O_BD. The parametric form of the ray is .

- ⑤ Condition for WL_B_O_LD: Reference point: WL_B_O. A virtual ray is projected in the −X-axis direction, and the first intersection coordinate with the mesh defines WL_B_O_LD.
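The ray-based conditions ④ and ⑤ can be sketched with a standard ray–triangle test. The code below uses the Möller–Trumbore algorithm and the common parametric form r(t) = o + t·d with t > 0, which is a generic formulation and not necessarily the notation of this study. The helper names, the tolerance, and the small inward offset of the ray origin in the example are assumptions.

```python
def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a, b):
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def ray_triangle(origin, direction, tri, eps=1e-9):
    """Moller-Trumbore test: return the ray parameter t > eps of the hit, or None."""
    v0, v1, v2 = tri
    e1, e2 = _sub(v1, v0), _sub(v2, v0)
    p = _cross(direction, e2)
    det = _dot(e1, p)
    if abs(det) < eps:          # ray parallel to the triangle plane
        return None
    inv = 1.0 / det
    s = _sub(origin, v0)
    u = _dot(s, p) * inv
    if u < 0 or u > 1:
        return None
    q = _cross(s, e1)
    v = _dot(direction, q) * inv
    if v < 0 or u + v > 1:
        return None
    t = _dot(e2, q) * inv
    return t if t > eps else None


def first_hit(origin, direction, vertices, faces):
    """Return (t, point) of the nearest intersection along the ray, or None."""
    hits = []
    for face in faces:
        t = ray_triangle(origin, direction, [vertices[i] for i in face])
        if t is not None:
            hits.append(t)
    if not hits:
        return None
    t = min(hits)
    return t, tuple(origin[k] + t * direction[k] for k in range(3))


# Hypothetical wall bottom face (z = 0) and a ray cast downward from the window sill.
bottom_vertices = [(0, 0, 0), (4, 0, 0), (4, 0.2, 0), (0, 0.2, 0)]
bottom_faces = [(0, 1, 2), (0, 2, 3)]
hit = first_hit((1.0, 0.1, 1.0), (0.0, 0.0, -1.0), bottom_vertices, bottom_faces)
```

The ray origin is shifted to the middle of the wall thickness (y = 0.1) because a ray lying exactly in the front face is coplanar with that face and would only graze the bottom edge, which is numerically fragile.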

7.2. Dimension Generation for Wall Objects

- Semantic vertex detection

- Generation of labels and dimension helper meshes

- Creation of final dimension lines and numerical dimension values
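Once the semantic vertices are known, each wall dimension reduces to the distance between one pair of vertices. The sketch below follows the pairings listed in the wall dimension table of this article (for example, Wall_L = R_B_F − L_B_O); the function name `generate_dimensions` and all coordinate values are illustrative assumptions.

```python
import math

# Dimension name -> (end vertex, start vertex), following the wall dimension table.
WALL_DIMENSION_PAIRS = {
    "Wall_L": ("R_B_F", "L_B_O"),
    "Wall_H": ("L_T_F", "L_B_O"),
    "Wall_W": ("L_B_B", "L_B_O"),
    "Win_L": ("WR_B_F", "WL_B_O"),
    "Win_H": ("WL_T_F", "WL_B_O"),
    "Win_L_B_O_HD": ("WL_B_O", "WL_B_O_BD"),
    "Win_L_B_O_LD": ("WL_B_O", "WL_B_O_LD"),
}


def generate_dimensions(semantic_vertices, pairs=WALL_DIMENSION_PAIRS):
    """Compute each dimension as the Euclidean distance between a vertex pair."""
    return {
        name: math.dist(semantic_vertices[a], semantic_vertices[b])
        for name, (a, b) in pairs.items()
    }


# Hypothetical wall (4.0 x 0.2 x 2.7) with a 1.5 x 1.2 window opening.
wall_vertices = {
    "L_B_O": (0.0, 0.0, 0.0),
    "R_B_F": (4.0, 0.0, 0.0),
    "L_T_F": (0.0, 0.0, 2.7),
    "L_B_B": (0.0, 0.2, 0.0),
    "WL_B_O": (1.0, 0.0, 0.9),
    "WR_B_F": (2.5, 0.0, 0.9),
    "WL_T_F": (1.0, 0.0, 2.1),
    "WL_B_O_BD": (1.0, 0.0, 0.0),
    "WL_B_O_LD": (0.0, 0.0, 0.9),
}
wall_dimensions = generate_dimensions(wall_vertices)
```

Using the Euclidean distance instead of a single-axis difference keeps the result meaningful even when the object is not aligned with the world axes, provided the same vertex pair is identified.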

7.3. Example: Round Column with a Capital

- Outermost rectangular bounding box: First, the column object is aligned along the user’s viewing reference frame, specifically the X-, Y-, and Z-axes. Next, the definition of semantic vertices begins based on the outermost rectangular bounding box. The outermost bounding box automatically defines eight semantic vertices: Left–Bottom–Origin (L_B_O), Left–Bottom–Back (L_B_B), Right–Bottom–Front (R_B_F), Right–Bottom–Back (R_B_B), Left–Top–Front (L_T_F), Left–Top–Back (L_T_B), Right–Top–Front (R_T_F), and Right–Top–Back (R_T_B). To mark the coordinates of these eight semantic vertices, each vertex is assigned a unique index, and visualized labels and dimension helper meshes are generated. The origin of each label and the origin of each helper mesh are set to exactly coincide with the corresponding semantic vertex coordinates. The following figure illustrates an example of first-stage semantic vertex detection and visualization for a cylindrical column object. When the column object is aligned with the world coordinate axes, the X, Y, and Z coordinate values of each semantic vertex are defined as follows.

- Semantic vertex detection by X-axis scanning method: The X-axis directional scanning method is applied for second-stage semantic vertex detection. By referencing the outermost bounding box of the target object, a series of virtual cross-sectional planes is sequentially moved across the object from the leftmost to the rightmost side. At each scan position, the intersection lines between the plane and the object’s mesh are calculated. Among the vertical segments generated by these intersections, filtering and clustering are performed based on their height, position, and overlap ratio to extract semantic vertex candidates. In particular, for each scan plane, a stable set of vertical intersection lines is identified where the number of intersection points converges consistently along the vertical direction. These lines are defined as vertical intersection lines, and among them, the one with the lowest Z value is used to define two semantic vertices: the upper endpoint and the lower endpoint.

- ① On the left side of the column, the vertex with the maximum Z value is defined as CM_L_T_P.

- ② On the left side, the vertex with the minimum Z value is defined as CM_L_B_P.

- ③ On the right side of the column, the vertex with the maximum Z value is defined as CM_R_T_P.

- ④ On the right side, the vertex with the minimum Z value is defined as CM_R_B_P.

This axis-based scanning method allows for reliable semantic vertex detection even in objects with circular, bent, or asymmetric geometries. Furthermore, it can detect contact points or tangent lines based on actual geometric intersections, even for complex structures that are not perfectly aligned to a specific axis. Therefore, the scanning method achieves a higher detection reliability than simple outermost bounding box–based approaches. Figure 9 and Figure 10 show the results of first-stage and second-stage (scanning-based) semantic vertex detection, respectively, and Table 5 and Table 6 provide descriptions of the corresponding semantic vertex labels.

7.4. Dimension Generation for Column Objects

- Semantic vertex detection

- Generation of labels and dimension helper meshes

- Creation of dimension lines and numerical dimension values

8. Empirical Case Study

9. Discussion

1. Comparison with existing dimensioning systems

2. Interoperability

3. Accuracy of semantic vertex detection

4. Role of semantic vertices within the IDS/EIR-based BIM workflow

- ① Quantitative reference points for IDS constraints;

- ② A parametric basis for automated QTO and dimensional extraction;

- ③ An extendable interface toward AR verification workflows.

5. Future work: full round-trip validation and AR/MR deployment

- (1) Authoring and modification within native BIM tools;

- (2) IFC export;

- (3) Semantic vertex detection and dimension extraction;

- (4) IFC write-back or JSON-based metadata embedding;

- (5) Model re-import into an IDS-compliant viewer for rule-evaluation feedback.

10. Conclusions

- Outermost bounding-box detection effectively identified primary semantic vertices for structural objects.

- Semantic vertices not detected through bounding-box evaluation alone were successfully resolved using either reference vector exploration or axis scanning, selected depending on the object geometry.

- The scanning-based approach demonstrated stable and reliable performance for non-orthogonal, circular, and asymmetric shapes.

- The generated dimensions remained invariant under object rotation and realignment, confirming the coordinate-independent robustness of the proposed framework.

- Case studies of slabs, columns, beams, and walls verified that accurate dimensional outputs can be achieved using semantic vertex pairing alone, without dependence on auxiliary helper meshes.

- Semantic vertex labels serve primarily as visual indicators, whereas dimension helper meshes function as measurable reference points during dimension calculation.

- After generating the dimensions, the results could be stored in Blender custom properties or exported to external systems along with the dimension objects.
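The invariance claim rests on a basic property: the Euclidean distance between two paired vertices does not change under a rigid transformation. The short check below illustrates that property only; it assumes the same two semantic vertices are identified before and after the transformation, and the helper name `rigid_transform`, the angle, and the coordinates are made up for the example.

```python
import math


def rigid_transform(point, angle_rad, translation=(0.0, 0.0, 0.0)):
    """Rotate a point about the Z-axis and then translate it."""
    x, y, z = point
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    tx, ty, tz = translation
    return (c * x - s * y + tx, s * x + c * y + ty, z + tz)


# Hypothetical semantic vertices of a 4 m long wall.
l_b_o = (0.0, 0.0, 0.0)
r_b_f = (4.0, 0.0, 0.0)

length_before = math.dist(r_b_f, l_b_o)

angle = math.radians(37.0)
shift = (12.5, -3.0, 0.8)
length_after = math.dist(
    rigid_transform(r_b_f, angle, shift),
    rigid_transform(l_b_o, angle, shift),
)
```

Both lengths agree up to floating-point rounding, whereas a single-axis coordinate difference such as the X offset alone would change with the rotation angle.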

11. Patents

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AEC | Architecture, Engineering, and Construction |
| AR | Augmented Reality |
| BIM | Building Information Modeling |
| DHM | Dimension Helper Mesh |
| IDS | Information Delivery Specification |
| IFC | Industry Foundation Classes |
| LLMs | Large Language Models |
| QTO | Quantity Take-Off |
| UDSV | User-Defined Semantic Vertex |
| UVI | Unique Vertex Index |

Appendix A. Axis-Scanning-Based Semantic Vertex Detection Algorithm

Appendix A.1. Input Parameters

- M = (V, F): mesh vertices and faces

- axis ∈ {X, Y, Z}: scanning direction

- N: number of scan intervals (sampling resolution along axis)

- K: slicing planes per interval

- τ_len: minimum segment length threshold

- τ_overlap: clustering overlap threshold

- ε: extended scan boundary margin

- Output

- S_scan: detected semantic vertex set

- DHM_scan: optional helper-mesh instances at each vertex

Appendix A.2. Pseudocode (Word-Paste Stable Version)

Algorithm A1. Axis-Scanning-Based Semantic Vertex Detection

Appendix A.3. Key Clarification—Sampling, Not Continuous Scanning

Appendix A.4. Termination Condition

- Outer loop executes exactly N times (finite).

- Each interval processes only K slicing planes.

- No recursion is used.

- The algorithm always terminates.

Appendix A.5. Computational Complexity

- Time complexity: O(N · K · |F|) ≈ O(N · |F|)

- With BVH/spatial tree acceleration: ≈O(N · log|F|)

- Space complexity: O(|V| + |F| + S_i)

References

1. Maunula, A.; Smeds, R.; Hivernasalo, A. Implementation of Building Information Modeling (BIM)—A Process Perspective. In APMS 2008: Innovations in Networks; IFIP International Federation for Information Processing; Springer: Boston, MA, USA, 2008; pp. 379–386.

2. Gerbino, S.; Cieri, L.; Rainieri, C.; Fabbrocino, G. On BIM Interoperability via the IFC Standard: An Assessment from the Structural Engineering and Design Viewpoint. Appl. Sci. 2021, 11, 11430.

3. ISO 10303-21:2016; Industrial Automation Systems and Integration—Product Data Representation and Exchange—Part 21. ISO: Geneva, Switzerland, 2016.

4. Noardo, F.; Arroyo Ohori, K.; Krijnen, T.; Stoter, J. An Inspection of IFC Models from Practice. Appl. Sci. 2021, 11, 2232.

5. Akanbi, T.; Zhang, J. IFC-Based Algorithms for Automated Quantity Take-Off from Architectural Model: Case Study on Residential Development Project. J. Archit. Eng. 2023, 29, 05023007.

6. Hesselink, F.; Krijnen, T.; Pannekoek, E. Automatic Analysis and Quantity Estimation of Balcony Elements from IFC Files. In Proceedings of the CIB W78 Conference, Luxembourg, 11–15 October 2021.

7. Taghaddos, H.; Mashayekhi, A.; Sherafat, B. Automation of Construction Quantity Take-Off: Using Building Information Modeling (BIM). In Proceedings of the Construction Research Congress (CRC 2016), San Juan, Puerto Rico, 31 May–2 June 2016; American Society of Civil Engineers (ASCE): Reston, VA, USA, 2016; pp. 2218–2227.

8. Lee, G. What Information Can or Cannot Be Exchanged? J. Comput. Civ. Eng. 2011, 25, 1–9.

9. Ma, H.; Ha, K.M.E.; Chung, C.K.J.; Amor, R. Testing Semantic Interoperability. In Proceedings of the Joint International Conference on Computing and Decision Making in Civil and Building Engineering, Montreal, QC, Canada, 14–16 June 2006.

10. Noardo, F.; Krijnen, T.; Arroyo Ohori, K.; Biljecki, F.; Ellul, C.; Harrie, L.; Eriksson, H.; Polia, L.; Salheb, N.; Tauscher, H.; et al. Reference Study of IFC Software Support: The GeoBIM Benchmark 2019—Part I. Trans. GIS 2021, 25, 805–841.

11. Wu, J.; Sadraddin, H.L.; Ren, R.; Zhang, J.; Shao, X. Invariant Signatures of Architecture, Engineering, and Construction Objects to Support BIM Interoperability between Architectural Design and Structural Analysis. J. Constr. Eng. Manag. 2021, 147, 04020148.

12. Noardo, F.; Cavkaytar, D.; Arroyo Ohori, K.; Krijnen, T.; Ellul, C.; Harrie, L.; Biljecki, F.; Eriksson, H.; Polia, L.; Salheb, N.; et al. IFC Models for Semi-Automating Common Planning Checks for Building Permits. Autom. Constr. 2022, 134, 104097.

13. Seib, S. Development of Model Checking Rules for Validation and Content Checking. WIT Trans. Built Environ. 2019, 192, 245–253.

14. Alathamneh, S.; Collins, W.; Azhar, S. BIM-Based Quantity Take-Off: Current State and Future Opportunities. Autom. Constr. 2024, 165, 105549.

15. Monteiro, A.; Martins, J. A Survey on Modeling Guidelines for Quantity Take-Off-Oriented BIM-Based Design. Autom. Constr. 2013, 35, 238–253.

16. Valinejadshoubi, M.; Moselhi, O.; Iordanova, I.; Valdivieso, F.; Bagchi, A. Automated System for High-Accuracy Quantity Takeoff Using BIM. Autom. Constr. 2024, 157, 105155.

17. Chen, B.; Jiang, S.; Qi, L.; Su, Y.; Mao, Y.; Wang, M.; Cha, H.S. Design and Implementation of Quantity Calculation Method Based on BIM Data. Sustainability 2022, 14, 7797.

18. Löhr, F.; Gerber, A.; Stobbe, M. Semi-Automated Generation of Multi-Zone Thermal Models from Building-Information-Modeling Data. In Proceedings of the BauSIM 2022—9th IBPSA-Germany & Austria Conference, Weimar, Germany, 20–22 September 2022; pp. 1–6.

19. Gopee, M.A.; Prieto, S.A.; García de Soto, B. Improving Autonomous Robotic Navigation Using IFC Files. Constr. Robot. 2023, 7, 235–251.

20. Pauwels, P.; van den Bersselaar, E.; Verhelst, L. Validation of Technical Requirements for a BIM Model Using Semantic Web Technologies. Adv. Eng. Inform. 2024, 60, 102426.

21. Kim, Y.; Chin, S.; Choo, S. Rule-Based Automation Algorithm for Generating 2D Deliverables from BIM. J. Build. Eng. 2024, 97, 111033.

22. Akanbi, T.; Zhang, J.; Lee, Y.-C. Data-Driven Reverse Engineering Algorithm Development (D-READ) Method for Developing Interoperable Quantity Take-Off Algorithms Using IFC-Based BIM. J. Comput. Civ. Eng. 2020, 34, 04020036.

23. Fürstenberg, D.; Hjelseth, E.; Klakegg, O.J.; Lohne, J.; Lædre, O. Automated Quantity Take-Off in a Norwegian Road Project. Sci. Rep. 2024, 14, 458.

24. Zhang, S.; Zhang, S.; Liu, H.; Wang, C.; Zhao, Z.; Wang, X.; Yan, L. Semantic Enrichment of BIM Models for Construction Cost Estimation in Pumped-Storage Hydropower Using Industry Foundation Classes and Interconnected Data Dictionaries. Adv. Eng. Inform. 2025, 68, 103670.

25. Moreira, G.d.P.; Carvalho, M.T.M.; Roriz Junior, E. IFC-Based Automated Validation of Quantity Take-Off Requirements for Cost Estimation. SSRN 2025.

26. Isatto, E.L. An IFC Representation for Process-Based Cost Modeling. In Proceedings of the 18th International Conference on Computing in Civil and Building Engineering (ICCCBE 2020); Lecture Notes in Civil Engineering; Springer: Cham, Switzerland, 2021; Volume 98, pp. 519–528.

27. Zhang, S.; Pauwels, P. Cypher4BIM: Releasing the Power of Graph for Building Knowledge Discovery. Adv. Eng. Inform. 2021, 47, 101234.

28. Iranmanesh, S.; Saadany, H.; Vakaj, E. LLM-Assisted Graph-RAG Information Extraction from IFC Data. arXiv 2025, arXiv:2504.16813.

29. ACCA Software S.p.A. PriMus-IFC: Quick Start (User Manual); ACCA Software S.p.A.: Bagnoli Irpino, Italy, 2020.

30. Autodesk, Inc. Navisworks Manage—Quantification User Guide; Autodesk, Inc.: San Rafael, CA, USA, 2021.

31. Kreo Software. Kreo BIM Take-Off: AI-Driven Quantity Take-Off from IFC Models; Kreo: London, UK, 2022.

32. BEXEL Consulting. BEXEL Manager—5D BIM and Cost Management; BEXEL: Belgrade, Serbia, 2021.

33. RIB Software. CostX—Next Generation 2D and BIM Estimating Software; RIB Software: Stuttgart, Germany, 2020.

34. Cadwork Informatik. Cadwork 3D to 6D BIM for Timber Construction; Cadwork Informatik: Basel, Switzerland, 2021.

35. BlenderBIM Development Team. BlenderBIM Add-on Documentation; IfcOpenShell Community (Open-Source Project): Online, 2023.

36. buildingSMART International. IFC Schema Documentation (IFC2x3 and IFC4); buildingSMART International: London, UK, 2018.

37. Karlshoej, J. IFC Interoperability Issues in AEC Industry. Autom. Constr. 2013, 35, 164–177.

38. Applied Software Technology Inc. Auto-Dimensioning REVIT Models. U.S. Patent US10902580B2, 26 January 2021.

39. Zhang, C.; Beetz, J.; Weise, M. Interlinking Building Geometry, Topology and Semantics. Adv. Eng. Inform. 2015, 29, 550–558.

| Category | Semantic Vertex | Coordinate Definition (X, Y, Z) and Description |
|---|---|---|
| Object | L_B_O | X: min, Y: min, Z: min; left–bottom–origin vertex (origin corner) |
| | L_B_B | X: min, Y: max, Z: min; left–bottom–back vertex |
| | R_B_F | X: max, Y: min, Z: min; right–bottom–front vertex |
| | R_B_B | X: max, Y: max, Z: min; right–bottom–back vertex |
| | L_T_F | X: min, Y: min, Z: max; left–top–front vertex |
| | C_T_F | X: average of L_B_O and R_B_F (X-axis), Y: min, Z: max; center–top–front vertex |
| | C_T_B | X: average of L_B_B and R_B_B (X-axis), Y: max, Z: max; center–top–back vertex |
| Window | WL_B_O | Y: same as L_B_O, minimal vector-scalar distance; left–bottom–origin of window opening |
| | WR_B_F | X: max, Y: same as WL_B_O, Z: same; right–bottom–front of window opening |
| | WL_B_B | X: same as WL_B_O, Y: max, Z: same; left–bottom–back of window opening |
| | WL_T_F | X: same as WL_B_O, Y: same, Z: max; left–top–front of window opening |
| | WL_B_O_HD | X: same, Y: same, Z: min; first edge contact along (−) Z axis; measures vertical offset from window base to wall bottom |
| | WL_B_O_LD | X: min, Y: same, Z: same; first edge contact along (−) X axis; measures horizontal offset from window to left wall surface |

| Semantic Vertex | Coordinate Definition (X, Y, Z) | Semantic Vertex | Coordinate Definition (X, Y, Z) |
|---|---|---|---|
| L_B_O | X: min, Y: min, Z: min | R_B_F | X: max, Y: min, Z: min |
| L_B_B | X: min, Y: max, Z: min | R_B_B | X: max, Y: max, Z: min |
| L_T_F | X: min, Y: min, Z: max | R_T_F | X: max, Y: min, Z: max |
| L_T_B | X: min, Y: max, Z: max | R_T_B | X: max, Y: max, Z: max |

| Semantic Vertex | Coordinate Definition (X, Y, Z) | Description |
|---|---|---|
| WL_B_O | X: Min, Y: Min, Z: Min | Left–bottom–outer corner of the window opening (reference origin). |
| WR_B_F | X: Max, Y: Min, Z: Min | Right–bottom–front corner of the window opening (width reference). |
| WL_T_F | X: Min, Y: Min, Z: Max | Left–top–front corner of the window opening (height reference). |
| WL_B_O_BD | Same X, Y as WL_B_O; Z direction = WL_B_O − Depth | Depth offset reference point of the window origin (derived from WL_B_O origin). |
| WL_B_O_LD | Same Y, Z as WL_B_O; X direction = WL_B_O − Width offset | Left side offset reference point of the window origin (WL_B_O reference origin). |

| Dimension | Formula | Description |
|---|---|---|
| Wall_L | Wall_L = R_B_F − L_B_O | Wall length (distance between left–bottom–front and right–bottom–front vertices) |
| Wall_H | Wall_H = L_T_F − L_B_O | Wall height (distance between bottom and top along Z-axis) |
| Wall_W | Wall_W = L_B_B − L_B_O | Wall thickness (distance between front and back faces) |
| Win_L | Win_L = WR_B_F − WL_B_O | Window length (horizontal distance between left and right window edges) |
| Win_H | Win_H = WL_T_F − WL_B_O | Window height (vertical distance between bottom and top window edges) |
| Win_L_B_O_HD | Win_L_B_O_HD = WL_B_O − WL_B_O_BD | Distance from window base point to lower edge (downward direction from the window reference point) |
| Win_L_B_O_LD | Win_L_B_O_LD = WL_B_O − WL_B_O_LD | Distance from window base point to left edge (leftward direction from the window reference point) |

| Semantic Vertex | Coordinate Definition (X, Y, Z) | Semantic Vertex | Coordinate Definition (X, Y, Z) |
|---|---|---|---|
| L_B_O | X: min, Y: min, Z: min | R_B_F | X: max, Y: min, Z: min |
| L_B_B | X: min, Y: max, Z: min | R_B_B | X: max, Y: max, Z: min |
| L_T_F | X: min, Y: min, Z: max | R_T_F | X: max, Y: min, Z: max |
| L_T_B | X: min, Y: max, Z: max | R_T_B | X: max, Y: max, Z: max |

| Semantic Vertex | Coordinate Definition (X, Y, Z) | Description |
|---|---|---|
| CM_L_T_P | Scanned from the −X direction; endpoint of the candidate intersection line detected on the surface; Z: max | Upper-left vertex of the column shaft |
| CM_L_B_P | Scanned from the −X direction; endpoint of the candidate intersection line detected on the surface; Z: min | Lower-left vertex of the column shaft |
| CM_R_T_P | Scanned from the +X direction; endpoint of the candidate intersection line detected on the surface; Z: max | Upper-right vertex of the column shaft |
| CM_R_B_P | Scanned from the +X direction; endpoint of the candidate intersection line detected on the surface; Z: min | Lower-right vertex of the column shaft |

| Dimension | Formula | Description |
|---|---|---|
| Col_T_H | Col_T_H = L_T_P − L_B_O | Total height of the cylindrical column, including the capital |
| Col_C_W | Col_C_W = R_T_F − L_T_F | Width of the column capital (front view) |
| Col_C_D | Col_C_D = L_T_F − L_T_B | Depth of the column capital (side view) |
| Col_M_H_L | Col_M_H_L = CM_L_T_P − CM_L_B_P | Height of the left side of the column body (excluding capital) |
| Col_M_H_R | Col_M_H_R = CM_R_T_P − CM_R_B_P | Height of the right side of the column body (excluding capital) |
| Col_M_DI | Col_M_DI = CM_R_B_P − CM_L_B_P | Diameter of the cylindrical column body (base view) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Cho, J. Semantic-Vertex-Based Topological Detection for Automatic Dimension Generation in Building Information Modeling (BIM) with Industry Foundation Classes (IFC). Appl. Sci. 2026, 16, 139. https://doi.org/10.3390/app16010139
