1. Introduction

Defect detection on wood surfaces is a critical task in the furniture and woodworking industries, directly influencing product quality, customer satisfaction, and production efficiency. While most modern manufacturing lines have adopted automation in machining and finishing processes, visual quality inspection remains predominantly manual. Such human-dependent inspection is prone to inconsistencies, fatigue-induced errors, and reduced throughput, leading to both false acceptance (leakage) and false rejection (overkill) of products. The integration of automated optical inspection (AOI) systems into production lines offers a practicable path toward smart manufacturing, enabling real-time, objective, and rapid defect detection. Recent advances in artificial intelligence (AI), particularly deep learning (DL), have significantly improved image-based defect detection in various industrial domains [1,2]. However, despite the progress in generic defect detection, research on wood defect detection remains limited [1,3,4,5,6], primarily due to the complex texture patterns and wide variability in defect appearance.

Gabor functions are based on a sinusoidal plane wave with a particular frequency and orientation, which characterizes the spatial frequency information of the image. A set of Gabor filters with a variety of frequencies and orientations can effectively extract invariant features from an image. Due to these capabilities, Gabor filters are widely employed in image processing applications, such as texture classification, image retrieval, and wood defect detection [7]. Through multi-scale and multi-orientation design, Gabor filters extract rich texture features, making them particularly valuable for wood defect detection. They can capture slight texture variations on wood surfaces, aiding in the identification of defect locations and types. However, relying solely on Gabor filters for feature extraction may not be sufficient to handle the complexity and diversity of wood defects. Since wood defects exhibit diverse characteristics, more advanced feature learning and recognition techniques are required for accurate detection. To overcome this limitation, integrating Gabor filters with deep learning techniques has proven to be a highly effective strategy [8,9,10].

Convolutional neural networks (CNNs) are a deep learning architecture that leverages multiple layers of convolution and pooling operations to efficiently extract hierarchical features from images. CNNs have proven highly effective for tasks such as image classification, object detection, and segmentation [2]. Gabor convolutional networks (GCNs) integrate Gabor filters into CNNs, leveraging both the local feature extraction capability of Gabor filters and the feature learning and classification abilities of CNNs. This integration enhances the robustness of learned features against variations in orientation and scale [9]. However, despite these advantages, GCNs suffer from a more complex network architecture. Thus, optimizing the network architecture to enhance GCN performance and computational efficiency has become a valuable research topic.

The Taguchi method, developed by Dr. Genichi Taguchi, is a quality engineering approach primarily used for product design and process optimization. It employs design of experiments (DOE), particularly orthogonal arrays (OAs), to efficiently evaluate multiple factors affecting quality [11]. It also incorporates the signal-to-noise (S/N) ratio to measure system robustness, with the goal of reducing variation and improving product reliability. The Taguchi method further offers the following advantages: (i) Reduced experimental cost and time: by leveraging orthogonal arrays, the method significantly reduces the number of experimental runs while still achieving optimal design parameters. (ii) Systematic problem-solving approach: through parameter design and tolerance design, the method optimizes product performance during the development phase, thereby minimizing the need for costly modifications. Due to these benefits, the Taguchi method has been widely adopted in industries such as manufacturing, electronics, biomedical engineering, automotive, and semiconductor [11,12,13,14].

Generally speaking, the design of CNN and GCN involves numerous hyperparameters, such as convolutional kernel size, Gabor convolutional filters, pooling strategies, number of layers, and learning rate. Traditional hyperparameter optimization methods such as grid search and random search suffer from high computational costs and may fail to capture robust parameter settings under small-sample conditions. In contrast, the Taguchi method utilizes orthogonal arrays to efficiently select representative parameter sets, significantly reducing the number of experimental runs and computational costs. This advantage motivates the adoption of the Taguchi method for CNN and GCN optimization, as it not only reduces the computational burden of hyperparameter tuning but also enhances model robustness, convergence speed, and generalization ability. Furthermore, the Taguchi method is particularly well-suited for applications with limited data and computational resources, such as texture image analysis, industrial inspection, and intelligent surveillance systems. Therefore, it provides a highly efficient and systematic approach for optimizing CNN and GCN architectures.

Based on the aforementioned reasons and advantages, this study proposes a wood defect recognition model based on Gabor convolutional networks, integrating convolutional neural networks, Gabor filters, and the Taguchi method. The proposed GCN model employs the Taguchi method to optimize the network architecture. Furthermore, to address the issue of limited training samples, this study utilizes image tiling and data augmentation techniques to effectively increase the number of training samples, thereby enhancing the stability and accuracy of the model.

To address the challenges of detecting diverse wood surface defects with limited training data, this study employs a Taguchi-optimized Gabor convolutional network (GCN). The integration of Gabor filters with CNNs enables effective extraction of texture and hierarchical features, while the Taguchi method provides a systematic and efficient framework for hyperparameter optimization. This combination offers both accuracy and robustness, making it particularly suitable for wood defect detection tasks. The main contributions of this work are as follows:

- (i) Integration of interpretable texture feature extraction and deep feature learning through a GCN architecture specifically adapted for wood surface inspection.

- (ii) Systematic optimization of GCN hyperparameters using the Taguchi method, enabling high performance with reduced computational cost.

- (iii) Data augmentation and tiling strategies to overcome limited training data, enhancing model stability and generalization.

- (iv) Extensive comparative evaluation against a baseline CNN on the MVTec AD wood category dataset, demonstrating a 2.73% accuracy improvement.

The remainder of this study is organized as follows. Section 2 briefly reviews some studies on Gabor filters, CNNs, and their combination. The proposed optimization of Gabor convolutional networks using the Taguchi method and their application in wood defect detection are given in Section 3. Finally, Section 4 presents some conclusions of this study.

2. Literature Review

2.1. Gabor Filters

Gabor filters are widely recognized for their effectiveness in extracting spatial frequency and orientation information from images. The two-dimensional (2D) Gabor function, formulated as a Gaussian kernel modulated by a sinusoidal plane wave, enables multi-scale and multi-orientation analysis [5] and is commonly defined in its real-valued form as follows:

$$g(x, y) = \exp\left(-\frac{x'^2 + \gamma^2 y'^2}{2\sigma^2}\right)\cos\left(\omega x' + \psi\right) \tag{1}$$

with

$$x' = x\cos\theta + y\sin\theta \tag{2}$$

$$y' = -x\sin\theta + y\cos\theta \tag{3}$$

where $x$ and $y$ are the Cartesian coordinates, $\omega$ represents the frequency, $\theta$ denotes the orientation, $\sigma$ corresponds to the bandwidth (the standard deviation of the Gaussian function), $\psi$ indicates the phase offset, and $\gamma$ refers to the spatial aspect ratio. A set of Gabor filters with a variety of frequencies and orientations can extract invariant features from an image. This property makes Gabor filters particularly suitable for texture analysis tasks, including texture classification, content-based image retrieval, and industrial surface inspection [8,9,10].
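As an illustration of how such a filter bank can be built, the following minimal sketch samples a real-valued Gabor function of the form exp(−(x'² + γ²y'²)/(2σ²))·cos(ωx' + ψ) on a square grid. The function names `gabor_kernel` and `gabor_bank`, the kernel size, and all parameter values are assumptions chosen for illustration only; they are not the settings used in this study.

```python
import math

def gabor_kernel(size, omega, theta, sigma, psi=0.0, gamma=1.0):
    """Return a size x size real-valued Gabor kernel as a list of rows.

    Assumed form: exp(-(x'^2 + gamma^2 * y'^2) / (2 * sigma^2)) * cos(omega * x' + psi).
    `size` is expected to be odd so that the kernel has a central pixel.
    """
    half = size // 2
    kernel = []
    for row in range(size):
        y = row - half
        values = []
        for col in range(size):
            x = col - half
            # Rotate the coordinates by the orientation theta.
            x_r = x * math.cos(theta) + y * math.sin(theta)
            y_r = -x * math.sin(theta) + y * math.cos(theta)
            # Gaussian envelope multiplied by the sinusoidal carrier.
            envelope = math.exp(-(x_r ** 2 + (gamma * y_r) ** 2) / (2.0 * sigma ** 2))
            carrier = math.cos(omega * x_r + psi)
            values.append(envelope * carrier)
        kernel.append(values)
    return kernel

def gabor_bank(size, omegas, n_orientations, sigma):
    """Build one kernel per (frequency, orientation) pair."""
    thetas = [k * math.pi / n_orientations for k in range(n_orientations)]
    return [gabor_kernel(size, w, t, sigma) for w in omegas for t in thetas]

# Illustrative values only: 2 frequencies x 4 orientations -> 8 kernels of 7 x 7.
bank = gabor_bank(size=7, omegas=[math.pi / 2, math.pi / 4], n_orientations=4, sigma=2.0)
print(len(bank), len(bank[0]), len(bank[0][0]))
```

Each kernel in the bank responds most strongly to texture whose dominant frequency and direction match its own (ω, θ) pair, which is why a bank spanning several frequencies and orientations is used rather than a single filter.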

In the context of wood surface inspection, Gabor filters can capture subtle variations in grain patterns, facilitating the identification of defects such as scratches, discolorations, or holes. However, their handcrafted nature imposes limitations: the extracted features are fixed once the filter bank is designed, making them less adaptive to variations in illumination, defect shape, or environmental noise. Furthermore, when applied in isolation, Gabor-based methods often struggle with complex intra-class variability, leading to reduced robustness in unconstrained industrial settings.

2.2. Convolutional Neural Networks

In the field of machine vision, convolutional neural networks are the most widely used deep learning architecture; a representative example is the LeNet-5 architecture shown in Figure 1 [15]. This architecture comprises three primary types of neural layers: convolutional layers, pooling layers, and fully connected layers. The convolutional layers are responsible for extracting local features from images, while the pooling layers reduce image dimensions and network parameters. The fully connected layers transform the two-dimensional image representation into a one-dimensional vector. Finally, the output layer, utilizing the Softmax function as the activation function, determines the network’s final prediction by selecting the neuron with the highest activation.

Nonetheless, conventional CNN kernels are learned from data without explicit constraints on their frequency or orientation selectivity. While this flexibility can be advantageous in learning task-specific features, it may result in redundancy or suboptimal exploitation of structural priors in texture-rich domains such as wood surfaces. This limitation motivates the incorporation of domain-specific filters, such as Gabor filters, into CNN architectures to improve orientation-sensitive feature representation.

2.3. Integrating Gabor Filters with CNN Architectures

The integration of Gabor filters into CNNs has been investigated to combine the interpretable, orientation-sensitive feature extraction of Gabor filters with the deep, hierarchical feature learning capabilities of CNNs. Existing approaches can be broadly categorized into two strategies:

- (i) Pre-processing-based integration: Gabor filters are first applied to raw images to generate feature maps, which are then fed into a CNN for further processing [10,16,17]. This approach enhances the input representation but does not alter the CNN architecture.

- (ii) Kernel-substitution integration: Conventional convolutional kernels in the CNN are replaced with Gabor kernels, either fixed or learnable, enabling the network to perform Gabor-based filtering in its early layers [9,18,19]. This strategy embeds prior knowledge directly into the architecture, often improving robustness to variations in orientation and scale.

Empirical studies have demonstrated that Gabor-augmented CNNs (GCNs) can outperform conventional CNNs in tasks involving fine-grained textures, such as natural image classification (CIFAR datasets) [9], small-sample object detection [10], and print defect detection [17]. However, most prior work has relied on heuristic or trial-and-error tuning of Gabor parameters (e.g., frequency, orientation, phase offset) and CNN hyperparameters (e.g., kernel size, number of filters), which can be computationally expensive and may not yield globally optimal configurations.

2.4. Parameter Optimization in Deep Learning Using the Taguchi Method

The Taguchi method is a robust design optimization approach that utilizes orthogonal arrays and signal-to-noise (S/N) ratio analysis to identify optimal parameter configurations with a minimal number of experiments [11,12]. Originally developed for manufacturing process optimization, it has been successfully applied to domains such as electronics, biomedical engineering, and industrial quality control. In the context of deep learning, the Taguchi method offers a computationally efficient alternative to exhaustive hyperparameter searches, especially for scenarios with limited training data and resources.

Despite its advantages, the application of the Taguchi method to optimize deep learning architectures—particularly Gabor-based CNNs—remains underexplored. This represents a significant opportunity to systematically determine both Gabor filter parameters and CNN architectural hyperparameters, potentially leading to performance gains without incurring prohibitive computational costs.

3. Proposed Methodology

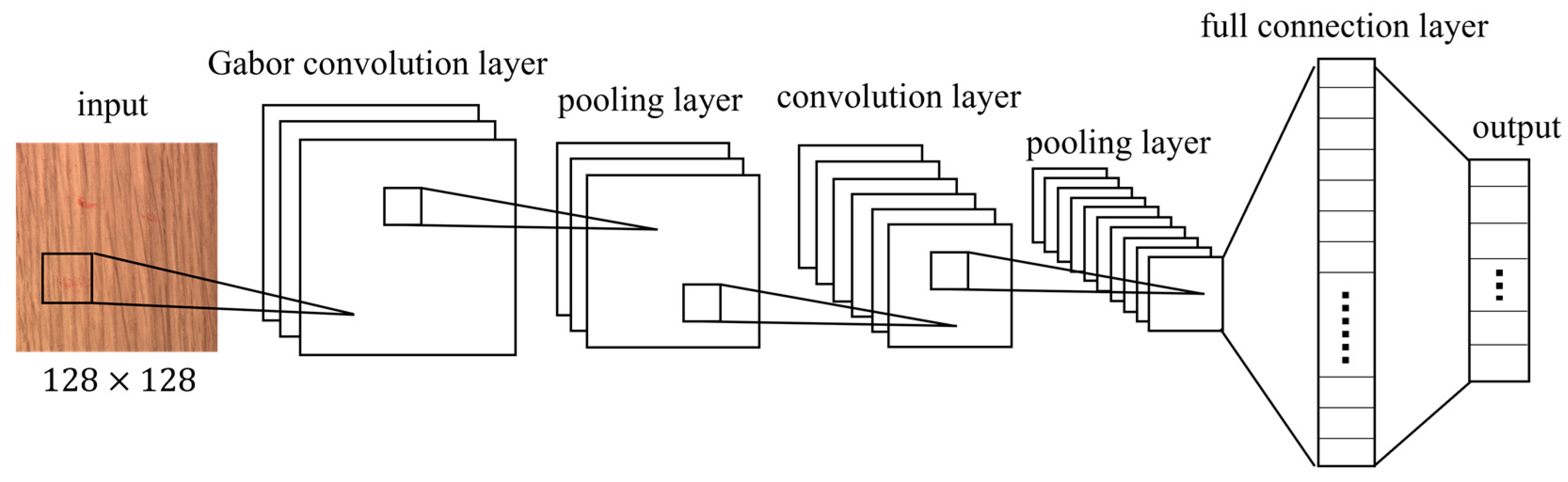

The architecture of the Gabor convolutional network (GCN) is illustrated in Figure 2. This design builds upon the foundational LeNet-5 architecture depicted in Figure 1. Notably, in the GCN architecture, the initial layer is a convolutional layer that employs Gabor kernels [20]. This modification preserves the filtering and feature extraction capabilities inherent to traditional convolutional layers. Additionally, it offers advantages such as reducing the need for extensive data preprocessing and enhancing the computational efficiency of the network.

This study utilizes the wood category from the MVTec Anomaly Detection (MVTec AD) dataset [17,18,19]. The MVTec AD dataset comprises 15 categories with 3629 images for training and validation and 1725 images for testing. The training set contains only defect-free images, whereas the test set contains both defective and defect-free images. Within this dataset, the wood category includes images of defects such as color anomalies, holes, liquid, and scratches, as illustrated in Figure 3.

Table 1 presents the number of images for each type within the wood category, where “Good” represents defect-free images. Note that the wood category of the MVTec AD dataset includes 247 defect-free images for training and validation and 19 for testing. Therefore, the total number of defect-free images, as represented in the “Good” category (second row of the table), is 266.

In the experimental setup, the model-building environment utilized PyTorch 2.3.0, Jupyter Notebook 7.0.6, and TensorFlow 2.10.0. The test environment comprised an Intel(R) Core™ i7-13700H CPU @ 3.40 GHz, 32.0 GB of RAM, an Nvidia GeForce RTX 3060 Ti GPU, and a Windows 11 64-bit operating system.

3.1. Model Selection

The design of an effective model for wood defect detection requires both domain-specific feature extraction and robust optimization of network parameters. In this study, a Taguchi-optimized GCN was selected over alternative approaches for three primary reasons.

First, the integration of domain knowledge and deep learning provides a strong foundation for surface inspection tasks. Gabor filters are particularly effective in capturing orientation- and texture-sensitive features of wood surfaces, while CNNs are well suited for extracting hierarchical and abstract representations. The combination of these two techniques forms a GCN architecture that is highly adapted to the characteristics of wood defect detection [2].

Second, the Taguchi method offers a systematic and efficient framework for hyperparameter optimization. Unlike exhaustive grid search or heuristic-based tuning, the Taguchi design of experiments requires significantly fewer trials, while still enabling robust and reproducible optimization. This advantage is particularly valuable in scenarios where computational resources are limited, and reliable factor-level evaluation is required [12,13,14].

Third, the method is especially suitable under limited data conditions. The MVTec AD wood dataset, although widely used, contains relatively few defect samples [21]. Under such circumstances, Taguchi’s structured design enables stable optimization outcomes and improved generalization without excessive computational burden.

Although other optimization strategies such as grid search and Bayesian optimization were considered, they would have required substantially more computational resources without guaranteeing superior performance. For these reasons, the Taguchi-optimized GCN represents a balanced choice that combines interpretability, efficiency, and robustness, making it particularly suitable for the wood defect detection problem addressed in this study.

3.2. Data Preprocessing

Due to the lack of training samples for wood defect detection, this study employs two approaches to increase the number of training samples and reduce computational load:

- (i) Image tiling: The original wood images are divided into smaller tiles to increase the number of defect samples while simultaneously reducing computational complexity. In this study, a 1024 × 1024-pixel image is tiled into 64 sub-images of 128 × 128 pixels. Furthermore, when the tiled images serve as input to the defect detection model, if a specific sub-image is classified as a certain defect category, the corresponding region in the original image can be marked as the defect location. This enables both defect classification and localization within wood images.

- (ii) Image augmentation: To enhance the diversity of training samples and expand the dataset, this study applies various image augmentation techniques, including rotation, horizontal translation, vertical translation, and random scaling.

After applying these two data preprocessing methods, the training dataset for this study consists of a total of 10,000 samples (2000 per category), while the test dataset contains 2000 samples (400 per category), as shown in Table 1. During the training process, 80% of the training samples are used for model training to optimize the model parameters, while the remaining 20% serve as the validation set to evaluate the model’s performance.
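The tiling step and the mapping from a tile back to its location can be sketched as follows. This is a minimal illustration that assumes a grayscale image stored as a list of rows; the function name `tile_image` and the pixel-value check standing in for the defect classifier are hypothetical placeholders, not the implementation used in this study.

```python
def tile_image(image, tile_size):
    """Split a 2D image (list of rows) into non-overlapping square tiles.

    Returns (top, left, tile) tuples so that a prediction made on a tile
    can be mapped back to its region in the original image.
    """
    height, width = len(image), len(image[0])
    tiles = []
    for top in range(0, height - tile_size + 1, tile_size):
        for left in range(0, width - tile_size + 1, tile_size):
            tile = [row[left:left + tile_size] for row in image[top:top + tile_size]]
            tiles.append((top, left, tile))
    return tiles

# A blank 1024 x 1024 image with one hypothetical "defect" pixel.
image = [[0] * 1024 for _ in range(1024)]
image[300][700] = 255

tiles = tile_image(image, 128)
print(len(tiles))  # 64 tiles of 128 x 128 pixels

# Placeholder for the classifier: flag any tile that contains the marked pixel.
defective = [(top, left) for top, left, tile in tiles if any(255 in row for row in tile)]
print(defective)  # [(256, 640)]
```

The returned offsets identify the 128 × 128 region of the original image that a flagged tile covers, which is how tile-level classification also yields a coarse defect location.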

3.3. Steps for Implementing the Taguchi Method

The Taguchi method is a systematic approach for optimizing process parameters by minimizing variability and improving robustness. It employs a structured experimental design using orthogonal arrays (OAs) to efficiently determine the optimal factor levels [10,11]. The following steps outline its implementation in optimizing a GCN or CNN for wood defect detection.

- (i) Define the problem and objective: The first step involves identifying the need for optimization, such as improving the accuracy of wood defect detection using a GCN or CNN. To achieve this, it is crucial to determine the key factors influencing model performance. These factors include convolutional kernel size, the number of filters, and Gabor filter properties like frequency, orientation, and phase offset.

- (ii) Select control factors and levels: After defining the problem, the next step is to choose the parameters (control factors) that will be optimized and assign appropriate levels to each. For instance, convolutional kernel size may have three levels: 3 × 3, 5 × 5, and 7 × 7, while the number of filters in different layers may vary across experiments. Proper selection of these factors ensures that the experimental design captures a wide range of potential improvements.

- (iii) Design the experiment using an orthogonal array: Instead of testing all possible combinations, which would be computationally expensive, an orthogonal array (OA) is selected to systematically conduct experiments with reduced trials. The OA helps distribute experiments evenly across factor levels, ensuring a balanced analysis.

- (iv) Conduct experiments and record performance: Each experimental configuration is implemented by training and testing the GCN or CNN under the selected parameter settings.

- (v) Calculate the signal-to-noise (S/N) ratio: To measure the robustness of each configuration, the signal-to-noise (S/N) ratio is calculated.

- (vi) Analyze results and determine optimal factor levels: Once the S/N ratios are computed, the average S/N ratio for each factor level is analyzed to determine the optimal combination. The best parameter settings are selected based on the highest S/N ratios, and response plots are generated to visualize their effects on model performance.

- (vii) Confirm the optimal configuration: The GCN is then retrained using the optimized parameters to validate its performance. A comparison with a baseline CNN is conducted to assess improvements in accuracy and computational efficiency. The results confirm whether the optimized model outperforms the traditional approach.

- (viii) Implement and verify performance: Finally, the optimized model is applied to real-world wood defect detection tasks. Further evaluations are conducted to ensure its robustness and generalization ability. If needed, additional refinements can be made to enhance the model’s effectiveness.
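The balance property mentioned in step (iii) can be checked directly. The sketch below is illustrative only: it builds a small 9-run, 4-factor, 3-level array with a standard modular construction (not the array used in this study) and verifies that every pair of columns contains each combination of levels equally often. The function names `l9_array` and `is_orthogonal` are assumptions introduced for this example.

```python
from collections import Counter
from itertools import combinations, product

def l9_array():
    """Construct a 9-run orthogonal array for 4 three-level factors (levels 0..2)."""
    return [(a, b, (a + b) % 3, (a + 2 * b) % 3) for a, b in product(range(3), repeat=2)]

def is_orthogonal(array, n_levels):
    """Check that each pair of columns contains every level pair equally often."""
    n_cols = len(array[0])
    expected = len(array) // (n_levels ** 2)
    for i, j in combinations(range(n_cols), 2):
        counts = Counter((row[i], row[j]) for row in array)
        if len(counts) != n_levels ** 2 or set(counts.values()) != {expected}:
            return False
    return True

array = l9_array()
print(len(array), is_orthogonal(array, 3))  # 9 True
```

Here 9 runs cover four three-level factors, whereas the full factorial design would need 3⁴ = 81 runs; the same principle underlies the larger arrays used for hyperparameter selection.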

3.4. Optimizing Gabor Convolutional Networks Using the Taguchi Method

This study employs the Taguchi method to optimize the architecture of the Gabor convolutional network. The control factors to be optimized, along with their corresponding levels, are shown in Table 2. Among these, three control factors, namely frequency ($\omega$), orientation ($\theta$), and phase offset ($\psi$), are related to the Gabor filter parameters, while the remaining five control factors are hyperparameters associated with the convolutional layers. As shown in Table 2, there is one factor with two levels and seven factors with three levels. According to the Taguchi method, the degrees of freedom (DOF) are calculated as the sum of (number of levels − 1) for each factor. Therefore, (2 − 1) + 7 × (3 − 1) = 1 + 14 = 15, resulting in a total of 15 degrees of freedom for the eight control factors. Consequently, an orthogonal array with at least 15 degrees of freedom is required for the Taguchi experiment. In this study, the L18 ($2^1 \times 3^7$) orthogonal array, as listed in Table 3, is selected, requiring 18 experimental runs to systematically evaluate the factor-level combinations. Without the orthogonal array, a total of 4374 ($2^1 \times 3^7$) experiments would be required. However, by applying the orthogonal array, only 18 experiments are needed in this study.
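The arithmetic above can be reproduced in a few lines. This is only a worked check of the numbers stated in the text; the variable names are illustrative.

```python
from math import prod

# One two-level factor and seven three-level factors, as stated for Table 2.
levels = [2] + [3] * 7

dof = sum(n - 1 for n in levels)   # degrees of freedom required by the factors
full_factorial = prod(levels)      # runs needed to test every combination
oa_runs = 18                       # runs in the L18 array
oa_dof = oa_runs - 1               # an N-run array provides N - 1 degrees of freedom

print(dof, full_factorial, oa_dof >= dof)  # 15 4374 True
```

The L18 array therefore has enough degrees of freedom (17) for the 15 required, while using 18 of the 4374 possible configurations.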

To reduce the influence of randomness and enhance reliability, each experimental configuration is independently executed 10 times. The accuracy of each independent trial is recorded, and the mean of these 10 trials is used to represent the experimental performance of that configuration, as shown in Table 4. For convenience, the average accuracy is also included in Table 3. Since the objective of applying the Taguchi method is to improve the recognition accuracy of the Gabor convolutional network, this study considers the “larger-the-better” quality characteristic. Therefore, the “larger-the-better” formulation of the Taguchi method is applied for the calculation of the signal-to-noise (S/N) ratio, as expressed in Equation (4):

$$S/N = -10\log_{10}\left(\frac{1}{n}\sum_{i=1}^{n}\frac{1}{y_i^2}\right) \tag{4}$$

where $y_i$ represents the $i$th observed value, and $n$ denotes the total number of observations.
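A minimal sketch of the larger-the-better S/N ratio, S/N = −10·log₁₀((1/n)·Σ 1/yᵢ²), is given below. The function name `sn_larger_the_better` and the accuracy values are hypothetical and serve only to show how the ratio rewards both high and consistent results.

```python
import math

def sn_larger_the_better(values):
    """Larger-the-better S/N ratio: -10 * log10((1/n) * sum(1 / y_i^2))."""
    n = len(values)
    mean_inverse_square = sum(1.0 / (y * y) for y in values) / n
    return -10.0 * math.log10(mean_inverse_square)

# Two hypothetical sets of accuracies (in percent) with the same mean of 98.
stable = [98.0, 98.0, 98.0]
variable = [96.0, 98.0, 100.0]

# The consistent configuration receives the higher S/N ratio.
print(sn_larger_the_better(stable) > sn_larger_the_better(variable))  # True
```

Because the ratio averages the inverse squares of the observations, low outliers are penalized more heavily than high outliers are rewarded, so two configurations with equal mean accuracy are ranked by their run-to-run consistency.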

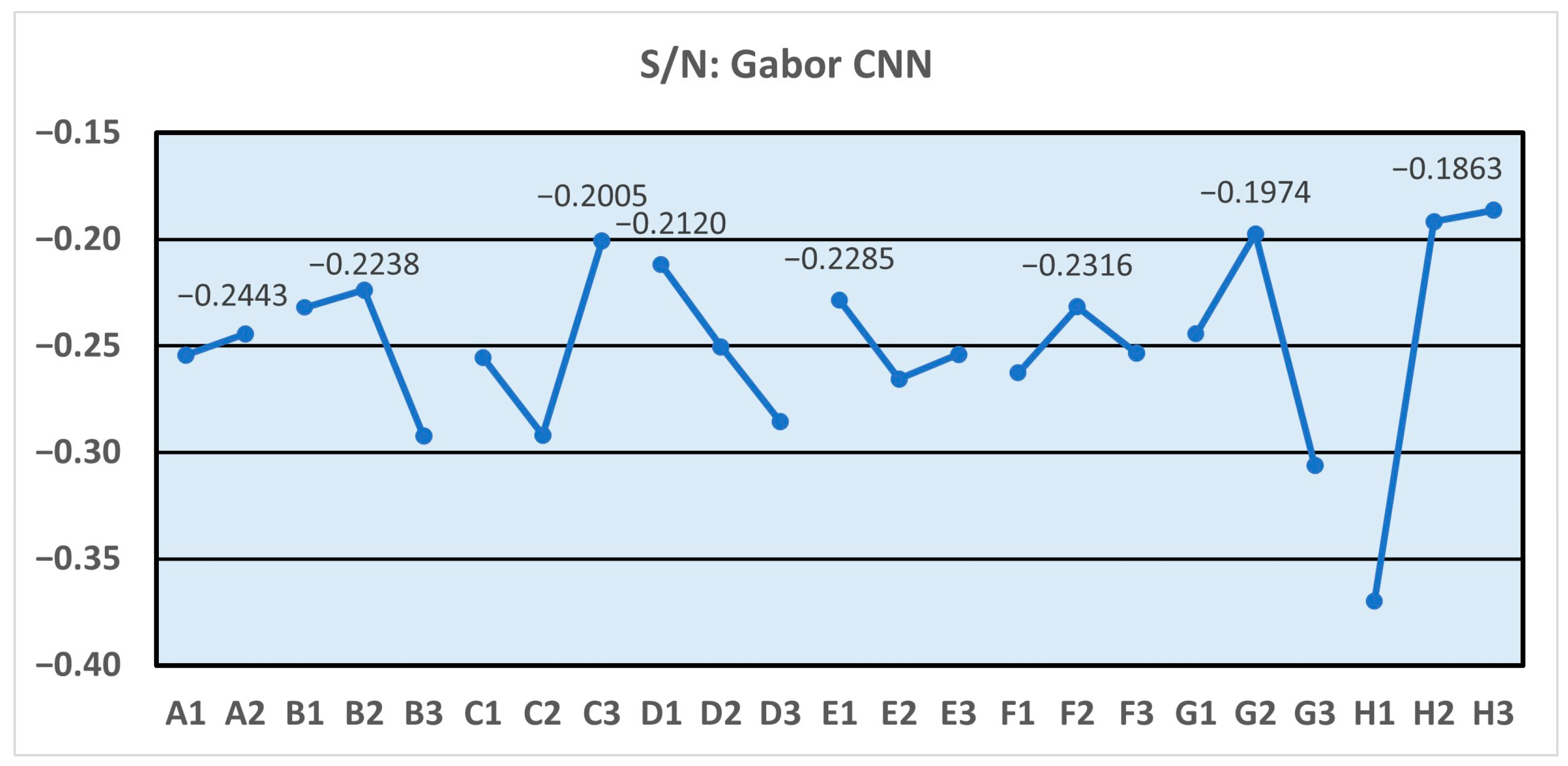

Based on the L18 orthogonal array and the recognition results of Table 4 (at the end of the paper), the S/N ratio for each experimental setup is listed in the last column of Table 3. The average S/N ratio of each factor and level is listed in Table 5. A higher S/N ratio indicates more stable quality. The optimal level of each factor is also displayed in the last row of Table 5.

Figure 4 presents the factor S/N ratio response plot, which illustrates the mean response values calculated for each control factor and its corresponding levels. Since the quality characteristic of this study follows the “larger-the-better” criterion, the optimal factor settings can be identified from the response plot in Figure 4 as follows: the pooling method is max pooling, the number of filters in the first convolutional layer is 256, the kernel size of the first convolutional layer is (7,7), the number of filters in the second convolutional layer is 128, the kernel size of the second convolutional layer is (3,3), the number of frequency components is 6, the number of orientations is 4, and the number of phase offsets is 3.
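The factor-level analysis behind such a response table can be sketched as follows. The example uses the small L4 (2³) orthogonal array and made-up S/N ratios purely for illustration; it does not reproduce the L18 experiment or the values of this study, and the function names `mean_sn_by_level` and `best_levels` are assumptions.

```python
def mean_sn_by_level(array, sn_ratios):
    """For each factor (column), average the S/N ratios of the runs at each level."""
    n_factors = len(array[0])
    table = []
    for f in range(n_factors):
        sums, counts = {}, {}
        for row, sn in zip(array, sn_ratios):
            level = row[f]
            sums[level] = sums.get(level, 0.0) + sn
            counts[level] = counts.get(level, 0) + 1
        table.append({level: sums[level] / counts[level] for level in sums})
    return table

def best_levels(table):
    """Pick, for each factor, the level with the highest mean S/N ratio."""
    return [max(levels, key=levels.get) for levels in table]

# L4 orthogonal array (3 two-level factors, 4 runs) with hypothetical S/N ratios.
l4 = [(1, 1, 1), (1, 2, 2), (2, 1, 2), (2, 2, 1)]
sn = [39.0, 39.4, 39.6, 39.8]

table = mean_sn_by_level(l4, sn)
print(best_levels(table))  # [2, 2, 2]
```

In this toy example the selected combination (2, 2, 2) is not one of the four runs that were actually executed, which illustrates why a confirmation run with the selected settings is part of the procedure.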

3.5. Optimizing Convolutional Neural Networks Using the Taguchi Method

In this study, the control group employs a traditional convolutional neural network (CNN) with an architecture similar to the Gabor convolutional network shown in

Figure 2. This network comprises two convolutional layers with corresponding pooling layers, followed by two fully connected layers. To optimize the CNN’s hyperparameters, the Taguchi method is applied, focusing on control factors and levels. The control factors and their corresponding levels are listed in

Table 6, while

Table 7 presents the L8 orthogonal array used in this study. The experimental results and S/N ratios are provided in

Table 8 and the last column of

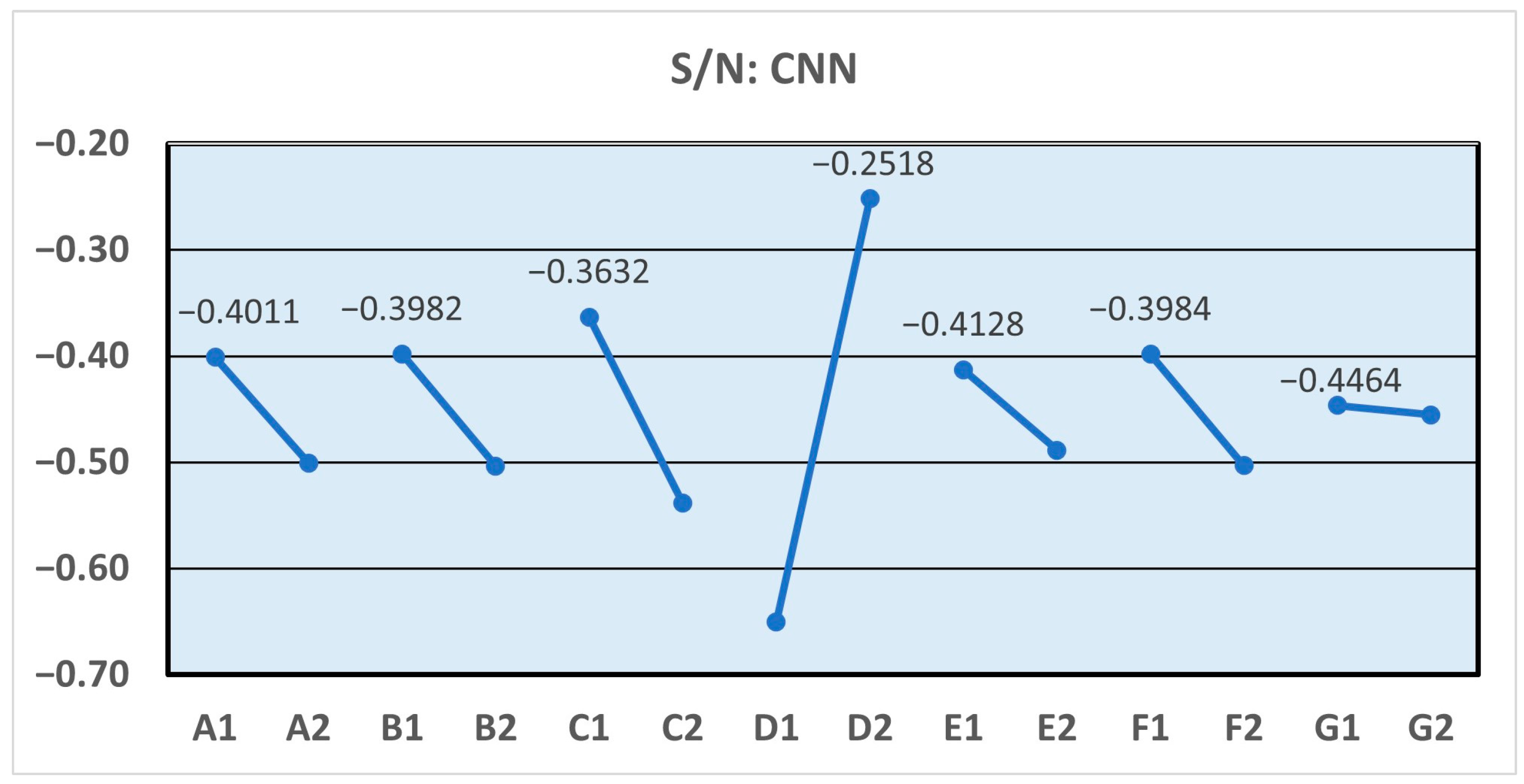

Table 7, respectively.

The average S/N ratio of each factor and level, along with the optimal parameter of each factor, is presented in Table 9. Additionally, Figure 5 depicts the corresponding Taguchi response plot, which illustrates the mean response values calculated for each control factor and its associated levels. Based on these results, the optimized control factors for the CNN are identified as follows: the pooling method is max pooling, the number of filters in the first convolutional layer is 128, the kernel size of the first convolutional layer is (3,3), the pooling size of the first pooling layer is 4, the number of filters in the second convolutional layer is 128, the kernel size of the second convolutional layer is (3,3), and the pooling size of the second pooling layer is 2.

3.6. Comparative Analysis of Optimal Factor Combinations

Table 10 presents the comparative results of the Taguchi-optimized Gabor convolutional network and the Taguchi-optimized convolutional neural network. The optimized GCN achieved an average accuracy of 98.92%, whereas the optimized CNN reached 96.19%. Beyond the accuracy difference of 2.73 percentage points, the GCN also demonstrated greater robustness, as indicated by smaller performance variations across the ten independent runs. This improvement can be attributed to the integration of Gabor filters, which enhance orientation- and texture-sensitive feature extraction, combined with the systematic parameter optimization achieved by the Taguchi method.

Furthermore, the S/N ratio analysis (Table 5 and Table 9) confirms that the GCN configuration yields higher stability under different factor-level combinations. For instance, the factor response plot in Figure 4 illustrates that the choice of kernel size and Gabor orientation exerts a significant influence on performance, with the optimized settings leading to consistently higher S/N values. These observations highlight that the Taguchi-based design not only improves mean accuracy but also enhances the reliability of the network under stochastic training conditions.

In summary, the comparative analysis underscores the superiority of the Taguchi-optimized GCN over the conventional CNN in terms of accuracy, stability, and computational efficiency. These findings demonstrate that incorporating interpretable texture filters with a robust design methodology provides a practical solution for wood defect detection in real-world manufacturing scenarios.