Abstract

Regular inspection and monitoring of buildings and infrastructure, collectively called the built environment in this paper, is critical. The built environment includes commercial and residential buildings, roads, bridges, tunnels, and pipelines. Automation and robotics can aid in reducing errors and increasing the efficiency of inspection tasks. As a result, robotic inspection and monitoring of the built environment has become a significant research topic in recent years. This review paper presents an in-depth qualitative content analysis of 269 papers on the use of robots for the inspection and monitoring of buildings and infrastructure. The review found nine different types of robotic systems, with unmanned aerial vehicles (UAVs) being the most common, followed by unmanned ground vehicles (UGVs). The study also found five different applications of robots in inspection and monitoring, namely, maintenance inspection, construction quality inspection, construction progress monitoring, as-built modeling, and safety inspection. Common research areas investigated by researchers include autonomous navigation, knowledge extraction, motion control systems, sensing, multi-robot collaboration, safety implications, and data transmission. The findings of this study provide insight into the recent research and developments in the field of robotic inspection and monitoring of the built environment and will benefit researchers, as well as construction and facility managers, in developing and implementing new robotic solutions.

1. Introduction

The built environment consists of human-made buildings and infrastructure such as commercial and residential buildings, bridges, roads, tunnels, storage tanks, and pipelines. These structures must be routinely inspected and monitored both during and after construction. They are monitored and assessed through regular inspections performed by different stakeholders, including owners, project managers, architects, engineers, contractors, sub-contractors, end users, and facility managers [1]. Irrespective of who performs the inspection, manual inspection is a time-consuming process and adds to the project cost [2]. Manual inspection is also characterized by subjectivity and a high degree of variability in the quality of assessment [3,4].

Some inspection tasks are difficult for humans to perform due to inaccessibility, e.g., confined spaces such as the inside of air-conditioning ducts [5,6], water-filled pipelines and tunnels [7], offshore structures [8], and small spaces in walls [9]. Some situations might be hazardous for humans, such as inspection at heights [10], of structures subjected to natural disasters [11], or of bridge decks [12]. Inspection and monitoring activities differ in their requirements and face different challenges. Therefore, not all types of inspection can be performed by the same robot. This review paper identifies robot types and various application subdomains within the domain of inspection and monitoring of the built environment and discusses their challenges.

Robots have been used in building and infrastructure projects in many ways—e.g., for concrete production, automated brickwork, steel welding, concrete distribution, steel reinforcement positioning, concrete finishing, tile placement, fireproof coating, painting, earthmoving, material handling, and road maintenance [13]. Robots with many different locomotion types and sensors have been used for the inspection of the built environment [3]. Some examples are unmanned aerial vehicles (UAV), unmanned ground vehicles (UGV), marine vehicles, wall-climbing robots, and cable-crawling robots, among others. Robotic inspection provides a safer alternative to manual inspection [14,15]. Automated robotic inspection improves the frequency of inspections and reduces subjectivity in detecting errors [16,17,18].

As described in this review, the challenges associated with developing and implementing robots for inspection and monitoring of the built environment are substantial. One of the biggest challenges is that buildings and infrastructure differ widely in design and intended use. Programming and designing a robot to operate in a wide range of environments is a very difficult task [3]. Furthermore, various inspection subtasks need completely different robot functionalities, such as defect detection [19], progress estimation [20], and resource tracking [21], among many others. During this review, it was identified that previous research can be categorized into distinct research areas based on the challenges it addressed. All these research areas are identified, and the findings are presented in this paper.

Rakha and Gorodetsky [22] presented a comprehensive review of the use of drones for building inspection. However, the current review includes all types of robots, including but not limited to drones. Lattanzi and Miller [3] reviewed the literature on the robotic inspection of infrastructure. They studied different types of robots based on their mobility. They also studied different methods developed by past researchers for improving the autonomy and damage perception of these robots. However, there have recently been numerous advances in the field of robotics and in its supporting technologies, such as computer vision and deep learning. In fact, a bibliometric analysis of the papers reviewed in this study revealed that more than 60% of the papers analyzed were published after 2017, when Lattanzi and Miller [3] published their review. The results of the bibliometric analysis are presented in Section 3. Furthermore, Bock and Linner published the handbook on Construction Robots [23]. They studied various robots in use in construction. While they briefly discussed inspection robots, they did not focus on the various research areas on the implementation of robots in inspection and monitoring of buildings and infrastructure, nor did they discuss specific application sub-domains of inspection and monitoring. They also did not perform a comprehensive review of all research conducted on this topic. This article synthesizes the findings of previous studies and offers a comprehensive review of current research on robotic inspection of the built environment. The goal of this research is to consolidate information from the most recent body of literature using the qualitative content analysis methodology and answer the following research questions:

- What types of robots have been studied in the literature on robotic inspection of buildings and infrastructure based on their locomotion?

- What are the prevalent application domains for the robotic inspection of buildings and infrastructure?

- What are the prevalent research areas in the robotic inspection of buildings and infrastructure?

- What research gaps currently exist in the robotic inspection of buildings and infrastructure?

This paper is structured as follows. First, the methodology used for this review is explained in Section 2. After that, the findings of the bibliometric analysis performed on the reviewed papers are presented in Section 3. Then, a critical review of different types of robots found in the literature is presented in Section 4. Next, various application domains are identified from the literature and are presented in Section 5. Then, common research areas are identified that have been addressed by different researchers in Section 6. These are the different challenges faced in the implementation of robotics for the inspection and monitoring of the built environment. After that, based on the research gaps in the literature identified during the review, future directions for research are provided in Section 7. Finally, the paper is concluded in Section 8 with a brief discussion of the implications and limitations of this study.

2. Research Methodology

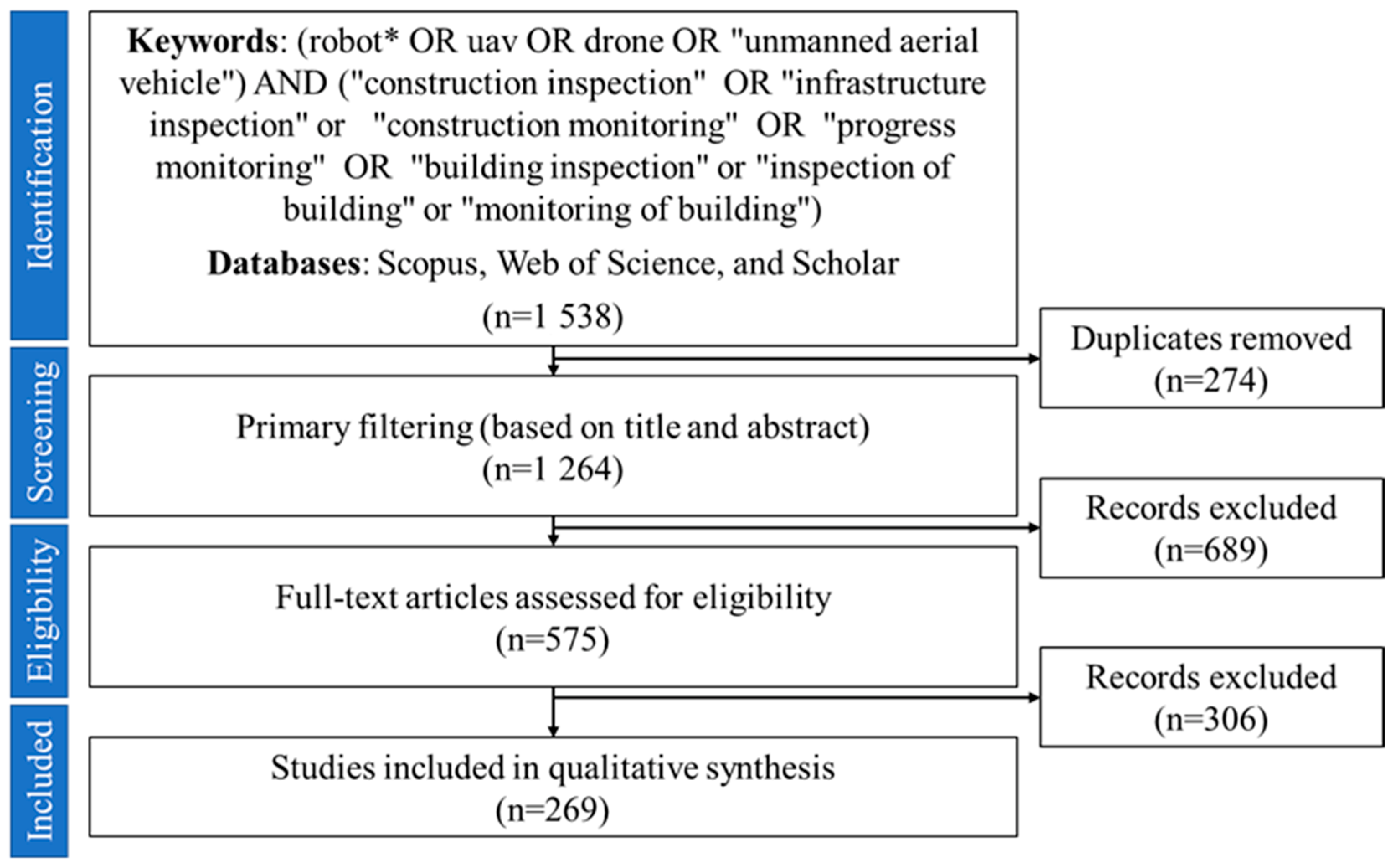

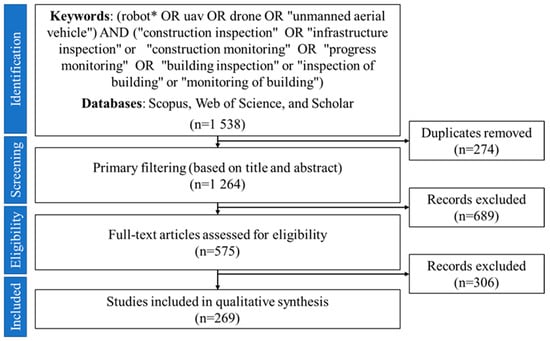

This review is based on bibliometric analysis and content analysis of the body of literature on the topic of using robots for inspection and monitoring. Previous literature review studies [3,24] have used the Web of Science (WoS) and Scopus databases to search for relevant papers on a topic. Both of these databases cover a large range of high-quality academic publications in the engineering and management domains [24]. To further ensure that all relevant papers are included in the review, the authors also used Google Scholar to find any relevant papers not indexed in WoS and Scopus. The keywords (robot* OR uav OR drone OR “unmanned aerial vehicle”) AND (“construction inspection” OR “infrastructure inspection” OR “construction monitoring” OR “progress monitoring” OR “building inspection” OR “inspection of building” OR “monitoring of building”) were used to search in all the fields of the publications. The star (*) symbol was used as a wildcard to include words such as “robots”, “robotic”, and “robotics”. After compiling the papers from all sources, 1538 results were generated in total (308 from WoS, 553 from Scopus, and 677 from Google Scholar). After removing the duplicates, 1264 sources were retained. The conditional formatting function in MS Excel was used to identify and remove duplicate entries.
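The merging and de-duplication step can be illustrated with a short script. The sketch below is not the procedure used in this review (which relied on conditional formatting in MS Excel); it assumes each exported record is a dictionary with hypothetical `title` and `source` fields, and it treats two records as duplicates when their normalized titles match.

```python
import re


def normalize_title(title: str) -> str:
    """Lowercase a title and collapse punctuation and whitespace into single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records):
    """Keep the first record seen for each normalized title."""
    seen = set()
    unique = []
    for record in records:
        key = normalize_title(record["title"])
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


# Hypothetical records standing in for exports from the three databases.
records = [
    {"title": "A mobile robot for inspection of power transmission lines", "source": "WoS"},
    {"title": "A Mobile Robot for Inspection of Power Transmission Lines.", "source": "Scopus"},
    {"title": "UAV-based bridge inspection", "source": "Google Scholar"},
]
unique_records = deduplicate(records)
print(len(records), "->", len(unique_records))
```

Title matching of this kind can miss duplicates whose titles differ between databases, so a manual check of the remaining entries would still be advisable.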

Preliminary shortlisting was performed by reading the titles and the abstracts of the papers. Only those publications were kept that utilized robots in some way for building and infrastructure inspection and monitoring. Papers on the use of robots for labor or construction jobs were not included. Research on general-purpose robots that were not specific to the built environment application was also removed. For the purpose of this review, a “robot” is any mobile system that can operate autonomously or manually and has sensors to navigate and collect data. Some examples of robots in the context of this study are unmanned aerial vehicles (UAVs), unmanned ground vehicles (UGVs), marine vehicles, microbots, wall-climbing robots, cable-suspended robots, and legged robots. Apart from the actual applications of robots, research on enabling technologies (such as computer vision) that make the use of robots more efficient and safer in the future while being independent of the type of robot has also been included in this review.

After preliminary filtering, 575 sources were shortlisted for further review. This was followed by a secondary filtering, in which the shortlisted papers were analyzed by reading the major sections to ascertain the overall theme. The criteria discussed above were used to include or exclude the papers. Finally, 269 papers remained for in-depth analysis. The remaining papers were reviewed using the qualitative content analysis methodology used by past researchers [24,25] to identify the application domains and research areas commonly studied in the literature. In this step, papers were tagged with custom keywords for classification. The resulting classes and categories are discussed in more detail in the following sections. The methodology for this review is illustrated graphically in Figure 1.

Figure 1. Methodology used for the selection of sources.
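The screening counts reported in this section can be tallied as a simple funnel. The sketch below uses only the figures stated in the text; the function name and stage labels are illustrative assumptions.

```python
# Counts reported in the text for each stage of source selection.
stages = [
    ("retrieved from WoS, Scopus, and Google Scholar", 308 + 553 + 677),
    ("after removing duplicates", 1264),
    ("after title and abstract screening", 575),
    ("after secondary filtering", 269),
]


def funnel(stages):
    """Return (label, count, removed_since_previous, share_of_initial) per stage."""
    initial = stages[0][1]
    previous = initial
    rows = []
    for label, count in stages:
        rows.append((label, count, previous - count, count / initial))
        previous = count
    return rows


rows = funnel(stages)
for label, count, removed, share in rows:
    print(f"{label}: {count} (removed {removed}, {share:.1%} of initial)")
```

Under these counts, 274 duplicates were removed, 689 sources were excluded at the title and abstract stage, and 306 were excluded during secondary filtering.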

3. Bibliometric Analysis

Bibliometry, or bibliometric analysis, is the application of mathematics and statistics to uncover structures and patterns of research in a certain area [26]. For this purpose, Biblioshiny, an open-source application running on R, was used. Biblioshiny is the web version of the bibliometrix tool developed by Aria and Cuccurullo [27]. It provides an intuitive web interface for performing bibliometric analysis [28]. Table 1 presents the descriptive statistics of the reviewed sources. The review included 269 documents from 185 different sources. Among these, 100 (37.17%) were journal articles, 163 (60.59%) were conference papers, and 6 (2.23%) were book chapters. The documents were written by 663 different authors. For this review, only documents written in English were considered.

Table 1. Descriptive statistics of the reviewed sources.

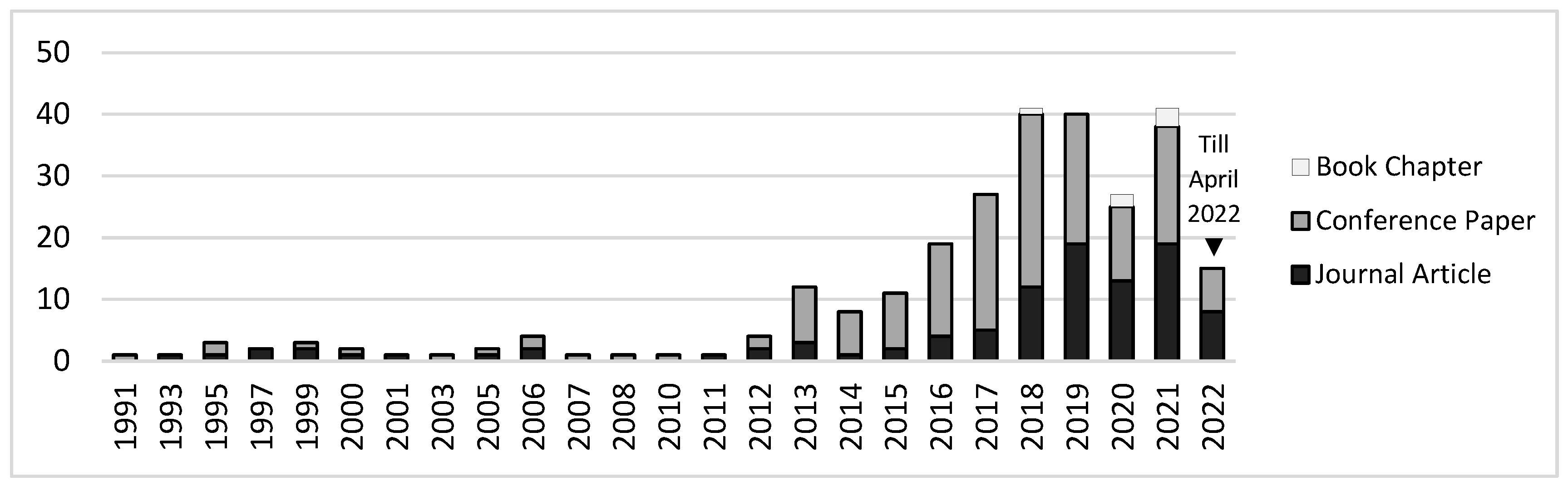

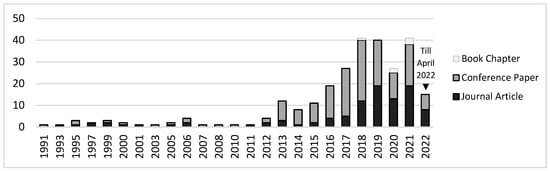

Figure 2 shows the publication output in the area of inspection and monitoring of buildings and infrastructure by the year of publication. The earliest source included in this review was published in 1991 and titled “A mobile robot for inspection of power transmission lines” [29]. Research in this area gained popularity only after 2012, as can be seen in Figure 2. The median publication year was found to be 2017, which means more than half of the papers were published in or after 2017. This again emphasizes the significance of our review paper compared to past reviews. From 1991 to 2021, an average year-on-year growth of 17% was noted in the publication output on this topic. This shows that more researchers are being attracted to this area of research. The consistently high output of publications in recent years shows that the topic of robotic inspection of the built environment is still relevant. This finding supports the need for this review.

Figure 2. Historical frequency of research.
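For readers who want to reproduce statistics of this kind, the sketch below shows how a median publication year and a growth rate can be computed from yearly counts. The counts are hypothetical and are not the data behind Figure 2. The text does not state whether the reported 17% is an arithmetic mean of yearly changes or a compound rate, so both are shown as assumptions.

```python
from statistics import median

# Hypothetical publication counts per year, for illustration only;
# these are NOT the counts behind Figure 2.
counts = {2015: 10, 2016: 12, 2017: 15, 2018: 18, 2019: 20}


def median_year(counts):
    """Median publication year, with each year repeated once per paper."""
    years = [year for year, n in sorted(counts.items()) for _ in range(n)]
    return median(years)


def mean_yoy_growth(counts):
    """Arithmetic mean of the year-on-year relative changes in output."""
    years = sorted(counts)
    rates = [
        (counts[b] - counts[a]) / counts[a]
        for a, b in zip(years, years[1:])
        if counts[a] > 0
    ]
    return sum(rates) / len(rates)


def compound_growth(counts):
    """Compound annual growth rate between the first and last year."""
    years = sorted(counts)
    first, last = years[0], years[-1]
    return (counts[last] / counts[first]) ** (1 / (last - first)) - 1


print(median_year(counts))
print(f"{mean_yoy_growth(counts):.1%}", f"{compound_growth(counts):.1%}")
```

The two growth measures generally differ, which is why it helps to state which one is being reported.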

4. Types of Robots

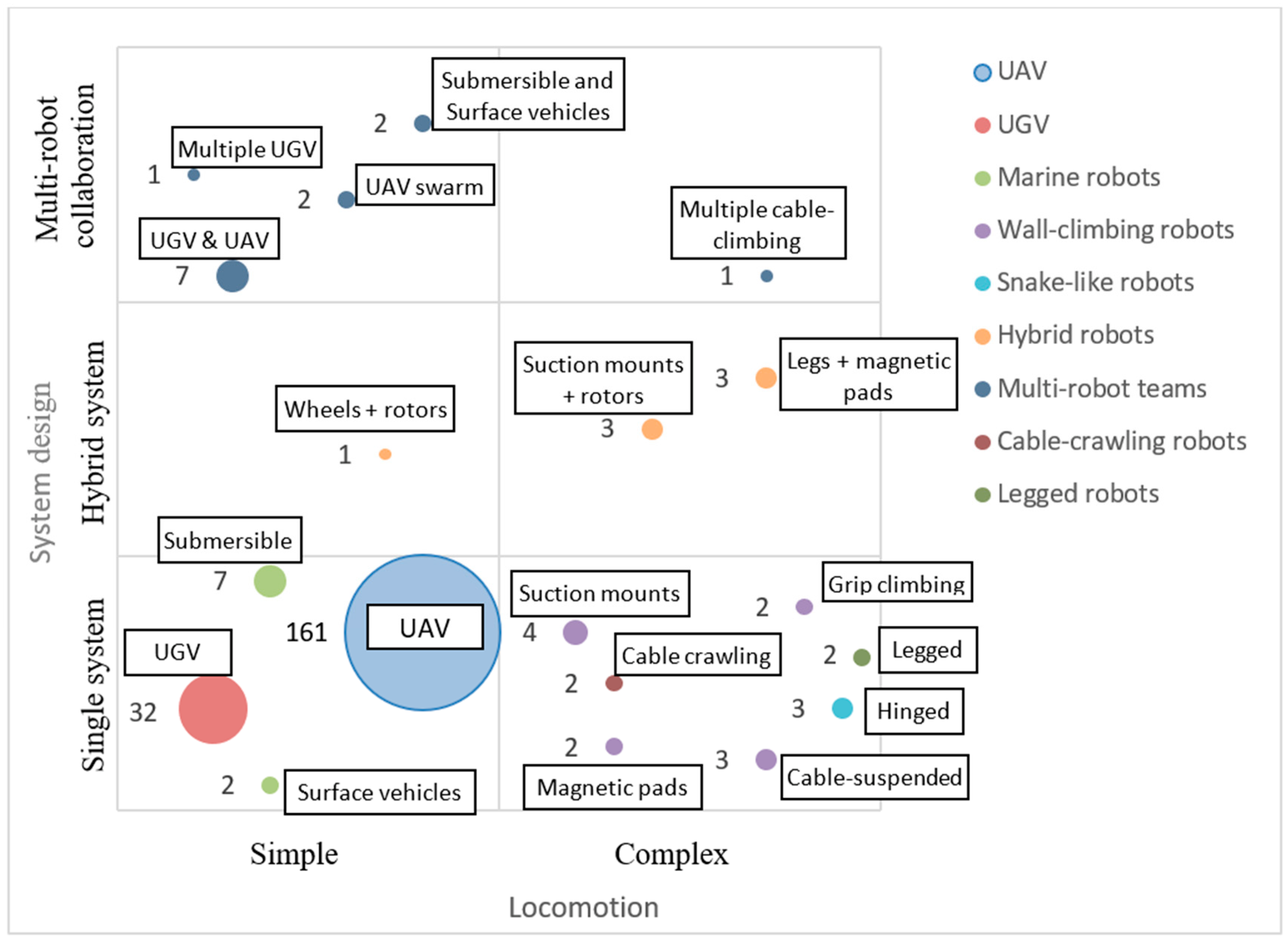

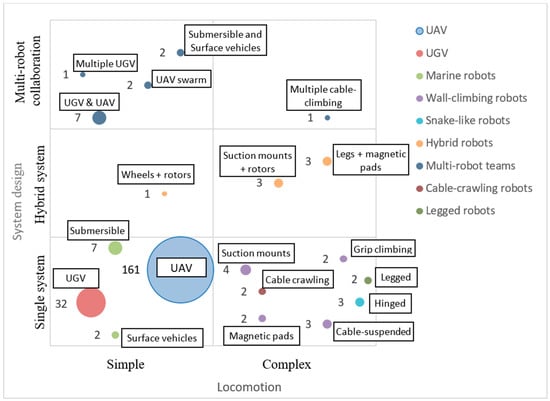

This section critically reviews the various types of robots used in research. Figure 3 shows the types of robots identified. The numbers in the figure denote the number of papers reporting the use of that type of robot. Most researchers used UAVs; because of their ability to reach places that humans cannot, they are useful tools for many inspection applications [30,31]. They provide valuable support for quickly and safely accessing the exterior facades of high-rise buildings and hard-to-reach places on bridge decks [32]. The second-most-common type of robot is the UGV. Unlike UAVs, which offer small payload capacities, ground-based robots such as wheeled robots offer much higher payload capacities and are useful tools for longer inspections of buildings [3].

Figure 3. Types of robots used in literature.

Some researchers used multiple types of robots in collaboration, e.g., a UAV with a ground robot [33,34]. Other researchers developed custom hybrid robots with more than one locomotion system [20,35,36,37]. These hybrid robots provide better reach and are more versatile than simple robots. For example, a wheeled robot with a rotor attached, developed by Lee et al. [20], can jump around obstacles and inspect both the interior and exterior of a building.

4.1. Unmanned Aerial Vehicle (UAV)

UAVs are by far the most commonly used type of robot for inspection and monitoring of the built environment, as found in the literature. According to the Association for Unmanned Vehicle Systems International (AUVSI), the market for UAVs is estimated to be $11.3 billion in the U.S. alone, which will grow to $140 billion in the next 10 years [38]. Examples of commercial UAVs used in research are DJI Phantom 3 [39], DJI Phantom 4 [40], Parrot AR.Drone 2.0 [41], DJI M600 Pro [42], DJI Matrice 100 [43], FlyTop FlyNovex [44], DJI Mavic Mini [45], DJI Mavic Pro [46], and Tarot FY680 [47]. The use of UAVs, also known as drones, started in military operations; however, they are now increasingly being used in the construction and maintenance of civil infrastructure for inspection and monitoring [30,48,49]. Their versatility and low operating and maintenance costs make them appealing to a wide range of sectors, including construction [50,51]. UAVs are a preferred tool for data collection because of their maneuverability and higher angles of measurement [52,53]. They are also very lightweight and take little time to set up. They can reach places that are difficult for humans to reach [51,54,55]. UAVs can also undertake at-height inspections, isolating humans from fall hazards [56,57]. UAVs are also faster than human inspectors [58]. High-rise towers with glass facades often get damaged after extreme weather events. These facades need to be inspected before re-occupancy of the building is allowed [59]. Due to their speed, UAVs can provide accurate information much faster and more frequently than humans [58,60]. UAVs can thereby reduce the cost and risk of inspection of the built environment [61].

UAVs are classified into two categories: fixed-wing and rotary-wing UAVs. While fixed-wing UAVs are faster, they cannot hover or take off vertically. Rotary-wing UAVs can take off vertically from any location, eliminating the need for a horizontal surface on which to gain speed [62]. The most popular rotary-wing UAV is the quadrotor, which has four rotors [63]. Quadrotors are agile and can hover in one place. They are, however, slower and have a shorter range than fixed-wing UAVs [62]. Because they can hover and take off vertically, rotary-wing UAVs are better suited for building applications. However, fixed-wing UAVs can be useful in long, linear infrastructure projects such as highways and railways. A relatively rare UAV type is the lighter-than-air platform; examples are balloons and kites [64,65]. These systems are substantially slower and have very little resistance to wind.

Typically, UAVs carry digital cameras as payloads to capture visual data from the site [66,67]. They can also carry other equipment, such as thermal cameras [68,69]. However, images or videos captured from UAVs can be noisy and require post-processing [66]. The design and payload of the UAV may vary based on the desired application [70]. The common use of UAVs in building inspections is to collect visual data by utilizing their onboard cameras [15,71]. UAVs are also commonly used for bridge and powerline inspections, because of the high risk of fall accidents in the manual inspection of bridges [72,73]. Lyu et al. [74] identified the following requirements for a UAV system for building inspection:

- UAVs should be able to hover anywhere to take photos.

- UAVs should be able to zoom in and focus on a small region of interest.

- UAV operation should be simple, so that the operator can get started without professional training.

- UAVs should have a long enough flight time to improve operational efficiency.

- UAVs should be capable of autonomous flight.

- UAVs should be small enough for transportation and maintenance.

A UAV can be operated either remotely or autonomously and also fly over difficult-to-access areas [75]. Researchers [72] have found that for large linear infrastructure projects, and with manual control of the UAV, frequent turning and climbing maneuvers can be exhausting for the UAV pilot. The quality of the images captured by UAVs also depends on the pilot’s performance [76]. This motivated the development of autonomous trajectory planning for UAVs [77,78]. Active collision avoidance for UAVs is required to avoid accidents on sites due to their use, and it is more complicated than that for autonomous ground vehicles [79]. Shared autonomy has also been developed, in which humans provide higher-level goals to the UAV, but lower-level control, such as keeping a safe distance from the building and avoiding objects, is conducted autonomously [80]. Shared autonomy reduces the cognitive load on the human operator while still utilizing their experience and expertise [80].
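The division of labor in shared autonomy can be sketched as a filter between the operator's command and the flight controller. The example below is a minimal illustration, not the controller described in [80]; the `Command` type, the function name, and all distances and speeds are hypothetical values chosen for clarity.

```python
from dataclasses import dataclass


@dataclass
class Command:
    forward: float   # m/s toward the facade (positive = approaching)
    lateral: float   # m/s along the facade
    vertical: float  # m/s upward


def shared_autonomy_filter(operator_cmd, distance_to_wall,
                           safe_distance=2.0, slow_zone=3.0):
    """Pass the operator's command through, limiting approach speed near the wall.

    Inside the slow zone, forward speed is scaled linearly down to zero at the
    safe distance. At or inside the safe distance, the UAV retreats at a fixed
    speed. All thresholds are hypothetical.
    """
    forward = operator_cmd.forward
    if distance_to_wall <= safe_distance:
        forward = -0.5  # retreat regardless of the operator's input
    elif distance_to_wall < safe_distance + slow_zone and forward > 0:
        scale = (distance_to_wall - safe_distance) / slow_zone
        forward = forward * scale
    return Command(forward, operator_cmd.lateral, operator_cmd.vertical)


operator_cmd = Command(forward=1.0, lateral=0.5, vertical=0.2)
far = shared_autonomy_filter(operator_cmd, distance_to_wall=10.0)
near = shared_autonomy_filter(operator_cmd, distance_to_wall=3.5)
too_close = shared_autonomy_filter(operator_cmd, distance_to_wall=1.5)
print(far.forward, near.forward, too_close.forward)
```

The operator keeps control of where the UAV goes along the facade, while the low-level rule that maintains a standoff distance never depends on the operator's attention.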

Although UAVs are efficient and versatile in an outdoor environment, they are of limited use in indoor situations because of visual obstructions [81]. Another challenge for UAVs is that GPS signals used by outdoor autonomous UAVs may become unreliable in indoor settings [82,83]. Many UAVs use other sensors to aid in navigation, such as gyroscopes, magnetometers, barometers, SONAR, and inertial navigation systems [65]. Vision-based localization and navigation have also been developed and tested [84]. These autonomous navigation and path-planning techniques for UAVs and other robots are discussed in detail in Section 6.1.

4.2. Unmanned Ground Vehicles (UGV)

UGVs, also known as rovers, are the simplest in design of all the robots discussed in this review. These robots work well on flat surfaces but perform poorly on cluttered surfaces [85]. UGVs can be wheel-driven, using pneumatic wheels to move [86], or crawler-mounted or tracked systems [87]. Crawlers, which are also used in military tanks and heavy equipment, have better traction on slick or wet terrain. If the surface conditions are suitable, wheeled UGVs are the most efficient in terms of power consumption, cost, control, robustness, and speed [85]. Owing to lower power consumption and longer runtime, UGVs can be used as human assistants for long inspection rounds [88]. Some examples of commercially available UGVs used for research are the Clearpath Jackal [89] and Clearpath Husky [90].

Due to their low center of gravity, UGVs are the most stable and can carry large payloads. Some common payloads attached to UGVs in the built environment for inspection and monitoring purposes are (a) regular fixed cameras and stereo cameras for visual data collection [90,91]; (b) LiDAR and laser scanners for 3D data capture [89,91]; (c) mechanical arms for obstacle removal [90]; (d) ground-penetrating RADAR (GPR), ultrasonic sensors, and infrared sensors for behind-wall and under-ground sensing [92,93,94,95]; (e) a graphics processing unit (GPU) for processing [89]; and (f) IMU, GPS, and UWB sensors for localizing and navigation [89,96].

UGVs have been used in a variety of situations other than buildings due to their ease of design and operation. They have been used for the inspection of bridge decks [89,97], storage tanks [98], HVAC ducts [5], and even sewer and water pipelines [99]. Owing to their adaptability, they can perform floor cleaning, wall construction, and wall painting in addition to inspection [90]. They make an excellent test bed for algorithms and sensor design due to their ease of operation.

The low height of UGVs is also a disadvantage because it limits their reach in large halls or spaces with high ceilings. As a result, UGVs are also used in close collaboration with other robots, such as UAVs. Such multi-robot collaborations are explained in more detail in Section 4.9.

4.3. Wall-Climbing Robots

Wall-climbing robots are used for the inspection of building facades, windows, or external pipes. Manual inspection of exterior utilities on a high-rise building is performed by human inspectors supported on a temporary frame suspended from the roof of the structure, which poses a huge safety risk to the human inspector [100]. A UAV can be the tool of choice for such applications because of its easy setup and flexibility. However, UAVs are susceptible to high winds and legal restrictions. Liu et al. [100] developed a cable-suspended wall-climbing robot for the inspection of exterior pipelines. Such robots are much safer and can carry larger sensor payloads. They can also be used for the external inspection of large above-ground storage tanks [101]. When supported by a single cable, as developed by [100], the robot can only move in the vertical direction. However, horizontal motion can be added by supporting the robot with two cables suspended from two different horizontally spaced points at the top of the structure [101].
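The geometry of the two-cable arrangement can be illustrated with a simple planar model. The sketch below is not the control method of [101]; it assumes a point-sized robot, taut and inextensible cables, and anchors at the two top corners of a flat wall, with hypothetical function names and dimensions.

```python
import math


def cable_lengths(x, y, width, height):
    """Cable lengths needed to place the robot at (x, y) on the wall.

    The anchors are assumed to sit at (0, height) and (width, height);
    y is measured upward from the base of the wall.
    """
    drop = height - y
    left = math.hypot(x, drop)
    right = math.hypot(width - x, drop)
    return left, right


def position_from_cables(left, right, width, height):
    """Recover (x, y) from the two cable lengths (intersection of two circles)."""
    x = (left**2 - right**2 + width**2) / (2 * width)
    drop_squared = left**2 - x**2
    if drop_squared < 0:
        raise ValueError("cable lengths are inconsistent with the anchor spacing")
    return x, height - math.sqrt(drop_squared)


# Hypothetical wall 10 m wide and 30 m tall; target point 3 m across and 26 m up.
left, right = cable_lengths(3.0, 26.0, width=10.0, height=30.0)
x, y = position_from_cables(left, right, width=10.0, height=30.0)
print(left, right, x, y)
```

Shortening one cable while lengthening the other moves the robot sideways, which is the horizontal motion that a single cable cannot provide.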

Other methods of wall-climbing include grip-climbing using mechanical actuators and springs to create gripping action [102]. These robots do not require any pre-installed infrastructure to climb the vertical structure. However, they need surface protrusions to grip while climbing and are not suitable for smooth surfaces.

Magnetic climbing robots use electromagnets controlled by circuits to climb steel structures [103]. The power needed to operate the magnets in these robots is very low compared with that needed by UAVs, and they can hold a position indefinitely [103]. A similar robot was developed by [104] with magnetic tracks. The robot was designed to inspect the interior of large steel storage tanks. Magnetic climbers are susceptible to losing traction on the surface due to dust or unevenness, which can be dangerous [105]. Therefore, both grip-climbing and magnetic-climbing robots are limited to special types of surfaces.

Owing to the need for dedicated infrastructure or a special type of surface in the previously mentioned wall-climbing robots, researchers have also developed suction-mounts that can stick to a wider range of wall surface types. The Alicia robot was developed by [106] as three circular suction mounts linked together by two arms. The mounts are sealed by special sealants to maintain negative pressure and develop strong adhesion with the wall surface. A similar design was seen in [107]. Their robot Mantis was also designed as three suction modules joined by two links used for the inspection of window frames. When moving from one frame to another, one of the modules would detach and go over the frame followed by the other two modules one-by-one. Kouzehgar et al. [107] acknowledged that cracked glass windows may create a dangerous situation for their robot; therefore, they also developed a crack-detection algorithm to avoid attaching to cracked surfaces. In contrast, the ROMERIN robot developed by [108] used six inter-linked suction cups and turbines, instead of pumps, to create stronger air flow and negative air pressure. The use of many suction cups/mounts and high air-flow turbines provided the robot resilience against cracked surfaces [108].

4.4. Cable-Crawling Robots

Cable-crawling robots have a niche area of application in the inspection of stay cables in cable-stayed and suspension bridges [109]. These robots are different from the cable-suspended robots used for wall-climbing explained in Section 4.3. They do not need additional infrastructure to navigate but crawl along the existing steel cables in bridges through the use of drive rollers [110]. Although drones can also be used to safely inspect these bridge cables at high altitudes, drones need to maintain a minimum safe distance to avoid collision [109]. Images captured from a distance do not provide enough clarity to detect micro-cracks on the cable surface [109]. Additionally, cable-crawling robots equipped with multiple cameras, as in [109], can inspect the whole surface of the steel cables instead of only one side at a time.

A similar cable-crawling robot was also developed by [110] in Japan for the inspection of the steel cables of suspension bridges. Their design was similar to that of [109] but used two modules. Their robot consisted of a base platform that crawled along the main cables of the bridge connecting the support towers and a tethered camera module that was lowered from the base platform for the inspection of the hanging ropes. The actual inspection was carried out by the camera module suspended from the base platform, while the base platform provided horizontal locomotion along the bridge.

4.5. Marine Robots

Marine robots are used for the inspection of marine structures such as bridge piers, dam embankments, underwater pipes, and offshore structures. Two types of marine robots are found in the literature: (a) submersible robots, also known as unmanned underwater vehicles (UUVs) [111] or autonomous underwater vehicles (AUVs) [112]; and (b) unmanned surface vehicles (USVs), sometimes called surface water platforms (SWPs) [113]. USVs and UUVs are sometimes grouped together under the single term unmanned marine vehicles [114].

Submersible robots, as the name suggests, can be fully submerged in water and can perform inspection under water. They are also sometimes referred to as underwater drones [115]. Underwater inspection is conventionally performed by human divers, which is costly, labor-intensive, and unsafe [116,117]. With UUVs, positioning and navigating the robot is a huge challenge because most sensing and positioning techniques (GPS, fiducials, UWB, visual odometry, etc.) used on the ground are not viable underwater [111]. Dead reckoning techniques using inertial navigation, as explained later in Section 6.1.1, can be used for underwater navigation, but their accuracy deteriorates with time due to drift [111]. Optic and acoustic systems, which measure distance from reflected light or sound waves, are also used [8]. However, inertial systems do not rely on information from outside and therefore are not affected by underwater rocks or marine life, unlike optic and acoustic systems [8].
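The drift that limits inertial dead reckoning can be demonstrated with a one-dimensional example. The sketch below is a simplified illustration and not a navigation algorithm from the cited works; it assumes a stationary vehicle, a constant accelerometer bias, and hypothetical values for the bias and sampling interval.

```python
def dead_reckon(accel_samples, dt, bias=0.0):
    """Integrate 1-D acceleration twice to estimate position over time.

    A constant sensor bias is added to every sample to mimic an
    uncalibrated accelerometer.
    """
    velocity = 0.0
    position = 0.0
    track = []
    for accel in accel_samples:
        velocity += (accel + bias) * dt
        position += velocity * dt
        track.append(position)
    return track


dt = 0.1                 # seconds between samples (hypothetical)
samples = [0.0] * 600    # 60 s of a vehicle that is not moving at all
drift = dead_reckon(samples, dt, bias=0.01)  # hypothetical 0.01 m/s^2 bias
ideal = dead_reckon(samples, dt, bias=0.0)

print(f"estimated position after 10 s: {drift[99]:.2f} m")
print(f"estimated position after 60 s: {drift[599]:.2f} m")
```

With these assumed values, the position error is about 0.5 m after 10 s and about 18 m after 60 s. The error grows roughly with the square of elapsed time, which is why inertial estimates are usually corrected with another reference when one is available.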

Unmanned surface vehicles, on the other hand, operate on the surface of a water body. They are low-cost devices that can facilitate safer inspection of marine structures. USVs are often designed as large devices so that they are stable in rough waters, though small USVs have also been developed and tested. The use of waterproof cameras and other equipment is a crucial design consideration for USVs [118]. Although USVs have so far been used primarily in military applications, the construction industry can also benefit greatly from their application. Bridge inspection is one of the largest areas where USVs can be utilized because, of the 575,000 bridges in the US, 85% span waterways [119].

4.6. Hinged Microbots

Hinged microbots are made up of several tiny body sections hinged together. These robots imitate the movements of snakes or worms. Instead of wheels, they move by using multiple motorized joints along their body. They can move by contracting and stretching their bodies like worms, or by sidewinding like snakes [120]. Various actuation techniques for these robots have previously been investigated, such as piezoelectric, hydraulic, pneumatic, and electrical micro-actuators [9]. Electrical micro-motors have been found to provide the best speed and power.

The main advantage of hinged microbots is that they are lighter and smaller in size than other types of robots [9]. They are only a few cubic centimeters in size. As a result, they are appropriate for inspecting sewer, gas, water, or other pipes [9,120]. However, due to size limitations, they can only carry a limited amount of data-collection equipment. They are most often equipped with cameras on their heads [120]. Pipeline inspection is also complicated by a limited and unreliable network. Owing to unreliable communication within pipelines, Paap et al. [120] developed autonomous path-finding for their robot that can navigate sewer pipes even if there is no communication with the operator. With the advancement in machine learning techniques, Lakshmanan et al. [121] created a path-planning method for their hinged microbot based on reinforcement learning that quickly finds the best path with the least energy requirement.

4.7. Legged Robots

Legged robots are relatively new compared with other types of robots used for the inspection and monitoring of the built environment. They move using mechanical limbs controlled by multiple motors in each limb. Legged robots may have two legs (bipedal) [122], four legs (quadrupeds) [123], or even six legs (hexapods) [124]. They have the adaptability and mobility to traverse various types of terrain, making them well-suited for construction sites [123]. Numerous legged robots have been developed by NASA, MIT, IIT, ETH, Boston Dynamics, Ghost Robotics, ANYbotics, and Unitree [125]. However, due to high technical complexity, very few have been used outside of a laboratory setting [125]. Because few commercial legged robots are available in the market, the literature on these in the construction domain is also sparse. A major advantage of using legged robots over UGVs is that the former can traverse stairs, which facilitates multi-story inspections [126].

4.8. Hybrid Robots

A hybrid class of robots uses more than one locomotion mechanism to navigate. These are special robots that are developed for specific problems and are not commercially available for general use. A simple hybrid robot is a wheeled robot with additional rotors on top, as developed by [20]. In such a robot, the wheels provide stability on a flat surface indoors, whereas the rotors assist in inspection at height, obstacle avoidance, and floor change.

Another hybrid robot, developed by [127], adds rotors to a wall-climbing robot. As discussed above, wall-climbing robots using suction or magnetic mounts can be useful for flat exterior surfaces. However, protrusions on the façade such as columns and mullions limit the maneuverability of wall-climbing robots. Rotors allow the robot to skip over these protrusions easily without any additional infrastructure, such as cables, thereby extending the reach of wall-climbing robots. A similar robot developed by [128] used wheels with an adhesive coating. The rotors provided not only the vertical thrust but also horizontal thrust to maintain contact with the structure. The wall-sticking mechanism can also be provided through the use of electro-magnets, as done by [37]. Electro-magnets can be more stable against strong winds; however, they may only work with steel structures and not with concrete or glass surfaces.

Another example of a hybrid robot, provided by [35], consists of legs with magnetic padding. The magnetic legs can not only climb steel elements for at-height inspections but can also walk over wall protrusions. The strong electro-magnets also provide some fall protection against high winds. However, this type of robot may not work on glass and ceramic surfaces. A similar robot with six magnetic legs was developed by [129]. The robot resembles a spider in appearance and climbs walls for at-height inspection. Such complex robots require complex control systems and circuitry to control the legs and their magnetism in tune with the walking motion, which is a separate research area.

4.9. Multi-Robot Systems

Researchers have also used teams of multiple robots of the same or different types for inspection and monitoring of the built environment. The robotic system developed by [130] comprised two quadruped robots working in a “so-called” master-slave relationship. The master robot was responsible for mission planning, task allocation, and comprehensive report generation and carried a high-performance computing unit for these purposes. The slave or the secondary robot carried other payloads such as a thermal camera, robotic arm, and long-range Lidar along with a smaller computing unit for running local control algorithms. Such systems can carry larger payloads without the need for an over-size robotic platform. The primary focus of the research with multiple robots is the development of multi-robot collaboration strategies.

Another type of multi-robot system used by researchers is a team of unmanned aerial and unmanned ground vehicles. In the multi-robot system developed by [33], the wheeled robot on the ground carried the sensors for mapping the environment, while the UAV provided a wider view of the area from a higher vantage point for better path planning and navigation. A similar approach was undertaken by [34,131,132], in which the UAV provided an initial scan of the area for the identification of obstacles and occlusions. Based on the initial scan by the UAV, the optimum scan locations were selected for the wheeled robot for higher-quality and longer scans.

Multi-UAV swarms have been used by Khaloo et al. [133] and Mansouri et al. [134] independently. Large infrastructure projects, such as gravity dams and linear transportation infrastructure, can be too large for a single UAV to inspect in a single mission and require mid-mission recharging. Utilizing multiple UAVs together may reduce the time for data collection while covering a large area. In studies related to multiple UAVs where each robot inspects a part of the target structure, combined path planning and the fusion of data from multiple sources become the primary research focus [135]. This also applies to other multi-robot systems.

Finally, a team of underwater robots and USV has been independently developed by Ueda et al. [136], Yang et al. [137], and Shimono et al. [113] for the underwater inspection of dams and bridge piers. In these studies, the USV provided horizontal navigation from the surface of the water body and lowered the submersible robot suspended by cables or winch for closer inspection under the water.

5. Application Domain

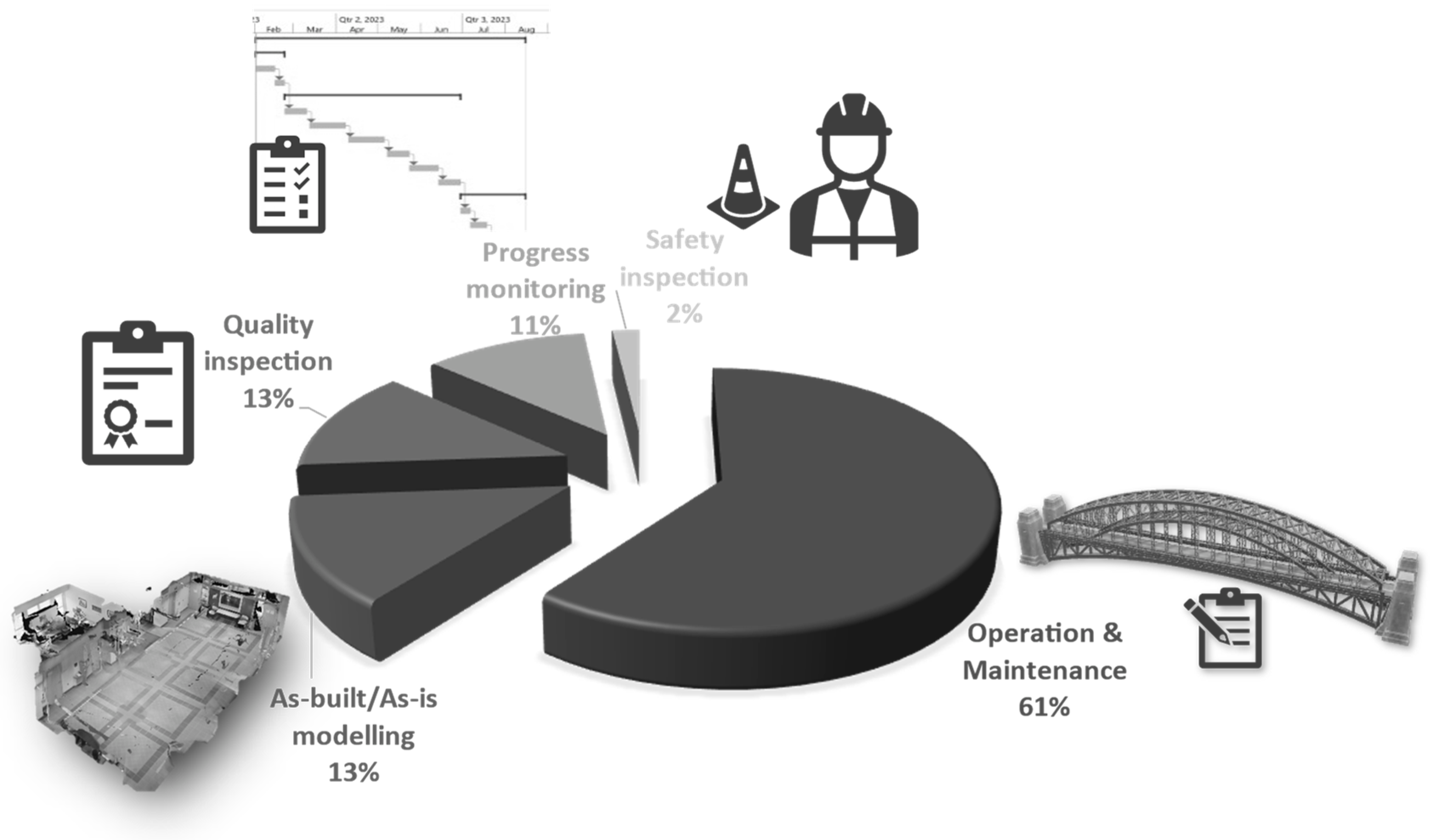

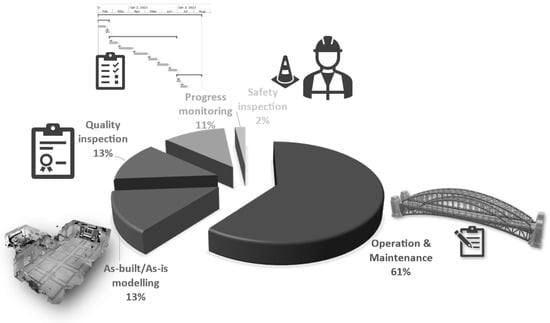

After conducting a qualitative content analysis of the shortlisted papers, many different application domains and research areas were identified from the literature. It was found that, within the context of building and infrastructure inspection and monitoring, robots have been used for five different applications, namely, (a) maintenance inspection, (b) construction quality inspection, (c) as-built/as-is modeling, (d) progress monitoring, and (e) safety inspection. These application domains are explained in detail in the following subsections. The frequency of work in each of these application domains is presented in Figure 4.

Figure 4. Application domains of using robots for inspection and monitoring.

5.1. Maintenance Inspection

Operation and maintenance of a building cost about 50–70% of the total life-cycle cost of a project [138]. Structures deteriorate due to aging and extreme weather [139]. Regular maintenance increases the useful life of the building [138]. It is crucial to identify defects before they get worse and cause building failure [140]. Periodic monitoring of a structure after it has been constructed for maintenance purposes is also known as structural health monitoring (SHM) [141]. SHM is critical to ensure the safety and integrity of the structures [142]. Visual inspection has long been used to uncover structural flaws in order to guide building rehabilitation [143]. Current practices involve regular and manual inspection of the structure for any structural defects, which is slow and costly [138,140]. Robots can regularly monitor the structural elements for any signs of damage and alert humans if further analysis is required [144]. Maintenance inspection also involves measuring the rate of deterioration for predictive analysis [145]. Images captured using robots are more consistent in their perspective, which is more useful in noticing the rate of change as compared to images captured manually [145].

Robotic inspection also provides a safer alternative to manual inspection. High-rise towers with glass facades, which are a common sight in most cities, require regular maintenance [107]. The classical approach to the maintenance of high-rise buildings poses a high risk to human workers due to high winds [107]. UAVs and wall-climbing robots provide a safer alternative for performing maintenance inspections of high-rise buildings [108,146]. Inspection of tall cylindrical structures such as storage tanks and silos poses a similar risk to human inspectors [147], as do roof inspections, because roofs are difficult to access manually [56].

Old structures may also become unsafe for humans to inspect, especially after sustaining damage from a natural disaster [11,148]. Many historical buildings are abandoned because of numerous structural defects that make them hazardous for manual inspection [149]. Robots equipped with special sensors can be used to diagnose structural integrity before a human can perform a detailed inspection [150]. Ghosh Mondal et al. [151] trained a convolutional neural network (CNN) model with images captured from UAVs for assessing the extent of damage in disaster-affected structures. Sometimes, a single type of sensor is not adequate to identify all types of defects; therefore, multi-robot systems with different types of sensors are also deployed to monitor the structure [152]. One such sensor used is an infrared thermal camera to collect thermal images of the building [153]. Thermal images may reveal special defects such as material degradation and air leakage that might not be detectable using the naked eye or simple RGB images [153].

The monitoring of bridges is often undertaken as a manual inspection task. However, it involves a human inspector being exposed to high-speed traffic and fall hazards from going under the bridge deck [154]. It also involves the bridge being closed and the use of lifting equipment to reach the hard-to-reach places under the bridge [152]. Due to these challenges, bridges may suffer from poor maintenance, which has been the reason for many collapses [154]. UAVs can be used for regular structural health monitoring of bridge decks where it is riskier for humans to reach frequently [32]. Schober [155] used a climbing robot for the inspection and testing of high bridge pillars. Lee et al. [156] studied the use of a teleoperated robot for the inspection of hard-to-reach parts of a bridge.

Robots are also used for measuring building performance. Thermal leakages in a building may cause up to 40% energy loss, thereby decreasing building performance [22]. UAVs fitted with infrared sensors have been used for monitoring of thermal efficiency of buildings [157,158,159]. They have also been used to create heat maps of a building envelope to detect thermal anomalies using thermal cameras [22]. This process is called thermography [160]. Pini et al. [161] argued that using a robotic system for inspection improves repeatability in measurement and reduces human involvement.

5.2. Construction Quality Inspection

Construction quality inspection involves checking the building elements while they are being constructed to ensure they are within tolerable limits and meet industry standards [130]. The use of low-quality materials and changes in temperature may cause cracks in the structures [162]. Cracks in critical structural components must be inspected and documented in detail [163]. In a traditional visual inspection, field workers manually inspect the status and condition of the building of interest [130,164]. Manual inspection requires a significant amount of manpower, which in turn increases the chances of human error [129]. Undetected defects in the structure may affect the safety of the structure in the long run [129]. Using robots in collaboration with humans for construction quality inspection reduces labor and improves productivity and accuracy [165].

Prieto et al. [130] developed a multi-robot system called AutoCIS for performing quality inspection of construction work. Quality inspection of different types of elements needs different automation approaches [130]. Some examples of quality defects found regularly in construction projects are cracks, wall cavities, surface finish defects, alignment errors, unevenness, and inclination defects [130]. Defect detection methods fall into two categories: destructive and non-destructive [130]. For robotic inspection, non-destructive methods are preferred; these rely on sensors such as cameras, 3D scanners, RGB-D cameras, thermal cameras, or other sensing probes [130].

Cracks and other surface defects can be detected by non-destructive methods. Two of the most extensively used approaches for crack-detection are edge detector-based and deep learning-based approaches [163]. While edge detection is an image processing technique that does not require large training datasets, deep learning approaches such as CNNs do [166]. Kouzehgar et al. [107] used a CNN for the detection of cracks in the glass façades of high-rise towers, which is a high-risk and labor-intensive process, using a suction-mounted wall-climbing robot. More discussion on knowledge extraction methods using image processing and deep learning is presented in Section 6.2.

A UGV with LiDAR was used to detect defects in infrastructure elements such as bridge decks, which are more dangerous for humans to inspect [89]. Lorenc et al. [167] developed an encapsulated robotic system for the inspection of drilled shafts, which can be used in high-water-table situations that would normally require a costly and time-consuming dewatering process for human inspection. Barry et al. [101] used a cable-suspended robot for the inspection of vertical structures, such as storage tanks, ship hulls, and high-rise towers.

Various methods for inspecting the quality of building interior components have also been developed. For example, Shutin et al. [168] used robots to inspect the quality of blockwork. Many defects arise during bricklaying or blockwork activities, and inspecting and correcting them takes significant time and effort [168]. Quality inspection of indoor drywall partitions has been performed using a UAV by Hamledari et al. [169]. Hussung et al. [170] developed a ground-based robot called BetoScan for the non-destructive strength testing of concrete and reinforced concrete elements. An integrated framework that combines the techniques used by different researchers should be developed, so that a single robotic system can independently check the quality of various elements.

5.3. Progress Monitoring

Construction projects can be delayed by various causes, such as weather, supply chain disruptions, or human error [20]. Projects must be monitored regularly, and corrective actions must be taken to reduce the impact of the delays [20]. Traditionally, the project manager (PM) visits the jobsite to monitor construction operations and compares the as-built state of the construction with the as-planned state [171]. This allows project managers to identify schedule discrepancies in a timely manner [172]. When the PM cannot visit the site, time-series images that show the progress over time are also used for progress monitoring [173]. Typically, this process is manual and strenuous [123,174,175]. Automated progress monitoring aims to make this process more efficient and precise through the use of technologies such as BIM, robotics, and image processing [171].

Lee et al. [20] developed a robotic system equipped with LiDAR, wheels, and rotors and connected to a remote server for remote progress monitoring. The advantage of such a system is that it saves time for the project manager: the robotic system moves faster than a human and so can cover a larger area in less time [176,177]. The PM can monitor multiple projects from one remote location [178]. Bang et al. [179] developed an image stitching algorithm that converts a video captured through a UAV into a sitewide panorama. This allows the PM to view the entire jobsite at a glance and monitor the progress.

An extension to the above approach is to automatically detect progress from visual data by robots. Computer vision has been used to automatically identify building components (e.g., drywall) from 2D images and update the progress information in an Industry Foundation Classes (IFC)-based 4D Building Information Model (BIM) [169]. Such algorithms used in conjunction with autonomously navigating robotic systems [180,181] driven by BIMs automate a significant part of progress monitoring, thereby reducing human effort.

Point clouds from laser scans are also used to detect constructed elements and measure progress [174]. Since laser scanning is a time-consuming task, using autonomous robots for laser scanning can reduce the human resource requirement. A major challenge in automating the progress monitoring process through visual scans is to identify the optimum scan points that provide the best view of all constructed elements [174].

Visualization of construction progress based on information collected by robots needs to be considered too. Human inspectors receive a wealth of contextual information during manual walkthroughs, which helps them understand the construction work. Looking at 2D images is not intuitive for that task [126]. Halder et al. [126] created a methodology for visualizing 360° images collected on-site using a quadruped robot within a 3D virtual environment created with BIM. The 360° images were geolocated inside the building using BIM, which provides enough contextual information to understand the image’s relationship to the building. Immersive virtual reality has also been tested to help a remote inspector visualize the site easily and intuitively [178].

5.4. As-Built/As-Is Modeling

During construction, deviations may arise between the as-built structure and the as-planned model of the project due to various reasons. To avoid disputes, the changes must be communicated to all stakeholders in a timely manner [182]. Three-dimensional reconstruction of the as-built state is useful for measuring those deviations [46,183]. Three-dimensional reconstruction is creating a 3D model of the building using visual data from it. Capturing visual data from the site using manual means is laborious and time-consuming [184]. Robots can be used to automate the data-capturing process for 3D reconstruction or as-built modeling [185,186].

Point clouds are a popular data structure for storing as-built information in the form of physical scans of structures [187]. Point clouds are generated either using laser scanners or image-based photogrammetry [187]. Photogrammetry techniques rely only on RGB cameras to capture 2D images for as-built modeling [188]. Photogrammetry also allows taking volumetric measurements from images [189]. Image processing techniques such as structure-from-motion are used to build a 3D model from 2D images from a moving camera for photogrammetry [190]. However, this requires heavy post-processing of the images. Laser scanners are more accurate, but they require specialized equipment and skilled operators, which is substantially more expensive than conventional RGB image capturing. Photogrammetry using regular RGB cameras is more suitable than bulky laser scanners for UAVs. However, the accuracy of measurements generated from photogrammetry is subject to various factors, such as the choice of lens, sensor resolution, the sensitivity of the sensor, and lighting conditions [191]. LiDAR is also used instead of laser scanners. Being lighter in weight, LiDAR mounted on UAVs has also been used for as-built modeling [192]. Another approach is using Kinect sensors [48]. Kinect measures the distance of objects using structured light projection [48]. Kinect sensors are lighter than laser scanners; therefore, they have also been used with UAVs [48].

Visual obstructions at construction sites, such as scaffolding, stacks of building materials, and people moving around, create the requirement for multiple scans within a single space [193]. This makes manual laser scanning time-consuming and labor-intensive. By automating this procedure, the rate of generating as-built information may be raised from weekly inspections to daily data collection [187].

Both UAVs and UGVs can be used for as-built data collection [34,91,194]. Robots equipped with laser scanners were used for as-built/as-is modeling of construction sites [193]. Drones were used with photogrammetry to create automatic point clouds [187]. Hamledari et al. [52] used a UAV for collecting as-built information and updating the BIM model by updating the IFC data pre-loaded on its system. Patel et al. [30] used Pix4D to create automatic flight plans to fully automate the capturing of images of a building using a UAV and create a point cloud for inspection.

5.5. Safety Inspection

The Bureau of Labor Statistics reported that one-fifth of worksite fatalities in 2017 occurred on construction worksites [195]. Safety managers perform safety inspections of construction sites on a day-to-day basis to control hazards. Safety inspection involves safety managers performing walkthroughs of the site and visually observing construction operations to enable the early detection of problems [196]. In response, project managers implement precautionary actions to prevent accidents [196]. However, this keeps the project managers from engaging in other productive tasks. Additionally, safety is an important issue that can benefit from more frequent monitoring. One of the leading causes of accidents on construction sites is falls [197]. Implementing site-wide fall protection practices has been found inadequate to prevent falls; instead, continuous monitoring is important for preventing fall accidents [197].

UAVs have been used for remote surveillance of construction sites for improving safety and preventing accidents [21,196,197]. UAVs move faster than humans and can access hard-to-reach places [196] and perform continuous monitoring. Gheisari et al. [197] developed computer vision algorithms to detect unguarded openings from video streams transmitted by drones. Kim et al. [21] trained computer vision models for object detection and object tracking for proximity monitoring. They used a drone to survey a construction site and track construction resources (workers, equipment, and material) to prevent struck-by accidents.

6. Research Areas

To study the challenges of using robots for inspection and monitoring of the built environment, the papers were reviewed and categorized based on the research areas studied by the researchers. There were eight main research areas discovered in the literature—(a) autonomous navigation, (b) knowledge extraction, (c) motion control system, (d) safety implications, (e) sensing, (f) human factors, (g) multi-robot collaboration, and (h) data transmission. The following subsections discuss each of these research areas in detail.

6.1. Autonomous Navigation

Atyabi et al. [112] define intelligence as “the ability to discover knowledge and use it” and autonomy as the “ability to describe its own purpose without instructions”. Navigating a robot autonomously on a construction site for inspection has been a prime focus of research for many researchers [89,198,199,200]. Technologies have been developed to make the robot aware of its surroundings. Autonomous navigation of robots can be divided into three stages—localization, planning, and navigation.

6.1.1. Localization

In the localization stage, the robot estimates its current position in the target space. Myung et al. [201] used the local navigation system (LNS) for the localization of a robot inside a building. The LNS uses preinstalled anchor nodes to calculate the location of the robot, similar to how the Global Positioning System (GPS) works, although GPS itself is ineffective in an indoor setting. Traditional LNS requires a direct line of sight to the anchor nodes; in non-line-of-sight settings, its positioning error can reach 11 m, which is too large to be useful. However, Myung et al. [201] developed an algorithm to compensate for non-line-of-sight conditions and achieved an accuracy of 2 m. This is a significant improvement but may not be sufficient for reliable inspection in small spaces.
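The anchor-based positioning idea described above can be illustrated with a minimal sketch. It assumes a 2D floor plan, known anchor coordinates, and range measurements to each anchor; the function names and the linearized least-squares formulation are illustrative assumptions and are not the algorithm of Myung et al. [201].

```python
from math import hypot

def ranges_to(point, anchors):
    """Ideal (noise-free) distances from a point to each anchor (hypothetical helper)."""
    return [hypot(point[0] - ax, point[1] - ay) for ax, ay in anchors]

def trilaterate(anchors, ranges):
    """Estimate (x, y) from three or more anchor positions and measured ranges."""
    (x0, y0), d0 = anchors[0], ranges[0]
    # Subtracting the first range equation from the others cancels the
    # quadratic terms and leaves a linear system A [x, y]^T = b.
    rows, rhs = [], []
    for (xi, yi), di in zip(anchors[1:], ranges[1:]):
        rows.append((2.0 * (xi - x0), 2.0 * (yi - y0)))
        rhs.append(d0**2 - di**2 + xi**2 - x0**2 + yi**2 - y0**2)
    # Solve the 2x2 normal equations (A^T A) p = A^T b directly.
    a11 = sum(r[0] * r[0] for r in rows)
    a12 = sum(r[0] * r[1] for r in rows)
    a22 = sum(r[1] * r[1] for r in rows)
    b1 = sum(r[0] * v for r, v in zip(rows, rhs))
    b2 = sum(r[1] * v for r, v in zip(rows, rhs))
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("anchors are collinear; position is ambiguous")
    return ((a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det)

# Hypothetical room with four corner anchors (coordinates in metres).
anchors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]
estimate = trilaterate(anchors, ranges_to((3.0, 4.0), anchors))
```

A non-line-of-sight path lengthens the measured range to the affected anchor, so adding a positive bias to one entry of `ranges` in this sketch shifts the estimate away from the true position, which is the kind of error a compensation algorithm has to remove.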

In GPS-denied environments, such as inside large buildings or areas surrounded by high-rise structures, additional technologies such as Real-Time Kinematic (RTK), Differential GPS (DGPS), and Ultra-Wide Band (UWB) technologies are used to augment the capabilities of GPS and improve the positioning accuracy to within the centimeter range [200]. However, these technologies require additional infrastructure to set up and operate [53].

The Simultaneous Localization and Mapping (SLAM) algorithm is used to create a digital map of the surroundings using a camera, LiDAR, or other sensors. Phillips and Narasimhan [89] combined measurements from an IMU sensor, wheel odometry, and GPS (whenever available) and used the extended Kalman filter (EKF) for estimating the position of a Clearpath Jackal robot, a medium-sized UGV. This technique, called dead reckoning, enables localization without any external infrastructure but is subject to drift over time and needs to be re-calibrated repeatedly [53]. Parameters required for localization of the robot fall into four categories: interior orientation parameters (e.g., camera focal length, the principal point, and lens distortion), exterior orientation parameters (e.g., orientation from IMU), ground control points (e.g., any known natural or artificial targets), and tie/pass points (e.g., unique feature points in the scene that can be tracked in multiple images) [202]. The scale-invariant feature transform (SIFT) technique was used to extract features from the surrounding environment and compare them with a CAD model to estimate the position of the robot in the frame of reference of the building [203,204,205].
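The interplay between drifting odometry and occasional absolute fixes can be sketched with a one-dimensional linear Kalman filter. This is a deliberately simplified stand-in for the EKF used in [89]: the state is a single position coordinate, and the noise values `q` and `r` are assumed for illustration only.

```python
def kf_predict(x, p, odom_delta, q):
    """Dead-reckoning step: advance by the odometry increment; variance grows by q."""
    return x + odom_delta, p + q

def kf_update(x, p, z, r):
    """Correct the estimate with an absolute position fix z (e.g., GPS) of variance r."""
    k = p / (p + r)  # Kalman gain: how much to trust the fix
    return x + k * (z - x), (1.0 - k) * p

def run_filter(odom_deltas, gps_fixes, x0=0.0, p0=1.0, q=0.05, r=0.5):
    """Fuse odometry with GPS fixes; a fix of None means GPS was unavailable."""
    x, p = x0, p0
    history = []
    for delta, fix in zip(odom_deltas, gps_fixes):
        x, p = kf_predict(x, p, delta, q)
        if fix is not None:
            x, p = kf_update(x, p, fix, r)
        history.append((x, p))
    return history

# Three steps without GPS (variance grows), then one fix (variance shrinks).
history = run_filter([1.0, 1.0, 1.0, 1.0], [None, None, None, 4.0])
```

The growing variance between fixes mirrors the drift noted above, and each available fix plays the role of a re-calibration.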

In underwater inspection, such as inside water tanks, visual sensors become ineffective. An underwater robot needs ultrasonic acoustic and inertial navigation sensors [8]. GNSS and underwater acoustic position systems have been used together to provide reliable localization underwater [206]. The other approach is to use a USV to carry a submersible vehicle. The USV being above water can receive GNSS signal, and the submersible vehicle’s absolute position can be calculated from its position relative to the USV [137].

6.1.2. Path Planning

Path planning involves finding the optimum path or a series of control points (called waypoints) from the robot’s current location to its target location while avoiding collision with the different elements of the structure [207]. Besides the positions of the control points, the path information may also include the velocity and yaw of the robot [135]. BIM has been used as a planning tool for robot path planning [207,208]. Most research on path planning focuses on single-building inspection. However, multi-building path planning has also been performed with UAVs by Lin et al. [209]. Another technique used for path planning for UAVs is discrete particle swarm optimization [31]. Dynamically changing site conditions in construction projects also require the robot to re-adjust its path, which means any pre-programmed path may not work in the long run or must be revised each time the robot is expected to complete its mission. Path planning should therefore consider the current state of the structure. For this reason, 4D BIM is used for path planning because it captures the state of the structure over time during construction [81]. However, the success of the path planning will depend on how accurately the 4D BIM represents the actual temporal state of the structure. BIM also provides information about the openings (e.g., doors) that need to be considered in indoor path planning [126,210]. When BIM is not available, a 3D point cloud can be used in its place for path planning [211].
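As an illustration of waypoint generation with collision avoidance, the sketch below runs an A* search over an occupancy grid. A* is a standard technique chosen here for illustration and is not attributed to any of the cited studies; the grid is assumed to have been rasterized beforehand from a floor plan, BIM, or point cloud, with 1 marking an occupied cell.

```python
import heapq

def astar(grid, start, goal):
    """Return a list of (row, col) waypoints from start to goal, or None.

    grid[r][c] == 1 marks an obstacle; moves are 4-connected with unit cost.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):  # Manhattan distance: admissible for 4-connected unit moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]
    came_from = {start: None}
    cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:  # walk parents back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > cost[cell]:
            continue  # stale heap entry superseded by a cheaper route
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_g = g + 1
                if new_g < cost.get((nr, nc), float("inf")):
                    cost[(nr, nc)] = new_g
                    came_from[(nr, nc)] = cell
                    heapq.heappush(open_heap, (new_g + h((nr, nc)), new_g, (nr, nc)))
    return None

# Hypothetical floor: a wall with a single opening (e.g., a door) on the right.
floor = [
    [0, 0, 0, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]
waypoints = astar(floor, (0, 0), (2, 0))
```

Because the grid is the only input, re-planning after site conditions change amounts to regenerating the grid from the latest model state and running the search again.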

Krishna Lakshmanan et al. [121] used reinforcement learning (RL) to train a robot to find the optimum path. RL is one of the three main types of machine learning, alongside supervised and unsupervised learning. It searches for the correct solution using a system of positive and negative rewards. Asadi et al. [180] used an embedded Nvidia GPU on the robot body for mapping and navigation using deep-learning-based semantic segmentation of images captured by the robot’s camera. This eliminates the need for continuous connectivity and improves the autonomy of the robot.
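The reward-driven learning described above can be sketched with tabular Q-learning on a hypothetical one-dimensional corridor, where the robot starts at the left end and receives a positive reward on reaching the right end and a small negative reward for every other step. The environment, reward values, and hyperparameters are assumptions for illustration and do not reproduce the setup in [121].

```python
import random

def train_q(n_states=6, episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values for a corridor; actions are 0 = left, 1 = right."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(n_states)]
    goal = n_states - 1
    for _ in range(episodes):
        s = 0
        for _ in range(100):  # cap the episode length
            if rng.random() < epsilon:
                a = rng.randrange(2)  # explore
            else:
                a = 0 if q[s][0] > q[s][1] else 1  # exploit (ties go right)
            s2 = max(0, s - 1) if a == 0 else s + 1
            reward = 1.0 if s2 == goal else -0.01
            # Q-learning target: immediate reward plus discounted best next value.
            target = reward if s2 == goal else reward + gamma * max(q[s2])
            q[s][a] += alpha * (target - q[s][a])
            s = s2
            if s == goal:
                break
    return q

def greedy_policy(q):
    """Best action for every non-goal state."""
    return [0 if left > right else 1 for left, right in q[:-1]]

policy = greedy_policy(train_q())
```

After training, the greedy policy selects the action with the higher learned value in each state, which in this corridor corresponds to heading toward the rewarded end.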

6.1.3. Navigation

Construction sites are highly cluttered environments. During the navigation, the robot must be aware of its surroundings and make minor adjustments to its path [212]. Warszawski et al. [213] compared laser sensors, ultrasonic sensors, and infrared sensors for mapping a floor for robot navigation. Zhang et al. [214] and Asadi et al. [212] used deep-learning for vision-based obstacle avoidance of the robot. Asadi et al. [90] developed an autonomous arm for a UGV for detecting and removing obstacles from the robot’s path. They used a CNN-based segmentation model for object detection. This is useful for removing small obstacles up to the payload capacity of the attached arm.

Wang and Luo [165] developed a wall-following algorithm using LiDAR sensors and a ground-based wheeled robot to run along the walls and find defects. This is a simple algorithm that does not need any pre-planning of the path, but it cannot move away from the walls. In large halls or auditoriums, the wall-following feature can be a limitation. Al-Kaff et al. [215] used visual servoing with a UAV to maintain a safe distance from the wall. Visual servoing uses a feedback loop between the camera image and robot control to dynamically control the robot as conditions change [215].
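The feedback principle behind wall following and distance keeping can be sketched with a proportional controller that steers according to the error between the measured and desired wall distance. The gain, target distance, and sign convention are assumed values for illustration; this is not the controller of Wang and Luo [165] or Al-Kaff et al. [215].

```python
def wall_follow_command(side_distance, target_distance=0.5, gain=1.5, max_turn=1.0):
    """Return a steering rate (rad/s); positive values turn away from the wall.

    side_distance is the measured range to the wall (m), e.g., from a LiDAR beam.
    """
    error = target_distance - side_distance  # positive when the robot is too close
    turn = gain * error
    return max(-max_turn, min(max_turn, turn))  # respect actuator limits

# Too close to the wall, on target, and far from the wall.
commands = [wall_follow_command(d) for d in (0.3, 0.5, 5.0)]
```

The clamped output for a distant wall shows why such a controller keeps pulling the robot back toward the wall, consistent with the limitation in large halls noted above.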

6.2. Knowledge Extraction

Computer vision is used extensively to create a rich set of information from site images and videos for inspection purposes [216]. Computer vision and deep learning have been used to detect defects in a building structure, such as exposed aggregates on a concrete surface [217] or cracks on the walls of storage tanks [218]. They are also used for post-disaster reconnaissance of damaged buildings [148,151]. CNNs have performed remarkably well in detecting defects from images [219]. Li et al. [217] trained a U-net (a CNN-based deep-learning model) to identify exposed aggregate on a concrete surface. The U-net is a popular deep-learning model used for pixel-wise image segmentation. With a training dataset of 408 images and a validation dataset of 52 images, they achieved an accuracy of 91.43%. The results are promising and could be further improved with a larger dataset. Alipour and Harris [220] used a publicly available dataset of forty thousand images to train a deep-learning model to identify cracks on concrete and asphalt surfaces. They achieved an F1-score of up to 99.1%; the F1-score, the harmonic mean of precision and recall, is a measure of how well a classifier performs. The major challenge with crack detection using deep learning is the wide range of complex backgrounds due to different types of facade materials [221].
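For reference, the F1-score is the harmonic mean of precision and recall and can be computed directly from detection counts. The sketch below uses hypothetical counts; they are not taken from any of the cited studies.

```python
def f1_score(tp, fp, fn):
    """F1 from true positives, false positives, and false negatives."""
    precision = tp / (tp + fp) if tp + fp else 0.0  # share of detections that are real
    recall = tp / (tp + fn) if tp + fn else 0.0     # share of real defects detected
    if precision + recall == 0.0:
        return 0.0
    return 2.0 * precision * recall / (precision + recall)

# Hypothetical crack classifier: 90 correct detections, 10 false alarms, 10 misses.
score = f1_score(tp=90, fp=10, fn=10)
```

Unlike plain accuracy, this metric ignores true negatives, which matters for defect detection because most image regions contain no defect.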

Computer vision has also been used for the automatic creation of as-built models from site images. Hamledari et al. [169] used computer vision to identify components of indoor partitions, such as electrical outlets and doors using pictures captured from a UAV. Hamledari et al. [52] further developed the technique to compare as-built elements with the as-designed elements in an IFC database. If a discrepancy is found, the element type, property, or geometry is updated to build an as-built model automatically.

Researchers have also developed non-ML-based image processing approaches that do not require large training datasets. For example, mathematical transformations for stitching multiple images across a single site to create a panoramic view of the project have been developed for inspection [179,222,223]. Photogrammetry, as explained in Section 5.4, is an image-processing technique [224]. Pix4D is a commercial platform used for photogrammetry that provides the option of taking building measurements from a 3D model created from 2D images. Pix4D has been used for knowledge extraction (taking measurements) using robots [30]. Edge detection techniques, such as the Laplacian of Gaussian algorithm, find discontinuities in the image and are used to detect cracks on walls without requiring training data [225,226].
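To show how a Laplacian of Gaussian (LoG) filter responds to a crack-like discontinuity, the sketch below builds a small LoG kernel and applies it to a synthetic grayscale image containing one dark vertical line. It is a pure-Python illustration under assumed parameters (`sigma`, `radius`, and the synthetic image), not the pipeline used in [225,226].

```python
from math import exp, pi

def log_kernel(sigma=1.0, radius=3):
    """Sampled Laplacian of Gaussian, shifted to zero mean so flat regions give ~0."""
    kernel = []
    for y in range(-radius, radius + 1):
        row = []
        for x in range(-radius, radius + 1):
            r2 = x * x + y * y
            row.append(-(1.0 / (pi * sigma**4))
                       * (1.0 - r2 / (2.0 * sigma**2))
                       * exp(-r2 / (2.0 * sigma**2)))
        kernel.append(row)
    mean = sum(map(sum, kernel)) / (2 * radius + 1) ** 2
    return [[v - mean for v in row] for row in kernel]

def convolve(image, kernel):
    """'Valid' 2D convolution (the kernel is symmetric, so no flip is needed)."""
    kh, kw = len(kernel), len(kernel[0])
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b] for a in range(kh) for b in range(kw))
            for j in range(len(image[0]) - kw + 1)
        ]
        for i in range(len(image) - kh + 1)
    ]

# Synthetic wall patch: bright surface (200) with a dark one-pixel crack in column 7.
image = [[50 if col == 7 else 200 for col in range(15)] for _ in range(9)]
response = convolve(image, log_kernel())
# With radius 3, output column j is centred on image column j + 3.
strongest = max(range(len(response[1])), key=lambda j: abs(response[1][j]))
```

Thresholding the absolute response yields candidate crack pixels; in practice the threshold and `sigma` have to be tuned to the crack width and surface texture.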

Another important consideration in using robots in the field is that due to their efficiency and speed in capturing data, the high volume of data may soon create data overload which slows down learning from the data [227]. Thus, it is important to extract only useful data from the complete pool of data collected by the robot. In this direction, Ham and Kamari [227] developed a method to rank and select images captured by a UAV based on the number of construction-related objects visible in the image using semantic segmentation.

6.3. Motion Control System

Inspections of building facades, ducts, pipes, and shafts require a different type of locomotion and navigation. Barry et al. [101] studied the use of cable-suspended robots for the inspection of high-rise towers or storage tanks from the outside. Heffron [228] developed a remotely-operated submersible vehicle for underwater inspection. Brunete et al. [9] developed a worm-like microbot for the inspection of small-diameter pipes and used infrared sensors for navigation and defect detection. ALICIA3 [229] is a wall-climbing robot that uses pressure-controlled suction cups to stay affixed to the walls and is used for the inspection of building facades. Researchers have installed multi-joint arms and grippers, telescopic supports, and rotating heads [29,35] to enable the robots to reach hard-to-reach places. These works improved the overall reach of the robots.

Apart from the basic wheel or rotor-based locomotion, hybrid locomotive systems further improve the maneuverability of the robot in cluttered environments, such as construction sites. A drone equipped with wheels and a propeller can inspect a building’s exterior, move in small spaces, and also climb stairs [20]. A legged robot with magnetic pads under its feet can walk on the ground and climb on steel columns and pipes for closer inspection [35]. A climbing-flying robot can climb on walls and poles for better quality inspection and can fly to pass obstacles and avoid falling [36,37].

Aerial robots move with many degrees of freedom and are highly susceptible to environmental disturbances. Images captured from an aerial robot or UAV are often characterized as “noisy” [230]. To perform an effective inspection, the robot (or drone) needs to keep adjusting to the external environment. González-deSantos et al. [231] equipped a UAV with LIDAR to measure distance and orientation for maintaining a stable altitude and position with respect to the inspection target, which was important for the ultrasonic gauge sensor used to measure the thickness of metallic sheets. Kuo et al. [232] developed a fuzzy logic-based control system to control the camera and the thrust of the UAV to obtain more stable images.
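As a simple illustration of distance keeping from a range measurement, the sketch below uses a clamped proportional controller. This is a generic stand-in, not the controller of [231] or the fuzzy logic system of [232]; the gain, speed limit, and function name are assumed values chosen only for the example.

```python
def standoff_velocity(measured_m, target_m, gain=0.8, max_speed=0.5):
    """Return a velocity command (m/s) along the axis facing the surface.

    A positive command moves the UAV toward the surface and a negative one
    moves it away. The command is proportional to the distance error and is
    clamped to +/- max_speed.
    """
    error = measured_m - target_m  # positive when the UAV is too far away
    command = gain * error
    return max(-max_speed, min(max_speed, command))


if __name__ == "__main__":
    # Simulated range readings (metres) with a 1.0 m target stand-off.
    for reading in (3.0, 1.5, 1.0, 0.2):
        print(reading, standoff_velocity(reading, target_m=1.0))
```

A real system would also need to handle sensor noise, orientation, and wind disturbances, which this sketch ignores.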

In wall-climbing robots, the pressure in the suction cups is controlled using pressure sensors installed inside the robotic systems. This adjusts the suction strength for different surface conditions [233]. Barry et al. [101] developed a rope-climbing robot for climbing and inspecting walls of storage tanks and high-rise buildings. They designed the control system to counteract the oscillations of the rope caused by the climbing motion of the robot.

6.4. Sensing

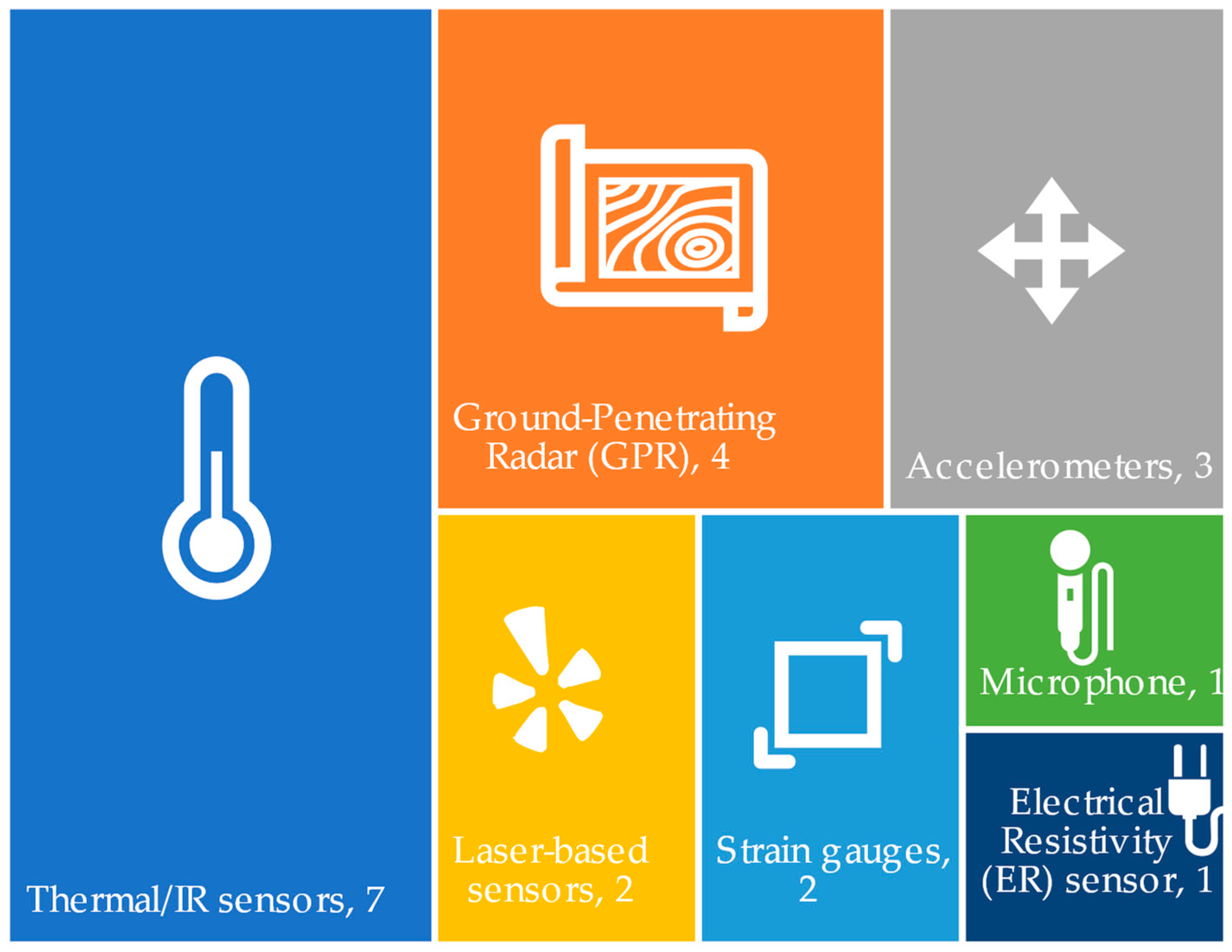

Robots can be equipped with various types of sensors to perform different types of inspections. They can be equipped with high-precision servo-accelerometers to measure story drift in earthquake-affected structures without the need for a human inspector [234]. Many other sensors have been used with robots for non-destructive testing and structural health monitoring, such as eddy current, RADAR, infrared (IR), and ultrasound sensors [95,170]. Liu et al. [100] proposed using gas detectors with a pipe-climbing robot for detecting gas leakage in high-rise towers. Elbanhawi and Simic [235] developed a robotic arm to automatically collect water samples from a solar pond. The arm was equipped with multiple sensors for in situ measurement of density, salinity, pH, and turbidity. Huston et al. [236] embedded sensors, such as strain gauges, in the building structure. They used a UGV to power the sensors and collected data wirelessly through electromagnetic induction. This avoided the need for embedding power cables for powering the sensors. Davidson and Chase [92] installed RADAR on a robotic cart to create a digital reconstruction of the interior of a bridge deck through diffraction tomography. Kriengkomol et al. [129] used audio sensors to perform a hammering test on steel structures using a hexapod robot. The hammering test detects and analyses the vibration of a structural element after striking it with a hammer. Anomalies in the resulting vibrations indicate loose bolts or other structural defects [129]. An emissometer connected to robotic arms has been used for autonomous mapping of the thermal emissivity of the building envelope for energy studies [161]. Robots equipped with IR sensors are used to monitor the building envelope for any thermal anomalies [158,237,238,239]. When using IR, the angle of tilt of the sensors affects the accuracy of measurement [240]. 
Therefore, UAVs can be advantageous in maintaining the right angle while collecting data for high-rise buildings. Experts may choose to combine multiple sensors into a single robotic platform for faster and more detailed inspection. These sensors can be grouped into the following categories: thermal/IR sensors, ground-penetrating radars (GPR), accelerometers, laser-based sensors (LIDAR or laser scanners), strain gauges, microphones, and electrical resistivity sensors. The frequency of works found in the review that report the use of these sensors is presented in Figure 5. The numbers in the figure represent the number of reviewed papers reporting the use of each type of sensor.

Figure 5. Types of sensing technologies used with robots for inspection and monitoring.

When multiple sensors are used together on a single robotic platform, fusing their data to present more meaningful information is important and was the focus of research in [86,94]. Massaro et al. [95] fed data from a ground-penetrating RADAR, thermal IR camera, LIDAR, GPS, and accelerometer into a long short-term memory neural network for detecting road defects. Combining and processing the data from different types of sensors, such as positioning sensors (GPS, IMU), visual sensors (camera), and non-visual sensors (RADAR, thermal camera), can provide more in-depth inspection in a shorter time [86,94]. However, more sensors increase the weight the robot needs to carry and its power requirements [241]. The more sensors a robot carries, the greater the payload capacity it needs and the larger it has to be, which in turn increases the power requirements even further.

6.5. Multi-Robot Collaboration

Researchers have used multiple robots, such as UAV-UAV or UAV-UGV pairs, for better navigation. In the UAV-UGV pair, the UGV performs the actual inspection while the UAV provides navigational support by acting as its eye from a higher vantage point [33,34,131]. Construction sites are highly cluttered, and it becomes difficult for a ground-based robot to navigate the site due to limited visibility [33]. To address this challenge, a collaborative system of a UAV and a UGV can be used for localization and path planning [33,34]. The UAV provides a bird's-eye view of the construction site and creates a point cloud with 2D photogrammetry, which is then used by the UGV for navigation [34,131]. However, in indoor spaces, the presence of a large number of obstacles limits the effectiveness of this approach without a suitable anti-collision system in the UAV [242].

Another approach is to use the UAV for the main inspection and the UGV as a docking station for recharging the battery of the UAV [243]. This is useful in long-duration missions since the UAV needs to be lightweight and can be equipped with a smaller battery with a limited runtime. The UGV with a larger battery accompanies the UAV and provides periodic power boosts [243].

The UAV-UAV approach is used for navigation and localization in Global Navigation Satellite System (GNSS)-challenged environments [244]. This is achieved by coordinating the movements of two UAVs in such a way that one of them is in a location where GNSS is available, while the other calculates its absolute position based on its relative position from the first UAV [244].
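The underlying geometry can be sketched as follows. This is an illustration only, not the method of [244]: it assumes positions are expressed in a shared local east-north-up (ENU) frame and that the relative measurement is available as range, azimuth, and elevation from the GNSS-equipped UAV. The function names and angle conventions are assumptions.

```python
import math


def offset_from_measurement(range_m, azimuth_deg, elevation_deg):
    """Convert a range/azimuth/elevation measurement into an ENU offset.

    Azimuth is measured clockwise from north and elevation upward from the
    horizontal plane. Both conventions are assumptions of this sketch.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)
    east = horizontal * math.sin(az)
    north = horizontal * math.cos(az)
    up = range_m * math.sin(el)
    return (east, north, up)


def absolute_position(anchor_enu, relative_offset_enu):
    """Add the relative offset to the anchor UAV's GNSS-derived position."""
    return tuple(a + d for a, d in zip(anchor_enu, relative_offset_enu))


if __name__ == "__main__":
    anchor = (100.0, 200.0, 30.0)  # UAV with GNSS fix, metres in ENU
    offset = offset_from_measurement(10.0, azimuth_deg=90.0, elevation_deg=0.0)
    print(absolute_position(anchor, offset))
```

In practice the relative measurement carries its own error, so the derived position is only as accurate as the anchor fix plus the relative sensing.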

Mantha et al. [208] developed an algorithm for the efficient allocation of tasks and path-finding for multiple robots, treating path obstructions and the single-charge run capacity of the robots as constraints. Genetic and k-means algorithms, used in conjunction with the Travelling Salesman Problem (TSP) formulation, have also been applied to find optimized solutions to the multi-robot path planning and task allocation problem [245,246]. Multi-robot collaboration is important for the efficient use of these tools while avoiding clashes.
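A rough sketch of the cluster-then-route idea is given below. It is not the algorithm of [208], [245], or [246]: k-means splits the inspection targets among the robots, and a nearest-neighbour heuristic stands in for the genetic TSP solver. The deterministic centroid initialization, the pairing of robot i with cluster i, and all names are assumptions of the sketch. It requires Python 3.8 or later for `math.dist`.

```python
import math


def kmeans(points, k, iterations=20):
    """Plain k-means on 2D points; centroids start at the first k points."""
    centroids = [points[i] for i in range(k)]
    clusters = [[] for _ in range(k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        updated = []
        for i, cluster in enumerate(clusters):
            if cluster:
                cx = sum(x for x, _ in cluster) / len(cluster)
                cy = sum(y for _, y in cluster) / len(cluster)
                updated.append((cx, cy))
            else:
                updated.append(centroids[i])  # keep an empty cluster's centroid
        if updated == centroids:
            break  # assignments have stabilized
        centroids = updated
    return clusters


def nearest_neighbour_tour(start, targets):
    """Greedy TSP heuristic: always visit the closest unvisited target."""
    tour, current, remaining = [], start, list(targets)
    while remaining:
        nxt = min(remaining, key=lambda p: math.dist(current, p))
        remaining.remove(nxt)
        tour.append(nxt)
        current = nxt
    return tour


def plan_routes(robot_starts, targets):
    """Assign one cluster of targets to each robot and order the visits."""
    clusters = kmeans(targets, len(robot_starts))
    return [nearest_neighbour_tour(s, c) for s, c in zip(robot_starts, clusters)]


if __name__ == "__main__":
    targets = [(0, 0), (10, 10), (0, 1), (10, 11), (1, 0), (11, 10)]
    for route in plan_routes([(0, 0), (10, 10)], targets):
        print(route)
```

The nearest-neighbour tour is fast but not optimal, and the sketch ignores obstructions and battery limits, which the cited works treat as constraints.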

6.6. Safety Implications

Requirements for the safe use of robots on construction sites are not well explored, as there are very few notable works on the safety and usability assessment of robots for inspection. More research is also needed on regulating the use of robots on construction sites. To the best of the authors’ knowledge, there are no statutory regulations on using ground robots on construction sites that provide guidelines for ensuring the safety of people from robots, especially autonomous robots. The Federal Aviation Administration (FAA) provides regulations on the use of UAVs [247], which are generally applicable to their use anywhere, including construction. The FAA previously disallowed the use of UAVs out of sight of the pilot and over a populated area [247]. This rule necessitated the involvement of a human with the UAV at all times during its operation, which defeated the purpose of fully autonomous inspection. However, recent amendments to the FAA rule, effective on 21 April 2021, allow the use of UAVs in populated areas and at night-time [248]. The FAA also provides a waiver from part 107.31, which prohibits the use of UAVs out of sight of the pilot, for small aircraft [248]. Building on the FAA rules, the Occupational Safety and Health Administration (OSHA) [249], through its memorandum to regional administrators, outlined some guidelines and recommended some best practices for using drones specifically for inspection in a workplace setting. These recommendations include procedures for “pre-deployment, pre-flight operations, postflight field procedures, postflight office procedures, flight reporting, program monitoring and evaluation, training, recordkeeping, and accident reporting” [250]. OSHA also prohibits the use of drones over people, out of sight of the operator, and after sunset.

Various models and simulations have been developed and tested for qualitative and quantitative analysis of risks associated with the use of aerial robots in construction sites [247,250,251]. Flight simulators are also used to train UAV pilots for the safe usage of UAVs in construction sites and to reduce risk from the use of UAVs [252].

6.7. Data Transmission

In this category, researchers have developed data compression and transmission techniques for faster and more robust data transfer from wireless robots over the Internet or a local area network [253,254,255]. Li and Zhang [256] combined the Internet of Things (IoT) with robotics for wirelessly collecting data from multiple sensors inside the building for quality inspection. Wireless communication with robots usually occurs via radio frequency (RF) signals using protocols such as WiFi and Bluetooth [256,257]. Other RF-based communication protocols, such as LoRa and ZigBee, have also been used [256,257]. However, in urban settings, where there are many sources of RF signals, signal interference and/or loss of signal can severely affect the safe performance of the robot. Therefore, Mahama et al. [257] studied the effect of RF noise in urban settings on the operation of UAVs and recommended using redundant channels and faster frequency switching for seamless operations.

A robotic inspection may generate a vast amount of data in the form of images or point clouds [255]. Depending on the fidelity of the data required, either lossless or lossy data compression techniques may be used. Lossless compression allows exact reconstruction of the original data, whereas lossy compression achieves a higher compression ratio but loses some detail during reconstruction [255].
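The difference can be demonstrated with a small sketch using Python's standard `zlib` module. The lossy path here is a simple uniform quantization before compression, chosen for illustration and not the technique of [255]; the step size and function names are assumptions.

```python
import zlib


def lossless_roundtrip(samples):
    """Compress and decompress bytes; the output equals the input exactly."""
    compressed = zlib.compress(samples, 9)
    return len(compressed), zlib.decompress(compressed)


def lossy_roundtrip(samples, step=16):
    """Quantize each byte to a multiple of `step`, then compress.

    Quantization discards fine detail (error below `step` per sample), which
    typically makes the data more compressible but prevents exact recovery.
    """
    quantized = bytes((value // step) * step for value in samples)
    compressed = zlib.compress(quantized, 9)
    return len(compressed), zlib.decompress(compressed)


if __name__ == "__main__":
    # Synthetic 8-bit intensity values standing in for image data.
    samples = bytes((i * 7) % 256 for i in range(4096))
    size_a, restored_a = lossless_roundtrip(samples)
    size_b, restored_b = lossy_roundtrip(samples)
    print("lossless bytes:", size_a, "exact:", restored_a == samples)
    print("lossy bytes:", size_b, "exact:", restored_b == samples)
```

Whether the loss of detail is acceptable depends on the inspection task, for example whether fine cracks must remain visible in the reconstructed image.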

6.8. Human Factors

Humans and robots collect and process information differently. While humans can understand abstract and unstructured data easily, robots need structured instructions. On the other hand, robots can process and execute instructions more accurately than humans without tiring. Human–robot interactions deal with understanding the intent of the human operator and breaking it down into simple instructions that can be executed by the robot. At the same time, it also deals with presenting the raw data collected by robots in a form that makes sense to humans. Human–robot collaboration (HRC) can utilize the strengths of both agents [258].

Augmented, virtual, and mixed reality are excellent tools for presenting site information in a human-friendly format. Van Dam et al. [259] found that annotating the visual data captured by a UAV helps human operators perform inspection tasks by reducing their workload and decreasing the likelihood of missing an error in the work. Liu et al. [164] augmented the live visual feed from a UAV with the BIM. Jacob-Loyola et al. [175], on the other hand, augmented the as-planned BIM model with a reconstructed 3D model of the building created from UAV images in an augmented reality environment. Both of these methods converted information captured by robots into a more intuitive format for human evaluation. However, using augmented reality with BIM is hampered by the problem of alignment, as consistently keeping the BIM aligned with reality from a moving UAV is difficult [164].

Even though many studies are working on making robots more and more autonomous, full or partial human intervention is still required for the safe and efficient use of robots [260]. Robotics, being a fairly new technology, requires proper training for the operators [115,260]. Virtual reality is found to be an excellent test-bed for prototyping new human–robot interactions [261,262]. It provides a safe environment to train human operators without the risk of harm to the site, the robot, or any human [260].

7. Future Research Directions

The comprehensive review of the literature revealed a few research gaps in each of the identified research areas. These research gaps are presented as follows.

7.1. Autonomous Navigation

Currently, robots that can successfully perform regular autonomous navigation through the unstructured and dynamically changing environments of construction sites do not exist. Therefore, future research should develop control and autonomy algorithms to this end. Additionally, robots are efficient data collection agents because of their speed and consistency, ensuring that the data available to project stakeholders are up-to-date and consistent [145]. Studies on autonomous navigation, including [30], have considered predefined scan locations from where the robot would perform scans for as-built modeling. A good-quality laser scan may take several minutes to complete at each point [263]. Therefore, it is important to minimize the number of scan locations to enable the robot to cover the whole structure on a single charge. Human reasoning is still required for selecting the optimum locations from where the robot would collect data through autonomous navigation. The next step towards improving autonomy should be enabling the robot to select scan locations itself. The selection of target scan locations requires experience and domain knowledge in addition to knowledge of the structural geometry. Future studies can investigate the process followed by humans and the visual cues they use in selecting the right locations for capturing site images or performing laser scans. This knowledge can help robots independently identify navigation goals that meet human requirements.

7.2. Knowledge Extraction