Feature-Oriented CBCT Self-Calibration Parameter Estimator for Arbitrary Trajectories: FORCAST-EST

Abstract

1. Introduction

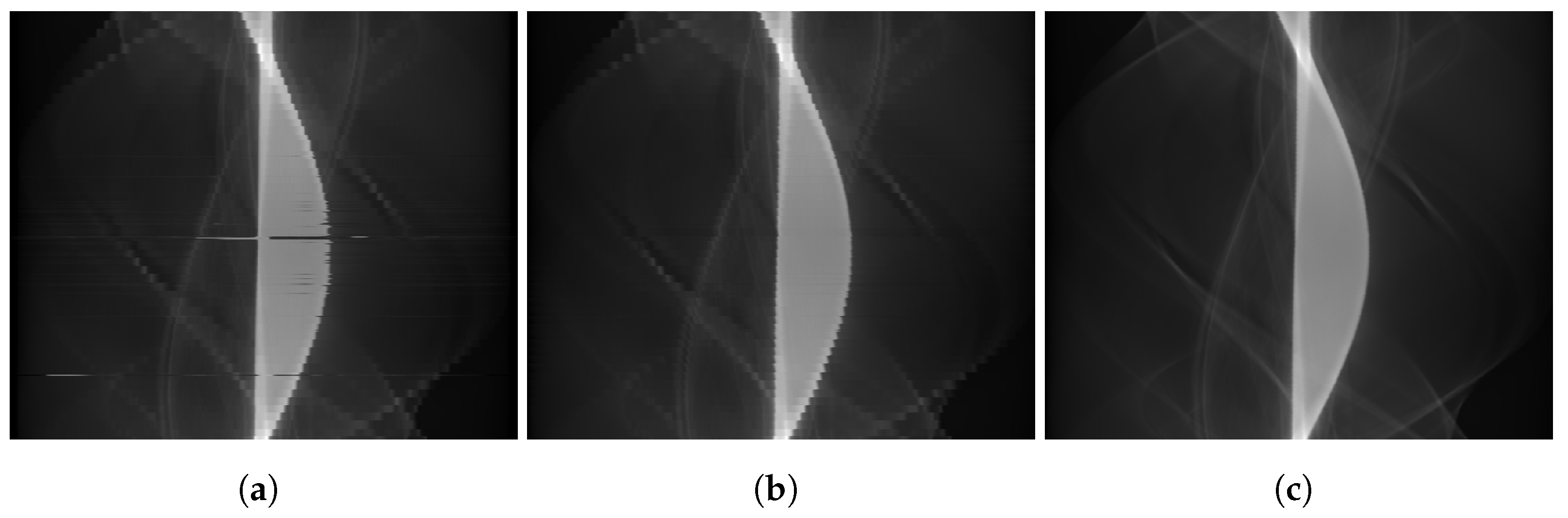

2. Materials and Methods

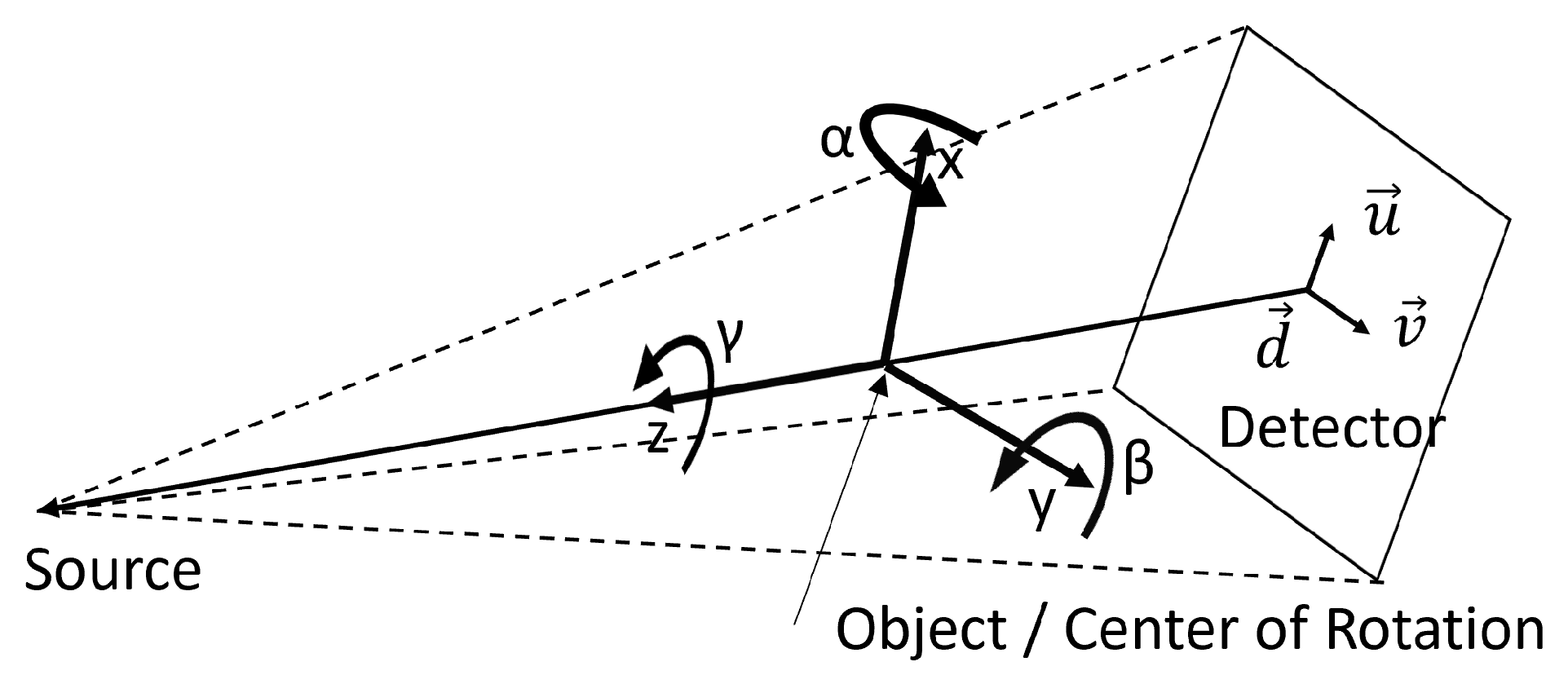

2.1. Projection and Optimization Parameters

2.2. Feature Points Matching

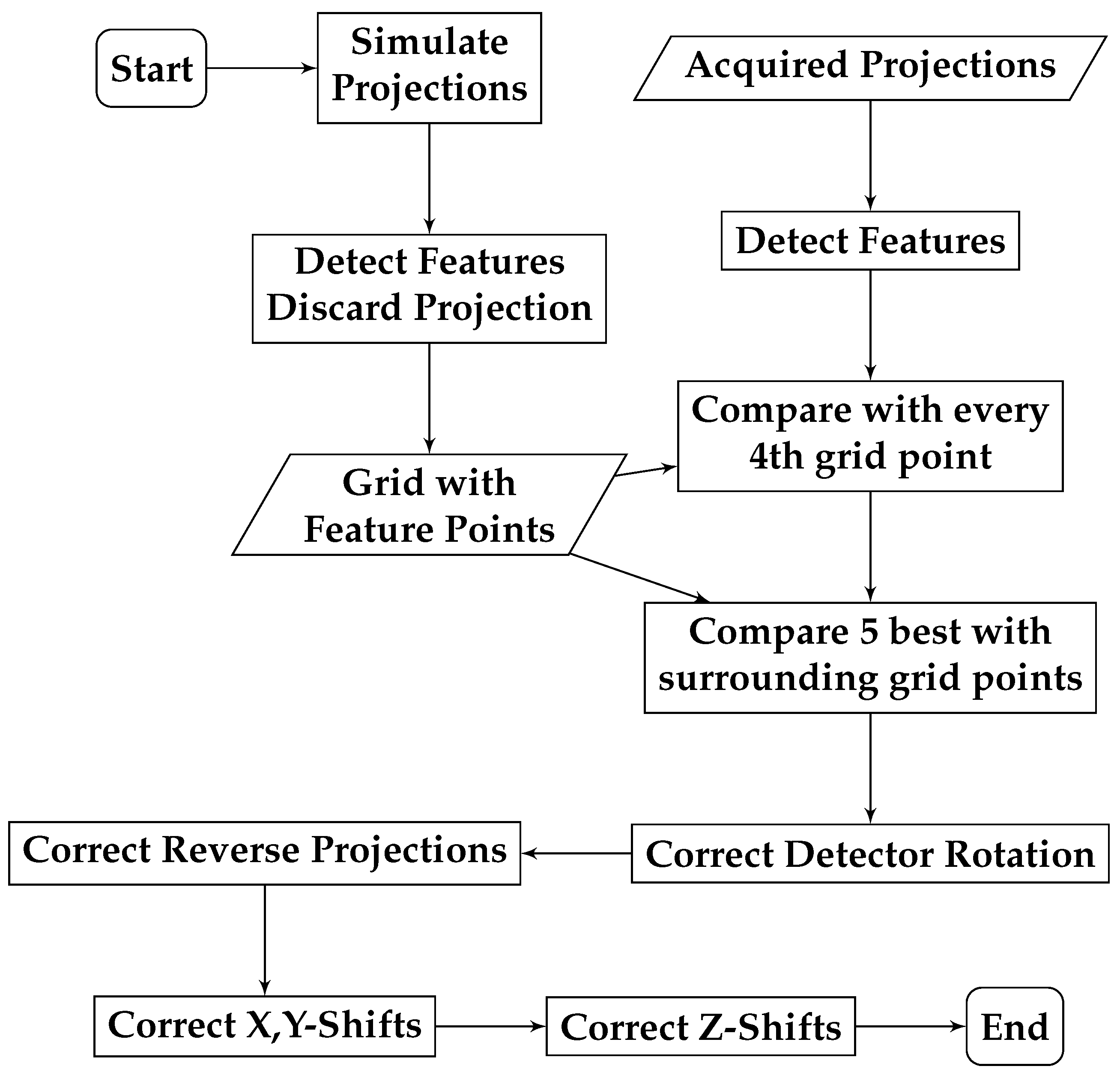

2.3. Algorithm

Listing 1. Approximation function for the detector rotation.

    from numpy import arctan2, mean, median, pi

    # ForwardProjection, trackFeatures and applyRotation are the helpers used
    # throughout the paper; real_projection and real_feature_points are assumed
    # to be defined in the enclosing scope.
    def approximate_detector_rotation(current_parameters):
        # simulate projection and track features
        simulated_projection = ForwardProjection(current_parameters)
        simulated_feature_points = trackFeatures(simulated_projection,
                                                 real_projection)
        # calculate center point of each point set
        simulated_mid = mean(simulated_feature_points, axis=0)
        real_mid = mean(real_feature_points, axis=0)
        sim_points = simulated_feature_points - simulated_mid
        real_points = real_feature_points - real_mid
        # calculate per-point angle difference in degrees, wrapped to [-180, 180]
        angles = (arctan2(sim_points[:, 0], sim_points[:, 1])
                  - arctan2(real_points[:, 0], real_points[:, 1])) * 180.0 / pi
        angles[angles < -180] += 360
        angles[angles > 180] -= 360
        detector_angle = median(angles)
        # test in which direction to rotate
        proj = ForwardProjection(applyRotation(current_parameters,
                                               0, 0, -detector_angle))
        points = trackFeatures(proj, real_projection)
        diffn = points - real_feature_points
        proj = ForwardProjection(applyRotation(current_parameters,
                                               0, 0, +detector_angle))
        points = trackFeatures(proj, real_projection)
        diffp = points - real_feature_points
        # keep the sign that leaves the smaller total feature displacement
        if sum(abs(diffn)) < sum(abs(diffp)):
            return -detector_angle
        else:
            return detector_angle
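The angle computation at the core of Listing 1 can be tried in isolation on synthetic points. The following is a minimal sketch under stated assumptions, not the authors' implementation: the names `estimate_inplane_rotation` and `rotate` are hypothetical, column 0 is taken to be x and column 1 to be y, and the two point sets are assumed to be matched row by row. The direction test from Listing 1 is omitted because it needs a forward projector.

```python
import numpy as np


def estimate_inplane_rotation(sim_points, real_points):
    """Median in-plane angle (degrees) that rotates real_points onto sim_points.

    Hypothetical helper: both inputs are (N, 2) arrays of matched points,
    with column 0 = x and column 1 = y (an assumption of this sketch).
    """
    sim = np.asarray(sim_points, dtype=float)
    real = np.asarray(real_points, dtype=float)
    # Centre each point set on its own centroid so a pure shift has no effect.
    sim_c = sim - sim.mean(axis=0)
    real_c = real - real.mean(axis=0)
    # Per-point difference of polar angles, converted to degrees.
    angles = np.degrees(np.arctan2(sim_c[:, 1], sim_c[:, 0])
                        - np.arctan2(real_c[:, 1], real_c[:, 0]))
    # Wrap the differences into [-180, 180], as in Listing 1.
    angles[angles < -180.0] += 360.0
    angles[angles > 180.0] -= 360.0
    # The median limits the influence of individual mismatched features.
    return float(np.median(angles))


def rotate(points, angle_deg):
    """Rotate (N, 2) points counter-clockwise about the origin."""
    a = np.radians(angle_deg)
    rot = np.array([[np.cos(a), -np.sin(a)],
                    [np.sin(a), np.cos(a)]])
    return points @ rot.T


# Synthetic check: rotate random points by a known angle and shift them.
rng = np.random.default_rng(0)
real = rng.uniform(-50.0, 50.0, size=(40, 2))
sim = rotate(real, 7.5) + np.array([3.0, -2.0])
estimate = estimate_inplane_rotation(sim, real)
print(f"estimated rotation: {estimate:.3f} degrees")
```

Because both point sets are centred before the angles are taken, the added translation does not affect the estimate. In practice the feature matches are noisy, which is why a median rather than a mean is used for the final angle.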

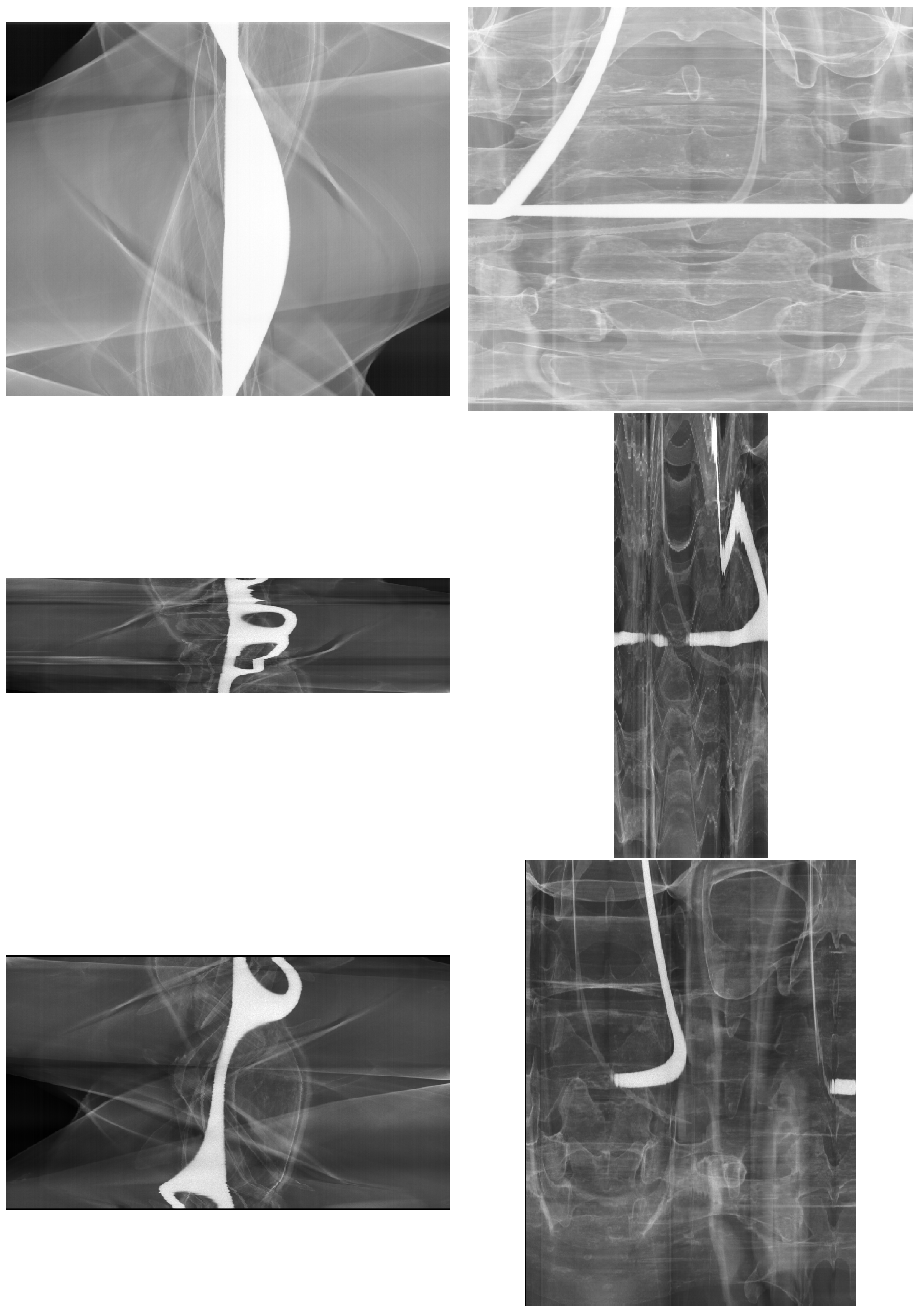

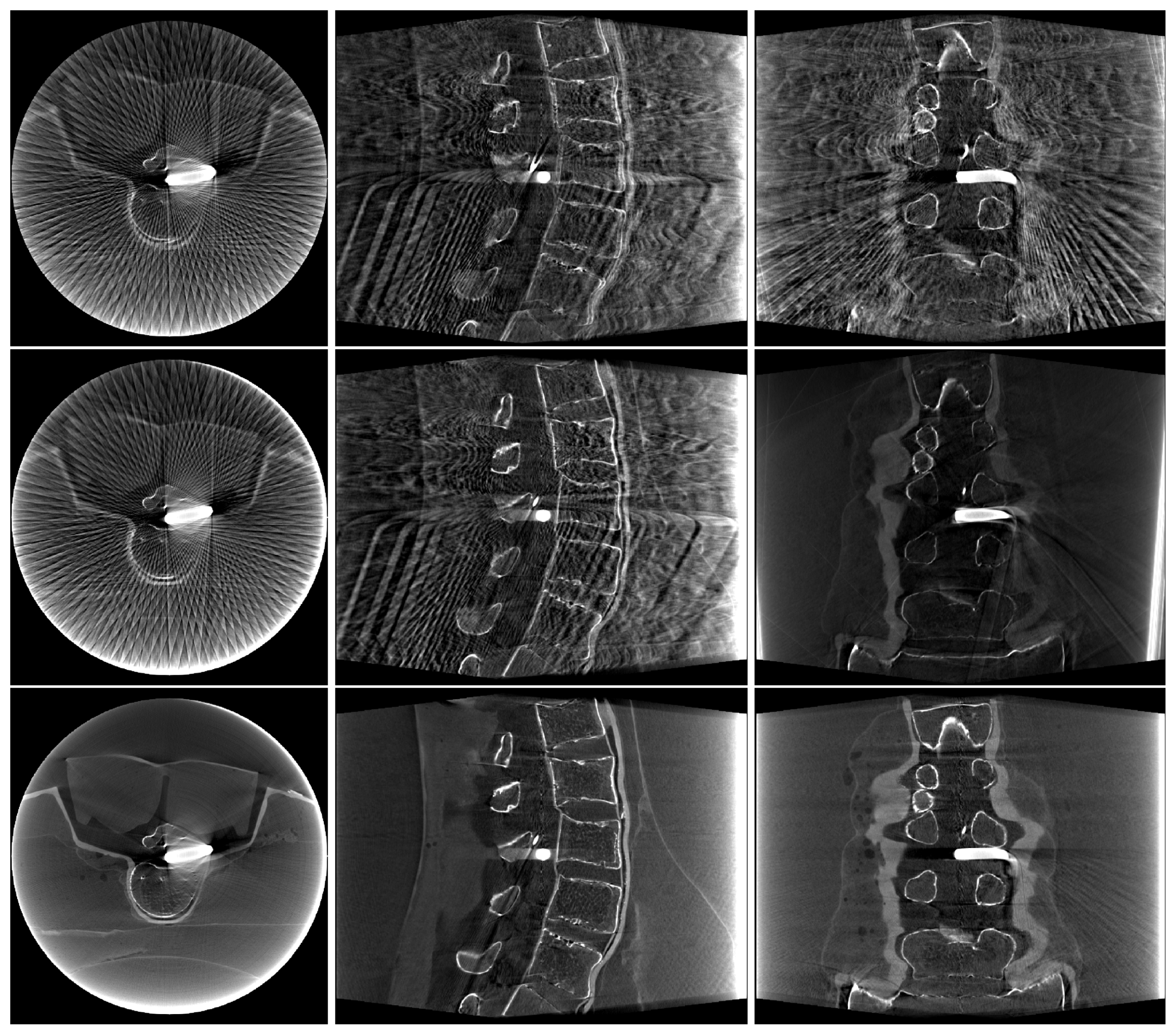

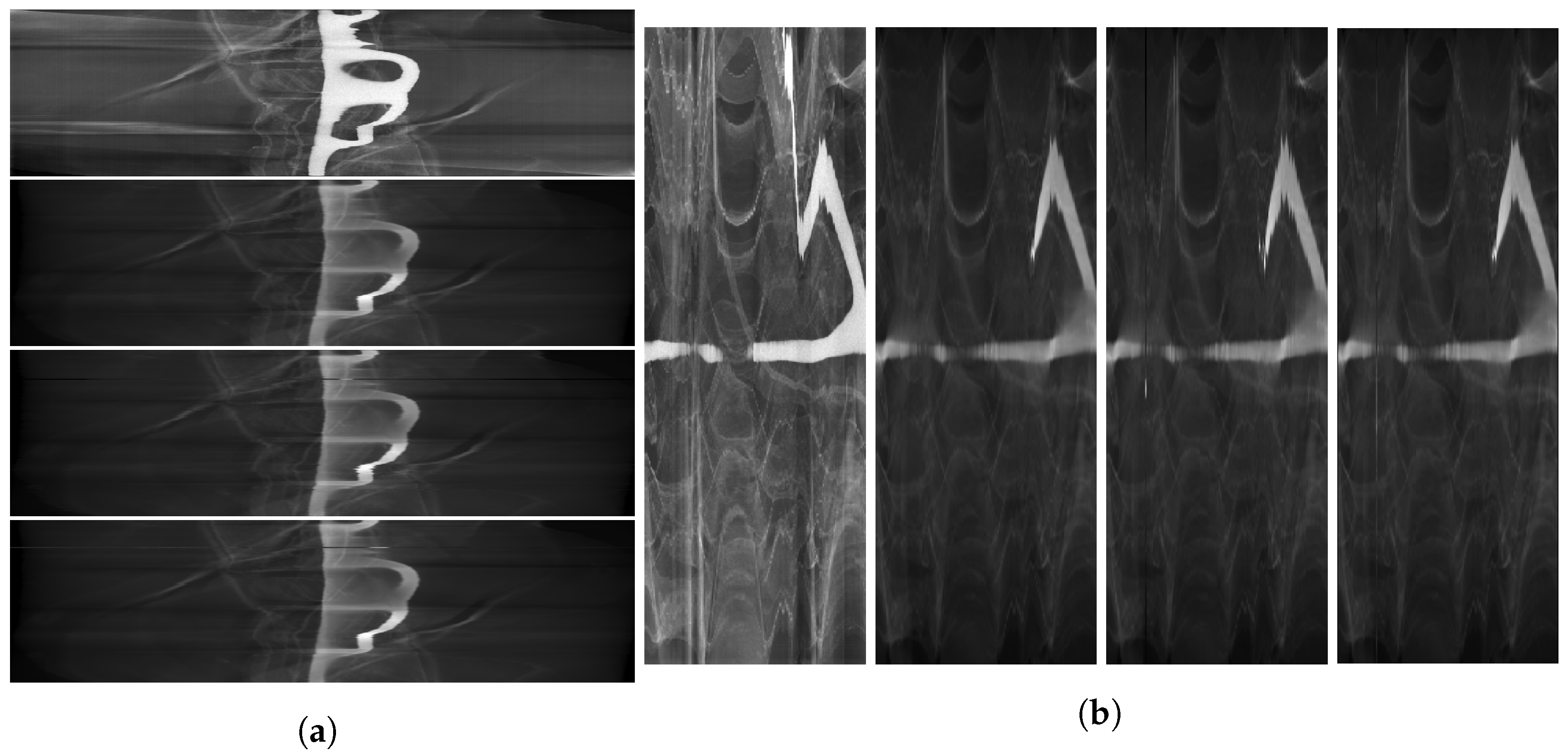

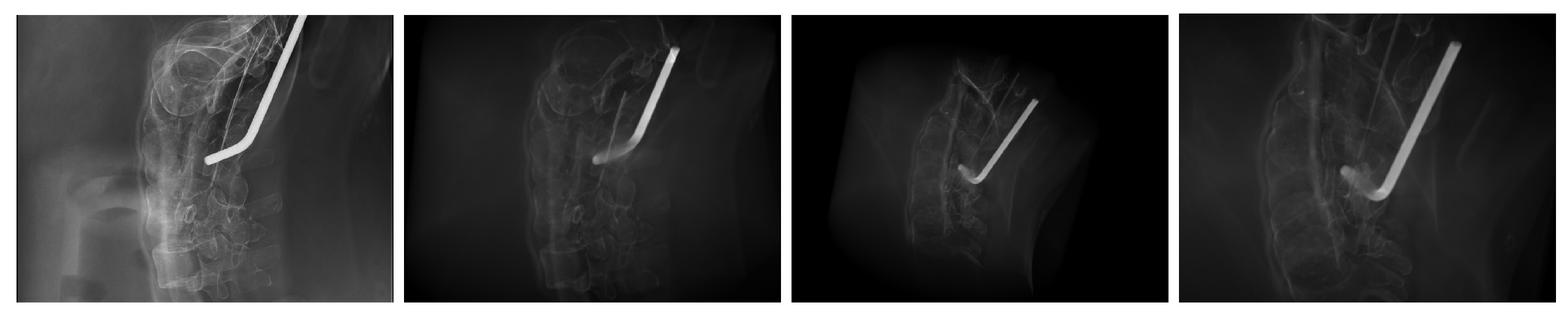

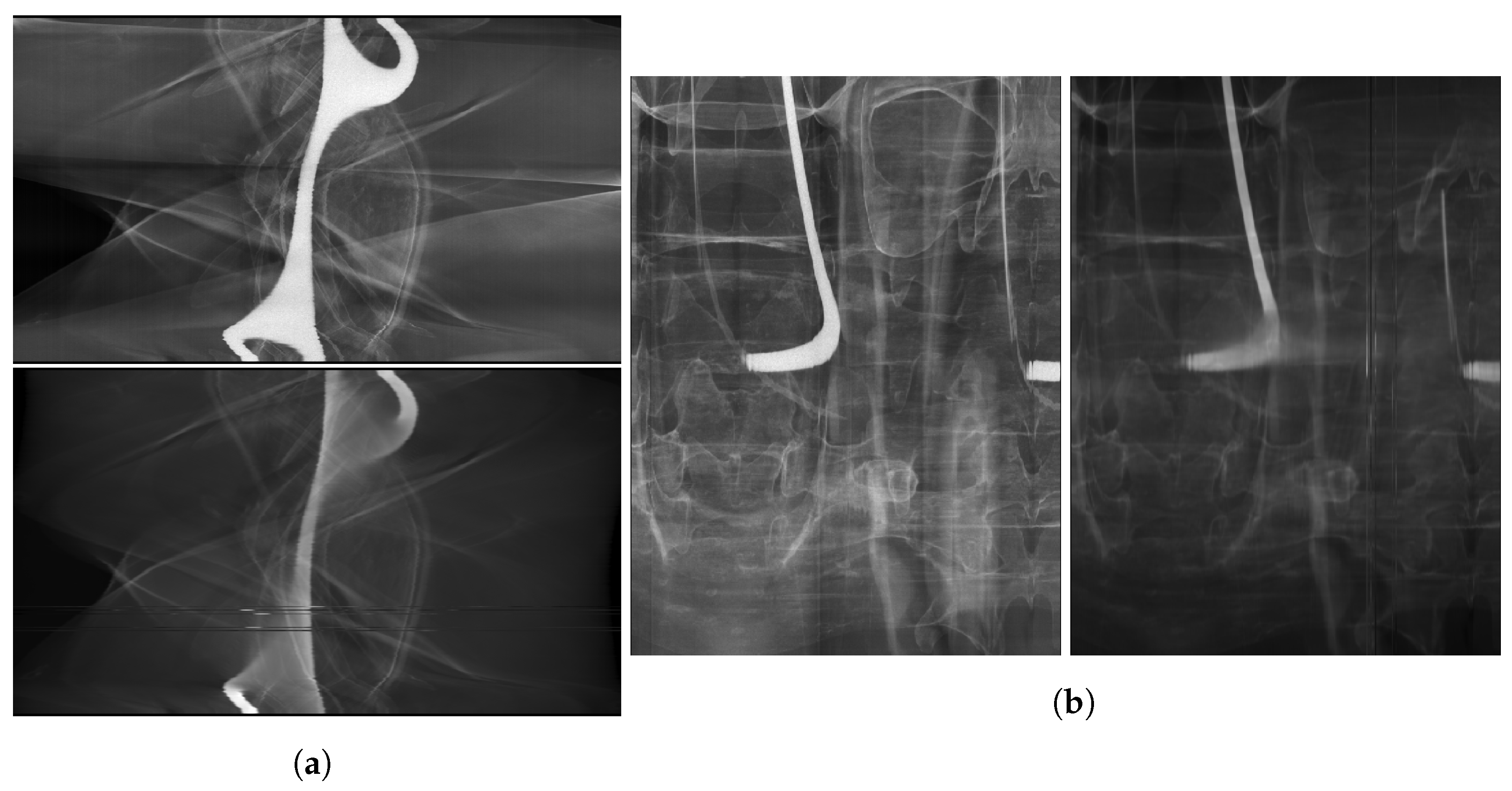

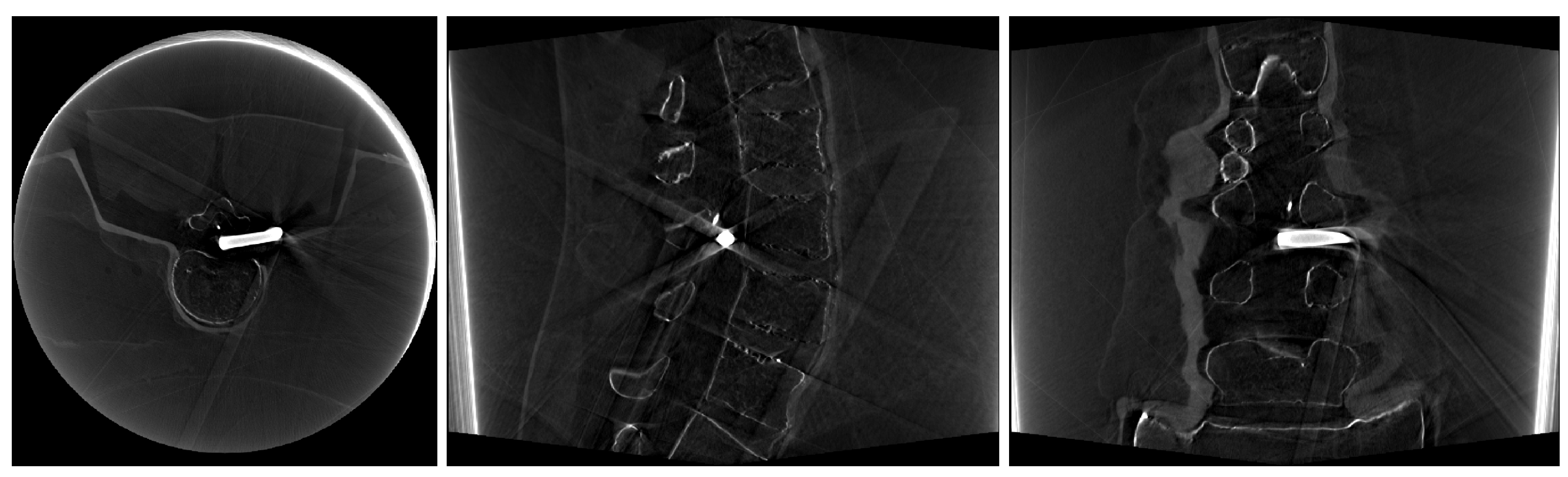

2.4. Image Data

2.5. Evaluation

2.5.1. Metrics

2.5.2. System Specifications

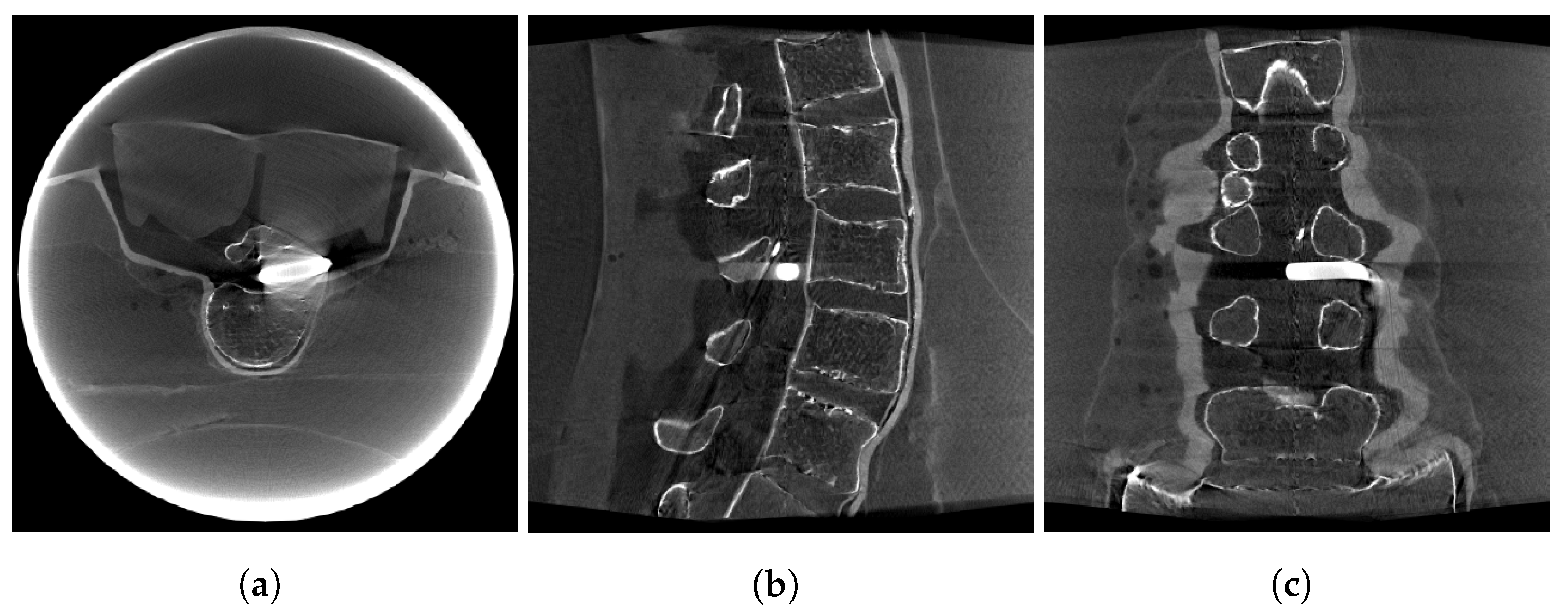

3. Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Algorithm | Runtime [hh:mm] | NGI | SSIM | NRMSE |
|---|---|---|---|---|
| Coarse estimate | 01:06 | * | * | * |
| Refined estimate | 01:26 | | | |
| Est. + FORCASTER | 03:28 | * | * | * |
| Est. + CMA-ES & NGI | 05:39 | * | * | * |

| Algorithm | NGI | SSIM | NRMSE | Dice |
|---|---|---|---|---|
| Coarse estimate | * | * | * | * |
| Refined estimate | | | | |
| Est. + FORCASTER | * | * | * | * |
| Est. + CMA-ES & NGI | * | * | * | * |

| Algorithm | Runtime [hh:mm] | NGI | SSIM | NRMSE |
|---|---|---|---|---|
| Coarse estimate | 00:24 | * | * | * |
| Refined estimate | 00:27 | | | |
| Est. + FORCASTER | 00:54 | * | * | |
| Est. + CMA-ES & NGI | 01:50 | * | * | * |

| Algorithm | Runtime [hh:mm] | NGI | SSIM | NRMSE |
|---|---|---|---|---|
| Coarse estimate | 01:26 | * | * | * |
| Refined estimate | 01:31 | | | |
| Est. + FORCASTER | 03:43 | * | * | * |
| Est. + CMA-ES & NGI | 07:23 | * | * | * |
| Correct cal. CBCT traj. | 00:35 | | | |
| Est. + FORCASTER CBCT traj. | 03:28 | | | |
| Correct cal. sinus traj. | 00:10 | | | |
| Est. + FORCASTER sinus traj. | 00:37 | | | |

| Algorithm | NGI | SSIM | NRMSE | Dice |
|---|---|---|---|---|
| Coarse estimate | * | * | * | * |
| Refined estimate | | | | |
| Est. + FORCASTER | * | * | * | |
| Est. + CMA-ES & NGI | * | * | * | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Tönnes, C.; Zöllner, F.G. Feature-Oriented CBCT Self-Calibration Parameter Estimator for Arbitrary Trajectories: FORCAST-EST. Appl. Sci. 2023, 13, 9179. https://doi.org/10.3390/app13169179