SLNER: Chinese Few-Shot Named Entity Recognition with Enhanced Span and Label Semantics

Abstract

1. Introduction

- We propose SLNER, a simple and effective model that uses enhanced span representations and label semantics to address two problems in Chinese few-shot named entity recognition: insufficient prior knowledge and limited feature representation;

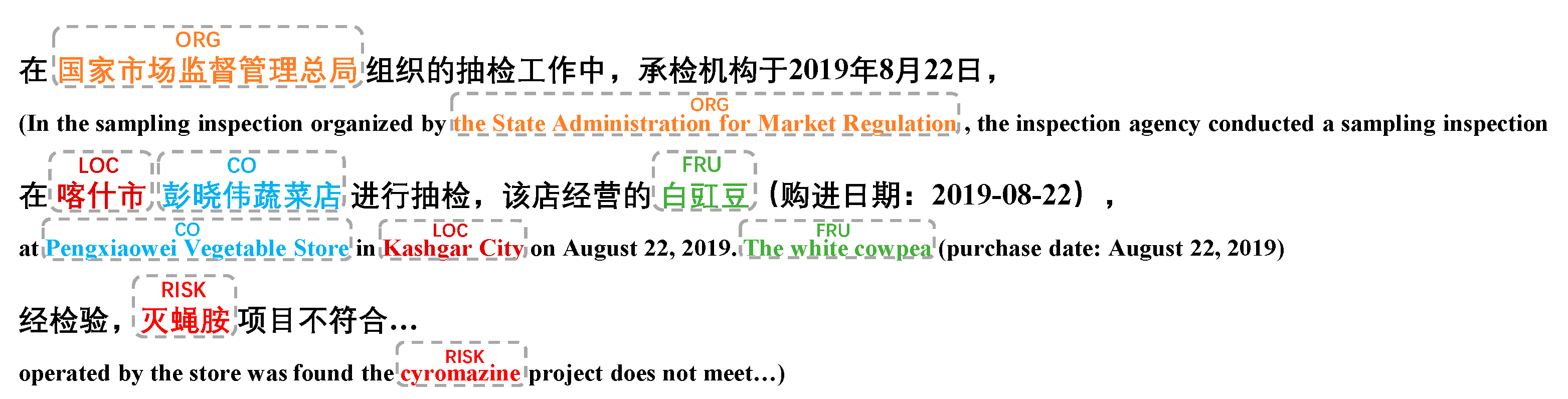

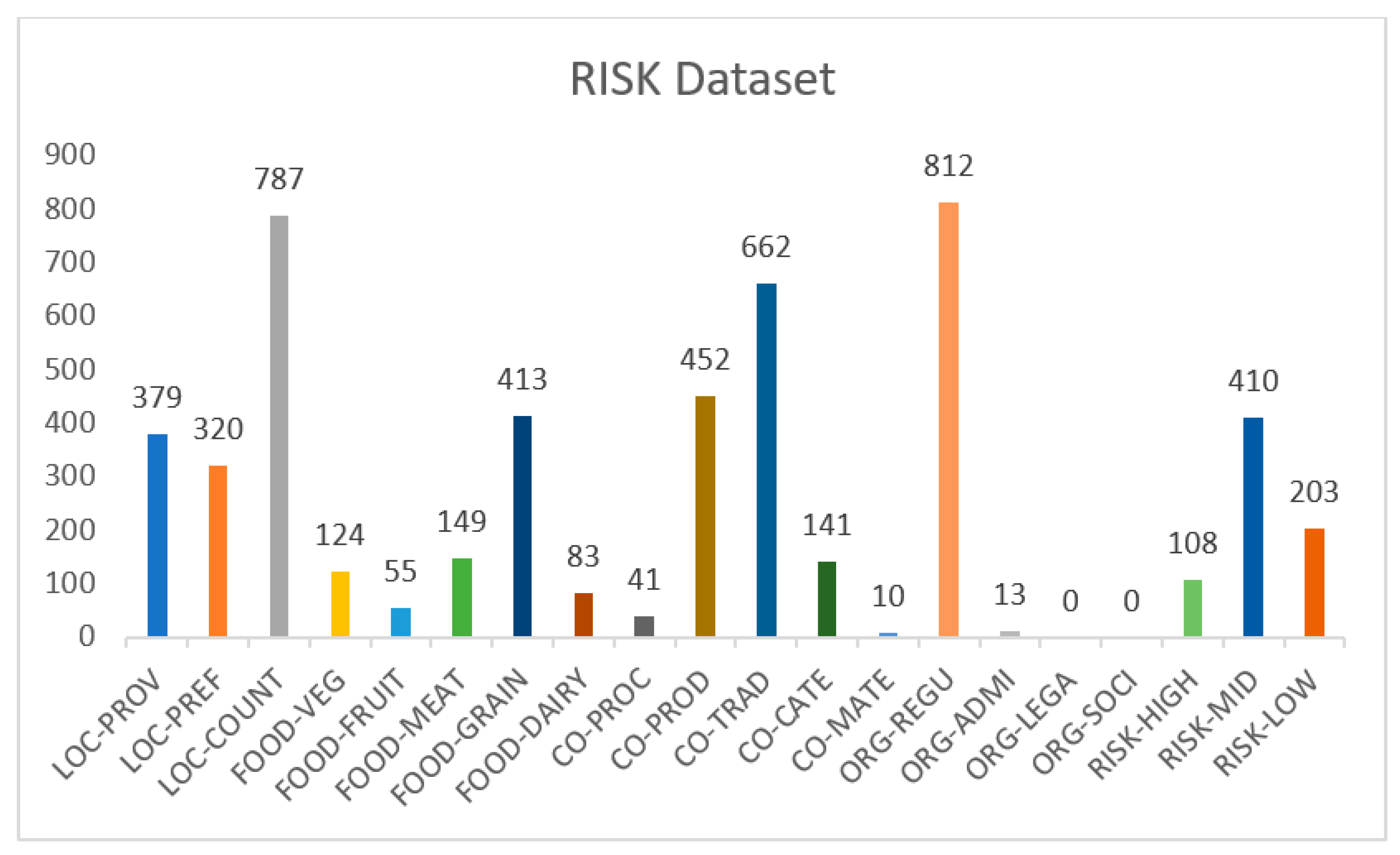

- We built RISK, a challenging dataset for the food safety risk domain, annotated with 5 coarse-grained and 20 fine-grained entity categories. It provides data support for developing named entity recognition applications in food safety;

- SLNER achieves promising performance on four sampled Chinese NER datasets, including our self-built dataset. On the Ontonotes, MSRA and Resume datasets, following the few-shot settings of PCBERT, it improves F1 over previous work by 0.20 to 6.57 percentage points across different settings. It also achieves promising F1 scores on RISK.

2. Related Work

2.1. Few-Shot NER

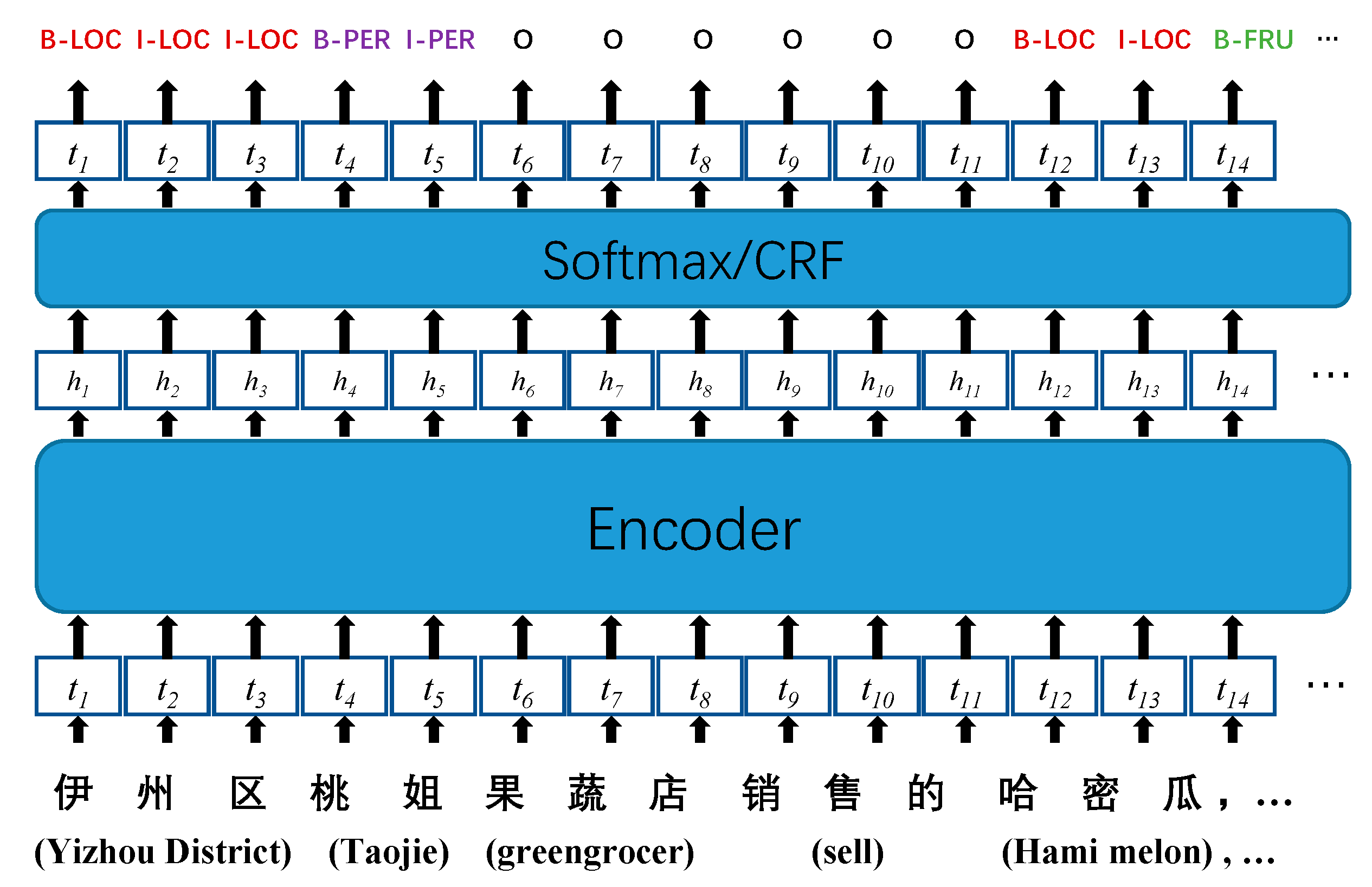

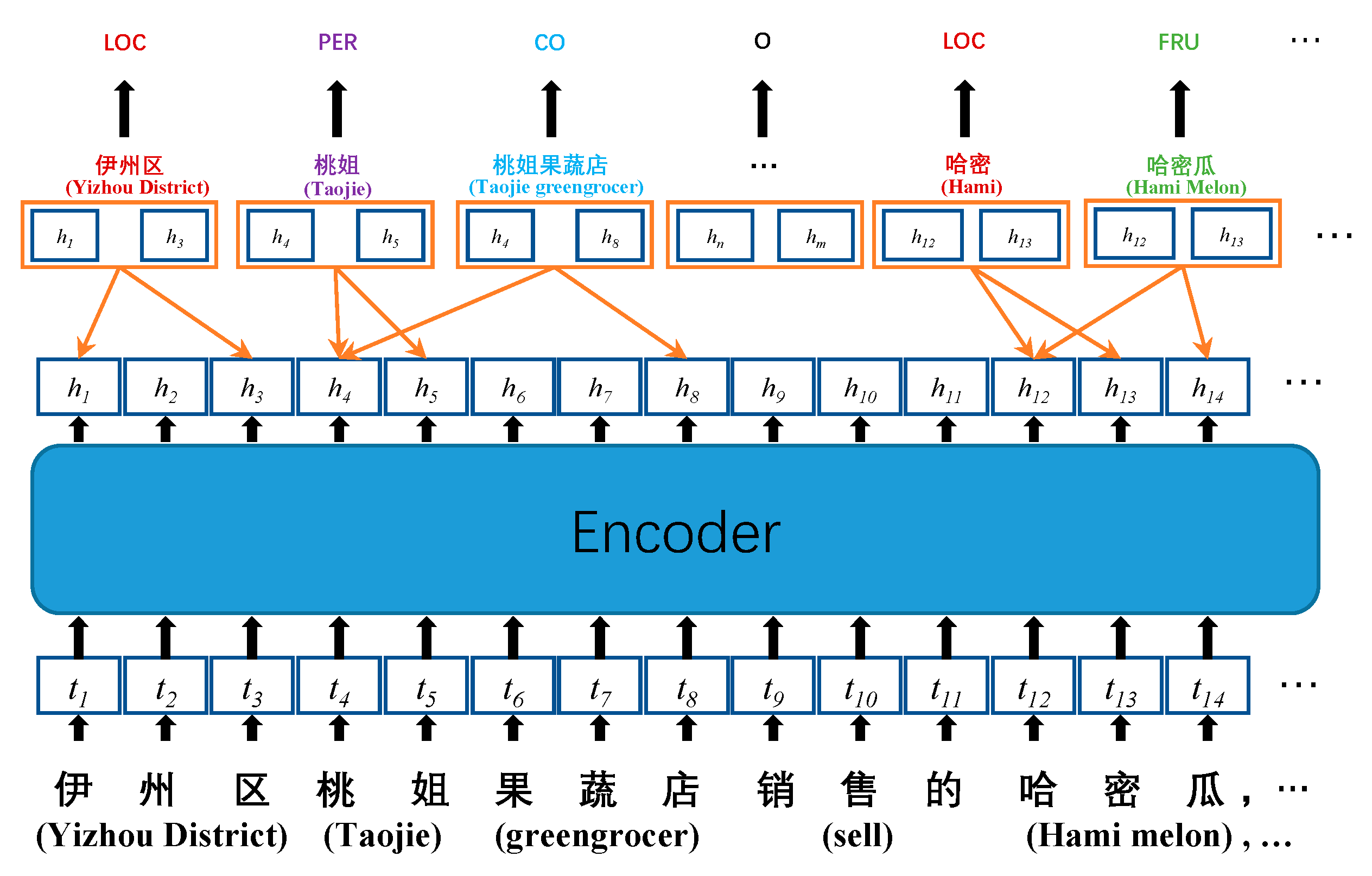

2.2. Span-Based Method

2.3. Label Semantics

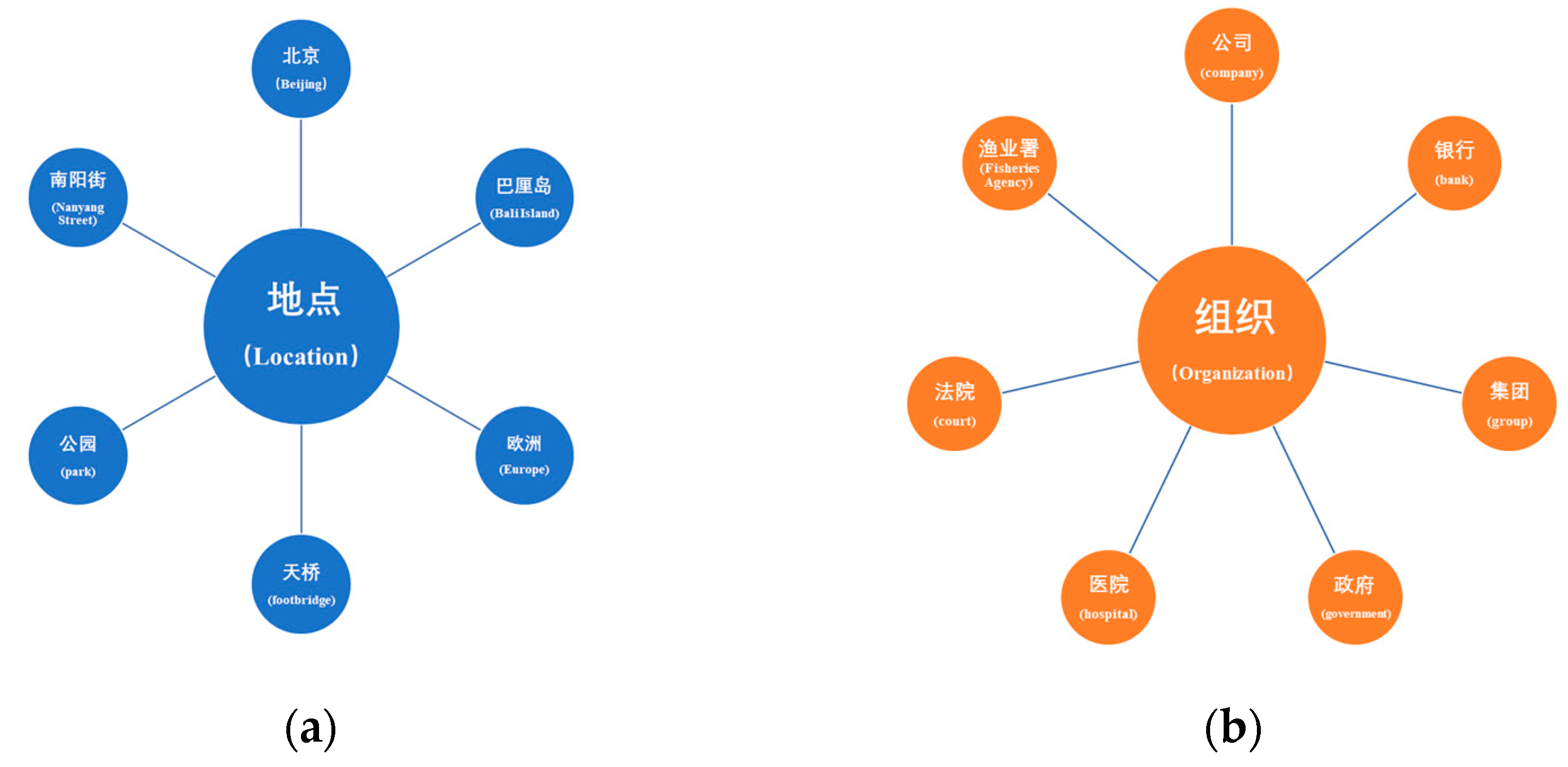

3. Method

3.1. Few-Shot NER Task Formalization

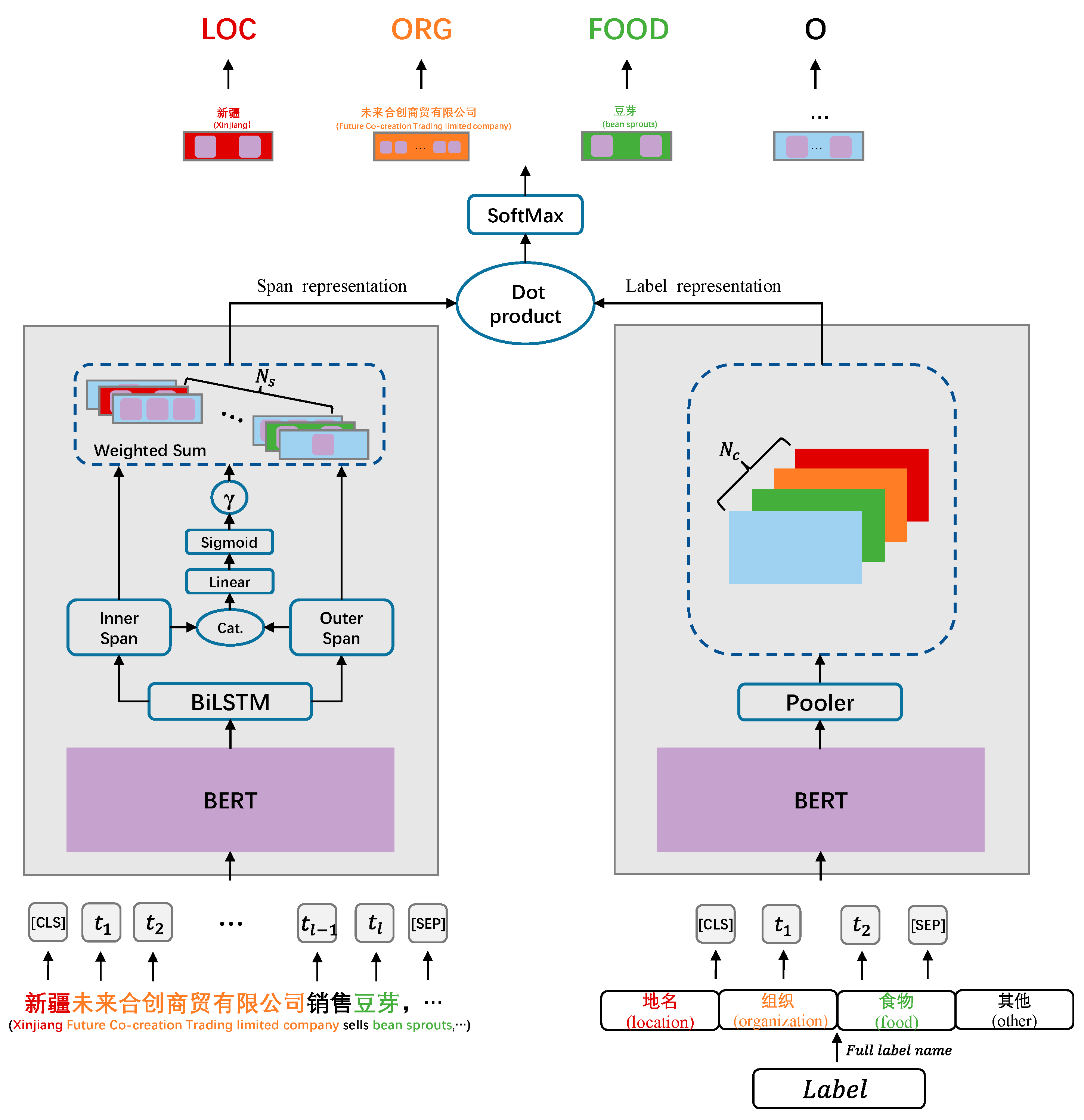

3.2. Overall Structure

3.3. Specific Structure

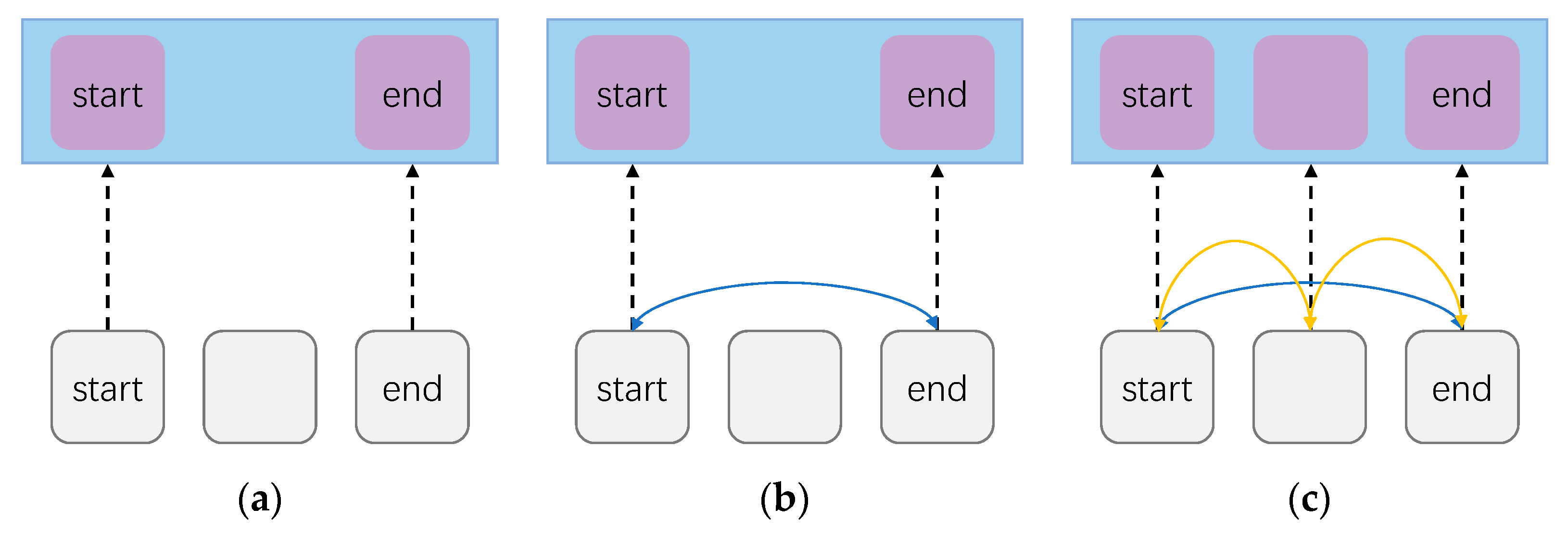

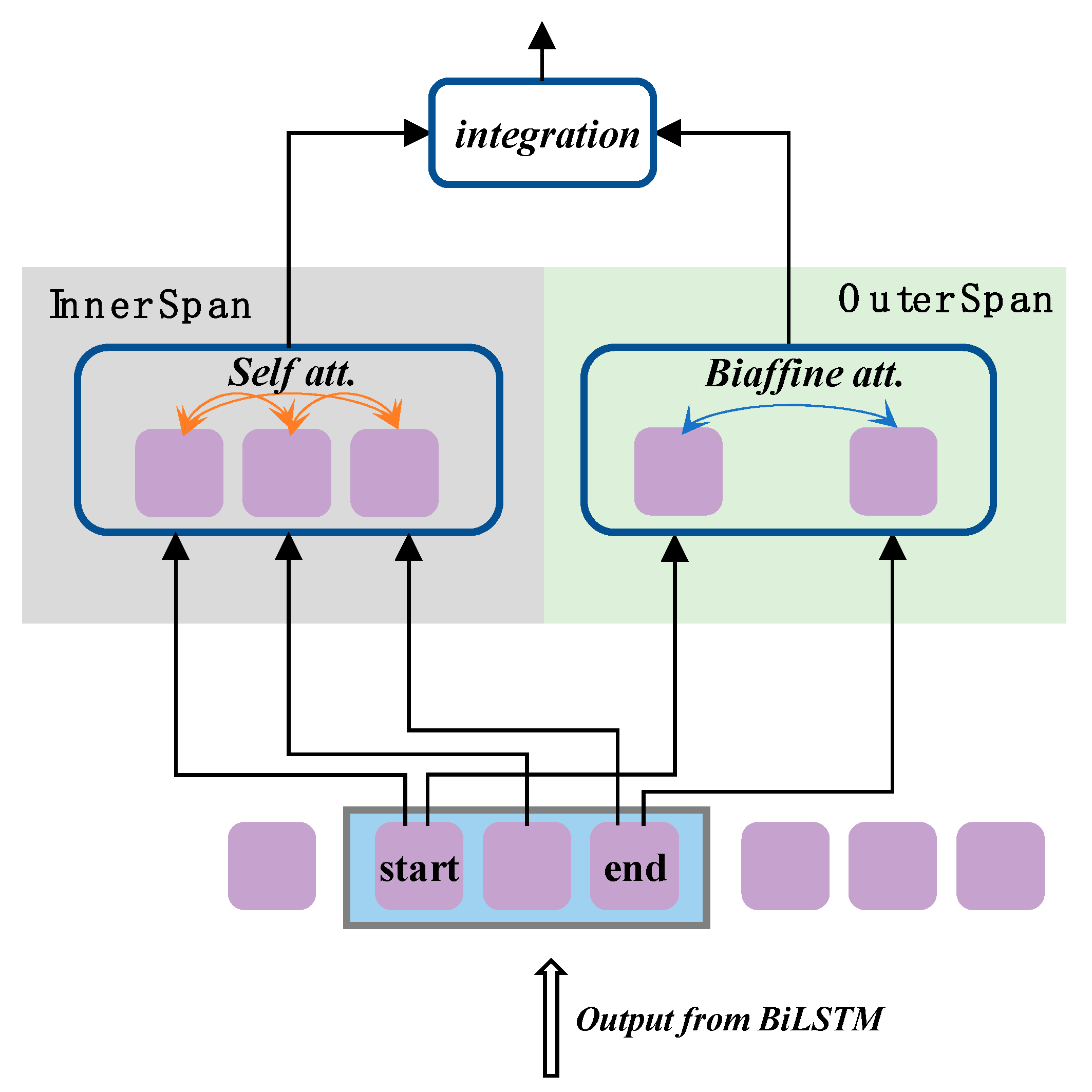

3.3.1. Enhanced Span Representation

3.3.2. Label Representation

3.4. Training Strategy

4. Experiments

4.1. Datasets

- Target Domain Datasets

- Source Domain Dataset

4.2. Implementation Details

4.3. Baseline

4.4. Experimental Results

5. Analysis

5.1. Ablation Study

5.2. The Impact of Enhanced Span Representation

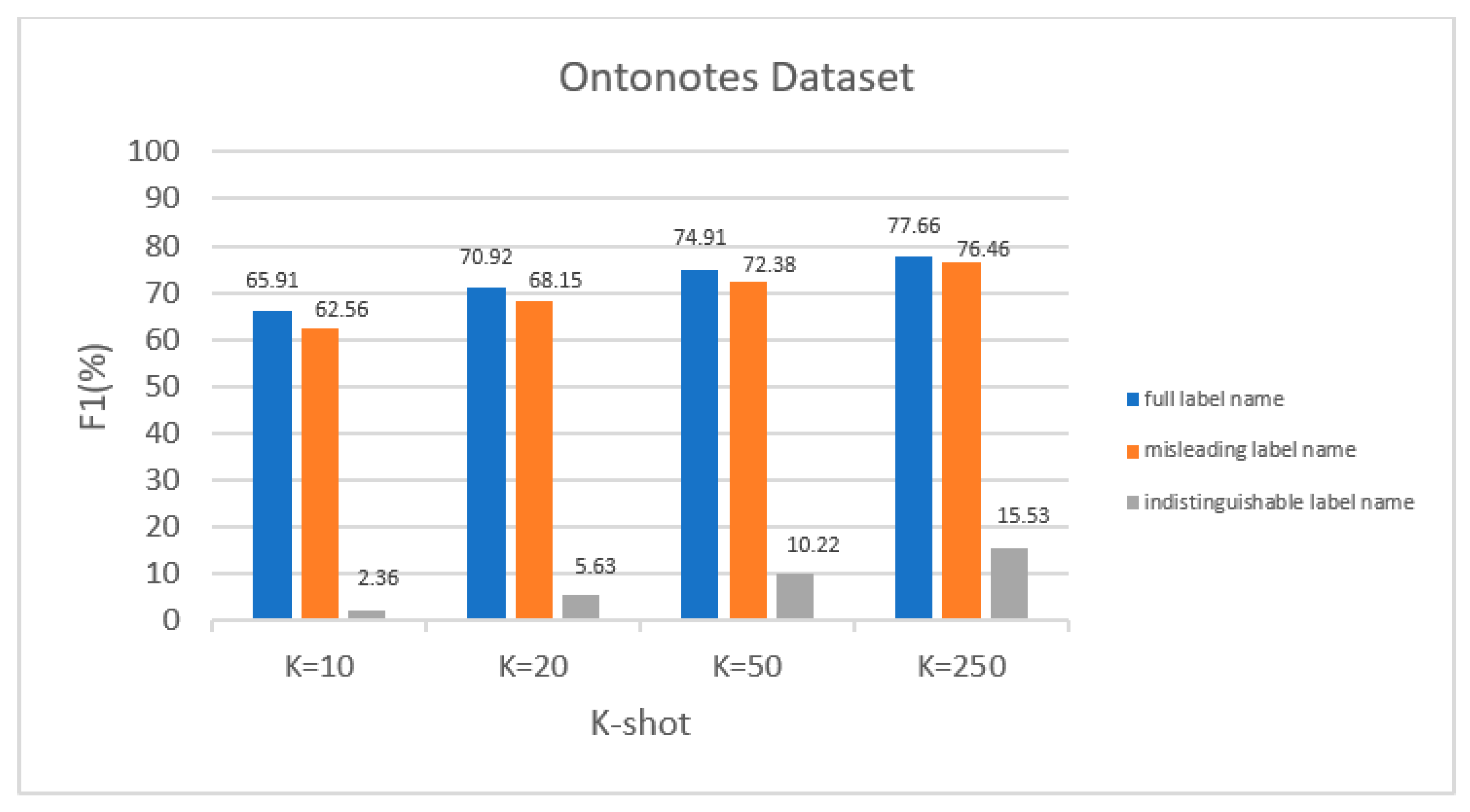

5.3. The Impact of Label Representation

6. Conclusions

Author Contributions

Funding

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Lu, Y.; Liu, Q.; Dai, D.; Xiao, X.; Lin, H.; Han, X.; Sun, L.; Wu, H. Unified Structure Generation for Universal Information Extraction. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics, Dublin, Ireland, 22–27 May 2022; Volume 1, pp. 5755–5772.

- Mollá, D.; Van Zaanen, M.; Smith, D. Named entity recognition for question answering. In Proceedings of the Australasian Language Technology Workshop 2006, Sydney, Australia, 11 November 2006; pp. 51–58.

- Stahlberg, F. Neural Machine Translation: A Review. J. Artif. Intell. Res. 2020, 69, 343–418.

- Lample, G.; Ballesteros, M.; Subramanian, S.; Kawakami, K.; Dyer, C. Neural architectures for named entity recognition. In Proceedings of the 2016 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, San Diego, CA, USA, 12–17 June 2016; Association for Computational Linguistics: Stroudsburg, PA, USA, 2016; pp. 260–270.

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the NAACL-HLT 2019, Minneapolis, MN, USA, 2–7 June 2019.

- Vinyals, O.; Blundell, C.; Lillicrap, T.; Wierstra, D. Matching networks for one shot learning. In Proceedings of the Advances in Neural Information Processing Systems 29 (NIPS 2016), Barcelona, Spain, 5–10 December 2016.

- Snell, J.; Swersky, K.; Zemel, R. Prototypical networks for few-shot learning. In Proceedings of the Advances in Neural Information Processing Systems 30 (NIPS 2017), Long Beach, CA, USA, 4–9 December 2017.

- Hou, Y.; Che, W.; Lai, Y.; Zhou, Z.; Liu, Y.; Liu, H.; Liu, T. Few-shot slot tagging with collapsed dependency transfer and label-enhanced task-adaptive projection network. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, Online, 5–10 July 2020; Association for Computational Linguistics: Stroudsburg, PA, USA, 2020; pp. 1381–1393.

- Cui, L.; Wu, Y.; Liu, J.; Yang, S.; Zhang, Y. Template-based named entity recognition using BART. In Proceedings of the Findings of the Association for Computational Linguistics, ACL/IJCNLP 2021, Online, 1–6 August 2021; Association for Computational Linguistics: Stroudsburg, PA, USA, 2021; pp. 1835–1845.

- Lai, P.; Ye, F.; Zhang, L.; Chen, Z.; Fu, Y.; Wu, Y.; Wang, Y. PCBERT: Parent and Child BERT for Chinese Few-shot NER. In Proceedings of the 29th International Conference on Computational Linguistics, Gyeongju, Republic of Korea, 12–17 October 2022; pp. 2199–2209.

- Zhang, Y.; Yang, J. Chinese NER using lattice LSTM. In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics, Melbourne, Australia, 15–20 July 2018; Volume 1, pp. 1554–1564.

- Dong, X.; Xin, X.; Guo, P. Chinese NER by Span-Level Self-Attention. In Proceedings of the 2019 15th International Conference on Computational Intelligence and Security (CIS), Macao, China, 13–16 December 2019; IEEE: Piscataway, NJ, USA, 2019; pp. 68–72.

- Li, X.; Feng, J.; Meng, Y.; Han, Q.; Wu, F.; Li, J. A Unified MRC Framework for Named Entity Recognition. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, Online, 5–10 July 2020.

- Chiu, J.P.; Nichols, E. Named entity recognition with bidirectional LSTM-CNNs. Trans. Assoc. Comput. Linguist. 2016, 4, 357–370.

- Cui, L.; Zhang, Y. Hierarchically refined label attention network for sequence labeling. In Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing and the 9th International Joint Conference on Natural Language Processing (EMNLP-IJCNLP), Hong Kong, China, 3–7 November 2019; Association for Computational Linguistics: Stroudsburg, PA, USA, 2019; pp. 4115–4128.

- Tong, M.; Wang, S.; Xu, B.; Cao, Y.; Liu, M.; Hou, L.; Li, J. Learning from Miscellaneous Other-Class Words for Few-Shot Named Entity Recognition. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing, Virtual, 1–6 August 2021; Volume 1, pp. 6236–6247.

- Lee, D.H.; Kadakia, A.; Tan, K.; Agarwal, M.; Feng, X.; Shibuya, T.; Mitani, R.; Sekiya, T.; Pujara, J.; Ren, X. Good Examples Make A Faster Learner: Simple Demonstration-based Learning for Low-resource NER. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics, Dublin, Ireland, 22–27 May 2022; Volume 1, pp. 2687–2700.

- Chen, X.; Li, L.; Deng, S.; Tan, C.; Xu, C.; Huang, F.; Si, L.; Chen, H.; Zhang, N. LightNER: A Lightweight Tuning Paradigm for Low-resource NER via Pluggable Prompting. In Proceedings of the 29th International Conference on Computational Linguistics, Gyeongju, Republic of Korea, 12–17 October 2022; pp. 2374–2387.

- Wang, L.; Li, R.; Yan, Y.; Yan, Y.; Wang, S.; Wu, W.; Xu, W. InstructionNER: A multi-task instruction-based generative framework for few-shot NER. arXiv 2022, arXiv:2203.03903.

- Chen, J.; Liu, Q.; Lin, H.; Han, X.; Sun, L. Few-shot Named Entity Recognition with Self-describing Networks. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics, Dublin, Ireland, 22–27 May 2022; Volume 1, pp. 5711–5722.

- Ma, T.; Jiang, H.; Wu, Q.; Zhao, T.; Lin, C.Y. Decomposed Meta-Learning for Few-Shot Named Entity Recognition. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2022, Dublin, Ireland, 22–27 May 2022; pp. 1584–1596.

- Das, S.S.S.; Katiyar, A.; Passonneau, R.J.; Zhang, R. CONTaiNER: Few-Shot Named Entity Recognition via Contrastive Learning. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics, Dublin, Ireland, 22–27 May 2022; Volume 1.

- Wang, S.; Li, X.; Meng, Y.; Zhang, T.; Ouyang, R.; Li, J.; Wang, G. kNN-NER: Named Entity Recognition with Nearest Neighbor Search. arXiv 2022, arXiv:2203.17103.

- Huang, Z.; Xu, W.; Yu, K. Bidirectional LSTM-CRF models for sequence tagging. arXiv 2015, arXiv:1508.01991.

- Peters, M.E.; Neumann, M.; Iyyer, M.; Gardner, M.; Clark, C.; Lee, K.; Zettlemoyer, L. Deep contextualized word representations. In Proceedings of the 2018 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, New Orleans, LA, USA, 1–6 June 2018; Association for Computational Linguistics: Stroudsburg, PA, USA, 2018; Volume 1, pp. 2227–2237.

- Yu, J.; Bohnet, B.; Poesio, M. Named entity recognition as dependency parsing. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, Online, 5–10 July 2020; Association for Computational Linguistics: Stroudsburg, PA, USA, 2020; pp. 6470–6476.

- Shen, Y.; Ma, X.; Tan, Z.; Zhang, S.; Wang, W.; Lu, W. Locate and label: A two-stage identifier for nested named entity recognition. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics, Virtual, 1–6 August 2021.

- Yu, D.; He, L.; Zhang, Y.; Du, X.; Pasupat, P.; Li, Q. Few-shot intent classification and slot filling with retrieved examples. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Online, 6–11 June 2021; Association for Computational Linguistics: Stroudsburg, PA, USA, 2021; pp. 734–749.

- Wang, P.; Xu, R.; Liu, T.; Zhou, Q.; Cao, Y.; Chang, B.; Sui, Z. An enhanced span-based decomposition method for few-shot sequence labeling. arXiv 2021, arXiv:2109.13023.

- Wang, J.; Wang, C.; Tan, C.; Qiu, M.; Huang, S.; Huang, J.; Gao, M. SpanProto: A Two-stage Span-based Prototypical Network for Few-shot Named Entity Recognition. arXiv 2022, arXiv:2210.09049.

- Luo, Q.; Liu, L.; Lin, Y.; Zhang, W. Don’t miss the labels: Label-semantic augmented meta-learner for few-shot text classification. In Proceedings of the Findings of the Association for Computational Linguistics: ACL-IJCNLP 2021, Online, 1–6 August 2021; pp. 2773–2782.

- Ma, R.; Zhou, X.; Gui, T.; Tan, Y.; Li, L.; Zhang, Q.; Huang, X. Template-free Prompt Tuning for Few-shot NER. In Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Seattle, WA, USA, 10–15 July 2022; pp. 5721–5732.

- Zhong, Z.; Chen, D. A Frustratingly Easy Approach for Entity and Relation Extraction. In Proceedings of the 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Online, 6–11 June 2021; pp. 50–61.

- Ye, D.; Lin, Y.; Li, P.; Sun, M. Packed Levitated Marker for Entity and Relation Extraction. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics, Dublin, Ireland, 22–27 May 2022; Volume 1, pp. 4904–4917.

- Bekoulis, G.; Deleu, J.; Demeester, T.; Develder, C. Joint entity recognition and relation extraction as a multi-head selection problem. Expert Syst. Appl. 2018, 114, 34–45.

- Weischedel, R.; Pradhan, S.; Ramshaw, L.; Palmer, M.; Xue, N.; Marcus, M.; Taylor, A.; Greenberg, C.; Hovy, E.; Houston, A.; et al. OntoNotes Release 4.0. LDC2011T03; Linguistic Data Consortium: Philadelphia, PA, USA, 2011.

- Levow, G.A. The third international Chinese language processing bakeoff: Word segmentation and named entity recognition. In Proceedings of the Fifth SIGHAN Workshop on Chinese Language Processing, Sydney, Australia, 22–23 July 2006; pp. 108–117.

- Xu, L.; Dong, Q.; Yu, C.; Tian, Y.; Liu, W.; Li, L.; Zhang, X. CLUENER2020: Fine-grained named entity recognition for Chinese. arXiv 2020, arXiv:2001.04351.

- Sui, D.; Tian, Z.; Chen, Y.; Liu, K.; Zhao, J. A large-scale Chinese multimodal NER dataset with speech clues. In Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing, Virtual, 1–6 August 2021; Volume 1.

- Xia, Y.; Yu, H.; Nishino, F. The Chinese named entity categorization based on the People’s Daily corpus. Int. J. Comput. Linguist. Chin. Lang. Process. 2005, 10, 533–542.

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

- Li, X.; Yan, H.; Qiu, X.; Huang, X. FLAT: Chinese NER using flat-lattice transformer. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, Online, 5–10 July 2020; pp. 6836–6842.

- Liu, W.; Fu, X.; Zhang, Y.; Xiao, W. Lexicon enhanced Chinese sequence labelling using BERT adapter. arXiv 2021, arXiv:2105.07148.

| Dataset | Entity Label | Full Label Name |
|---|---|---|
| Ontonotes | PER | 人名 (person name) |
| | ORG | 组织 (organization) |
| | LOC | 位置 (location) |
| | GPE | 地名 (geographic name) |
| MSRA | NS | 位置 (location) |
| | NR | 姓名、名字 (person name) |
| | NT | 组织 (organization) |
| Resume | CONT | 国籍 (nationality) |
| | EDU | 教育背景、学历 (educational background) |
| | LOC | 位置、地名 (location) |
| | NAME | 姓名、名字 (person name) |
| | ORG | 组织 (organization) |
| | PRO | 专业 (profession) |
| | RACE | 民族 (race) |
| | TITLE | 职称、职业 (title and occupation) |
| RISK | LOC-PROV | 省 (province) |
| | LOC-PREF | 市、区 (city, district) |
| | LOC-COUNT | 县 (county) |
| | FOOD-VEG | 蔬菜 (vegetable) |
| | FOOD-FRUIT | 水果 (fruit) |
| | FOOD-MEAT | 肉 (meat) |
| | FOOD-GRAIN | 粮食 (foodstuff) |
| | FOOD-DAIRY | 奶制品、饮品 (dairy products and beverages) |
| | CO-PROC | 加工生产方式 (processing and production methods) |
| | CO-PROD | 公司、厂 (company, factory) |
| | CO-TRAD | 售卖商店 (sales store) |
| | CO-CATE | 饭店 (hotel) |
| | CO-MATE | 超市 (supermarket) |
| | ORG-REGU | 监督局 (supervisory authority) |
| | ORG-ADMI | 管理部门 (management department) |
| | ORG-LEGA | 法律机构 (legal agency) |
| | ORG-SOCI | 社会组织 (social organization) |
| | RISK-HIGH | 病毒 (virus) |
| | RISK-MID | 化学物质、细菌 (chemicals, bacteria) |
| | RISK-LOW | 添加剂 (additive) |
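
The label table above can be read as a lookup from entity tags to natural-language label names. The sketch below is only an illustration of that data structure for two of the datasets; the names `LABEL_NAMES` and `label_phrases` are hypothetical, and how SLNER actually encodes these names is not described in this part of the text.

```python
# Hypothetical lookup built from the label table above (Ontonotes and MSRA rows only).
LABEL_NAMES = {
    "Ontonotes": {"PER": "人名", "ORG": "组织", "LOC": "位置", "GPE": "地名"},
    "MSRA": {"NS": "位置", "NR": "姓名、名字", "NT": "组织"},
}


def label_phrases(dataset: str, label: str) -> list[str]:
    """Return the alternative phrases of a full label name.

    Some full label names in the table list several phrases separated
    by the enumeration comma "、"; this splits them into a list.
    """
    return LABEL_NAMES[dataset][label].split("、")


if __name__ == "__main__":
    for tag in LABEL_NAMES["MSRA"]:
        print(tag, label_phrases("MSRA", tag))
```

A label with a single phrase yields a one-element list, so downstream code can treat all labels uniformly.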

| Dataset | Train | Dev | Test | Entity Types |
|---|---|---|---|---|
| Ontonotes | 15.7 k | 4.3 k | 4.3 k | 4 |
| MSRA | 41.7 k | 4.6 k | 4.3 k | 3 |
| Resume | 3.8 k | 0.46 k | 0.48 k | 8 |
| RISK | 1.2 k | 0.26 k | 0.26 k | 5 coarse-grained (20 fine-grained) |

| Dataset | Method | K = 250 | K = 500 | K = 1000 | K = 1350 |
|---|---|---|---|---|---|
| Ontonotes | BERT | 63.85 | 69.50 | 71.33 | 72.42 |
| | BERT-LC | 65.69 | 73.54 | 74.97 | 77.19 |
| | Lattice LSTM | 39.71 | 45.46 | 54.54 | 57.48 |
| | FLAT | 49.01 | 46.35 | 49.34 | 57.44 |
| | LEBERT | 69.48 | 69.01 | 73.78 | 74.84 |
| | LEBERT-LC | 70.26 | 69.89 | 73.83 | 76.01 |
| | PCBERT | 74.42 | 75.62 | 78.33 | 81.52 |
| | SLNER (ours) | 77.66 | 80.46 | 81.53 | 82.23 |
| MSRA | BERT | 68.44 | 72.28 | 81.21 | 82.28 |
| | BERT-LC | 79.01 | 83.13 | 87.84 | 89.32 |
| | Lattice LSTM | 54.69 | 63.61 | 74.27 | 76.31 |
| | FLAT | 59.62 | 70.20 | 80.79 | 64.95 |
| | LEBERT | 79.11 | 85.18 | 87.77 | 89.35 |
| | LEBERT-LC | 80.92 | 86.09 | 88.11 | 88.70 |
| | PCBERT | 81.08 | 85.25 | 87.88 | 89.72 |
| | SLNER (ours) | 87.65 | 89.30 | 89.67 | 90.08 |
| Resume | BERT | 53.80 | 62.64 | 69.36 | 70.65 |
| | BERT-LC | 92.26 | 94.66 | 95.16 | 96.41 |
| | Lattice LSTM | 85.63 | 89.60 | 92.01 | 93.13 |
| | FLAT | 84.62 | 90.77 | 92.97 | 87.79 |
| | LEBERT | 89.15 | 92.56 | 94.02 | 95.19 |
| | LEBERT-LC | 91.60 | 93.03 | 95.40 | 95.16 |
| | PCBERT | 93.42 | 94.01 | 94.96 | 95.97 |
| | SLNER (ours) | 94.02 | 94.86 | 95.99 | 96.36 |
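
As a cross-check on the results table above, the following sketch computes, for each dataset and K, the difference between the SLNER score and the strongest baseline in the same column. The scores are copied from the table; the names `RESULTS`, `K_VALUES` and `margins_over_best_baseline` are illustrative, and comparing against the per-column best baseline is an assumption about how the reported margins were obtained.

```python
# F1 scores copied from the results table; list positions follow K_VALUES.
K_VALUES = [250, 500, 1000, 1350]
RESULTS = {
    "Ontonotes": {
        "BERT": [63.85, 69.50, 71.33, 72.42],
        "BERT-LC": [65.69, 73.54, 74.97, 77.19],
        "Lattice LSTM": [39.71, 45.46, 54.54, 57.48],
        "FLAT": [49.01, 46.35, 49.34, 57.44],
        "LEBERT": [69.48, 69.01, 73.78, 74.84],
        "LEBERT-LC": [70.26, 69.89, 73.83, 76.01],
        "PCBERT": [74.42, 75.62, 78.33, 81.52],
        "SLNER": [77.66, 80.46, 81.53, 82.23],
    },
    "MSRA": {
        "BERT": [68.44, 72.28, 81.21, 82.28],
        "BERT-LC": [79.01, 83.13, 87.84, 89.32],
        "Lattice LSTM": [54.69, 63.61, 74.27, 76.31],
        "FLAT": [59.62, 70.20, 80.79, 64.95],
        "LEBERT": [79.11, 85.18, 87.77, 89.35],
        "LEBERT-LC": [80.92, 86.09, 88.11, 88.70],
        "PCBERT": [81.08, 85.25, 87.88, 89.72],
        "SLNER": [87.65, 89.30, 89.67, 90.08],
    },
    "Resume": {
        "BERT": [53.80, 62.64, 69.36, 70.65],
        "BERT-LC": [92.26, 94.66, 95.16, 96.41],
        "Lattice LSTM": [85.63, 89.60, 92.01, 93.13],
        "FLAT": [84.62, 90.77, 92.97, 87.79],
        "LEBERT": [89.15, 92.56, 94.02, 95.19],
        "LEBERT-LC": [91.60, 93.03, 95.40, 95.16],
        "PCBERT": [93.42, 94.01, 94.96, 95.97],
        "SLNER": [94.02, 94.86, 95.99, 96.36],
    },
}


def margins_over_best_baseline(results, ours="SLNER"):
    """Map (dataset, K) to the SLNER F1 minus the best baseline F1 at that K."""
    margins = {}
    for dataset, methods in results.items():
        baselines = [scores for name, scores in methods.items() if name != ours]
        for i, k in enumerate(K_VALUES):
            best = max(scores[i] for scores in baselines)
            margins[(dataset, k)] = round(methods[ours][i] - best, 2)
    return margins


MARGINS = margins_over_best_baseline(RESULTS)

if __name__ == "__main__":
    for key, value in sorted(MARGINS.items()):
        print(key, value)
```

Under this reading, the largest margin is 6.57 points (MSRA, K = 250) and the smallest positive margin is 0.20 points (Resume, K = 500). The one column where a baseline is ahead is Resume at K = 1350, where BERT-LC scores 96.41 against 96.36 for SLNER.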

| Dataset | K-Shot | Coarse-Grained | Fine-Grained |
|---|---|---|---|
| RISK | K = 250 | 69.67 | 63.97 |
| | K = 500 | 71.73 | 65.62 |
| | K = 1000 | 72.70 | 68.06 |
| | Full | 72.62 | 68.98 |

| K-Shot | Ontonotes | MSRA | Resume | RISK |
|---|---|---|---|---|
| K = 10 | 65.90 | 73.18 | 77.23 | 59.50 (44.61) |
| K = 20 | 70.92 | 81.19 | 81.70 | 67.57 (45.02) |
| K = 50 | 74.91 | 86.34 | 90.55 | 68.87 (57.31) |

| Dataset | Method | K = 250 | K = 500 | K = 1000 | K = 1350 |
|---|---|---|---|---|---|
| Ontonotes | SLNER | 77.66 | 80.46 | 81.53 | 82.23 |
| | -ESR w/SD | 75.63 | 80.02 | 81.04 | 81.91 |
| | -LR w/o SD | 72.90 | 75.62 | 77.98 | 78.09 |
| MSRA | SLNER | 87.65 | 89.30 | 89.67 | 90.08 |
| | -ESR w/SD | 87.99 | 89.60 | 89.42 | 89.80 |
| | -LR w/o SD | 80.72 | 84.06 | 84.87 | 84.54 |
| Resume | SLNER | 94.02 | 94.86 | 95.99 | 96.36 |
| | -ESR w/SD | 93.52 | 94.46 | 95.38 | 95.95 |
| | -LR w/o SD | 87.64 | 94.17 | 95.48 | 95.35 |
| RISK | SLNER | 63.97 | 65.62 | 68.06 | N/A |
| | -ESR w/SD | 63.27 | 65.26 | 67.29 | N/A |
| | -LR w/o SD | 55.67 | 58.94 | 61.23 | N/A |
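
To summarize the ablation table above, this sketch averages, per dataset, how much F1 each ablated variant loses relative to the full SLNER model. The scores are copied from the table and the variant labels are kept verbatim; `ABLATION`, `mean_drop` and `MEAN_DROP` are illustrative names, and averaging over K is a summary chosen here, not one reported in the source. The N/A cells for RISK are stored as `None` and skipped.

```python
# F1 scores copied from the ablation table; columns are K = 250, 500, 1000, 1350.
ABLATION = {
    "Ontonotes": {
        "SLNER": [77.66, 80.46, 81.53, 82.23],
        "-ESR w/SD": [75.63, 80.02, 81.04, 81.91],
        "-LR w/o SD": [72.90, 75.62, 77.98, 78.09],
    },
    "MSRA": {
        "SLNER": [87.65, 89.30, 89.67, 90.08],
        "-ESR w/SD": [87.99, 89.60, 89.42, 89.80],
        "-LR w/o SD": [80.72, 84.06, 84.87, 84.54],
    },
    "Resume": {
        "SLNER": [94.02, 94.86, 95.99, 96.36],
        "-ESR w/SD": [93.52, 94.46, 95.38, 95.95],
        "-LR w/o SD": [87.64, 94.17, 95.48, 95.35],
    },
    "RISK": {
        "SLNER": [63.97, 65.62, 68.06, None],
        "-ESR w/SD": [63.27, 65.26, 67.29, None],
        "-LR w/o SD": [55.67, 58.94, 61.23, None],
    },
}


def mean_drop(full, ablated):
    """Average F1 lost versus the full model, over K settings that have scores."""
    diffs = [f - a for f, a in zip(full, ablated) if f is not None and a is not None]
    return round(sum(diffs) / len(diffs), 2)


MEAN_DROP = {
    dataset: {
        variant: mean_drop(rows["SLNER"], scores)
        for variant, scores in rows.items()
        if variant != "SLNER"
    }
    for dataset, rows in ABLATION.items()
}

if __name__ == "__main__":
    for dataset, drops in MEAN_DROP.items():
        print(dataset, drops)
```

By this measure, the "-LR w/o SD" variant loses more F1 than the "-ESR w/SD" variant on all four datasets. On MSRA the "-ESR w/SD" variant is slightly ahead of the full model at K = 250 and K = 500, so its average drop there is close to zero.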

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Ren, Z.; Qin, X.; Ran, W. SLNER: Chinese Few-Shot Named Entity Recognition with Enhanced Span and Label Semantics. Appl. Sci. 2023, 13, 8609. https://doi.org/10.3390/app13158609
