Motion Capture for Sporting Events Based on Graph Convolutional Neural Networks and Single Target Pose Estimation Algorithms

Abstract

1. Introduction

- (1)

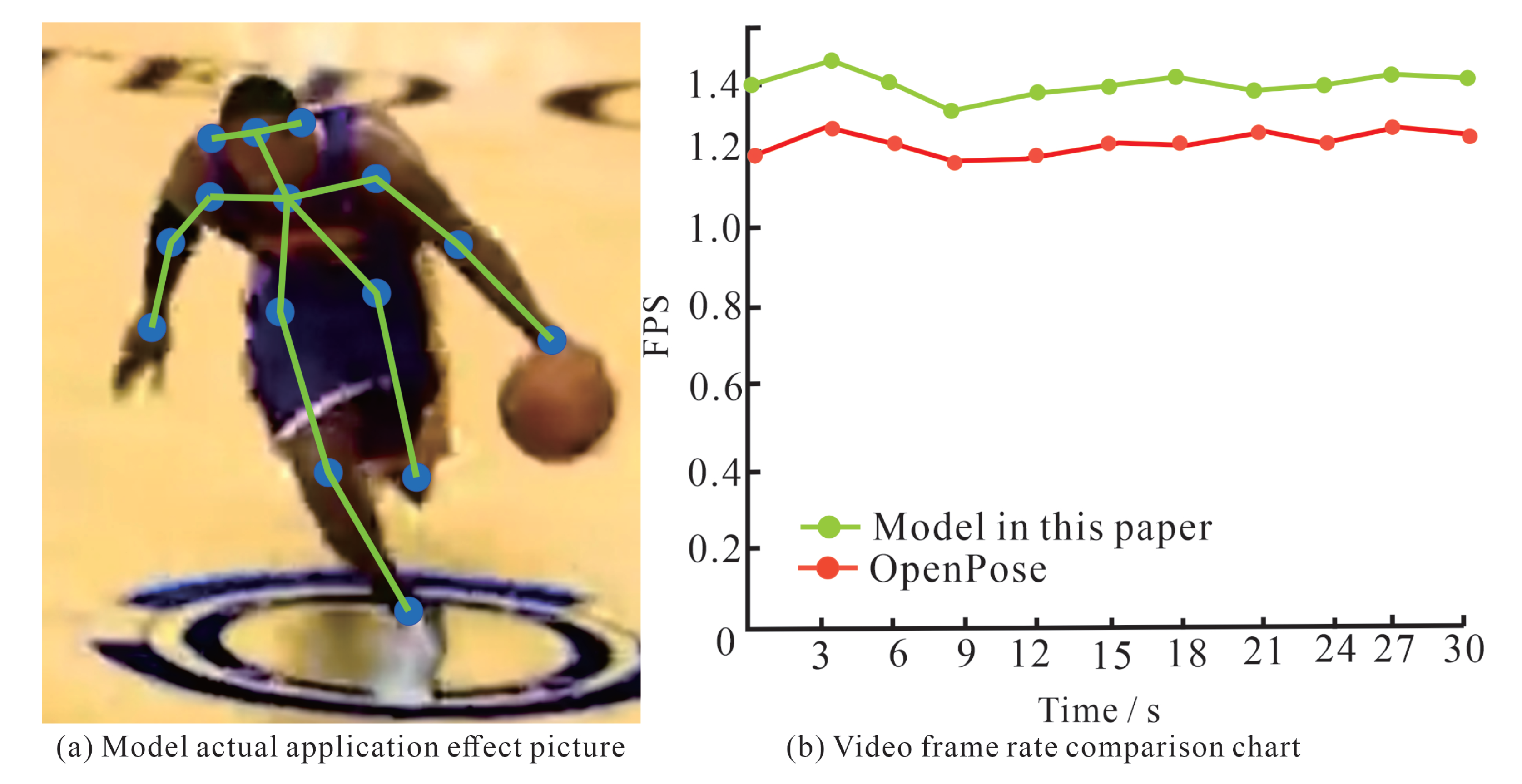

- The model combining graph neural network and HRNet can effectively improve the accuracy and efficiency of pose estimation tests. This can help accurately identify the limb movements of athletes in the game and provide an effective reference for athletes’ movement analysis.

- (2)

- Based on the end-to-end deep network for personnel detection and deconvolution operation through multiple upsampling and HR-Net, the shallow layer in the fusion neural network for human location information recognition improves single target recognition. This can circumvent some inspection errors caused by overlapping or shading and, to a certain extent, reduce the occurrence of missed and wrong inspections.

- (3)

- The graph neural network-based human pose estimation method is further optimized on the traditional graph structure-based method. This ensures adequate extraction of human pose features while reducing the time required for extraction and further improving the accuracy and efficiency of object detection and human pose estimation.

2. Related Work

3. Method

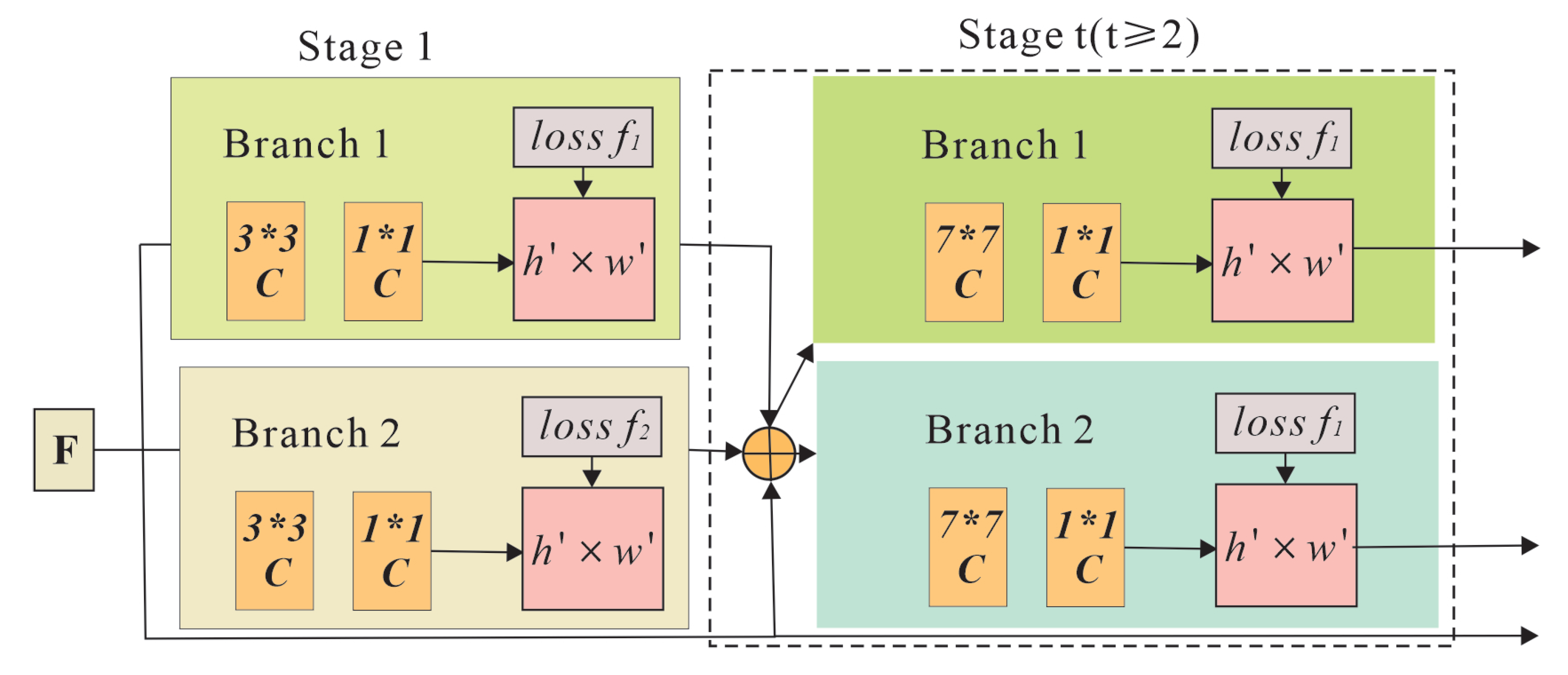

3.1. OpenPose-Based Human Pose Estimation Algorithm

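Algorithm 1: Learning Iterative Error Feedback with Fixed Path Consolidation

1: procedure FPC-LEARN

2: Initialize $y_0$

3: $E \leftarrow \{\}$

4: for $t \leftarrow 1$ to $T_{\text{steps}}$ do

5: for all training examples $(I, y)$ do

6: $\epsilon_t \leftarrow e(y, y_t)$

7:

8: $E \leftarrow E \cup \epsilon_t$

9: for $j \leftarrow 1$ to $N$ do

10: Update $\Theta_g$ and $\Theta_f$ with SGD, using loss $L$ and target corrections $E$

11:

12: end for

13: end procedure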
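Algorithm 1 accumulates target corrections step by step along a fixed path from an initial pose toward the ground truth. The following is a minimal sketch of that target-generation loop only, assuming the bounded correction used in iterative error feedback [21], where each keypoint moves straight toward its ground-truth position by at most a fixed distance per step. The function names, the 20-pixel cap, and the toy coordinates are illustrative assumptions, and the SGD update of the networks is omitted.

```python
import math

def bounded_correction(target, current, max_step=20.0):
    """Per-keypoint correction toward the target, capped at max_step in length."""
    corrections = []
    for (tx, ty), (cx, cy) in zip(target, current):
        ux, uy = tx - cx, ty - cy
        norm = math.hypot(ux, uy)
        if norm == 0.0:
            corrections.append((0.0, 0.0))  # already on target
            continue
        scale = min(max_step, norm) / norm
        corrections.append((ux * scale, uy * scale))
    return corrections

def fixed_path(target, start, steps, max_step=20.0):
    """Return the poses y_0..y_T and the target corrections eps_1..eps_T."""
    poses, targets = [list(start)], []
    for _ in range(steps):
        eps = bounded_correction(target, poses[-1], max_step)
        targets.append(eps)  # plays the role of E <- E U eps_t
        poses.append([(x + dx, y + dy)
                      for (x, y), (dx, dy) in zip(poses[-1], eps)])
    return poses, targets

# Toy example (assumed values): two keypoints, the first starts 60 px away.
y_true = [(100.0, 50.0), (30.0, 40.0)]
y_init = [(40.0, 50.0), (30.0, 40.0)]
poses, eps = fixed_path(y_true, y_init, steps=4)
```

In this toy run the first keypoint needs three capped steps to cover 60 pixels, so the fourth correction is essentially zero. The second keypoint starts on target and never moves.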

3.2. Graph Convolutional Neural Network

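Algorithm 2: ID-GNN embedding computation algorithm

Input: Graph $G(V, E)$; input node features $\{x_v, \forall v \in V\}$; number of layers $K$; trainable functions $\mathrm{MSG}_1^{(k)}(\cdot)$ for nodes with identity coloring, $\mathrm{MSG}_0^{(k)}(\cdot)$ for the rest of nodes; $\mathrm{EGO}(v, K)$ extracts the $K$-hop ego network centered at node $v$; indicator function $\mathbb{1}[s = v] = 1$ if $s = v$ else $0$

Output: Node embeddings $h_v$ for all $v \in V$

1: for $v \in V$ do

2: $\quad G_v^{(K)} \leftarrow \mathrm{EGO}(v, K)$, $h_u^{(0)} \leftarrow x_u, \forall u \in G_v^{(K)}$

3: $\quad$ for $k = 1, \ldots, K$ do

4: $\qquad$ for $u \in G_v^{(K)}$ do

5: $\qquad\quad h_u^{(k)} \leftarrow \mathrm{AGG}^{(k)}\left(\left\{\mathrm{MSG}_{\mathbb{1}[s = v]}^{(k)}\left(h_s^{(k-1)}\right), s \in \mathcal{N}(u)\right\}, h_u^{(k-1)}\right)$

6: $\quad h_v \leftarrow h_v^{(K)}$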
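The key idea in Algorithm 2 is that, within the ego network of a node $v$, messages sent by $v$ itself go through a different function than messages sent by every other node. The following is a minimal sketch under simplifying assumptions: scalar node features, fixed message functions instead of trainable ones, and an aggregator that adds the mean incoming message to the node's previous state. The names `ego_network` and `id_gnn_embeddings` are hypothetical.

```python
from collections import deque

def ego_network(adj, center, hops):
    """Nodes within `hops` edges of `center`, found by breadth-first search."""
    dist = {center: 0}
    queue = deque([center])
    while queue:
        u = queue.popleft()
        if dist[u] == hops:
            continue
        for w in adj[u]:
            if w not in dist:
                dist[w] = dist[u] + 1
                queue.append(w)
    return set(dist)

def id_gnn_embeddings(adj, features, num_layers, msg_identity, msg_other):
    """Identity-aware message passing, run once per node on its ego network."""
    embeddings = {}
    for v in adj:
        ego = ego_network(adj, v, num_layers)
        h = {u: features[u] for u in ego}
        for _ in range(num_layers):
            new_h = {}
            for u in ego:
                # The centre node v (identity colouring) uses msg_identity,
                # every other sender uses msg_other.
                msgs = [(msg_identity if s == v else msg_other)(h[s])
                        for s in adj[u] if s in ego]
                mean_msg = sum(msgs) / len(msgs) if msgs else 0.0
                new_h[u] = h[u] + mean_msg  # assumed AGG: residual + mean
            h = new_h
        embeddings[v] = h[v]
    return embeddings

# Path graph 0 - 1 - 2 with identical input features (toy data).
ADJ = {0: [1], 1: [0, 2], 2: [1]}
FEATS = {0: 1.0, 1: 1.0, 2: 1.0}

colored = id_gnn_embeddings(ADJ, FEATS, 2, lambda x: 2 * x, lambda x: x)
plain = id_gnn_embeddings(ADJ, FEATS, 2, lambda x: x, lambda x: x)
print(colored)  # {0: 4.5, 1: 5.0, 2: 4.5}
print(plain)    # {0: 4.0, 1: 4.0, 2: 4.0}
```

With identical message functions all three nodes receive the same embedding, whereas the identity-colored variant separates the middle node from the two end nodes.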

4. Experiment

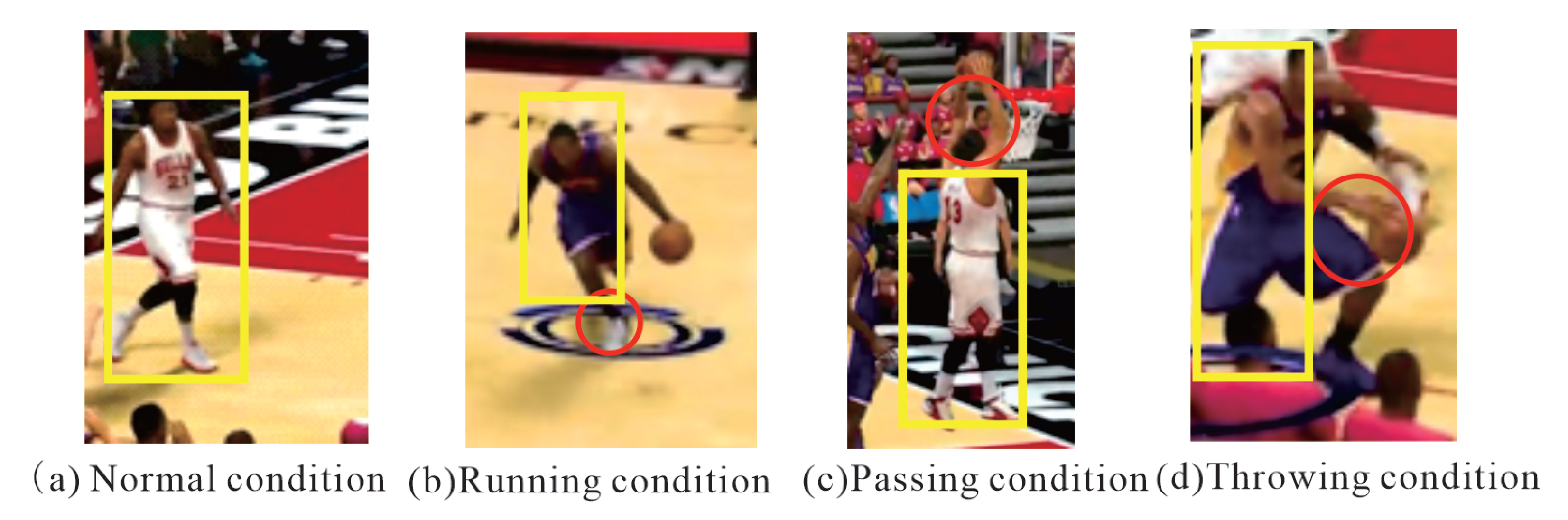

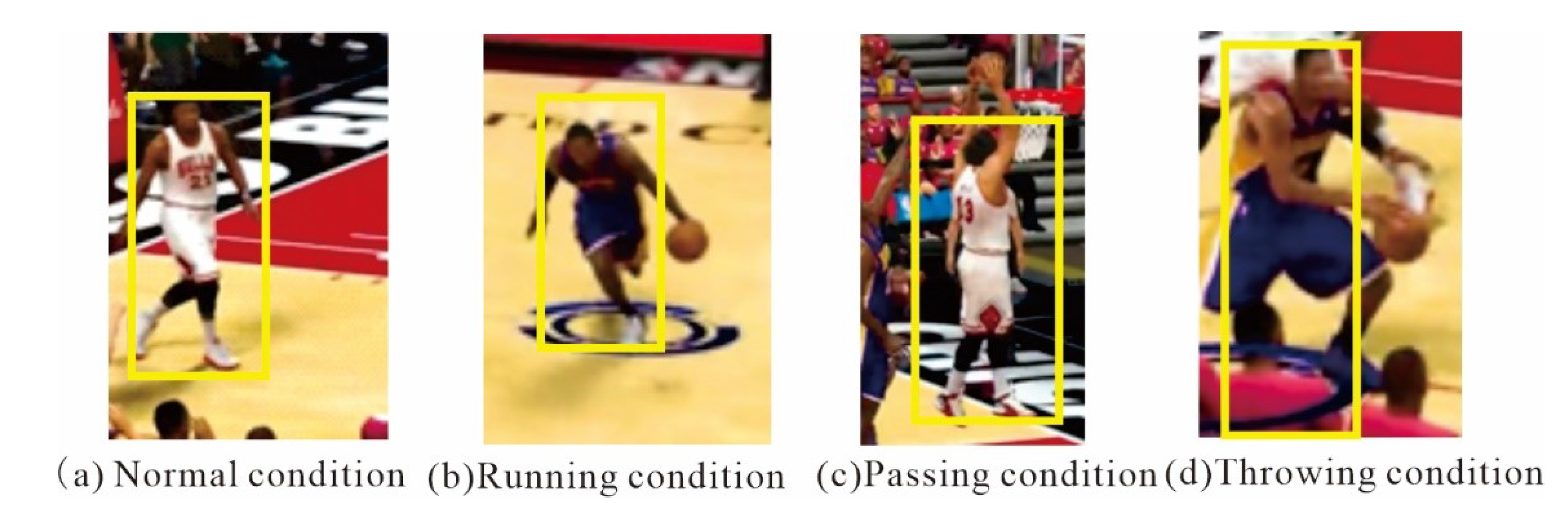

4.1. Introduction to the Dataset

4.2. Experimental Platform

4.3. Evaluation Criteria

4.4. Analysis of Experimental Results

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Gomes, J.F.S.; Leta, F.R. Applications of computer vision techniques in the agriculture and food industry: A review. Eur. Food Res. Technol. 2012, 235, 989–1000.

2. Song, Y.; Demirdjian, D.; Davis, R. Continuous body and hand gesture recognition for natural human-computer interaction. ACM Trans. Interact. Intell. Syst. (TiiS) 2012, 2, 1–28.

3. Shotton, J.; Girshick, R.; Fitzgibbon, A.; Sharp, T.; Cook, M.; Finocchio, M.; Moore, R.; Kohli, P.; Criminisi, A.; Kipman, A.; et al. Efficient human pose estimation from single depth images. IEEE Trans. Pattern Anal. Mach. Intell. 2012, 35, 2821–2840.

4. Fastovets, M.; Guillemaut, J.-Y.; Hilton, A. Athlete pose estimation by non-sequential key-frame propagation. In Proceedings of the 11th European Conference on Visual Media Production, London, UK, 13–14 November 2014; pp. 1–9.

5. Chun, S.; Ghalehjegh, N.H.; Choi, J.; Schwarz, C.; Gaspar, J.; McGehee, D.; Baek, S. NADS-Net: A nimble architecture for driver and seat belt detection via convolutional neural networks. In Proceedings of the IEEE/CVF International Conference on Computer Vision Workshops, Seoul, Republic of Korea, 27 October–2 November 2019.

6. Kipf, T.N.; Welling, M. Semi-supervised classification with graph convolutional networks. arXiv 2016, arXiv:1609.02907.

7. Yoon, Y.; Yu, J.; Jeon, M. Predictively encoded graph convolutional network for noise-robust skeleton-based action recognition. Appl. Intell. 2022, 52, 2317–2331.

8. Simonovsky, M.; Komodakis, N. Dynamic edge-conditioned filters in convolutional neural networks on graphs. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Honolulu, HI, USA, 21–26 July 2017; pp. 3693–3702.

9. Maxwell, J.A.; Mittapalli, K. Realism as a stance for mixed methods research. In SAGE Handbook of Mixed Methods in Social & Behavioral Research; Sage: Thousand Oaks, CA, USA, 2010; pp. 145–168.

10. Bouraffa, T.; Feng, Z.; Yan, L.; Xia, Y.; Xiao, B. Multi-feature fusion tracking algorithm based on peak-context learning. Image Vis. Comput. 2022, 123, 104468.

11. Gamboa, H.; Fred, A. A behavioral biometric system based on human-computer interaction. In Biometric Technology for Human Identification; SPIE: Bellingham, WA, USA, 2004; Volume 5404, pp. 381–392.

12. Wu, S.; Wang, J.; Ping, Y.; Zhang, X. Research on individual recognition and matching of whale and dolphin based on EfficientNet model. In Proceedings of the 2022 3rd International Conference on Big Data, Artificial Intelligence and Internet of Things Engineering (ICBAIE), Nanchang, China, 15–17 July 2022; pp. 635–638.

13. Zhang, X.; Ping, Y.; Li, C. Artificial intelligence-based early warning method for abnormal operation and maintenance data of medical and health equipment. In Proceedings of the IoT and Big Data Technologies for Health Care: Third EAI International Conference, IoTCare 2022, Virtual, 12–13 December 2022; Springer: Berlin/Heidelberg, Germany, 2023; pp. 309–321.

14. Farin, D.; Krabbe, S.; de With, P.H.N.; Effelsberg, W. Robust camera calibration for sport videos using court models. In Storage and Retrieval Methods and Applications for Multimedia 2004; SPIE: Bellingham, WA, USA, 2003; Volume 5307, pp. 80–91.

15. Dargan, S.; Kumar, M. A comprehensive survey on the biometric recognition systems based on physiological and behavioral modalities. Expert Syst. Appl. 2020, 143, 113114.

16. Roussaki, I.; Strimpakou, M.; Kalatzis, N.; Anagnostou, M.; Pils, C. Hybrid context modeling: A location-based scheme using ontologies. In Proceedings of the Fourth Annual IEEE International Conference on Pervasive Computing and Communications Workshops (PERCOMW’06), Pisa, Italy, 13–17 March 2006; p. 6.

17. Albert, J. Baseball data at season, play-by-play, and pitch-by-pitch levels. J. Stat. Educ. 2010, 18.

18. Doroniewicz, I.; Ledwoń, D.J.; Affanasowicz, A.; Kieszczyńska, K.; Latos, D.; Matyja, M.; Mitas, A.W.; Myśliwiec, A. Writhing movement detection in newborns on the second and third day of life using pose-based feature machine learning classification. Sensors 2020, 20, 5986.

19. Toshev, A.; Szegedy, C. DeepPose: Human pose estimation via deep neural networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 1653–1660.

20. Tompson, J.J.; Jain, A.; LeCun, Y.; Bregler, C. Joint training of a convolutional network and a graphical model for human pose estimation. Adv. Neural Inf. Process. Syst. 2014, 27.

21. Carreira, J.; Agrawal, P.; Fragkiadaki, K.; Malik, J. Human pose estimation with iterative error feedback. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 4733–4742.

22. Farrukh, W.; van der Haar, D. Computer-assisted self-training for kyudo posture rectification using computer vision methods. In Proceedings of the Fifth International Congress on Information and Communication Technology, London, UK, 17–19 December 2021; Springer: Berlin/Heidelberg, Germany, 2021; pp. 202–213.

23. Fan, X.; Zhao, S.; Zhang, X.; Meng, L. The impact of improving employee psychological empowerment and job performance based on deep learning and artificial intelligence. J. Organ. End User Comput. (JOEUC) 2023, 35, 1–14.

24. Paul, M.K.A.; Kavitha, J.; Rani, P.A.J. Key-frame extraction techniques: A review. Recent Patents Comput. Sci. 2018, 11, 3–16.

25. Cabán, C.C.T.; Yang, M.; Lai, C.; Yang, L.; Subach, F.V.; Smith, B.O.; Piatkevich, K.D.; Boyden, E.S. Tuning the sensitivity of genetically encoded fluorescent potassium indicators through structure-guided and genome mining strategies. ACS Sens. 2022, 7, 1336.

26. Li, C.; Chen, Z.; Jiao, Y. Vibration and bandgap behavior of sandwich pyramid lattice core plate with resonant rings. Materials 2023, 16, 2730.

27. Nasr, M.; Ayman, H.; Ebrahim, N.; Osama, R.; Mosaad, N.; Mounir, A. Realtime multi-person 2D pose estimation. Int. J. Adv. Netw. Appl. 2020, 11, 4501–4508.

28. Osokin, D. Real-time 2D multi-person pose estimation on CPU: Lightweight OpenPose. arXiv 2018, arXiv:1811.12004.

29. Wei, S.-E.; Ramakrishna, V.; Kanade, T.; Sheikh, Y. Convolutional pose machines. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 4724–4732.

30. Newell, A.; Yang, K.; Deng, J. Stacked hourglass networks for human pose estimation. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 11–14 October 2016; Springer: Berlin/Heidelberg, Germany, 2016; pp. 483–499.

31. Lo Presti, L.; La Cascia, M. 3D skeleton-based human action classification: A survey. Pattern Recognit. 2016, 53, 130–147.

32. Pavllo, D.; Feichtenhofer, C.; Grangier, D.; Auli, M. 3D human pose estimation in video with temporal convolutions and semi-supervised training. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 7753–7762.

33. Gärtner, E.; Pirinen, A.; Sminchisescu, C. Deep reinforcement learning for active human pose estimation. In Proceedings of the AAAI Conference on Artificial Intelligence, New York, NY, USA, 7–12 February 2020; Volume 34, pp. 10835–10844.

34. Vila, M.; Bardera, A.; Xu, Q.; Feixas, M.; Sbert, M. Tsallis entropy-based information measures for shot boundary detection and keyframe selection. Signal Image Video Process. 2013, 7, 507–520.

35. Jain, A.K. Data clustering: 50 years beyond k-means. Pattern Recognit. Lett. 2010, 31, 651–666.

36. Hara, K.; Chellappa, R. Growing regression tree forests by classification for continuous object pose estimation. Int. J. Comput. Vis. 2017, 122, 292–312.

37. Papadaki, S.; Wang, X.; Wang, Y.; Zhang, H.; Jia, S.; Liu, S.; Yang, M.; Zhang, D.; Jia, J.-M.; Köster, R.W.; et al. Dual-expression system for blue fluorescent protein optimization. Sci. Rep. 2022, 12, 1–16.

38. Ning, X.; Gong, K.; Li, W.; Zhang, L.; Bai, X.; Tian, S. Feature refinement and filter network for person re-identification. IEEE Trans. Circuits Syst. Video Technol. 2020, 31, 3391–3402.

39. Ning, X.; Nan, F.; Xu, S.; Yu, L.; Zhang, L. Multi-view frontal face image generation: A survey. Concurr. Comput. Pract. Exp. 2020, e6147.

40. Ning, X.; Duan, P.; Li, W.; Zhang, S. Real-time 3D face alignment using an encoder-decoder network with an efficient deconvolution layer. IEEE Signal Process. Lett. 2020, 27, 1944–1948.

41. He, F.; Ye, Q. A bearing fault diagnosis method based on wavelet packet transform and convolutional neural network optimized by simulated annealing algorithm. Sensors 2022, 22, 1410.

42. Chen, C.-C.; Chang, C.; Lin, C.-S.; Chen, C.-H.; Chen, I.C. Video based basketball shooting prediction and pose suggestion system. Multimed. Tools Appl. 2023, 1–20.

43. Zhang, Y.-H.; Wen, C.; Zhang, M.; Xie, K.; He, J.-B. Fast 3D visualization of massive geological data based on clustering index fusion. IEEE Access 2022, 10, 28821–28831.

44. Zhang, M.; Xie, K.; Zhang, Y.-H.; Wen, C.; He, J.-B. Fine segmentation on faces with masks based on a multistep iterative segmentation algorithm. IEEE Access 2022, 10, 75742–75753.

45. Saiki, Y.; Kabata, T.; Ojima, T.; Kajino, Y.; Inoue, D.; Ohmori, T.; Yoshitani, J.; Ueno, T.; Yamamuro, Y.; Taninaka, A.; et al. Reliability and validity of OpenPose for measuring hip-knee-ankle angle in patients with knee osteoarthritis. Sci. Rep. 2023, 13, 3297.

46. Van Hooren, B.; Pecasse, N.; Meijer, K.; Essers, J.M.N. The accuracy of markerless motion capture combined with computer vision techniques for measuring running kinematics. Scand. J. Med. Sci. Sports 2023, 33, 966–978.

47. Yi, G.; Wu, H.; Wu, X.; Li, Z.; Zhao, X. Human action recognition based on skeleton features. Comput. Sci. Inf. Syst. 2023, 20, 537–550.

48. Gao, M.; Li, J.; Zhou, D.; Zhi, Y.; Zhang, M.; Li, B. Fall detection based on OpenPose and MobileNetV2 network. IET Image Process. 2023, 17, 722–732.

49. Dewi, C.; Chen, A.P.S.; Christanto, H.J. Deep learning for highly accurate hand recognition based on YOLOv7 model. Big Data Cogn. Comput. 2023, 7, 53.

50. Chen, Y.; Wang, Z.; Peng, Y.; Zhang, Z.; Yu, G.; Sun, J. Cascaded pyramid network for multi-person pose estimation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018; pp. 7103–7112.

51. Sun, K.; Xiao, B.; Liu, D.; Wang, J. Deep high-resolution representation learning for human pose estimation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 15–20 June 2019; pp. 5693–5703.

52. Martinez, J.; Hossain, R.; Romero, J.; Little, J.J. A simple yet effective baseline for 3D human pose estimation. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 2640–2649.

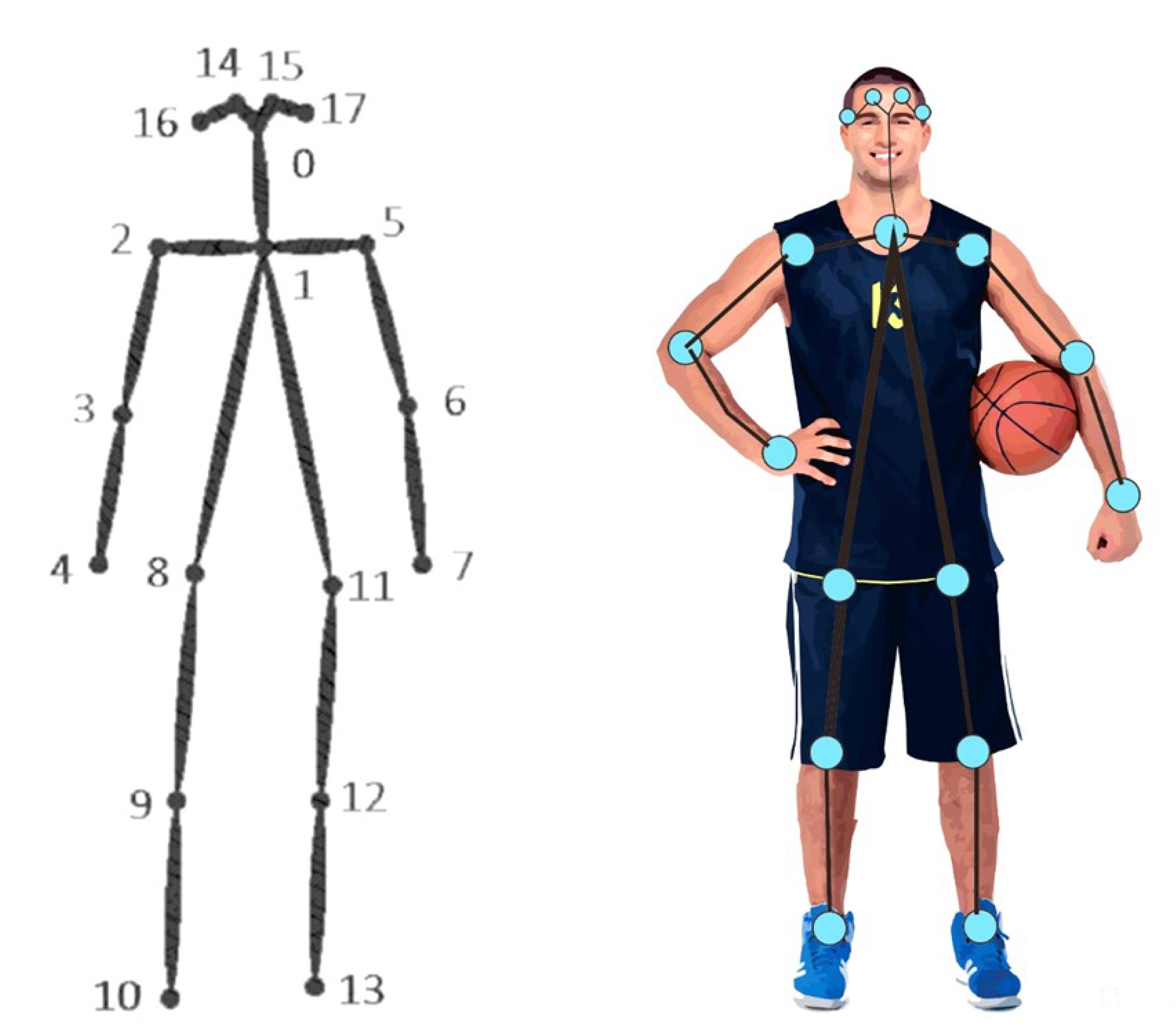

| Marking Number | Name | Marking Number | Name |
|---|---|---|---|
| 0 | Nose | 9 | Right knee |
| 1 | Neck | 10 | Right ankle |
| 2 | Right shoulder | 11 | Left hip |
| 3 | Right elbow | 12 | Left knee |
| 4 | Right wrist | 13 | Left ankle |
| 5 | Left shoulder | 14 | Right eye |
| 6 | Left elbow | 15 | Left eye |
| 7 | Left wrist | 16 | Right ear |
| 8 | Right hip | 17 | Left ear |
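To feed the 18 keypoints listed above into a graph convolutional network, the skeleton has to be expressed as an adjacency matrix. The sketch below uses the symmetric normalization $\hat{A} = \tilde{D}^{-1/2}(A + I)\tilde{D}^{-1/2}$ from graph convolutional networks [6]. The keypoint names come from the table, but the `EDGES` list is an assumption that follows a common OpenPose-style limb layout and is not taken from the paper.

```python
import math

# Index -> name, as listed in the keypoint table.
KEYPOINTS = [
    "Nose", "Neck", "Right shoulder", "Right elbow", "Right wrist",
    "Left shoulder", "Left elbow", "Left wrist", "Right hip",
    "Right knee", "Right ankle", "Left hip", "Left knee", "Left ankle",
    "Right eye", "Left eye", "Right ear", "Left ear",
]

# Assumed limb connections (hypothetical, OpenPose-style skeleton).
EDGES = [
    (0, 1), (1, 2), (2, 3), (3, 4), (1, 5), (5, 6), (6, 7),
    (1, 8), (8, 9), (9, 10), (1, 11), (11, 12), (12, 13),
    (0, 14), (0, 15), (14, 16), (15, 17),
]

def normalized_adjacency(num_nodes, edges):
    """Return D^-1/2 (A + I) D^-1/2 as a list of rows."""
    a = [[0.0] * num_nodes for _ in range(num_nodes)]
    for i in range(num_nodes):
        a[i][i] = 1.0                 # self-loops (A + I)
    for i, j in edges:
        a[i][j] = a[j][i] = 1.0       # undirected skeleton edges
    deg = [sum(row) for row in a]     # degrees including the self-loop
    return [[a[i][j] / math.sqrt(deg[i] * deg[j])
             for j in range(num_nodes)]
            for i in range(num_nodes)]

A_HAT = normalized_adjacency(len(KEYPOINTS), EDGES)
```

Under this assumed skeleton the neck is the hub with five neighbors, so its self-weight in `A_HAT` is 1/6, while unconnected pairs such as right wrist and nose stay at zero.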

| Method | Input Size | GFLOPs | AP$^{50}$ | AP$^{75}$ | mAP | AR |
|---|---|---|---|---|---|---|
| CPN [50] | 256 × 192 | 6.20 | - | - | 68.6 | - |
| CPN + OHKM [50] | 256 × 192 | 6.20 | - | - | 69.4 | - |
| HRNet-32 [51] | 256 × 192 | 7.10 | 89.5 | 80.7 | 73.4 | 78.9 |
| SimpleBaseline-50 [52] | 256 × 192 | 8.90 | 88.6 | 78.3 | 70.4 | 76.3 |
| SimpleBaseline-101 [52] | 256 × 192 | 12.40 | 89.3 | 79.3 | 71.4 | 77.1 |
| SimpleBaseline-152 [52] | 256 × 192 | 15.70 | 89.5 | 79.8 | 72.0 | 77.8 |
| Ours | 256 × 192 | 8.1 | 91.2 | 81.5 | 74.3 | 79.3 |
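The trade-off in the comparison table can be made explicit with a little arithmetic. The sketch below takes the GFLOPs figure and the last two score columns of each row, read here as mAP and AR (an assumption about the column layout), and reports the gain of the proposed model over a chosen baseline together with the relative compute cost. The helper name `gain_over` is hypothetical.

```python
# (GFLOPs, mAP, AR) per method, copied from the comparison table.
RESULTS = {
    "HRNet-32": (7.10, 73.4, 78.9),
    "SimpleBaseline-50": (8.90, 70.4, 76.3),
    "SimpleBaseline-101": (12.40, 71.4, 77.1),
    "SimpleBaseline-152": (15.70, 72.0, 77.8),
    "Ours": (8.1, 74.3, 79.3),
}

def gain_over(baseline, model="Ours"):
    """Score gains (in points) and compute ratio of `model` versus `baseline`."""
    b_flops, b_map, b_ar = RESULTS[baseline]
    m_flops, m_map, m_ar = RESULTS[model]
    return {
        "map_gain": round(m_map - b_map, 1),
        "ar_gain": round(m_ar - b_ar, 1),
        "gflops_ratio": round(m_flops / b_flops, 2),
    }

print(gain_over("HRNet-32"))            # +0.9 mAP at about 1.14x the compute
print(gain_over("SimpleBaseline-152"))  # +2.3 mAP at about 0.52x the compute
```

Read this way, the proposed model gains 0.9 mAP over HRNet-32 for roughly 14% more computation, and 2.3 mAP over SimpleBaseline-152 at about half the computation.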

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Duan, C.; Hu, B.; Liu, W.; Song, J. Motion Capture for Sporting Events Based on Graph Convolutional Neural Networks and Single Target Pose Estimation Algorithms. Appl. Sci. 2023, 13, 7611. https://doi.org/10.3390/app13137611
