Streaming-Based Anomaly Detection in ITS Messages

Abstract

1. Introduction

- $\hat{\mu}_{\mathrm{MCD}}$, the mean of the h observations with the least possible determinant of the sample covariance matrix.

- $\hat{\Sigma}_{\mathrm{MCD}}$ is the associated covariance matrix multiplied by a consistency factor c.
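As a worked formula, the two MCD estimates are commonly combined into a robust (Mahalanobis-type) distance that serves as the outlier score. The symbols $\hat{\mu}_{\mathrm{MCD}}$ and $\hat{\Sigma}_{\mathrm{MCD}}$ are notation assumed here for the location and scatter estimates described above, and $\mathrm{RD}$ is an illustrative name:

```latex
\mathrm{RD}(x) = \sqrt{\left(x - \hat{\mu}_{\mathrm{MCD}}\right)^{\top} \hat{\Sigma}_{\mathrm{MCD}}^{-1} \left(x - \hat{\mu}_{\mathrm{MCD}}\right)}
```

Observations with a large $\mathrm{RD}(x)$ lie far from the robust centre relative to the robust scatter and are candidates for anomalies.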

- If instances return s(x, n) extremely close to 1, then they are anomalies;

- If instances have an s(x, n) less than 0.5, then they are deemed normal instances;

- If all the instances return an s(x, n) of 0.5, then there is no differentiation between normal and anomalous instances.
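The interpretation above follows from the standard isolation forest score, $s(x, n) = 2^{-E(h(x))/c(n)}$, where $E(h(x))$ is the mean path length of x over the trees and $c(n)$ normalises by the average path length for n instances. The sketch below is a minimal illustration under that assumption; the function names are hypothetical and the harmonic number is approximated with the Euler-Mascheroni constant.

```python
import math

EULER_GAMMA = 0.5772156649  # Euler-Mascheroni constant


def average_path_length(n: int) -> float:
    """c(n): average path length of an unsuccessful binary-tree search over n points."""
    if n < 2:
        raise ValueError("n must be at least 2")
    if n == 2:
        return 1.0
    harmonic = math.log(n - 1) + EULER_GAMMA  # approximation of H(n - 1)
    return 2.0 * harmonic - 2.0 * (n - 1) / n


def anomaly_score(mean_path_length: float, n: int) -> float:
    """s(x, n) = 2 ** (-E(h(x)) / c(n)); short paths push the score towards 1."""
    return 2.0 ** (-mean_path_length / average_path_length(n))


if __name__ == "__main__":
    n = 256
    for path in (1.0, average_path_length(n), 20.0):
        print(f"E(h(x)) = {path:.2f} -> s = {anomaly_score(path, n):.3f}")
```

A mean path length equal to $c(n)$ gives a score of exactly 0.5, which is why a population scoring uniformly around 0.5 shows no separation between normal and anomalous instances.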

- Contextual attributes: These are utilised for establishing the context (or neighbourhood) of a particular instance. Contextual features of a location in spatial datasets include its longitude and latitude. In time series data, time serves as a contextual characteristic that determines the position of an instance within the entirety of the sequence.

- Behavioural/indicator attributes: These attributes describe the behaviour that is actually tested for anomalies. For example, in a spatial dataset describing mean precipitation across a country, the amount of rainfall observed at a given site is a behavioural attribute.

- (a) What is the significance of data associations in anomaly detection, especially in a constrained road network?

- (b) How can a balance between variance and bias be achieved in ensemble learning?

- (c) How can we improve the detection rate of anomalies in CAM data streams?

- (d) Can enhancing the LSCP algorithm improve the identification of anomalies in CAM data streams?

- (e) How can the adapted technique be applied to real-world problems?

- We define and investigate the issue of completely unsupervised anomaly ensemble construction;

- We propose a robust ensemble-based methodology for the detection of anomalies from data streams in the C-ITS context;

- We evaluate the proposed technique using a dataset of CAM messages generated in the C-ITS environment and compare its performance with state-of-the-art techniques in the streaming context.

2. Materials and Methods

2.1. Data Generation, Pre-Processing and Transformation

2.1.1. Data Generation

2.1.2. Data Pre-Processing and Transformation

2.2. Problem Statement

- is the vehicle identifier;

- t is the timestamp of the message;

- x is the longitude of the vehicle at time t;

- y is the latitude of the vehicle at time t;

- s is the speed of the vehicle at time t in metres/second;

- h is the heading of the vehicle at time t in degrees.

- is a trajectory: = .

- , .

- All consecutive messages in are also consecutive in , i.e., there does not exist a message of situated between messages of that does not belong to :

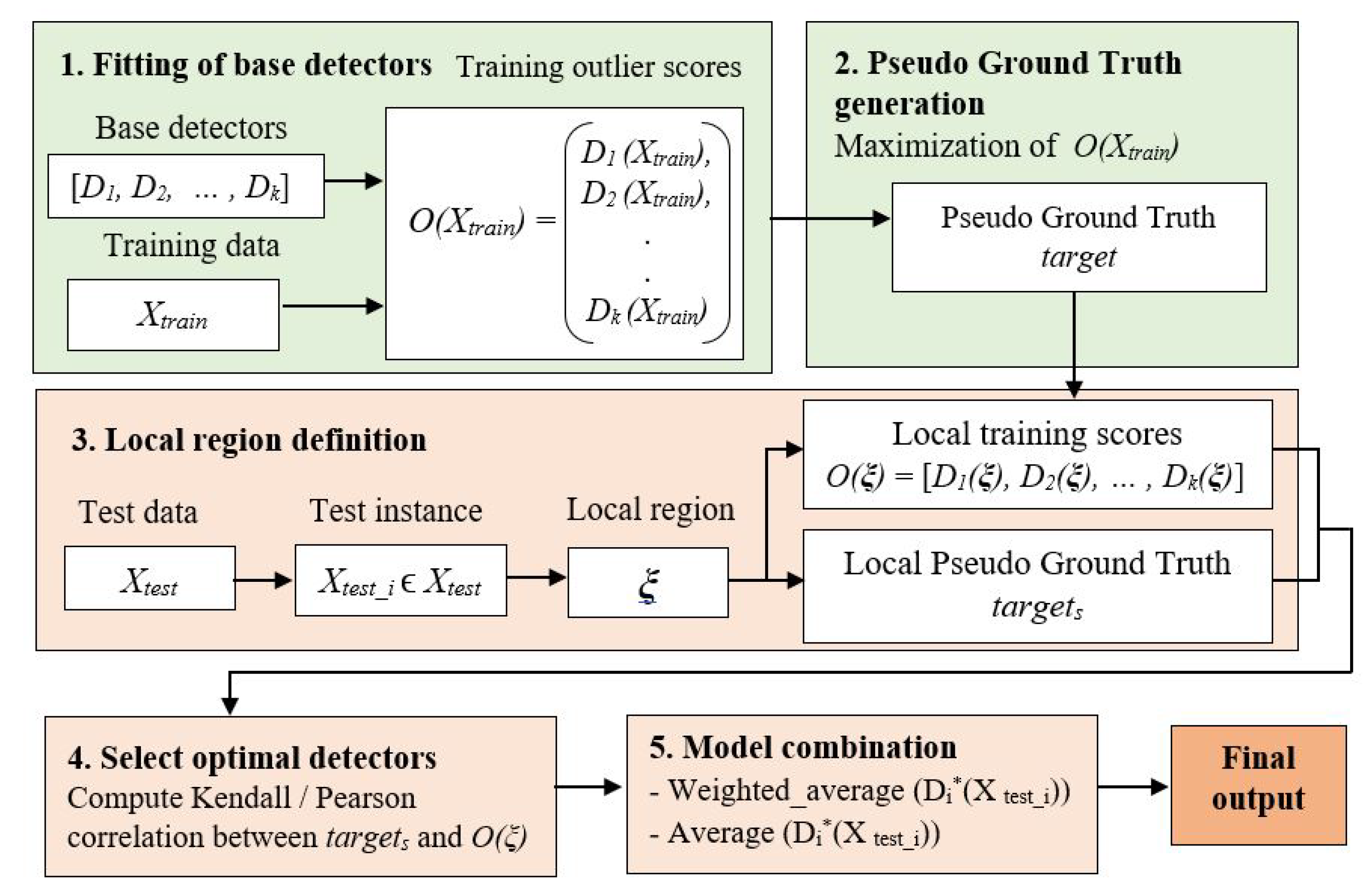
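The message fields listed above can be sketched as a small data structure. This is a minimal illustration, not the authors' implementation: the class name `CamMessage`, the field name `vehicle_id`, and both helper functions are hypothetical, and the contiguity check is one reading of the requirement that consecutive messages of a sub-trajectory are also consecutive in the full trajectory.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Sequence


@dataclass(frozen=True)
class CamMessage:
    vehicle_id: str  # vehicle identifier (field name is an assumption)
    t: float         # timestamp of the message
    x: float         # longitude at time t
    y: float         # latitude at time t
    s: float         # speed at time t, in metres/second
    h: float         # heading at time t, in degrees


def build_trajectories(messages: Iterable[CamMessage]) -> Dict[str, List[CamMessage]]:
    """Group messages per vehicle and order each group by timestamp."""
    grouped: Dict[str, List[CamMessage]] = defaultdict(list)
    for message in messages:
        grouped[message.vehicle_id].append(message)
    return {vid: sorted(msgs, key=lambda m: m.t) for vid, msgs in grouped.items()}


def is_contiguous_subtrajectory(sub: Sequence[CamMessage],
                                trajectory: Sequence[CamMessage]) -> bool:
    """True if `sub` appears in `trajectory` as an unbroken run of messages."""
    k = len(sub)
    if k == 0:
        return True
    return any(list(trajectory[i:i + k]) == list(sub)
               for i in range(len(trajectory) - k + 1))
```

For example, with a trajectory of three messages, the first two form a contiguous sub-trajectory, while the first and third alone do not because the second message lies between them.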

2.3. Proposed Enhanced LSCP Algorithm (ELSCP)

Algorithm 1: Enhanced LSCP.

- Using the ball tree nearest neighbour algorithm with the Haversine distance metric, the local region is defined to be the set of the nearest training points in randomly sampled feature subspaces that occur more frequently using a defined threshold over multiple iterations.

- Using the local region, a local pseudo ground truth is defined, and the Pearson correlation is calculated between each base detector’s training outlier scores and the pseudo ground truth.

- Weights are computed for each detector by ranking the Pearson correlation scores such that the detector with the best score obtains the highest weight.

- Using the correlation scores, the best detector is selected. The final score for the test instance is computed using a weighted average of the best detector’s local region scores.
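The selection steps above can be sketched in plain Python. This is a simplified illustration under stated assumptions, not the authors' ELSCP code: a brute-force haversine search stands in for the ball tree, the random feature-subspace sampling and frequency threshold are omitted, the pseudo ground truth is taken as the average of the detectors' scores over the local region, and the final weighted scoring step is left out. All function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

EARTH_RADIUS_M = 6_371_000.0
Point = Tuple[float, float]  # (latitude, longitude) in degrees


def haversine_m(p: Point, q: Point) -> float:
    """Great-circle distance in metres between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(math.radians, (p[0], p[1], q[0], q[1]))
    a = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def local_region(train: Sequence[Point], test: Point, k: int) -> List[int]:
    """Indices of the k training points nearest to the test point (brute force)."""
    order = sorted(range(len(train)), key=lambda i: haversine_m(train[i], test))
    return order[:k]


def pearson(u: Sequence[float], v: Sequence[float]) -> float:
    """Pearson correlation; returns 0.0 when either input is constant."""
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    cov = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    su = math.sqrt(sum((a - mu) ** 2 for a in u))
    sv = math.sqrt(sum((b - mv) ** 2 for b in v))
    return cov / (su * sv) if su and sv else 0.0


def rank_weights(correlations: Sequence[float]) -> List[float]:
    """Normalised rank weights: the highest correlation gets the largest weight."""
    order = sorted(range(len(correlations)), key=lambda d: correlations[d])
    total = len(correlations) * (len(correlations) + 1) / 2
    weights = [0.0] * len(correlations)
    for rank, detector in enumerate(order, start=1):
        weights[detector] = rank / total
    return weights


def select_detector(train: Sequence[Point],
                    train_scores: Sequence[Sequence[float]],
                    test: Point, k: int) -> Tuple[int, List[float], List[int]]:
    """Return (best detector index, detector weights, local region indices)."""
    region = local_region(train, test, k)
    local = [[scores[i] for i in region] for scores in train_scores]
    # Assumption: pseudo ground truth = mean detector score per local point.
    pseudo_truth = [sum(column) / len(column) for column in zip(*local)]
    correlations = [pearson(scores, pseudo_truth) for scores in local]
    best = max(range(len(correlations)), key=lambda d: correlations[d])
    return best, rank_weights(correlations), region
```

Here `train_scores[d][i]` is the outlier score that base detector `d` assigned to training point `i`. In practice a spatial index would replace the sorted brute-force search, since that search is linear in the training set size for every test instance.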

2.4. Performance Indicators

- True positive (TP): An anomaly that is correctly identified as an anomaly.

- False positive (FP): A normal instance that is incorrectly identified as an anomaly.

- True negative (TN): A normal instance that is correctly identified as normal.

- False negative (FN): An anomaly that is incorrectly identified as normal.

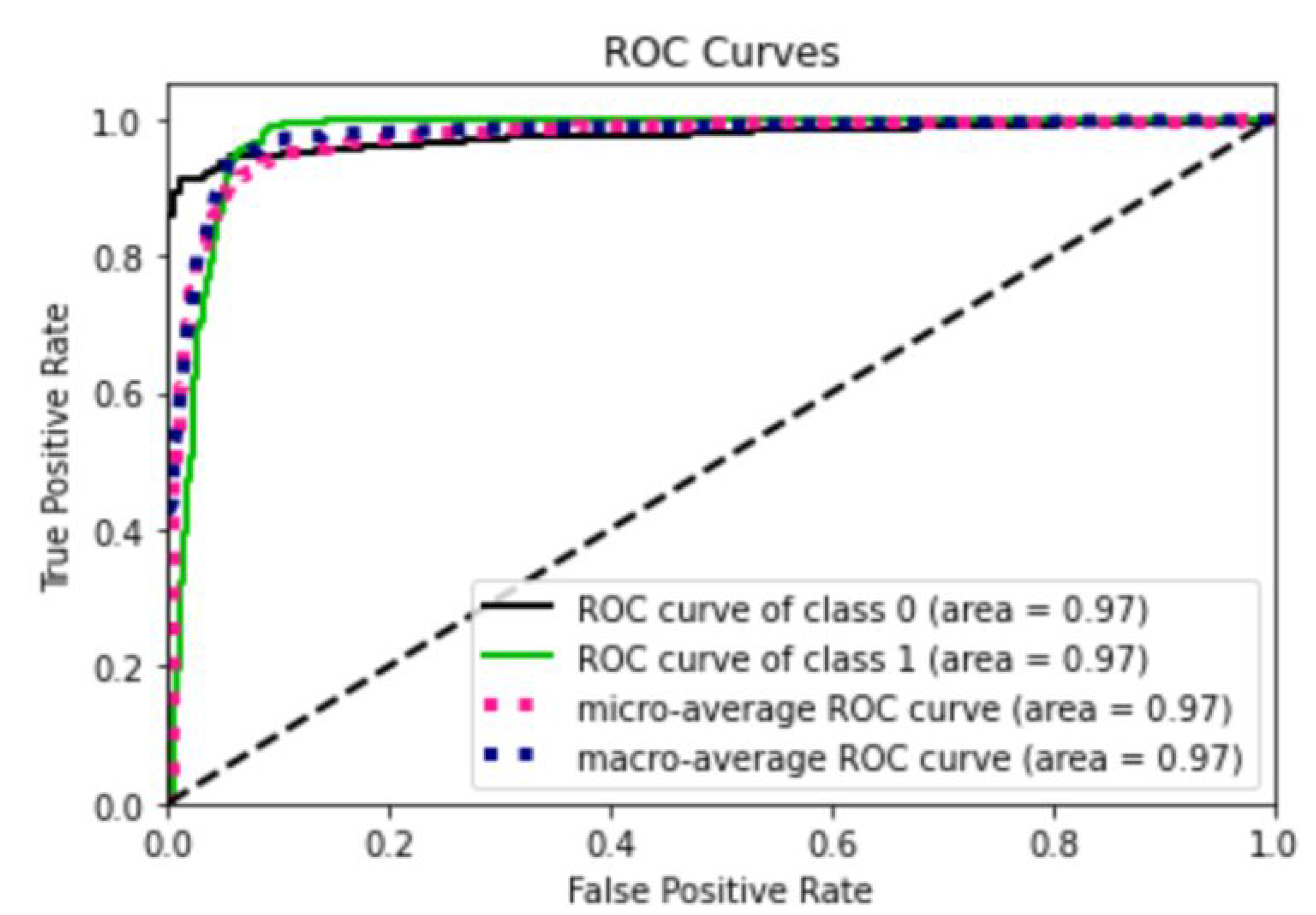
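The four counts above feed the usual rate definitions: TPR (recall) = TP / (TP + FN), FPR = FP / (FP + TN), and precision = TP / (TP + FP). A minimal sketch follows; the function name and the choice to return 0.0 for an empty denominator are assumptions made for illustration.

```python
from typing import Dict


def detection_rates(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Compute TPR/recall, FPR and precision from confusion-matrix counts."""

    def ratio(numerator: int, denominator: int) -> float:
        # Assumption: report 0.0 when the denominator is empty.
        return numerator / denominator if denominator else 0.0

    recall = ratio(tp, tp + fn)
    return {
        "tpr": recall,                 # true positive rate (same as recall)
        "recall": recall,
        "fpr": ratio(fp, fp + tn),     # false positive rate
        "precision": ratio(tp, tp + fp),
    }


if __name__ == "__main__":
    print(detection_rates(tp=8, fp=2, tn=88, fn=2))
```

The ROC curve plots TPR against FPR over all score thresholds, while the precision-recall curve plots precision against recall; the latter is more sensitive to class imbalance because it ignores true negatives.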

3. Results

4. Discussion

4.1. Use Cases

4.2. Limitations

- Finding the nearest neighbours of each test instance is time-consuming;

- Performance in a high-dimensional space can degrade when many of the features or attributes are irrelevant.

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| AUC-ROC | Area under the curve of the receiver operating characteristic |
| AUCPR | Area under the curve of precision–recall |
| CAM | Cooperative awareness message |
| C-ITS | Cooperative intelligent transport systems |
| ELSCP | Enhanced locally selective combination in parallel outlier ensembles |
| ETSI TS | European Telecommunications Standards Institute Technical Specifications |
| FDM | Filter–discovery–match |
| FP | False positive |
| FPR | False positive rate |
| FN | False negative |
| GPS | Global positioning system |
| HBOS | Histogram-based outlier score |
| IForest | Isolation forest |
| ITS | Intelligent transportation systems |
| IoT | Internet of Things |
| KD tree | K-dimensional tree |
| kNN | k-nearest neighbours detector |
| LOF | Local outlier factor |
| LoTAD | Long-term traffic anomaly detection |
| LSCP | Locally selective combination in parallel outlier ensembles |
| MAD | Median absolute deviation |
| MCD | Minimum covariance determinant |
| MTTD | Mean time-to-detect |
| OMNET | Objective modular network testbed |
| P | Precision |
| PKI | Public key infrastructure |
| PyOD | Python outlier detection |
| PySAD | Python streaming anomaly detection |
| R | Recall |
| ROC | Receiver operating characteristic |
| RRCF | Robust random cut forest |
| RSU | Roadside unit |
| STORM | Stream outlier miner |
| SUMO | Simulation of urban mobility |
| TP | True positive |
| TPR | True positive rate |
| TN | True negative |
| VANET | Vehicular ad hoc network |
| V2I | Vehicle-to-infrastructure |
| V2V | Vehicle-to-vehicle |
| V2X | Vehicle-to-everything |

References

- Fahim, M.; Sillitti, A. Anomaly detection, analysis and prediction techniques in iot environment: A systematic literature review. IEEE Access 2019, 7, 81664–81681. [Google Scholar] [CrossRef]

- Lu, M.; Türetken, O.; Adali, O.E.; Castells, J.; Blokpoel, R.; Grefen, P. C-ITS (cooperative intelligent transport systems) deployment in Europe: Challenges and key findings. In Proceedings of the 25th ITS World Congress, Copenhagen, Denmark, 17–21 September 2018; p. EU-TP1076. [Google Scholar]

- Chandola, V.; Banerjee, A.; Kumar, V. Anomaly detection: A survey. ACM Comput. Surv. (CSUR) 2009, 41, 1–58. [Google Scholar] [CrossRef]

- Aggarwal, C.C. Outlier analysis. In Data Mining: The Textbook; Springer: Cham, Switzerland, 2015; Volume 1, Chapter 8; pp. 237–263. [Google Scholar]

- Kamran, S.; Haas, O. A multilevel traffic incidents detection approach: Identifying traffic patterns and vehicle behaviours using real-time gps data. In Proceedings of the 2007 IEEE Intelligent Vehicles Symposium, Istanbul, Turkey, 13–15 June 2007; pp. 912–917. [Google Scholar]

- Zhang, M.; Li, T.; Yu, Y.; Li, Y.; Hui, P.; Zheng, Y. Urban Anomaly Analytics: Description, Detection and Prediction. IEEE Trans. Big Data 2020, 8, 809–826. [Google Scholar] [CrossRef]

- Kong, X.; Song, X.; Xia, F.; Guo, H.; Wang, J.; Tolba, A. LoTAD: Long-term traffic anomaly detection based on crowdsourced bus trajectory data. World Wide Web 2018, 21, 825–847. [Google Scholar] [CrossRef]

- Fouchal, H.; Bourdy, E.; Wilhelm, G.; Ayaida, M. A validation tool for cooperative intelligent transport systems. J. Comput. Sci. 2017, 22, 283–288. [Google Scholar] [CrossRef]

- Han, X.; Grubenmann, T.; Cheng, R.; Wong, S.C.; Li, X.; Sun, W. Traffic incident detection: A trajectory-based approach. In Proceedings of the 2020 IEEE 36th International Conference on Data Engineering (ICDE), Dallas, TX, USA, 20–24 April 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 1866–1869. [Google Scholar]

- Toshniwal, A.; Mahesh, K.; Jayashree, R. Overview of Anomaly Detection techniques in Machine Learning. In Proceedings of the 2020 Fourth International Conference on I-SMAC (IoT in Social, Mobile, Analytics and Cloud) (I-SMAC), Palladam, India, 7–9 October 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 808–815. [Google Scholar]

- Goldstein, M.; Dengel, A. Histogram-based outlier score (hbos): A fast unsupervised anomaly detection algorithm. KI-2012 Poster Demo Track 2012, 1, 59–63. [Google Scholar]

- Kind, A.; Stoecklin, M.P.; Dimitropoulos, X. Histogram-based traffic anomaly detection. IEEE Trans. Netw. Serv. Manag. 2009, 6, 110–121. [Google Scholar] [CrossRef]

- Rousseeuw, P.J.; Driessen, K.V. A fast algorithm for the minimum covariance determinant estimator. Technometrics 1999, 41, 212–223. [Google Scholar] [CrossRef]

- Hubert, M.; Debruyne, M.; Rousseeuw, P.J. Minimum covariance determinant and extensions. Wiley Interdiscip. Rev. Comput. Stat. 2018, 10, e1421. [Google Scholar] [CrossRef]

- Rousseeuw, P.J.; Hubert, M. Anomaly detection by robust statistics. Wiley Interdiscip. Rev. Data Min. Knowl. Discov. 2018, 8, e1236. [Google Scholar] [CrossRef]

- Liu, F.T.; Ting, K.M.; Zhou, Z.H. Isolation forest. In Proceedings of the 2008 Eighth IEEE International Conference on Data Mining, Pisa, Italy, 15–19 December 2008; IEEE: Piscataway, NJ, USA, 2008; pp. 413–422. [Google Scholar]

- Chen, W.R.; Yun, Y.H.; Wen, M.; Lu, H.M.; Zhang, Z.M.; Liang, Y.Z. Representative subset selection and outlier detection via isolation forest. Anal. Methods 2016, 8, 7225–7231. [Google Scholar] [CrossRef]

- Guha, S.; Mishra, N.; Roy, G.; Schrijvers, O. Robust random cut forest based anomaly detection on streams. In Proceedings of the International Conference on Machine Learning, New York, NY, USA, 19–24 June 2016; pp. 2712–2721. [Google Scholar]

- Zhao, Y.; Nasrullah, Z.; Hryniewicki, M.K.; Li, Z. LSCP: Locally selective combination in parallel outlier ensembles. In Proceedings of the 2019 SIAM International Conference on Data Mining, Calgary, AB, Canada, 2–4 May 2019; pp. 585–593. [Google Scholar]

- Breunig, M.M.; Kriegel, H.P.; Ng, R.T.; Sander, J. LOF: Identifying density-based local outliers. In Proceedings of the 2000 ACM SIGMOD International Conference on Management of Data, Dallas, TX, USA, 16–18 May 2000; pp. 93–104. [Google Scholar]

- Angiulli, F.; Fassetti, F. Detecting distance-based outliers in streams of data. In Proceedings of the Sixteenth ACM Conference on Conference on Information and Knowledge Management, Lisbon, Portugal, 6–10 November 2007; pp. 811–820. [Google Scholar]

- Tan, S.C.; Ting, K.M.; Liu, T.F. Fast anomaly detection for streaming data. In Proceedings of the Twenty-Second International Joint Conference on Artificial Intelligence, Barcelona, Spain, 16–22 July 2011; pp. 1511–1516. [Google Scholar]

- Gaber, M.M. Advances in data stream mining. Wiley Interdiscip. Rev. Data Min. Knowl. Discov. 2012, 2, 79–85. [Google Scholar] [CrossRef]

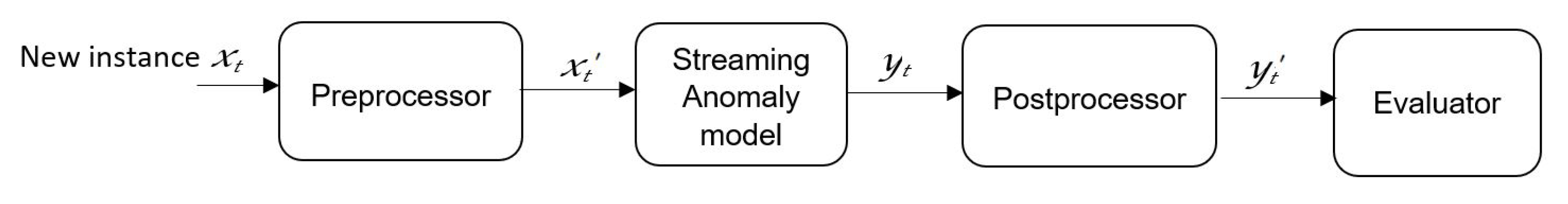

- Calikus, E.; Nowaczyk, S.; Sant’Anna, A.; Dikmen, O. No free lunch but a cheaper supper: A general framework for streaming anomaly detection. Expert Syst. Appl. 2020, 155, 113453. [Google Scholar] [CrossRef]

- Britto, A.S., Jr.; Sabourin, R.; Oliveira, L.E. Dynamic selection of classifiers—A comprehensive review. Pattern Recognit. 2014, 47, 3665–3680. [Google Scholar] [CrossRef]

- Polikar, R. Ensemble based systems in decision making. IEEE Circuits Syst. Mag. 2006, 6, 21–45. [Google Scholar] [CrossRef]

- Alghushairy, O.; Alsini, R.; Soule, T.; Ma, X. A Review of Local Outlier Factor Algorithms for Outlier Detection in Big Data Streams. Big Data Cogn. Comput. 2021, 5, 1. [Google Scholar] [CrossRef]

- Ho, T.K.; Hull, J.J.; Srihari, S.N. Decision combination in multiple classifier systems. IEEE Trans. Pattern Anal. Mach. Intell. 1994, 16, 66–75. [Google Scholar]

- Woods, K.; Kegelmeyer, W.P.; Bowyer, K. Combination of multiple classifiers using local accuracy estimates. IEEE Trans. Pattern Anal. Mach. Intell. 1997, 19, 405–410. [Google Scholar] [CrossRef]

- Rayana, S.; Akoglu, L. An ensemble approach for event detection and characterization in dynamic graphs. In Proceedings of the ACM SIGKDD ODD Workshop, New York, NY, USA, 24–27 August 2014. [Google Scholar]

- Zimek, A.; Campello, R.J.; Sander, J. Ensembles for unsupervised outlier detection: Challenges and research questions a position paper. ACM Sigkdd Explor. Newsl. 2014, 15, 11–22. [Google Scholar] [CrossRef]

- Fouchal, H.; Habbas, Z. Distributed backtracking algorithm based on tree decomposition over wireless sensor networks. Concurr. Comput. Pract. Exp. 2013, 25, 728–742. [Google Scholar] [CrossRef]

- Fouchal, H.; Francillette, Y.; Hunel, P.; Vidot, N. A distributed power management optimisation in wireless sensors networks. In Proceedings of the 34th Annual IEEE Conference on Local Computer Networks, LCN, Zurich, Switzerland, 20–23 October 2009; IEEE Computer Society: Piscataway, NJ, USA, 2009; pp. 763–769. [Google Scholar] [CrossRef]

- Salva, S.; Petitjean, E.; Fouchal, H. A simple approach to testing timed systems. In Proceedings of the FATES01 (Formal Approaches for Testing Software), a Satellite Workshop of CONCUR, Aalborg, Denmark, 25 August 2001. [Google Scholar]

- Varga, A. The OMNeT++ discrete event simulation system. In Proceedings of the European Simulation Multiconference, Prague, Czech Republic, 6–9 June 2001; pp. 319–324. [Google Scholar]

- Krajzewicz, D.; Hertkorn, G.; Rössel, C.; Wagner, P. SUMO (Simulation of Urban MObility)—An open-source traffic simulation. In Proceedings of the 4th Middle East Symposium on Simulation and Modelling, Berlin-Adlershof, Germany, 1–30 September 2002; pp. 183–187. [Google Scholar]

- Riebl, R.; Obermaier, C.; Günther, H.J. Artery: Large scale simulation environment for its applications. In Recent Advances in Network Simulation; Springer: Cham, Switzerland, 2019; pp. 365–406. [Google Scholar]

- 103 097 V1. 4.1; Intelligent Transport Systems (ITS); Security; Security Header and Certificate Formats. ETSI: Valbonne, France, 2020.

- Zhang, Z.; He, Q.; Tong, H.; Gou, J.; Li, X. Spatial-temporal traffic flow pattern identification and anomaly detection with dictionary-based compression theory in a large-scale urban network. Transp. Res. Part C Emerg. Technol. 2016, 71, 284–302. [Google Scholar] [CrossRef]

- Leblanc, B.; Fouchal, H.; De Runz, C. Obstacle Detection based on Cooperative-Intelligent Transport System Data. In Proceedings of the 2020 IEEE Symposium on Computers and Communications (ISCC), Rennes, France, 7–10 July 2020; IEEE: Piscataway, NJ, USA, 2020; pp. 1–6. [Google Scholar]

- Moso, J.C.; Boutahala, R.; Leblanc, B.; Fouchal, H.; de Runz, C.; Cormier, S.; Wandeto, J. Anomaly Detection on Roads Using C-ITS Messages. In Proceedings of the International Workshop on Communication Technologies for Vehicles, Bordeaux, France, 16–17 November 2020; Springer: Cham, Switzerland, 2020; pp. 25–38. [Google Scholar]

- Aggarwal, C.C.; Sathe, S. Theoretical foundations and algorithms for outlier ensembles. ACM Sigkdd Explor. Newsl. 2015, 17, 24–47. [Google Scholar] [CrossRef]

- Dolatshah, M.; Hadian, A.; Minaei-Bidgoli, B. Ball*-tree: Efficient spatial indexing for constrained nearest-neighbor search in metric spaces. arXiv 2015, arXiv:1511.00628. [Google Scholar]

- Witten, I.H.; Frank, E. Data Mining: Practical Machine Learning Tools and Techniques; Morgan Kaufmann: San Francisco, CA, USA, 2005; Chapter 4. [Google Scholar]

- Kumar, N.; Zhang, L.; Nayar, S. What is a good nearest neighbors algorithm for finding similar patches in images? In Proceedings of the European Conference on Computer Vision, Marseille, France, 12–18 October 2008; Springer: Berlin/Heidelberg, Germany, 2008; pp. 364–378. [Google Scholar]

- Zhao, Y.; Hryniewicki, M.K. DCSO: Dynamic combination of detector scores for outlier ensembles. arXiv 2019, arXiv:1911.10418. [Google Scholar]

- Hanley, J.A.; McNeil, B.J. The meaning and use of the area under a receiver operating characteristic (ROC) curve. Radiology 1982, 143, 29–36. [Google Scholar] [CrossRef] [PubMed]

- Boyd, K.; Eng, K.H.; Page, C.D. Area under the precision-recall curve: Point estimates and confidence intervals. In Proceedings of the Joint European Conference on Machine Learning and Knowledge Discovery in Databases, Prague, Czech Republic, 23–27 September 2013; Springer: Berlin/Heidelberg, Germany, 2013; pp. 451–466. [Google Scholar]

- Campos, G.O.; Zimek, A.; Sander, J.; Campello, R.J.; Micenková, B.; Schubert, E.; Assent, I.; Houle, M.E. On the evaluation of unsupervised outlier detection: Measures, datasets, and an empirical study. Data Min. Knowl. Discov. 2016, 30, 891–927. [Google Scholar] [CrossRef]

- Fawcett, T. An introduction to ROC analysis. Pattern Recognit. Lett. 2006, 27, 861–874. [Google Scholar] [CrossRef]

- Saito, T.; Rehmsmeier, M. The precision-recall plot is more informative than the ROC plot when evaluating binary classifiers on imbalanced datasets. PLoS ONE 2015, 10, e0118432. [Google Scholar] [CrossRef]

- Yilmaz, S.F.; Kozat, S.S. PySAD: A Streaming Anomaly Detection Framework in Python. arXiv 2020, arXiv:2009.02572. [Google Scholar]

- Zhao, Y.; Nasrullah, Z.; Li, Z. PyOD: A Python Toolbox for Scalable Outlier Detection. J. Mach. Learn. Res. 2019, 20, 1–7. [Google Scholar]

- Mandrekar, J.N. Receiver operating characteristic curve in diagnostic test assessment. J. Thorac. Oncol. 2010, 5, 1315–1316. [Google Scholar] [CrossRef]

- Xia, X.; Meng, Z.; Han, X.; Li, H.; Tsukiji, T.; Xu, R.; Zheng, Z.; Ma, J. An automated driving systems data acquisition and analytics platform. Transp. Res. Part Emerg. Technol. 2023, 151, 104120. [Google Scholar] [CrossRef]

- Hajebi, K.; Abbasi-Yadkori, Y.; Shahbazi, H.; Zhang, H. Fast approximate nearest-neighbor search with k-nearest neighbor graph. In Proceedings of the Twenty-Second International Joint Conference on Artificial Intelligence, Barcelona, Spain, 16–22 July 2011. [Google Scholar]

- Cruz, R.M.; Sabourin, R.; Cavalcanti, G.D. Dynamic classifier selection: Recent advances and perspectives. Inf. Fusion 2018, 41, 195–216. [Google Scholar] [CrossRef]

- Rayana, S.; Akoglu, L. Less is more: Building selective anomaly ensembles. ACM Trans. Knowl. Discov. Data (TKDD) 2016, 10, 1–33. [Google Scholar] [CrossRef]

| Parameter | Value |
|---|---|
| Network simulator | OMNeT++ |
| Road traffic simulator | SUMO |
| Framework | Artery (Vanetza, INET, Veins) |
| Number of nodes | 100 |
| Simulation time | 7200 s |
| Road map of Reims | 10,000 m × 10,000 m |
| CAM message interval | 0.1 s |
| Carrier frequency | 5.9 GHz |
| Number of channels | 180 |
| Transmitter power | 20 mW |

| Parameter | Value |
|---|---|
| Window sizes | 50, 100, 200, 300, 400, 500, 600 |
| Sliding window size | 50 |
| Initial window (training set) | 1000 |
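The windowing parameters above can be illustrated with a short sketch. It assumes that the initial window is the training prefix of the stream and that the "sliding window size" is the step by which each evaluation window advances; both readings, and the function name, are assumptions rather than details confirmed by the table.

```python
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_stream(stream: Sequence[T], initial: int = 1000,
                 window: int = 200, step: int = 50) -> Tuple[List[T], List[List[T]]]:
    """Return the training prefix and the overlapping evaluation windows after it."""
    training = list(stream[:initial])
    windows: List[List[T]] = []
    start = initial
    # Only full windows are emitted; a trailing partial window is dropped.
    while start + window <= len(stream):
        windows.append(list(stream[start:start + window]))
        start += step
    return training, windows


if __name__ == "__main__":
    training, windows = split_stream(list(range(1300)))
    print(len(training), len(windows), windows[0][0], windows[-1][-1])
```

With a window of 200 and a step of 50, consecutive windows share 150 instances, so each new window scores 50 previously unseen messages while retaining recent context.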

| Window Size | AUC-ROC (LSCP) | AUC-ROC (ELSCP) | AUCPR (LSCP) | AUCPR (ELSCP) |
|---|---|---|---|---|
| 50 | 0.7408 | 0.7794 | 0.1793 | 0.2157 |
| 100 | 0.7763 | 0.8065 | 0.2126 | 0.2496 |
| 200 | 0.8203 | 0.8453 | 0.2525 | 0.3125 |
| 300 | 0.8706 | 0.8977 | 0.2989 | 0.3841 |
| 400 | 0.8833 | 0.9138 | 0.3145 | 0.4208 |
| 500 | 0.8910 | 0.9247 | 0.3206 | 0.4331 |
| 600 | 0.8917 | 0.9277 | 0.3206 | 0.4373 |
| Average | 0.8392 | 0.8707 | 0.2713 | 0.3504 |
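The averages in the table can be recomputed from the per-window rows, and a relative gain of ELSCP over LSCP can be derived from them. The sketch below only restates the tabulated values; the helper names are hypothetical, and small differences in the fourth decimal are expected because the rows are themselves rounded.

```python
from typing import List, Tuple

# (window size, LSCP AUC-ROC, ELSCP AUC-ROC, LSCP AUCPR, ELSCP AUCPR), copied from the table
ROWS: List[Tuple[int, float, float, float, float]] = [
    (50, 0.7408, 0.7794, 0.1793, 0.2157),
    (100, 0.7763, 0.8065, 0.2126, 0.2496),
    (200, 0.8203, 0.8453, 0.2525, 0.3125),
    (300, 0.8706, 0.8977, 0.2989, 0.3841),
    (400, 0.8833, 0.9138, 0.3145, 0.4208),
    (500, 0.8910, 0.9247, 0.3206, 0.4331),
    (600, 0.8917, 0.9277, 0.3206, 0.4373),
]


def column_mean(column: int) -> float:
    """Mean of one metric column across all window sizes."""
    return sum(row[column] for row in ROWS) / len(ROWS)


def relative_gain(baseline: float, improved: float) -> float:
    """Fractional improvement of `improved` over `baseline`."""
    return (improved - baseline) / baseline


if __name__ == "__main__":
    for name, base_col, new_col in (("AUC-ROC", 1, 2), ("AUCPR", 3, 4)):
        base, new = column_mean(base_col), column_mean(new_col)
        print(f"{name}: LSCP {base:.4f}, ELSCP {new:.4f}, "
              f"gain {100 * relative_gain(base, new):.2f}%")
```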

| Model | AUC-ROC | AUCPR |
|---|---|---|
| ELSCP | 0.8945 | 0.3841 |
| LSCP | 0.8581 | 0.2858 |
| IForest | 0.7825 | 0.2210 |
| MCD | 0.7587 | 0.1665 |
| RRCF | 0.6042 | 0.1146 |
| Exact-STORM | 0.5000 | 0.0885 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Moso, J.C.; Cormier, S.; de Runz, C.; Fouchal, H.; Wandeto, J.M. Streaming-Based Anomaly Detection in ITS Messages. Appl. Sci. 2023, 13, 7313. https://doi.org/10.3390/app13127313
