Research on a Real-Time Driver Fatigue Detection Algorithm Based on Facial Video Sequences

Abstract

1. Introduction

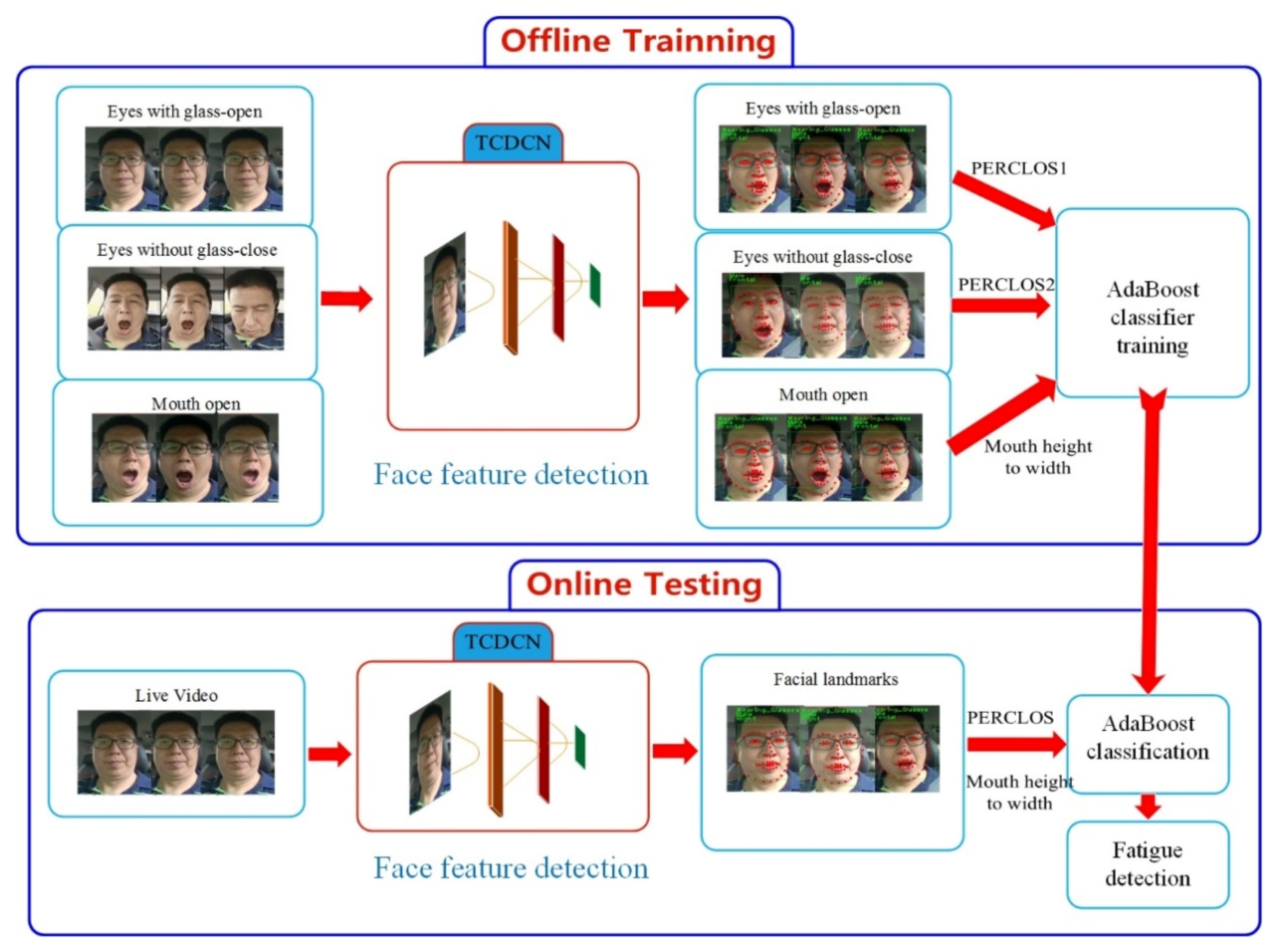

2. Approach

2.1. Tasks-Constrained Deep Convolution Network

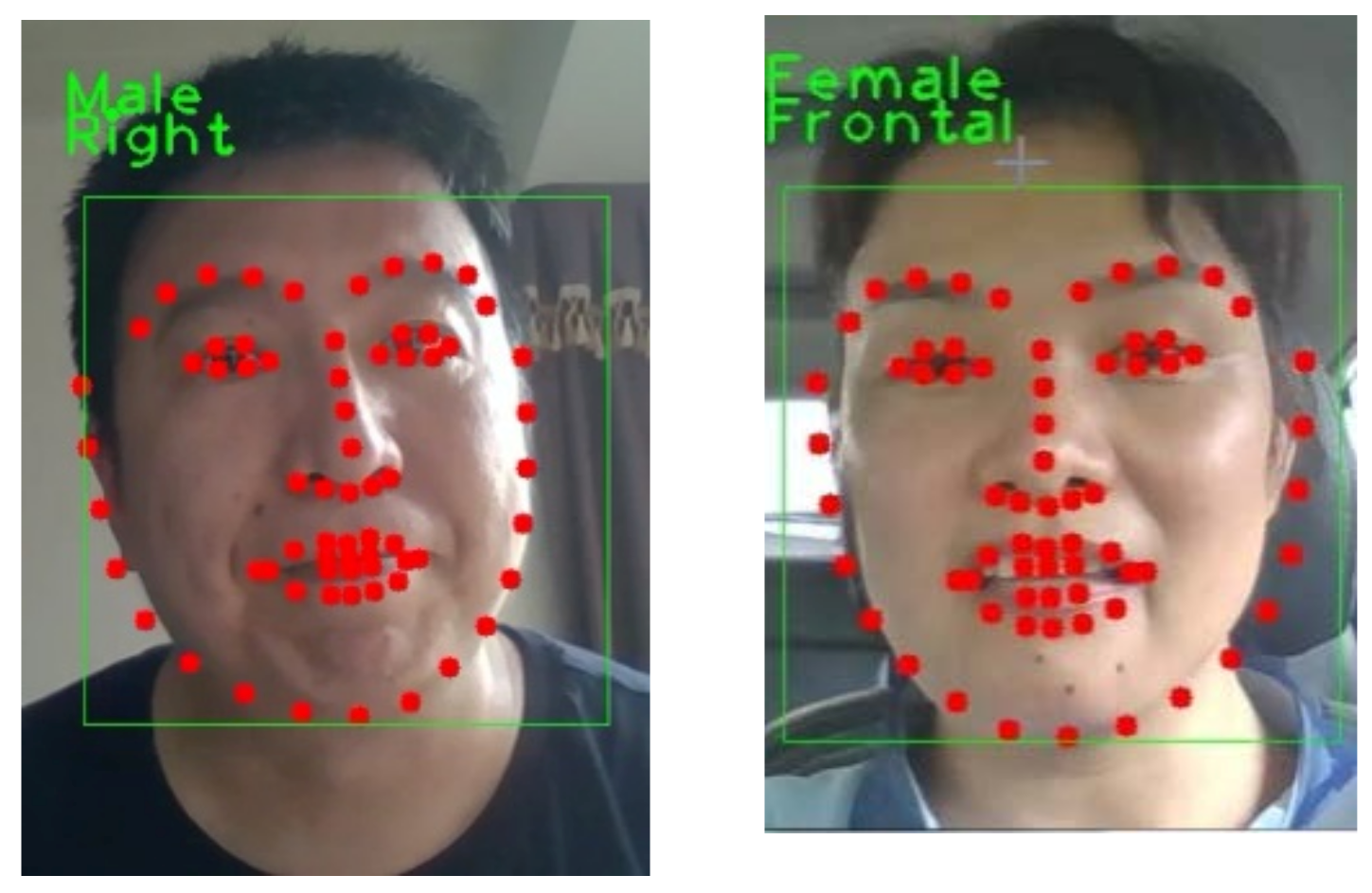

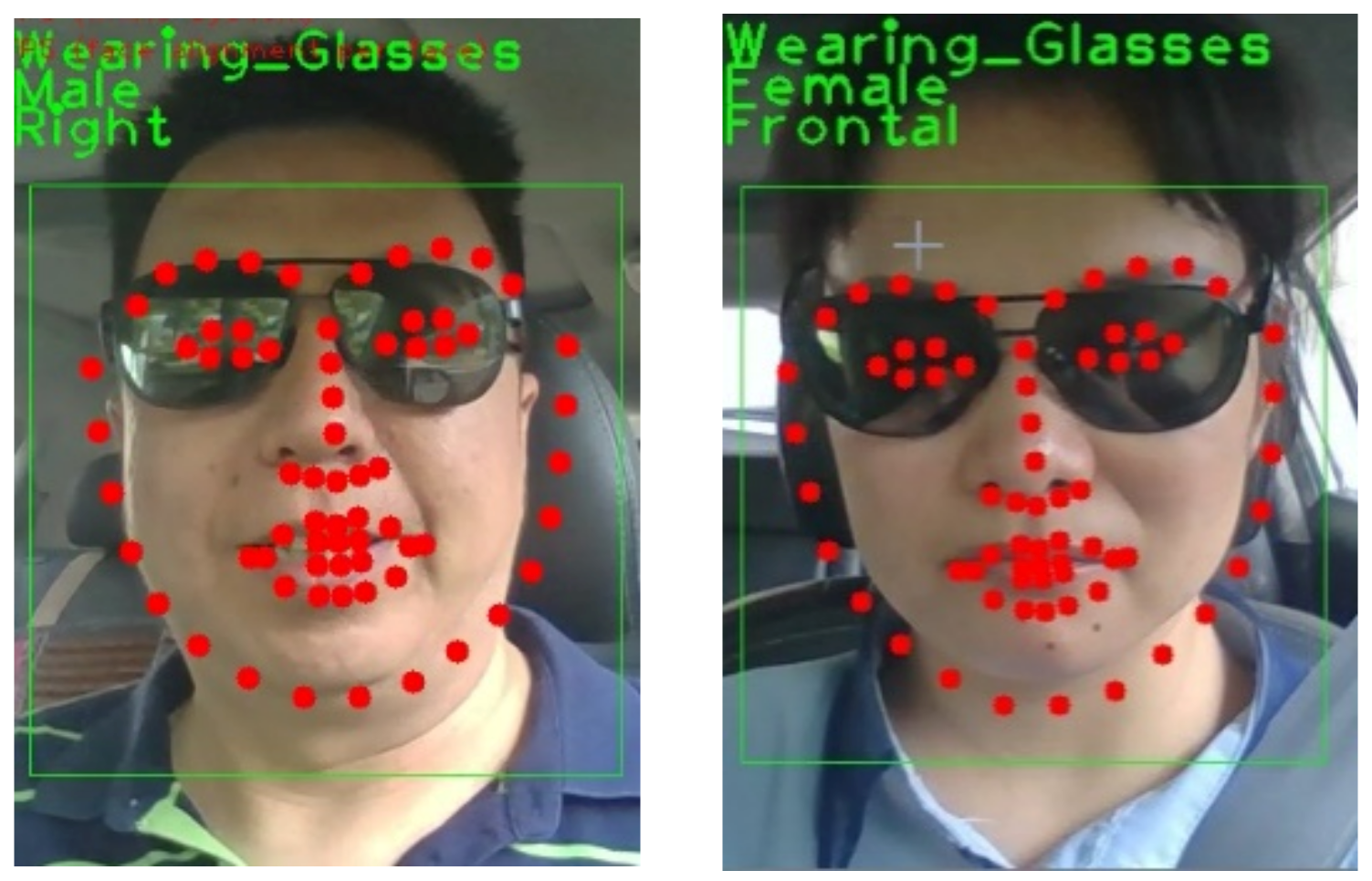

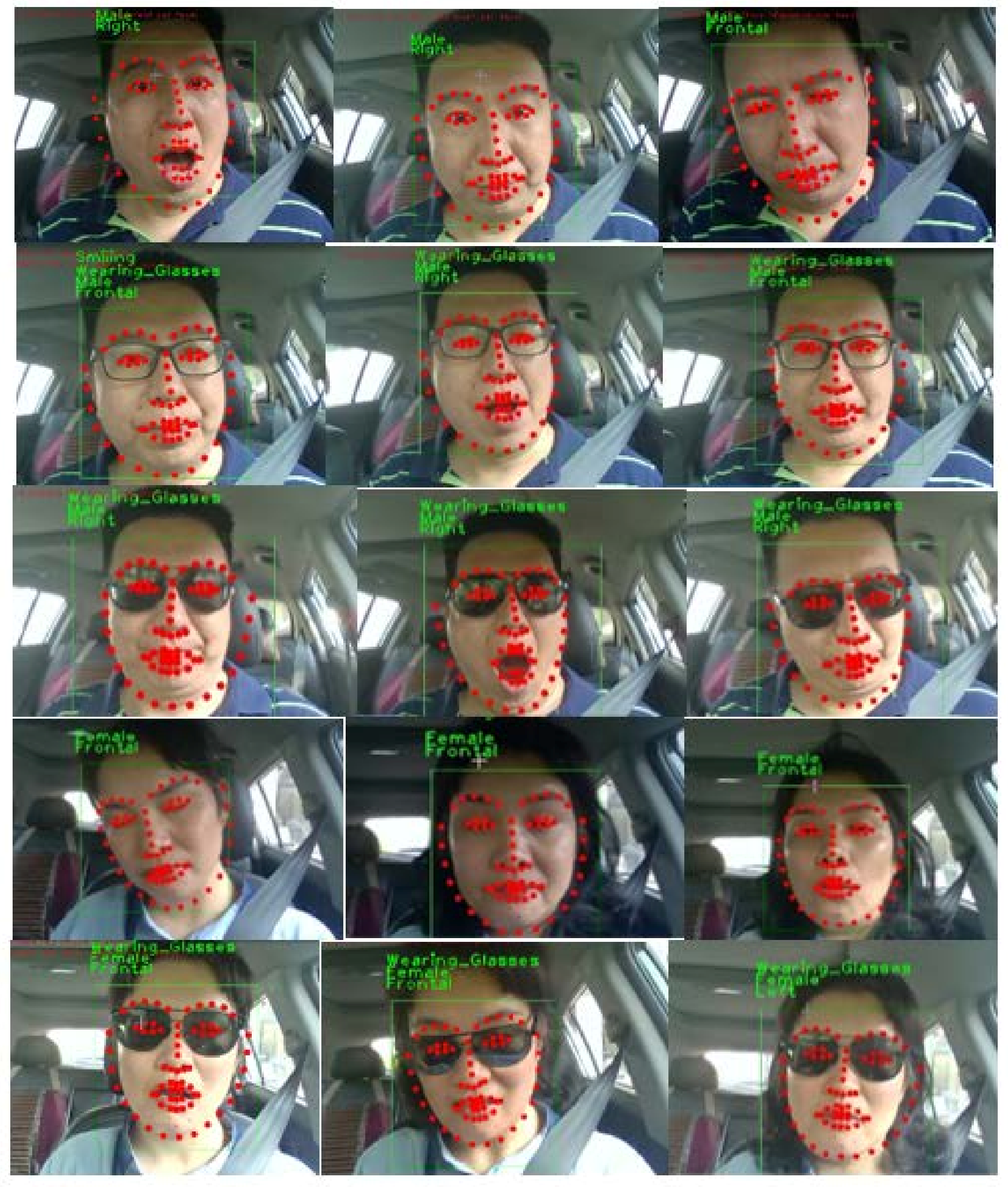

2.2. Facial Landmarks and Auxiliary Task

2.3. Driver Fatigue Recognition Features

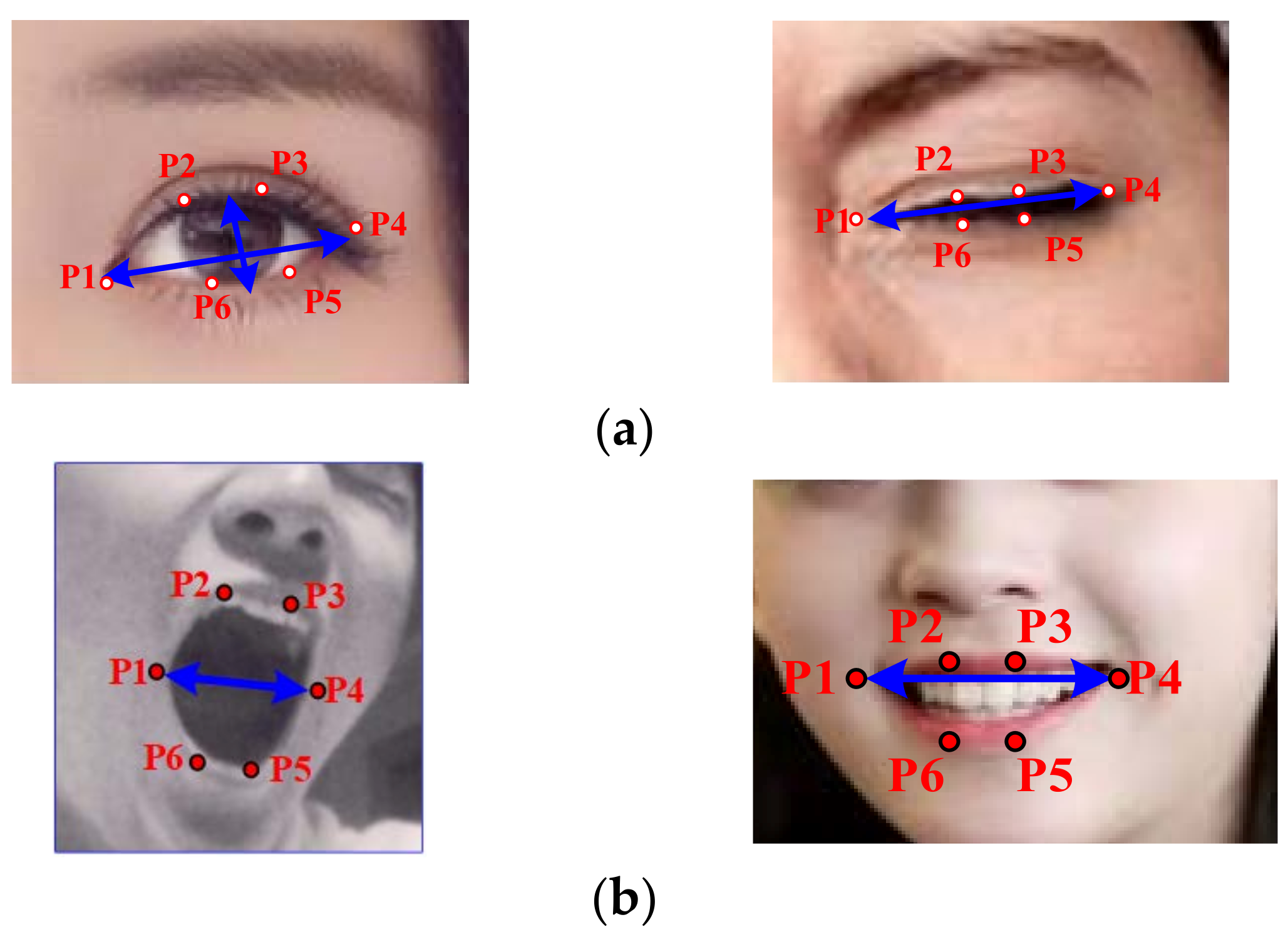

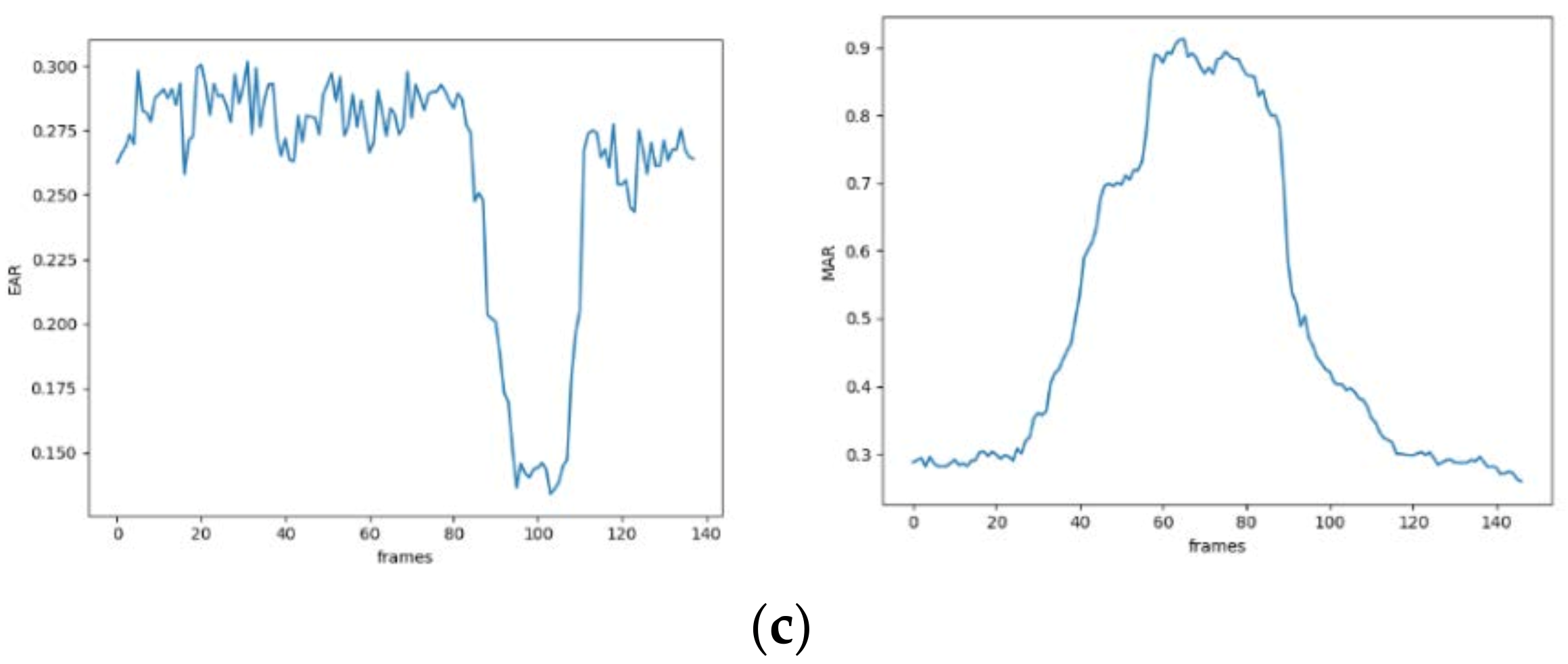

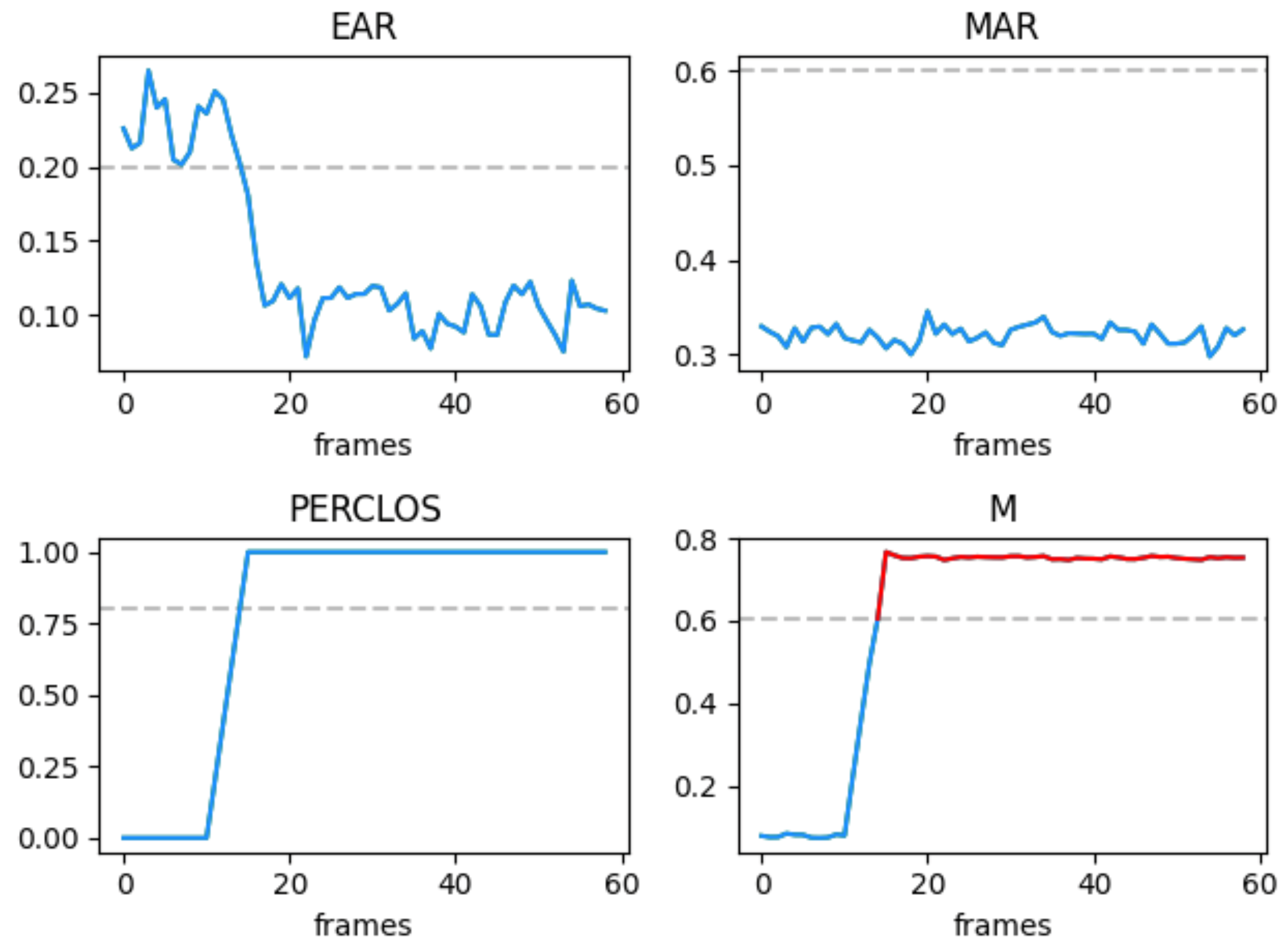

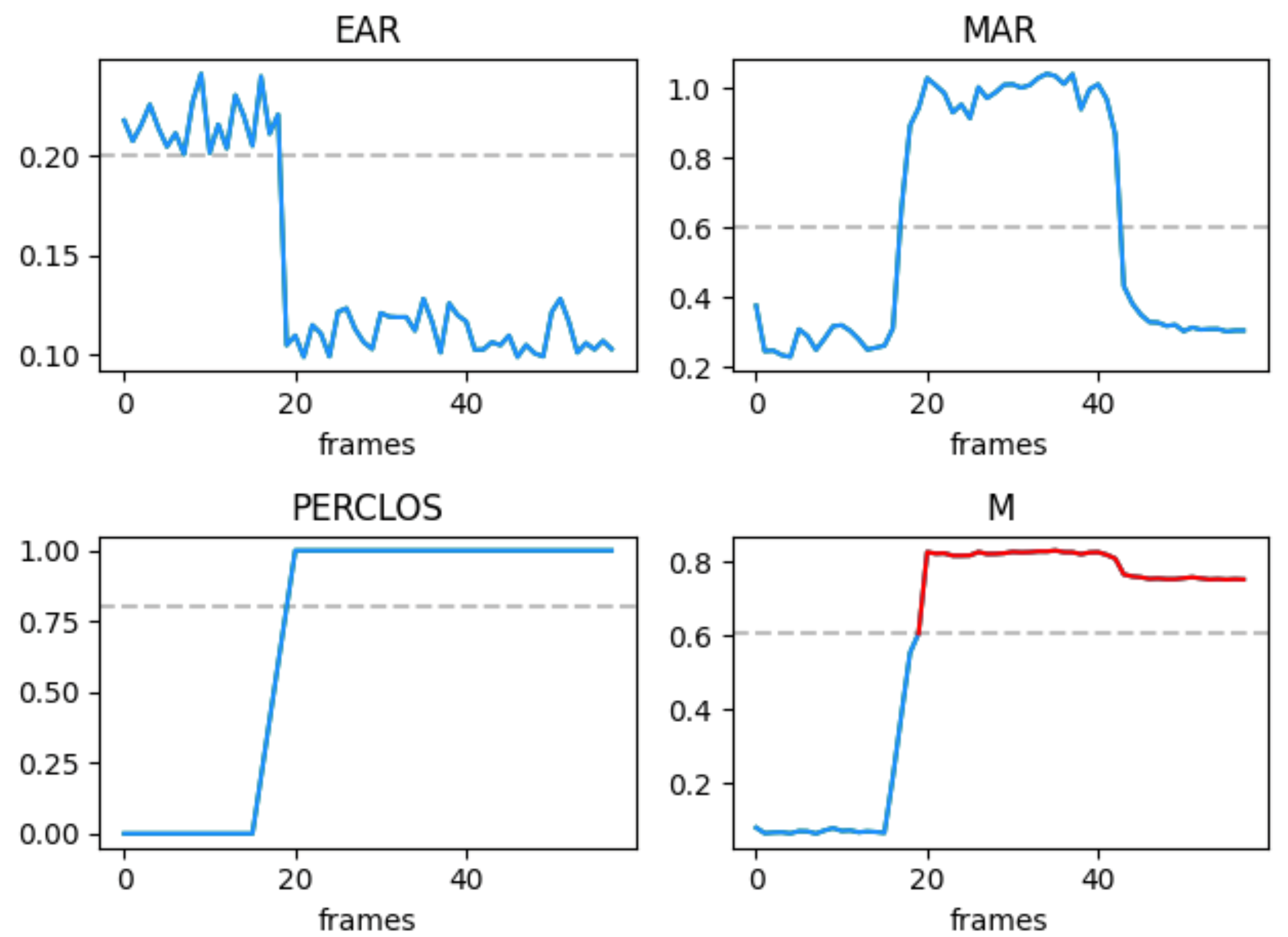

2.3.1. EAR/MAR: An Outstanding Feature for Eyes/Mouth State Recognition
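As a rough illustration of the aspect-ratio idea behind EAR and MAR, the sketch below uses the widely used six-landmark formulation: the sum of two vertical distances divided by twice the horizontal distance. The landmark ordering (p1 to p6), the function name `aspect_ratio`, and the sample coordinates are assumptions made for illustration; the landmark indices actually produced by TCDCN are not given here, and applying the same six-point ratio to the mouth is likewise an assumption.

```python
import math


def aspect_ratio(points):
    """Aspect ratio of a six-point contour ordered p1..p6.

    Assumed ordering (not taken from the paper): p1 and p4 are the
    horizontal corners, (p2, p6) and (p3, p5) are the vertical pairs.
    """
    if len(points) != 6:
        raise ValueError("expected exactly six (x, y) landmarks")
    p1, p2, p3, p4, p5, p6 = points
    width = math.dist(p1, p4)
    if width == 0:
        raise ValueError("degenerate contour: corner points coincide")
    # Two vertical openings averaged and normalised by the width.
    return (math.dist(p2, p6) + math.dist(p3, p5)) / (2.0 * width)


# Hypothetical landmark coordinates for an open and a nearly closed eye.
open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
closed_eye = [(0, 0), (1, 0.1), (2, 0.1), (3, 0), (2, -0.1), (1, -0.1)]

ear_open = aspect_ratio(open_eye)
ear_closed = aspect_ratio(closed_eye)
print(f"open: {ear_open:.3f}, closed: {ear_closed:.3f}")
```

Because the ratio is normalised by the contour width, it is largely insensitive to the distance between the face and the camera, which is one reason a fixed threshold on it can be practical.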

2.3.2. PERCLOS: An Effective Cue for Driver Fatigue Detection

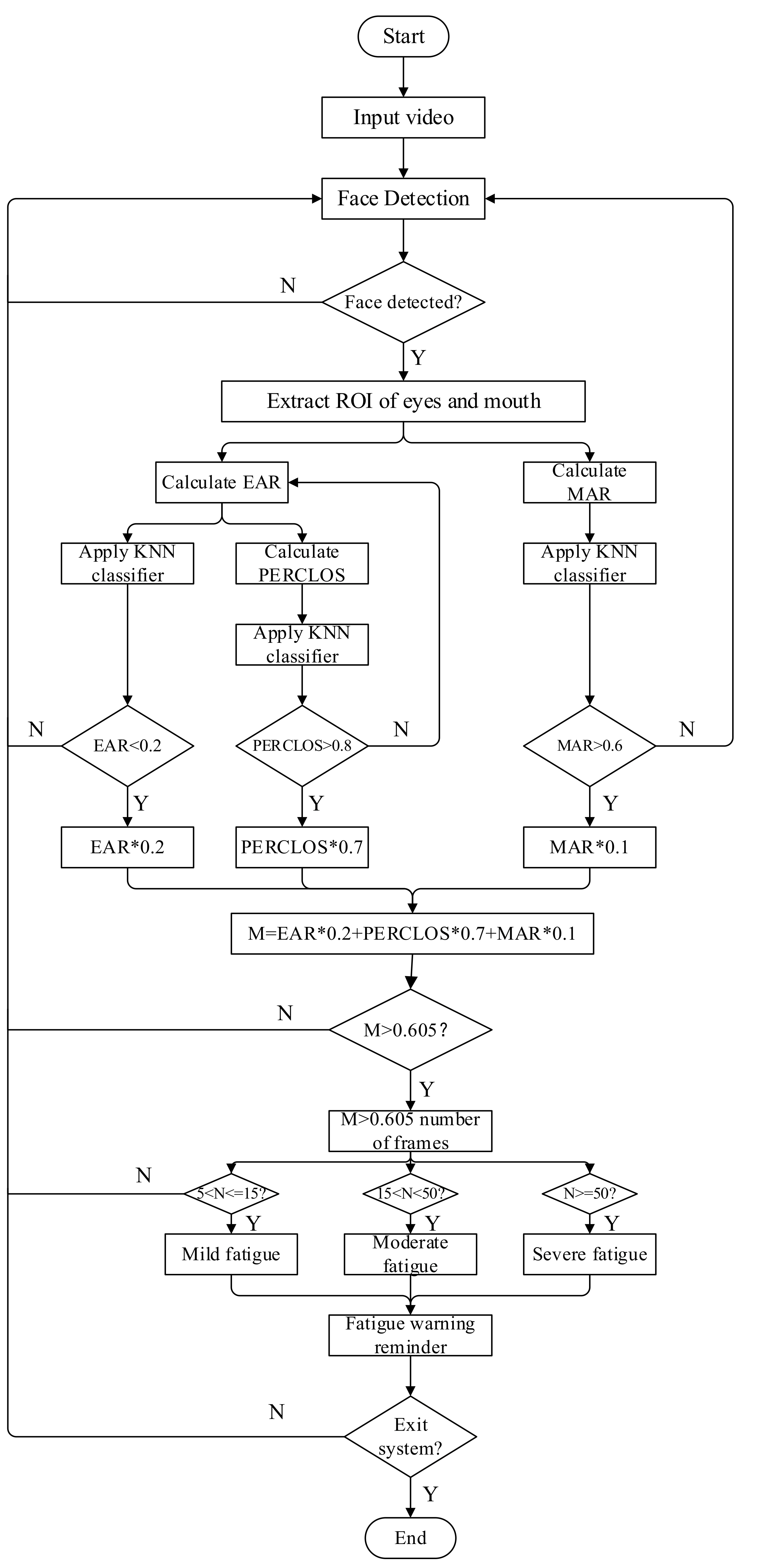
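PERCLOS is the proportion of time within an observation window during which the eyes are closed. A minimal sketch, assuming a frame-based approximation in which a frame counts as "closed" when its EAR falls below the 0.2 threshold reported in Section 2.3.3; the function name `perclos`, the window contents, and the use of the EAR threshold as the closure test are illustrative assumptions rather than the authors' exact procedure.

```python
def perclos(ear_values, ear_threshold=0.2):
    """Fraction of frames in the window whose EAR is below the threshold.

    Assumption: eye closure is decided per frame by comparing EAR with
    `ear_threshold`; the window length is whatever the caller passes in.
    """
    ear_values = list(ear_values)
    if not ear_values:
        raise ValueError("window must contain at least one frame")
    closed_frames = sum(1 for ear in ear_values if ear < ear_threshold)
    return closed_frames / len(ear_values)


# Hypothetical EAR values for ten consecutive frames.
window = [0.31, 0.29, 0.12, 0.10, 0.08, 0.15, 0.30, 0.11, 0.09, 0.28]
score = perclos(window)
print(f"PERCLOS over {len(window)} frames: {score:.2f}")
```

In an online setting the same function could be applied to a sliding window (for example a `collections.deque` with a fixed `maxlen`), so that the value is refreshed with every new frame.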

2.3.3. The Flow Chart of Online Monitoring

- (1)

- The camera collects video input, detects each frame of image, and filters out the image containing face.

- (2)

- ROI (region of interest) of eyes and mouth of face image are extracted.

- (3)

- Based on the extracted ROI, EAR and MAR are calculated for the eye and mouth regions, respectively, to obtain the values of EAR and MAR. According to the obtained EAR value, PERCLOS is calculated, and the obtained EAR, PERCLOS, and MAR values are applied to K-nearest neighbor (KNN), respectively.

- (4)

- Through experiments, the threshold of EAR is set to 0.2 and the threshold of PERCLOS is set to 0.8 according to the p80 criterion.

- (5)

- When the Mar value is greater than 0.6, the driver is considered to start yawning, and the Mar threshold is set to 0.6.

- (6)

- Set the weight values of EAR and PERCLOS to 0.2 and 0.8, respectively, and the Mar value is the auxiliary value, and its weight value is 0.1.

- (7)

- Through experimental calculation, it is reasonable that the M threshold is 0.605. In this part, N is recorded as the number of times M > 0.605 (i.e., the cumulative length of driver’s eyes closed within a certain period of time).

- (8)

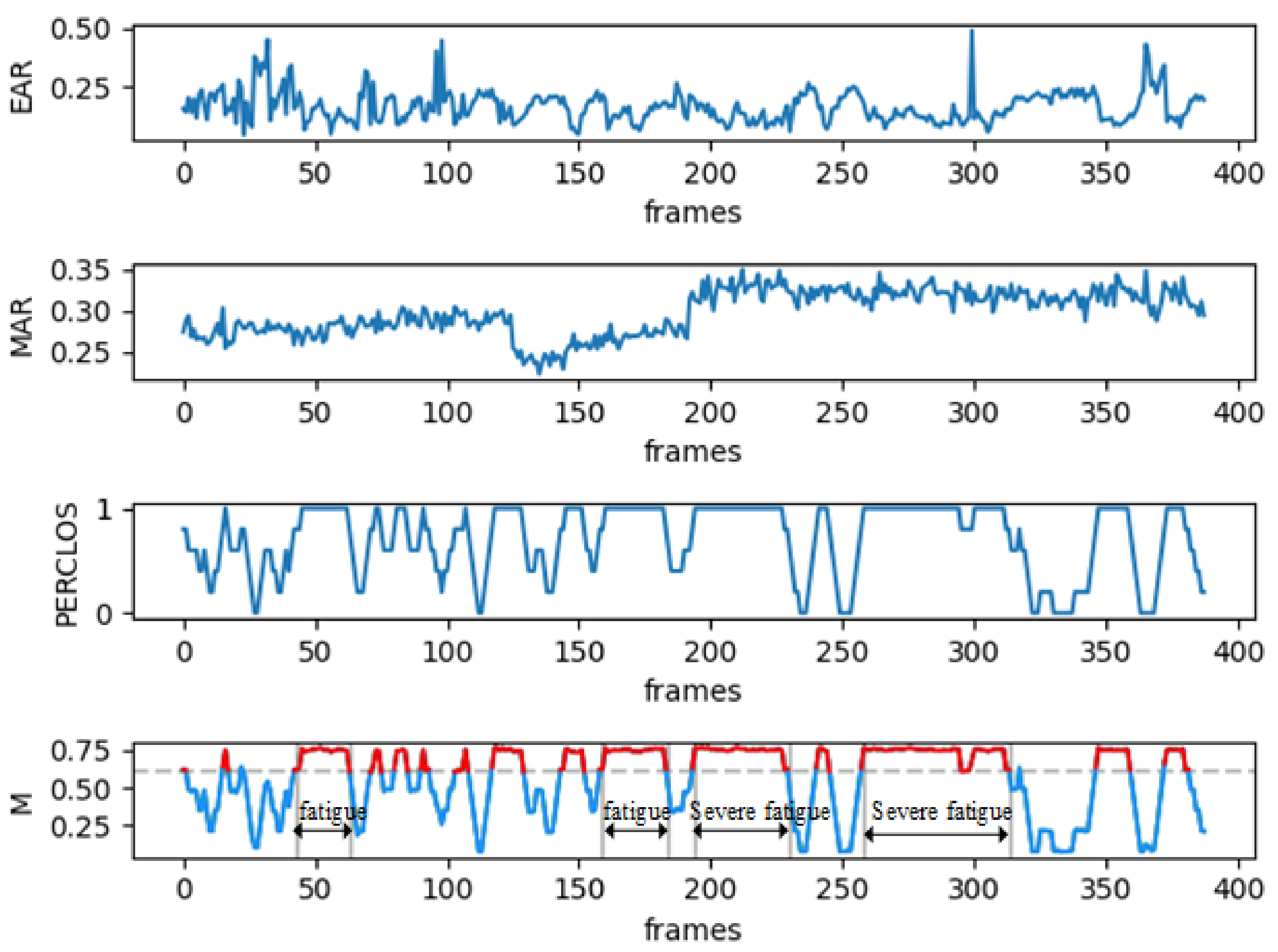

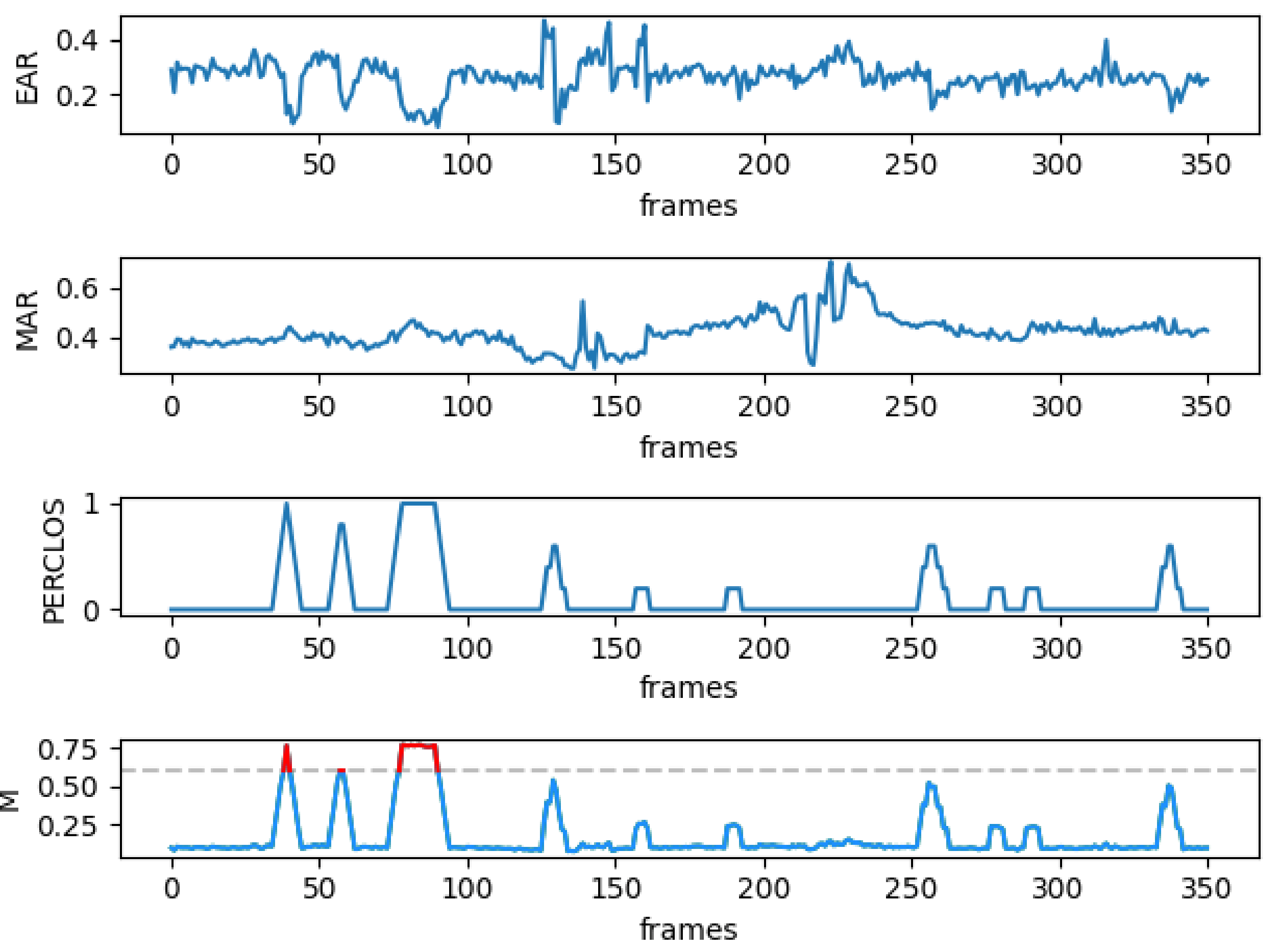

- According to the experimental results, when 10 < N < = 20, mild fatigue; 20 < N < 50, moderate fatigue; N ≥ 50, severe fatigue.
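Steps (6) to (8) above can be sketched as follows. The weights, the 0.605 threshold, and the N ranges come from the text; everything else is an assumption. In particular, the text does not state how M is formed, so the linear weighted sum in `fused_score` is a guess, as are the function names and the label `"none"` for N ≤ 10, a range the text leaves unclassified.

```python
EAR_WEIGHT, PERCLOS_WEIGHT, MAR_WEIGHT = 0.2, 0.8, 0.1  # step (6)
M_THRESHOLD = 0.605  # step (7)


def fused_score(ear_score, perclos_score, mar_score):
    """ASSUMPTION: M is a linear weighted sum of three per-feature scores.

    The source gives the weights but not the combination rule or how the
    individual scores are scaled, so this is only one plausible reading.
    """
    return (EAR_WEIGHT * ear_score
            + PERCLOS_WEIGHT * perclos_score
            + MAR_WEIGHT * mar_score)


def count_exceedances(m_values, threshold=M_THRESHOLD):
    """N: how many M values in the period are strictly above the threshold."""
    return sum(1 for m in m_values if m > threshold)


def fatigue_level(n):
    """Map N to the fatigue grades listed in step (8)."""
    if n < 0:
        raise ValueError("N must be non-negative")
    if n >= 50:
        return "severe"
    if n > 20:
        return "moderate"
    if n > 10:
        return "mild"
    return "none"  # assumption: the text does not name this range


# Hypothetical sequence of M values over one monitoring period.
m_values = [0.7] * 15 + [0.3] * 85
n = count_exceedances(m_values)
level = fatigue_level(n)
print(f"N = {n}, level = {level}")
```

Keeping the count and the grading separate from the fusion rule means that the assumed `fused_score` can be swapped for a different combination without touching the thresholds taken from the text.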

3. Experimental Data and Results

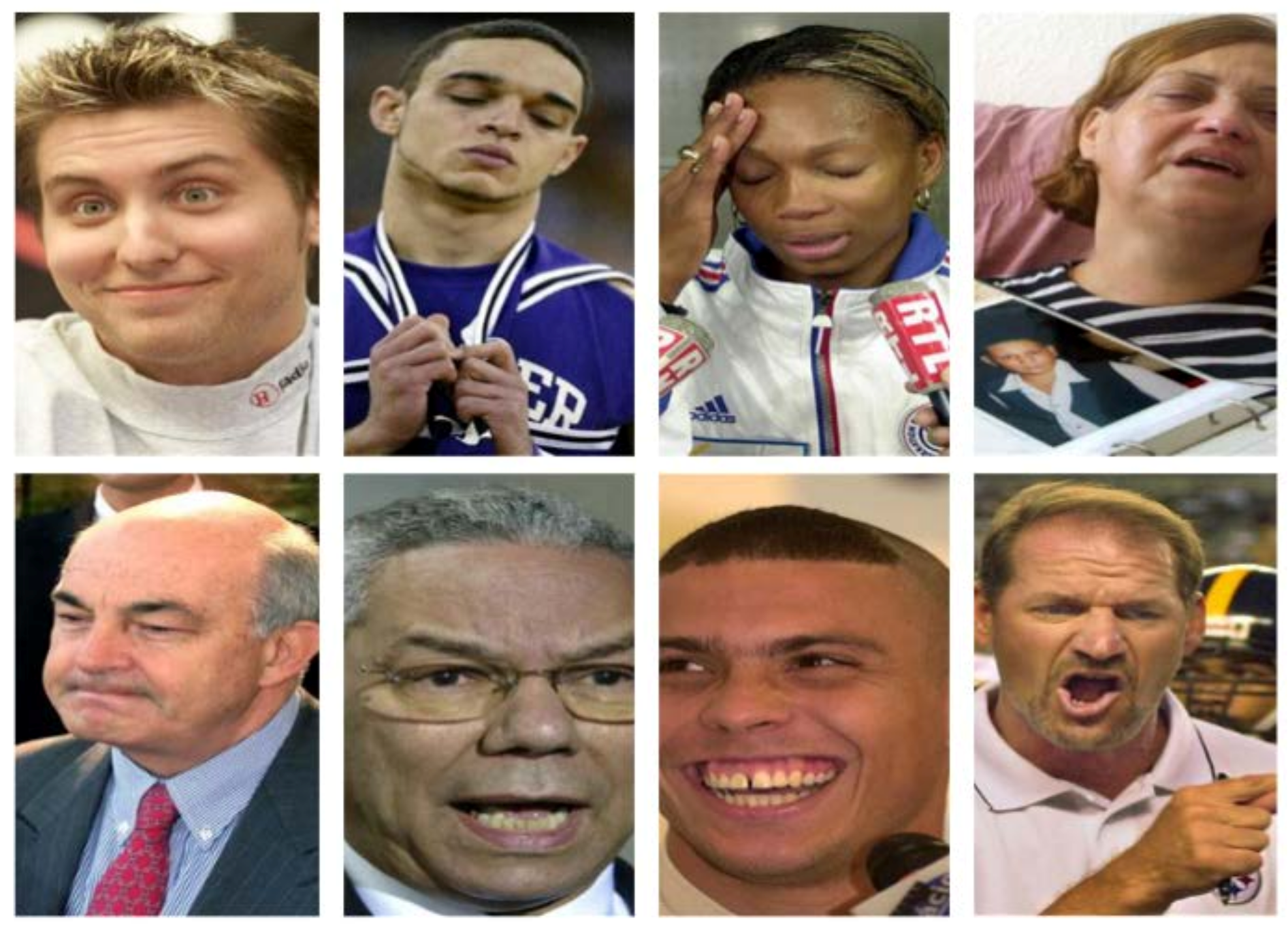

3.1. Environment and Data Set

3.2. Short-Term Test

3.3. Long-Term Test in Different Driving Conditions

4. Conclusions

- (1) A comprehensive driving fatigue detection method based on TCDCN deep learning and multi-feature fusion is proposed. It combines several facial features (such as the eyes and mouth) to fuse visual information and improve the accuracy of driving fatigue detection. The experimental results show that the proposed algorithm improves the detection accuracy of driving fatigue under various driving conditions.

- (2) Calculating EAR, MAR, and PERCLOS from TCDCN landmarks is a concise solution: the TCDCN method accurately identifies the eye feature points whether or not the driver wears glasses. This property is very important for subsequent driving fatigue detection.

- (3) The proposed fatigue detection algorithm not only identifies signs of driver fatigue, such as dozing and yawning, in real time, but also automatically grades the driver's fatigue as mild, moderate, or severe.

- (4) Future research will focus on the following aspects: (1) the method will be deployed on a real vehicle to further verify recognition performance under similar driving conditions at night; and (2) other visual features, such as the driver's head posture, will be added to the information fusion.

Author Contributions

Funding

Informed Consent Statement

Acknowledgments

Conflicts of Interest


| Item | Description | Scale |
|---|---|---|
| 1 | Do you feel drowsy? | 1–4 |
| 2 | Do you have difficulty with thinking clearly? | 1–4 |
| 3 | Do you have difficulty with making an immediate response to a question? | 1–4 |
| 4 | Do you have difficulty with control over your muscles? | 1–4 |
| 5 | Do you have difficulty with focusing? | 1–4 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhu, T.; Zhang, C.; Wu, T.; Ouyang, Z.; Li, H.; Na, X.; Liang, J.; Li, W. Research on a Real-Time Driver Fatigue Detection Algorithm Based on Facial Video Sequences. Appl. Sci. 2022, 12, 2224. https://doi.org/10.3390/app12042224
