A Study of Network Intrusion Detection Systems Using Artificial Intelligence/Machine Learning

Abstract

1. Introduction

2. Intrusion Detection Systems (IDS)

- Network Intrusion Detection Systems (NIDS) are IDSs placed at strategic points in the network. A NIDS analyses the overall traffic of the network to detect malicious activity. It also helps to detect attacks originating from an organization's own hosts and is a key component of the security of most organizational networks.

- Host Intrusion Detection Systems (HIDS) are IDSs installed on the client computers (hosts) of the network. In contrast to a NIDS, a HIDS analyses the traffic and the activities of a single host; if it detects abnormal behaviour, it raises an alarm.

- Misuse detection, also known as signature detection, searches for known patterns of intrusion in the network or in the host. Each attack has a specific signature, for instance, the payload of the packet, the source IP address, or a specific header. The IDS raises an alarm when it detects activity that matches one of the signatures in its list of known signatures. The advantage of this approach is its high accuracy in detecting known attacks. Its weakness is that it is ineffective against unknown or zero-day (never seen before) attack patterns.

- Anomaly detection defines a normal state of the network or the host, called a baseline, and any deviation from this baseline is reported as a potential attack. For instance, an anomaly-based IDS can create a baseline from the usual network traffic, such as the services provided by each host, the services used by each host and the volume of activity during the day. Thus, if an attacker accesses an internal resource at midnight, and the baseline shows almost no activity at midnight, the IDS will raise an alarm. The advantage of anomaly detection is its flexibility in finding unknown attacks. However, in most cases it is difficult to define the baseline of a network precisely, so the false detection rate of these techniques can be high.

- Hybrid detection combines misuse detection and anomaly detection. Hybrid systems generally have a lower false detection rate than anomaly-only techniques and can still discover new attacks.
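The three detection approaches in the list above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the signature strings, the function names and the "three standard deviations" rule for deviation from the baseline are not taken from any particular IDS.

```python
from statistics import mean, stdev

# Hypothetical signature list: payload substrings that mark known attacks.
KNOWN_SIGNATURES = ["/etc/passwd", "' OR '1'='1"]


def misuse_alert(payload):
    """Misuse (signature) detection: alert only if a known pattern appears."""
    return any(signature in payload for signature in KNOWN_SIGNATURES)


def anomaly_alert(baseline, observed, k=3.0):
    """Anomaly detection: alert if the observed value lies more than
    k standard deviations away from the mean of the baseline samples."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    return abs(observed - mu) > k * sigma


def hybrid_alert(payload, baseline, observed):
    """Hybrid detection: alert if either detector fires."""
    return misuse_alert(payload) or anomaly_alert(baseline, observed)


# Illustrative baseline: requests per minute seen at midnight on past days.
midnight_baseline = [2, 3, 1, 2, 4, 3, 2]
print(misuse_alert("GET /etc/passwd HTTP/1.1"))
print(anomaly_alert(midnight_baseline, 40))
print(hybrid_alert("GET /index.html HTTP/1.1", midnight_baseline, 40))
```

The last call shows why a hybrid system can catch what a signature list misses: the payload matches no signature, but the request volume is far outside the baseline.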

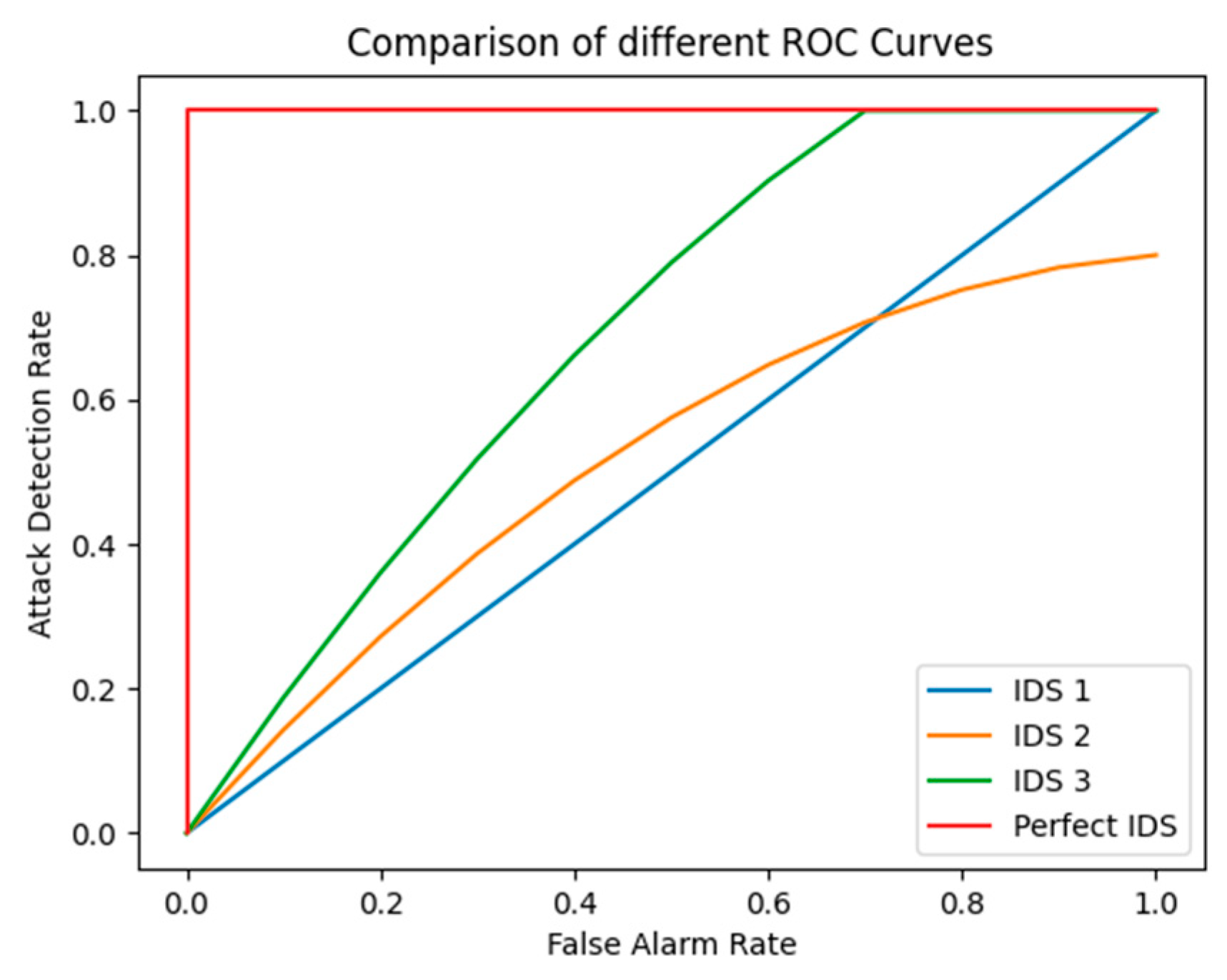

3. IDS Performance Evaluation

- True Positive (TP): An attack sample has been correctly identified as an attack.

- True Negative (TN): A normal sample has been correctly identified as normal traffic.

- False Positive (FP): A normal sample has been incorrectly identified as an attack.

- False Negative (FN): An attack sample has been incorrectly identified as normal traffic.
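The metrics named in the following subsections are all ratios of these four counts. The sketch below uses their standard textbook definitions; the function name `ids_metrics` and the example counts are illustrative assumptions, not values from any of the reviewed studies.

```python
def _ratio(numerator, denominator):
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def ids_metrics(tp, tn, fp, fn):
    """Compute common IDS evaluation metrics from confusion-matrix counts."""
    precision = _ratio(tp, tp + fp)         # share of raised alarms that are real attacks
    recall = _ratio(tp, tp + fn)            # share of attacks detected (detection rate)
    false_alarm_rate = _ratio(fp, fp + tn)  # share of normal traffic flagged as attack
    true_negative_rate = _ratio(tn, tn + fp)
    accuracy = _ratio(tp + tn, tp + tn + fp + fn)
    f_measure = _ratio(2 * precision * recall, precision + recall)
    return {
        "precision": precision,
        "recall": recall,
        "false_alarm_rate": false_alarm_rate,
        "true_negative_rate": true_negative_rate,
        "accuracy": accuracy,
        "f_measure": f_measure,
    }


# Illustrative counts: 100 attack samples and 1000 normal samples.
metrics = ids_metrics(tp=80, tn=900, fp=100, fn=20)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

With these counts the accuracy is about 0.89 while the precision is only about 0.44, which illustrates why accuracy alone can be misleading when normal traffic dominates the dataset.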

3.1. Precision

3.2. Recall

3.3. False Alarm Rate

3.4. True Negative Rate

3.5. Accuracy

3.6. F-Measure

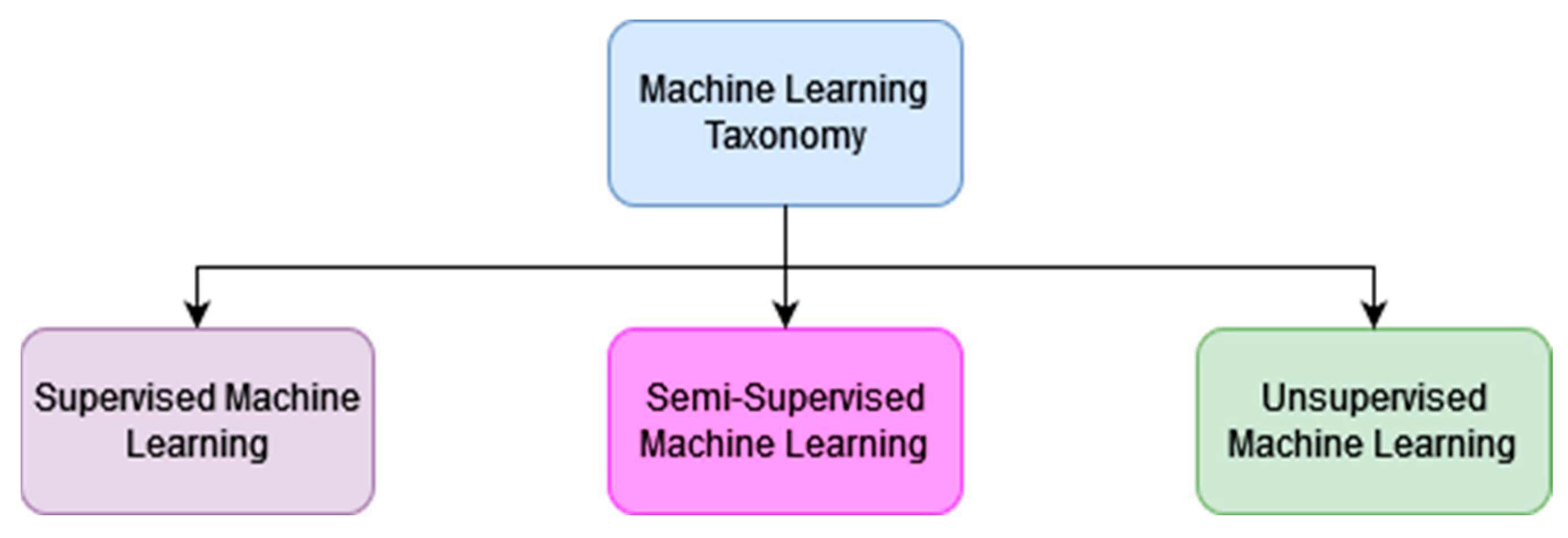

4. Machine Learning

4.1. Supervised Machine Learning

- Classification models are used to put data into specific categories. Given a data sample, the model recognizes the category it belongs to based on its features. The model is trained on labelled input and output data to learn which features of the input data determine its category. Classification models are very useful for detecting attacks. For instance, for traffic coming into a network, a well-trained classification model can classify the traffic as normal or abnormal. If the model performs well, the abnormal traffic can then be classified into subcategories of well-known attacks such as DoS, phishing, worms, port scan, etc. Common classification algorithms are decision tree, k-nearest neighbour, support vector machine, random forest, and neural network [6].

- Regression models are used to predict continuous outcomes. The model is trained to understand the relationship between independent variables and a dependent variable. Regression is used to find patterns and relationships in datasets that can then be applied to a new dataset. Regression models are mainly used for forecasting the evolution of market prices or predicting trends [7]. Common regression algorithms are linear regression, logistic regression, decision tree, random forest, and support vector machine.
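As a small illustration of supervised classification, the sketch below implements k-nearest neighbour, one of the algorithms listed above, in plain Python. The two features (packets per second and mean packet size), the toy samples and the labels are invented for the example and do not come from any of the datasets discussed in this paper.

```python
from collections import Counter
from math import dist

# Toy labelled flows: (packets per second, mean packet size in bytes) -> label.
# These values are made up for illustration only.
TRAINING = [
    ((10.0, 500.0), "normal"),
    ((12.0, 480.0), "normal"),
    ((9.0, 520.0), "normal"),
    ((900.0, 60.0), "dos"),
    ((950.0, 64.0), "dos"),
    ((1000.0, 58.0), "dos"),
]


def knn_predict(sample, training=TRAINING, k=3):
    """Label a sample by majority vote among its k nearest training samples."""
    # Sort the labelled samples by Euclidean distance to the new sample.
    neighbours = sorted(training, key=lambda item: dist(item[0], sample))[:k]
    votes = Counter(label for _, label in neighbours)
    return votes.most_common(1)[0][0]


print(knn_predict((11.0, 490.0)))
print(knn_predict((980.0, 62.0)))
```

In practice the features would usually be scaled first, since an unscaled feature with a large numeric range dominates the distance.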

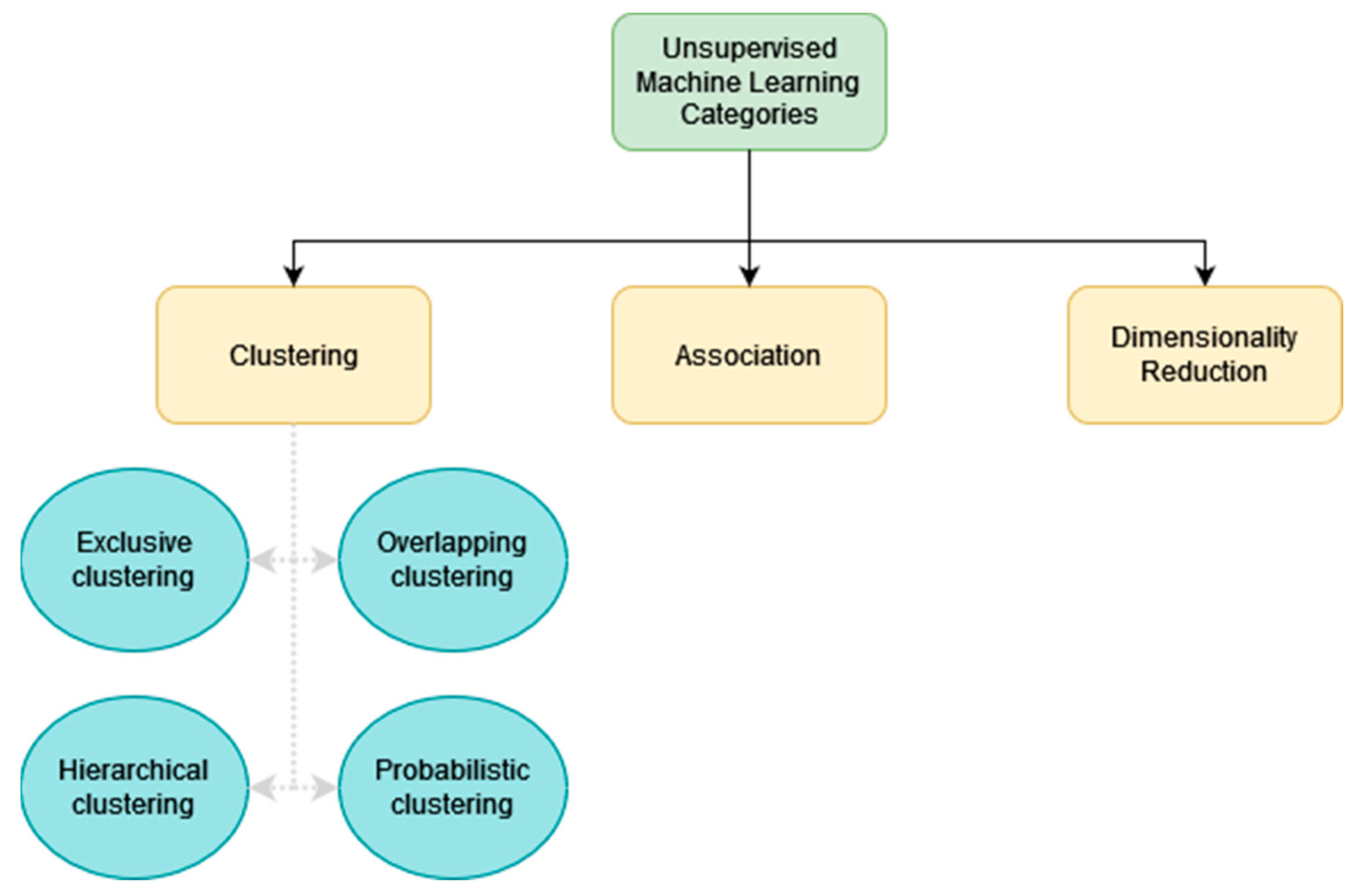

4.2. Unsupervised Machine Learning

- Clustering is a technique which groups unlabeled data according to their common or uncommon features [8]. At the end of this process, the unlabeled data are sorted into different data groups. Clustering algorithms can be subcategorized as exclusive, overlapping, hierarchical and probabilistic [9]. With exclusive clustering, each data point can belong to one cluster only. Overlapping clustering allows a data point to belong to multiple clusters, which gives a richer model when data can fall into several categories. For instance, overlapping clustering is required for video analysis, since a video can have multiple categories [10]. In hierarchical clustering, initially isolated clusters are merged iteratively based on their similarity until only one cluster is left. Different methods can be used to measure the similarity between two clusters; it is often measured as the distance between the clusters or their data. One of the most common metrics is the Euclidean distance. The Manhattan distance is another metric often used [9]. Hierarchical clustering can also work in the opposite direction, starting from a single cluster and splitting it iteratively based on the differences between data points; this is known as divisive clustering. With probabilistic clustering, data points are put into clusters based on the probability that they belong to each cluster. Common clustering algorithms of the different clustering types are K-means, Fuzzy K-means, and Gaussian Mixture.

- Association is a method that finds relationships between the input data. Association is often used for marketing purposes to find the relationship between products and thus, to propose other products based on customer purchases. A common association algorithm is the Apriori algorithm [11].

- Dimensionality reduction is a method used to reduce the number of features in a dataset to make it easier to process. Because the datasets in use keep growing, it is common to apply a dimensionality reduction method before applying a specific machine learning algorithm to a dataset. There are two main methods to reduce the dimensionality of a dataset. The first one is feature elimination, which consists of removing features that will not be useful for the prediction that we want to make. The second one is feature extraction. Feature extraction creates as many new features as already exist in the dataset. These new features are combinations of the old features; they are independent and ordered by importance. Thus, we can remove the least important new features. The advantage of this approach is that we reduce the dataset while keeping the important part of each original feature. One of the most used methods for feature extraction is Principal Component Analysis (PCA) [12].
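To make the clustering description concrete, here is a minimal sketch of K-means, an exclusive clustering algorithm, using the Euclidean distance. The points and the initial centroids are invented for the example, and the fixed initial centroids are a simplification; real implementations usually choose them randomly or with a seeding heuristic.

```python
from math import dist


def kmeans(points, centroids, iterations=10):
    """Exclusive clustering: every point is assigned to exactly one cluster."""
    centroids = [tuple(c) for c in centroids]
    assignment = []
    for _ in range(iterations):
        # Assignment step: index of the nearest centroid (Euclidean distance).
        assignment = [
            min(range(len(centroids)), key=lambda i: dist(p, centroids[i]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for i, old in enumerate(centroids):
            members = [p for p, a in zip(points, assignment) if a == i]
            if members:
                new_centroids.append(
                    tuple(sum(axis) / len(members) for axis in zip(*members))
                )
            else:
                new_centroids.append(old)  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # converged: the centroids no longer move
        centroids = new_centroids
    return centroids, assignment


# Two visually separate groups of unlabelled 2-D points (illustrative data).
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
centroids, assignment = kmeans(points, centroids=[(0.0, 0.0), (10.0, 10.0)])
print(assignment)
print(centroids)
```

Replacing `dist` with a Manhattan distance function would change only the assignment step, which is where the choice of similarity metric enters.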

4.3. Semi-Supervised Machine Learning

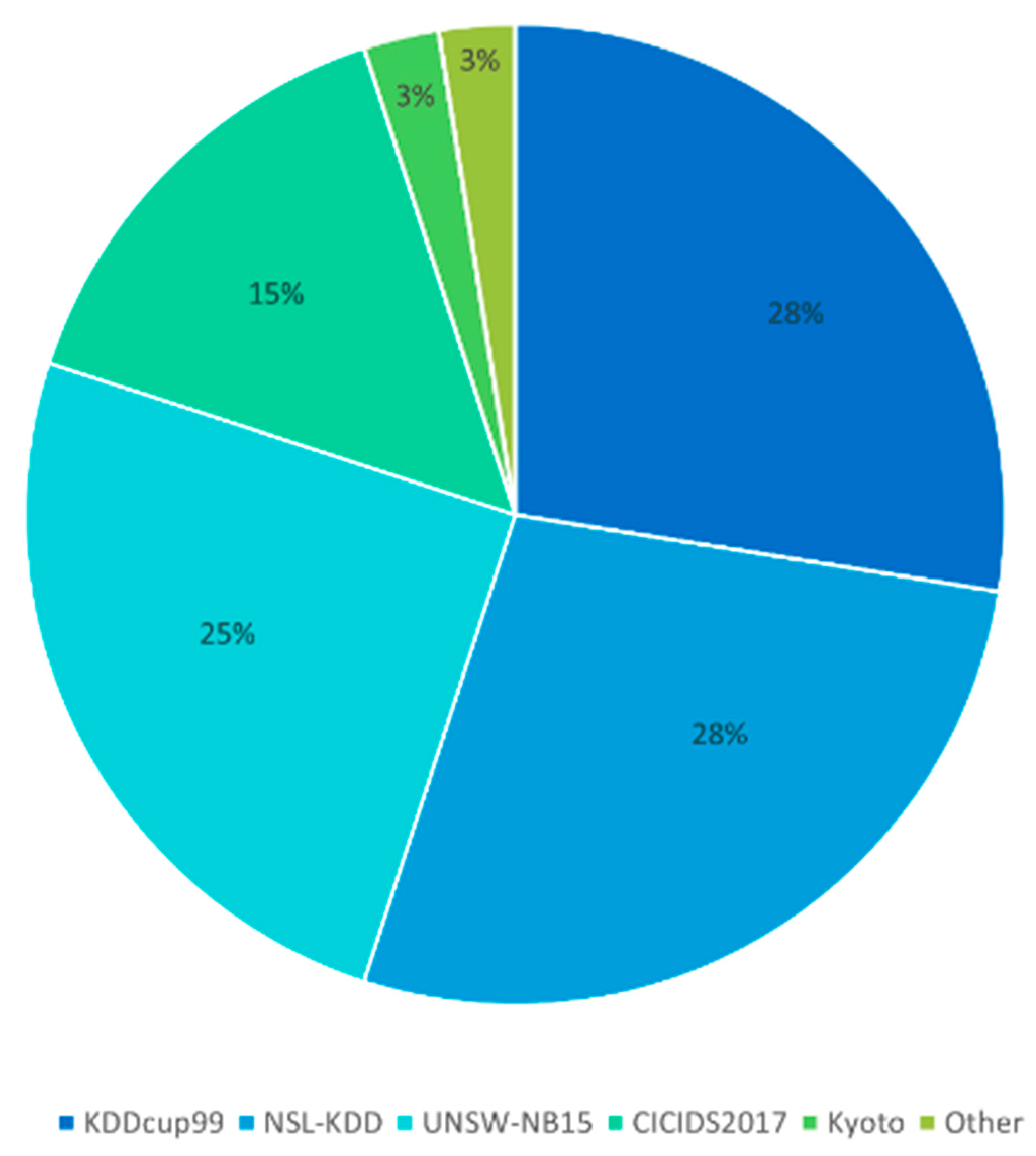

5. Datasets

5.1. KDDcup99

5.2. Kyoto 2006

5.3. NSL-KDD

5.4. UNSW-NB15

5.5. CICIDS2017

6. Literature Review

- U is the data with an unknown class

- H is the hypothesis class of U

- P() is the Probability
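These three symbols are the ingredients of Bayes' theorem, on which the Naïve Bayes classifiers covered in this review are built. The equation they belong to is not present in this text, so the standard form is given here as an assumption about what was intended:

$$
P(H \mid U) = \frac{P(U \mid H)\, P(H)}{P(U)}
$$

Read with the definitions above, $P(H \mid U)$ is the probability that hypothesis class $H$ holds for the unclassified data $U$, $P(U \mid H)$ is the probability of observing $U$ given $H$, and $P(H)$ and $P(U)$ are the prior probabilities of the hypothesis and of the data.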

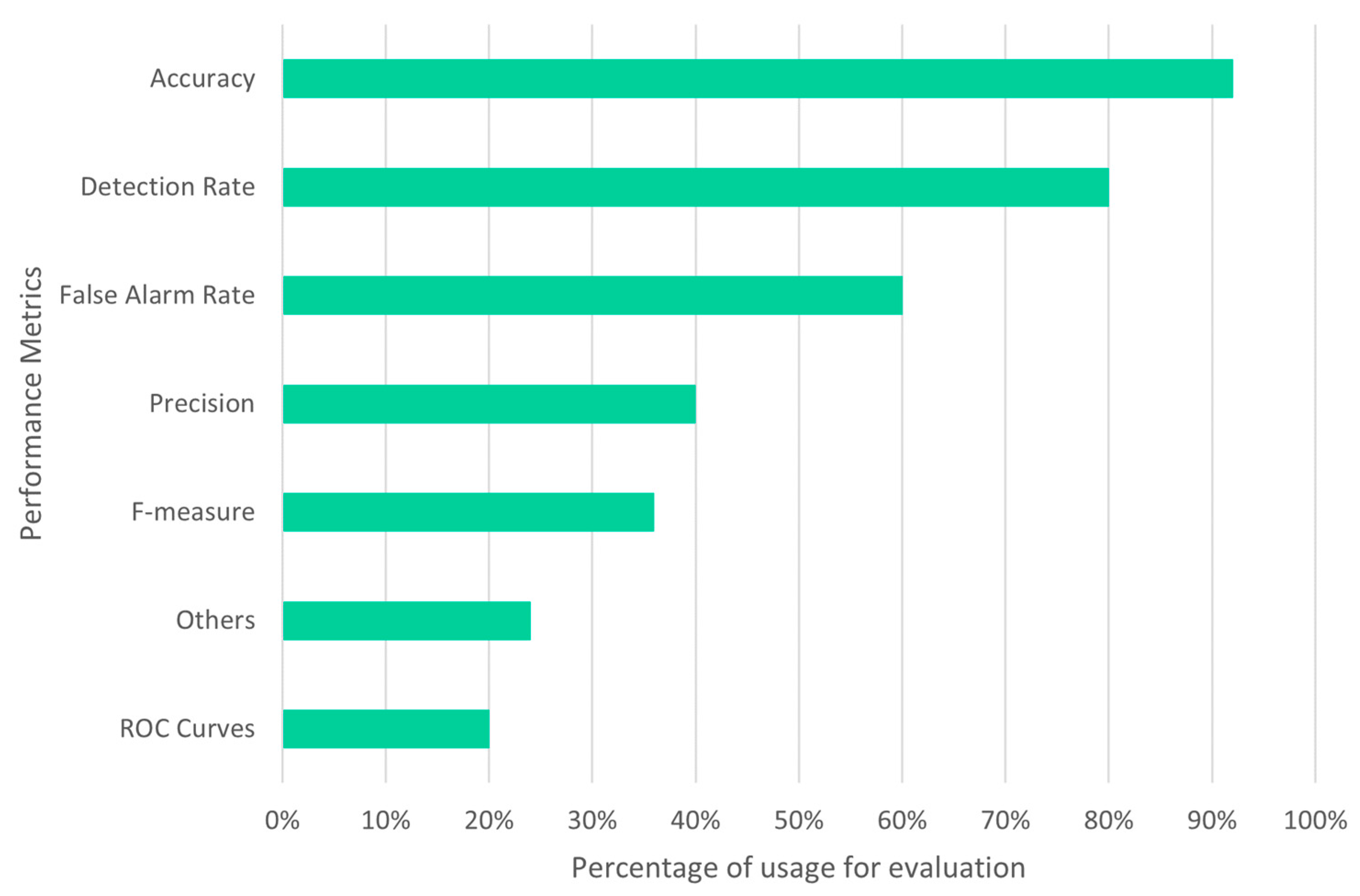

7. Evaluation Metrics

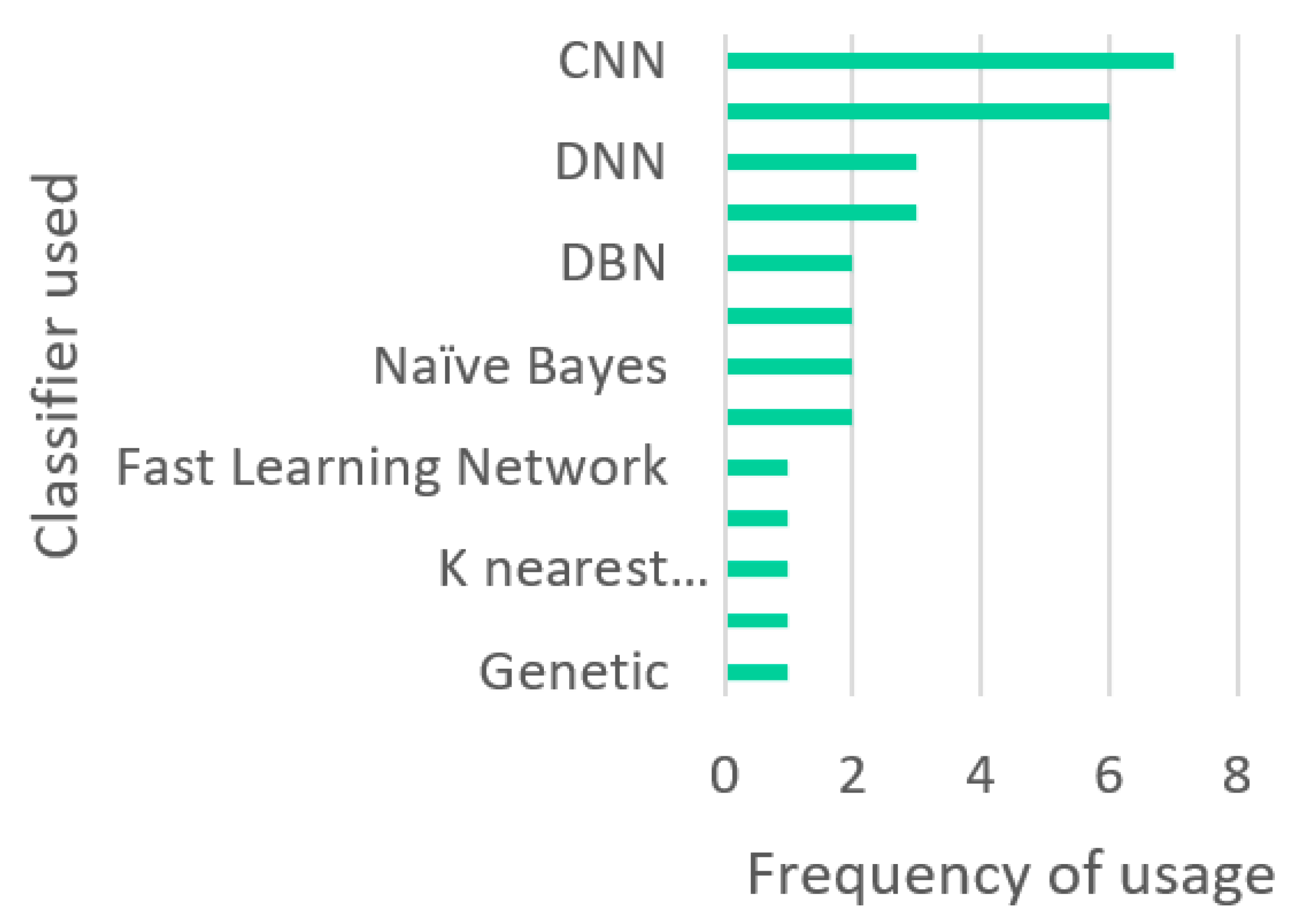

8. Discussion

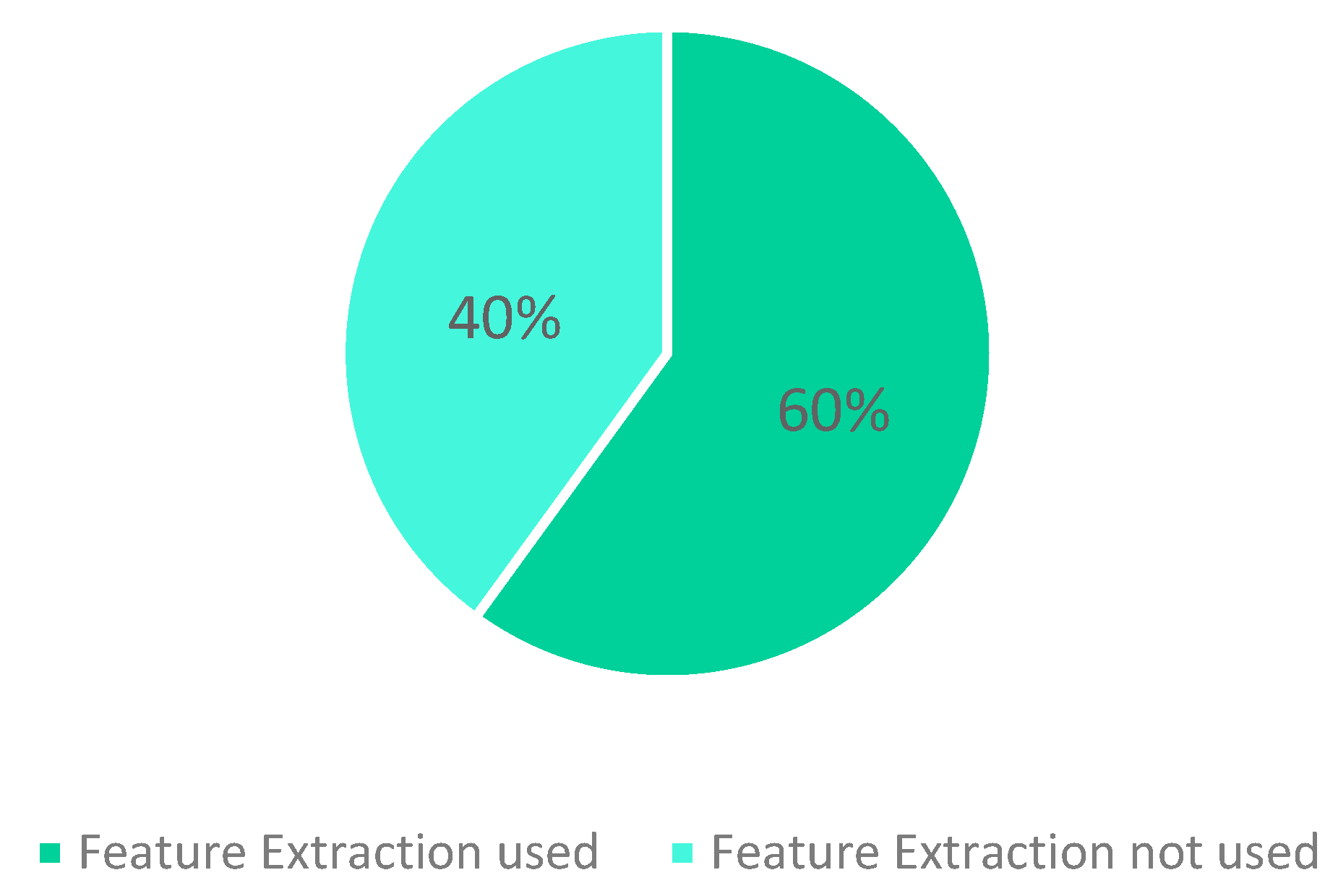

8.1. Observations

8.2. Research Challenges

8.2.1. Unavailability of Recent Dataset

8.2.2. Lower Detection Accuracy for Minor Classes

8.2.3. Low Performance in a Real-World Environment

8.2.4. Resources Consumed by Complex Models

8.2.5. IDS for IoT

8.3. Future Trends

8.3.1. Efficient NIDS

8.3.2. Solution to Complex Models

8.3.3. Detecting Encrypted Traffic

8.3.4. Use of Feature Extraction

9. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Acknowledgments

Conflicts of Interest

References

- Anderson, P. Computer Security Threat Monitoring and Surveillance. 1980. Available online: https://csrc.nist.gov/csrc/media/publications/conference-paper/1998/10/08/proceedings-of-the-21st-nissc-1998/documents/early-cs-papers/ande80.pdf (accessed on 19 May 2022).

- ThreatStack. The History of Intrusion Detection Systems (IDS)—Part 1. Available online: https://www.threatstack.com/blog/the-history-of-intrusion-detection-systems-ids-part-1 (accessed on 19 May 2022).

- Checkpoint. What Is an Intrusion Detection System? Available online: https://www.checkpoint.com/cyber-hub/network-security/what-is-an-intrusion-detection-system-ids/ (accessed on 19 May 2022).

- Sabahi, F.; Movaghar, A. Intrusion Detection: A Survey. In Proceedings of the 2008 Third International Conference on Systems and Networks Communications, Sliema, Malta, 26–31 October 2008; pp. 23–26.

- IBM Cloud Education. Machine Learning. Available online: https://www.ibm.com/cloud/learn/machine-learning (accessed on 19 May 2022).

- IBM Cloud Education. Supervised Learning. Available online: https://www.ibm.com/cloud/learn/supervised-learning (accessed on 19 May 2022).

- Seldon. Machine Learning Regression Explained. Available online: https://www.seldon.io/machine-learning-regression-explained (accessed on 19 May 2022).

- Terence, S. All Machine Learning Models Explained in 6 Minutes. Available online: https://www.ibm.com/cloud/learn/unsupervised-learning (accessed on 19 May 2022).

- IBM Cloud Education. Unsupervised Learning. Available online: https://www.ibm.com/cloud/learn/unsupervised-learning (accessed on 19 May 2022).

- Ben n’cir, C.-E.; Cleuziou, G.; Nadia, E. Overview of Overlapping Partitional Clustering Methods. In Partitional Clustering Algorithms; Springer: Cham, Switzerland, 2015; pp. 245–275.

- Joos, K. The Apriori Algorithm. Available online: https://towardsdatascience.com/the-apriori-algorithm-5da3db9aea95 (accessed on 22 June 2022).

- Matt, B. A One-Stop Shop for Principal Component Analysis. Available online: https://towardsdatascience.com/a-one-stop-shop-for-principal-component-analysis-5582fb7e0a9c (accessed on 28 June 2022).

- Ashiku, L.; Dagli, C. Network Intrusion Detection System using Deep Learning. Procedia Comput. Sci. 2021, 185, 239–247.

- Tavallaee, M.; Bagheri, E.; Lu, W.; Ghorbani, A.A. A detailed analysis of the KDD CUP 99 data set. In Proceedings of the 2009 IEEE Symposium on Computational Intelligence for Security and Defense Applications, Ottawa, ON, Canada, 8–10 July 2009; pp. 1–6.

- Protic, D. Review of KDD Cup ‘99, NSL-KDD and Kyoto 2006+ Datasets. Vojnoteh. Glas. 2018, 66, 580–596.

- Moustafa, N. The UNSW-NB15 Dataset. Available online: https://research.unsw.edu.au/projects/unsw-nb15-dataset (accessed on 28 February 2022).

- Sharafaldin, I.; Lashkari, A.H.; Ghorbani, A. Toward Generating a New Intrusion Detection Dataset and Intrusion Traffic Characterization; Canadian Institute for Cybersecurity (CIC): Fredericton, NB, Canada, 2018; pp. 108–116.

- Chen, L.; Kuang, X.; Xu, A.; Suo, S.; Yang, Y. A Novel Network Intrusion Detection System Based on CNN. In Proceedings of the 2020 Eighth International Conference on Advanced Cloud and Big Data (CBD), Taiyuan, China, 5–6 December 2020; pp. 243–247.

- Gautam, R.K.S.; Doegar, E.A. An Ensemble Approach for Intrusion Detection System Using Machine Learning Algorithms. In Proceedings of the 2018 8th International Conference on Cloud Computing, Data Science & Engineering (Confluence), Noida, India, 11–12 January 2018; pp. 14–15.

- Al-Yaseen, W.L.; Othman, Z.A.; Nazri, M.Z.A. Multi-level hybrid support vector machine and extreme learning machine based on modified K-means for intrusion detection system. Expert Syst. Appl. 2017, 67, 296–303.

- Kanimozhi, P.; Victoire, T.A.A. Oppositional tunicate fuzzy C-means algorithm and logistic regression for intrusion detection on cloud. Concurr. Comput. Pract. Exp. 2022, 34, e6624.

- Chen, Y.; Yuan, F. Dynamic detection of malicious intrusion in wireless network based on improved random forest algorithm. In Proceedings of the 2022 IEEE Asia-Pacific Conference on Image Processing, Electronics and Computers (IPEC), Dalian, China, 14–16 April 2022; pp. 27–32.

- Jabez, J.; Muthukumar, B. Intrusion Detection System (IDS): Anomaly Detection Using Outlier Detection Approach. Procedia Comput. Sci. 2015, 48, 338–346.

- Kurniawan, Y.; Razi, F.; Nofiyati, N.; Wijayanto, B.; Hidayat, M. Naive Bayes modification for intrusion detection system classification with zero probability. Bull. Electr. Eng. Inform. 2021, 10, 2751–2758.

- Chauhan, N. Naïve Bayes Algorithm: Everything You Need to Know. Available online: https://www.kdnuggets.com/2020/06/naive-bayes-algorithm-everything.html#:~:text=One%20of%20the%20disadvantages%20of,all%20the%20probabilities%20are%20multiplied (accessed on 9 September 2022).

- Gu, J.; Lu, S. An effective intrusion detection approach using SVM with naïve Bayes feature embedding. Comput. Secur. 2021, 103, 102158.

- Pan, J.-S.; Fan, F.; Chu, S.C.; Zhao, H.; Liu, G. A Lightweight Intelligent Intrusion Detection Model for Wireless Sensor Networks. Secur. Commun. Networks 2021, 2021, 1–15.

- Xiao, Y.; Xing, C.; Zhang, T.; Zhao, Z. An Intrusion Detection Model Based on Feature Reduction and Convolutional Neural Networks. IEEE Access 2019, 7, 42210–42219.

- Zhang, X.; Chen, J.; Zhou, Y.; Han, L.; Lin, J. A Multiple-Layer Representation Learning Model for Network-Based Attack Detection. IEEE Access 2019, 7, 91992–92008.

- Yu, Y.; Bian, N. An Intrusion Detection Method Using Few-Shot Learning. IEEE Access 2020, 8, 49730–49740.

- Gao, X.; Shan, C.; Hu, C.; Niu, Z.; Liu, Z. An Adaptive Ensemble Machine Learning Model for Intrusion Detection. IEEE Access 2019, 7, 82512–82521.

- Marir, N.; Wang, H.; Feng, G.; Li, B.; Jia, M. Distributed Abnormal Behavior Detection Approach Based on Deep Belief Network and Ensemble SVM Using Spark. IEEE Access 2018, 6, 59657–59671.

- Wei, P.; Li, Y.; Zhang, Z.; Hu, T.; Li, Z.; Liu, D. An Optimization Method for Intrusion Detection Classification Model Based on Deep Belief Network. IEEE Access 2019, 7, 87593–87605.

- Vinayakumar, R.; Alazab, M.; Soman, K.P.; Poornachandran, P.; Al-Nemrat, A.; Venkatraman, S. Deep Learning Approach for Intelligent Intrusion Detection System. IEEE Access 2019, 7, 41525–41550.

- Shone, N.; Ngoc, T.N.; Phai, V.D.; Shi, Q. A Deep Learning Approach to Network Intrusion Detection. IEEE Trans. Emerg. Top. Comput. Intell. 2018, 2, 41–50.

- Yan, B.; Han, G. Effective Feature Extraction via Stacked Sparse Autoencoder to Improve Intrusion Detection System. IEEE Access 2018, 6, 41238–41248.

- Khan, F.A.; Gumaei, A.; Derhab, A.; Hussain, A. A Novel Two-Stage Deep Learning Model for Efficient Network Intrusion Detection. IEEE Access 2019, 7, 30373–30385.

- Andresini, G.; Appice, A.; Mauro, N.D.; Loglisci, C.; Malerba, D. Multi-Channel Deep Feature Learning for Intrusion Detection. IEEE Access 2020, 8, 53346–53359.

- Ali, M.H.; Mohammed, B.A.D.A.; Ismail, A.; Zolkipli, M.F. A New Intrusion Detection System Based on Fast Learning Network and Particle Swarm Optimization. IEEE Access 2018, 6, 20255–20261.

- Liang, D.; Liu, Q.; Zhao, B.; Zhu, Z.; Liu, D. A Clustering-SVM Ensemble Method for Intrusion Detection System. In Proceedings of the 2019 8th International Symposium on Next Generation Electronics (ISNE), Zhengzhou, China, 9–10 October 2019; pp. 1–3.

- Wisanwanichthan, T.; Thammawichai, M. A Double-Layered Hybrid Approach for Network Intrusion Detection System Using Combined Naive Bayes and SVM. IEEE Access 2021, 9, 138432–138450.

- Elhefnawy, R.; Abounaser, H.; Badr, A. A Hybrid Nested Genetic-Fuzzy Algorithm Framework for Intrusion Detection and Attacks. IEEE Access 2020, 8, 98218–98233.

| | Predicted class: Normal | Predicted class: Attack |
|---|---|---|
| Actual class: Normal | TN | FP |
| Actual class: Attack | FN | TP |

| ML Methods | Frequently Used Algorithms | Advantages | Disadvantages |
|---|---|---|---|
| Supervised ML Based on Known + unknown = Known (Labelled data used to generate a function that maps an input to an output). | SVM, Random Forest, Linear and Logistic Regression [6,7] | Uses labelled/trained data sets and can work with larger data sets. Works well in predictive environment/use-cases. | Takes a longer time to compute. |
| Unsupervised ML Based on Known + unknown = Unknown (Uses unlabeled data) | K-means, Apriori, Principal Component Analysis [9,10,11] | Uses unlabeled data sets, works well in analytical environment/use-cases | Takes less time to compute |
| Semi-Supervised ML (Mixed labelled and unlabelled) | Q-Learning, Deep Learning (DQN) | Is a combination of supervised and unsupervised learning and enables the predictive and analytical aspects of analysing data. | Time factor and computing complexity may depend on the combination of algorithms used |

| Dataset | Year | Number of Attack Types | Attacks |
|---|---|---|---|
| KDDcup99 | 1999 | 4 | Probe, DoS, U2R, R2L |
| Kyoto 2006 | 2006 | 2 | Known attacks, Unknown attacks |
| NSL-KDD | 2009 | 4 | Probe, DoS, U2R, R2L |
| UNSW-NB15 | 2015 | 9 | Fuzzers, Analysis, Backdoors, DoS, Exploits, Generic, Reconnaissance, Shellcode, Worms |
| CICIDS2017 | 2017 | 7 | Brute Force, DoS, HeartBleed, Web attack, Infiltration, Botnet, DDoS |

| Authors | Year | Feature Extraction Method Used | Classifier Used | Attack Detected |

|---|---|---|---|---|

| Jabez et al. [23] | 2015 | NA | Outlier detection | Network attacks |

| Al-Yaseen et al. [20] | 2017 | Modified K-means | Multi-level Hybrid SVM and ELM | DoS, User to Root (U2R) and Remote to Local (R2L) attacks |

| Rohit et al. [19] | 2018 | Correlation method | Ensemble method (Naïve Bayes, PART, and Adaptive Boost) | DoS, Probe, U2R, R2L |

| Marir et al. [32] | 2018 | Deep Belief Network | Multi-layer ensemble method SVM | DoS, U2R, R2L, Probe, Fuzzers, Analysis, Backdoors, Exploits, Generic, Reconnaissance, Shellcode, Worms, Brute Force FTP, Brute Force SSH, Heartbleed, Web Attack, Infiltration, Botnet and DDoS |

| Shone et al. [35] | 2018 | Non-symmetric Deep Auto-Encoder (NDAE) | Random Forest | DoS (back, land, Neptune), Probe (ipsweep, nmap, portsweep, satan), R2L (ftp_write, guess_password, imap, multihop, phf, spy, warezclient, warezmaster), U2R (loadmodule, buffer_overflow, rootkit, perl) |

| Yan et al. [36] | 2018 | Stacked Sparse Auto-Encoder (SSAE) | SVM | DoS, Probe, R2L, U2R |

| Ali et al. [39] | 2018 | NA | Fast Learning Network improved using PSO | DoS, U2R, R2L and Probing |

| Xiao et al. [28] | 2019 | PCA and Auto-Encoder | CNN | DoS, U2R (illegal access to local superuser privileges), R2L (illegal access from remote machines), Probe (surveillance and probing). |

| Zhang et al. [29] | 2019 | NA | CNN with improved gcForest | DoS, Exploits, Generic, Reconnaissance, Virus and Web attacks |

| Gao et al. [31] | 2019 | PCA | Ensemble method (DT, RF, KNN, DNN and MultiTree) | DoS (SYN flood), Probe (port scanning), R2L (guessing password), U2R (buffer overflow attacks) |

| Wei et al. [33] | 2019 | NA | DBN improved using optimizing algorithm (PSO-AFSA-GA) | Analysed 39 types of attacks that fall under the following categories: Probe (scan and probe), DoS, U2R (illegal access to local superuser) and R2L (unauthorized remote access) |

| Vinayakumar et al. [34] | 2019 | NA | DNN with scalable hidden layers | Normal, DoS, Probe, R2L, U2R |

| Khan et al. [37] | 2019 | Deep Stack Auto-Encoder (DSAE) | Soft-max | Normal, DoS, Probe, R2L, U2R (22 different categories of attacks tested, i.e., analysis, backdoor, exploits, fuzzers, generic, reconnaissance, shellcode, worm) |

| Dong et al. [40] | 2019 | NA | K-mean clustering with SVM | - |

| Lin et al. [18] | 2020 | NA | CNN | FTP Brute Force, SSH Brute Force, DoS (slowloris, slowhttptest, Hulk), Web attacks (web brute force, XSS, SQL injection), penetration attacks (infiltration Dropbox download) |

| Yu et al. [30] | 2020 | Embedded function using CNN and DNN | Few-Shot Learning | DoS (Teardrop, Smurf), Probe (Satan, Portsweep, Saint), U2R (Rootkit, Buffer_overflow, Loadmodule) and R2L (Xsnoop, Httptunnel). Other classes tested were: normal, generic, fuzzers, reconnaissance, shellcode, worms, backdoor and exploits. |

| Andresini et al. [38] | 2020 | Dual Auto-Encoder | Soft-max | - |

| Elhefnawy et al. [42] | 2020 | Naïve Bayes | Hybrid Nested Genetic Fuzzy Algorithm | Probe, DoS, U2R, and R2L. Other classes tested were: normal, generic, fuzzers, reconnaissance, shellcode, worms, backdoor and exploits. |

| Lirim et al. [17] | 2021 | NA | CNN with multi-layer perceptron | DoS, DDoS, PortScan, Web Attack, Heartbleed, Benign, Infiltration, Brute Force, SSH, FTP |

| Kanimozhi et al. [21] | 2021 | Logistic Regression | Oppositional tunicate fuzzy C-mean | - |

| Kurniawan et al. [24] | 2021 | Correlation-based features selection | Modified Naïve Bayes | Normal, DoS, Probe, R2L, U2R |

| Gu et al. [26] | 2021 | Naïve Bayes | SVM | - |

| Pan et al. [27] | 2021 | NA | KNN using PM-CSCA for optimization | DoS, Sniffing (Probe), U2R and R2L |

| Wisanwanichthan et al. [41] | 2021 | ICFS and PCA | Naïve Bayes and SVM | Employed Double Layered Hybrid Approach (DLHA) for detecting DoS, Probe, R2L and U2R |

| Yiping et al. [22] | 2022 | NA | Improved random forest algorithm | Wireless network attacks |

| Authors | Advantages | Disadvantages |

|---|---|---|

| Jabez et al. [23] (2015) | An outlier detection approach for IDS. The neighborhood outlier factor is used to detect isolated points. Compared to back propagation neural network the execution time is significantly better. | Old dataset KDDcup99 was used, and no information was given on the accuracy for the different types of attacks. |

| Al-Yaseen et al. [20] (2017) | A hybrid multi-level model merging SVM and ELM. The model is divided into 5 levels, with each level responsible for detecting one category of attack. In addition, they used Modified K-means for feature extraction. They achieved a better overall accuracy compared to multi-level SVM and ELM. | Old dataset used for the model. The accuracy for R2L and U2R attacks is very low. |

| Rohit et al. [19] (2018) | An ensemble approach combining three algorithms: Naïve Bayes, PART, and Adaptive Boost with an average voting result to decide the outcome. Feature extraction methods are used. | The dataset used, KDDcup99, is very old. There is no description of how well the model can classify the different categories of attacks. |

| Marir et al. [32] (2018) | Use of a multi-layer ensemble SVM to detect abnormal traffic. DBN was used for feature extraction before forwarding the result to the ensemble SVM and the voting algorithm. They tested their solution on old and recent datasets: KDDcup99, NSL-KDD, UNSW-NB15, and CICIDS2017. | When more layers are used, the model is more time consuming. Lack of information on the different attack classes that are identified. |

| Shone et al. [35] (2018) | A Non-symmetric Deep Auto-Encoder (NDAE) and random forest model to detect abnormal behavior. They used two NDAE that they stacked for feature extraction. Compared to a DBN solution they obtained good accuracy. | Their solution struggles to detect small classes such as R2L and U2R on old datasets. |

| Yan et al. [36] (2018) | A NIDS using Stacked Sparse Auto-Encoder (SSAE) for feature extraction and SVM as classifier. Their solution significantly reduces the time required for training and testing. | Old dataset NSL-KDD is used, and their model has a low detection rate for U2R and R2L attacks. |

| Ali et al. [39] (2018) | Use of Fast Learning Network (FLN) and particle swarm optimization to detect abnormal traffic. | Model is tested on old KDDcup99 dataset and has low accuracy when identifying one of the small classes. |

| Xiao et al. [28] (2019) | CNN model for IDS using PCA and Auto-Encoder for feature extraction. | Old dataset KDDcup99 is used, and their model has a low detection rate of U2R and R2L attacks. |

| Zhang et al. [29] (2019) | A multi-layer model combining random forest technique with CNN. They achieved an outstanding accuracy of 99.24% on a combination of two recent datasets UNSW-NB15 and CICIDS2017 compared to the single algorithms. The accuracy on each attack class is given. | Lack of feature extraction method. |

| Gao et al. [31] (2019) | An ensemble machine learning IDS combining Decision Tree, Random Forest, KNN, DNN and MultiTree. Weights are used to have a better accuracy during the voting process. PCA was used for feature extraction. | The model is less effective at detecting attacks that are represented by only a few samples. The old NSL-KDD dataset was used. |

| Wei et al. [33] (2019) | An improved DBN model using a combination of Particle Swarm, Fish Swarm and Genetic algorithm. | Model is tested on old NSL-KDD dataset. Lack of feature extraction method. |

| Vinayakumar et al. [34] (2019) | A hybrid IDS using DNN. They used old and new datasets to test their model. | Lack of feature extraction method. In addition, the model is complex and has low detection rate for weaker attack classes. |

| Khan et al. [37] (2019) | A two-stage deep learning model (TSDL) using a DNN approach to detect abnormal traffic. The first stage is used to classify the traffic with a given probability. The second stage uses this probability as an additional feature to improve the classification results. | They tested their solution on KDDcup99 and UNSW-NB15 datasets. However, although they achieved 99.996% accuracy on the old dataset, there is a gap of about 10% compared to the accuracy on the recent dataset. Thus, the model still needs to be improved to reduce this gap. |

| Dong et al. [40] (2019) | Hybrid solution combining clustering with SVM. K-means is used to divide the data into different subsets, and the SVM method is applied to each of those subsets. Their solution requires less processing time compared to SVM algorithms using different parameters. | The solution uses an old dataset, and no information was provided on the accuracy for each class of attack. |

| Lin et al. [18] (2020) | A NIDS using CNN which achieves excellent results on one of the most recent datasets, CICIDS2017. | There are no accuracy details for each kind of attack. No feature extraction methods. |

| Yu et al. [30] (2020) | IDS based on Few-Shot Learning which gives good accuracy results using only a small portion of the datasets. DNN and CNN were used to perform feature extraction. Their solution was tested on an old dataset NSL-KDD and a recent dataset UNSW-NB15. | Although the detection rates for U2R and R2L are not bad compared to other methods, there is still a lot of room for improvement. |

| Andresini et al. [38] (2020) | An IDS based on two auto-encoders for feature extraction and using a soft-max classifier to detect intrusions. The model is tested on an old and a recent dataset. | The solution does not provide details about the different types of attacks. |

| Elhefnawy et al. [42] (2020) | A Hybrid Nested Genetic-Fuzzy Algorithm (HNGFA) to detect attacks. Feature selection is performed using Naïve Bayes. The model combines two genetic-fuzzy algorithms that use the results of each other to improve the accuracy. Also, they tested their solution on an old and a recent dataset. | The model is complex and requires a high training time. |

| Lirim et al. [17] (2021) | A NIDS based on CNN with multi-layer perceptron that achieves good accuracy on a recent dataset. | The model has a class imbalance between the upper and bottom class. Lack of feature reduction methods. |

| Kanimozhi et al. [21] (2021) | An innovative method using oppositional tunicate fuzzy C-mean for cloud intrusion. Logistic regression is used for feature selection to improve the accuracy. The model is tested on a recent dataset, the CICIDS2017. | The proposed model is complex and only achieved an overall accuracy of 80%. |

| Kurniawan et al. [24] (2021) | A NIDS using a modified Naïve Bayes model. Feature selection is used to improve the model. | Old dataset NSL-KDD used. No detailed accuracy for the different classes of attacks is given. |

| Gu et al. [26] (2021) | An SVM model using Naïve Bayes for feature selection with great accuracy results on two recent datasets UNSW-NB15 and CICIDS2017. | Their solution does not identify what kind of attack is used. |

| Pan et al. [27] (2021) | KNN model using PM-CSCA for optimization that achieves great accuracy on an old dataset NSL-KDD and a recent dataset UNSW-NB15. Their solution targets wireless networks and is based on the cloud to reduce the processing time required by the algorithm. | No identification of the attack classes is provided. |

| Wisanwanichthan et al. [41] (2021) | An IDS using a Double-Layered Hybrid Approach (DLHA). They first used Intersectional Correlated Feature Selection (ICFS) and PCA to select important features. They then split the NSL-KDD dataset into two groups, one with all the classes and one with only the U2R, R2L and normal classes. These two groups are used to train the model which is composed of a first layer using Naïve Bayes classifier and a second layer using SVM. Their solution achieved outstanding accuracy for R2L and U2R with respective accuracy of 96.67% and 100%. | Although their solution outperformed other solutions when identifying small classes, the accuracy for large classes could be improved. |

| Yiping et al. [22] (2022) | A random forest model for a wireless network. | The mean accuracy obtained is 96.93%. No known datasets were used. |

| Authors | Dataset Used | Outcome/Accuracy |

|---|---|---|

| Jabez et al. [23] | KDDcup99 | NA |

| Al-Yaseen et al. [20] | KDDcup99 | 95.75% |

| Rohit et al. [19] | KDDcup99 | 99.97% |

| Marir et al. [32] | KDDcup99 NSL-KDD UNSW-NB15 CICIDS2017 | 94.76% (KDDcup99) 97.27% (NSL-KDD) 90.47% (UNSW-NB15) 90.40% (CICIDS2017) |

| Shone et al. [35] | KDDcup99 NSL-KDD | 97.85% (KDDcup99) 85.42% (NSL-KDD) |

| Yan et al. [36] | NSL-KDD | 99.35% |

| Ali et al. [39] | KDDcup99 | 89.23% |

| Xiao et al. [28] | KDDcup99 | 94% |

| Zhang et al. [29] | Combination of UNSW-NB15 and CICIDS2017 | 99.24% |

| Gao et al. [31] | NSL-KDD | 85.20% |

| Wei et al. [33] | NSL-KDD | 82.36% |

| Vinayakumar et al. [34] | KDDcup99 NSL-KDD Kyoto UNSW-NB15 CICIDS2017 | 93% (KDDcup99) 79.42% (NSL-KDD) 87.78% (Kyoto) 76.48% (UNSW-NB15) 94.5% (CICIDS2017) |

| Khan et al. [37] | KDDcup99 UNSW-NB15 | 99.996% (KDDcup99) 89.134% (UNSW-NB15) |

| Dong et al. [40] | NSL-KDD | 99.45% |

| Lin et al. [18] | CICIDS2017 | 99.56% |

| Yu et al. [30] | NSL-KDD UNSW-NB15 | 92.34% (NSL-KDD) 92% (UNSW-NB15) |

| Andresini et al. [38] | KDDcup99 UNSW-NB15 CICIDS2017 | 92.49% (KDDcup99) 93.40% (UNSW-NB15) 97.90% (CICIDS2017) |

| Elhefnawy et al. [42] | KDDcup99 UNSW-NB15 | 98.19% (KDDcup99) 80.54% (UNSW-NB15) |

| Lirim et al. [17] | UNSW-NB15 | 95.60% |

| Kanimozhi et al. [21] | CICIDS2017 | 80% |

| Kurniawan et al. [24] | NSL-KDD | 89.33% |

| Gu et al. [26] | CICIDS2017 UNSW-NB15 | 98.92% (CICIDS2017) 93.75% (UNSW-NB15) |

| Pan et al. [27] | NSL-KDD UNSW-NB15 | 99.33% (NSL-KDD) 98.27% (UNSW-NB15) |

| Wisanwanichthan et al. [41] | NSL-KDD | 93.11% |

| Yiping et al. [22] | NA | 96.93% |

| Authors | Accuracy | Precision | Detection Rate | F-Measure | False Alarm Rate | ROC Curves | Others |

|---|---|---|---|---|---|---|---|

| Jabez et al. [23] | ✓ | ||||||

| Al-Yaseen et al. [20] | ✓ | ✓ | ✓ | ✓ | |||

| Rohit et al. [19] | ✓ | ✓ | ✓ | ||||

| Marir et al. [32] | ✓ | ✓ | ✓ | ✓ | ✓ | ||

| Shone et al. [35] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |

| Yan et al. [36] | ✓ | ✓ | ✓ | ✓ | |||

| Ali et al. [39] | ✓ | ||||||

| Xiao et al. [28] | ✓ | ✓ | ✓ | ✓ | |||

| Zhang et al. [29] | ✓ | ✓ | ✓ | ||||

| Gao et al. [31] | ✓ | ✓ | ✓ | ✓ | |||

| Wei et al. [33] | ✓ | ✓ | ✓ | ✓ | |||

| Vinayakumar et al. [34] | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |

| Khan et al. [37] | ✓ | ✓ | ✓ | ✓ | ✓ | ||

| Dong et al. [40] | ✓ | ✓ | ✓ | ||||

| Lin et al. [18] | ✓ | ||||||

| Yu et al. [30] | ✓ | ✓ | ✓ | ✓ | ✓ | ||

| Andresini et al. [38] | ✓ | ✓ | |||||

| Elhefnawy et al. [42] | ✓ | ✓ | ✓ | ✓ | ✓ | ||

| Lirim et al. [17] | ✓ | ✓ | |||||

| Kanimozhi et al. [21] | ✓ | ✓ | ✓ | ||||

| Kurniawan et al. [24] | ✓ | ✓ | ✓ | ||||

| Gu et al. [26] | ✓ | ✓ | ✓ | ||||

| Pan et al. [27] | ✓ | ✓ | ✓ | ✓ | |||

| Wisanwanichthan et al. [41] | ✓ | ✓ | ✓ | ✓ | ✓ | ||

| Yiping et al. [22] | ✓ | ✓ | |||||

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Vanin, P.; Newe, T.; Dhirani, L.L.; O’Connell, E.; O’Shea, D.; Lee, B.; Rao, M. A Study of Network Intrusion Detection Systems Using Artificial Intelligence/Machine Learning. Appl. Sci. 2022, 12, 11752. https://doi.org/10.3390/app122211752