Towards a Technological Ecosystem to Provide Information Dashboards as a Service: A Dynamic Proposal for Supplying Dashboards Adapted to Specific Scenarios

Abstract

1. Introduction

2. Background

3. Materials and Methods

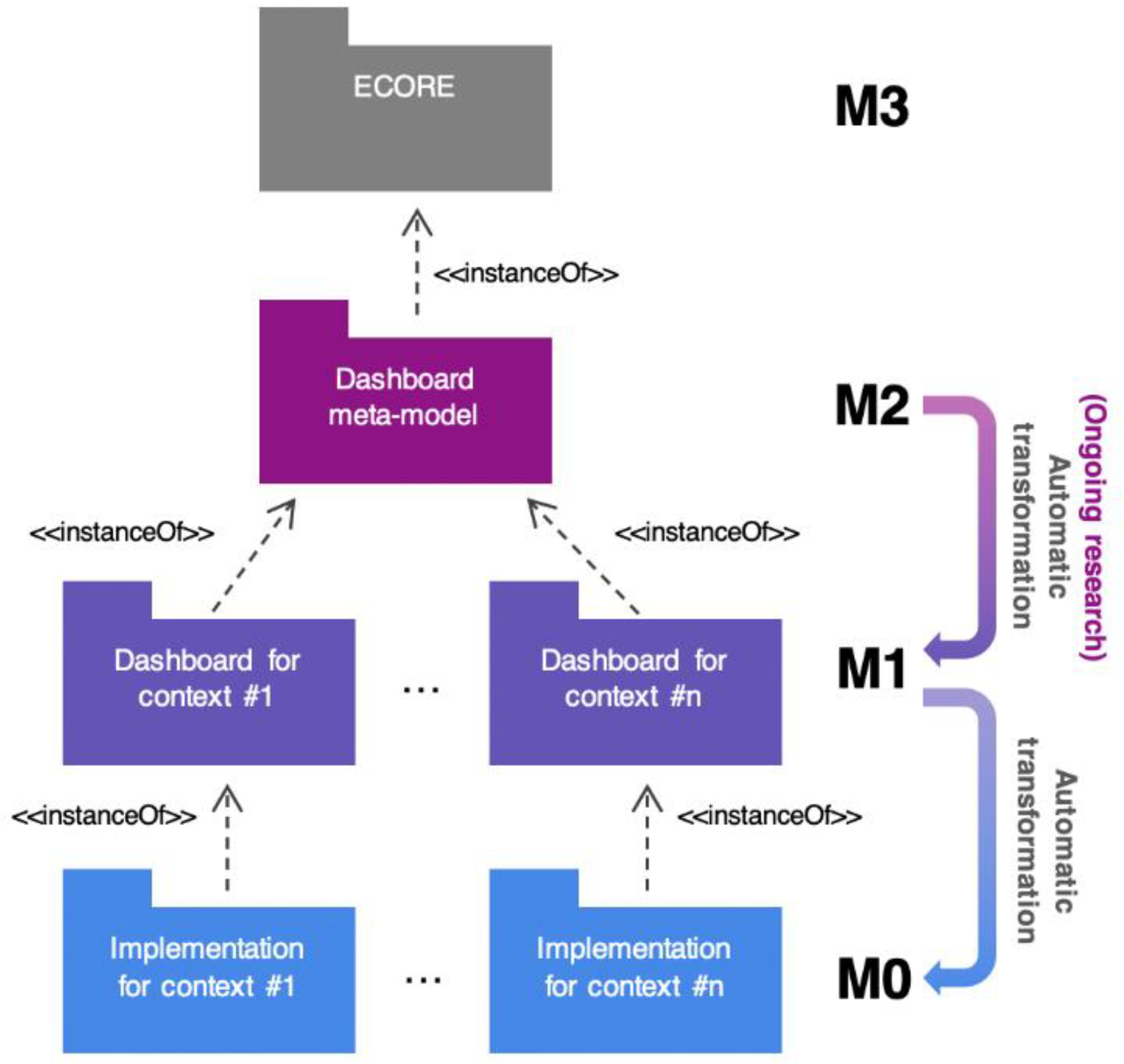

3.1. Metamodeling

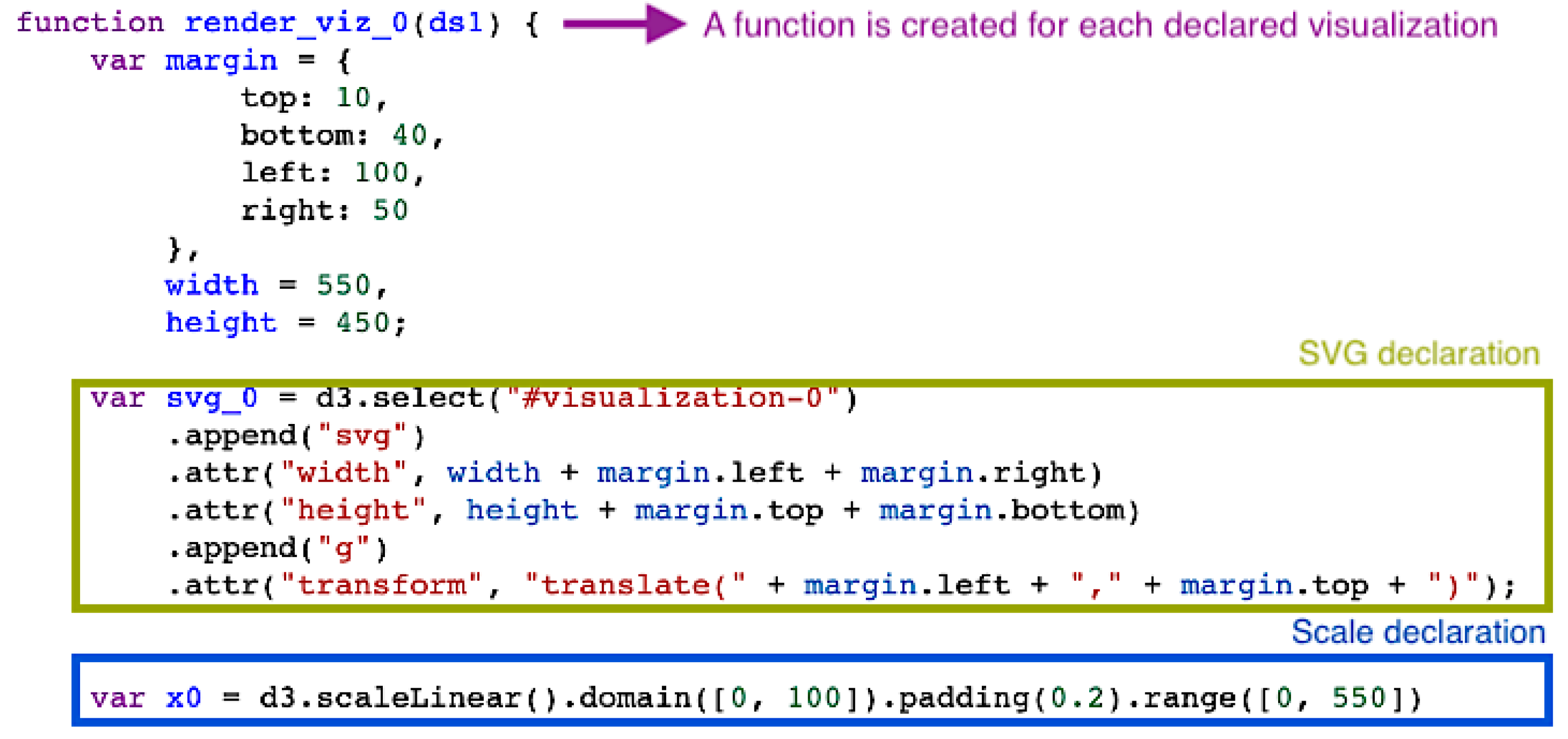

3.2. Code Templates

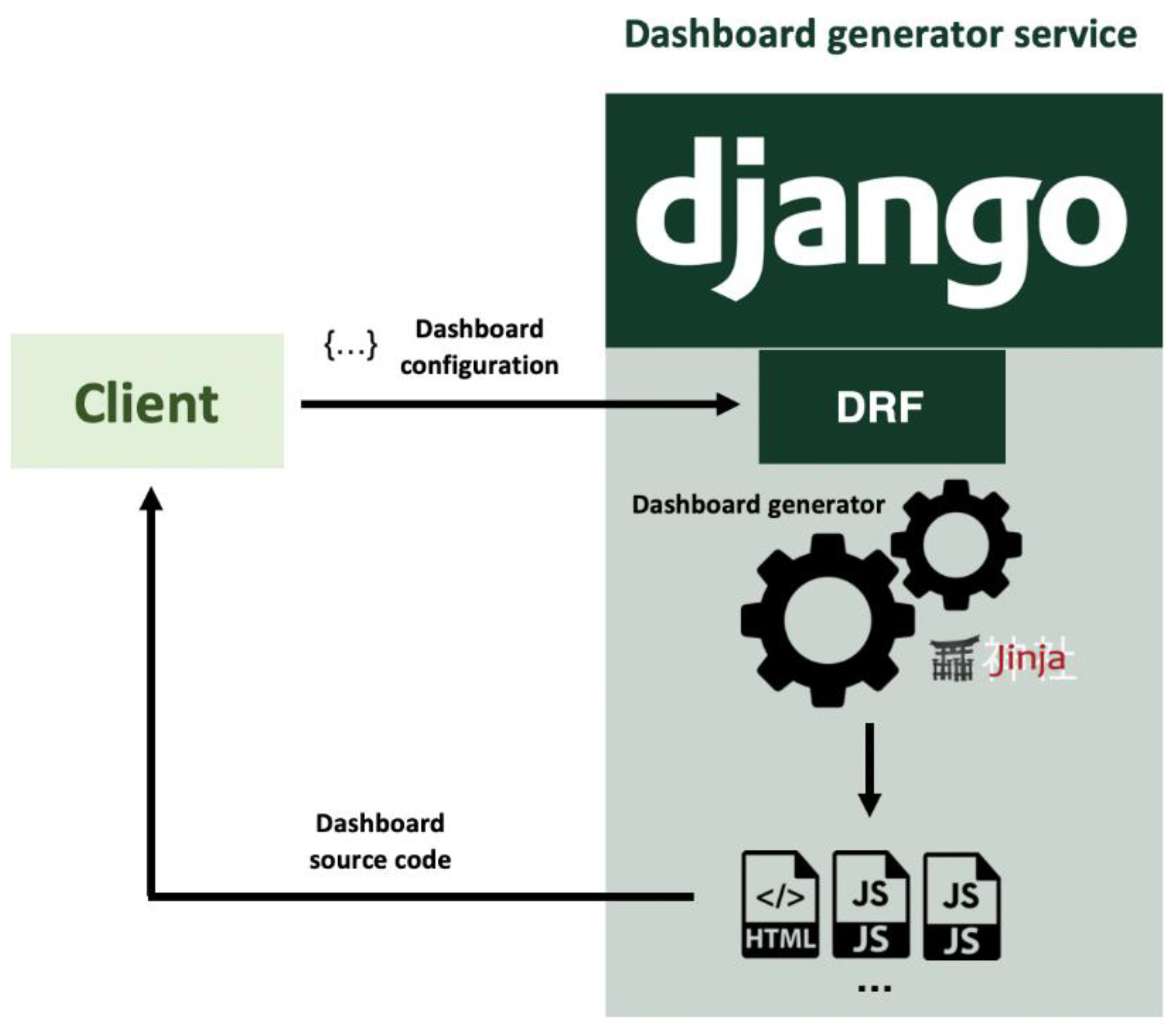

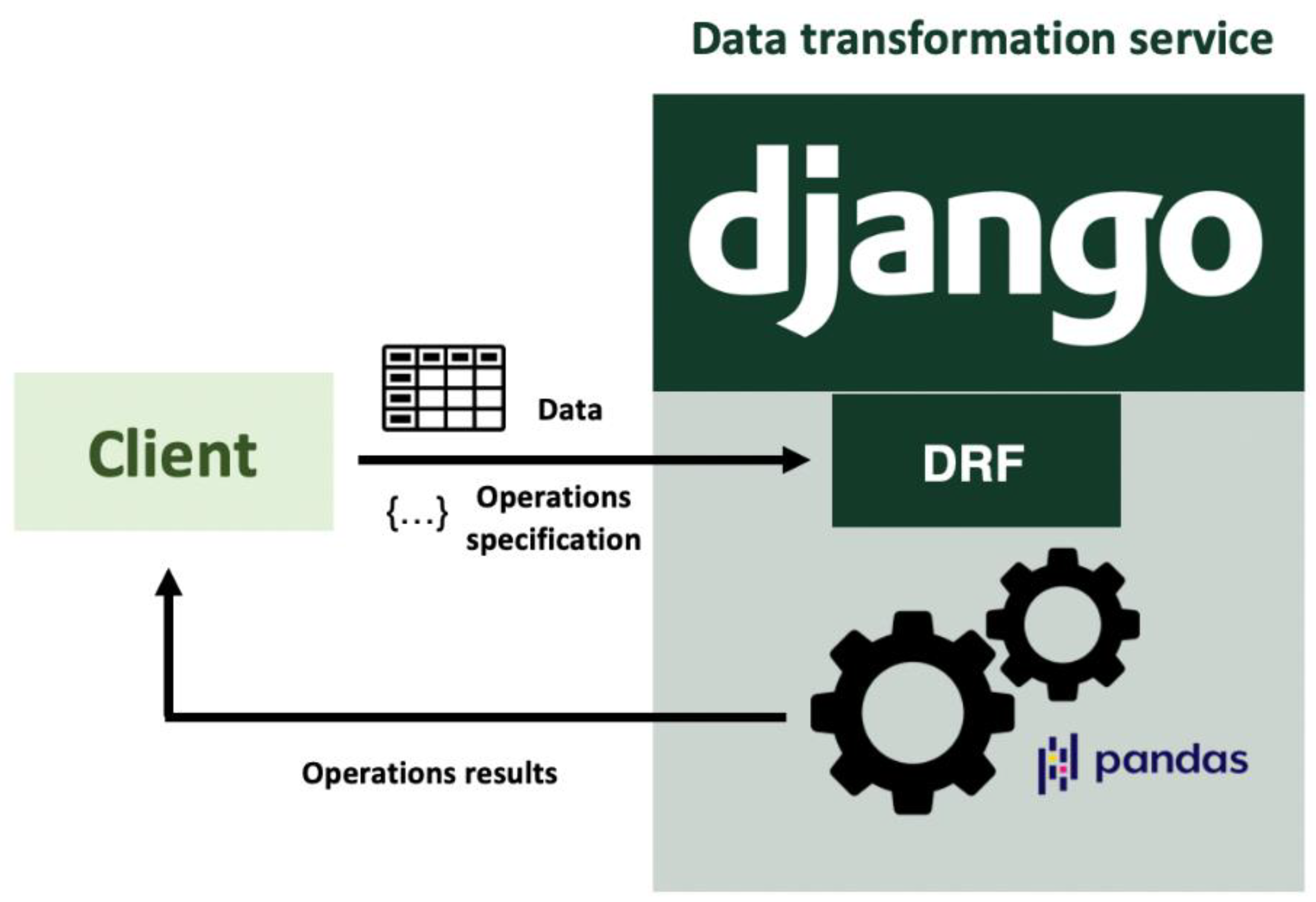

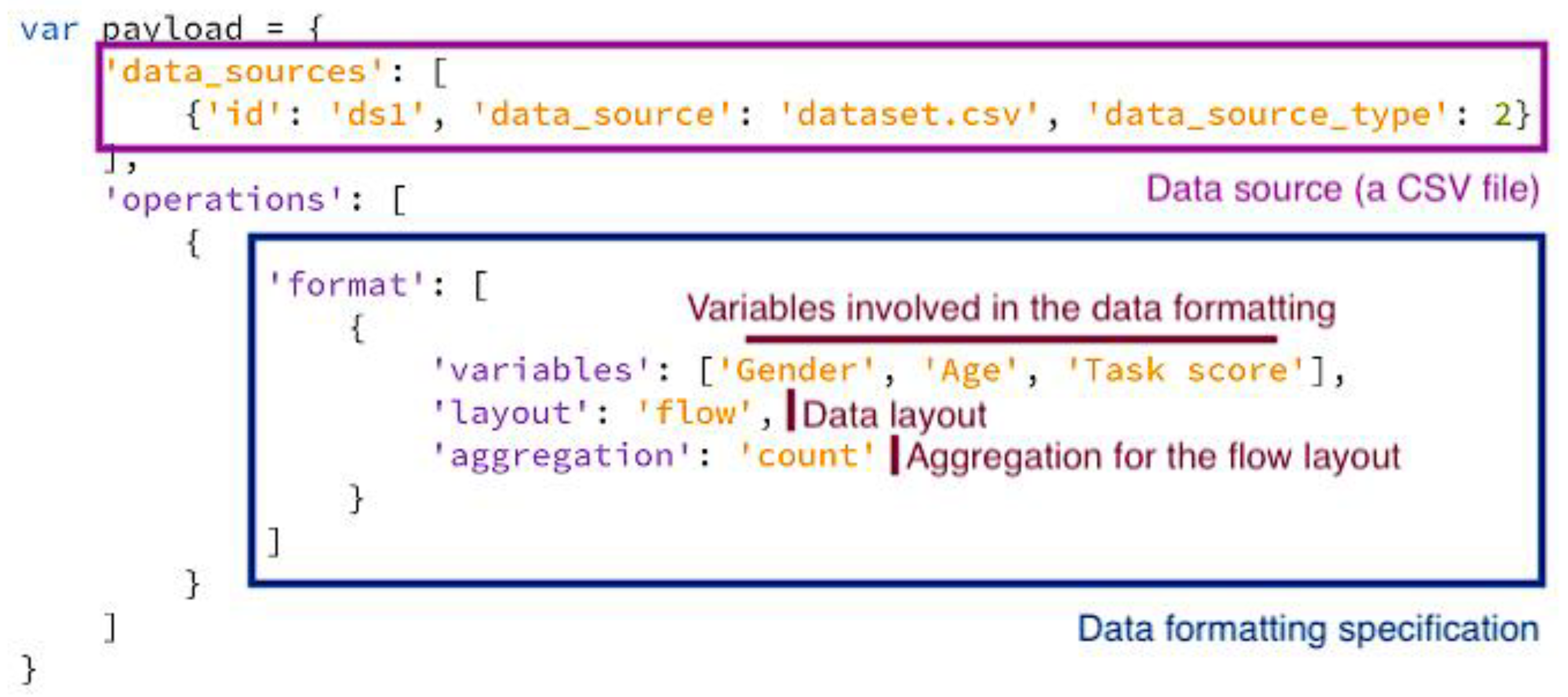

3.3. Code as a Service

4. Architecture Proposal

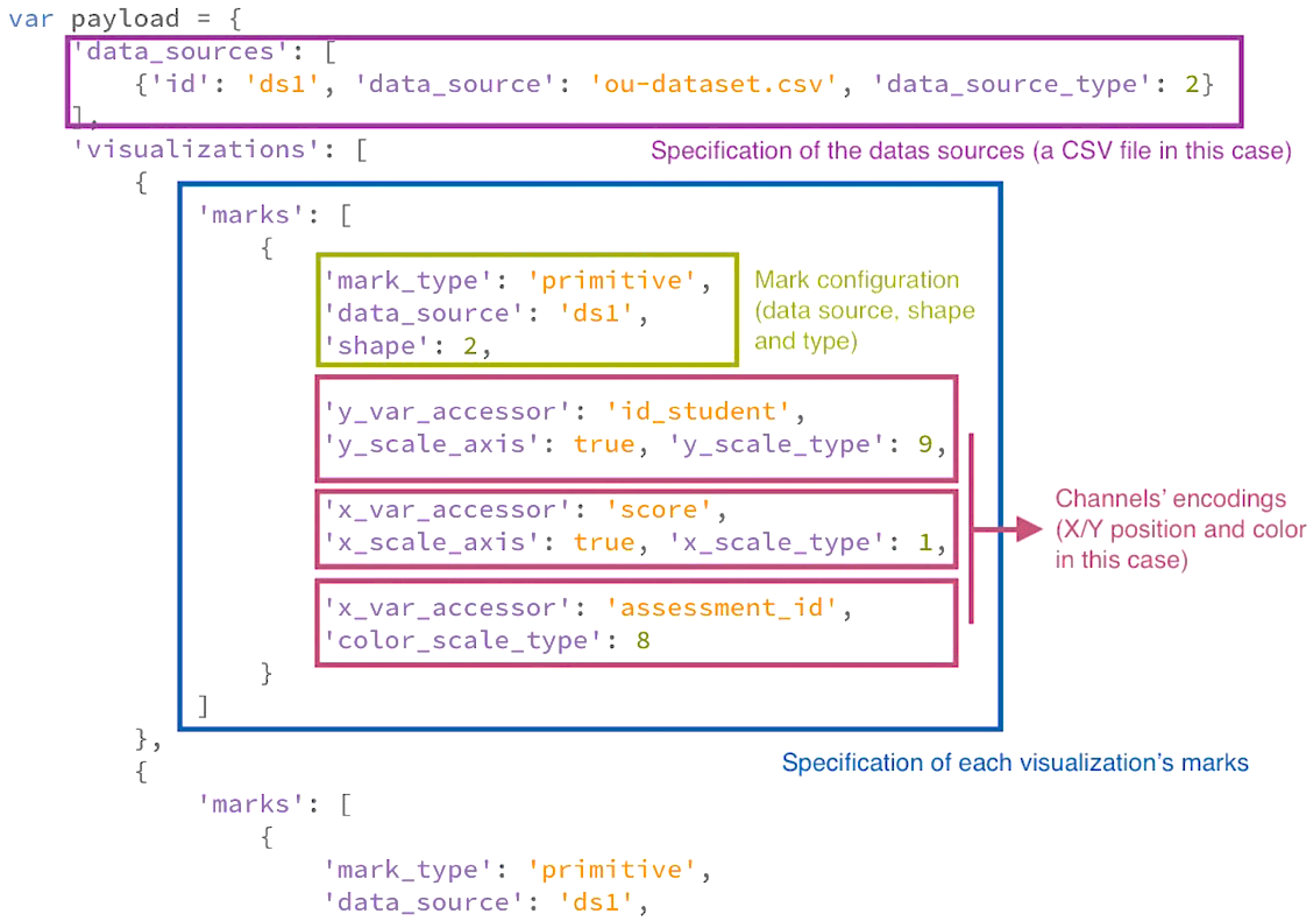

- Information about the dataset or datasets to be displayed. Data sources could be external APIs or files.

- The disposition or layout of the elements.

- The features of the visualization:

  - Number and type (X position, Y position, size, color, etc.) of visual channels;

  - Visual mark type (bar, circle, topographic, arc, etc.);

  - Dataset’s variables to be represented;

  - Interaction events and effects [42].
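As an illustration only, the configuration items listed above (data sources, layout, and visualization features) could be captured in a small typed structure. The sketch below is a minimal one using Python dataclasses; every class, field, and value name is hypothetical and is not taken from the article's metamodel or service interface.

```python
from dataclasses import dataclass, field
from typing import List

# All class and field names are hypothetical; the text only lists the kinds
# of information that a dashboard configuration carries.

@dataclass
class DataSource:
    kind: str       # "api" or "file"
    location: str   # URL or file path

@dataclass
class Channel:
    kind: str       # e.g. "x", "y", "size", "color"
    variable: str   # dataset variable mapped to this channel

@dataclass
class Interaction:
    event: str      # e.g. "click", "hover"
    effect: str     # e.g. "filter", "highlight"

@dataclass
class Visualization:
    mark: str                                   # e.g. "bar", "circle", "arc"
    channels: List[Channel]
    interactions: List[Interaction] = field(default_factory=list)

    def variables(self) -> List[str]:
        """Dataset variables represented, in channel order, without repeats."""
        seen: List[str] = []
        for channel in self.channels:
            if channel.variable not in seen:
                seen.append(channel.variable)
        return seen

@dataclass
class DashboardConfig:
    sources: List[DataSource]
    layout: List[List[int]]                     # rows of indices into `visualizations`
    visualizations: List[Visualization]

    def validate(self) -> None:
        """Check that the layout only references declared visualizations."""
        for row in self.layout:
            for index in row:
                if not 0 <= index < len(self.visualizations):
                    raise ValueError(f"layout references unknown visualization {index}")

# Example instance: one bar chart fed by a (hypothetical) local file.
config = DashboardConfig(
    sources=[DataSource(kind="file", location="scores.csv")],
    layout=[[0]],
    visualizations=[
        Visualization(
            mark="bar",
            channels=[Channel("x", "module"), Channel("y", "score"), Channel("color", "module")],
            interactions=[Interaction(event="hover", effect="highlight")],
        )
    ],
)
config.validate()
print(config.visualizations[0].variables())
```

Keeping the layout as indices into the visualization list is one possible design choice; it lets a single consistency check catch a layout that points at a visualization that was never declared.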

- The set of variables from the dataset that will take part in the computations;

- The operation or operations to be performed (summary statistics, regressions, ratios, etc.);

- Filters (optional);

- Groupings (optional);

- Output data layout: tabular (default), nested, linked, etc.
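The items above describe what a data-operation request needs to state: the variables, the operation, optional filters and groupings, and the output layout. The following sketch shows one way such a specification might be evaluated over in-memory records. The function name `compute`, its parameters, the small operation registry, and the sample records are all assumptions made for illustration; regressions, ratios, and the "linked" layout are deliberately left out.

```python
from collections import defaultdict
from statistics import mean
from typing import Any, Callable, Dict, List, Optional, Sequence

Row = Dict[str, Any]

# Hypothetical operation registry covering only simple summary statistics.
OPERATIONS: Dict[str, Callable[[Sequence[float]], float]] = {
    "mean": mean,
    "sum": sum,
    "count": len,
}

def compute(rows: List[Row], variables: List[str], operation: str,
            filters: Optional[Dict[str, Any]] = None,
            group_by: Optional[List[str]] = None,
            layout: str = "tabular"):
    """Apply one operation to the chosen variables, with optional filters and groupings."""
    if operation not in OPERATIONS:
        raise ValueError(f"unsupported operation: {operation}")
    if layout not in ("tabular", "nested"):
        raise ValueError(f"unsupported layout: {layout}")
    op = OPERATIONS[operation]
    keys = group_by or []

    # Filters (optional): keep rows whose fields equal every requested value.
    if filters:
        rows = [r for r in rows if all(r.get(k) == v for k, v in filters.items())]

    # Groupings (optional): with no grouping, all rows share the empty key.
    groups: Dict[tuple, List[Row]] = defaultdict(list)
    for row in rows:
        groups[tuple(row[k] for k in keys)].append(row)

    # One output record per group, holding the group values and the results.
    table = []
    for key, members in groups.items():
        record = dict(zip(keys, key))
        for var in variables:
            record[var] = op([m[var] for m in members])
        table.append(record)

    if layout == "tabular":
        return table
    # Nested layout: map each group key to its computed values.
    return {tuple(r[k] for k in keys): {v: r[v] for v in variables} for r in table}

# Made-up sample records, used only to exercise the function.
rows = [
    {"module": "AAA", "region": "North", "score": 70},
    {"module": "AAA", "region": "South", "score": 80},
    {"module": "BBB", "region": "North", "score": 60},
]
by_module = compute(rows, ["score"], "mean", group_by=["module"])
north_count = compute(rows, ["score"], "count", filters={"region": "North"})
```

Here `by_module` holds one record per module and `north_count` holds a single record, since no grouping was requested.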

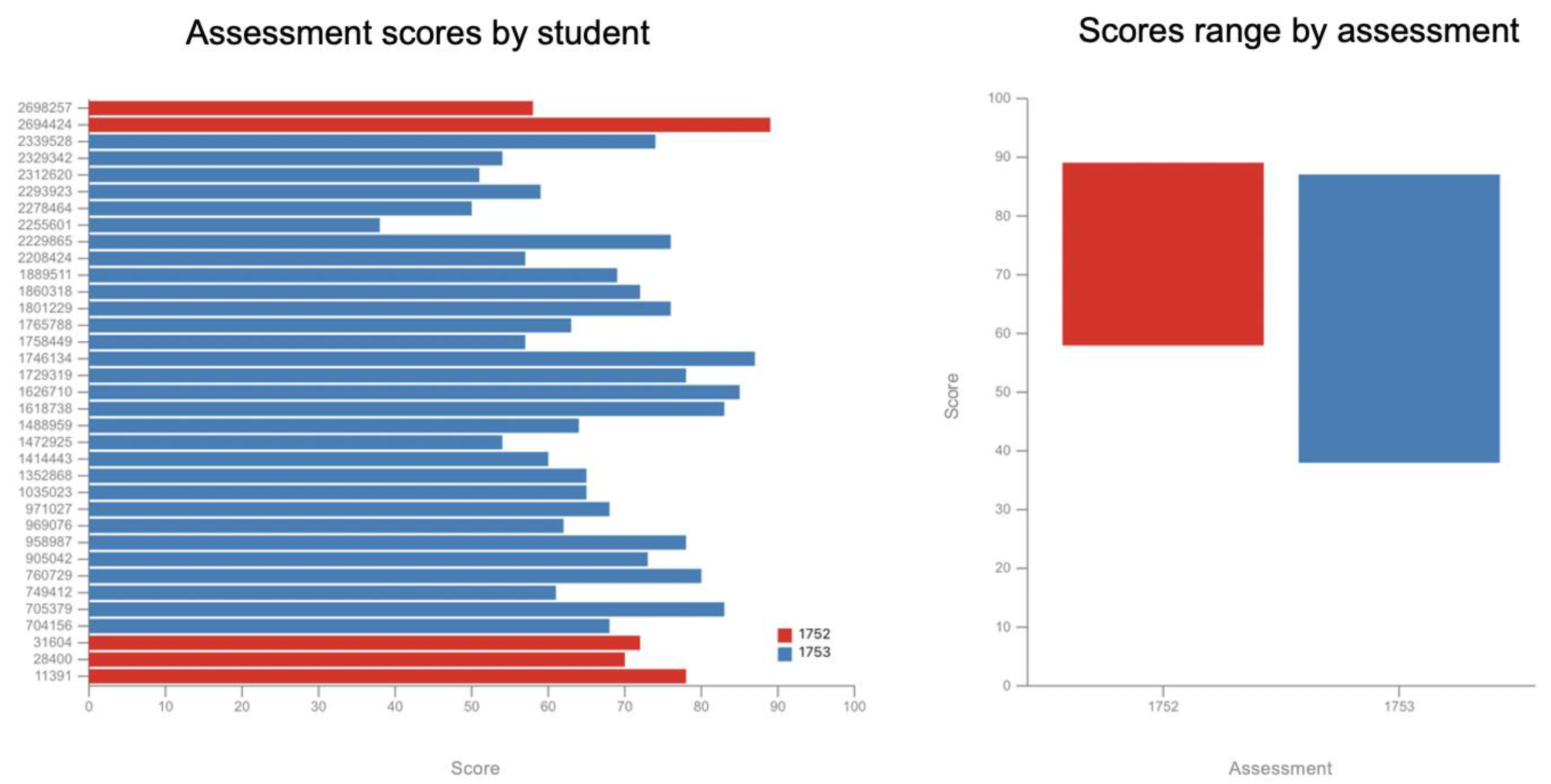

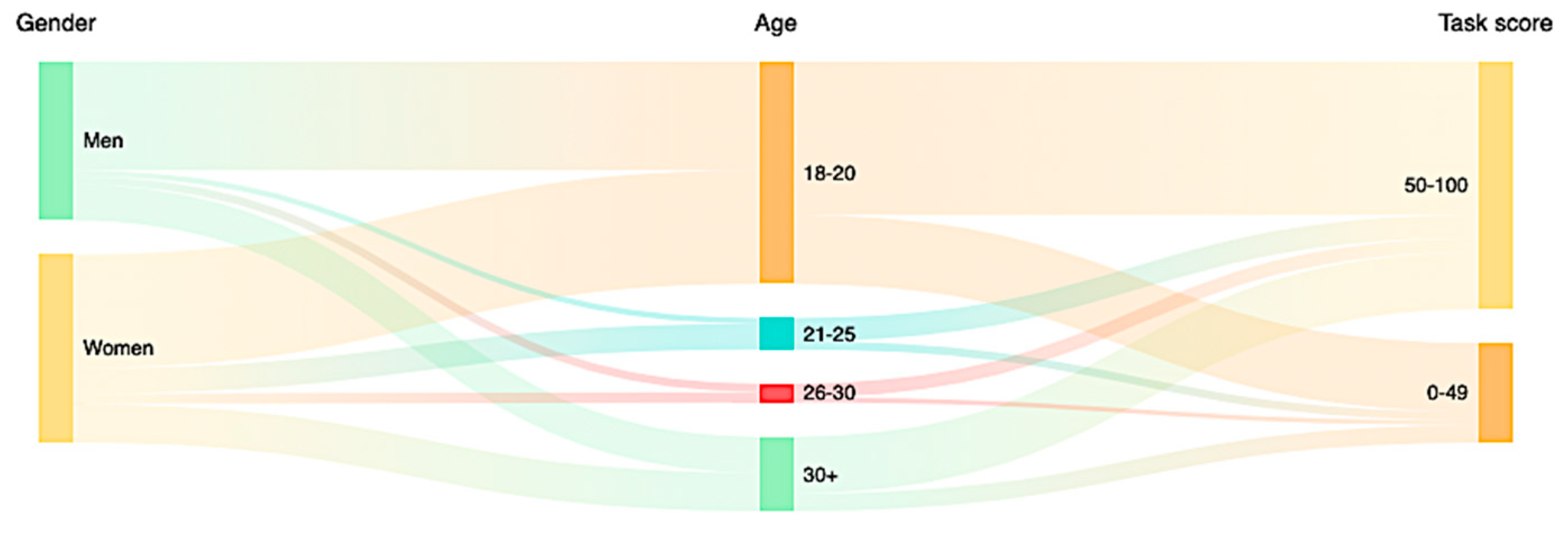

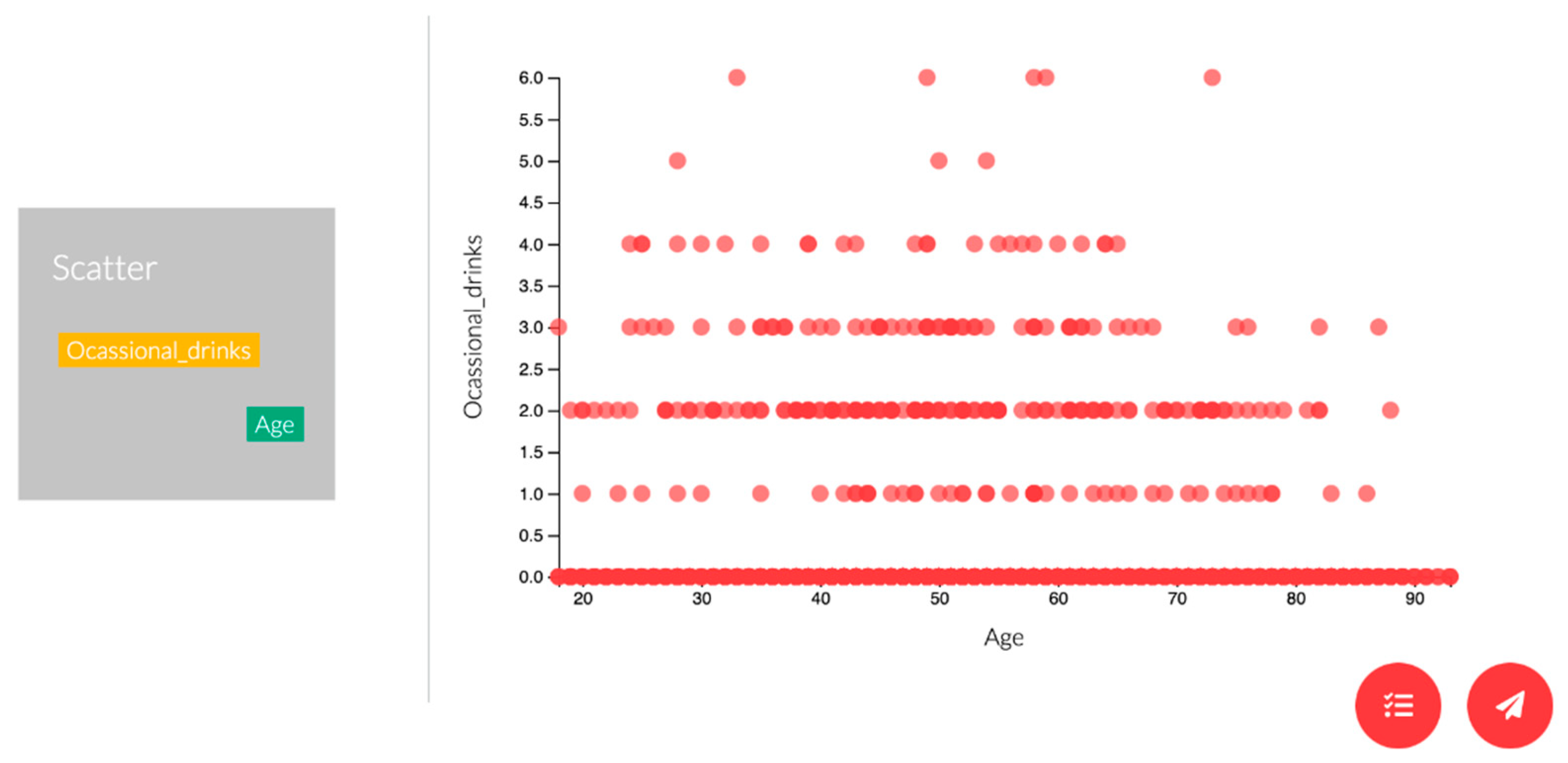

5. Use Cases

5.1. Requesting Source Code to Obtain a Standalone Dashboard

- Creation of the SVG container;

- Declaration of the scales;

- Creation of the visual marks;

- Addition of each visual mark’s channels.
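The four steps above can be mirrored in a short, library-free sketch. This is not the generated source code the article refers to: it is a plain Python stand-in that writes SVG text directly, and the function names, parameters, default sizes, and sample data are all assumptions chosen for illustration.

```python
from typing import Any, Callable, Dict, List
from xml.sax.saxutils import escape, quoteattr

def linear_scale(domain_max: float, range_max: float) -> Callable[[float], float]:
    """Map the interval [0, domain_max] linearly onto [0, range_max]."""
    def scale(value: float) -> float:
        return value / domain_max * range_max if domain_max else 0.0
    return scale

def render_bar_chart(data: List[Dict[str, Any]], x_var: str, y_var: str,
                     width: int = 300, height: int = 150,
                     color: str = "steelblue") -> str:
    """Return an SVG bar chart as text, following the four steps in order."""
    # 1. Creation of the SVG container
    parts = [f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" height="{height}">']

    # 2. Declaration of the scales
    band = width / len(data) if data else 0.0            # horizontal slot per record
    y = linear_scale(max((d[y_var] for d in data), default=0), height)

    # 3. Creation of the visual marks (one <rect> per record)
    for i, record in enumerate(data):
        bar_height = y(record[y_var])
        # 4. Addition of each visual mark's channels (position, size, color)
        channels = {
            "x": i * band,
            "y": height - bar_height,
            "width": band * 0.9,
            "height": bar_height,
            "fill": color,
        }
        attrs = " ".join(f"{name}={quoteattr(str(value))}" for name, value in channels.items())
        label = escape(str(record[x_var]))                # category shown as a tooltip
        parts.append(f"  <rect {attrs}><title>{label}</title></rect>")

    parts.append("</svg>")
    return "\n".join(parts)

# Made-up sample data, used only to exercise the function.
svg = render_bar_chart(
    [{"module": "AAA", "score": 50}, {"module": "BBB", "score": 100}],
    x_var="module",
    y_var="score",
)
print(svg)
```

Keeping the scale declaration separate from mark creation means the same marks could be redrawn at another size by changing only `width` and `height`.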

5.2. Integration with Other Components

5.3. Dynamic Implementation of a Dashboard with Educative Purposes

6. Discussion

7. Limitations

8. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

- Sarikaya, A.; Correll, M.; Bartram, L.; Tory, M.; Fisher, D. What Do We Talk About When We Talk About Dashboards? IEEE Trans. Vis. Comput. Graph. 2018, 25, 682–692.

- García-Holgado, A.; García-Peñalvo, F.J. The evolution of the technological ecosystems: An architectural proposal to enhancing learning processes. In Proceedings of the First International Conference on Technological Ecosystems for Enhancing Multiculturality (TEEM’13), Salamanca, Spain, 14–15 November 2013; ACM International Conference Proceeding Series (ICPS); García-Peñalvo, F.J., Ed.; ACM: New York, NY, USA, 2013; pp. 565–571.

- García-Holgado, A.; García-Peñalvo, F.J. A metamodel proposal for developing learning ecosystems. In Learning and Collaboration Technologies. Novel Learning Ecosystems. 4th International Conference, LCT 2017. Held as Part of HCI International 2017, Vancouver, BC, Canada, 9–14 July 2017. Proceedings, Part I; Lecture Notes in Computer Science, No. 10295; Zaphiris, P., Ioannou, A., Eds.; Springer International Publishing: Cham, Switzerland, 2017; pp. 100–109.

- Yoo, Y.; Lee, H.; Jo, I.-H.; Park, Y. Educational dashboards for smart learning: Review of case studies. In Emerging Issues in Smart Learning; Springer: Berlin/Heidelberg, Germany, 2015; pp. 145–155.

- Álvarez-Arana, A.; Villamañe-Gironés, M.; Larrañaga-Olagaray, M. Improving Assessment Using Visual Learning Analytics. Educ. Knowl. Soc. 2020, 21.

- Schwendimann, B.A.; Rodriguez-Triana, M.J.; Vozniuk, A.; Prieto, L.P.; Boroujeni, M.S.; Holzer, A.; Gillet, D.; Dillenbourg, P. Perceiving learning at a glance: A systematic literature review of learning dashboard research. IEEE Trans. Learn. Technol. 2017, 10, 30–41.

- Teasley, S.D. Student facing dashboards: One size fits all? Technol. Knowl. Learn. 2017, 22, 377–384.

- Vázquez-Ingelmo, A.; García-Peñalvo, F.J.; Therón, R. Information Dashboards and Tailoring Capabilities - A Systematic Literature Review. IEEE Access 2019, 7, 109673–109688.

- Filonik, D.; Medland, R.; Foth, M.; Rittenbruch, M. A Customisable Dashboard Display for Environmental Performance Visualisations. In Persuasive Technology. PERSUASIVE 2013; Springer: Berlin/Heidelberg, Germany, 2013; pp. 51–62.

- Mayer, B.; Weinreich, R. A dashboard for microservice monitoring and management. In Proceedings of the 2017 IEEE International Conference on Software Architecture Workshops (ICSAW), Gothenburg, Sweden, 5–7 April 2017; pp. 66–69.

- Michel, C.; Lavoué, E.; George, S.; Ji, M. Supporting awareness and self-regulation in project-based learning through personalized dashboards. Int. J. Technol. Enhanc. Learn. 2017, 9, 204–226.

- Miotto, G.L.; Magnoni, L.; Sloper, J.E. The TDAQ Analytics Dashboard: A real-time web application for the ATLAS TDAQ control infrastructure. J. Phys. Conf. Ser. 2011, 331, 022019.

- Nascimento, B.S.; Vivacqua, A.S.; Borges, M.R. A flexible architecture for selection and visualization of information in emergency situations. In Proceedings of the 2016 IEEE International Conference on Systems, Man, and Cybernetics (SMC), Budapest, Hungary, 9–12 October 2016; pp. 003317–003322.

- Elias, M.; Bezerianos, A. Exploration views: Understanding dashboard creation and customization for visualization novices. In IFIP Conference on Human-Computer Interaction; Springer: Berlin/Heidelberg, Germany, 2011; pp. 274–291.

- Yalçın, M.A.; Elmqvist, N.; Bederson, B.B. Keshif: Rapid and expressive tabular data exploration for novices. IEEE Trans. Vis. Comput. Graph. 2018, 24, 2339–2352.

- Petasis, G.; Triantafillou, A.; Karstens, E. YourDataStories: Transparency and Corruption Fighting Through Data Interlinking and Visual Exploration. In International Conference on Internet Science; Springer: Berlin/Heidelberg, Germany, 2017; pp. 95–108.

- Kumar, K.; Bose, J.; Soni, S.K. A Generic Visualization Framework based on a Data Driven Approach for the Analytics data. In Proceedings of the 2017 14th IEEE India Council International Conference (INDICON), Roorkee, India, 15–17 December 2017; pp. 1–6.

- Cardoso, A.; Teixeira, C.J.V.; Pinto, J.S. Architecture for Highly Configurable Dashboards for Operations Monitoring and Support. Stud. Inform. Control 2018, 27, 319–330.

- Pastushenko, O.; Hynek, J.; Hruška, T. Generation of test samples for construction of dashboard design guidelines: Impact of color on layout balance. In World Conference on Information Systems and Technologies; Springer: Berlin/Heidelberg, Germany, 2018; pp. 980–990.

- Vázquez-Ingelmo, A.; García-Peñalvo, F.J.; Therón, R. Domain engineering for generating dashboards to analyze employment and employability in the academic context. Presented at the 6th International Conference on Technological Ecosystems for Enhancing Multiculturality, Salamanca, Spain, 24–26 October 2018.

- Logre, I.; Mosser, S.; Collet, P.; Riveill, M. Sensor data visualisation: A composition-based approach to support domain variability. In European Conference on Modelling Foundations and Applications; Springer: Berlin/Heidelberg, Germany, 2014; pp. 101–116.

- Kintz, M. A semantic dashboard description language for a process-oriented dashboard design methodology. In Proceedings of the 2nd International Workshop on Model-based Interactive Ubiquitous Systems (MODIQUITOUS 2012), Copenhagen, Denmark, 25–28 June 2012; Volume 947, pp. 31–36.

- Kintz, M.; Kochanowski, M.; Koetter, F. Creating User-specific Business Process Monitoring Dashboards with a Model-driven Approach. In Proceedings of the MODELSWARD, Porto, Portugal, 19–21 February 2017; pp. 353–361.

- Palpanas, T.; Chowdhary, P.; Mihaila, G.; Pinel, F. Integrated model-driven dashboard development. Inf. Syst. Front. 2007, 9, 195–208.

- Radovanović, S.; Majstorović, B.; Kukolj, S.; Bjelica, M.Z. Device Cloud platform with customizable Remote User Interfaces. In Proceedings of the 2014 IEEE Fourth International Conference on Consumer Electronics Berlin (ICCE-Berlin), Berlin, Germany, 7–10 September 2014; pp. 202–204.

- Belo, O.; Rodrigues, P.; Barros, R.; Correia, H. Restructuring Dynamically Analytical Dashboards Based on Usage Profiles. In International Symposium on Methodologies for Intelligent Systems; Springer: Berlin/Heidelberg, Germany, 2014; pp. 445–455.

- Arjun, S. Personalizing data visualization and interaction. In Proceedings of the Adjunct Publication of the 26th Conference on User Modeling, Adaptation and Personalization, Nanyang Technological University, Singapore, 8–11 July 2018; ACM: New York, NY, USA, 2018; pp. 199–202.

- van Hoecke, S.; Huys, C.; Janssens, O.; Verborgh, R.; van de Walle, R. Dynamic Monitoring Dashboards Through Composition of Web and Visualization Services. In International Internet of Things Summit; Springer: Berlin/Heidelberg, Germany, 2015; pp. 465–474.

- Santos, H.; Dantas, V.; Furtado, V.; Pinheiro, P.; McGuinness, D.L. From data to city indicators: A knowledge graph for supporting automatic generation of dashboards. In European Semantic Web Conference; Springer: Berlin/Heidelberg, Germany, 2017; pp. 94–108.

- Pleuss, A.; Wollny, S.; Botterweck, G. Model-driven development and evolution of customized user interfaces. In Proceedings of the 5th ACM SIGCHI Symposium on Engineering Interactive Computing Systems, London, UK, 24–27 June 2013; pp. 13–22.

- Kleppe, A.G.; Warmer, J.; Bast, W. MDA Explained. The Model Driven Architecture: Practice and Promise; Addison-Wesley Longman Publishing Co., Inc.: Boston, MA, USA, 2003.

- Mellor, S.J.; Scott, K.; Uhl, A.; Weise, D. Model-Driven Architecture. In Proceedings of the Advances in Object-Oriented Information Systems: OOIS 2002 Workshops, Montpellier, France, 2 September 2002; Bruel, J.-M., Bellahsene, Z., Eds.; Springer: Berlin/Heidelberg, Germany, 2002; pp. 290–297.

- Álvarez, J.M.; Evans, A.; Sammut, P. Mapping between Levels in the Metamodel Architecture. In Proceedings of the UML 2001—The Unified Modeling Language. Modeling Languages, Concepts, and Tools. UML 2001; Lecture Notes in Computer Science; Gogolla, M., Kobryn, C., Eds.; Springer: Berlin/Heidelberg, Germany, 2001; Volume 2185, pp. 34–46.

- Vázquez-Ingelmo, A.; García-Peñalvo, F.J.; Therón, R.; González, M.Á.C. Extending a dashboard meta-model to account for users’ characteristics and goals for enhancing personalization. Presented at the Learning Analytics Summer Institute (LASI), Vigo, Spain, 27–28 June 2019.

- Vázquez-Ingelmo, A.; García-Peñalvo, F.J.; Therón, R. Capturing high-level requirements of information dashboards’ components through meta-modeling. Presented at the 7th International Conference on Technological Ecosystems for Enhancing Multiculturality (TEEM 2019), León, Spain, 16–18 October 2019.

- García-Holgado, A.; García-Peñalvo, F.J. Validation of the learning ecosystem metamodel using transformation rules. Future Gener. Comput. Syst. 2019, 91, 300–310.

- Vázquez-Ingelmo, A.; García-Peñalvo, F.J.; Therón, R.; Filvà, D.A.; Escudero, D.F. Connecting domain-specific features to source code: Towards the automatization of dashboard generation. Clust. Comput. 2020, 23, 1803–1816.

- Clements, P.; Northrop, L. Software Product Lines; Addison-Wesley: Boston, MA, USA, 2002.

- Gomaa, H. Designing Software Product Lines with UML: From Use Cases to Pattern-Based Software Architectures; Addison Wesley Longman Publishing Co., Inc.: Boston, MA, USA, 2004.

- Vázquez-Ingelmo, A.; García-Peñalvo, F.J.; Therón, R. Taking advantage of the software product line paradigm to generate customized user interfaces for decision-making processes: A case study on university employability. PeerJ Comput. Sci. 2019, 5, e203.

- Django Software Foundation. Django Web Framework. Available online: https://www.djangoproject.com/ (accessed on 15 March 2015).

- Vázquez-Ingelmo, A.; García-Peñalvo, F.J.; Therón, R.; García-Holgado, A. Specifying information dashboards’ interactive features through meta-model instantiation. In Proceedings of the LASI-SPAIN 2020. Learning Analytics Summer Institute Spain 2020: Learning Analytics. Time for Adoption? Valladolid, Spain, 15–16 June 2020; CEUR-WS.org: Aachen, Germany, 2020.

- Meeks, E. D3.js in Action: Data Visualization with JavaScript; Manning Publications: Shelter Island, NY, USA, 2018.

- Kuzilek, J.; Hlosta, M.; Zdrahal, Z. Open university learning analytics dataset. Sci. Data 2017, 4, 170171.

- Wilke, C.O. Fundamentals of Data Visualization: A Primer on Making Informative and Compelling Figures; O’Reilly Media: Newton, MA, USA, 2019.

- Ferguson, R. Learning analytics: Drivers, developments and challenges. Int. J. Technol. Enhanc. Learn. 2012, 4, 304–317.

- Liñán, L.C.; Pérez, Á.A.J. Educational Data Mining and Learning Analytics: Differences, similarities, and time evolution. Int. J. Educ. Technol. High. Educ. 2015, 12, 98–112.

- Picciano, A.G. The evolution of big data and learning analytics in American higher education. J. Asynchronous Learn. Netw. 2012, 16, 9–20.

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Vázquez-Ingelmo, A.; García-Peñalvo, F.J.; Therón, R. Towards a Technological Ecosystem to Provide Information Dashboards as a Service: A Dynamic Proposal for Supplying Dashboards Adapted to Specific Scenarios. Appl. Sci. 2021, 11, 3249. https://doi.org/10.3390/app11073249
