Dynamic Workpiece Modeling with Robotic Pick-Place Based on Stereo Vision Scanning Using Fast Point-Feature Histogram Algorithm

Abstract

1. Introduction

2. Research Methodology

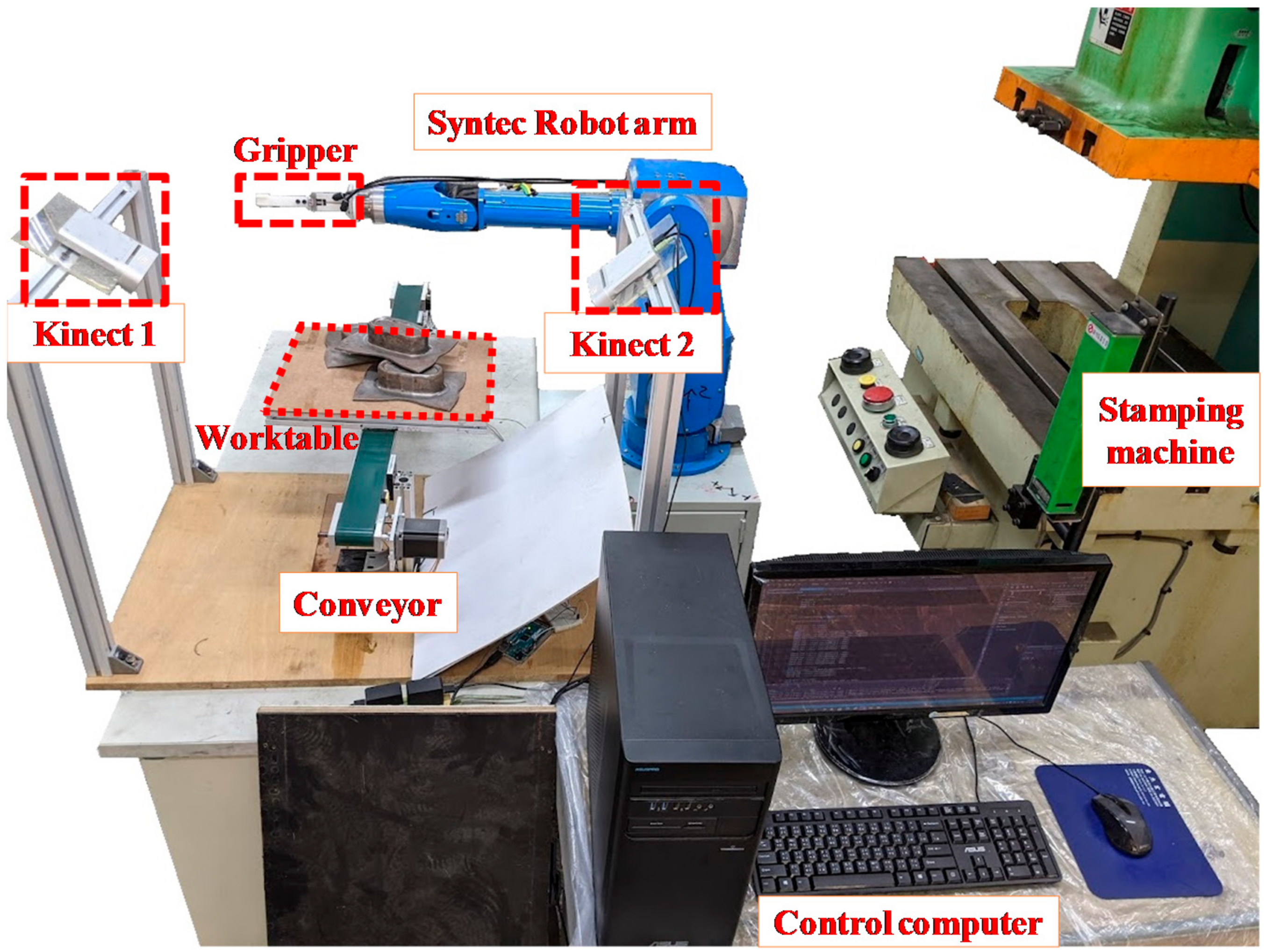

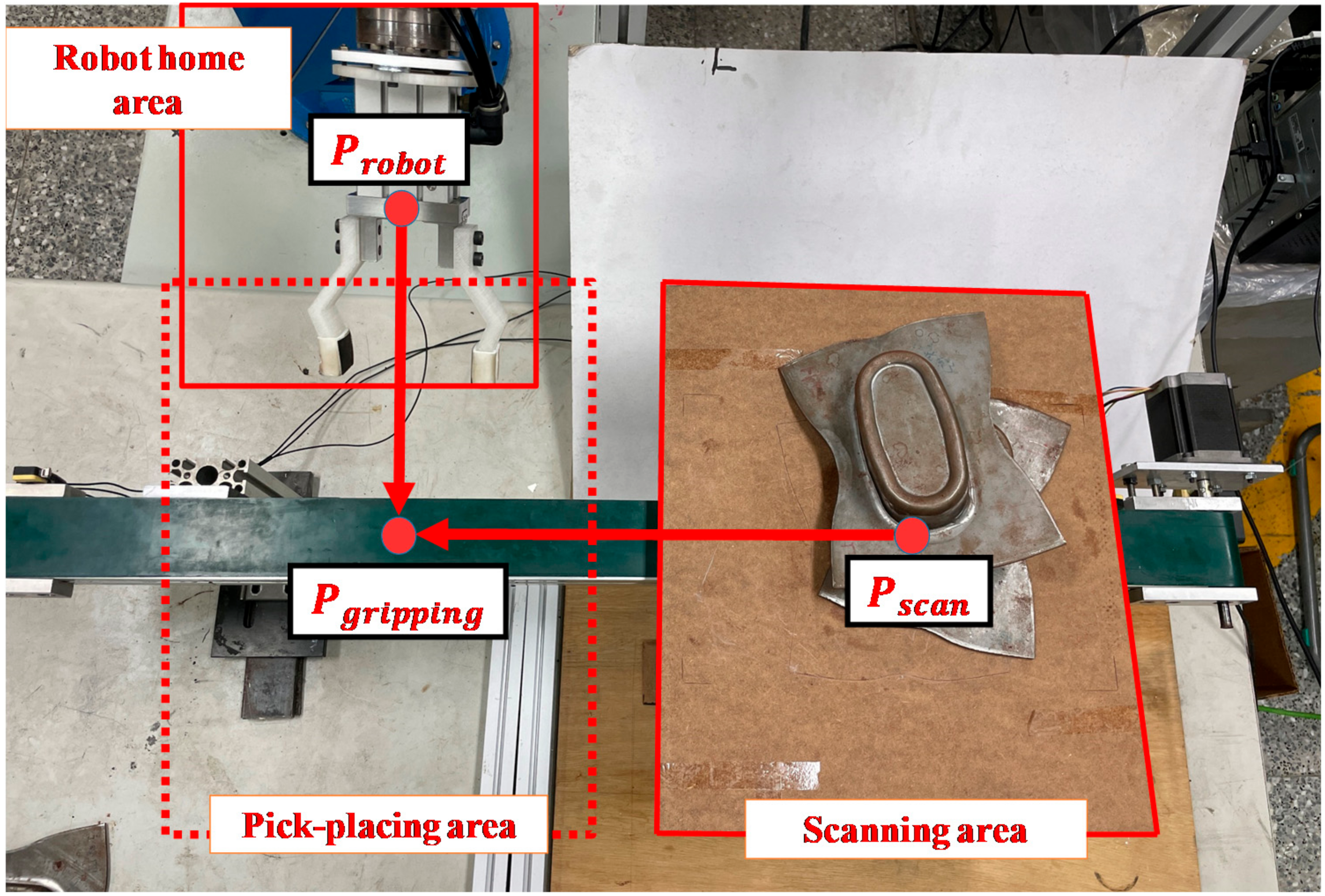

2.1. Experimental Devices and Setup

2.2. Point Cloud Construction and Pre-Processing

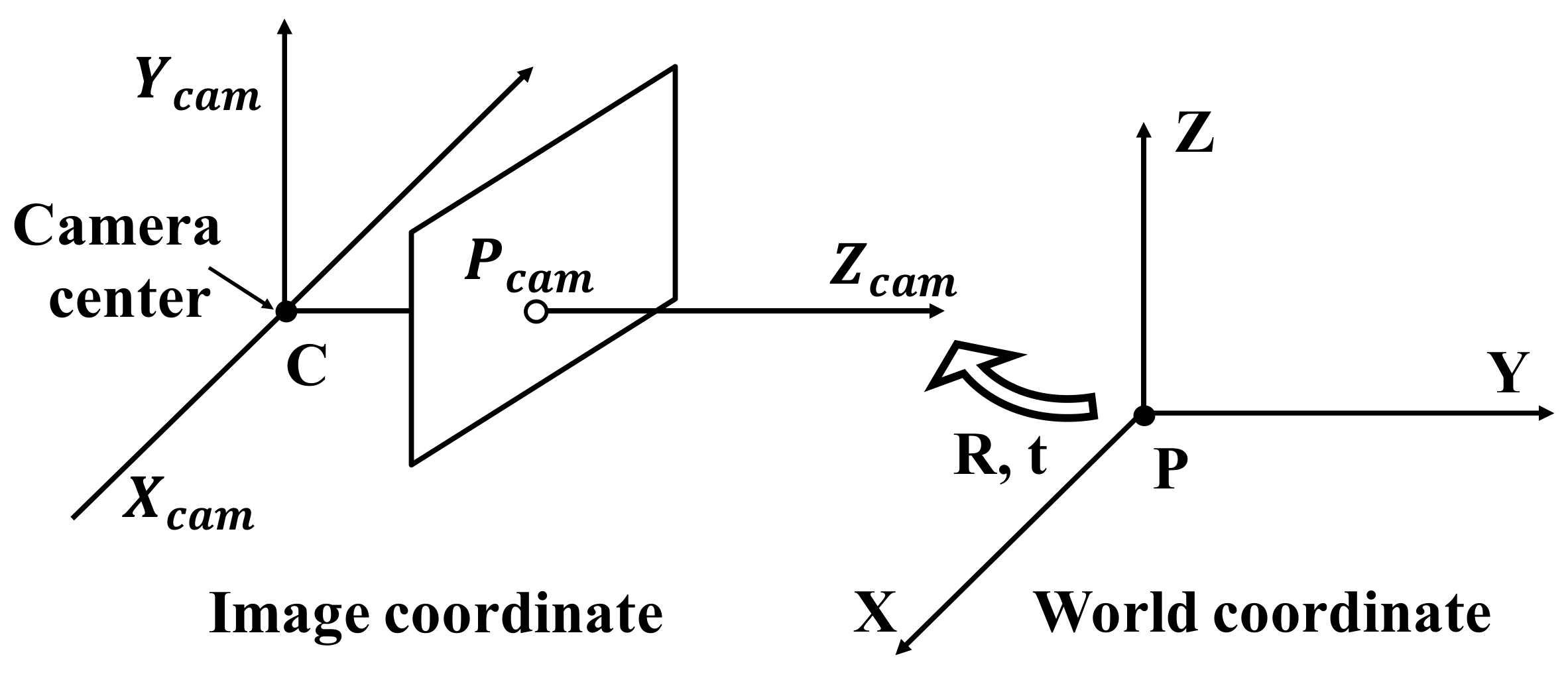

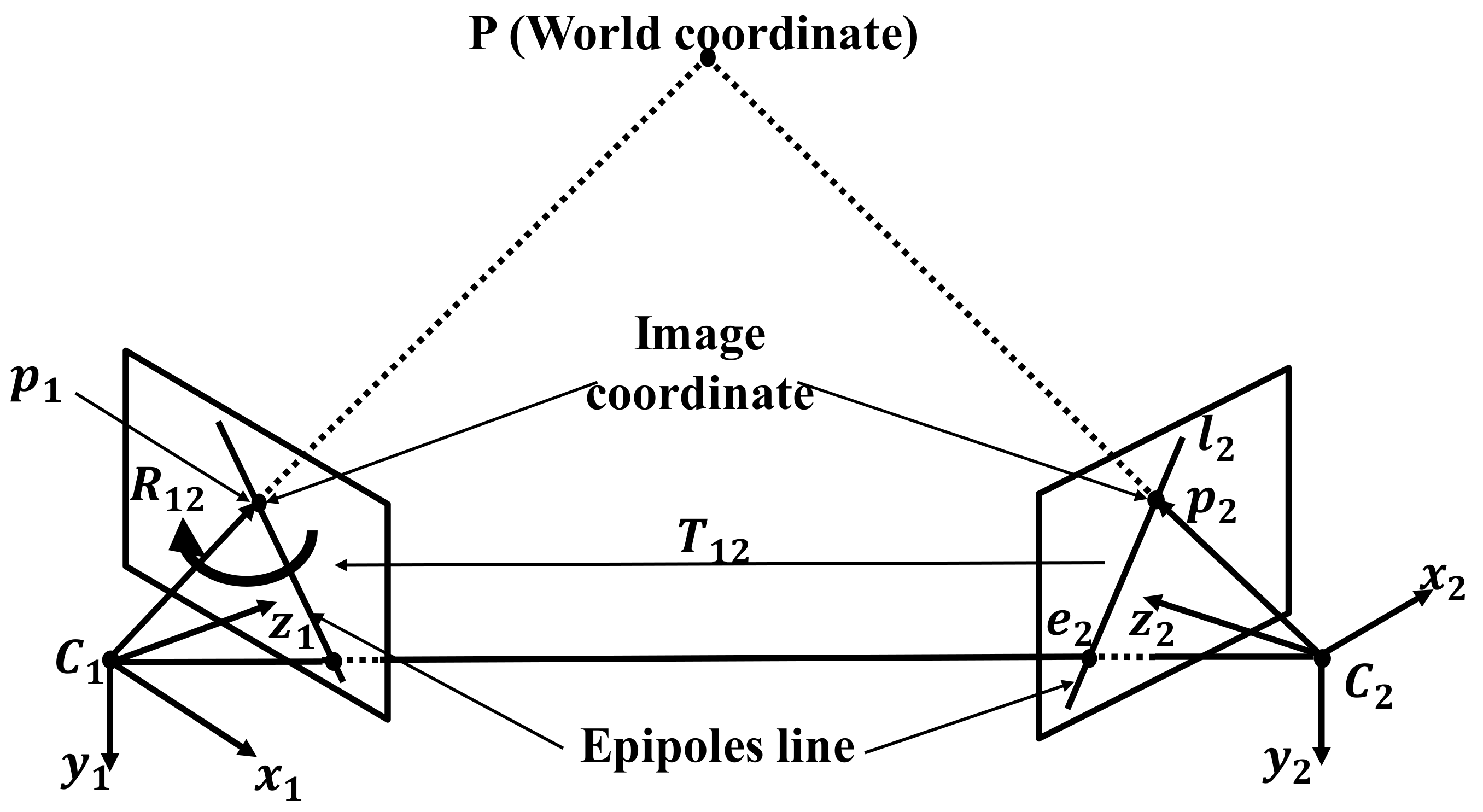

2.2.1. Stereo Calibration Principle

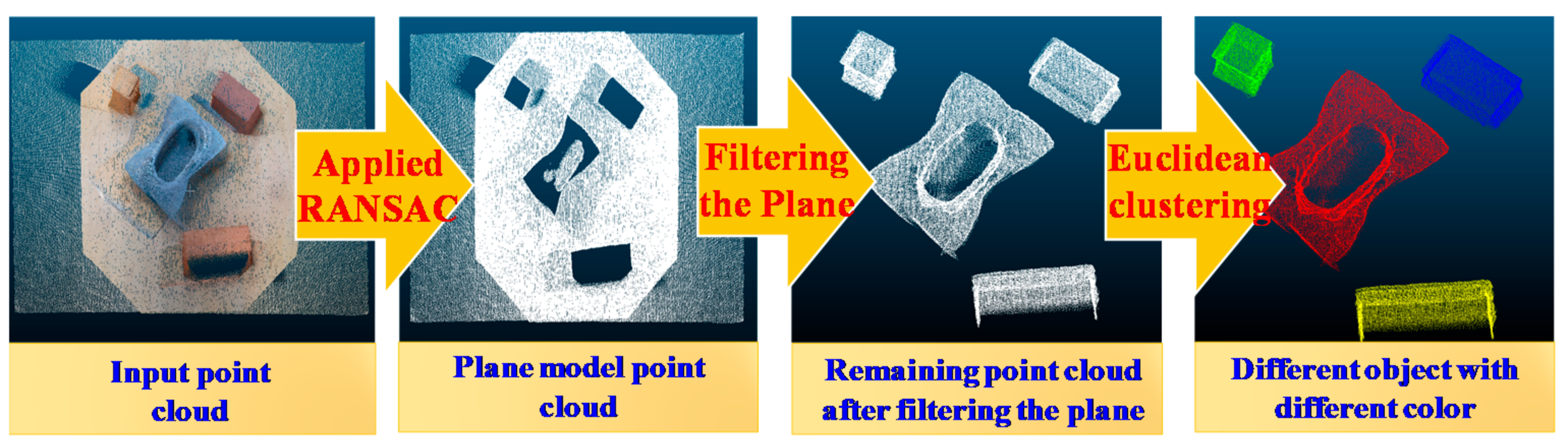

2.2.2. Object Segmentation

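| Algorithm 1 RANSAC algorithm to find plane model |
|---|
| Input: Point cloud and model estimation. |
| Output: Plane model that was rated best amongst all iterations. |
| While (the iteration limit has not been reached) do |
| Sample points; |
| Estimate a plane model from the sample; |
| Compute model inliers; |
| If (the current model is better than the best model so far) then |
| Store the current model as the best model; |
| Store its inliers as the best inliers; |
| End if; |
| Increment the iteration counter; |
| End while; |
| Return the best plane model |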
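The RANSAC loop of Algorithm 1 can be sketched in plain Python as follows. This is a minimal illustration, not the authors' implementation: the function names `fit_plane` and `ransac_plane`, the three-point sample size, the inlier-count score, and the default values of `distance_threshold` and `max_iterations` are all assumptions made for the example.

```python
import random


def fit_plane(p1, p2, p3):
    """Plane (a, b, c, d) with unit normal through three points; None if collinear."""
    ux, uy, uz = (p2[i] - p1[i] for i in range(3))
    vx, vy, vz = (p3[i] - p1[i] for i in range(3))
    # The normal is the cross product of two edge vectors of the sample triangle.
    a = uy * vz - uz * vy
    b = uz * vx - ux * vz
    c = ux * vy - uy * vx
    norm = (a * a + b * b + c * c) ** 0.5
    if norm < 1e-12:
        return None  # degenerate sample, no unique plane
    a, b, c = a / norm, b / norm, c / norm
    d = -(a * p1[0] + b * p1[1] + c * p1[2])
    return a, b, c, d


def ransac_plane(points, distance_threshold=0.01, max_iterations=200, seed=0):
    """Return (best_model, best_inlier_indices) for a list of (x, y, z) tuples.

    Assumed scoring rule: a model is "better" when it has more inliers.
    """
    rng = random.Random(seed)
    best_model, best_inliers = None, []
    for _ in range(max_iterations):
        sample = rng.sample(points, 3)          # sample points
        model = fit_plane(*sample)              # estimate a plane model
        if model is None:
            continue
        a, b, c, d = model
        # Compute model inliers: points whose distance to the plane is small.
        inliers = [
            i for i, (x, y, z) in enumerate(points)
            if abs(a * x + b * y + c * z + d) <= distance_threshold
        ]
        if len(inliers) > len(best_inliers):    # current model is better
            best_model, best_inliers = model, inliers
    return best_model, best_inliers
```

In a segmentation step of this kind, the inlier indices would typically correspond to the supporting surface, and the remaining points would be passed on to clustering.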

| Algorithm 2 Euclidean cluster extraction algorithm to extract the workpiece point cloud |
|---|
| Input: Point cloud data . |
| Output: Point cloud clusters |
| ;//list of clusters |
| ;//list of checked points |
| While () do |
| ; |
| While () do |
| If () then |
| ; |
| End if; |
| While () do |
| If ( has not been processed) then |
| ; |
| End if; |
| End while; |
| End while; |
| If (all points are processed) then |
| ; |
| ; |
| End if; |
| End while |
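A region-growing form of Euclidean cluster extraction, in the spirit of Algorithm 2, can be sketched as below. This is an illustrative sketch under stated assumptions rather than the authors' code: the function name `euclidean_clusters` and the parameters `tolerance` and `min_size` are hypothetical, and the neighbor search is a brute-force scan over all points, whereas practical implementations usually use a k-d tree.

```python
from collections import deque


def euclidean_clusters(points, tolerance, min_size=1):
    """Group (x, y, z) tuples into clusters of point indices.

    Two points belong to the same cluster when they are linked by a chain of
    neighbors, each no farther than `tolerance` from the previous one.
    """
    tol2 = tolerance * tolerance
    processed = [False] * len(points)
    clusters = []                      # list of clusters
    for seed in range(len(points)):
        if processed[seed]:
            continue
        queue = deque([seed])          # list of points still to be checked
        processed[seed] = True
        cluster = []
        while queue:
            i = queue.popleft()
            cluster.append(i)
            xi, yi, zi = points[i]
            # Brute-force neighbor search (assumption: no spatial index).
            for j, (xj, yj, zj) in enumerate(points):
                if processed[j]:
                    continue
                dist2 = (xi - xj) ** 2 + (yi - yj) ** 2 + (zi - zj) ** 2
                if dist2 <= tol2:
                    processed[j] = True
                    queue.append(j)
        # The queue is exhausted: every point reachable from the seed is stored.
        if len(cluster) >= min_size:
            clusters.append(cluster)
    return clusters
```

The `min_size` filter is one simple way to discard small noise clusters so that only candidate workpiece clusters remain.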

2.3. Pose Estimation System Construction

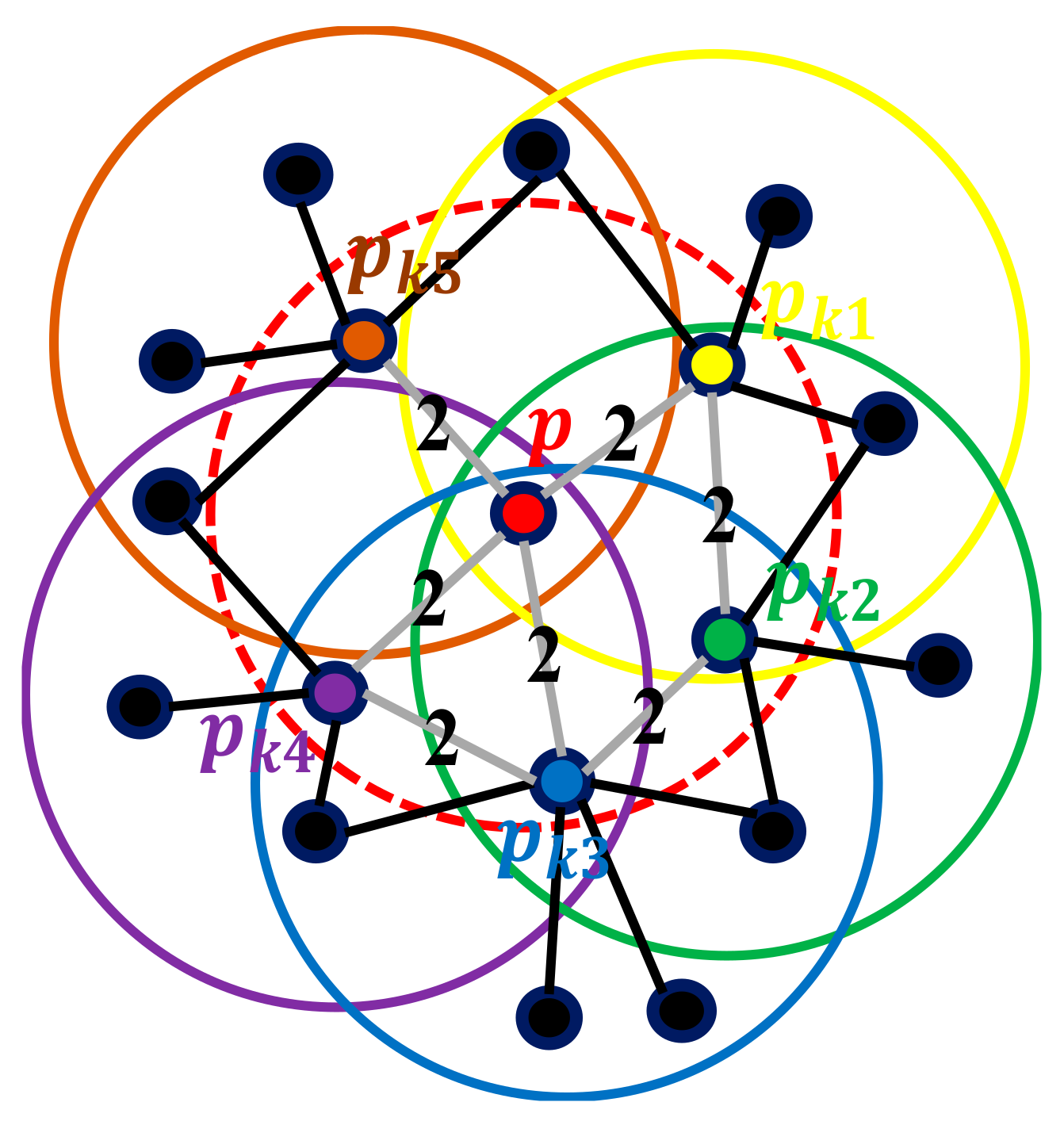

2.3.1. Feature Point Descriptor

2.3.2. Coarse Alignment Matching

2.3.3. Finish Alignment Matching

2.4. Position Error Compensation of Robot Arm

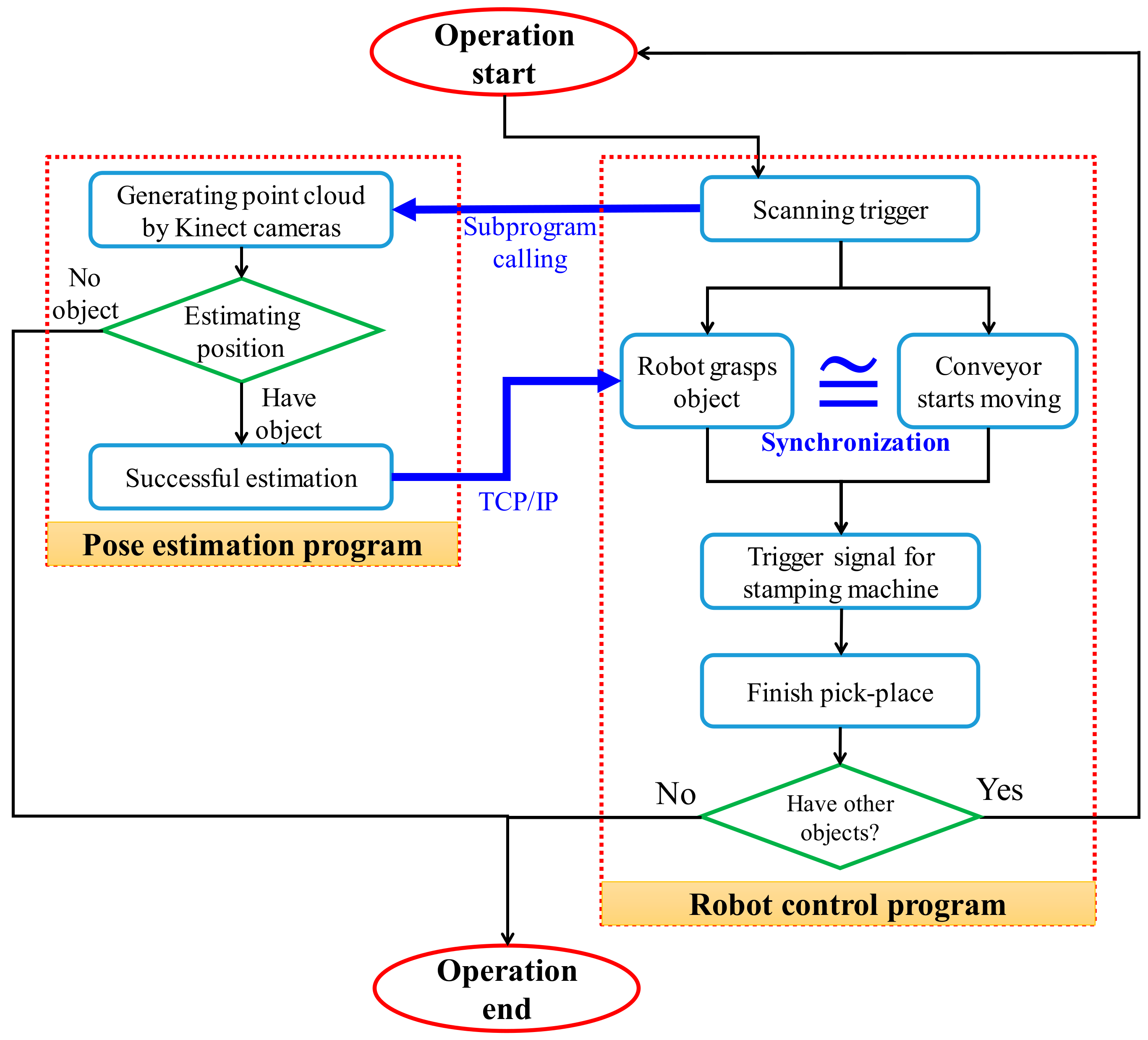

2.5. Synchronization of Transmission Conveyor and Robot Arm

3. Experimental Results

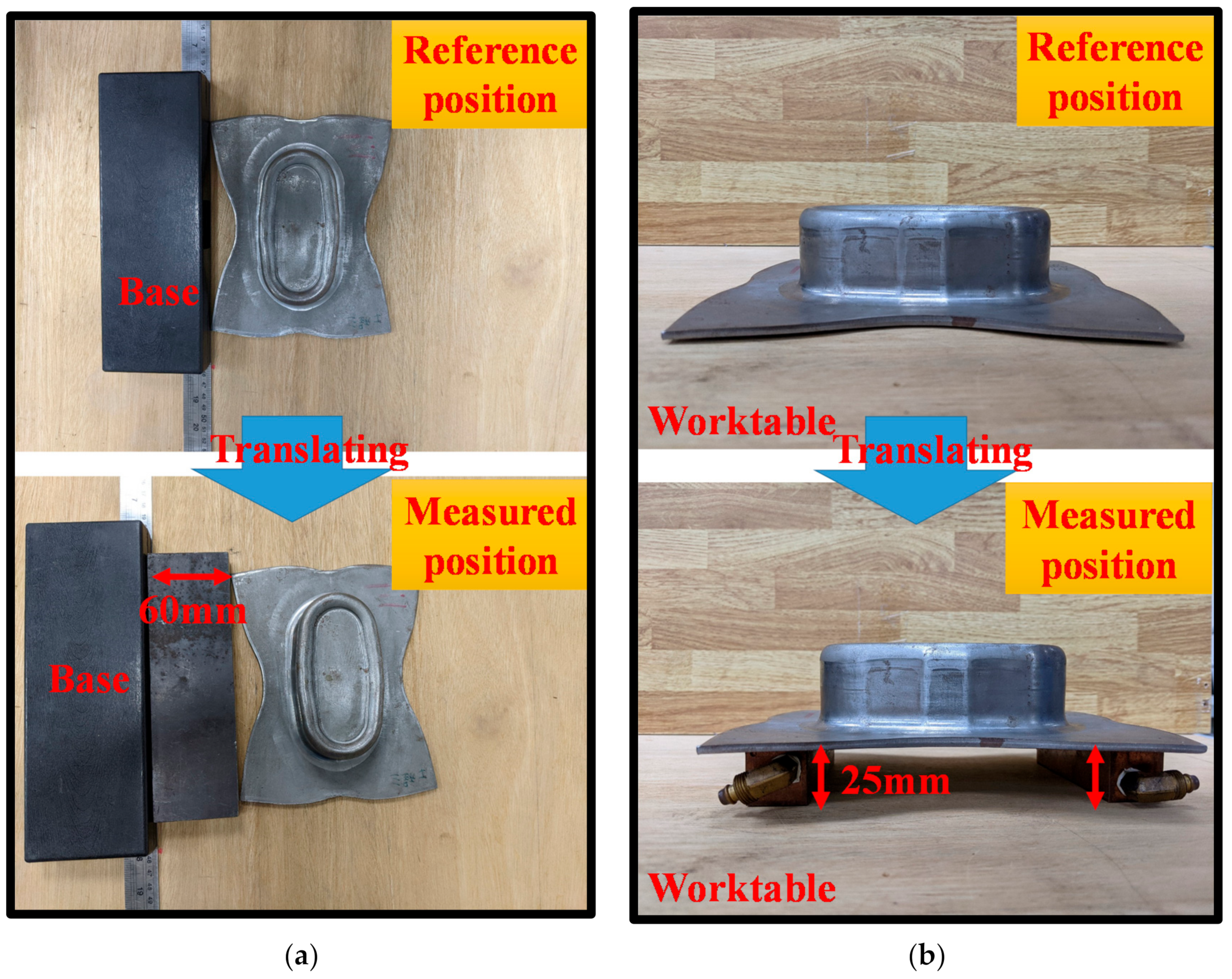

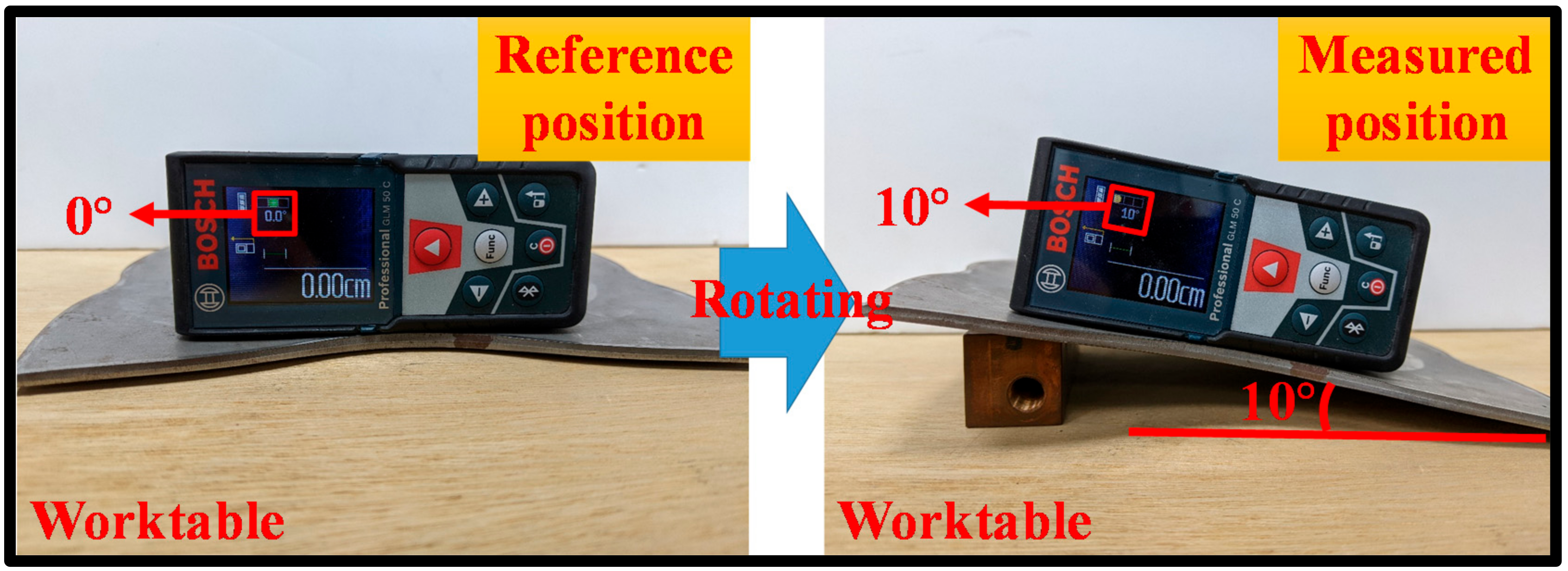

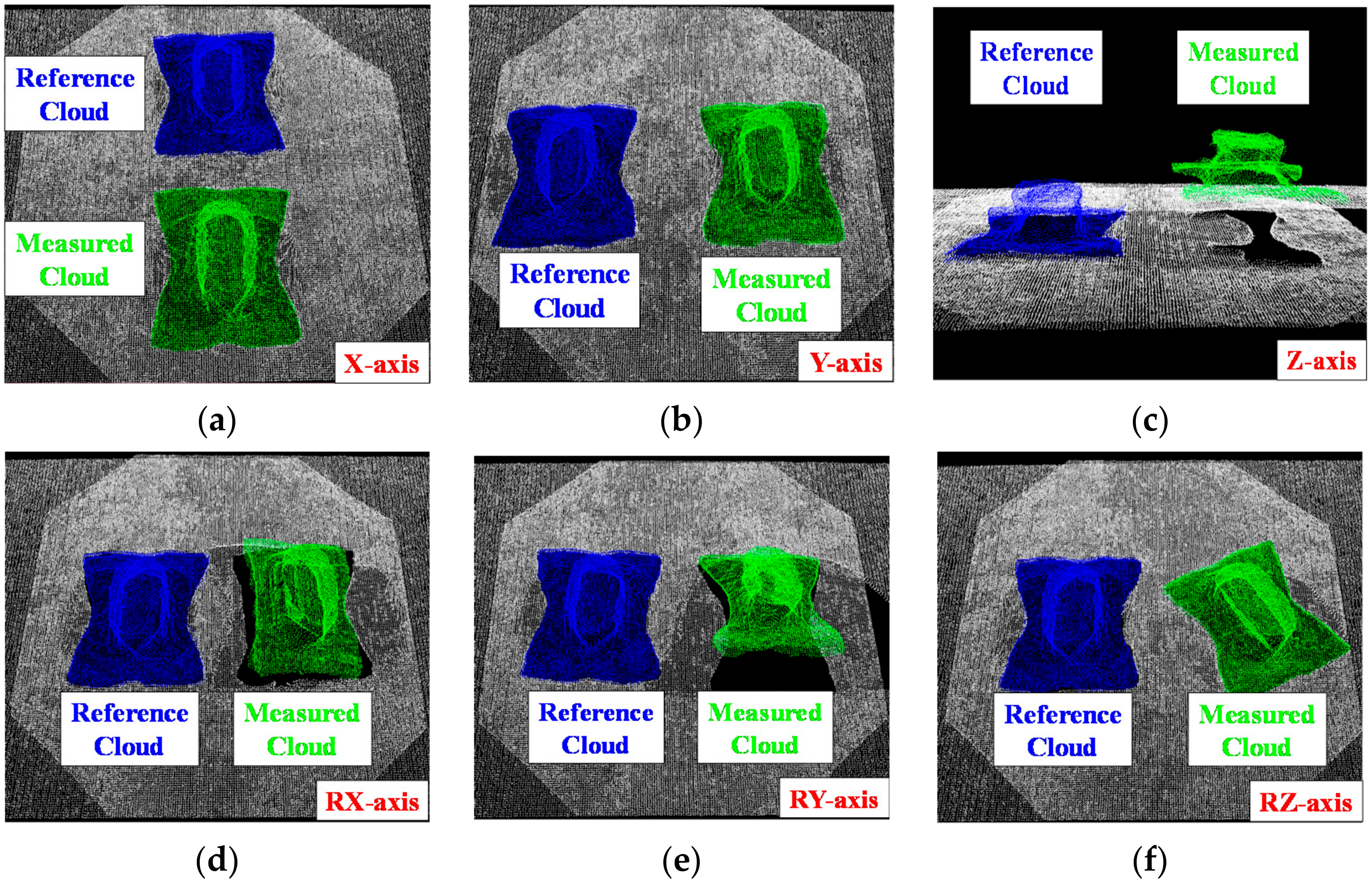

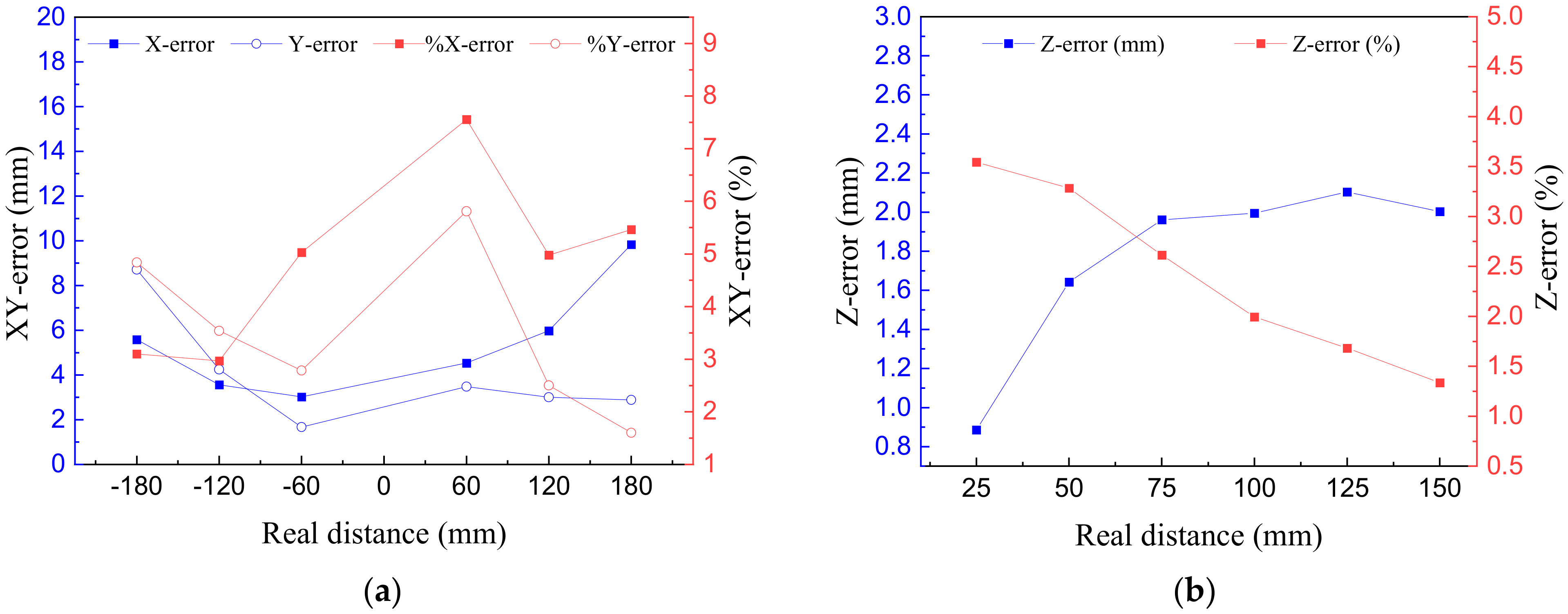

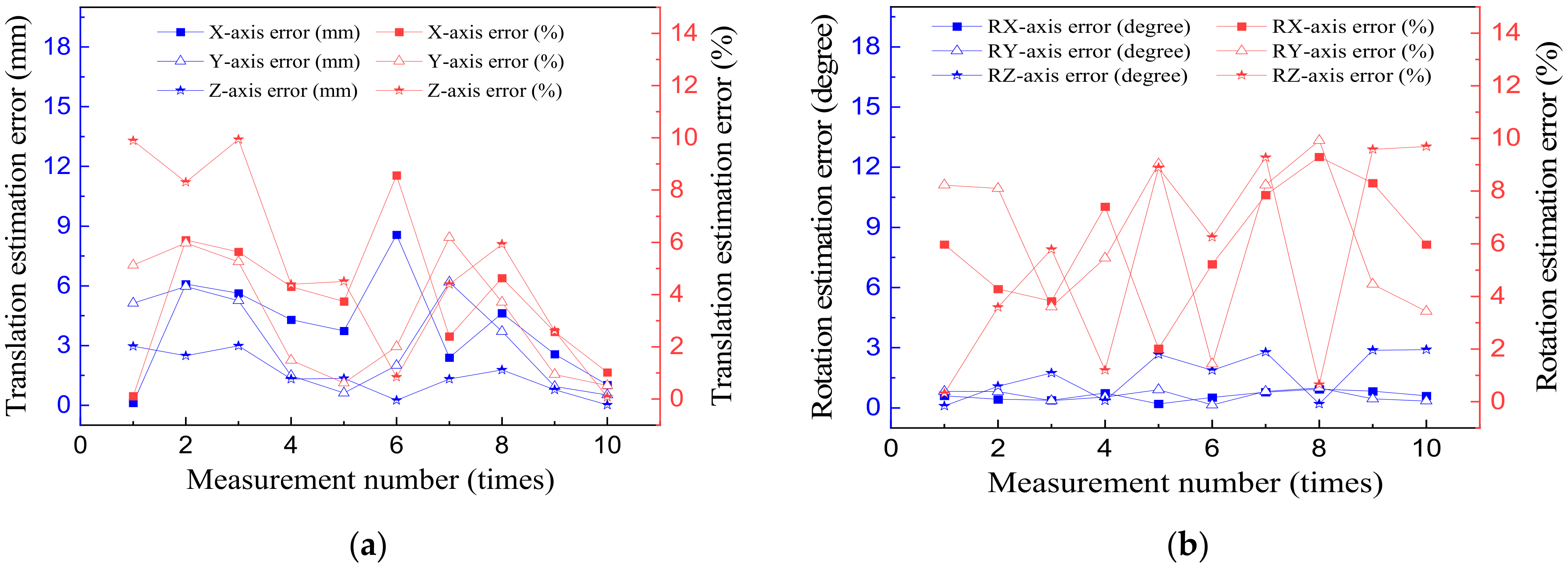

3.1. Experiment on the Accuracy of the Pose Estimation System

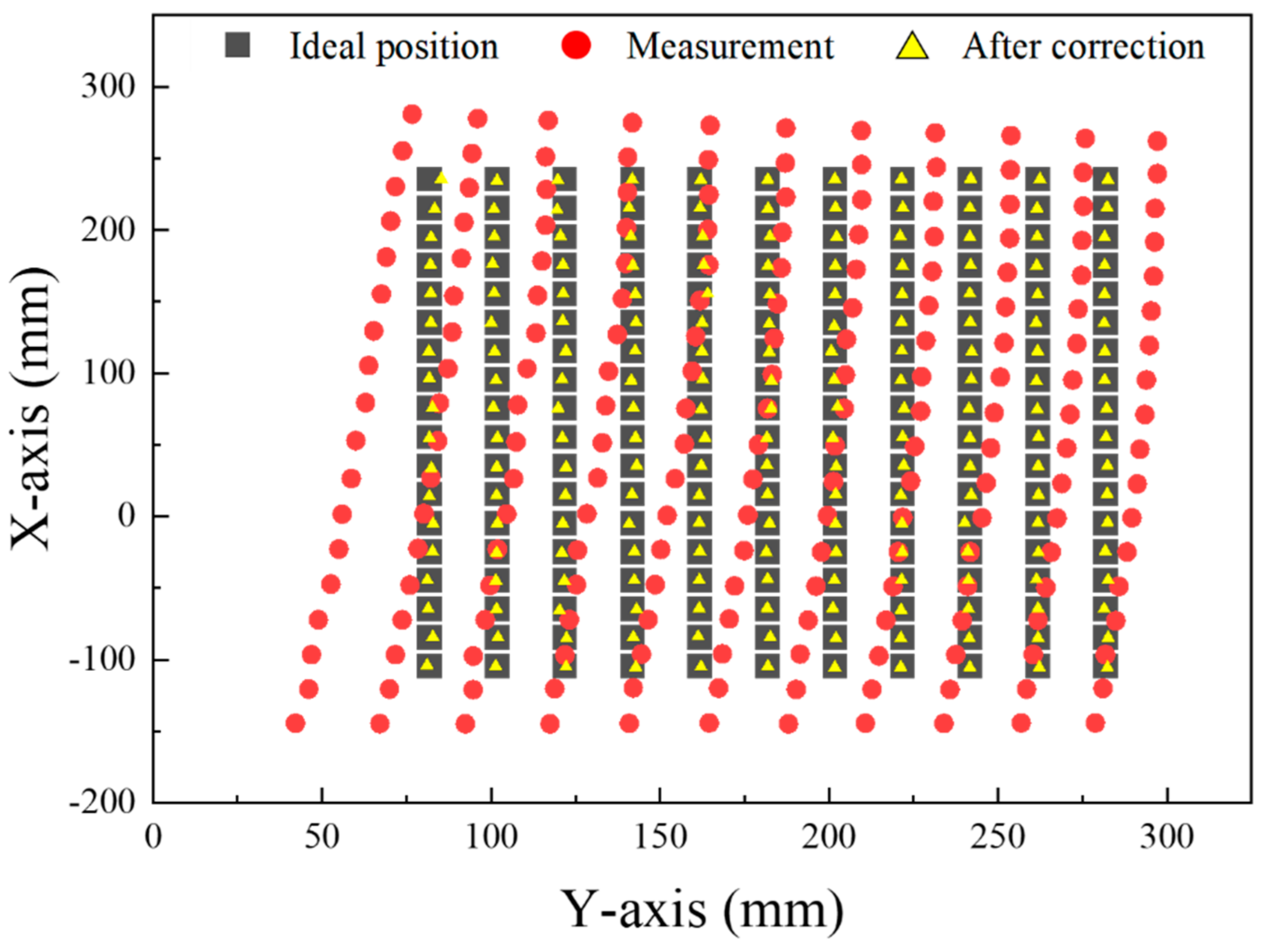

3.2. Robot Error Compensation Results

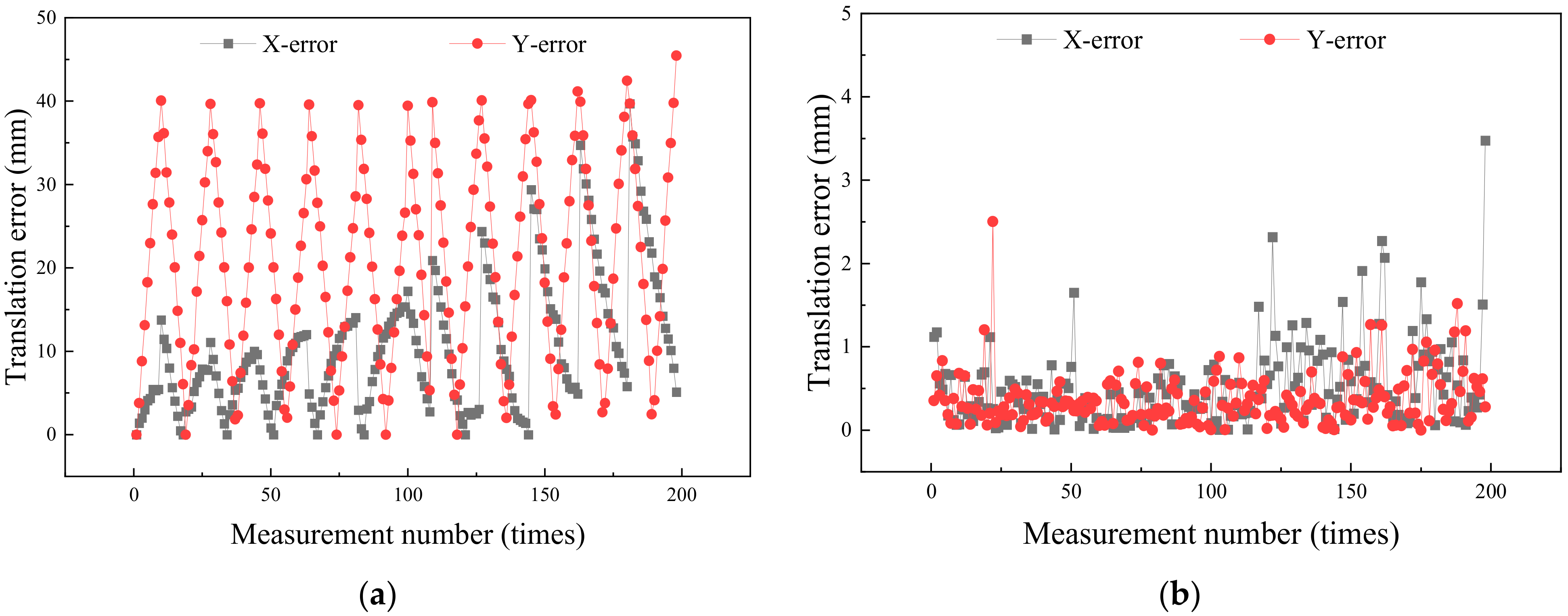

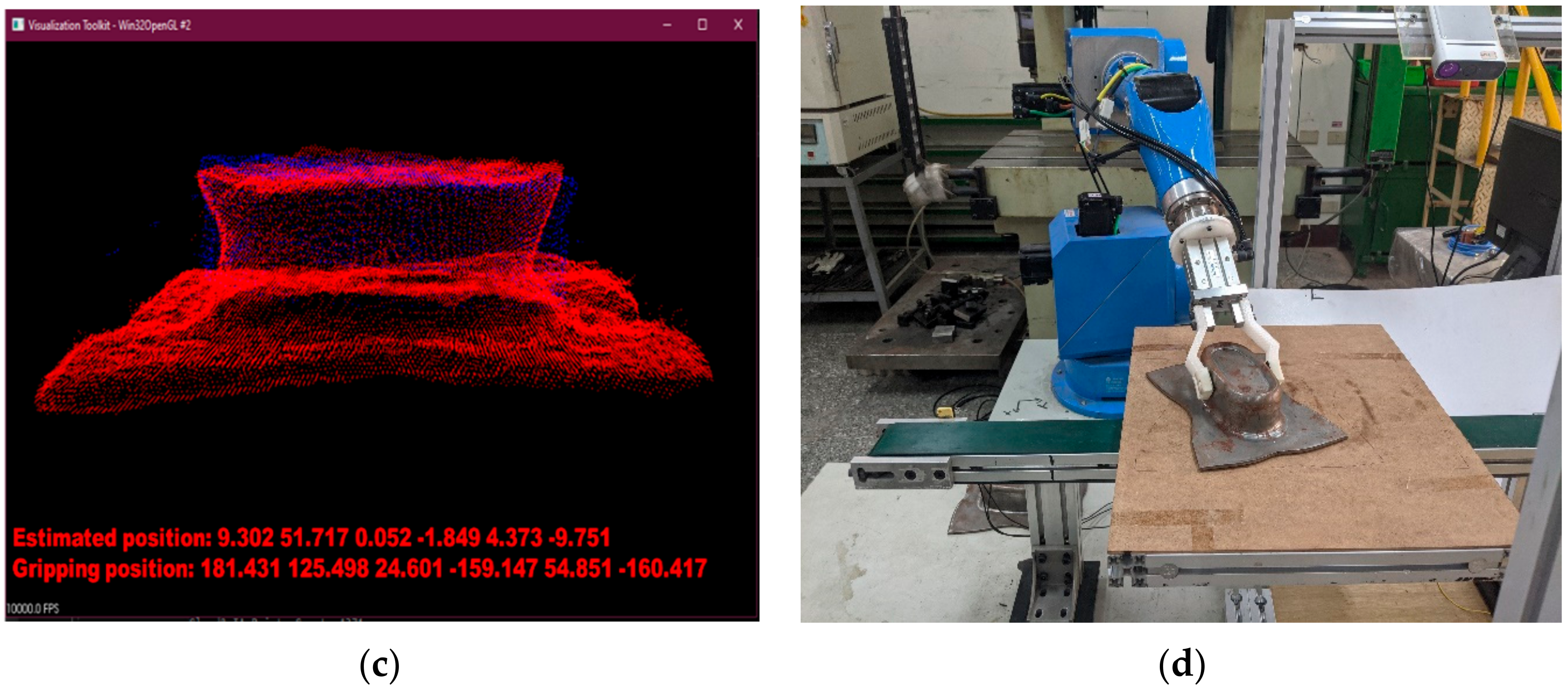

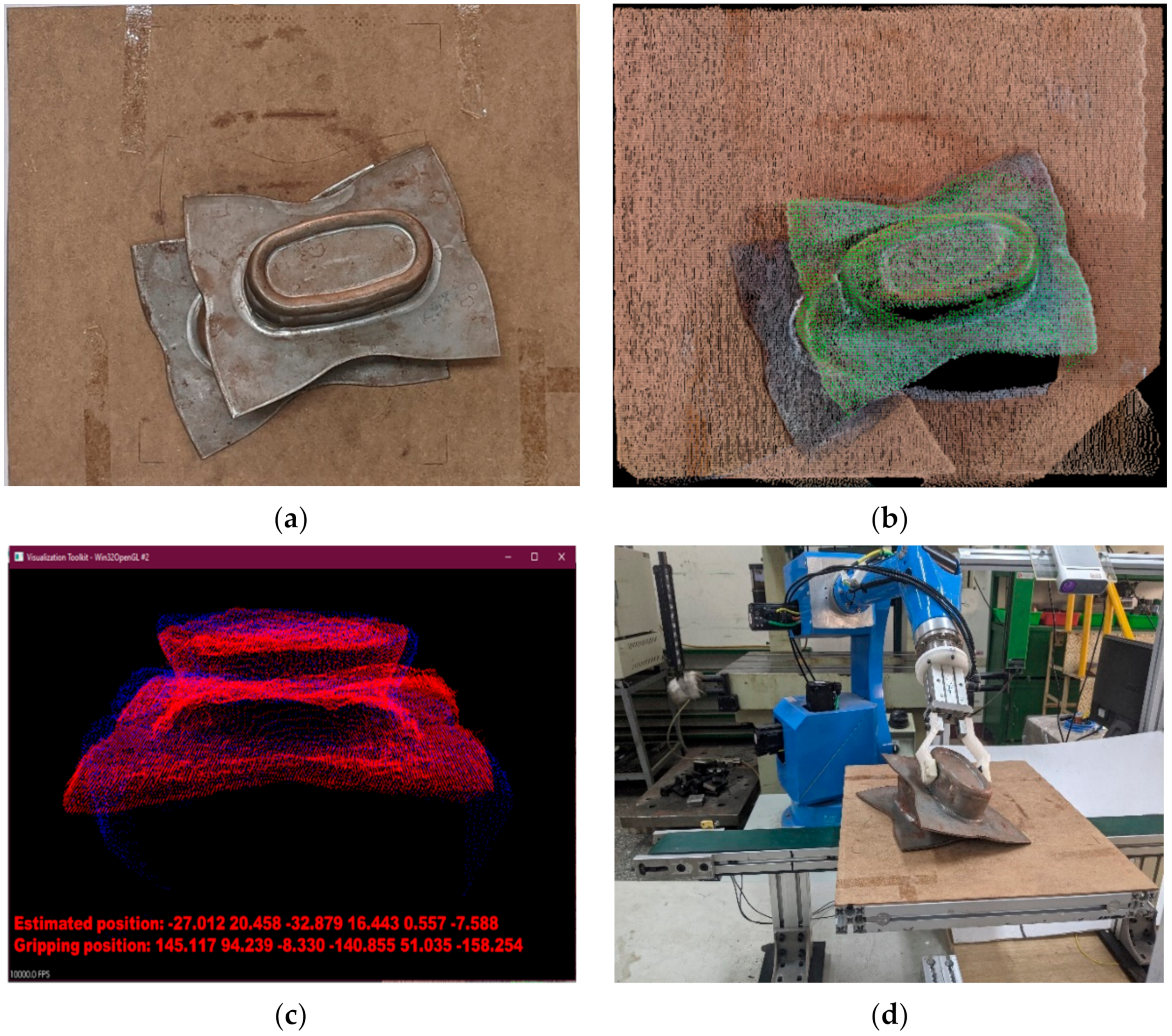

3.3. Experiment of the Dynamic Stack Workpiece Feeding System

4. Conclusions and Future Prospects

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Item | Static, Case 2 | Static, Case 1 | Dynamic, Case 2 | Dynamic, Case 1 |
|---|---|---|---|---|
| Number of experiments | 20 | 20 | 20 | 20 |
| Successful cases | 18 | 19 | 13 | 14 |
| Failed cases | 2 | 1 | 7 | 6 |
| Success rate (%) | 90 | 95 | 65 | 70 |
| Pose estimation time (s) | 12 | 7 | 12 | 7 |


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Do, Q.-T.; Chang, W.-Y.; Chen, L.-W. Dynamic Workpiece Modeling with Robotic Pick-Place Based on Stereo Vision Scanning Using Fast Point-Feature Histogram Algorithm. Appl. Sci. 2021, 11, 11522. https://doi.org/10.3390/app112311522
