Robot Scheduling for Assistance and Guidance in Hospitals

Abstract

1. Introduction

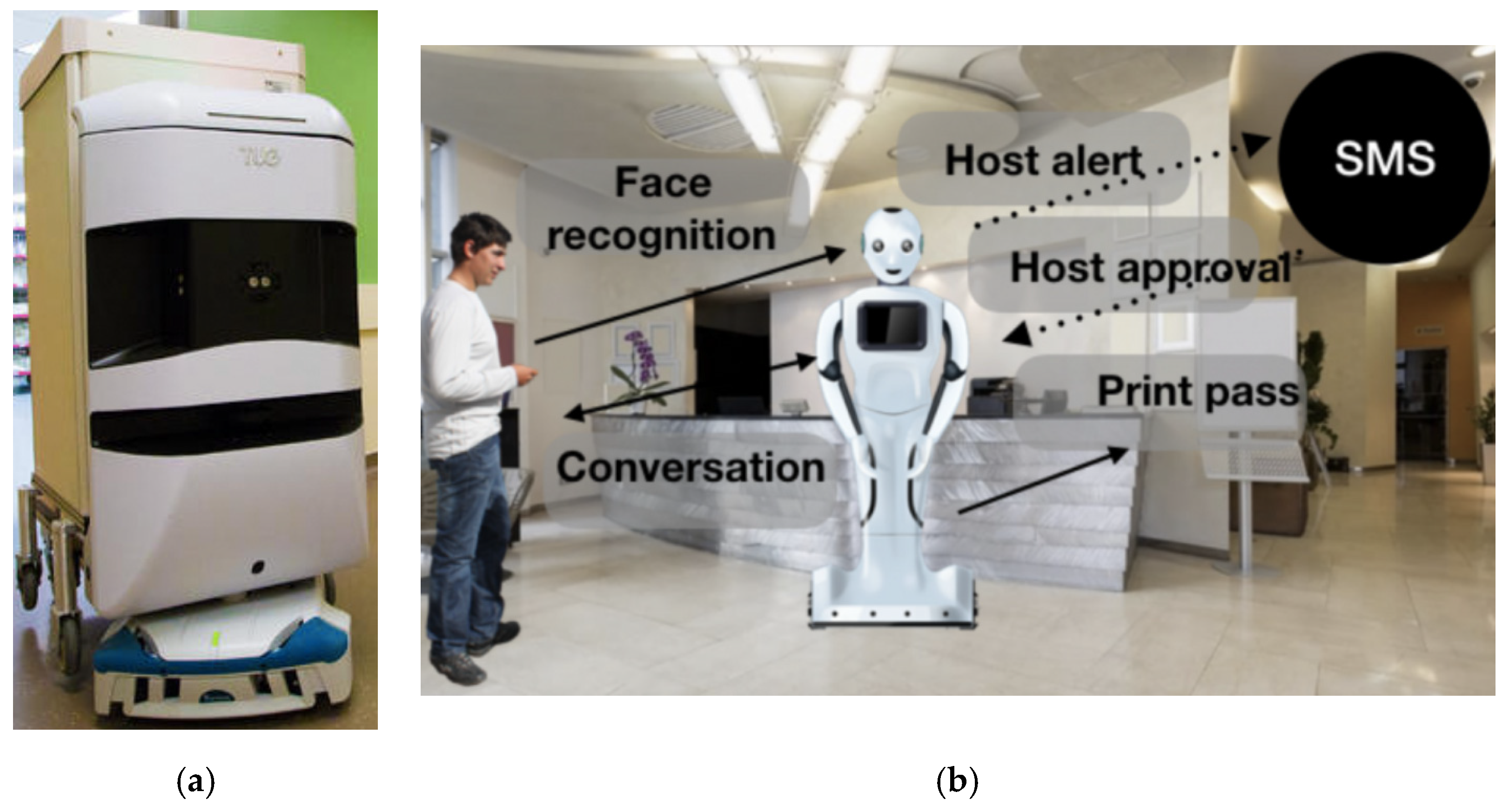

2. Related Work

3. Methods

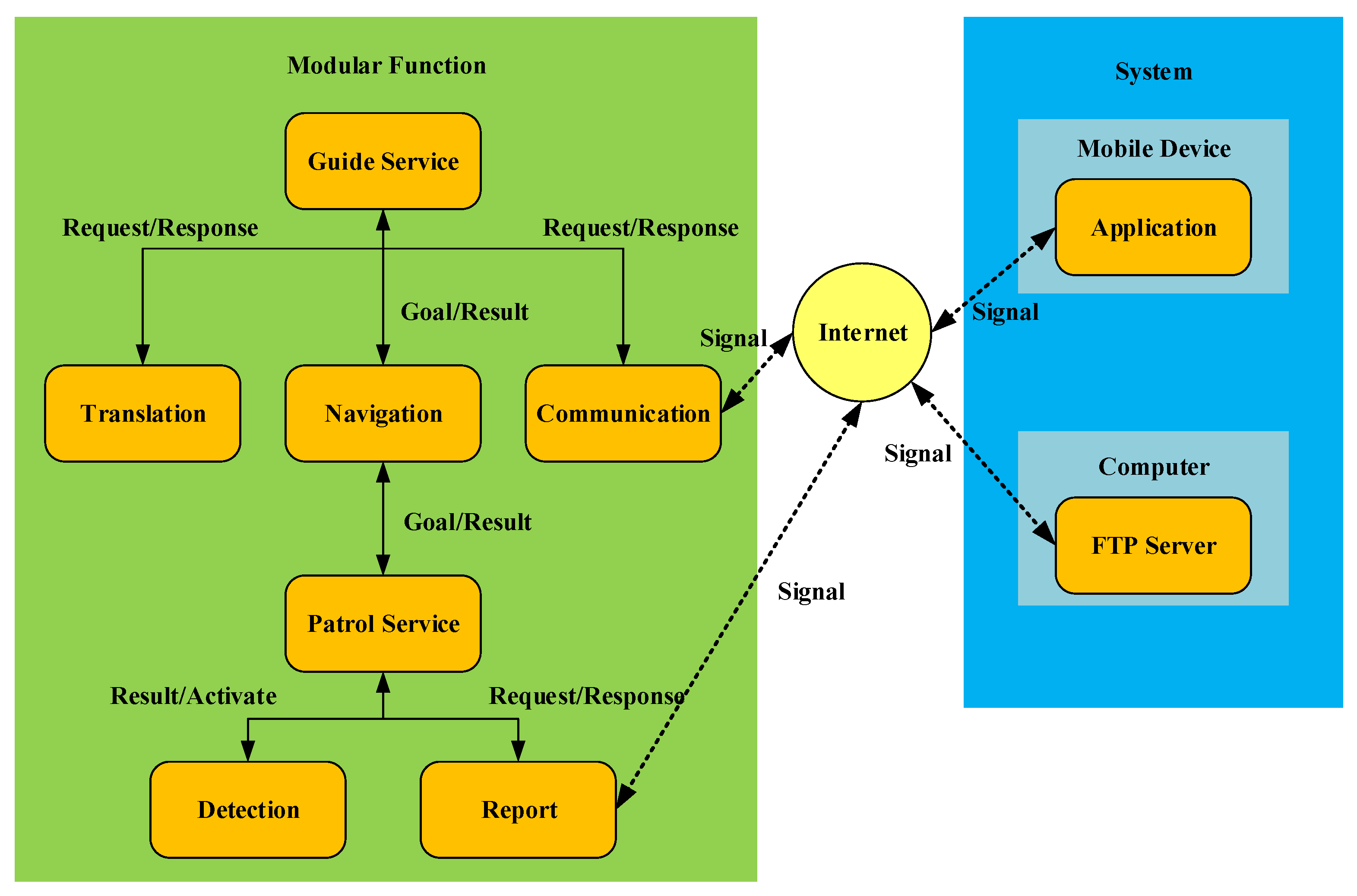

3.1. System Structure

3.2. Pre-Processing Work

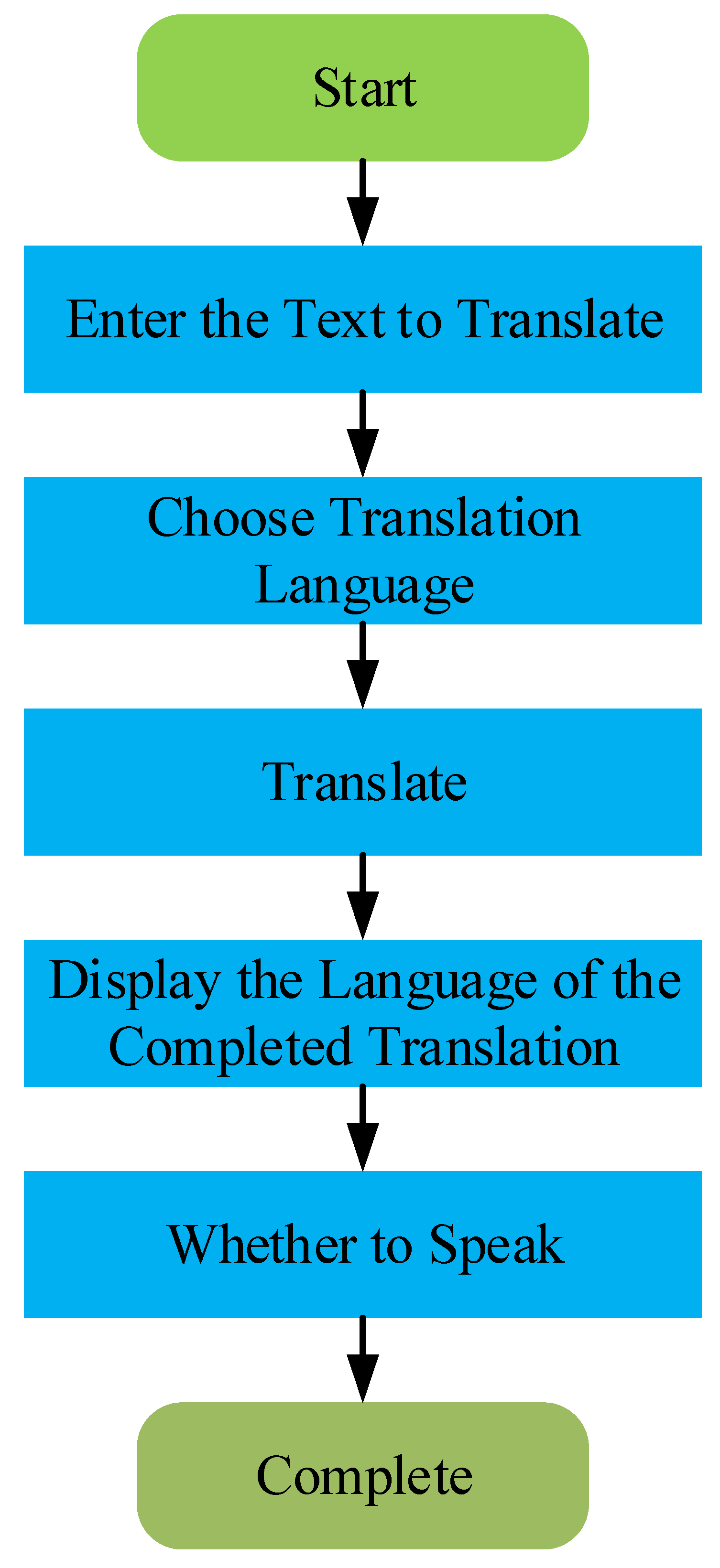

3.3. Emergency Contact and Translation Service

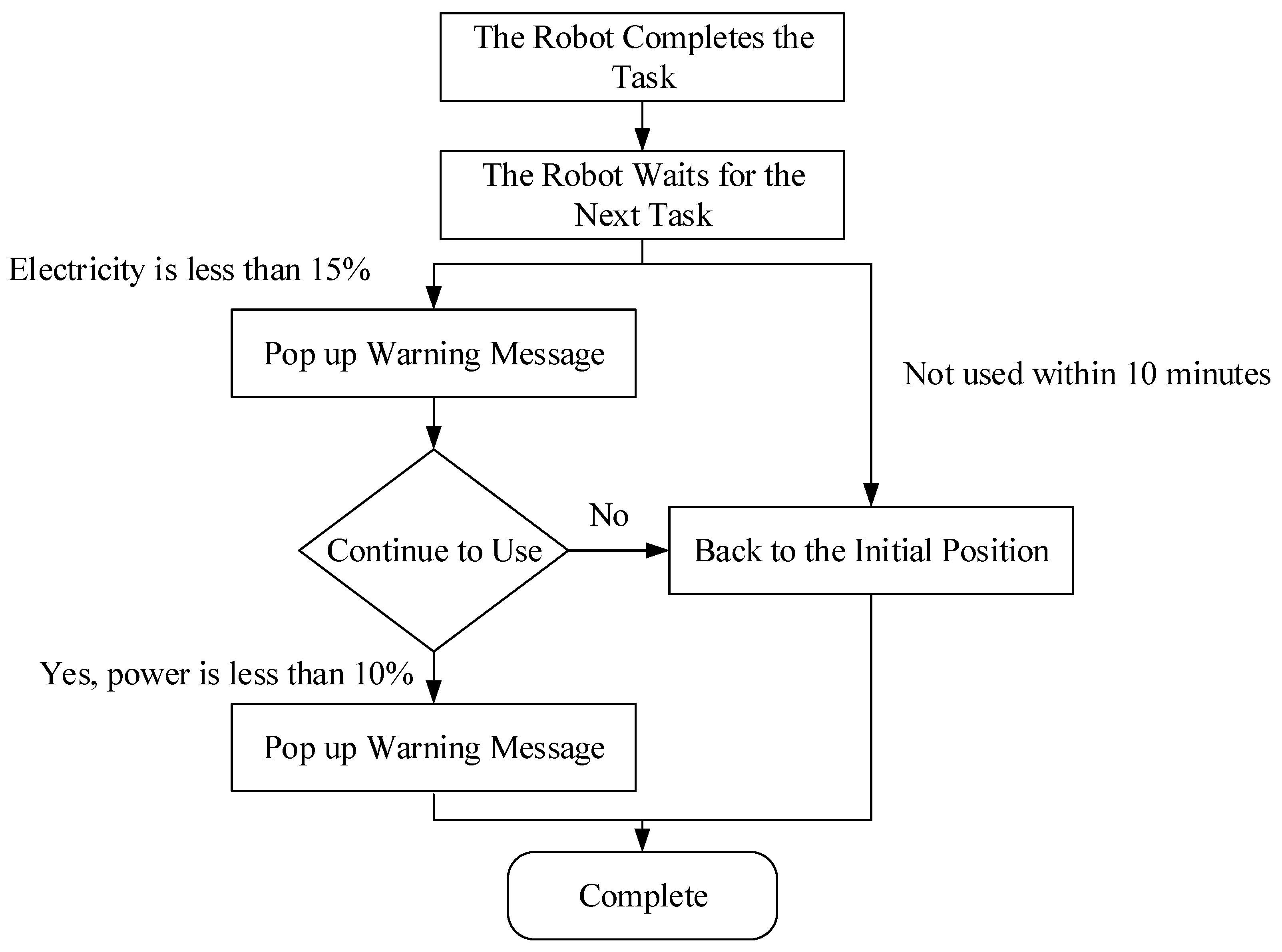

3.4. Return to the Initial Position

3.5. Obstacle Detection Report System

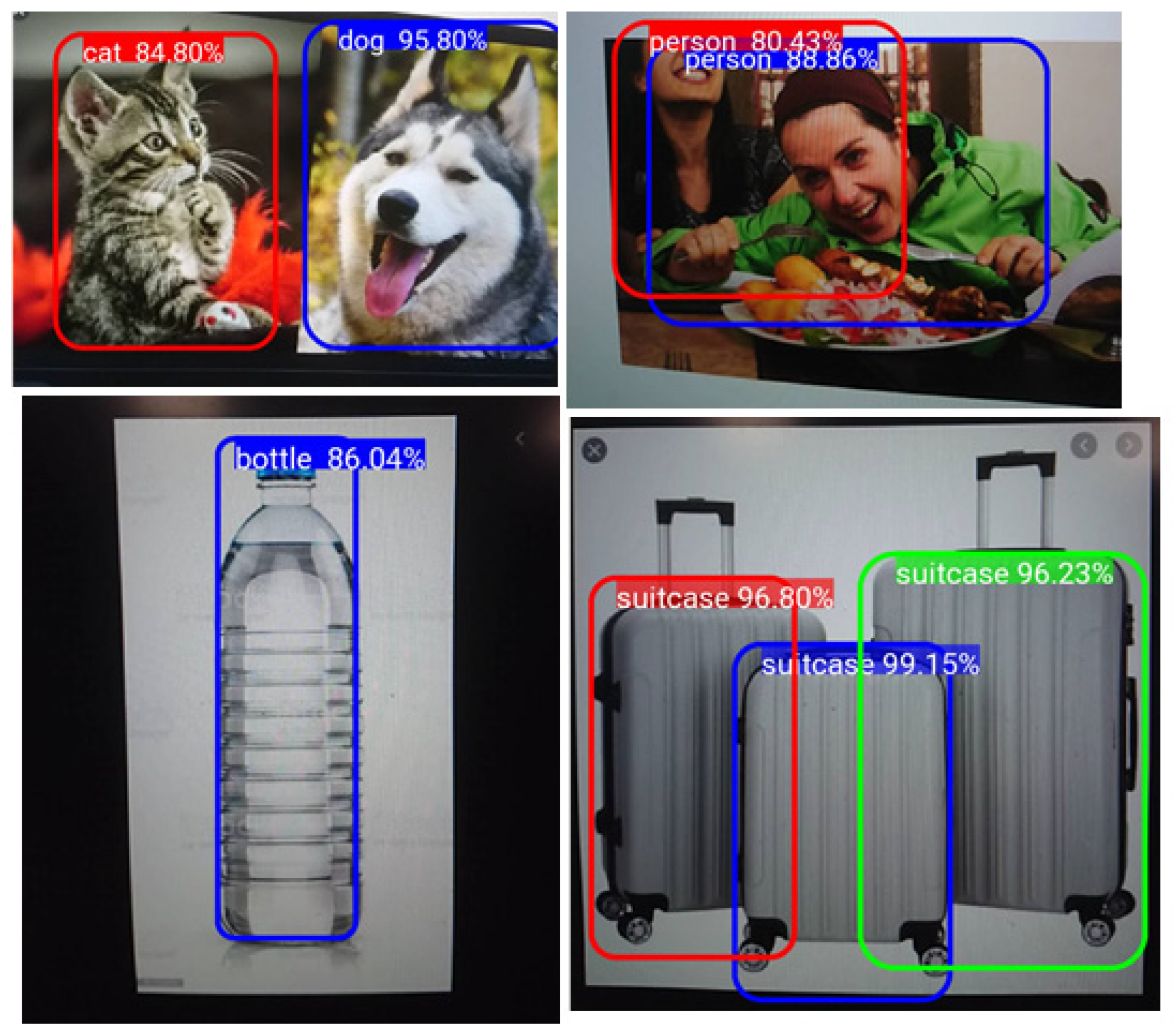

3.6. Face Recognition and Object Detection

4. Experimental Result

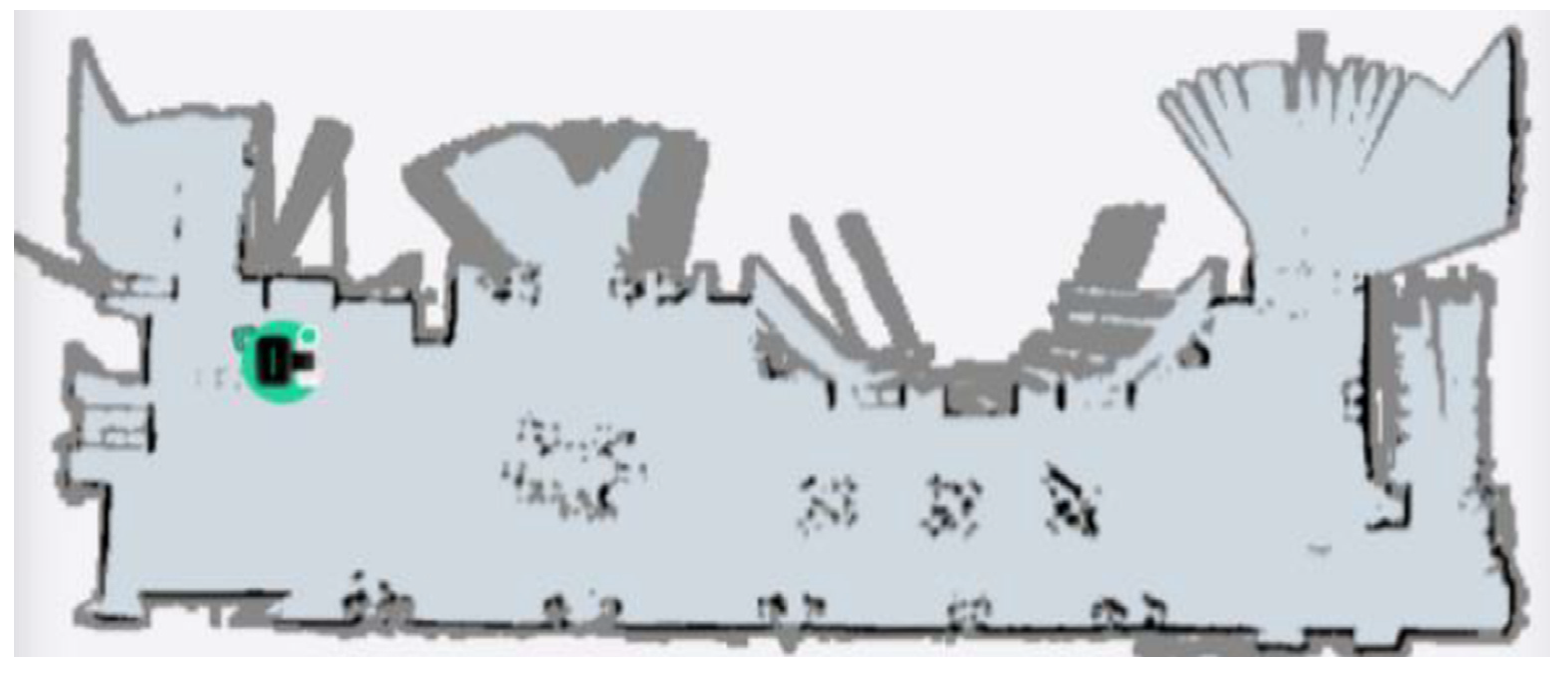

4.1. Map Building

4.2. Location Guide

4.3. Recognition Result

4.4. Human-Computer Interaction

5. Discussion and Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Siao, C.-Y.; Chien, T.-H.; Chang, R.-G. Robot Scheduling for Assistance and Guidance in Hospitals. Appl. Sci. 2022, 12, 337. https://doi.org/10.3390/app12010337

Siao C-Y, Chien T-H, Chang R-G. Robot Scheduling for Assistance and Guidance in Hospitals. Applied Sciences. 2022; 12(1):337. https://doi.org/10.3390/app12010337

Chicago/Turabian Style: Siao, Cheng-Yan, Ting-Hsuan Chien, and Rong-Guey Chang. 2022. "Robot Scheduling for Assistance and Guidance in Hospitals" Applied Sciences 12, no. 1: 337. https://doi.org/10.3390/app12010337

APA Style: Siao, C.-Y., Chien, T.-H., & Chang, R.-G. (2022). Robot Scheduling for Assistance and Guidance in Hospitals. Applied Sciences, 12(1), 337. https://doi.org/10.3390/app12010337