Industrial assembly groups individual parts and fits them together to create finished commodities with substantial added value. Assembly is therefore an important step connecting manufacturing processes and business processes. Training is essential for technicians to improve their assembly skills, and effective training can increase the efficiency and quality of assembly tasks. Consequently, many businesses and researchers have paid attention to assembly training [1]. In traditional assembly training, trainees need repeated practice to improve their skills, which consumes considerable resources. Furthermore, it is difficult to evaluate the standard and achievement of trainees’ operations during traditional assembly training. These problems can now be addressed by augmented reality (AR) technology.

AR is a novel human-computer interaction (HCI) technique. It enables users to experience a real world in which virtual and real objects coexist, and to interact with them in real time. Over the past two decades, AR applications have been a trending research topic in many areas, such as education, entertainment, medicine, and industry [2,3]. Volkswagen intended to use AR to compare calculated crash test imagery with the actual case [4]. Fuchs et al. [5] developed an optical see-through augmentation for laparoscopic surgery that could simulate the view of the laparoscopes from small incisions. Pokémon GO [6] enabled users to capture and battle different virtual Pokémon in a real environment using a mobile phone. Liarokapis et al. [7] employed screen-based AR to assist engineering education based on the Construct3D tool. AR is well suited to assisting assembly training because of its low time cost and high effectiveness: it allows trainees to conduct real-time assembly training tasks at any place or time at minimal cost [8,9,10,11]. Furthermore, in an augmented environment, trainees can analyze their behaviors and achievements according to virtual information and real feedback. Through AR interaction, trainees can obtain intuitive data to standardize their training operations.

Currently, there are no commercial products for AR-assisted assembly training (ARAAT), so many research works have focused on it. Early ARAAT studies mainly concentrated on marker-based tools or gloves. Wang et al. [12] established an AR assembly workspace that enabled trainees to assemble virtual objects with real marked tools. Valentini [13] developed a system that allowed trainees to assemble virtual components using a glove with sensors. These methods can be accurate and intuitive, but the devices are costly. Recently, benefiting from the rapid development of computer vision, researchers have increasingly focused on vision-based bare-hand ARAAT, which is natural and intuitive and relies on low-cost cameras [14,15,16].

Most ARAAT tasks are conducted through real-time hand operations: trainees use their real hands to operate virtual workpieces. Precise gesture recognition therefore plays an important role in bare-hand ARAAT, and also in evaluating the standard and achievement of training tasks. Lee et al. [17] applied hand orientation estimation and collision recognition between trainees’ hands and virtual objects. They proposed a hand interaction technique that ensured a seamless experience for trainees in the AR environment. Nevertheless, the precision of their method depended on the distance between trainees’ hands and the stereo cameras. As a result, calculation errors occurred when only one finger was used, and its application was limited. Wang [18] proposed a Restricted Coulomb Energy network to segment hands for AR bare-hand assembly. Virtual objects were controlled by two fingertips in the experiment to simulate assembly tasks. Because the fingertip tracking algorithms were implemented in 2D space without depth information, the recognition accuracy was low. Most current studies on bare-hand ARAAT have achieved low recognition accuracy. Hence, more effort has been made to raise recognition precision. Choi [19] developed a hand-based AR mobile phone interface that executed “grasping” and “releasing” gestures with virtual objects. The interface provided natural interaction by benefiting from hand detection, palm pose estimation, and finger gesture recognition. Figueiredo et al. [20] evaluated interactions with virtual objects in tabletop applications using hand tracking and gesture recognition. During the interaction, they applied the “grasping” and “releasing” gestures and used the Kinect device for hand tracking. These studies increased the recognition rate through various image processing methods, but the interaction is confined to a few types of gestures. Even the HoloLens (1st Generation) [21], an up-to-date AR device broadly used in numerous AR applications, is limited to only two operation gestures: “pointing” and “blooming”.

Limited types of interaction gestures are not only inadequate for practical industrial assembly tasks, but also give trainees an unnatural experience. In ARAAT, providing a realistic and natural experience when performing assembly tasks is as significant an issue as precise gesture recognition [22,23,24]. Aside from inadequate gestures, a long response time also makes the interaction experience in ARAAT unnatural. Thus, many studies have focused on early recognition, predicting or estimating gestures to reduce the response time and make assembly operations feel natural. Zhu et al. [25] proposed a progressive filtering approach to predict ongoing human tasks and ensure a natural and friendly interaction. Du et al. [26] predicted gestures using improved particle filters to accomplish welding, painting, and stamping tasks. With the help of additional physical properties of 3D virtual objects, Imbert et al. [27] found a more natural approach to performing assembly tasks, and their results showed that trainees could perform assembly tasks easily.

A remaining problem is that current studies on bare-hand ARAAT mostly focus on single gesture recognition rather than evaluation of the whole assembly task. Compared with single gesture recognition, whole-task evaluation can analyze trainees’ operations overall and is more helpful for improving the standard and achievement of their operations. However, whole-task evaluation remains a challenge because a whole assembly task contains many different gestures that are difficult to distinguish. Directly evaluating the performance of a whole ARAAT task is complicated because only limited types of gestures can be recognized and the recognition accuracy is not high.

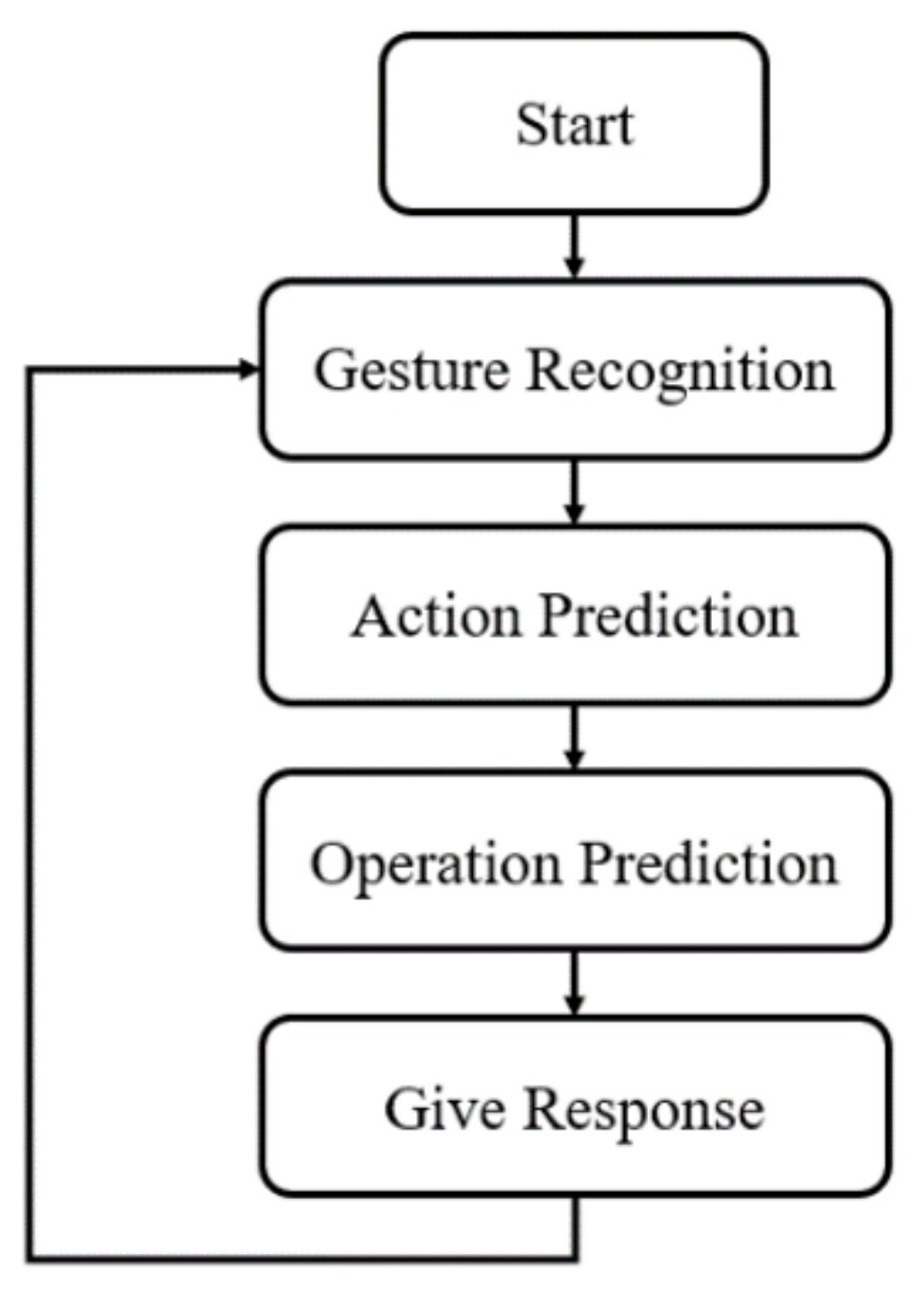

Based on the related ARAAT studies mentioned thus far, ARAAT has the following areas of improvement: (1) lack of the whole complex assembly task evaluation, (2) limitation of interaction gestures, (3) low recognition accuracy, and (4) unnatural interaction experiences resulting from long response time. With the aim of resolving these problems, in this paper, we develop an ARAAT system. The flowchart of ARAAT is shown in

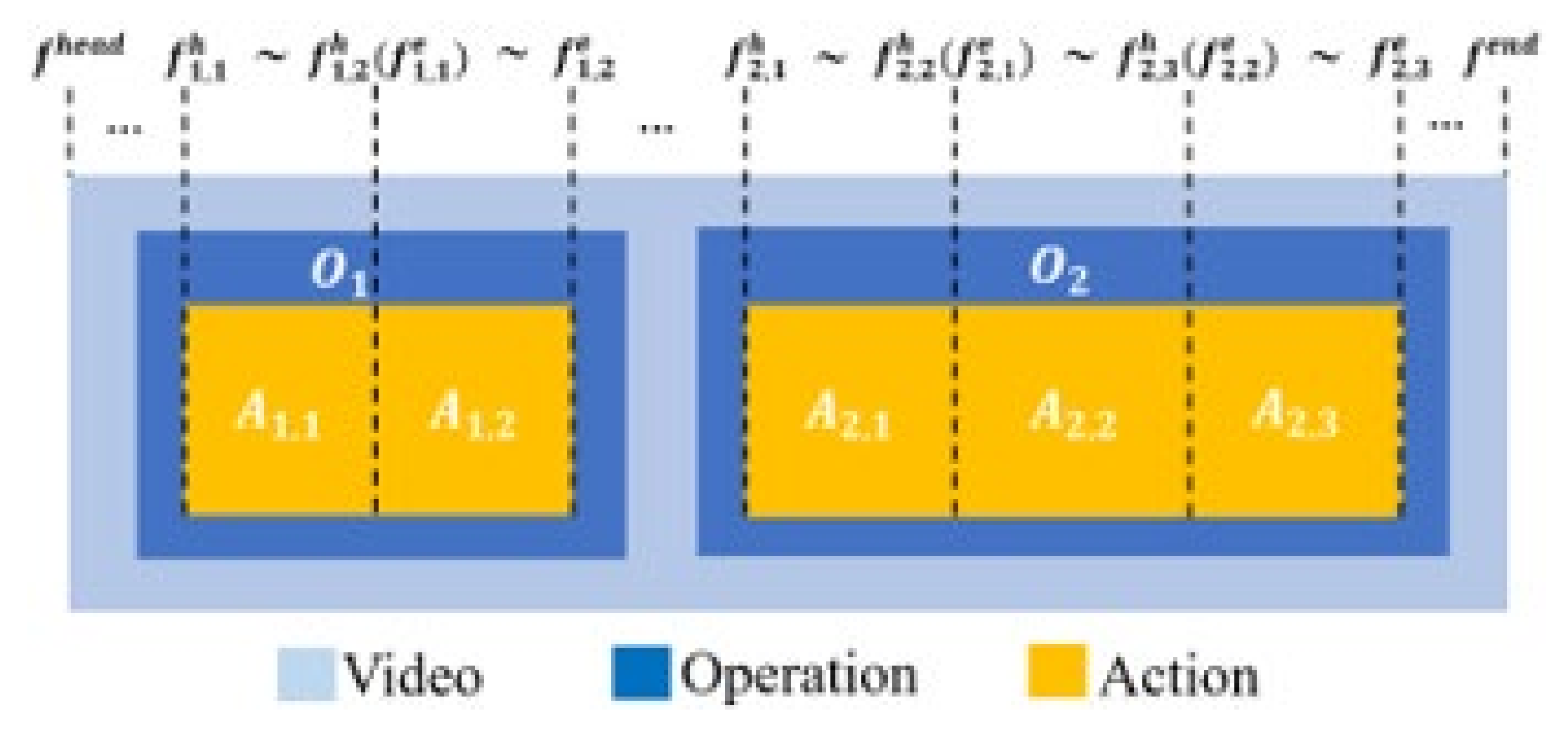

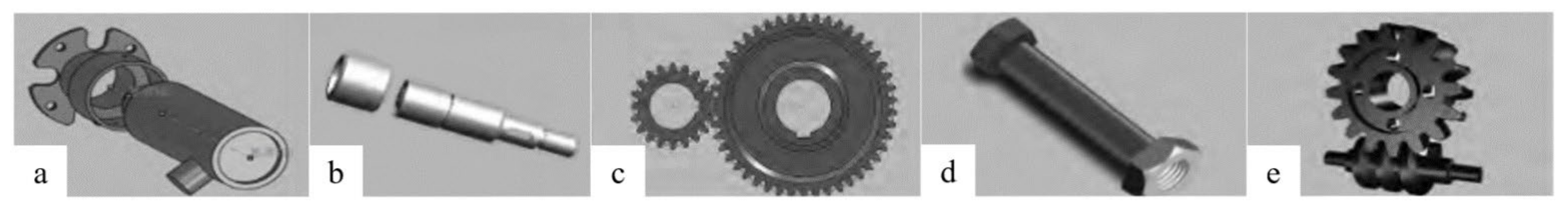

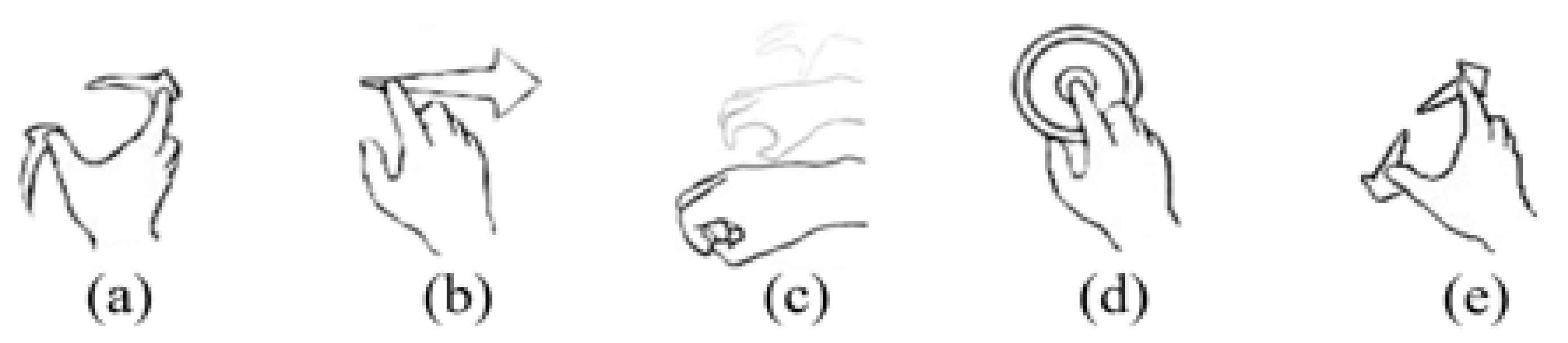

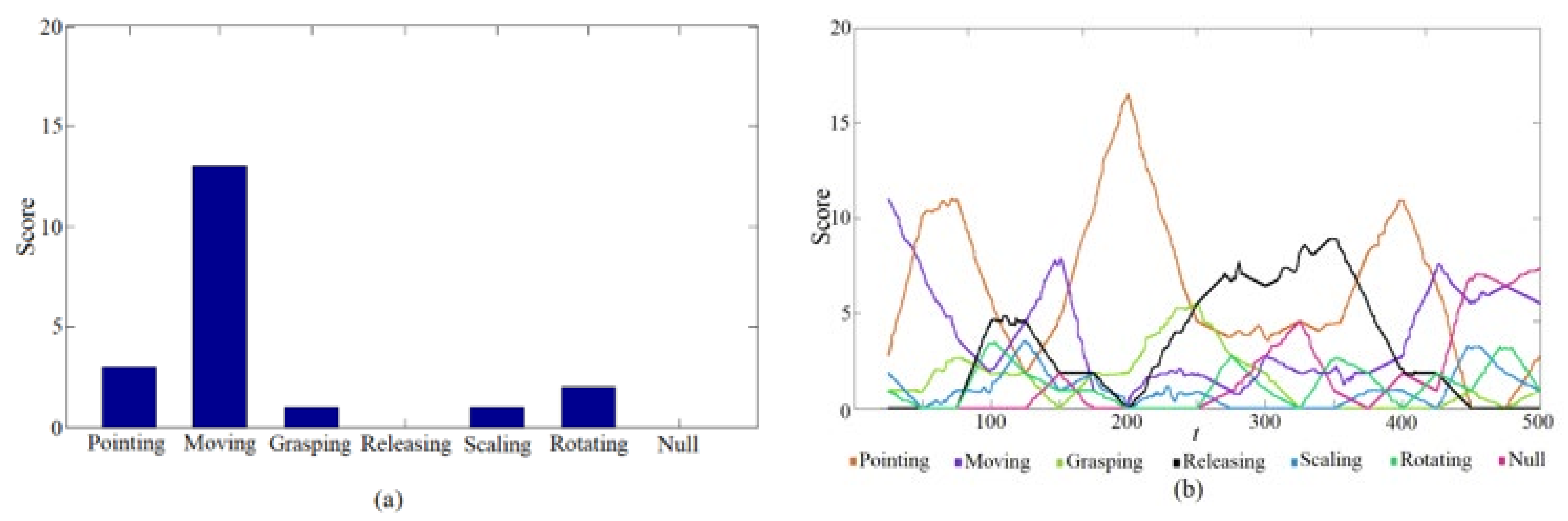

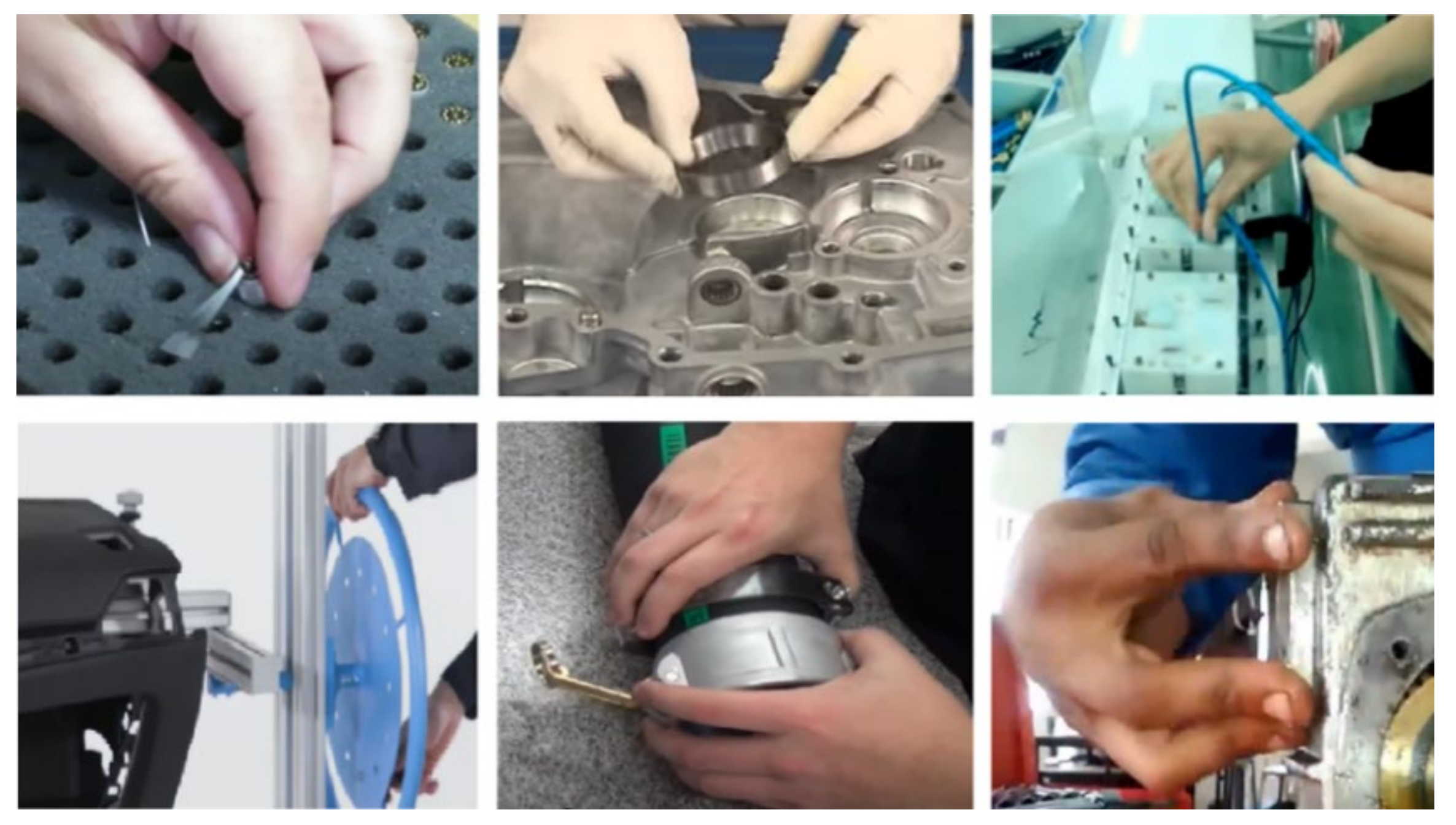

Figure 1. Trainees choose the ARAAT tasks and conduct the corresponding operations. The AR device (HoloLens) records trainees’ gesture videos during the tasks and the multimodal features are extracted from the videos. After classification, these multimodal features are used for gesture segmentation and optimization. After recognition with the optimal gesture boundaries, the gesture results will be used to evaluate the standard and achievement of hand operations in ARAAT tasks. In ARAAT, we have made the following contributions: (1) Building a model for the whole complex assembly task evaluation. We decompose an ARAAT task into a series of hand operations. Each hand operation is further decomposed into several continuous actions. Each action can be considered as an identifiable gesture. Using the classification and sequences of gestures, we can easily distinguish actions and predict operations to evaluate the performance of ARAAT tasks. (2) Increasing the types of interaction gestures. We generalize three typical operations and six standard actions based on practical industrial assembly tasks. (3) Improving the recognition accuracy. For evaluating the standard and achievement of hand operations in ARAAT tasks, an algorithm for gesture recognition is proposed in this paper to improve recognition accuracy and efficiency. The ARAAT task is recorded into an input video by an AR device. To ensure precise interactions for trainees to work with virtual workpieces by real hands (empty hands or using assembly kits), virtual workpieces must match correctly to hands or tools according to spatial-temporal consistency. Based on the spatial-temporal consistency, we use Zernike moments combined histogram of oriented gradient and linear interpolation motion trajectories to simultaneously represent 2D static and 3D dynamic features, respectively. The directional pulse-coupled neural network is chosen as the classifier to recognize gestures. 
To reduce the computational cost, we define an action unit to reduce the dimensions of features. The score probability density distribution is defined and applied to optimize gesture boundaries iteratively to decrease the interference of invalid gestures during gesture recognition. (4) Decreasing the response time. We proposed an action and operation prediction method based on the standard operation order. The prediction method can early recognize the action and operation to reduce the response time and ensure a natural experience in ARAAT.
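The hierarchical decomposition in contribution (1) and the order-based prediction in contribution (4) can be illustrated with a minimal sketch. It is not the method developed in this paper: the operation names, action names, and action orders below are hypothetical placeholders (the actual three operations and six actions are defined in later sections), and simple prefix matching stands in for the proposed prediction method.

```python
# Hypothetical standard action orders for three illustrative operations.
# These names are assumptions for illustration only, not the paper's categories.
STANDARD_OPERATIONS = {
    "insert": ("reach", "grasp", "move", "release"),
    "screw": ("reach", "grasp", "rotate", "release"),
    "press": ("reach", "push"),
}


def candidate_operations(observed_actions):
    """Return operations whose standard order starts with the observed actions."""
    observed = tuple(observed_actions)
    return sorted(
        name
        for name, order in STANDARD_OPERATIONS.items()
        if order[: len(observed)] == observed
    )


def next_actions(observed_actions):
    """Return the actions that may follow, given the standard orders."""
    observed = tuple(observed_actions)
    followers = set()
    for order in STANDARD_OPERATIONS.values():
        # Only operations consistent with the observed prefix can contribute.
        if order[: len(observed)] == observed and len(order) > len(observed):
            followers.add(order[len(observed)])
    return sorted(followers)


if __name__ == "__main__":
    seen = ["reach", "grasp"]
    print(candidate_operations(seen))  # operations still consistent with the prefix
    print(next_actions(seen))          # actions expected next under those operations
```

In this toy setting, an operation is identified as soon as the recognized action prefix matches only one standard order, which is the intuition behind recognizing operations before they are completed. A sequence that matches no standard order yields an empty candidate list and could be flagged as a non-standard operation.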

The remainder of this paper is organized as follows:

Section 2 describes the modeling for ARAAT;

Section 3 presents the action categories, action recognition, and operation prediction;

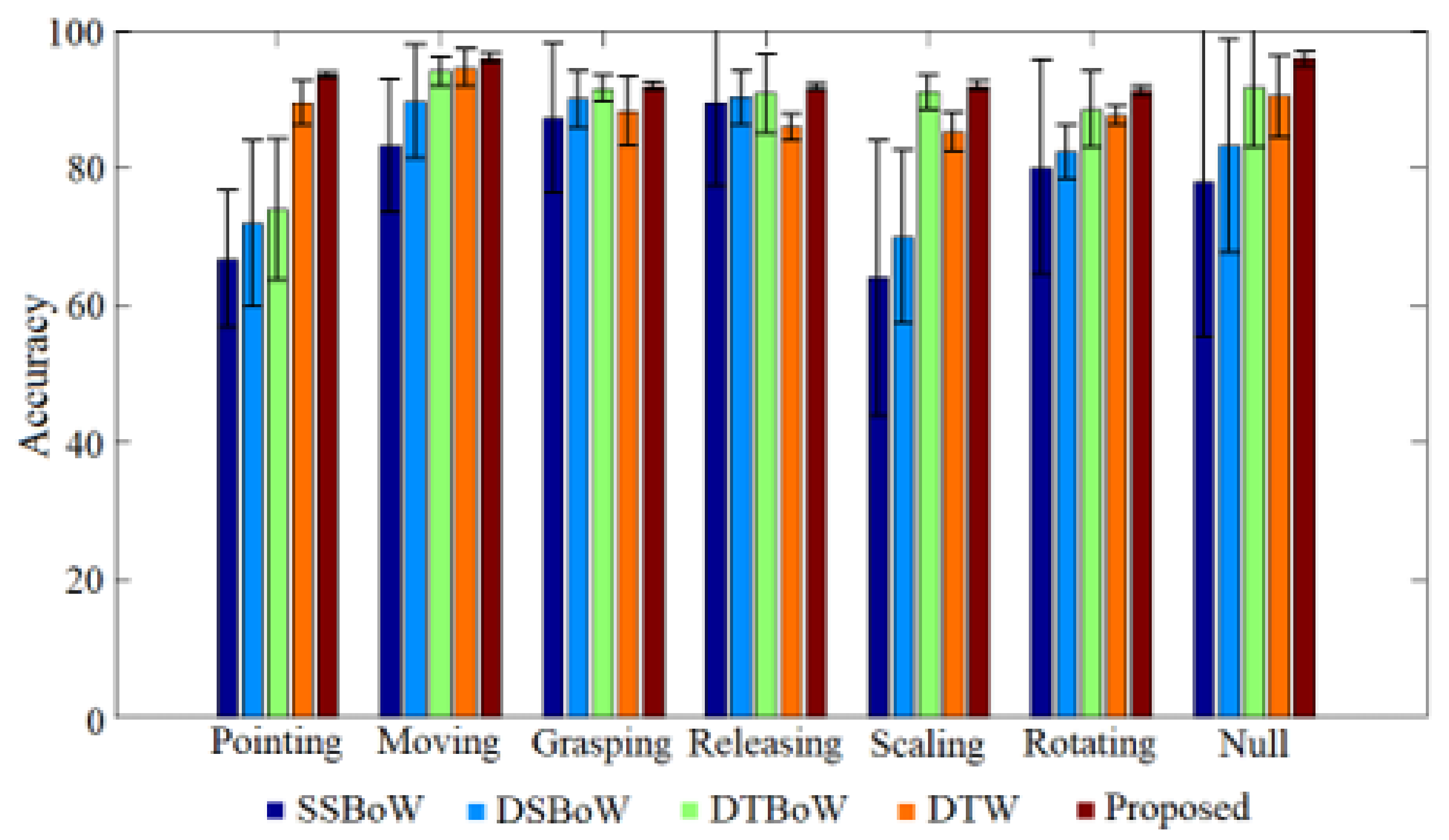

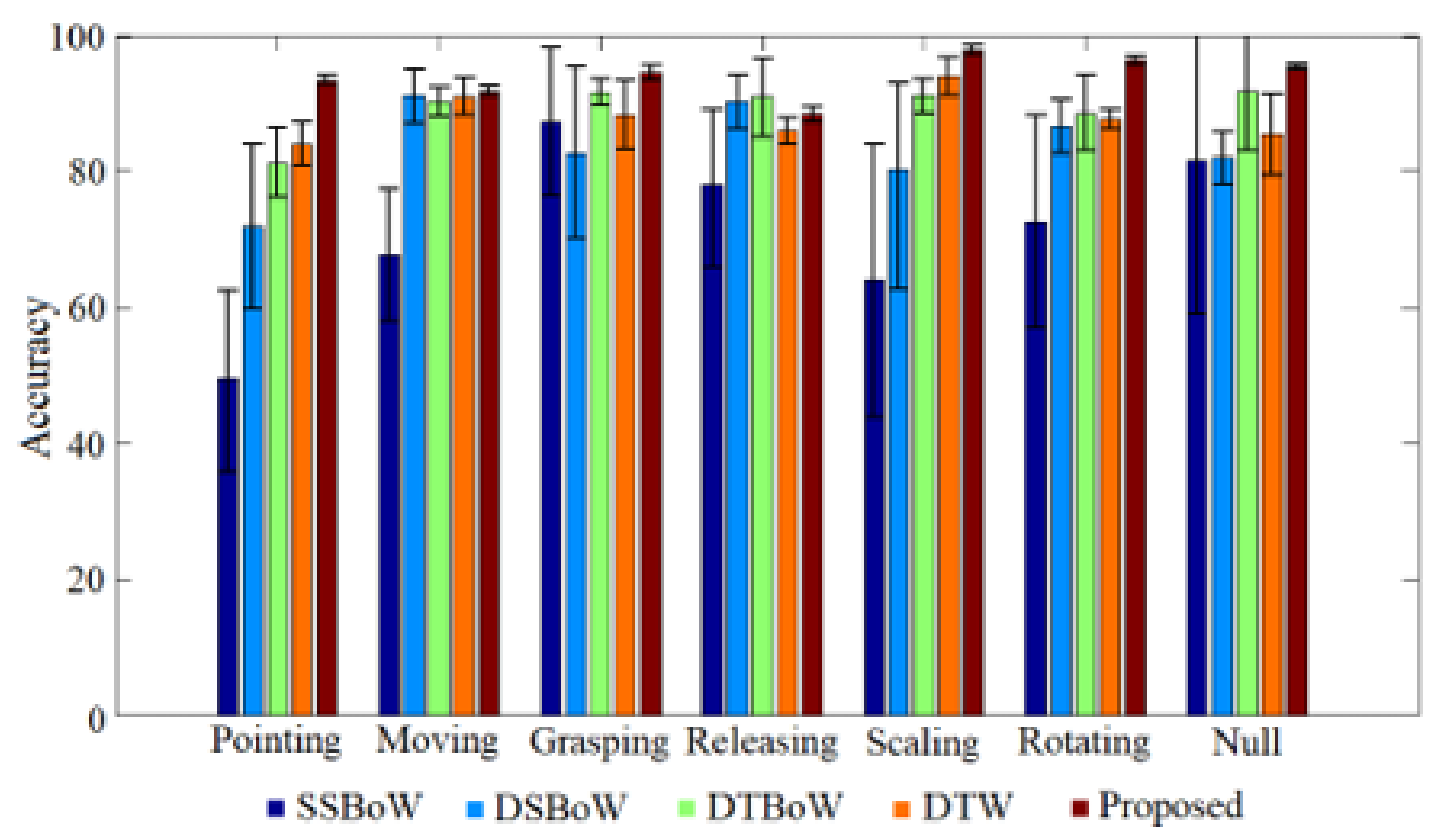

Section 4 details the experimental results on a self-collected dataset, compares them with other algorithms, and presents the experimental analysis;

Section 5 provides a short conclusion and suggestions for future research.