UAV Detection with Transfer Learning from Simulated Data of Laser Active Imaging

Abstract

1. Introduction

- (1)

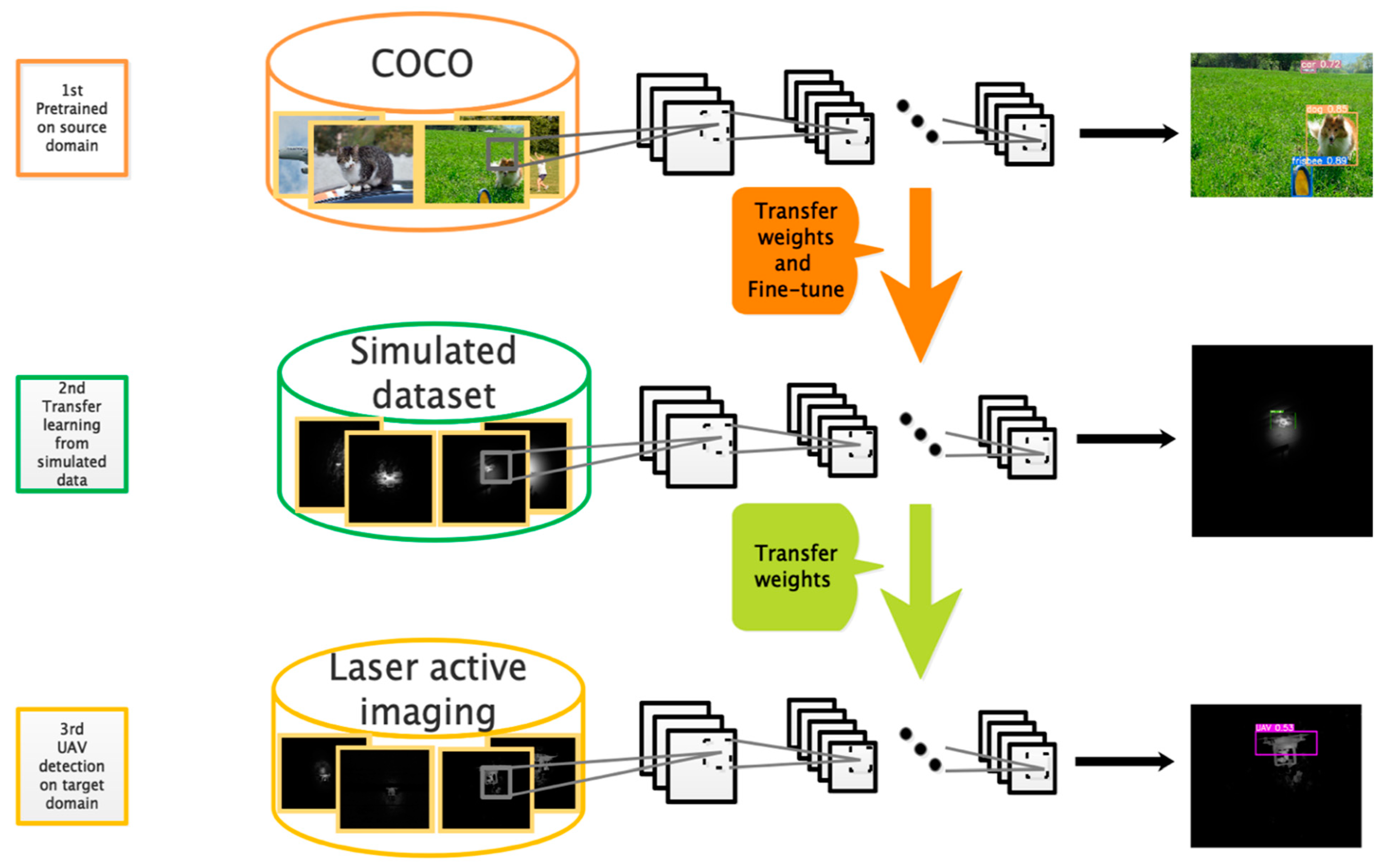

- A real-time UAV detection framework is established based on a CNN cooperating with transfer learning. To the best of our knowledge, this is the first study to analyze the problem of zero-shot object detection in the laser active imaging domain.

- (2)

- A dataset is constructed by simulating the process of laser active imaging. The knowledge learned from the simulated dataset is beneficial to UAV detection in real data.

- (3)

- We experimentally show that our algorithm can realize a high-precision UAV detection for our laser active imaging system, which proves the authenticity of the simulated data and the success of our solution.

2. Related Work

2.1. Laser Active Imaging

2.2. Object Detection

2.3. Transfer Learning

3. Data Simulation

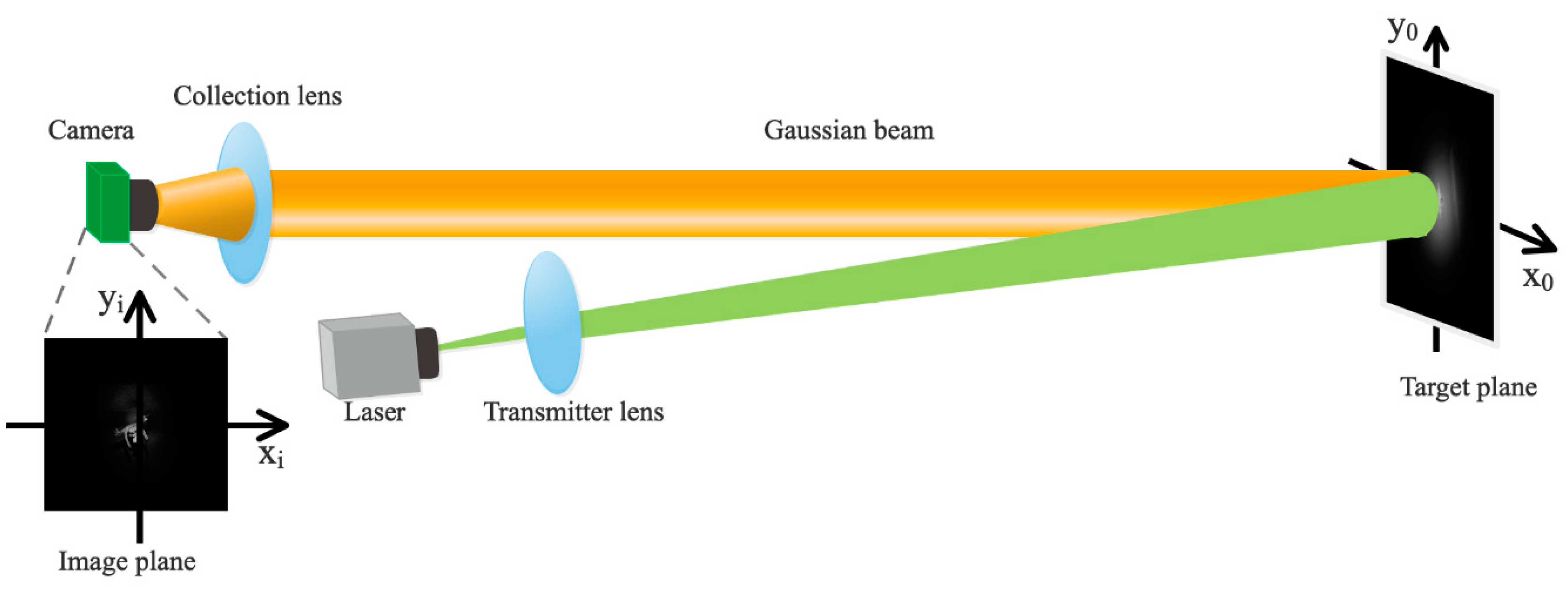

3.1. Coherent Imaging

3.2. Incoherent Imaging

4. Methodology

4.1. Principle of YOLO

4.2. Network Structure

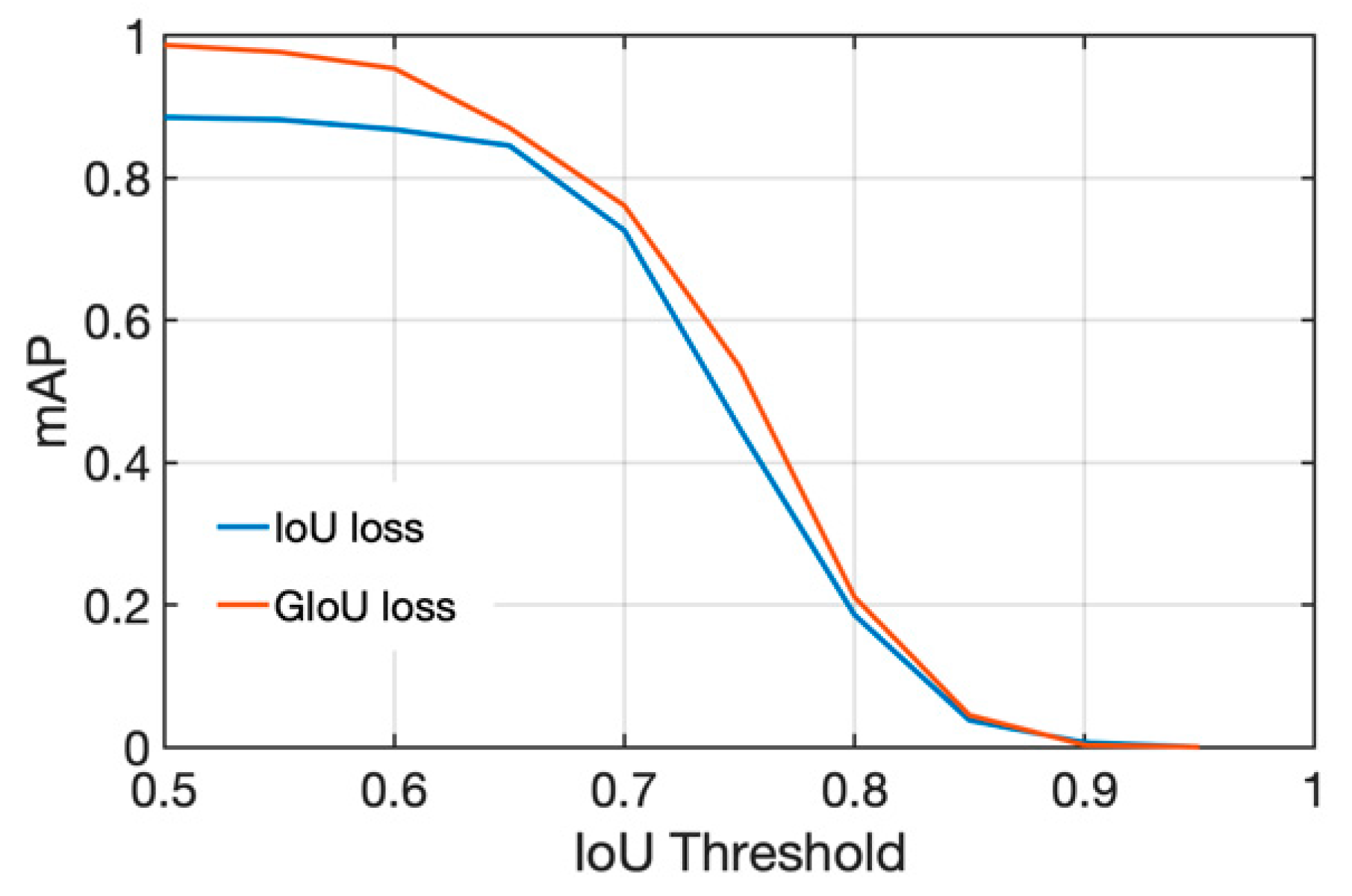

4.3. GIoU Loss

4.4. Dataset

- (1) Simulated dataset: This dataset consists of simulated laser active illumination images generated with the method described in Section 3. First, 744 natural images of UAVs in different scenes are collected with a camera. Then, from each natural image, ten simulated images with different illumination centers $(x_c, y_c)$ and spot sizes $w(z)$ are generated under each of the two modes, coherent imaging and incoherent imaging. The illumination center and spot size are chosen at random, subject to the constraint that the illuminated area covers the UAV target and does not extend beyond the image edge.

- (2) Real dataset: We first build our laser active imaging system as shown in Figure 2. A continuous-wave laser serves as the illumination source. The laser beam is collimated and expanded by the transmitting lens and then illuminates the target. The returned signal passes through the collection lens and is captured by an intensified CCD camera. Detailed parameters of the camera and laser are listed in Table 2. The setup and the experimental scene are shown in the left and right panels of Figure 5, respectively. The transmitting and receiving equipment is mounted on a turntable to facilitate scene scanning and subsequent tracking and monitoring. Using this system, we collect three laser active imaging videos of UAVs in different scenes, including city, forest, and sky, with the UAV 100–500 m from the imaging system. We then extract 861 images from these videos to form the real dataset.
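
The random spot selection described for the simulated dataset can be sketched as rejection sampling. This is a minimal sketch under assumptions not stated in this section: the "illuminated area" is taken to be the disc of radius $w$ around $(x_c, y_c)$, the beam profile is the Gaussian $I \propto \exp(-2r^2/w^2)$, and coherent imaging is modeled as fully developed speckle (intensity multiplied by a unit-mean negative-exponential variable). The actual simulation in Section 3 may differ, and the function names `sample_spot` and `illuminate` are hypothetical.

```python
import math
import random

def sample_spot(img_w, img_h, box, rng, max_tries=10_000):
    """Draw (xc, yc, w) so that a disc of radius w centred at (xc, yc)
    covers the UAV box (x0, y0, x1, y1) and lies inside the image.
    Assumption: the illuminated area is modeled as this disc."""
    x0, y0, x1, y1 = box
    for _ in range(max_tries):
        xc, yc = rng.uniform(0, img_w), rng.uniform(0, img_h)
        # A disc covers an axis-aligned box iff it covers all four corners.
        w_min = max(math.hypot(x - xc, y - yc) for x in (x0, x1) for y in (y0, y1))
        # Largest radius that keeps the disc inside the image borders.
        w_max = min(xc, img_w - xc, yc, img_h - yc)
        if w_min <= w_max:
            return xc, yc, rng.uniform(w_min, w_max)
    raise RuntimeError("no valid illumination spot found for this box")

def illuminate(gray, xc, yc, w, coherent, rng):
    """Weight a grayscale image (list of rows, values 0-255) by an assumed
    Gaussian beam profile; for coherent imaging also apply speckle."""
    out = []
    for y, row in enumerate(gray):
        new_row = []
        for x, pixel in enumerate(row):
            r2 = (x - xc) ** 2 + (y - yc) ** 2
            value = pixel * math.exp(-2.0 * r2 / (w * w))
            if coherent:
                # Assumed speckle model: unit-mean negative-exponential factor.
                value *= rng.expovariate(1.0)
            new_row.append(min(255.0, value))
        out.append(new_row)
    return out

# Usage: ten random spots per natural image for each imaging mode.
rng = random.Random(0)
image = [[128] * 64 for _ in range(48)]   # placeholder 64 x 48 grayscale image
uav_box = (28, 20, 36, 28)                # hypothetical UAV bounding box
simulated = []
for coherent in (True, False):
    for _ in range(10):
        xc, yc, w = sample_spot(64, 48, uav_box, rng)
        simulated.append(illuminate(image, xc, yc, w, coherent, rng))
print(len(simulated))  # 20
```

Rejection sampling keeps the two constraints explicit: any center for which the smallest covering radius exceeds the largest in-image radius is simply redrawn.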

4.5. Training Protocol

5. Experimental Results

5.1. Model Initialization

5.2. Transferability of Simulated Data

5.3. GIoU Loss vs. IoU Loss

5.4. Comparison with the Previous Method

5.5. Experimental Results on Laser Active Imaging System

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Type | Repeats | Filters | Size | Output |
|---|---|---|---|---|
| Backbone | | | | |
| Focus | | 32 | 3 × 3 | 320 × 320 |
| Convolutional | | 64 | 3 × 3 | 160 × 160 |
| BottleneckCSP | | 64 | 1 × 1 + 3 × 3 | 160 × 160 |
| Convolutional | | 128 | 3 × 3 | 80 × 80 |
| BottleneckCSP | 3 × | 128 | 1 × 1 + 3 × 3 | 80 × 80 |
| Convolutional | | 256 | 3 × 3 | 40 × 40 |
| BottleneckCSP | 3 × | 256 | 1 × 1 + 3 × 3 | 40 × 40 |
| Convolutional | | 512 | 3 × 3 | 20 × 20 |
| SPP | | | | 20 × 20 |
| BottleneckCSP | | 512 | 1 × 1 + 3 × 3 | 20 × 20 |
| Head | | | | |
| Convolutional | | 512 | 1 × 1 | 20 × 20 |
| Upsample | | | 2 × 2 | 40 × 40 |
| Concatenation | | | | 40 × 40 |
| BottleneckCSP | | 256 | 1 × 1 + 3 × 3 | 40 × 40 |
| Convolutional | | 256 | 1 × 1 | 40 × 40 |
| Upsample | | | 2 × 2 | 80 × 80 |
| Concatenation | | | | 80 × 80 |
| BottleneckCSP | | 128 | 1 × 1 + 3 × 3 | 80 × 80 |
| Convolutional | | 128 | 3 × 3 | 40 × 40 |
| Concatenation | | | | 40 × 40 |
| BottleneckCSP | | 256 | 1 × 1 + 3 × 3 | 40 × 40 |
| Convolutional | | 256 | 3 × 3 | 20 × 20 |
| Concatenation | | | | 20 × 20 |
| BottleneckCSP | | 512 | 1 × 1 + 3 × 3 | 20 × 20 |
| Detection | | | | |
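
The Focus layer at the top of the table turns the input into a half-resolution, four-times-deeper tensor (a 3-channel image becomes 12 channels) by space-to-depth slicing before its 3 × 3 convolution. The sketch below shows that slicing on a single-channel map stored as nested lists, in the slicing order used by the Ultralytics YOLOv5 v1.0 Focus module. It also traces how each subsequent stride-2 convolution halves the map, giving the 80 × 80, 40 × 40 and 20 × 20 scales (strides 8, 16 and 32) at which the head produces its final feature maps. The 640 × 640 input size is an assumption inferred from the 320 × 320 Focus output, not stated here.

```python
def focus_slice(x):
    """Space-to-depth slicing of the Focus layer, shown for one channel.
    An H x W map (list of rows) becomes four (H/2) x (W/2) maps, in the order
    x[..., ::2, ::2], x[..., 1::2, ::2], x[..., ::2, 1::2], x[..., 1::2, 1::2]."""
    return [
        [row[0::2] for row in x[0::2]],  # even rows, even columns
        [row[0::2] for row in x[1::2]],  # odd rows, even columns
        [row[1::2] for row in x[0::2]],  # even rows, odd columns
        [row[1::2] for row in x[1::2]],  # odd rows, odd columns
    ]

def backbone_sizes(input_size=640, stride2_convs=4):
    """Spatial size after Focus and after each stride-2 3 x 3 convolution.
    input_size=640 is an assumption inferred from the 320 x 320 Focus output."""
    sizes = [input_size // 2]          # Focus halves the resolution
    for _ in range(stride2_convs):
        sizes.append(sizes[-1] // 2)   # each stride-2 conv halves it again
    return sizes

x = [[4 * r + c for c in range(4)] for r in range(4)]
print(focus_slice(x)[1])   # [[4, 6], [12, 14]]
print(backbone_sizes())    # [320, 160, 80, 40, 20]
```

Slicing instead of a strided convolution keeps every input pixel, merely moving spatial detail into channels.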

| Camera parameter | Value | Laser parameter | Value |
|---|---|---|---|
| No. of camera pixels | 1280 × 1024 | Wavelength | 532 nm |
| Pixel size | 4.8 μm | Power | 0–10 W |
| Frame rate | 210 fps | Divergence angle | 10 mrad |
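
With these parameters, a small-angle estimate gives the laser footprint over the 100–500 m working range and the physical size of the sensor. This is a sketch under stated assumptions: the 10 mrad value is treated as a full-angle divergence, and the beam's exit diameter (not given here, exposed as the hypothetical parameter `exit_diameter_m`) is neglected, so the footprint is $D(R) \approx \theta R$.

```python
def spot_diameter_m(distance_m: float, full_divergence_rad: float = 10e-3,
                    exit_diameter_m: float = 0.0) -> float:
    """Small-angle estimate of the illuminated spot diameter at a given range.
    Assumes full-angle divergence; exit_diameter_m is hypothetical (not in the source)."""
    return exit_diameter_m + full_divergence_rad * distance_m

def sensor_size_mm(cols: int = 1280, rows: int = 1024, pitch_um: float = 4.8):
    """Physical sensor width and height (mm) from pixel count and pixel pitch."""
    return cols * pitch_um / 1000.0, rows * pitch_um / 1000.0

for r in (100, 300, 500):
    print(f"range {r} m -> spot diameter about {spot_diameter_m(r):.1f} m")
print("sensor size (mm): %.3f x %.3f" % sensor_size_mm())
```

Under these assumptions the spot grows from about 1 m at 100 m to about 5 m at 500 m, and the 1280 × 1024 array of 4.8 μm pixels measures about 6.144 mm × 4.915 mm.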

| Data Composition | Precision | Recall | F1-Score | AP | AP50 | AP75 |
|---|---|---|---|---|---|---|
| Gray images | 0.7486 | 0.6592 | 0.7011 | 0.2442 | 0.6802 | 0.0893 |
| Coherent imaging | 0.9755 | 0.6667 | 0.7921 | 0.3815 | 0.8094 | 0.2560 |
| Incoherent imaging | 1.0000 | 0.8847 | 0.9388 | 0.5344 | 0.9870 | 0.5350 |
| 50% coherent and 50% incoherent | 0.9903 | 0.8516 | 0.9157 | 0.5200 | 0.9610 | 0.5240 |
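
The F1-score is the harmonic mean of precision and recall, $F_1 = 2PR/(P + R)$. As a quick consistency check, the snippet below recomputes it from the precision and recall columns of the table above (values copied from the table; the helper name is illustrative). The recomputed values agree with the reported F1-scores to four decimal places.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0 if both are 0)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# (precision, recall, reported F1) copied from the table above
rows = {
    "Gray images": (0.7486, 0.6592, 0.7011),
    "Coherent imaging": (0.9755, 0.6667, 0.7921),
    "Incoherent imaging": (1.0000, 0.8847, 0.9388),
    "50% coherent and 50% incoherent": (0.9903, 0.8516, 0.9157),
}

for name, (p, r, reported) in rows.items():
    print(f"{name:32s} computed F1 = {f1_score(p, r):.4f}  reported = {reported:.4f}")
```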

| Metric \ Training Set | Gray Images | Coherent Imaging | Incoherent Imaging | 50% Coherent and 50% Incoherent |
|---|---|---|---|---|
| PSNR | 20.03 | 21.70 | 22.54 | 22.22 |
| SSIM | 0.39 | 0.61 | 0.83 | 0.75 |
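
PSNR is conventionally defined as $\mathrm{PSNR} = 10 \log_{10}(\mathrm{MAX}^2 / \mathrm{MSE})$, in dB. The sketch below assumes that standard definition with 8-bit grayscale images of equal size stored as nested lists; which image pairs the table compares is not stated in this section, and SSIM (Wang et al., 2004) is omitted for brevity. As a sense of scale, 20 dB on 8-bit images corresponds to a mean squared error of $255^2/100 = 650.25$.

```python
import math

def psnr(img_a, img_b, max_val=255.0):
    """Peak signal-to-noise ratio (dB) between two equal-size grayscale images."""
    a = [p for row in img_a for p in row]
    b = [p for row in img_b for p in row]
    if len(a) != len(b) or not a:
        raise ValueError("images must be non-empty and the same size")
    mse = sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_val ** 2 / mse)

ref = [[100, 120], [140, 160]]
noisy = [[101, 119], [141, 159]]     # every pixel off by 1, so MSE = 1
print(f"{psnr(ref, noisy):.2f} dB")  # 48.13 dB
```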

| Loss \ Metric | AP | AP50 | AP75 |
|---|---|---|---|
| IoU | 0.489 | 0.886 | 0.448 |
| GIoU | 0.534 | 0.987 | 0.535 |
| Relative improvement | 9.20% | 11.4% | 19.4% |
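
For reference, GIoU as defined by Rezatofighi et al. (2019) subtracts from IoU the fraction of the smallest enclosing box $C$ not covered by the union of the two boxes, $\mathrm{GIoU} = \mathrm{IoU} - |C \setminus (A \cup B)| / |C|$, and the loss is $1 - \mathrm{GIoU}$. The sketch below assumes axis-aligned boxes in $(x_1, y_1, x_2, y_2)$ form with positive area; it illustrates the definition and is not the YOLOv5 implementation itself.

```python
def area(box):
    """Area of an (x1, y1, x2, y2) box; 0 for empty or inverted boxes."""
    x1, y1, x2, y2 = box
    return max(0.0, x2 - x1) * max(0.0, y2 - y1)

def iou_and_giou(a, b):
    """IoU and generalized IoU of two axis-aligned boxes with positive area."""
    inter = area((max(a[0], b[0]), max(a[1], b[1]), min(a[2], b[2]), min(a[3], b[3])))
    union = area(a) + area(b) - inter
    iou = inter / union
    # Smallest axis-aligned box enclosing both A and B.
    hull = area((min(a[0], b[0]), min(a[1], b[1]), max(a[2], b[2]), max(a[3], b[3])))
    return iou, iou - (hull - union) / hull

gt = (0.0, 0.0, 1.0, 1.0)
for pred in [(2.0, 0.0, 3.0, 1.0), (9.0, 0.0, 10.0, 1.0)]:
    iou, giou = iou_and_giou(gt, pred)
    print(f"pred={pred}: IoU loss={1 - iou:.3f}, GIoU loss={1 - giou:.3f}")
```

Both predictions miss the ground truth, so the IoU loss is 1.000 for each and gives no indication of which is closer; the GIoU loss (1.333 vs. 1.800) still grows with the distance, which is the usual motivation for preferring it in box regression.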

| Method | Precision | Recall | F1-Score | Device | FPS |
|---|---|---|---|---|---|
| HOG | 0.247 | 0.436 | 0.315 | Intel Core i7-7700K | 1.484 |
| DPM | 0.3404 | 0.598 | 0.434 | Intel Core i7-7700K | 0.816 |
| YOLOv3 | 1.000 | 0.888 | 0.941 | GeForce GTX 1080 | 29.412 |
| YOLOv5s | 1.000 | 0.884 | 0.938 | GeForce GTX 1080 | 104.167 |
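
Frame rate and per-frame processing time are reciprocals, so the FPS column can also be read as latency, $t = 1000 / \mathrm{FPS}$ in milliseconds. The sketch assumes the FPS figures are single-image throughput on the listed devices, which this section does not specify; the helper name is illustrative.

```python
def latency_ms(fps: float) -> float:
    """Per-frame processing time in milliseconds for a given frame rate."""
    return 1000.0 / fps

# FPS values copied from the table above
fps = {"HOG": 1.484, "DPM": 0.816, "YOLOv3": 29.412, "YOLOv5s": 104.167}
for method, rate in fps.items():
    print(f"{method:8s} {rate:8.3f} FPS -> {latency_ms(rate):7.1f} ms/frame")

print(f"YOLOv5s vs YOLOv3 speed-up: {fps['YOLOv5s'] / fps['YOLOv3']:.2f}x")
```

This gives about 9.6 ms per frame for YOLOv5s against 34.0 ms for YOLOv3 on the same GPU (roughly 3.5 times faster); the HOG and DPM figures were measured on a CPU, so they are not a like-for-like comparison.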


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhang, S.; Yang, G.; Sun, T.; Du, K.; Guo, J. UAV Detection with Transfer Learning from Simulated Data of Laser Active Imaging. Appl. Sci. 2021, 11, 5182. https://doi.org/10.3390/app11115182
