Transfer Learning Algorithm of P300-EEG Signal Based on XDAWN Spatial Filter and Riemannian Geometry Classifier

Abstract

1. Introduction

2. Materials and Methods

2.1. Datasets

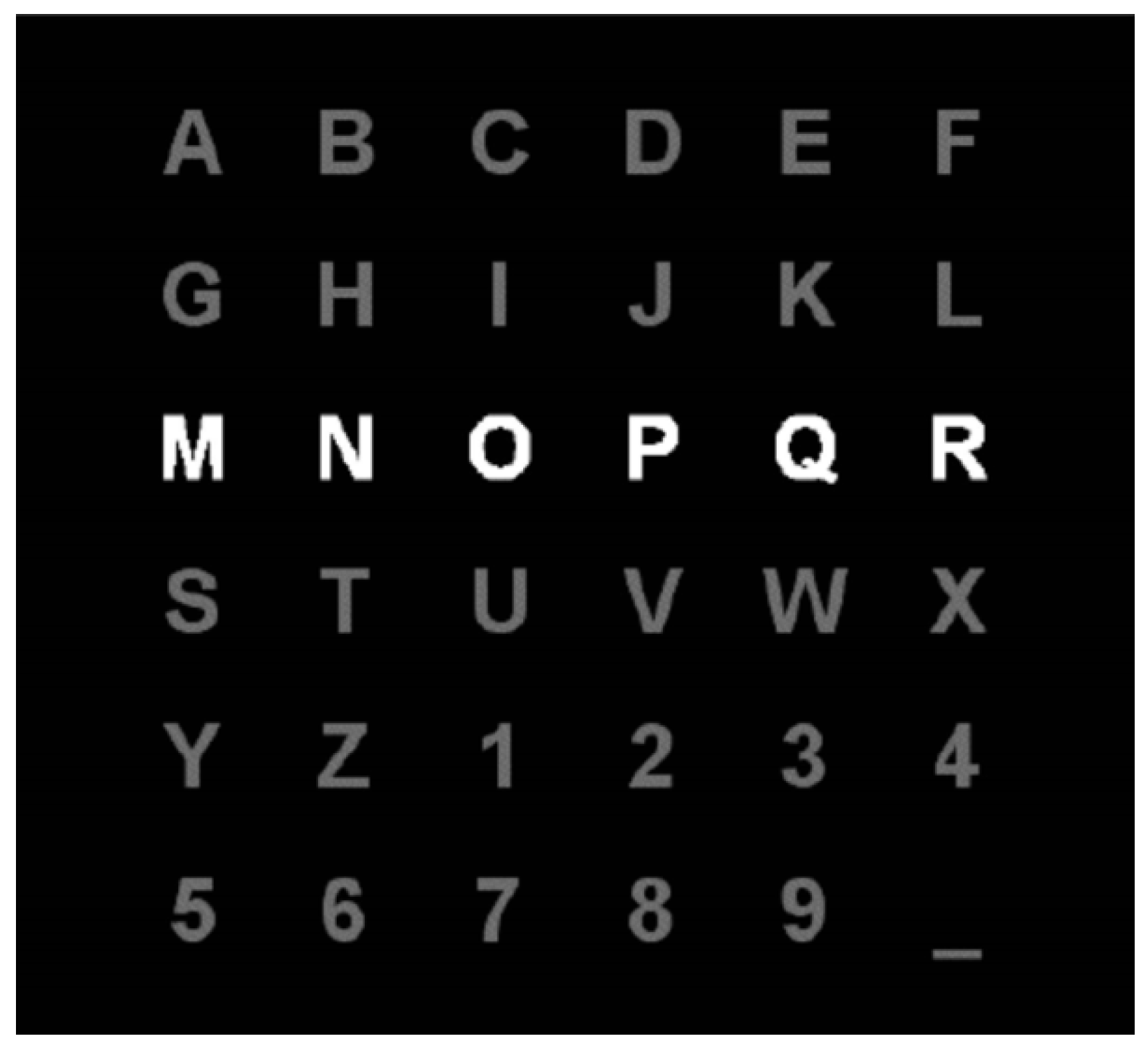

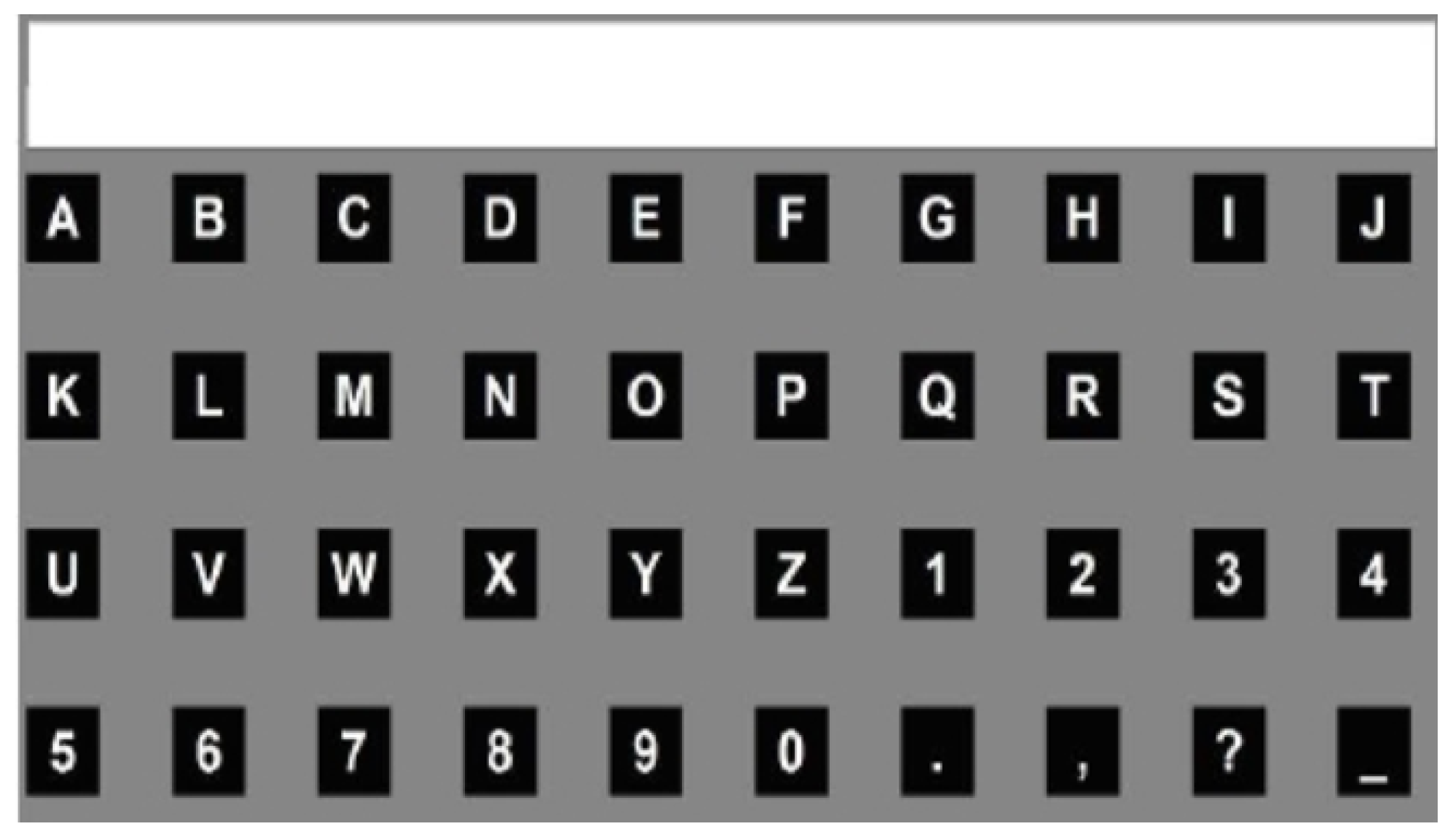

2.1.1. Dataset I

2.1.2. Dataset II

2.2. Methods

2.2.1. XDAWN Spatial Filter

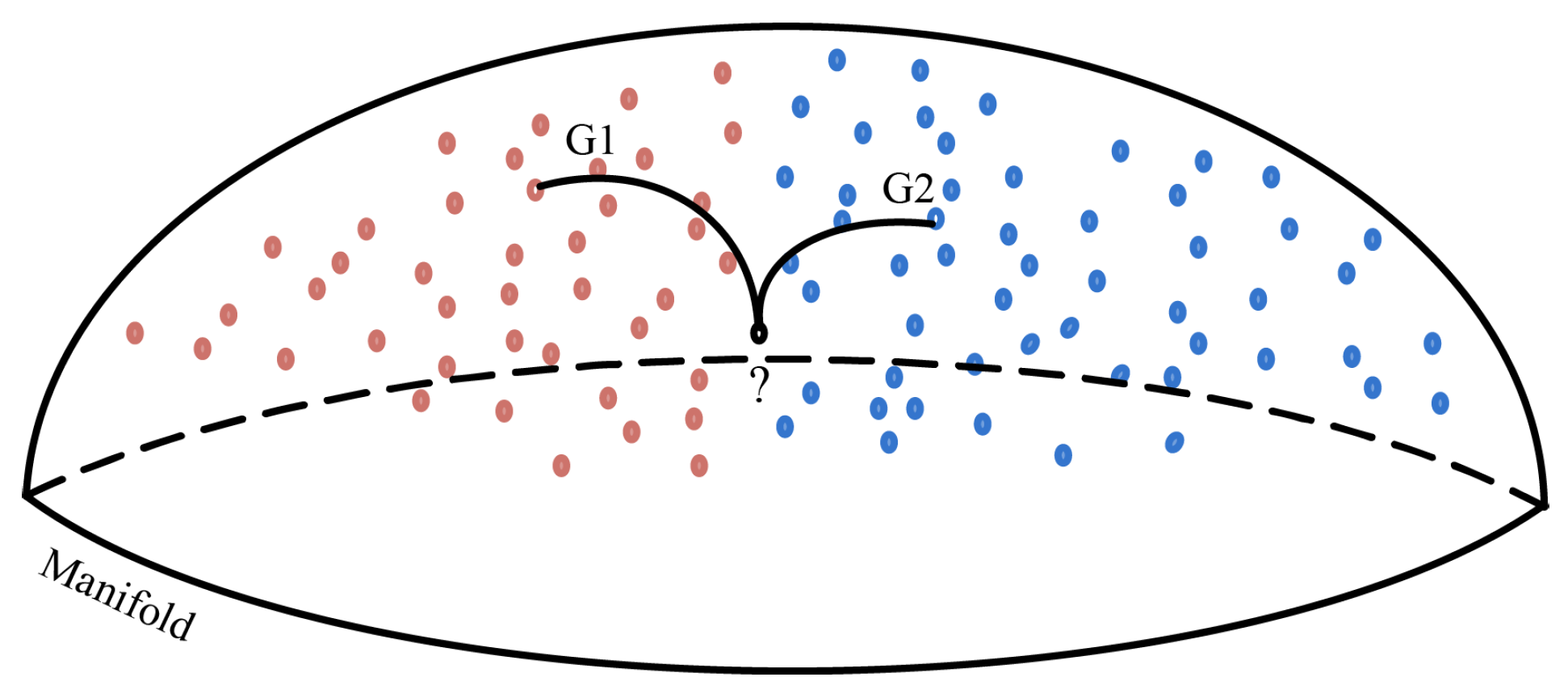

2.2.2. Riemannian Geometry Classifier

2.2.3. Affine Transformation of SPD Covariance Matrix

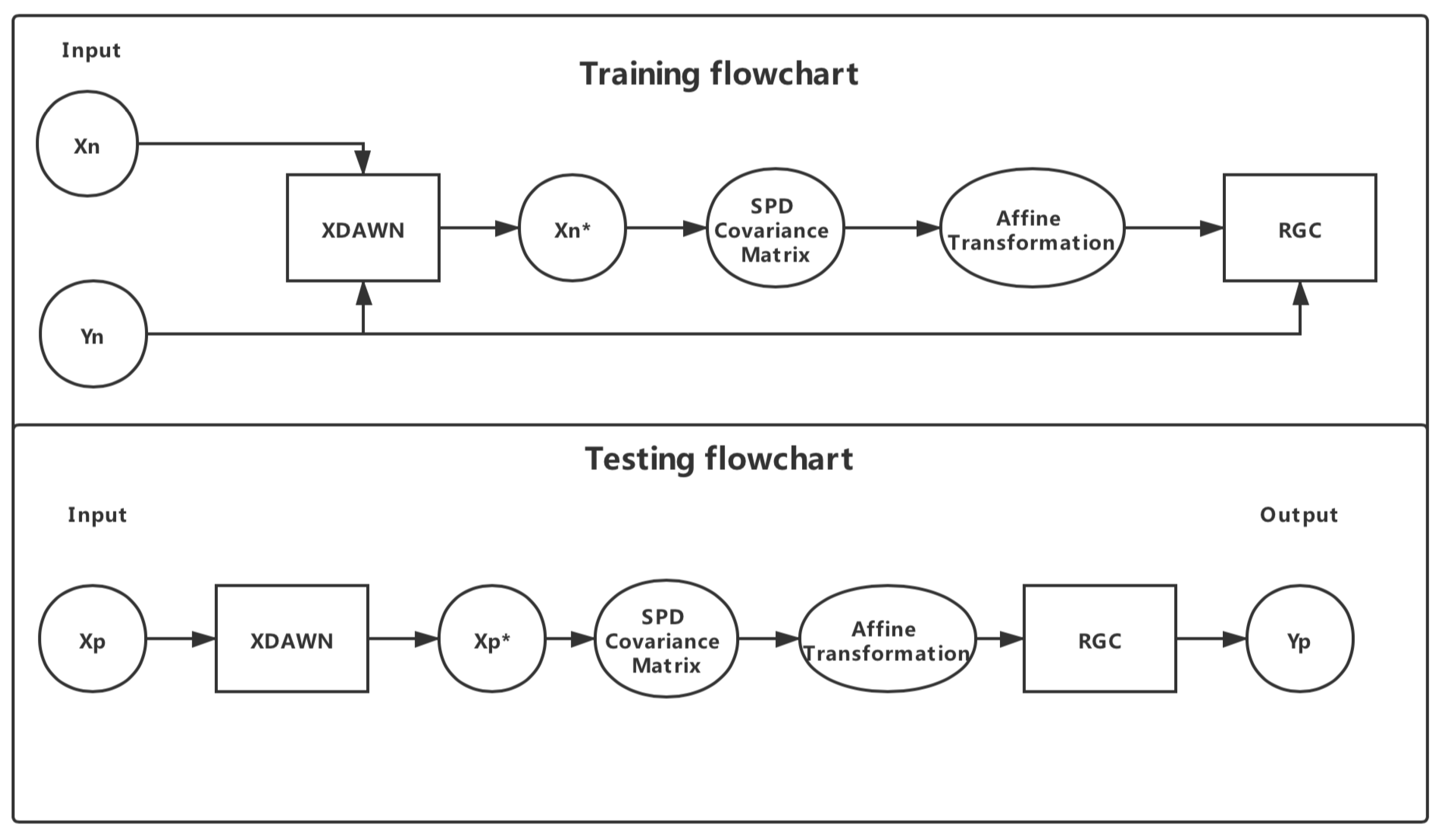

2.2.4. Algorithm of XDAWN + RGC

2.2.5. Data Preprocessing

2.3. Experiment

2.3.1. Experiment 1: One-to-One Transfer Learning

2.3.2. Experiment 2: All-to-One Transfer Learning

3. Results

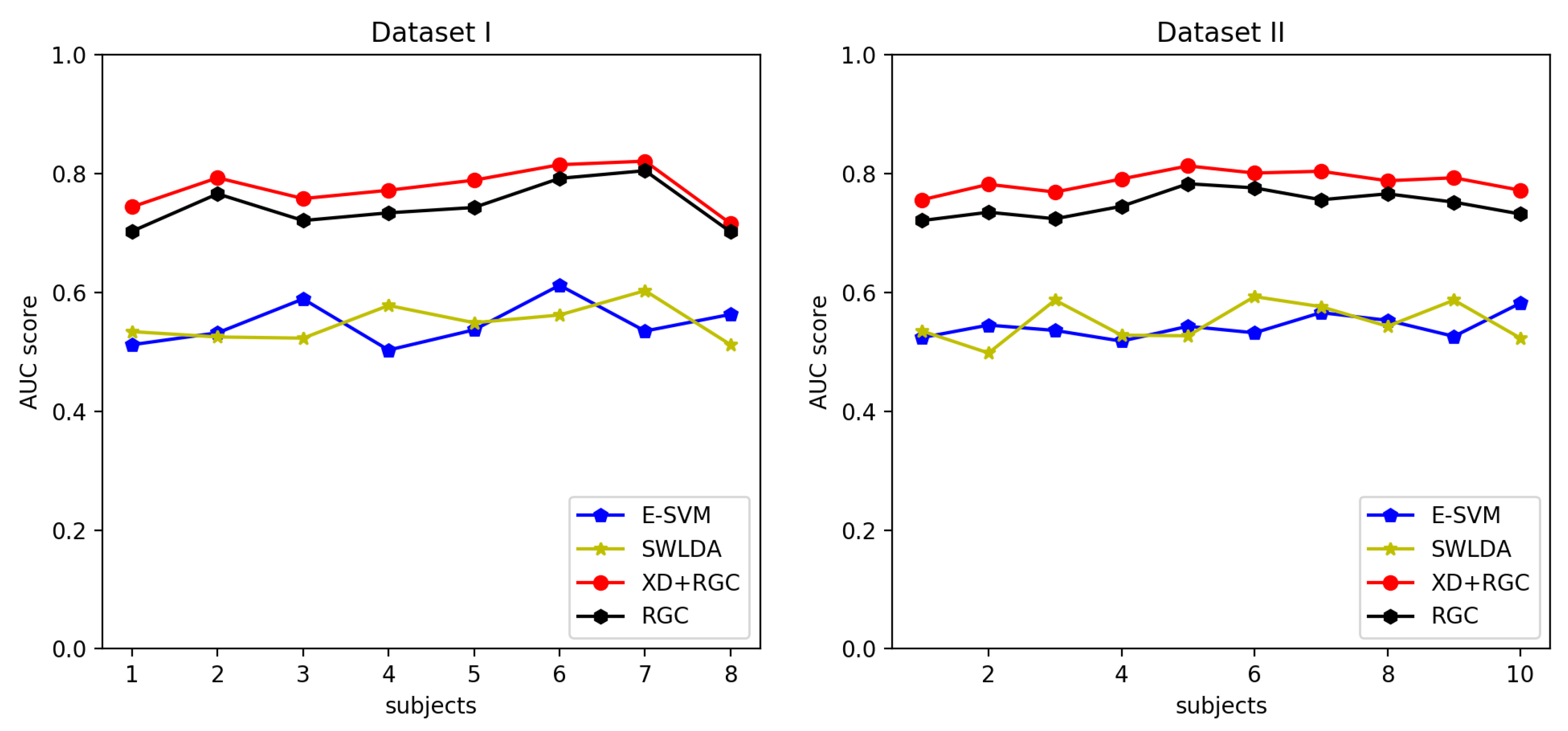

3.1. One-to-One Transfer Learning

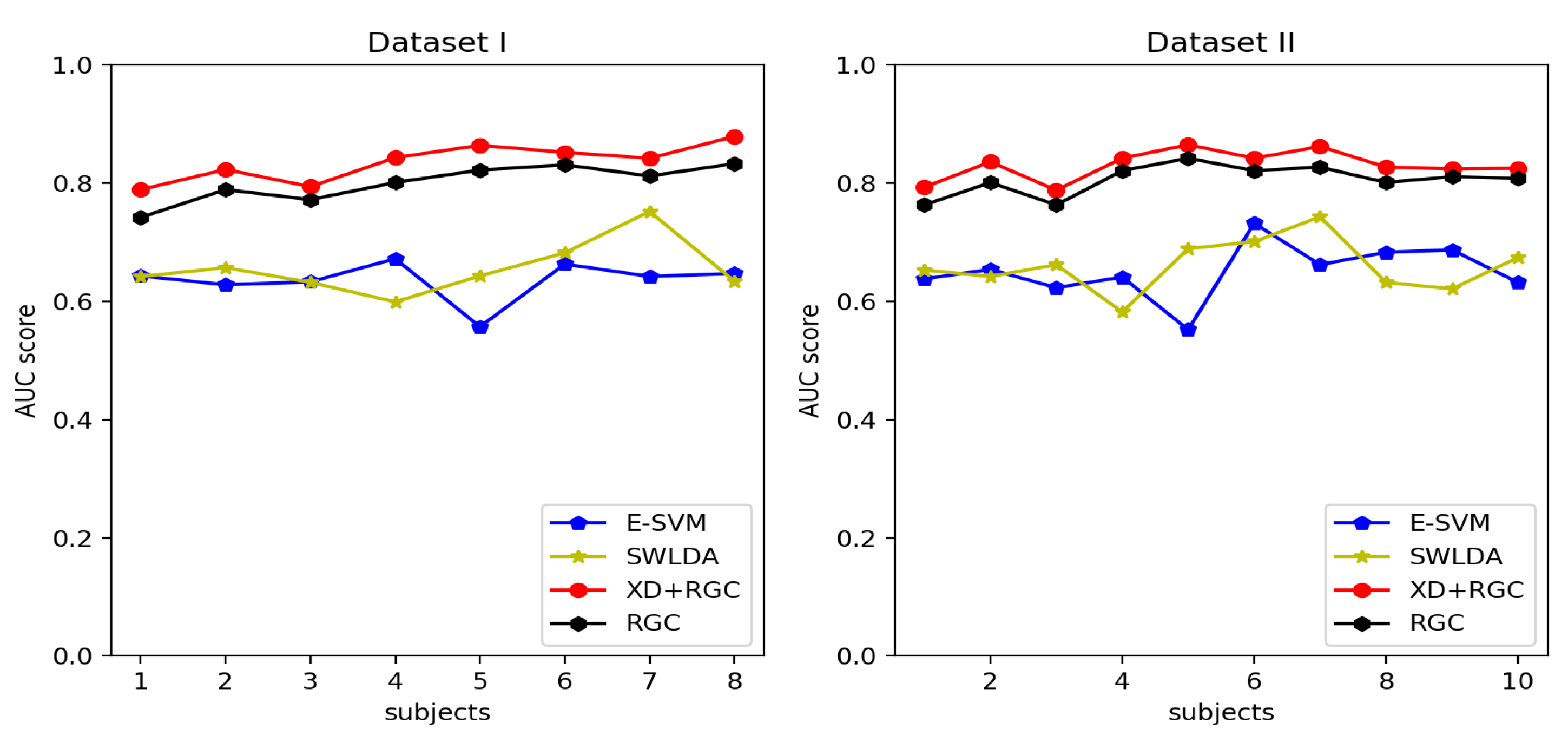

3.2. All-to-One Transfer Learning

4. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest


1. Hold out one subject's data for testing.

2. For each subject s in k:

3. Input the training data and labels of subject s.

4. Apply XDAWN spatial filtering to the training data.

5. Compute the SPD covariance matrix of each filtered trial.

6. Compute the Riemannian mean G of these covariance matrices, use G as the reference matrix, and apply the affine transformation with G to the covariance matrices.

7. Map the transformed matrices onto the Riemannian manifold and compute the Riemannian mean of each of the two classes, G1 and G2.

8. End for.

9. Input the test data.

10. Apply XDAWN filtering to the test data, compute its SPD covariance matrix, apply the affine transformation, and classify it with the RGC classifiers.

11. Output the class with the smallest Riemannian distance.
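Steps 4 and 5 above can be sketched in Python with NumPy and SciPy. This is a simplified XDAWN-style filter rather than the full xDAWN estimator, and not necessarily the authors' variant: it treats the spatial covariance of the target-class average response as "signal", the covariance pooled over all trials as "signal plus noise", and keeps the leading generalized eigenvectors. The function names, the default of four filters, the plain sample covariance used for step 5, and the small ridge `eps` that keeps each matrix strictly positive definite are all assumptions for illustration.

```python
import numpy as np
from scipy.linalg import eigh


def xdawn_filters(X, y, n_filters=4, target=1):
    """Simplified XDAWN-style spatial filters (hypothetical helper).

    X: EEG epochs, shape (n_trials, n_channels, n_times)
    y: class labels, shape (n_trials,); `target` marks P300 (target) trials
    Returns W with shape (n_channels, n_filters).
    """
    # Average evoked response of the target class, shape (n_channels, n_times)
    P = X[y == target].mean(axis=0)
    # Spatial covariance of the evoked response ("signal")
    C_signal = P @ P.T / P.shape[1]
    # Spatial covariance pooled over all trials ("signal + noise")
    C_total = np.mean([x @ x.T / x.shape[1] for x in X], axis=0)
    # Generalized eigenproblem C_signal w = lambda * C_total w. eigh returns
    # eigenvalues in ascending order, so reverse and keep the top n_filters.
    _, V = eigh(C_signal, C_total)
    return V[:, ::-1][:, :n_filters]


def filtered_covariances(X, W, eps=1e-6):
    """SPD covariance matrix of each spatially filtered trial (step 5 sketch)."""
    # Project every trial onto the filters: (n_trials, n_filters, n_times)
    Z = np.einsum("cf,nct->nft", W, X)
    # Remove each component's temporal mean before estimating the covariance
    Z = Z - Z.mean(axis=2, keepdims=True)
    C = np.einsum("nft,ngt->nfg", Z, Z) / Z.shape[2]
    # Small ridge so every matrix is strictly positive definite
    return C + eps * np.eye(W.shape[1])
```

For epochs `X` of shape (trials, channels, samples) and labels `y` with target trials marked 1, `filtered_covariances(X, xdawn_filters(X, y))` returns one 4-by-4 SPD matrix per trial, which is the input the Riemannian steps operate on.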

1. Hold out one subject's data for testing.

2. For each of the remaining k-1 subjects s:

3. Input the training data set and labels of subject s.

4. Apply XDAWN spatial filtering to the training data.

5. Compute the SPD covariance matrix M of the filtered data.

6. Compute the Riemannian mean G, use it as the reference matrix, and apply the affine transformation with G to M.

7. Project the transformed matrices onto the Riemannian manifold and compute the Riemannian mean of each of the two classes, G1 and G2.

8. End for.

9. Input the test data, apply XDAWN filtering, compute its SPD covariance matrix, apply the affine transformation, and classify it with the RGC classifiers.

10. Output the class that receives the most votes.

| Testing Subjects | S1 | S2 | S3 | S4 | S5 | S6 | S7 | S8 | Avg. |
|---|---|---|---|---|---|---|---|---|---|
| E-SVM | 0.512 | 0.532 | 0.589 | 0.503 | 0.537 | 0.612 | 0.535 | 0.563 | 0.547 |
| SWLDA | 0.534 | 0.525 | 0.523 | 0.578 | 0.549 | 0.562 | 0.603 | 0.512 | 0.548 |
| RGC | 0.703 | 0.766 | 0.721 | 0.734 | 0.743 | 0.792 | 0.805 | 0.702 | 0.746 |
| XD + RGC | 0.744 | 0.793 | 0.758 | 0.772 | 0.789 | 0.815 | 0.821 | 0.716 | 0.776 |

| Testing Subjects | S1 | S2 | S3 | S4 | S5 | S6 | S7 | S8 | S9 | S10 | Avg. |
|---|---|---|---|---|---|---|---|---|---|---|---|
| E-SVM | 0.524 | 0.545 | 0.536 | 0.518 | 0.543 | 0.532 | 0.566 | 0.553 | 0.526 | 0.582 | 0.542 |
| SWLDA | 0.535 | 0.521 | 0.587 | 0.528 | 0.527 | 0.593 | 0.576 | 0.543 | 0.587 | 0.523 | 0.552 |
| RGC | 0.721 | 0.735 | 0.724 | 0.745 | 0.783 | 0.776 | 0.756 | 0.766 | 0.752 | 0.732 | 0.749 |
| XD + RGC | 0.756 | 0.782 | 0.769 | 0.791 | 0.813 | 0.801 | 0.804 | 0.788 | 0.793 | 0.772 | 0.787 |

| Testing Subjects | S1 | S2 | S3 | S4 | S5 | S6 | S7 | S8 | Avg. |
|---|---|---|---|---|---|---|---|---|---|
| E-SVM | 0.643 | 0.628 | 0.633 | 0.672 | 0.557 | 0.663 | 0.642 | 0.647 | 0.636 |
| SWLDA | 0.642 | 0.657 | 0.632 | 0.599 | 0.643 | 0.682 | 0.752 | 0.634 | 0.655 |
| RGC | 0.742 | 0.789 | 0.772 | 0.801 | 0.822 | 0.831 | 0.812 | 0.833 | 0.800 |
| XD + RGC | 0.789 | 0.823 | 0.794 | 0.843 | 0.864 | 0.852 | 0.842 | 0.879 | 0.836 |

| Testing Subjects | S1 | S2 | S3 | S4 | S5 | S6 | S7 | S8 | S9 | S10 | Avg. |
|---|---|---|---|---|---|---|---|---|---|---|---|
| E-SVM | 0.638 | 0.654 | 0.623 | 0.641 | 0.552 | 0.732 | 0.662 | 0.683 | 0.687 | 0.632 | 0.650 |
| SWLDA | 0.653 | 0.642 | 0.662 | 0.582 | 0.689 | 0.701 | 0.743 | 0.632 | 0.621 | 0.674 | 0.660 |
| RGC | 0.763 | 0.801 | 0.763 | 0.821 | 0.842 | 0.821 | 0.827 | 0.801 | 0.811 | 0.808 | 0.806 |
| XD + RGC | 0.793 | 0.836 | 0.788 | 0.842 | 0.865 | 0.842 | 0.862 | 0.827 | 0.824 | 0.825 | 0.830 |
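The Avg. column of each results table is the mean of the per-subject scores. The sketch below copies the RGC and XD + RGC rows of the four tables above, in order of appearance, recomputes both averages, and prints the gain of XD + RGC over RGC; the data layout and the names `TABLES` and `averages` are illustrative choices.

```python
# RGC and XD + RGC rows of the four results tables, in order of appearance,
# together with the values printed in their Avg. column.
TABLES = [
    {"RGC": [0.703, 0.766, 0.721, 0.734, 0.743, 0.792, 0.805, 0.702],
     "XD + RGC": [0.744, 0.793, 0.758, 0.772, 0.789, 0.815, 0.821, 0.716],
     "reported": {"RGC": 0.746, "XD + RGC": 0.776}},
    {"RGC": [0.721, 0.735, 0.724, 0.745, 0.783, 0.776, 0.756, 0.766, 0.752, 0.732],
     "XD + RGC": [0.756, 0.782, 0.769, 0.791, 0.813, 0.801, 0.804, 0.788, 0.793, 0.772],
     "reported": {"RGC": 0.749, "XD + RGC": 0.787}},
    {"RGC": [0.742, 0.789, 0.772, 0.801, 0.822, 0.831, 0.812, 0.833],
     "XD + RGC": [0.789, 0.823, 0.794, 0.843, 0.864, 0.852, 0.842, 0.879],
     "reported": {"RGC": 0.800, "XD + RGC": 0.836}},
    {"RGC": [0.763, 0.801, 0.763, 0.821, 0.842, 0.821, 0.827, 0.801, 0.811, 0.808],
     "XD + RGC": [0.793, 0.836, 0.788, 0.842, 0.865, 0.842, 0.862, 0.827, 0.824, 0.825],
     "reported": {"RGC": 0.806, "XD + RGC": 0.830}},
]


def averages(table):
    """Recompute the mean per-subject score of each method."""
    return {m: sum(table[m]) / len(table[m]) for m in ("RGC", "XD + RGC")}


for i, table in enumerate(TABLES, start=1):
    avg = averages(table)
    gain = avg["XD + RGC"] - avg["RGC"]
    print(f"Table {i}: RGC {avg['RGC']:.4f}, XD + RGC {avg['XD + RGC']:.4f}, gain {gain:+.4f}")
```

Each recomputed mean agrees with the printed Avg. value to within rounding (0.0005), and XD + RGC averages higher than RGC in all four tables.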

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Li, F.; Xia, Y.; Wang, F.; Zhang, D.; Li, X.; He, F. Transfer Learning Algorithm of P300-EEG Signal Based on XDAWN Spatial Filter and Riemannian Geometry Classifier. Appl. Sci. 2020, 10, 1804. https://doi.org/10.3390/app10051804
