1. Introduction

Manipulation has become an increasingly important research topic in robotics. Most related work addresses the grasping of rigid bodies, an extensively studied area that is rich in theoretical analysis and in implementations using different robotic hands ([1,2,3,4,5,6,7,8]). Robotic grasping of deformable objects has also gained importance recently because of its potential applications in areas such as biomedical processing, the food processing industry, service robotics, and robotized surgery ([9,10,11,12,13,14]). Recent surveys of the literature on robotic grasping and manipulation of deformable objects can be found in [12,15].

Nevertheless, efficient and precise (i.e., deformation/force control) robotic grasping of 3D deformable objects remains an underdeveloped area within the robotics community due to the technical and methodological difficulties of the problem. In fact, this problem involves implementing two main steps with their own subproblems: determining the initial location of the grasp points and moving the hand + arm system towards them (i.e., pregrasping strategy), and closing the fingers of the robotic hand until a robust grasp is reached while taking into account the deformation of the object (i.e., grasping strategy).

In the case of the pregrasp strategies, two main problems arise: firstly, implementing a grasp quality measure to choose the most stable grasp configuration; secondly, applying the kinematic constraints of the hand + arm system so that the chosen grasp is reachable. Several approaches to quantifying grasp quality were developed in [1,16,17,18,19]. All of these approaches studied stable grasps and developed various stability criteria to find optimal grasps for rigid objects. Nevertheless, the development of algorithms that can efficiently synthesize grasps on 3D deformable objects is still a challenging research problem. Wakamatsu et al. [20] analyzed the stable grasping of deformable objects by extending the concept of force closure of rigid objects to deformable planar objects with bounded applied forces. Mira et al. [21] presented a grasp planner that can reproduce the actions of a human hand to determine the contact points. Their algorithm combines the position information of the hand with visual and tactile data in order to achieve a hand–object configuration. This method is applied to planar objects and considers neither the forces applied by the fingers nor the object deformation. Xu et al. [22] proposed a grasp quality metric for hollow plastic objects based on minimal work in order to combine wrench resistance and object deformation. Jorgensen et al. [23] presented a generic solution for pick-and-place operations of meat pieces with a vacuum gripper. This solution used a grasp quality metric that combines wrench resistance and object deformation. It was based on the computation of the work required to resist an external wrench, and it can only be applied to planar objects, without a direct relation between applied forces and deformation. In fact, none of these works can be applied to general 3D deformable objects; they are solutions for specific types of objects.

For the second step of the grasping problem (i.e., closing of the fingers), previous grasp synthesis methods are mainly based on the generation of force closure (FC) grasps ([24,25,26]). This means that the robotic hand is able to maintain the manipulated object inside the palm despite any external disturbance and evaluate the contact forces, including those due to friction. FC is generally used to implement the grasping of rigid objects with a low number of frictional contacts by taking into account geometric criteria of their contact surfaces. Nevertheless, traditional FC-based approaches cannot be applied to deformable objects due to the complexity of their interaction with the robotic hand’s fingers: the object deforms (i.e., its size and shape change) and the contact surfaces evolve dynamically while contact forces are applied by the fingers. New reactive methods based on the detection and avoidance of slippage have been developed in order to overcome this limitation in the grasping of deformable objects. For instance, Kaboli et al. [27] computed the tangential forces required for slippage avoidance and adjusted the fingers’ relative positions and grasping forces accordingly, taking into account an estimation of the weight of the grasped object. The main drawback of this approach is that the relationship between applied forces and object deformation is not considered. Zhao et al. [28] proposed a new slip detection sensor based on video processing in order to perceive multidimensional information, including absolute slip displacement, the deformation at the object surface, and force information. This method is only applied to 2D objects with transparent and reflective surfaces, such as pure glass. However, these types of materials are not common in deformable objects. In addition, the stress state of the gripper–object interface is not precisely analyzed when taking into account the properties of the object’s material.

In order to overcome all these limitations of reactive strategies, it is crucial to use a suitable contact model ([29,30,31,32]) that explains the coupling between the contact forces and the object deformations. A precise contact model can be exploited to provide a set of grasping forces and torques that can be applied to maintain equilibrium before and after the deformation [33]. Thereby, the grasp stability can be computed in real time while the contact surfaces evolve during deformation. This evolution of the object surface is obtained by sampling mechanical deformation models [34], mainly mass-spring systems ([35,36]), finite element methods (FEM) ([37,38]), and impulse-based methods ([39]). The mass-spring system is a fast and interactive physical model with a solid mathematical foundation and well-understood dynamics. This model provides a more realistic force/deformation behavior in the case of large deformations, while maintaining the capability of real-time response. FEM provides higher accuracy, but it requires more numerical computation, which makes it more appropriate for offline simulations. Therefore, this paper uses a deformation model based on nonlinear mass-spring elements distributed across a tetrahedral mesh: lumped masses are attached to the nodes and nonlinear springs represent the edges. The positions of the mesh nodes are tracked by solving a dynamic equation based on Newton’s second law. The main advantages of this deformation model are the dynamic prediction of the object’s deformations and its realistic behavior, which results from the nonlinearity of the object. More details about this object deformation model can be found in previous works [40]; it is used by the newly proposed grasp synthesis algorithm.

Several previous works have taken into account the deformation of soft objects while grasping them without integrating precise contact interaction models. Berenson et al. [41] presented a vision-based deformation controller for elastic planar objects without considering the grasp properties. This method takes into account just one contact point, so it cannot be used with a multifingered hand for grasping deformable objects. Similarly, Nadon et al. [42] presented a model-free algorithm based on visual information for automatically selecting the contact points between the fingers and the object’s contour in order to control its shape. However, contact forces and object stability were not considered. Grasping deformable planar objects using contact analysis was proposed in [43]. It was based on an algorithm to track the contact regions during the squeezing process and determine the stick/slip mode in the contact area. Finger displacements were considered rather than forces, and object stability was not guaranteed. Similarly, Sanchez et al. [44] developed a manipulation pipeline that is able to estimate and control the shape of a deformable object with tactile information, but the stability of the grasp is not considered. The authors of [45] proposed a new approach to lift a deformable 3D object, causing small deformations of the object by employing two rigid fingers with contact friction. This approach considered small deformations within the scope of linear elasticity during the grasp operation and did not deal with the nonlinear relationships between deformations and forces of 3D soft objects. Jorgensen et al. [46] presented a simulation framework for using machine learning techniques to grasp deformable objects. In this framework, robot motions were parameterized in terms of grasp points, robot trajectory, and robot speed. Hu et al. [47] presented a general approach to automatically handle soft objects using a dual-arm robot. An online Gaussian process regression model was used to estimate the deformation function of the manipulated objects, and low-dimensional features described the object’s configuration. Finally, recent works (such as [48,49]) integrate deep-learning techniques, 3D vision, and tactile information in order to fold/unfold and pick-and-place clothes. Although all these works consider the deformation of the object while manipulating it, an initial stable grasp is assumed to be known and nonlinear force–deformation relations are not computed during the handling process.

None of these works combines all the previously explained elements that are required for the implementation of a general and complete grasp planning pipeline for 3D deformable objects: a pregrasp strategy for reaching the object that takes into account grasp quality metrics for 3D deformable objects, and a grasp strategy for closing the fingers that guarantees a stable configuration of the object by considering its deformation and the applied contact forces. This paper extends our previous grasping strategy in [33] in order to implement this complete grasp planning pipeline. Firstly, we adapt the stability criteria developed for robotic grasp synthesis of rigid objects to 3D deformable objects (see Section 2 for a general description of the strategy and Section 3 for the new geometric criterion of the initial grasp). We also define a new pregrasp strategy (Section 4 and Section 5) in order to approach the deformable objects in an optimal way before manipulating them by using the previous grasping metrics. This grasping strategy is based on an interaction model developed by the authors in a previous work [40]. Section 6 indicates how this finger–object contact model is used to compute the contact forces to be applied by the fingers for a stable grasp of the deformable object and what their precise values are in the proposed experiments. We consider the problem of grasping and manipulating 3D isotropic deformable objects with a 3-fingered Barrett hand. This hand is installed as the end-effector of a 6-DOF Adept Viper S1700D robotic arm. Finally, a complete grasp planning strategy combining the pregrasp and grasp synthesis strategies of the hand + arm system is developed and tested with real objects (Section 7).

2. General Description of the Grasp Planning Pipeline

As indicated in the introduction section, by using our previously developed contact model [33], we can handle highly deformable objects and give precise estimations of the contact forces generated while deforming them. Those precise estimations guarantee the static equilibrium of the object by considering new grasp metrics (Section 3) and optimizing the pregrasp configuration of the robotic hand around the object (Section 4 and Section 5).

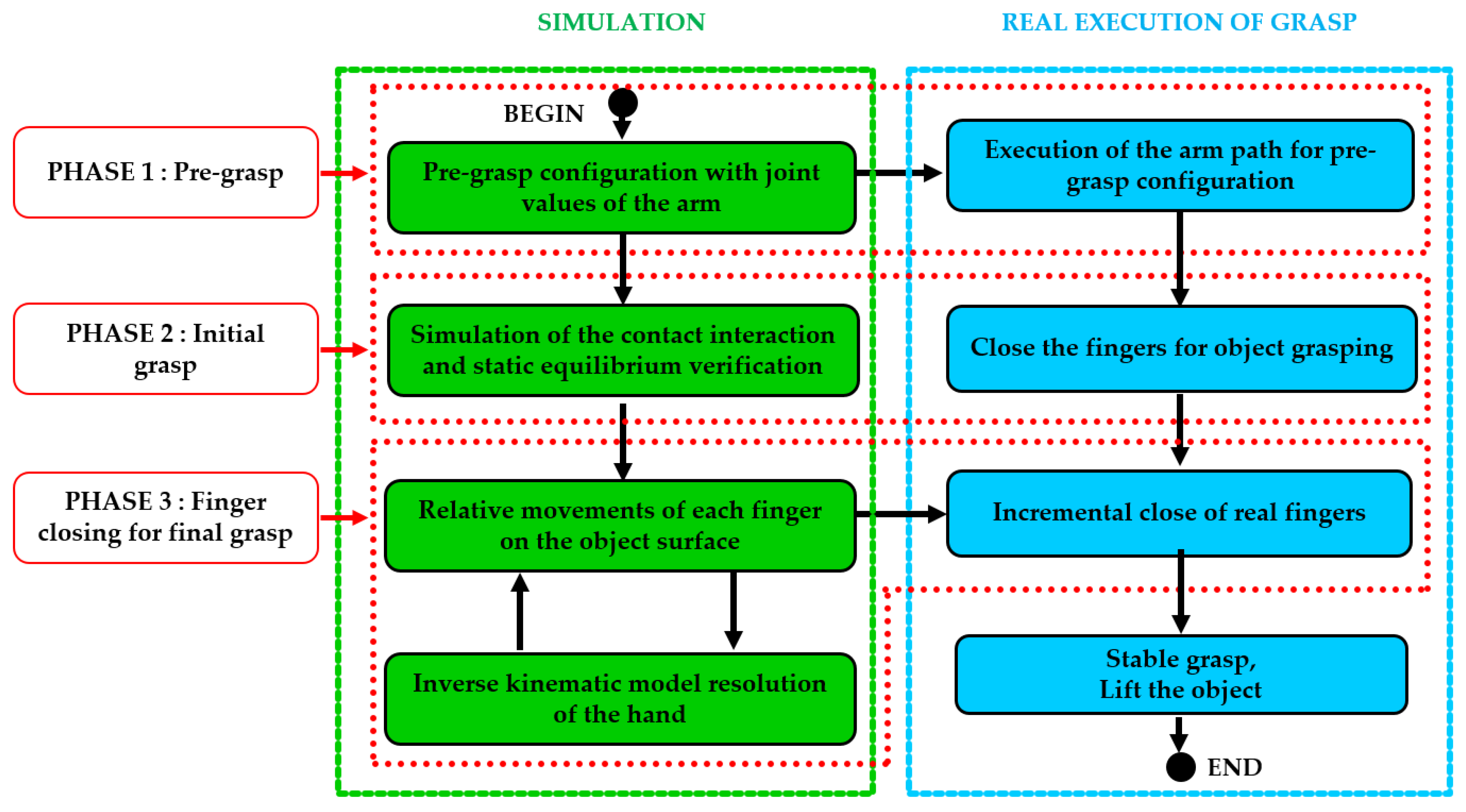

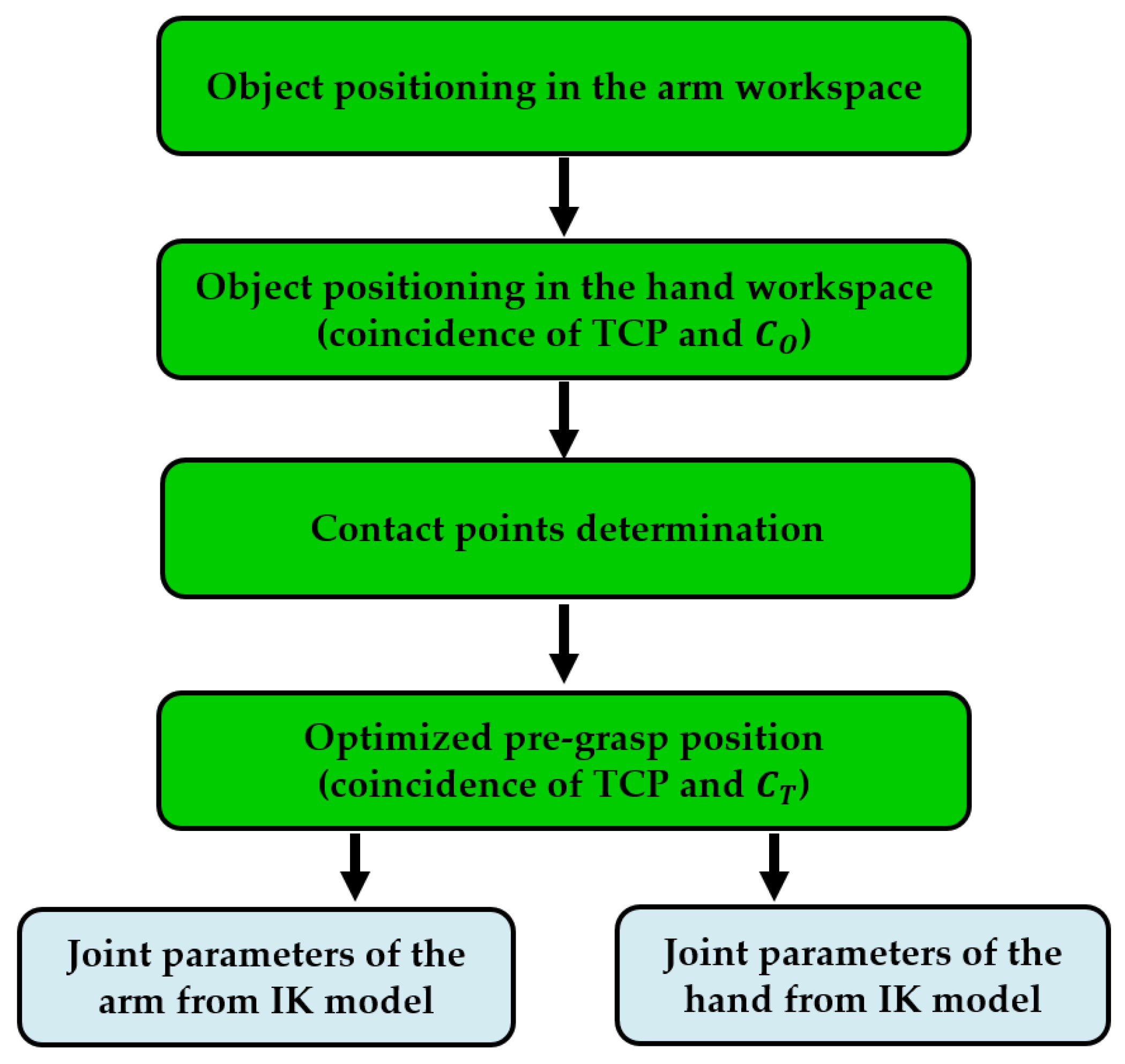

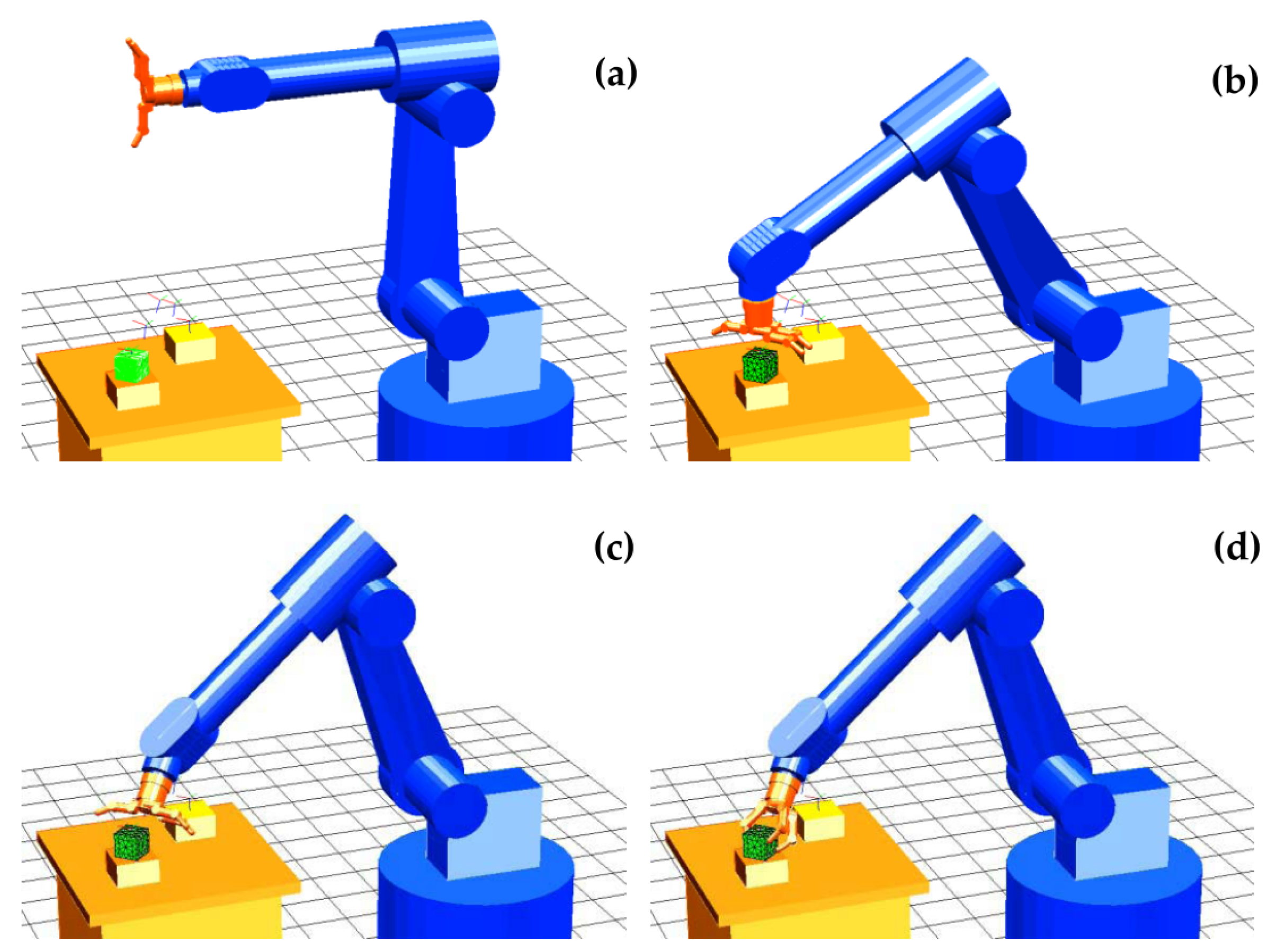

The flowchart in Figure 1 shows all the steps that are required in order to compute and execute a robust grasp (i.e., the configuration of fingers over the surface of the object) that guarantees the stability of the object with our grasp planning pipeline.

In order to execute the computed grasp, the first step determines the pregrasp configuration. This pregrasp step is divided into two phases. The first phase determines the configuration of the robotic arm that brings the hand close to the object so that the fingers of the hand can reach the surface of the object (Section 5). The second phase determines the appropriate initial configuration of the fingers of the hand to grasp the object based on a new geometric criterion (Section 3 and Section 4). The joint parameters of the arm and the hand for executing both phases are obtained by solving their inverse kinematics (IK).

Once the pregrasp step is performed, the robot moves next to the object and the fingers reach the initial grasp. No significant deformation is expected when the fingers come into contact with the object, since only a very small contact force is applied at first so that the grasp location does not change. As soon as this initial grasp is executed precisely, the third step of the algorithm is activated and an iterative closing of the fingers begins, based on the simulation of the contact interaction model (Section 6). At each iteration of the simulation, the deformations of the object are updated and the generated contact forces are computed. The computed contact forces are used to evaluate the static equilibrium of the object + fingers system. The iterative process is repeated in simulation until the static equilibrium is reached (see all these steps in simulation on the left side of the flowchart in Figure 1).

Upon completion of the simulation process, the real handling and manipulation of the object can be performed by executing the contact forces obtained from simulation (see steps on the right side of the flowchart). Firstly, the object is placed in the workspace of the robot with the same relative configuration as at the beginning of the simulation. Then, the robotic arm moves towards the pregrasp configuration (i.e., phase 1 in reality) and the fingers of the hand are moved towards the initial contact points by position control (i.e., phase 2 in reality). At this stage (beginning of phase 3 in reality), the fingers are closed by force control and progressively squeeze the object until the contact forces (which are measured by contact sensors installed inside each finger of the Barrett hand) become equal to the contact forces computed at the simulation step. These contact forces guarantee the equilibrium of the object–hand system (as validated by the simulation). Thereby, the object can be lifted up from the table without any risk of sliding and can be robustly manipulated. In the next sections, all these steps are described in detail and are validated with real pick-and-place experiments of deformable objects by a hand + arm robot system (Section 7).
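The force-controlled closing of phase 3 can be pictured as a simple loop. The following is only a minimal sketch and not the authors' controller: `read_forces` and `close_step` are hypothetical callables standing in for the force sensing and the finger commands of the hand, `FakeHand` is a toy stand-in used to exercise the loop, and the targets play the role of the contact forces obtained from the simulation.

```python
def close_until_target(read_forces, close_step, targets, max_steps=1000):
    """Close each finger step by step until its measured contact force
    reaches the target force computed in simulation.

    read_forces: hypothetical callable returning one force norm per finger.
    close_step:  hypothetical callable closing finger i by one increment.
    Returns the number of iterations used, or None if max_steps is exceeded.
    """
    for step in range(max_steps):
        forces = read_forces()
        # Fingers whose measured force is still below the simulated target.
        pending = [i for i, (f, t) in enumerate(zip(forces, targets)) if f < t]
        if not pending:
            return step
        for i in pending:
            close_step(i)
    return None


class FakeHand:
    """Toy stand-in for a hand: force grows linearly with closing steps."""

    def __init__(self, gains):
        self.gains = gains
        self.steps = [0] * len(gains)

    def read_forces(self):
        return [g * s for g, s in zip(self.gains, self.steps)]

    def close_step(self, i):
        self.steps[i] += 1
```

Each finger stops independently once its own target is reached, which mirrors the per-finger force thresholds used later in the experiments; a real controller would also need limits on joint travel and on force overshoot.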

3. Synthesis of the Initial Grasp Configuration

In this section, we address the characterization of the object stability for a three-fingered grasp. This implies determining a force closure configuration based on the choice of three contact points from the set of all the points representing the outer 3D surface of the object. The following assumptions are considered in this procedure:

The use of three fingers for the grasp operation modeled as hemispheres with radius R.

The first contacts between the fingers and the object are point contacts.

The outer surface of the object is represented by a set of points, each described by its position vector measured with respect to a reference frame located at the center of mass of the object.

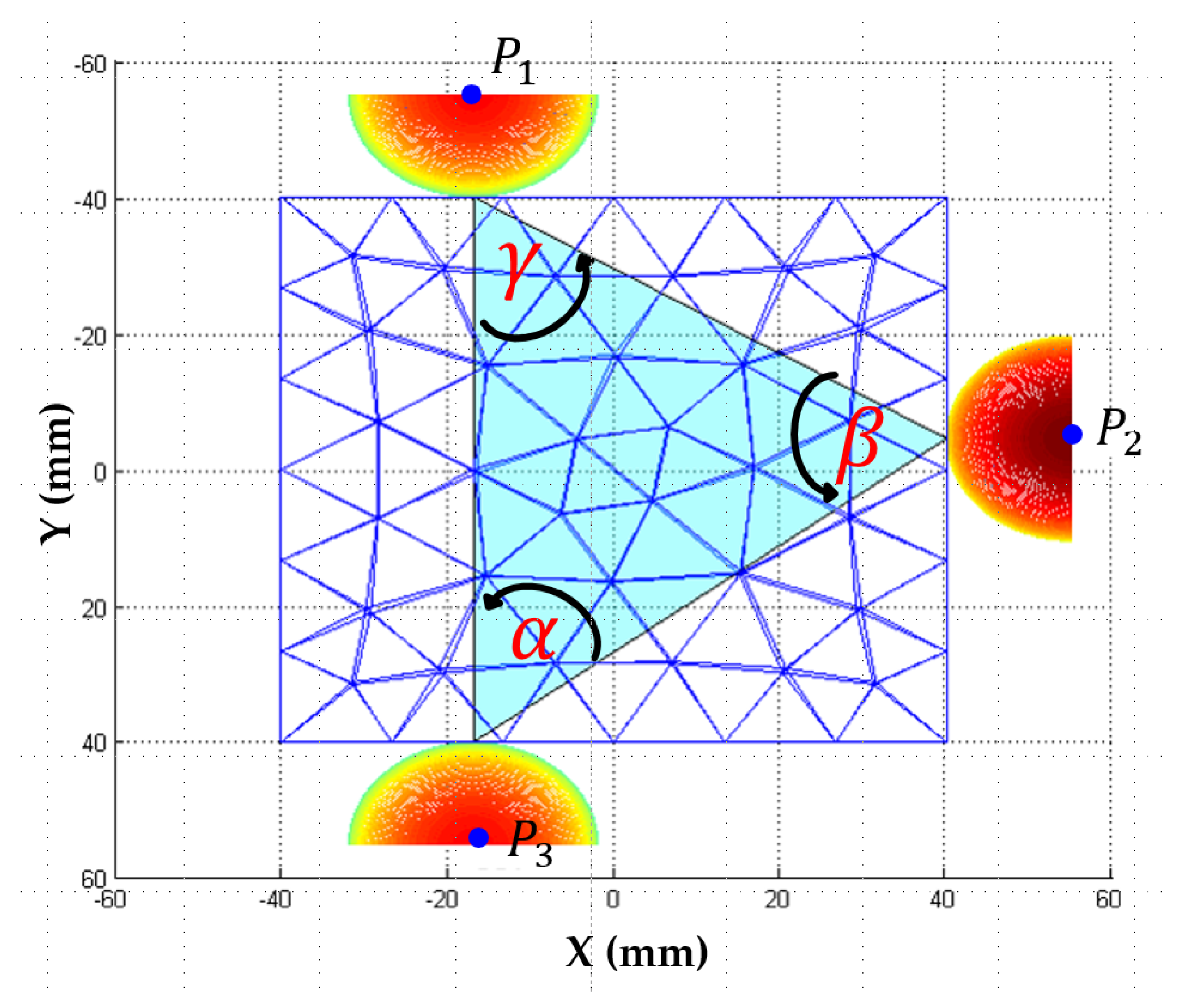

In fact, a 3-fingered grasp is more reliable in terms of stability, slip avoidance, and balance of forces when it converges towards an ideal equilateral grasp [17]. Thus, each three-fingered grasp can be characterized by a value that represents its similarity to an equilateral triangle. We suggest an algorithm based on geometric criteria to find this equilateral grasp. The algorithm first determines the set of all possible grasping triangles by scanning the points belonging to the contact surface. Then, using the angle-based criterion presented by Roa [17], it compares the angle values (alpha, beta, and lambda) of these triangles with 60° (i.e., the angle that characterizes an equilateral triangle) in order to choose the configuration closest to an equilateral triangle (see Figure 2).

For a target angle value of 60°, the criterion takes its minimum possible value of 0 for a perfect equilateral triangle, 2 for the most unfavorable case (the triangle degenerates into a segment), and 1 for an intermediate state.

For evaluating the angles of the triangle, we use a margin of error defined by the interval [0, 0.3]. Depending on the density of the points of the contact surface

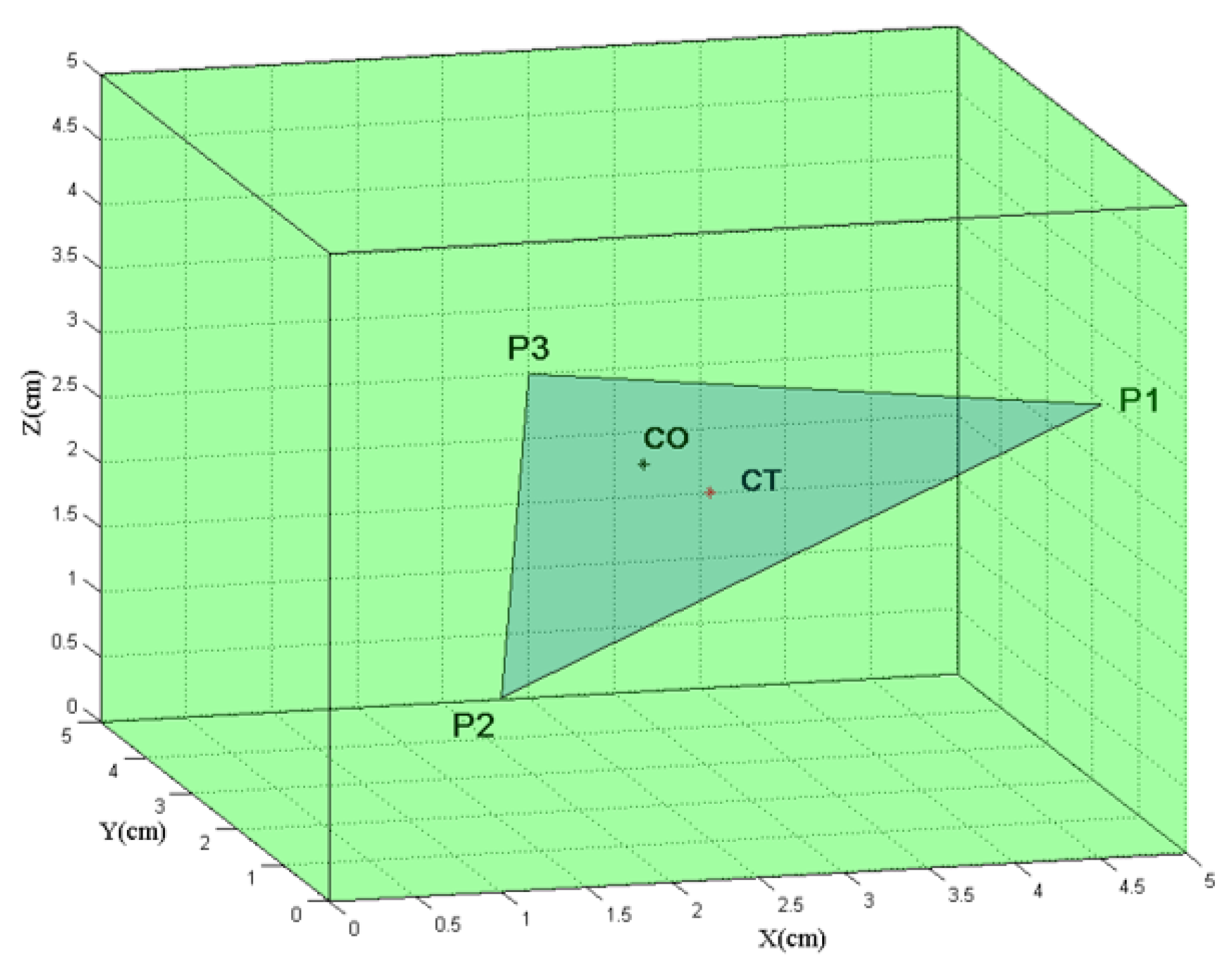

, this algorithm can give several grasp configurations. Finally, a second criterion, referred to as

, is used in order to choose one between them. It measures the distance between the center of the mass of the object

and the center of the grasping triangle

(

Figure 3), defined as

This criterion was developed to obtain stable grasps with respect to the torques generated by gravitational and inertial forces [17]. The complete grasp synthesis algorithm based on these two criteria is represented in the diagram “Algorithm 1”:

| Algorithm 1: Synthesis of the 3 contact points for the initial grasp configuration |
| --- |
| Input: points on the contact surfaces with their 3D coordinates |
| 1. Calculation of the set of grasp triangles defined from the surface points. |
| 2. Calculation of the values of the angles of the grasp triangles of this set. |
| 3. Choice of the grasp triangles closest to an equilateral triangle by applying the angle criterion; these triangles form the candidate set. |
| 4. Calculation of the barycenter of each grasp triangle of the candidate set. |
| 5. Calculation of the distance between the center of mass of the object and the centers of the triangles of the candidate set by applying the distance criterion. |
| 6. Choice of the triangle that minimizes this distance. |
| Output: 3 initial contact points of the grasp configuration |
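The steps of Algorithm 1 can be illustrated with a brute-force sketch. Several choices below are assumptions made only for illustration: the angle criterion is written as the sum of absolute deviations from 60° divided by 120°, a normalization chosen because it reproduces the stated values (0 for an equilateral triangle, 2 for a triangle degenerated into a segment); the function names are hypothetical; and the exhaustive enumeration of triples is cubic in the number of surface points, so a real implementation would likely subsample or prune.

```python
import itertools
import math


def interior_angles(p1, p2, p3):
    """Return the three interior angles (degrees) of triangle p1-p2-p3."""
    def angle_at(a, b, c):
        # Angle at vertex a between the edges a->b and a->c.
        u = [b[i] - a[i] for i in range(3)]
        v = [c[i] - a[i] for i in range(3)]
        nu = math.sqrt(sum(x * x for x in u))
        nv = math.sqrt(sum(x * x for x in v))
        if nu == 0.0 or nv == 0.0:
            return 0.0
        cos_t = sum(x * y for x, y in zip(u, v)) / (nu * nv)
        return math.degrees(math.acos(max(-1.0, min(1.0, cos_t))))
    return angle_at(p1, p2, p3), angle_at(p2, p3, p1), angle_at(p3, p1, p2)


def angle_score(p1, p2, p3, target=60.0):
    """Assumed angle criterion: 0 for equilateral, 2 for collinear points."""
    deviations = sum(abs(a - target) for a in interior_angles(p1, p2, p3))
    return deviations / (2.0 * target)


def centroid_distance(p1, p2, p3, com):
    """Distance between the triangle barycenter and the center of mass."""
    centroid = [(p1[i] + p2[i] + p3[i]) / 3.0 for i in range(3)]
    return math.dist(centroid, com)


def synthesize_grasp(points, com=(0.0, 0.0, 0.0), tol=0.3):
    """Keep triangles whose angle score lies in [0, tol], then return the
    one whose barycenter is closest to the center of mass (or None)."""
    candidates = [t for t in itertools.combinations(points, 3)
                  if angle_score(*t) <= tol]
    if not candidates:
        return None
    return min(candidates, key=lambda t: centroid_distance(*t, com))
```

The default `com` at the origin follows the assumption that surface points are expressed in a frame located at the center of mass of the object.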

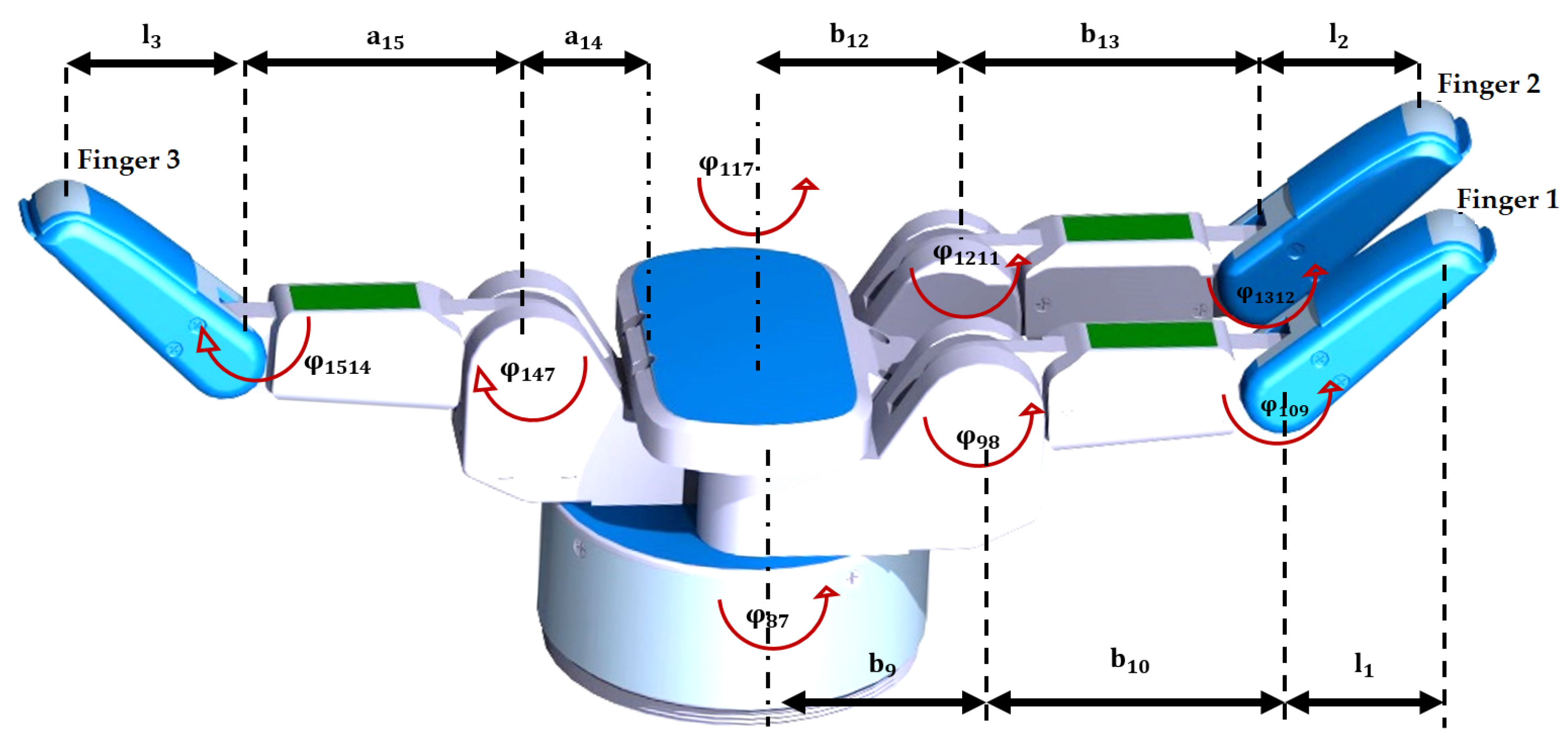

4. Pregrasp Strategy for the Robotic Hand: Placement of the Fingers

In this section, we present the pregrasp strategy for reaching the three contact points of the initial grasp computed in Section 3 with a three-fingered robotic hand (e.g., the Barrett hand [50] in our experiments). In order to describe the kinematic model of the Barrett hand, we establish the joint frames of Figure 4. The movement of the fingers of the Barrett hand is controlled by defining 4 encoder values that are connected to the joint values of the fingers (Figure 4) by the coupling relationships of Equation (3). Each encoder value is bounded: the first three ranges are the ranges of variation of the encoders of the motors for closing/opening the three fingers, and the fourth is the range of variation of the encoder of motor 4, which separates fingers 1 and 2 around the palm.

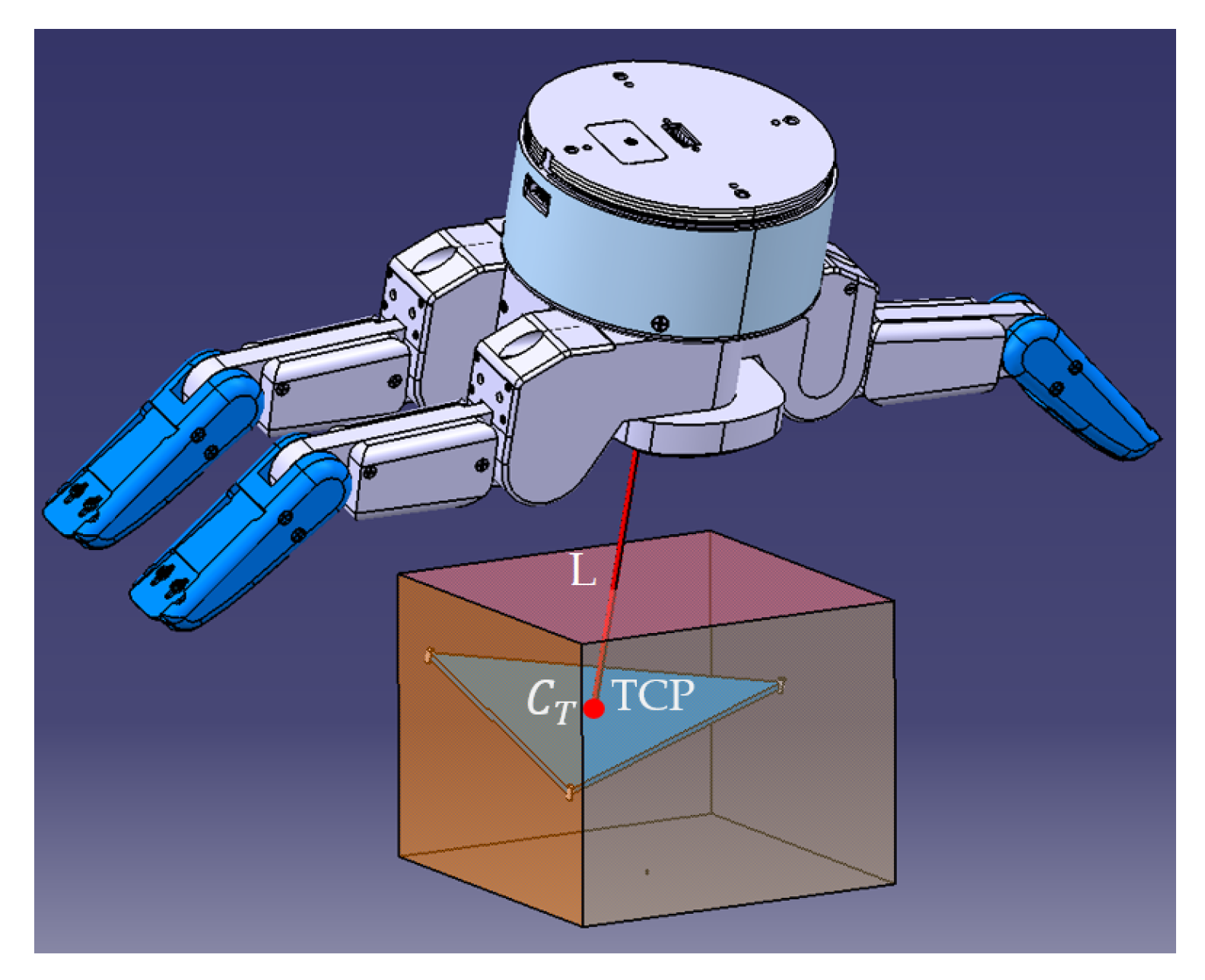

The pregrasp strategy for the three-fingered robotic hand involves two steps: the first one aligns the orientation of the hand with the initial grasping triangle computed in Section 3; the second one adjusts this initial estimation of the grasp by taking into consideration the kinematic constraints of the hand. The first step consists of orienting the hand so that the TCP (Tool Center Point, defined along the axis perpendicular to the palm of the hand) coincides with the grasp triangle center (as shown in Figure 5). The second step involves the resolution of the IK of the fingers in order to estimate the joint values needed to reach the three grasp points of the initial grasp triangle. If no IK solution is found, the length of the TCP line is changed and the IK is solved again. When an IK solution is found, the motor commands are computed in order to attain these joint values, by applying the inverse expression of (3). The implemented algorithm is shown in the diagram “Algorithm 2”. In the context of this iterative solution search, we consider that the solution is validated when the joint angle difference for each of the fingers is smaller than 2 degrees.

| Algorithm 2: Pregrasp strategy for the robotic hand |
| --- |
| Input: grasp triangle computed by Algorithm 1 |
| 1. Reorientation of the hand in the grasp plane and coincidence of the TCP with the center of the grasp triangle. |
| 2. Calculation of the IK and definition of the finger joint values. |
| 3. Search for an IK solution for the three grasp points by successive iteration over the length L. |
| 4. Reorientation of the hand around the TCP and adjustment of the distance L to define a solution. |
| Output: the finger joint values and the 6 components (position + orientation) of the tool frame defining the hand’s TCP |
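The iterative search over the length L can be sketched with a deliberately simplified finger model. The code below is a toy illustration and not the Barrett hand kinematics: each finger is assumed to be an independent planar two-link chain whose base sits at a hypothetical radial offset `base_offset` from the TCP axis, the joint coupling and the spread motor are ignored, and all names and link lengths are illustrative. It only shows the structure of the loop: try a palm distance, solve the IK of the three fingers, and move to the next distance when any finger has no solution.

```python
import math


def two_link_ik(x, y, l1, l2):
    """Analytic IK of a planar two-link chain.

    Returns joint angles (q1, q2) in radians, or None if (x, y) is
    outside the reachable annulus of the chain.
    """
    d2 = x * x + y * y
    c2 = (d2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(c2) > 1.0:
        return None
    q2 = math.acos(c2)
    q1 = math.atan2(y, x) - math.atan2(l2 * math.sin(q2),
                                       l1 + l2 * math.cos(q2))
    return q1, q2


def two_link_fk(q1, q2, l1, l2):
    """Forward kinematics of the same chain, used to verify a solution."""
    return (l1 * math.cos(q1) + l2 * math.cos(q1 + q2),
            l1 * math.sin(q1) + l2 * math.sin(q1 + q2))


def search_palm_distance(radii, base_offset, l1, l2, candidate_lengths):
    """Return (L, joint solutions) for the first palm-to-triangle distance L
    at which every finger reaches its grasp point, or None.

    radii: distance of each grasp point from the TCP axis, which passes
    through the center of the grasp triangle.
    """
    for length in candidate_lengths:
        solutions = [two_link_ik(length, r - base_offset, l1, l2)
                     for r in radii]
        if all(s is not None for s in solutions):
            return length, solutions
    return None
```

For example, with link lengths of 0.07 m and 0.056 m and grasp points 0.04 m from the axis, candidate distances `[0.20, 0.15, 0.10, 0.05]` are rejected until L = 0.10 m, the first value for which all three chains have a solution.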

5. Pregrasp Strategy for the Hand + Arm System: Reaching Trajectory by the Arm

After establishing the initial grasp points of the fingers (Section 3) and the corresponding pregrasp configuration of the hand around the object (Section 4), we should execute the latter by moving the hand with the robotic arm that carries it.

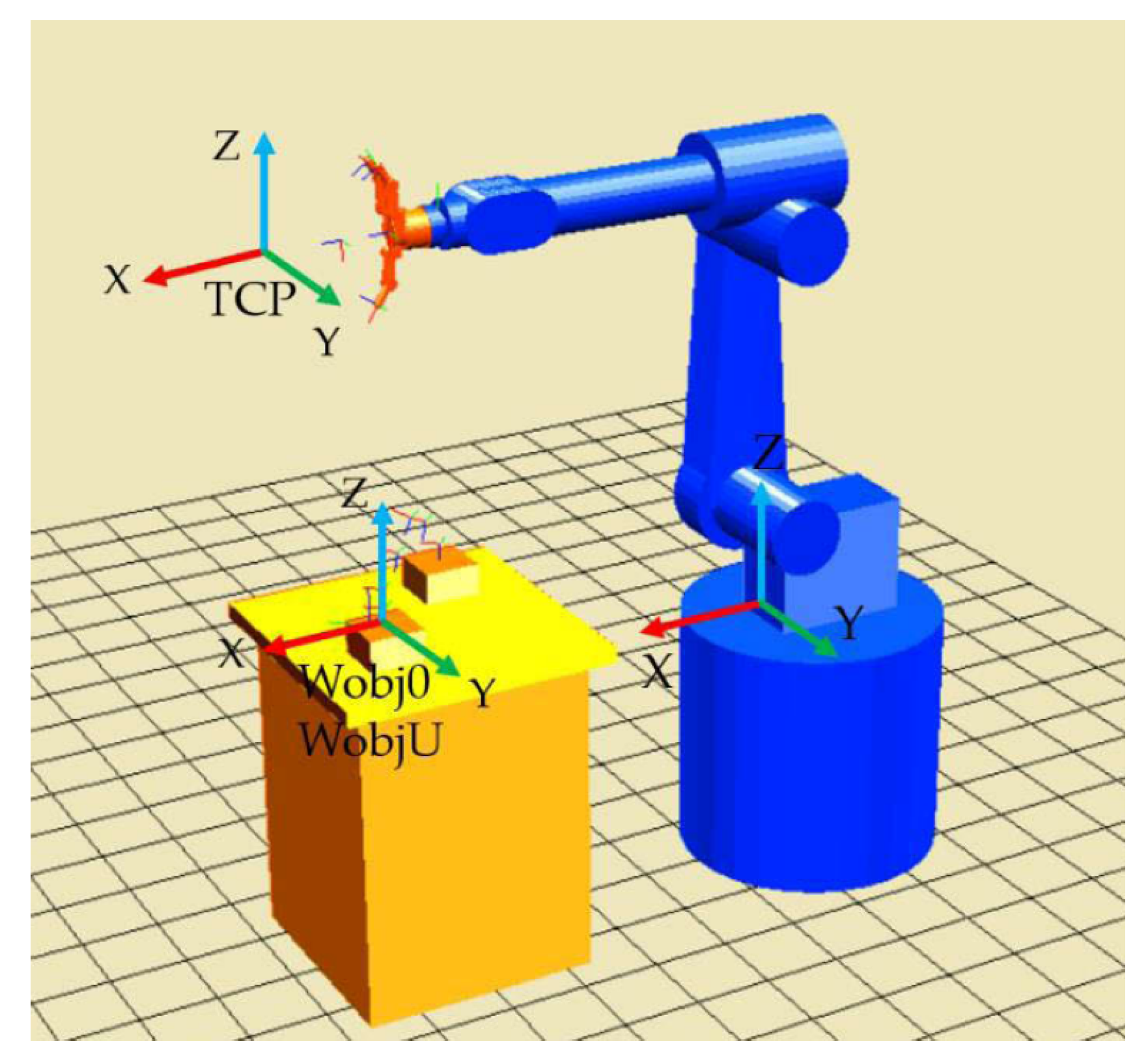

Figure 6 shows the flowchart of the pregrasp strategy for the hand + arm system that uses the IK of the arm and the hand for achieving this initial grasp. For implementing this planning strategy, we have chosen a 6-DOF robotic arm (e.g., a Viper S1700D for our real experiments) so that the hand can attain any pose (position + orientation) in the space around the object. Initially, the object is resting on a table, modeled by a plane parallel to the XY plane of the arm base.

For performing this pregrasp strategy, we should express the object pose (position in mm and orientation in ZYZ Euler angles) from its original object frame (object mark) in the user frame (user mark), which is known by the arm (see Figure 7). The TCP pose is also first represented in a frame in which relative movements between the object and the hand can be easily defined. Later, it is transformed into the arm frame so that the final movements of the arm can be computed.
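The frame changes described above amount to composing homogeneous transforms. The sketch below shows one way to build a transform from a position and ZYZ Euler angles and to chain two of them. The intrinsic convention R = Rz(phi) · Ry(theta) · Rz(psi) and the frame names in the usage note are assumptions for illustration; the controller's actual convention should be checked before reusing this.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def rot_z(angle):
    c, s = math.cos(angle), math.sin(angle)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def rot_y(angle):
    c, s = math.cos(angle), math.sin(angle)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def zyz_to_rotation(phi, theta, psi):
    """Rotation matrix for ZYZ Euler angles (radians), assumed intrinsic."""
    return matmul(matmul(rot_z(phi), rot_y(theta)), rot_z(psi))


def make_transform(rotation, position):
    """Build a 4x4 homogeneous transform from a 3x3 rotation and a position."""
    rows = [list(rotation[i]) + [position[i]] for i in range(3)]
    return rows + [[0.0, 0.0, 0.0, 1.0]]
```

With a hypothetical `T_arm_user` (user frame seen from the arm base) and `T_user_obj` (object frame seen from the user frame), the object pose in the arm frame is `matmul(T_arm_user, T_user_obj)`; the same composition applies to the TCP pose.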

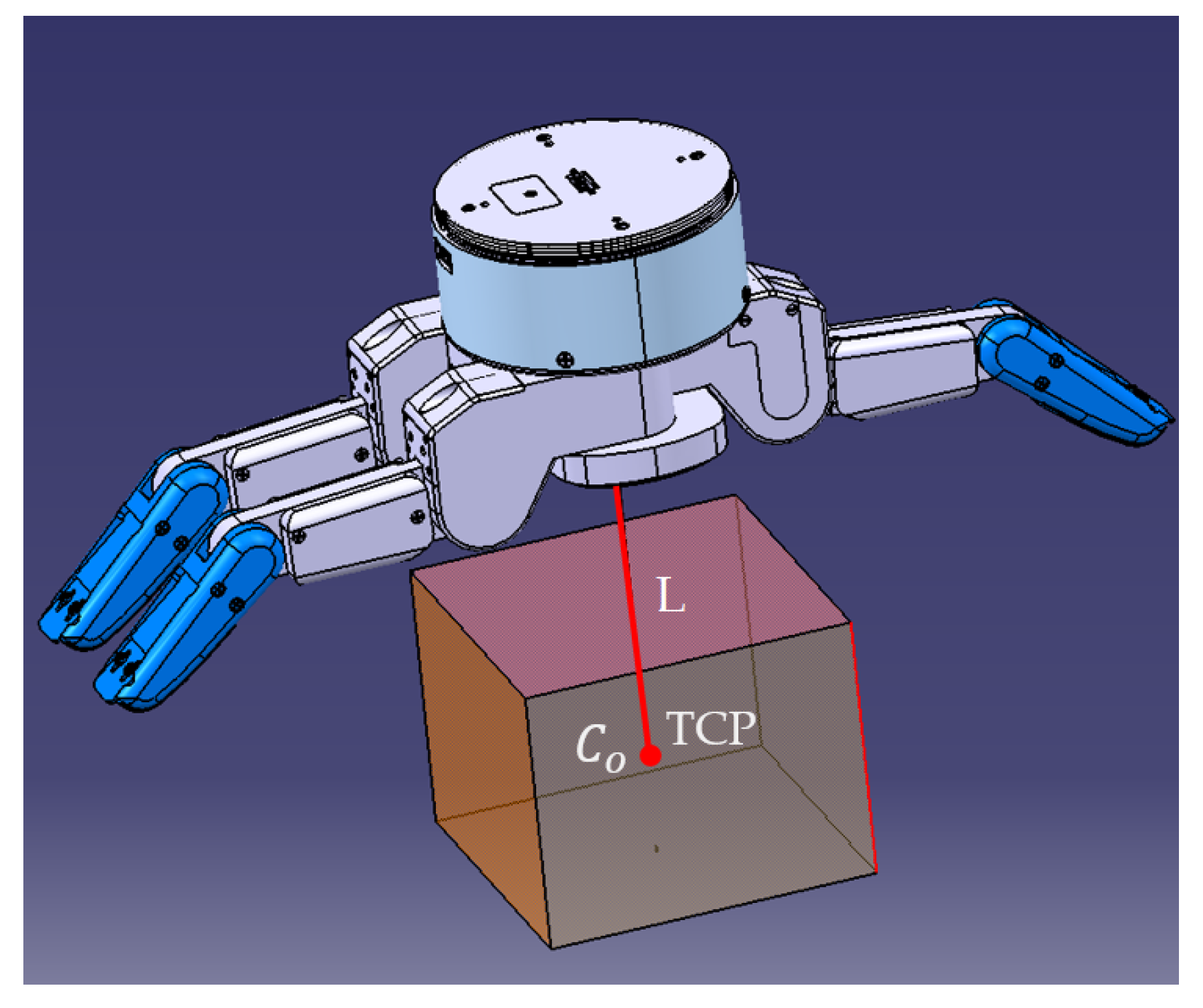

Firstly, we want to reach the relative object + hand configuration of

Figure 8, which consists of putting the TCP in coincidence with

(see

Figure 9b). Then, we use the results from Section 3 to determine the contact points. We calculate the grasp triangle formed by these three contact points as well as its centroid. Finally, we define the final pregrasp configuration (defined in Figure 5) by the translation and orientation of the hand. This step consists of making the TCP coincide with the centroid of the grasp triangle and aligning the Z axis of the TCP coordinate system perpendicular to the grasp triangle (see Figure 9c) by computing the arm movements required to reach the tool frame obtained by Algorithm 2. When this final pregrasp configuration is reached by the hand, the fingers are closed by applying the joint angles obtained with Algorithm 2. An example of the main execution steps of this pregrasp strategy for a cube is shown in Figure 9.
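The final alignment step can be made concrete with a small geometric sketch: the origin of the target tool frame is placed at the centroid of the grasp triangle and its Z axis along the triangle normal. The choice of the X axis (pointing from the centroid to the first contact point) and the function names are assumptions for illustration. The sign of the normal depends on the ordering of the points, so a real planner would flip it if needed to approach the object from outside.

```python
import math


def sub(a, b):
    return [a[i] - b[i] for i in range(3)]


def cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]


def normalize(v):
    n = math.sqrt(sum(x * x for x in v))
    if n == 0.0:
        raise ValueError("degenerate triangle: zero-length vector")
    return [x / n for x in v]


def tool_frame_from_triangle(p1, p2, p3):
    """Return (origin, (x_axis, y_axis, z_axis)) of a right-handed frame
    centered on the triangle centroid with Z normal to the triangle."""
    origin = [(p1[i] + p2[i] + p3[i]) / 3.0 for i in range(3)]
    z_axis = normalize(cross(sub(p2, p1), sub(p3, p1)))
    # p1 - centroid lies in the triangle plane, so it is orthogonal to Z.
    x_axis = normalize(sub(p1, origin))
    y_axis = cross(z_axis, x_axis)
    return origin, (x_axis, y_axis, z_axis)
```

The three axes can be used as the columns of the rotation part of the tool frame, which is then expressed in the arm frame before solving the IK of the arm.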

6. Final Stable Grasp Execution Based on Contact Interaction Modeling

In order to evaluate the equilibrium of all the forces that act in the real experiment while the fingers of the hand are closing, a model of the contact interactions between the fingertips and the object is required to determine precisely the force–deformation relationship during grasping. This model receives as input the initial grasp of the object without any deformation (i.e., the initial contact points of the fingertips over the object’s surface, or contact polygon, determined in Section 3, and the finger configuration of the pregrasp strategy described in Section 4 and Section 5).

After this initial grasp, the fingers should close iteratively towards the center of the object until the equilibrium of all forces over the object is reached and a stable grasp is performed. With the aim of computing the required fingertip forces for this equilibrium, the simulation of this contact model is achieved in several sequential steps. Firstly, the contact between fingers and the object is detected. Secondly, contact forces are computed (by the evaluation of the relative velocity between the fingers and the object). Finally, the static equilibrium is checked for grasping stability. At each iteration of the simulation, the dynamic model updates the overall shape and contact area deformations due to the applied forces. Contact forces are individually calculated for each contact point, which gives a realistic distribution of the contact pressure in the contact zone. The contact model takes into account the normal forces and the two modes of the tangential forces due to friction: slipping and sticking modes. As the object is modeled by a set of nonlinear spring–damper pairs, the nonlinear normal force is given by

where

is the penetration distance measured along the normal direction to the contact surface

j; K and C are the contact stiffness and damping constants, respectively. Those parameters depend on Young’s modulus and the Poisson ratios. Based on the work presented in the literature [51,52], the stiffness constant is calculated by

where

is a constant, calculated according to the mechanical properties of the two objects in contact, and it is given by

where

and

are the Young’s modulus;

and

are the Poisson ratios of the finger and the object, respectively. The damping constant is determined by

where

is a constant.
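To give a feel for this kind of nonlinear spring–damper contact law, the sketch below uses a Hunt–Crossley-type normal force with a Hertzian stiffness for a hemispherical fingertip. This specific form is an assumption chosen for illustration and may differ from the exact expressions of the paper: the exponent 3/2, the stiffness (4/3)·sqrt(R)·E*, the damping C = 1.5·alpha·K, and every parameter name are hypothetical stand-ins.

```python
import math


def effective_modulus(e_finger, nu_finger, e_object, nu_object):
    """Combined elastic modulus of two bodies in contact (Hertz theory)."""
    return 1.0 / ((1.0 - nu_finger ** 2) / e_finger
                  + (1.0 - nu_object ** 2) / e_object)


def hertz_stiffness(radius, e_star):
    """Stiffness of a sphere of given radius pressed on a flat surface."""
    return 4.0 / 3.0 * math.sqrt(radius) * e_star


def normal_force(delta, delta_dot, stiffness, alpha, exponent=1.5):
    """Assumed Hunt-Crossley-type normal force.

    delta:     penetration distance along the contact normal (m)
    delta_dot: penetration rate (m/s)
    alpha:     damping constant of the assumed model (s/m)
    """
    if delta <= 0.0:
        return 0.0  # no penetration, no contact force
    damping = 1.5 * alpha * stiffness
    force = (stiffness * delta ** exponent
             + damping * delta ** exponent * delta_dot)
    return max(force, 0.0)  # contact can push but not pull
```

With a fingertip much stiffer than the object, the effective modulus approaches E/(1 − ν²) of the object alone, so the calibrated properties of the grasped material dominate the contact stiffness in such a model.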

To characterize these mechanical parameters, compression tests are performed to obtain curves of stress as a function of compressive deformation (see [33] for photos and detailed results of this calibration process). Thereby, the Young’s modulus (4.928 MPa) and the Poisson’s ratio (0.39) of the material of the objects (i.e., foam) to be grasped during the real experiments in Section 7 are identified.

Modeling of the tangential force, acting along each contact, is required in order to prevent any slippage and to ensure grasp stability. The contact model takes into account the two modes of the tangential forces due to friction: slipping and sticking modes. In this model, a parallel spring–damper is attached to the ground at one end, via a slider element, and to the fingertip at the other end. Therefore, the contact point location dynamically changes in the slipping condition. These variables correspond to the relative displacements at the contact point due, respectively, to sticking and slipping. They are dynamically reset to zero if the contact is broken. The sliding friction force can be defined in terms of the Coulomb law as follows:

where

,

, and

are friction coefficient, normal force, and tangential velocity, respectively. With this model, the contact point location dynamically changes in the slipping condition. If the tangential force norm is less than the threshold of sliding, then we have a sticking mode whose tangential force is defined by

where

v is the tangential deformation at the contact facet,

is the contact point position, and

is the contact point position at the sticking regime. The parameters

and

are respectively tangential stiffness and damping coefficients estimated by using Dopico’s method [

30].
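The switch between the sticking and slipping modes can be sketched as follows. This is a minimal illustration under stated assumptions and not the authors' implementation: the contact is treated in a 2D tangent plane, the sticking force is a spring–damper anchored at the last sticking position, the sign conventions are chosen so that the force opposes the motion, and the anchor is moved to the current contact position whenever slipping occurs. All names are hypothetical.

```python
import math


def tangential_force(anchor, position, velocity, normal_force,
                     mu, k_t, c_t):
    """Return (force, mode, new_anchor) for one contact point.

    anchor:   contact position stored when sticking started (2D)
    position: current contact position in the tangent plane (2D)
    velocity: tangential velocity at the contact (2D)
    """
    # Candidate sticking force: spring-damper between anchor and position.
    stick = [-k_t * (p - a) - c_t * v
             for p, a, v in zip(position, anchor, velocity)]
    norm = math.hypot(stick[0], stick[1])
    limit = mu * normal_force  # Coulomb sliding threshold
    if norm <= limit:
        return stick, "stick", anchor
    # Slipping: force magnitude saturates at mu * F_n, opposing the motion.
    speed = math.hypot(velocity[0], velocity[1])
    if speed > 0.0:
        direction = [-v / speed for v in velocity]
    else:
        direction = [f / norm for f in stick]
    return [limit * d for d in direction], "slip", position
```

Calling this function once per contact point and per simulation step yields the friction force and the updated anchor; resetting the anchor when a contact is broken, as described above, would be handled by the caller.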

As indicated at the beginning of this section, this contact model is executed after the initial grasp configuration (obtained as output of the pregrasp strategy) is reached and the fingers begin to touch the surface of the object. In fact, a simulation of the grasping execution strategy is implemented in Matlab in order to determine the contact forces that should be applied to reach a robust grasp. This simulation iteratively closes the fingers towards the center of the object, evaluates the forces transmitted to the object by solving the contact model, updates the state of the contact surface due to deformations, and verifies the static equilibrium of the hand + object system. When this static equilibrium is obtained in the simulation, the contact forces applied by the simulated fingers are used as references for the force control of the real robotic hand. An example of this grasping execution strategy is shown in Section 7 in order to justify its application in our pipeline. More experiments with this grasp execution strategy can be found in the previous work [33] by the authors, where it was first proposed.
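The static equilibrium test that ends the simulation loop can be sketched as a check on the net force and the net torque acting on the object. The sketch assumes that contact points are expressed relative to the center of mass, that gravity acts along −Z, and that a numerical tolerance `tol` decides when the residuals count as zero; the function name and signature are hypothetical.

```python
import math


def cross(a, b):
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]


def is_static_equilibrium(contacts, forces, mass, g=9.81, tol=1e-6):
    """Check that contact forces balance the weight and produce no torque.

    contacts: contact positions relative to the center of mass (m)
    forces:   contact forces applied on the object (N)
    """
    net_force = [0.0, 0.0, -mass * g]  # weight acts at the center of mass
    net_torque = [0.0, 0.0, 0.0]
    for point, force in zip(contacts, forces):
        torque = cross(point, force)
        for i in range(3):
            net_force[i] += force[i]
            net_torque[i] += torque[i]
    force_norm = math.sqrt(sum(x * x for x in net_force))
    torque_norm = math.sqrt(sum(x * x for x in net_torque))
    return force_norm <= tol and torque_norm <= tol
```

In a loop of this kind the check would be evaluated after each closing increment, with the friction components limited by the Coulomb threshold of each contact.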

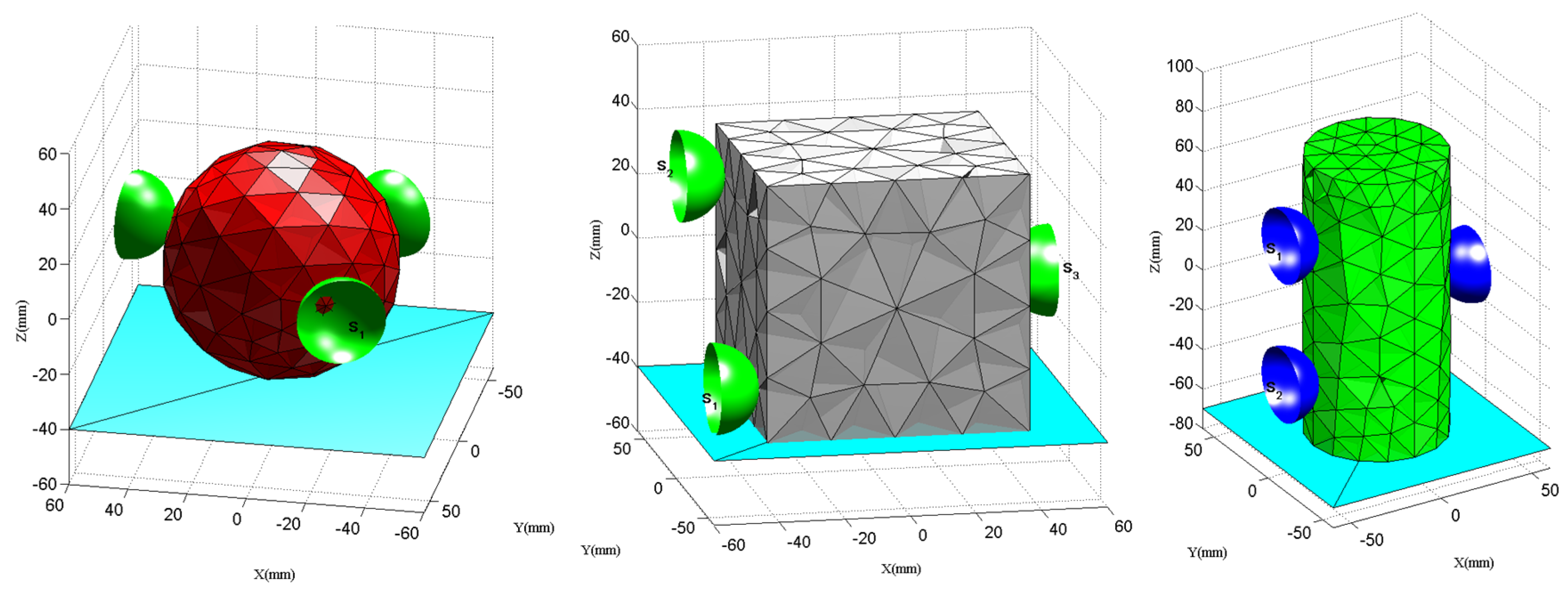

7. Experimental Validation of the Grasp Planning Pipeline for the Hand + Arm System

The different steps of our complete grasp planning pipeline are validated with simulation and real experiments. First of all, the synthesis algorithm for the initial grasp configuration proposed in Section 3 (i.e., Algorithm 1) is validated for three different shapes: a sphere, a cylinder, and a cube (Figure 10). This algorithm has been implemented in Matlab by integrating a discrete 3D tetrahedral representation of the shape of these objects. The number of nodes of this 3D representation and the values of the two grasp quality metrics computed by the algorithm for the three objects are shown in Table 1. The initial grasping points obtained are shown in Figure 10; they do not consider any deformation of the objects.

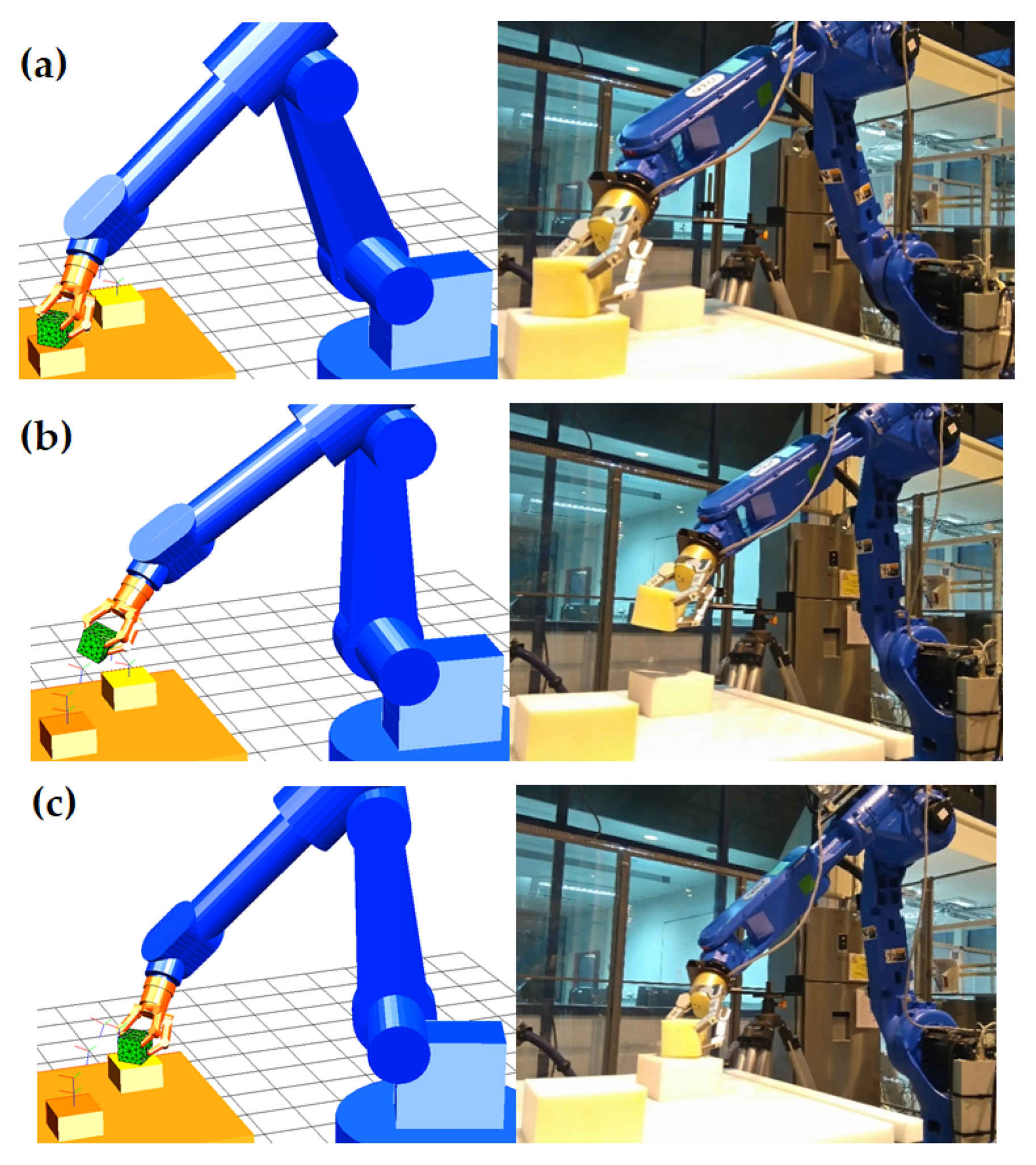

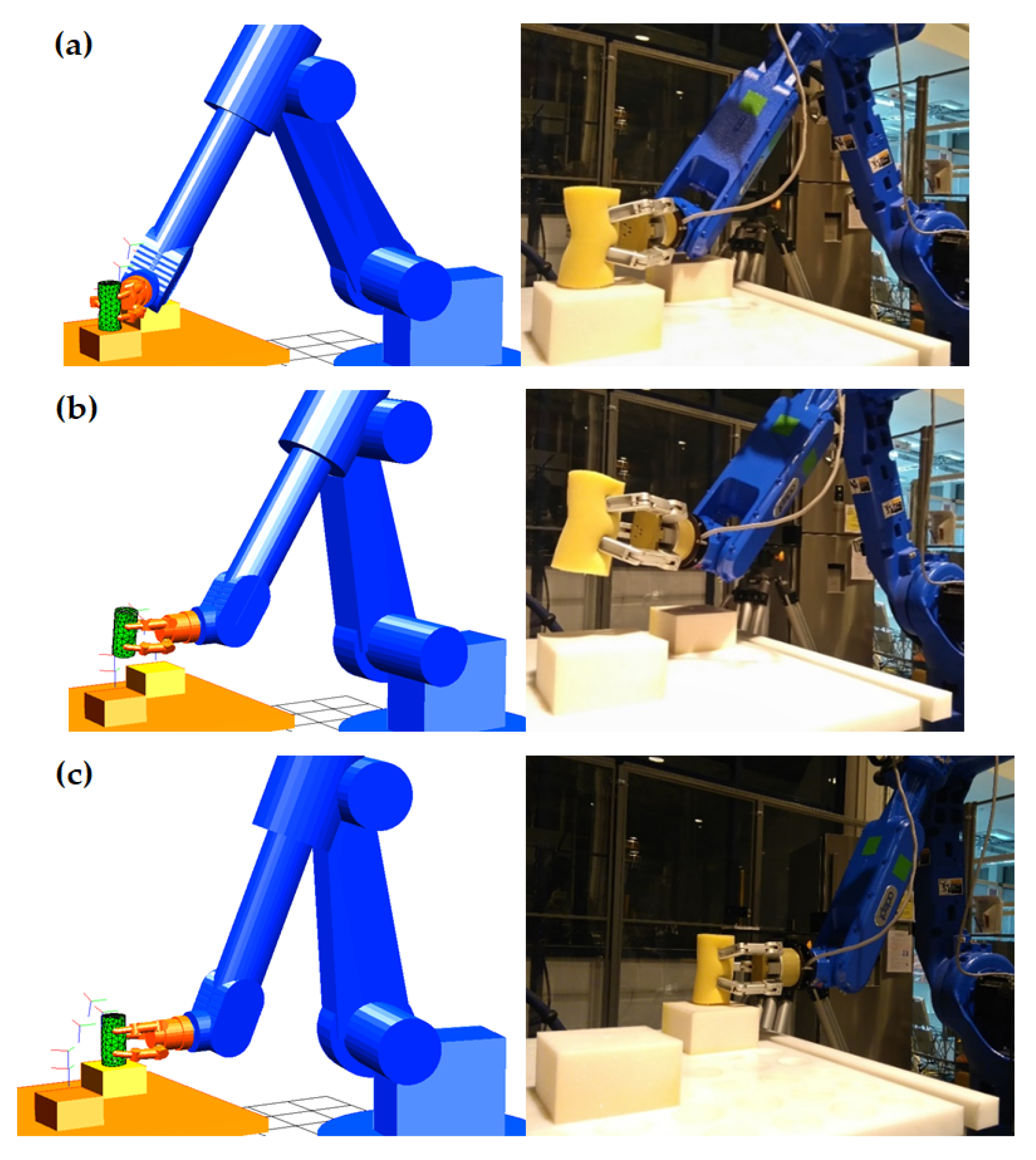

These initial grasp configurations are used as input for the pregrasp and grasp execution steps of the grasp planning pipeline. For validating both steps, real experiments with the Adept arm and the Barrett hand grasping a foam cube (Figure 11) and a cylinder (Figure 12) are performed. Both objects represent typical shapes found in grasping applications of industrial products: a planar-faced surface (e.g., a cube) and a revolution surface (e.g., a cylinder). After the grasping, the object is lifted and carried to another position over the table in order to validate the stability of the grasp. The first step consists of generating and executing the path of the Adept arm for the pregrasp configuration in simulation, ensuring that the object is within the Barrett hand workspace (as explained for the cube in Figure 9a–c). The trajectory of the arm and the closing of the hand are then performed in the simulation (Figure 9d) until the fingers come into contact with the object.

The second step is to model the interaction between the fingers and the object for closing the fingers against the object surface in order to get the required contact forces in simulation (as explained in

Section 6). The real contact forces at each finger are obtained by strain gauges installed in the phalanxes of the Barrett hand. Thereby, the Barrett hand is iteratively closed until the contact forces obtained from the simulation of the contact model are attained (as shown in

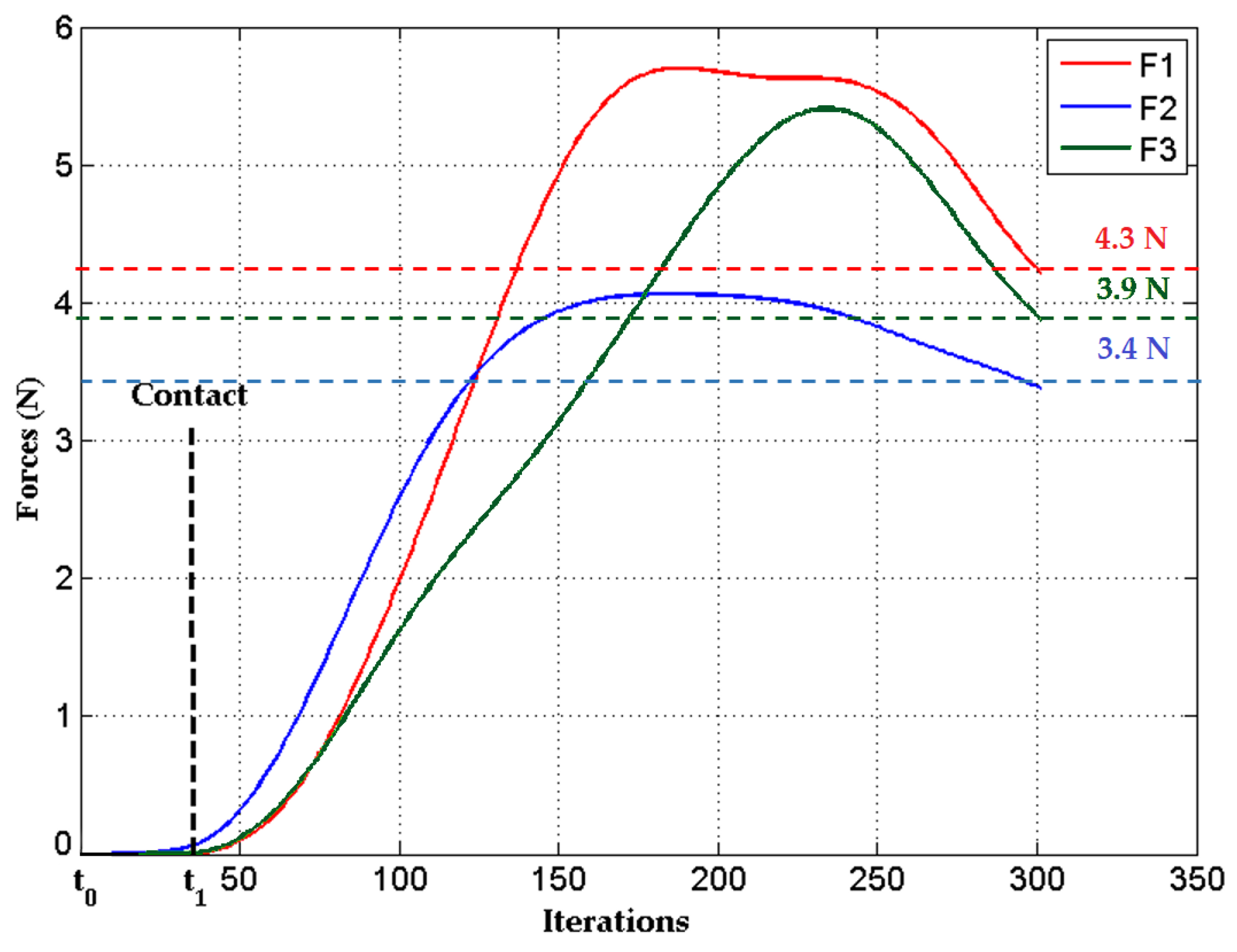

Figure 13). Thus, a stable final grasp of the deformable object is obtained and the object can be lifted without any danger of falling down.

Figure 13 shows the variation in the norm of the three forces applied to the cube during the simulation of the contact model. At the initial step of the simulation, the fingers move towards the object in order to reach the initial contact points. When the fingers come into contact with the object, the contact model starts to evaluate the applied forces. This figure also shows that the contact forces are continuous and directly related to the amount of local deformation inside the contact area. The fingers continue to apply forces until the equilibrium conditions are satisfied (i.e., until the contact force thresholds of the three fingers, identified by horizontal dashed lines in Figure 13, are reached). Once this state is reached, the fingers should maintain these contact forces in order to ensure stable handling, and the simulation can stop.

The relation between these force thresholds and the penetration of the fingers into the object (i.e., the deformation of its surface) is obtained by simulating the contact interaction model while closing the fingertips over the object. The real force–penetration relation is very similar to the one computed in the simulation (see the real validation experiments in [33]). Therefore, our strategy of applying the contact forces obtained from simulation to real grasping is suitable. In fact, the mean error of the contact force obtained by our model is of

, which implies that only

of typical contact forces is required for grasping most everyday deformable objects. In addition, the nonlinear force–penetration relation shows that simplified linear models cannot be used for grasping significantly deformable objects, which justifies the need for the proposed model. The proposed grasp planning strategy is validated by the correct execution of two pick-and-place tasks (for a cube and a cylinder): both objects are lifted and transported stably with the real robot without any slippage (as shown in the photographs of Figure 11 and Figure 12).
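The point about linear models can be illustrated with a small numerical sketch. The data below are synthetic and purely hypothetical: the power-law response with exponent 1.5 is an assumption chosen for illustration and is not the contact model used in this work. The sketch fits the best straight line through the origin and reports how far it deviates from the nonlinear response.

```python
from typing import List


def fit_linear_through_origin(depths: List[float], forces: List[float]) -> float:
    """Least-squares stiffness k for the linear model f = k * d."""
    return sum(d * f for d, f in zip(depths, forces)) / sum(d * d for d in depths)


# Synthetic penetration depths in arbitrary units (assumption, not measured data).
depths = [float(i) for i in range(1, 11)]
# Hypothetical nonlinear response f = d**1.5 (assumption, not the paper's model).
forces = [d ** 1.5 for d in depths]

k = fit_linear_through_origin(depths, forces)
# Relative error of the linear prediction at each penetration depth.
rel_errors = [abs(k * d - f) / f for d, f in zip(depths, forces)]
max_rel_error = max(rel_errors)
```

For this synthetic response, the linear fit overestimates the force at small penetrations by more than 100%, which is the kind of mismatch that motivates a nonlinear contact model.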

8. Conclusions

This work has been devoted to the complete process of deformable object grasp planning with an industrial manipulator (robotic hand + robotic arm). First of all, this requires the definition of the grasp points over the surface of the object and, thus, the determination of an initial grasp configuration. Then, we presented our approach for determining the pregrasp strategy for reaching this initial grasp by taking into account the kinematic constraints of the arm and the hand. The grasp planning has therefore been divided into two phases: firstly, determining the configuration of the robot arm that brings the hand close to the object; secondly, determining the appropriate configuration of the fingers of the hand to grasp the object. The joint parameters of the arm and the hand are determined by solving their inverse kinematics. In the case of the hand, the implemented strategy consists first of placing the TCP (line perpendicular to the palm of the hand) so that it intersects the center of the grasp triangle (obtained in the initial grasp synthesis) and aligning it with the normal vector of this grasp triangle. The second step is to iteratively search for a solution for the joint parameters of the three fingers of the Barrett hand in order to bring them into correspondence with the grasping points previously defined by force-closure-type stability conditions. This final grasp solution thus combines the initial grasp, based on a general geometric criterion for force closure (i.e., it only considers the shape of the object), with the kinematic constraints of the robotic hand by applying the proposed pregrasp strategy.

Nevertheless, this grasp configuration guarantees the robustness of the grasp only for rigid objects, not for deformable objects. Thus, a force–deformation servoing scheme is activated when the fingers come into contact with the deformable object to be grasped. This scheme is based on a simulation of the object–fingers interaction (previously developed by the authors in [33]), which obtains the contact force thresholds that should be applied by the fingers in order to guarantee a stable grasp while deforming the surface of the object. Finally, an iterative closing of the real fingers is performed in order to attain these contact forces with the real object.
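The geometric part of the pregrasp strategy summarized above (center and normal of the grasp triangle) can be sketched as follows. This is a minimal illustration under stated assumptions: the "center" is taken to be the centroid of the three grasp points, and the function name `grasp_triangle_frame` is hypothetical rather than part of the original implementation.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def grasp_triangle_frame(p1: Vec3, p2: Vec3, p3: Vec3) -> Tuple[Vec3, Vec3]:
    """Return the centroid and unit normal of the triangle formed by three
    grasp points. The centroid is an assumed choice of 'center'."""
    centroid = tuple((a + b + c) / 3.0 for a, b, c in zip(p1, p2, p3))
    n = _cross(_sub(p2, p1), _sub(p3, p1))
    length = math.sqrt(sum(x * x for x in n))
    if length == 0.0:
        raise ValueError("grasp points are collinear; the normal is undefined")
    normal = tuple(x / length for x in n)
    return centroid, normal


# Hypothetical grasp points lying in the z = 0 plane.
centroid, normal = grasp_triangle_frame((0, 0, 0), (3, 0, 0), (0, 3, 0))
```

The sign of the normal depends on the ordering of the grasp points, so a real planner would still need to choose the direction that points from the object towards the palm.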

Objects with simple geometrical shapes (sphere, cube, and cylinder) were chosen for the experiments so that the interaction model described in Section 6 could be simulated more efficiently. However, the proposed pregrasp/grasp pipeline is not restricted to simple shapes and can be applied to objects of any shape. In fact, the mesh construction process required by the interaction model has been applied by the authors to very irregularly shaped pieces of meat in a previous work [10]. This grasp planning pipeline is designed for nonlinear deformable objects to be manipulated by three-fingered robotic hands, but its architecture could be redesigned as a group of interchangeable modules in future work. These modules could cover the following: other types of objects (e.g., changing the contact interaction model for rigid objects or granular media [53]), new sensing data (i.e., combining tactile and vision information to estimate the 3D shape of the object and the real contact forces in real time [54]), multiple robotic fingers (e.g., dexterous robotic hands [55]), or even more complex manipulation tasks (e.g., in-hand manipulation [56]).