A Novel Statistic-Based Corpus Machine Processing Approach to Refine a Big Textual Data: An ESP Case of COVID-19 News Reports

Abstract

1. Introduction

2. Literature Review

2.1. Corpus Analysis

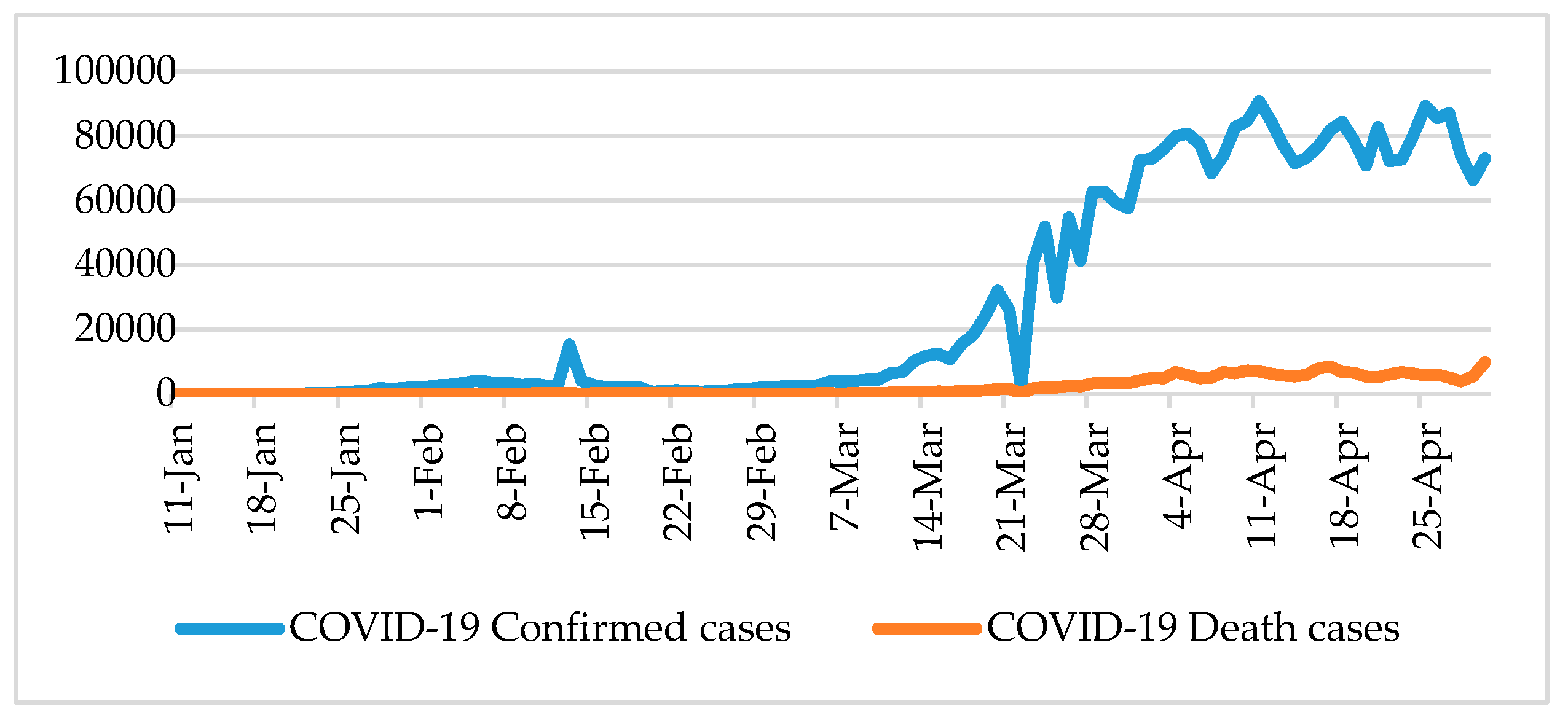

2.2. COVID-19

3. Methodology

4. Empirical Study

4.1. Overviews of the Target Corpora

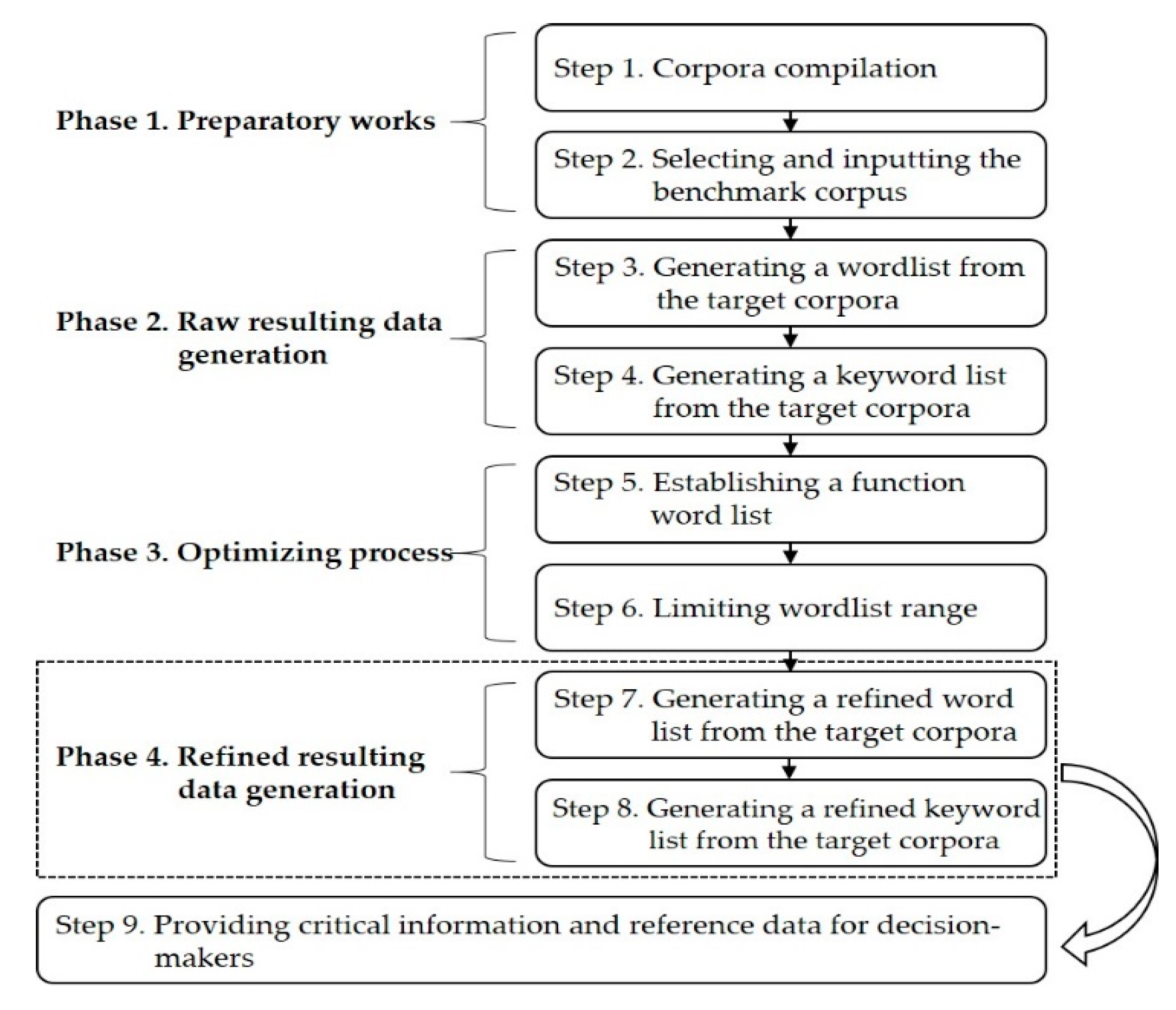

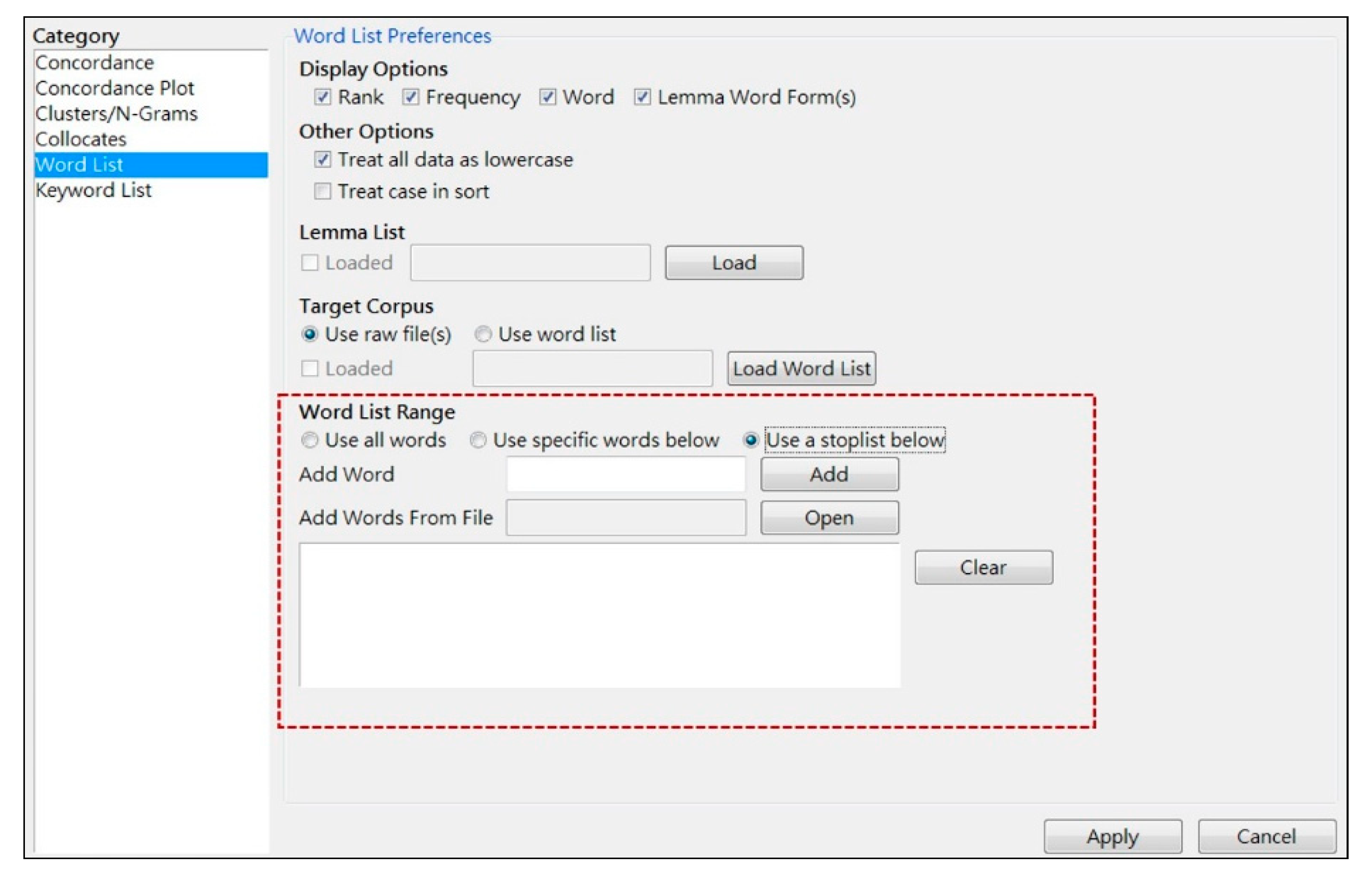

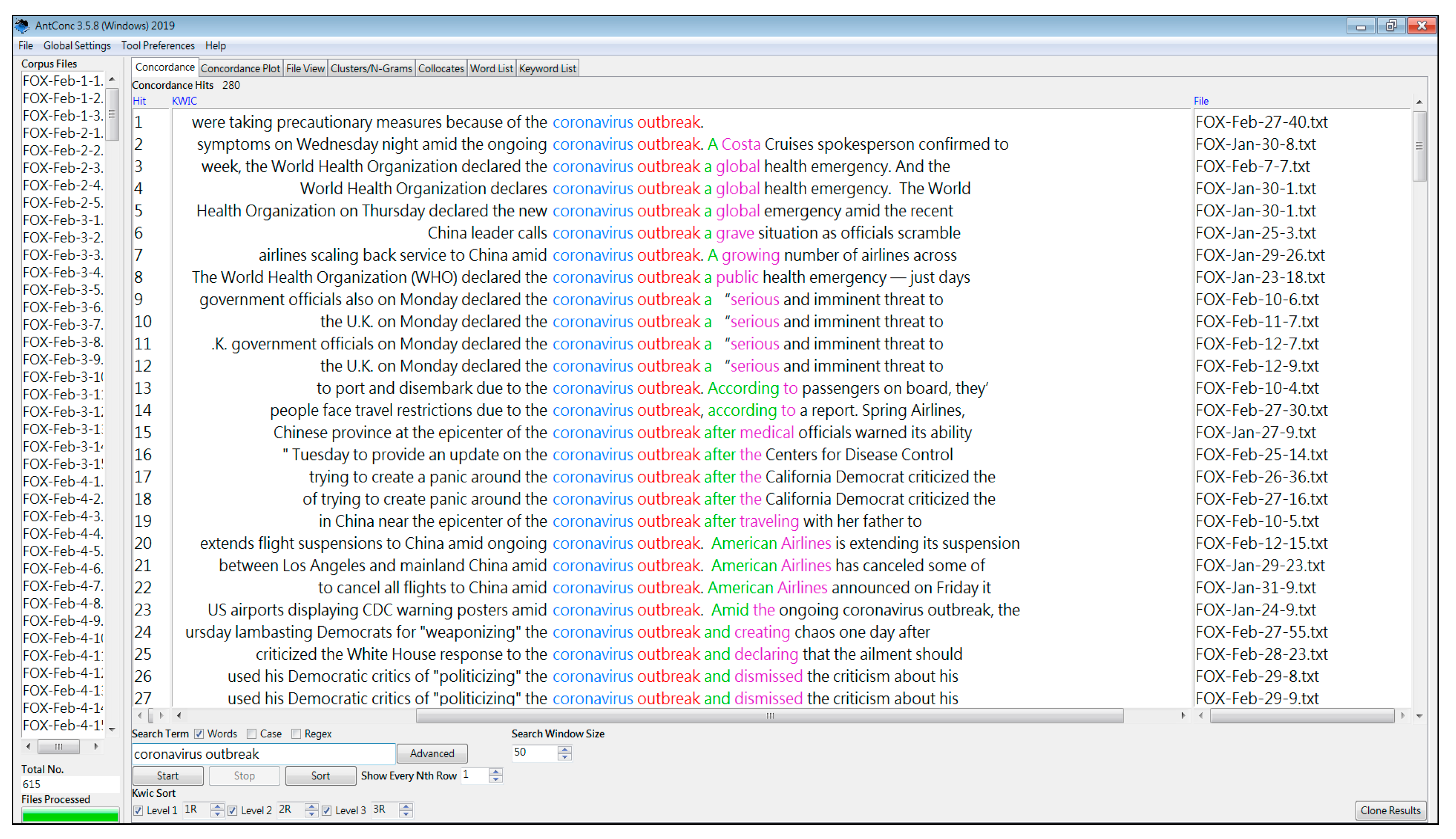

4.2. The Proposed Method

4.3. Comparison and Discussion

- 1-1 (retrieved from FOX-Jan-26-5) Coronavirus outbreak spurs Paris to cancel Lunar New Year parade…

- 1-2 (retrieved from FOX-Feb-4-1) A Chinese doctor who claimed he quietly warned of the coronavirus outbreak that has besieged the country…

- 1-3 (retrieved from FOX-Feb-15-6) …medical supplies to help China combat a coronavirus outbreak that has infected over 67,000 people.

- 1-4 (retrieved from FOX-Feb-17-3) …the deadly coronavirus outbreak that’s sickened over 70,500 in the country and killed at least 1,770.

- 1-5 (retrieved from FOX-Feb-17-4) …prayed for a cure to combat the coronavirus outbreak that has killed 1,770 people.

- 2-1 (retrieved from FOX-Jan-20-2) Human-to-human transmission of coronavirus in China confirmed.

- 2-2 (retrieved from FOX-Feb-3-6) Currently, there are six cases of the novel coronavirus in California, one in Arizona…

- 2-3 (retrieved from FOX-Feb-10-10) Leaving someone with coronavirus in a hallway could expose countless patients…

- 2-4 (retrieved from FOX-Feb-11-16) Evacuee confirmed to have coronavirus in California as US total reaches 13.

- 2-5 (retrieved from FOX-Feb-28-1) Dog tests ‘weak positive’ for coronavirus in Hong Kong, first possible infection in pet.

- 3-1 (retrieved from FOX-Jan-24-7) CDC confirms coronavirus case in Illinois, dozens more under investigation.

- 3-2 (retrieved from FOX-Jan-27-13) CDC: 110 suspected coronavirus cases in US under investigation…

- 3-3 (retrieved from FOX-Jan-27-11) Africa investigating first possible coronavirus case in Ivory Coast student: officials.

- 3-4 (retrieved from FOX-Feb-13-24) The number of coronavirus cases in China significantly increased on Thursday…

- 3-5 (retrieved from FOX-Feb-21-13) Coronavirus cases balloon in South Korea as outbreak spreads.

- 4-1 (retrieved from FOX-Feb-10-1) The coronavirus is primarily transmitted through respiratory droplets, meaning human saliva and mucus.

- 4-2 (retrieved from FOX-Feb-11-3) The new coronavirus is a respiratory virus, and we know respiratory viruses are often seasonal, but not always.

- 4-3 (retrieved from FOX-Feb-24-3) The strain of coronavirus is believed to have jumped from bats and snakes…

- 4-4 (retrieved from FOX-Feb-26-24) Dr. Marc Siegel said Wednesday that coronavirus is appearing to be more contagious than the flu.

- 4-5 (retrieved from FOX-Feb-29-17) Donald Trump: Coronavirus is Democrats’ ‘new hoax’.

- 5-1 (retrieved from FOX-Jan-24-2) Coronavirus death toll rises to 41 in China, more than 1200 sickened.

- 5-2 (retrieved from FOX-Feb-2-5) Coronavirus death in Philippines said to be first outside China.

- 5-3 (retrieved from FOX-Feb-4-4) A second coronavirus death outside of China was reported earlier Tuesday by Hong Kong…

- 5-4 (retrieved from FOX-Feb-16-3) China sees coronavirus death toll rise by 105.

- 5-5 (retrieved from FOX-Feb-18-2) Japan announced its first coronavirus death last Thursday.

- 6-1 (retrieved from FOX-Jan-23-8) Suspected coronavirus patients in Scotland being tested: reports.

- 6-2 (retrieved from FOX-Feb-18-3) Japan to trial HIV medications on coronavirus patients.

- 6-3 (retrieved from FOX-Feb-18-15) ‘SARS-like damage’ seen in dead coronavirus patient in China, report says.

- 6-4 (retrieved from FOX-Feb-19-9) Iran’s first 2 coronavirus patients die, state media says.

- 6-5 (retrieved from FOX-Feb-26-20) First US docs to analyze coronavirus patients’ lungs say insight could lead to quicker diagnosis.
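Each numbered example above is a concordance line for the node word coronavirus, tagged with the report it was retrieved from. A keyword-in-context (KWIC) view of this kind can be produced with a few lines of code. The sketch below is a minimal illustration, assuming a simple regex tokenizer, case-insensitive matching and a fixed window of words on each side; the `kwic` function is hypothetical and is not the concordancing tool used in the study.

```python
import re
from typing import List, Tuple


def kwic(text: str, node: str, window: int = 5) -> List[Tuple[str, str, str]]:
    """Return (left context, hit, right context) for every occurrence of `node`.

    Assumptions: a token is a run of ASCII letters or digits, matching is
    case-insensitive, and sentence boundaries are ignored.
    """
    tokens = re.findall(r"[A-Za-z0-9]+", text)
    hits = []
    for i, tok in enumerate(tokens):
        if tok.lower() == node.lower():
            left = " ".join(tokens[max(0, i - window):i])
            right = " ".join(tokens[i + 1:i + 1 + window])
            hits.append((left, tok, right))
    return hits


# The headlines quoted in Examples 5-1 and 5-5 above.
sample = ("Coronavirus death toll rises to 41 in China, more than 1200 sickened. "
          "Japan announced its first coronavirus death last Thursday.")
for left, hit, right in kwic(sample, "coronavirus", window=3):
    print(f"{left:>25} [{hit}] {right}")
```

Aligning the hits in a central column, as the print statement does, is what makes recurring right-hand neighbours such as death easy to spot by eye.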

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Cotet, C.E.; Deac, G.C.; Deac, C.N.; Popa, C.L. An innovative industry 4.0 cloud data transfer method for an automated waste collection system. Sustainability 2020, 12, 1839.

- Da Silva, F.S.T.; da Costa, C.A.; Crovato, C.D.P.; Righi, R.D. Looking at energy through the lens of industry 4.0: A systematic literature review of concerns and challenges. Comput. Ind. Eng. 2020, 143, 106426.

- Tiwari, K.; Khan, M.S. Sustainability accounting and reporting in the industry 4.0. J. Clean Prod. 2020, 258, 120783.

- Nicolae, A.; Korodi, A.; Silea, I. Identifying data dependencies as first step to obtain a proactive historian: Test scenario in the water Industry 4.0. Water 2019, 11, 1144.

- Sung, S.I.; Kim, Y.S.; Kim, H.S. Study on reverse logistics focused on developing the collection signal algorithm based on the sensor data and the concept of Industry 4.0. Appl. Sci. 2020, 10, 5016.

- Hozdic, E.; Butala, P. Concept of socio-cyber-physical work systems for industry 4.0. Teh. Vjesn. 2020, 27, 399–410.

- Kong, W.C.; Qiao, F.; Wu, Q.D. Real-manufacturing-oriented big data analysis and data value evaluation with domain knowledge. Comput. Stat. 2020, 35, 515–538.

- Nasrollahi, M.; Ramezani, J. A model to evaluate the organizational readiness for big data adoption. Int. J. Comput. Commun. Control 2020, 15, UNSP 3874.

- Holmlund, M.; Van Vaerenbergh, Y.; Ciuchita, R.; Ravald, A.; Sarantopoulos, P.; Ordenes, F.V.; Zaki, M. Customer experience management in the age of big data analytics: A strategic framework. J. Bus. Res. 2020, 116, 356–365.

- Balakrishna, S.; Thirumaran, M.; Solanki, V.K.; Nunez-Valdez, E.R. Incremental Hierarchical Clustering driven Automatic Annotations for Unifying IoT Streaming Data. Int. J. Interact. Multimed. Artif. Intell. 2020, 6, 56–70.

- Ebrahimi, N.; Trabelsi, A.; Islam, M.S.; Hamou-Lhadj, A.; Khanmohammadi, K. An HMM-based approach for automatic detection and classification of duplicate bug reports. Inf. Softw. Technol. 2019, 113, 98–109.

- Baroni, M. Linguistic generalization and compositionality in modern artificial neural networks. Philos. Trans. R. Soc. B 2020, 375, 20190307.

- Zhang, S.T.; Tan, H.B.; Chen, L.F.; Lv, B. Enhanced text matching based on semantic transformation. IEEE Access 2020, 8, 30897–30904.

- Csomay, E.; Petrovic, M. “Yes, your honor!”: A corpus-based study of technical vocabulary in discipline-related movies and TV shows. System 2012, 40, 305–315.

- Coxhead, A.; Dang, T.N.Y.; Mukai, S. Single and multi-word unit vocabulary in university tutorials and laboratories: Evidence from corpora and textbooks. J. Engl. Acad. Purp. 2017, 30, 66–78.

- Moon, S.; Oh, S.Y. Unlearning overgenerated be through data-driven learning in the secondary EFL classroom. ReCALL 2018, 30, 48–67.

- Lee, H.; Warschauer, M.; Lee, J.H. Advancing CALL research via data-mining techniques: Unearthing hidden groups of learners in a corpus-based L2 vocabulary learning experiment. ReCALL 2019, 31, 135–149.

- Dong, J.H.; Lu, X.F. Promoting discipline-specific genre competence with corpus-based genre analysis activities. Engl. Specif. Purp. 2020, 58, 138–154.

- Paterson, L.L. Electronic supplement analysis of multiple texts exploring discourses of UK poverty in below the line comments. Int. J. Corpus Linguist. 2020, 25, 62–88.

- Yager, R.R.; Reformat, M.Z.; To, N.D. Drawing on the iPad to input fuzzy sets with an application to linguistic data science. Inf. Sci. 2019, 479, 277–291.

- Pawar, A.; Mago, V. Challenging the boundaries of unsupervised learning for semantic similarity. IEEE Access 2019, 7, 16291–16308.

- Doan, P.T.H.; Arch-int, N.; Arch-int, S. A semantic framework for extracting taxonomic relations from text corpus. Int. Arab J. Inf. Technol. 2020, 17, 325–337.

- Legrand, J.; Gogdemir, R.; Bousquet, C.; Dalleau, K.; Devignes, M.D.; Digan, W.; Lee, C.J.; Ndiaye, N.C.; Petitpain, N.; Ringot, P.; et al. PGxCorpus, a manually annotated corpus for pharmacogenomics. Sci. Data 2020, 7, 3.

- Gan, L.; Li, S.J.; Shu, Z.; Yu, W. Big data metrics: Time sensitivity analysis of multimedia news. J. Intell. Fuzzy Syst. 2020, 38, 1181–1188.

- Georgiadou, E.; Angelopoulos, S.; Drake, H. Big data analytics and international negotiations: Sentiment analysis of Brexit negotiating outcomes. Int. J. Inf. Manag. 2020, 51, 102048.

- Vianna, F.R.P.M.; Graeml, A.R.; Peinado, J. The role of crowdsourcing in industry 4.0: A systematic literature review. Int. J. Comput. Integr. Manuf. 2020, 33, 411–427.

- Carrion, B.; Onorati, T.; Diaz, P.; Triga, V. A taxonomy generation tool for semantic visual analysis of large corpus of documents. Multimed. Tools Appl. 2019, 78, 32919–32937.

- Scott, M. PC analysis of key words—And key key words. System 1997, 25, 233–245.

- Graham, D. KeyBNC [Computer Software]. 2014. Available online: http://crs2.kmutt.ac.th/Key-BNC/ (accessed on 24 April 2016).

- Anthony, L. AntConc (Version 3.5.8) [Computer Software]; Waseda University: Tokyo, Japan, 2019; Available online: https://www.laurenceanthony.net/software/antconc/ (accessed on 5 May 2020).

- Li, S.L. A corpus-based study of vague language in legislative texts: Strategic use of vague terms. Engl. Specif. Purp. 2016, 45, 98–109.

- Todd, R.W. An opaque engineering word list: Which words should a teacher focus on? Engl. Specif. Purp. 2016, 45, 31–39.

- Ross, A.S.; Rivers, D.J. Discursive deflection: Accusation of “fake news” and the spread of mis- and disinformation in the Tweets of President Trump. Soc. Med. Soc. 2018, 4.

- Anthony, L.; Hardaker, C. FireAnt (Version 1.1.4) [Computer Software]; Waseda University: Tokyo, Japan, 2017; Available online: http://www.laurenceanthony.net (accessed on 5 May 2020).

- Lippi, G.; Sanchis-Gomar, F.; Henry, B.M. Coronavirus disease 2019 (COVID-19): The portrait of a perfect storm. Ann. Transl. Med. 2020, 8, 497.

- Ahmed, W.; Vidal-Alaball, J.; Downing, J.; Segui, F.L. COVID-19 and the 5G conspiracy theory: Social network analysis of twitter data. J. Med. Internet Res. 2020, 22, e19458.

- Abd-Alrazaq, A.; Alhuwail, D.; Househ, M.; Hamdi, M.; Shah, Z. Top concerns of tweeters during the COVID-19 pandemic: Infoveillance study. J. Med. Internet Res. 2020, 22, e19016.

- Leung, W.W.F.; Sun, Q.Q. Charged PVDF multilayer nanofiber filter in filtering simulated airborne novel coronavirus (COVID-19) using ambient nano-aerosols. Sep. Purif. Technol. 2020, 245, 116887.

- Nikolaou, P.; Dimitriou, L. Identification of critical airports for controlling global infectious disease outbreaks: Stress-tests focusing in Europe. J. Air Transp. Manag. 2020, 85, 101819.

- Yang, Y.; Shang, W.L.; Rao, X.C. Facing the COVID-19 outbreak: What should we know and what could we do? J. Med. Virol. 2020, 92, 536–537.

- Singhal, T. A review of coronavirus disease-2019 (COVID-19). Indian J. Pediatr. 2020, 87, 281–286.

- Yuan, J.J.; Lu, Y.L.; Cao, X.H.; Cui, H.T. Regulating wildlife conservation and food safety to prevent human exposure to novel virus. Ecosyst. Health Sustain. 2020, 6, 1741325.

- Wu, F.; Zhao, S.; Yu, B.; Chen, Y.M.; Wang, W.; Song, Z.G.; Hu, Y.; Tao, Z.W.; Tian, J.H.; Pei, Y.Y.; et al. A new coronavirus associated with human respiratory disease in China. Nature 2020, 579, 265–269.

- Lu, R.J.; Zhao, X.; Li, J.; Niu, P.H.; Yang, B.; Wu, H.L.; Wang, W.; Song, H.; Huang, B.; Zhu, N.; et al. Genomic characterisation and epidemiology of 2019 novel coronavirus: Implications for virus origins and receptor binding. Lancet 2020, 395, 565–574.

- Sun, P.F.; Lu, X.H.; Xu, C.; Sun, W.J.; Pan, B. Understanding of COVID-19 based on current evidence. J. Med. Virol. 2020, 92, 548–551.

- Wan, Y.S.; Shang, J.; Graham, R.; Baric, R.S.; Li, F. Receptor recognition by the novel coronavirus from Wuhan: An analysis based on decade-long structural studies of SARS coronavirus. J. Virol. 2020, 94, e00127-20.

- Brown, J.; Pope, C. Personal protective equipment and possible routes of airborne spread during the COVID-19 pandemic. Anaesthesia 2020, 75, 116–117.

- Kim, Y.J.; Sung, H.; Ki, C.S.; Hur, M. COVID-19 testing in South Korea: Current status and the need for faster diagnostics. Ann. Lab. Med. 2020, 40, 349–350.

- Mullins, E.; Evans, D.; Viner, R.M.; O’Brien, P.; Morris, E. Coronavirus in pregnancy and delivery: Rapid review. Ultrasound Obstet. Gynecol. 2020, 55, 586–592.

- Porcheddu, R.; Serra, C.; Kelvin, D.; Kelvin, N.; Rubino, S. Similarity in case fatality rates (CFR) of COVID-19/SARS-COV-2 in Italy and China. J. Infect. Dev. Ctries. 2020, 14, 125–128.

- Zhao, Y.X.; Cheng, S.X.; Yu, X.Y.; Xu, H.L. Chinese public’s attention to the COVID-19 epidemic on social media: Observational descriptive study. J. Med. Internet Res. 2020, 22, e18825.

- Dunning, T. Accurate methods for the statistics of surprise and coincidence. Comput. Linguist. 1993, 19, 61–74.

- O’Keeffe, A.; McCarthy, M.; Carter, R. From Corpus to Classroom: Language Use and Language Teaching; Cambridge University Press: New York, NY, USA, 2007.

- Hong, K.H.; Lee, S.W.; Kim, T.S.; Huh, H.J.; Lee, J.; Kim, S.Y.; Park, J.S.; Kim, G.J.; Sung, H.; Roh, K.H.; et al. Guidelines for laboratory diagnosis of coronavirus disease 2019 (COVID-19) in Korea. Ann. Lab. Med. 2020, 40, 351–360.

- Li, L.Q.; Huang, T.; Wang, Y.Q.; Wang, Z.P.; Liang, Y.; Huang, T.B.; Zhang, H.Y.; Sun, W.; Wang, Y.P. COVID-19 patients’ clinical characteristics, discharge rate, and fatality rate of meta-analysis. J. Med. Virol. 2020, 92, 577–583.

- Sinclair, J. Collins COBUILD English Grammar; HarperCollins Publishers Limited: London, UK, 2011.

| FOX News Sub-Corpus | Year | Month | Number of Reports | Word Types | Tokens | TTR |
|---|---|---|---|---|---|---|
| Corpus 1 | 2020 | January | 159 | 9938 | 173,531 | 5.7% |
| Corpus 2 | 2020 | February | 456 | 13,513 | 284,360 | 4.8% |
| Whole corpus | 2020 | January & February | 615 | 16,536 | 457,891 | 3.6% |
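The TTR (type-token ratio) column above is the number of word types divided by the number of tokens, expressed as a percentage. Because new tokens increasingly repeat types that have already occurred, TTR tends to fall as a corpus grows, which is why the whole corpus shows a lower ratio than either monthly sub-corpus. The sketch below recomputes the reported ratios from the counts in the table; the `ttr` helper is a hypothetical name used only for illustration.

```python
def ttr(word_types: int, tokens: int) -> float:
    """Type-token ratio as a percentage, rounded to one decimal place."""
    if tokens <= 0:
        raise ValueError("tokens must be positive")
    return round(100 * word_types / tokens, 1)


# (word types, tokens) copied from the table above.
corpora = {
    "January sub-corpus": (9938, 173_531),
    "February sub-corpus": (13_513, 284_360),
    "Whole corpus": (16_536, 457_891),
}

for name, (types, tokens) in corpora.items():
    print(f"{name}: {ttr(types, tokens)}%")
```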

| Rank | Freq. | Tokens | Rank | Freq. | Tokens | Rank | Freq. | Tokens |

|---|---|---|---|---|---|---|---|---|

| 1 | 26,375 | the | 35 | 1767 | who | 69 | 905 | out |

| 2 | 13,479 | to | 36 | 1585 | virus | 70 | 905 | which |

| 3 | 11,042 | and | 37 | 1551 | all | 71 | 880 | no |

| 4 | 10,138 | of | 38 | 1531 | been | 72 | 845 | like |

| 5 | 8978 | in | 39 | 1449 | an | 73 | 844 | would |

| 6 | 8404 | a | 40 | 1412 | about | 74 | 843 | other |

| 7 | 6899 | that | 41 | 1396 | there | 75 | 840 | know |

| 8 | 6276 | s | 42 | 1332 | will | 76 | 829 | after |

| 9 | 5038 | is | 43 | 1323 | so | 77 | 829 | than |

| 10 | 4378 | it | 44 | 1319 | president | 78 | 796 | going |

| 11 | 4259 | for | 45 | 1304 | by | 79 | 786 | over |

| 12 | 3821 | on | 46 | 1295 | what | 80 | 764 | up |

| 13 | 3561 | i | 47 | 1292 | health | 81 | 746 | our |

| 14 | 3319 | you | 48 | 1258 | or | 82 | 732 | some |

| 15 | 3186 | have | 49 | 1227 | more | 83 | 724 | officials |

| 16 | 2916 | this | 50 | 1198 | TRUMP | 84 | 720 | because |

| 17 | 2805 | we | 51 | 1184 | new | 85 | 717 | its |

| 18 | 2736 | with | 52 | 1182 | cases | 86 | 704 | don |

| 19 | 2707 | he | 53 | 1114 | one | 87 | 674 | right |

| 20 | 2699 | are | 54 | 1098 | were | 88 | 669 | WUHAN |

| 21 | 2586 | they | 55 | 1076 | outbreak | 89 | 666 | reported |

| 22 | 2531 | from | 56 | 1049 | can | 90 | 664 | country |

| 23 | 2432 | was | 57 | 1034 | if | 91 | 664 | first |

| 24 | 2425 | coronavirus | 58 | 1023 | u | 92 | 663 | when |

| 25 | 2419 | as | 59 | 1014 | their | 93 | 656 | FOX |

| 26 | 2386 | be | 60 | 1009 | had | 94 | 654 | how |

| 27 | 2354 | said | 61 | 998 | re | 95 | 652 | she |

| 28 | 2279 | at | 62 | 995 | his | 96 | 637 | well |

| 29 | 2225 | CHINA | 63 | 982 | do | 97 | 620 | two |

| 30 | 2160 | not | 64 | 977 | now | 98 | 616 | them |

| 31 | 2155 | has | 65 | 969 | news | 99 | 613 | CHINESE |

| 32 | 1936 | people | 66 | 967 | think | 100 | 609 | time |

| 33 | 1859 | but | 67 | 926 | also | |||

| 34 | 1808 | t | 68 | 906 | just | | | |
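Entries such as s, t, don, re and u in the frequency list above are consistent with a tokenizer that treats apostrophes and periods as word boundaries, so that don't yields don and t, and U.S. yields u and s. This is an inference from the list itself, not something the section states. The sketch below shows such a tokenizer together with a ranked frequency list in the same (rank, frequency, token) layout; the regex and the `frequency_list` name are assumptions, and the sample sentence is invented.

```python
import re
from collections import Counter


def frequency_list(text: str, top_n: int = 10):
    """Rank lowercase word tokens by frequency as (rank, freq, token) rows.

    Assumption: a token is a maximal run of ASCII letters, so apostrophes and
    periods split words ("don't" -> "don", "t"; "U.S." -> "u", "s").
    """
    tokens = re.findall(r"[a-z]+", text.lower())
    counts = Counter(tokens)
    # most_common() lists tokens with equal counts in first-encountered order.
    return [(rank, freq, tok)
            for rank, (tok, freq) in enumerate(counts.most_common(top_n), start=1)]


sample = "We don't know. The U.S. says it's the virus, and the CDC doesn't know."
for row in frequency_list(sample, top_n=5):
    print(row)
```

With this token definition the fragments t and s rank among the most frequent items even in a single sentence, which mirrors their high positions in the corpus-wide list.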

| Rank | Freq. | Keyness | Keywords | Rank | Freq. | Keyness | Keywords |

|---|---|---|---|---|---|---|---|

| 1 | 2425 | 14,637.48 | coronavirus | 51 | 561 | 968.67 | according |

| 2 | 2225 | 9515.52 | CHINA | 52 | 218 | 963.68 | flu |

| 3 | 1585 | 8708.51 | virus | 53 | 1936 | 961.93 | people |

| 4 | 1076 | 6230.89 | outbreak | 54 | 156 | 958.1 | HUBEI |

| 5 | 1198 | 4354.6 | TRUMP | 55 | 191 | 950.62 | BOLTON |

| 6 | 669 | 4082.42 | WUHAN | 56 | 182 | 940.26 | doesn |

| 7 | 1182 | 4078.78 | cases | 57 | 320 | 934.19 | February |

| 8 | 1292 | 3074.65 | health | 58 | 185 | 845.64 | respiratory |

| 9 | 704 | 2876.85 | don | 59 | 206 | 843.84 | amid |

| 10 | 566 | 2869.1 | infected | 60 | 245 | 841.06 | illness |

| 11 | 502 | 2844.33 | CDC | 61 | 259 | 803.62 | tested |

| 12 | 577 | 2507.33 | confirmed | 62 | 351 | 798.05 | democrats |

| 13 | 656 | 2435.6 | FOX | 63 | 220 | 797.32 | airlines |

| 14 | 445 | 2213.44 | CARLSON | 64 | 221 | 786.84 | prevention |

| 15 | 1319 | 2196.98 | president | 65 | 189 | 779.76 | province |

| 16 | 724 | 2030.99 | officials | 66 | 135 | 773.99 | epicenter |

| 17 | 969 | 1944.92 | news | 67 | 208 | 737.75 | KOREA |

| 18 | 415 | 1938.04 | flights | 68 | 2155 | 728.95 | has |

| 19 | 327 | 1926.38 | quarantine | 69 | 181 | 723.5 | princess |

| 20 | 573 | 1877.98 | travel | 70 | 268 | 703.85 | Thursday |

| 21 | 613 | 1793.13 | CHINESE | 71 | 193 | 688.55 | witnesses |

| 22 | 345 | 1766.64 | impeachment | 72 | 180 | 688.1 | reportedly |

| 23 | 523 | 1763.09 | spread | 73 | 123 | 685.38 | pandemic |

| 24 | 666 | 1751.98 | reported | 74 | 372 | 684.73 | medical |

| 25 | 543 | 1645.35 | disease | 75 | 416 | 682.33 | video |

| 26 | 390 | 1605.22 | passengers | 76 | 144 | 681.19 | PENCE |

| 27 | 290 | 1501.96 | GUTFELD | 77 | 664 | 674.5 | country |

| 28 | 244 | 1498.61 | COVID | 78 | 211 | 649.51 | centers |

| 29 | 2354 | 1491.2 | said | 79 | 114 | 647.32 | SCHIFF |

| 30 | 1023 | 1474.3 | u | 80 | 142 | 636.76 | epidemic |

| 31 | 250 | 1458.16 | SARS | 81 | 26375 | 627.84 | the |

| 32 | 305 | 1264.01 | BIDEN | 82 | 261 | 626.82 | JAPAN |

| 33 | 256 | 1258.14 | HONG | 83 | 101 | 620.3 | PERINO |

| 34 | 238 | 1240.31 | mainland | 84 | 525 | 606.19 | united |

| 35 | 329 | 1226.3 | symptoms | 85 | 228 | 600.42 | airport |

| 36 | 277 | 1221.59 | CAVUTO | 86 | 318 | 598.97 | hospital |

| 37 | 252 | 1211.33 | KONG | 87 | 118 | 594.53 | INGRAHAM |

| 38 | 372 | 1205.53 | clip | 88 | 242 | 589.22 | announced |

| 39 | 270 | 1153.46 | cruise | 89 | 245 | 588.01 | novel |

| 40 | 228 | 1105.23 | BERNIE | 90 | 269 | 585.74 | Tuesday |

| 41 | 376 | 1097.71 | ship | 91 | 100 | 580.62 | BUTTIGIEG |

| 42 | 182 | 1079.54 | quarantined | 92 | 165 | 579.43 | FEB |

| 43 | 229 | 1076.63 | BAIER | 93 | 97 | 576.35 | KLOBUCHAR |

| 44 | 258 | 1069.86 | BLOOMBERG | 94 | 136 | 575.8 | vaccine |

| 45 | 280 | 1056.24 | deaths | 95 | 254 | 570.14 | Monday |

| 46 | 185 | 1026.06 | sickened | 96 | 218 | 560.89 | authorities |

| 47 | 210 | 1009.43 | didn | 97 | 157 | 558.46 | BEIJING |

| 48 | 322 | 999.87 | Wednesday | 98 | 102 | 555.34 | evacuees |

| 49 | 203 | 993.76 | masks | 99 | 149 | 549.24 | spreading |

| 50 | 233 | 991.96 | SANDERS | 100 | 356 | 542.45 | department |
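The Keyness column above ranks words by how strongly their frequency in the target corpus departs from their frequency in a reference corpus. This section does not name the reference corpus or the statistic; assuming the log-likelihood measure associated with Dunning (listed in the references), the keyness of one word can be computed as below. The function names are hypothetical, and the reference-corpus figures in the usage lines are placeholders, so the printed value will not reproduce the table.

```python
import math


def log_likelihood(a: int, b: int, c: int, d: int) -> float:
    """Log-likelihood (G2) keyness of one word.

    a: word frequency in the target corpus     c: token total of the target corpus
    b: word frequency in the reference corpus  d: token total of the reference corpus
    """
    e1 = c * (a + b) / (c + d)  # frequency expected in the target corpus
    e2 = d * (a + b) / (c + d)  # frequency expected in the reference corpus
    g2 = 0.0
    if a > 0:  # convention: 0 * ln(0) = 0, so zero counts contribute nothing
        g2 += a * math.log(a / e1)
    if b > 0:
        g2 += b * math.log(b / e2)
    return 2 * g2


def is_positive_keyword(a: int, b: int, c: int, d: int) -> bool:
    """True when the word is relatively more frequent in the target corpus."""
    return a / c > b / d


# Target counts from the table above (coronavirus: 2425 hits, 457,891 tokens).
# The reference-corpus counts below are HYPOTHETICAL placeholders.
target_freq, target_size = 2425, 457_891
ref_freq, ref_size = 10, 1_000_000
print(round(log_likelihood(target_freq, ref_freq, target_size, ref_size), 2),
      is_positive_keyword(target_freq, ref_freq, target_size, ref_size))
```

G2 measures only the size of the difference, which is why the direction check is kept separate: a word far rarer in the target corpus than in the reference corpus also receives a large score.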

| Taxonomy | Tokens |

|---|---|

| Auxiliary verbs | am, are, be, being, been, do, does, did, get, got, has, had, have, is, was, were |

| Conjunctions | after, and, as, although, because, before, but, for, however, nevertheless, neither, or, since, so, until, when, while, yet |

| Determiners | |

| Articles | a, an, the |

| Demonstratives | that, these, this, those |

| Possessive pronouns | each, hers, his, its, my, our, ours, other, others, their, whose, which, your |

| Quantifiers | all, any, a lot of, a few, a little, both, enough, many, most, more, much, none, several, some |

| Modals | can, could, may, might, must, shall, should, will, would |

| Prepositions | about, above, across, against, along, alongside, among, around, at, before, behind, below, beneath, beside, between, beyond, by, down, for, from, in, inside, into, near, off, of, on, opposite, out, outside, over, past, round, through, throughout, to, toward, towards, under, underneath, up, with, within, without |

| Pronouns | anyone, anybody, everybody, everyone, he, her, herself, him, himself, I, it, me, myself, us, self, she, somebody, someone, such, they, them, you, yours, we |

| Qualifiers | pretty (much), quite, rather, really, somewhat, too, very |

| Question words | how, what, when, where, who, why |

| Comparatives | even, less, more, much, than |

| Conditionals | if, then, unless |

| Concessive clause | even if, even though |

| Frequency | ever, now |

| Other words | also, back, backward, backwards, forward, forwards, just, last, least, only, re, still, there, well |

| Negatives | couldn, don, doesn, didn, isn, no, not, non, weren |

| Meaningless words | b, c, d, e, f, g, h, j, k, l, m, n, o, p, q, r, s, t, u, v, w, x, y, z, ve, ll, th |
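The taxonomy above works as a stoplist: any token that belongs to one of its categories is removed before the frequency and keyword lists are recomputed. The sketch below applies a small subset of the categories as a token-level filter. The category subset, the regex tokenizer and the `refine` name are assumptions for illustration, not the authors' implementation, and multi-word entries such as "a lot of" or "even if" are not handled.

```python
import re
from collections import Counter

# A SUBSET of the function-word taxonomy above, for illustration only;
# the remaining categories would be added in the same way.
FUNCTION_WORDS = {
    "auxiliary verbs": {"am", "are", "be", "been", "has", "had", "have", "is", "was", "were"},
    "articles": {"a", "an", "the"},
    "conjunctions": {"and", "as", "but", "or", "so"},
    "prepositions": {"at", "from", "in", "of", "on", "to"},
    "negatives": {"didn", "doesn", "don", "no", "not"},
    # Single letters other than "a" and "i", plus clitic fragments.
    "meaningless words": set("bcdefghjklmnopqrstuvwxyz") | {"ve", "ll", "th"},
}
STOPLIST = set().union(*FUNCTION_WORDS.values())


def refine(text: str) -> Counter:
    """Tokenize into lowercase letter runs and drop every stoplist token.

    Multi-word entries such as "a lot of" would need phrase matching;
    this token-level filter ignores them.
    """
    tokens = re.findall(r"[a-z]+", text.lower())
    return Counter(tok for tok in tokens if tok not in STOPLIST)


sample = ("The virus has spread to 13 people in the U.S., "
          "and officials don't know the source.")
refined = refine(sample)
print(sorted(refined))
```

Content words such as know survive the filter, which matches their presence in the refined frequency list that follows.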

| Rank | Freq. | Tokens | Rank | Freq. | Tokens | Rank | Freq. | Tokens |

|---|---|---|---|---|---|---|---|---|

| 1 | 2425 | coronavirus | 35 | 525 | united | 69 | 339 | three |

| 2 | 2354 | said | 36 | 523 | spread | 70 | 338 | number |

| 3 | 2225 | CHINA | 37 | 515 | public | 71 | 332 | make |

| 4 | 1936 | people | 38 | 502 | CDC | 72 | 329 | days |

| 5 | 1585 | virus | 39 | 486 | case | 73 | 329 | symptoms |

| 6 | 1319 | president | 40 | 484 | world | 74 | 328 | media |

| 7 | 1292 | health | 41 | 466 | say | 75 | 328 | south |

| 8 | 1198 | TRUMP | 42 | 453 | told | 76 | 327 | including |

| 9 | 1184 | new | 43 | 445 | CARLSON | 77 | 327 | quarantine |

| 10 | 1182 | cases | 44 | 436 | states | 78 | 322 | Wednesday |

| 11 | 1114 | one | 45 | 424 | American | 79 | 320 | February |

| 12 | 1076 | outbreak | 46 | 422 | government | 80 | 319 | report |

| 13 | 969 | news | 47 | 416 | video | 81 | 319 | take |

| 14 | 967 | think | 48 | 415 | flights | 82 | 318 | hospital |

| 15 | 845 | like | 49 | 415 | week | 83 | 310 | countries |

| 16 | 840 | know | 50 | 412 | house | 84 | 305 | BIDEN |

| 17 | 796 | going | 51 | 395 | go | 85 | 297 | press |

| 18 | 724 | officials | 52 | 393 | year | 86 | 296 | national |

| 19 | 674 | right | 53 | 390 | passengers | 87 | 296 | patients |

| 20 | 669 | WUHAN | 54 | 379 | city | 88 | 294 | Americans |

| 21 | 666 | reported | 55 | 378 | yes | 89 | 293 | international |

| 22 | 664 | country | 56 | 377 | day | 90 | 293 | saying |

| 23 | 664 | first | 57 | 376 | ship | 91 | 290 | GUTFELD |

| 24 | 656 | FOX | 58 | 372 | clip | 92 | 287 | years |

| 25 | 620 | two | 59 | 372 | medical | 93 | 286 | home |

| 26 | 613 | CHINESE | 60 | 370 | good | 94 | 284 | made |

| 27 | 609 | time | 61 | 364 | see | 95 | 283 | need |

| 28 | 584 | here | 62 | 358 | control | 96 | 280 | deaths |

| 29 | 577 | confirmed | 63 | 356 | department | 97 | 280 | tonight |

| 30 | 573 | travel | 64 | 351 | democrats | 98 | 277 | CAVUTO |

| 31 | 566 | infected | 65 | 351 | way | 99 | 277 | person |

| 32 | 561 | according | 66 | 349 | end | 100 | 277 | today |

| 33 | 543 | disease | 67 | 349 | want | |||

| 34 | 543 | state | 68 | 345 | impeachment | | | |

| Rank | Freq. | Keyness | Keywords | Rank | Freq. | Keyness | Keywords |

|---|---|---|---|---|---|---|---|

| 1 | 2425 | 17,780.87 | coronavirus | 51 | 185 | 1264.35 | sickened |

| 2 | 2225 | 12,348.46 | CHINA | 52 | 203 | 1253.67 | masks |

| 3 | 1585 | 10,753.89 | virus | 53 | 218 | 1241.01 | flu |

| 4 | 1076 | 7620.99 | outbreak | 54 | 351 | 1213.73 | democrats |

| 5 | 1198 | 5856.95 | TRUMP | 55 | 191 | 1195.39 | BOLTON |

| 6 | 1182 | 5553.66 | cases | 56 | 525 | 1159.65 | united |

| 7 | 669 | 4947.36 | WUHAN | 57 | 156 | 1159.64 | HUBEI |

| 8 | 1292 | 4617.01 | health | 58 | 416 | 1150.17 | video |

| 9 | 2354 | 3687.51 | said | 59 | 245 | 1146.14 | illness |

| 10 | 1319 | 3686.49 | president | 60 | 259 | 1122.74 | tested |

| 11 | 566 | 3595.57 | infected | 61 | 372 | 1111.22 | medical |

| 12 | 502 | 3491.79 | CDC | 62 | 206 | 1104.42 | amid |

| 13 | 656 | 3259.14 | FOX | 63 | 185 | 1081.51 | respiratory |

| 14 | 577 | 3240.89 | confirmed | 64 | 220 | 1072.71 | airlines |

| 15 | 969 | 3072.41 | news | 65 | 221 | 1063.01 | prevention |

| 16 | 724 | 2913.26 | officials | 66 | 268 | 1027.51 | Thursday |

| 17 | 445 | 2783.92 | CARLSON | 67 | 189 | 1018.97 | province |

| 18 | 1936 | 2672.81 | people | 68 | 208 | 997.57 | KOREA |

| 19 | 573 | 2588.52 | travel | 69 | 318 | 964.88 | hospital |

| 20 | 666 | 2556.98 | reported | 70 | 515 | 952.75 | public |

| 21 | 613 | 2543.76 | CHINESE | 71 | 181 | 952 | princess |

| 22 | 415 | 2468.07 | flights | 72 | 135 | 948.08 | epicenter |

| 23 | 523 | 2413.47 | spread | 73 | 261 | 938.27 | JAPAN |

| 24 | 327 | 2348.55 | quarantine | 74 | 356 | 937.64 | department |

| 25 | 543 | 2312.9 | disease | 75 | 193 | 929.77 | witnesses |

| 26 | 345 | 2209.53 | impeachment | 76 | 180 | 914.48 | reportedly |

| 27 | 390 | 2098.88 | passengers | 77 | 211 | 909.23 | centers |

| 28 | 290 | 1874.42 | GUTFELD | 78 | 269 | 902.27 | Tuesday |

| 29 | 244 | 1813.89 | COVID | 79 | 245 | 880.33 | novel |

| 30 | 250 | 1780.79 | SARS | 80 | 242 | 878.53 | announced |

| 31 | 372 | 1666.13 | clip | 81 | 228 | 875.85 | airport |

| 32 | 305 | 1650.23 | BIDEN | 82 | 254 | 870.34 | Monday |

| 33 | 329 | 1639.26 | symptoms | 83 | 144 | 865.17 | PENCE |

| 34 | 561 | 1605.28 | according | 84 | 486 | 856.75 | case |

| 35 | 256 | 1586 | HONG | 85 | 123 | 843.83 | pandemic |

| 36 | 277 | 1573.95 | CAVUTO | 86 | 296 | 838.98 | patients |

| 37 | 376 | 1557.85 | ship | 87 | 218 | 823.44 | authorities |

| 38 | 238 | 1546.05 | mainland | 88 | 142 | 817.56 | epidemic |

| 39 | 252 | 1533.66 | KONG | 89 | 310 | 811.58 | countries |

| 40 | 270 | 1496.12 | cruise | 90 | 114 | 794.27 | SCHIFF |

| 41 | 280 | 1408.04 | deaths | 91 | 165 | 785.33 | FEB |

| 42 | 228 | 1397 | BERNIE | 92 | 328 | 771.61 | south |

| 43 | 322 | 1396.68 | Wednesday | 93 | 328 | 757.84 | media |

| 44 | 258 | 1396.56 | BLOOMBERG | 94 | 157 | 754.61 | BEIJING |

| 45 | 229 | 1369.13 | BAIER | 95 | 101 | 750.77 | PERINO |

| 46 | 664 | 1357.01 | country | 96 | 274 | 750.18 | response |

| 47 | 320 | 1325.76 | February | 97 | 136 | 748.25 | vaccine |

| 48 | 182 | 1314.51 | quarantined | 98 | 118 | 745.83 | INGRAHAM |

| 49 | 1184 | 1313.17 | new | 99 | 149 | 736.02 | spreading |

| 50 | 233 | 1287.58 | SANDERS | 100 | 280 | 723.38 | tonight |

| Methods | Function Words Elimination | Machine Eliminates Function Words |

|---|---|---|

| Li’s approach [29] | No | No |

| Todd’s approach [30] | Yes | No |

| Ross & Rivers’ approach [31] | Yes | No |

| The proposed method | Yes | Yes |

| Corpus | Year | Month | Number of Reports | Data Type | Word Types | Tokens | TTR |
|---|---|---|---|---|---|---|---|
| COVID-19 news reports | 2020 | January & February | 615 | Original data | 16,536 | 457,891 | 3.6% |
| | | | | Refined data | 16,325 | 234,612 | 7% |
| | | | | Data discrepancy | −211 (−1.2%) | −223,279 (−48.8%) | From 3.6% to 7% |

| Corpus | Year | Month | Number of Reports | Data Type | Keyword Types | Tokens |
|---|---|---|---|---|---|---|
| COVID-19 news reports | 2020 | January & February | 615 | Original data | 1346 | 226,271 |
| | | | | Refined data | 2149 | 156,841 |
| | | | | Data discrepancy | +803 (+59.7%) | −69,430 (−30.7%) |
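The discrepancy rows in the two tables above express each change both as an absolute count and as a percentage of the original value. Recomputing them from the raw counts is a quick consistency check; the sketch below does this with a hypothetical `discrepancy` helper that rounds to one decimal place.

```python
from typing import Tuple


def discrepancy(original: int, refined: int) -> Tuple[int, float]:
    """Absolute change and percentage change (one decimal place) after refinement."""
    diff = refined - original
    return diff, round(100 * diff / original, 1)


# (original, refined) pairs copied from the two tables above.
counts = {
    "word types": (16_536, 16_325),
    "tokens": (457_891, 234_612),
    "keyword types": (1346, 2149),
    "keyword tokens": (226_271, 156_841),
}
for label, (before, after) in counts.items():
    diff, pct = discrepancy(before, after)
    print(f"{label}: {diff:+,} ({pct:+.1f}%)")
```

Because the percentage is taken relative to the original count, a gain in keyword types and a loss in tokens are directly comparable in size.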

| Example Group | Cluster | Freq. | Cluster Freq./Total Freq. of coronavirus (2425) |

|---|---|---|---|

| Example 1 | coronavirus outbreak | 279 | 11.5% |

| Example 2 | coronavirus in | 109 | 4.5% |

| Example 3 | coronavirus cases/coronavirus case | 117 | 4.8% |

| Example 4 | coronavirus is | 51 | 2.1% |

| Example 5 | coronavirus death | 27 | 1.1% |

| Example 6 | coronavirus patients/coronavirus patient | 32 | 1.3% |
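The clusters in the table above are two-word sequences that begin with coronavirus, and the last column divides each cluster's frequency by the 2425 occurrences of coronavirus in the frequency lists. The sketch below covers both steps, assuming lowercase regex tokenization; the function names are hypothetical, the sample text is built from fragments of the examples above, and variant forms such as cases/case would be summed before the share is computed.

```python
import re
from collections import Counter


def right_clusters(text: str, node: str) -> Counter:
    """Count two-word clusters whose first word is `node` (case-insensitive)."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    node = node.lower()
    return Counter(
        f"{tokens[i]} {tokens[i + 1]}"
        for i in range(len(tokens) - 1)
        if tokens[i] == node
    )


def cluster_share(cluster_freq: int, node_freq: int) -> float:
    """Cluster frequency as a percentage of the node word's frequency."""
    return round(100 * cluster_freq / node_freq, 1)


sample = ("Coronavirus outbreak spurs Paris to cancel Lunar New Year parade. "
          "China sees coronavirus death toll rise by 105. "
          "The coronavirus outbreak has killed 1,770 people.")
print(right_clusters(sample, "coronavirus"))

# Cluster frequencies from the table above; coronavirus occurs 2425 times.
for cluster, freq in [("coronavirus outbreak", 279), ("coronavirus in", 109),
                      ("coronavirus cases/case", 117), ("coronavirus is", 51),
                      ("coronavirus death", 27), ("coronavirus patients/patient", 32)]:
    print(f"{cluster}: {cluster_share(freq, 2425)}%")
```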

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Chen, L.-C.; Chang, K.-H.; Chung, H.-Y. A Novel Statistic-Based Corpus Machine Processing Approach to Refine a Big Textual Data: An ESP Case of COVID-19 News Reports. Appl. Sci. 2020, 10, 5505. https://doi.org/10.3390/app10165505
