Deep Learning at the Mobile Edge: Opportunities for 5G Networks

Abstract

1. Introduction

Contributions

2. Mobile Edge Computing

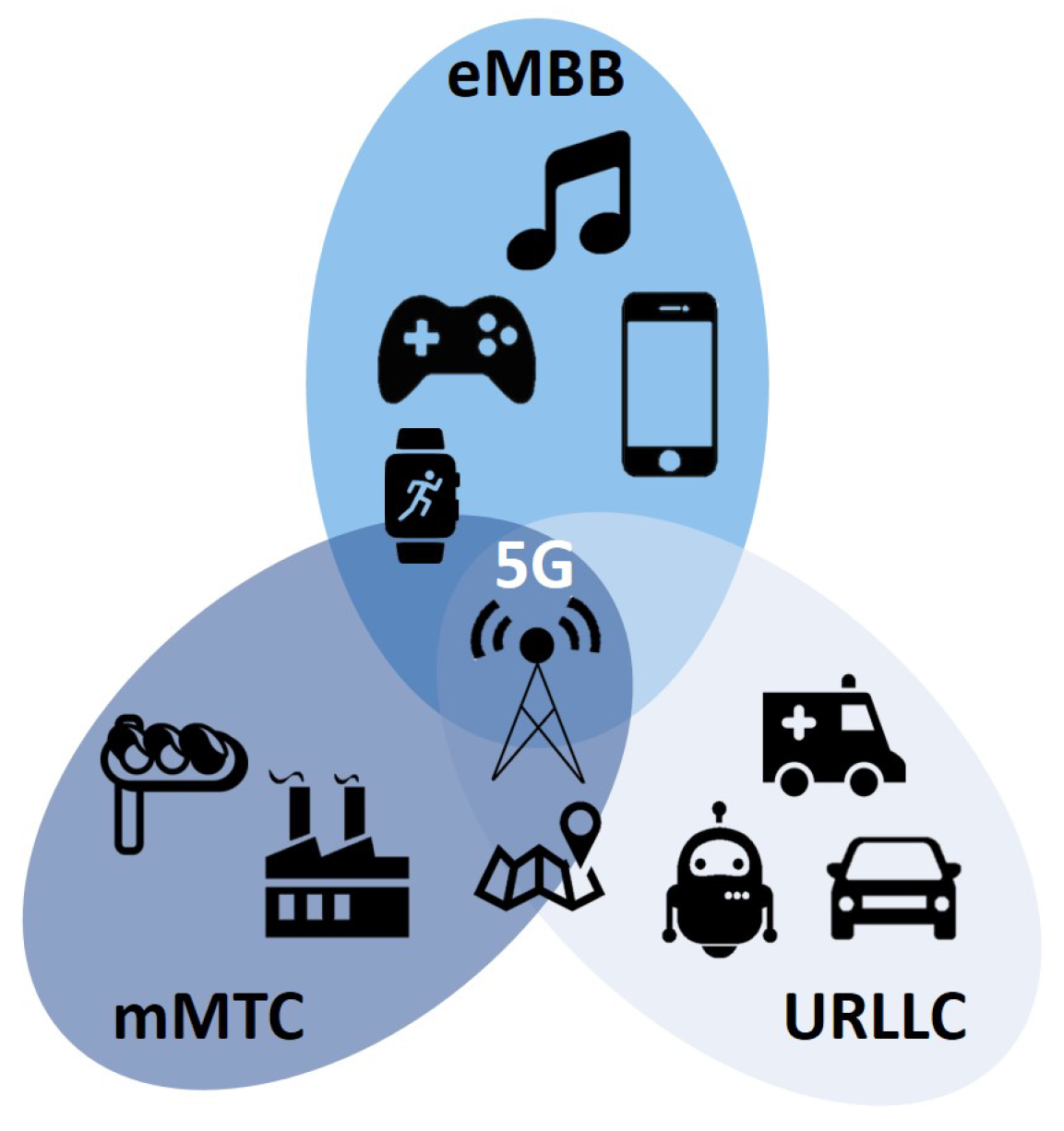

2.1. 5G Network Purpose and Design

- Enhanced mobile broadband (eMBB) will support general consumer applications, such as video streaming, browsing, and cloud-based gaming.

- Ultra-reliable low-latency communications (URLLC) will support latency-sensitive applications, such as AR/VR, autonomous vehicles and drones, smart city infrastructure, Industry 4.0, and tele-robotics.

- Massive machine-type communications (mMTC) will support scalable peer-to-peer networks for IoT applications that do not require high bandwidth.

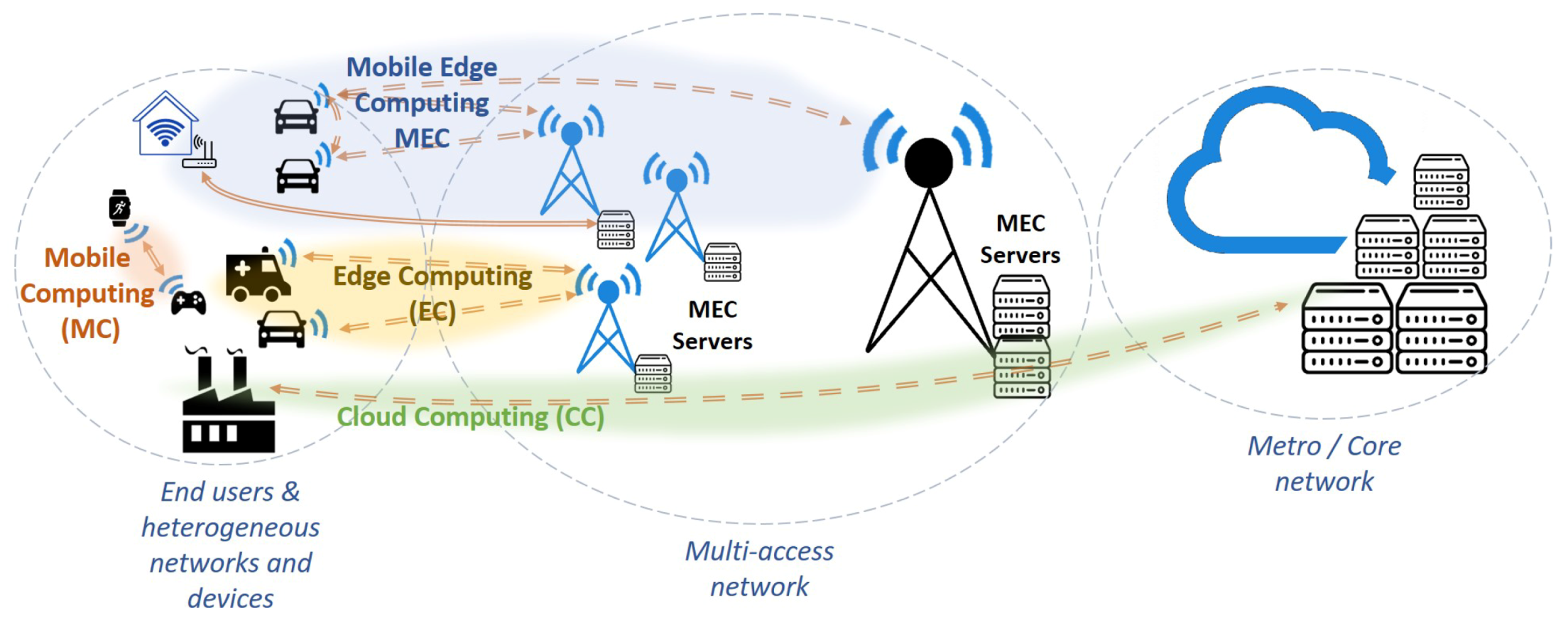

2.2. Mobile Edge Computing for 5G

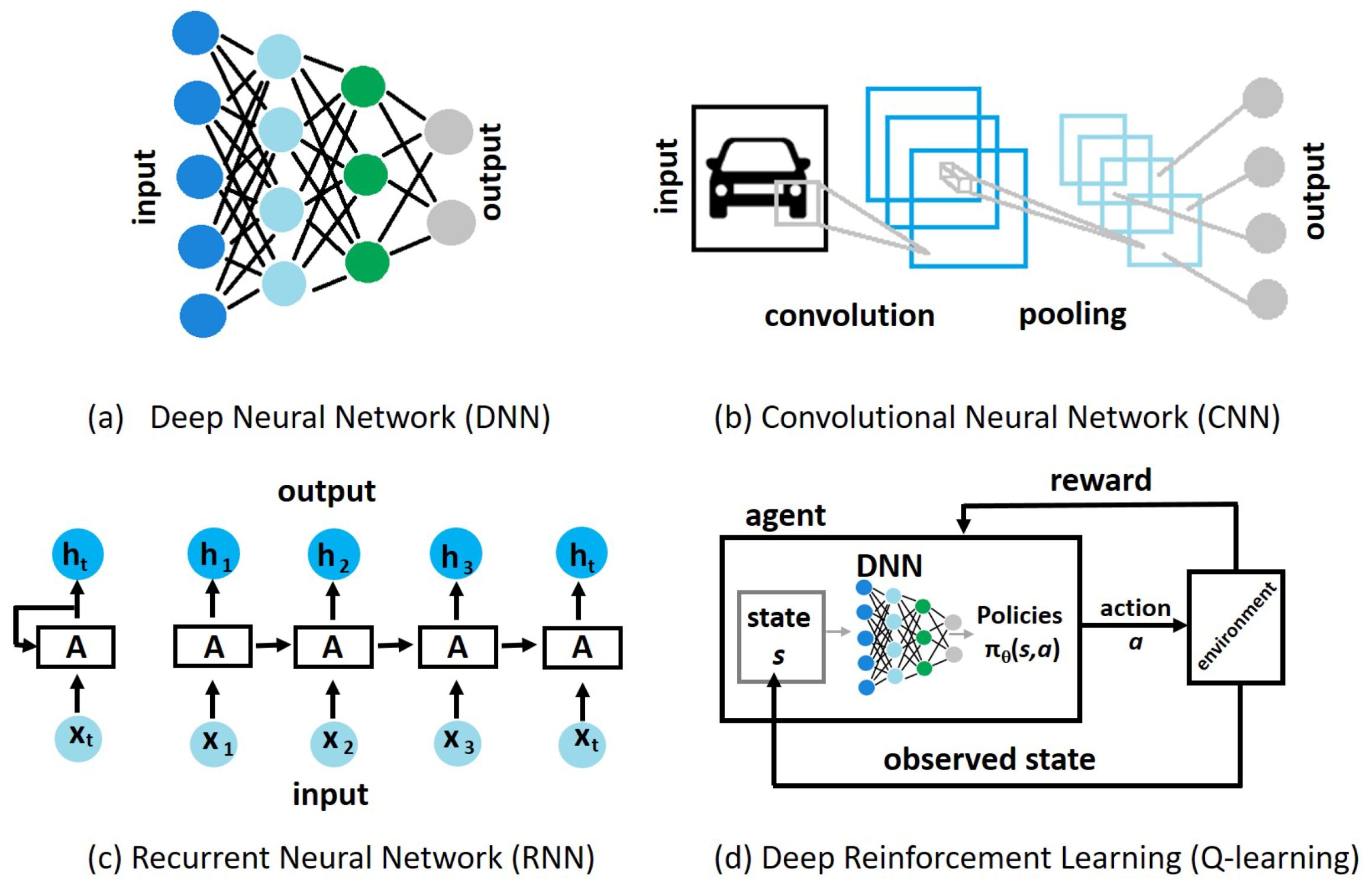

3. Deep Learning Techniques

3.1. Deep Neural Networks

3.2. Reinforcement Learning

3.3. Enabling ML in the Mobile Edge

- Efficient ML models, specialized to require less energy, memory, or training time, and

- Distributed ML models that distribute the training and inference tasks between large data centers and smaller edge devices for parallel processing and efficiency.

3.3.1. Efficient ML Models
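
One common way to obtain the first category listed above, models that need less memory and energy, is post-training quantization: storing weights as 8-bit integers plus a shared scale factor instead of 32-bit floats cuts weight storage by roughly 4×. The block below is a minimal, framework-free sketch assuming symmetric per-tensor int8 quantization; the function names are hypothetical, it is not the method of any specific work surveyed here, and production toolchains often also quantize activations and calibrate scales on sample data.

```python
"""Minimal symmetric per-tensor int8 post-training quantization sketch.

Hypothetical helper names; not the method of any specific surveyed paper.
"""
from typing import List, Sequence, Tuple


def quantize_int8(weights: Sequence[float]) -> Tuple[List[int], float]:
    """Map float weights to signed 8-bit integers that share one scale factor."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Choose the scale so the largest-magnitude weight maps to +/-127.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer and clip to the symmetric int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: Sequence[int], scale: float) -> List[float]:
    """Recover approximate float weights, e.g. just before an inference step."""
    return [v * scale for v in q]


if __name__ == "__main__":
    w = [0.82, -1.27, 0.05, 0.6, -0.33]
    q, s = quantize_int8(w)
    w_hat = dequantize_int8(q, s)
    # int8 storage: 1 byte per weight instead of 4 bytes for float32.
    print("int8 values:", q, "scale:", s)
    print("max abs error:", max(abs(a - b) for a, b in zip(w, w_hat)))
```

Because each value is rounded to the nearest integer step, the reconstruction error per weight is at most half the scale factor, so the accuracy cost grows with the spread of the weight values.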

3.3.2. Distributed ML Models
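
For the second category listed above, distributed models, one widely used pattern is federated averaging (FedAvg): edge devices train on their own local data, and a central server, such as a data center, combines only the resulting model parameters, weighted by each client's sample count. The block below is a minimal sketch under stated assumptions: a two-parameter linear model `y = w0 + w1*x`, full-batch gradient descent as the local optimizer, synchronous rounds, and hypothetical helper names (`local_update`, `fed_avg`, `run_rounds`). It illustrates the general technique, not the specific scheme of any work surveyed here.

```python
"""Minimal federated averaging (FedAvg) sketch for a toy linear model.

Assumptions (hypothetical, for illustration only): model y = w0 + w1 * x,
full-batch gradient descent as the local optimizer, synchronous rounds.
"""
from typing import List, Sequence, Tuple

Weights = List[float]
Dataset = Sequence[Tuple[float, float]]  # (x, y) pairs held by one client


def local_update(w: Weights, data: Dataset, lr: float = 0.1, epochs: int = 5) -> Weights:
    """Run a few epochs of gradient descent on a client's private data (MSE loss)."""
    w0, w1 = w
    n = len(data)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w0 and w1.
        g0 = 2.0 / n * sum(w0 + w1 * x - y for x, y in data)
        g1 = 2.0 / n * sum((w0 + w1 * x - y) * x for x, y in data)
        w0, w1 = w0 - lr * g0, w1 - lr * g1
    return [w0, w1]


def fed_avg(client_weights: Sequence[Weights], client_sizes: Sequence[int]) -> Weights:
    """Server-side aggregation: average parameters weighted by local dataset size."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


def run_rounds(global_w: Weights, clients: Sequence[Dataset], rounds: int) -> Weights:
    """Each round: broadcast global weights, train locally, aggregate on the server."""
    for _ in range(rounds):
        updates = [local_update(list(global_w), data) for data in clients]
        global_w = fed_avg(updates, [len(data) for data in clients])
    return global_w


if __name__ == "__main__":
    # Two edge clients whose raw data never leaves the device; only weights are shared.
    clients = [
        [(x, 1 + 2 * x) for x in (0.0, 0.25, 0.5)],
        [(x, 1 + 2 * x) for x in (0.0, 0.5, 1.0, 0.75)],
    ]
    print(run_rounds([0.0, 0.0], clients, rounds=200))
```

Because both toy clients draw data from the same line `y = 1 + 2x`, repeated rounds move the global parameters toward `[1.0, 2.0]`. When client data differ, several local epochs per round can pull the average away from the centrally trained optimum, which is one of the practical difficulties of federated learning at the edge.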

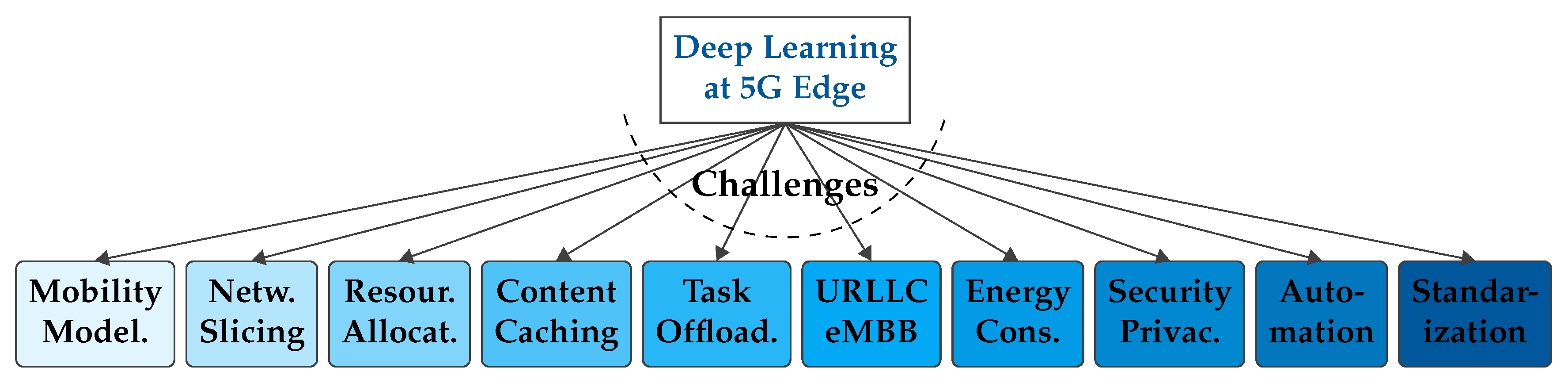

4. Challenges of DL for 5G Operations at the Mobile Edge

4.1. Mobility Modeling

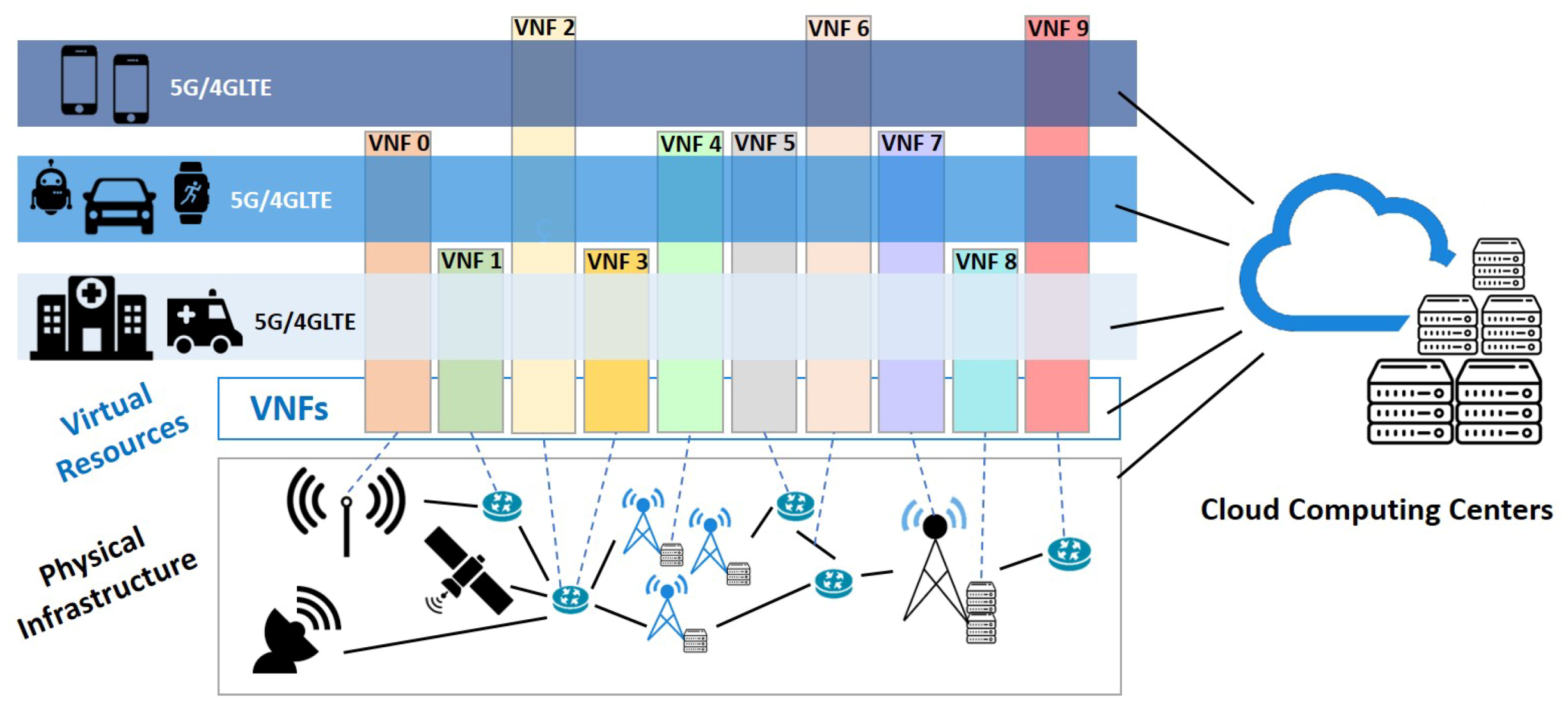

4.2. Slicing

4.3. Resource Allocation

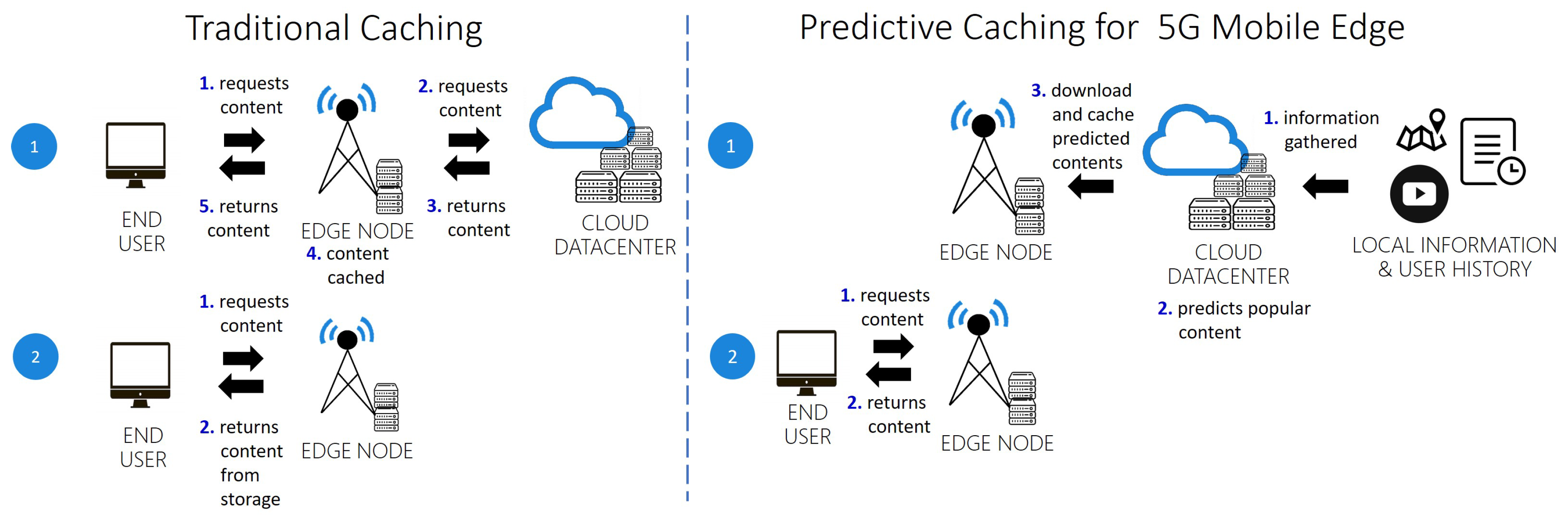

4.4. Caching

4.5. Task Offloading

4.6. URLLC and eMBB through Quality of Service Constraints

4.7. Energy Consumption

4.8. Security and Privacy

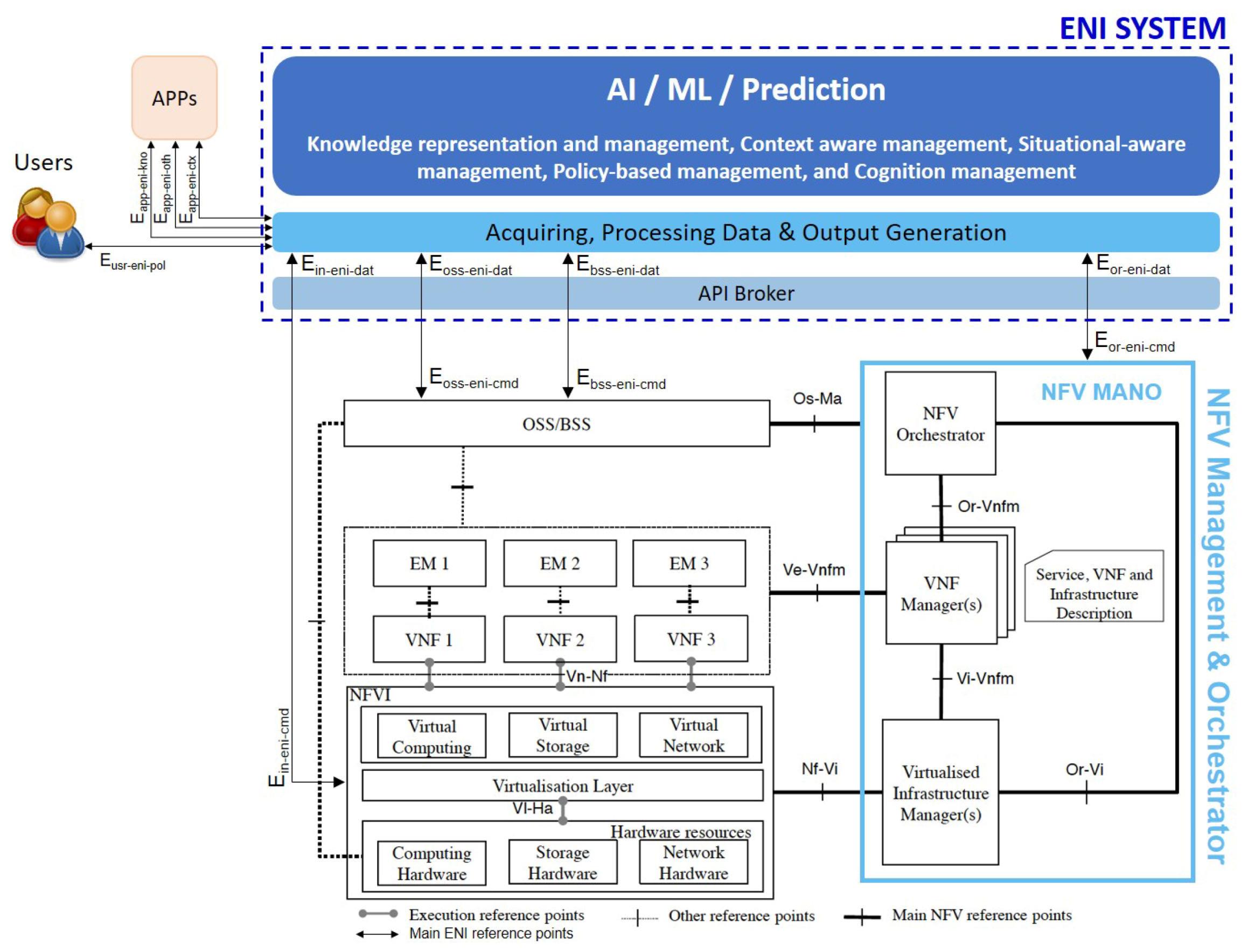

5. Standards towards 5G Automation

5.1. ETSI

- NFV Orchestrator (NFVO), which controls network service onboarding, lifecycle management, and resource management, including capacity planning, migration, and fault management,

- VNF Manager (VNFM), which configures and coordinates VNF instances, and

- Virtualized Infrastructure Manager (VIM), which controls and manages the physical and virtual infrastructure, i.e., computing, storage, and network resources.
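
The division of labor among these three MANO blocks can be illustrated with a toy model in which the orchestrator admits a network service, the VNF manager instantiates its VNFs, and the infrastructure manager tracks the underlying resources. This is a minimal sketch, not an ETSI-conformant implementation: the class names `Nfvo`, `Vnfm`, and `Vim`, the vCPU-only resource model, and the all-or-nothing admission check used as a stand-in for capacity planning are hypothetical simplifications. Real MANO stacks connect these blocks through standardized reference points rather than direct method calls.

```python
"""Toy model of the NFVO -> VNFM -> VIM delegation chain (hypothetical names)."""
from dataclasses import dataclass, field
from typing import Dict, List


class CapacityError(Exception):
    """Raised when the infrastructure cannot satisfy a resource request."""


@dataclass
class Vim:
    """Infrastructure manager: tracks a pool of virtual CPUs (simplified resource model)."""
    total_vcpus: int
    allocated: Dict[str, int] = field(default_factory=dict)

    def free_vcpus(self) -> int:
        return self.total_vcpus - sum(self.allocated.values())

    def allocate(self, instance_id: str, vcpus: int) -> None:
        if vcpus > self.free_vcpus():
            raise CapacityError(f"not enough vCPUs for {instance_id}")
        self.allocated[instance_id] = vcpus

    def release(self, instance_id: str) -> None:
        self.allocated.pop(instance_id, None)


@dataclass
class Vnfm:
    """VNF manager: instantiates and terminates VNF instances using VIM resources."""
    vim: Vim
    instances: Dict[str, str] = field(default_factory=dict)  # instance id -> state

    def instantiate(self, instance_id: str, vcpus: int) -> None:
        self.vim.allocate(instance_id, vcpus)
        self.instances[instance_id] = "RUNNING"

    def terminate(self, instance_id: str) -> None:
        self.vim.release(instance_id)
        self.instances.pop(instance_id, None)


@dataclass
class Nfvo:
    """Orchestrator: onboards network services composed of several VNFs."""
    vnfm: Vnfm
    services: Dict[str, List[str]] = field(default_factory=dict)

    def onboard(self, service: str, vnfs: Dict[str, int]) -> bool:
        # Simplified capacity planning: admit the service only if every VNF fits.
        if sum(vnfs.values()) > self.vnfm.vim.free_vcpus():
            return False
        ids = [f"{service}/{name}" for name in vnfs]
        for instance_id, vcpus in zip(ids, vnfs.values()):
            self.vnfm.instantiate(instance_id, vcpus)
        self.services[service] = ids
        return True

    def teardown(self, service: str) -> None:
        # Lifecycle management: terminate all VNFs of the service and free resources.
        for instance_id in self.services.pop(service, []):
            self.vnfm.terminate(instance_id)


if __name__ == "__main__":
    vim = Vim(total_vcpus=8)
    nfvo = Nfvo(Vnfm(vim))
    print(nfvo.onboard("video", {"cdn": 4, "firewall": 2}), vim.free_vcpus())
    print(nfvo.onboard("ar", {"render": 4}))
    nfvo.teardown("video")
    print(vim.free_vcpus())
```

With an 8-vCPU pool, onboarding a two-VNF service that needs 4 + 2 vCPUs succeeds and leaves 2 vCPUs free, so a subsequent 4-vCPU service is rejected until the first one is torn down.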

5.2. ITU

5.3. IETF

5.4. Automation

6. Implementations and Use Cases

6.1. 5G Implementations

6.2. New Mobile Edge Applications

6.2.1. Internet-of-(Every)Thing

6.2.2. Connected Vehicles

6.2.3. Smart Cities

6.2.4. Robotics and Industry

7. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| 5G | “Fifth-Generation” Mobile Networks |
| MEC | Mobile Edge Computing / Multi-Access Edge Computing |
| SDN | Software-Defined Networks |
| NFV | Network Function Virtualization |
| European 5GPPP | European 5G Public Private Partnership |
| ML | Machine Learning |
| DL | Deep Learning |
| OPEX | Operating Expenditures |
| CAPEX | Capital Expenditures |
| ISP | Internet Service Providers |
| eMBB | Enhanced Mobile Broadband |
| URLLC | Ultra-reliable Low-latency Communications |
| mMTC | Massive Machine-type Communications |
| vCPE | Virtual Customer Premises Equipment |
| VNF | Virtualized Network Function |
| AR/VR | Augmented Reality/Virtual Reality |
| QoE | Quality of Experience |
| MC | Mobile Computing |
| CC | Cloud Computing |
| EC | Edge Computing |
| LTE | Long-Term Evolution |
| RNC | Radio Network Controllers |
| RAT | Radio Access Technology |
| DNN | Deep Neural Network |
| CNN | Convolutional Neural Network |
| RNN | Recurrent Neural Network |
| LSTM | Long Short-Term Memory |
| RL | Reinforcement Learning |
| DQN | Deep Q-Network |
| KPI | Key Performance Indicators |
| ETSI | European Telecommunications Standards Institute |
| ESO | European Standards Organization |
| 3GPP | Third Generation Partnership Project |
| ITU | International Telecommunication Union |
| IETF | Internet Engineering Task Force |
| ISG | Industry Specification Groups |
| ENI | Experiential Networked Intelligence |
| MANO | Management and Orchestration |
| VIM | Virtualized Infrastructure Manager |
| ZSM | Zero-touch Network and Service Management |
| SON | Self-Organizing Network |
| NTA | Network Telemetry and Analytics |
| NAI | Network Artificial Intelligence |
| SFC | Service Function Chaining |
| AI | Artificial Intelligence |
| IoT | Internet of Things |
| UAV | Unmanned Aerial Vehicle |
| SLA | Service-Level Agreement |
| E2E | End-to-end |
| QoS | Quality of Service |
| VoIP | Voice over IP |

References

- Wood, L. 5G Optimization: Mobile Edge Computing, APIs, and Network Slicing 2019–2024; Technical Report for Research and Markets: Dublin, Ireland, 22 October 2019. [Google Scholar]

- Hu, Y.C.; Patel, M.; Sabella, D.; Sprecher, N.; Young, V. ETSI White Paper No. 11. Mobile Edge Computing: A Key Technology towards 5G. Technical Report, ETSI. 2015. Available online: https://www.etsi.org/images/files/ETSIWhitePapers/etsi_wp11_mec_a_key_technology_towards_5g.pdf (accessed on 8 July 2020).

- Porambage, P.; Okwuibe, J.; Liyanage, M.; Ylianttila, M.; Taleb, T. Survey on Multi-Access Edge Computing for Internet of Things Realization. IEEE Commun. Surv. Tutor. 2018, 20, 2961–2991. [Google Scholar] [CrossRef]

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556. Available online: https://arxiv.org/abs/1409.1556 (accessed on 8 July 2020).

- Collobert, R.; Weston, J. A Unified Architecture for Natural Language Processing: Deep Neural Networks with Multitask Learning. In Proceedings of the 25th International Conference on Machine Learning (ICML 2008), Helsinki, Finland, 5–9 July 2008; pp. 160–167. [Google Scholar]

- Park, J.; Samarakoon, S.; Bennis, M.; Debbah, M. Wireless Network Intelligence at the Edge. Proc. IEEE 2019, 107, 2204–2239. [Google Scholar] [CrossRef]

- Yousefpour, A.; Fung, C.; Nguyen, T.; Kadiyala, K.; Jalali, F.; Niakanlahiji, A.; Kong, J.; Jue, J.P. All one needs to know about fog computing and related edge computing paradigms: A complete survey. J. Syst. Archit. 2019, 98, 289–330. [Google Scholar] [CrossRef]

- Pham, Q.; Fang, F.; Ha, V.N.; Piran, M.J.; Le, M.; Le, L.B.; Hwang, W.; Ding, Z. A Survey of Multi-Access Edge Computing in 5G and Beyond: Fundamentals, Technology Integration, and State-of-the-Art. IEEE Access 2020. [Google Scholar] [CrossRef]

- Miller, D.J.; Xiang, Z.; Kesidis, G. Adversarial Learning Targeting Deep Neural Network Classification: A Comprehensive Review of Defenses Against Attacks. Proc. IEEE 2020, 108, 402–433. [Google Scholar] [CrossRef]

- Tang, F.; Kawamoto, Y.; Kato, N.; Liu, J. Future Intelligent and Secure Vehicular Network Toward 6G: Machine-Learning Approaches. Proc. IEEE 2020, 108, 292–307. [Google Scholar] [CrossRef]

- Chen, X.; Proietti, R.; Yoo, S.J.B. Building Autonomic Elastic Optical Networks with Deep Reinforcement Learning. IEEE Commun. Mag. 2019, 57, 20–26. [Google Scholar] [CrossRef]

- Yu, Y.; Wang, T.; Liew, S.C. Deep-Reinforcement Learning Multiple Access for Heterogeneous Wireless Networks. IEEE J. Sel. Areas Commun. 2019, 37, 1277–1290. [Google Scholar] [CrossRef]

- ETSI GS MEC 002 (V2.1.1): Multi-Access Edge Computing (MEC); Phase 2: Use Cases and Requirements. 2018; Available online: https://www.etsi.org/deliver/etsi_gs/MEC/001_099/002/02.01.01_60/gs_MEC002v020101p.pdf (accessed on 8 July 2020).

- Increasing Mobile Operators’ Value Proposition with Edge Computing. Technical Report, Intel and Nokia Siemens Networks. 2013. Available online: https://www.intel.co.id/content/dam/www/public/us/en/documents/technology-briefs/edge-computing-tech-brief.pdf (accessed on 8 July 2020).

- Varghese, B.; Wang, N.; Barbhuiya, S.; Kilpatrick, P.; Nikolopoulos, D. Challenges and Opportunities in Edge Computing. In Proceedings of the 1st IEEE International Conference on Smart Cloud (SmartCloud 2016), New York, NY, USA, 18–20 November 2016; pp. 20–26. [Google Scholar]

- ETSI GS MEC 003 (V2.1.1): Multi-Access Edge Computing (MEC); Framework and Reference Architecture. 2019. Available online: https://www.etsi.org/deliver/etsi_gs/MEC/001_099/003/02.01.01_60/gs_MEC003v020101p.pdf (accessed on 8 July 2020).

- Enable Edge Computing with Azure IoT Edge. Available online: https://azure.microsoft.com/en-us/resources/videos/microsoft-ignite-2017-enable-edge-computing-with-azure-iot-edge/ (accessed on 8 July 2020).

- Edge TPU. Available online: https://cloud.google.com/edge-tpu/ (accessed on 30 May 2020).

- AWS IoT Greengrass. Available online: https://aws.amazon.com/greengrass/ (accessed on 30 May 2020).

- Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet Classification with Deep Convolutional Neural Networks. In Proceedings of the 25th International Conference on Neural Information Processing Systems (NIPS 2012), Lake Tahoe, NV, USA, 3–6 December 2012; Volume 1, pp. 1097–1105. [Google Scholar]

- Donahue, J.; Jia, Y.; Vinyals, O.; Hoffman, J.; Zhang, N.; Tzeng, E.; Darrell, T. DeCAF: A Deep Convolutional Activation Feature for Generic Visual Recognition. In Proceedings of the 31st International Conference on International Conference on Machine Learning (ICML 2014), Beijing, China, 21–26 June 2014; Volume 32, pp. I-647–I-655. [Google Scholar]

- Silver, D.; Schrittwieser, J.; Simonyan, K.; Antonoglou, I.; Huang, A.; Guez, A.; Hubert, T.; Baker, L.; Lai, M.; Bolton, A.; et al. Mastering the game of Go without human knowledge. Nature 2017, 550, 354–359. [Google Scholar] [CrossRef]

- Mnih, V.; Kavukcuoglu, K.; Silver, D.; Graves, A.; Antonoglou, I.; Wierstra, D.; Riedmiller, M.A. Playing Atari with Deep Reinforcement Learning. arXiv 2013, arXiv:1312.5602. Available online: https://arxiv.org/abs/1312.5602 (accessed on 8 July 2020).

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016; Available online: http://www.deeplearningbook.org (accessed on 8 July 2020).

- Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Fei-Fei, L. ImageNet: A Large-Scale Hierarchical Image Database. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR 2009), Miami, FL, USA, 20–25 June 2009; pp. 248–255. [Google Scholar]

- Wang, J.; Tang, J.; Xu, Z.; Wang, Y.; Xue, G.; Zhang, X.; Yang, D. Spatiotemporal modeling and prediction in cellular networks: A big data enabled deep learning approach. In Proceedings of the IEEE International Conference on Computer Communications (INFOCOM 2017), Atlanta, GA, USA, 1–4 May 2017; pp. 1–9. [Google Scholar]

- Wang, X.; Zhou, Z.; Xiao, F.; Xing, K.; Yang, Z.; Liu, Y.; Peng, C. Spatio-Temporal Analysis and Prediction of Cellular Traffic in Metropolis. IEEE Trans. Mobile Comput. 2019, 18, 2190–2202. [Google Scholar] [CrossRef]

- Nakao, A.; Du, P. Toward In-Network Deep Machine Learning for Identifying Mobile Applications and Enabling Application Specific Network Slicing. IEICE Trans. Commun. 2018, E101-B, 1536–1543. [Google Scholar] [CrossRef]

- Utgoff, P.E.; Stracuzzi, D.J. Many-Layered Learning. Neural Comput. 2002, 14, 2497–2529. [Google Scholar] [CrossRef] [PubMed]

- Boutaba, R.; Salahuddin, M.A.; Limam, N.; Ayoubi, S.; Shahriar, N.; Estrada-Solano, F.; Caicedo, O.M. A Comprehensive Survey on Machine Learning for Networking: Evolution, Applications and Research Opportunities. J. Internet Serv. Appl. 2018, 9, 1–99. [Google Scholar] [CrossRef]

- Zeiler, M.D.; Fergus, R. Visualizing and Understanding Convolutional Networks. In Proceedings of the 13th European Conference on Computer Vision (ECCV 2014), Zurich, Switzerland, 6–12 September 2014; pp. 818–833. [Google Scholar]

- Bega, D.; Gramaglia, M.; Fiore, M.; Banchs, A.; Costa-Pérez, X. DeepCog: Cognitive Network Management in Sliced 5G Networks with Deep Learning. In Proceedings of the IEEE International Conference on Computer Communications (INFOCOM 2019), Paris, France, 29 April–2 May 2019; pp. 280–288. [Google Scholar]

- Shakev, N.G.; Ahmed, S.A.; Popov, V.L.; Topalov, A.V. Recognition and Following of Dynamic Targets by an Omnidirectional Mobile Robot using a Deep Convolutional Neural Network. In Proceedings of the 9th International Conference on Intelligent Systems (IS 2018), Madeira, Portugal, 25–27 September 2018; pp. 589–594. [Google Scholar]

- Azari, A.; Papapetrou, P.; Denic, S.Z.; Peters, G. User Traffic Prediction for Proactive Resource Management: Learning-Powered Approaches. arXiv 2019, arXiv:1906.00951. Available online: https://arxiv.org/abs/1906.00951 (accessed on 8 July 2020).

- Ozturk, M.; Gogate, M.; Onireti, O.; Adeel, A.; Hussain, A.; Imran, M. A novel deep learning driven low-cost mobility prediction approach for 5G cellular networks: The case of the Control/Data Separation Architecture (CDSA). Neurocomputing 2019, 358, 479–489. [Google Scholar] [CrossRef]

- Géron, A. Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow, 2nd ed.; O’Reilly Media, Inc.: Sebastopol, CA, USA, 2019. [Google Scholar]

- Bellman, R. A Markovian Decision Process. J. Math. Mech. 1957, 6, 679–684. [Google Scholar] [CrossRef]

- Agarwal, P.; Alam, M. A Lightweight Deep Learning Model for Human Activity Recognition on Edge Devices. arXiv 2019, arXiv:1909.12917. Available online: https://arxiv.org/abs/1909.12917 (accessed on 8 July 2020). [CrossRef]

- Yang, S.; Gong, Z.; Ye, K.; Wei, Y.; Huang, Z.; Huang, Z. EdgeCNN: Convolutional Neural Network Classification Model with small inputs for Edge Computing. arXiv 2019, arXiv:1909.13522. Available online: https://arxiv.org/abs/1909.13522 (accessed on 8 July 2020).

- Li, E.; Zeng, L.; Zhou, Z.; Chen, X. Edge AI: On-Demand Accelerating Deep Neural Network Inference via Edge Computing. arXiv 2019, arXiv:1910.05316. Available online: https://arxiv.org/abs/1910.05316 (accessed on 8 July 2020). [CrossRef]

- Teerapittayanon, S.; McDanel, B.; Kung, H.T. BranchyNet: Fast inference via early exiting from deep neural networks. In Proceedings of the 23rd International Conference on Pattern Recognition (ICPR 2016), Cancún, Mexico, 4–8 December 2016; pp. 2464–2469. [Google Scholar]

- Wong, A.; Lin, Z.Q.; Chwyl, B. AttoNets: Compact and Efficient Deep Neural Networks for the Edge via Human-Machine Collaborative Design. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops (CVPR 2019), Long Beach, CA, USA, 16–20 June 2019; pp. 1–10. [Google Scholar]

- Lin, Y.; Han, S.W.; Mao, H.; Wang, Y.; Dally, W.J. Deep Gradient Compression: Reducing the Communication Bandwidth for Distributed Training. arXiv 2017, arXiv:1712.01887. Available online: https://arxiv.org/abs/1712.01887 (accessed on 8 July 2020).

- Bonawitz, K.; Eichner, H.; Grieskamp, W.; Huba, D.; Ingerman, A.; Ivanov, V.; Kiddon, C.; Konečný, J.; Mazzocchi, S.; McMahan, H.B.; et al. Towards Federated Learning at Scale: System Design. arXiv 2019, arXiv:1902.01046. Available online: https://arxiv.org/abs/1902.01046 (accessed on 8 July 2020).

- Lim, W.Y.; Luong, N.C.; Hoang, D.T.; Jiao, Y.; Liang, Y.C.; Yang, Q.; Niyato, D.; Miao, C. Federated Learning in Mobile Edge Networks: A Comprehensive Survey. arXiv 2019, arXiv:1909.11875. Available online: https://arxiv.org/abs/1909.11875 (accessed on 8 July 2020).

- Teerapittayanon, S.; McDanel, B.; Kung, H.T. Distributed Deep Neural Networks Over the Cloud, the Edge and End Devices. In Proceedings of the 37th IEEE International Conference on Distributed Computing Systems (ICDCS 2017), Atlanta, GA, USA, 5–8 June 2017; pp. 328–339. [Google Scholar]

- Wang, S.; Tuor, T.; Salonidis, T.; Leung, K.K.; Makaya, C.; He, T.; Chan, K.S. Adaptive Federated Learning in Resource Constrained Edge Computing Systems. IEEE J. Sel. Areas Commun. 2019, 37, 1205–1221. [Google Scholar] [CrossRef]

- Liu, L.; Zhang, J.; Song, S.H.; Letaief, K.B. Client-Edge-Cloud Hierarchical Federated Learning. arXiv 2019, arXiv:1905.06641. Available online: https://arxiv.org/abs/1905.06641 (accessed on 8 July 2020).

- Tao, Z.; Li, Q. eSGD: Communication Efficient Distributed Deep Learning on the Edge. In Proceedings of the 1st Hot Topics in Edge Computing (HotEdge 2018), Boston, MA, USA, 10 July 2018; pp. 1–6. [Google Scholar]

- Wickramasuriya, D.S.; Perumalla, C.A.; Davaslioglu, K.; Gitlin, R.D. Base station prediction and proactive mobility management in virtual cells using recurrent neural networks. In Proceedings of the 18th IEEE Wireless and Microwave Technology Conference (WAMICON 2017), Cocoa Beach, FL, USA, 24–25 April 2017; pp. 1–6. [Google Scholar]

- Pham, Q.; Fang, F.; Ha, V.N.; Piran, M.J.; Le, M.; Le, L.B.; Hwang, W.; Ding, Z. Multiple contents offloading mechanism in AI-enabled opportunistic networks. IEEE Access 2020, 155, 93–103. [Google Scholar]

- An Introduction to Network Slicing. Technical Report, GSM Association. 2017. Available online: https://www.gsma.com/futurenetworks/wp-content/uploads/2017/11/GSMA-An-Introduction-to-Network-Slicing.pdf (accessed on 8 July 2020).

- Sun, G.; Gebrekidan, Z.T.; Boateng, G.O.; Ayepah-Mensah, D.; Jiang, W. Dynamic Reservation and Deep Reinforcement Learning Based Autonomous Resource Slicing for Virtualized Radio Access Networks. IEEE Access 2019, 7, 45758–45772. [Google Scholar] [CrossRef]

- Koo, J.; Mendiratta, V.B.; Rahman, M.R.; Elwalid, A. Deep Reinforcement Learning for Network Slicing with Heterogeneous Resource Requirements and Time Varying Traffic Dynamics. arXiv 2019, arXiv:1908.03242. Available online: https://arxiv.org/abs/1908.03242 (accessed on 30 May 2020).

- Sciancalepore, V.; Samdanis, K.; Costa, X.P.; Bega, D.; Gramaglia, M.; Banchs, A. Mobile traffic forecasting for maximizing 5G network slicing resource utilization. In Proceedings of the IEEE International Conference on Computer Communications (INFOCOM 2017), Atlanta, GA, USA, 1–4 May 2017; pp. 1–9. [Google Scholar] [CrossRef]

- Bega, D.; Gramaglia, M.; Banchs, A.; Sciancalepore, V.; Samdanis, K.; Costa, X.P. Optimising 5G infrastructure markets: The business of network slicing. In Proceedings of the IEEE International Conference on Computer Communications (INFOCOM 2017), Atlanta, GA, USA, 1–4 May 2017; pp. 1–9. [Google Scholar] [CrossRef]

- Li, R.; Zhao, Z.; Sun, Q.; Chih-Lin, I.; Yang, C.; Chen, X.; Zhao, M.; Zhang, H. Deep Reinforcement Learning for Resource Management in Network Slicing. IEEE Access 2018, 6, 74429–74441. [Google Scholar] [CrossRef]

- Khatibi, S.; Jano, A. Elastic Slice-Aware Radio Resource Management with AI-Traffic Prediction. In Proceedings of the 28th European Conference on Networks and Communications (EuCNC 2019), Valencia, Spain, 18–21 June 2019; pp. 575–579. [Google Scholar]

- Kim, Y.; Kim, S.; Lim, H. Reinforcement Learning Based Resource Management for Network Slicing. Appl. Sci. 2019, 9, 2361. [Google Scholar] [CrossRef]

- Li, J.; Gao, H.; Lv, T.; Lu, Y. Deep reinforcement learning based computation offloading and resource allocation for MEC. In Proceedings of the IEEE Wireless Communications and Networking Conference (IEEE WCNC 2018), Barcelona, Spain, 15–18 April 2018; pp. 1–6. [Google Scholar]

- Wei, Y.; Yu, F.R.; Song, M.; Han, Z. Joint Optimization of Caching, Computing, and Radio Resources for Fog-Enabled IoT Using Natural Actor–Critic Deep Reinforcement Learning. IEEE Internet Things J. 2019, 6, 2061–2073. [Google Scholar] [CrossRef]

- Chang, Z.; Lei, L.; Zhou, Z.; Mao, S.; Ristaniemi, T. Learn to Cache: Machine Learning for Network Edge Caching in the Big Data Era. IEEE Wirel. Commun. 2018, 25, 28–35. [Google Scholar] [CrossRef]

- He, Y.; Zhao, N.; Yin, H. Integrated Networking, Caching, and Computing for Connected Vehicles: A Deep Reinforcement Learning Approach. IEEE Trans. Veh. Technol. 2018, 67, 44–55. [Google Scholar] [CrossRef]

- Wang, R.; Li, M.; Peng, L.; Hu, Y.; Hassan, M.M.; Alelaiwi, A. Cognitive multi-agent empowering mobile edge computing for resource caching and collaboration. Future Gener. Comput. Syst. 2020, 102, 66–74. [Google Scholar] [CrossRef]

- Chien, W.C.; Weng, H.Y.; Lai, C.F. Q-learning based collaborative cache allocation in mobile edge computing. Future Gener. Comput. Syst. 2020, 102, 603–610. [Google Scholar] [CrossRef]

- Tan, L.T.; Hu, R.Q.; Hanzo, L.H. Twin-Timescale Artificial Intelligence Aided Mobility-Aware Edge Caching and Computing in Vehicular Networks. IEEE Trans. Veh. Technol. 2019, 68, 3086–3099. [Google Scholar] [CrossRef]

- Yang, J.; Zhang, J.; Ma, C.; Wang, H.; Zhang, J.; Zheng, G.H. Deep learning-based edge caching for multi-cluster heterogeneous networks. Neural Comput. Appl. 2019. [Google Scholar] [CrossRef]

- Li, Z.; Chen, J.; Zhang, Z. Socially Aware Caching in D2D Enabled Fog Radio Access Networks. IEEE Access 2019, 7, 84293–84303. [Google Scholar] [CrossRef]

- Chen, M.; Qian, Y.; Hao, Y.; Li, Y.; Song, J. Data-Driven Computing and Caching in 5G Networks: Architecture and Delay Analysis. IEEE Wirel. Commun. 2018, 25, 70–75. [Google Scholar] [CrossRef]

- Ning, Z.; Dong, P.; Wang, X.; Rodrigues, J.J.P.C.; Xia, F. Deep Reinforcement Learning for Vehicular Edge Computing: An Intelligent Offloading System. ACM Trans. Intell. Syst. Technol. 2019, 10, 1–25. [Google Scholar] [CrossRef]

- Huang, L.; Feng, X.; Zhang, C.; Qian, L.; Wu, Y. Deep reinforcement learning-based joint task offloading and bandwidth allocation for multi-user mobile edge computing. Digit. Commun. Netw. 2019, 5, 10–17. [Google Scholar] [CrossRef]

- Wang, Y.; Tao, X.; Zhang, X.; Zhang, P.; Hou, Y.T. Cooperative task offloading in three-tier mobile computing networks: An ADMM framework. IEEE Trans. Veh. Technol. 2019, 68, 2763–2776. [Google Scholar] [CrossRef]

- Martin, A.; Egaña, J.; Flórez, J.; Montalbán, J.; Olaizola, I.G.; Quartulli, M.; Viola, R.; Zorrilla, M. Network Resource Allocation System for QoE-Aware Delivery of Media Services in 5G Networks. IEEE Trans. Broadcast. 2018, 64, 561–574. [Google Scholar] [CrossRef]

- Aazam, M.; Harras, K.A.; Zeadally, S. Fog Computing for 5G Tactile Industrial Internet of Things: QoE-Aware Resource Allocation Model. IEEE Trans. Ind. Inform. 2019, 15, 3085–3092. [Google Scholar] [CrossRef]

- Wu, D.; Liu, Q.; Wang, H.; Yang, Q.; Wang, R. Cache Less for More: Exploiting Cooperative Video Caching and Delivery in D2D Communications. IEEE Trans. Multimed. 2019, 21, 1788–1798. [Google Scholar] [CrossRef]

- Alsenwi, M.; Tran, N.H.; Bennis, M.; Pandey, S.R.; Bairagi, A.K.; Hong, C.S. Intelligent Resource Slicing for eMBB and URLLC Coexistence in 5G and Beyond: A Deep Reinforcement Learning Based Approach. arXiv 2020, arXiv:2003.07651. Available online: https://arxiv.org/abs/2003.07651 (accessed on 8 July 2020).

- Maaz, D.; Galindo-Serrano, A.; Elayoubi, S.E. URLLC User Plane Latency Performance in New Radio. In Proceedings of the 25th International Conference on Telecommunications (ICT 2018), Saint Malo, France, 26–28 June 2018; pp. 225–229. [Google Scholar]

- Bennis, M.; Debbah, M.; Poor, H.V. Ultrareliable and Low-Latency Wireless Communication: Tail, Risk, and Scale. Proc. IEEE 2018, 106, 1834–1853. [Google Scholar] [CrossRef]

- Park, H.; Lee, Y.; Kim, T.; Kim, B.; Lee, J. Handover Mechanism in NR for Ultra-Reliable Low-Latency Communications. IEEE Netw. 2018, 32, 41–47. [Google Scholar] [CrossRef]

- Mukherjee, A. Energy Efficiency and Delay in 5G Ultra-Reliable Low-Latency Communications System Architectures. IEEE Netw. 2018, 32, 55–61. [Google Scholar] [CrossRef]

- Siddiqi, M.A.; Yu, H.; Joung, J. 5G Ultra-Reliable Low-Latency Communication Implementation Challenges and Operational Issues with IoT Devices. Electronics 2019, 8, 981. [Google Scholar] [CrossRef]

- Strubell, E.; Ganesh, A.; McCallum, A. Energy and Policy Considerations for Deep Learning in NLP. arXiv 2019, arXiv:1906.02243. Available online: https://arxiv.org/abs/1906.02243 (accessed on 8 July 2020).

- Ahvar, E.; Orgerie, A.C.; Lèbre, A. Estimating Energy Consumption of Cloud, Fog and Edge Computing Infrastructures. IEEE Trans. Sustain. Comput. 2019, 1–12. [Google Scholar] [CrossRef]

- Chen, W.; Wang, D.; Li, K. Multi-User Multi-Task Computation Offloading in Green Mobile Edge Cloud Computing. IEEE Trans. Serv. Comput. 2019, 12, 726–738. [Google Scholar] [CrossRef]

- Liu, H.; Cao, L.; Pei, T.; Deng, Q.; Zhu, J. A Fast Algorithm for Energy-Saving Offloading With Reliability and Latency Requirements in Multi-Access Edge Computing. IEEE Access 2020, 8, 151–161. [Google Scholar] [CrossRef]

- Xiao, L.; Wan, X.; Dai, C.; Du, X.; Chen, X.; Guizani, M. Security in Mobile Edge Caching with Reinforcement Learning. IEEE Wirel. Commun. 2018, 25, 116–122. [Google Scholar] [CrossRef]

- Thantharate, A.; Paropkari, R.; Walunj, V.; Beard, C.; Kankariya, P. Secure5G: A Deep Learning Framework Towards a Secure Network Slicing in 5G and Beyond. In Proceedings of the 10th Annual Computing and Communication Workshop and Conference (CCWC 2020), Las Vegas, NV, USA, 6–8 January 2020; pp. 852–857. [Google Scholar]

- Truex, S.; Liu, L.; Chow, K.H.; Gursoy, M.E.; Wei, W. LDP-Fed: Federated Learning with Local Differential Privacy. In Proceedings of the 3rd ACM International Workshop on Edge Systems, Analytics and Networking (EdgeSys 2020), Heraklion, Greece, 27 April 2020; pp. 61–66. [Google Scholar]

- Akundi, S.; Prabhu, S.; BK, N.U.; Mondal, S.C. Suppressing Noisy Neighbours in 5G Networks: An End-to-End NFV-Based Framework to Detect and Suppress Noisy Neighbours. In Proceedings of the 21st International Conference on Distributed Computing and Networking (ICDCN 2020), Kolkata, India, 4–7 January 2020; pp. 1–6. [Google Scholar]

- Kafle, V.P.; Fukushima, Y.; Martinez-Julia, P.; Miyazawa, T. Consideration on Automation of 5G Network Slicing with Machine Learning. In Proceedings of the 10th ITU Kaleidoscope: Machine Learning for a 5G Future (ITU K 2018), Santa Fe, Argentina, 26–28 November 2018; pp. 1–8. [Google Scholar]

- Kafle, V.P.; Martinez-Julia, P.; Miyazawa, T. Automation of 5G Network Slice Control Functions with Machine Learning. IEEE Commun. Stand. Mag. 2019, 3, 54–62. [Google Scholar] [CrossRef]

- ETSI GS ENI 005 (V1.1.1): Experiential Networked Intelligence (ENI). System Architecture. 2020. Available online: https://www.etsi.org/deliver/etsi_gs/ENI/001_099/005/01.01.01_60/gs_ENI005v010101p.pdf (accessed on 8 July 2020).

- ETSI GS ENI 002 (V1.1.1): Experiential Networked Intelligence (ENI). ENI Requirements. 2018. Available online: https://www.etsi.org/deliver/etsi_gs/ENI/001_099/002/01.01.01_60/gs_ENI002v010101p.pdf (accessed on 8 July 2020).

- ETSI GR ENI 001 (V1.1.1): Experiential Networked Intelligence (ENI). ENI Use Cases. 2018. Available online: https://www.etsi.org/deliver/etsi_gr/ENI/001_099/001/01.01.01_60/gr_ENI001v010101p.pdf (accessed on 8 July 2020).

- 3GPP TR 23.700-91 (V0.3.0): Study of Enablers for Network Automation for 5G, Phase 2 (Release 17). February 2020. Available online: https://www.3gpp.org/ftp/Specs/archive/23_series/23.700-91/23700-91-030.zip (accessed on 8 July 2020).

- ETSI GR ZSM 004 V1.1.1 (2020-03) Zero-Touch Network and Service Management (ZSM). 2020. Available online: https://www.etsi.org/deliver/etsi_gr/ZSM/001_099/004/01.01.01_60/gr_ZSM004v010101p.pdf (accessed on 8 July 2020).

- FG-ML5G-ARC5G “Unified Architecture for Machine Learning in 5G and Future Networks”. 2019. Available online: https://www.itu.int/en/ITU-T/focusgroups/ml5g/Documents/ML5G-delievrables.pdf (accessed on 17 June 2020).

- Supplement 55 to ITU-T, Y.3170 “Machine Learning in Future Networks Including IMT-2020: Use Cases”. 2019. Available online: https://www.itu.int/rec/T-REC-Y.Sup55-201910-I (accessed on 8 July 2020).

- ITU-T, Y.3172 “Architectural Framework for Machine Learning in Future Networks including IMT-2020”. 2019. Available online: https://www.itu.int/rec/T-REC-Y.3172-201906-I/en (accessed on 8 July 2020).

- ITU-T, Y.3173 “Framework for Evaluating Intelligence Levels of Future Networks including IMT-2020”. 2020. Available online: https://www.itu.int/rec/T-REC-Y.3173-202002-I (accessed on 8 July 2020).

- ITU-T, Y.3174 “Framework for Data Handling to Enable Machine Learning in Future Networks including IMT-2020”. 2020. Available online: https://www.itu.int/rec/T-REC-Y.3174-202002-I (accessed on 8 July 2020).

- Zheng, Y.; Xu, S.; Dhody, D. Usecases for Network Artificial Intelligence (NAI). Internet-Draft. 2017. Available online: https://tools.ietf.org/html/draft-zheng-opsawg-network-ai-usecases-00 (accessed on 8 July 2020).

- Thantharate, A.; Paropkari, R.; Walunj, V.; Beard, C. DeepSlice: A Deep Learning Approach towards an Efficient and Reliable Network Slicing in 5G Networks. In Proceedings of the 10th IEEE Annual Ubiquitous Computing, Electronics and Mobile Communication Conference (UEMCON 2019), New York, NY, USA, 10–12 October 2019; pp. 762–767. [Google Scholar]

- 3GPP TR 23.791 (V16.2.0): Technical Report. 3rd Generation Partnership Project; Technical Specification Group Services and System Aspects; Study of Enablers for Network Automation for 5G (Release 16). June 2019. Available online: http://www.3gpp.org/ftp//Specs/archive/23_series/23.791/23791-g20.zip (accessed on 8 July 2020).

- Boubendir, A.; Guillemin, F.; Kerboeuf, S.; Orlandi, B.; Faucheux, F.; Lafragette, J. Network Slice Life-Cycle Management Towards Automation. In Proceedings of the IFIP/IEEE Symposium on Integrated Network and Service Management (IM 2019), Arlington, VA, USA, 8–12 April 2019; pp. 709–711. [Google Scholar]

- Si, M. Nation Ushers in 5G commercial Service Era. China Daily Press. November 2019. Available online: https://www.chinadaily.com.cn/a/201911/01/WS5dbb25a2a310cf3e35574c30.html (accessed on 8 July 2020).

- Tran, T.X.; Hajisami, A.; Pandey, P.; Pompili, D. Collaborative Mobile Edge Computing in 5G Networks: New Paradigms, Scenarios, and Challenges. IEEE Commun. Mag. 2017, 55, 54–61. [Google Scholar] [CrossRef]

- Dell Technologies and Orange Collaborate for Telco Multi-Access Edge Transformation. Dell Technologies News. May 2019. Available online: https://corporate.delltechnologies.com/en-ie/newsroom/dell-emc-and-orange-collaborate-for-telco-multi-access-edge-transformation.htm (accessed on 8 July 2020).

- NVIDIA with Microsoft Announces Technology Collaboration for Era of Intelligent Edge: Microsoft’s Intelligent Edge Solutions Extended with NVIDIA T4 GPUs to Help Accelerate AI Across Industries. Globe Newswire Press. October 2019. Available online: https://www.globenewswire.com/news-release/2019/10/21/1932901/0/en/NVIDIA-with-Microsoft-Announces-Technology-Collaboration-for-Era-of-Intelligent-Edge.html (accessed on 8 July 2020).

- World Wide Technology and MobiledgeX Expand Partnership to Accelerate Mobile Edge Computing Deployments and Power 5G Profitability. Business Wire Press. October 2019. Available online: https://www.businesswire.com/news/home/20191021005117/en/World-Wide-Technology-MobiledgeX-Expand-Partnership-Accelerate (accessed on 8 July 2020).

- Novo, O. Blockchain meets IoT: An architecture for scalable access management in IoT. IEEE Internet Things J. 2018, 5, 1184–1195.

- Wang, J.; Pan, J.; Esposito, F.; Calyam, P.; Yang, Z.; Mohapatra, P. Edge Cloud Offloading Algorithms: Issues, Methods, and Perspectives. ACM Comput. Surv. 2019, 52, 1–23.

- Li, S.; Tao, Y.; Qin, X.; Liu, L.; Zhang, Z.; Zhang, P. Energy-Aware Mobile Edge Computation Offloading for IoT Over Heterogenous Networks. IEEE Access 2019, 7, 13092–13105.

- Mehrabi, A.; Siekkinen, M.; Ylä-Jääski, A. Cache-Aware QoE-Traffic Optimization in Mobile Edge Assisted Adaptive Video Streaming. arXiv 2018, arXiv:1805.09255. Available online: https://arxiv.org/abs/1805.09255 (accessed on 8 July 2020).

- Sasikumar, A.; Zhao, T.; Hou, I.H.; Shakkottai, S. Cache-Version Selection and Content Placement for Adaptive Video Streaming in Wireless Edge Networks. arXiv 2019, arXiv:1903.12164. Available online: https://arxiv.org/abs/1903.12164 (accessed on 8 July 2020).

- Ren, P.; Qiao, X.; Chen, J.; Dustdar, S. Mobile Edge Computing—A Booster for the Practical Provisioning Approach of Web-Based Augmented Reality. In Proceedings of the 3rd ACM/IEEE Symposium on Edge Computing (SEC 2018), Bellevue, WA, USA, 25–27 October 2018; pp. 349–350.

- Jeong, S.; Simeone, O.; Kang, J. Mobile Edge Computing via a UAV-Mounted Cloudlet: Optimization of Bit Allocation and Path Planning. IEEE Trans. Veh. Technol. 2018, 67, 2049–2063.

- Yang, C.; Liu, Y.; Chen, X.; Zhong, W.; Xie, S. Efficient Mobility-Aware Task Offloading for Vehicular Edge Computing Networks. IEEE Access 2019, 7, 26652–26664.

- Liu, Y.; Yang, C.; Jiang, L.; Xie, S.; Zhang, Y. Intelligent Edge Computing for IoT-Based Energy Management in Smart Cities. IEEE Netw. 2019, 33, 111–117.

- Nikouei, S.Y.; Chen, Y.L.; Song, S.; Xu, R.; Choi, B.Y.; Faughnan, T.R. Real-Time Human Detection as an Edge Service Enabled by a Lightweight CNN. In Proceedings of the 3rd IEEE International Conference on Edge Computing (EDGE 2018), Milan, Italy, 8–13 July 2018; pp. 125–129.

- Chen, J.; Li, K.; Deng, Q.; Li, K.; Yu, P.S. Distributed Deep Learning Model for Intelligent Video Surveillance Systems with Edge Computing. arXiv 2019, arXiv:1904.06400. Available online: https://arxiv.org/abs/1904.06400 (accessed on 8 July 2020).

- 5G Systems Enabling the Transformation of Industry and Society. Technical Report, Ericsson. 2017. Available online: https://www.ericsson.com/en/reports-and-papers/white-papers/5g-systems--enabling-the-transformation-of-industry-and-society (accessed on 8 July 2020).

- Li, L.; Ota, K.; Dong, M. Deep Learning for Smart Industry: Efficient Manufacture Inspection System with Fog Computing. IEEE Trans. Ind. Inform. 2018, 14, 4665–4673.

- Li, X.; Wan, J.; Dai, H.N.; Imran, M.; Xia, M.; Celesti, A. A Hybrid Computing Solution and Resource Scheduling Strategy for Edge Computing in Smart Manufacturing. IEEE Trans. Ind. Inform. 2019, 15, 4225–4234.

| Topic | Paper | DL Model | Purpose/Methods |
|---|---|---|---|
| Slicing | [27] | DNN | Uses spatio-temporal relationships between stations to predict future traffic demand patterns |
| | [50] | RNN | Predicts base station pairing for highly mobile users to prepare for future demand |
| | [35] | RNN | Minimizes signaling overhead, latency, call dropping, and radio resource wastage using predictive handover |
| | [26] | RNN | Predicts traffic demand by exploiting spatial and temporal patterns between base stations |
| | [28] | DNN | Identifies real-time traffic and assigns it to the relevant network slice |
| | [54] | RL | Offers a slicing strategy based on predictions of traffic and resource requirements |
| | [56] | RL | Maximizes the network provider's revenue through automated admission and allocation for slices |
| | [57] | RL | Provides automated priority-based radio resource slicing and allocation |
| | [53] | RL | Constructs network services on demand based on resource use to lower costs |
| | [103] | DNN | Selects a slice for resilient and efficient balancing of network load |
| Resource Allocation | [32] | DNN | Forecasts resource capacity and demands per slice using network probes |
| | [58] | DNN | Predicts traffic demand and distributes radio resources between slices |
| | [60] | RL | Minimizes the cost of delay and energy consumption for multi-user wireless systems |
| | [61] | RL | Minimizes end-to-end delay for caching, offloading, and radio resources for IoT |
| Caching | [62] | DNN | Reduces computational time and energy consumption of the cache policy at the edge |
| | [63] | RL | Jointly optimizes caching and computation for vehicular networks |
| | [64] | RNN | Forecasts user movement and service type to cache and offload tasks in advance |
| | [65] | RL | Predicts the optimal cache state for radio networks |
| | [66] | RL | Optimizes the cost of caching, computation, and offloading under vehicle mobility and service deadline constraints |
| | [67] | DNN | Updates cache placement dynamically based on station and content popularity |
| | [68] | DNN | Identifies communities of mobile users and performs predictive device-to-device caching |
| Offloading | [70] | RL | Optimizes scheduling of offloaded tasks for vehicular networks |
| | [71] | RL | Minimizes energy, computation, and delay cost for multiple task offloading |
| QoS | [59] | RL | Dynamically meets users' QoS requirements while maximizing profits |
| | [73] | DNN | Automates management of network resources for video streaming within the required QoS range |
| Energy | [82] | DNN | Uses an NLP model to demonstrate DL energy requirements |
| | [83] | DNN | Creates an energy model to compare the consumption of cloud architectures |
| | [84] | RL | Minimizes energy usage for task offloading from mobile devices |
| Security | [86] | RL | Optimizes MEC security policies to protect against unknown attacks |
| | [87] | DNN | Protects against various types of denial-of-service attacks |
| | [88] | DNN | Develops a federated learning system with differential privacy guarantees |
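
Several RL entries in the table (for example, revenue-maximizing slice admission and priority-based radio resource slicing) frame the operation as a sequential decision problem: observe the node's load and an arriving request, decide whether to admit it, and learn from the resulting reward. The following is a minimal, hypothetical sketch of that general pattern using tabular Q-learning on a toy admission-control problem. The capacity, per-slice demands, revenues, release probability, and all function names are illustrative assumptions, not values or the method of any cited work; a deep RL approach would replace the Q-table with a neural network.

```python
import random
from collections import defaultdict

# Illustrative assumptions only (not taken from any cited work):
CAPACITY = 10               # resource units at one edge node
DEMAND = {0: 2, 1: 1}       # units per request: slice 0 (eMBB-like), slice 1 (URLLC-like)
REVENUE = {0: 1.0, 1: 3.0}  # provider revenue per admitted request
RELEASE_P = 0.3             # per-step probability that an occupied unit is released
REJECT, ACCEPT = 0, 1


def feasible_actions(used, slice_id):
    """A request may only be admitted if it fits in the remaining capacity."""
    if used + DEMAND[slice_id] <= CAPACITY:
        return [REJECT, ACCEPT]
    return [REJECT]


def step(rng, used, slice_id, action):
    """Apply the admission decision, release finished units, draw the next request."""
    reward = 0.0
    if action == ACCEPT:
        used += DEMAND[slice_id]
        reward = REVENUE[slice_id]
    # Each occupied unit finishes service independently with probability RELEASE_P.
    used -= sum(1 for _ in range(used) if rng.random() < RELEASE_P)
    return used, rng.choice([0, 1]), reward


def greedy(q, state):
    """Best feasible action under the current Q-table (ties favor REJECT)."""
    return max(feasible_actions(*state), key=lambda a: q[(state, a)])


def train(episodes=200, horizon=100, alpha=0.1, gamma=0.95, epsilon=0.1, seed=0):
    """Tabular Q-learning; state = (used_units, slice_id of the arriving request)."""
    rng = random.Random(seed)
    q = defaultdict(float)
    for _ in range(episodes):
        state = (0, rng.choice([0, 1]))
        for _ in range(horizon):
            used, slice_id = state
            # Epsilon-greedy exploration restricted to feasible actions.
            if rng.random() < epsilon:
                action = rng.choice(feasible_actions(used, slice_id))
            else:
                action = greedy(q, state)
            used, next_slice, reward = step(rng, used, slice_id, action)
            next_state = (used, next_slice)
            best_next = max(q[(next_state, a)] for a in feasible_actions(*next_state))
            # One-step Q-learning update toward the TD target.
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q
```

For example, `q = train()` followed by `greedy(q, (8, 0))` returns the learned decision for an eMBB-like request arriving while 8 of 10 units are busy. Masking infeasible actions keeps the agent from ever over-committing capacity, so learning only has to weigh revenue now against the option value of keeping room for higher-revenue requests later.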

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

McClellan, M.; Cervelló-Pastor, C.; Sallent, S. Deep Learning at the Mobile Edge: Opportunities for 5G Networks. Appl. Sci. 2020, 10, 4735. https://doi.org/10.3390/app10144735