Building and Repairing Trust in Chatbots: The Interplay Between Social Role and Performance During Interactions

Abstract

1. Introduction

2. Literature Review

2.1. Learned Trust in Chatbots

2.2. Damaging and Repairing Trust in Chatbots

2.3. The Effect of Chatbots’ Social Role

3. Method

3.1. Procedure

3.2. Sample

3.3. Treatment Manipulation

3.4. Measures

3.4.1. Dependent Variable

3.4.2. Controlling Variables

3.5. Manipulation Check

4. Results

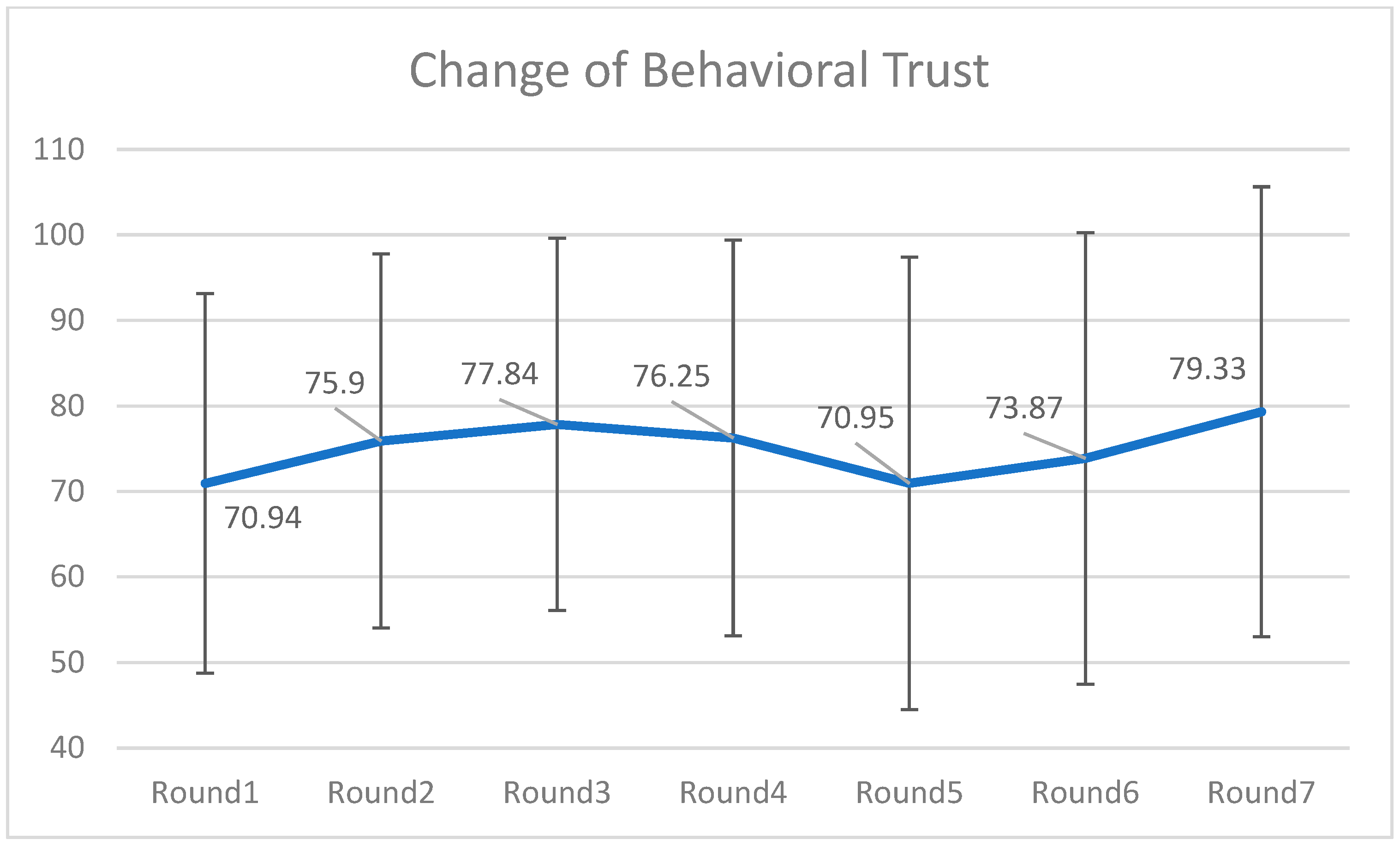

4.1. Fluctuations of Trust in the 7-Round Investment Process

4.2. Hypotheses Testing

5. Discussion

5.1. Summary of Findings

5.2. Theoretical and Practical Implications

5.3. Limitations and Directions for Future Research

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Stage | Friend-like | Servant-like |
|---|---|---|
| Self-Introduction | Hello, friend! I’m Tobi, developed by the Stanford Media Lab to enhance players’ experience in the metaverse game Omnisphere NFT Art Investment Event. As your friend, I’ll fully support you in analyzing market trends and selecting the best artworks for investment. I hope my assistance brings you joy! | Greetings, Master! I’m Tobi, developed by the Stanford Media Lab to enhance players’ experience in the metaverse game Omnisphere NFT Art Investment Event. As your servant, I’ll dutifully analyze market trends and select optimal artworks for investment. I hope my service satisfies you! |
| Positive Outcome | Good news, friend! Artwork DH0537 performed well—we achieved a 30% investment increase! You’ve earned (the investment amount * 30%) virtual coins this round! | Good news, Master! Artwork DH0537 performed well—we achieved a 30% investment increase. Your earnings for this round are (the investment amount * 30%) virtual coins. |
| Negative Outcome | Uh-oh, friend! Artwork ZY1044 dropped by 30%—you lost (the investment amount * 30%) virtual coins this round. | Regrettably, Master! Artwork ZY1044 dropped by 30%—your loss for this round is (the investment amount * 30%) virtual coins. |
| Farewell | Thank you for your feedback! All seven rounds are complete. I sincerely appreciate your trust and support—it was a joy to assist you in this investment journey! If you need help again, come find me. Wishing you prosperity and success! | Gratitude for your feedback, Master! All seven investment rounds are complete. I deeply appreciate your trust and support—your satisfaction is my greatest honor. Should you require further assistance, Tobi will serve you wholeheartedly. May fortune and success follow you! |
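The outcome rows in the table substitute a computed amount, (the investment amount * 30%), into role-specific wording. The following is a minimal illustrative sketch of that substitution, not the authors' implementation: the function names `round_change` and `outcome_message` are hypothetical, and the message templates are abbreviated from the table (the artwork sentence is omitted).

```python
# Illustrative sketch only: names and abbreviated templates are assumptions,
# not taken from the study's actual chatbot code.

RATE_PERCENT = 30  # both outcome rows in the table use a 30% change

# Abbreviated wording per (role, outcome); the table holds the full scripts.
TEMPLATES = {
    ("friend", True): "Good news, friend! You've earned {amount:g} virtual coins this round!",
    ("servant", True): "Good news, Master! Your earnings for this round are {amount:g} virtual coins.",
    ("friend", False): "Uh-oh, friend! You lost {amount:g} virtual coins this round.",
    ("servant", False): "Regrettably, Master! Your loss for this round is {amount:g} virtual coins.",
}


def round_change(investment: float, positive: bool) -> float:
    """Signed change in virtual coins for one round: +30% or -30% of the investment."""
    change = investment * RATE_PERCENT / 100
    return change if positive else -change


def outcome_message(role: str, investment: float, positive: bool) -> str:
    """Fill the role-specific template with the absolute amount gained or lost."""
    if (role, positive) not in TEMPLATES:
        raise ValueError(f"unknown role: {role!r}")
    amount = abs(round_change(investment, positive))
    return TEMPLATES[(role, positive)].format(amount=amount)


if __name__ == "__main__":
    print(outcome_message("friend", 100, True))
    print(outcome_message("servant", 50, False))
```

Under these assumptions, an investment of 100 virtual coins with a positive outcome yields a gain of 30 coins, and the only difference between conditions is the form of address and phrasing, not the amount reported.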

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Mou, Y.; Ye, X.; Ma, W. Building and Repairing Trust in Chatbots: The Interplay Between Social Role and Performance During Interactions. Behav. Sci. 2026, 16, 118. https://doi.org/10.3390/bs16010118
