Identification of Retinal Diseases Using Light Convolutional Neural Networks and Intrinsic Mode Function Technique

Abstract

1. Introduction

1.1. Challenges

1.2. Existing Approaches and Limitations

Limitations of Existing Research Approaches

1.3. Motivation

1.4. Proposed Solution

1.4.1. Contributions

1.4.2. Effectiveness of the Proposed IMF+LCNN Framework

2. Materials and Methods

2.1. Data Preprocessing and Augmentation

2.1.1. Image Normalization

Contrast Enhancement Using CLAHE

- Clip limit: 2.0

- Tile grid size:

- Interpolation: Bilinear
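The settings listed above can be made concrete with a simplified, NumPy-only CLAHE sketch. It is illustrative rather than the authors' implementation: the function names are hypothetical, the `grid=(8, 8)` default is only a placeholder because the tile grid size is not stated here, and the image sides are assumed to divide evenly by the grid.

```python
import numpy as np


def clipped_lut(tile, clip_limit, n_bins=256):
    """Build a contrast-limited equalization lookup table for one tile."""
    hist = np.bincount(tile.ravel(), minlength=n_bins).astype(np.float64)
    # The clip limit is expressed as a multiple of the mean bin count.
    limit = max(1.0, clip_limit * tile.size / n_bins)
    excess = np.sum(np.maximum(hist - limit, 0.0))
    # Clip the histogram and spread the clipped mass evenly over all bins.
    hist = np.minimum(hist, limit) + excess / n_bins
    cdf = np.cumsum(hist)
    return (n_bins - 1) * cdf / cdf[-1]


def clahe(img, clip_limit=2.0, grid=(8, 8)):
    """Simplified CLAHE for a 2-D uint8 image; grid=(8, 8) is a placeholder."""
    h, w = img.shape
    gy, gx = grid
    th, tw = h // gy, w // gx
    luts = np.empty((gy, gx, 256))
    for i in range(gy):
        for j in range(gx):
            tile = img[i * th:(i + 1) * th, j * tw:(j + 1) * tw]
            luts[i, j] = clipped_lut(tile, clip_limit)

    # Position of every pixel in tile-centre coordinates.
    ys = (np.arange(h) + 0.5) / th - 0.5
    xs = (np.arange(w) + 0.5) / tw - 0.5
    fy, fx = np.floor(ys).astype(int), np.floor(xs).astype(int)
    wy, wx = (ys - fy)[:, None], (xs - fx)[None, :]
    y0 = np.clip(fy, 0, gy - 1)[:, None]
    y1 = np.clip(fy + 1, 0, gy - 1)[:, None]
    x0 = np.clip(fx, 0, gx - 1)[None, :]
    x1 = np.clip(fx + 1, 0, gx - 1)[None, :]

    # Bilinear blend of the four neighbouring tile mappings.
    top = (1 - wx) * luts[y0, x0, img] + wx * luts[y0, x1, img]
    bottom = (1 - wx) * luts[y1, x0, img] + wx * luts[y1, x1, img]
    out = (1 - wy) * top + wy * bottom
    return np.round(out).astype(np.uint8)
```

The bilinear blend between neighbouring tile mappings is what suppresses visible tile borders; a library routine would normally be preferred over this sketch in practice.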

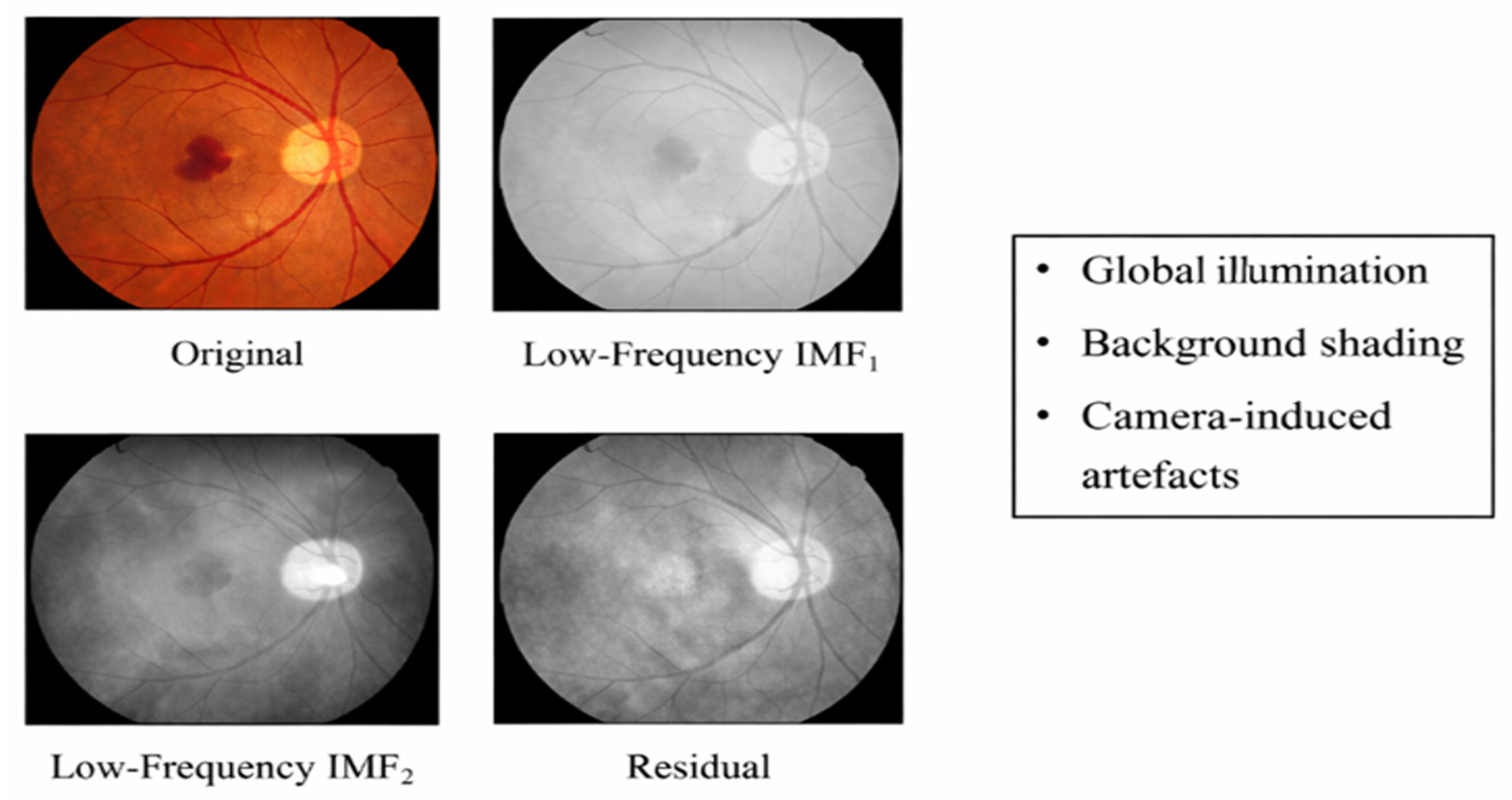

2.1.2. IMF-Based Feature Enhancement

2.1.3. Data Augmentation Strategy

- (a) Original fundus image.
- (b) IMF-decomposed and recombined feature-enhanced image.
- (c) Augmented variants including rotation, flipping, and scaling.
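Augmented variants of the kind named in panel (c) could be generated roughly as follows. This is a minimal sketch under stated assumptions: rotations are restricted to multiples of 90 degrees, the image is square, scaling is a nearest-neighbour centre zoom, and the function name and the `max_scale_delta` range are hypothetical rather than values taken from the paper.

```python
import numpy as np


def augment(img, rng, max_scale_delta=0.1):
    """Random 90-degree rotation, horizontal flip, and centre zoom of a square image."""
    out = np.rot90(img, k=int(rng.integers(0, 4)))
    if rng.random() < 0.5:
        out = np.fliplr(out)
    # Hypothetical scaling range: 1 +/- max_scale_delta.
    scale = rng.uniform(1.0 - max_scale_delta, 1.0 + max_scale_delta)
    h, w = out.shape[:2]
    # Nearest-neighbour resampling about the centre keeps the output size fixed.
    ys = np.clip(np.round((np.arange(h) - h / 2) / scale + h / 2), 0, h - 1).astype(int)
    xs = np.clip(np.round((np.arange(w) - w / 2) / scale + w / 2), 0, w - 1).astype(int)
    return out[np.ix_(ys, xs)]
```

Passing a seeded `np.random.default_rng` makes the augmentation reproducible, which helps when comparing training runs.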

2.2. Proposed Approach (IMF+LCNN)

2.2.1. Empirical Mode Decomposition (EMD): Formal Definition

2.2.2. Mathematical Conditions for an Intrinsic Mode Function

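For a decomposed component to qualify as an IMF, it must satisfy both of the following conditions:

- Extrema–Zero Crossing Condition: the number of extrema and the number of zero crossings must be equal or differ by at most one.
- Local Mean Condition: at every point, the mean of the upper envelope (through the local maxima) and the lower envelope (through the local minima) must be zero.

These conditions ensure that each IMF represents a well-behaved, narrow-band oscillatory mode, making it suitable for frequency-aware feature analysis.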
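Both conditions can be checked numerically on a 1-D signal. The sketch below assumes the standard EMD definitions (extrema and zero-crossing counts differ by at most one; the mean of the upper and lower envelopes stays near zero). The function names, the relative tolerance `tol`, and the use of linear interpolation for the envelopes are illustrative assumptions.

```python
import numpy as np


def local_extrema(x):
    """Return indices of interior local maxima and minima."""
    d = np.diff(x)
    maxima = np.where((d[:-1] > 0) & (d[1:] <= 0))[0] + 1
    minima = np.where((d[:-1] < 0) & (d[1:] >= 0))[0] + 1
    return maxima, minima


def zero_crossings(x):
    """Count sign changes, ignoring samples that are exactly zero."""
    s = np.sign(x)
    s = s[s != 0]
    return int(np.sum(s[:-1] != s[1:]))


def looks_like_imf(x, tol=0.1):
    """Check both IMF conditions; tol is a hypothetical relative tolerance."""
    x = np.asarray(x, dtype=float)
    maxima, minima = local_extrema(x)
    if len(maxima) == 0 or len(minima) == 0:
        return False
    # Condition 1: extrema and zero-crossing counts differ by at most one.
    n_extrema = len(maxima) + len(minima)
    cond_count = abs(n_extrema - zero_crossings(x)) <= 1
    # Condition 2: the envelope mean stays close to zero everywhere.
    t = np.arange(len(x))
    upper = np.interp(t, maxima, x[maxima])
    lower = np.interp(t, minima, x[minima])
    mean = 0.5 * (upper + lower)
    cond_mean = np.max(np.abs(mean)) <= tol * np.max(np.abs(x))
    return bool(cond_count and cond_mean)
```

A pure sine wave passes both checks, while the same wave shifted by a constant offset fails the first condition because it no longer crosses zero.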

2.2.3. Sifting Process and IMF Extraction

1. Identify all local maxima and minima of the signal.
2. Interpolate the maxima to form the upper envelope.
3. Interpolate the minima to form the lower envelope.
4. Compute the local mean as the average of the upper and lower envelopes.
5. Extract the detail component by subtracting the local mean from the signal.
6. Repeat steps 1–5 until the IMF conditions are satisfied.
7. Subtract the IMF from the signal and repeat the process on the residual.
8. Continue this adaptive process until the residual becomes monotonic.
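The sifting steps above can be sketched for a 1-D signal as follows. Several choices here are assumptions rather than details from the paper: envelopes use linear interpolation (`np.interp`) in place of the cubic splines common in EMD, the stopping rule is a simple relative threshold on the envelope mean, and the names `sift`, `emd`, `max_iter`, `tol`, and `max_imfs` are hypothetical. How the decomposition is extended to 2-D fundus images is not covered by this sketch.

```python
import numpy as np


def local_extrema(x):
    """Return indices of interior local maxima and minima."""
    d = np.diff(x)
    maxima = np.where((d[:-1] > 0) & (d[1:] <= 0))[0] + 1
    minima = np.where((d[:-1] < 0) & (d[1:] >= 0))[0] + 1
    return maxima, minima


def sift(x, max_iter=50, tol=0.05):
    """Steps 1-6: repeatedly remove the envelope mean to get one IMF candidate."""
    h = x.copy()
    t = np.arange(len(x))
    for _ in range(max_iter):
        maxima, minima = local_extrema(h)                # step 1
        if len(maxima) < 2 or len(minima) < 2:
            break
        upper = np.interp(t, maxima, h[maxima])          # step 2 (linear, not spline)
        lower = np.interp(t, minima, h[minima])          # step 3
        mean = 0.5 * (upper + lower)                     # step 4
        h = h - mean                                     # step 5
        # Step 6, simplified: stop once the removed mean is small.
        if np.max(np.abs(mean)) < tol * np.max(np.abs(h)):
            break
    return h


def emd(x, max_imfs=6):
    """Steps 7-8: peel off IMFs until the residual has too few extrema."""
    imfs = []
    residual = np.asarray(x, dtype=float).copy()
    for _ in range(max_imfs):
        maxima, minima = local_extrema(residual)
        if len(maxima) < 2 or len(minima) < 2:           # residual is near monotonic
            break
        imf = sift(residual)
        imfs.append(imf)
        residual = residual - imf                        # step 7
    return imfs, residual
```

By construction, the extracted IMFs and the final residual sum back to the input signal, which is a convenient sanity check for any EMD implementation.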

2.2.4. Selection of Relevant IMF Components

2.2.5. Clinical Interpretation of IMF Frequency Bands and Integration with Light CNN

2.2.6. Workflow of the Proposed IMF-Based Feature Learning

Algorithm 1: IMF-Based Adaptive Feature Enhancement and LightCNN Classification

Input: Fundus image dataset with corresponding class labels

Output: Predicted retinal disease classes with confidence scores

Step 1: Image Preprocessing

Step 5: Data Augmentation (Training Only)

2.3. Deep Learning Layer Design Using IMF

2.4. Hyperparameter Tuning

2.5. Experimental Setup

2.5.1. Dataset Description and Scale-Wise Utilization

2.5.2. Model Architecture and Training Configuration

- Batch size: 32

- Number of epochs: 50

- Initial learning rate:

- Loss function: Categorical cross-entropy

- Regularization: Dropout and early stopping based on validation loss
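The configuration above, together with early stopping on validation loss, might be organized as in the sketch below. It is framework-agnostic and illustrative only: the class and function names are hypothetical, the `patience` value is an assumption, and the learning rate is left as a required argument because its value is not stated here.

```python
from dataclasses import dataclass


@dataclass
class TrainConfig:
    learning_rate: float            # not stated in the text; must be supplied
    batch_size: int = 32
    epochs: int = 50
    loss: str = "categorical_crossentropy"
    patience: int = 5               # hypothetical early-stopping patience


class EarlyStopping:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience):
        self.patience = patience
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        if val_loss < self.best:
            self.best, self.bad_epochs = val_loss, 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def epochs_run(val_losses, cfg):
    """Return how many epochs run before early stopping or the epoch cap."""
    stopper = EarlyStopping(cfg.patience)
    for epoch, loss in enumerate(val_losses[:cfg.epochs], start=1):
        if stopper.step(loss):
            return epoch
    return min(len(val_losses), cfg.epochs)
```

In a real training loop, `stopper.step` would be called once per epoch with the measured validation loss, and the best-performing weights would typically be restored after stopping.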

2.5.3. Hardware and Software Environment

2.5.4. Evaluation Protocol and Performance Metrics

- Accuracy.

- Precision.

- Recall (sensitivity).

- F1-Score.

- Area Under the ROC Curve (AUC-ROC).

- Dice Similarity Coefficient (DSC) (for structure-level evaluation).

- Confusion Matrix.
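Most of the metrics listed above follow directly from confusion-matrix counts. The sketch below uses the standard definitions for a single class treated one-vs-rest; the function name and the returned dictionary layout are illustrative, and AUC-ROC is omitted because it requires prediction scores rather than counts.

```python
def classification_metrics(tp, fp, fn, tn):
    """Compute standard metrics from true/false positive/negative counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    # Dice similarity coefficient: 2|A∩B| / (|A| + |B|).
    dice = 2 * tp / (2 * tp + fp + fn) if tp + fp + fn else 0.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "dice": dice,
    }
```

For binary labels the Dice coefficient and the F1-score are algebraically identical; for a multi-class problem the counts would be taken per class and the results averaged.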

3. Results

3.1. Ablation Study and Component-Wise Contribution Analysis

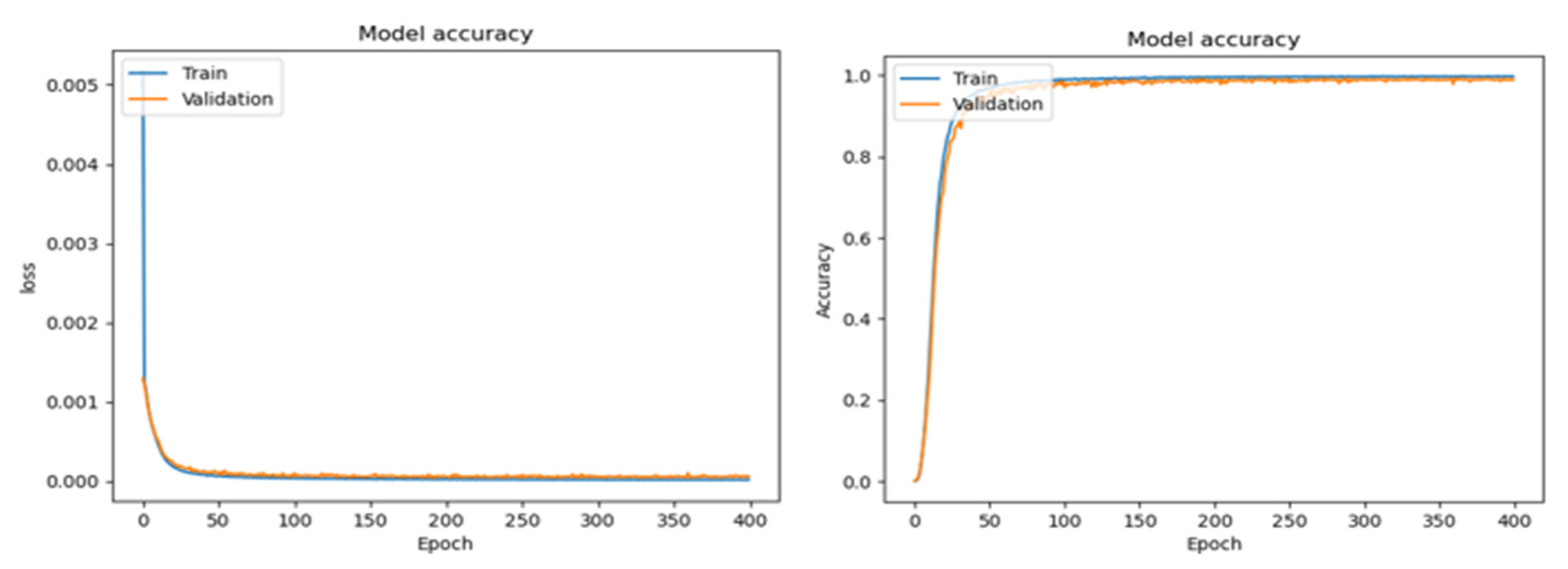

3.2. Training Analysis

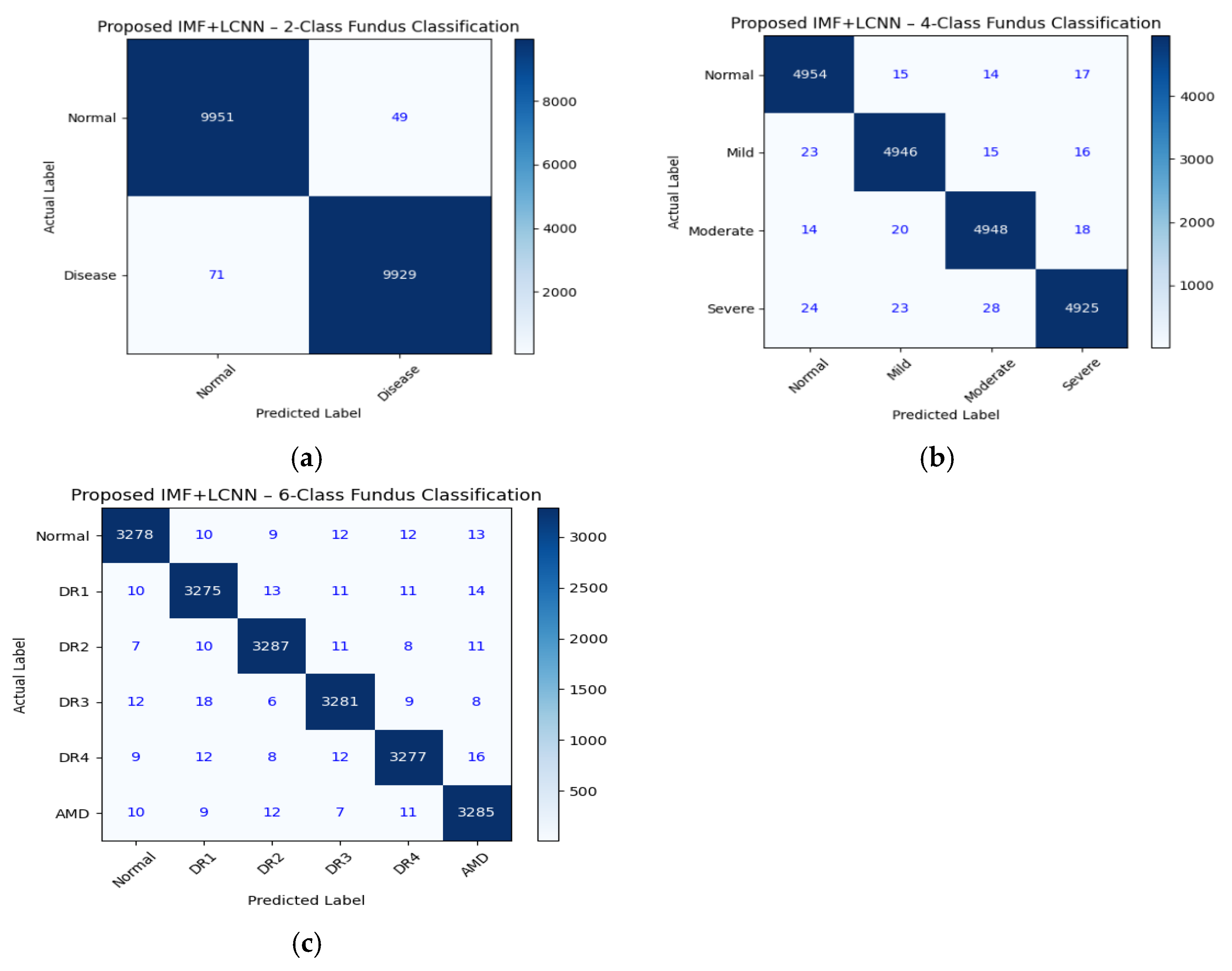

3.3. Testing Analysis

4. Discussions

Achievements of the Proposed IMF+LCNN Framework

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| IMF | Intrinsic Mode Function |
| EMD | Empirical Mode Decomposition |
| LCNN | Light Convolutional Neural Network |
| ResNet | Residual Neural Network |
| DL | Deep Learning |
| ML | Machine Learning |
| DR | Diabetic Retinopathy |
| GANs | Generative Adversarial Networks |
| UDA | Unsupervised Domain Adaptation |
| ReLU | Rectified Linear Unit |
| CLAHE | Contrast Limited Adaptive Histogram Equalization |
| AUC-ROC | Area Under the Receiver Operating Characteristic Curve |

References

1. Lei, H.; Liu, W.; Xie, H.; Zhao, B.; Yue, G.; Lei, B. Unsupervised domain adaptation-based image synthesis and feature alignment for joint optic disc and cup segmentation. IEEE J. Biomed. Health Inform. 2022, 26, 90–102.
2. Zhou, W.; Ji, J.; Cui, W.; Wang, Y.; Yi, Y. Unsupervised domain adaptation fundus image segmentation via multi-scale adaptive adversarial learning. IEEE J. Biomed. Health Inform. 2024, 28, 5792–5803.
3. Junayed, M.S.; Islam, M.B.; Sadeghzadeh, A.; Rahman, S. CataractNet: An automated cataract detection system using deep learning for fundus images. IEEE Access 2021, 9, 128799–128808.
4. Palaniswamy, T.; Vellingiri, M. Internet of things and deep learning enabled diabetic retinopathy diagnosis using retinal fundus images. IEEE Access 2023, 11, 27590–27601.
5. Rashid, R.; Aslam, W.; Mehmood, A.; Vargas, D.L.R.; Diez, I.D.L.T.; Ashraf, I. A detectability analysis of retinitis pigmentosa using novel SE-ResNet based deep learning model and color fundus images. IEEE Access 2024, 12, 28297–28309.
6. Li, X.; Hu, X.; Qi, X.; Yu, L.; Zhao, W.; Heng, P.A.; Xing, L. Rotation-oriented collaborative self-supervised learning for retinal disease diagnosis. IEEE Trans. Med. Imaging 2021, 40, 2284–2294.
7. Atwany, M.Z.; Sahyoun, A.H.; Yaqub, M. Deep learning techniques for diabetic retinopathy classification: A survey. IEEE Access 2022, 10, 28642–28655.
8. Luo, Y.; Pan, J.; Fan, S.; Du, Z.; Zhang, G. Retinal image classification by self-supervised fuzzy clustering network. IEEE Access 2020, 8, 92352–92362.
9. Wang, J.; Bao, Y.; Wen, Y.; Lu, H.; Luo, H.; Xiang, Y.; Li, X.; Liu, C.; Qian, D. Prior-attention residual learning for more discriminative COVID-19 screening in CT images. IEEE Trans. Med. Imaging 2020, 39, 2572–2583.
10. Civit-Masot, J.; Domínguez-Morales, M.J.; Vicente-Díaz, S.; Civit, A. Dual machine-learning system to aid glaucoma diagnosis using disc and cup feature extraction. IEEE Access 2020, 8, 127519–127529.
11. Chen, F.; Ma, S.; Hao, J.; Liu, M.; Gu, Y.; Yi, Q.; Zhang, J.; Zhao, Y. Dual-path and multi-scale enhanced attention network for retinal diseases classification using ultra-wide-field images. IEEE Access 2023, 11, 45405–45415.
12. Zhao, Y.; Fan, S.; Zhang, H.; Zhang, G. Retinal vascular network topology reconstruction and artery/vein classification via dominant set clustering. IEEE Trans. Med. Imaging 2020, 39, 341–356.
13. Li, X.; Jia, M.; Islam, M.T.; Yu, L.; Xing, L. Self-supervised feature learning via exploiting multi-modal data for retinal disease diagnosis. IEEE Trans. Med. Imaging 2020, 39, 4023–4033.
14. Abdelmaksoud, E.; El-Sappagh, S.; Barakat, S.; Abuhmed, T.; Elmogy, M. Automatic diabetic retinopathy grading system based on detecting multiple retinal lesions. IEEE Access 2021, 9, 15939–15960.
15. Hussain, M.; Al-Aqrabi, H.; Munawar, M.; Hill, R.; Parkinson, S. Exudate regeneration for automated exudate detection in retinal fundus images. IEEE Access 2023, 11, 83934–83945.
16. Rodrigues, E.O.; Conci, A.; Liatsis, P. ELEMENT: Multi-modal retinal vessel segmentation based on a coupled region growing and machine learning approach. IEEE J. Biomed. Health Inform. 2020, 24, 3507–3519.
17. Zong, Y.; Chen, J.; Yang, L.; Tao, S.; Aoma, C.; Zhao, J.; Wang, S. U-net based method for automatic hard exudates segmentation in fundus images using inception module and residual connection. IEEE Access 2020, 8, 167225–167235.
18. Shafiq, M.; Fan, Q.; Alghamedy, F.H.; Obidallah, W.J. DualEye-FeatureNet: A dual-stream feature transfer framework for multi-modal ophthalmic image classification. IEEE Access 2024, 12, 143985–144008.
19. Atteia, G.; Abdel Samee, N.; Hassan, H.Z. DFTSA-Net: Deep feature transfer-based stacked autoencoder network for DME diagnosis. Entropy 2021, 23, 1251.
20. Wang, Y.; Zhen, L.; Tan, T.E.; Fu, H.; Feng, Y.; Wang, Z.; Xu, X.; Goh, R.S.M.; Ng, Y.; Calhoun, C.; et al. Geometric correspondence-based multimodal learning for ophthalmic image analysis. IEEE Trans. Med. Imaging 2024, 43, 1945–1957.
21. Ikram, A.; Imran, A.; Li, J.; Alzubaidi, A.; Fahim, S.; Yasin, A.; Fathi, H. A systematic review on fundus image-based diabetic retinopathy detection and grading: Current status and future directions. IEEE Access 2024, 12, 96273–96303.
22. Aurangzeb, K.; Aslam, S.; Alhussein, M.; Naqvi, R.A.; Arsalan, M.; Haider, S.I. Contrast enhancement of fundus images by employing modified PSO for improving the performance of deep learning models. IEEE Access 2021, 9, 47930–47945.
23. Kulkarni, P.; Reddy, K.S. An OGFA+CNN approach for multi-level disease identification in fundus images. IEEE Access 2025, 13, 100234–100246.

| Ref No. | Authors | Key Contributions | Main Findings | Identified Research Gap |
|---|---|---|---|---|
| [1] | H. Lei et al. | Proposed an unsupervised domain adaptation framework using image synthesis and feature alignment for joint optic disc and cup segmentation | Demonstrated improved segmentation performance across datasets with domain variability | Focuses only on segmentation; does not address disease classification for real-time deployment |
| [3] | M.S. Junayed et al. | Developed CataractNet, a CNN-based automated cataract detection model for fundus images | Achieved high accuracy for cataract detection and showed the feasibility of automated screening | Limited to a single disease; lacks adaptive feature enhancement and generalization across multiple retinal conditions |
| [6] | X. Li et al. | Introduced a rotation-based self-supervised learning framework exploiting fundus image symmetry | Improved diagnostic accuracy using unlabeled data and enhanced model | Relies on heavy backbone networks; complexity and deployment constraints are not addressed |
| [11] | F. Chen et al. | Proposed a dual-path, multi-scale attention network for retinal disease classification using ultra-wide-field images | Achieved improved lesion detection and classification accuracy using attention mechanisms | Uses complex deep architectures; lacks a lightweight design and adaptive signal decomposition for noise suppression |
| [18] | M. Shafiq et al. | Proposed DualEye-FeatureNet for multi-modal ophthalmic image classification using fundus and OCT images | Improved classification performance through feature transfer between modalities | Requires multi-modal data (fundus and OCT), limiting applicability in fundus-only screening scenarios |

(a)

| Algorithms with Fundus 20 K | Training Accuracy (%) | Testing Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|---|
| CNN [12] | 88.40 | 85.10 | 86.20 | 82.75 | 84.44 |
| LSTM [7] | 87.95 | 80.40 | 77.10 | 83.60 | 80.23 |
| Ensemble (CNN) [5] | 79.30 | 82.10 | 83.55 | 81.20 | 82.36 |
| GAN [6] | 97.80 | 96.90 | 95.85 | 95.60 | 95.72 |
| ResNet [18] | 84.60 | 86.20 | 86.75 | 83.40 | 85.04 |
| IMF [21] | 98.20 | 97.60 | 96.45 | 95.80 | 96.12 |
| Proposed IMF+LCNN | 98.90 | 98.35 | 98.20 | 97.85 | 98.02 |

(b)

| Algorithms with Fundus 20 K | Training Accuracy (%) | Testing Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|---|
| CNN [12] | 90.50 | 88.25 | 89.36 | 85.14 | 86.25 |
| LSTM [7] | 90.63 | 82.25 | 79.29 | 85.64 | 81.75 |
| Ensemble (CNN) [5] | 81.25 | 84.25 | 86.41 | 83.64 | 83.58 |
| GAN [6] | 95.63 | 91.98 | 94.52 | 97.71 | 96.89 |
| ResNet [18] | 86.36 | 88.29 | 88.49 | 85.96 | 87.28 |
| IMF [21] | 98.95 | 94.41 | 92.25 | 91.36 | 95.21 |
| Proposed IMF+LCNN | 98.40 | 97.40 | 95.01 | 95.87 | 96.73 |

(c)

| Algorithms with Fundus 20 K | Training Accuracy (%) | Testing Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) |
|---|---|---|---|---|---|
| CNN [12] | 92.30 | 90.15 | 90.85 | 88.40 | 89.61 |
| LSTM [7] | 91.75 | 85.60 | 82.40 | 87.90 | 85.05 |
| Ensemble (CNN) [5] | 84.90 | 87.40 | 88.20 | 85.75 | 86.96 |
| GAN [6] | 99.05 | 93.60 | 93.80 | 95.95 | 94.87 |
| ResNet [18] | 88.95 | 90.40 | 90.10 | 88.65 | 89.37 |
| IMF [21] | 94.20 | 92.90 | 91.95 | 90.20 | 91.57 |
| Proposed IMF+LCNN | 99.65 | 94.58 | 91.30 | 97.05 | 95.17 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Share and Cite

Kulkarni, P.; Reddy, K.S. Identification of Retinal Diseases Using Light Convolutional Neural Networks and Intrinsic Mode Function Technique. Diagnostics 2026, 16, 773. https://doi.org/10.3390/diagnostics16050773
