Automated Classification of Maxillary Sinus Ostium Patency Using a ConvNeXt-Tiny + DeiT Gated MLP-Based Hybrid Deep Learning Model: A Retrospective CBCT Study

Abstract

1. Introduction

2. Materials and Methods

2.1. Data Collection and Ethical Approval

2.2. Inclusion-Exclusion Criteria and Dataset

2.3. Manual Classification and Standardization

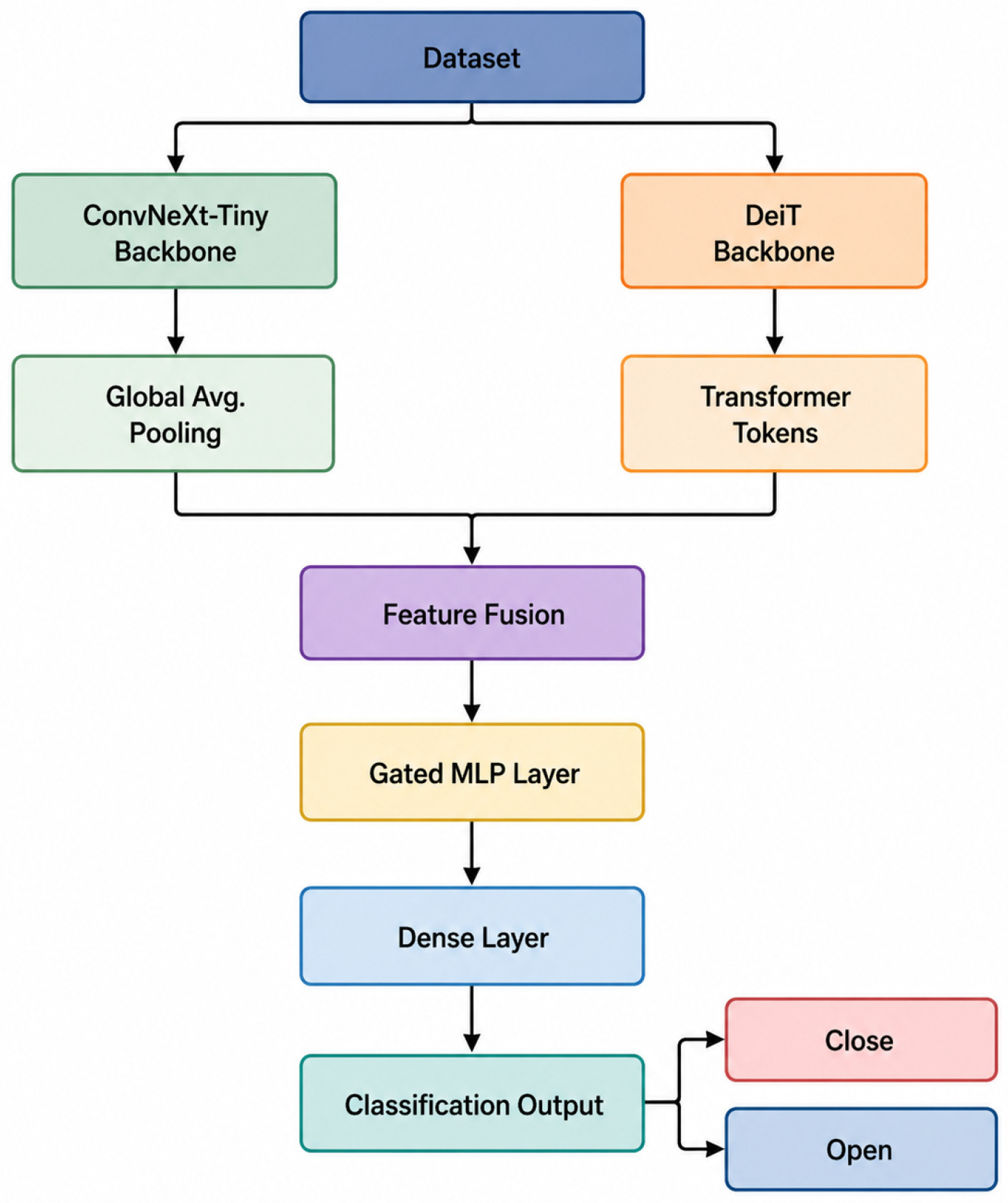

2.4. Proposed Model

2.5. Training Strategy and Performance Metrics

3. Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


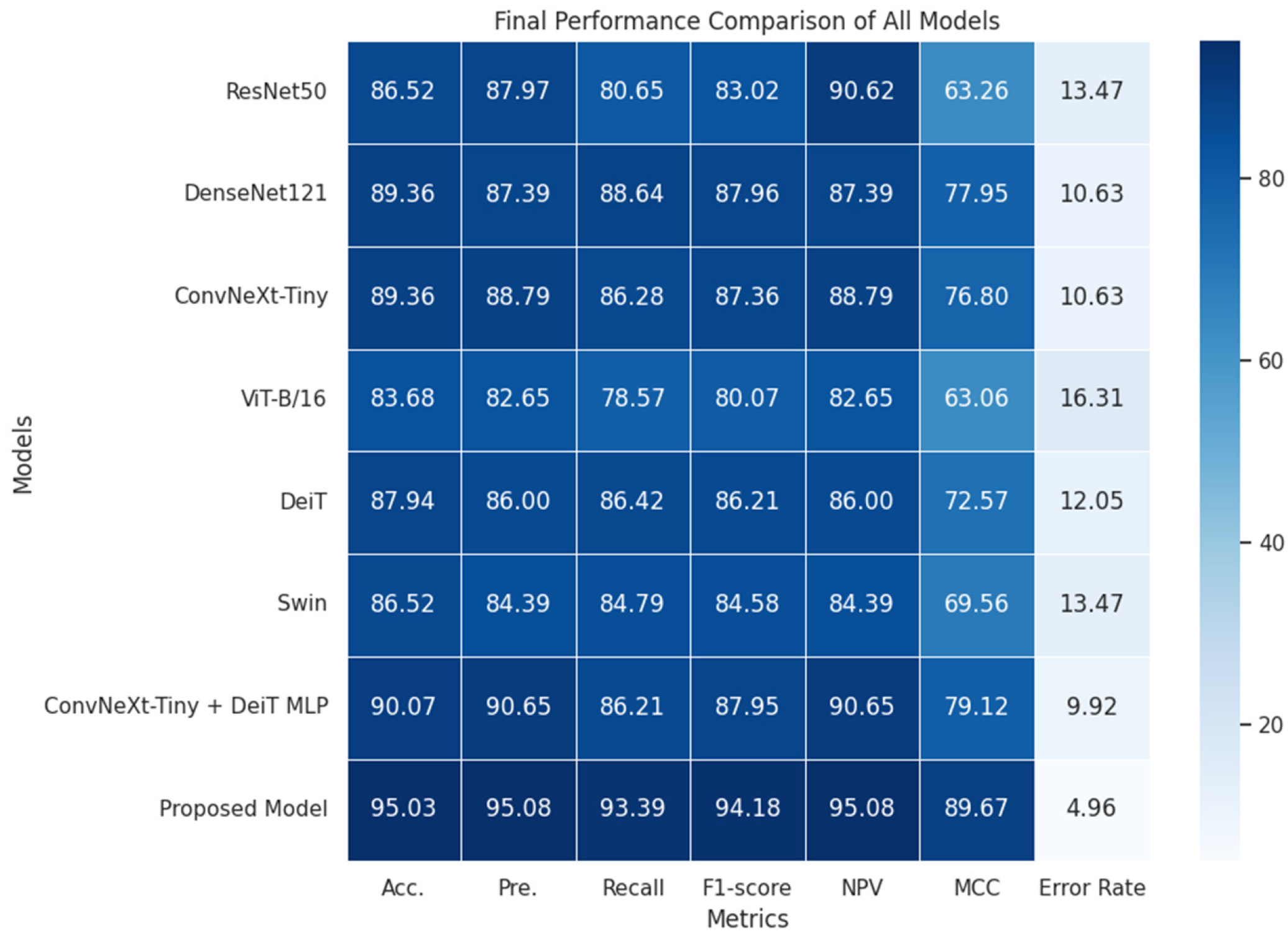

| Model | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) | NPV (%) | MCC (%) | Error Rate (%) |
|---|---|---|---|---|---|---|---|
| ResNet50 | 86.52 | 87.97 | 80.65 | 83.02 | 90.62 | 63.26 | 13.47 |
| DenseNet121 | 89.36 | 87.39 | 88.64 | 87.96 | 87.39 | 77.95 | 10.63 |
| ConvNeXt-Tiny | 89.36 | 88.79 | 86.28 | 87.36 | 88.79 | 76.80 | 10.63 |
| ViT-B/16 | 83.68 | 82.65 | 78.57 | 80.07 | 82.65 | 63.06 | 16.31 |
| DeiT | 87.94 | 86.00 | 86.42 | 86.21 | 86.00 | 72.57 | 12.05 |
| Swin | 86.52 | 84.39 | 84.79 | 84.58 | 84.39 | 69.56 | 13.47 |
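The metrics in the comparison table (accuracy, precision, recall, F1-score, NPV, MCC, and error rate) can all be derived from a binary confusion matrix. The following is a minimal sketch of those standard definitions, not the authors' evaluation code. The function names and the confusion-matrix counts are hypothetical and chosen only for illustration; it also treats one class as positive, whereas the study may have averaged per-class values.

```python
import math


def safe_div(numerator, denominator):
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def binary_metrics(tp, fp, fn, tn):
    """Standard binary metrics as fractions (MCC lies in [-1, 1])."""
    total = tp + fp + fn + tn
    accuracy = safe_div(tp + tn, total)
    precision = safe_div(tp, tp + fp)   # positive predictive value
    recall = safe_div(tp, tp + fn)      # sensitivity
    npv = safe_div(tn, tn + fn)         # negative predictive value
    f1 = safe_div(2 * tp, 2 * tp + fp + fn)
    mcc = safe_div(
        tp * tn - fp * fn,
        math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn)),
    )
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "npv": npv,
        "mcc": mcc,
        "error_rate": 1.0 - accuracy,
    }


# Hypothetical counts for illustration only (not taken from the study).
metrics = binary_metrics(tp=40, fp=5, fn=10, tn=45)
for name, value in metrics.items():
    print(f"{name}: {100 * value:.2f}")
```

Multiplying each value by 100 gives figures on the same scale as the table. Accuracy and error rate always sum to 1, which is why the two columns in each row add up to roughly 100.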

| Models | Acc. | Pre. | Recall | F1-Score | NPV | MCC | Error Rate |

|---|---|---|---|---|---|---|---|

| ConvNeXt-Tiny | 89.36 | 88.79 | 86.28 | 87.36 | 88.79 | 76.80 | 10.63 |

| DeiT | 87.94 | 86.00 | 86.42 | 86.21 | 86.00 | 72.57 | 12.05 |

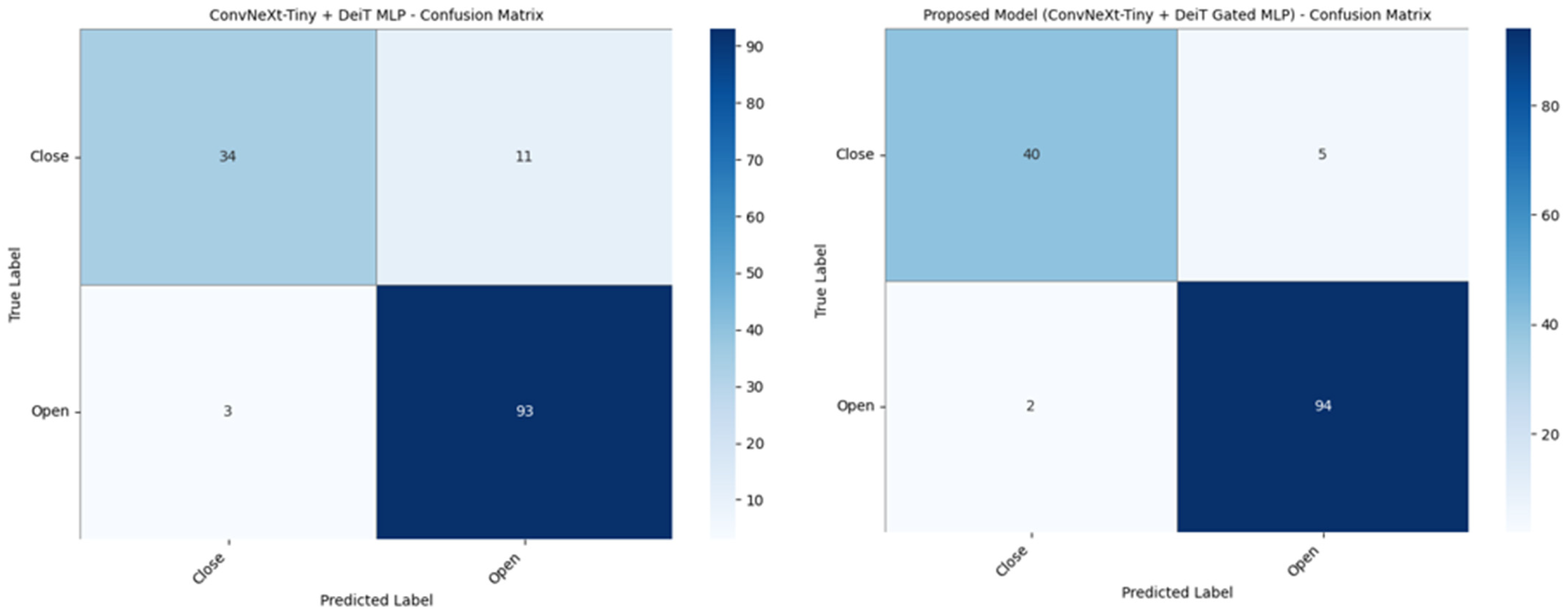

| ConvNeXt-Tiny + DeiT MLP | 90.07 | 90.65 | 86.21 | 87.95 | 90.65 | 79.12 | 09.92 |

| Proposed Model (ConvNeXt-Tiny + DeiT Gated MLP) | 95.03 | 95.08 | 93.39 | 94.18 | 95.08 | 89.67 | 04.96 |
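The ablation rows above contrast a plain MLP fusion of ConvNeXt-Tiny and DeiT features with a gated MLP fusion. The sketch below illustrates one common form of gated fusion: the two feature vectors are concatenated, a sigmoid gate is computed from them, and the gate scales a hidden representation element-wise before classification. This is an assumption about the general technique, not the authors' architecture. The layer sizes, initialization, activation, and every function name here are hypothetical, and the toy feature vectors stand in for real backbone outputs.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def linear(x, weights, bias):
    """Dense layer: one output per weight row."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def init_linear(n_out, n_in, rng):
    """Small random weights and zero biases (illustrative initialization)."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def init_params(cnn_dim, vit_dim, hidden_dim, num_classes, rng):
    in_dim = cnn_dim + vit_dim
    return {
        "value": init_linear(hidden_dim, in_dim, rng),
        "gate": init_linear(hidden_dim, in_dim, rng),
        "head": init_linear(num_classes, hidden_dim, rng),
    }


def gated_mlp_fusion(cnn_feat, vit_feat, params):
    """Fuse two feature vectors with a sigmoid-gated hidden layer; return logits."""
    z = list(cnn_feat) + list(vit_feat)                          # concatenate features
    value = [max(0.0, v) for v in linear(z, *params["value"])]   # ReLU branch
    gate = [sigmoid(g) for g in linear(z, *params["gate"])]      # gate in (0, 1)
    fused = [g * v for g, v in zip(gate, value)]                 # element-wise gating
    return linear(fused, *params["head"])


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Toy dimensions and inputs; real backbone features are far larger.
rng = random.Random(0)
params = init_params(cnn_dim=8, vit_dim=6, hidden_dim=4, num_classes=2, rng=rng)
probs = softmax(gated_mlp_fusion([0.1] * 8, [0.2] * 6, params))
print(probs)
```

Removing the `gate` branch and passing `value` straight to the head gives a plain MLP fusion, which is the kind of baseline the "ConvNeXt-Tiny + DeiT MLP" row represents in this simplified picture.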

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Citation

Talo, F.; Duger, N.; Aslan, E.; Yildirim, M.; Kaya, M.; Ozer, A.B.; Yildirim, T.T. Automated Classification of Maxillary Sinus Ostium Patency Using a ConvNeXt-Tiny + DeiT Gated MLP-Based Hybrid Deep Learning Model: A Retrospective CBCT Study. Diagnostics 2026, 16, 1512. https://doi.org/10.3390/diagnostics16101512
