1. Introduction

Aluminum is among the most widely used metallic elements. Owing to beneficial properties such as low weight and high corrosion resistance against a considerable range of factors, it is preferred for the fabrication of various parts in the automotive and aerospace industries. Aluminum is often found in the form of an alloy with elements such as copper, magnesium, manganese, and silicon, among others, and can be subjected to special treatments such as quenching or precipitation hardening in order to alter properties such as strength. Various aluminum alloys exhibit considerably high strength-to-weight ratios, and most of the alloys have excellent machinability.

As aluminum is used for a wide variety of applications, a large amount of experimental work has already been conducted regarding various machining processes. Especially in the case of the drilling process, several studies regarding the influence of process parameters on the forces, surface quality, or tool wear have been conducted, providing useful details for the appropriate processing of this material. Nouari et al. [1] investigated the effect of drilling process parameters and tool coating on tool wear during dry drilling of AA2024 aluminum alloy. They noted that at a certain cutting speed, a transition in tool wear mechanisms occurs, leading to higher tool wear, and thus, they investigated the shift in wear mechanism for various tool coating materials. Their results indicated that, at high cutting speeds, larger deviations from the nominal hole diameters are obtained, mainly due to the higher temperature generated on the rake face of the tool, which leads to increased tool damage. While abrasion or adhesion wear is observed at lower speeds in the form of built-up edge or built-up layer, the increase of temperature at high cutting speeds resulted in diffusion wear and an increase of the material adhered to the tool. Furthermore, although the uncoated tool produced more accurate hole dimensions at low cutting speeds, this trend was reversed at higher cutting speeds, where the uncoated HSS tool was found to produce the least accurate holes. Girot et al. [2] also conducted experiments regarding tool wear during dry drilling of aluminum alloys. In their work, they aimed at the reduction of the built-up layer on the cutting tool by altering the process parameters and the tool coating and geometry. They employed an uncoated carbide drill, a nano-crystalline diamond-coated drill, a nano-crystalline Balinit-coated drill, and a Mo diamond-coated drill and conducted experiments with variable cutting speed. After they established the relationship between the cutting parameters, thrust force, and burr height, they found that diamond coatings were superior due to their low friction coefficient and the adequate adhesion of the coating to the tool, with the nano-structured diamond-coated tools performing best.

Farid et al. [3] conducted a study on chip morphology during high-speed drilling of Al-Si alloy. More specifically, they investigated the effect of cutting speed and feed rate on chip morphology by observing various surfaces of the chip using microscopy techniques. For the drilling experiments, uncoated WC drills were employed to produce 15 mm-diameter holes under various cutting speeds and feed values, in the range of 240–320 m/min and 0.16–0.24 mm/rev, respectively. It was found that lamella structures were created on the free surface of the chip and that an increase of speed and feed rate led to a decrease in the frequency of appearance of these lamella structures. Regarding chip morphology, the increase of cutting speed and feed led to an increase of chip pitch and height and a decrease of the chip compression ratio. Finally, thrust force was found to increase with a decrease of cutting speed and an increase of the feed rate, so it was suggested that high cutting speeds are essential in order to minimize thrust force when drilling Al-Si alloys.

Qiu et al. [4] investigated the use of high-performance drills during drilling of aluminum and titanium alloys with a view toward minimizing cutting force and torque. For their experiments, they employed two conventional and two modified types of drills, the latter having larger point, primary relief, and helix angles and a lower second clearance angle. For the aluminum alloy, the cutting speed was varied from 100 to 200 m/min and the feed rate from 0.1 to 0.2 mm/rev. The experiments indicated that the tools with the modified geometry were able to reduce thrust force and torque in the majority of cases, with an average reduction of 9.78% in force and 21.08% in torque. Dasch et al. [5] conducted a thorough comparison of various categories of coated cutting tools for the drilling of aluminum. For their study, they used five different types of coating materials, namely carbon, graphitic, hydrogenated and hydrogen-free diamond-like carbon, and diamond, on two types of drills, namely HSS and carbide drills. In the tests, they measured tool wear, temperature, and spindle power. After conducting tests for various combinations of cutting speed and feed, they concluded that it is most important to coat the flutes of the tool rather than the cutting edge, that coated tools were able to drill up to 100 times more holes than uncoated ones, and that hydrogenated carbon coatings are superior to the other types of coating.

Regarding modeling of the drilling process, various studies have been conducted due to its popularity. Kurt, Bagci, and Kaynak [6] determined the optimum cutting parameters for high surface quality and hole accuracy using the Taguchi method. In their work, they conducted experiments at various cutting speeds, feed rates, and depths of drilling and tested the performance of both uncoated and coated HSS drills. After the experiments were conducted according to the Taguchi method, regression models for Ra and hole diameter accuracy were derived, and the optimum parameters were identified by analyzing the S/N ratio results. Kilickap [7] used the Taguchi method and Response Surface Methodology (RSM) to predict burr height during drilling of aluminum alloys and to determine the optimum drilling parameters. He conducted experiments using various cutting speeds, feed rates, and point angles and observed the burr height and surface roughness. Analysis using the Taguchi method and Analysis Of Variance (ANOVA) revealed the optimum parameters for reducing the burr height and surface roughness, and regression models created using RSM were shown to describe the correlation between process parameters and process outcome adequately. Sreenivasulu and Rao [8], Efkolidis et al. [9], and Kyratsis et al. [10] also employed the Taguchi method to determine the optimum levels of the process parameters for the minimization of thrust force and torque during drilling of aluminum alloys. After they performed experiments at various cutting speeds and feed rates and with various drill diameters and geometries, they determined the most significant parameters of the process and their optimum values.

Apart from regression models, several studies based on neural network models have been reported in the relevant literature. Singh et al. [11] employed an Artificial Neural Network (ANN) model to predict tool wear during drilling of copper workpieces. Feed rate, spindle speed, and drill diameter were selected as inputs for the neural network, and thrust force, torque, and maximum wear were the outputs. After the optimum number of hidden layers and neurons, as well as the optimum learning rate and momentum coefficient, were determined, it was shown that the best neural network predicted tool wear with a maximum error of 7.5%. Umesh Gowda et al. [12] presented an ANN model for the prediction of circularity, cylindricity, and surface roughness when drilling aluminum-based composites. After the experiments, tool wear, surface roughness, circularity, and cylindricity were obtained as a function of machining time, and NN models were used to correlate process parameters with these quantities.

Besides the usual MLP models, other similar approaches such as RBF-NN or hybrid approaches combining MLP with fuzzy logic or genetic algorithms have also been presented. Neto et al. [13] presented MLP and ANFIS models for the prediction of hole diameter during drilling of various alloys. For the neural network models, inputs from various sensors such as acoustic emission, electric power, force, and vibration were employed. Thorough analysis of the prediction errors revealed that MLP models had superior performance to ANFIS models. Ferreiro et al. [14] conducted a comprehensive study on developing an AI-based burr detection system for the drilling process of Al7075-T6. To that end, they employed data-mining techniques and tested various approaches with a view toward reducing the classification errors. All developed models performed better than the existing mathematical model for burr prediction, and in particular, it was shown that a model based on the naive Bayes method exhibited the maximum accuracy.

Lo [15] employed an ANFIS model for the prediction of surface roughness in end milling. In the models, both triangular and trapezoidal membership functions were employed, with 48 sets of data for training and 24 for testing. The error was found to be minimum using the triangular membership function. Zuperl et al. [16] used an ANFIS model for the estimation of flank wear during milling. The model was built with 140 sets of data (75 for training and 65 for testing) and included 32 fuzzy rules. It was shown that a model with a triangular membership function was again capable of exhibiting the lowest error. Song and Baseri [17] applied the ANFIS model for the selection of drilling parameters in order to reduce burr size and improve surface quality. In this work, a different model was built for each of the four outputs, namely burr height, thickness, type, and hole overcut. One hundred and fifty datasets were used, 92 for the training, 25 for checking, and 25 for testing of the model. For the first output, the best model had four membership functions of a triangular type; for the second one, the best model had three membership functions of a Gaussian type; and for the last two, the best models had three and four membership functions of a generalized bell type, respectively. Fang et al. [18] used an RBF model for surface roughness during machining of aluminum alloys. Their model included eight inputs, related to process parameters, cutting forces, and vibration components, and five outputs, related to surface roughness. The RBF model was proven to be faster than an MLP model, but it was inferior in terms of accuracy. Elmounayri et al. [19] employed an RBF model for the prediction of cutting forces during ball-end milling. The model had four inputs, related to the process parameters, and four outputs, namely the maximum, minimum, mean, and standard deviation of the instantaneous cutting force. In this case, it was noted that the RBF network was more efficient, more accurate, and faster than the classical MLP.

Although comparisons between regression models and neural network models for machining processes have already been conducted, comparisons between several soft computing methods are rare, and only a few relevant studies exist. Tsai and Wang [20] conducted a thorough comparison between various neural network models, such as different variants of MLP, RBF-NN, and ANFIS, for the case of electrical discharge machining. They concluded that the best performing method was ANFIS, as it exhibited the best accuracy among the tested methods. Nalbant et al. [21] conducted a comparison between regression and artificial neural network models for CNC turning cases and concluded that, although the neural network model performed slightly better, both models were appropriate for modeling the experimental results. Jurkovic et al. [22] compared support vector regression, polynomial regression, and artificial neural networks in the case of high-speed turning. Their models included feed, cutting speed, and depth of cut as inputs, and separate models were created for three output quantities, namely cutting forces, surface roughness, and cutting tool life. In the cases of cutting force and surface roughness, they found that the polynomial regression model was the best, whereas in the case of cutting tool life, ANN performed better than the other methods.

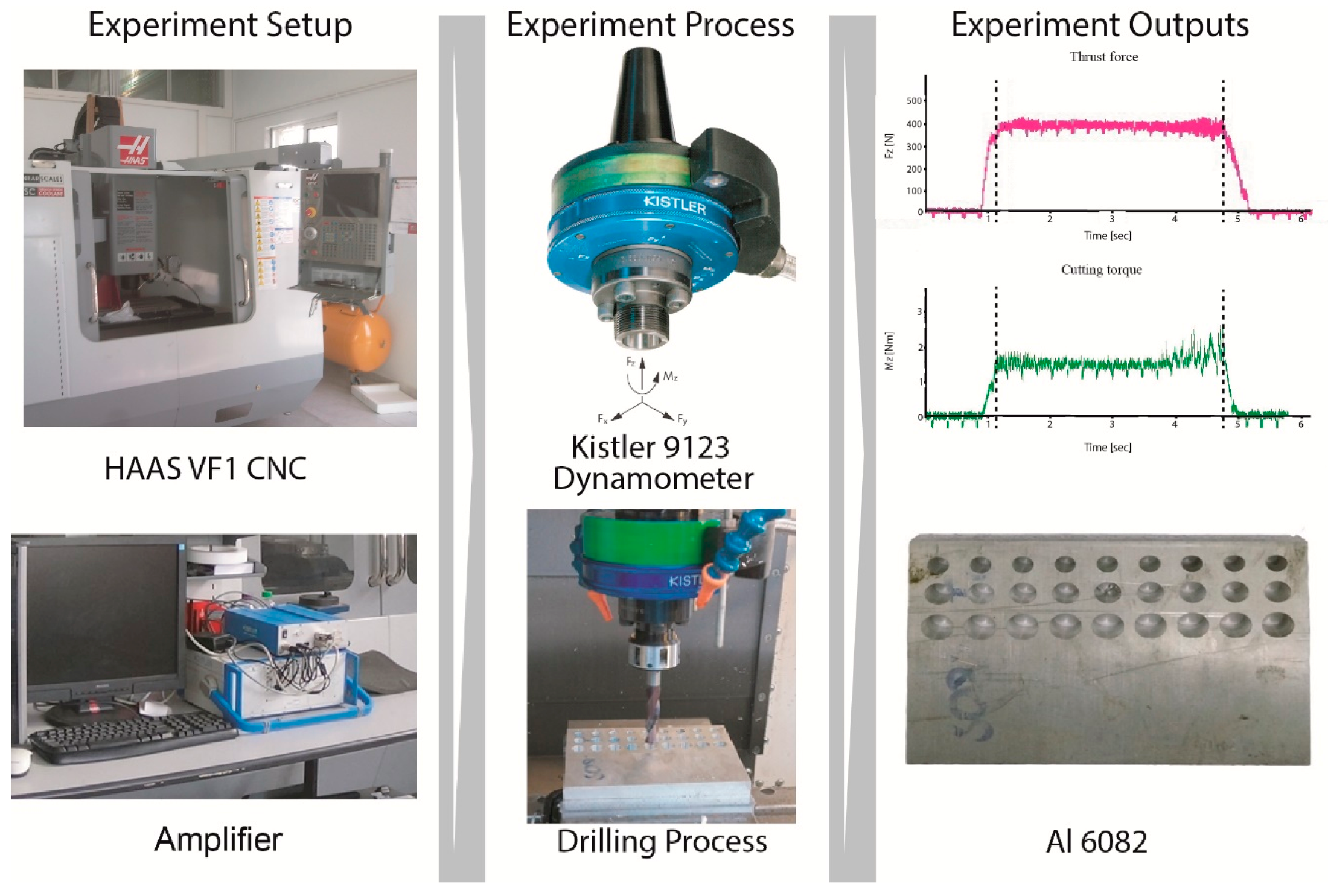

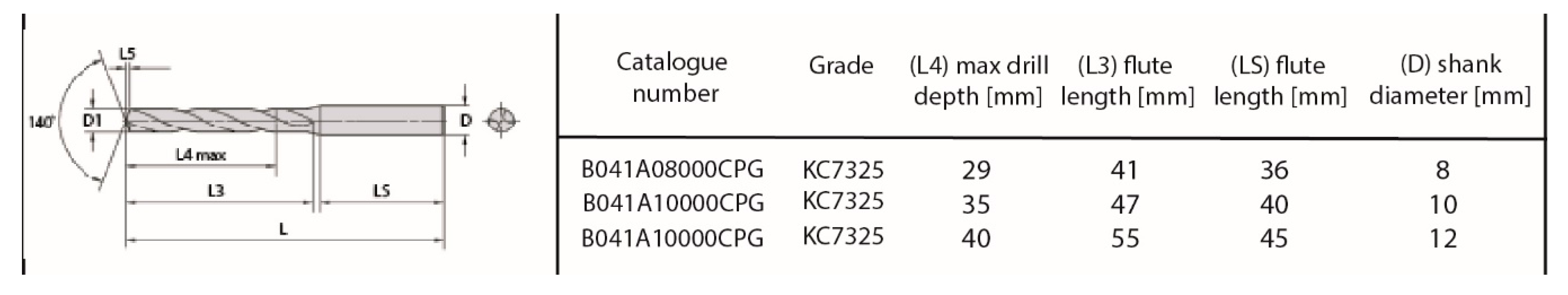

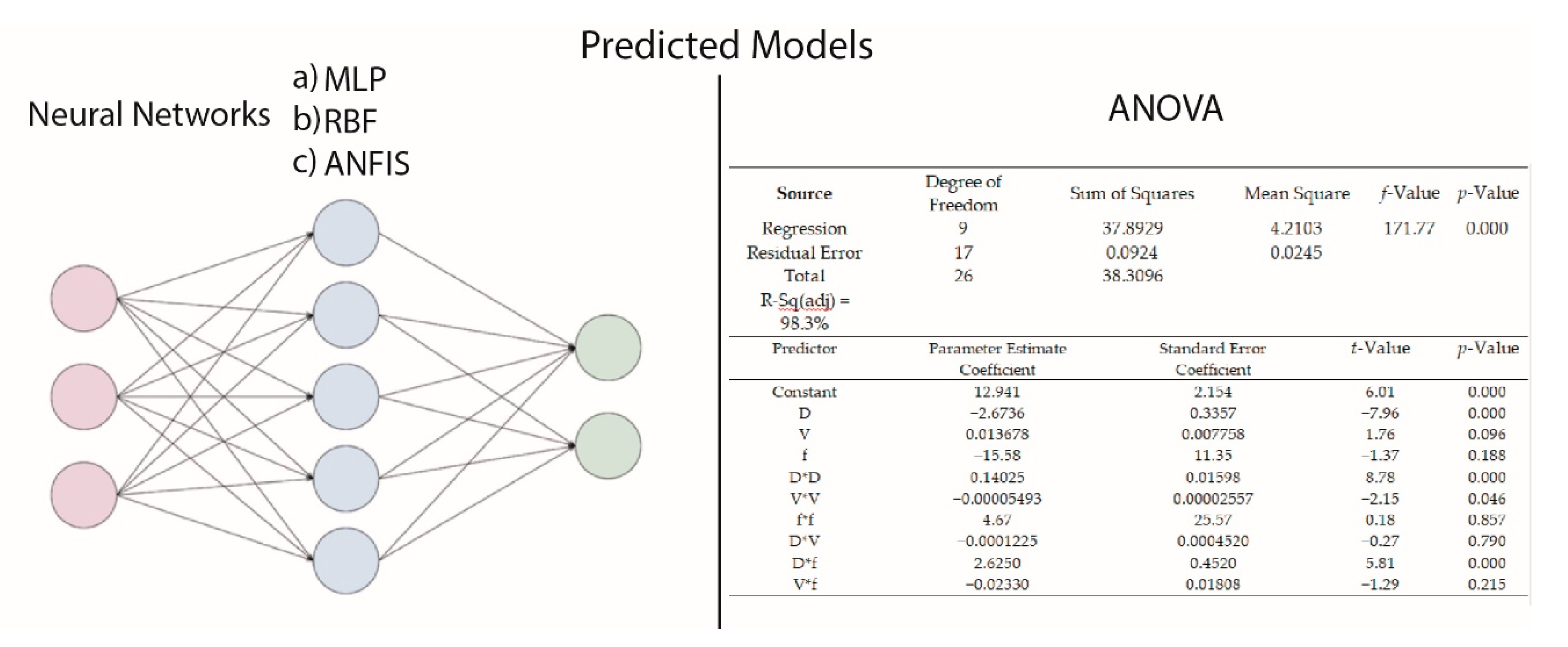

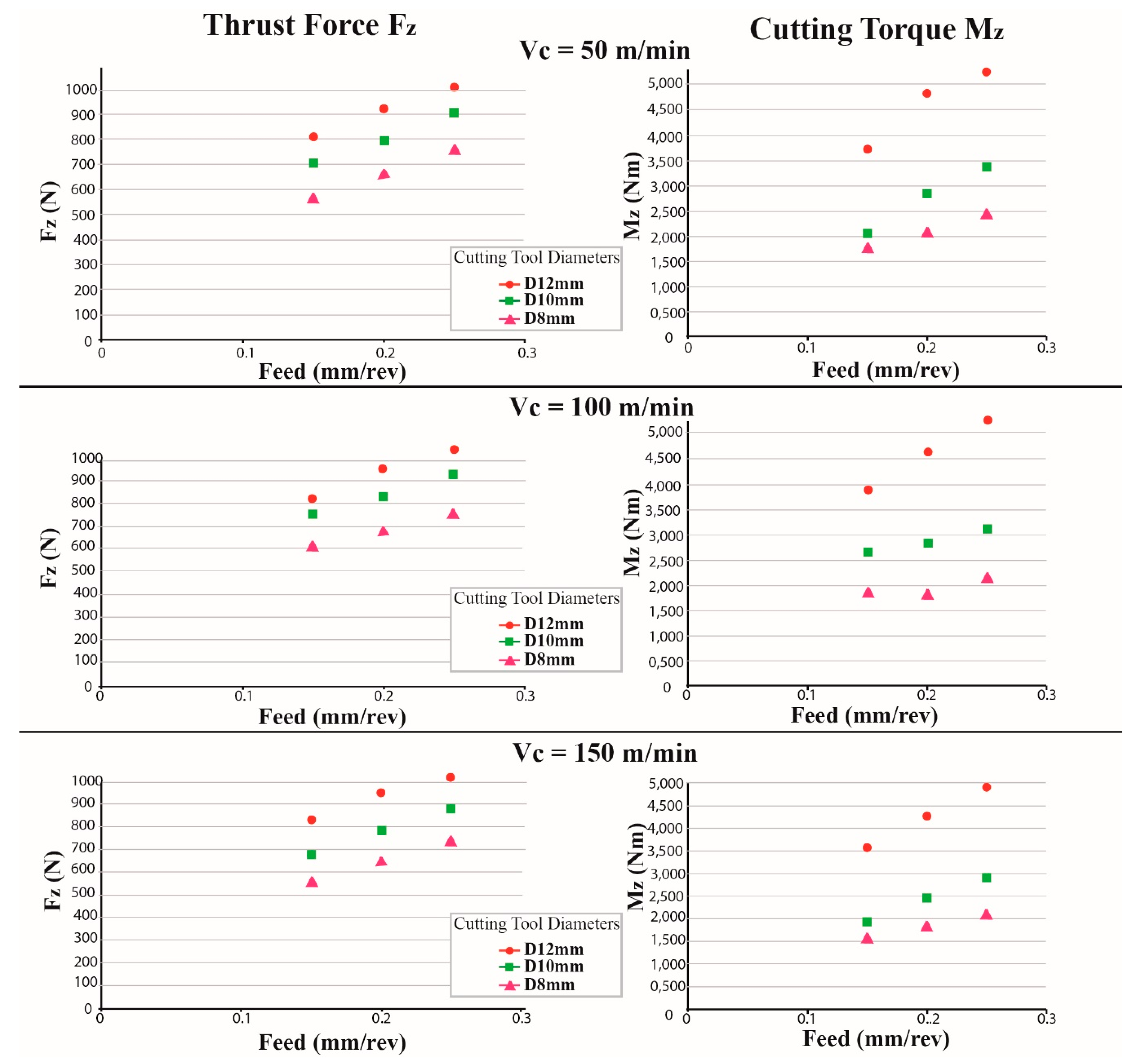

In the present study, a comparison of various neural network models, as well as multiple regression models, is conducted with a view toward determining the best performing model for the case of drilling Al6082-T6. As a relatively small dataset is used in this case, it is expected that the results of regression models and neural network models will be comparable, and so, it is interesting to find out whether there is a clear difference in the performance of these methods. The various neural network models are trained with different parameters, and the comparison between them is conducted using criteria relevant to the prediction error, both for training and testing datasets.

2. Artificial Neural Networks

Artificial neural networks are among the most important soft computing methods, widely used for a great range of applications spanning across various scientific fields. This method is able to successfully predict the outcome of a process by using pairs of input and output data in a learning procedure. In fact, this method imitates the function of biological neurons, which receive inputs, process them, and generate a suitable output. The most common ANN model, namely MLP, has a structure containing various interconnected layers of neurons, starting from an input layer and ending at an output layer. The middle layers are called hidden layers, and their number is variable, depending on the size and the characteristics of each problem. Each neuron is connected by artificial synapses to the neurons of the following layer, and a weighting coefficient, or weight, is assigned to each synapse. The inputs for each neuron are multiplied by the weighting coefficient of the respective synapse, and then, they are summed; after that, the output from each neuron is generated by using an activation function, which usually is a sigmoid function, such as the hyperbolic tangent.
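The computation performed by such a network can be illustrated with a minimal sketch of the forward pass of an MLP with one hidden layer. The function name `mlp_forward`, the bias terms, the linear output layer, and the layer sizes are assumptions made for illustration and are not taken from the models of this study.

```python
import numpy as np

def mlp_forward(x, W1, b1, W2, b2):
    """Forward pass of an MLP with one hidden layer (illustrative sketch).

    x  : (n_samples, n_inputs) inputs
    W1 : (n_inputs, n_hidden) weights of the input-to-hidden synapses
    b1 : (n_hidden,) hidden-layer biases (an assumption; not in the text)
    W2 : (n_hidden, n_outputs) weights of the hidden-to-output synapses
    b2 : (n_outputs,) output-layer biases (an assumption; not in the text)
    """
    # Inputs are multiplied by the synapse weights and summed,
    # then passed through the hyperbolic tangent activation.
    hidden = np.tanh(x @ W1 + b1)
    # Linear output layer, a common choice for regression (assumed here).
    return hidden @ W2 + b2

# Hypothetical network: 3 inputs, 4 hidden neurons, 1 output
rng = np.random.default_rng(0)
W1 = rng.normal(size=(3, 4))
b1 = np.zeros(4)
W2 = rng.normal(size=(4, 1))
b2 = np.zeros(1)

x = np.array([[0.5, -1.0, 2.0]])  # one sample with arbitrary values
y = mlp_forward(x, W1, b1, W2, b2)
```

The weights here are random placeholders; in practice they are determined by the learning process.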

The ANN model is created by a learning process containing three different stages, i.e., training, validation, and testing. For each stage, a part of the total input/output data is reserved, most commonly 70%, 15%, and 15%, respectively. The learning process is iterative and consists of the determination of suitable weight values according to real input/output data pairs. This process is called error backpropagation, as the difference between the predicted and actual output is first computed in the output layer, and then, the error is propagated through the network in the opposite direction, from the output to the input layer; after various iterations, or epochs, the network weights are properly adjusted so that the error is minimized. The training and validation stages both involve adjustment of the weights, and it is possible to stop the learning process if the error does not decrease after some epochs, a technique known as early stopping. Finally, during the testing stage, the trained model is checked for its generalization capabilities by providing it with unknown data from the testing data sample. Usually, the Mean Squared Error (MSE) is used for the determination of model performance:

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(Y_i - A_i\right)^2$$

where $n$ is the number of data samples, $Y_i$ are the predicted values, and $A_i$ the actual values.
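The learning procedure described above, with MSE as the error criterion, can be sketched as follows. This is a minimal illustration under stated assumptions and not the implementation used in this study: the data are synthetic, the network has one hidden layer with four tanh neurons, plain gradient descent is used, and the validation subset only monitors the error for early stopping. The learning rate, the `patience` value, and all variable names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data (assumption for illustration): y = 2*x1 - x2 + small noise
X = rng.uniform(-1.0, 1.0, size=(100, 2))
y = (2.0 * X[:, 0] - X[:, 1] + 0.01 * rng.normal(size=100)).reshape(-1, 1)

# 70% / 15% / 15% split into training, validation, and testing subsets
idx = rng.permutation(len(X))
tr, va, te = idx[:70], idx[70:85], idx[85:]

# One hidden layer with 4 tanh neurons and one linear output neuron
W1 = 0.5 * rng.normal(size=(2, 4))
b1 = np.zeros(4)
W2 = 0.5 * rng.normal(size=(4, 1))
b2 = np.zeros(1)

def forward(X, W1, b1, W2, b2):
    h = np.tanh(X @ W1 + b1)
    return h, h @ W2 + b2

def mse(pred, actual):
    return float(np.mean((pred - actual) ** 2))

lr, patience = 0.05, 20  # hypothetical settings
best_val, best_params, wait = np.inf, None, 0
initial_val = mse(forward(X[va], W1, b1, W2, b2)[1], y[va])

for epoch in range(2000):
    # Forward pass on the training subset
    h, pred = forward(X[tr], W1, b1, W2, b2)
    # Error at the output layer (gradient of MSE w.r.t. the prediction)
    d_out = 2.0 * (pred - y[tr]) / len(tr)
    # Propagate the error back to the hidden layer (tanh' = 1 - h^2)
    d_hid = (d_out @ W2.T) * (1.0 - h ** 2)
    # Adjust the weights by gradient descent
    W2 -= lr * (h.T @ d_out)
    b2 -= lr * d_out.sum(axis=0)
    W1 -= lr * (X[tr].T @ d_hid)
    b1 -= lr * d_hid.sum(axis=0)

    # Early stopping: keep the best weights seen on the validation subset
    val = mse(forward(X[va], W1, b1, W2, b2)[1], y[va])
    if val < best_val:
        best_val, wait = val, 0
        best_params = (W1.copy(), b1.copy(), W2.copy(), b2.copy())
    else:
        wait += 1
        if wait >= patience:
            break

# Generalization check on data not seen during learning
test_mse = mse(forward(X[te], *best_params)[1], y[te])
```

Restoring the weights with the lowest validation error, rather than the last ones, is one common way to apply early stopping.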

Furthermore, the Mean Absolute Percentage Error (MAPE) can also be employed to evaluate the results of neural network models:

$$\mathrm{MAPE} = \frac{100\%}{n}\sum_{i=1}^{n}\left|\frac{A_i - Y_i}{A_i}\right|$$
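Both error measures are straightforward to compute. The following sketch assumes the standard definitions of MSE and MAPE, with MAPE expressed in percent; the function names and the numerical values are hypothetical and serve only as an example.

```python
import numpy as np

def mse(predicted, actual):
    """Mean squared error between predicted and actual values."""
    predicted = np.asarray(predicted, dtype=float)
    actual = np.asarray(actual, dtype=float)
    return float(np.mean((predicted - actual) ** 2))

def mape(predicted, actual):
    """Mean absolute percentage error in percent (actual values must be nonzero)."""
    predicted = np.asarray(predicted, dtype=float)
    actual = np.asarray(actual, dtype=float)
    return float(100.0 * np.mean(np.abs((actual - predicted) / actual)))

# Hypothetical actual and predicted values of a measured quantity
actual = [100.0, 200.0, 400.0]
predicted = [110.0, 190.0, 400.0]

error_mse = mse(predicted, actual)    # (10^2 + 10^2 + 0^2) / 3
error_mape = mape(predicted, actual)  # 100 * (0.10 + 0.05 + 0.00) / 3
```

Unlike MSE, MAPE is scale-independent, which makes it convenient for comparing models of quantities with different units, such as force and torque.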

Finally, RBF-NN is constructed by using an appropriate radial function as the activation function for a neural network model with a single hidden layer. Most commonly, a Gaussian-type function is used as the activation function:

$$\varphi(r) = e^{-(\varepsilon r)^2}$$

where $\varepsilon$ is a small number and $r = \lVert x - x_i \rVert$, with $x$ being an input and $x_i$ the center of the radial basis function.

Apart from the widely used MLP and RBF-NN models, various other types of neural networks exist, as well as hybrid models combining ANN with other soft computing methods such as fuzzy logic. In addition to the learning and optimization ability of the neural networks, fuzzy logic systems are able to provide a means of reasoning to the system with the use of IF-THEN rules. ANFIS is a soft computing method with characteristics related both to neural networks and fuzzy logic. Generally, the creation of an ANFIS model has several similarities to the creation of an MLP model, but there are also some basic differences. This model has a structure similar to the MLP structure, but there are some additional layers, related to the membership functions of the Fuzzy Inference System (FIS). One of the most common fuzzy models used in ANFIS models is the Sugeno fuzzy model. Through the training process, fuzzy rules are formed, and an FIS is created. At first, the input data are converted into fuzzy sets through a fuzzification layer, and then, membership functions are generated. Membership functions can have various shapes such as triangular, trapezoidal, or Gaussian, among others. In the second layer, the number of nodes is equal to the number of fuzzy rules of the system; the number of fuzzy rules is determined using a suitable method such as the subtractive clustering method. The output of each second-layer node is the product of the input signals that it receives from the previous nodes. Then, in the third layer, the ratio of each rule’s firing strength to the sum of the firing strengths of all rules is calculated, and it is used in the defuzzification layer to determine the output values from each node. Finally, the total output is calculated by summation of the outputs of the fourth layer. The FIS parameters are updated by means of a learning algorithm in order to reduce the prediction error, as in the case of the MLP model.
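The layer-by-layer computation of an ANFIS model can be illustrated with a minimal forward-pass sketch. It assumes a first-order Sugeno system with Gaussian membership functions and one membership function per input and rule, as is typical when rules are generated by clustering. The function names and all parameter values are hypothetical, and the learning step that updates the parameters is omitted.

```python
import numpy as np

def gaussian_mf(x, center, sigma):
    """Gaussian membership function."""
    return np.exp(-0.5 * ((x - center) / sigma) ** 2)

def anfis_forward(x, centers, sigmas, consequents):
    """Forward pass of a first-order Sugeno ANFIS (illustrative sketch).

    x           : (n_inputs,) crisp input vector
    centers     : (n_rules, n_inputs) membership function centers
    sigmas      : (n_rules, n_inputs) membership function widths
    consequents : (n_rules, n_inputs + 1) linear coefficients plus constant
    """
    # Layer 1 (fuzzification): membership degree of each input for each rule
    mu = gaussian_mf(x, centers, sigmas)
    # Layer 2: firing strength of each rule = product of incoming signals
    w = mu.prod(axis=1)
    # Layer 3: ratio of each firing strength to the sum of all strengths
    w_norm = w / w.sum()
    # Layer 4 (defuzzification): weighted linear consequent of each rule
    rule_out = w_norm * (consequents[:, :-1] @ x + consequents[:, -1])
    # Layer 5: total output as the sum of the fourth-layer outputs
    return float(rule_out.sum())

# Hypothetical system with one input and two rules
centers = np.array([[-1.0], [1.0]])
sigmas = np.array([[1.0], [1.0]])
consequents = np.array([[0.0, 2.0],   # rule 1: output = 2
                        [0.0, 4.0]])  # rule 2: output = 4

# The input lies midway between the two centers, so both rules fire equally
output = anfis_forward(np.array([0.0]), centers, sigmas, consequents)
```

When the input moves toward one of the centers, the corresponding rule dominates and the output approaches that rule's consequent.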

5. Conclusions

In the present paper, various methods for the modeling of the drilling process, such as regression and neural network models, were compared in order to determine which is the most accurate and efficient one. These models were applied to cases of drilling Al 6082-T6 alloy under various process conditions. From this study, several conclusions can be drawn.

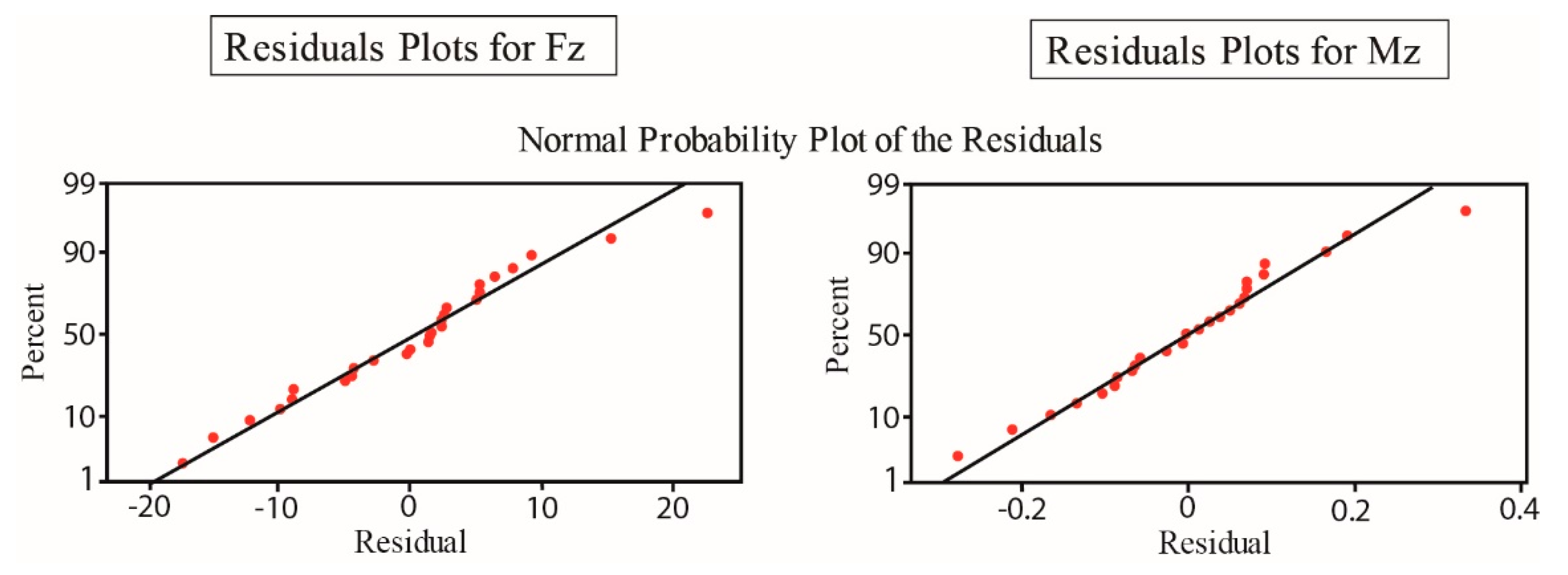

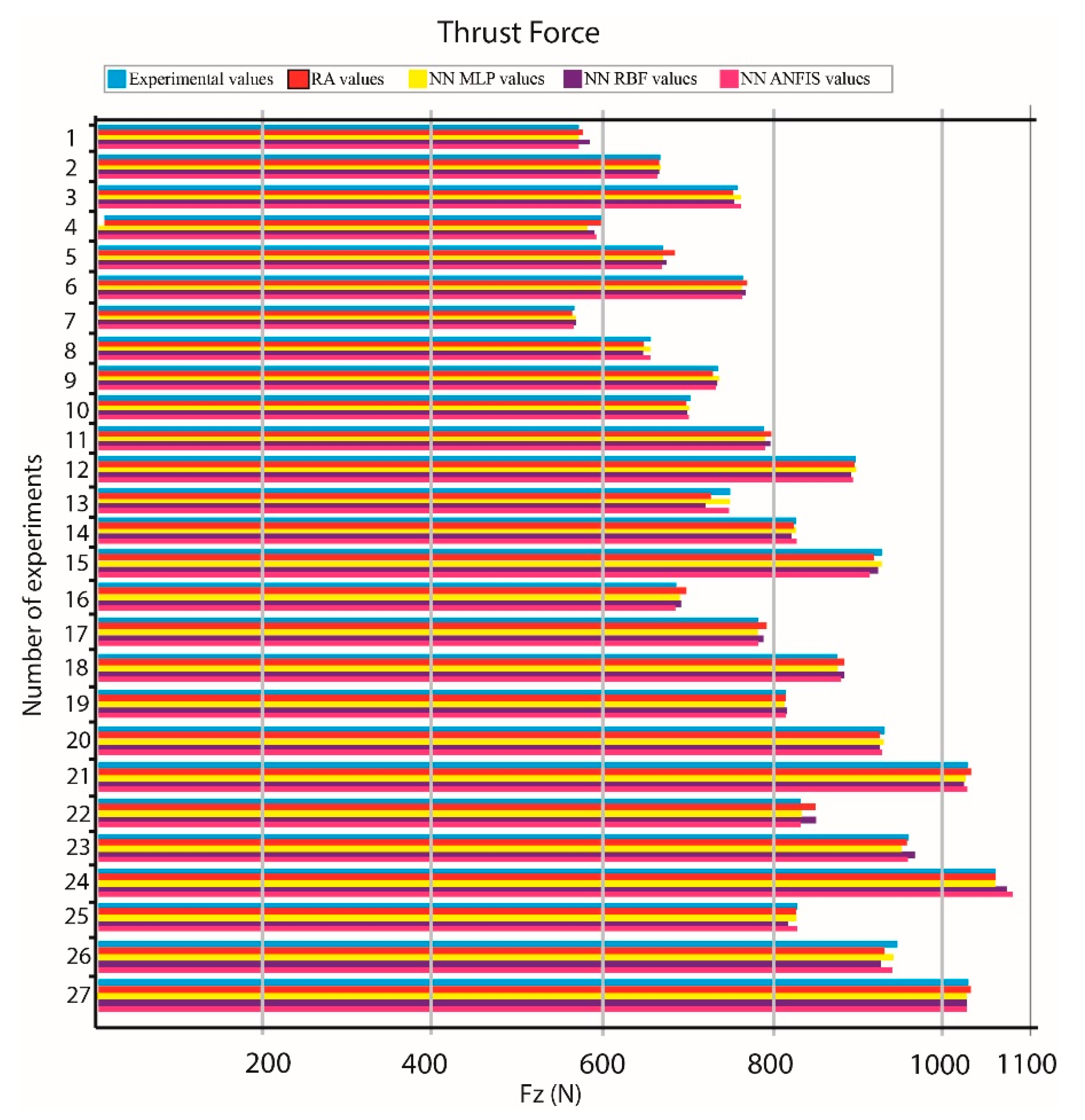

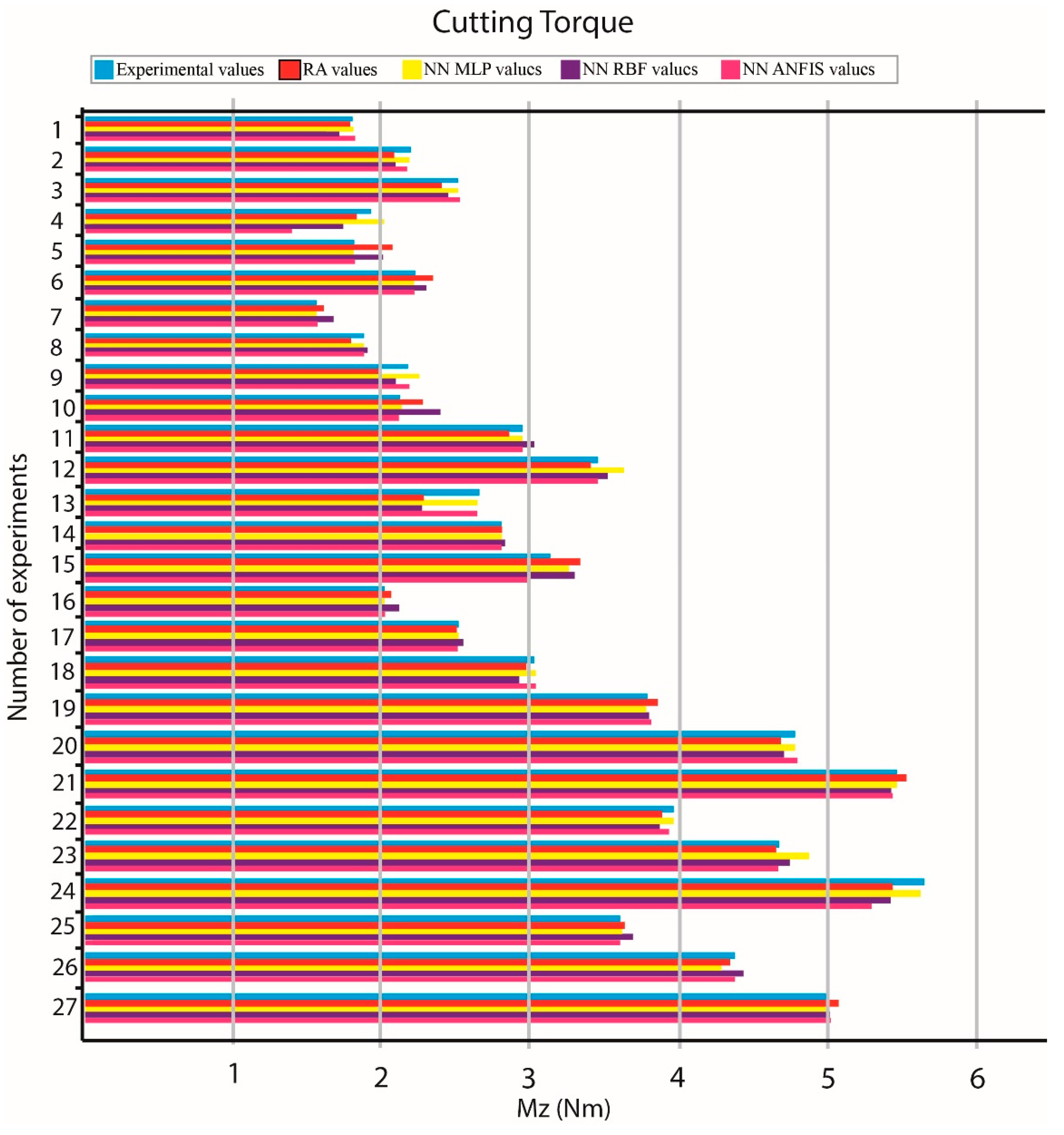

From the statistical analysis and derivation of the multiple regression models, it can be deduced that the developed models are sufficiently accurate, as they exhibited high correlation coefficient values, namely 99.6% and 98.9% for the Fz and Mz models, respectively, and also low MAPE values, namely 0.86305% and 3.0495% for the Fz and Mz models, respectively.

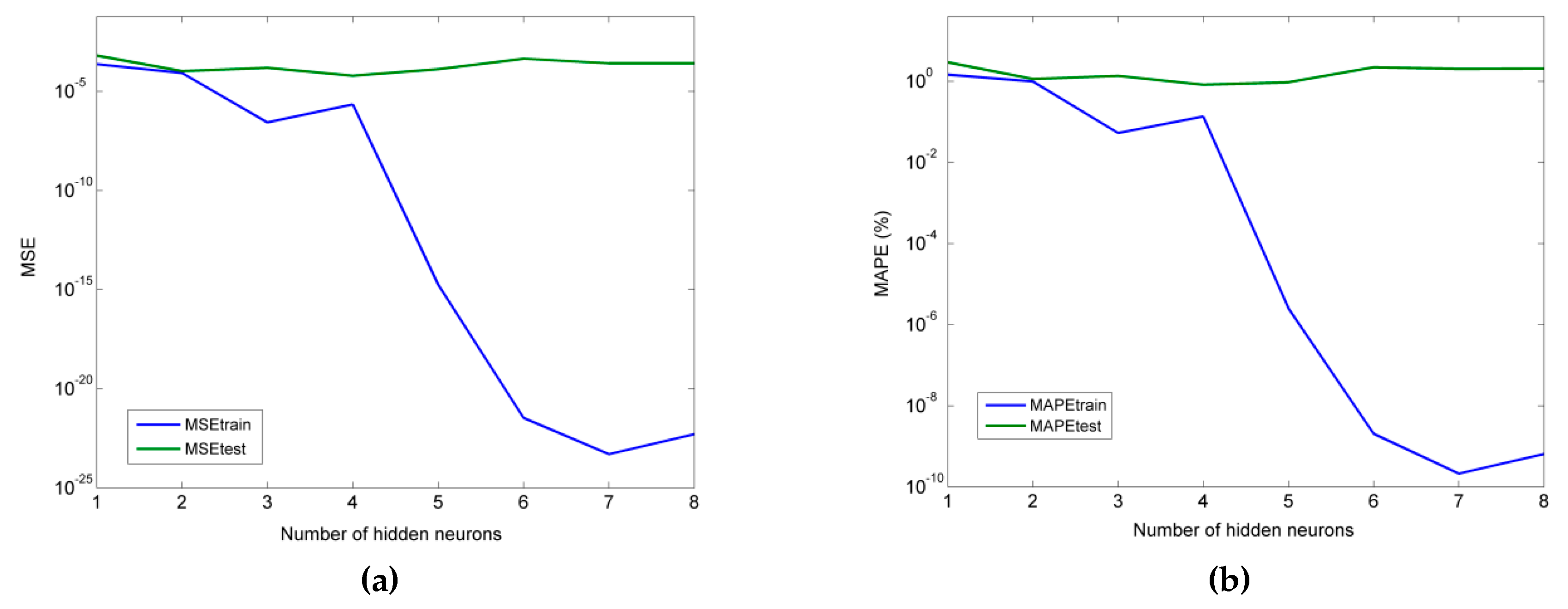

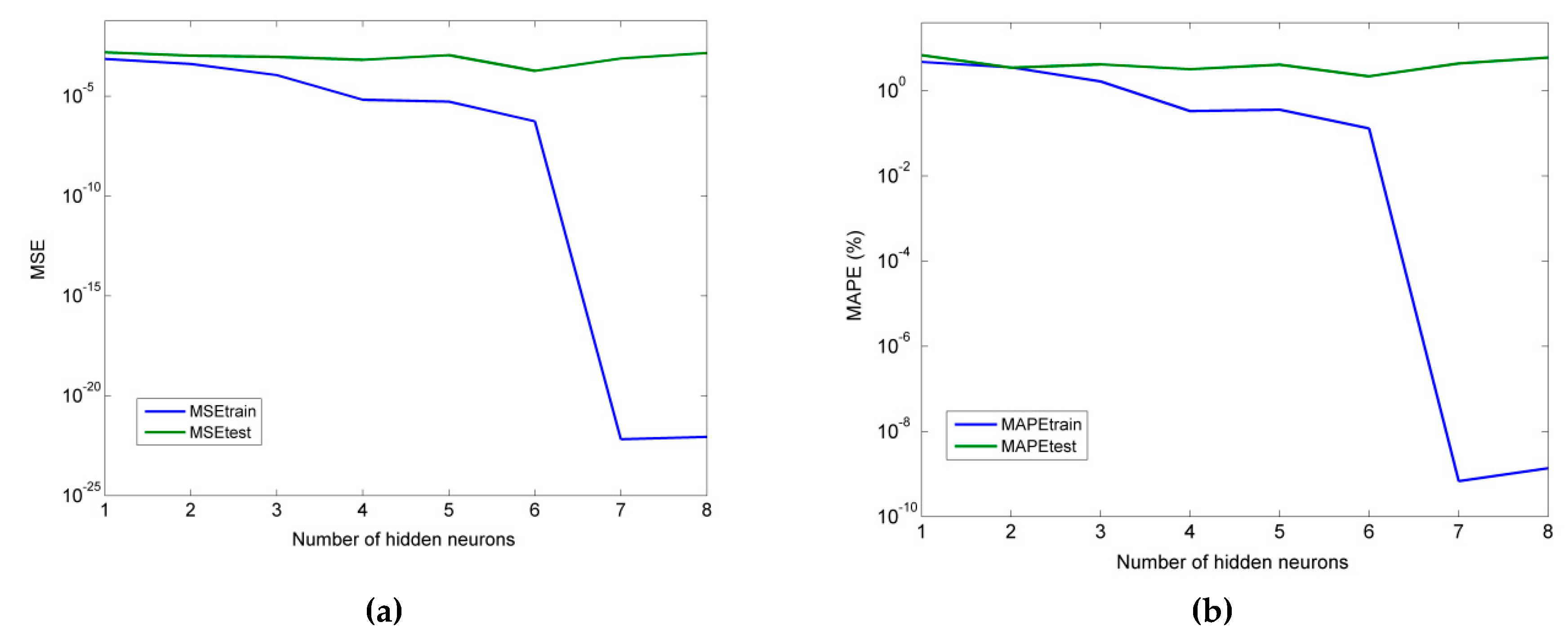

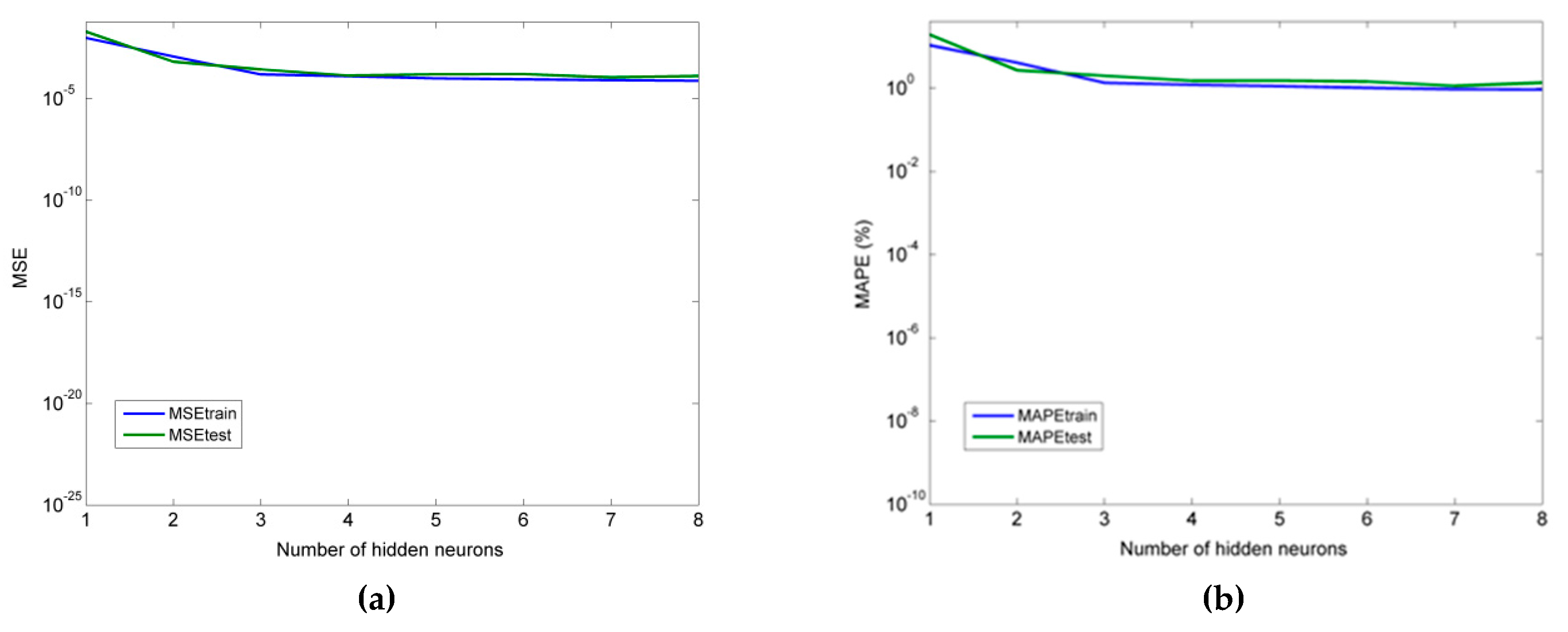

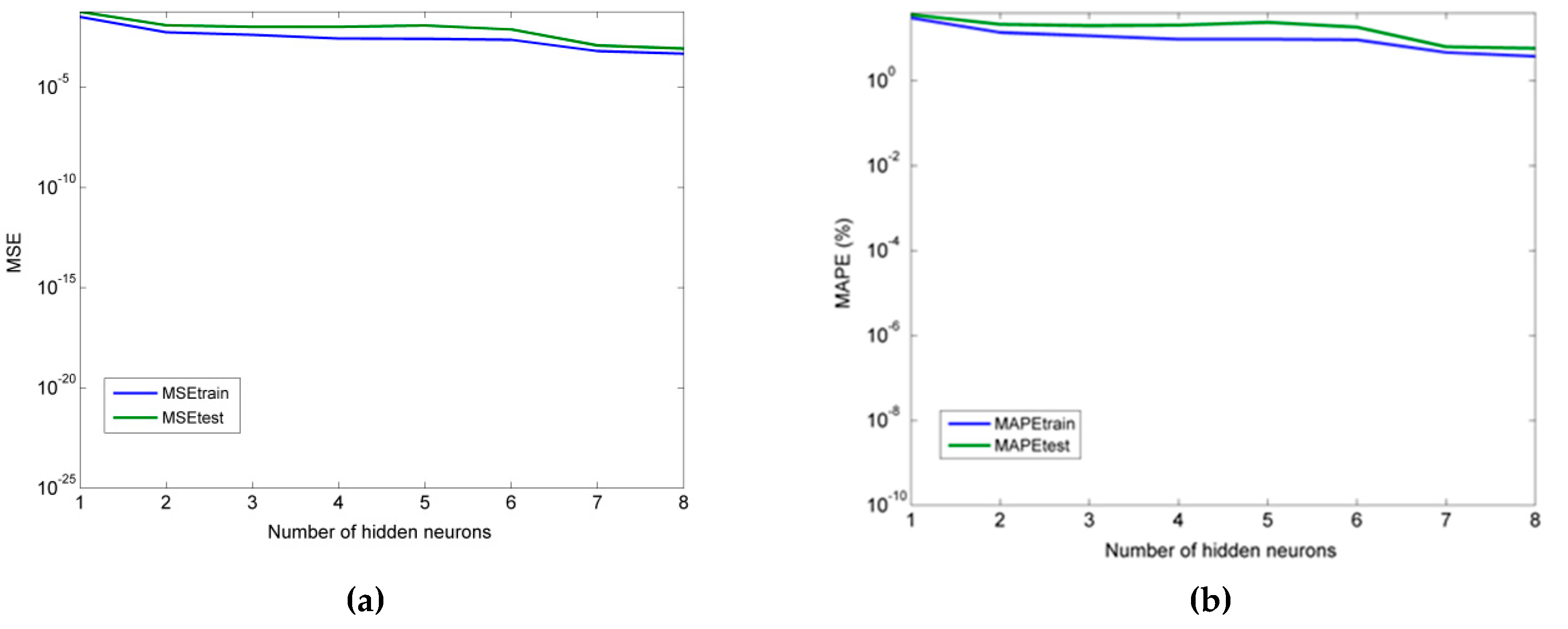

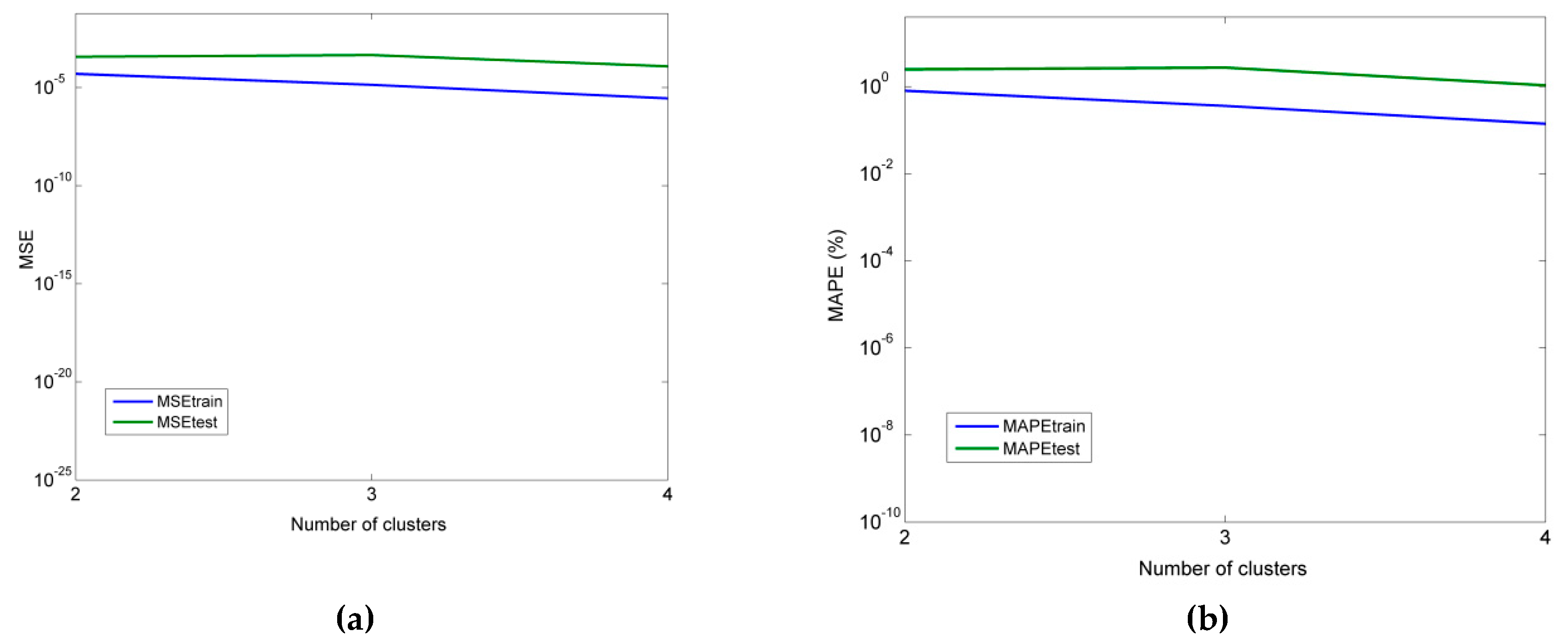

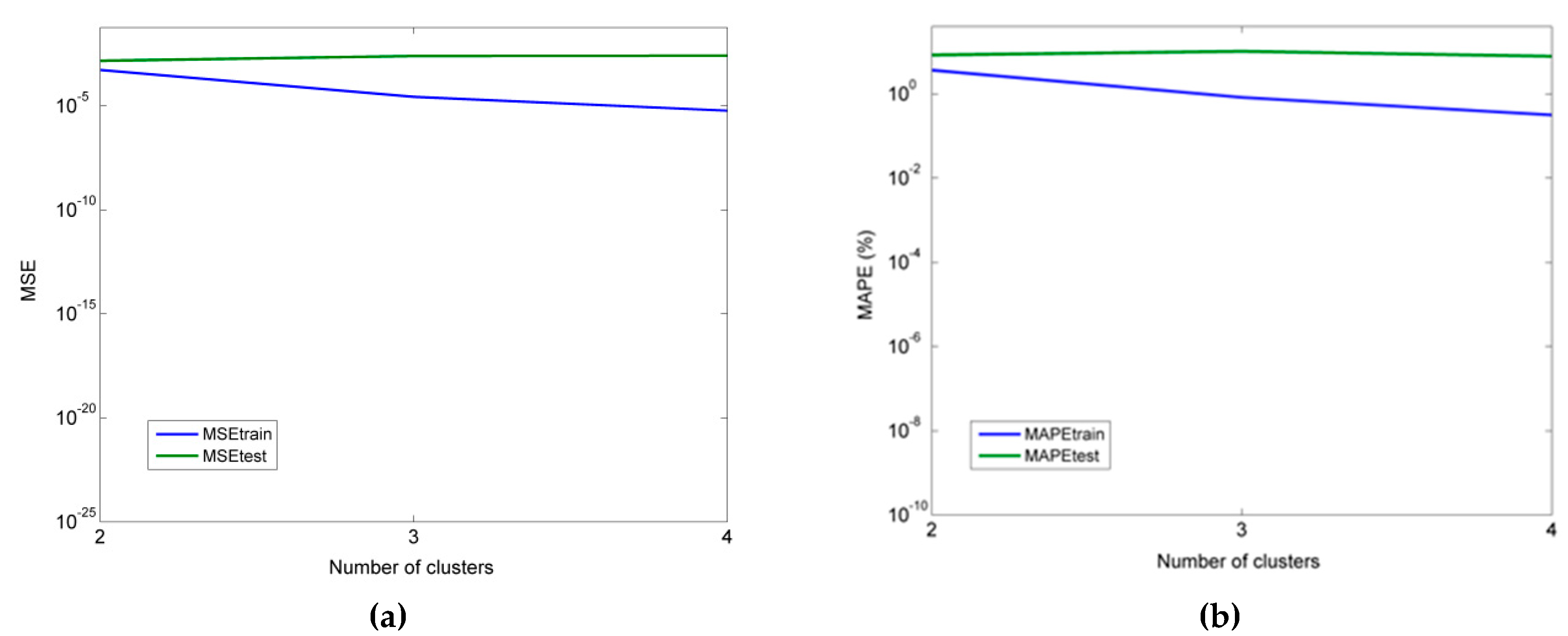

As for the neural network models, it was determined that an MLP model with four hidden neurons was more suitable for the prediction of thrust force, and an MLP model with six hidden neurons was more appropriate for the prediction of cutting torque. Furthermore, for the RBF-NN model, a higher number of hidden neurons was shown to lead to better accuracy, namely seven and eight in the cases of thrust force and torque, respectively. Finally, for the ANFIS models with fuzzy c-means clustering, the use of four clusters was the best choice in both the Fz and Mz cases.

From the comparison of all models, it was concluded that the MLP model performed better in all cases, followed by ANFIS, multiple regression, and RBF-NN. Thus, it was shown that MLP can be competitive with multiple regression models even for relatively small datasets and that ANFIS and RBF-NN are less suitable for cases with small datasets.