Abstract

Many problems in mathematical programming, such as minimax, penalization and fixed point problems, to mention a few, can be framed as equilibrium problems. Most techniques for solving such problems are iterative; in this paper, we introduce a new extragradient-like method for solving equilibrium problems in real Hilbert spaces under a Lipschitz-type condition on the bifunction. The advantage of the method is a variable stepsize formula that is updated at each iteration based on the previous iterations. The method also operates without prior knowledge of the Lipschitz-type constants. The weak convergence of the method is established under mild conditions on the bifunction. As applications, fixed-point theorems involving strict pseudocontractions and results for pseudomonotone variational inequalities are studied. We report various numerical results to illustrate the numerical behaviour of the proposed method and compare it with existing ones.

1. Introduction

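Let $\mathcal{C}$ be a nonempty, closed and convex subset of a real Hilbert space $\mathbb{H}$ and let $f : \mathbb{H} \times \mathbb{H} \to \mathbb{R}$ be a bifunction with $f(x, x) = 0$ for each $x \in \mathcal{C}$. The equilibrium problem [1,2] for f on the set $\mathcal{C}$ is defined in the following way:

$$\text{find } x^* \in \mathcal{C} \text{ such that } f(x^*, y) \ge 0 \quad \forall\, y \in \mathcal{C}. \tag{1}$$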

Problem (1) is very general: it includes many problems, such as fixed point problems, variational inequality problems, optimization problems, Nash equilibria of non-cooperative games, complementarity problems, saddle point problems, and vector optimization problems (for further details see [1,3,4]). The equilibrium problem is also known as the Ky Fan inequality [2]. This particular format of the equilibrium problem (1) was initiated by Muu and Oettli [5] in 1992, and its theoretical properties were further investigated by Blum and Oettli [1]. The construction of new optimization-based methods, the modification and extension of existing methods, and the examination of their convergence analysis form an important research direction in equilibrium problem theory. Many methods have been developed over the last few years to numerically solve equilibrium problems in both finite and infinite dimensional Hilbert spaces, e.g., extragradient algorithms [6,7,8,9,10,11,12,13,14], subgradient algorithms [15,16,17,18,19,20,21], inertial methods [22,23,24,25], and others in [26,27,28,29,30,31,32,33,34].

In particular, a proximal method [35] is an efficient way to solve equilibrium problems; it amounts to solving a minimization problem at each step. This approach is also regarded as the two-step extragradient-like method in [6], because of the early contribution of the Korpelevich [36] extragradient method for solving saddle point problems. More precisely, Tran et al. introduced a method in [6], in which an iterative sequence was generated in the following manner:

where and are Lipschitz constants. Moreover, is the value of x in set for which attains its minimum. The above method generates a weakly convergent iterative sequence, but operating it requires prior knowledge of the Lipschitz-type constants. These Lipschitz-type constants are normally unknown or hard to evaluate. In order to overcome this situation, Hieu et al. [12] introduced an extension of the method in [37] to solve equilibrium problems in the following manner: let and choose with , such that

where the stepsize sequence is updated in the following way:

Recently, Vinh and Muu proposed an inertial iterative algorithm in [38] to solve a pseudomonotone equilibrium problem. The key contribution is an inertial factor that is used to enhance the convergence speed of the iterative sequence. The iterative sequence was defined in the following manner:

- (i)

- Choose where a sequence satisfies the following conditions:

- (ii)

- Choose satisfying and

- (iii)

- Compute

Recently, another efficient inertial algorithm was proposed by Hieu et al. in [39] as follows: let and the sequence was defined in the following manner:

In this article, we concentrate on projection methods, which are well established and easy to execute due to their efficient numerical computation. Motivated by the works of [12,38], we formulate an inertial explicit subgradient extragradient method to solve the pseudomonotone equilibrium problem. These results can be seen as a modification of the methods that appeared in [6,12,38,39]. Under certain mild conditions, a weak convergence theorem is proved for the iterative sequence of the algorithm. Moreover, experimental studies indicate that the designed method tends to be more efficient than the existing methods presented in [38,39].

The remainder of the paper is arranged as follows: Section 2 contains the elementary results used in this paper. Section 3 contains our main algorithm and proves its convergence. Section 4 and Section 5 present applications of our main results. Section 6 reports numerical results that illustrate the computational effectiveness of the suggested method.

2. Preliminaries

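Let $h : \mathcal{C} \to \mathbb{R}$ be a convex function on a nonempty, closed and convex subset $\mathcal{C}$ of a real Hilbert space $\mathbb{H}$. The subdifferential of h at $x \in \mathcal{C}$ is defined by

$$\partial h(x) = \{ z \in \mathbb{H} : h(y) - h(x) \ge \langle z, y - x \rangle, \ \forall\, y \in \mathcal{C} \}.$$

Let $\mathcal{C}$ be a nonempty, closed and convex subset of a real Hilbert space $\mathbb{H}$. The normal cone of $\mathcal{C}$ at $x \in \mathcal{C}$ is defined by

$$N_{\mathcal{C}}(x) = \{ z \in \mathbb{H} : \langle z, y - x \rangle \le 0, \ \forall\, y \in \mathcal{C} \}.$$

The metric projection $P_{\mathcal{C}}(x)$ of $x \in \mathbb{H}$ onto a closed and convex subset $\mathcal{C}$ of $\mathbb{H}$ is defined by

$$P_{\mathcal{C}}(x) = \arg\min_{y \in \mathcal{C}} \| y - x \|.$$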

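Now, consider the following definitions of monotonicity of a bifunction (see [1,40] for details). A bifunction $f : \mathbb{H} \times \mathbb{H} \to \mathbb{R}$ on $\mathcal{C}$ for $\gamma > 0$ is said to be

- (1) γ-strongly monotone if $f(x, y) + f(y, x) \le -\gamma \|x - y\|^2$ for all $x, y \in \mathcal{C}$;

- (2) monotone if $f(x, y) + f(y, x) \le 0$ for all $x, y \in \mathcal{C}$;

- (3) γ-strongly pseudomonotone if $f(x, y) \ge 0 \Longrightarrow f(y, x) \le -\gamma \|x - y\|^2$ for all $x, y \in \mathcal{C}$;

- (4) pseudomonotone if $f(x, y) \ge 0 \Longrightarrow f(y, x) \le 0$ for all $x, y \in \mathcal{C}$.

We have the following implications from the above definitions:

$$(1) \Longrightarrow (2) \Longrightarrow (4) \quad \text{and} \quad (1) \Longrightarrow (3) \Longrightarrow (4).$$

In general, the converses are not true. A bifunction f is said to satisfy the Lipschitz-type condition [41] on a set $\mathcal{C}$ if there exist two constants $c_1, c_2 > 0$, such that

$$f(x, z) \le f(x, y) + f(y, z) + c_1 \|x - y\|^2 + c_2 \|y - z\|^2 \quad \forall\, x, y, z \in \mathcal{C}.$$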
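As an illustration of the Lipschitz-type condition, consider the bifunction $f(x, y) = \langle L(x), y - x \rangle$ built from an operator L that is Lipschitz continuous with constant $\ell$. The symbol $\ell$ and this particular choice of f are assumptions made here for illustration; it is the bifunction usually associated with variational inequalities. Under these assumptions, a short derivation suggests that the condition holds with $c_1 = c_2 = \ell/2$:

```latex
\begin{align*}
f(x,y) + f(y,z) - f(x,z)
  &= \langle L(x), y - x\rangle + \langle L(y), z - y\rangle - \langle L(x), z - x\rangle \\
  &= \langle L(y) - L(x), z - y\rangle \\
  &\ge -\|L(y) - L(x)\|\,\|z - y\| \\
  &\ge -\ell\,\|x - y\|\,\|y - z\| \\
  &\ge -\tfrac{\ell}{2}\|x - y\|^2 - \tfrac{\ell}{2}\|y - z\|^2 .
\end{align*}
```

Rearranging gives $f(x, z) \le f(x, y) + f(y, z) + \tfrac{\ell}{2}\|x - y\|^2 + \tfrac{\ell}{2}\|y - z\|^2$, where the last step uses $2ab \le a^2 + b^2$.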

Lemma 1

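([42]). Let $\mathcal{C}$ be a nonempty, closed and convex subset of $\mathbb{H}$ and let $P_{\mathcal{C}}$ be the metric projection from $\mathbb{H}$ onto $\mathcal{C}$.

- (i) Let $y \in \mathcal{C}$ and $x \in \mathbb{H}$; we have $\|x - P_{\mathcal{C}}(x)\|^2 + \|P_{\mathcal{C}}(x) - y\|^2 \le \|x - y\|^2$;

- (ii) $z = P_{\mathcal{C}}(x)$ if and only if $\langle x - z, y - z \rangle \le 0$ for all $y \in \mathcal{C}$;

- (iii) For any $y \in \mathcal{C}$ and $x \in \mathbb{H}$, $\|x - P_{\mathcal{C}}(x)\| \le \|x - y\|$.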
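The standard properties of the metric projection can be checked numerically on a simple set. The sketch below is only an illustration: it assumes the set is the closed Euclidean ball of a given radius in a finite-dimensional space, and the helper names (`project_ball`, `check_projection_properties`) are hypothetical. It tests the three usual inequalities, with p denoting the projection of x and y a point of the set: $\|x - p\|^2 + \|p - y\|^2 \le \|x - y\|^2$, $\langle x - p, y - p \rangle \le 0$, and $\|x - p\| \le \|x - y\|$.

```python
import math
import random


def dot(a, b):
    """Euclidean inner product of two vectors given as lists."""
    return sum(s * t for s, t in zip(a, b))


def sub(a, b):
    """Componentwise difference a - b."""
    return [s - t for s, t in zip(a, b)]


def project_ball(x, radius=1.0):
    """Metric projection of x onto the closed ball {y : ||y|| <= radius}."""
    norm = math.sqrt(dot(x, x))
    if norm <= radius:
        return list(x)
    return [radius * t / norm for t in x]


def check_projection_properties(trials=200, dim=5, seed=0, tol=1e-9):
    """Return True if the three projection inequalities hold on random samples."""
    rng = random.Random(seed)
    for _ in range(trials):
        x = [rng.uniform(-3.0, 3.0) for _ in range(dim)]                 # arbitrary point
        y = project_ball([rng.uniform(-3.0, 3.0) for _ in range(dim)])   # point of the set
        p = project_ball(x)
        d_xp, d_py, d_xy = sub(x, p), sub(p, y), sub(x, y)
        # ||x - p||^2 + ||p - y||^2 <= ||x - y||^2
        if dot(d_xp, d_xp) + dot(d_py, d_py) > dot(d_xy, d_xy) + tol:
            return False
        # <x - p, y - p> <= 0
        if dot(d_xp, sub(y, p)) > tol:
            return False
        # ||x - p|| <= ||x - y||
        if math.sqrt(dot(d_xp, d_xp)) > math.sqrt(dot(d_xy, d_xy)) + tol:
            return False
    return True
```

A ball is used because its projection has a closed form; for other sets the same checks apply once a projection routine is available.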

Lemma 2

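([43,44]). Let $h : \mathcal{C} \to \mathbb{R}$ be a convex, lower semicontinuous and subdifferentiable function on $\mathcal{C}$, where $\mathcal{C}$ is a nonempty, convex and closed subset of a Hilbert space $\mathbb{H}$. Then, $x \in \mathcal{C}$ is a minimizer of the function h if and only if $0 \in \partial h(x) + N_{\mathcal{C}}(x)$, where $\partial h(x)$ and $N_{\mathcal{C}}(x)$ denote the subdifferential of h at x and the normal cone of $\mathcal{C}$ at x, respectively.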

Lemma 3

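([45]). Let $\{x_n\}$ be a sequence in $\mathbb{H}$ and $\mathcal{C} \subset \mathbb{H}$, such that the following conditions are satisfied:

- (i) for every $x \in \mathcal{C}$, the limit $\lim_{n \to \infty} \|x_n - x\|$ exists;

- (ii) each sequentially weak cluster point of the sequence $\{x_n\}$ belongs to $\mathcal{C}$.

Then, $\{x_n\}$ converges weakly to some element in $\mathcal{C}$.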

Lemma 4

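([46]). Let $\{a_n\}$ and $\{b_n\}$ be sequences of non-negative real numbers satisfying $a_{n+1} \le a_n + b_n$ for each $n \in \mathbb{N}$. If $\sum_{n=1}^{\infty} b_n < \infty$, then $\lim_{n \to \infty} a_n$ exists.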

Lemma 5

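([47]). For every $x, y \in \mathbb{H}$ and $\alpha \in \mathbb{R}$,

$$\|\alpha x + (1 - \alpha) y\|^2 = \alpha \|x\|^2 + (1 - \alpha) \|y\|^2 - \alpha (1 - \alpha) \|x - y\|^2.$$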
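The norm identity for affine combinations, $\|\alpha x + (1-\alpha) y\|^2 = \alpha\|x\|^2 + (1-\alpha)\|y\|^2 - \alpha(1-\alpha)\|x-y\|^2$, is what makes inertial steps tractable: an extrapolated point of the form $x_n + \theta (x_n - x_{n-1})$ is such a combination with $\alpha = 1 + \theta$, which lies outside $[0, 1]$. The following minimal sketch checks the identity numerically for real α, including values outside the unit interval; the function names and the finite-dimensional setting are assumptions for illustration.

```python
import random


def sq_norm(v):
    """Squared Euclidean norm of a vector given as a list."""
    return sum(t * t for t in v)


def identity_gap(x, y, alpha):
    """Left-hand side minus right-hand side of the norm identity."""
    combo = [alpha * s + (1.0 - alpha) * t for s, t in zip(x, y)]
    diff = [s - t for s, t in zip(x, y)]
    rhs = (alpha * sq_norm(x) + (1.0 - alpha) * sq_norm(y)
           - alpha * (1.0 - alpha) * sq_norm(diff))
    return sq_norm(combo) - rhs


def max_identity_gap(trials=100, dim=4, seed=0):
    """Largest absolute gap over random vectors and random real alpha."""
    rng = random.Random(seed)
    worst = 0.0
    for _ in range(trials):
        x = [rng.uniform(-2.0, 2.0) for _ in range(dim)]
        y = [rng.uniform(-2.0, 2.0) for _ in range(dim)]
        alpha = rng.uniform(-2.0, 3.0)  # deliberately not restricted to [0, 1]
        worst = max(worst, abs(identity_gap(x, y, alpha)))
    return worst
```

The gap should be at the level of floating-point rounding for every sampled α.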

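Suppose that the bifunction f satisfies the following conditions:

- (f1) f is pseudomonotone on $\mathcal{C}$ and $f(x, x) = 0$ for every $x \in \mathcal{C}$;

- (f2) f satisfies the Lipschitz-type condition on $\mathbb{H}$ with constants $c_1 > 0$ and $c_2 > 0$;

- (f3) $\limsup_{n \to \infty} f(x_n, y) \le f(x^*, y)$ for every $y \in \mathcal{C}$ and $\{x_n\} \subset \mathcal{C}$ satisfying $x_n \rightharpoonup x^*$;

- (f4) $f(x, \cdot)$ is convex and subdifferentiable on $\mathbb{H}$ for all $x \in \mathbb{H}$.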

3. The Modified Extragradient Algorithm for the Problem (1) and Its Convergence Analysis

We provide a method consisting of two strongly convex minimization problems with an inertial term and an explicit stepsize formula, which are used to enhance the convergence rate of the iterative sequence and to make the algorithm independent of the Lipschitz constants. For the sake of simplicity in the presentation, we will use the notation and follow the conventions and The detailed method is provided below (Algorithm 1):

| Algorithm 1 (Modified Extragradient Algorithm for the Problem (1)) |

|
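To make the ingredients described above concrete (an inertial extrapolation, two projection-type steps, and a stepsize that is updated without knowledge of a Lipschitz constant), the following is a minimal sketch for the variational inequality special case, where each minimization step reduces to a projection. It is not a transcription of Algorithm 1: the plain two-projection extragradient scheme, the stepsize rule $\lambda_{n+1} = \min\{\lambda_n, \mu \|w_n - y_n\| / \|F(w_n) - F(y_n)\|\}$, the constant inertial factor, and all names and default parameters are assumptions chosen for illustration.

```python
import math


def norm(v):
    """Euclidean norm of a vector given as a list."""
    return math.sqrt(sum(t * t for t in v))


def diff(a, b):
    """Componentwise difference a - b."""
    return [s - t for s, t in zip(a, b)]


def project_box(v, lo, hi):
    """Projection onto the box [lo, hi]^n (illustrative feasible set)."""
    return [min(max(t, lo), hi) for t in v]


def inertial_extragradient(F, project, x0, lam0=0.5, mu=0.4, theta=0.2,
                           tol=1e-10, max_iter=10000):
    """Sketch of an inertial extragradient iteration with adaptive stepsize.

    F        operator of the variational inequality (assumed monotone, Lipschitz)
    project  projection onto the feasible set
    Returns (approximate solution, final stepsize, iterations used).
    """
    x_prev, x, lam = list(x0), list(x0), lam0
    for k in range(max_iter):
        # Inertial extrapolation: w = x + theta * (x - x_prev).
        w = [a + theta * (a - b) for a, b in zip(x, x_prev)]
        Fw = F(w)
        # First projection step.
        y = project([a - lam * g for a, g in zip(w, Fw)])
        if norm(diff(w, y)) <= tol:      # w is (numerically) a solution
            return y, lam, k
        Fy = F(y)
        # Second projection step, evaluated with the operator at y.
        z = project([a - lam * g for a, g in zip(w, Fy)])
        # Stepsize update: non-increasing, no Lipschitz constant needed.
        den = norm(diff(Fw, Fy))
        if den > 0.0:
            lam = min(lam, mu * norm(diff(w, y)) / den)
        x_prev, x = x, z
    return x, lam, max_iter
```

For example, with `F = lambda v: [v[0] - 2.0, v[1] - 0.5]` and `project = lambda v: project_box(v, -1.0, 1.0)`, the solution of the variational inequality is the projection of (2, 0.5) onto the box, so the iterates should approach (1, 0.5). Because the stepsize rule only ever takes a minimum with the previous value, the generated stepsizes are non-increasing, in line with the monotonicity property stated for the stepsize sequence in this section.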

Lemma 6.

The sequence is monotonically decreasing with a lower bound and it converges to

Proof.

From the definition of the sequence , it follows that the sequence is monotonically decreasing. It is given that f satisfies the Lipschitz-type condition with and . Let , such that

The above implies that has a lower bound Moreover, there exists a fixed real number , such that □

Remark 1.

Because of the summability of and the expression (5) implies that

that implies

Lemma 7.

Suppose that is a bifunction satisfying the conditions (f1)–(f4). For each , we have

Proof.

From the value of , we have

For some , there exists , such that

The above expression implies that

For given , imply that ∀ It provides that

From , we have

Substituting in (11) gives

Because , then provides that

From the formula of we obtain

Similar to expression (11), the value of gives that

By substituting in the above expression, we have

We have the given formulas:

Combining the above expressions with (18), we have

□

Theorem 1.

Assume that is a bifunction satisfying the conditions (f1)–(f4) and belongs to the solution set Then, the sequences and generated through Algorithm 1 converge weakly to In addition,

Proof.

By the definition of and Lemma 5, we obtain

By Lemma 7 and expression (19), we obtain

Because then there exists a fixed number , such that

Subsequently, there exists a fixed real number such that

By definition of the , we have

From the definition of in Algorithm 1, we obtain

The expression (22) can also be written as

The equality (8) implies that

Letting in (24) implies that

Letting in (31), we obtain

By using the Cauchy inequality and expression (32), we obtain

It follows from the expressions (27), (29) and (34) that the sequences and are bounded. Now, in order to use Lemma 3, it is necessary to show that every weak sequential limit point of lies in the set Consider z to be a weak limit point of i.e., there is a subsequence of that converges weakly to Because , then also converges weakly to z and so Now, it remains to show that From relation (11), due to and (17), we have

where It follows from (28), (32), (33) and the boundedness of that the right-hand side tends to zero. Due to condition (f3) and implies

Because , it follows that This proves that By Lemma 3, and converge weakly to as

Finally, we prove that Let For any , we have

Clearly, the above implies that sequence is bounded. Next, we need to show that is a Cauchy sequence. By using Lemma 1(iii) and (23), we have

Thus, Lemma 4 provides the existence of Next, take (23) ∀ we have

Suppose that for through Lemma 1(i) and (39), we have

The existence of and the summability of the series imply ∀ As a result, is a Cauchy sequence and, due to the closedness of the set the sequence strongly converges to Next, it remains to show that From Lemma 1(ii) and , we have

Because of and , we obtain

implies that □

4. Applications to Solve Fixed Point Problems

We now apply the results of Section 3 to solve fixed-point problems involving κ-strict pseudo-contractions. Let be a mapping; the fixed point problem is formulated in the following manner:

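A mapping $T : \mathcal{C} \to \mathcal{C}$ is said to be

- (i) sequentially weakly continuous on $\mathcal{C}$ if $T(x_n) \rightharpoonup T(x^*)$ for every sequence $\{x_n\} \subset \mathcal{C}$ satisfying $x_n \rightharpoonup x^*$;

- (ii) κ-strict pseudo-contraction [48] on $\mathcal{C}$ if $\|Tx - Ty\|^2 \le \|x - y\|^2 + \kappa \|(x - Tx) - (y - Ty)\|^2$ for all $x, y \in \mathcal{C}$, which is equivalent to $\langle Tx - Ty, x - y \rangle \le \|x - y\|^2 - \frac{1 - \kappa}{2} \|(x - Tx) - (y - Ty)\|^2$ for all $x, y \in \mathcal{C}$.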

Note: if we define Then, the problem (1) converts into the fixed point problem with The value of in Algorithm 1 converts into the following:

In a similar way to the expression (44), we obtain

As a consequence of the results in Section 3, we have the following fixed point theorem:

Corollary 1.

Assume that is a weakly continuous κ-strict pseudocontraction with Let the sequences and be generated in the following way:

- (i)

- Choose and satisfying the following condition:

- (ii)

- Choose satisfying , such that

- (iii)

- Compute , where

- (iv)

- Revise the stepsize in the following way:

Then, the sequences and converge weakly to
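To see why the minimization steps reduce to projections in the fixed-point setting, one can take the bifunction $f(x, y) = \langle x - T(x), y - x \rangle$. This choice is an assumption here, since the defining formula is not reproduced above; it is the usual one for this reduction. For a generic point w and stepsize $\lambda > 0$ (symbols introduced only for this sketch), completing the square gives:

```latex
\begin{align*}
\arg\min_{y \in \mathcal{C}} \Big\{ \lambda \langle w - T(w), y - w \rangle + \tfrac{1}{2} \|w - y\|^2 \Big\}
  &= \arg\min_{y \in \mathcal{C}} \tfrac{1}{2} \big\| y - \big( w - \lambda (w - T(w)) \big) \big\|^2 \\
  &= P_{\mathcal{C}}\big( (1 - \lambda) w + \lambda T(w) \big).
\end{align*}
```

The first equality holds because the two objectives differ only by the constant $\tfrac{\lambda^2}{2}\|w - T(w)\|^2$, which does not depend on y.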

5. Application to Solve Variational Inequality Problems

We now apply the results of Section 3 to solve variational inequality problems involving a pseudomonotone and Lipschitz continuous operator. Let be an operator; the variational inequality problem is formulated as follows:

A mapping is said to be

- (i)

- L-Lipschitz continuous on if

- (ii)

- monotone on if

- (iii)

- pseudomonotone on if

Note: let Thus, problem (1) translates into the problem (VIP) with From the value of we have

In a similar way to the expression (49), we obtain

Suppose that a mapping L satisfies the following conditions:

- (L1)

- L is monotone on with ;

- (L2)

- L is L-Lipschitz continuous on with ;

- (L3)

- L is pseudomonotone on with ; and,

- (L4)

- and satisfying

Next, if L is monotone, then (L4) can be removed. The condition (L4) is used to define and satisfy the conditions (L4). The condition (f3) is required to show see (36). The condition (L4) is required to show Further, to show that By the monotonicity of the operator L, we have

By letting with expression (35), implies that

Therefore, provides ∀ Let ∀ Since for , we have

That is every Due to , while , we have for all consequently

Corollary 2.

Let be a mapping satisfying the conditions (L1)–(L2). Assume that the sequences and are generated in the following manner:

- (i)

- Choose and , such that

- (ii)

- Let satisfy and

- (iii)

- Compute where

- (iv)

- Stepsize is revised in the following way:

Subsequently, the sequences and converge weakly to

Corollary 3.

Let be a mapping satisfying the conditions (L2)–(L4). Assume that the sequences and are generated in the following manner:

- (i)

- Choose and , such that

- (ii)

- Choose satisfying , such that

- (iii)

- Compute where

- (iv)

- The stepsize is updated in the following way:

Subsequently, the sequences and converge weakly to

6. Numerical Experiments

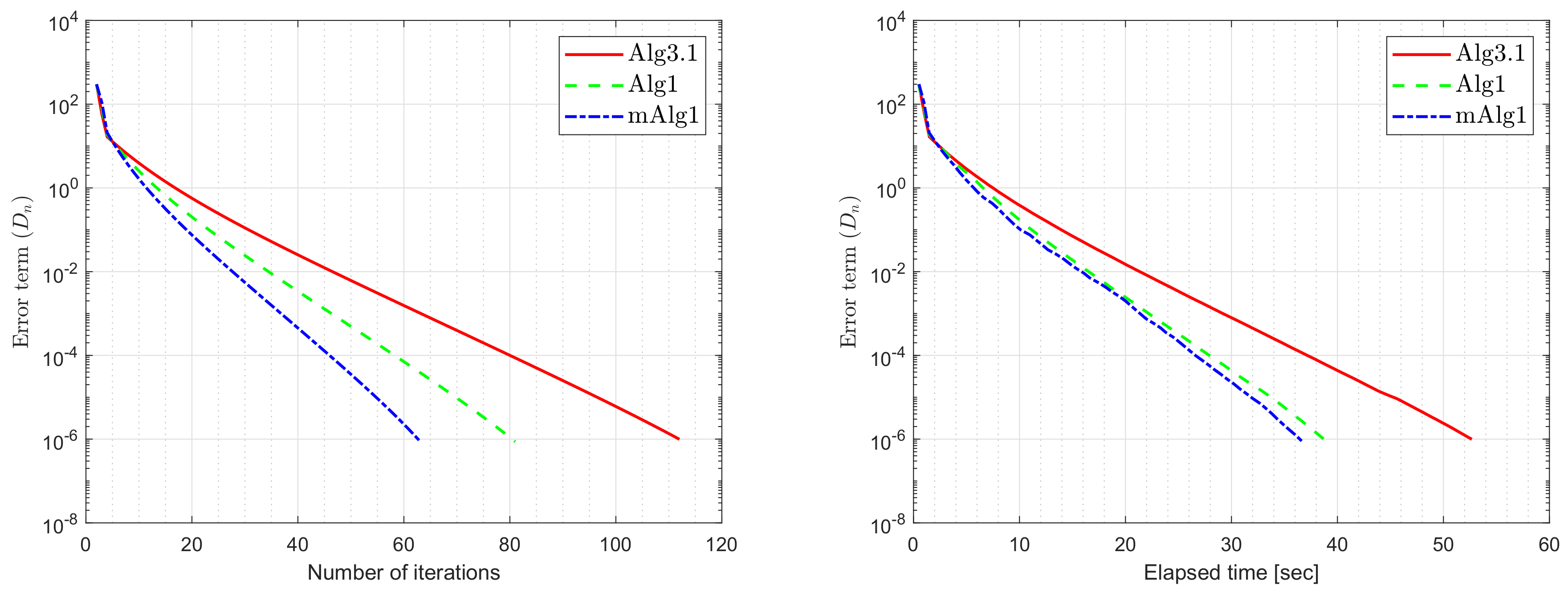

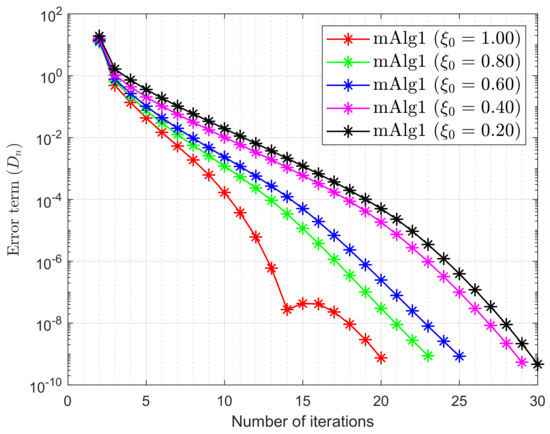

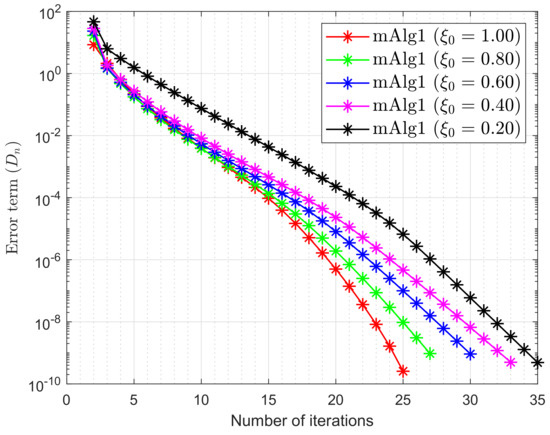

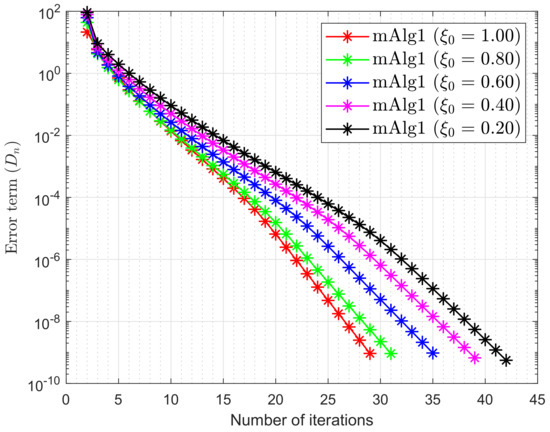

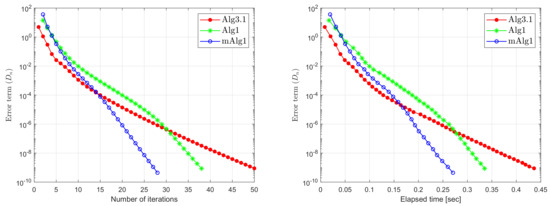

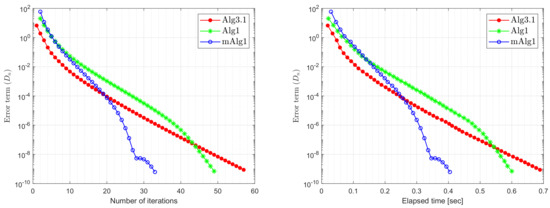

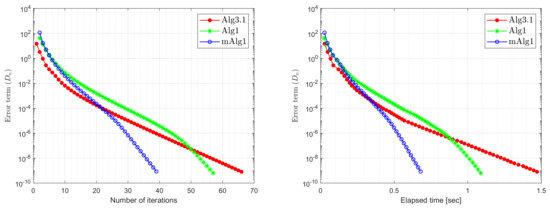

This section presents computational results to demonstrate the effectiveness of Algorithm 1 when compared to Algorithm 3.1 in [39] and Algorithm 1 in [38].

- (i)

- For Algorithm 3.1 (Alg3.1) in [39]:

- (ii)

- For Algorithm 1 (Alg1) in [38]:

- (iii)

- For Algorithm 1 (mAlg1):

Example 1.

Consider the Nash–Cournot equilibrium model found in [6]. The bifunction f takes the following form:

where with matrices P, Q of order m and Lipschitz constants are (see [6] for more details). In our case, are taken at random (choose diagonal matrices and with random entries from and , respectively. Two random orthogonal matrices and provide a positive semidefinite matrix and a negative semidefinite matrix Finally, set and ) and the elements of q are taken arbitrarily from The set is taken as

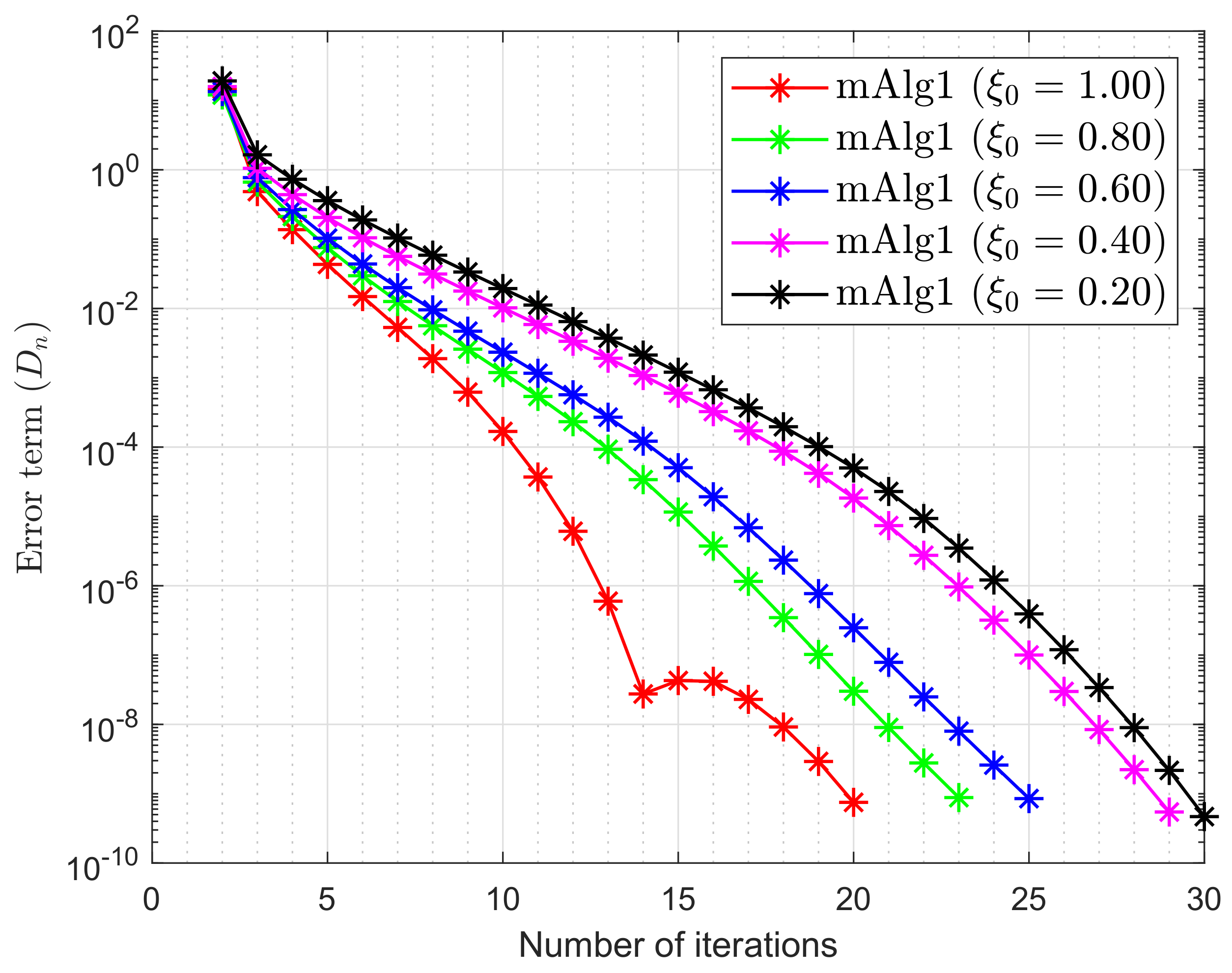

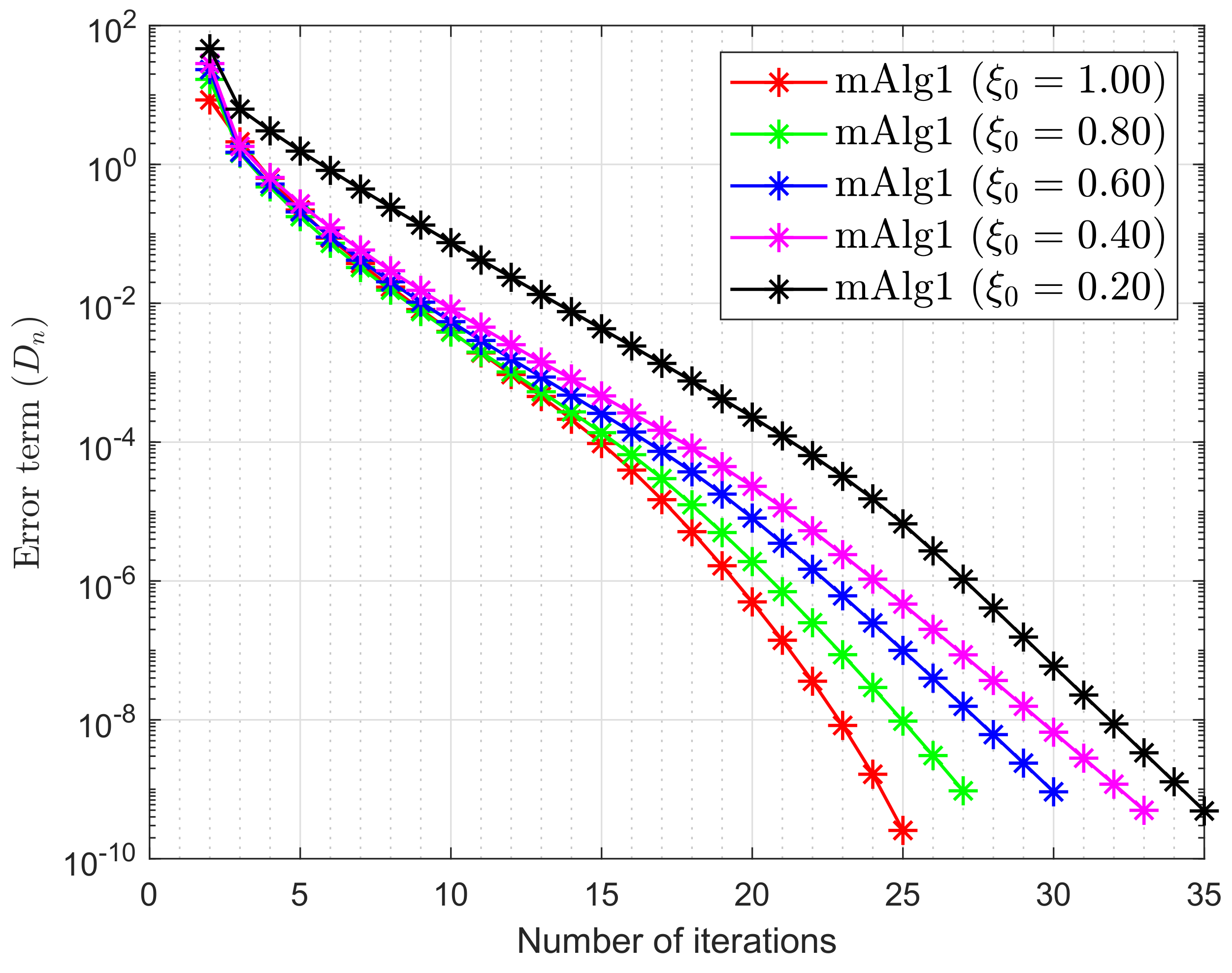

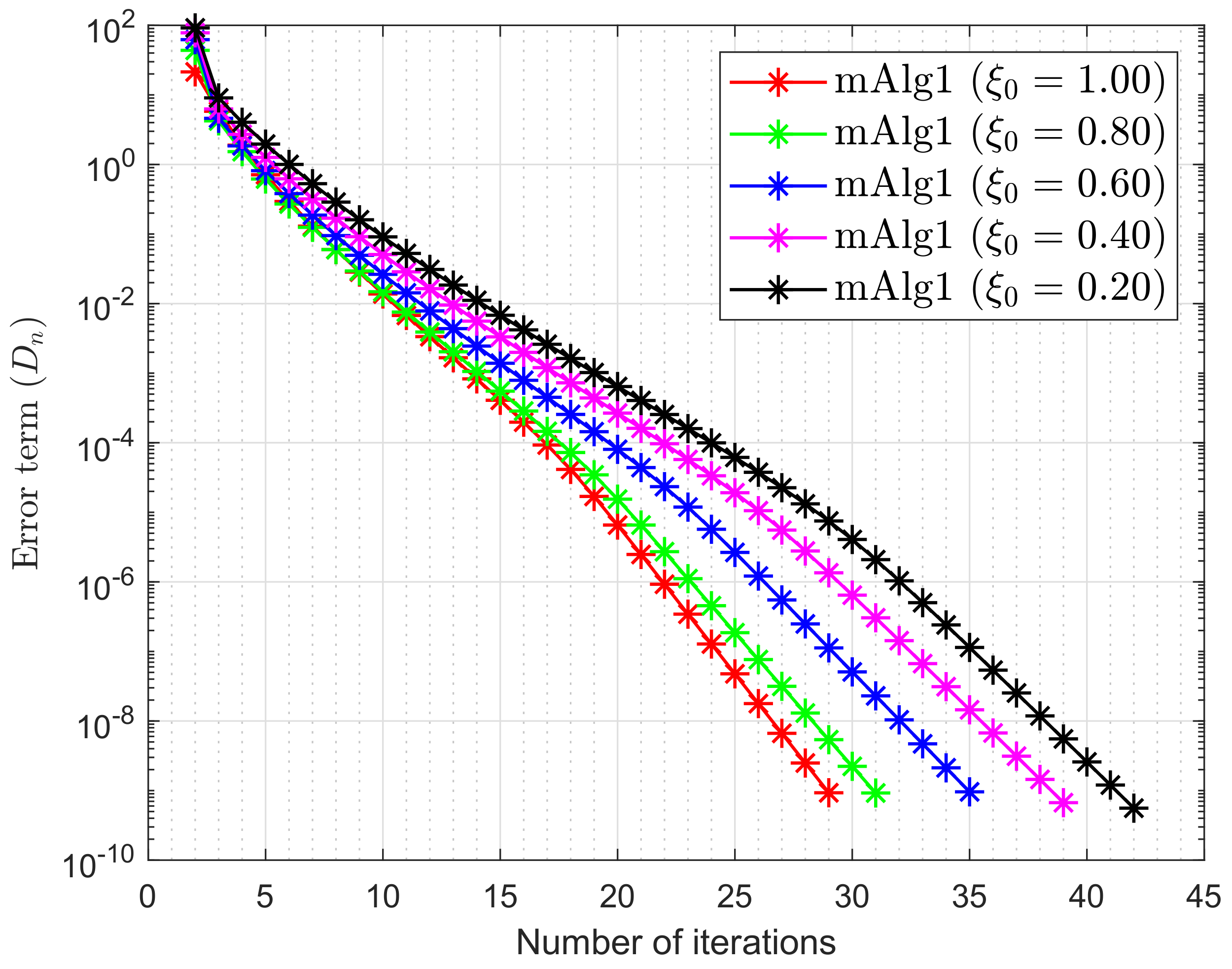

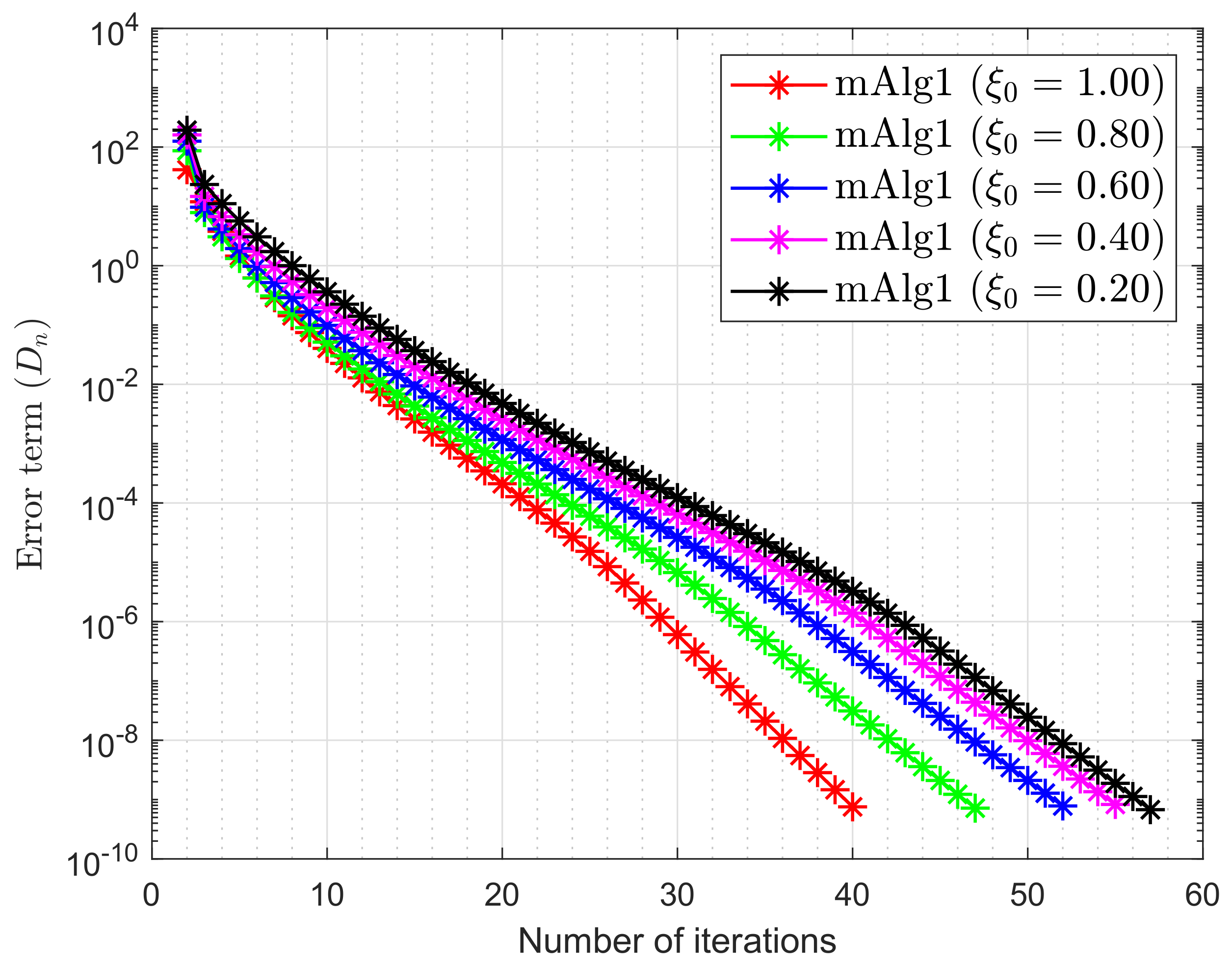

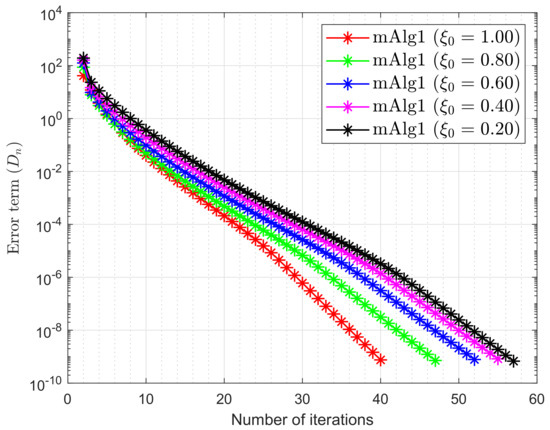

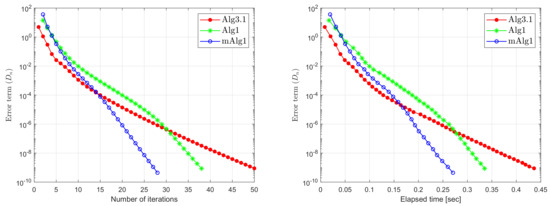

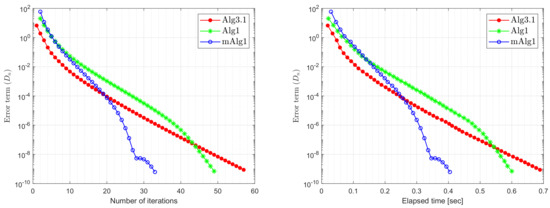

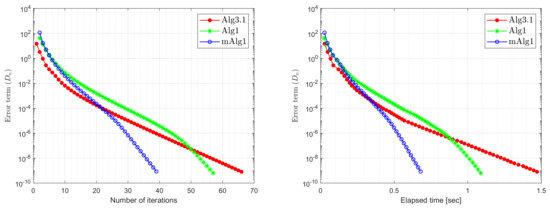

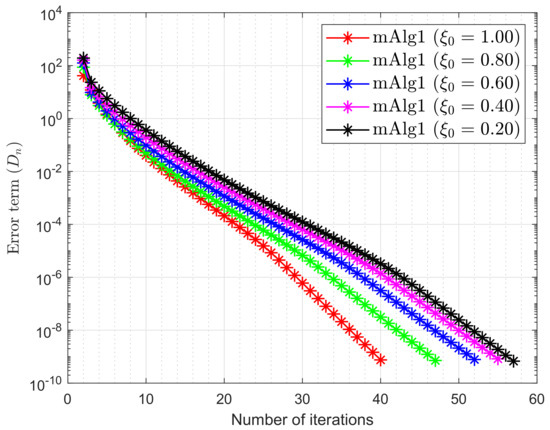

Tables 1 and 2 and Figures 1–8 present the numerical results by taking and

Table 1.

Example 1: Algorithm 1 numerical behaviour by letting different options for and m.

Table 2.

Example 1: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38].

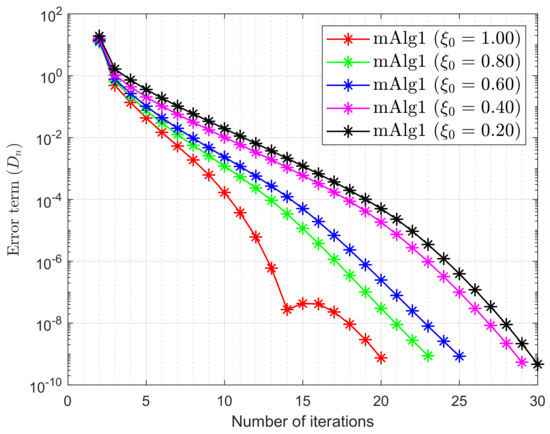

Figure 1.

Example 1: numerical behaviour of Algorithm 1 by letting different options for , while m = 10.

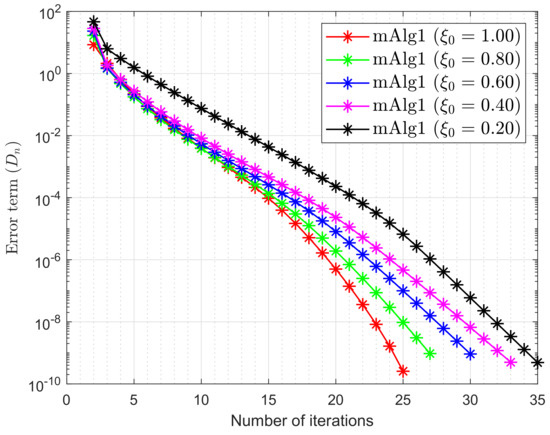

Figure 2.

Example 1: numerical behaviour of Algorithm 1 by letting different options for , while m = 20.

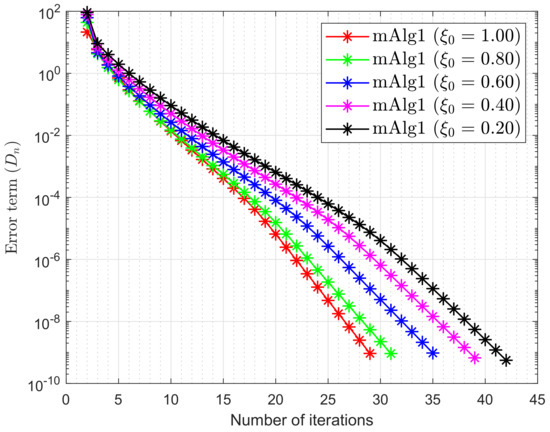

Figure 3.

Example 1: numerical behaviour of Algorithm 1 by letting different options for while m = 50.

Figure 4.

Example 1: numerical behaviour of Algorithm 1 by letting different options for while m = 100.

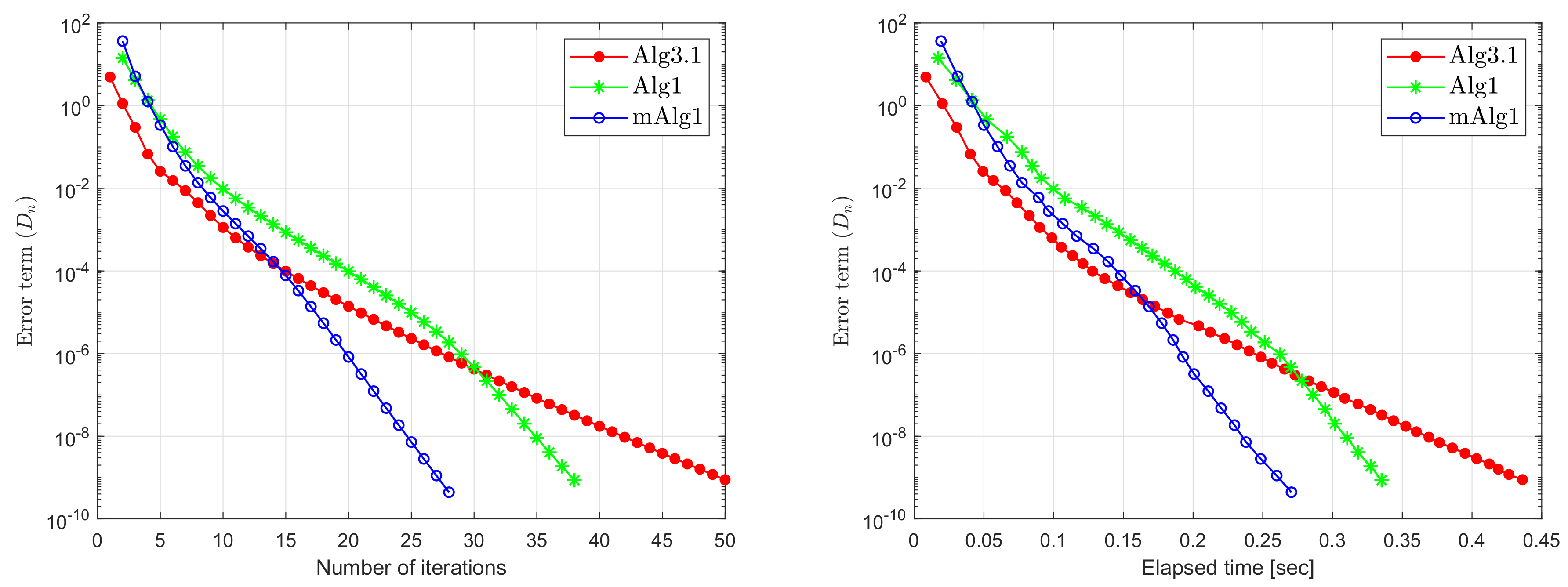

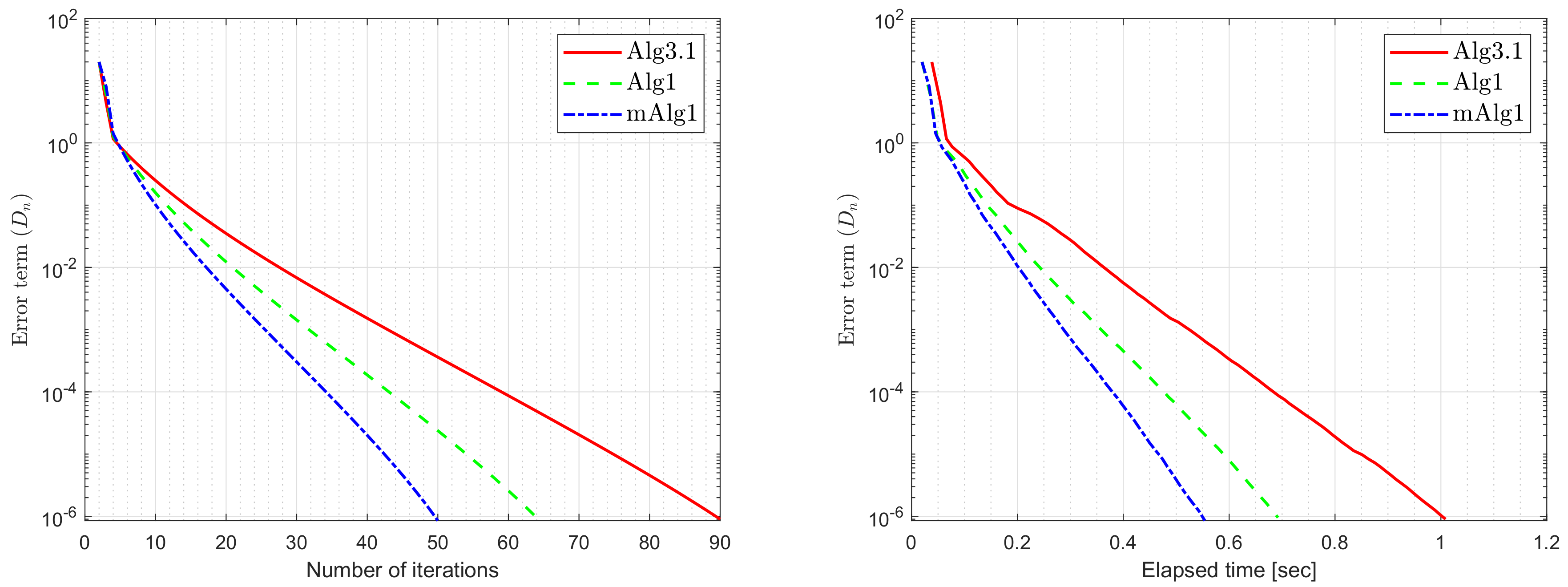

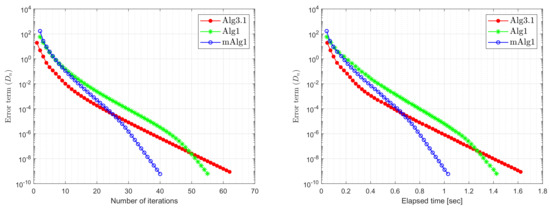

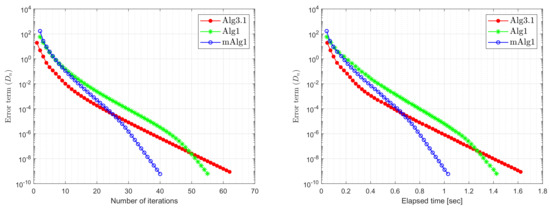

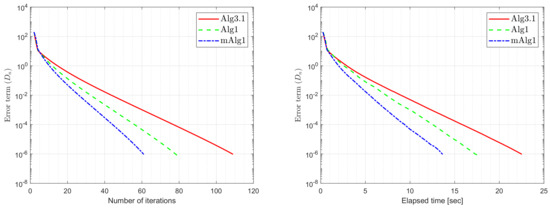

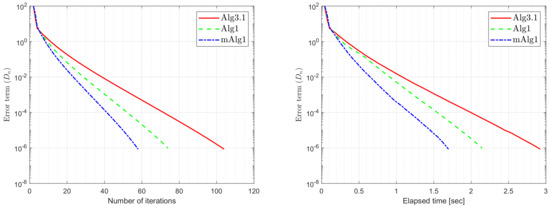

Figure 5.

Example 1: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38] while m = 60.

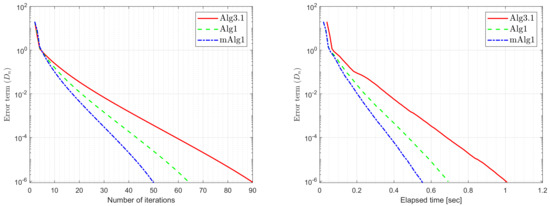

Figure 6.

Example 1: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38] while m = 120.

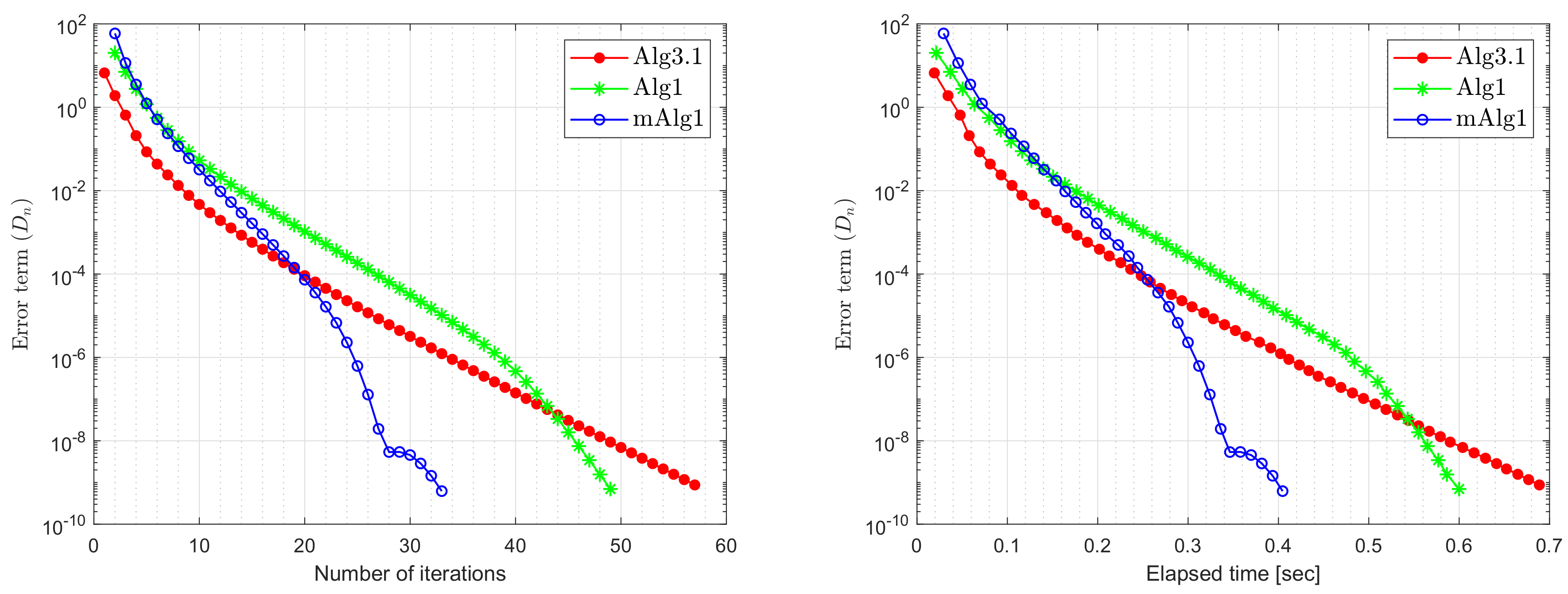

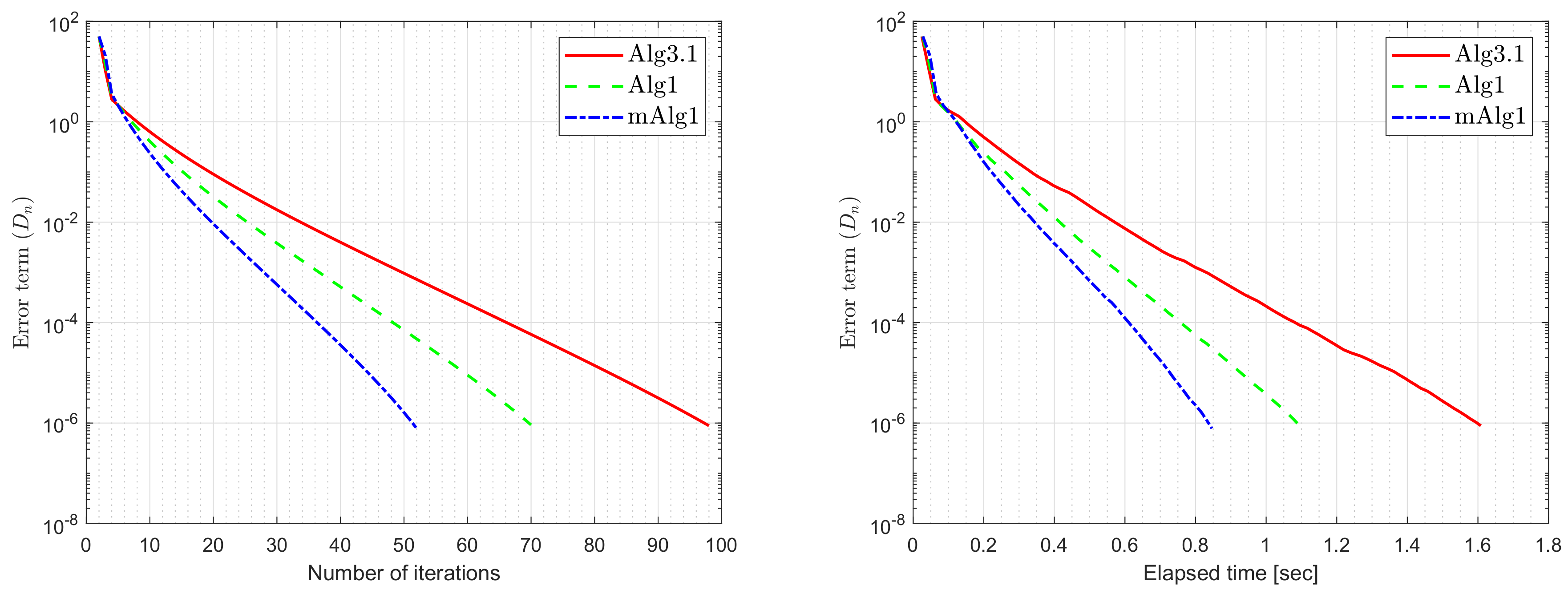

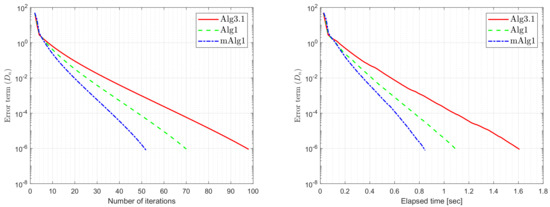

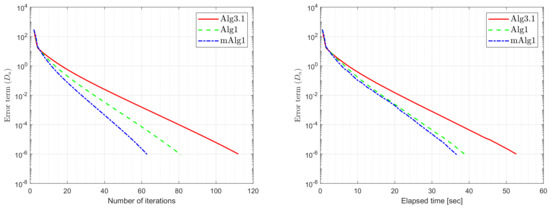

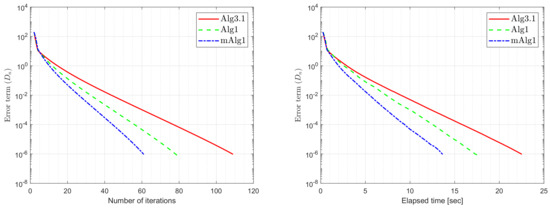

Figure 7.

Example 1: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38] while m = 200.

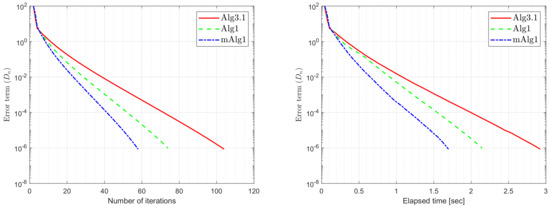

Figure 8.

Example 1: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38] while m = 300.

Example 2.

Suppose that is a bifunction defined in the following way:

where The bifunction f is Lipschitz-type continuous with constants and satisfies the conditions (f1)–(f4). In order to evaluate the best possible value of the control parameters, a numerical test is performed by varying the inertial factor The numerical comparison results are shown in Table 3 by using and

Table 3.

Example 2: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38].

Example 3.

Let be a Hilbert space with an inner product and the induced norm The set Suppose that is defined by

where for every and The projection on set is computed in the following way:

Table 4 reports the numerical results by using stopping criterion and letting

Table 4.

Example 3: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38].

Example 4.

Assume that a bifunction f is defined by

where

and

Let the matrix E of order m be given in the following way:

where Figures 9–13 and Table 5 report the numerical results by taking and

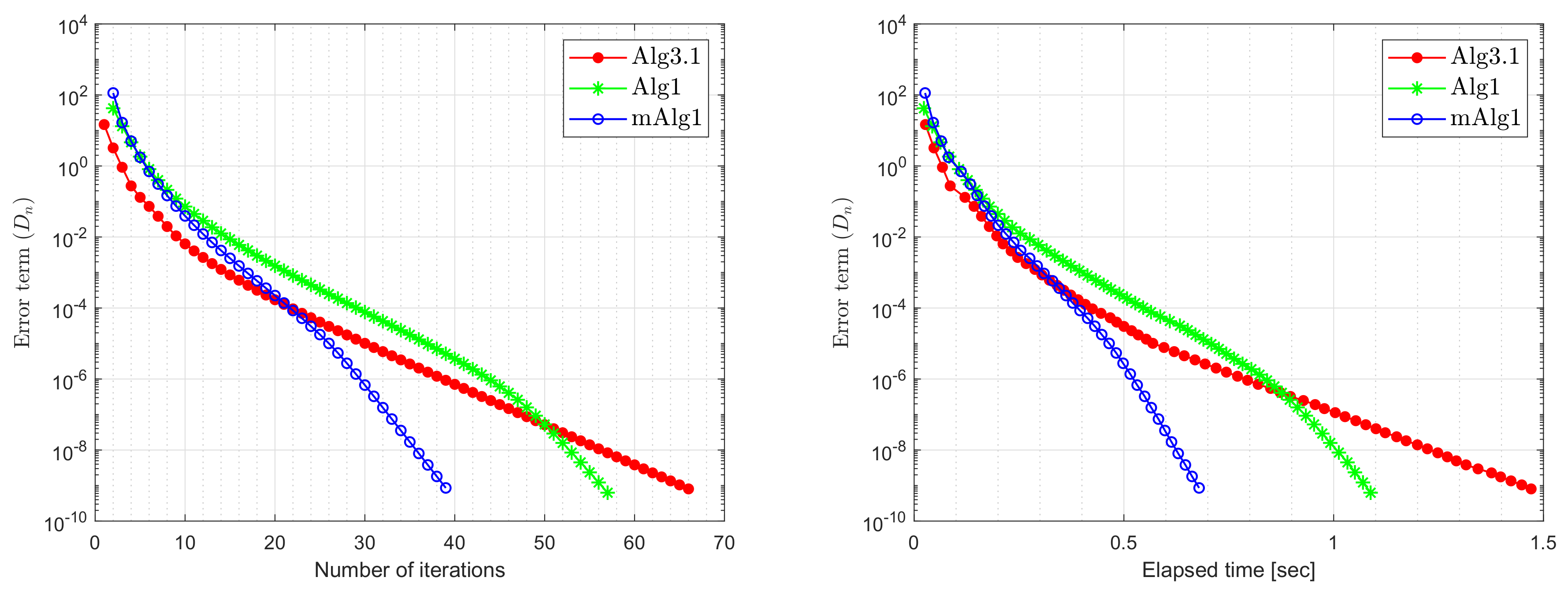

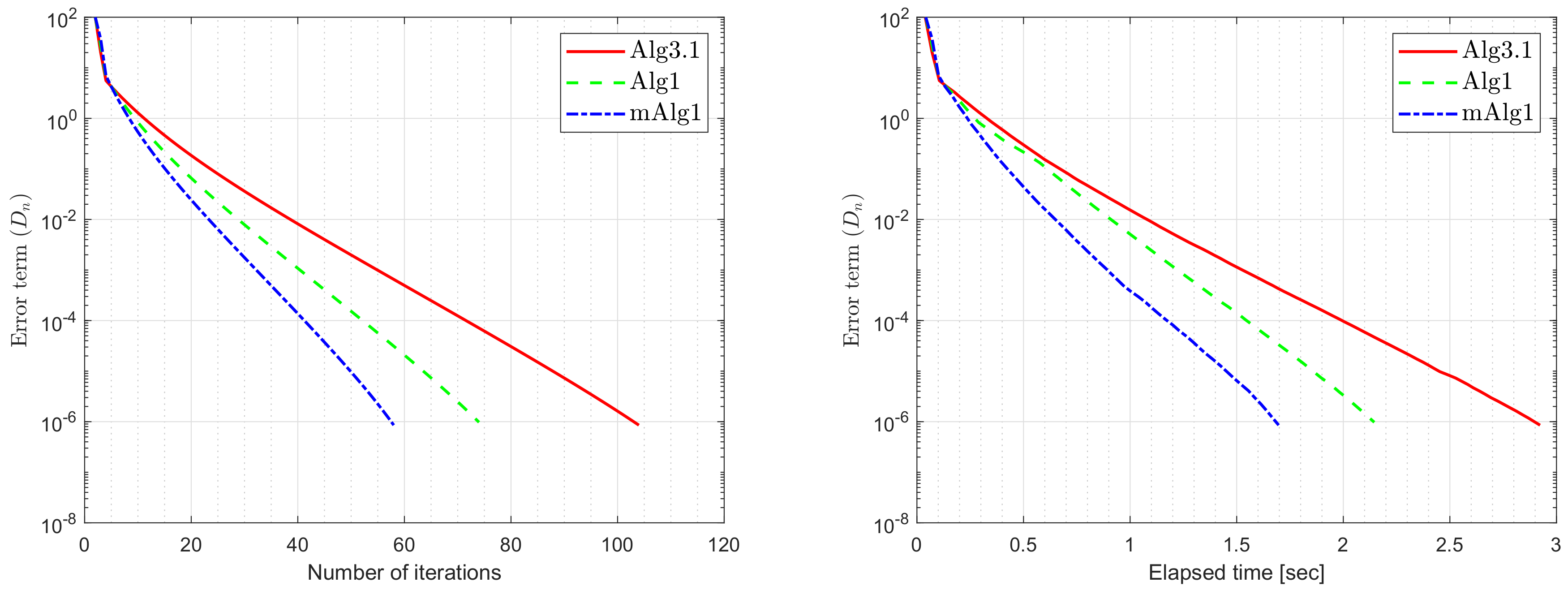

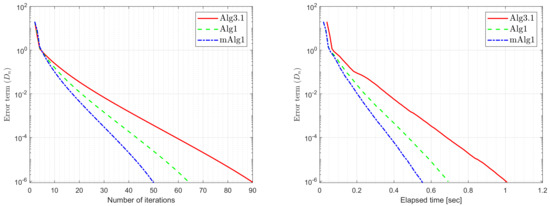

Figure 9.

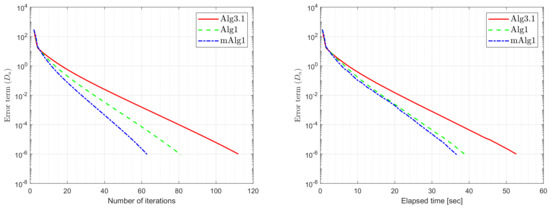

Example 4: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38] while m = 20.

Figure 10.

Example 4: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38] while m = 50.

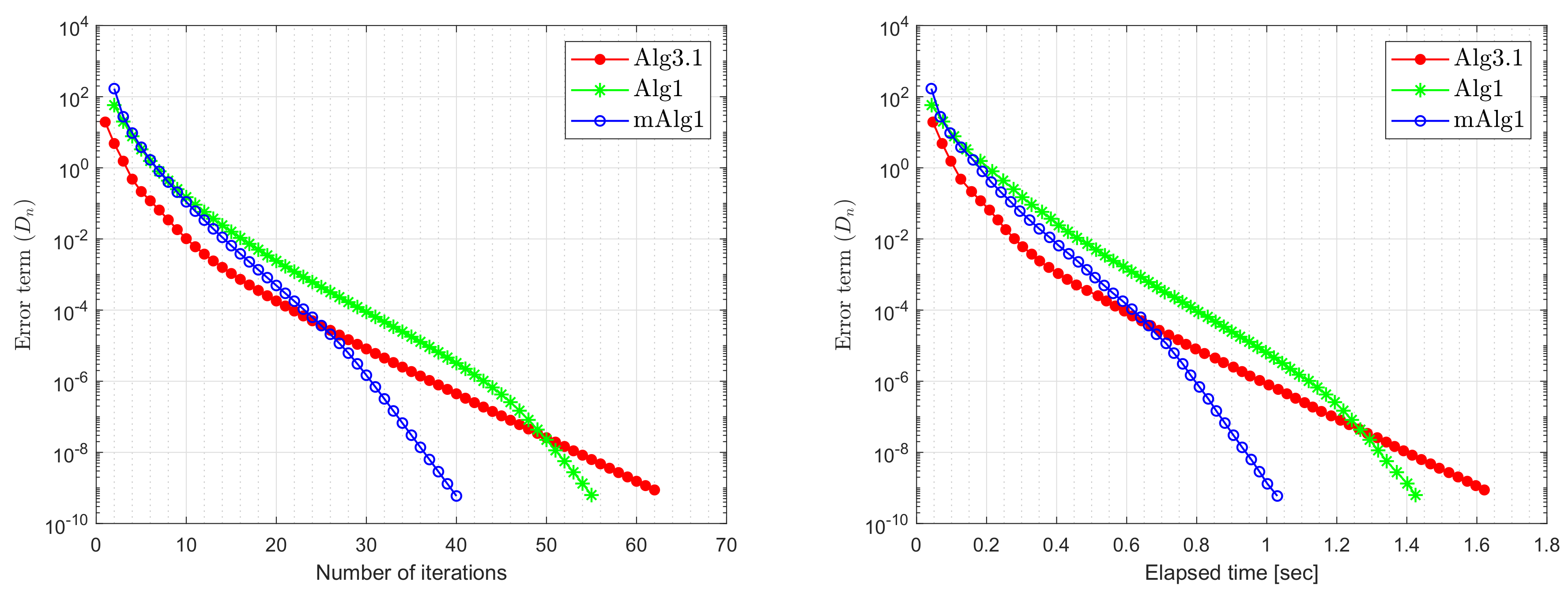

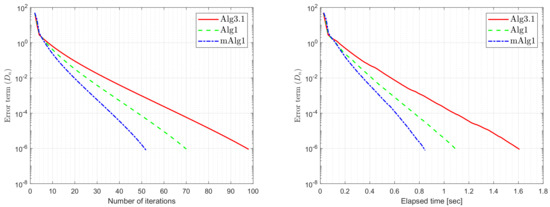

Figure 11.

Example 4: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38] while m = 100.

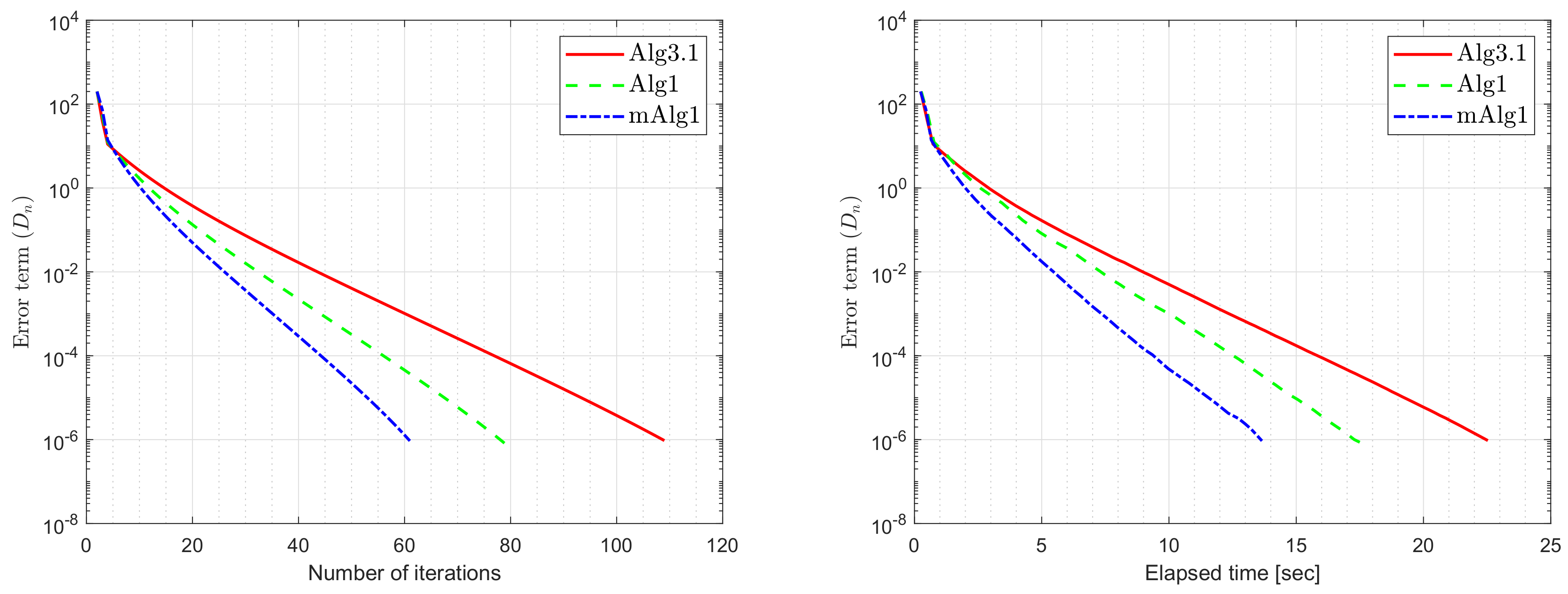

Figure 12.

Example 4: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38] while m = 200.

Figure 13.

Example 4: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38] while m = 300.

Table 5.

Example 4: Algorithm 1 (mAlg1) numerical comparison with Algorithm 3.1 (Alg3.1) in [39] and Algorithm 1 (Alg1) in [38].

Remark 2.

- (i)

- The value of is crucial; the method performs best when it is nearer to

- (ii)

- It is observed that the choice of the value ϑ is often significant, and roughly the value performs better than most other values.

7. Conclusions

In this paper, we establish a convergence result for pseudomonotone equilibrium problems that involve a Lipschitz-type continuous bifunction whose Lipschitz-type constants are unknown. We modify the extragradient method with an inertial term and a new stepsize formula. A weak convergence theorem is proved for the sequences generated by the algorithm. Several numerical experiments illustrate the effectiveness of the proposed algorithm.

Author Contributions

Conceptualization, H.u.R., N.P. and M.D.l.S.; Writing-Original Draft Preparation, N.W., N.P. and H.u.R.; Writing-Review & Editing, N.W., N.P., H.u.R. and M.D.l.S.; Methodology, N.P. and H.u.R.; Visualization, N.W. and N.P.; Software, H.u.R.; Funding Acquisition, M.D.l.S.; Supervision, M.D.l.S. and H.u.R.; Project Administration; M.D.l.S.; Resources; M.D.l.S. and H.u.R. All authors have read and agreed to the published version of this manuscript.

Funding

This research work was financially supported by the Spanish Government through Grant RTI2018-094336-B-I00 (MCIU/AEI/FEDER, UE) and by the Basque Government through Grant IT1207-19.

Acknowledgments

We are very grateful to the Editor and the anonymous referees for their valuable and useful comments, which helped improve the quality of this work. Nopparat Wairojjana was partially supported by Valaya Alongkorn Rajabhat University under the Royal Patronage, Thailand. The corresponding author is grateful to the Spanish Government for Grant RTI2018-094336-B-I00 (MCIU/AEI/FEDER, UE) and to the Basque Government for Grant IT1207-19.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Blum, E.; Oettli, W. From optimization and variational inequalities to equilibrium problems. Math. Stud. 1994, 63, 123–145.

- Fan, K. A Minimax Inequality and Applications, Inequalities III; Shisha, O., Ed.; Academic Press: New York, NY, USA, 1972.

- Facchinei, F.; Pang, J.S. Finite-Dimensional Variational Inequalities and Complementarity Problems; Springer Science & Business Media: Berlin, Germany, 2007.

- Konnov, I. Equilibrium Models and Variational Inequalities; Elsevier: Amsterdam, The Netherlands, 2007; Volume 210.

- Muu, L.D.; Oettli, W. Convergence of an adaptive penalty scheme for finding constrained equilibria. Nonlinear Anal. Theory Methods Appl. 1992, 18, 1159–1166.

- Quoc, T.D.; Le Dung, M.N.V.H. Extragradient algorithms extended to equilibrium problems. Optimization 2008, 57, 749–776.

- Quoc, T.D.; Anh, P.N.; Muu, L.D. Dual extragradient algorithms extended to equilibrium problems. J. Glob. Optim. 2011, 52, 139–159.

- Lyashko, S.I.; Semenov, V.V. A New Two-Step Proximal Algorithm of Solving the Problem of Equilibrium Programming. In Optimization and Its Applications in Control and Data Sciences; Springer International Publishing: Berlin/Heidelberg, Germany, 2016; pp. 315–325.

- Takahashi, S.; Takahashi, W. Viscosity approximation methods for equilibrium problems and fixed point problems in Hilbert spaces. J. Math. Anal. Appl. 2007, 331, 506–515.

- ur Rehman, H.; Kumam, P.; Cho, Y.J.; Yordsorn, P. Weak convergence of explicit extragradient algorithms for solving equilibirum problems. J. Inequalities Appl. 2019, 2019.

- Anh, P.N.; Hai, T.N.; Tuan, P.M. On ergodic algorithms for equilibrium problems. J. Glob. Optim. 2015, 64, 179–195.

- Hieu, D.V.; Quy, P.K.; Vy, L.V. Explicit iterative algorithms for solving equilibrium problems. Calcolo 2019, 56.

- Hieu, D.V. New extragradient method for a class of equilibrium problems in Hilbert spaces. Appl. Anal. 2017, 97, 811–824.

- ur Rehman, H.; Kumam, P.; Je Cho, Y.; Suleiman, Y.I.; Kumam, W. Modified Popov’s explicit iterative algorithms for solving pseudomonotone equilibrium problems. Optim. Methods Softw. 2020, 1–32.

- ur Rehman, H.; Kumam, P.; Abubakar, A.B.; Cho, Y.J. The extragradient algorithm with inertial effects extended to equilibrium problems. Comput. Appl. Math. 2020, 39.

- Santos, P.; Scheimberg, S. An inexact subgradient algorithm for equilibrium problems. Comput. Appl. Math. 2011, 30, 91–107.

- Hieu, D.V. Halpern subgradient extragradient method extended to equilibrium problems. Revista de la Real Academia de Ciencias Exactas, Físicas y Naturales Serie A Matemáticas 2016, 111, 823–840.

- ur Rehman, H.; Kumam, P.; Kumam, W.; Shutaywi, M.; Jirakitpuwapat, W. The Inertial Sub-Gradient Extra-Gradient Method for a Class of Pseudo-Monotone Equilibrium Problems. Symmetry 2020, 12, 463.

- Anh, P.N.; An, L.T.H. The subgradient extragradient method extended to equilibrium problems. Optimization 2012, 64, 225–248.

- Muu, L.D.; Quoc, T.D. Regularization Algorithms for Solving Monotone Ky Fan Inequalities with Application to a Nash-Cournot Equilibrium Model. J. Optim. Theory Appl. 2009, 142, 185–204.

- ur Rehman, H.; Kumam, P.; Argyros, I.K.; Deebani, W.; Kumam, W. Inertial Extra-Gradient Method for Solving a Family of Strongly Pseudomonotone Equilibrium Problems in Real Hilbert Spaces with Application in Variational Inequality Problem. Symmetry 2020, 12, 503.

- ur Rehman, H.; Kumam, P.; Argyros, I.K.; Alreshidi, N.A.; Kumam, W.; Jirakitpuwapat, W. A Self-Adaptive Extra-Gradient Methods for a Family of Pseudomonotone Equilibrium Programming with Application in Different Classes of Variational Inequality Problems. Symmetry 2020, 12, 523.

- ur Rehman, H.; Kumam, P.; Argyros, I.K.; Shutaywi, M.; Shah, Z. Optimization Based Methods for Solving the Equilibrium Problems with Applications in Variational Inequality Problems and Solution of Nash Equilibrium Models. Mathematics 2020, 8, 822.

- Yordsorn, P.; Kumam, P.; ur Rehman, H.; Ibrahim, A.H. A Weak Convergence Self-Adaptive Method for Solving Pseudomonotone Equilibrium Problems in a Real Hilbert Space. Mathematics 2020, 8, 1165.

- Yordsorn, P.; Kumam, P.; Rehman, H.U. Modified two-step extragradient method for solving the pseudomonotone equilibrium programming in a real Hilbert space. Carpathian J. Math. 2020, 36, 313–330.

- La Sen, M.D.; Agarwal, R.P.; Ibeas, A.; Alonso-Quesada, S. On the Existence of Equilibrium Points, Boundedness, Oscillating Behavior and Positivity of a SVEIRS Epidemic Model under Constant and Impulsive Vaccination. Adv. Differ. Equ. 2011, 2011, 1–32.

- La Sen, M.D.; Agarwal, R.P. Some fixed point-type results for a class of extended cyclic self-mappings with a more general contractive condition. Fixed Point Theory Appl. 2011, 2011.

- Wairojjana, N.; ur Rehman, H.; Argyros, I.K.; Pakkaranang, N. An Accelerated Extragradient Method for Solving Pseudomonotone Equilibrium Problems with Applications. Axioms 2020, 9, 99.

- La Sen, M.D. On Best Proximity Point Theorems and Fixed Point Theorems for -Cyclic Hybrid Self-Mappings in Banach Spaces. Abstr. Appl. Anal. 2013, 2013, 1–14.

- ur Rehman, H.; Kumam, P.; Shutaywi, M.; Alreshidi, N.A.; Kumam, W. Inertial Optimization Based Two-Step Methods for Solving Equilibrium Problems with Applications in Variational Inequality Problems and Growth Control Equilibrium Models. Energies 2020, 13, 3292.

- Rehman, H.U.; Kumam, P.; Dong, Q.L.; Peng, Y.; Deebani, W. A new Popov’s subgradient extragradient method for two classes of equilibrium programming in a real Hilbert space. Optimization 2020, 1–36.

- Wang, L.; Yu, L.; Li, T. Parallel extragradient algorithms for a family of pseudomonotone equilibrium problems and fixed point problems of nonself-nonexpansive mappings in Hilbert space. J. Nonlinear Funct. Anal. 2020, 2020, 13.

- Shahzad, N.; Zegeye, H. Convergence theorems of common solutions for fixed point, variational inequality and equilibrium problems. J. Nonlinear Var. Anal. 2019, 3, 189–203.

- Farid, M. The subgradient extragradient method for solving mixed equilibrium problems and fixed point problems in Hilbert spaces. J. Appl. Numer. Optim. 2019, 1, 335–345.

- Flåm, S.D.; Antipin, A.S. Equilibrium programming using proximal-like algorithms. Math. Program. 1996, 78, 29–41.

- Korpelevich, G. The extragradient method for finding saddle points and other problems. Matecon 1976, 12, 747–756.

- Yang, J.; Liu, H.; Liu, Z. Modified subgradient extragradient algorithms for solving monotone variational inequalities. Optimization 2018, 67, 2247–2258.

- Vinh, N.T.; Muu, L.D. Inertial Extragradient Algorithms for Solving Equilibrium Problems. Acta Math. Vietnam. 2019, 44, 639–663.

- Hieu, D.V.; Cho, Y.J.; bin Xiao, Y. Modified extragradient algorithms for solving equilibrium problems. Optimization 2018, 67, 2003–2029.

- Bianchi, M.; Schaible, S. Generalized monotone bifunctions and equilibrium problems. J. Optim. Theory Appl. 1996, 90, 31–43.

- Mastroeni, G. On Auxiliary Principle for Equilibrium Problems. In Nonconvex Optimization and Its Applications; Springer: New York, NY, USA, 2003; pp. 289–298.

- Kreyszig, E. Introductory Functional Analysis with Applications, 1st ed.; Wiley Classics Library, Wiley: Hoboken, NJ, USA, 1989.

- Tiel, J.V. Convex Analysis: An Introductory Text, 1st ed.; Wiley: New York, NY, USA, 1984.

- Ioffe, A.D.; Tihomirov, V.M. (Eds.) Theory of Extremal Problems. In Studies in Mathematics and Its Applications 6; North-Holland, Elsevier: Amsterdam, The Netherlands; New York, NY, USA, 1979.

- Opial, Z. Weak convergence of the sequence of successive approximations for nonexpansive mappings. Bull. Amer. Math. Soc. 1967, 73, 591–598.

- Tan, K.; Xu, H. Approximating Fixed Points of Nonexpansive Mappings by the Ishikawa Iteration Process. J. Math. Anal. Appl. 1993, 178, 301–308.

- Bauschke, H.H.; Combettes, P.L. Convex Analysis and Monotone Operator Theory in Hilbert Spaces; Springer: New York, NY, USA, 2011; Volume 408.

- Browder, F.; Petryshyn, W. Construction of fixed points of nonlinear mappings in Hilbert space. J. Math. Anal. Appl. 1967, 20, 197–228.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).