Tool-Wear Analysis Using Image Processing of the Tool Flank

Abstract

1. Introduction

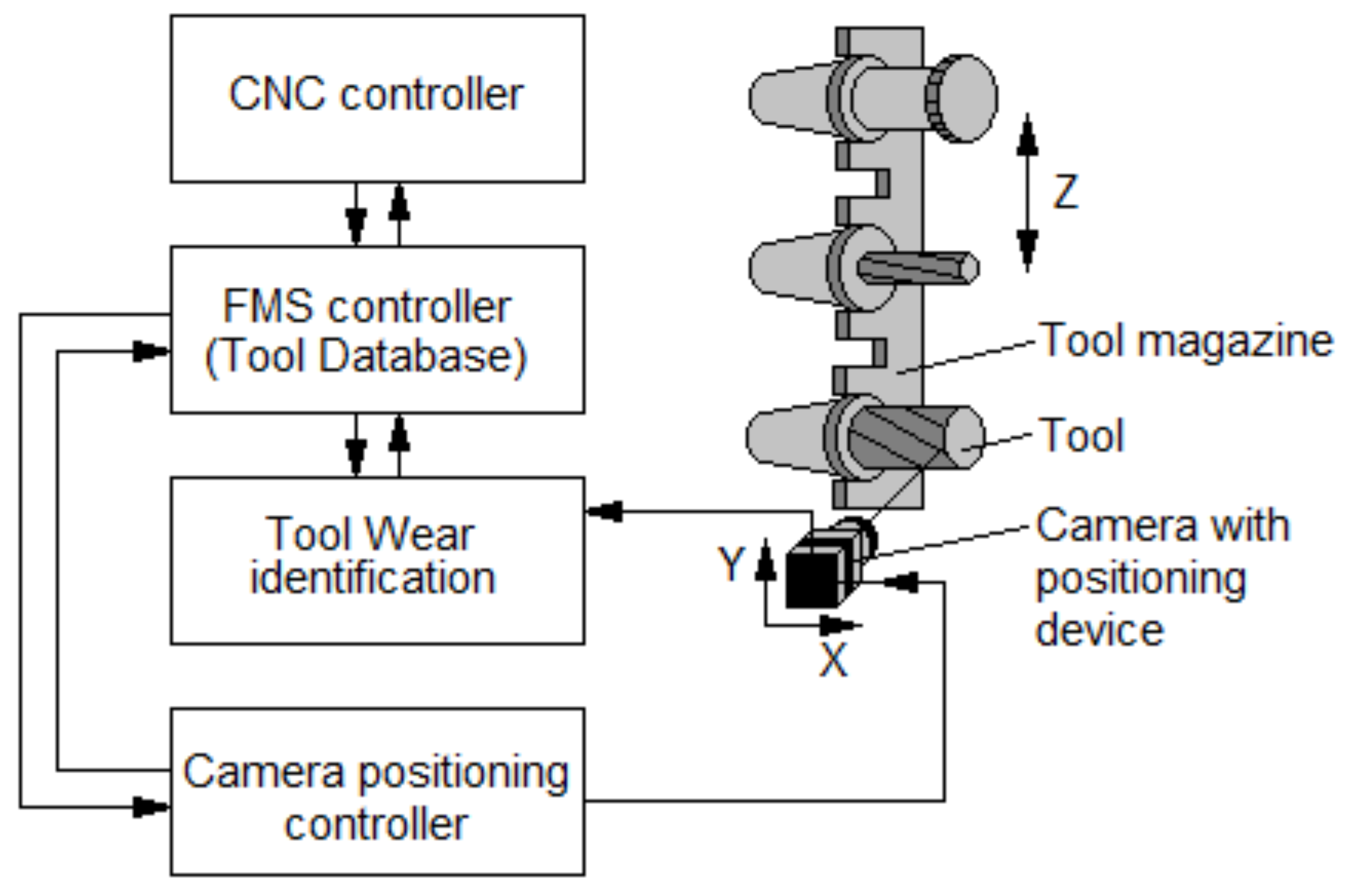

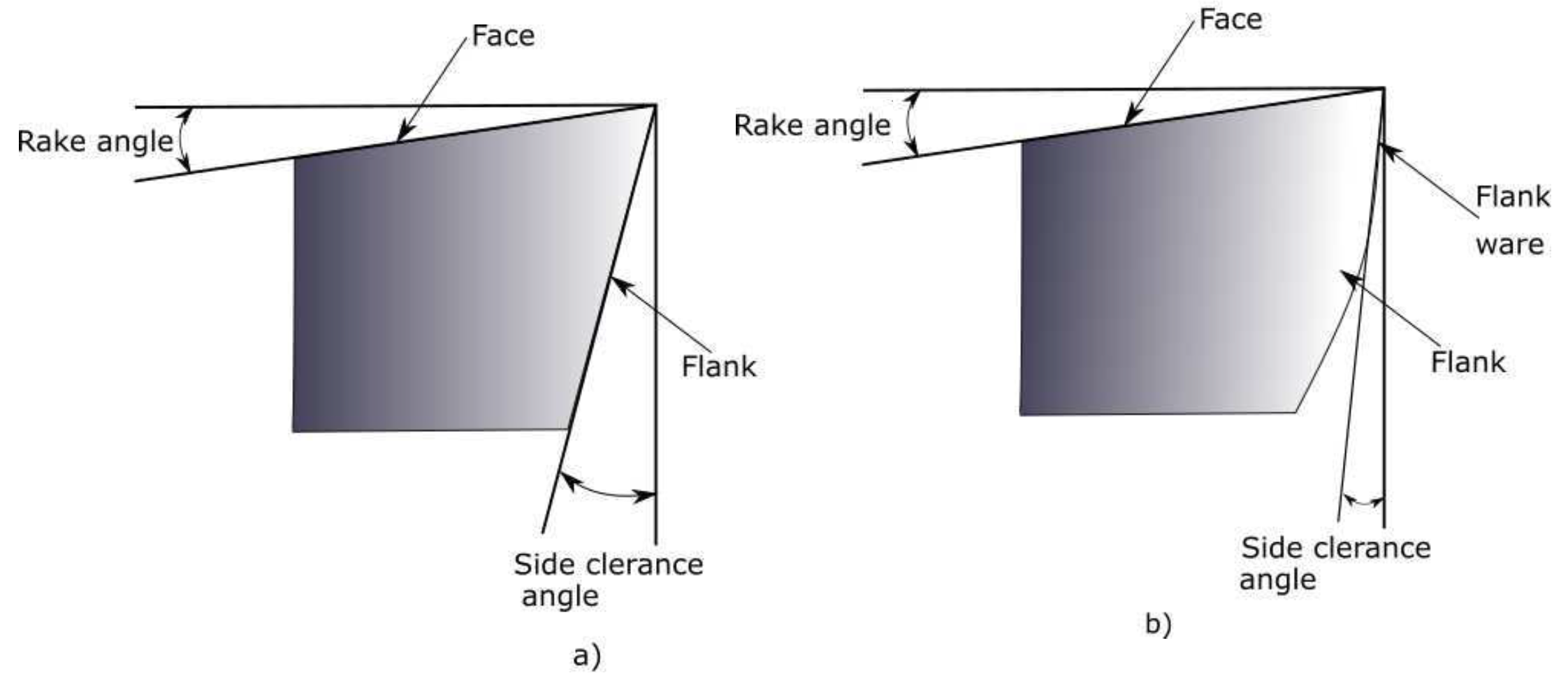

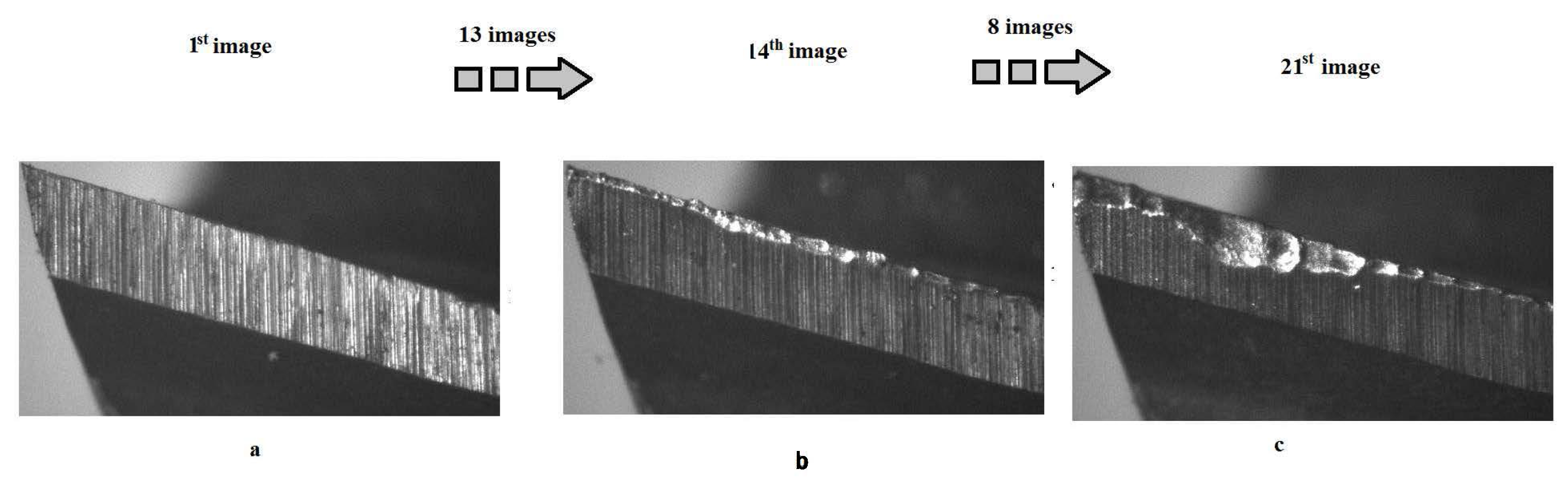

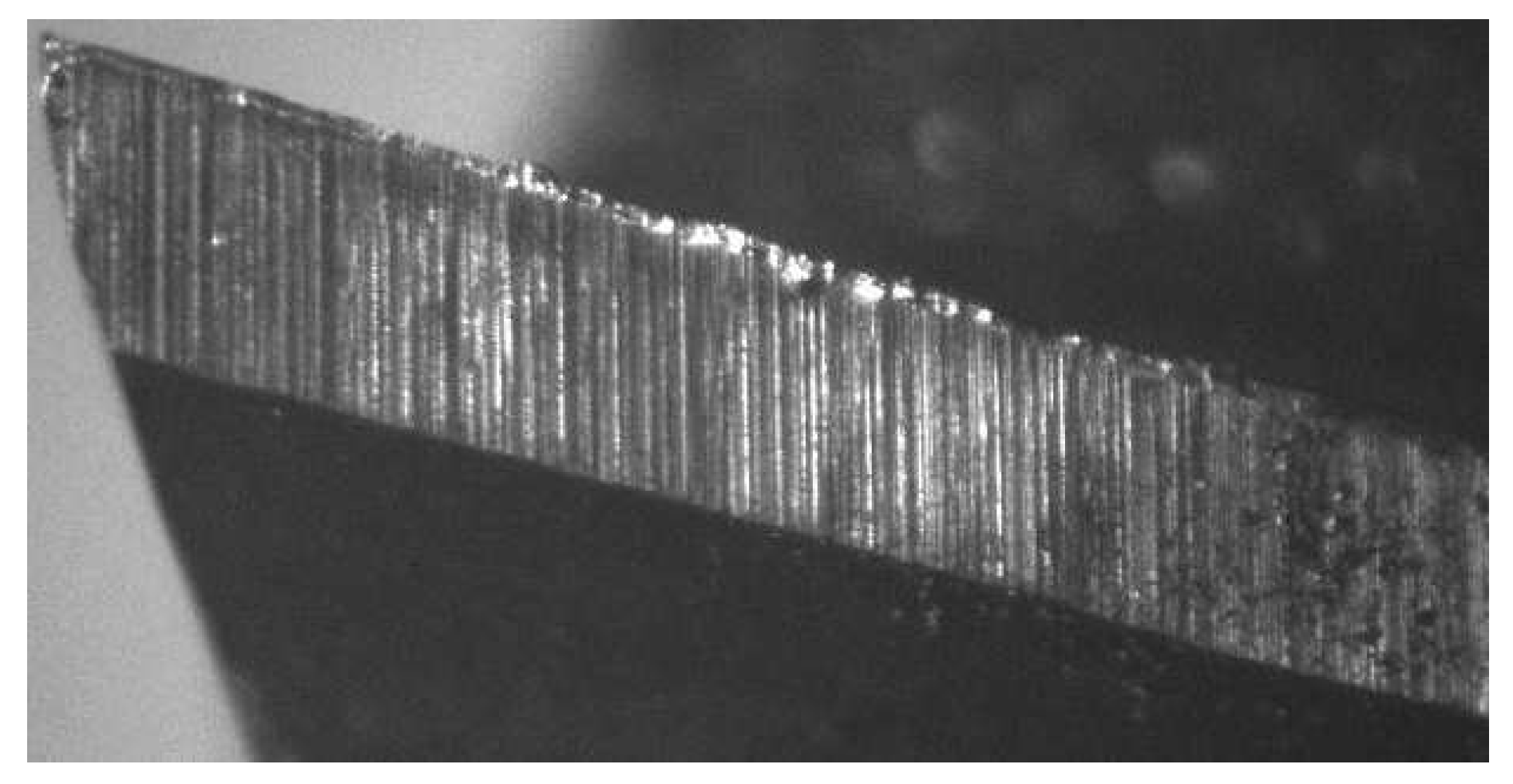

2. Tool-Flank-Wear Monitoring System

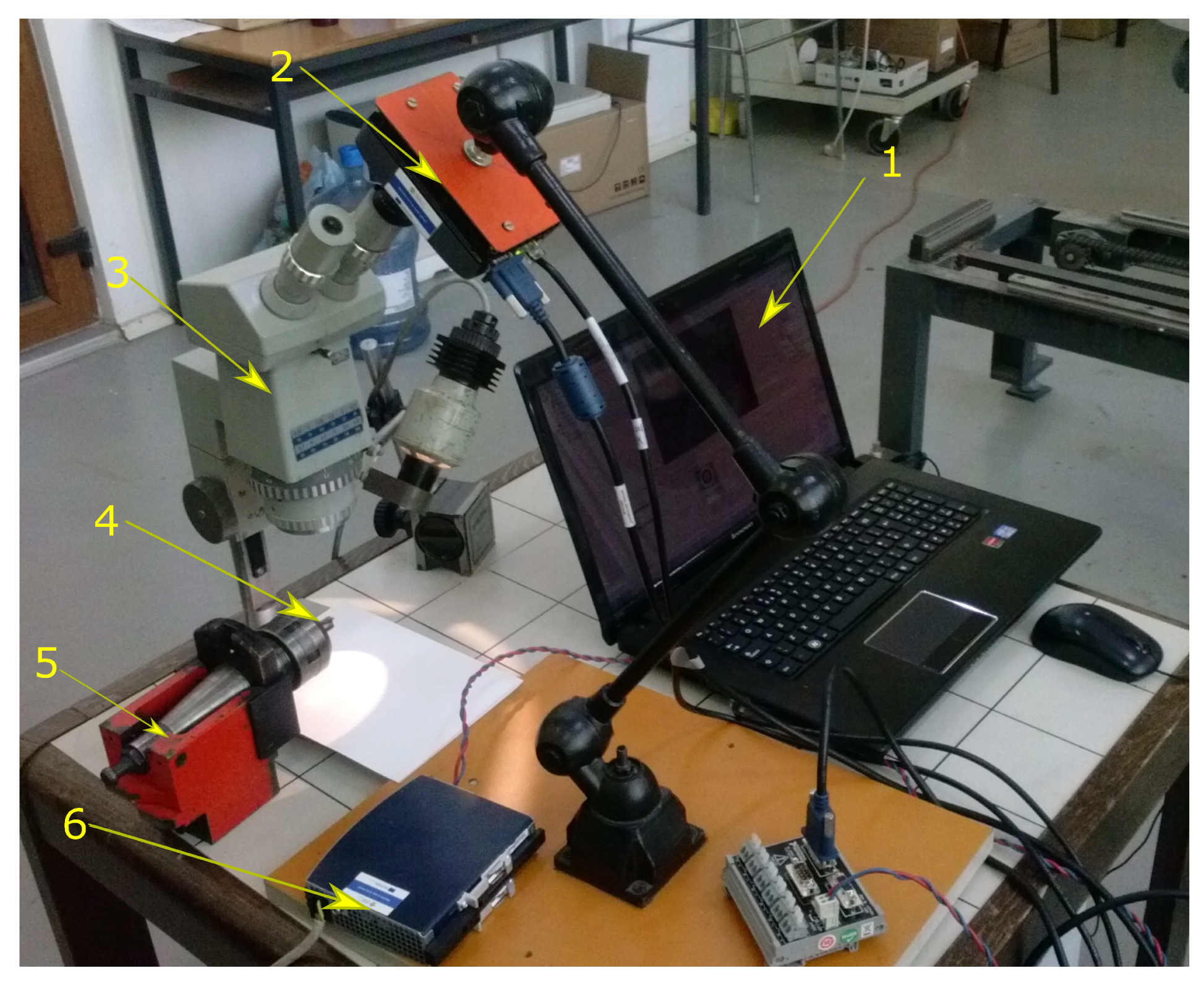

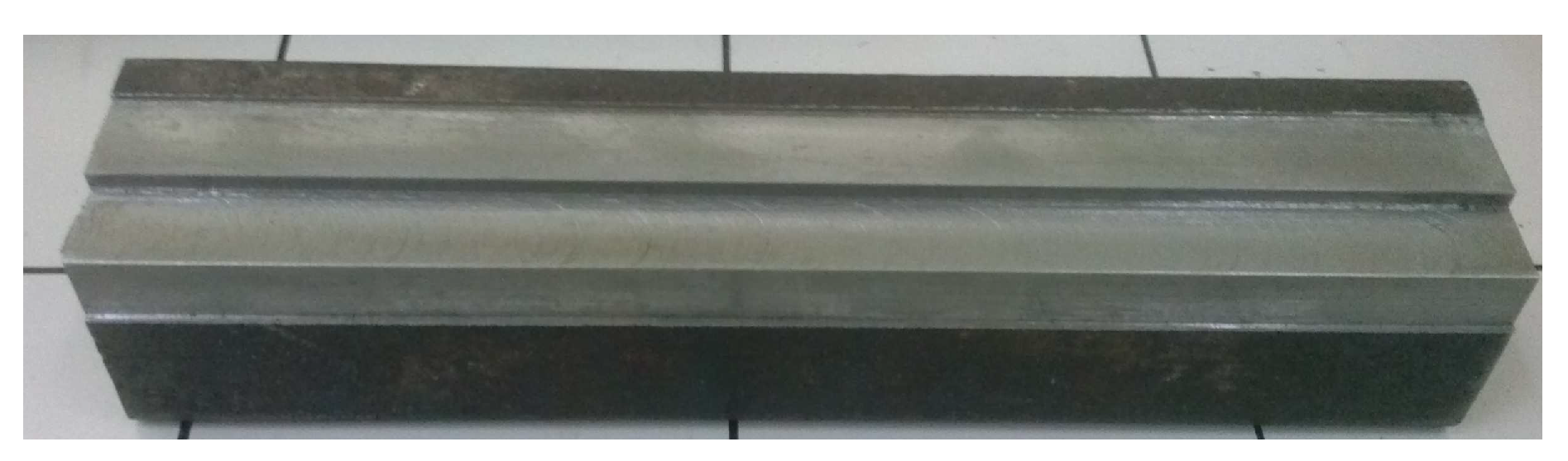

3. Experimental Setup for Tool-Flank-Wear Detection

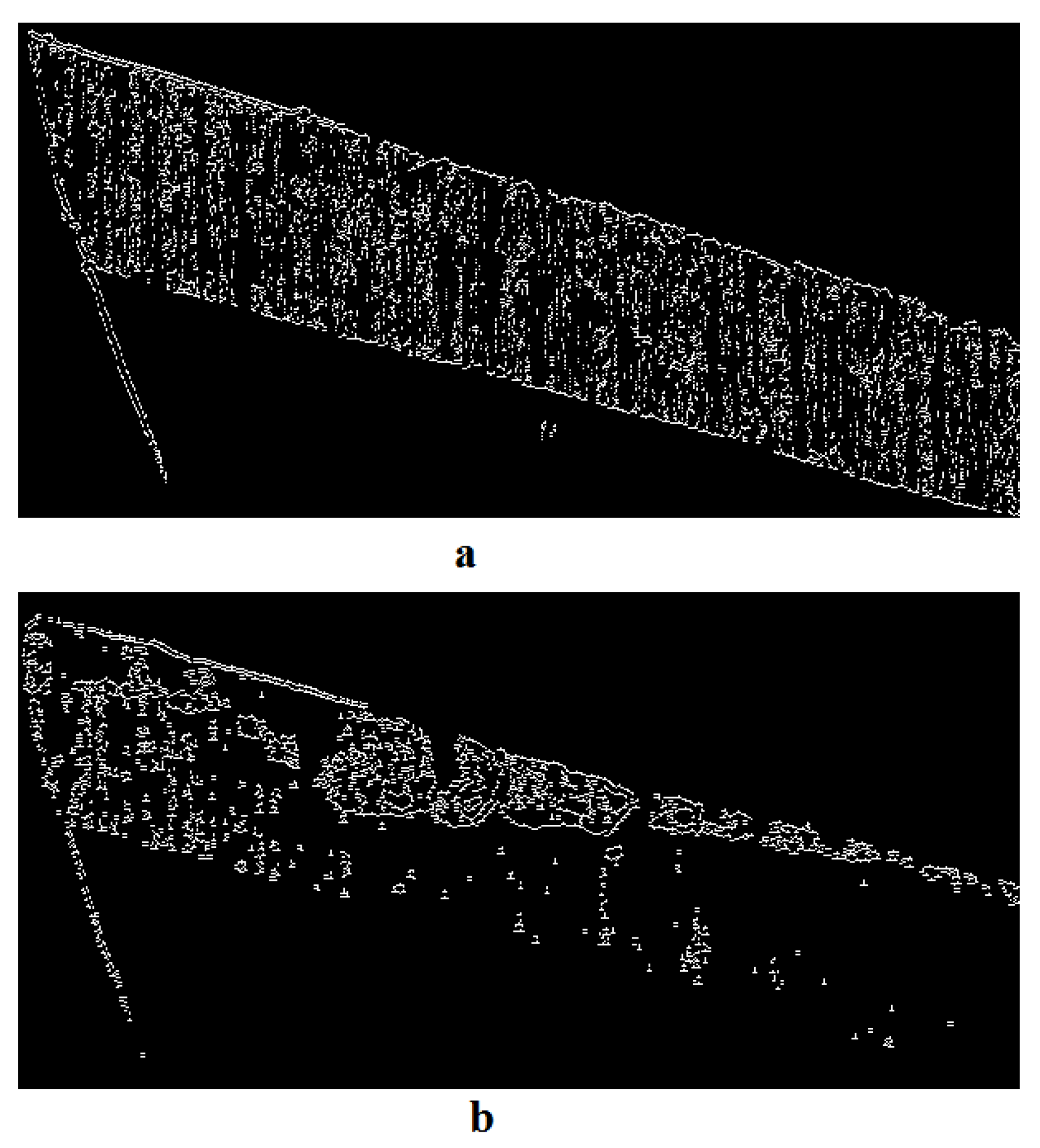

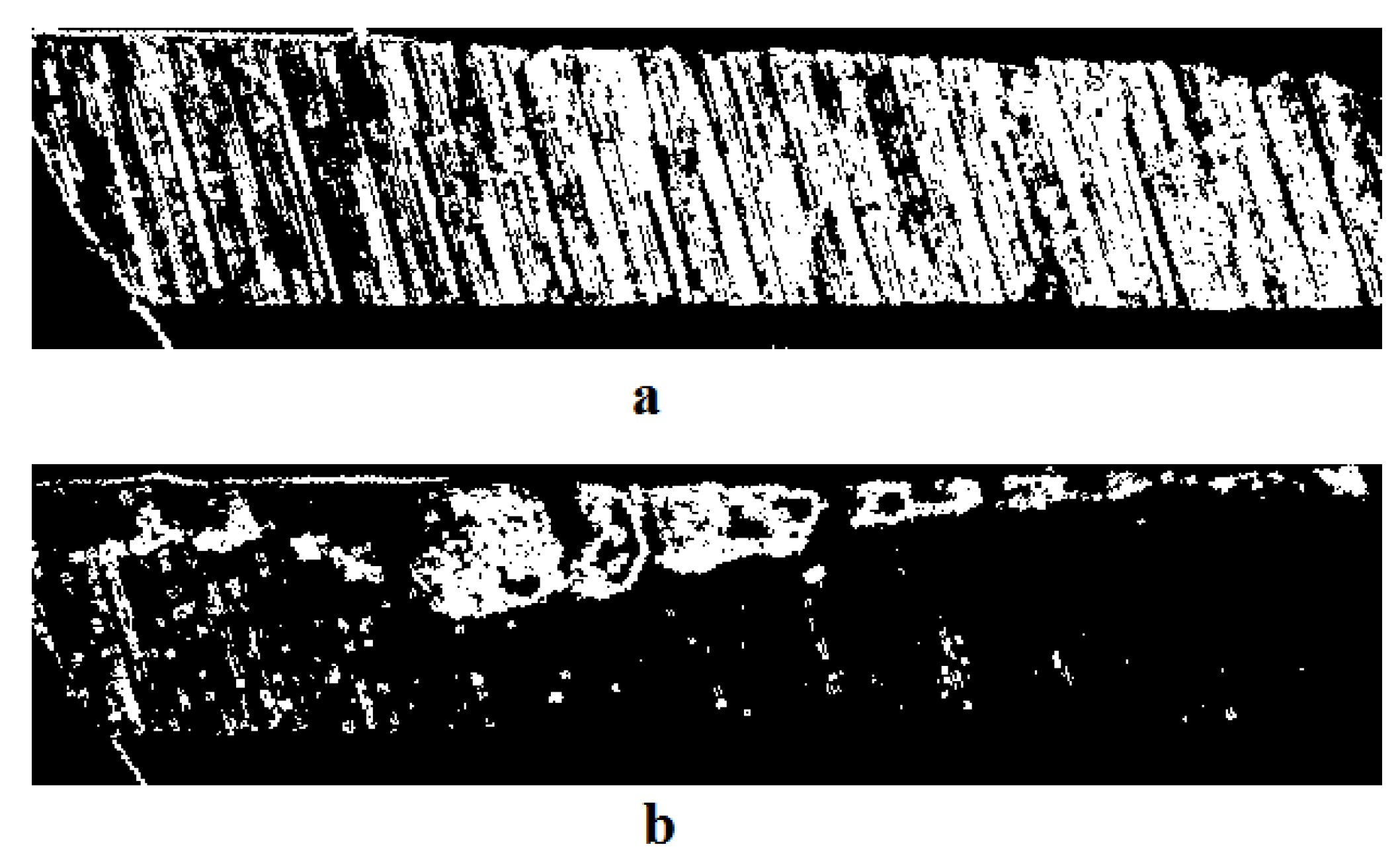

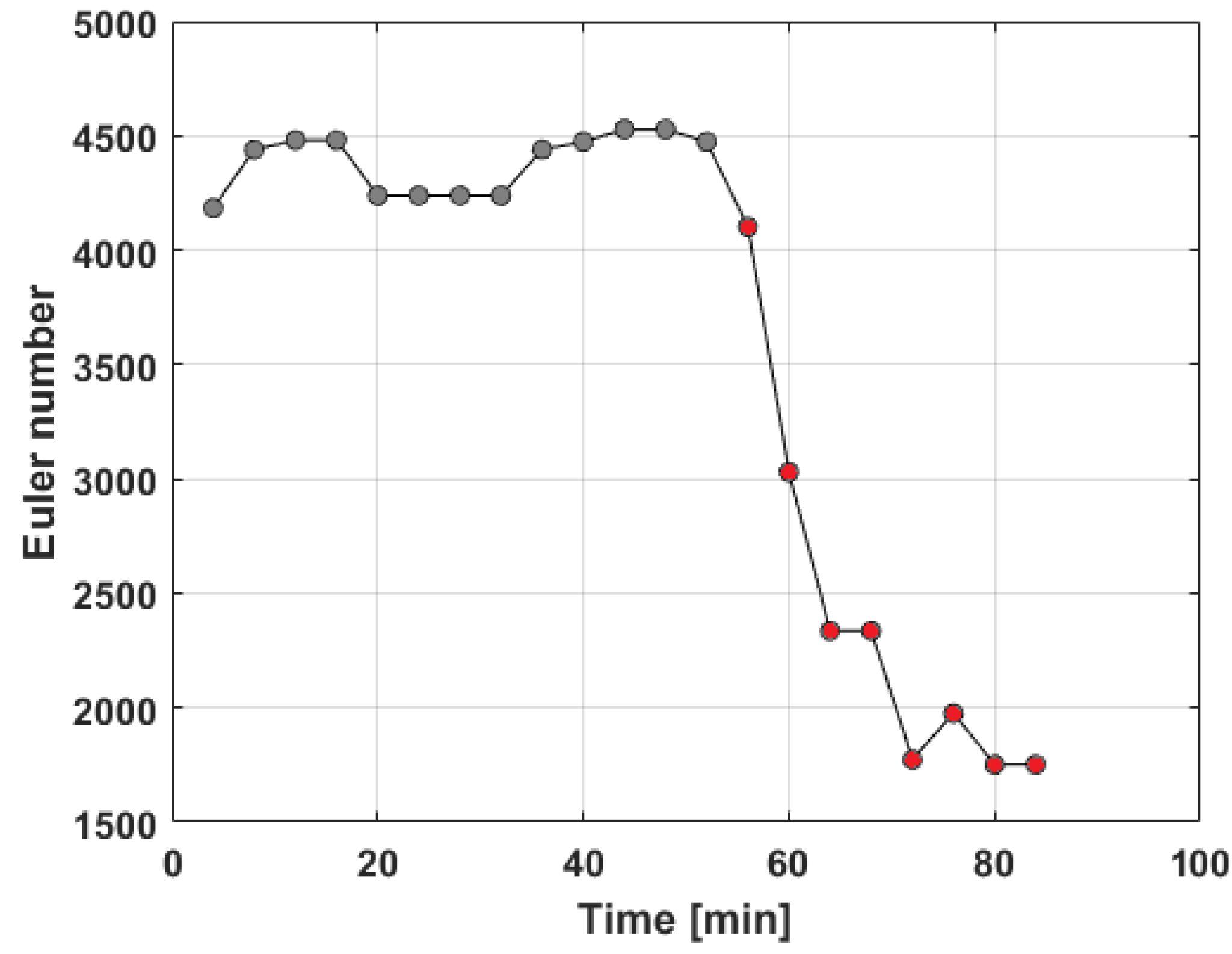

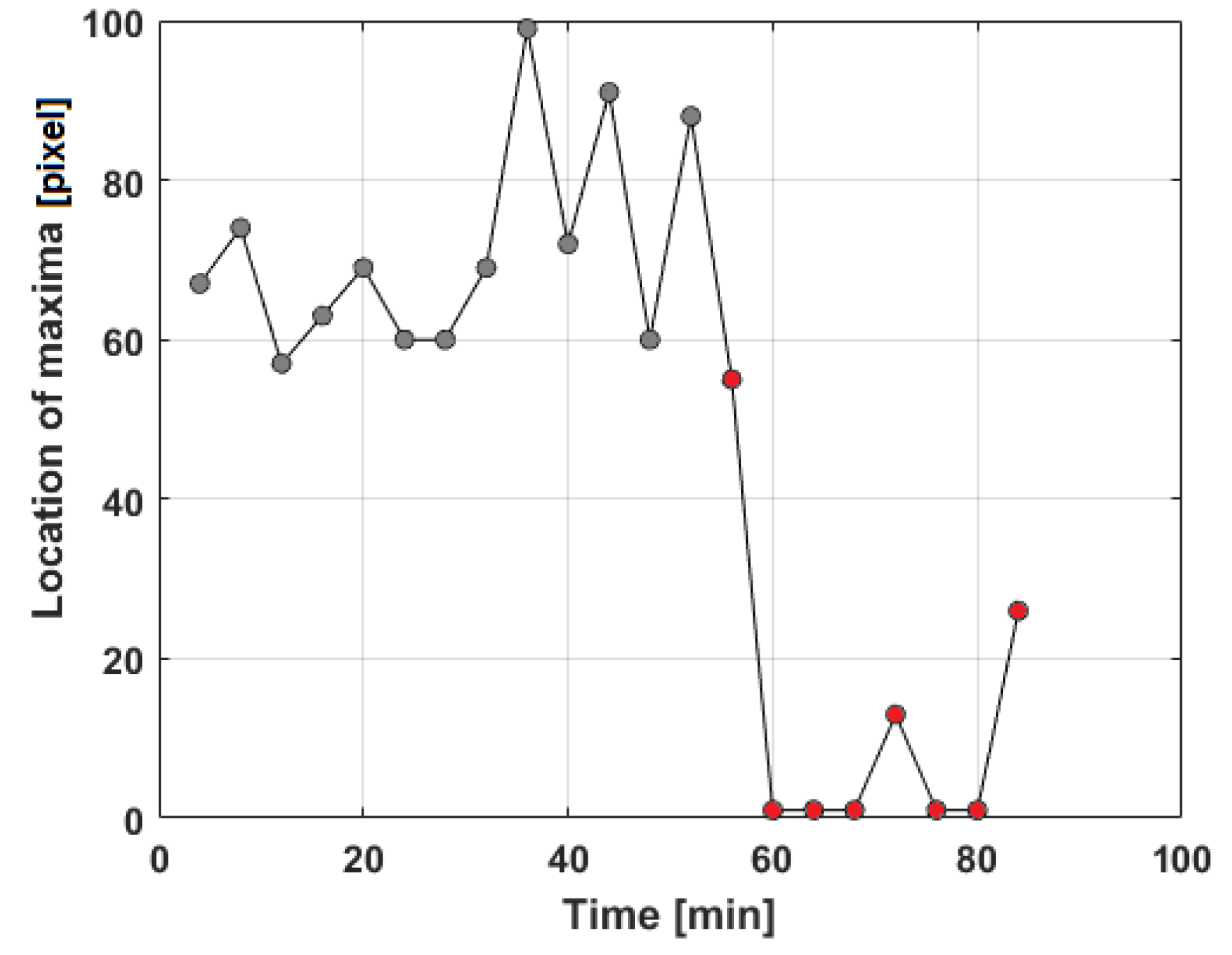

4. Image Processing for Tool-Flank-Wear Detection

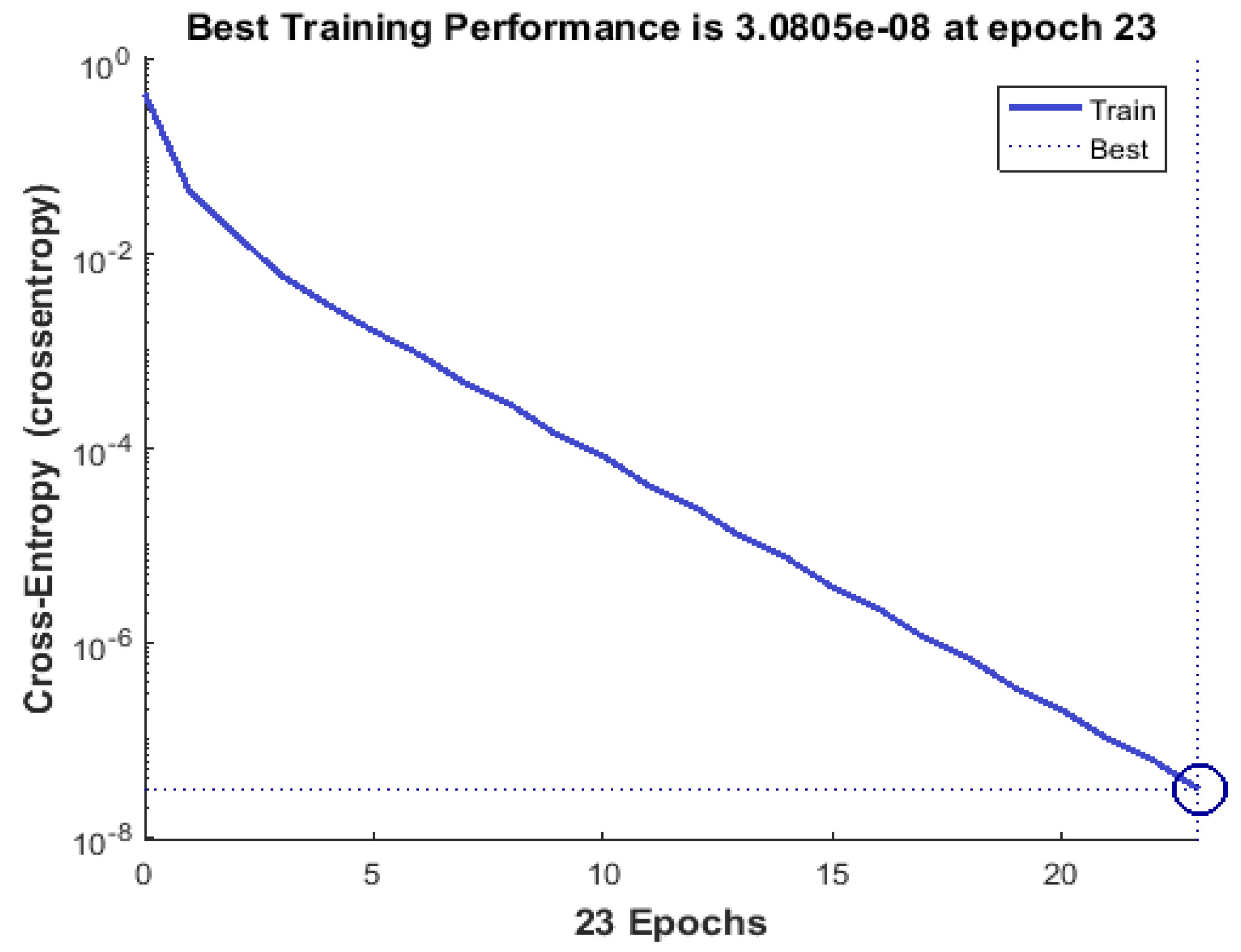

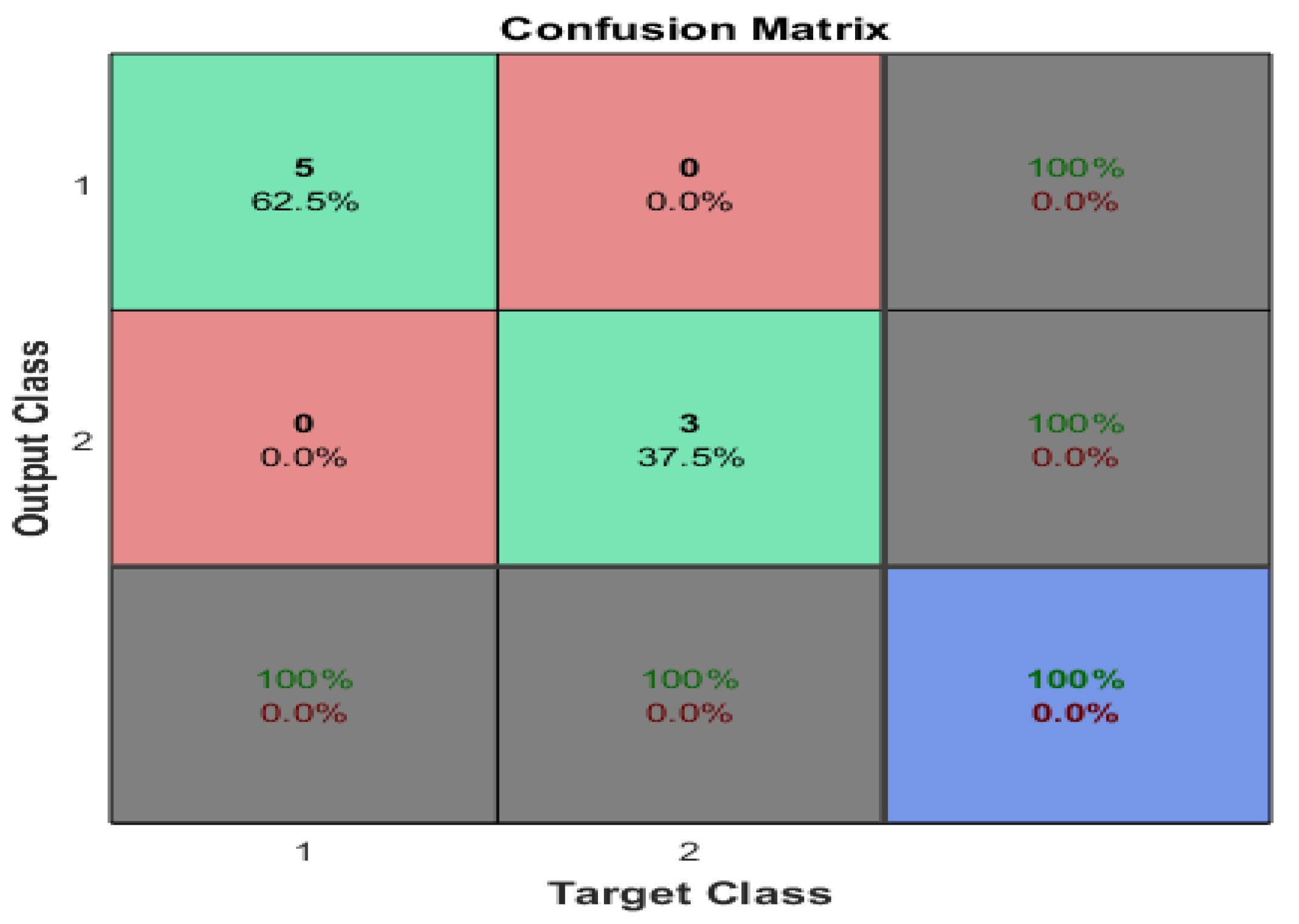

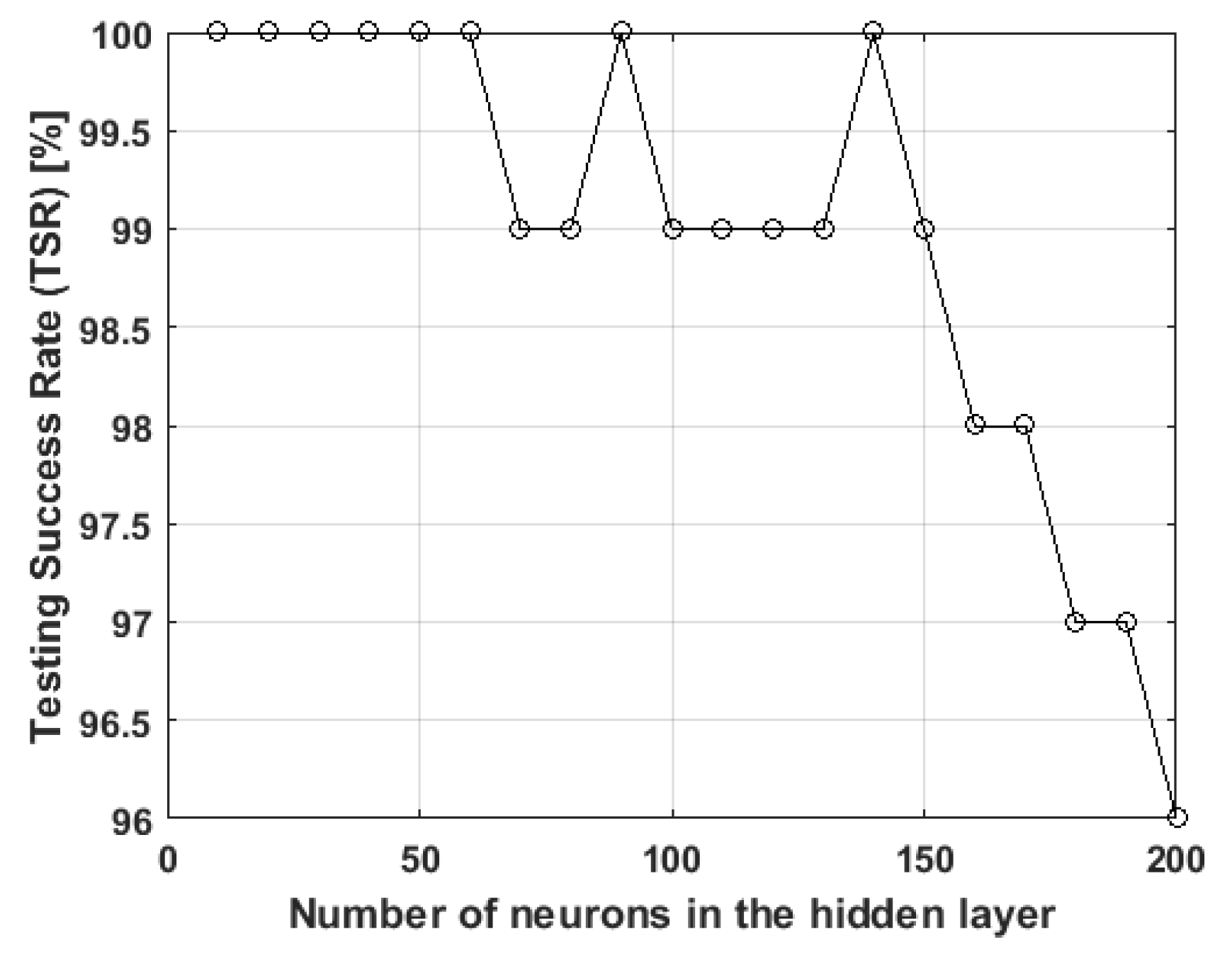

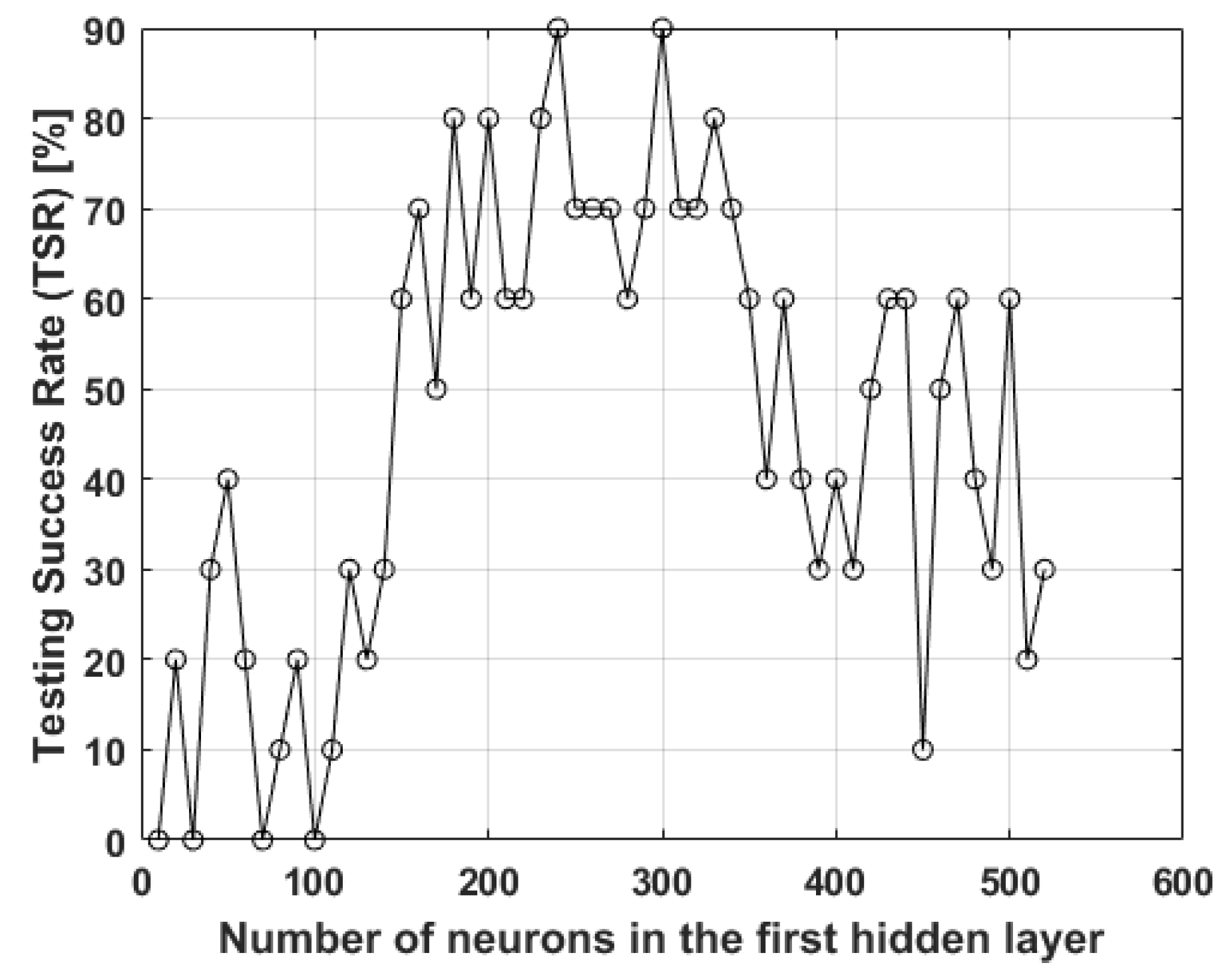

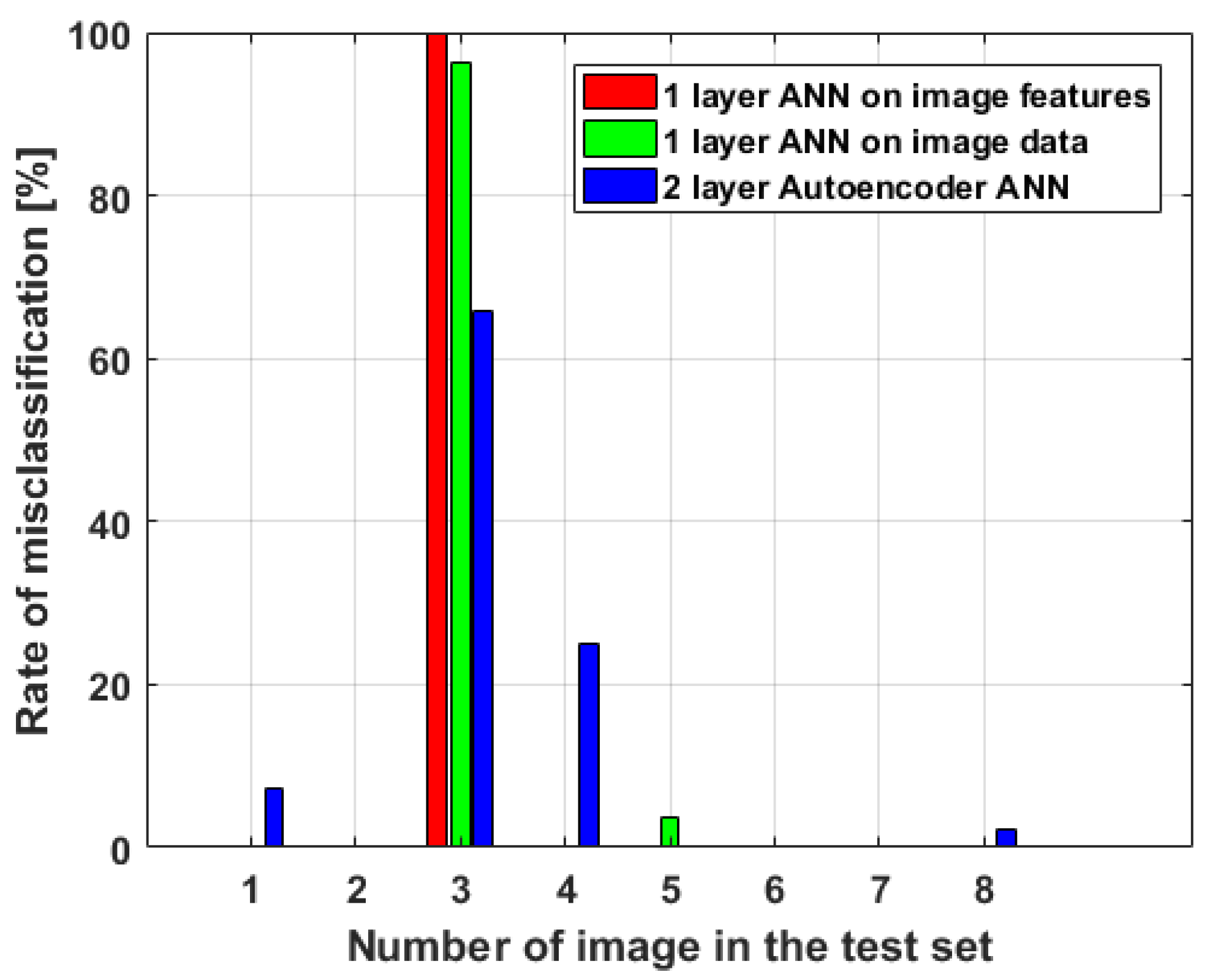

4.1. Image Classification Using One-Hidden-Layer ANN on Features Extracted from Image Data

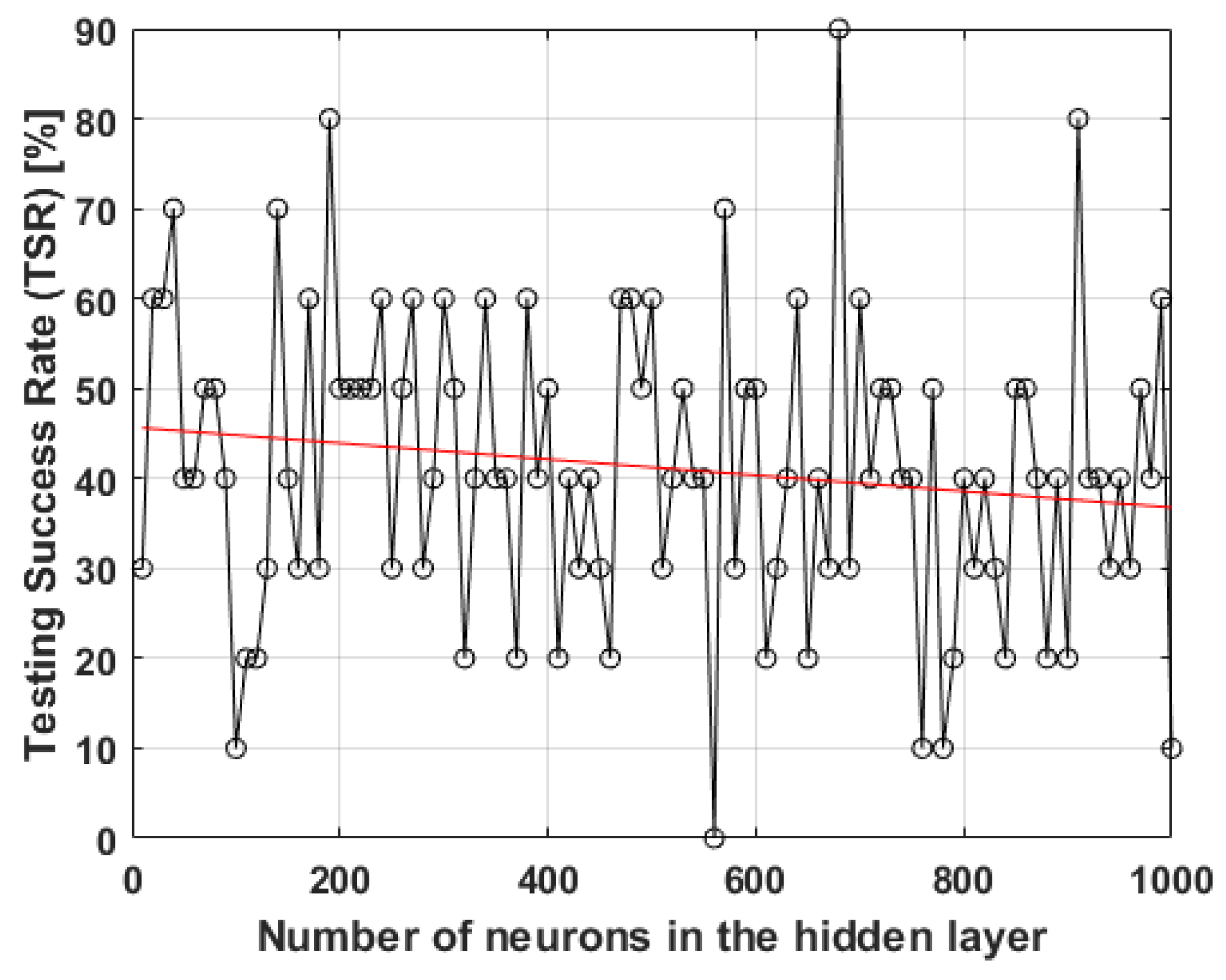

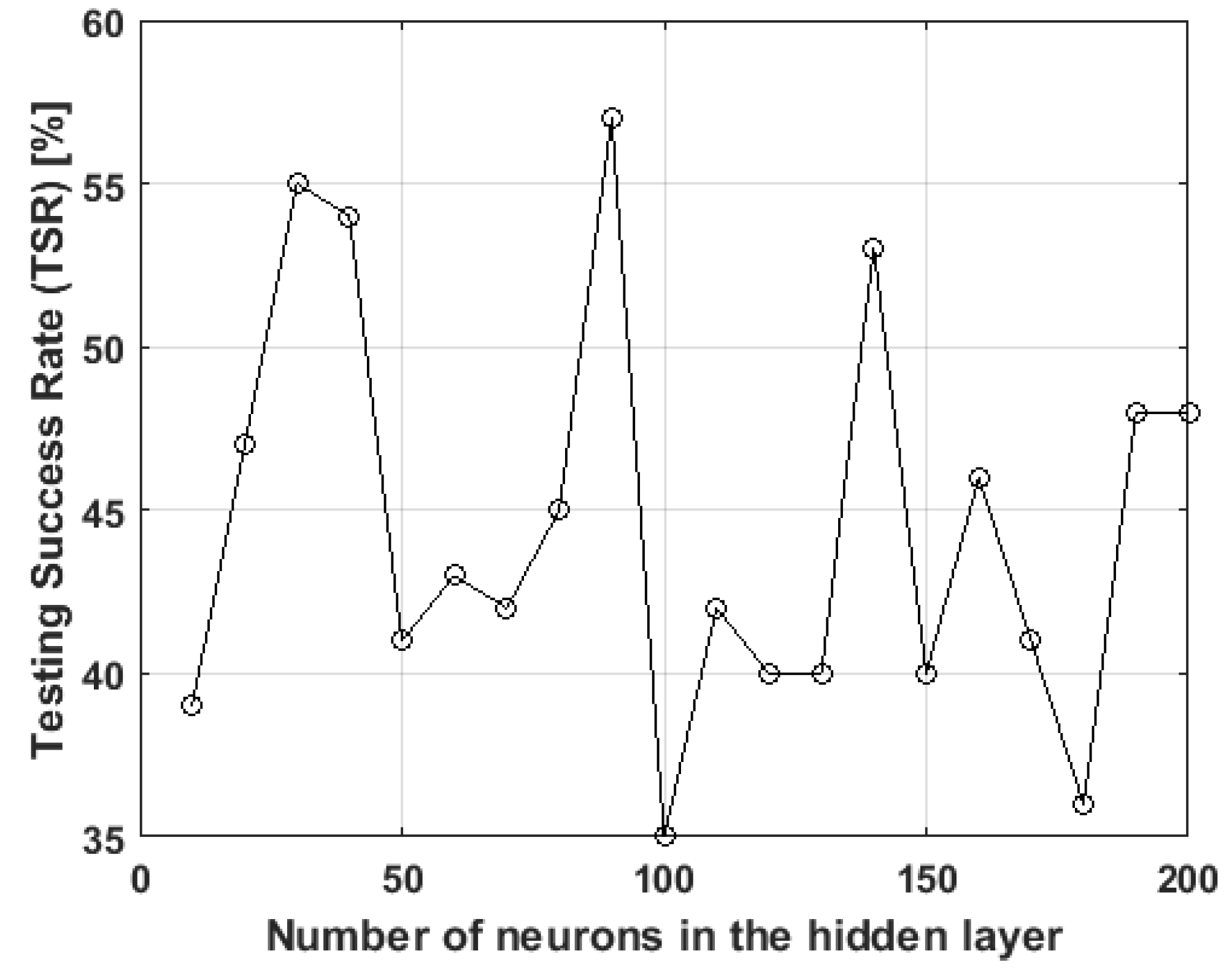

- The number of neurons in the hidden layer must be established empirically. This number may be important for extracting the meaningful features of the image.

- Every training session can produce different results because the initial weights and biases of each neuron are set randomly. Training a network with the same number of neurons on the same input datasets can therefore produce different results when tested with unlabeled data.
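The effect of random initialization can be illustrated with a minimal sketch. It is not the network used in the study: the toy dataset, the function name `train_one_hidden_layer`, the learning rate, and the seeds are all assumptions chosen for illustration. It trains a tiny one-hidden-layer sigmoid network twice with the same settings and shows that only the seed decides whether the results match.

```python
import math
import random


def train_one_hidden_layer(samples, n_hidden, epochs, seed, lr=0.5):
    """Train a tiny 1-hidden-layer sigmoid network with plain SGD.

    Returns the network outputs on `samples` after training.
    Hypothetical helper for illustration only.
    """
    rng = random.Random(seed)  # the seed fixes the random initial weights
    n_in = len(samples[0][0])
    # Each hidden neuron holds n_in weights plus one bias (last element).
    w_hidden = [[rng.uniform(-1, 1) for _ in range(n_in + 1)]
                for _ in range(n_hidden)]
    # The output neuron holds n_hidden weights plus one bias.
    w_out = [rng.uniform(-1, 1) for _ in range(n_hidden + 1)]

    def sigmoid(z):
        return 1.0 / (1.0 + math.exp(-z))

    def forward(x):
        h = [sigmoid(sum(w * xi for w, xi in zip(ws[:-1], x)) + ws[-1])
             for ws in w_hidden]
        y = sigmoid(sum(w * hi for w, hi in zip(w_out[:-1], h)) + w_out[-1])
        return h, y

    for _ in range(epochs):
        for x, target in samples:
            h, y = forward(x)
            # Gradient of the squared error through the output sigmoid.
            delta_out = (y - target) * y * (1.0 - y)
            for j in range(n_hidden):
                # Back-propagate using the output weight before updating it.
                delta_h = delta_out * w_out[j] * h[j] * (1.0 - h[j])
                w_out[j] -= lr * delta_out * h[j]
                for i in range(n_in):
                    w_hidden[j][i] -= lr * delta_h * x[i]
                w_hidden[j][-1] -= lr * delta_h
            w_out[-1] -= lr * delta_out

    return [forward(x)[1] for x, _ in samples]


# Toy stand-in for labeled feature vectors (1 = worn, 0 = not worn).
toy = [((0.0, 0.0), 0), ((0.0, 1.0), 1), ((1.0, 0.0), 1), ((1.0, 1.0), 0)]

run_a = train_one_hidden_layer(toy, n_hidden=3, epochs=200, seed=1)
run_b = train_one_hidden_layer(toy, n_hidden=3, epochs=200, seed=2)
run_a_again = train_one_hidden_layer(toy, n_hidden=3, epochs=200, seed=1)

print("seed 1:", [round(y, 3) for y in run_a])
print("seed 2:", [round(y, 3) for y in run_b])
print("seed 1 repeated matches:", run_a == run_a_again)
```

Fixing the seed makes a run repeatable, which helps when comparing hidden-layer sizes; averaging over several seeds gives a fairer picture of how a given size performs.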

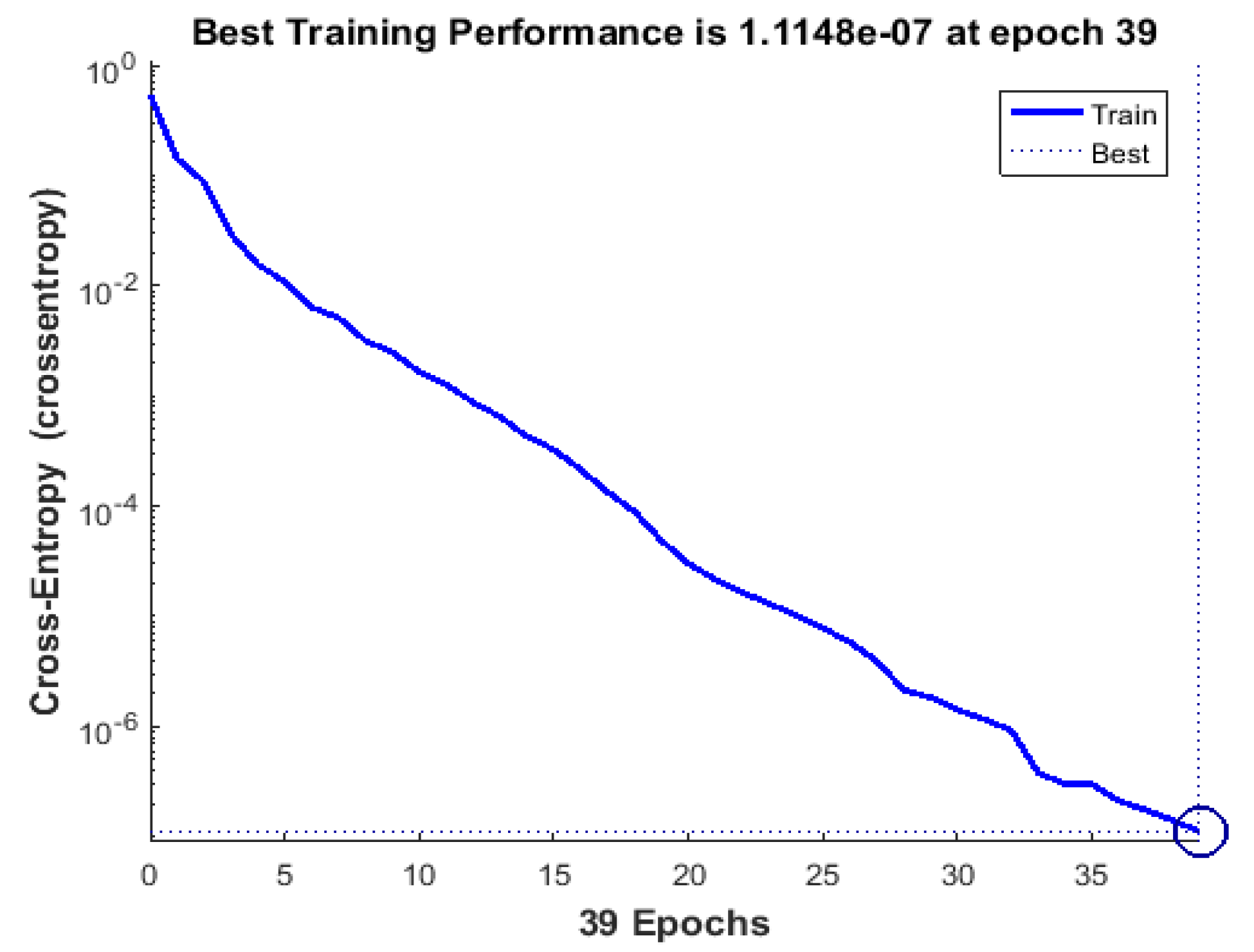

- The number of training epochs must be chosen carefully to avoid overfitting. If overfitting occurs, the network will be less successful in classifying unlabeled data.
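One common way to choose the number of epochs is early stopping on a held-out validation set. The sketch below is not taken from the study; the function name `early_stopping_epoch`, the `patience` parameter, and the error values are hypothetical. It picks the epoch with the lowest validation error and stops once that error has failed to improve for a fixed number of epochs.

```python
def early_stopping_epoch(val_errors, patience=3):
    """Return (best_epoch, best_error) under a patience-based stop rule.

    Training is treated as stopped once the validation error has not
    improved for `patience` consecutive epochs. Epochs are 1-indexed.
    """
    best_epoch, best_error = 0, float("inf")
    for epoch, err in enumerate(val_errors, start=1):
        if err < best_error:
            best_epoch, best_error = epoch, err
        elif epoch - best_epoch >= patience:
            break  # no improvement for `patience` epochs: stop here
    return best_epoch, best_error


# Hypothetical validation-error curve: it improves, then rises again,
# which is the usual signature of overfitting.
val_curve = [0.40, 0.31, 0.25, 0.22, 0.23, 0.24, 0.26, 0.21]

best_epoch, best_error = early_stopping_epoch(val_curve, patience=3)
print(best_epoch, best_error)
```

With `patience=3` the rule stops at epoch 7 and keeps the weights from epoch 4; a larger patience would tolerate the temporary rise and continue to epoch 8, so the value trades training time against the risk of stopping too early.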

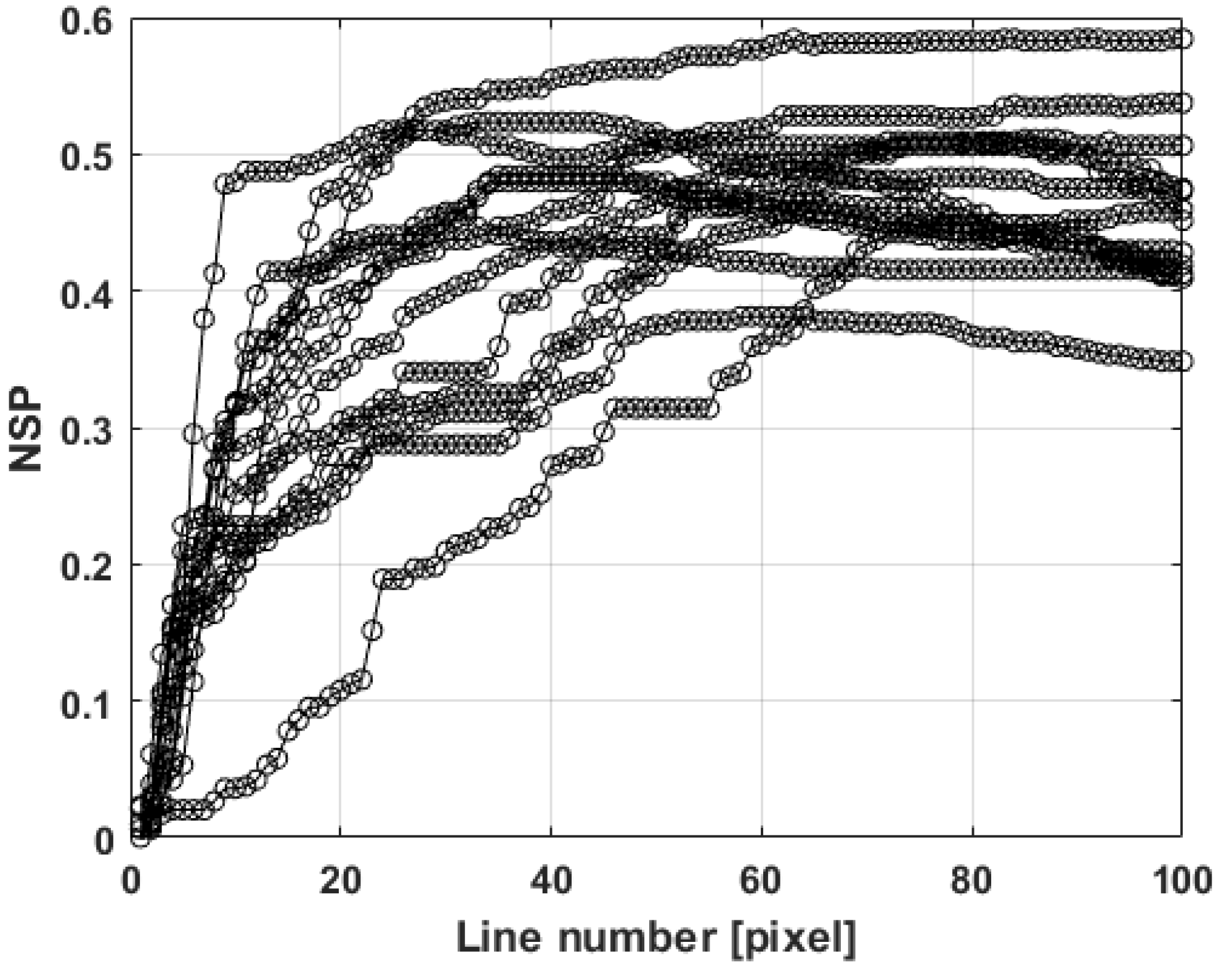

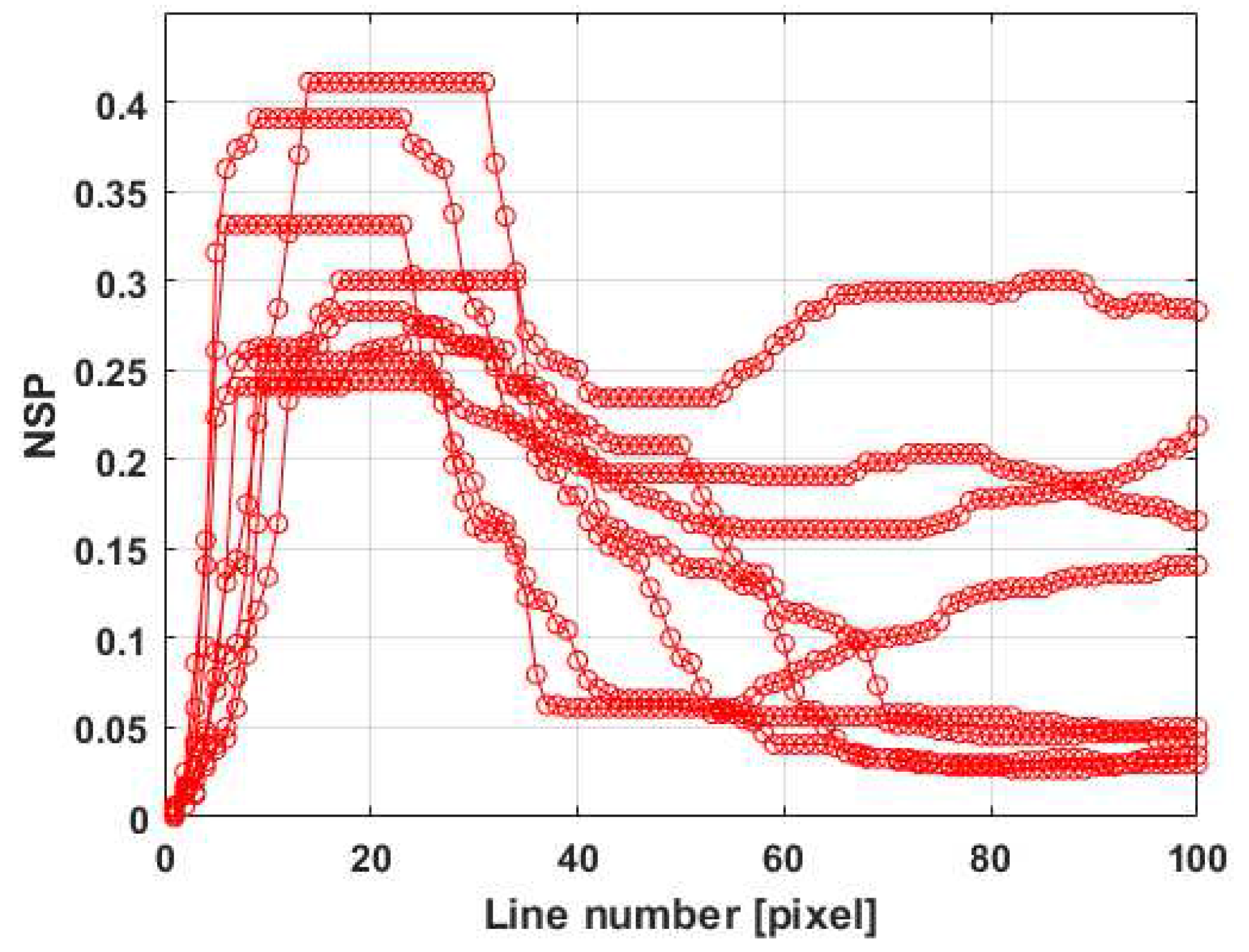

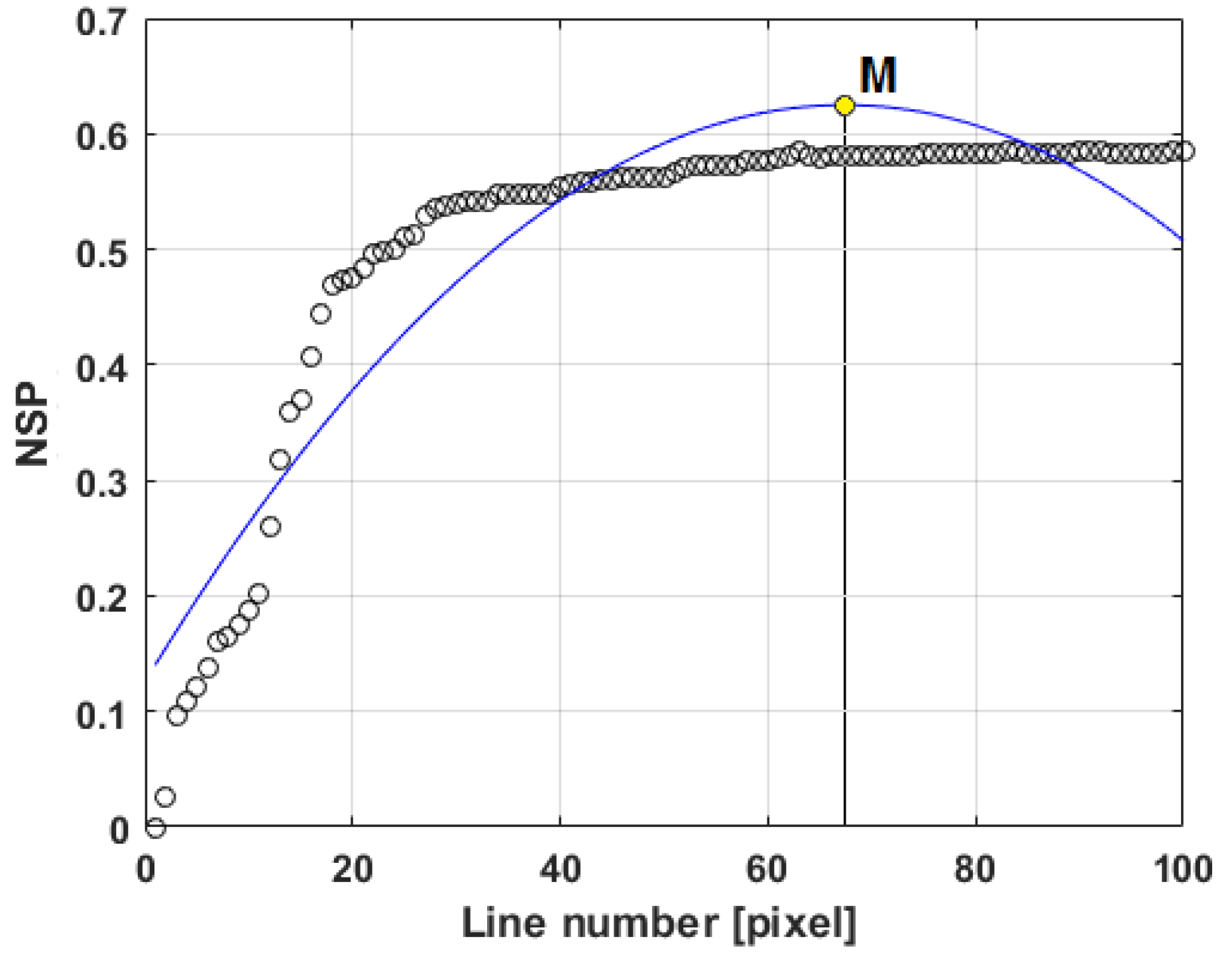

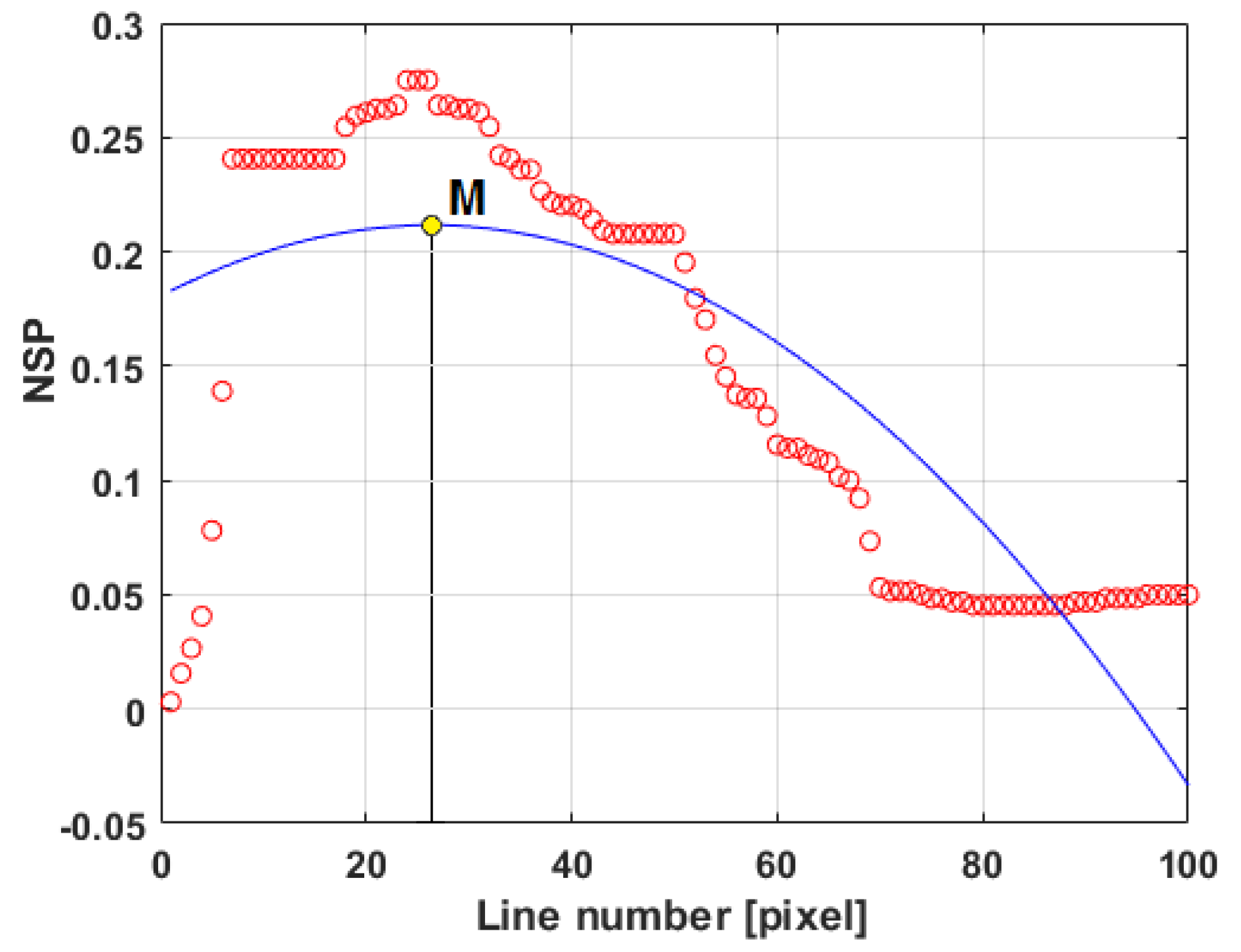

4.2. Image Classification Using One-Hidden-Layer ANN on Image Data

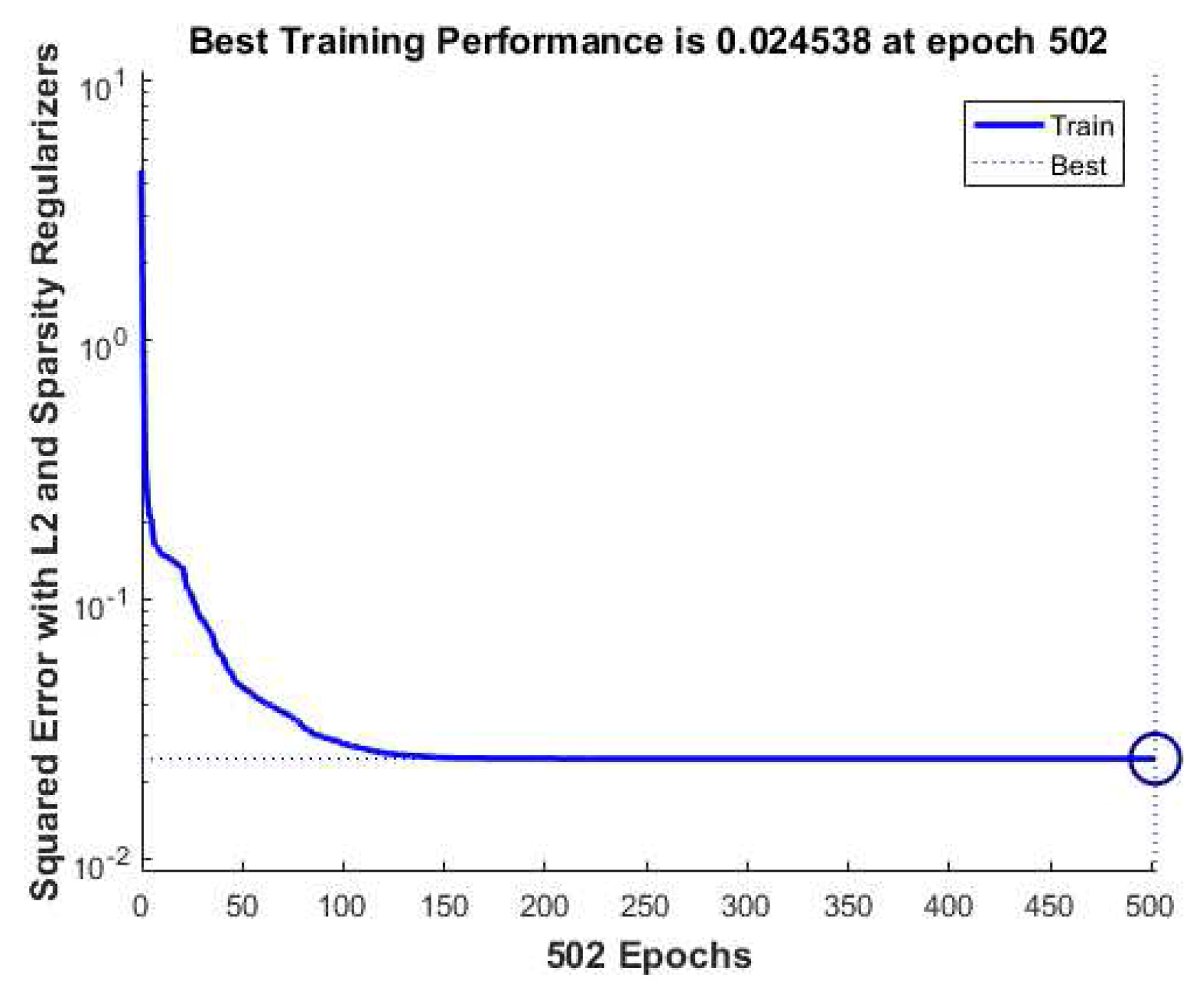

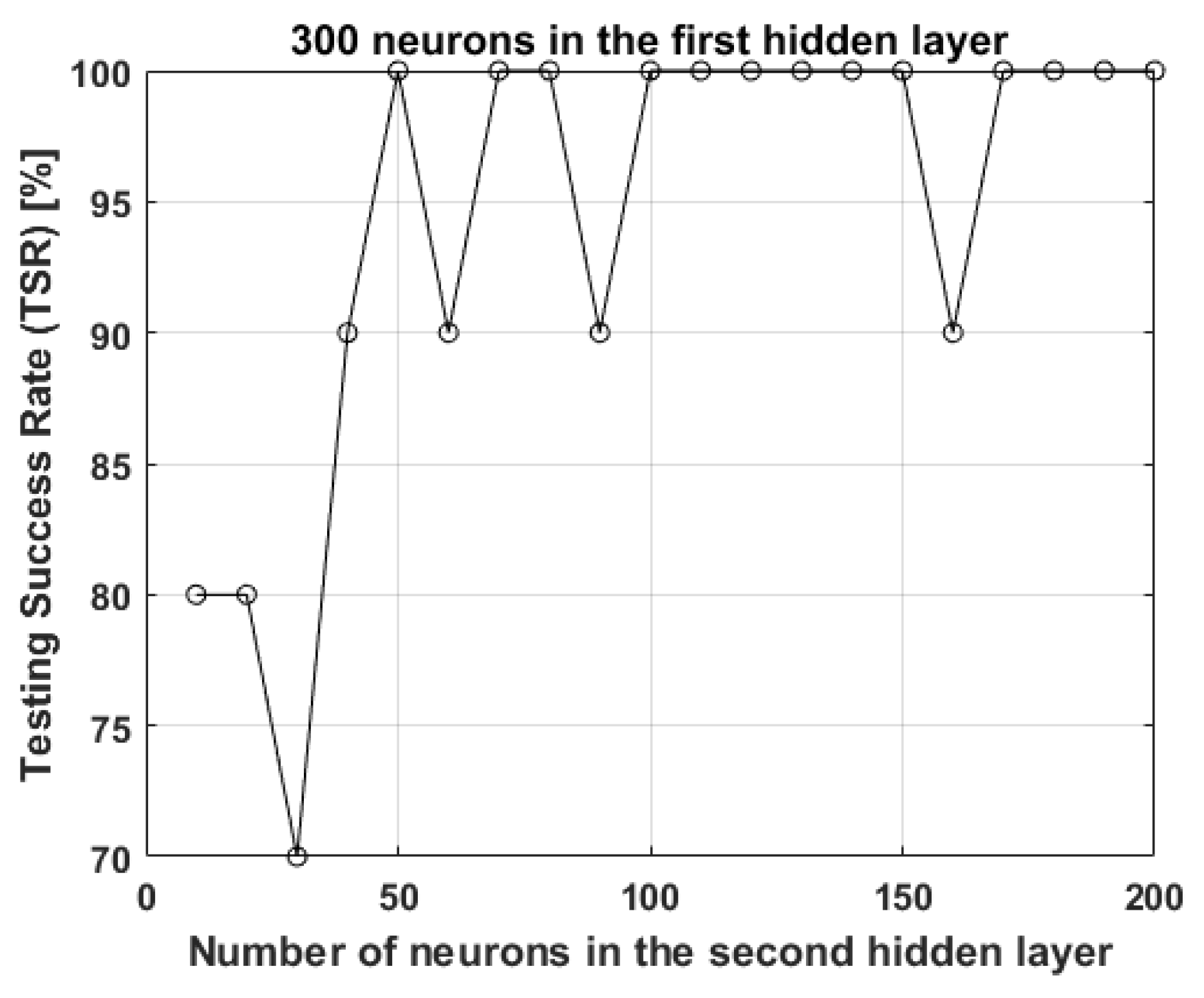

4.3. Image Classification with Autoencoders on Image Data

5. Conclusions

- What kind of image processing and classification method would be successful?

- What are the costs for such a system to be implemented?

Acknowledgments

Author Contributions

Conflicts of Interest


| Type of Network | No. of Neurons | Average No. of Training Epochs | Average Training Success Rate (%) | Average Training Time (s) |
|---|---|---|---|---|
| A. Single hidden layer on image features | 10 | 15–20 | 100 | 0.20 |
| | 200 | 25–30 | 96 | 0.25 |
| B. Single hidden layer on image data | 10 | 30–40 | 46 | 0.75 |
| | 200 | 30–40 | 42 | 12 |
| C. Two autoencoder hidden layers (L1, L2) on image data | L1, L2 | L1, L2 | L1 + L2 | L1 + L2 |
| | 10 to 140, 10 | 1000, 1000 | 15 | 280 |
| | 150 to 200, 10 | 1000, 1000 | 70 | 1900 |
| | 300, 100 to 150 | 1000, 1000 | 100 | 1900 |
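The trade-off between success rate and training time in the table above can be made explicit with a short sketch. The values are transcribed from the table; the success rate is assumed to be in percent, and the helper `fastest_at_or_above` is a hypothetical name introduced for illustration.

```python
# Rows transcribed from the table above:
# (network type, neurons, success rate in % (assumed unit), training time in s)
results = [
    ("A: single hidden layer, image features", "10", 100, 0.20),
    ("A: single hidden layer, image features", "200", 96, 0.25),
    ("B: single hidden layer, image data", "10", 46, 0.75),
    ("B: single hidden layer, image data", "200", 42, 12.0),
    ("C: two autoencoder layers, image data", "10 to 140, 10", 15, 280.0),
    ("C: two autoencoder layers, image data", "150 to 200, 10", 70, 1900.0),
    ("C: two autoencoder layers, image data", "300, 100 to 150", 100, 1900.0),
]


def fastest_at_or_above(rows, min_success):
    """Return the fastest-training row whose success rate meets the bar."""
    ok = [r for r in rows if r[2] >= min_success]
    return min(ok, key=lambda r: r[3]) if ok else None


# Fastest configuration overall that reaches a 100% success rate.
best_overall = fastest_at_or_above(results, 100)

# Same question restricted to networks fed raw image data (types B and C).
raw_image_rows = [r for r in results if "image data" in r[0]]
best_raw_image = fastest_at_or_above(raw_image_rows, 100)

print(best_overall)
print(best_raw_image)
```

On these figures, the feature-based network with 10 neurons reaches 100% in a fraction of a second, while the only raw-image configuration that reaches 100% is the autoencoder pair with 300 and 100 to 150 neurons, at roughly 1900 s of training.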

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Moldovan, O.G.; Dzitac, S.; Moga, I.; Vesselenyi, T.; Dzitac, I. Tool-Wear Analysis Using Image Processing of the Tool Flank. Symmetry 2017, 9, 296. https://doi.org/10.3390/sym9120296
