Accurate Dense Stereo Matching Based on Image Segmentation Using an Adaptive Multi-Cost Approach

Abstract

1. Introduction

2. Related Works

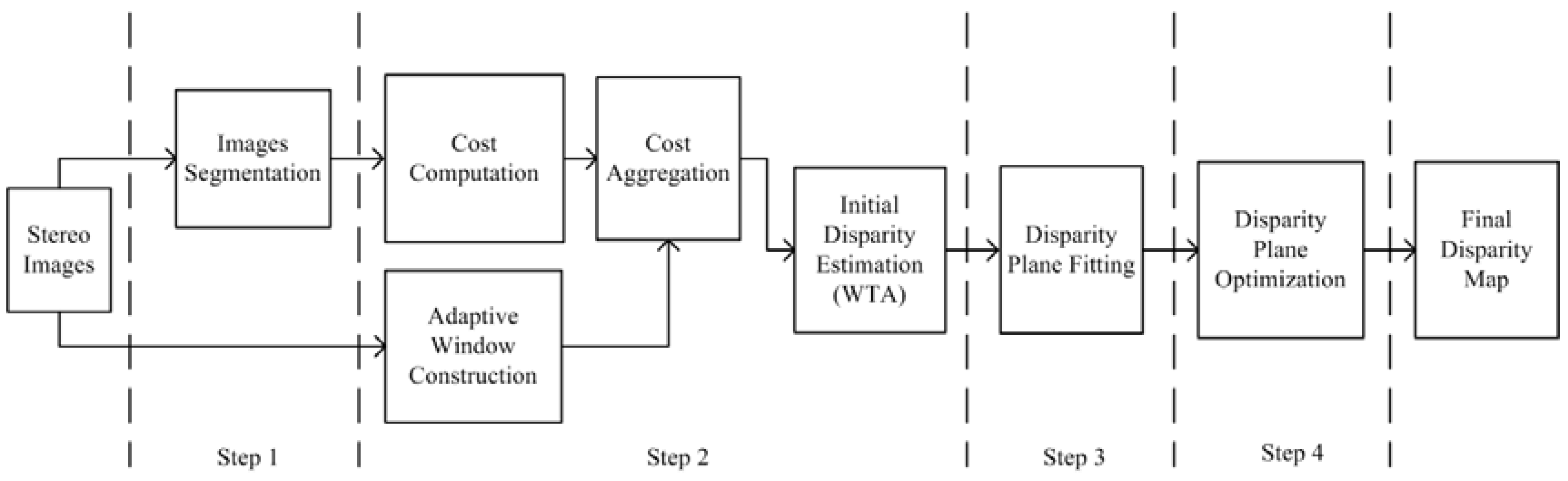

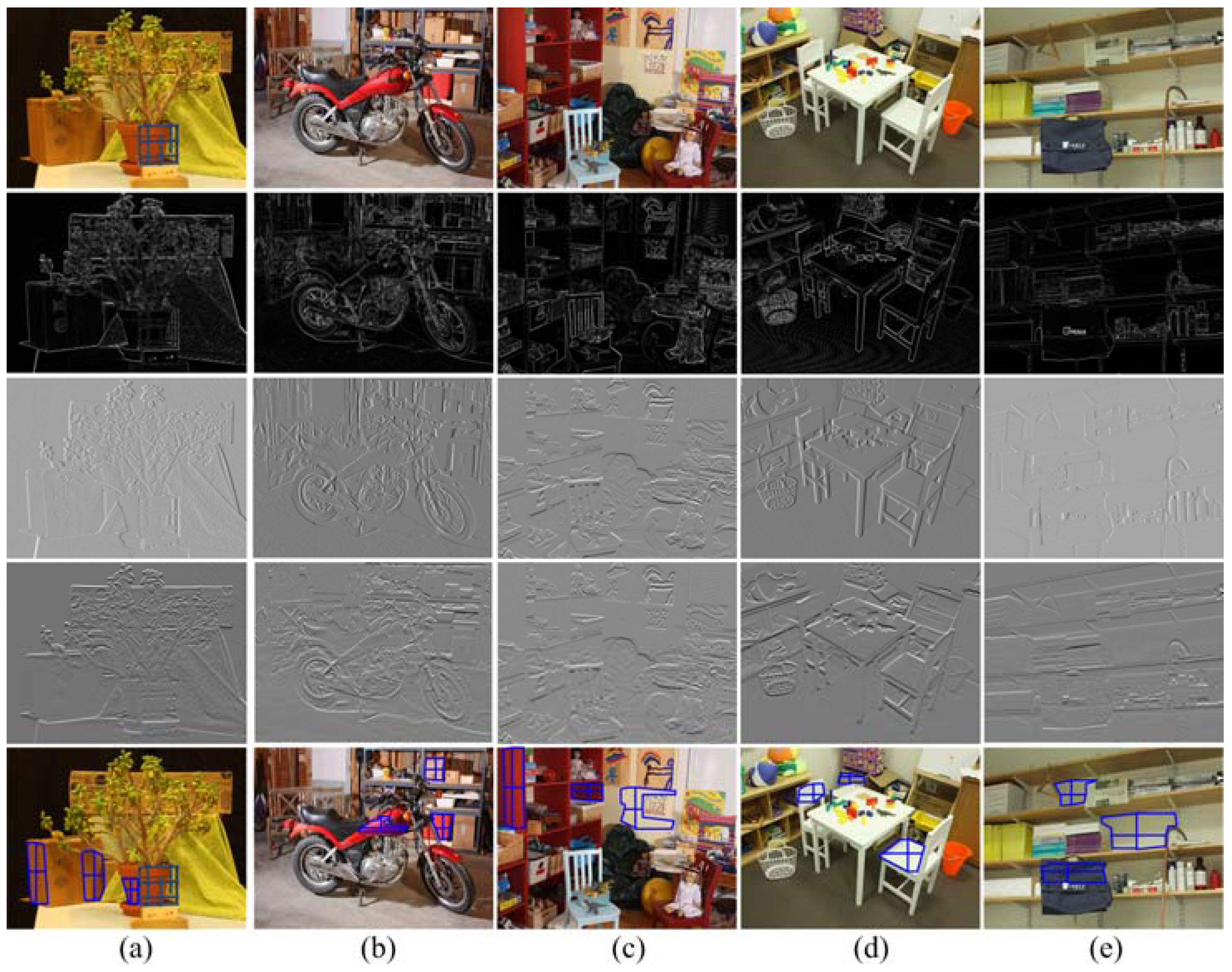

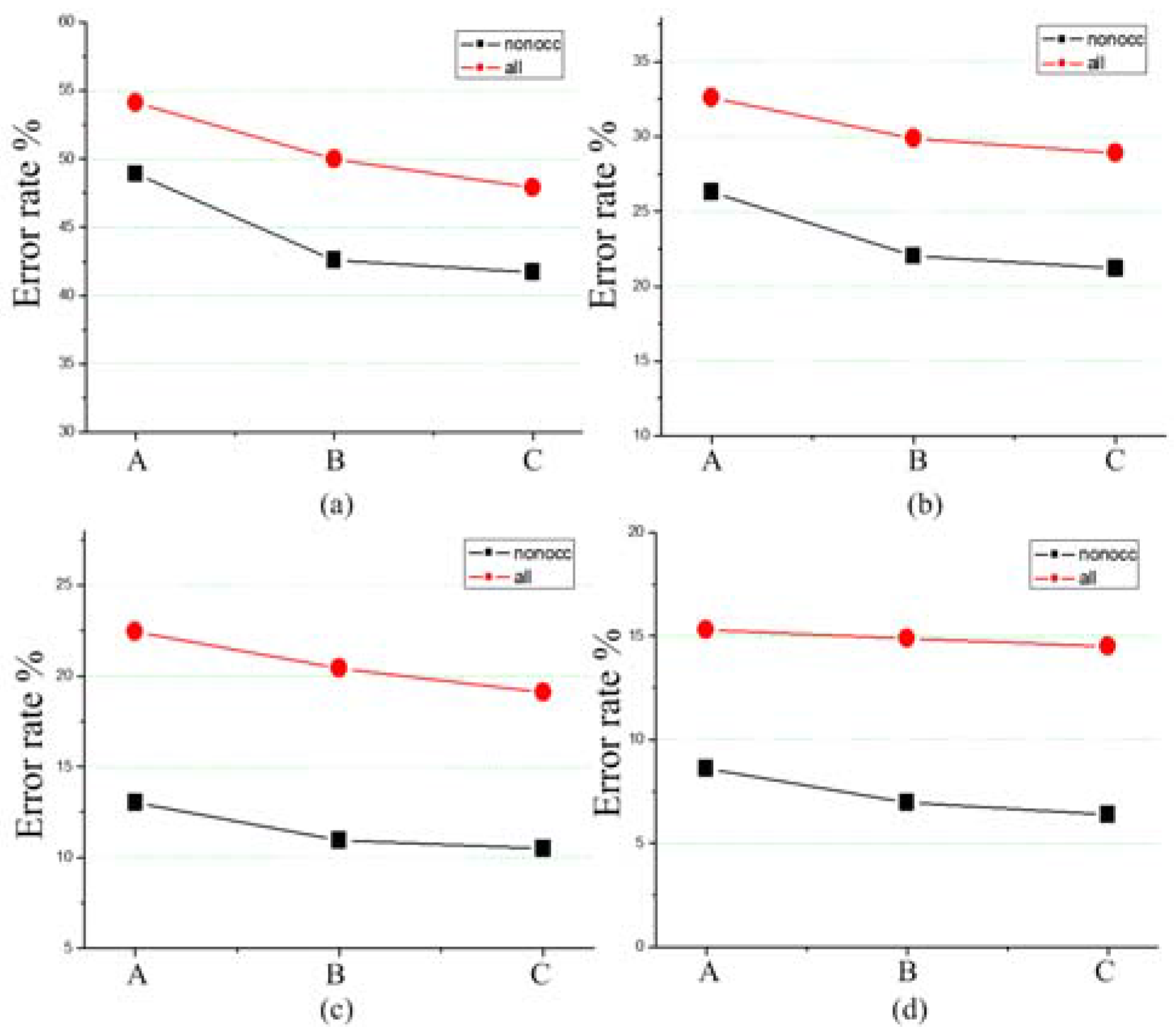

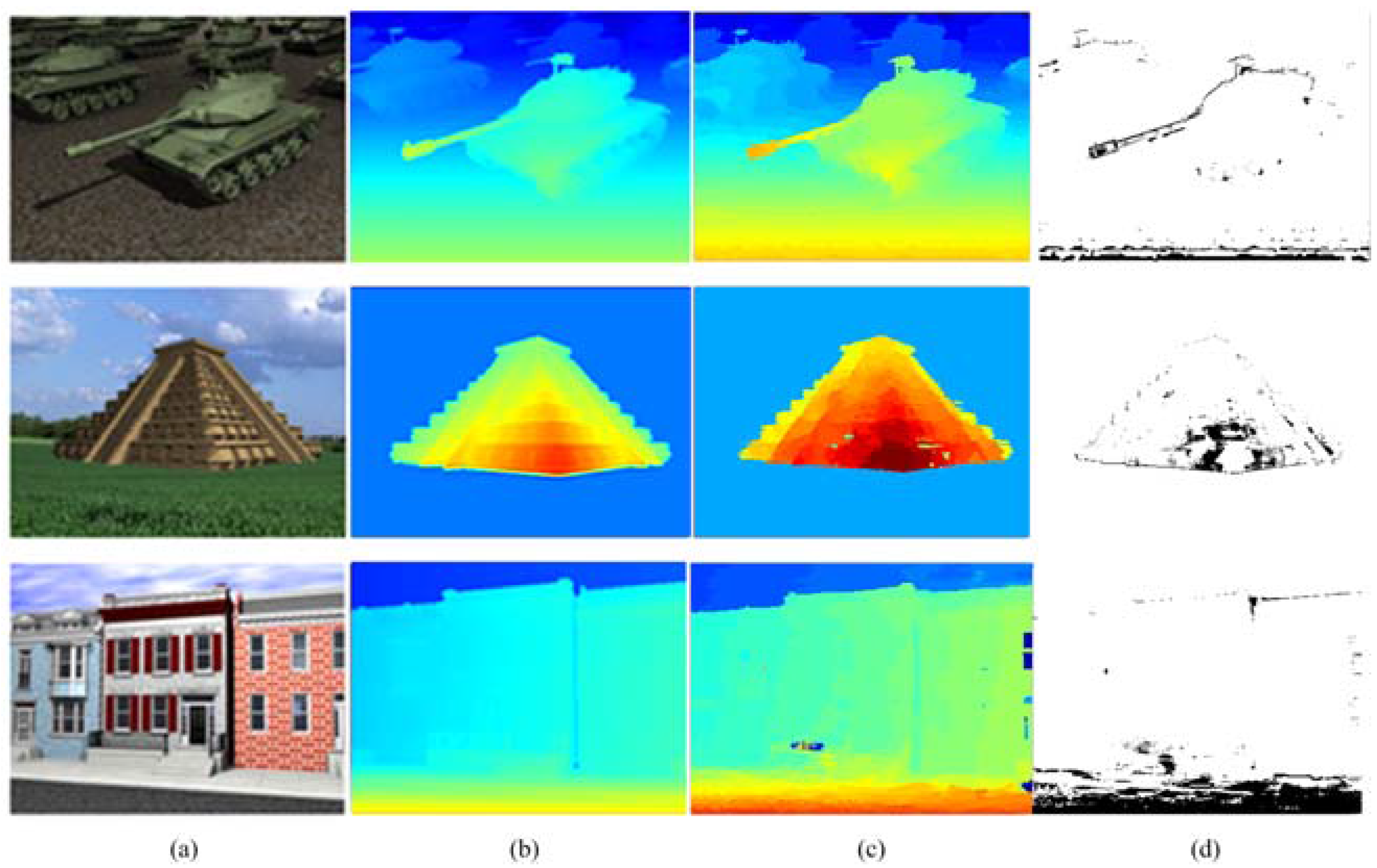

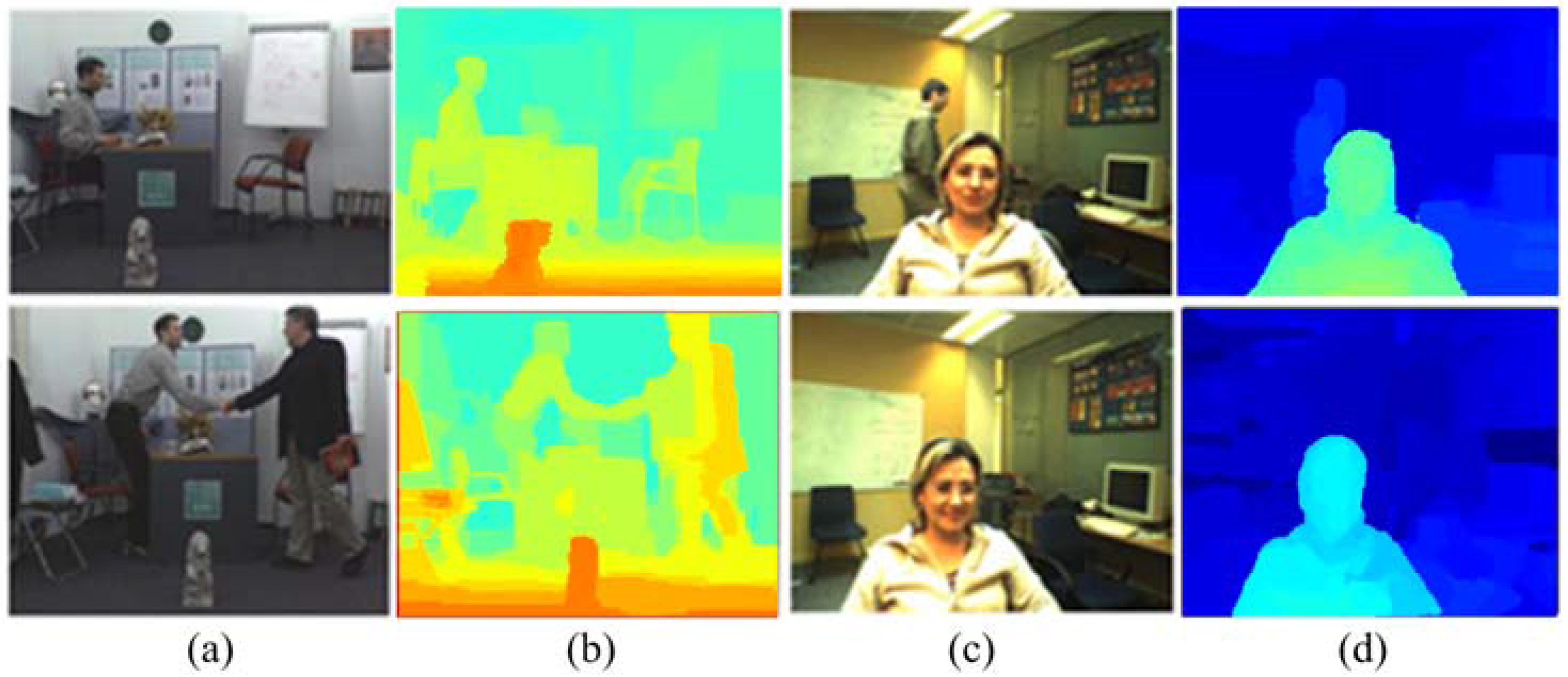

3. Stereo Matching Algorithm

3.1. Image Segmentation

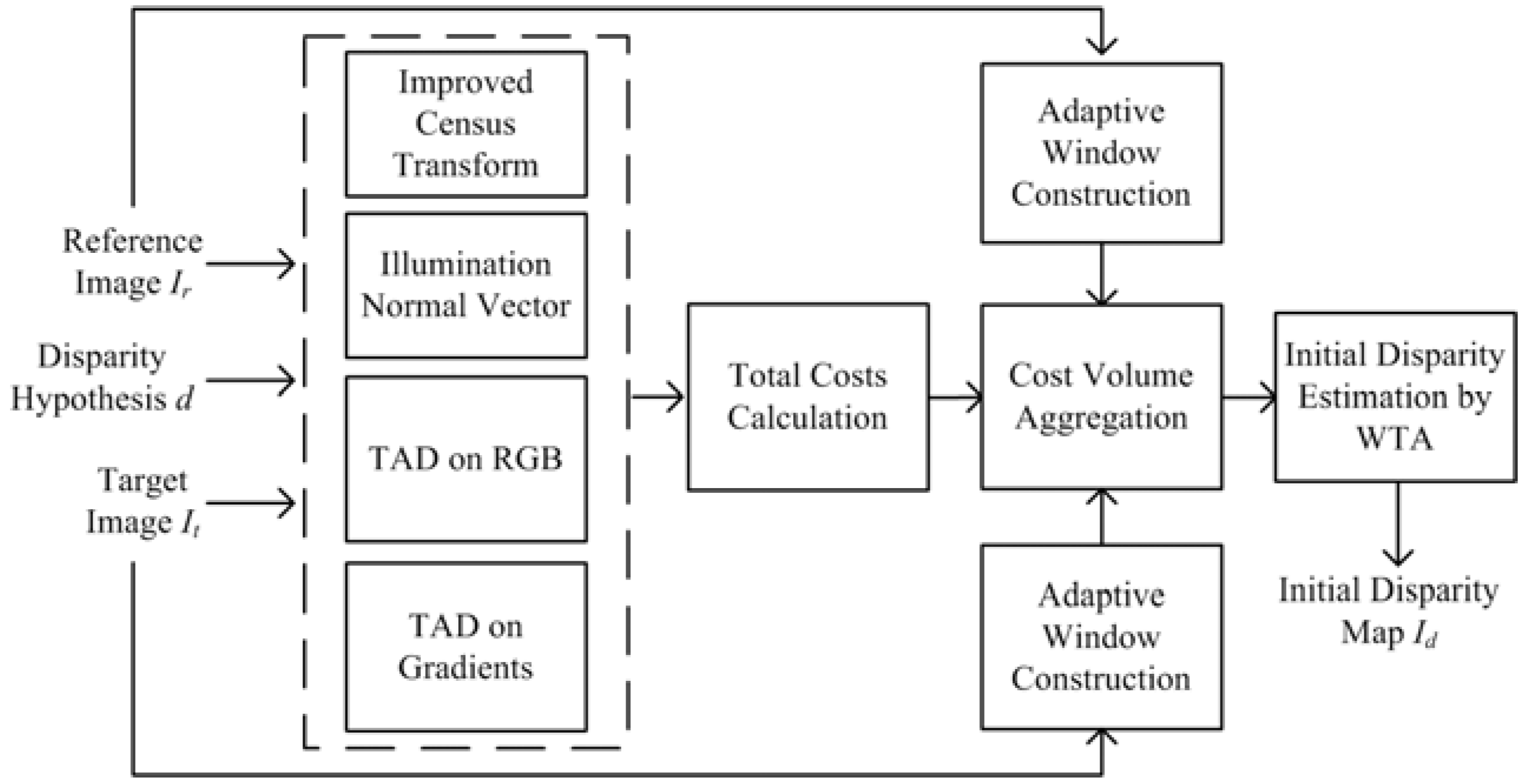

3.2. Initial Disparity Map Estimation

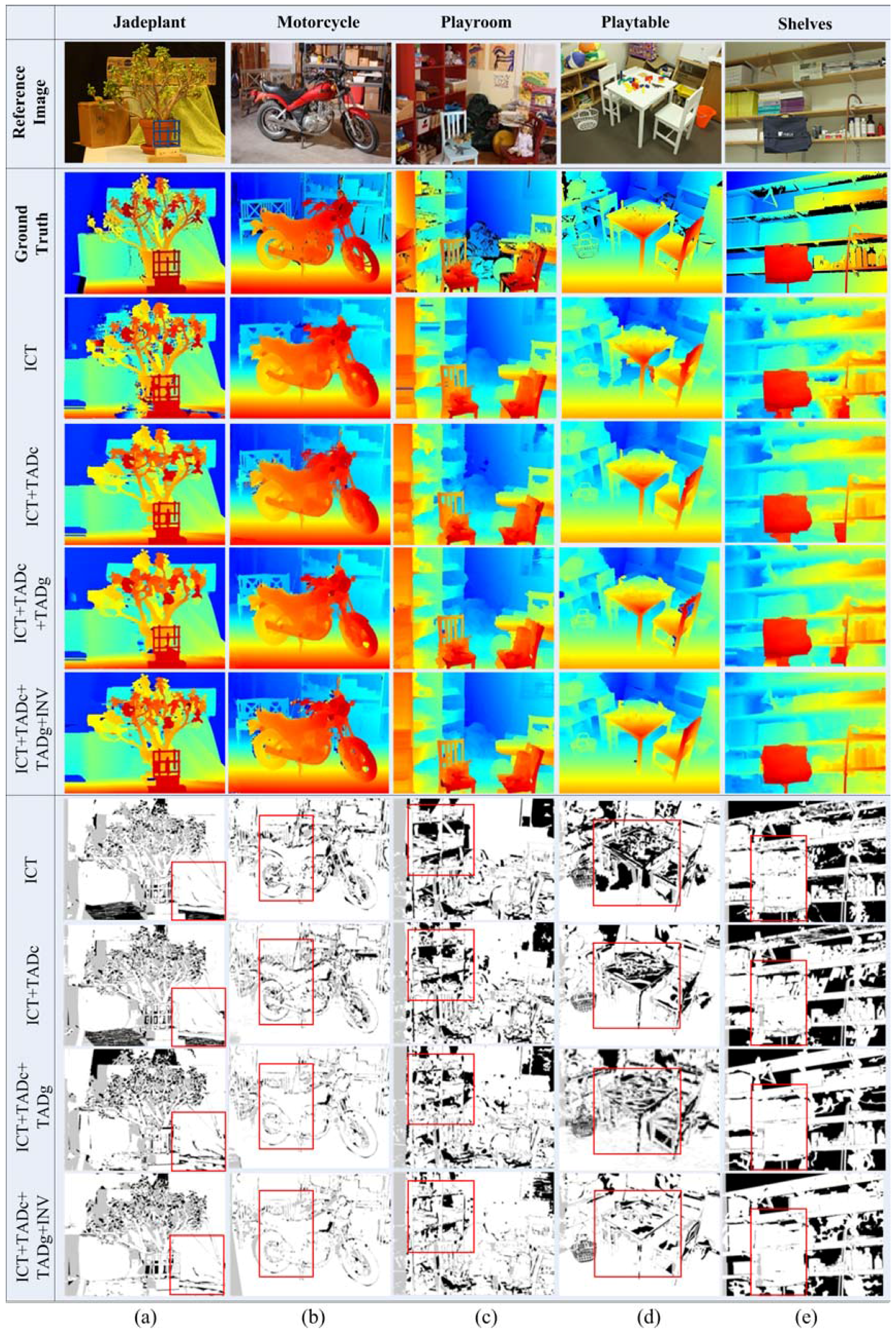

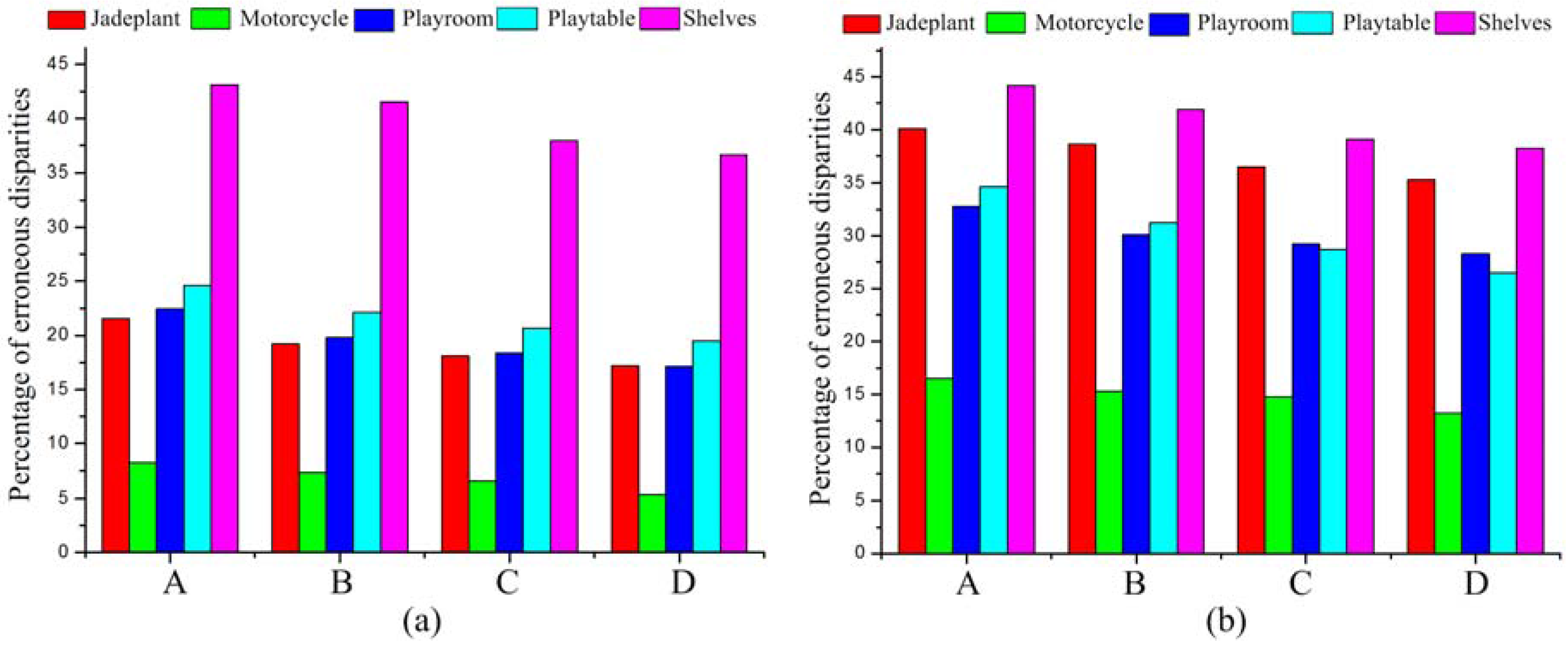

3.2.1. The Multi-Cost Function

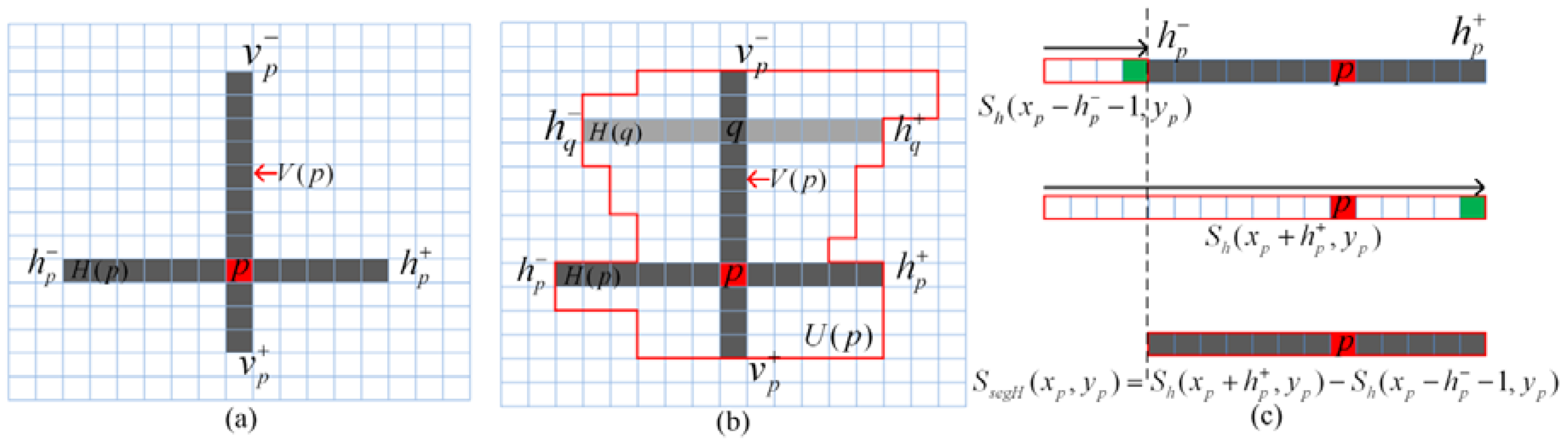

3.2.2. Cost Aggregation

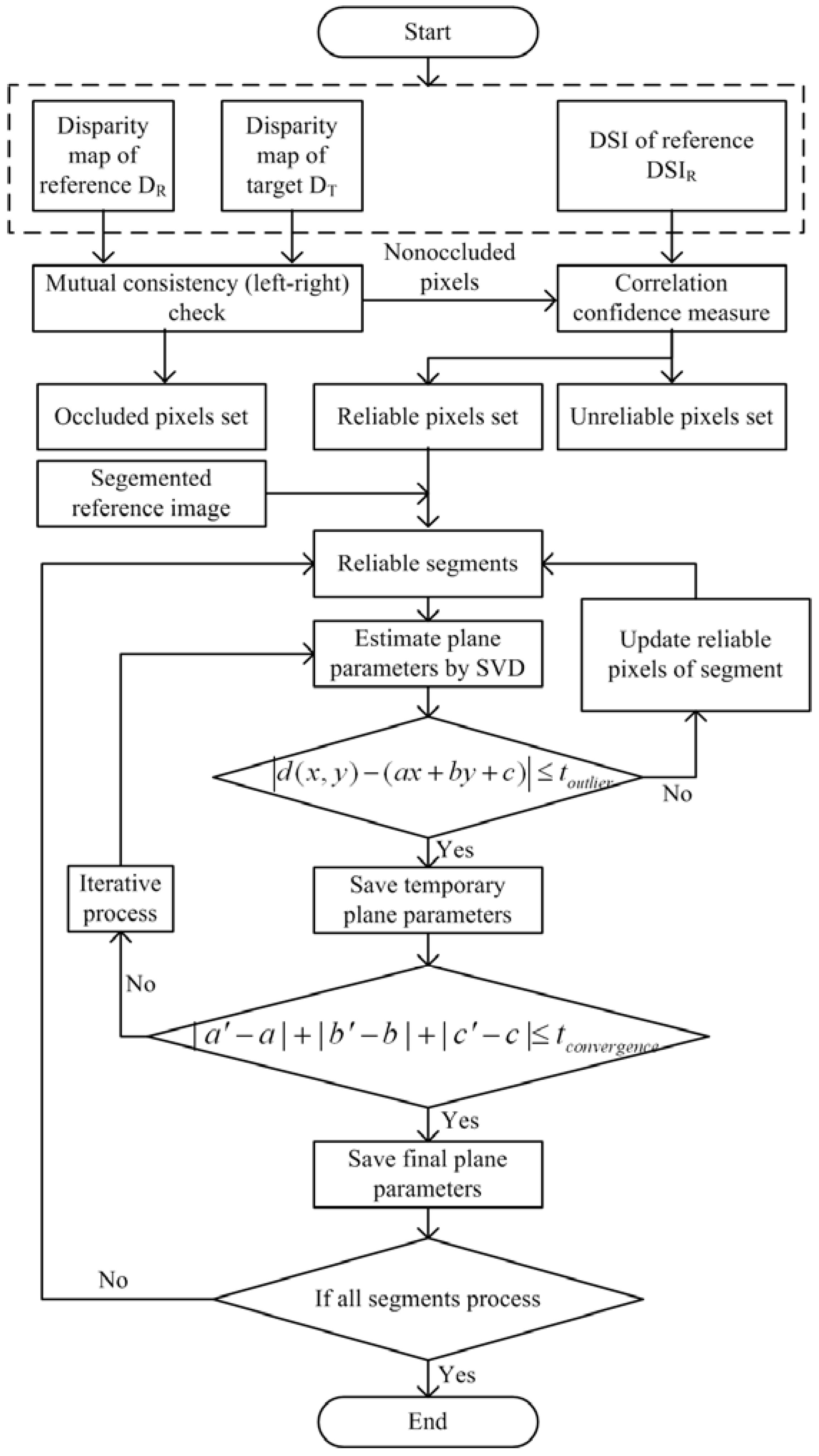

3.3. Disparity Plane Fitting

3.4. Disparity Plane Optimization by Belief Propagation

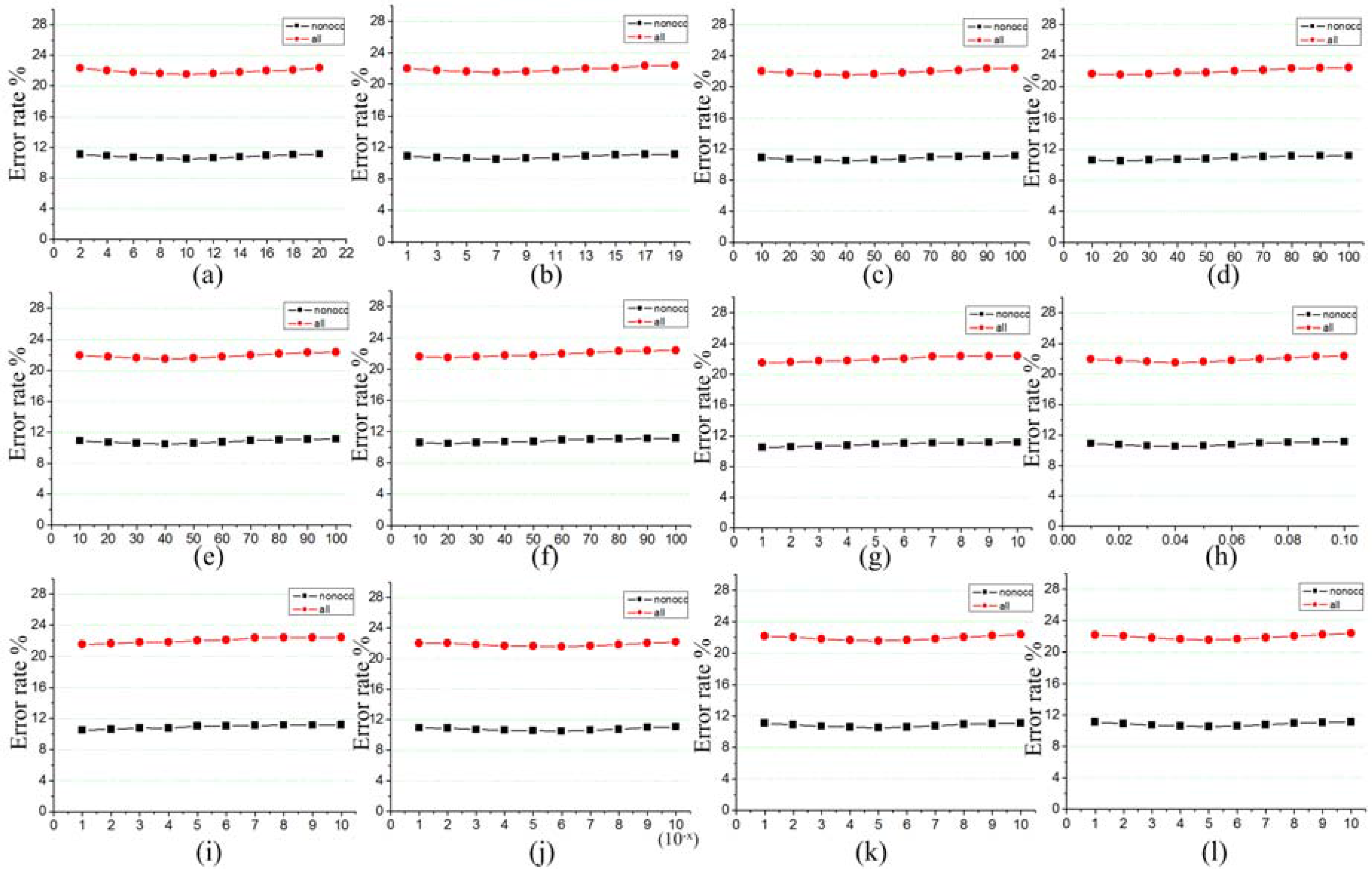

4. Experimental Results

5. Discussion and Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

References

1. Scharstein, D.; Szeliski, R. A taxonomy and evaluation of dense two-frame stereo correspondence algorithms. Int. J. Comput. Vis. 2002, 47, 7–42.

2. Pham, C.C.; Jeon, J.W. Domain transformation-based efficient cost aggregation for local stereo matching. IEEE Trans. Circuits Syst. Video Technol. 2013, 23, 1119–1130.

3. Wang, D.; Lim, K.B. Obtaining depth map from segment-based stereo matching using graph cuts. J. Vis. Commun. Image Represent. 2011, 22, 325–331.

4. Scharstein, D.; Pal, C. Learning conditional random fields for stereo. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Minneapolis, MN, USA, 17–22 June 2007; pp. 1–8.

5. Scharstein, D.; Szeliski, R. High-accuracy stereo depth maps using structured light. In Proceedings of the Computer Vision and Pattern Recognition, Madison, WI, USA, 18–20 June 2003.

6. Shan, Y.; Hao, Y.; Wang, W.; Wang, Y.; Chen, X.; Yang, H.; Luk, W. Hardware acceleration for an accurate stereo vision system using mini-census adaptive support region. ACM Trans. Embed. Comput. Syst. 2014, 13, 132.

7. Stentoumis, C.; Grammatikopoulos, L.; Kalisperakis, I.; Karras, G. On accurate dense stereo-matching using a local adaptive multi-cost approach. ISPRS J. Photogramm. Remote Sens. 2014, 91, 29–49.

8. Miron, A.; Ainouz, S.; Rogozan, A.; Bensrhair, A. A robust cost function for stereo matching of road scenes. Pattern Recognit. Lett. 2014, 38, 70–77.

9. Saygili, G.; van der Maaten, L.; Hendriks, E.A. Adaptive stereo similarity fusion using confidence measures. Comput. Vis. Image Underst. 2015, 135, 95–108.

10. Stentoumis, C.; Grammatikopoulos, L.; Kalisperakis, I.; Karras, G.; Petsa, E. Stereo matching based on census transformation of image gradients. In Proceedings of the SPIE Optical Metrology, International Society for Optics and Photonics, Munich, Germany, 21 June 2015.

11. Klaus, A.; Sormann, M.; Karner, K. Segment-based stereo matching using belief propagation and a self-adapting dissimilarity measure. In Proceedings of the IEEE 18th International Conference on Pattern Recognition (ICPR’06), Hong Kong, China, 20–24 August 2006; pp. 15–18.

12. Kordelas, G.A.; Alexiadis, D.S.; Daras, P.; Izquierdo, E. Enhanced disparity estimation in stereo images. Image Vis. Comput. 2015, 35, 31–49.

13. Wang, Z.F.; Zheng, Z.G. A region based stereo matching algorithm using cooperative optimization. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Anchorage, AK, USA, 23–28 June 2008; pp. 1–8.

14. Xu, S.; Zhang, F.; He, X.; Zhang, X. PM-PM: PatchMatch with Potts model for object segmentation and stereo matching. IEEE Trans. Image Process. 2015, 24, 2182–2196.

15. Taguchi, Y.; Wilburn, B.; Zitnick, C.L. Stereo reconstruction with mixed pixels using adaptive over-segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Anchorage, AK, USA, 23–28 June 2008; pp. 1–8.

16. Damjanović, S.; van der Heijden, F.; Spreeuwers, L.J. Local stereo matching using adaptive local segmentation. ISRN Mach. Vis. 2012, 2012, 163285.

17. Yoon, K.J.; Kweon, I.S. Adaptive support-weight approach for correspondence search. IEEE Trans. Pattern Anal. Mach. Intell. 2006, 28, 650–656.

18. Zhang, K.; Lu, J.; Lafruit, G. Cross-based local stereo matching using orthogonal integral images. IEEE Trans. Circuits Syst. Video Technol. 2009, 19, 1073–1079.

19. Veksler, O. Fast variable window for stereo correspondence using integral images. In Proceedings of the 2003 IEEE Computer Society Conference on Computer Vision and Pattern Recognition, Madison, WI, USA, 18–20 June 2003.

20. Okutomi, M.; Katayama, Y.; Oka, S. A simple stereo algorithm to recover precise object boundaries and smooth surfaces. Int. J. Comput. Vis. 2002, 47, 261–273.

21. Hosni, A.; Rhemann, C.; Bleyer, M.; Rother, C.; Gelautz, M. Fast cost-volume filtering for visual correspondence and beyond. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 504–511.

22. Gao, K.; Chen, H.; Zhao, Y.; Geng, Y.N.; Wang, G. Stereo matching algorithm based on illumination normal similarity and adaptive support weight. Opt. Eng. 2013, 52, 027201.

23. Wang, L.; Yang, R.; Gong, M.; Liao, M. Real-time stereo using approximated joint bilateral filtering and dynamic programming. J. Real-Time Image Process. 2014, 9, 447–461.

24. Taniai, T.; Matsushita, Y.; Naemura, T. Graph cut based continuous stereo matching using locally shared labels. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014; pp. 1613–1620.

25. Besse, F.; Rother, C.; Fitzgibbon, A.; Kautz, J. PMBP: PatchMatch belief propagation for correspondence field estimation. Int. J. Comput. Vis. 2014, 110, 2–13.

26. Hirschmuller, H. Stereo processing by semiglobal matching and mutual information. IEEE Trans. Pattern Anal. Mach. Intell. 2008, 30, 328–341.

27. Comaniciu, D.; Meer, P. Mean shift: A robust approach toward feature space analysis. IEEE Trans. Pattern Anal. Mach. Intell. 2002, 24, 603–619.

28. Yao, L.; Li, D.; Zhang, J.; Wang, L.H.; Zhang, M. Accurate real-time stereo correspondence using intra-and inter-scanline optimization. J. Zhejiang Univ. Sci. C 2012, 13, 472–482.

29. Scharstein, D.; Hirschmüller, H.; Kitajima, Y.; Krathwohl, G.; Nešić, N.; Wang, X.; Westling, P. High-resolution stereo datasets with subpixel-accurate ground truth. In Proceedings of the German Conference on Pattern Recognition, Münster, Germany, 2–5 September 2014; pp. 31–42.

30. Hirschmuller, H.; Scharstein, D. Evaluation of cost functions for stereo matching. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Minneapolis, MN, USA, 17–22 June 2007; pp. 1–8.

31. Richardt, C.; Orr, D.; Davies, I.; Criminisi, A.; Dodgson, N.A. Real-time spatiotemporal stereo matching using the dual-cross-bilateral grid. In Proceedings of the European Conference on Computer Vision, Crete, Greece, 5–11 September 2010; pp. 510–523.

32. Park, M.G.; Yoon, K.J. As-planar-as-possible depth map estimation. IEEE Trans. Pattern Anal. Mach. Intell. 2016. submitted.

33. Li, L.; Zhang, S.; Yu, X.; Zhang, L. PMSC: PatchMatch-based superpixel cut for accurate stereo matching. IEEE Trans. Circuits Syst. Video Technol. 2016.

34. Zhang, C.; Li, Z.; Cheng, Y.; Cai, R.; Chao, H.; Rui, Y. MeshStereo: A global stereo model with mesh alignment regularization for view interpolation. In Proceedings of the IEEE International Conference on Computer Vision, Santiago, Chile, 13–16 December 2015; pp. 2057–2065.

35. Kim, K.R.; Kim, C.S. Adaptive smoothness constraints for efficient stereo matching using texture and edge information. In Proceedings of the Image Processing (ICIP), Phoenix, AZ, USA, 25–28 September 2016; pp. 3429–3433.

36. Zbontar, J.; LeCun, Y. Stereo matching by training a convolutional neural network to compare image patches. J. Mach. Learn. Res. 2016, 17, 1–32.

37. Barron, J.T.; Poole, B. The fast bilateral solver. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 11–14 October 2016; pp. 617–632.

38. Mozerov, M.G.; van de Weijer, J. Accurate stereo matching by two-step energy minimization. IEEE Trans. Image Process. 2015, 24, 1153–1163.

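| Parameter Name | Purpose | Algorithm Step | Parameter Value |
|---|---|---|---|
| Spatial bandwidth $h_s$ | Image segmentation | Step (1) | 10 |
| Spectral bandwidth $h_r$ | Image segmentation | Step (1) | 7 |
| Gamma | Matching cost computation | Step (2) | 40 |
| Gamma | Matching cost computation | Step (2) | 20 |
| Gamma | Matching cost computation | Step (2) | 40 |
| Gamma | Matching cost computation | Step (2) | 20 |
| Threshold | Outlier filtering and disparity plane parameter fitting | Step (3) | 1 |
| Threshold | Outlier filtering and disparity plane parameter fitting | Step (3) | 0.04 |
| Threshold | Outlier filtering and disparity plane parameter fitting | Step (3) | 1 |
| Threshold | Outlier filtering and disparity plane parameter fitting | Step (3) | $10^{-6}$ |
| Smoothness penalty | Smoothness and occlusion penalty | Step (4) | 5 |
| Occlusion penalty | Smoothness and occlusion penalty | Step (4) | 5 |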
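
Step (3) in the parameter table pairs outlier filtering with disparity plane parameter fitting. As a rough illustration only, the sketch below fits a plane d = a·x + b·y + c to the pixels of one segment by least squares, discards pixels whose residual exceeds a threshold, and refits until the parameters stop changing. The function names (`fit_plane`, `robust_plane`), the mapping of the table's values 1 and 10^-6 to an outlier threshold and a convergence tolerance, and the iteration cap are all assumptions made for this example; the table does not state which threshold plays which role, and this is not claimed to be the authors' procedure.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]   # (x, y, disparity) of one pixel
Plane = Tuple[float, float, float]   # (a, b, c) in d = a*x + b*y + c


def _det3(m: Sequence[Sequence[float]]) -> float:
    """Determinant of a 3x3 matrix."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_plane(points: List[Point]) -> Plane:
    """Least-squares plane through the points, via the 3x3 normal equations."""
    n = float(len(points))
    sx = sum(x for x, _, _ in points)
    sy = sum(y for _, y, _ in points)
    sd = sum(d for _, _, d in points)
    sxx = sum(x * x for x, _, _ in points)
    syy = sum(y * y for _, y, _ in points)
    sxy = sum(x * y for x, y, _ in points)
    sxd = sum(x * d for x, _, d in points)
    syd = sum(y * d for _, y, d in points)
    m = [[sxx, sxy, sx], [sxy, syy, sy], [sx, sy, n]]
    v = [sxd, syd, sd]
    det = _det3(m)
    if abs(det) < 1e-12:
        raise ValueError("degenerate segment: too few or collinear pixels")
    # Cramer's rule: replace column k of m with v to solve for parameter k.
    solution = []
    for k in range(3):
        mk = [[v[i] if j == k else m[i][j] for j in range(3)] for i in range(3)]
        solution.append(_det3(mk) / det)
    return (solution[0], solution[1], solution[2])


def robust_plane(points: List[Point],
                 outlier_thresh: float = 1.0,   # assumed role of a table value
                 tol: float = 1e-6,             # assumed role of a table value
                 max_iters: int = 20) -> Plane:  # hypothetical iteration cap
    """Refit the plane on inliers until the parameters change by less than tol."""
    plane = fit_plane(points)
    for _ in range(max_iters):
        a, b, c = plane
        inliers = [(x, y, d) for x, y, d in points
                   if abs(a * x + b * y + c - d) <= outlier_thresh]
        if len(inliers) < 3:
            break  # not enough support to refit; keep the current plane
        new_plane = fit_plane(inliers)
        change = max(abs(p - q) for p, q in zip(new_plane, plane))
        plane = new_plane
        if change < tol:
            break
    return plane
```

Calling `robust_plane` on a list of `(x, y, disparity)` triples from one segment returns the plane parameters `(a, b, c)`. Hard-threshold refitting of this kind depends on the first fit being reasonable, so it can fail when outliers dominate a segment; a median-based or voting-based initial estimate would be more robust in that case.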

| Name | Res | Avg | Adiron | ArtL | Jadepl | Motor | MotorE | Piano | PianoL | Pipes | Playrm | Playt | PlaytP | Recyc | Shelvs | Teddy | Vintge |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| APAP-Stereo [32] | H | 7.78 | 3.04 | 7.22 | 13.5 | 4.39 | 4.68 | 10.7 | 16.1 | 5.35 | 10.1 | 8.60 | 8.11 | 7.70 | 12.2 | 5.16 | 7.97 |
| PMSC [33] | H | 8.35 | 1.46 | 4.44 | 11.2 | 3.68 | 4.07 | 11.9 | 18.2 | 5.25 | 12.6 | 8.03 | 6.89 | 7.58 | 31.6 | 3.77 | 17.9 |
| MeshStereoExt [34] | H | 9.51 | 3.53 | 6.76 | 18.1 | 5.30 | 5.88 | 8.80 | 13.8 | 8.10 | 11.1 | 8.87 | 8.33 | 10.5 | 31.2 | 4.96 | 12.2 |
| MCCNN_Layout | H | 9.54 | 3.49 | 7.97 | 14.0 | 3.91 | 4.23 | 12.6 | 15.6 | 4.56 | 12.3 | 14.9 | 12.9 | 7.79 | 24.9 | 5.20 | 17.6 |
| NTDE [35] | H | 10.1 | 4.54 | 7.00 | 15.7 | 3.97 | 4.37 | 13.3 | 19.3 | 5.12 | 14.4 | 12.1 | 11.7 | 8.35 | 33.5 | 3.75 | 17.8 |
| MC-CNN-acrt [36] | H | 10.3 | 3.33 | 8.04 | 16.1 | 3.66 | 3.76 | 12.5 | 18.5 | 4.22 | 14.6 | 15.1 | 13.3 | 6.92 | 30.5 | 4.65 | 24.8 |
| LPU | H | 10.4 | 3.17 | 6.83 | 11.5 | 5.8 | 6.35 | 13.5 | 26 | 7.4 | 15.3 | 9.63 | 6.48 | 10.7 | 35.9 | 4.19 | 21.6 |
| MC-CNN+RBS [37] | H | 10.9 | 3.85 | 10 | 18.6 | 4.17 | 4.31 | 12.6 | 17.6 | 7.33 | 14.8 | 15.6 | 13.3 | 7.32 | 30.1 | 5.02 | 22.2 |
| SGM [26] | F | 22.1 | 28.4 | 6.52 | 20.1 | 13.9 | 11.7 | 19.7 | 33.2 | 15.5 | 30 | 58.3 | 18.5 | 23.8 | 49.5 | 7.38 | 49.9 |
| TSGO [38] | F | 31.3 | 27.3 | 12.3 | 53.1 | 23.5 | 25.7 | 33.4 | 54.5 | 22.5 | 49.6 | 45 | 27 | 24.2 | 52.2 | 13.3 | 57.5 |
| Our method | Q | 33.9 | 36.5 | 20.6 | 35.7 | 27.6 | 30.5 | 38.8 | 59 | 26.6 | 46.8 | 56.9 | 31.8 | 29.6 | 53.3 | 12.2 | 52.8 |

| Name | Res | Avg | Austr | AustrP | Bicyc2 | Class | ClassE | Compu | Crusa | CrusaP | Djemb | DjembL | Hoops | Livgrm | Nkuba | Plants | Stairs |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| PMSC [33] | H | 6.87 | 3.46 | 2.68 | 6.19 | 2.54 | 6.92 | 6.54 | 3.96 | 4.04 | 2.37 | 13.1 | 12.3 | 12.2 | 16.2 | 5.88 | 10.8 |
| MeshStereoExt [34] | H | 7.29 | 4.41 | 3.98 | 5.4 | 3.17 | 10 | 8.89 | 4.62 | 4.77 | 3.49 | 12.7 | 12.4 | 10.4 | 14.5 | 7.8 | 8.85 |
| APAP-Stereo [32] | H | 7.46 | 5.43 | 4.91 | 5.11 | 5.17 | 21.6 | 9.5 | 4.31 | 4.23 | 3.24 | 14.3 | 9.78 | 7.32 | 13.4 | 6.3 | 8.46 |
| NTDE [35] | H | 7.62 | 5.72 | 4.36 | 5.92 | 2.83 | 10.4 | 8.02 | 5.3 | 5.54 | 2.4 | 13.5 | 14.1 | 12.6 | 13.9 | 6.39 | 12.2 |
| MC-CNN-acrt [36] | H | 8.29 | 5.59 | 4.55 | 5.96 | 2.83 | 11.4 | 8.44 | 8.32 | 8.89 | 2.71 | 16.3 | 14.1 | 13.2 | 13 | 6.4 | 11.1 |
| MC-CNN+RBS [37] | H | 8.62 | 6.05 | 5.16 | 6.24 | 3.27 | 11.1 | 8.91 | 8.87 | 9.83 | 3.21 | 15.1 | 15.9 | 12.8 | 13.5 | 7.04 | 9.99 |
| MCCNN_Layout | H | 9.16 | 5.53 | 5.63 | 5.06 | 3.59 | 12.6 | 9.97 | 7.53 | 8.86 | 5.79 | 23 | 13.6 | 15 | 14.7 | 5.85 | 10.4 |
| LPU | H | 10.5 | 11.4 | 3.18 | 8.1 | 6.08 | 20.9 | 9.84 | 6.94 | 4 | 4.04 | 33.9 | 16.9 | 15.2 | 17.8 | 9.12 | 11.6 |
| SGM [26] | F | 25.3 | 45.1 | 4.33 | 6.87 | 32.2 | 50 | 13 | 48.1 | 18.3 | 7.66 | 29.6 | 36.1 | 31.2 | 24.2 | 24.5 | 50.2 |
| Our method | Q | 38.7 | 40.4 | 20.3 | 27.3 | 35.1 | 55.9 | 22.3 | 56.1 | 50.9 | 24.2 | 58 | 56.3 | 36.5 | 32.1 | 38.7 | 69.7 |
| TSGO [38] | F | 39.1 | 34.1 | 16.9 | 20 | 43.3 | 55.4 | 14.3 | 54.1 | 49.2 | 33.9 | 66.2 | 45.9 | 39.8 | 42.6 | 47.2 | 52.6 |

© 2016 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC-BY) license (http://creativecommons.org/licenses/by/4.0/).


Ma, N.; Men, Y.; Men, C.; Li, X. Accurate Dense Stereo Matching Based on Image Segmentation Using an Adaptive Multi-Cost Approach. Symmetry 2016, 8, 159. https://doi.org/10.3390/sym8120159
