1. Introduction

Metaheuristic algorithms are algorithmic frameworks designed to address complex optimization problems that often surpass the capabilities of traditional methods. They offer distinct advantages in large-scale, highly complex, and strongly nonlinear search spaces, where conventional optimization techniques frequently struggle due to prohibitive computational complexity [1]. As high-level strategies, metaheuristics guide heuristic searches to efficiently explore and exploit solution landscapes, aiming to identify globally optimal or near-optimal solutions within feasible timeframes. Most draw inspiration from natural processes, providing robust mechanisms for navigating vast and intricate solution spaces [2].

Metaheuristic techniques span a wide range of approaches, from those inspired by natural phenomena and psychological processes to human-engineered systems [3]. Prominent categories include evolutionary algorithms (EAs) [4], such as differential evolution (DE) [5]; swarm intelligence (SI) [6], e.g., particle swarm optimization (PSO) [7] and ant colony optimization (ACO) [8]; and physics-based methods like the gravitational search algorithm (GSA) [9]. All operate on iterative refinement: a set of candidate solutions converges toward optimality through processes such as selection, crossover, mutation, movement, and position updates [10].

These techniques find applications across diverse domains, including engineering design optimization [3], image segmentation, path planning, task scheduling, machine learning, and network design [11,12,13,14,15].

However, metaheuristics face inherent challenges: the risk of premature convergence, the need to balance exploration and exploitation, and the burden of parameter tuning. Additionally, the “No Free Lunch” theorem [16] establishes that no single algorithm universally outperforms all others across all problems. Thus, selecting an appropriate method depends on the problem’s specific nature and requirements, which motivates the development of new algorithms to tackle diverse optimization challenges in engineering and related fields [17].

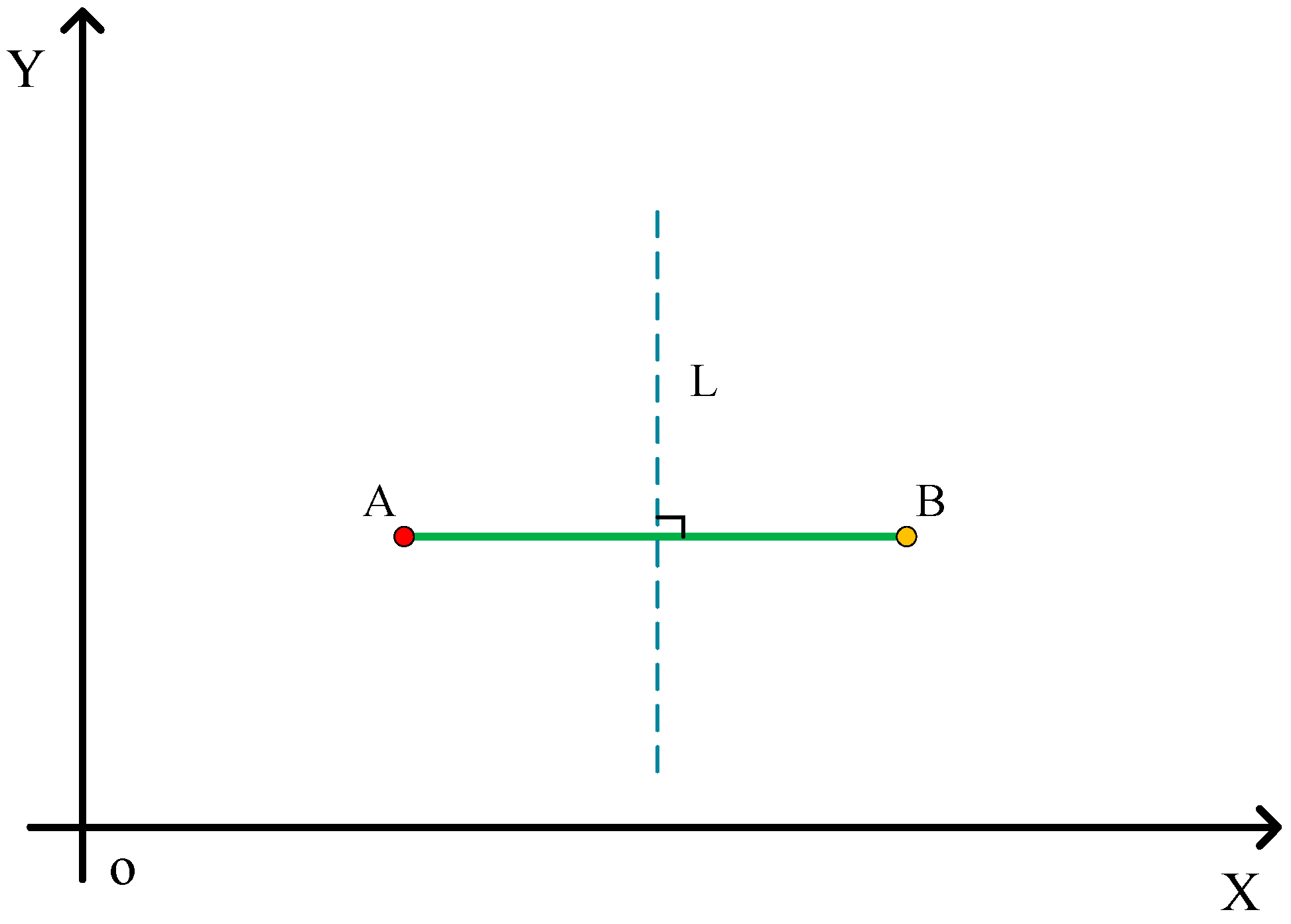

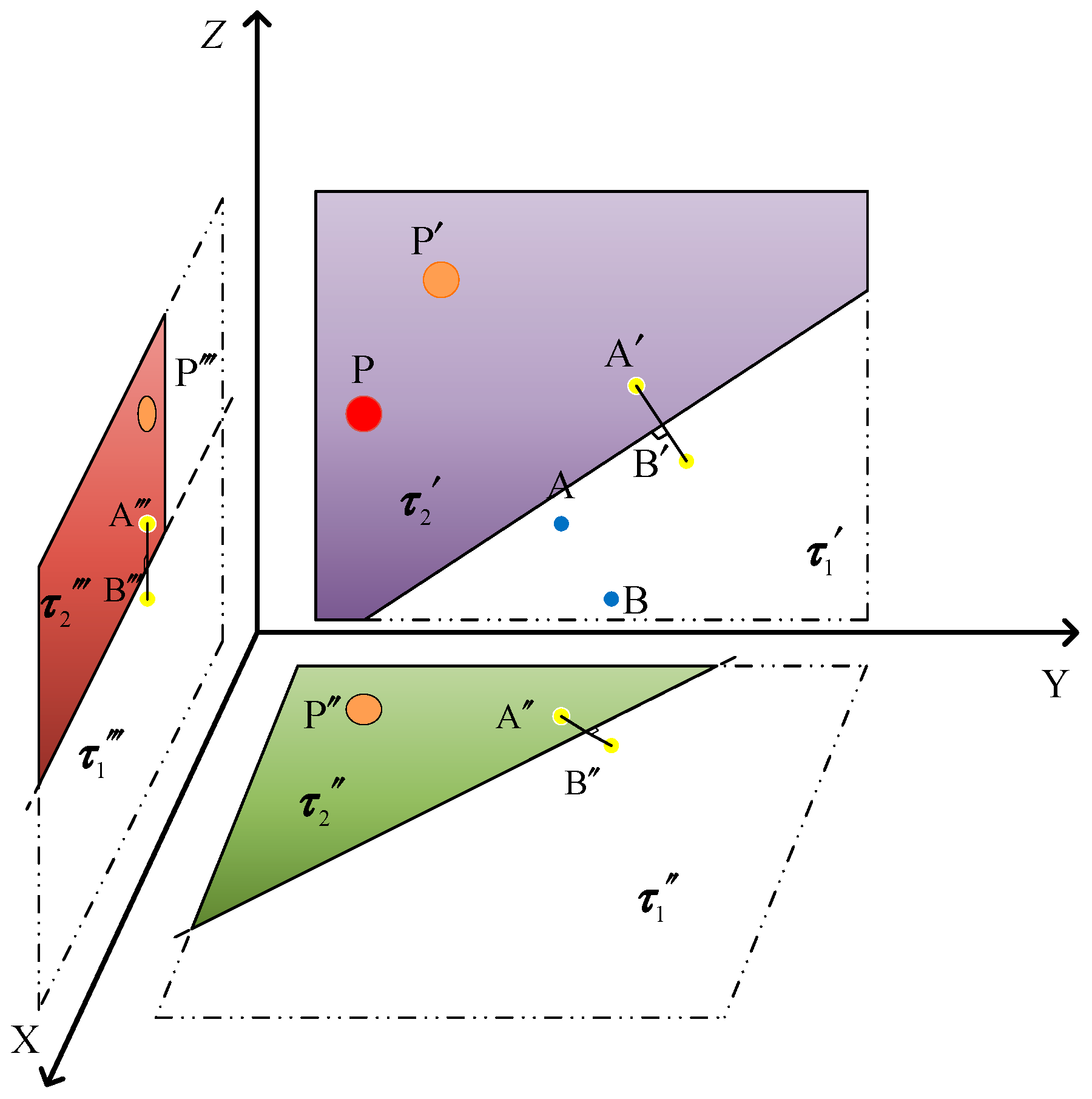

This paper proposes a novel mathematics-inspired optimization algorithm, the Perpendicular Bisector Optimization Algorithm (PBOA). A perpendicular bisector is defined as a line perpendicular to a line segment and passing through its midpoint. Its core property, that any point on the bisector is equidistant from the two endpoints of the segment, is a fundamental conclusion in Euclidean geometry, provable via the theory of congruent triangles. This property has been validated over time: Euclid established its foundational role in Elements [18], and it remains a key theoretical reference in modern geometric research [19]. In the framework of swarm intelligence optimization, algorithms update individual positions through correlations between population members. The perpendicular bisector, by partitioning the search space and defining distance relationships between individual positions and potential optimal solutions, provides geometric constraints and directional guidance for particle position updates, forming the algorithm’s theoretical basis. Built on this principle, the PBOA is designed to tackle increasingly complex optimization challenges.
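The equidistance property can be checked numerically. The following is a minimal two-dimensional sketch, not part of the PBOA itself: the function name `perpendicular_bisector_point` and the offset parameter `t` are illustrative assumptions introduced here.

```python
import math

def perpendicular_bisector_point(a, b, t):
    """Return a point on the perpendicular bisector of segment ab (2-D).

    t is a hypothetical signed offset along the bisector, measured
    from the midpoint of the segment.
    """
    mx, my = (a[0] + b[0]) / 2, (a[1] + b[1]) / 2   # midpoint of the segment
    dx, dy = b[0] - a[0], b[1] - a[1]               # direction of the segment
    length = math.hypot(dx, dy)
    if length == 0:
        raise ValueError("endpoints must be distinct")
    nx, ny = -dy / length, dx / length              # unit normal to the segment
    return (mx + t * nx, my + t * ny)

a, b = (0.0, 0.0), (4.0, 2.0)
for t in (-3.0, 0.0, 1.5):
    p = perpendicular_bisector_point(a, b, t)
    # Distances to both endpoints agree up to floating-point error.
    assert math.isclose(math.dist(p, a), math.dist(p, b))
```

Every value of `t` yields a point at equal distance from both endpoints, which is the geometric relationship the paragraph above describes.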

The main contributions of this paper are summarized as follows:

Propose a novel metaheuristic optimization algorithm—the Perpendicular Bisector Optimization Algorithm (PBOA), which fully leverages the properties of perpendicular bisectors in geometric principles, offering a new perspective for metaheuristic optimization.

Evaluate the optimization performance of the PBOA using 27 benchmark functions and validate the reliability of the results via detailed visual analytics.

Verify the application potential of the PBOA through 3 engineering design problems, demonstrating its effectiveness in practical engineering scenarios.

Apply the PBOA to the parameter optimization of the time-varying non-singular fast terminal sliding mode controller for H-type motion platforms, with the experimental results showing that it exhibits significant advantages in multi-parameter controller optimization.

The remainder of this paper is organized as follows. Section 2 reviews related work on metaheuristic algorithms. Section 3 introduces the proposed PBOA and its mathematical modeling. Section 4 describes the experimental setup. Section 5 presents a comprehensive simulation and performance analysis of the PBOA on 27 benchmark functions. Section 6 examines the PBOA’s effectiveness in 5 practical engineering design problems and in the optimization of the time-varying non-singular fast terminal sliding mode controller for H-type motion platforms. Section 7 summarizes the key findings and suggests future research directions.

2. Related Work

Optimization algorithms are pivotal in computational science, serving as efficient tools to solve complex problems across diverse fields. A large number of these algorithms take inspiration from natural and social phenomena, converting biological, physical, chemical, and behavioral principles into practical computational strategies [3,10,20]. Their diversity stems from a wide array of sources, such as animal behaviors, plant interactions, and physical processes [3]. This extensive foundation facilitates the development of algorithms that are not only effective but also robust, adapting well to complex high-dimensional optimization tasks. Each algorithm is designed to simulate specific natural mechanisms, providing unique strategies to find efficient solutions in multi-dimensional and dynamic optimization scenarios [10]. By modeling natural phenomena, these algorithms optimize functions through exploration, exploitation, and evolution, embodying the core mechanisms observed in natural systems [3]. Correspondingly, Table 1 [20] offers a detailed classification of state-of-the-art optimization algorithms, categorizing them by their primary inspiration and core methodologies.

Swarm intelligence algorithms optimize through the collective intelligence and decentralized decision-making of social animals. Consider Particle Swarm Optimization (PSO) [21], for instance: drawing on the foraging habits of bird flocks and fish schools, it maintains a population of particles (candidate solutions) that navigate the search space. These particles adjust their velocities and positions under the guidance of both their individual best and the swarm’s best solutions, with the swarm moving toward high-quality regions of the search space over iterations. In a similar manner, Ant Colony Optimization (ACO) [22] strengthens promising paths through pheromone deposition and evaporation, balancing the exploitation of known routes with the exploration of new options. The variety of swarming behaviors applicable to optimization tasks is further highlighted by other swarm-based methods like Artificial Bee Colony (ABC) [23], Ant Lion Optimizer (ALO) [24], and Jellyfish Search (JS) [25].
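The PSO update described above can be sketched as follows. This is a minimal illustration of the commonly used inertia-weight form, which is not necessarily the exact formulation of [21]; the function name `pso_step` and the default values of `w`, `c1`, and `c2` are assumptions chosen for illustration.

```python
import random

def pso_step(positions, velocities, pbest, gbest, w=0.7, c1=1.5, c2=1.5, rng=random):
    """Apply one velocity/position update to every particle, in place.

    positions, velocities, pbest: lists of per-particle coordinate lists.
    gbest: coordinate list of the best solution found by the swarm.
    w, c1, c2: inertia and acceleration coefficients (illustrative defaults).
    """
    for i, (x, v) in enumerate(zip(positions, velocities)):
        for d in range(len(x)):
            r1, r2 = rng.random(), rng.random()
            v[d] = (w * v[d]
                    + c1 * r1 * (pbest[i][d] - x[d])   # pull toward the particle's own best
                    + c2 * r2 * (gbest[d] - x[d]))     # pull toward the swarm's best
            x[d] += v[d]
    return positions, velocities
```

A particle that already sits on both its personal best and the swarm best, with zero velocity, does not move, which reflects how the swarm settles once it agrees on a region.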

Drawing on mammalian behavior, certain metaheuristic algorithms integrate foraging tactics, social hierarchies, or alertness mechanisms. For example, the Gray Wolf Optimizer (GWO) [16] imitates the hierarchical behavior (alpha, beta, delta, omega) of wolf packs; the Cheetah Optimizer (CO) [26] reproduces how cheetahs pursue prey rapidly and shift direction abruptly; the Meerkat Optimization Algorithm (MOA) [27] is based on meerkats’ coordinated vigilance; and the Harris Hawks Optimizer (HHO) [28] models hawks’ sudden assault strategies. By incorporating these natural behaviors, search diversity is boosted, and solutions are steered toward high-quality regions.

Another major class of optimization algorithms is inspired by physical and chemical phenomena. Take Simulated Annealing (SA) [29], rooted in metallurgical annealing, as an example: it accepts inferior solutions with a probability governed by a gradually declining temperature parameter, which helps the search escape local optima. In a similar way, the Gravitational Search Algorithm (GSA) [30] treats candidate solutions as masses under gravitational pull; fitter (heavier) solutions exert stronger attraction, steering the population toward optimal solutions. The Multi-Verse Optimizer (MVO) [31] simulates inflation rates across parallel universes, and the Black Hole (BH) Algorithm [32] depicts how solutions converge into a dominant gravitational well.
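The temperature-controlled acceptance used by SA can be sketched as follows. This is a minimal one-dimensional illustration using the standard Metropolis rule with geometric cooling; the function names, step size, initial temperature, and cooling factor are assumptions for illustration and are not taken from [29].

```python
import math
import random

def accept(delta, temperature, rng=random):
    """Metropolis rule for minimization, with delta = f(candidate) - f(current)."""
    if delta <= 0:
        return True                      # improvements are always accepted
    return rng.random() < math.exp(-delta / temperature)

def simulated_annealing(f, x0, step=0.5, t0=1.0, cooling=0.95, iters=500, seed=0):
    """Minimize a 1-D function f starting from x0 (illustrative settings)."""
    rng = random.Random(seed)
    x, fx = x0, f(x0)
    best, fbest = x, fx
    t = t0
    for _ in range(iters):
        cand = x + rng.uniform(-step, step)   # random neighbor of the current point
        fc = f(cand)
        if accept(fc - fx, t, rng):
            x, fx = cand, fc
            if fx < fbest:
                best, fbest = x, fx
        t *= cooling                          # geometric cooling schedule
    return best, fbest

best, fbest = simulated_annealing(lambda x: (x - 2.0) ** 2, x0=10.0)
```

At high temperature, worse candidates are accepted often; as the temperature decays, the rule approaches pure greedy descent.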

Within the chemical and biochemical domain, Atom Search Optimization (ASO) [33], Chemical Reaction Optimization (CRO) [34], and Nuclear Reaction Optimization (NRO) [35] make use of molecular interactions, reaction dynamics, and fission or fusion processes to navigate the search space toward configurations with more favorable energy levels.

Biological evolution serves as the source of operational mechanisms for evolutionary algorithms (EAs). Genetic Algorithms (GAs) [36] employ selection, crossover, and mutation operators to iteratively refine a solution population. GAs maintain diversity and reduce the risk of premature convergence by favoring fitter solutions and introducing controlled randomness. As generations progress, the population slowly approaches global or near-global optima. Differential Evolution (DE) [5] improves solutions by applying scaled differences between existing candidates, and Genetic Programming (GP) [37] extends these principles to the evolution of entire programs, thereby demonstrating the adaptability and versatility of evolution-based optimizers.
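The "scaled differences" used by DE can be sketched as follows. This is a minimal illustration of the widely used DE/rand/1/bin scheme, which is assumed here and may differ in detail from the variant in [5]; the function name `de_trial` and the default values of `F` and `CR` are illustrative.

```python
import random

def de_trial(population, i, F=0.5, CR=0.9, rng=random):
    """Build a DE/rand/1/bin trial vector for individual i.

    population: list of coordinate lists, with at least 4 individuals.
    F: scale factor for the difference vector; CR: crossover rate.
    """
    candidates = [k for k in range(len(population)) if k != i]
    r1, r2, r3 = rng.sample(candidates, 3)   # three distinct individuals, none equal to i
    target = population[i]
    dim = len(target)
    j_rand = rng.randrange(dim)              # guarantees at least one mutated component
    trial = []
    for j in range(dim):
        if rng.random() < CR or j == j_rand:
            # base vector plus a scaled difference of two other individuals
            trial.append(population[r1][j] + F * (population[r2][j] - population[r3][j]))
        else:
            trial.append(target[j])
    return trial
```

In a full optimizer, the trial vector would replace the target only if it achieves an equal or better objective value.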

Another category of optimization algorithms, mathematical and numerical methods, relies on pure mathematical principles instead of biological or social analogies. Take the Arithmetic Optimization Algorithm (AOA) [38] as an example: it applies the arithmetic operators (addition, subtraction, multiplication, and division) in a controlled way. Starting with extensive exploration, it gradually narrows its focus to promising regions, with an adjustable parameter regulating the intensity of the arithmetic operations over time. In a similar vein, Chaos Game Optimization (CGO) [39] and the Sine Cosine Algorithm (SCA) [40] use fractal geometry and trigonometric functions, respectively, to systematically balance exploration and exploitation within the search space.

Drawing inspiration from natural cycles and environmental dynamics, environmental and ecologically inspired approaches enhance the search process. The Snow Ablation Optimizer (SAO) [41] simulates how snow melts, while the Water Cycle Algorithm (WCA) [42] models the natural water cycle, which includes evaporation, precipitation, and flow. These algorithms achieve a dynamic balance between exploration and exploitation by replicating ecological cycles.

Optimization algorithms have also found inspiration in human social behavior. The Chef-Based Optimization Algorithm (CBOA) [43] imitates the decision-making strategies chefs use when creating and refining recipes; Cultural Algorithms (CA) [44] combine individual adaptation with a shared belief space to facilitate collective learning.

Within non-biological inspiration domains, optimizers inspired by games, such as the Dart Game Optimizer (DGO) [45], Puzzle Optimization Algorithm (POA) [46], and Squid Game Optimizer (SGO) [47], derive their strategic decision-making from entertainment and popular culture. Meanwhile, algorithms rooted in economics, including Supply-Demand Optimization (SDO) [48] and Search and Rescue Optimizer (SARO) [49], leverage market equilibrium dynamics and systematic rescue strategies to guide convergence toward optimal solutions.

Table 1 illustrates that optimization algorithms exhibit diversity across multiple domains, from swarm intelligence and mammalian hunting strategies to physical-chemical analogies. This diversity showcases the flexibility and adaptability of metaheuristic algorithms when addressing complex optimization challenges.

That said, existing metaheuristic and evolutionary algorithms come with notable limitations: premature convergence, slow adaptation to dynamic environments, and strict parameter tuning requirements [3,50]. For traditional evolutionary methods, computational costs rise exponentially as problem scale increases, making them inefficient in large-scale search spaces. Additionally, gradient-dependent algorithms face difficulties with highly nonlinear or discontinuous problems, which emphasizes the need for flexible gradient-free techniques [3].

The performance of metaheuristic algorithms in high-dimensional, complex optimization tasks is significantly restricted by these challenges. A core issue among them is premature convergence: in multimodal landscapes, algorithms find it hard to maintain solution diversity [51], which often results in convergence to local optima and limits their capacity to identify global solutions [52]. Slow adaptation to dynamic environments is another major challenge; many algorithms depend on fixed exploration-exploitation mechanisms [53], and such rigidity lowers their efficiency in responding to time-varying objectives and constraints, thus weakening their effectiveness in practical applications [54].

Table 1. Detailed Classification of Mainstream Optimization Algorithms.


| Category | Algorithm | Year | Authors | Brief Description | Ref. |
|---|---|---|---|---|---|
| Swarm intelligence | Particle swarm optimization (PSO) | 1995 | Kennedy, Eberhart | Models the swarming behavior of birds; particles adjust velocity and position based on the best solutions found; the global best solution is updated | [21] |
| Swarm intelligence | Ant colony optimization (ACO) | 2006 | Dorigo, Stützle | Simulates the foraging behavior of ants; uses pheromone trails to reinforce promising paths | [22] |
| Swarm intelligence | Artificial bee colony (ABC) | 2005 | Karaboga, Basturk | Mimics bee foraging patterns; employed, onlooker, and scout bees exploit nectar sources | [23] |
| Swarm intelligence | Whale optimization algorithm (WOA) | 2016 | Mirjalili, Lewis | Simulates the “bubble-net attack” hunting strategy of humpback whales; position updates combine encircling prey with a spiral updating mechanism | [55] |
| Swarm intelligence | Bacteria phototaxis optimizer (BPO) | 2023 | Pan, Teng, Li, Zhan | Inspired by bacterial phototaxis; uses phototaxis, aerotaxis, and chemotaxis mechanisms for exploration and exploitation | [56] |
| Mammalian behavior | Gray wolf optimizer (GWO) | 2014 | Mirjalili, et al. | Mimics gray wolves’ hunting strategy in nature (hierarchy: alpha, beta, delta, omega); three main steps: tracking, encircling, and attacking prey | [16] |
| Mammalian behavior | Harris hawks optimizer (HHO) | 2019 | Heidari, et al. | Utilizes the surprise attack strategies of Harris’ hawks to enhance exploration and exploitation | [28] |
| Mammalian behavior | Cheetah optimizer (CO) | 2022 | Akbar, Deb | Inspired by cheetahs’ chasing speed; features rapid global search ability and efficient exploitation near optimal solutions | [26] |
| Mammalian behavior | Meerkat optimization algorithm (MOA) | 2023 | Xian et al. | Mimics meerkats’ alertness; sentry meerkats for exploration; forager meerkats for solution search | [27] |
| Physical phenomena | Simulated annealing (SA) | 1983 | Kirkpatrick et al. | Based on the thermal annealing process in metallurgy; its cooling schedule enables escape from local optima to find better solutions | [29] |
| Physical phenomena | Gravitational search algorithm (GSA) | 2009 | Rashedi et al. | Considers candidate solutions as masses; gravitational force draws solutions toward fitter agents | [30] |
| Physical phenomena | Multi-verse optimizer (MVO) | 2016 | Mirjalili, et al. | Inspired by multi-verse theory; explores universes with varied inflation rates | [31] |
| Physical phenomena | Black hole algorithm (BHA) | 2013 | Hammoudeh, et al. | Simulates a black hole attracting stars; solutions converge by “falling” into the black hole | [32] |
| Physical phenomena | Elastic deformation optimization algorithm (EDOA) | 2022 | Pan, Tang, et al. | Based on Hooke’s law and Newton’s theory; uses elastic deformation for exploration and exploitation | [57] |
| Chemical/biochemical | Atom search optimization (ASO) | 2019 | Zhao et al. | Simulates interaction forces between atoms; solutions exchange information through these interactions | [33] |
| Chemical/biochemical | Chemical reaction optimization (CRO) | 2009 | Lam, Li | Mimics molecular reactions; uses collision, decomposition, synthesis, and interchange to seek stable, lower-energy solutions | [34] |
| Chemical/biochemical | Nuclear reaction optimization (NRO) | 2019 | Wei et al. | Based on nuclear fission/fusion; explores solution space via collision and entropy search | [35] |
| Evolutionary algorithms | Differential evolution (DE) | 1997 | Storn, Price | Creates new candidates by adding scaled differences to existing ones | [5] |
| Evolutionary algorithms | Genetic algorithm (GA) | 1975 | Holland | Employs survival-of-the-fittest principles, along with crossover and mutation operators, to evolve a population toward optimal solutions | [36] |
| Evolutionary algorithms | Genetic programming (GP) | 1998 | Banzhaf et al. | Evolves computer programs represented as trees; genetic operators evolve a population | [37] |
| Mathematical models | Arithmetic optimization algorithm (AOA) | 2021 | Abualigah et al. | Employs arithmetic operations; transitions from broad exploration to exploitation | [38] |
| Mathematical models | Chaos game optimization (CGO) | 2021 | Talatahari, et al. | Uses chaos fractals; random walks and greedy strategies support both exploration and exploitation | [39] |
| Mathematical models | Sine cosine algorithm (SCA) | 2016 | Mirjalili | Updates solutions via sine/cosine functions; balances global and local search | [40] |
| Mathematical models | Expectation-based weighted hypergraph in optimization (EBHO) | 2024 | Pan, Wang et al. | Incorporates the adaptive hypergraph model in information dissemination; balances global exploration and local exploitation | [58] |
| Environmental/ecological | Snow ablation optimization (SAO) | 2023 | Deng et al. | Simulates the snow melting process; iterative ablation reveals promising regions | [41] |
| Environmental/ecological | Water cycle algorithm (WCA) | 2012 | Eskandar et al. | Mimics the water cycle processes; streams merge into rivers and lakes at varying rates | [42] |
| Human behavior/Social dynamics | Chef-based optimization algorithm (CBOA) | 2022 | Trojovská et al. | Imitates the decision-making strategies chefs use when creating and refining recipes; balances exploration and exploitation | [43] |
| Human behavior/Social dynamics | Cultural algorithm (CA) | 1994 | Reynolds | Inspired by cultural evolution; integrates continuous adaptation with a shared belief space for collective learning | [44] |
| Game-based algorithms | Darts game optimizer (DGO) | 2020 | Dehghani et al. | Inspired by dart throwing; solutions adapt by aiming closer at target optima iteratively | [45] |
| Game-based algorithms | Puzzle optimization algorithm (POA) | 2022 | Ahmadi et al. | Analogous to solving a puzzle; piecewise adjustments gradually assemble coherent optimal structures | [46] |
| Economic theory | Supply-demand-based optimization (SDO) | 2019 | Zhao et al. | Mimics market equilibria; supply-demand interactions converge on best solutions over time | [48] |
| Economic theory | Search and rescue optimization (SAR) | 2020 | Shabani et al. | Models rescue missions; systematic hunting processes locate high-fitness solutions (survivors) | [49] |

Furthermore, the wide applicability of algorithms is hindered by stringent parameter tuning. Parameters like mutation rate, crossover probability, and weight scaling usually require manual tuning [54], a time-consuming process that impairs the algorithm’s generalizability and practical usability. Meanwhile, as the problem dimension grows, the “curse of dimensionality” causes computational costs to rise exponentially: traditional evolutionary methods demand more iterations and evaluations, which reduces their scalability for large-scale problems [51]. Metaheuristic algorithms fare poorly with highly nonlinear, discontinuous, or noisy objective functions, and gradient-based methods also struggle to produce reliable solutions in these scenarios. This further underscores the need for robust gradient-free techniques when dealing with complex real-world problems [53].

Moreover, these algorithms show weak robustness in multimodal optimization: when multiple local optima are present [53], most fail to explore and exploit multiple search regions simultaneously, resulting in suboptimal solutions when the fitness landscape has significant irregularities. Scalability remains a persistent issue: many algorithms are designed for small-scale problems, yet their performance drops sharply as problem complexity increases [52]. Finally, heavy reliance on specific problems limits the universality of metaheuristic algorithms [51]; most algorithms require extensive customization for different problem domains, which reduces their versatility in addressing a wide range of optimization challenges.

To tackle the challenges mentioned above, the Perpendicular Bisector Optimization Algorithm (PBOA) introduces a search space expansion mechanism, which effectively lowers the risk of premature convergence. By selecting 4 different particles and constructing line segments with the current particle to generate perpendicular bisectors, the algorithm simulates a geometry-driven search expansion process—this significantly enhances its exploration and exploitation capabilities. Core features, such as the equidistant property of perpendicular bisectors and differentiated convergence strategies (slow convergence in the exploration phase to expand the search range, and fast convergence in the exploitation phase for refined search), adapt to the search needs of complex solution spaces, boosting adaptability in diverse optimization scenarios. Integrating deterministic line segment construction and dynamic convergence control mechanisms, the PBOA extends the performance boundaries of mathematical heuristic algorithms. These mechanisms maintain solution diversity while ensuring convergence efficiency, making the PBOA particularly effective in multimodal and perturbed problem spaces.

A key strength of the PBOA is its deterministic theoretical foundation, a characteristic that equips the algorithm with robust exploitation capability and allows it to focus most computational resources on the search process. Thanks to this resource allocation mechanism, the PBOA exhibits superior performance and robustness in the majority of optimization problems.

By leveraging the geometric properties of perpendicular bisectors to their full extent, the PBOA successfully addresses the core limitations of existing metaheuristic methods, providing a reliable solution approach for complex optimization problems. Subsequent sections will elaborate on the PBOA’s mathematical model and its specific applications in engineering design problems.

5. Experimental Results and Analysis

5.1. Parameter Sensitivity Analysis

The core parameters examined in this paper are: the number of top-ranked (optimal) particles that may be selected as endpoints of line segments, the key factor λ for selecting particle movement modes, and the probability parameters P1, P2, P3, and P4 that control the probability of particles following different targets. To evaluate the impact of these parameters on the algorithm performance, a parameter sensitivity analysis is conducted.

The experiment adopts a unified setup: the population size N = 50, and the maximum number of iterations Max_iter = 1000. For each parameter combination, the PBOA runs independently 50 times on each test function. The results of the sensitivity analysis are shown in Figure 6, Figure 7, and Figure 8. Based on these results, this paper determines the setting of each parameter as follows:

5.1.1. Selection of Test Functions

To further evaluate the impact of parameters on the algorithm performance, this study selects 8 representative benchmark functions (F1, F3, F5, F9, F10, F12, F14, F18) for analysis. The specific parameters of each test function are detailed in Table 1. The selected functions cover different characteristics (such as unimodal/multimodal, high-dimensional/low-dimensional, etc.) and thus support a comprehensive evaluation of parameter impacts.

5.1.2. Sensitivity Analysis of the Number of Optimal Points

To quantify the impact of the number of optimal points among particle types selected as endpoints of line segments (hereinafter referred to as “endpoint candidate optimal points”) on the optimization performance of the PBOA, this experiment systematically adjusts this number from 1 to 10, resulting in 10 distinct parameter combinations. The remaining parameters of the PBOA are fixed as follows: each endpoint candidate optimal point has an equal probability of being selected; the control parameter λ is set to 0.4. For each combination of the number of endpoint candidate optimal points and test functions, 50 independent runs are conducted to ensure statistical robustness. The comprehensive ranking of algorithm performance is adopted as the core evaluation metric, where a smaller ranking indicates superior overall performance under that parameter configuration.
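The ranking metric can be illustrated with a short sketch. The exact ranking procedure is not specified in this section, so the following assumes, purely for illustration, that configurations are ranked per function by the mean final fitness over the independent runs (minimization), with tied configurations sharing the same rank; the function name `rank_configurations` is hypothetical.

```python
from statistics import mean

def rank_configurations(results):
    """Rank parameter configurations on one test function.

    results maps configuration -> list of final fitness values (one per run).
    Returns configuration -> rank, where rank 1 is the lowest mean fitness
    and tied configurations share the same rank (assumed convention).
    """
    means = {cfg: mean(runs) for cfg, runs in results.items()}
    ordered = sorted(set(means.values()))            # distinct mean values, best first
    return {cfg: ordered.index(m) + 1 for cfg, m in means.items()}

# Hypothetical fitness values for three configurations, two runs each.
example = {1: [3.0, 5.0], 2: [1.0, 1.0], 3: [4.0, 4.0]}
ranks = rank_configurations(example)
```

Under this convention several configurations can share the best rank on a function, which would be consistent with results in which multiple settings are all reported as optimal.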

Based on the optimization results of the PBOA on benchmark functions with different numbers of endpoint candidate optimal points, Figure 6 presents the final rankings of each function under the 10 quantity configurations. The key findings are summarized as follows:

Unimodal functions (F1, F3, F5): For F1, the ranking is optimal with 3 endpoint candidate optimal points, followed by 2 and 4, while the performance is the poorest with 10. This indicates that a moderate number of endpoint candidate optimal points (3–4) facilitates efficient local exploitation for this unimodal problem. In F3, the performance is optimal with 1 endpoint candidate optimal point, followed by 2 and 3, with the worst performance observed with 7, suggesting that fewer endpoint candidate optimal points can improve the convergence efficiency of this function. For F5, the ranking is optimal with 7 endpoint candidate optimal points, with 2 and 8 also performing well, and the lowest ranking with 10. Overall, these results demonstrate that for unimodal function optimization, the number of endpoint candidate optimal points in the range (3–7) yields superior comprehensive rankings, reflecting strong adaptability to local exploitation scenarios.

Multimodal functions (F9, F10, F12): In F9, the algorithm achieves optimal rankings when the number of endpoint candidate optimal points is 3, 4, 5, 6, 7, or 9, indicating high robustness to variations in this number for this multimodal function; however, performance is the poorest with 8. For F10, the best rankings are observed with 7 and 9 endpoint candidate optimal points, followed by 8, with the lowest ranking with 10, suggesting that a moderate-to-large number of endpoint candidate optimal points (7–9) effectively balances exploration across multiple peaks. In F12, the optimal ranking is attained with 4 endpoint candidate optimal points, followed by 7 and 3/6, with the worst performance with 10. Overall, for multimodal function optimization, the number of endpoint candidate optimal points in the range (3–7) results in balanced rankings across most functions, effectively synergizing global exploration and local exploitation.

Fixed-dimensional multimodal functions (F14, F18): For F14, optimal rankings are achieved with 4, 6, or 7 endpoint candidate optimal points, followed by 3/9, with the poorest performance with 10. In F18, the algorithm exhibits exceptional stability: the number of endpoint candidate optimal points ranging from 3 to 9 all achieve optimal rankings, while 1 and 2 perform significantly worse. These results indicate that fixed-dimensional multimodal functions benefit from a moderate-to-large number of endpoint candidate optimal points (3–9), with low sensitivity to specific values within this range.

In summary, integrating the ranking results across unimodal, multimodal, and fixed-dimensional multimodal functions, when the number of endpoint candidate optimal points is in the range (3–7), the PBOA achieves superior comprehensive rankings on most test functions with strong performance stability. This range demonstrates robust performance across diverse optimization scenarios, thus recommending (3–7) as the optimal range for the number of endpoint candidate optimal points in the PBOA. Considering the algorithm’s outstanding performance with 3 optimal points in unimodal functions and its good adaptability in multimodal and fixed-dimensional multimodal functions, this paper ultimately selects 3 as the number of endpoint candidate optimal points.

5.1.3. Sensitivity Analysis of Parameter λ

To quantify the impact of parameter λ on the search ability of the PBOA, the experiment increases λ with a fixed step size of 0.02 over the interval [0, 1], forming 51 parameter settings. Other parameters of the PBOA are set as follows: P1, P2, P3 are all 0.1, and P4 is 0.7. For each combination of parameter setting and test function, 50 independent optimization runs are conducted, as in the unified setup, and the comprehensive ranking of algorithm performance is recorded as the core basis for parameter evaluation.

Based on the performance of the PBOA on benchmark functions under different λ values, Figure 7 illustrates the final rankings of the PBOA on each function for the 51 λ values (a smaller ranking indicates better comprehensive performance of the algorithm under that parameter setting). The experimental results show that:

Unimodal functions (F1, F3, F5): In F1, the algorithm ranks well overall when λ ∈ [0.02, 1]; in F3, the ranking is stable and favorable when λ ∈ [0.02, 1], with the best ranking when λ ∈ [0.10, 1]. This indicates that the PBOA maintains good performance over a wide range of λ in unimodal function optimization, reflecting strong adaptability to λ in local exploitation scenarios. In F5, the final ranking of the algorithm is the smallest (optimal) when λ ∈ [0.02, 0.2], indicating that a low λ value is more conducive to improving convergence accuracy on this function. Based on the performance on unimodal functions, it is recommended that λ be preferentially selected from [0.02, 0.5].

Multimodal functions (F9, F10, F12): In F9, the algorithm rankings corresponding to 51 λ values show disordered fluctuations, indicating that this function has low sensitivity to λ; in F10, the ranking is significantly better and more stable when λ ∈ [0.18, 1]; in F12, lower λ values in [0.02, 0.3] correspond to better rankings. Overall, in multimodal function optimization, when λ ∈ [0.18, 0.5], the algorithm’s ranking performance is more balanced across most functions, which can well balance the synergy between global exploration and local exploitation.

Fixed-dimensional multimodal functions (F14, F18): Under all λ values, the final rankings of the algorithm show random distribution characteristics, and the overall ranking is low. This indicates that when the PBOA handles fixed-dimensional multimodal functions, its performance has low dependence on λ and has certain limitations.

In summary, combining the ranking results of unimodal and multimodal functions, when λ ∈ [0.18, 0.5], the PBOA achieves better comprehensive rankings on most test functions with strong performance stability. Therefore, this paper recommends this interval as the optimal value range for parameter λ.

5.1.4. Sensitivity Analysis of Parameter P1, P2, P3, and P4

In the PBOA, parameters P1, P2, P3, and P4 are key factors affecting the construction of perpendicular bisectors between particles and other objects. Among them, P1, P2, and P3 represent the probabilities of particles associating with the globally optimal (rank 1), second optimal (rank 2), and third optimal (rank 3) particles, respectively, while P4 is the probability of particles associating with randomly selected remaining particles. This section explores the impact of these parameters on PBOA performance through experiments, with the specific design as follows: P1 = P2 = P3 is set to increase from 0 to 0.33 with a step size of 0.01 (34 parameter combinations in total), and P4 is calculated as P4 = 1 − P1 − P2 − P3; to ensure experimental validity, other parameters are fixed as λ = 0.4; for each combination of parameters and test functions, 50 independent optimization experiments are conducted, with the comprehensive ranking as the core performance evaluation index (a smaller ranking indicates better comprehensive performance).
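The parameter grids of Sections 5.1.3 and 5.1.4 and the role of P1 to P4 can be sketched as follows. The function names are hypothetical, and the way `choose_target` maps the probabilities onto ranked particles is an assumption based only on the description above, not the PBOA implementation itself.

```python
import random

def lambda_grid(step=0.02):
    """Values of lambda from 0 to 1 with a fixed step (51 values for 0.02)."""
    n = round(1 / step)
    return [round(k * step, 2) for k in range(n + 1)]

def probability_grid(step=0.01, upper=0.33):
    """Tuples (P1, P2, P3, P4) with P1 = P2 = P3 and P4 = 1 - P1 - P2 - P3."""
    n = round(upper / step)
    grid = []
    for k in range(n + 1):
        p = round(k * step, 2)
        grid.append((p, p, p, round(1 - 3 * p, 2)))
    return grid

def choose_target(sorted_indices, p1, p2, p3, rng=random):
    """Pick the particle to associate with (assumed selection scheme).

    sorted_indices: particle indices ordered from best to worst fitness.
    With probability p1, p2, p3 the rank-1, rank-2, rank-3 particle is
    chosen; otherwise (probability P4) a random remaining particle.
    """
    u = rng.random()
    if u < p1:
        return sorted_indices[0]
    if u < p1 + p2:
        return sorted_indices[1]
    if u < p1 + p2 + p3:
        return sorted_indices[2]
    return rng.choice(sorted_indices[3:])

assert len(lambda_grid()) == 51
assert len(probability_grid()) == 34
```

The grid sizes match the 51 values of λ and the 34 combinations of P1 to P4 stated in the text, and the entry with P1 = P2 = P3 = 0.28 has P4 = 0.16.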

Figure 8 shows the ranking trends of the 34 parameter combinations. Based on the above results, the analysis is as follows:

Unimodal functions (F1, F3, F5): In F1 and F3, when the values of P1, P2, and P3 are greater than 0.08, the algorithm rankings are consistently good and stable, indicating that the algorithm performs excellently in unimodal function optimization; in F5, higher values of P1, P2, and P3 correspond to better rankings, suggesting that increasing these parameters helps improve the search accuracy of the algorithm on this function. Overall, larger values of P1, P2, and P3 are more conducive to the algorithm’s performance in unimodal function optimization.

Multimodal functions (F9, F10, F12): F9 achieves better rankings when P1, P2, P3 ∈ [0.06, 0.33]; in F10, rankings improve significantly when P1, P2, and P3 are greater than 0.07; in F12, rankings show an optimizing trend as parameter values increase. Overall, in multimodal function optimization, larger values of P1, P2, and P3 lead to better rankings, with the combination P1 = P2 = P3 = 0.28 achieving the optimal ranking and the strongest performance stability.

Fixed-dimensional multimodal functions (F14, F18): Rankings of all parameter combinations show disordered distribution, with overall low rankings, indicating that adjusting P1, P2, P3, and P4 cannot effectively improve the algorithm’s performance on this type of function.

In summary, considering the ranking performance across all function types, when P1 = P2 = P3 = 0.28 and P4 = 0.16, the PBOA achieves the optimal comprehensive ranking on most test functions with strong performance stability, which is beneficial for enhancing the overall robustness of the algorithm. Therefore, this parameter combination is recommended as the optimal setting for P1, P2, P3, and P4.
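To make the role of the four probabilities concrete, the sketch below draws the reference particle for one update using the recommended setting. It is an illustration under assumptions: the function name `pick_reference`, the exclusion of the particle itself from the random pool, and the requirement of more than four particles are not stated in the text.

```python
import random


def pick_reference(ranked, self_idx, probs=(0.28, 0.28, 0.28, 0.16), rng=random):
    """Pick the particle used as the reference for one update.

    ranked   : particle indices sorted from best to worst fitness
    self_idx : index of the particle being updated (assumed excluded
               from the random pool; this is an assumption)
    probs    : (P1, P2, P3, P4); P4 is implied by the other three
    """
    p1, p2, p3, _ = probs
    u = rng.random()
    if u < p1:
        return ranked[0]            # rank-1 (global best) particle
    if u < p1 + p2:
        return ranked[1]            # rank-2 particle
    if u < p1 + p2 + p3:
        return ranked[2]            # rank-3 particle
    rest = [i for i in ranked[3:] if i != self_idx]
    if not rest:
        raise ValueError("population too small for a random reference")
    return rng.choice(rest)         # random particle from the remainder
```

With P1 = P2 = P3 = 0.28, roughly 84% of updates are guided by one of the three best particles and the remaining 16% by a random one.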

5.2. Qualitative Analysis

This section systematically presents the execution process of the PBOA on selected test functions.

Figure 9 shows the qualitative analysis results of the PBOA on 8 test functions. The analysis uses four recognized indicators to intuitively evaluate the algorithm performance: search history, average fitness of the population, first-dimensional trajectory, and convergence curve. The specific meanings of each indicator are as follows:

Search history: Used to display the spatial distribution of the population during the search process;

First-dimensional trajectory: Reflects the position change law of solutions along the first dimension during algorithm iteration;

Average fitness: Characterizes the evolution trend of the overall fitness of the population as iterations progress;

Convergence curve: Describes the dynamic process of the algorithm approaching the optimal solution.
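The four indicators above can be derived from a recorded run. The following is a minimal sketch assuming that positions and fitness values are logged at every iteration; the function name, the data layout, and the choice of the first particle for the first-dimensional trajectory are assumptions made for illustration.

```python
def qualitative_indicators(history, fitness):
    """Compute the four indicators from a logged run (minimization).

    history[t][i] : position (list of floats) of particle i at iteration t
    fitness[t][i] : objective value of particle i at iteration t
    """
    # Average fitness of the population at each iteration.
    avg_fitness = [sum(f) / len(f) for f in fitness]
    # First-dimensional trajectory of the first particle (assumed choice).
    first_dim = [pop[0][0] for pop in history]
    # Convergence curve: best objective value found so far.
    convergence, best = [], float("inf")
    for f in fitness:
        best = min(best, min(f))
        convergence.append(best)
    # Search history: all visited points, projected on the first two dimensions.
    search_history = [tuple(p[:2]) for pop in history for p in pop]
    return avg_fitness, first_dim, convergence, search_history
```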

For detailed observation, the number of iterations of the PBOA is set to 1000, and the population size is set to 50.

From the search history, it can be seen that the PBOA exhibits strong search ability in both global and local search spaces and can quickly converge to the optimal solution region. The first-dimensional trajectory clearly shows that in the initial iteration stage, the position change range of the algorithm is wide to quickly locate potential optimal regions; in subsequent iterations, the frequency and amplitude of position changes gradually decrease, reflecting the strategic transition from global exploration to local exploitation. The average fitness curve shows a continuous downward trend, indicating that the population gradually converges to the optimal solution with iterations. These characteristics collectively indicate that the proposed PBOA has high efficiency in solution space search and can effectively balance the synergy between exploitation and exploration.

Figure 10 shows the iterative variation law of PBOA population diversity on 8 test functions. The results indicate that the population does not exhibit homogenization during iteration; especially on F14 and F18, the population diversity shows an upward trend with the increase in the number of iterations, demonstrating that the PBOA has strong global search capability and can effectively escape local optimal traps.
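One common way to quantify population diversity is the mean Euclidean distance of the particles from the population centroid. The sketch below uses that definition as an assumption; the exact metric behind Figure 10 is not specified in this section.

```python
import math


def population_diversity(population):
    """Mean Euclidean distance of the particles from their centroid."""
    n, dim = len(population), len(population[0])
    centroid = [sum(p[d] for p in population) / n for d in range(dim)]
    return sum(math.dist(p, centroid) for p in population) / n
```

A value that stays well above zero over the iterations indicates that the particles have not collapsed onto a single point.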

In the PBOA, particles are reset to random positions within the search space bounded by the lower bound (LB) and upper bound (UB) when either or . This “escape mechanism” serves as a core strategy to prevent the algorithm from stagnating in local optima. Experimental results indicate that particle resets triggered by or account for an average of 12–18% of the total iterations across all test scenarios. For multimodal functions such as F16 and F18, this proportion increases significantly to 25–30%. From a performance perspective, variations in reset frequency exhibit dual effects: excessive resets prolong convergence time, whereas a moderate increase in reset frequency enhances the algorithm’s ability to escape local optima, thereby improving global optimization efficiency. Overall, this mechanism effectively strengthens the performance of the PBOA in solving multi-extremal problems.
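The reset step itself can be sketched as a uniform re-draw inside [LB, UB]. In the sketch below the trigger is passed in as a caller-supplied predicate, `should_reset`, which is a hypothetical placeholder for the two conditions; the function names are likewise illustrative.

```python
import random


def reset_particle(lb, ub, rng=random):
    """Draw a new position uniformly inside the box [lb, ub]."""
    return [rng.uniform(lo, hi) for lo, hi in zip(lb, ub)]


def apply_resets(population, lb, ub, should_reset, rng=random):
    """Reset every particle for which should_reset(position) is true.

    Returns the number of resets, so that the reset frequency can be
    tracked over a run.
    """
    n_reset = 0
    for i, x in enumerate(population):
        if should_reset(x):
            population[i] = reset_particle(lb, ub, rng)
            n_reset += 1
    return n_reset
```

Accumulating the returned counts over a run gives the kind of reset frequency discussed above.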

5.3. Comparative Experiments and Analysis

This section systematically evaluates the performance advantages and limitations of the PBOA through a comprehensive comparison with current mainstream optimization algorithms in terms of solution accuracy, convergence speed, and stability. All algorithms are run with 500 iterations and a population size of 50. The test results are shown in Table 5, Table 6, Table 7 and Table 8, with detailed analysis as follows:

Unimodal functions (F1~F7): The PBOA ranks first in F1~F4 and F7; it performs slightly worse than the WOA in F5 and slightly worse than GTO and DE in F6. Overall, the PBOA shows strong performance in the unimodal function test suite: its comprehensive ranking value is about 1/2 that of the WOA, which ranks second, and 1/10.63 that of MFO, which ranks last. Among algorithms based on mathematical geometry principles, the PBOA is particularly competitive.

Multimodal functions (F8~F13): The PBOA ranks first in all test functions except F12, where it ranks second (slightly behind DRA). Overall, the PBOA performs outstandingly in multimodal function tests, maintaining significant competitiveness among all comparative algorithms including VOR and NEL.

Fixed-dimensional multimodal functions (F14~F23): The PBOA ranks first in all test functions, demonstrating significant superiority in optimizing this type of function. Its performance outperforms other geometry-based algorithms such as VOR and NEL, as well as mainstream optimization algorithms.

Perturbed unimodal functions (F24~F27): The PBOA ranks first in F25; it is outperformed by GTO and DE in F24, by the DRA and WOA in F26, and by the DRA in F27. Overall, the PBOA ranks second in this type of function test (only behind DRA), still maintaining certain performance advantages in competition with comparative algorithms including VOR and NEL.
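A comprehensive ranking of the kind used in the comparisons above is typically obtained by ranking the algorithms on each function and averaging the ranks. The sketch below illustrates that procedure with tied results sharing the average rank; the function name and the data layout are assumptions, and the exact aggregation used for the tables may differ.

```python
def mean_ranks(results):
    """Average rank of each algorithm over all functions (1 = best).

    results[name] is a list with one score per test function,
    where a lower score is better. Ties share the average rank.
    """
    names = list(results)
    n_funcs = len(results[names[0]])
    totals = {name: 0.0 for name in names}
    for j in range(n_funcs):
        ordered = sorted(names, key=lambda a: results[a][j])
        i = 0
        while i < len(ordered):
            k = i
            # Extend k over the block of algorithms tied with ordered[i].
            while (k + 1 < len(ordered)
                   and results[ordered[k + 1]][j] == results[ordered[i]][j]):
                k += 1
            avg_rank = (i + k) / 2 + 1
            for a in ordered[i:k + 1]:
                totals[a] += avg_rank
            i = k + 1
    return {name: totals[name] / n_funcs for name in names}
```

Ratios such as "about 1/2 that of the second-ranked algorithm" can then be read directly from the returned mean ranks.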

Table 9 presents the results of the non-parametric Wilcoxon rank-sum test at a significance level of 0.05, showing that the PBOA has significant differences from most comparative algorithms, with only small differences from DE and MFO in specific scenarios. This result further confirms the robustness and uniqueness of the PBOA.
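For readers who wish to reproduce this kind of test, the two-sided Wilcoxon rank-sum test can be sketched with the normal approximation as follows. This is a generic textbook version without tie correction, not the authors' code; in practice a library routine such as SciPy's `scipy.stats.ranksums` would normally be used.

```python
import math


def rank_sum_test(x, y):
    """Two-sided Wilcoxon rank-sum test (normal approximation).

    Returns (z, p). Tied values receive the average of their ranks;
    no tie correction is applied to the variance.
    """
    pooled = sorted([(v, 0) for v in x] + [(v, 1) for v in y])
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        k = i
        while k + 1 < len(pooled) and pooled[k + 1][0] == pooled[i][0]:
            k += 1
        for m in range(i, k + 1):
            ranks[m] = (i + k) / 2 + 1   # average 1-based rank of the tie block
        i = k + 1
    n1, n2 = len(x), len(y)
    w = sum(r for r, (_, group) in zip(ranks, pooled) if group == 0)
    mean = n1 * (n1 + n2 + 1) / 2
    sd = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - mean) / sd
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p
```

Here `x` and `y` would hold the final objective values of two algorithms over their independent runs, and a difference is reported as significant when p < 0.05. Note that the test indicates whether two result distributions differ, not by itself which algorithm is better.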

Figure 11 presents the convergence curves of the top 7 algorithms (PBOA, DRA, WOA, DE, GTO, GWO, WAO) among the 16 comparative algorithms. The results show that the PBOA maintains a stable convergence trend across all test functions. The PBOA expands the exploration range by introducing a strategy of constructing perpendicular bisectors with four types of reference points and controlling the update distance, which is reflected in Figure 10 as a consistently steady downward trend in its convergence curve. Although the PBOA has a relatively slower convergence speed compared to other comparative algorithms, it still maintains a significant downward trend even when the number of evaluations is close to the optimal value. This characteristic implies that the PBOA may further find better solutions with additional computational resources, demonstrating its ability to dynamically balance exploration and exploitation, thereby enhancing the potential to avoid local optima.

Further analysis of the boxplots in Figure 12 reveals that the result distribution of the PBOA is more concentrated with fewer outliers, which confirms its strong robustness and adaptability.

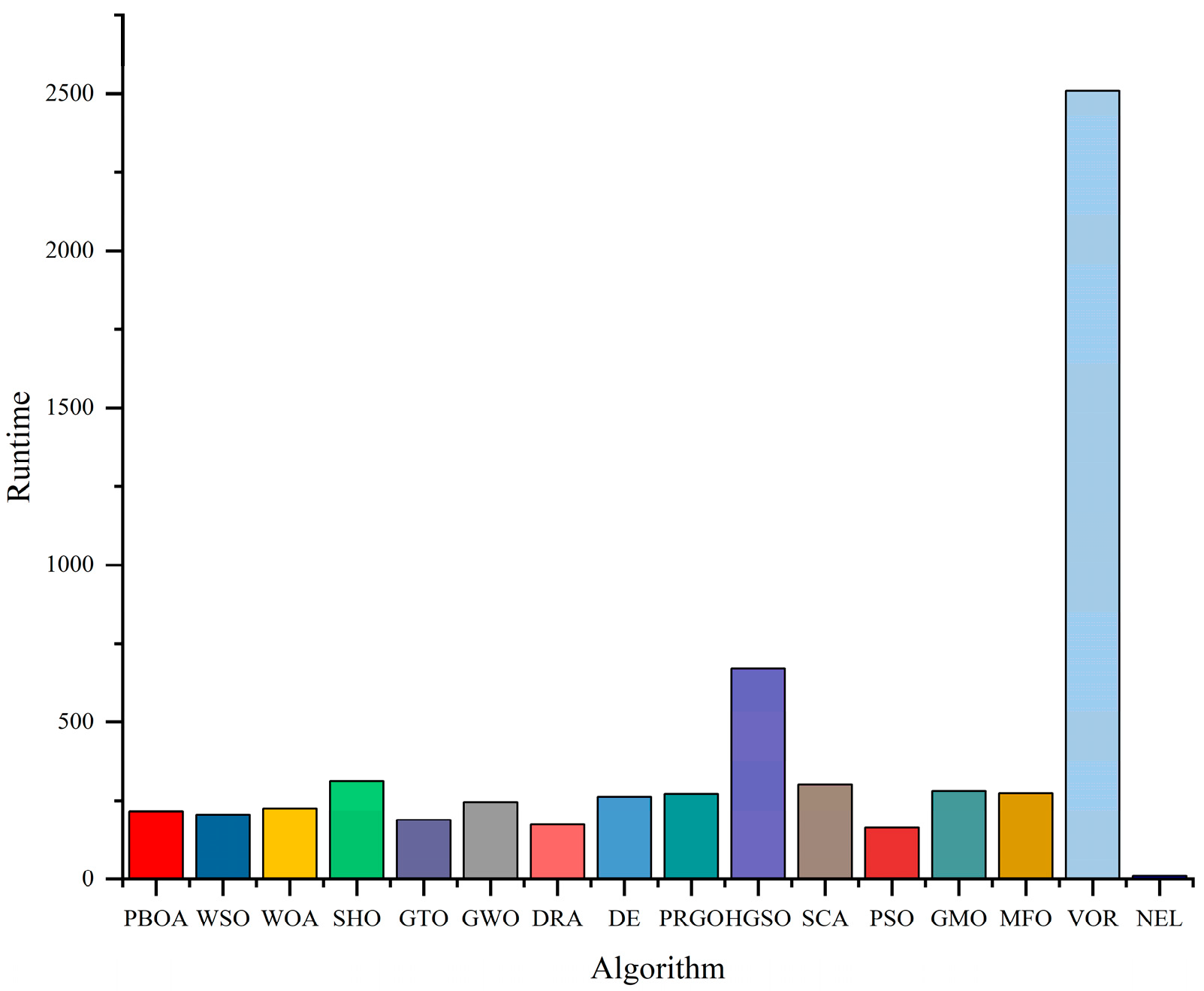

To compare operational efficiency, Figure 13 records the time consumption of 14 algorithms to complete the experiment. The results show that PSO performs the best in operational efficiency, while the PBOA ranks fifth with relatively longer running time, which constitutes its potential performance bottleneck. Generally, newer algorithms tend to have higher computational loads due to more sophisticated exploration and exploitation mechanisms. Compared with the DRA and PRGO proposed in 2025, the PBOA has a shorter running time than PRGO and slightly longer than the DRA; the three algorithms have similar time consumption, all within 1.4 times that of PSO.

5.4. Comparison and Analysis with the Original Literature Data of Some Algorithms

To more objectively evaluate the performance of the PBOA, this study extracted experimental data from the original studies of the DRA, WOA, GTO, GWO, SHO, and GMO algorithms for comparative analysis; other algorithms were not included in the comparison as the original studies did not conduct relevant experiments.

Table 10 presents the p-values of the Wilcoxon rank-sum test results between the PBOA and the aforementioned 6 algorithms on 23 benchmark functions (F1–F23) at a significance level of 0.05. Statistical analysis shows that the PBOA exhibits significant statistical differences from most comparative algorithms in the majority of test functions, as detailed below:

Compared with the DRA, 19 out of 23 functions show significant differences (p < 0.05), among which the differences in F3 (p = 6.94 × 10⁻⁵) and F1 (p = 1.09 × 10⁻³) are particularly significant, indicating that there are essential differences in their performance patterns when handling high-dimensional problems and extreme value problems.

Compared with the WOA, 17 functions present significant differences, typically in F3 (p = 2.08 × 10⁻³) and F1 (p = 3.89 × 10⁻²), suggesting that the PBOA has better stability in unimodal optimization scenarios.

Compared with GTO, 16 functions demonstrate significant differences, with prominent differences in F6 (p = 1.79 × 10⁻²) and F4 (p = 4.55 × 10⁻³), reflecting that the PBOA has stronger adaptability in multimodal problems.

Compared with GWO, 15 functions have significant differences, including F3 (p = 3.47 × 10⁻⁴) and F2 (p = 6.67 × 10⁻³), which embodies the advantage of the PBOA in balancing exploration and exploitation capabilities.

Compared with SHO, 14 functions show significant differences, with obvious differences in F6 (p = 7.14 × 10⁻³) and F3 (p = 8.33 × 10⁻⁴), highlighting the efficiency advantage of the PBOA in the fine search process.

Compared with GMO, 15 functions present significant differences, such as F3 (p = 1.23 × 10⁻⁴) and F2 (p = 3.51 × 10⁻³), verifying that the PBOA has a unique optimization trajectory.

It is worth noting that non-significant differences (p ≥ 0.05) only exist in a few functions (e.g., F16, F18), where all algorithms converge to similar solutions, which may be related to the simplicity or specific attributes of the functions themselves. In summary, the statistical test results confirm that the performance of the PBOA has significant statistical differences from most comparative algorithms, confirming its robustness and uniqueness in handling diverse optimization problems.

6. Engineering Design Problems

In this section, the performance of the PBOA in practical optimization scenarios is evaluated through six engineering design problems to verify its optimization potential in real-world engineering environments. All experimental results are detailed in tabular form, with values recorded after rounding; algorithm rankings are strictly determined based on unrounded raw results to ensure the accuracy of the sorting. For practical problems 1 to 5 covered in this section, all algorithms are set with a maximum number of iterations of 10,000 and a population size of 50, while the maximum number of iterations for practical problem 6 is 200. The presented results are the optimal outcomes obtained from 50 independent runs of each algorithm on each problem.

6.1. Tension/Compression Spring Design

As shown in Figure 13, the core objective of the tension/compression spring design problem is to minimize the spring weight, with three design parameters as optimization variables: wire diameter (d), mean coil diameter (D), and number of active coils (N). The mathematical model of this problem can be expressed as:

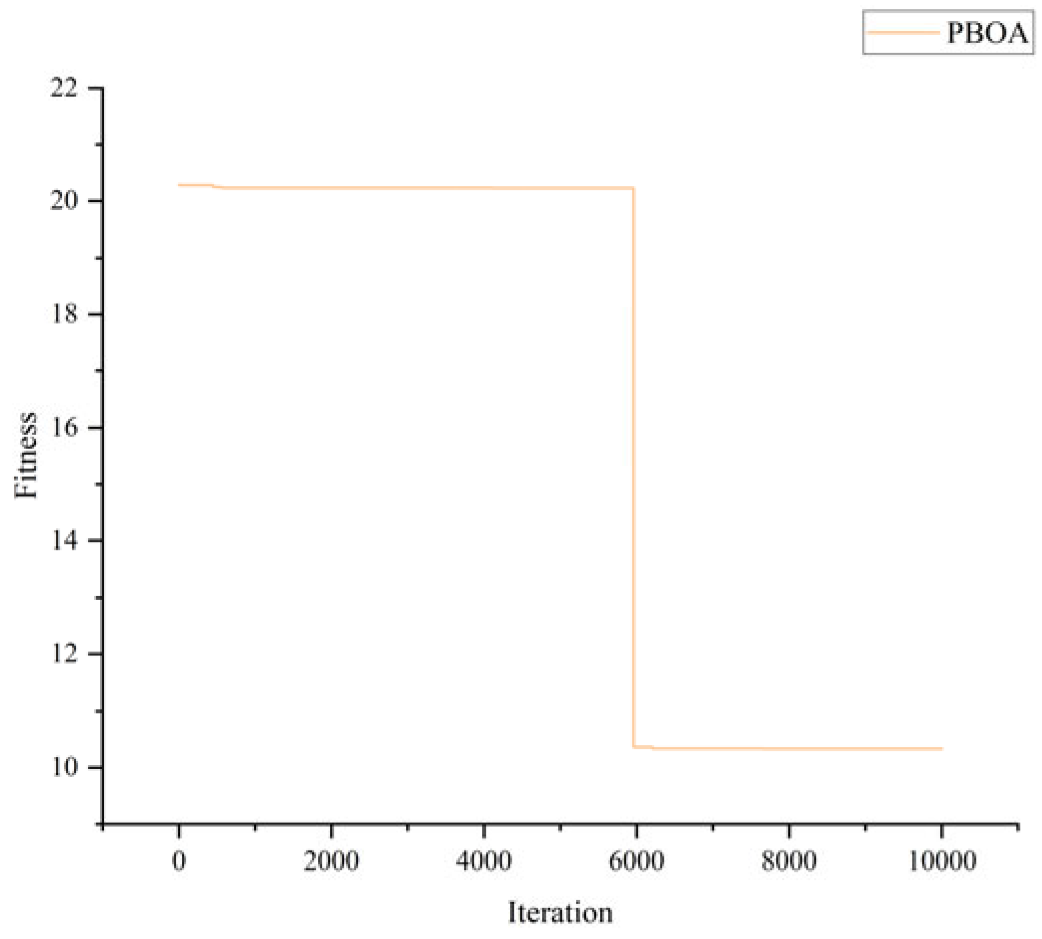

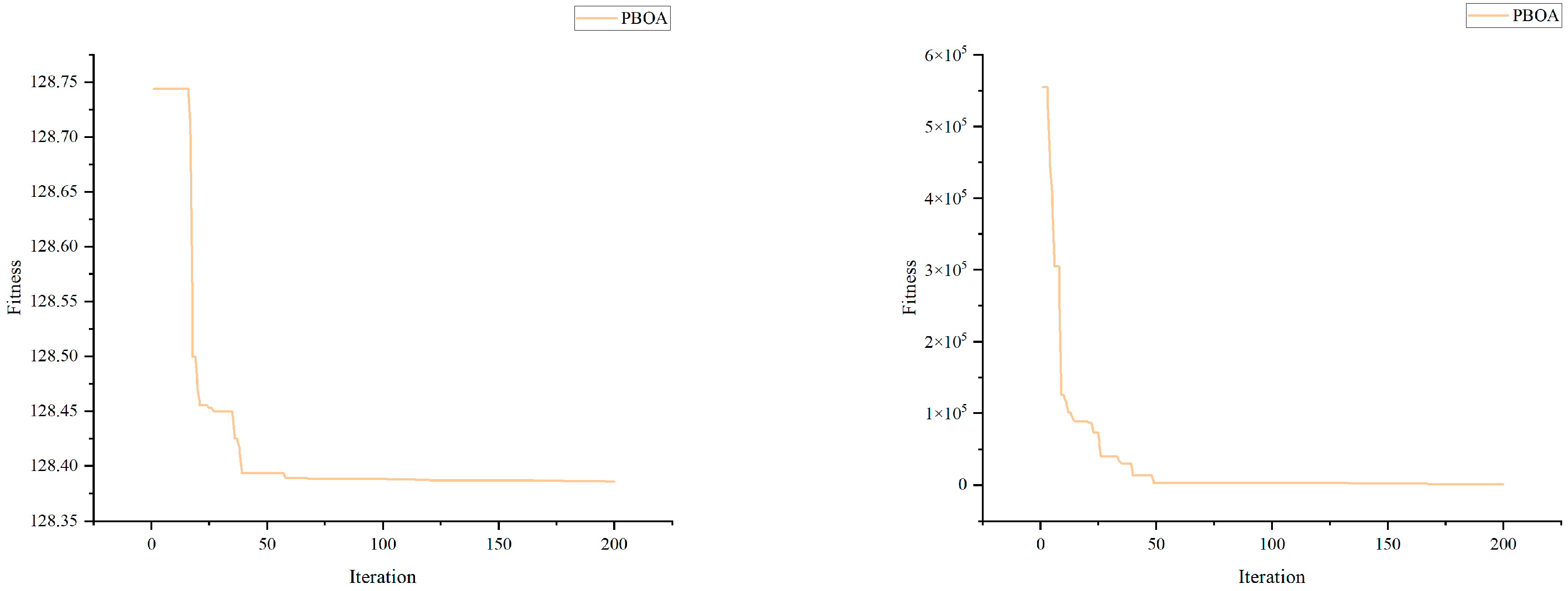

Table 11 lists the optimal solutions and corresponding fitness values of the PBOA and other comparative algorithms for this problem. The results show that the fitness value of the solution obtained by the PBOA is significantly better than that of other algorithms, demonstrating superior optimization performance. The convergence process of the PBOA for this problem is shown in Figure 14.
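As an illustration of how a candidate spring design is evaluated, the sketch below implements the formulation of this benchmark that is most widely used in the literature. The coefficients are those of that standard formulation and are assumed, not confirmed, to match the model used in this study; the function names are hypothetical.

```python
def spring_weight(d, D, N):
    """Spring weight to be minimized: (N + 2) * D * d^2."""
    return (N + 2.0) * D * d ** 2


def spring_constraints(d, D, N):
    """Constraint values g_j; a design is feasible when every g_j <= 0."""
    g1 = 1.0 - (D ** 3 * N) / (71785.0 * d ** 4)             # minimum deflection
    g2 = ((4.0 * D ** 2 - d * D) / (12566.0 * (D * d ** 3 - d ** 4))
          + 1.0 / (5108.0 * d ** 2) - 1.0)                   # shear stress
    g3 = 1.0 - 140.45 * d / (D ** 2 * N)                     # surge frequency
    g4 = (d + D) / 1.5 - 1.0                                 # outer diameter
    return [g1, g2, g3, g4]


def is_feasible(constraints):
    return all(g <= 0.0 for g in constraints)
```

A metaheuristic would typically add a penalty to `spring_weight` whenever `is_feasible` returns false; the penalty scheme is a design choice and is not specified here.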

6.2. Pressure Vessel Design

As shown in Figure 15, the core objective of the pressure vessel design problem is to minimize the manufacturing cost while ensuring that the vessel performance meets the standards. This problem involves four optimization variables: shell thickness Ts, head thickness Th, inner radius R, and length of the cylindrical part without heads L. Its mathematical expression is as follows:

Table 12 presents the optimal solutions and corresponding fitness values of the PBOA and other comparative algorithms for the pressure vessel design problem. The results show that the fitness value of the solution obtained by the PBOA is the best among all comparative algorithms. The convergence process of the PBOA for this problem is shown in Figure 15.
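The cost model most commonly used for this benchmark in the literature can be sketched as follows. The coefficients and constraint constants are those of that standard formulation and are assumed, not confirmed, to match the model used in this study; the function names are hypothetical.

```python
import math


def vessel_cost(ts, th, r, l):
    """Total cost of material, forming, and welding (standard formulation)."""
    return (0.6224 * ts * r * l
            + 1.7781 * th * r ** 2
            + 3.1661 * ts ** 2 * l
            + 19.84 * ts ** 2 * r)


def vessel_constraints(ts, th, r, l):
    """Constraint values g_j; a design is feasible when every g_j <= 0."""
    g1 = -ts + 0.0193 * r                       # minimum shell thickness
    g2 = -th + 0.00954 * r                      # minimum head thickness
    g3 = (-math.pi * r ** 2 * l
          - (4.0 / 3.0) * math.pi * r ** 3
          + 1296000.0)                          # minimum enclosed volume
    g4 = l - 240.0                              # maximum length
    return [g1, g2, g3, g4]
```

For example, `vessel_cost(0.8125, 0.4375, 42.0984456, 176.6365958)` evaluates a design that is frequently reported in the literature for this formulation.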

6.3. Welded Beam Design

The schematic diagram of the welded beam design problem is shown in Figure 16. The core objective of this problem is to minimize the economic cost, which is achieved by adjusting 4 key parameters: beam thickness (h), length (l), height (t), and width (b).

Table 13 presents the optimization schemes and corresponding fitness values of various algorithms for the welded beam design problem. The results show that the fitness value of the solution obtained by the PBOA is the best among all comparative algorithms. The convergence process of the PBOA for this problem is shown in Figure 16.
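For reference, the welded beam benchmark is usually stated with a fabrication cost objective and seven constraints on shear stress, bending stress, geometry, deflection, and buckling load. The sketch below follows that widely used formulation; the constants (load 6000 lb, beam length 14 in, and the stress and deflection limits) are assumptions taken from the standard benchmark, not values confirmed by this section.

```python
import math

# Constants of the standard benchmark (assumed here).
P, L, E, G = 6000.0, 14.0, 30e6, 12e6
TAU_MAX, SIGMA_MAX, DELTA_MAX = 13600.0, 30000.0, 0.25


def welded_beam_cost(h, l, t, b):
    """Fabrication cost: weld material plus bar material."""
    return 1.10471 * h ** 2 * l + 0.04811 * t * b * (14.0 + l)


def welded_beam_constraints(h, l, t, b):
    """Constraint values g_j; a design is feasible when every g_j <= 0."""
    tau_p = P / (math.sqrt(2.0) * h * l)                  # primary shear stress
    m = P * (L + l / 2.0)                                 # bending moment at the weld
    r = math.sqrt(l ** 2 / 4.0 + ((h + t) / 2.0) ** 2)
    j = 2.0 * (math.sqrt(2.0) * h * l
               * (l ** 2 / 12.0 + ((h + t) / 2.0) ** 2))  # polar moment of inertia
    tau_pp = m * r / j                                    # secondary (torsional) stress
    tau = math.sqrt(tau_p ** 2 + tau_p * tau_pp * l / r + tau_pp ** 2)
    sigma = 6.0 * P * L / (b * t ** 2)                    # bending stress
    delta = 4.0 * P * L ** 3 / (E * t ** 3 * b)           # end deflection
    p_c = (4.013 * E * math.sqrt(t ** 2 * b ** 6 / 36.0) / L ** 2
           * (1.0 - t / (2.0 * L) * math.sqrt(E / (4.0 * G))))  # buckling load
    return [
        tau - TAU_MAX,
        sigma - SIGMA_MAX,
        h - b,
        0.10471 * h ** 2 + 0.04811 * t * b * (14.0 + l) - 5.0,
        0.125 - h,
        delta - DELTA_MAX,
        P - p_c,
    ]
```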

6.4. Wireless Sensor Network Coverage Optimization

Wireless Sensor Networks (WSNs) play a crucial role in fields such as environmental monitoring and smart agriculture, where the rationality of node deployment directly affects monitoring efficiency. The core objective of the wireless sensor network coverage optimization problem is to minimize the fitness value. The optimization variables are the coordinates of the 20 sensor nodes, that is, the x and y coordinates (x_i, y_i) of each node i, for i = 1, 2, …, 20. The mathematical model of this problem can be expressed as:

With:

where e(i) is the minimum distance from node i to the area edge; the mean of e(i) over the nodes also enters the model.
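The edge-distance term e(i) can be computed directly from the node coordinates. The sketch below assumes a rectangular monitoring area of 100 × 100 units with its origin at a corner, and an interleaved variable layout [x1, y1, x2, y2, ...]; both are assumptions made for illustration, as is the function name.

```python
def edge_distances(coords, width=100.0, height=100.0):
    """Return e(i), the minimum distance from each node to the area edge.

    coords : flat list [x1, y1, x2, y2, ...] (assumed layout)
    """
    xs, ys = coords[0::2], coords[1::2]
    return [min(x, width - x, y, height - y) for x, y in zip(xs, ys)]


e = edge_distances([10.0, 40.0, 50.0, 50.0, 95.0, 20.0])
mean_e = sum(e) / len(e)   # mean of e(i) over the nodes
```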

Table 14 presents the optimization schemes of various algorithms for the wireless sensor network coverage optimization problem (due to space constraints, only the first 4 variables of each algorithm’s result are shown) along with their corresponding fitness values. Among them, the complete solution of the PBOA is (10.51, 40.34, 65.21, 84.98, 86.92, 50.81, 86.25, 13.19, 31.70, 24.82, 9.66, 86.05, 22.33, 60.90, 87.17, 69.22, 66.53, 55.50, 67.53, 10.33, 44.29, 48.97, 87.39, 87.41, 51.60, 85.82, 31.69, 88.48, 85.54, 32.69, 11.87, 63.17, 34.37, 9.21, 10.52, 12.92, 45.95, 78.65, 55.84, 25.89).

The results show that among all the comparative algorithms, the fitness value of the solution obtained by the PBOA is the optimal. The convergence process of the PBOA for this problem is illustrated in Figure 17.

6.5. Unmanned Aerial Vehicle (UAV) Path Planning Problem

In scenarios like environmental monitoring and logistics distribution, the rationality of Unmanned Aerial Vehicle (UAV) path planning directly affects task execution efficiency and energy consumption. The core objective of the UAV path planning problem is to minimize the fitness value, which comprehensively considers flight distance, energy consumption, and penalties for violating constraints. The optimization variables consist of values related to the visit order (first 5 variables) and coordinates of waypoints (next 20 variables, with each pair representing an (x, y) coordinate). The mathematical model of this problem, with known parameters specified, is as follows:

Known Parameters:

Start point coordinates: ;

Target points coordinates (5 in total):

Flight height: Fixed at 100 (unit: meters, does not affect 2D path calculation);

Maximum flight distance: (unit: meters, including redundancy);

Maximum load: (unit: kg);

Load reduction per target point: (unit: kg, load decreases by this value after reaching each target point);

Energy consumption coefficient: ;

No-fly zones (each defined by center coordinates and radius r):

Safe distance from no-fly zones: (unit: meters, the minimum allowed distance between flight path and no-fly zones).

The first 5 variables are the values used to determine the visit order of the 5 target points (converted into a permutation of {1, 2, 3, 4, 5} via sorting). The next 20 variables are the x and y coordinates of 10 waypoints (inserted between consecutive points in the path).

The sum of Euclidean distances between consecutive points in the full path. The full path is constructed as:

where

is the visit order of target points (a permutation of {1, 2, 3, 4, 5}). Mathematically

, where

are consecutive points in the full path.

Calculated using the model , where is the distance of the i-th segment, and is the load during the i-th segment. The load changes as: after visiting each target point t.

If any flight segment is within the range of a no-fly zone (), P increases by 1000 per violation. If total distance , P increases by .
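The conversion of continuous variables into a visit order and the distance term can be sketched as follows. The sketch assumes that the order is obtained by sorting the five values in ascending order (a random-key decoding); the function names are hypothetical, and the way waypoints are interleaved with target points is left to the caller.

```python
import math


def decode_visit_order(keys):
    """Random-key decoding: target indices (1-based) sorted by key value."""
    return [i + 1 for i in sorted(range(len(keys)), key=lambda i: keys[i])]


def path_length(points):
    """Sum of Euclidean distances between consecutive points of a path."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


# First five variables of the PBOA solution reported for this problem.
order = decode_visit_order([1.00, 5.00, 1.03, 2.09, 3.03])
print(order)  # [1, 3, 4, 5, 2] under the ascending-sort assumption
```

Sorting guarantees that every continuous vector maps to a valid permutation, so the unique-visit requirement is satisfied by construction rather than by a penalty.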

Subject to:

No-fly zone avoidance: For each no-fly zone

and each flight segment between points

and

, the minimum distance from

to the segment must be

:

Maximum distance limit: .

Unique visit order: The visit order must be a permutation of {1, 2, 3, 4, 5} (all target points are visited exactly once).
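The no-fly zone constraint relies on the distance between a circle center and a flight segment. A minimal sketch is given below; it assumes that a violation occurs when this distance is smaller than the zone radius plus the safe distance, and it applies the penalty of 1000 per violation mentioned above. The function names and the exact threshold are assumptions.

```python
import math


def point_segment_distance(c, a, b):
    """Shortest distance from point c to the segment from a to b (2D)."""
    ax, ay = a
    bx, by = b
    cx, cy = c
    dx, dy = bx - ax, by - ay
    seg2 = dx * dx + dy * dy
    if seg2 == 0.0:                       # degenerate segment: a == b
        return math.hypot(cx - ax, cy - ay)
    # Projection parameter of c onto the line, clamped to the segment.
    t = ((cx - ax) * dx + (cy - ay) * dy) / seg2
    t = max(0.0, min(1.0, t))
    return math.hypot(cx - (ax + t * dx), cy - (ay + t * dy))


def no_fly_penalty(points, zones, safe_dist, per_violation=1000.0):
    """Add per_violation for every (segment, zone) pair that is too close.

    zones : list of (center_x, center_y, radius) tuples
    """
    penalty = 0.0
    for a, b in zip(points, points[1:]):
        for cx, cy, r in zones:
            if point_segment_distance((cx, cy), a, b) < r + safe_dist:
                penalty += per_violation
    return penalty
```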

Table 15 presents the optimization schemes of various algorithms for the UAV path planning problem (due to space constraints, only the first 4 variables of each algorithm’s result are shown) along with their corresponding fitness values. Among them, the complete solution of the PBOA is (1.00, 5.00, 1.03, 2.09, 3.03, 0.03, 0.04, 311.73, 486.28, 300.28, 599.86, 640.89, 281.84, 562.35, 162.36, 258.95, 51.83, 400.99, 252.66, 405.41, 782.37, 586.97, 489.63, 0.00, 54.58).

The results show that among all the comparative algorithms, the fitness value of the solution obtained by the PBOA is the optimal. The convergence process of the PBOA for this problem is illustrated in Figure 18.

6.6. Design of Time-Varying Nonsingular Fast Terminal Sliding Mode Controller for H-Type Motion Platform

Figure 19 shows the schematic diagram and structural sketch of the H-type motion platform. The motion mechanism of the platform is as follows: two parallel Y-direction ball screws drive the worktable collaboratively to realize Y-direction motion; the X-direction ball screw is connected across the two Y-axis ball screws, responsible for driving the worktable to move in the X-direction; the entire motion system is powered by a servo motor. Benefiting from its stable structural design, the H-type motion platform has a wide range of application scenarios.

In terms of control accuracy, the synchronization error of the two Y-direction ball screws must be strictly controlled within a very small range, which is the key to ensuring the Y-direction displacement accuracy of the platform. As proposed in Reference [71], the actual displacement in the X-direction dx′ and Y-direction dy′ of the worktable can be expressed as:

where dy1 is the displacement of the Y1 motion mechanism, dy2 is the displacement of the Y2 motion mechanism, dx is the displacement of the X motion mechanism, θ is the angle between the X motion mechanism and the line perpendicular to the Y1 and Y2 motion mechanisms, and L is the distance between Y1 and Y2.

A time-varying nonsingular fast terminal sliding mode controller is designed in Reference [71]:

The control law wx for the X-direction displacement is:

where

where di, do, vi, and vo represent the desired displacement, output displacement, desired velocity, and output velocity in the i-direction (where i can denote X, Y1, and Y2), respectively; δ, k1, k2, k3, k4, k5, and k6 are constants greater than 0; τ takes values in the range of 1–300; K is a constant affected by the screw lead and gearbox reduction ratio; and tanh is the hyperbolic tangent function.

The control law wY1 for the Y1-direction is:

The control law wY2 for the Y2-direction is:

where

Among the three controllers for the X, Y1, and Y2 directions, although the symbols δ, β, k1, k2, k3, k4, k5, and k6 are consistent, parameter settings of different controllers need to be differentiated to achieve optimal control effects. Based on this, the problem can be formulated as the “parameter optimization problem of time-varying nonsingular fast terminal sliding mode controller for H-type motion platform”. Its core objectives are: to improve the X-direction control accuracy by optimizing the parameters δ, k1, k2, k3, k4, k5, and k6 of the X-direction controller; and to enhance the Y-direction control accuracy while reducing the synchronization error between the Y1 and Y2 motion mechanisms by optimizing the parameters δ, β, k1, k2, k3, k4, k5, and k6 of the two Y-direction controllers. The mathematical formulation of this problem is as follows:

Consider for X-direction controller:

Consider for Y1-direction controller or Y2-direction controller:

Minimize for X-direction controller:

Minimize for Y1-direction controller or Y2-direction controller:

All variables range from [0.0001, 10,000]. Considering factors such as transmission efficiency and disturbances, the X-direction motion mechanism is set with K = 0.99, the Y1-direction motion mechanism with K = 0.99, and the Y2-direction motion mechanism with K = 0.98. Additionally, random numbers following a normal distribution (mean = 0, variance = 0.001) are added to the system output to simulate external disturbances.
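When reproducing the disturbance model, note that it is specified by its variance, whereas most random number generators expect a standard deviation. A minimal sketch follows; the function name is hypothetical.

```python
import math
import random


def add_disturbance(output, variance=0.001, rng=random):
    """Add zero-mean Gaussian noise with the given variance to an output."""
    sigma = math.sqrt(variance)   # gauss() takes the standard deviation
    return output + rng.gauss(0.0, sigma)
```

With a variance of 0.001 the standard deviation is about 0.0316, so passing 0.001 directly as the second argument of `gauss` would understate the disturbance by a factor of roughly 30.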

Table 16, Table 17 and Table 18 present the optimization schemes and corresponding fitness values of the PBOA and other comparative algorithms for the H-type motion platform optimization problem. The results indicate that among all comparative algorithms, the fitness value of the solution obtained by the PBOA is optimal. Figure 20 illustrates the convergence process of the PBOA for this problem.

After parameter optimization using the PBOA, the input-output curves (actual displacements of the X-axis, Y1-axis, and Y2-axis) of the H-type motion platform, as well as the synchronization error between the two Y-direction motion mechanisms, are shown in Figure 21. Experimental data reveal that, with a standard displacement of 1 mm as the reference, the control errors between the actual displacements of the X-axis, Y1-axis, and Y2-axis and the standard value all stabilize within ±2 × 10⁻⁵ mm. Meanwhile, the synchronization error of the two Y-direction mechanisms stays within ±2.6 × 10⁻⁵ mm without significant fluctuations.

These results fully demonstrate that in engineering scenarios with disturbances, the PBOA can effectively ensure the motion accuracy and synchronization stability of the H-type motion platform, which fully meets the requirements of high-precision motion control in engineering applications.

6.7. Summary of PBOA’s Performance in Practical Problems

Table 19 presents the results of the non-parametric Wilcoxon rank-sum test at a significance level of 0.05, analyzing the performance differences between the PBOA and other comparative algorithms across 5 problems (P1 to P5). The p-values in the table indicate the statistical significance of these differences, with a p-value < 0.05 suggesting a significant performance gap between the PBOA and the corresponding algorithm.

The results show that the PBOA exhibits significant differences from most comparative algorithms across all 5 problems. Specifically, algorithms such as WSO, WOA, SHO, GTO, GWO, DRA, PRGO, HGSO, SCA, PSO, GMO, VOR, and NEL all yield p-values far below 0.05 when compared with the PBOA. For instance, in P3, the extremely small p-values of the WOA (1.15 × 10⁻⁹) and SHO (2.98 × 10⁻⁶) indicate that the performance advantage of the PBOA over these algorithms is statistically highly significant. Similarly, NEL demonstrates remarkably low p-values across multiple problems, such as 4.17 × 10⁻⁶ (P2) and 9.74 × 10⁻¹⁰ (P3), reflecting a distinct performance gap with the PBOA.

Notably, the PBOA shows relatively smaller differences from DE and MFO in specific scenarios. For example, in P1, although the p-values of DE (3.02 × 10⁻³) and MFO (2.81 × 10⁻³) are still below 0.05, they are higher than those of algorithms like the WOA (2.56 × 10⁻³) and SHO (1.67 × 10⁻³). In P4, the p-values of DE (3.35 × 10⁻³) and MFO (3.05 × 10⁻³) are also larger than those of GWO (1.78 × 10⁻³) and DRA (2.67 × 10⁻³), indicating a relatively less pronounced performance gap. These results suggest that while the PBOA still outperforms DE and MFO, the statistical significance of this advantage is relatively weaker in certain problems.

Overall, the results of the Wilcoxon rank-sum test further confirm the robustness and uniqueness of the PBOA: it maintains a significant performance advantage over most comparative algorithms across different problems, and even in cases where the differences with individual algorithms (DE and MFO) are relatively smaller, it still demonstrates stable superiority. This consistency underscores the effectiveness of the PBOA in solving the target optimization problems.