KD-MSA: A Multimodal Implicit Sentiment Analysis Approach Based on KAN and Asymmetric Contribution-Aware Dynamic Fusion

Abstract

1. Introduction

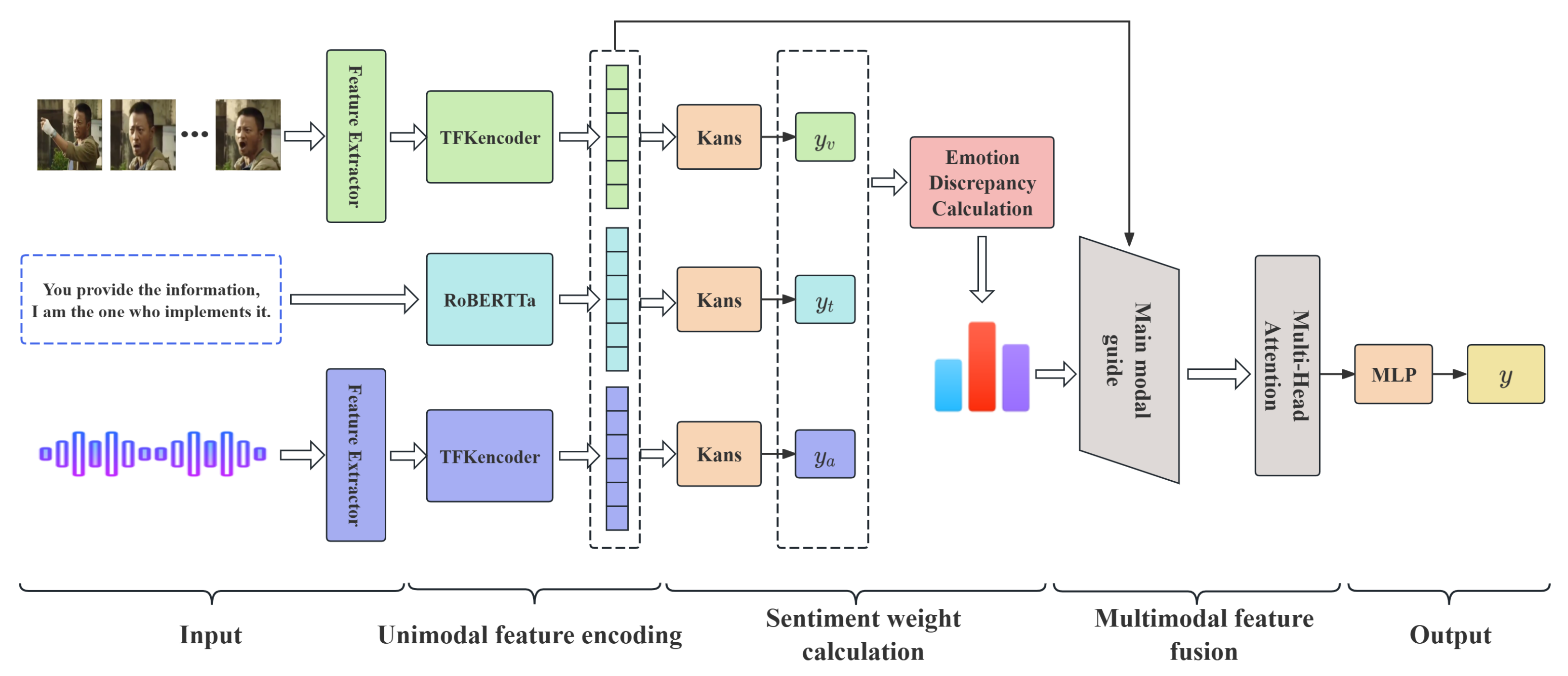

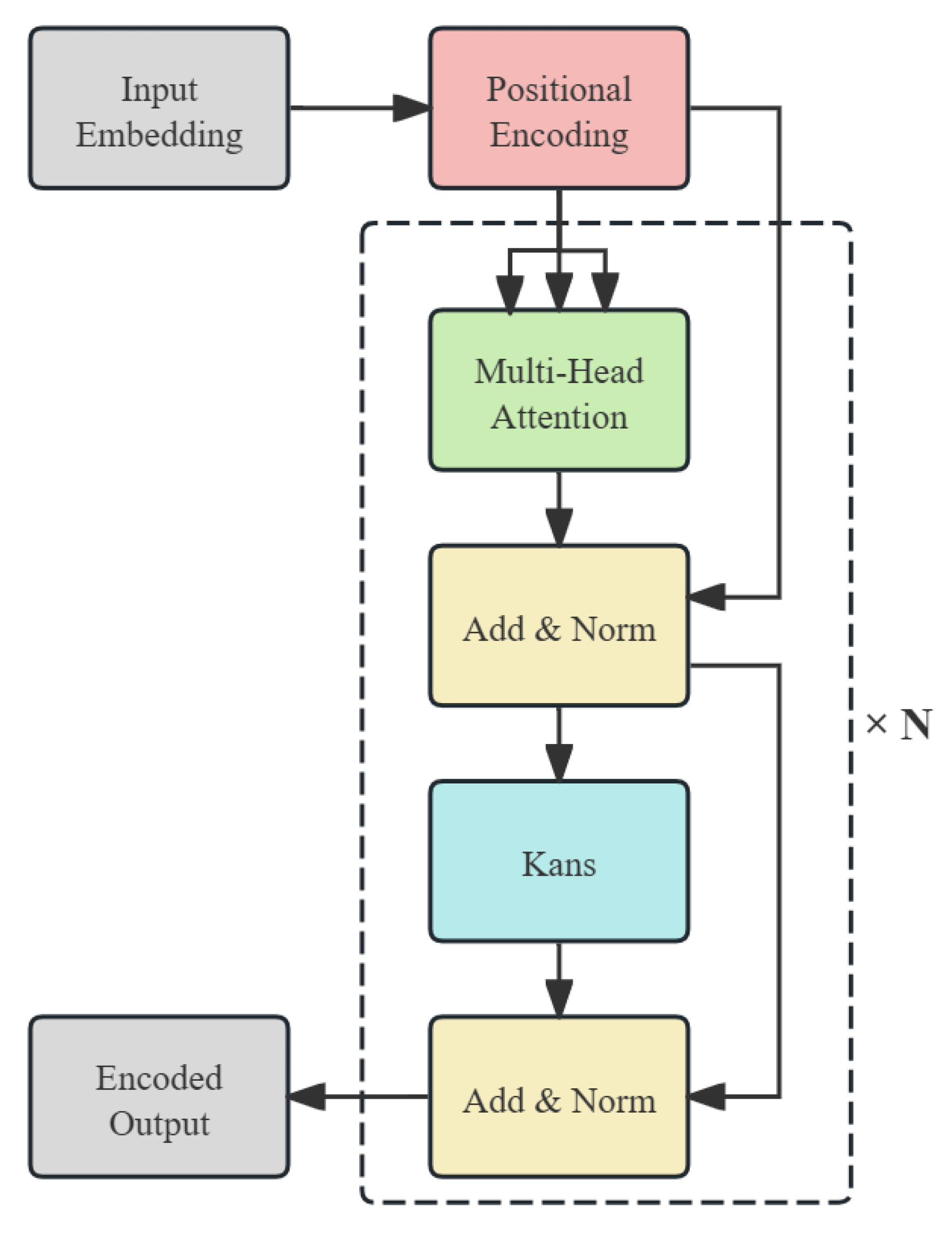

- KAN-based feature encoding: We introduce a feature encoder–decoder architecture that replaces the conventional feed-forward network with a KAN module. By leveraging learnable B-spline basis functions, our model captures complex nonlinear relationships that traditional MLP-based encoders fail to represent, thereby improving the expressiveness and interpretability of unimodal sentiment features.

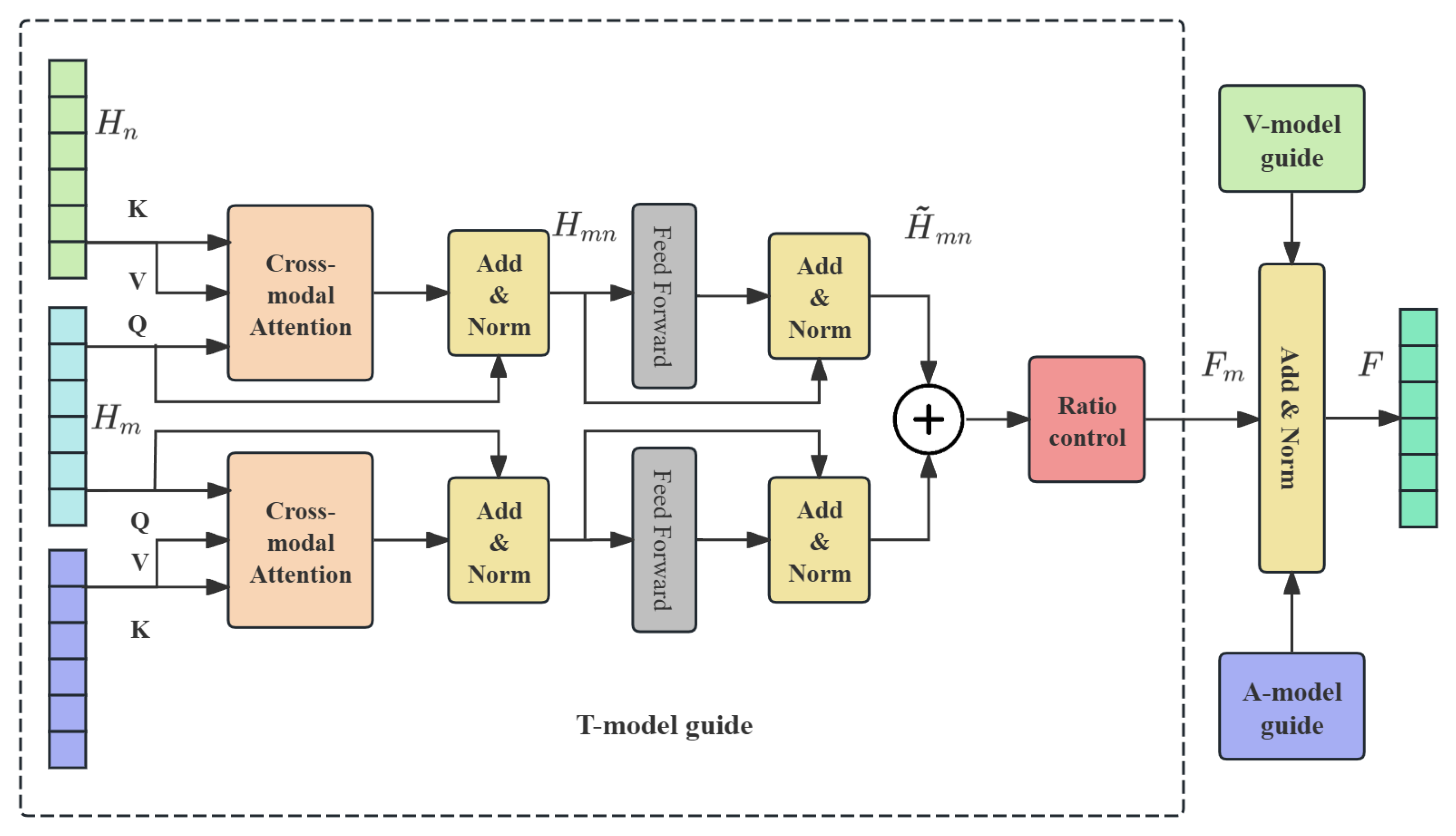

- Sentiment-aware dynamic fusion: We propose a novel cross-fusion strategy guided by dynamically calculated unimodal sentiment weights. Unlike existing static or text-dominant fusion methods, our approach adaptively adjusts modality contributions according to their emotional consistency, effectively suppressing noisy signals and enhancing complementary cues across modalities.

- Integration of multi-head attention: Multi-head attention is incorporated in both unimodal encoding and multimodal fusion stages. This enables the model to capture dependencies from multiple representation subspaces in parallel, improving robustness in handling ambiguous or implicit emotional expressions.

- Comprehensive evaluation with significant improvements: Extensive experiments on two Chinese datasets (CH-SIMS, CH-SIMSv2) and two English datasets (MOSI, MOSEI) show that our model consistently outperforms strong baselines. On CH-SIMSv2, KD-MSA achieves an F1 score of 81.02%, surpassing the best baseline (CENet, 79.63%) by 1.39 percentage points. On MOSI, KD-MSA improves the F1 score to 84.87%, a relative gain of 2.64% over MulT. On the large-scale MOSEI dataset, KD-MSA attains an F1 score of 85.89%, exceeding BBFN (85.56%) and MMIM (85.26%), while reducing the MAE to 0.5299, the lowest among all compared models. These results support the effectiveness and cross-lingual generalization capability of our method.

2. Related Work

2.1. Implicit Sentiment Analysis

2.2. Multimodal Fusion Strategy

3. Methodology

3.1. Task Definition

3.2. Overall Architecture

3.3. Unimodal Feature Extraction

3.4. Encoding with KAN
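The Introduction describes the encoder as replacing the feed-forward network with a KAN module built on learnable B-spline basis functions. A minimal, dependency-free sketch of one such layer follows. It assumes a uniform knot grid on [-1, 1), cubic splines, and no residual base activation. The class name `KANLayer`, its parameters, and the random initialization are illustrative choices, not the configuration used in KD-MSA.

```python
import random


def bspline_basis(x, knots, degree):
    """Return all B-spline basis values of `degree` at scalar x (Cox-de Boor)."""
    # Degree 0: indicator of each half-open knot interval.
    basis = [1.0 if knots[i] <= x < knots[i + 1] else 0.0
             for i in range(len(knots) - 1)]
    for d in range(1, degree + 1):
        raised = []
        for i in range(len(knots) - d - 1):
            left_den = knots[i + d] - knots[i]
            right_den = knots[i + d + 1] - knots[i + 1]
            left = (x - knots[i]) / left_den * basis[i] if left_den > 0 else 0.0
            right = ((knots[i + d + 1] - x) / right_den * basis[i + 1]
                     if right_den > 0 else 0.0)
            raised.append(left + right)
        basis = raised
    return basis


class KANLayer:
    """Hypothetical spline-only KAN layer: out[j] = sum_i phi_ji(x[i])."""

    def __init__(self, in_dim, out_dim, grid_size=5, degree=3,
                 x_min=-1.0, x_max=1.0, seed=0):
        rng = random.Random(seed)
        step = (x_max - x_min) / grid_size
        # Pad the grid with `degree` extra knots per side so the bases
        # sum to 1 everywhere on [x_min, x_max).
        self.knots = [x_min + (i - degree) * step
                      for i in range(grid_size + 2 * degree + 1)]
        self.degree = degree
        self.n_basis = grid_size + degree
        # One learnable coefficient vector per (output, input) edge.
        self.coef = [[[rng.gauss(0.0, 0.1) for _ in range(self.n_basis)]
                      for _ in range(in_dim)]
                     for _ in range(out_dim)]

    def forward(self, x):
        # Inputs outside the padded grid yield all-zero bases.
        bases = [bspline_basis(xi, self.knots, self.degree) for xi in x]
        return [sum(sum(c * b for c, b in zip(edge, bases[i]))
                    for i, edge in enumerate(row))
                for row in self.coef]


layer = KANLayer(in_dim=3, out_dim=2)
print(layer.forward([0.1, -0.5, 0.7]))
```

Each output is a sum of learned univariate functions of the inputs, in contrast to an MLP's fixed activation applied after a linear map. In practice the coefficients would be trained by gradient descent in a tensor library; that part is omitted here.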

3.5. Modal Weight Calculation

3.6. Multimodal Dynamic Fusion
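The Introduction characterizes the fusion as guided by dynamically calculated unimodal sentiment weights that down-weight modalities whose signals are inconsistent with the others. The sketch below illustrates that general idea only. The consistency score (negative mean absolute gap to the other modalities), the `temperature` parameter, and the function names are all assumptions made for illustration, the cross-attention part of the fusion is not modeled, and the input numbers are made up.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def consistency_weights(unimodal_preds, temperature=1.0):
    """Hypothetical rule: a modality far from the others gets less weight.

    unimodal_preds maps a modality name to its scalar sentiment score;
    at least two modalities are required.
    """
    names = list(unimodal_preds)
    scores = []
    for name in names:
        others = [unimodal_preds[o] for o in names if o != name]
        mean_gap = sum(abs(unimodal_preds[name] - o) for o in others) / len(others)
        scores.append(-mean_gap / temperature)
    return dict(zip(names, softmax(scores)))


def fuse(features, weights):
    """Weighted sum of equally sized per-modality feature vectors."""
    dim = len(next(iter(features.values())))
    return [sum(weights[m] * features[m][d] for m in features)
            for d in range(dim)]


# Illustrative values: text disagrees with audio and vision.
preds = {"text": 0.6, "audio": -1.0, "vision": -1.0}
weights = consistency_weights(preds)
features = {"text": [1.0, 0.0], "audio": [0.0, 1.0], "vision": [0.0, 1.0]}
print(weights)
print(fuse(features, weights))
```

Because the weights are recomputed per sample, no modality is fixed as dominant: whichever modality disagrees with the rest on a given sample contributes less to the fused vector.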

3.7. Output and Optimization Objectives

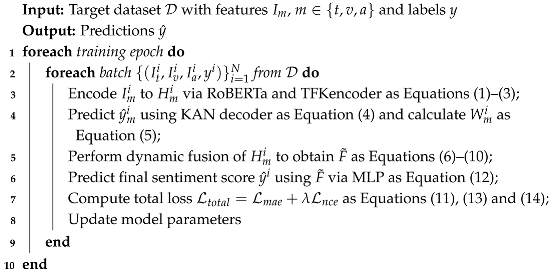

Algorithm 1: Training Procedure of KD-MSA

4. Experiments

4.1. Datasets

4.2. Evaluation Metrics
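The result tables report four metrics: MAE, Corr, Acc-2, and F1. The following sketch shows one common way to compute them from continuous sentiment scores. It assumes that Corr is the Pearson correlation, that the binary split treats scores at or above 0 as non-negative, and that F1 is the support-weighted average over the two classes. These conventions are assumptions and may differ from the exact protocol used in the experiments.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error between labels and predictions."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def pearson_corr(y_true, y_pred):
    """Pearson correlation coefficient (inputs must not be constant)."""
    n = len(y_true)
    mean_t, mean_p = sum(y_true) / n, sum(y_pred) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    var_t = sum((t - mean_t) ** 2 for t in y_true)
    var_p = sum((p - mean_p) ** 2 for p in y_pred)
    return cov / math.sqrt(var_t * var_p)


def binarize(values, threshold=0.0):
    """Assumed split: 1 for scores >= threshold, 0 otherwise."""
    return [1 if v >= threshold else 0 for v in values]


def acc2(y_true, y_pred):
    """Binary accuracy after thresholding both labels and predictions."""
    bt, bp = binarize(y_true), binarize(y_pred)
    return sum(t == p for t, p in zip(bt, bp)) / len(bt)


def weighted_f1(y_true, y_pred):
    """Support-weighted average of the per-class F1 scores."""
    bt, bp = binarize(y_true), binarize(y_pred)
    total = 0.0
    for cls in (0, 1):
        tp = sum(t == cls and p == cls for t, p in zip(bt, bp))
        fp = sum(t != cls and p == cls for t, p in zip(bt, bp))
        fn = sum(t == cls and p != cls for t, p in zip(bt, bp))
        denom = 2 * tp + fp + fn
        f1 = 2 * tp / denom if denom else 0.0
        total += f1 * (tp + fn)  # weight by class support
    return total / len(bt)


# Made-up scores, for illustration only.
y_true = [0.6, -1.0, 0.2, -0.4]
y_pred = [0.5, -0.8, -0.1, -0.6]
print(mae(y_true, y_pred), pearson_corr(y_true, y_pred))
print(acc2(y_true, y_pred), weighted_f1(y_true, y_pred))
```

Lower is better for MAE, and higher is better for the other three metrics.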

4.3. Baselines

4.4. Experimental Settings

4.5. Results and Analysis

4.5.1. Comparison with Baselines

4.5.2. Ablation Study and Analysis

4.5.3. Example Analysis

5. Conclusions and Future Work

- (1) Although this paper models asymmetry at the modality level, it does not yet consider finer-grained modal differences, such as local asymmetry along the temporal dimension or at the semantic-segment level. We plan to extend the dynamic fusion mechanism to the local level to characterize more accurately how the contributions of different modalities change across semantic segments.

- (2) We will introduce external knowledge resources, such as metaphor knowledge bases and emotional commonsense graphs, to enhance the model’s perception of implicit emotional cues, which play an important complementary role in low-resource modalities such as vision and speech.

- (3) We will extend the proposed modality-asymmetric fusion strategy to other implicit sentiment analysis tasks, such as sarcasm recognition and racial discrimination recognition, to further verify the generality and extensibility of the method.

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Dataset | Modality | Size | Len | Dim |
|---|---|---|---|---|
| CH-SIMS | Text | 2281 | 39 | 768 |
| CH-SIMS | Vision | 2281 | 55 | 709 |
| CH-SIMS | Audio | 2281 | 400 | 33 |
| CH-SIMSv2 | Text | 4403 | 50 | 768 |
| CH-SIMSv2 | Vision | 4403 | 232 | 177 |
| CH-SIMSv2 | Audio | 4403 | 952 | 25 |
| MOSI | Text | 2199 | 50 | 768 |
| MOSI | Vision | 2199 | 500 | 20 |
| MOSI | Audio | 2199 | 375 | 5 |
| MOSEI | Text | 22856 | 50 | 768 |
| MOSEI | Vision | 22856 | 500 | 35 |
| MOSEI | Audio | 22856 | 500 | 74 |

| Dataset | Train | Valid | Test | Total | Language |
|---|---|---|---|---|---|
| CH-SIMS | 1368 | 456 | 457 | 2281 | Chinese |
| CH-SIMSv2 | 2722 | 647 | 1034 | 4403 | Chinese |
| MOSI | 1284 | 229 | 686 | 2199 | English |
| MOSEI | 16326 | 1871 | 4659 | 22856 | English |

| Parameters | CH-SIMS | CH-SIMSv2 | MOSI | MOSEI |
|---|---|---|---|---|
| Batch Size | 32 | 32 | 32 | 64 |
| Learning Rate | 3 | 3 | 3 | 4 |
| Epochs | 100 | 100 | 100 | 100 |
| Optimizer | AdamW | AdamW | AdamW | AdamW |
| k | 0.1 | 0.3 | 2.0 | 5.0 |
| | 0.01 | 0.01 | 0.01 | 0.1 |

| Models | CH-SIMS MAE | CH-SIMS Corr | CH-SIMS Acc-2 | CH-SIMS F1 | CH-SIMSv2 MAE | CH-SIMSv2 Corr | CH-SIMSv2 Acc-2 | CH-SIMSv2 F1 |
|---|---|---|---|---|---|---|---|---|
| LMF † | 0.441 | 0.576 | 0.7777 | 0.7788 | 0.367 | 0.557 | 0.7418 | 0.7388 |
| BBFN * | 0.430 | 0.564 | 0.7812 | 0.7788 | 0.300 | 0.708 | 0.7853 | 0.7841 |
| CubeMLP * | 0.419 | 0.593 | 0.7768 | 0.7759 | 0.334 | 0.648 | 0.7853 | 0.7853 |
| CENet † | 0.471 | 0.534 | 0.7790 | 0.7753 | 0.310 | 0.699 | 0.7956 | 0.7963 |
| KD-MSA | 0.4108 | 0.5950 | 0.7877 | 0.7846 | 0.2931 | 0.7365 | 0.8054 | 0.8102 |

| Models | MOSI MAE | MOSI Corr | MOSI Acc-2 | MOSI F1 | MOSEI MAE | MOSEI Corr | MOSEI Acc-2 | MOSEI F1 |
|---|---|---|---|---|---|---|---|---|
| TFN † | 0.947 | 0.673 | 0.7908 | 0.7911 | 0.572 | 0.714 | 0.8189 | 0.8174 |
| LMF † | 0.950 | 0.651 | 0.7918 | 0.7915 | 0.576 | 0.717 | 0.8348 | 0.8336 |
| MulT † | 0.879 | 0.702 | 0.8098 | 0.8095 | 0.559 | 0.733 | 0.8463 | 0.8452 |
| MISA † | 0.776 | 0.778 | 0.8354 | 0.8358 | 0.557 | 0.751 | 0.8467 | 0.8466 |
| BBFN * | 0.796 | 0.744 | 0.8247 | 0.8244 | 0.545 | 0.760 | 0.8573 | 0.8556 |
| MMIM * | 0.744 | 0.780 | 0.8430 | 0.8423 | 0.550 | 0.761 | 0.8542 | 0.8526 |
| CubeMLP * | 0.755 | 0.772 | 0.8232 | 0.8423 | 0.537 | 0.761 | 0.8523 | 0.8504 |
| KD-MSA | 0.7123 | 0.7975 | 0.8491 | 0.8487 | 0.5299 | 0.7792 | 0.8591 | 0.8589 |

| Models | CH-SIMS MAE | CH-SIMS Acc-2 | CH-SIMS F1 | MOSI MAE | MOSI Acc-2 | MOSI F1 |
|---|---|---|---|---|---|---|
| KD-MSA | 0.4108 | 0.7877 | 0.7846 | 0.7123 | 0.8491 | 0.8487 |
| w/o TFKE | 0.4106 | 0.7724 | 0.7696 | 0.7204 | 0.8338 | 0.8340 |
| w/o KAND | 0.4113 | 0.7702 | 0.7647 | 0.7399 | 0.8262 | 0.8261 |
| w/o DWA | 0.4174 | 0.7549 | 0.7504 | 0.7771 | 0.8262 | 0.8258 |

| Example | | Truth (T) | Truth (A) | Truth (V) | Truth (M) | Predict (MSA *) | Predict (KD-MSA) |
|---|---|---|---|---|---|---|---|
| Case 1 | | 0.6 | −1 | −1 | −0.2 | −0.42 | −0.34 |
| Case 2 | | −1 | 0.2 | 1 | 0.6 | 0.95 | 0.84 |


© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Hou, Z.; Zhang, Q.; Lei, Z.; Zeng, Z.; Jia, R. KD-MSA: A Multimodal Implicit Sentiment Analysis Approach Based on KAN and Asymmetric Contribution-Aware Dynamic Fusion. Symmetry 2025, 17, 1401. https://doi.org/10.3390/sym17091401
