Application of a Machine Learning Algorithm for Evaluation of Stiff Fractional Modeling of Polytropic Gas Spheres and Electric Circuits

Abstract

1. Introduction

- The solution is obtained without any coordinate transformations.

- ANNs combined with machine learning optimization techniques can approximate the solutions of differential equations in closed analytic form.

- Increasing the number of sample points does not cause a sharp rise in computational complexity.

- ANNs generate differentiable solutions and have proved effective in overcoming the drawbacks of iterative procedures. They can handle complex differential equations, including singular and nonlinear ones.
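The point about differentiable solutions can be made concrete with a small sketch. A single-hidden-layer network with tanh activations has a closed-form derivative with respect to its input, which is what allows a network output to be substituted into a differential equation. The weights below are arbitrary illustrative values, not parameters from this study, and the function names are hypothetical.

```python
import math

# Hypothetical weights for a 1-input, 3-hidden-neuron, 1-output network.
# These are illustrative values only, not trained parameters from the paper.
W = [0.5, -1.2, 2.0]   # input-to-hidden weights
B = [0.1, 0.3, -0.7]   # hidden biases
V = [1.5, -0.4, 0.8]   # hidden-to-output weights


def network(x):
    """Network output N(x) = sum_i V[i] * tanh(W[i] * x + B[i])."""
    return sum(v * math.tanh(w * x + b) for w, b, v in zip(W, B, V))


def network_derivative(x):
    """Closed-form derivative dN/dx, using d/dz tanh(z) = 1 - tanh(z)**2."""
    return sum(v * w * (1.0 - math.tanh(w * x + b) ** 2)
               for w, b, v in zip(W, B, V))


def finite_difference(f, x, h=1e-6):
    """Central-difference estimate of f'(x), used only as a cross-check."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


if __name__ == "__main__":
    for x in (0.0, 0.5, 1.0):
        exact = network_derivative(x)
        approx = finite_difference(network, x)
        print(f"x={x:.1f}  dN/dx={exact:.8f}  finite-diff={approx:.8f}")
```

The finite-difference column is there only to confirm the closed-form expression; in a solver, the analytic derivative would be used directly inside the residual of the equation.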

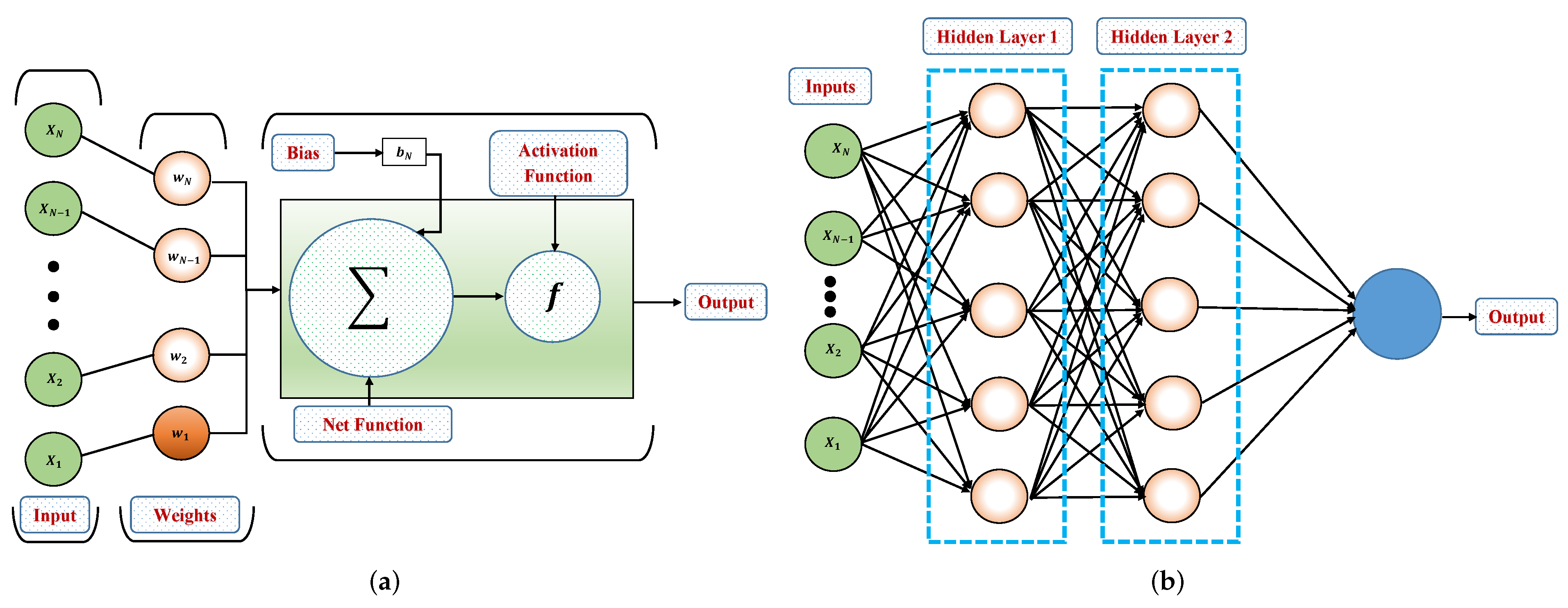

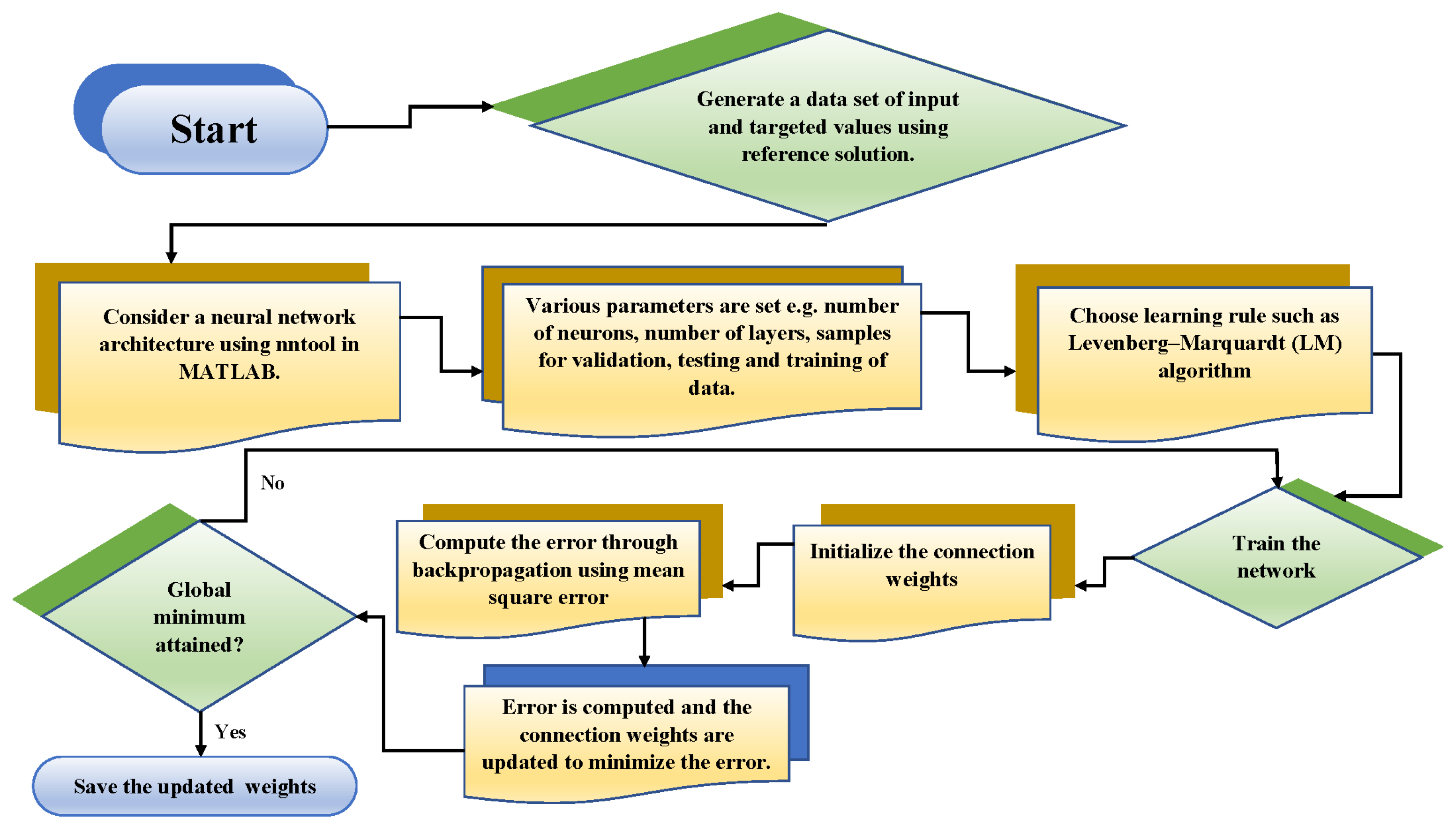

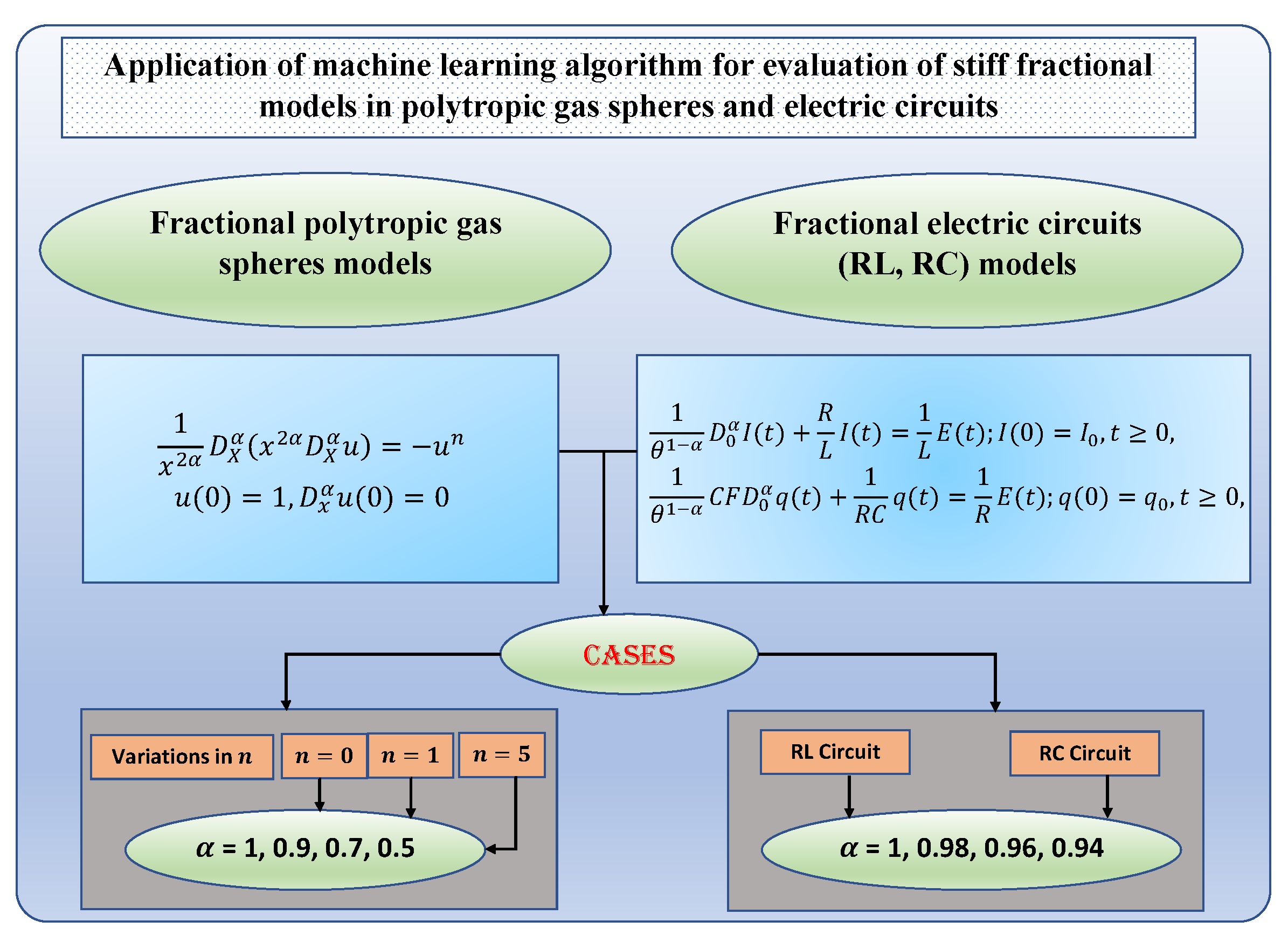

2. Proposed Neural Network-Based Approach for Solving FDEs

3. Numerical Experimentation

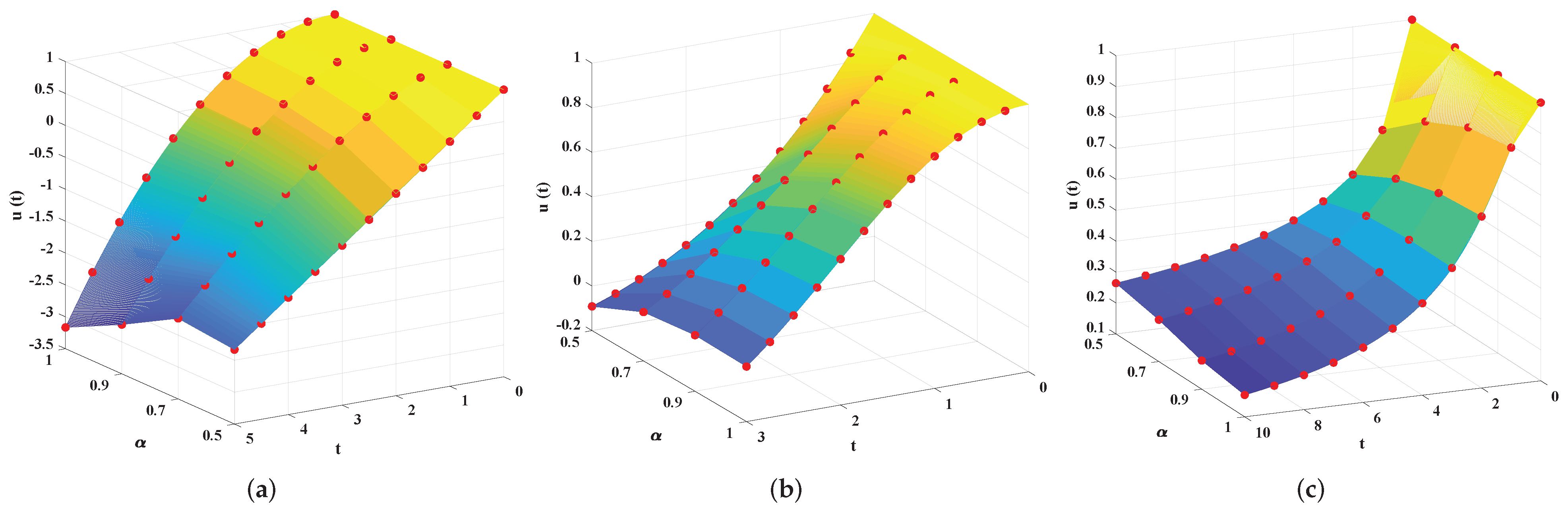

3.1. Fractional Polytropic Gas Sphere Model

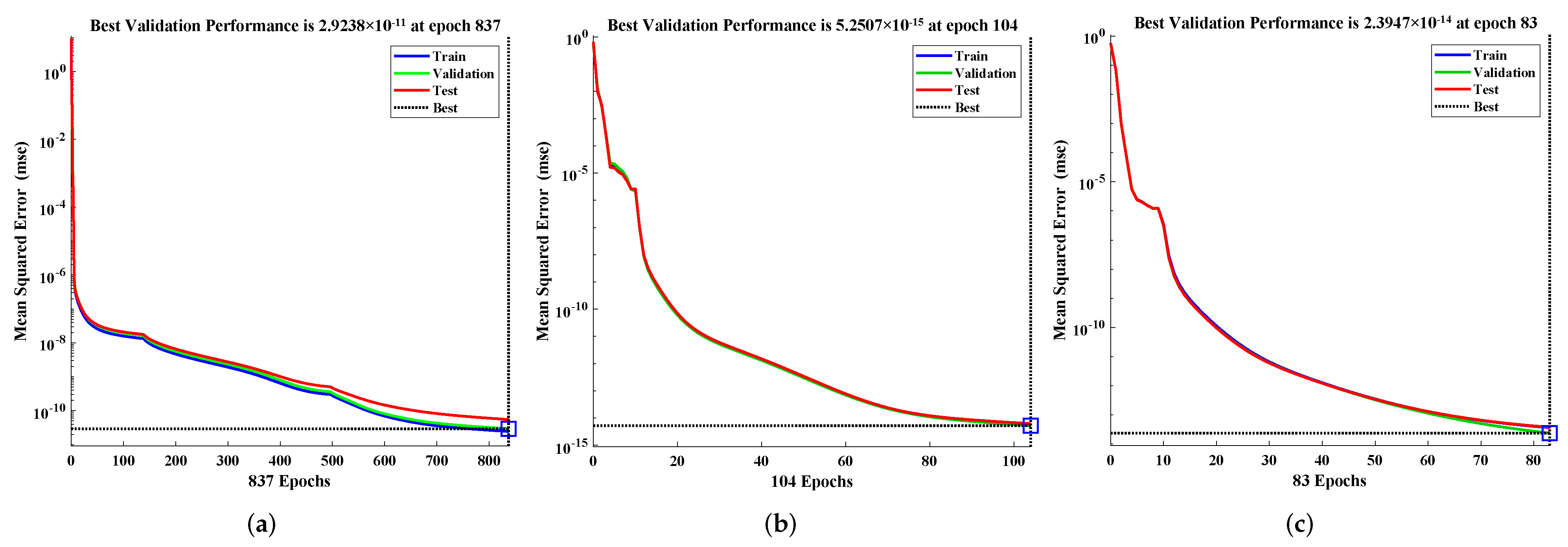

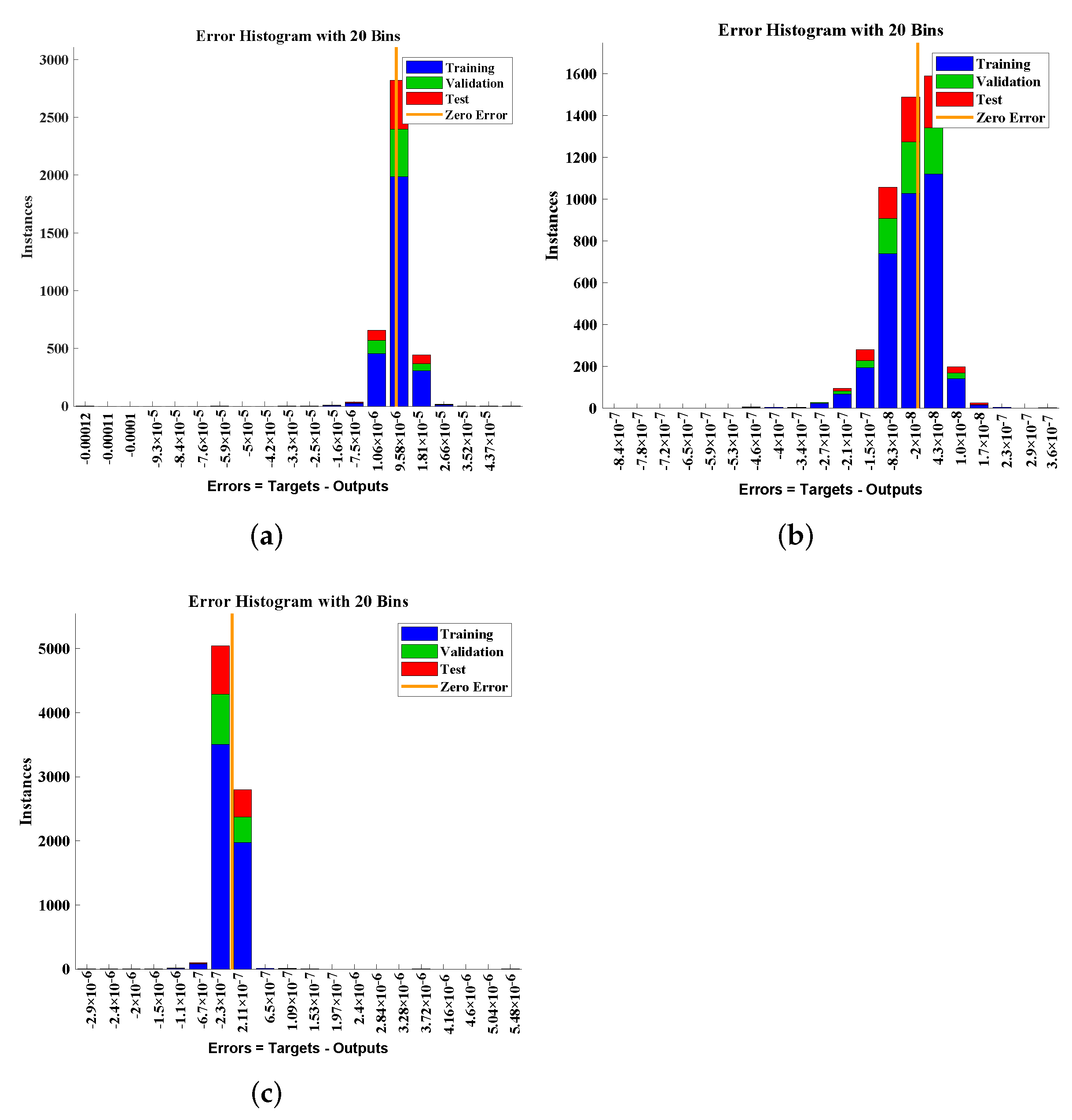

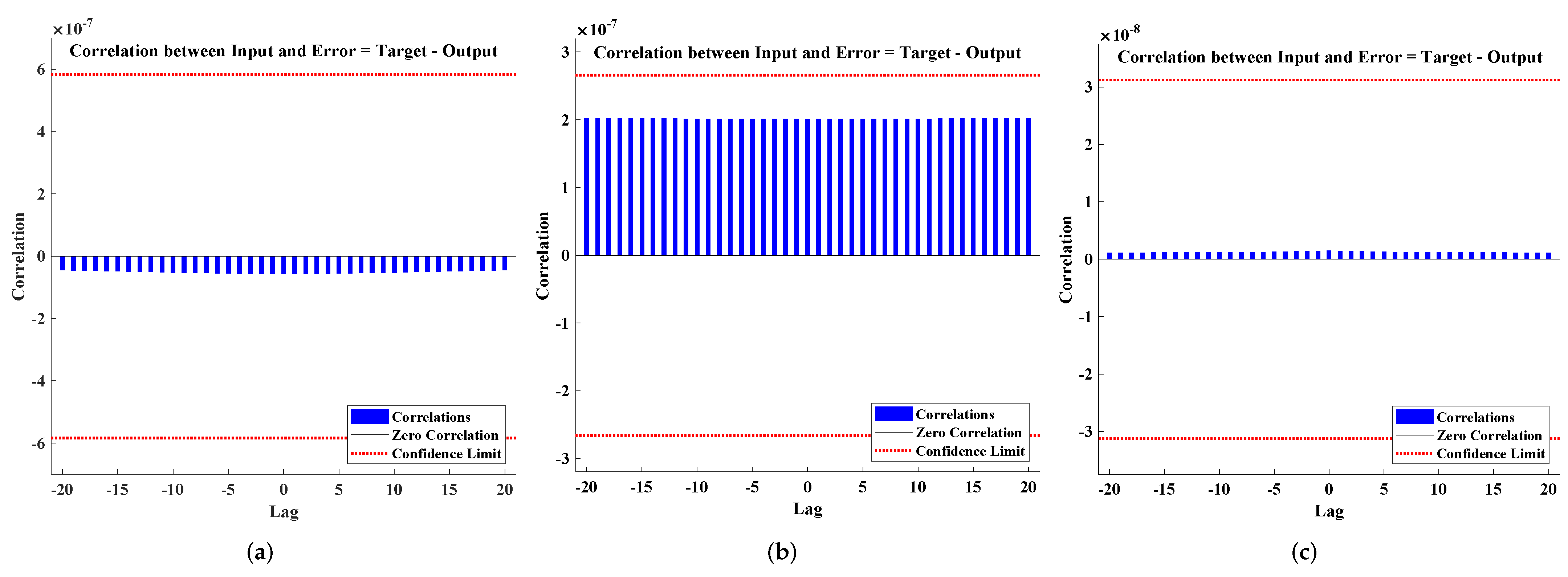

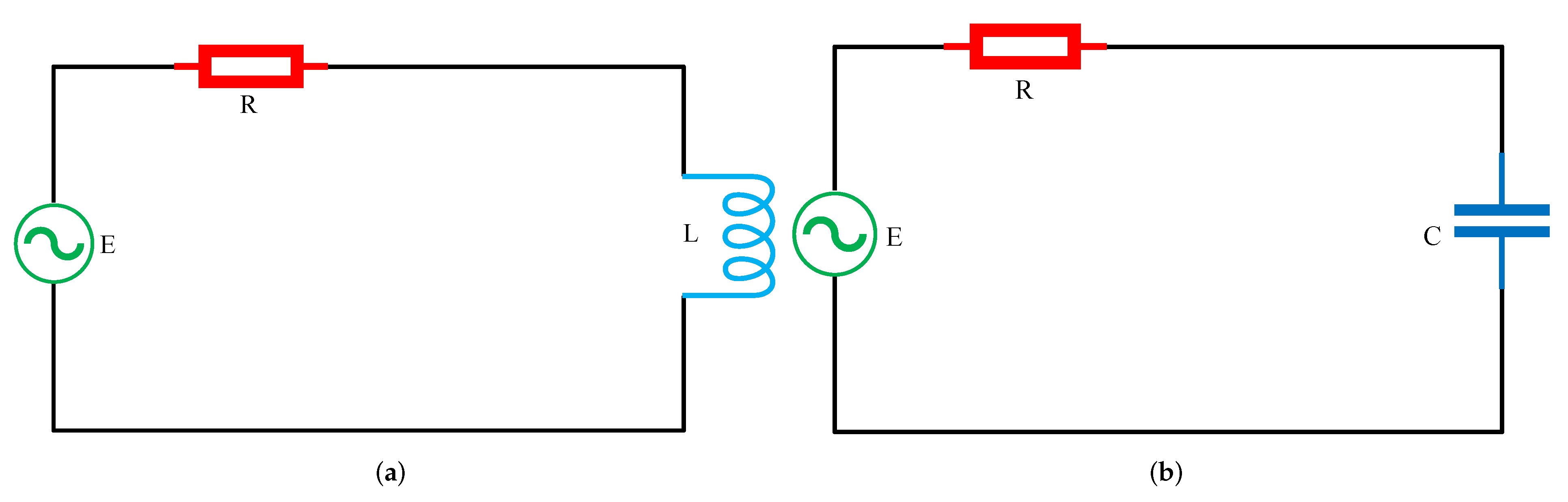

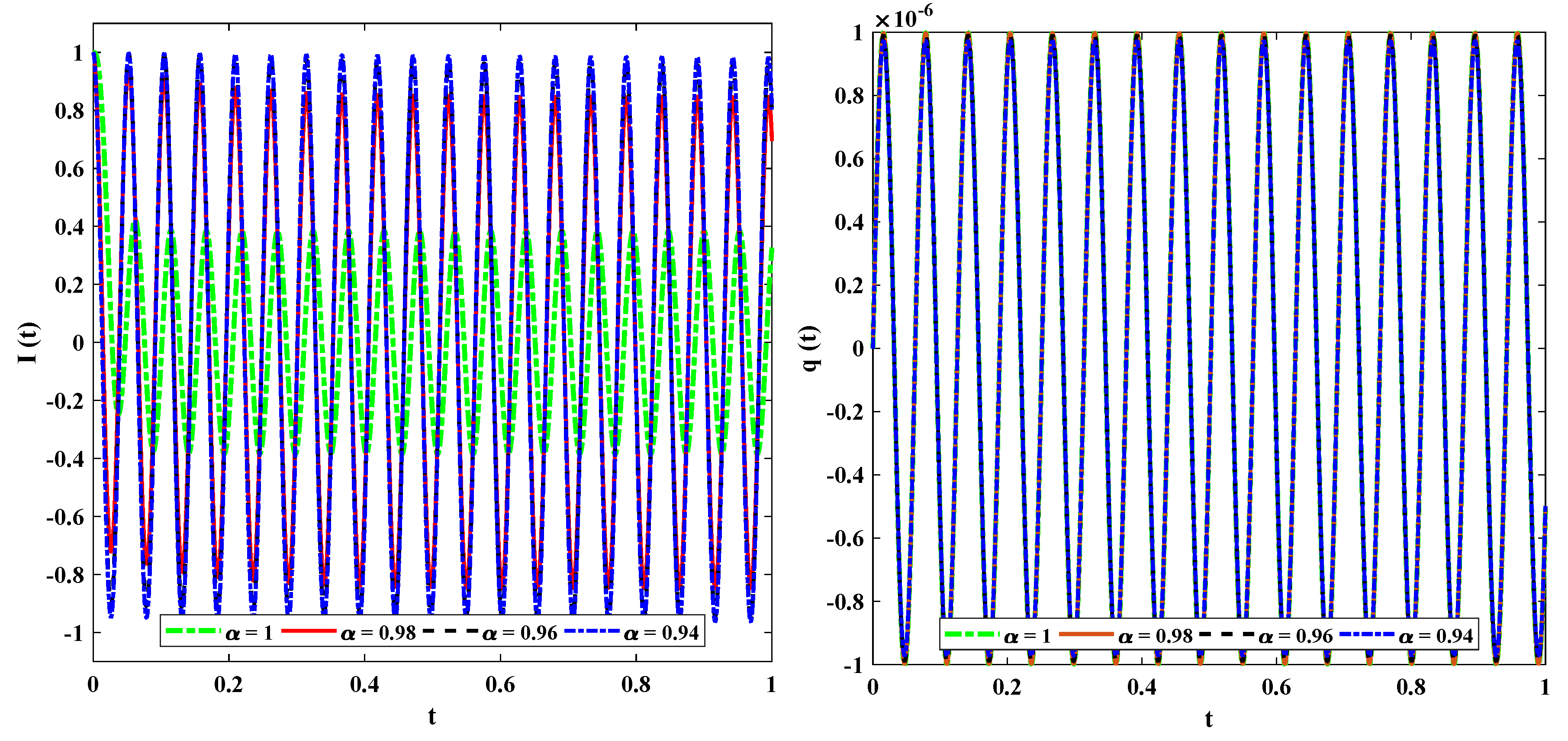

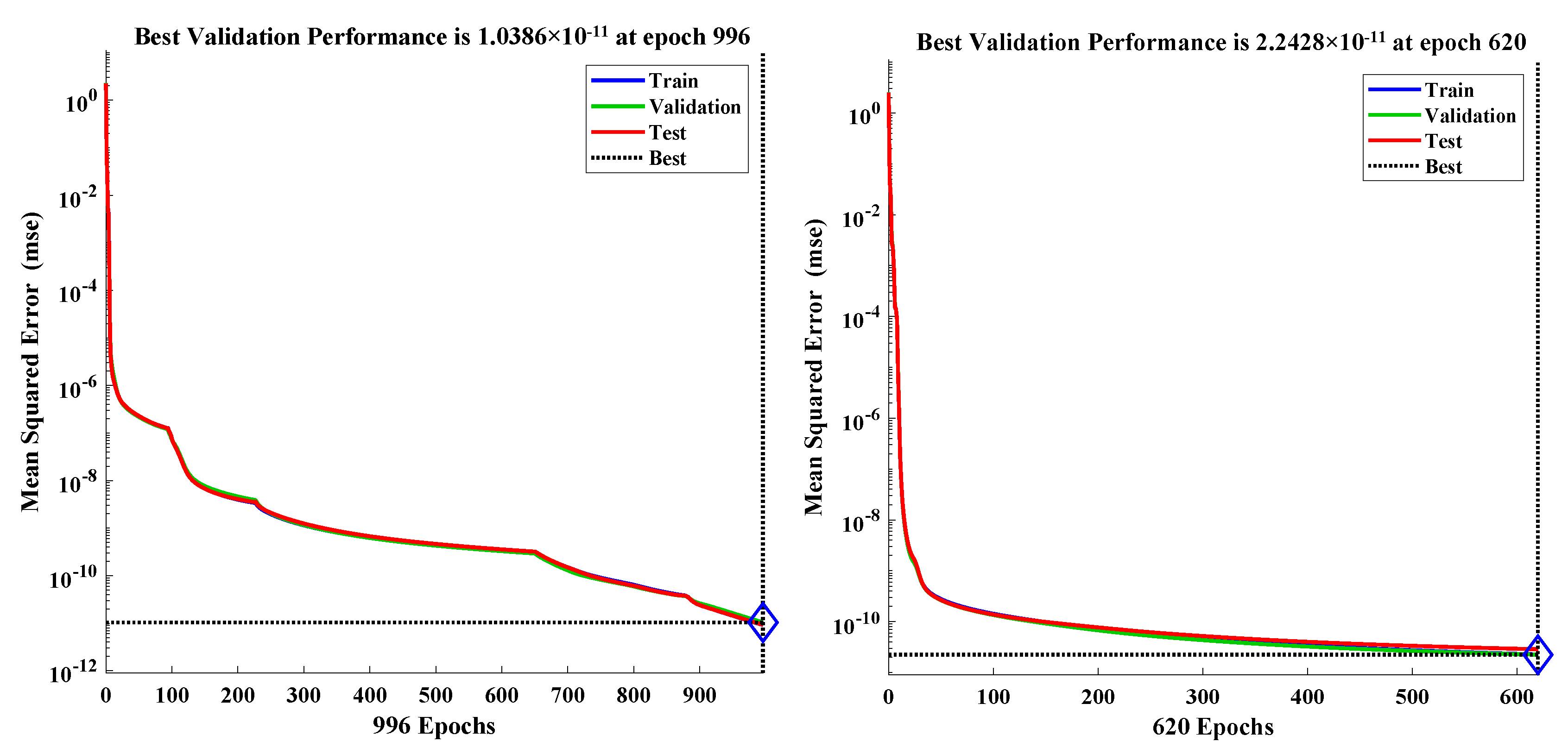

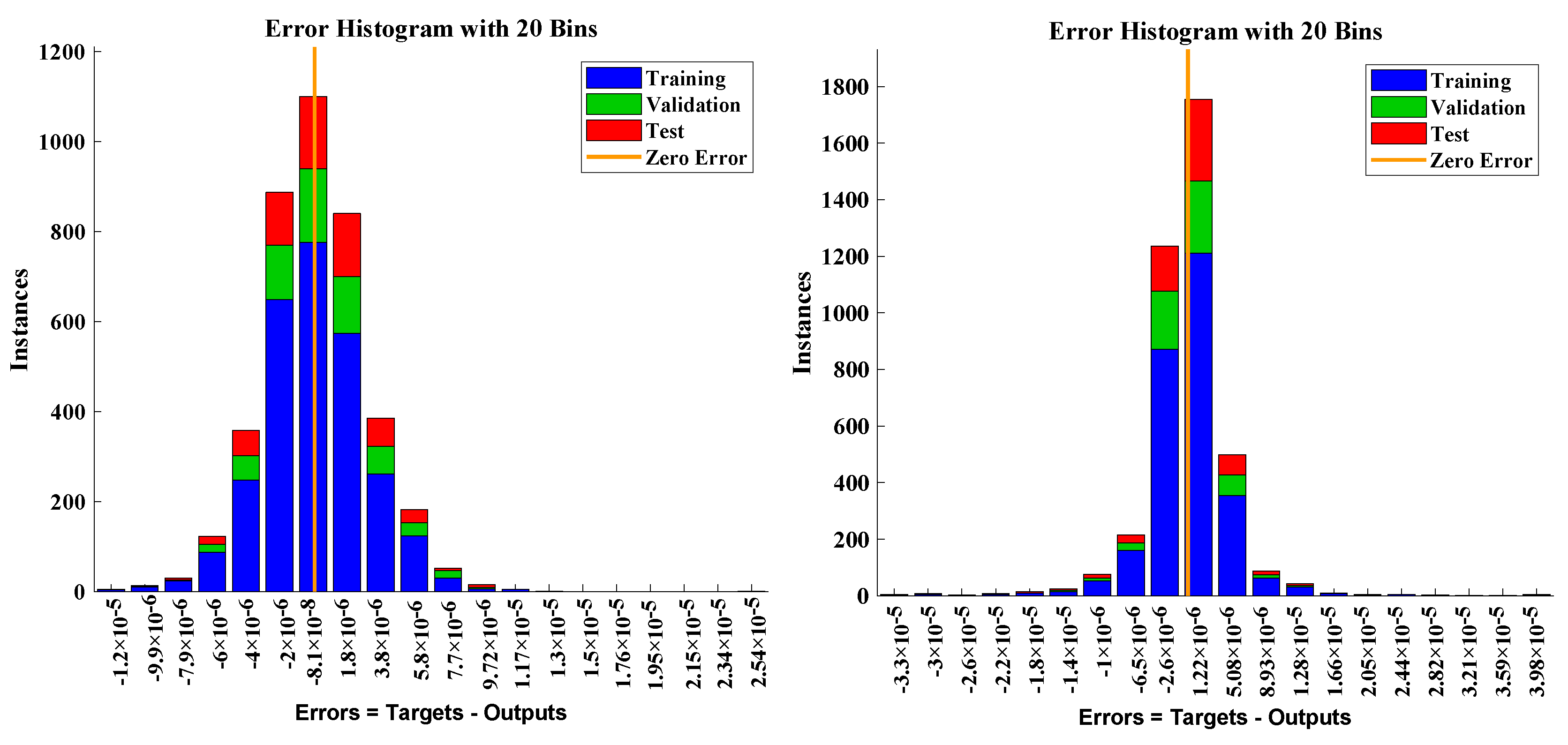

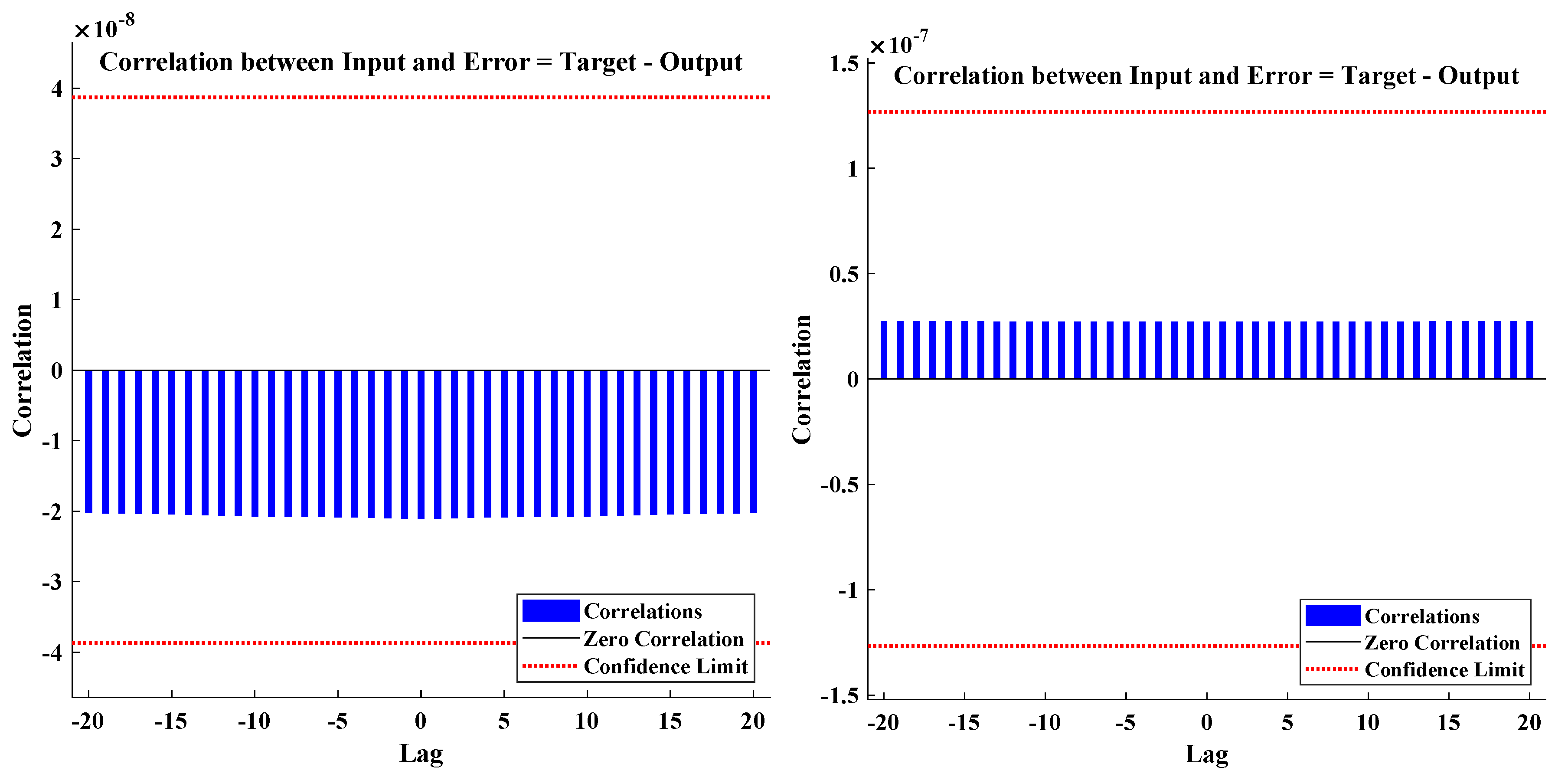

3.2. Stiff Fractional Model of Electrical Circuits

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


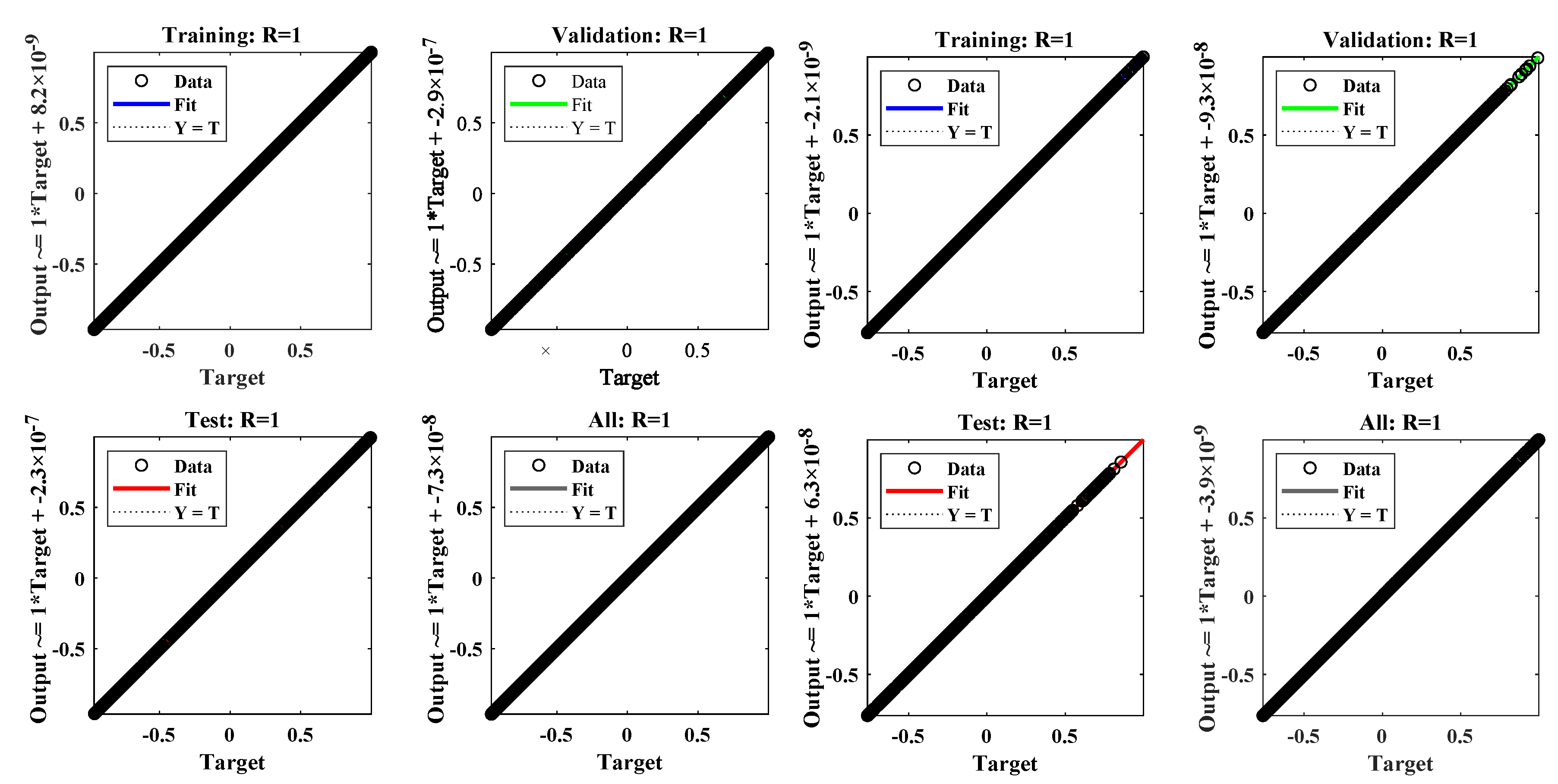

| Parameters | | | Cases | Performance | | | Analysis | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| Index | Hidden Neurons | Time Delay | α | Training | Validation | Testing | Gradient | Regression | Time (s) | Iterations |
| n = 0 | 10 | 2 | 1 | | | | | 1 | 0.02 | 665 |
| | 10 | 2 | 0.9 | | | | | 1 | 0.03 | 722 |
| | 10 | 2 | 0.7 | | | | | 1 | 0.02 | 617 |
| | 10 | 2 | 0.5 | | | | | 1 | 0.02 | 682 |
| | 12 | 3 | 1 | | | | | 1 | 0.01 | 276 |
| | 12 | 3 | 0.9 | | | | | 1 | 0.04 | 710 |
| | 12 | 3 | 0.7 | | | | | 1 | 0.05 | 899 |
| | 12 | 3 | 0.5 | | | | | 1 | 0.01 | 161 |
| n = 1 | 10 | 2 | 1 | | | | | 1 | 0.0001 | 60 |
| | 10 | 2 | 0.9 | | | | | 1 | 0.00001 | 49 |
| | 10 | 2 | 0.7 | | | | | 1 | 0.01 | 244 |
| | 10 | 2 | 0.5 | | | | | 1 | 0.0001 | 143 |
| | 12 | 3 | 1 | | | | | 1 | 0.0001 | 61 |
| | 12 | 3 | 0.9 | | | | | 1 | 0.0001 | 69 |
| | 12 | 3 | 0.7 | | | | | 1 | 0.00001 | 40 |
| | 12 | 3 | 0.5 | | | | | 1 | 0.00001 | 48 |
| n = 5 | 10 | 2 | 1 | | | | | 1 | 0.0001 | 110 |
| | 10 | 2 | 0.9 | | | | | 1 | 0.00001 | 70 |
| | 10 | 2 | 0.7 | | | | | 1 | 0.00001 | 96 |
| | 10 | 2 | 0.5 | | | | | 1 | 0.00001 | 74 |
| | 12 | 3 | 1 | | | | | 1 | 0.000001 | 31 |
| | 12 | 3 | 0.9 | | | | | 1 | 0.00001 | 85 |
| | 12 | 3 | 0.7 | | | | | 1 | 0.00001 | 66 |
| | 12 | 3 | 0.5 | | | | | 1 | 0.00001 | 65 |

| t | α = 1 | | | α = 0.98 | | | α = 0.96 | | | α = 0.94 | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | LTM | MS-RKM | DNN-LM | LTM | MS-RKM | DNN-LM | LTM | MS-RKM | DNN-LM | LTM | MS-RKM | DNN-LM |
| 0.1 | −0.0599275843 | −0.0599270127 | −0.0599275843 | 0.4305994034 | 0.4305994701 | 0.4305994034 | 0.6147280840 | 0.6147324618 | 0.6147280840 | 0.7032568583 | 0.7032597645 | 0.7032568583 |
| 0.2 | −0.2587200529 | −0.2587193814 | −0.2587200529 | 0.1374554861 | 0.1374561882 | 0.1374554861 | 0.2623940181 | 0.2623986240 | 0.2623940181 | 0.3242278859 | 0.3242322314 | 0.3242278859 |
| 0.3 | −0.3710401148 | −0.3710397800 | −0.3710401148 | −0.1640207924 | −0.1640203771 | −0.1640207924 | −0.1274083878 | −0.1274052916 | −0.1274083878 | −0.1143523331 | −0.1143498579 | −0.1143523331 |
| 0.4 | −0.3674490406 | −0.3674484240 | −0.3674490406 | −0.4113609872 | −0.4113604709 | −0.4113609872 | −0.4686274278 | −0.4686234467 | −0.4686274278 | −0.5045580718 | −0.5045571454 | −0.5045580718 |
| 0.5 | −0.2491062827 | −0.2491056925 | −0.2491062827 | −0.5299909957 | −0.5299907413 | −0.5299909957 | −0.6617587214 | −0.6617495051 | −0.6617587214 | −0.7333491014 | −0.7333411590 | −0.7333491014 |
| 0.6 | −0.0529696032 | −0.0529690513 | −0.0529696032 | −0.4830883429 | −0.4830874057 | −0.4830883429 | −0.6478841060 | −0.6478823520 | −0.6478841060 | −0.7319533965 | −0.7319516228 | −0.7319533965 |
| 0.7 | 0.1597090640 | 0.1597095680 | 0.1597090640 | −0.2853192846 | −0.2853183896 | −0.2853192846 | −0.4316124096 | −0.4316096686 | −0.4316124096 | −0.5016198896 | −0.5016112951 | −0.5016198896 |
| 0.8 | 0.3225118550 | 0.3225127150 | 0.3225118550 | 0.0015528739 | 0.0015530060 | 0.0015528739 | −0.0805381275 | −0.0805377073 | −0.0805381275 | −0.1145268018 | −0.1145220385 | −0.1145268018 |
| 0.9 | 0.3845967472 | 0.3845974562 | 0.3845967472 | 0.2879400946 | 0.2879407366 | 0.2879400946 | 0.2956902333 | 0.2956919658 | 0.2956902333 | 0.3083647912 | 0.3083701102 | 0.3083647912 |
| 1 | 0.3265751202 | 0.3265760999 | 0.3265751202 | 0.4844059045 | 0.4844063129 | 0.4844059045 | 0.5795774012 | 0.5795861470 | 0.5795774012 | 0.6349664283 | 0.6349741110 | 0.6349664283 |
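As a quick check on how closely the methods agree, the tabulated values can be differenced directly. The sketch below copies the LTM and MS-RKM columns for the first order listed (1) from the table above and computes the pointwise absolute difference. The variable and function names are illustrative, and nothing here is taken from the authors' code.

```python
# Values copied from the order-1 columns of the comparison table (t = 0.1 ... 1.0).
T = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0]

LTM = [
    -0.0599275843, -0.2587200529, -0.3710401148, -0.3674490406, -0.2491062827,
    -0.0529696032, 0.1597090640, 0.3225118550, 0.3845967472, 0.3265751202,
]

MS_RKM = [
    -0.0599270127, -0.2587193814, -0.3710397800, -0.3674484240, -0.2491056925,
    -0.0529690513, 0.1597095680, 0.3225127150, 0.3845974562, 0.3265760999,
]


def absolute_differences(reference, candidate):
    """Pointwise |reference - candidate| for two equally long sequences."""
    return [abs(r - c) for r, c in zip(reference, candidate)]


if __name__ == "__main__":
    diffs = absolute_differences(LTM, MS_RKM)
    for t, d in zip(T, diffs):
        print(f"t={t:.1f}  |LTM - MS-RKM| = {d:.3e}")
    worst = max(diffs)
    print(f"largest difference: {worst:.3e} at t={T[diffs.index(worst)]:.1f}")
```

For these ten points the differences stay below 10^-6, with the largest at t = 1. The same helper can be applied to any other pair of columns in the table.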

| t | α = 1 | | | α = 0.98 | | | α = 0.96 | | | α = 0.94 | | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| | LTM | MS-RKM | DNN-LM | LTM | MS-RKM | DNN-LM | LTM | MS-RKM | DNN-LM | LTM | MS-RKM | DNN-LM |
| 0.1 | | | | | | | | | | | | |
| 0.2 | | | | | | | | | | | | |
| 0.3 | | | | | | | | | | | | |
| 0.4 | | | | | | | | | | | | |
| 0.5 | | | | | | | | | | | | |
| 0.6 | | | | | | | | | | | | |
| 0.7 | | | | | | | | | | | | |
| 0.8 | | | | | | | | | | | | |
| 0.9 | | | | | | | | | | | | |
| 1 | | | | | | | | | | | | |

| t | α = 1 | | α = 0.98 | | α = 0.96 | | α = 0.94 | |
|---|---|---|---|---|---|---|---|---|
| | MS-RKM | DNN-LM | MS-RKM | DNN-LM | MS-RKM | DNN-LM | MS-RKM | DNN-LM |
| 0.1 | | | | | | | | |
| 0.2 | | | | | | | | |
| 0.3 | | | | | | | | |
| 0.4 | | | | | | | | |
| 0.5 | | | | | | | | |
| 0.6 | | | | | | | | |
| 0.7 | | | | | | | | |
| 0.8 | | | | | | | | |
| 0.9 | | | | | | | | |
| 1 | | | | | | | | |

| t | α = 1 | | α = 0.9 | | α = 0.8 | | α = 0.7 | |
|---|---|---|---|---|---|---|---|---|
| | MS-RKM | DNN-LM | MS-RKM | DNN-LM | MS-RKM | DNN-LM | MS-RKM | DNN-LM |
| 0.1 | | | | | | | | |
| 0.2 | | | | | | | | |
| 0.3 | | | | | | | | |
| 0.4 | | | | | | | | |
| 0.5 | | | | | | | | |
| 0.6 | | | | | | | | |
| 0.7 | | | | | | | | |
| 0.8 | | | | | | | | |
| 0.9 | | | | | | | | |
| 1 | | | | | | | | |

| Parameters | | | Cases | Performance | | | Analysis | | | |
|---|---|---|---|---|---|---|---|---|---|---|
| Example | Hidden neurons | Time delay | α | Training | Validation | Testing | Gradient | Regression | Time (s) | Iterations |
| 1 | 10 | 2 | 1 | | | | | 1 | 0.03 | 753 |
| | 10 | 2 | 0.98 | | | | | 1 | 0.04 | 1000 |
| | 10 | 2 | 0.96 | | | | | 1 | 0.04 | 1000 |
| | 10 | 2 | 0.94 | | | | | 1 | 0.05 | 735 |
| | 12 | 3 | 1 | | | | | 1 | 0.05 | 675 |
| | 12 | 3 | 0.98 | | | | | 1 | 0.05 | 744 |
| | 12 | 3 | 0.96 | | | | | 1 | 0.05 | 799 |
| | 12 | 3 | 0.94 | | | | | 1 | 0.04 | 1000 |
| 2 | 10 | 2 | 1 | | | | | 1 | 0.0005 | 49 |
| | 10 | 2 | 0.98 | | | | | 1 | 0.004 | 98 |
| | 10 | 2 | 0.96 | | | | | 1 | 0.0005 | 60 |
| | 10 | 2 | 0.94 | | | | | 1 | 0.03 | 148 |
| | 12 | 3 | 1 | | | | | 1 | 0.0005 | 35 |
| | 12 | 3 | 0.98 | | | | | 1 | 0.005 | 46 |
| | 12 | 3 | 0.96 | | | | | 1 | 0.05 | 223 |
| | 12 | 3 | 0.94 | | | | | 1 | 0.02 | 571 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Alarfaj, F.K.; Khan, N.A.; Sulaiman, M.; Alomair, A.M. Application of a Machine Learning Algorithm for Evaluation of Stiff Fractional Modeling of Polytropic Gas Spheres and Electric Circuits. Symmetry 2022, 14, 2482. https://doi.org/10.3390/sym14122482
