3.1. Time Estimation

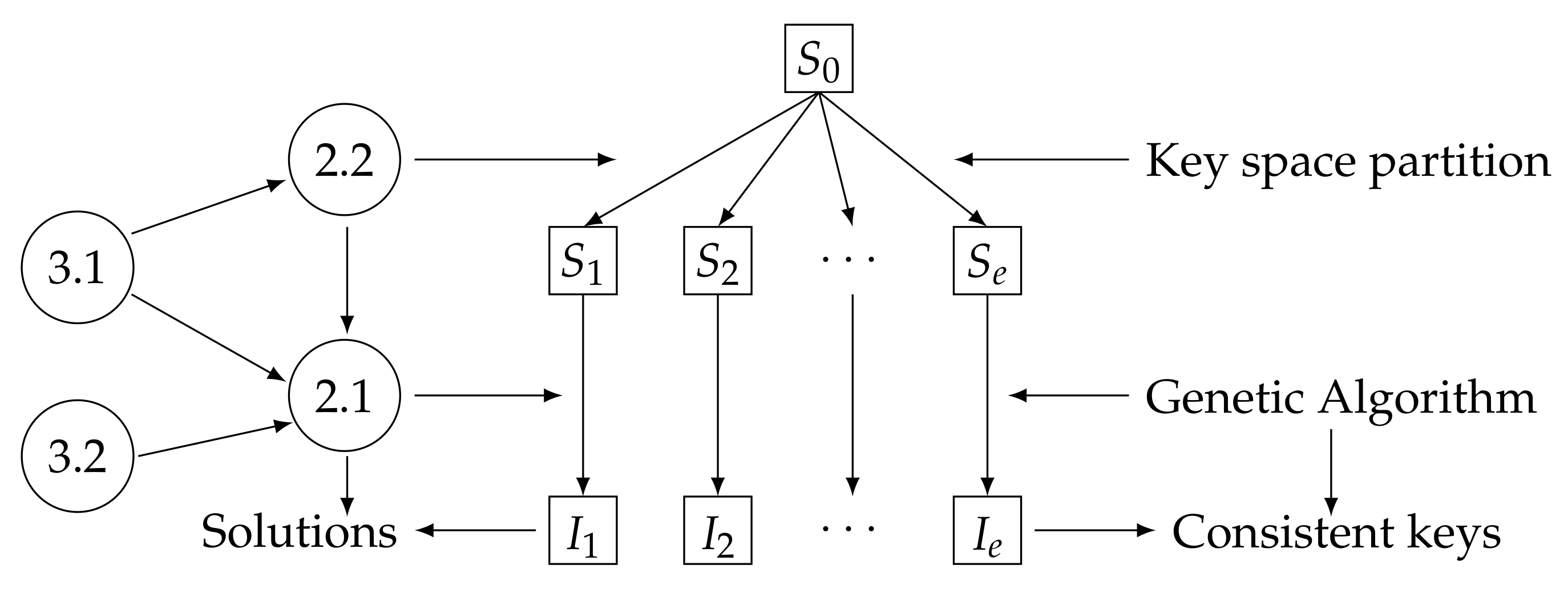

In GAs, less complex operations such as mutation and crossover are performed within each class, where the elements have block length

or

depending on how the space is partitioned. However, despite the variation of these two parameters, the fitness function, which is the most expensive operation in the GA, is evaluated using (8), i.e., with the complete key of length

, and not with the part of it found in the class. This means that a variation in the number of elements in a class does not affect the fitness function’s cost. Moreover, if all the parameters remain the same, the GA’s time in each generation must be quite similar, even if

varies. To check this, experiments were run on a PC with an Intel(R) Core(TM) i3-4160 CPU @ 3.60 GHz (four CPUs) and 4 GB of RAM. AES(t) encryption was used, a parametric version of AES, where

and also AES(8) = AES (see [18,19]). The experiment consisted of executing the GA with the BBM methodology and measuring the time (in minutes) that a generation took for different values of

(keeping the other parameters fixed), then verifying whether these data could be used to forecast the time it would take for n generations. The size of the population was

in all cases.

Table 1, Table 2 and Table 3 summarize the results corresponding to AES(3), AES(4), and AES(7), respectively. The first column has the different values that were given to

. The second column is the average time

that was obtained for a generation in 10 executions of each

. The general mean for all the

values is

minutes approximately in Table 1,

in Table 2, and

in Table 3. The third column represents the number of generations (

). The real time that the algorithm takes,

, appears in the fourth column. The fifth column is the estimated time,

, that the run should take, the calculation of which is based on:

Finally, the last column is the error of the prediction. With these experiments, we wanted to check whether, for a specific value of and having generations, the approximate time (t) that the GA would take to complete those generations was

With one generation, or very few, the average time per generation was slightly longer; it then decreased and tended to stabilize at a limit as more iterations were performed. This was due to the probabilistic functions involved in the GA and to the set of operations that randomly create the initial population. Therefore, the criterion for calculating the average time was to let the GA run to completion over a certain number of generations, either because it found the key or because it reached the last iteration without finding it, and then calculate the average. Consequently, calculating the average over a few generations, or over just one, would give longer times; however, doing so would be valid if the intention were to overestimate the time that the algorithm consumes.
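The estimation procedure described above can be sketched as follows. This is a minimal illustration, assuming that the estimated time is the general mean of the per-generation times multiplied by the number of generations, and that the prediction error is the absolute difference between the real and estimated times. The function names and the sample values are hypothetical and are not taken from the tables.

```python
from statistics import mean


def mean_generation_time(per_generation_minutes):
    """General mean of the average per-generation times (in minutes)
    measured for several settings of the partition parameter."""
    return mean(per_generation_minutes)


def estimate_total_time(mean_minutes, generations):
    """Linear forecast: mean time per generation times number of generations."""
    return mean_minutes * generations


def prediction_error(real_minutes, estimated_minutes):
    """Assumed error measure: absolute difference, in minutes."""
    return abs(real_minutes - estimated_minutes)


# Illustrative values only (hypothetical measurements).
samples = [0.50, 0.52, 0.48]
t_mean = mean_generation_time(samples)
t_est = estimate_total_time(t_mean, generations=100)
err = prediction_error(real_minutes=51.0, estimated_minutes=t_est)
```

Because the first generations tend to be slower, a mean computed from very short runs would bias this forecast upward, which matches the remark above about overestimating the time.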

In the case of AES(7) (Table 3), we only experimented with the values 17 and 18 of

, since considering all the previous (or higher) values would take a considerably longer time (given the greater strength of AES(7)).

Similar results were obtained if more values of

were chosen to calculate

. For example, using a laptop with an Intel(R) Celeron(R) CPU N3050 @ 1.60 GHz (two CPUs) and 4 GB of RAM, and going through all the values of

from 10 to 48 (AES(3) key length),

was obtained. Now, for

, we had

and

In another test:

,

, then

. Note that the PC used in this case had different characteristics and less computational capacity than the one used for the experiments in Table 1, Table 2 and Table 3. Notably, the results were as expected under these conditions as well.

In a similar way, the GA was executed with the TBB methodology for the search in , for values of equal to those of and different generations (). It was observed that the time estimates behaved in a similar way to the results presented previously for the BBM methodology. Note that in the AES(t) family of ciphers, the length of the key increases from 48 for AES(3) to 128 for AES(8); however, regardless of the key length, the same behavior was seen in all of them.

These experiments also show another application of this study on time estimation. In the GA scheme with the BBM methodology, the total number of generations (iterations) to perform for a given value of

is:

Taking

, by using

, then we can do an a priori estimation for a given value of

, of the total time it will take the GA to perform all the generations or a certain desired percent of them. For example, in AES(3), for

, in Expression (10), we have

; now, since

in Table 1, the approximate time that the GA will consume to perform 655 generations is

, as can be seen in the table. Another example can be seen in Table 2, also for

.

On the other hand, supposing we have an available time

, to carry out the attack with this model, we may use (9) and (10) to compute an approximate value of

, which implies doing the corresponding partition of the space and computing the number of generations to perform for this time

and the value of

. In this sense, doing

in (9), we have:

We remark that the above is valid in the TBB methodology, only that is used instead of .
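The inverse use of the estimate, starting from an available time, can be sketched as below. This assumes the same linear model (total time equals mean time per generation times number of generations), so the number of affordable generations is the available time divided by the mean, rounded down. The function name is hypothetical; mapping the resulting generation count back to a partition parameter requires Expression (10), which is not reproduced here.

```python
import math


def generations_within(available_minutes, mean_minutes_per_generation):
    """Largest whole number of generations that fits in the available time,
    under the assumed linear time model."""
    if mean_minutes_per_generation <= 0:
        raise ValueError("mean time per generation must be positive")
    return math.floor(available_minutes / mean_minutes_per_generation)


# Illustrative values only: one hour available, half a minute per generation.
budget_generations = generations_within(60, 0.5)
```

Rounding down keeps the plan within the time budget; the result is a starting point for choosing the partition, not an exact bound.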

As can be observed, the results on the estimation of time were favorable. In this sense, the following points can be summarized:

Taking into account the estimation of time

and its observed closeness to the real value

, a number of generations to be carried out in an available or desired time can be estimated (using Expression (9)), which can be taken as a starting point for the proper choice of

, or

in

(see Section 2). In this way, it is possible to adapt the size of the search space (to choose a proper value of

using (11)) to the number of generations that, according to the estimate, can be executed in a given time.

The time could be used to perform the time estimation of its own , but as can be seen in the tables, sometimes, it makes predictions with minor errors and other times greater than with . Another drawback is that it cannot be used for other . On the contrary, the main advantage of using is that it can be calculated for some sparse values of and be used to estimate the time even with values of this parameter whose has not been calculated.

3.2. Proposal of Other Fitness Functions

In the context of the BBM and TBB methodologies used in this work with the GA, this section studies which fitness functions give a better response, in the sense that consistent keys are obtained as solutions in a greater percentage of runs. Let E be a block cipher with plaintext and ciphertext of length n, defined as in Expression (7), T a plaintext, K a key, and C the corresponding ciphertext, that is

. Let:

be the decryption function of E, such that

. Then, the fitness function used so far, which is based on the Hamming distance

, for a certain individual X of the population, is:

which measures the closeness between the ciphertext C and the text obtained from encrypting T with the candidate key X (see [16]). A similar function is the one that measures the closeness between plaintexts:

Another function that follows the idea of comparing texts in binary with

is the weighting of

and

. Let

, such that

, then this function would be defined as follows:

It is interesting to note that is more time consuming than each function separately, but the idea is to be more efficient in searching for the key.
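The three Hamming-based functions discussed above (closeness of ciphertexts, closeness of plaintexts, and their weighting) can be sketched as follows. This is only an illustration under stated assumptions: `toy_encrypt` and `toy_decrypt` are hypothetical 8-bit stand-ins and are not AES(t); the closeness is assumed to be normalized as (n − d_H)/n; and the two weights are assumed to be non-negative and to sum to one.

```python
N_BITS = 8
MASK = (1 << N_BITS) - 1


def toy_encrypt(key: int, text: int) -> int:
    """Hypothetical invertible toy cipher on N_BITS-bit blocks (not AES(t))."""
    return ((text ^ key) + key) & MASK


def toy_decrypt(key: int, cipher: int) -> int:
    """Inverse of toy_encrypt."""
    return ((cipher - key) & MASK) ^ key


def closeness(a: int, b: int) -> float:
    """Assumed normalization: fraction of equal bits, (n - d_H) / n."""
    return (N_BITS - bin(a ^ b).count("1")) / N_BITS


def fitness_cipher(x: int, text: int, cipher: int) -> float:
    """Closeness between C and the encryption of T under candidate key x."""
    return closeness(cipher, toy_encrypt(x, text))


def fitness_plain(x: int, text: int, cipher: int) -> float:
    """Closeness between T and the decryption of C under candidate key x."""
    return closeness(text, toy_decrypt(x, cipher))


def fitness_weighted(x, text, cipher, alpha=0.5, beta=0.5):
    """Weighted combination; alpha + beta = 1 is an assumption of this sketch."""
    if alpha < 0 or beta < 0 or abs(alpha + beta - 1.0) > 1e-12:
        raise ValueError("weights must be non-negative and sum to 1")
    return (alpha * fitness_cipher(x, text, cipher)
            + beta * fitness_plain(x, text, cipher))


# Illustrative values only.
key, text = 0b10110010, 0b01101100
cipher = toy_encrypt(key, text)
scores = [fitness_weighted(x, text, cipher) for x in range(1 << N_BITS)]
```

The weighted function calls the cipher twice per individual (one encryption and one decryption), which is why it costs more per evaluation than either function alone.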

The fitness functions proposed at this point are based on measuring the closeness of the plaintext and ciphertext, but in decimals. Let

be the decimal value of the binary block Y. The first function is defined as follows:

Note that if the encrypted texts are equal,

, then

, which implies that

, i.e., if they are equal, then the fitness function takes its highest value. Conversely, the greatest difference occurs when they are as far apart as possible, i.e.,

and

, and therefore,

. The following is a weighting of the functions

and

,

Both functions measure the closeness between ciphertexts. This is not redundant since, for example, if

C and

differ by two bits, the function

will always have the same value no matter which two bits they are. In contrast, the value of

does depend on whether the differing bits are more or less significant, since the decimal values of the blocks then differ. The following function measures the closeness in decimals of plaintexts:

Finally, the functions

,

, and

are defined with respect to the previous ones as follows,

where

and

. This guarantees that in general, each

.

The idea behind the introduction of these functions lies mainly in the fact that there are changes that the Hamming distance does not detect, as opposed to the decimal distance. For example, suppose the key is , and is the possible key, both in binary. It is clear that the Hamming distance is five, and the distance in decimals is 62 since and ; the fitness functions take the values for the binary version and for the decimal version. Now, if , the binary fitness function would still be 0.17 since there are still five different bits; on the other hand, , so the decimal fitness function takes the value . Finally, if we take , then the distance in binary keeps the same value, but the decimal distance changes again, and so does the fitness function, which takes the value 0.49. This shows that the decimal distance always detects a change in b, unlike the binary distance, which remains the same for certain changes.
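The contrast between the two distances can be reproduced with a short sketch. It assumes the normalizations (n − d_H)/n for the binary version and (2^n − 1 − |a − b|)/(2^n − 1) for the decimal version, which are consistent with the values quoted above (for instance, 0.17 for five differing bits out of six) but are not copied from the original equations. The function names and the 6-bit blocks below are hypothetical and are not the blocks of the example in the text.

```python
def hamming_fitness(a: str, b: str) -> float:
    """Binary closeness of two equal-length bit strings: (n - d_H) / n."""
    if len(a) != len(b):
        raise ValueError("blocks must have the same length")
    n = len(a)
    d = sum(x != y for x, y in zip(a, b))
    return (n - d) / n


def decimal_fitness(a: str, b: str) -> float:
    """Decimal closeness: (2^n - 1 - |a_d - b_d|) / (2^n - 1)."""
    if len(a) != len(b):
        raise ValueError("blocks must have the same length")
    top = 2 ** len(a) - 1
    return (top - abs(int(a, 2) - int(b, 2))) / top


key = "111111"
# Each candidate differs from the key in exactly five bits.
for cand in ("000001", "000010", "100000"):
    print(cand,
          round(hamming_fitness(key, cand), 2),
          round(decimal_fitness(key, cand), 2))
# 000001 0.17 0.02
# 000010 0.17 0.03
# 100000 0.17 0.51
```

The binary value stays at 0.17 for all three candidates, while the decimal value moves with the position of the single matching bit.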

To compare these functions, attack experiments on AES(3) were carried out for the two methodologies for partitioning the key space. The main goal is to find the key, not to analyze the percentage of matching components between keys, for which the fitness functions with the Hamming distance would be more useful. A PC with an Intel(R) Core(TM) i3-4160 CPU @ 3.60 GHz (four CPUs) and 4 GB of RAM was used. For the results, we took into account the average time it took to find the key, the average number of generations in which it was found, the percentage of failures (over the many attacks carried out), and a parameter called efficiency, , which is a weighting of the three previous criteria.

Definition 1 (Fitness functions’ efficiency)

. Let , , , , the time it takes the GA to find the key with , on an average for generations, and the percent of attempts in that the GA did not find the key with . Then, the efficiency, , of the fitness function with respect to the other functions, , is defined as, Note that the number of generations and the failure percentage are inversely proportional to the efficiency

since the higher these parameters are, the lower the efficiency of the fitness function.

Table 4 presents the results of the comparison of the different fitness functions for the BBM space partitioning methodology, in this case

. We took

and each

. To calculate

the values

,

and

were taken for

,

, and

, respectively. Sorting

with respect to efficiency, the first five would be

,

,

,

, and

. It is noteworthy that of the first three that use only the Hamming distance, only

appears.

In the comparison of these functions for the TBB methodology of partitioning the key space and searching in

, the experiment results are presented in Table 5. In this case, ordering the functions by their efficiency, the first five would be

,

,

,

, and

. Again, a single function appears from the first three, in this case

, and the others repeat. Note in particular that

(the weight of the functions in decimals) is better than

(the weight of the functions in binary) in each of the parameters measured in both methodologies.

It is interesting to see what happens if the values of the weights are changed in the functions , , and , which combine the functions with distance in decimals and binary, keeping fixed , , and for the calculation of . In this sense, in the following group of experiments, the weights were assigned as follows for each methodology: the values were 0.2 and 0.8; first, in each of these three functions, the subfunctions in binary were favored, from which , (in , ), , and (in ; note that this function has two subfunctions with the distance in binary and two in decimals); in this case, we identified the functions as , , and ; then, we changed the order of these same weights, and the largest were given to the subfunctions whose distance was in decimals; and we identified the functions for this case as , , and .

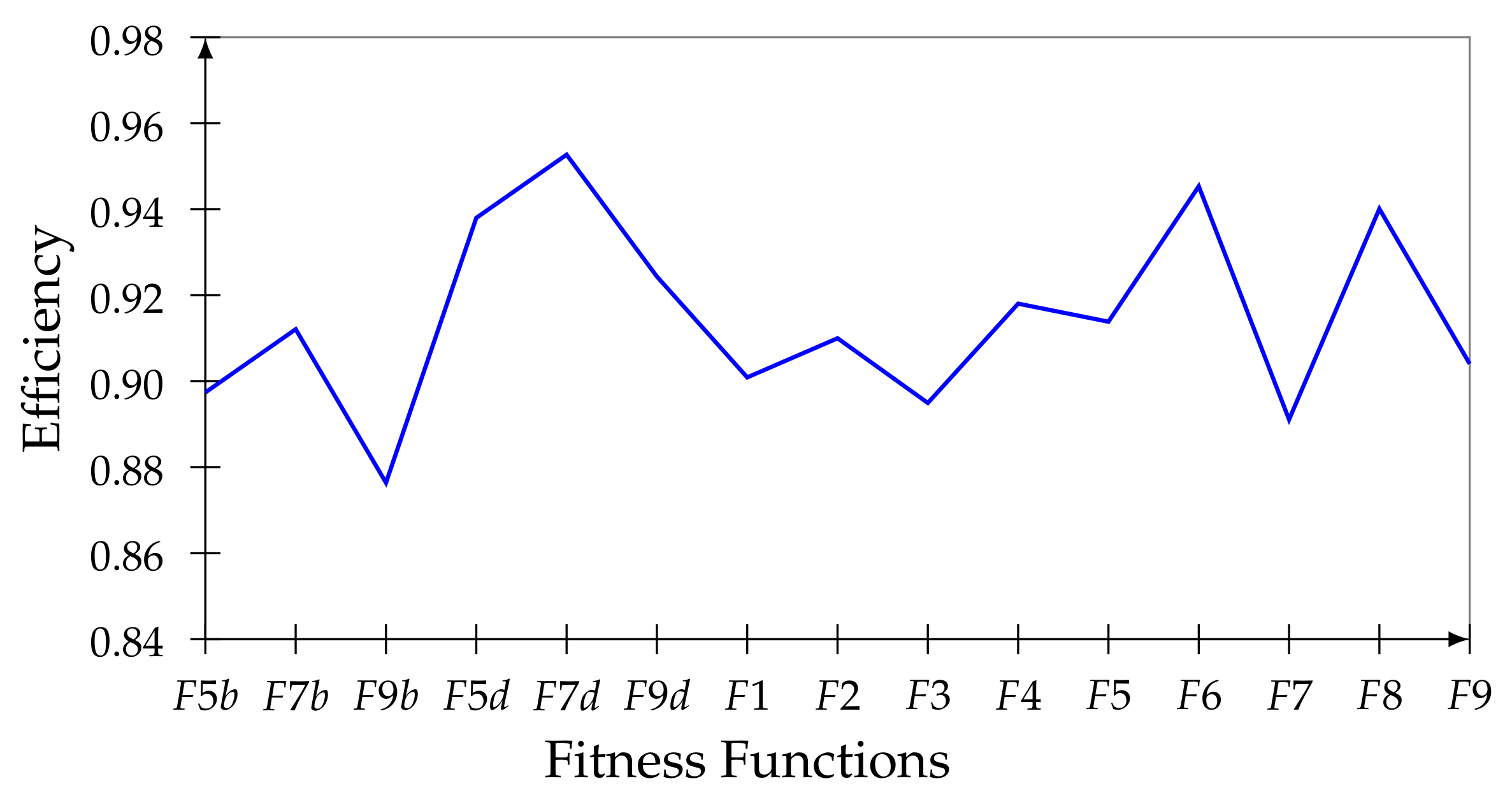

For the BBM methodology, the results are presented in Table 6. Note that according to

, the first is

, followed by

and

.

In Figure 2, these results are compared, according to

, with those of Table 4, also including the values of

,

, and

. Sorting the functions according to their efficiency, the first five are

,

,

,

, and

.

Notice how the best results prevail in the functions with the distance in decimals. In this sense, and (now as and ) are incorporated into the first ones and three of those that already were in this group in the above experiments, (as , , and .

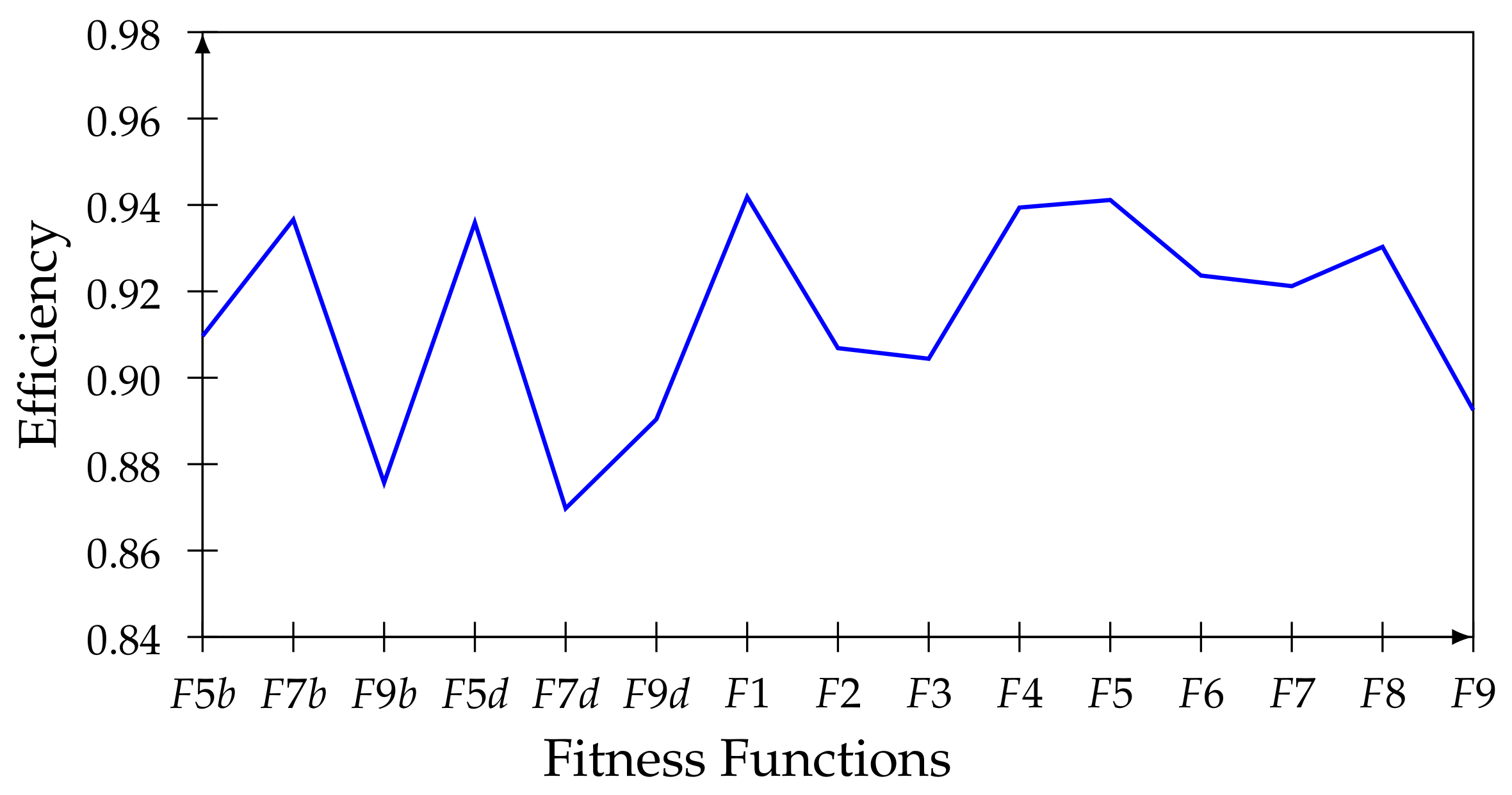

In the case of the TBB methodology, the results are presented in Table 7. According to efficiency, the first is

, followed by

and

.

In Figure 3, these results are compared with those of all the functions of Table 5. The first five are now

,

,

,

, and

; notice how the functions that use the distance in decimals, alone or combined with the binary distance, prevail. The experiments confirm the better overall behavior of the functions with the decimal distance, particularly in the BBM methodology, where the keys are grouped into intervals according to their decimal position in the space, in contrast to the other methodology, where the keys of each class are spread throughout the space.

Note that when comparing Figure 2 and Figure 3, the values of

that are in the tables are not directly compared; rather, it is necessary to recalculate

taking into account that there are 15 functions. That is,

where

,

, and,

.