Entity Relation Extraction Based on Entity Indicators

Abstract

1. Introduction

1. Entity indicators are designed to support relation extraction. Several types of entity indicators are proposed in this paper. These indicators are effective for capturing the semantic and structural information of a relation instance.

2. The entity indicators are evaluated on three public corpora (ACE Chinese, ACE English, and CLTC), providing a systematic analysis of how these indicators support relation extraction. A performance comparison shows that our method considerably outperforms all compared works.

2. Related Works

3. Methodology

3.1. Entity Indicators

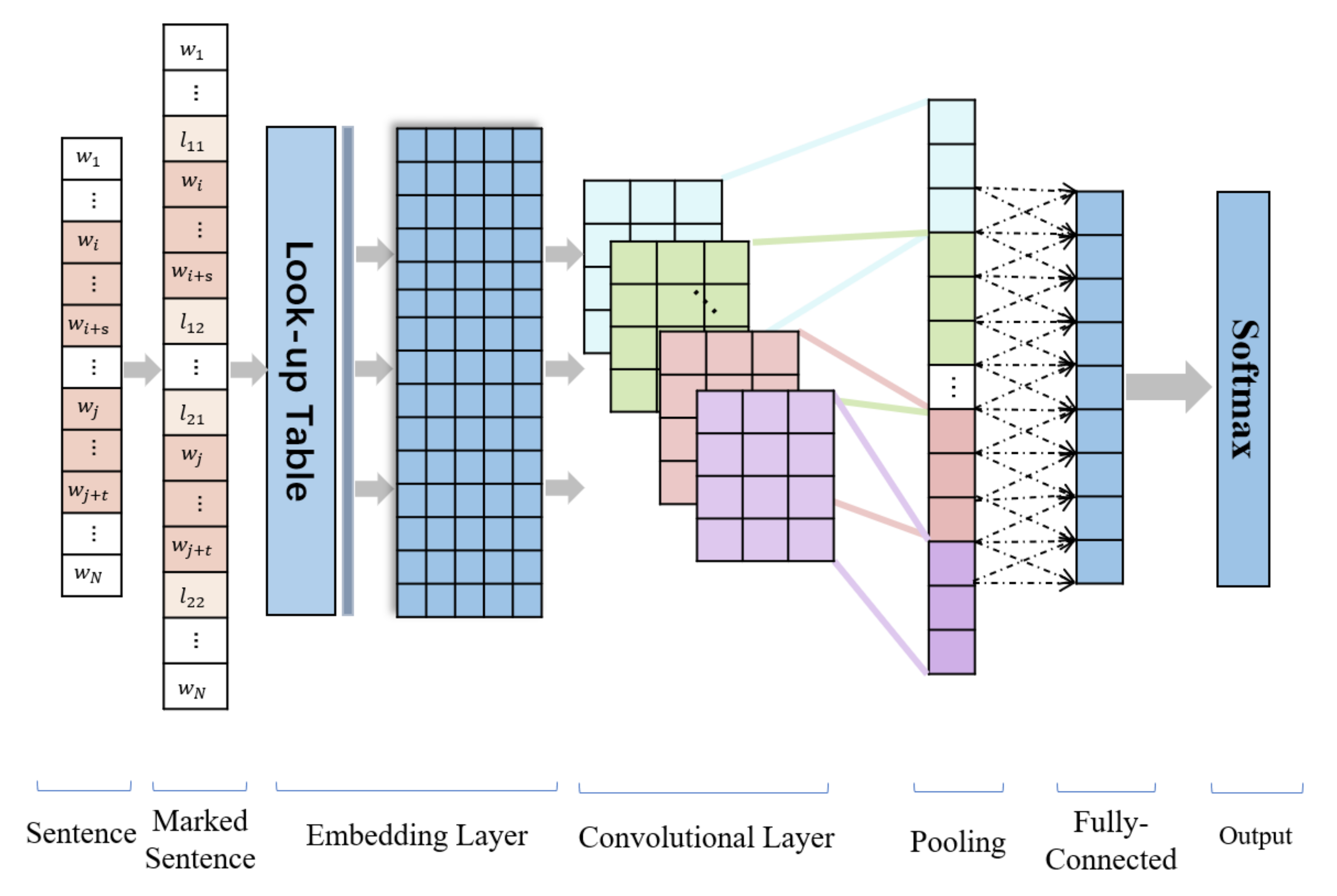

3.2. Model

4. Experiments

4.1. Performance of Entity Indicators

4.2. Comparison with Other Strategies

4.3. Evaluation on the Chinese Corpus

4.4. Evaluation on the English Corpus

5. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

1. Hendrickx, I.; Kim, S.N.; Kozareva, Z.; Nakov, P.; Séaghdha, D.O.; Padó, S.; Pennacchiotti, M.; Romano, L.; Szpakowicz, S. SemEval-2010 Task 8: Multi-way classification of semantic relations between pairs of nominals. In Proceedings of the 5th International Workshop on Semantic Evaluation, ACL, Uppsala, Sweden, 15–16 July 2010; pp. 33–38.

2. Agosti, M.; Nunzio, G.M.D.; Marchesin, S.; Silvello, G. A relation extraction approach for clinical decision support. arXiv 2019, arXiv:1905.01257.

3. Zheng, S.; Dharssi, S.; Wu, M.; Li, J.; Lu, Z. Text mining for drug discovery. In Bioinformatics and Drug Discovery; Springer: Berlin/Heidelberg, Germany, 2019; pp. 231–252.

4. Jabbari, A.; Sauvage, O.; Zeine, H.; Chergui, H. A French corpus and annotation schema for named entity recognition and relation extraction of financial news. In Proceedings of the LREC ’20, Marseille, France, 11–16 May 2020; pp. 2293–2299.

5. Macdonald, E.; Barbosa, D. Neural relation extraction on Wikipedia tables for augmenting knowledge graphs. In Proceedings of the CIKM ’20, Galway, Ireland, 17–20 August 2020; pp. 2133–2136.

6. Li, X.; Yin, F.; Sun, Z.; Li, X.; Yuan, A.; Chai, D.; Zhou, M.; Li, J. Entity-relation extraction as multi-turn question answering. arXiv 2019, arXiv:1905.05529.

7. Han, R.; Liang, M.; Alhafni, B.; Peng, N. Contextualized word embeddings enhanced event temporal relation extraction for story understanding. arXiv 2019, arXiv:1904.11942.

8. Liu, K. A survey on neural relation extraction. Sci. China Technol. Sci. 2020, 63, 1971–1989.

9. Liu, C.Y.; Sun, W.B.; Chao, W.H.; Che, W.X. Convolution neural network for relation extraction. In Proceedings of the ADMA 2013: Advanced Data Mining and Applications, Hangzhou, China, 14–16 December 2013.

10. Li, Z.; Yang, J.; Gou, X.; Qi, X. Recurrent neural networks with segment attention and entity description for relation extraction from clinical texts. Artif. Intell. Med. 2019, 97, 9–18.

11. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention is all you need. In Proceedings of the Advances in Neural Information Processing Systems, Long Beach, CA, USA, 4–9 December 2017; pp. 5998–6008.

12. Zeng, D.; Liu, K.; Chen, Y.; Zhao, J. Distant supervision for relation extraction via piecewise convolutional neural networks. In Proceedings of the Conference on Empirical Methods in Natural Language Processing, Lisbon, Portugal, 17–21 September 2015.

13. Chen, Y.; Wang, K.; Yang, W.; Qing, Y.; Huang, R.; Chen, P. A multi-channel deep neural network for relation extraction. IEEE Access 2020, 8, 13195–13203.

14. Xu, Y.; Mou, L.; Li, G.; Chen, Y.; Peng, H.; Jin, Z. Classifying relations via long short term memory networks along shortest dependency paths. In Proceedings of the EMNLP 2015, Lisbon, Portugal, 17–21 September 2015; pp. 1785–1794.

15. Zhang, C.; Xu, W.; Ma, Z.; Gao, S.; Guo, J. Construction of semantic bootstrapping models for relation extraction. Knowl. Based Syst. 2015, 83, 128–137.

16. Zheng, S.; Xu, J.; Zhou, P.; Bao, H.; Qi, Z.; Xu, B. A neural network framework for relation extraction: Learning entity semantic and relation pattern. Knowl. Based Syst. 2016, 114, 12–23.

17. Soares, L.B.; FitzGerald, N.; Ling, J.; Kwiatkowski, T. Matching the blanks: Distributional similarity for relation learning. arXiv 2019, arXiv:1906.03158.

18. Zhang, D.; Wang, D. Relation classification via recurrent neural network. arXiv 2015, arXiv:1508.01006.

19. Zhong, Z.; Chen, D. A frustratingly easy approach for joint entity and relation extraction. arXiv 2020, arXiv:2010.12812.

20. Kambhatla, N. Combining lexical, syntactic and semantic features with maximum entropy models for extracting relations. In Proceedings of the ACL 2004, Barcelona, Spain, 21–26 July 2004.

21. Zelenko, D.; Aone, C.; Richardella, A. Kernel methods for relation extraction. J. Mach. Learn. Res. 2003, 3, 1083–1106.

22. Noble, W.S. What is a support vector machine? Nat. Biotechnol. 2006, 24, 1565–1567.

23. Dashtipour, K.; Gogate, M.; Adeel, A.; Algarafi, A.; Howard, N.; Hussain, A. Persian named entity recognition. In Proceedings of the 2017 IEEE 16th International Conference on Cognitive Informatics & Cognitive Computing (ICCI*CC), Oxford, UK, 26–28 July 2017; pp. 79–83.

24. Minard, A.-L.; Ligozat, A.-L.; Grau, B. Multi-class SVM for relation extraction from clinical reports. In Proceedings of the International Conference Recent Advances in Natural Language Processing, Varna, Bulgaria, 2–4 September 2011.

25. Chen, Y.; Zheng, Q.; Chen, P. Feature assembly method for extracting relations in Chinese. Artif. Intell. 2015, 228, 179–194.

26. Liu, D.; Hu, Y.; Qian, L. Exploiting lexical semantic resource for tree kernel-based Chinese relation extraction. In Proceedings of the NLPCC, Beijing, China, 31 October–5 November 2012; pp. 213–224.

27. Panyam, N.C.; Verspoor, K.; Cohn, T.; Kotagiri, R. ASM kernel: Graph kernel using approximate subgraph matching for relation extraction. In Proceedings of the ALTA 2016, Perth, Australia, 21–28 May 2016; pp. 65–73.

28. Leng, J.; Jiang, P. A deep learning approach for relationship extraction from interaction context in social manufacturing paradigm. Knowl. Based Syst. 2016, 100, 188–199.

29. Zeng, D.; Liu, K.; Lai, S.; Zhou, G.; Zhao, J. Relation classification via convolutional deep neural network. In Proceedings of the COLING’14, Dublin, Ireland, 23–29 August 2014.

30. Li, Y.; Nee, M.; Li, G.; Chang, V. Effective piecewise CNN with attention mechanism for distant supervision on relation extraction task. In Proceedings of the 5th International Conference on Complexity, Future Information Systems and Risk 2020 (COMPLEXIS 2020), Prague, Czech Republic, 8–9 May 2020.

31. Zhang, C.; Zheng, Y.; Guo, B.; Li, C.; Liao, N. SCN: A novel shape classification algorithm based on convolutional neural network. Symmetry 2021, 13, 499.

32. Wang, H.; Qin, K.; Lu, G.; Luo, G.; Liu, G. Direction-sensitive relation extraction using Bi-SDP attention model. Knowl. Based Syst. 2020, 198, 105928.

33. Zhou, P.; Shi, W.; Tian, J.; Qi, Z.; Li, B.; Hao, H.; Xu, B. Attention-based bidirectional long short-term memory networks for relation classification. In Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics, Berlin, Germany, 7–12 August 2016; Volume 2, pp. 207–212.

34. Lee, J.; Seo, S.; Choi, Y.S. Semantic relation classification via bidirectional LSTM networks with entity-aware attention using latent entity typing. Symmetry 2019, 11, 785.

35. Zhao, L.; Xu, W.; Gao, S.; Guo, J. Cross-sentence n-ary relation classification using LSTMs on graph and sequence structures. Knowl. Based Syst. 2020, 207, 106266.

36. Devlin, J.; Chang, M.-W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. arXiv 2018, arXiv:1810.04805.

37. Huang, W.; Mao, Y.; Yang, Z.; Zhu, L.; Long, J. Relation classification via knowledge graph enhanced transformer encoder. Knowl. Based Syst. 2020, 206, 106321.

38. McDonough, K.; Moncla, L.; van de Camp, M. Named entity recognition goes to Old Regime France: Geographic text analysis for early modern French corpora. Int. J. Geogr. Inf. Sci. 2019, 33, 2498–2522.

39. Isozaki, H. Japanese named entity recognition based on a simple rule generator and decision tree learning. In Proceedings of the 39th Annual Meeting of the Association for Computational Linguistics, Toulouse, France, 6–11 July 2001; pp. 314–321.

40. Weegar, R.; Pérez, A.; Casillas, A.; Oronoz, M. Deep medical entity recognition for Swedish and Spanish. In Proceedings of the 2018 IEEE International Conference on Bioinformatics and Biomedicine (BIBM), Madrid, Spain, 3–6 December 2018; pp. 1595–1601.

41. Dong, C.; Zhang, J.; Zong, C.; Hattori, M.; Di, H. Character-based LSTM-CRF with radical-level features for Chinese named entity recognition. In Natural Language Understanding and Intelligent Applications; Springer: Berlin/Heidelberg, Germany, 2016; pp. 239–250.

42. Walker, C.; Strassel, S.; Medero, J.; Maeda, K. ACE 2005 multilingual training corpus. Linguist. Data Consort. Phila. 2006, 57, 45.

43. Xu, J.; Wen, J.; Sun, X.; Su, Q. A discourse-level named entity recognition and relation extraction dataset for Chinese literature text. arXiv 2017, arXiv:1711.07010.

44. Wen, J.; Sun, X.; Ren, X.; Su, Q. Structure regularized neural network for entity relation classification for Chinese literature text. arXiv 2018, arXiv:1803.05662.

45. Liu, D.; Zhao, Z.; Hu, Y.; Qian, L. Chinese semantic relation extraction based on syntax and entity semantic tree. J. Chin. Inf. Process. 2010, 24, 11–21.

46. Chen, Y.; Zheng, Q.; Chen, P. A set space model for feature calculus. IEEE Intell. Syst. 2017, 32, 36–42.

47. Li, Z.; Ding, N.; Liu, Z.; Zheng, H.; Shen, Y. Chinese relation extraction with multi-grained information and external linguistic knowledge. In Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, Florence, Italy, 28 July–2 August 2019; pp. 4377–4386.

48. Chen, Y.; Wang, G.; Zheng, Q.; Qin, Y.; Huang, R.; Chen, P. A set space model to capture structural information of a sentence. IEEE Access 2019, 7, 142515–142530.

49. Zhang, P.; Li, W.; Hou, Y.; Song, D. Developing position structure-based framework for Chinese entity relation extraction. ACM Trans. Asian Lang. Inf. Process. 2011, 10.

50. Socher, R.; Pennington, J.; Huang, E.H.; Ng, A.Y.; Manning, C.D. Semi-supervised recursive autoencoders for predicting sentiment distributions. In Proceedings of the 2011 Conference on Empirical Methods in Natural Language Processing, Scotland, UK, 27–31 July 2011; pp. 151–161.

51. Santos, C.N.d.; Xiang, B.; Zhou, B. Classifying relations by ranking with convolutional neural networks. arXiv 2015, arXiv:1504.06580.

52. Liu, Y.; Wei, F.; Li, S.; Ji, H.; Zhou, M.; Wang, H. A dependency-based neural network for relation classification. arXiv 2015, arXiv:1507.04646.

53. Cai, R.; Zhang, X.; Wang, H. Bidirectional recurrent convolutional neural network for relation classification. In Proceedings of the 54th Annual Meeting of the Association for Computational Linguistics, Berlin, Germany, 7–12 August 2016; Volume 1, pp. 756–765.

54. Zhang, J.; Hao, K.; Tang, X.s.; Cai, X.; Xiao, Y.; Wang, T. A multi-feature fusion model for Chinese relation extraction with entity sense. Knowl. Based Syst. 2020, 206, 106348.

55. Gormley, M.R.; Yu, M.; Dredze, M. Improved relation extraction with feature-rich compositional embedding models. arXiv 2015, arXiv:1505.02419.

56. Zhou, G.; Su, J.; Zhang, J.; Zhang, M. Exploring various knowledge in relation extraction. In Proceedings of the 43rd Annual Meeting of the Association for Computational Linguistics (ACL’05), Ann Arbor, MI, USA, 25–30 June 2005; pp. 427–434.

| TYPE | SUBTYPE | Abbreviation | Examples |
|---|---|---|---|
| Position Indicators | Dull Positions | P_D | |
| | Two-side Positions | P_TS | |
| | Two-side-ARG Positions | P_TSA | |
| Semantic Indicators | Two-side Types | S_TS_T | |
| | Two-side Subtypes | S_TS_S | |
| | Two-side-ARG Types | S_TSA_T | |
| | Two-side-ARG Subtypes | S_TSA_S | |
| Compound Indicators | Dual-types-side-ARG | C_DTSA | |
| | Type-POS-side-ARG | C_PTSA | |
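The table names the indicator variants but does not give their concrete token form. The Python sketch below shows one plausible way to realize several of them by inserting marker tokens around the two argument spans of a relation instance. Everything concrete here is an assumption rather than the paper's definition: the marker strings (`<e>`, `<e1>`, `<e1:PER>`, and so on), the reading of "Dull" as a single undifferentiated marker, the reading of "ARG" as distinguishing the first and second relation arguments, and the helper names `indicator_pair` and `insert_indicators` are all hypothetical. The subtype variants would presumably work the same way with an entity subtype in place of the type; the compound (C_*) variants are omitted because their composition is not described here.

```python
from typing import List, Optional, Tuple

Span = Tuple[int, int]  # (start, end) token offsets; end is exclusive


def indicator_pair(scheme: str, arg: int, etype: Optional[str]) -> Tuple[str, str]:
    """Return hypothetical (left, right) indicator tokens for one argument."""
    if scheme == "P_D":  # assumed: one undifferentiated marker on both sides
        return "<e>", "<e>"
    if scheme == "P_TS":  # assumed: distinct left and right markers
        return "<e>", "</e>"
    if scheme == "P_TSA":  # assumed: left/right markers that also encode ARG1 vs ARG2
        return f"<e{arg}>", f"</e{arg}>"
    if etype is None:
        raise ValueError(f"{scheme} needs an entity type")
    if scheme == "S_TS_T":  # assumed: left/right markers carrying the entity type
        return f"<{etype}>", f"</{etype}>"
    if scheme == "S_TSA_T":  # assumed: entity type plus argument role
        return f"<e{arg}:{etype}>", f"</e{arg}:{etype}>"
    raise ValueError(f"unsupported scheme: {scheme}")


def insert_indicators(
    tokens: List[str],
    arg1: Span,
    arg2: Span,
    scheme: str,
    types: Tuple[Optional[str], Optional[str]] = (None, None),
) -> List[str]:
    """Wrap the two argument spans of a relation instance with indicator tokens."""
    # Visit the spans left to right while remembering which argument each one is.
    spans = sorted([(arg1, 1, types[0]), (arg2, 2, types[1])], key=lambda s: s[0][0])
    if spans[0][0][1] > spans[1][0][0]:
        raise ValueError("overlapping or nested spans are not handled here")
    out: List[str] = []
    pos = 0
    for (start, end), arg, etype in spans:
        left, right = indicator_pair(scheme, arg, etype)
        out.extend(tokens[pos:start])  # context before this argument
        out += [left, *tokens[start:end], right]
        pos = end
    out.extend(tokens[pos:])  # context after the last argument
    return out


tokens = ["Smith", "works", "for", "Acme", "."]
print(insert_indicators(tokens, (0, 1), (3, 4), "P_TSA"))
# ['<e1>', 'Smith', '</e1>', 'works', 'for', '<e2>', 'Acme', '</e2>', '.']
print(insert_indicators(tokens, (0, 1), (3, 4), "S_TSA_T", ("PER", "ORG")))
# ['<e1:PER>', 'Smith', '</e1:PER>', 'works', 'for', '<e2:ORG>', 'Acme', '</e2:ORG>', '.']
```

The sketch assumes the two arguments do not overlap; nested mentions, which do occur in ACE, would need a different insertion rule.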

| Entity Indicators | ACE Chinese P(%) | ACE Chinese R(%) | ACE Chinese F1(%) | ACE English P(%) | ACE English R(%) | ACE English F1(%) | CLTC P(%) | CLTC R(%) | CLTC F1(%) |
|---|---|---|---|---|---|---|---|---|---|
| None | 71.29 | 54.92 | 60.32 | 69.54 | 53.43 | 60.40 | 50.64 | 29.28 | 37.10 |
| P_D | 80.11 | 59.54 | 68.31 | 79.49 | 61.24 | 69.18 | 64.38 | 55.34 | 59.52 |
| P_TS | 77.42 | 63.25 | 69.16 | 80.80 | 61.36 | 69.75 | 62.45 | 59.20 | 60.78 |
| P_TSA | 78.65 | 60.02 | 68.08 | 80.13 | 60.81 | 69.15 | 61.41 | 56.74 | 58.98 |
| S_TS_T | 85.27 | 65.89 | 74.34 | 88.01 | 67.63 | 76.49 | 70.13 | 62.79 | 66.26 |
| S_TS_S | 83.90 | 67.89 | 75.05 | 84.90 | 68.55 | 75.85 | × | × | × |
| S_TSA_T | 84.18 | 71.39 | 77.30 | 84.93 | 70.13 | 76.82 | 75.23 | 72.30 | 73.74 |
| S_TSA_S | 85.91 | 67.22 | 75.42 | 87.03 | 69.85 | 77.50 | × | × | × |
| C_DTSA | 85.32 | 70.70 | 77.33 | 86.75 | 71.37 | 78.31 | × | × | × |
| C_PTSA | 91.18 | 88.55 | 89.85 | 85.92 | 69.94 | 77.11 | 75.90 | 73.57 | 74.72 |
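The per-corpus winners in the indicator table above can be read off mechanically. The sketch below copies the F1 columns from that table into a dictionary (treating × as a missing value) and, for each corpus, returns the best indicator together with its absolute F1 gain over the no-indicator baseline (the "None" row). The dictionary layout and the `best_indicator` helper are illustrative only and are not part of the paper.

```python
from typing import Dict, Optional, Tuple

# F1 (%) per indicator and corpus, copied from the table above; "×" becomes None.
F1: Dict[str, Dict[str, Optional[float]]] = {
    "None":    {"ACE Chinese": 60.32, "ACE English": 60.40, "CLTC": 37.10},
    "P_D":     {"ACE Chinese": 68.31, "ACE English": 69.18, "CLTC": 59.52},
    "P_TS":    {"ACE Chinese": 69.16, "ACE English": 69.75, "CLTC": 60.78},
    "P_TSA":   {"ACE Chinese": 68.08, "ACE English": 69.15, "CLTC": 58.98},
    "S_TS_T":  {"ACE Chinese": 74.34, "ACE English": 76.49, "CLTC": 66.26},
    "S_TS_S":  {"ACE Chinese": 75.05, "ACE English": 75.85, "CLTC": None},
    "S_TSA_T": {"ACE Chinese": 77.30, "ACE English": 76.82, "CLTC": 73.74},
    "S_TSA_S": {"ACE Chinese": 75.42, "ACE English": 77.50, "CLTC": None},
    "C_DTSA":  {"ACE Chinese": 77.33, "ACE English": 78.31, "CLTC": None},
    "C_PTSA":  {"ACE Chinese": 89.85, "ACE English": 77.11, "CLTC": 74.72},
}


def best_indicator(corpus: str) -> Tuple[str, float, float]:
    """Return (indicator, F1, gain over the no-indicator baseline) for one corpus."""
    # Skip the baseline row and any indicator not evaluated on this corpus.
    scored = {name: row[corpus] for name, row in F1.items()
              if name != "None" and row[corpus] is not None}
    name = max(scored, key=scored.get)
    return name, scored[name], round(scored[name] - F1["None"][corpus], 2)


for corpus in ("ACE Chinese", "ACE English", "CLTC"):
    print(corpus, best_indicator(corpus))
# ACE Chinese ('C_PTSA', 89.85, 29.53)
# ACE English ('C_DTSA', 78.31, 17.91)
# CLTC ('C_PTSA', 74.72, 37.62)
```

On these numbers the compound indicator C_PTSA gives the highest F1 on ACE Chinese and CLTC, while C_DTSA is highest on ACE English.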

| Data | TYPE | None P(%) | None R(%) | None F1(%) | Position Emb. P(%) | Position Emb. R(%) | Position Emb. F1(%) | Multichannel P(%) | Multichannel R(%) | Multichannel F1(%) | Entity Indicator P(%) | Entity Indicator R(%) | Entity Indicator F1(%) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| ACE Chinese | PHYS | 39.70 | 45.40 | 42.36 | 53.21 | 33.33 | 40.99 | 55.00 | 25.29 | 34.65 | 85.88 | 83.91 | 84.88 |
| | ART | 64.00 | 20.51 | 31.07 | 66.67 | 35.90 | 46.67 | 70.00 | 26.92 | 38.89 | 95.45 | 80.77 | 87.50 |
| | GEN-AFF | 79.35 | 67.91 | 73.18 | 67.27 | 68.84 | 68.05 | 76.29 | 68.84 | 72.37 | 84.62 | 86.98 | 85.78 |
| | ORG-AFF | 82.01 | 73.46 | 77.50 | 70.82 | 78.20 | 74.32 | 88.70 | 74.41 | 80.93 | 95.22 | 94.31 | 94.76 |
| | PART-WHOLE | 79.36 | 73.62 | 76.38 | 66.91 | 76.60 | 71.43 | 78.32 | 75.32 | 76.79 | 87.34 | 88.09 | 87.71 |
| | PER-SOC | 83.33 | 48.61 | 61.40 | 65.22 | 62.50 | 63.83 | 73.08 | 52.78 | 61.29 | 98.59 | 97.22 | 97.90 |
| | Total | 71.29 | 54.92 | 60.32 | 65.02 | 59.23 | 61.99 | 73.56 | 53.93 | 62.23 | 91.18 | 88.55 | 89.85 |
| ACE English | PHYS | 62.96 | 31.29 | 41.80 | 75.00 | 34.94 | 47.70 | 82.61 | 34.97 | 49.14 | 72.86 | 62.58 | 67.33 |
| | ART | 71.05 | 42.19 | 52.94 | 85.29 | 45.31 | 59.18 | 86.11 | 48.44 | 62.00 | 88.37 | 59.38 | 71.03 |
| | GEN-AFF | 56.86 | 43.28 | 49.15 | 67.31 | 52.24 | 58.82 | 69.09 | 56.72 | 62.30 | 90.24 | 55.22 | 68.52 |
| | ORG-AFF | 70.26 | 74.05 | 72.11 | 84.80 | 78.38 | 81.46 | 79.49 | 83.78 | 81.58 | 90.45 | 87.03 | 88.71 |
| | PART-WHOLE | 66.67 | 59.77 | 63.03 | 68.82 | 73.56 | 71.11 | 74.67 | 64.37 | 69.14 | 85.71 | 82.76 | 84.21 |
| | PER-SOC | 88.89 | 70.00 | 78.32 | 85.51 | 73.75 | 79.19 | 89.71 | 76.25 | 82.43 | 92.86 | 81.25 | 86.67 |
| | Total | 69.54 | 53.43 | 60.40 | 77.79 | 59.70 | 67.55 | 80.28 | 60.75 | 67.76 | 86.75 | 71.37 | 78.31 |
| CLTC | Ownership | 26.67 | 4.65 | 7.92 | 50.00 | 9.30 | 15.69 | 63.86 | 61.63 | 62.72 | 82.61 | 66.28 | 73.55 |
| | Create | 17.65 | 7.89 | 10.91 | 39.29 | 28.95 | 33.33 | 71.43 | 52.63 | 60.61 | 65.00 | 68.42 | 66.67 |
| | Family | 49.05 | 68.62 | 57.21 | 57.02 | 69.15 | 62.50 | 81.22 | 85.11 | 83.12 | 89.56 | 86.70 | 88.11 |
| | Social | 50.91 | 25.69 | 34.15 | 45.22 | 47.71 | 46.43 | 70.19 | 66.97 | 68.54 | 71.54 | 85.32 | 77.82 |
| | Located | 56.00 | 83.07 | 66.90 | 59.42 | 83.42 | 69.41 | 82.38 | 94.00 | 87.81 | 90.02 | 97.00 | 93.38 |
| | General-Special | 22.12 | 26.04 | 23.92 | 34.29 | 25.00 | 28.92 | 47.32 | 55.21 | 50.96 | 58.82 | 52.08 | 55.25 |
| | Near | 100.00 | 1.96 | 3.85 | 100.00 | 1.96 | 3.85 | 50.00 | 19.61 | 28.17 | 64.29 | 35.29 | 45.57 |
| | Use | 85.71 | 5.56 | 10.43 | 52.17 | 22.22 | 31.17 | 75.96 | 73.15 | 74.53 | 76.34 | 92.59 | 83.68 |
| | Part-Whole | 47.66 | 40.05 | 43.52 | 55.47 | 48.15 | 51.55 | 80.79 | 71.06 | 75.62 | 84.96 | 78.47 | 81.59 |
| | Total | 50.64 | 29.28 | 37.10 | 54.76 | 37.32 | 44.39 | 69.24 | 64.37 | 66.72 | 75.90 | 73.57 | 74.72 |
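The per-type F1 values in the table above are consistent with the standard definition F1 = 2PR/(P + R). The short check below recomputes a few per-type rows copied from the table, assuming that definition; the helper name `f1_score` is illustrative. It uses per-type rows only, because some Total rows are not the harmonic mean of the Total P and R (for ACE Chinese with no indicator, 71.29 and 54.92 give about 62.04, whereas the reported Total F1 is 60.32), which suggests the Total rows are aggregated differently.

```python
import math


def f1_score(p: float, r: float) -> float:
    """Harmonic mean of precision and recall (both in %); 0 when both are 0."""
    return 0.0 if p + r == 0 else 2 * p * r / (p + r)


# (corpus, type, method): (P, R, reported F1), copied from per-type rows above.
ROWS = {
    ("ACE Chinese", "PHYS", "None"): (39.70, 45.40, 42.36),
    ("ACE Chinese", "PHYS", "Entity Indicator"): (85.88, 83.91, 84.88),
    ("ACE English", "ORG-AFF", "Entity Indicator"): (90.45, 87.03, 88.71),
    ("CLTC", "Located", "Entity Indicator"): (90.02, 97.00, 93.38),
}

for key, (p, r, reported) in ROWS.items():
    # Reported values are rounded to two decimals, so allow a small tolerance.
    assert math.isclose(f1_score(p, r), reported, abs_tol=0.01), key

# Harmonic mean of the Total P and R for ACE Chinese, no indicator.
print(round(f1_score(71.29, 54.92), 2))  # 62.04 (reported Total F1: 60.32)
```

Because P and R are themselves rounded, rows with a very small recall (such as CLTC "Near") can differ from the recomputed F1 in the last digit.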

| Model | Method | P(%) | R(%) | F1(%) |
|---|---|---|---|---|
| Yu et al. [45] | Convolutional kernel based on syntax and entity semantic tree. | 75.30 (×) | 60.43 (×) | 67.00 (×) |
| Liu et al. [26] | Tree-Kernel with lexical semantic resources. | 81.10 (79.1) | 60.00 (57.5) | 69.00 (66.6) |
| Zhang et al. [49] | Position structures between named entities. | 80.71 (×) | 62.48 (×) | 70.43 (×) |
| Chen et al. [46] | Combined features for capturing structural information. | 93.01 (81.41) | 89.45 (72.30) | 91.20 (76.59) |
| Li et al. [47] | Lattice LSTM with multi-grained information. | × (×) | × (×) | 78.17 (×) |
| Chen et al. [48] | A CNN and attention architecture. | 82.35 (×) | 79.22 (×) | 80.33 (×) |
| Ours | Random-CNN with the “C_PTSA” encoding. | 91.18 (76.94) | 88.55 (73.18) | 89.85 (75.01) |
| | BERT-CNN with the “C_PTSA” encoding. | 95.32 (84.34) | 94.57 (82.69) | 94.94 (83.51) |

| Model | Arch. | Information | F1(%) |
|---|---|---|---|
| Hendrickx et al. [1] | SVM | Word embeddings, NER, WordNet, dependency parse, HowNet, POS, Google n-gram | 48.9 |
| Socher et al. [50] | RNN | Word embeddings, POS, NER, WordNet | 49.1 |
| Zeng et al. [29] | CNN | Word embeddings, position embeddings, NER, WordNet | 52.4 |
| Santos et al. [51] | CR-CNN | Word embeddings, position embeddings | 54.1 |
| Xu et al. [14] | SDP-LSTM | Word embeddings, POS, NER, WordNet | 55.3 |
| Liu et al. [52] | DepNN | Word embeddings, WordNet | 55.2 |
| Cai et al. [53] | BRCNN | Word embeddings, POS, NER, WordNet | 55.6 |
| Zhang et al. [54] | C-ATT-BLSTM | Character embedding, position embedding, entity sense | 56.2 |
| Wen et al. [44] | SR-BRCNN | Word embeddings, POS, NER, WordNet | 65.9 |
| Ours | Random-CNN | Entity indicators with the S_TSA_T encoding. | 74.72 |
| | BERT-CNN | Entity indicators with the S_TSA_T encoding. | 77.14 |

| Model | Arch. | Method | P(%) | R(%) | F1(%) |
|---|---|---|---|---|---|
| Kambhatla [20] | ME | A feature-based model. | 63.50 (×) | 45.20 (×) | 52.80 (×) |
| Zheng et al. [16] | MIX-CNN | Automatically extract features based on multiple CNNs. | 60.00 (×) | 48.40 (×) | 53.60 (×) |
| Gormley et al. [55] | FCM | Combine features and word embeddings. | 71.52 (×) | 49.32 (×) | 58.26 (×) |
| Zhou et al. [56] | SVM | Phrase chunking information | 77.20 | 60.70 | 68.00 |
| Zhong et al. [19] | BERT | Entity marker embedding | × (×) | × (×) | 73.10 (×) |
| Chen et al. [46] | SSM | Feature calculus | 84.50 (71.89) | 77.32 (56.06) | 80.75 (63.03) |
| Ours | BERT-CNN | Entity indicators with the C_PTSA encoding. | 88.83 (88.82) | 82.78 (75.67) | 85.70 (81.72) |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Qin, Y.; Yang, W.; Wang, K.; Huang, R.; Tian, F.; Ao, S.; Chen, Y. Entity Relation Extraction Based on Entity Indicators. Symmetry 2021, 13, 539. https://doi.org/10.3390/sym13040539


Chicago/Turabian Style: Qin, Yongbin, Weizhe Yang, Kai Wang, Ruizhang Huang, Feng Tian, Shaolin Ao, and Yanping Chen. 2021. "Entity Relation Extraction Based on Entity Indicators" Symmetry 13, no. 4: 539. https://doi.org/10.3390/sym13040539
