Human Symmetry Uncertainty Detected by a Self-Organizing Neural Network Map

Abstract

1. Introduction

2. Materials and Methods

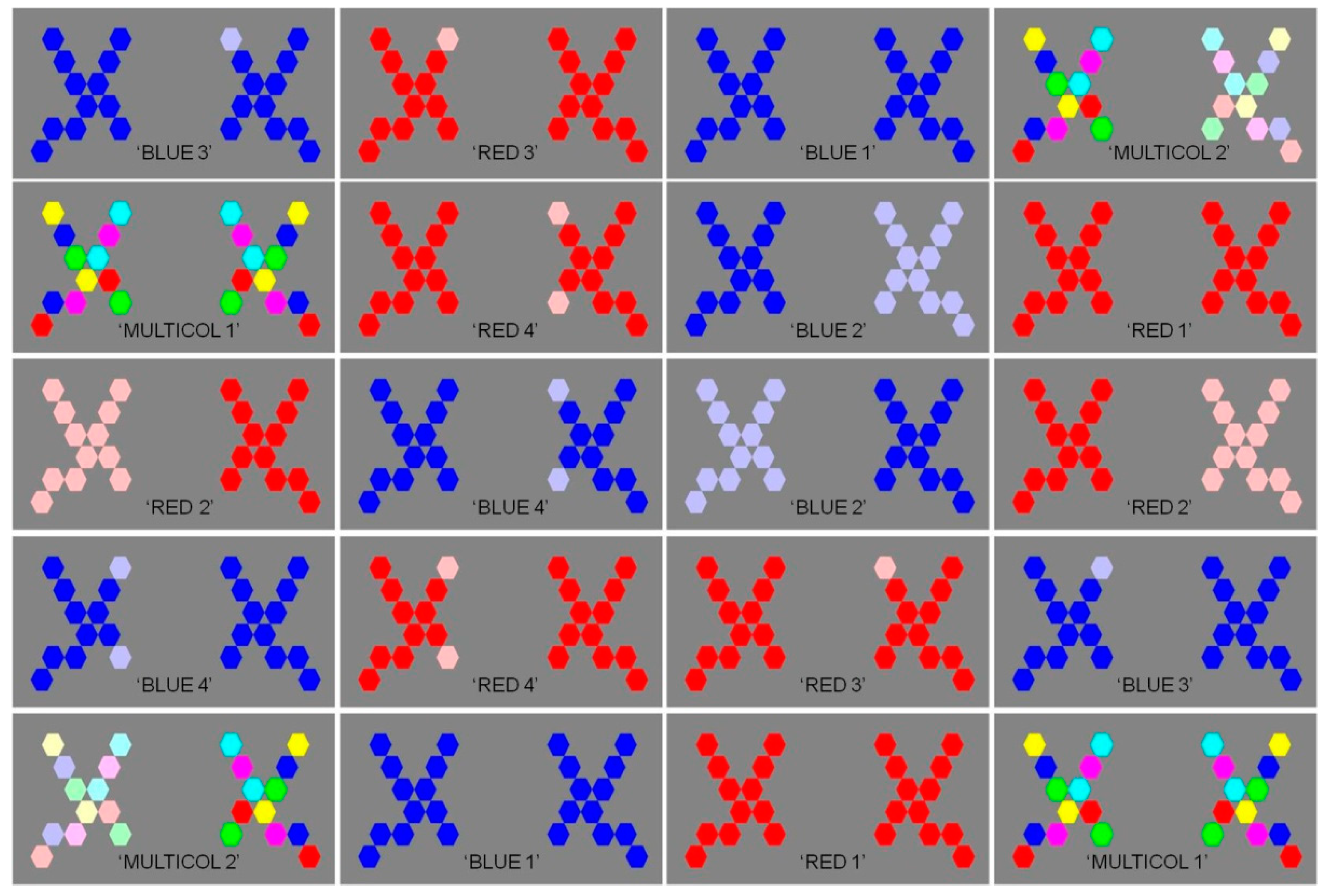

2.1. Original Images

2.2. Experimental Display

2.3. Choice Response Time Test

2.4. Neural Network (SOM) Analysis

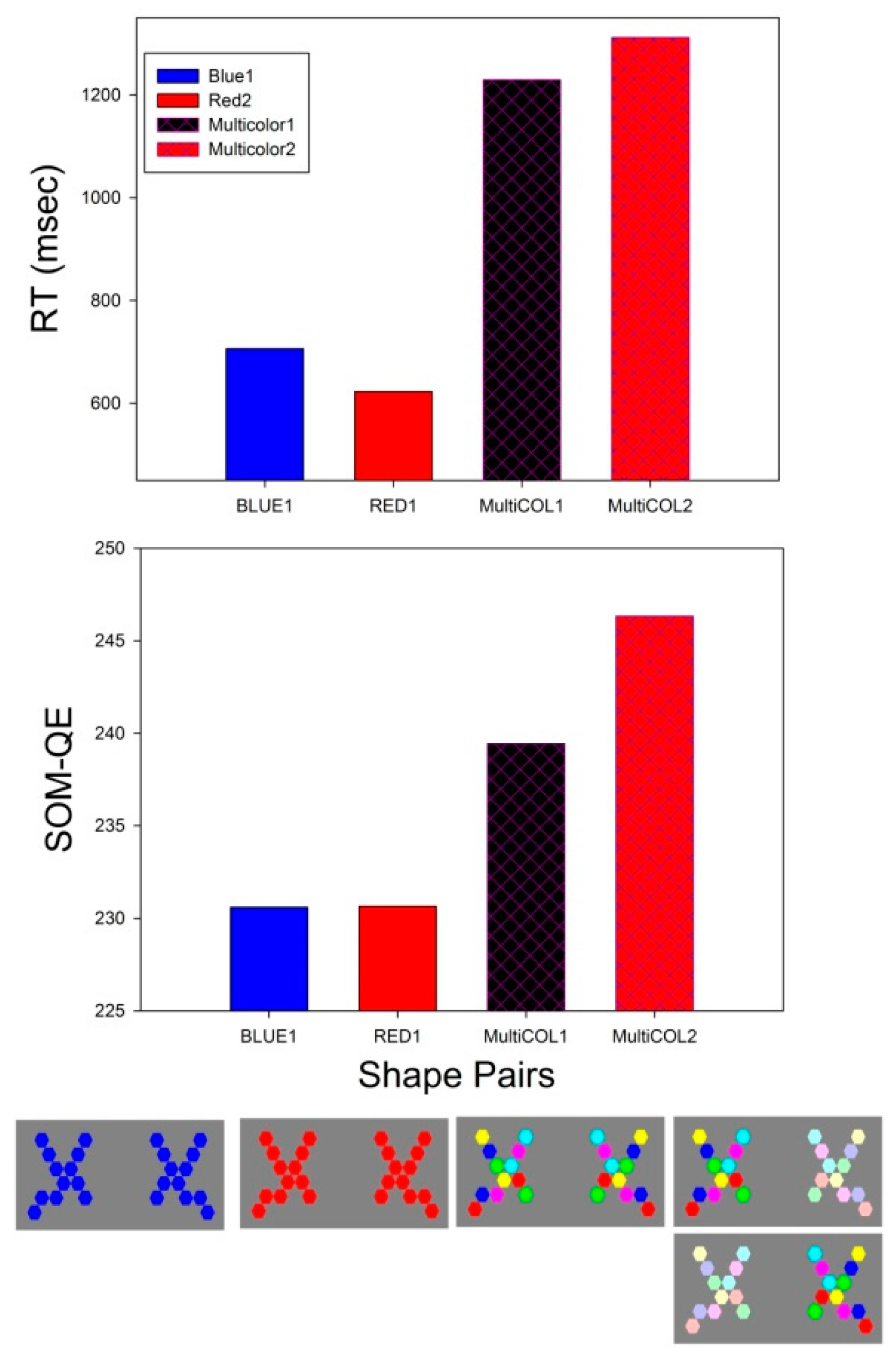
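Sections 2.4 and 3.3 rely on the quantization error of a self-organizing map (SOM-QE). The quantization error of a trained SOM is the mean Euclidean distance between each input vector and the weight vector of its best matching unit (the map node closest to that input). The following is a minimal pure-Python sketch of that idea, offered as an illustration only and not as the authors' implementation. Several choices are assumptions: each image is treated as a list of RGB pixel vectors scaled to [0, 1], the map is 4 × 4, and the learning-rate and neighborhood schedules decay linearly. All function names (`train_som`, `best_matching_unit`, `quantization_error`) are hypothetical.

```python
import math
import random


def sq_dist(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def best_matching_unit(weights, x):
    """Grid coordinates of the node whose weight vector is closest to x."""
    return min(weights, key=lambda node: sq_dist(weights[node], x))


def train_som(data, rows=4, cols=4, epochs=20, lr0=0.5, seed=0):
    """Train a small rectangular SOM on a list of feature vectors.

    Assumed schedule: learning rate and Gaussian neighborhood width both
    shrink linearly over training; sigma is floored at 0.5.
    """
    rng = random.Random(seed)
    dim = len(data[0])
    weights = {(r, c): [rng.random() for _ in range(dim)]
               for r in range(rows) for c in range(cols)}
    sigma0 = max(rows, cols) / 2.0
    total_steps = epochs * len(data)
    step = 0
    for _ in range(epochs):
        for x in rng.sample(data, len(data)):  # shuffled pass over the inputs
            frac = step / total_steps
            lr = lr0 * (1.0 - frac)
            sigma = max(sigma0 * (1.0 - frac), 0.5)
            br, bc = best_matching_unit(weights, x)
            for (r, c), w in weights.items():
                grid_d2 = (r - br) ** 2 + (c - bc) ** 2
                h = math.exp(-grid_d2 / (2.0 * sigma ** 2))  # neighborhood weight
                for k in range(dim):
                    w[k] += lr * h * (x[k] - w[k])  # pull node toward the input
            step += 1
    return weights


def quantization_error(weights, data):
    """SOM-QE: mean Euclidean distance from each input to its best matching unit."""
    return sum(math.sqrt(sq_dist(weights[best_matching_unit(weights, x)], x))
               for x in data) / len(data)


if __name__ == "__main__":
    rng = random.Random(42)
    # Hypothetical 8x8 "images" flattened to RGB pixel vectors in [0, 1].
    uniform = [[0.5, 0.5, 0.5] for _ in range(64)]
    noisy = [[rng.random() for _ in range(3)] for _ in range(64)]
    for name, pixels in (("uniform", uniform), ("noisy", noisy)):
        som = train_som(pixels)
        print(name, round(quantization_error(som, pixels), 4))
```

A uniform patch drives the QE toward zero, because a single node can converge onto the only input value. A patch of random colors leaves a clearly larger residual error. Comparing QE across images relies on this sensitivity to variation in the input.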

3. Results

3.1. Two-Way ANOVA on Choice Response Times

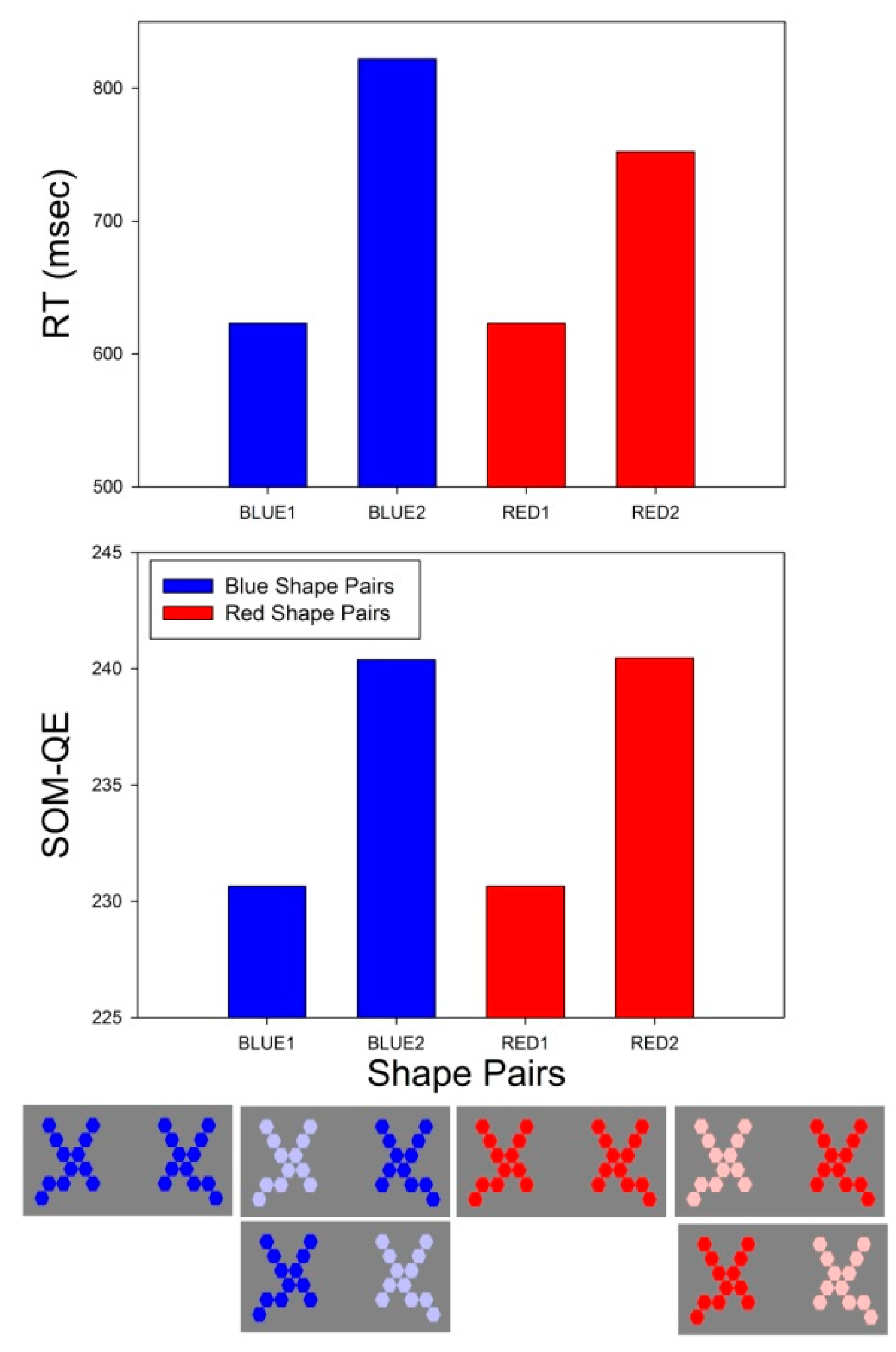

3.1.1. A4 × C2 × 15

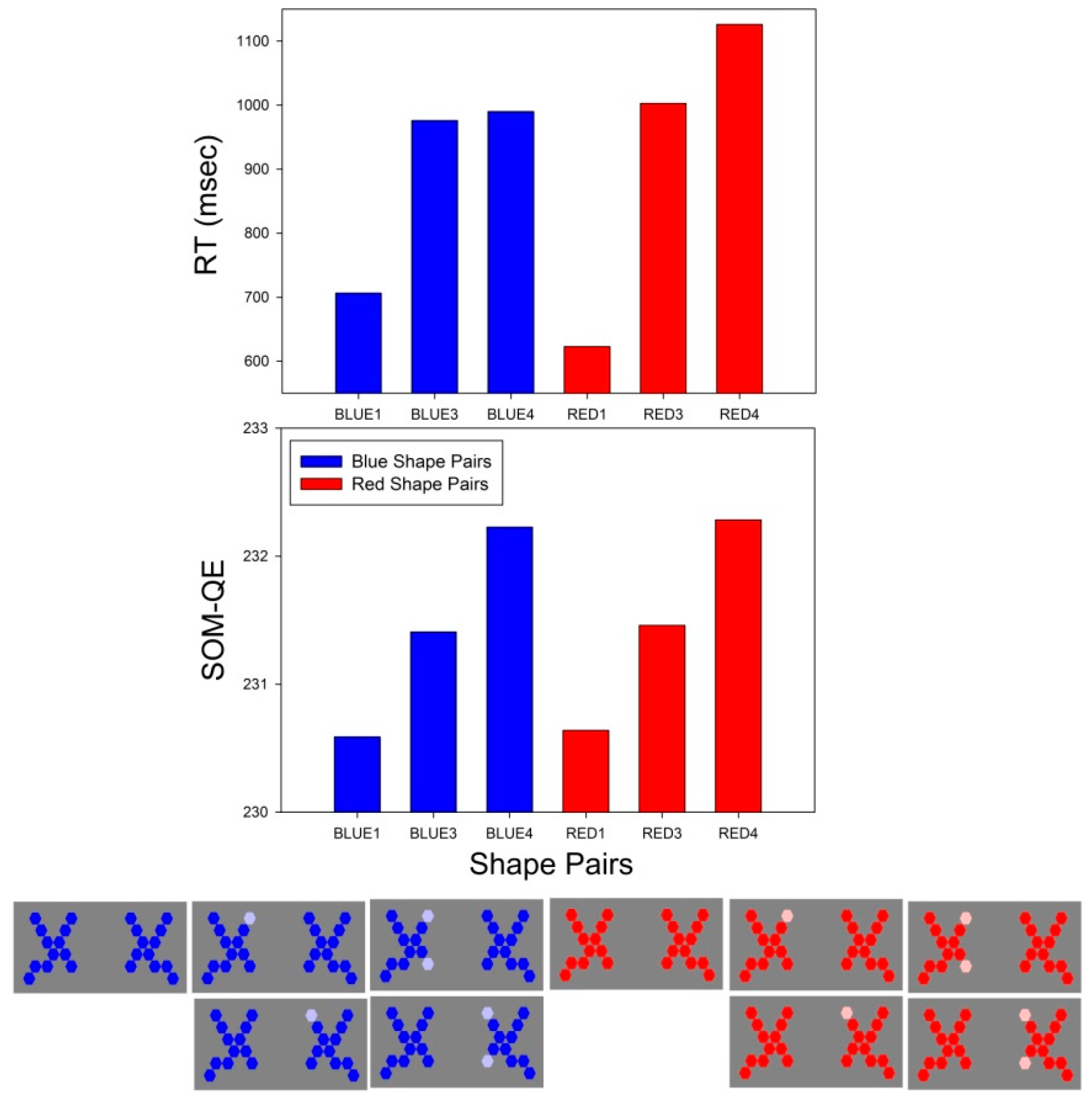

3.1.2. A2 × C3 × 15

3.2. RT Effect Sizes

3.3. SOM-QE Effect Sizes

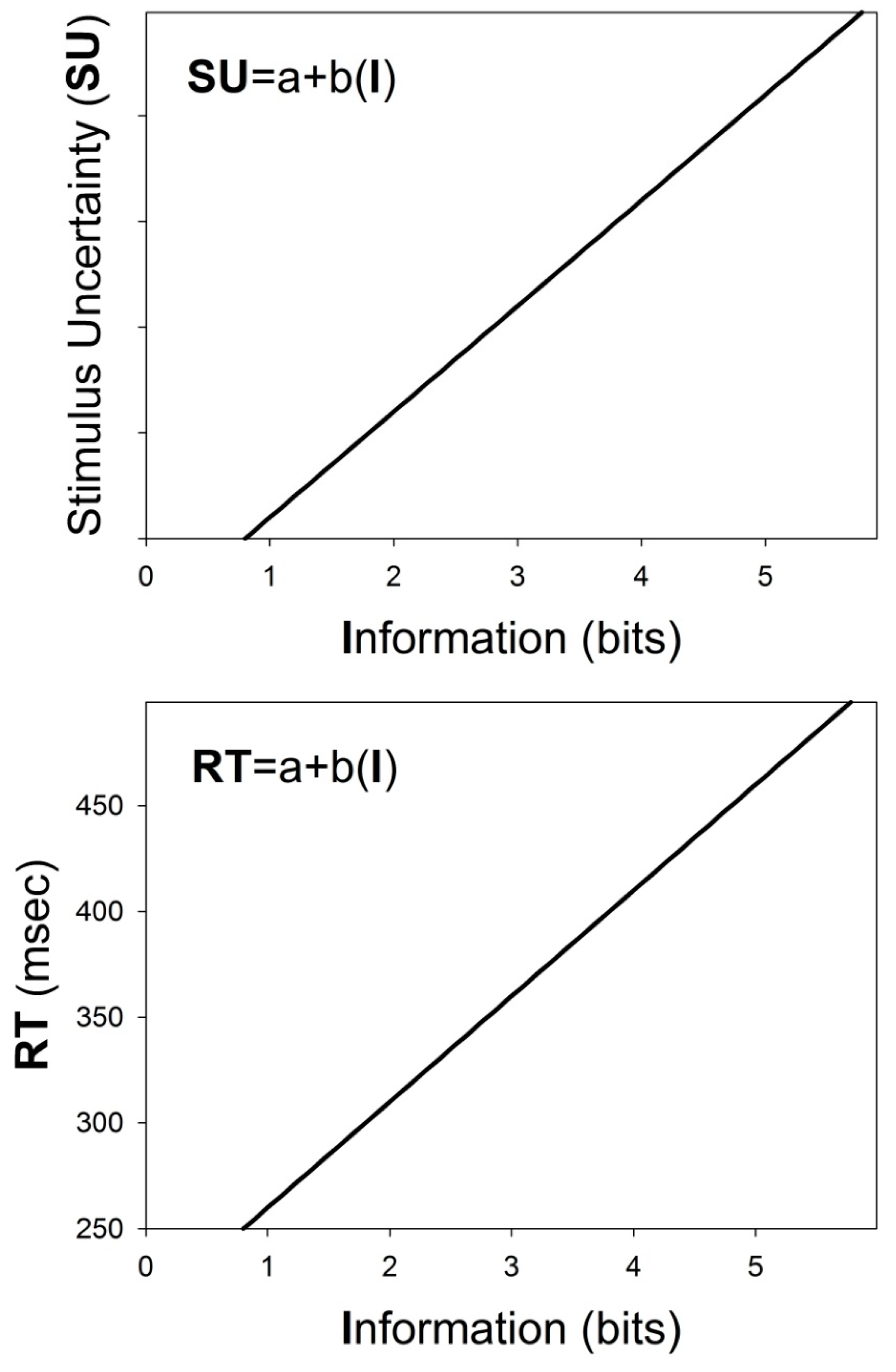

3.4. Linear Regression Analyses

4. Discussion

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| Color set | Color | Hue (°) | Saturation (%) | Lightness (%) | R-G-B |
|---|---|---|---|---|---|
| “Strong” | BLUE | 240 | 100 | 50 | 0-0-255 |
| | RED | 0 | 100 | 50 | 255-0-0 |
| | GREEN | 120 | 100 | 50 | 0-255-0 |
| | MAGENTA | 300 | 100 | 50 | 255-0-255 |
| | YELLOW | 60 | 100 | 50 | 255-255-0 |
| “Pale” | BLUE | 180 | 95 | 50 | 10-250-250 |
| | RED | 0 | 100 | 87 | 255-190-190 |
| | GREEN | 120 | 100 | 87 | 190-255-190 |
| | MAGENTA | 300 | 100 | 87 | 255-190-255 |
| | YELLOW | 60 | 100 | 87 | 255-255-190 |
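The HSL columns and the R-G-B column of the color table describe the same colors, so each can be checked against the other. The following minimal sketch uses Python's standard `colorsys` module, which orders the triplet as (hue, lightness, saturation) with every component in [0, 1]. It converts each listed R-G-B triplet back to hue in degrees and to saturation and lightness in percent. The strong YELLOW triplet 255-255-0 does not appear in the source table and is derived here from its HSL values 60/100/50. `TABLE_RGB` and `rgb_to_hsl_percent` are illustrative names.

```python
import colorsys

# R-G-B triplets (0-255 per channel) from the color table; strong YELLOW is
# derived from HSL 60/100/50 because the source row leaves it blank.
TABLE_RGB = {
    ("Strong", "BLUE"): (0, 0, 255),
    ("Strong", "RED"): (255, 0, 0),
    ("Strong", "GREEN"): (0, 255, 0),
    ("Strong", "MAGENTA"): (255, 0, 255),
    ("Strong", "YELLOW"): (255, 255, 0),
    ("Pale", "BLUE"): (10, 250, 250),
    ("Pale", "RED"): (255, 190, 190),
    ("Pale", "GREEN"): (190, 255, 190),
    ("Pale", "MAGENTA"): (255, 190, 255),
    ("Pale", "YELLOW"): (255, 255, 190),
}


def rgb_to_hsl_percent(rgb):
    """Return (hue in degrees, saturation %, lightness %), rounded to integers."""
    r, g, b = (channel / 255.0 for channel in rgb)
    # colorsys uses HLS ordering: (hue, lightness, saturation), each in [0, 1].
    h, l, s = colorsys.rgb_to_hls(r, g, b)
    return round(h * 360) % 360, round(s * 100), round(l * 100)


if __name__ == "__main__":
    for (group, name), rgb in TABLE_RGB.items():
        hue, sat, light = rgb_to_hsl_percent(rgb)
        print(f"{group:6} {name:8} RGB={rgb} -> H={hue} S={sat} L={light}")
```

For the pale rows, the conversion gives 60/100/87 for 255-255-190 and 300/100/87 for 255-190-255. The pale BLUE triplet 10-250-250 gives 180/96/51, which matches the listed 180/95/50 to within rounding.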

| ANOVA | Factor | DF | F | p |
|---|---|---|---|---|
| 1st two-way ANOVA (A4 × C2) | APPEARANCE | 3 | 68.42 | <0.001 |
| | COLOR | 1 | 0.012 | <0.914 (NS) |
| | INTERACTION | 3 | 5.37 | <0.01 |
| 2nd two-way ANOVA (A2 × C3) | APPEARANCE | 1 | 8.20 | <0.01 |
| | COLOR | 2 | 123.56 | <0.001 |
| | INTERACTION | 2 | 0.564 | <0.57 (NS) |
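The effect degrees of freedom in the ANOVA table follow directly from the number of factor levels. With 4 APPEARANCE levels and 2 COLOR levels, the effects have df = 3, 1 and 3 (APPEARANCE, COLOR, INTERACTION). With 2 and 3 levels, they have df = 1, 2 and 2. The following is a minimal standard-library sketch of a fixed-effects, between-subjects two-way ANOVA for a balanced design. It illustrates the computation under stated assumptions and is not the authors' analysis: their error term (possibly a repeated-measures model) is not given here, so the F values this sketch produces are not comparable with those in the table. Reading the "15" in the headings A4 × C2 × 15 and A2 × C3 × 15 as 15 observations per cell is also an assumption. `two_way_anova` and the demo data are hypothetical.

```python
import random
from itertools import product
from statistics import mean


def two_way_anova(data):
    """Fixed-effects two-way ANOVA with replication for a balanced design.

    `data` maps (level_a, level_b) -> list of observations. Every cell must
    exist and hold the same number n >= 2 of observations. Returns a dict
    {effect: (df, sum_of_squares, F)}; the "error" row has F = None.
    """
    levels_a = sorted({a for a, _ in data})
    levels_b = sorted({b for _, b in data})
    n_a, n_b = len(levels_a), len(levels_b)
    n = len(next(iter(data.values())))
    if len(data) != n_a * n_b or n < 2 or any(len(v) != n for v in data.values()):
        raise ValueError("design must be complete and balanced, with n >= 2 per cell")

    grand = mean(x for cell in data.values() for x in cell)
    cell_mean = {key: mean(values) for key, values in data.items()}
    # In a balanced design, marginal means equal the means of the cell means.
    mean_a = {i: mean(cell_mean[(i, j)] for j in levels_b) for i in levels_a}
    mean_b = {j: mean(cell_mean[(i, j)] for i in levels_a) for j in levels_b}

    ss_a = n_b * n * sum((m - grand) ** 2 for m in mean_a.values())
    ss_b = n_a * n * sum((m - grand) ** 2 for m in mean_b.values())
    ss_ab = n * sum((cell_mean[(i, j)] - mean_a[i] - mean_b[j] + grand) ** 2
                    for i, j in product(levels_a, levels_b))
    ss_err = sum((x - cell_mean[key]) ** 2
                 for key, values in data.items() for x in values)

    df_a, df_b = n_a - 1, n_b - 1
    df_ab, df_err = df_a * df_b, n_a * n_b * (n - 1)
    ms_err = ss_err / df_err
    return {
        "A": (df_a, ss_a, ss_a / df_a / ms_err),
        "B": (df_b, ss_b, ss_b / df_b / ms_err),
        "AxB": (df_ab, ss_ab, ss_ab / df_ab / ms_err),
        "error": (df_err, ss_err, None),
    }


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical choice RTs (ms): 4 APPEARANCE x 2 COLOR levels, 15 per cell.
    demo = {(f"A{i}", f"C{j}"): [rng.gauss(600 + 25 * i, 60) for _ in range(15)]
            for i in range(1, 5) for j in range(1, 3)}
    for effect, (df, ss, f) in two_way_anova(demo).items():
        print(effect, df, round(ss, 1), f if f is None else round(f, 2))
```

In a balanced design, the four sums of squares add up exactly to the total sum of squares around the grand mean. That identity makes a quick sanity check for any implementation.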


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Dresp-Langley, B.; Wandeto, J.M. Human Symmetry Uncertainty Detected by a Self-Organizing Neural Network Map. Symmetry 2021, 13, 299. https://doi.org/10.3390/sym13020299