1. Introduction

Copy-move forgery (CMF) is a popular image tampering method, wherein a portion of an image is copied from one section of the image and is pasted elsewhere in the same image. An image can be forged to conceal or change its meaning by the copy-move process. Therefore, it is important to verify the authenticity of the image and localize the copied and moved regions. Because the copied portion of an image is generally scaled or rotated, it is difficult to verify the authenticity of the image based on visual inspection alone. For this reason, the development of reliable copy-move forgery detection (CMFD) methods has become an important issue [

1,

2,

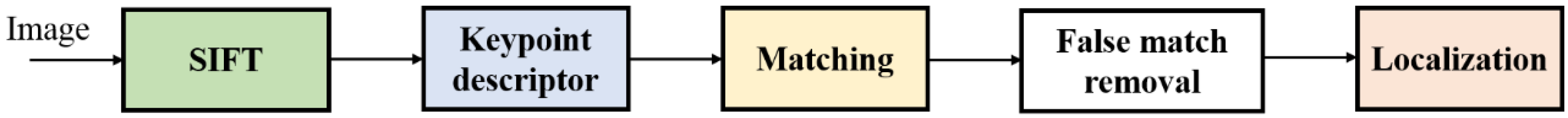

3]. The common framework of CMFD comprises five steps: preprocessing (optional), feature extraction, matching, false match removal, and localization.

The optional first step of the CMFD process is preprocessing. In this step, RGB images are often converted to grayscale [4,5] to reduce the dimensionality of the input. Alternatively, RGB colors can be converted into the YCbCr [6,7] or HSV [8] color space to use both luminance and chrominance information. Various block division and segmentation methods can also be used in preprocessing. An image can be divided into overlapping square blocks [9,10,11], non-overlapping square blocks [12], or circular blocks [13,14]. Image segmentation techniques [15,16] are often included in the preprocessing step to separate the copied source region from the pasted target region.
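As a minimal sketch of the two preprocessing operations named above, the following Python/NumPy code converts an RGB array to grayscale and enumerates overlapping square blocks with a stride of one pixel. The ITU-R BT.601 luma weights (0.299, 0.587, 0.114) and the function names `rgb_to_gray` and `overlapping_blocks` are illustrative choices, not taken from the cited methods.

```python
import numpy as np

def rgb_to_gray(rgb: np.ndarray) -> np.ndarray:
    """Convert an H x W x 3 RGB array to grayscale with BT.601 luma weights (assumed)."""
    weights = np.array([0.299, 0.587, 0.114])
    return rgb[..., :3].astype(np.float64) @ weights  # weighted sum over the channel axis

def overlapping_blocks(gray: np.ndarray, b: int):
    """Return every b x b block (stride 1) as a flattened row, plus its top-left corner."""
    h, w = gray.shape
    blocks, corners = [], []
    for y in range(h - b + 1):
        for x in range(w - b + 1):
            blocks.append(gray[y:y + b, x:x + b].ravel())
            corners.append((y, x))
    return np.array(blocks), corners
```

An H × W image yields (H − b + 1)(W − b + 1) overlapping blocks, which is why block-based methods usually pair this step with compact features and efficient search.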

Among these five steps, feature extraction is the most important step of the CMFD process. Extracted features must be invariant to scale and rotation and robust to post-processing operations such as blurring, compression, and noise addition. Transform-based methods are frequently used to eliminate information that is unnecessary for detection and to enhance the essential components. A variety of transforms, such as the polar cosine transform [17], Fourier–Mellin transform [18,19], and polar complex exponential transform [20], have been used for forgery detection, along with the popular Fourier transform [5], discrete cosine transform [7,11,21,22,23], and various wavelet transforms [7,11,24,25,26]. Recently, the local binary pattern (LBP) has been successfully used to detect CMFs in combination with the wavelet transform [25] and singular value decomposition [27]. The center-symmetric local binary pattern (CSLBP) [28], a variant of the LBP, was used to make CMFD more robust against noise during feature extraction. Keypoint-based approaches form another actively studied class of CMFD methods. Because keypoint features are robust under scaling, rotation, and occlusion, they are well suited to CMFD. Since the scale-invariant feature transform (SIFT) was first applied to forgery detection [29], various SIFT variants, such as binarized SIFT [30], opponent SIFT [31], and affine SIFT [32], have been applied to CMFD. In addition to these schemes, histogram-based techniques [4] and statistical moment-based methods [20,33,34] have also been presented. Please refer to [1,2,3] for more details on these algorithms and other feature extraction methods.
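To make the LBP and CSLBP features concrete, the sketch below computes both codes for a single interior pixel. It assumes a 3 × 3 neighborhood, a clockwise neighbor order starting at the top-left pixel, and a CSLBP threshold `t`; these are common textbook conventions, and the cited methods may use different radii, neighbor orders, or thresholds.

```python
import numpy as np

# Offsets (dy, dx) of the 8 neighbors, clockwise from the top-left pixel (assumed order).
NEIGHBORS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]

def lbp_code(img, y, x):
    """Basic 8-neighbor LBP: bit i is 1 if neighbor i >= center (256 possible codes)."""
    c = img[y, x]
    return sum(int(img[y + dy, x + dx] >= c) << i for i, (dy, dx) in enumerate(NEIGHBORS))

def cslbp_code(img, y, x, t=0.0):
    """Center-symmetric LBP: compares the 4 opposite neighbor pairs (16 possible codes)."""
    code = 0
    for i in range(4):
        dy1, dx1 = NEIGHBORS[i]
        dy2, dx2 = NEIGHBORS[i + 4]  # the neighbor diametrically opposite neighbor i
        if float(img[y + dy1, x + dx1]) - float(img[y + dy2, x + dx2]) > t:
            code |= 1 << i
    return code
```

Because CSLBP compares neighbor pairs instead of each neighbor against the center, it produces only 16 codes instead of 256, giving a much shorter histogram per region.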

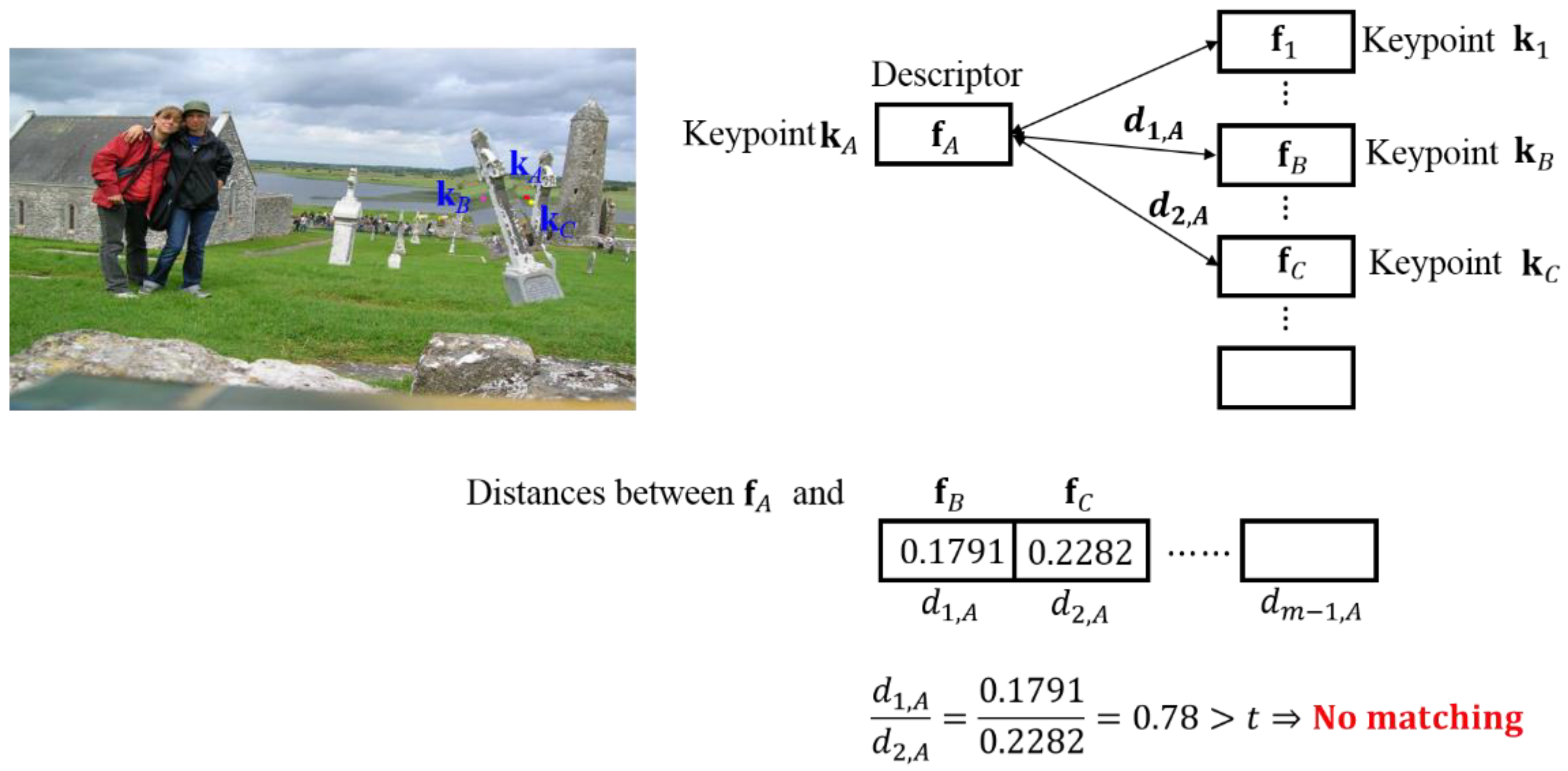

Feature matching determines candidate pairs of an original region and the corresponding copy-moved region using the extracted features. This process relies on search and similarity measurement techniques. Search methods include sorting [6,7,35], nearest-neighbor search [31,36], and hashing [16], and they are usually combined with dimensionality reduction techniques. During the search, candidate matches are identified and their similarity is evaluated. Euclidean distance is the simplest and most popular similarity metric and is used by several CMFD methods [4,16,20,35]. The Manhattan distance [37] and Hamming distance [30] between two features have also been used to measure similarity.
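The sort-based search can be sketched as follows: block feature vectors are sorted lexicographically, only rows that are neighbors in the sorted order are compared using the Euclidean distance, and pairs of blocks that are spatially too close to each other are discarded. The thresholds `max_dist`, `min_offset`, and `window` are hypothetical parameters chosen for illustration.

```python
import numpy as np

def sort_based_matches(features, corners, max_dist=1e-6, min_offset=4, window=1):
    """Pair blocks whose feature vectors are close after lexicographic sorting.

    features: (N, D) array, one row per block; corners: list of (y, x) block positions.
    Only rows within `window` positions of each other in sorted order are compared,
    which avoids the O(N^2) exhaustive pairwise search.
    """
    order = np.lexsort(features.T[::-1])  # sort rows lexicographically, column 0 first
    matches = []
    for i in range(len(order)):
        for j in range(i + 1, min(i + 1 + window, len(order))):
            a, b = order[i], order[j]
            if np.linalg.norm(features[a] - features[b]) > max_dist:  # Euclidean distance
                continue
            (ya, xa), (yb, xb) = corners[a], corners[b]
            if np.hypot(ya - yb, xa - xb) >= min_offset:  # skip overlapping neighbors
                matches.append((corners[a], corners[b]))
    return matches
```

Replacing `np.linalg.norm(features[a] - features[b])` with `np.abs(features[a] - features[b]).sum()` gives the Manhattan distance; the Hamming distance applies to binary features such as binarized SIFT.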

However, false positive matches remain even after feature matching. To eliminate these false matches, various clustering algorithms, such as J-linkage clustering [31], distance-based clustering [38], and hierarchical clustering [39,40], have been proposed to separate authentic areas from tampered ones. One of the most robust and frequently used false match removal methods is the random sample consensus (RANSAC) algorithm, which removes outliers from the matched features. RANSAC is widely used in keypoint-based CMFD methods [15,31,35,36,39,40,41].
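A minimal RANSAC sketch, assuming the geometric relation between matched source and target points is modeled as a 2-D affine transform (a common but not universal choice): it repeatedly fits an affine map to three randomly chosen matches, keeps the model with the most inliers within `tol` pixels, and finally refits on the inlier set. The iteration count, tolerance, and seed are illustrative values.

```python
import numpy as np

def fit_affine(src, dst):
    """Least-squares 2-D affine map: dst ~ [src, 1] @ A, where A is 3 x 2."""
    X = np.hstack([src, np.ones((len(src), 1))])
    A, *_ = np.linalg.lstsq(X, dst, rcond=None)
    return A

def ransac_affine(src, dst, iters=500, tol=3.0, seed=0):
    """Return a boolean mask of matches consistent with a single affine transform."""
    rng = np.random.default_rng(seed)
    X = np.hstack([src, np.ones((len(src), 1))])
    best = np.zeros(len(src), dtype=bool)
    for _ in range(iters):
        idx = rng.choice(len(src), size=3, replace=False)  # 3 pairs determine an affine map
        A = fit_affine(src[idx], dst[idx])
        inliers = np.linalg.norm(X @ A - dst, axis=1) < tol
        if inliers.sum() > best.sum():
            best = inliers
    if best.sum() >= 3:  # refit on all inliers and recompute the mask
        A = fit_affine(src[best], dst[best])
        best = np.linalg.norm(X @ A - dst, axis=1) < tol
    return best
```

Matches rejected by the mask are treated as false matches; the surviving pairs and the estimated transform can then feed the localization step.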

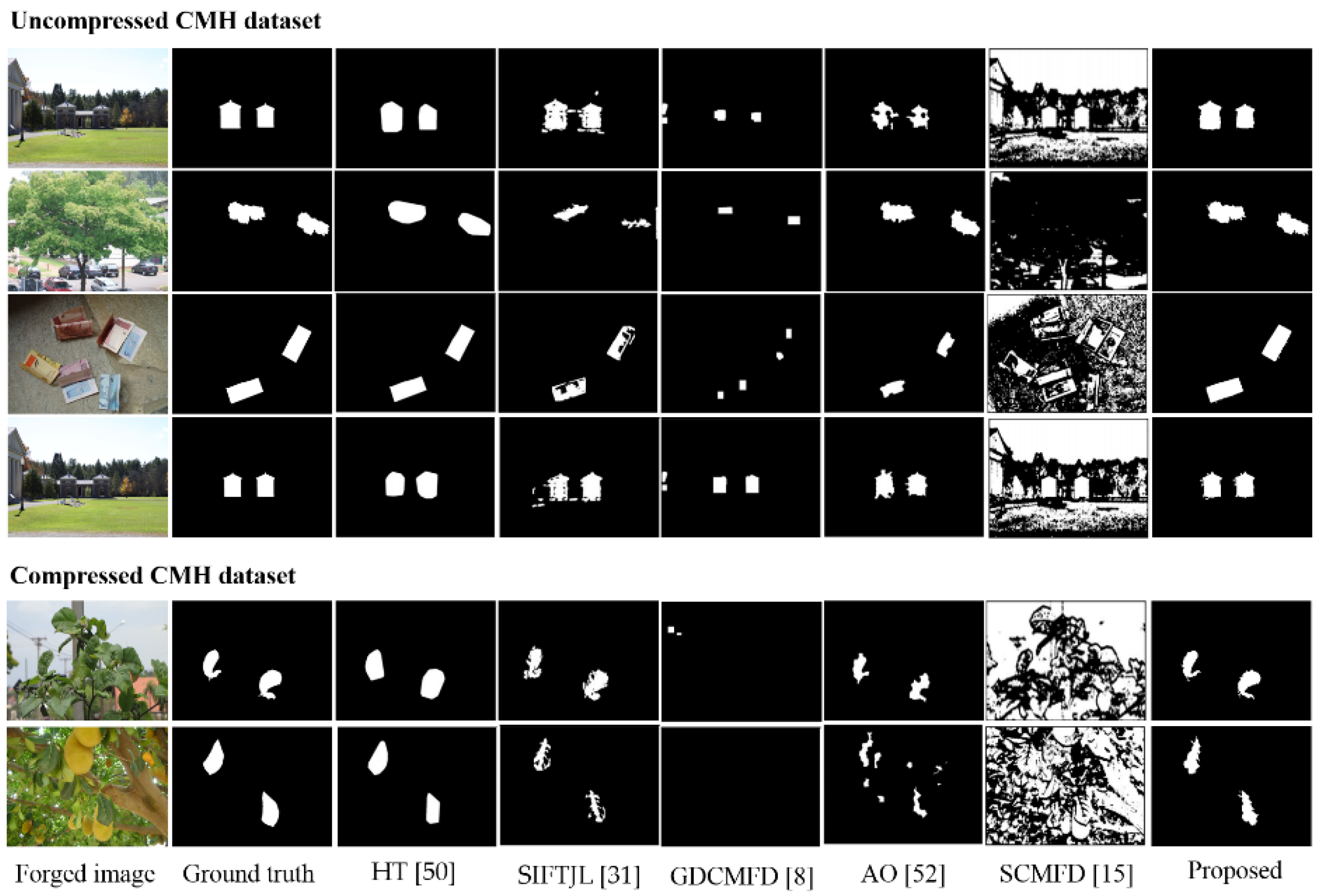

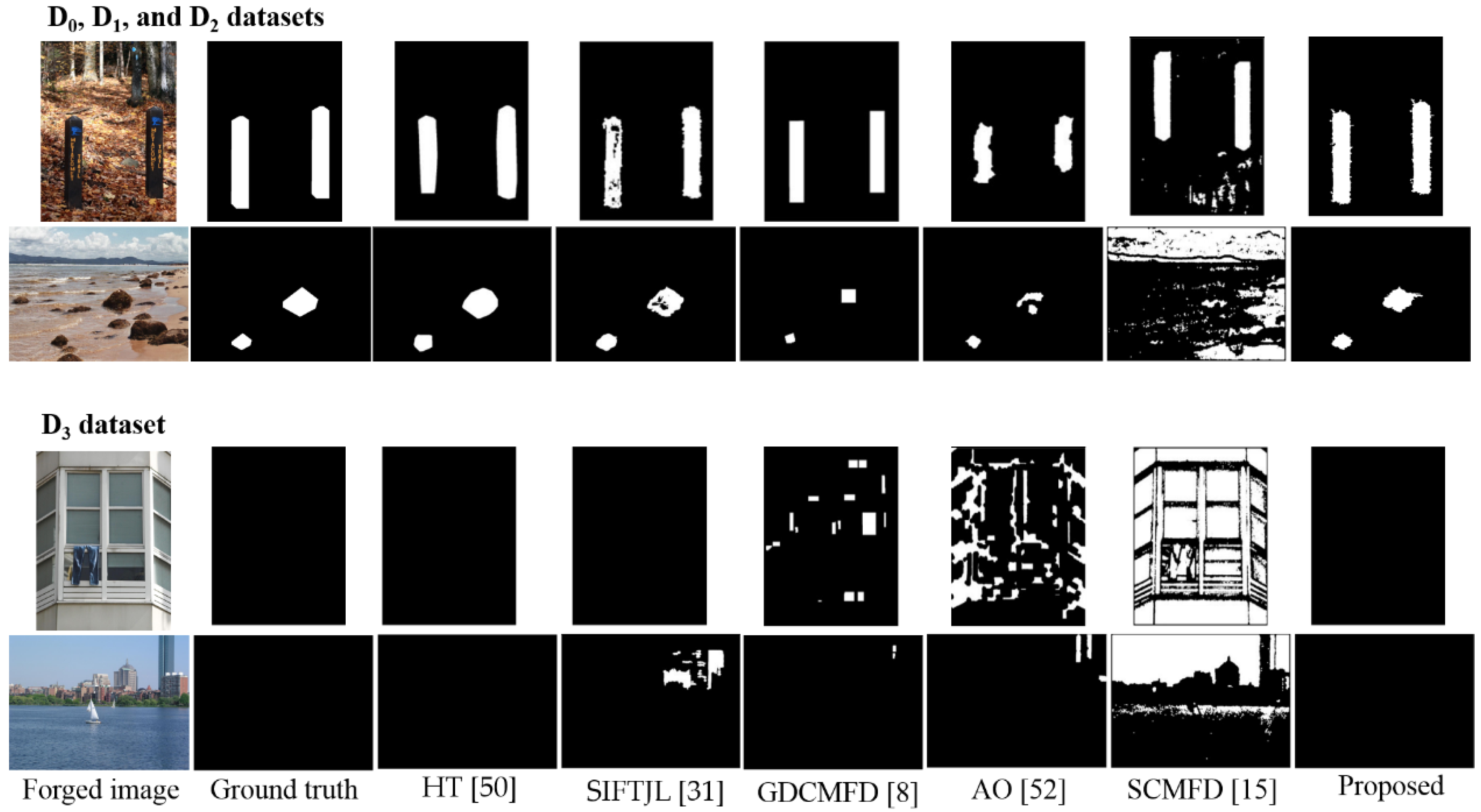

The final step of the CMFD process is localization. The detection output can be visualized as a binary image that marks the detected copy-moved regions and their corresponding authentic regions in the target image. This step is commonly combined with various post-processing methods.
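For block-based detectors, the binary localization map can be sketched by marking every matched source and target block. The function name, the block size, and the `(y, x)` top-left corner convention are assumptions; practical methods typically follow this with morphological post-processing, which is omitted here.

```python
import numpy as np

def localization_map(shape, matched_pairs, block=8):
    """Binary map: 1 where a matched source or target block lies, 0 elsewhere."""
    mask = np.zeros(shape, dtype=np.uint8)
    for (ys, xs), (yt, xt) in matched_pairs:
        mask[ys:ys + block, xs:xs + block] = 1  # source (authentic) block
        mask[yt:yt + block, xt:xt + block] = 1  # pasted (copy-moved) block
    return mask
```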

Keypoint-based CMFD algorithms have attracted significant attention in recent years, and SIFT is one of the most frequently used keypoint extraction methods for CMF detection. SIFT generates scale- and rotation-invariant keypoints, and each keypoint is commonly represented by a 128-dimensional descriptor. The SIFT descriptor of a keypoint is computed from sixteen 4 × 4 sub-windows around the keypoint, each summarized by a gradient histogram over 8 directions. However, although keypoint-based methods have been successfully applied to CMFD, their performance degrades when the copy-moved regions are small or smooth [31,42]. Additionally, when a keypoint lies close to the boundary between the copy-moved region and the authentic region, or when an image has been compressed after the forgery, the descriptor of a keypoint in the copied region will differ from that of the corresponding keypoint in the pasted region.

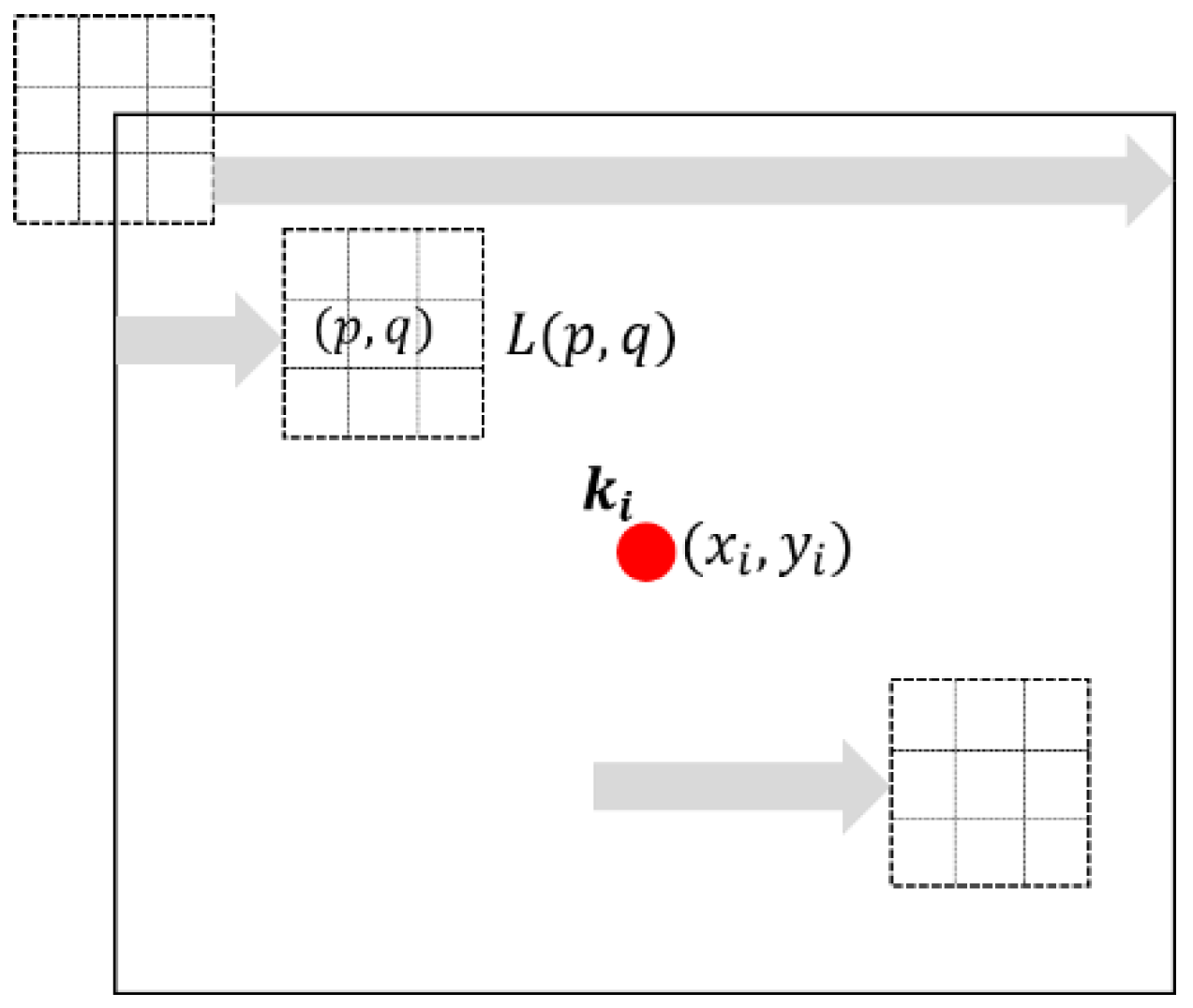

For a single keypoint, existing SIFT-based methods divide a 16 × 16 window into 16 sub-windows of 4 × 4 pixels each. The gradient values in each 4 × 4 sub-window are accumulated over 8 directions to form an 8-dimensional descriptor for that sub-window. The descriptors of all 16 sub-windows are then concatenated to create the final 128-dimensional descriptor (16 × 8 = 128). The limitation of this conventional descriptor is that it provides only local information about a single keypoint. Because it cannot represent global information around the keypoint, it may fail to cope with pixel changes such as compression or differences in the background caused by the copy-move process.
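The descriptor layout described above can be illustrated with a simplified, SIFT-like descriptor computed from a 16 × 16 grayscale patch. This is not the full SIFT algorithm: it omits Gaussian weighting, trilinear interpolation between bins, and rotation to the keypoint's dominant orientation. It keeps the 4 × 4 grid of 8-bin orientation histograms and Lowe's normalize, clip at 0.2, renormalize step, which together yield the 128-dimensional vector. The function name is hypothetical.

```python
import numpy as np

def sift_like_descriptor(patch):
    """128-D descriptor from a 16 x 16 patch: a 4 x 4 grid of 8-bin orientation histograms."""
    if patch.shape != (16, 16):
        raise ValueError("expected a 16 x 16 patch")
    gy, gx = np.gradient(patch.astype(np.float64))  # derivatives along rows, then columns
    mag = np.hypot(gx, gy)
    ang = np.mod(np.arctan2(gy, gx), 2 * np.pi)
    bins = np.minimum((ang / (2 * np.pi) * 8).astype(int), 7)  # 8 directions of 45 degrees
    hists = []
    for sy in range(0, 16, 4):
        for sx in range(0, 16, 4):  # one 4 x 4 sub-window
            hists.append(np.bincount(bins[sy:sy + 4, sx:sx + 4].ravel(),
                                     weights=mag[sy:sy + 4, sx:sx + 4].ravel(),
                                     minlength=8))
    desc = np.concatenate(hists)  # 16 sub-windows x 8 bins = 128 values
    desc /= max(np.linalg.norm(desc), 1e-12)
    desc = np.minimum(desc, 0.2)  # damp large gradient magnitudes, as in Lowe's SIFT
    return desc / max(np.linalg.norm(desc), 1e-12)
```

Each histogram only sees its own 4 × 4 sub-window, which illustrates the purely local character of the descriptor discussed above.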

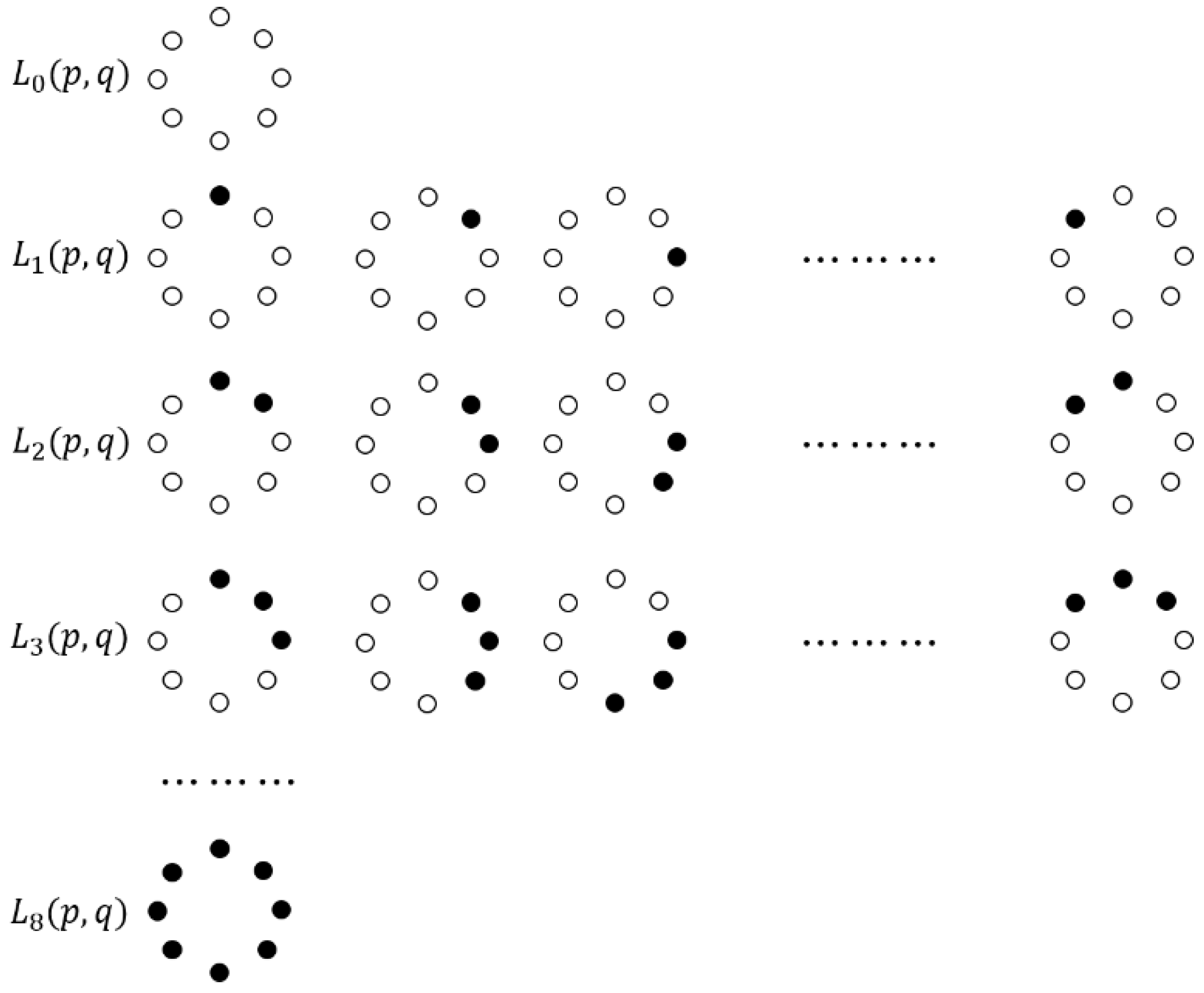

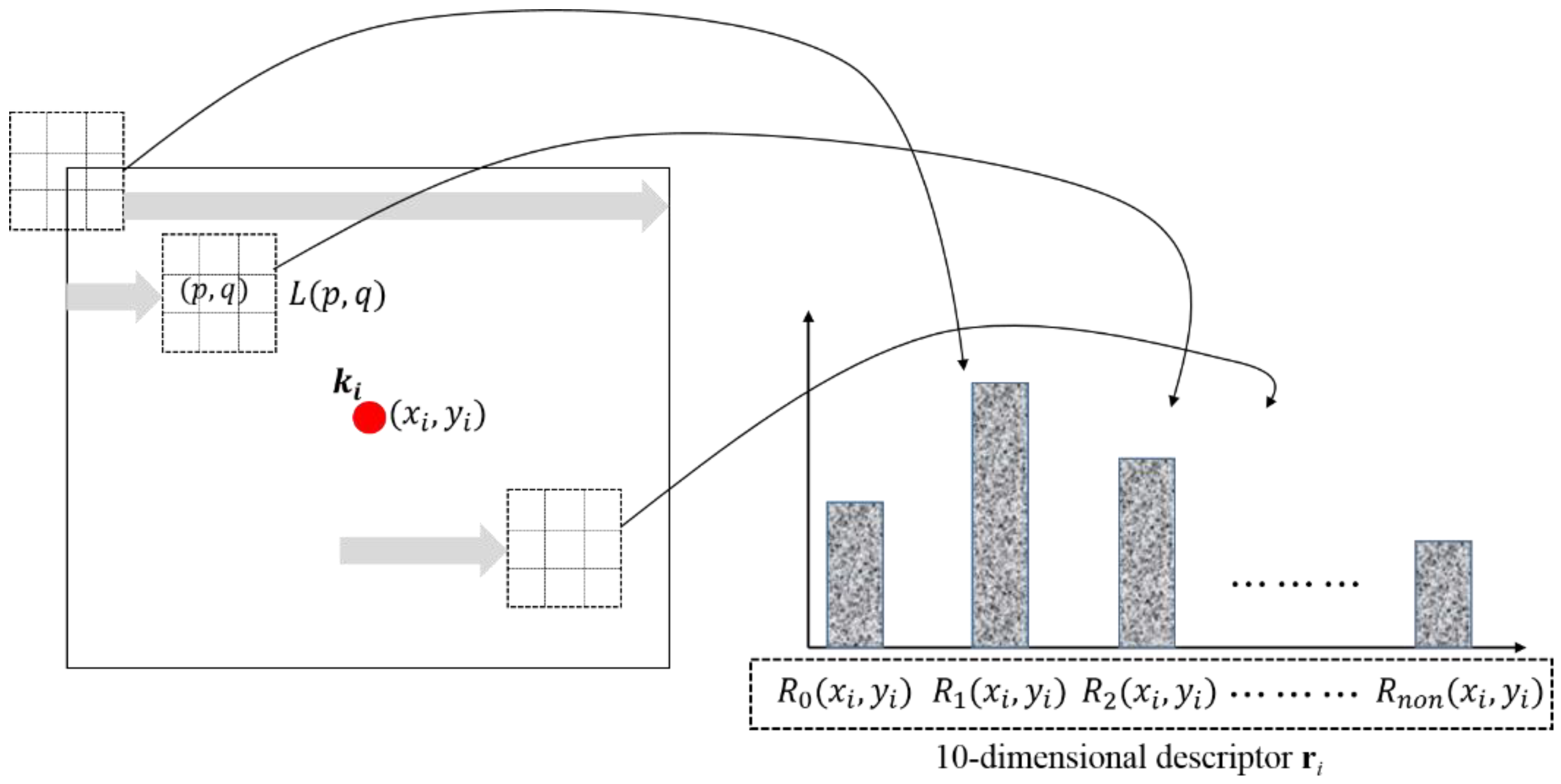

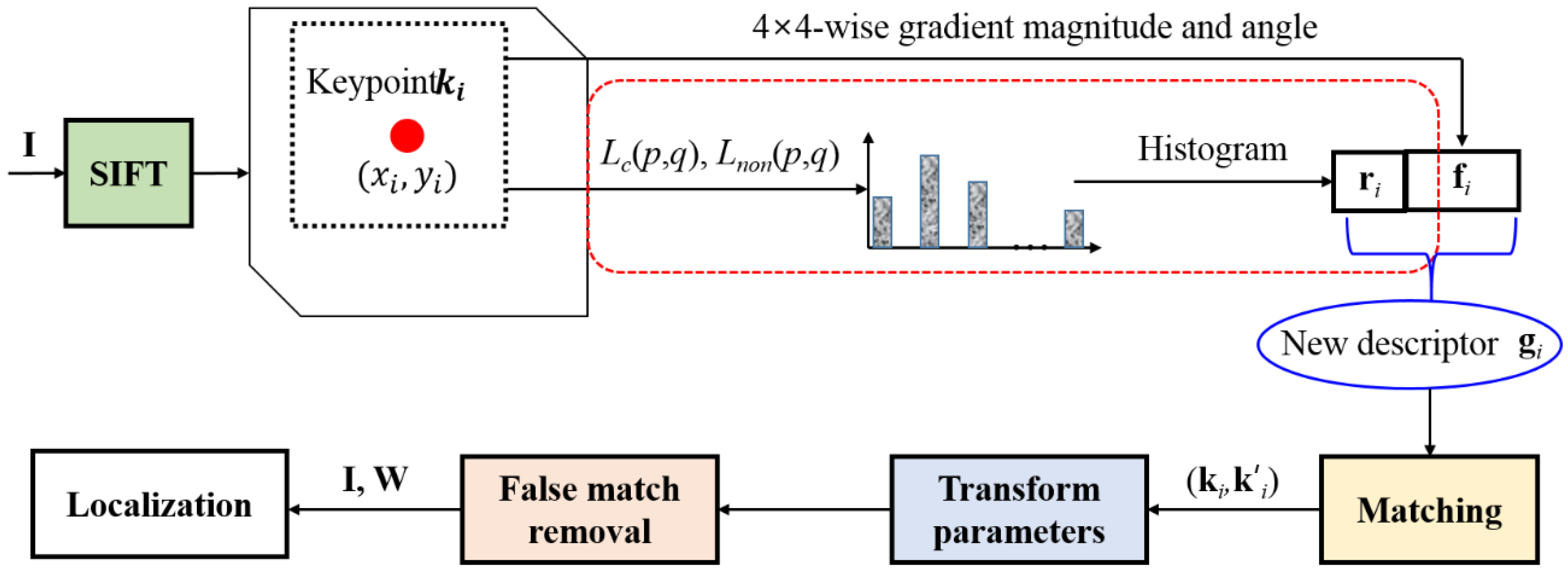

In this paper, we present an improved CMFD algorithm that supplements the SIFT descriptor with a new descriptor. The proposed descriptor, based on the LBP feature, captures global information around each keypoint. LBP is known to be sufficiently robust to small pixel changes. For this reason, the proposed descriptor is generated as a histogram of LBP values over a 16 × 16 window centered on the keypoint. LBP values are computed not for every pixel in the image but only around the keypoints detected by SIFT. Because a typical LBP takes 256 levels per pixel, we reduce the number of LBP levels to 10 to prevent an increase in the descriptor dimension. In total, the proposed descriptor for a keypoint has 138 dimensions (128 from SIFT and 10 from LBP). Experiments on various test datasets demonstrate that the proposed method detects CMFs more accurately than conventional methods.
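The proposed descriptor can be sketched under one explicit assumption: the reduction from 256 LBP levels to 10 is modeled here with the rotation-invariant uniform LBP (often written LBP^riu2) with 8 neighbors, which yields exactly 10 labels (0 to 8 for uniform patterns and 9 for all others). The paper's actual reduction, and any weighting between the two parts, may differ; all function names are hypothetical.

```python
import numpy as np

NEIGHBORS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]

def lbp_riu2(img, y, x):
    """Assumed 10-level LBP: rotation-invariant uniform LBP with 8 neighbors."""
    c = img[y, x]
    bits = [int(img[y + dy, x + dx] >= c) for dy, dx in NEIGHBORS]
    transitions = sum(bits[i] != bits[(i + 1) % 8] for i in range(8))
    return sum(bits) if transitions <= 2 else 9  # uniform -> count of ones, else label 9

def lbp_histogram_descriptor(gray, ky, kx, half=8):
    """Normalized 10-bin histogram of LBP labels in the 16 x 16 window at (ky, kx)."""
    hist = np.zeros(10)
    for y in range(ky - half, ky + half):
        for x in range(kx - half, kx + half):
            if 1 <= y < gray.shape[0] - 1 and 1 <= x < gray.shape[1] - 1:  # skip borders
                hist[lbp_riu2(gray, y, x)] += 1
    return hist / max(hist.sum(), 1.0)

def combined_descriptor(sift_desc, gray, ky, kx):
    """Concatenate a 128-D SIFT descriptor with the 10-D LBP histogram -> 138-D."""
    return np.concatenate([sift_desc, lbp_histogram_descriptor(gray, ky, kx)])
```

Because the histogram sums to one while a SIFT descriptor is unit-norm, the relative scaling of the two parts influences matching; this sketch simply concatenates them.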

The remainder of this paper is organized as follows. Section 2 introduces the basic process of the SIFT-based CMFD method and its limitations. Section 3 presents the proposed CMFD algorithm. In Section 4, the performance of the proposed method is compared with that of existing methods using experimental results. Section 5 presents the discussion, and Section 6 concludes the paper.