Emotion Classification Based on Biophysical Signals and Machine Learning Techniques

Abstract

1. Introduction

2. Materials and Methods

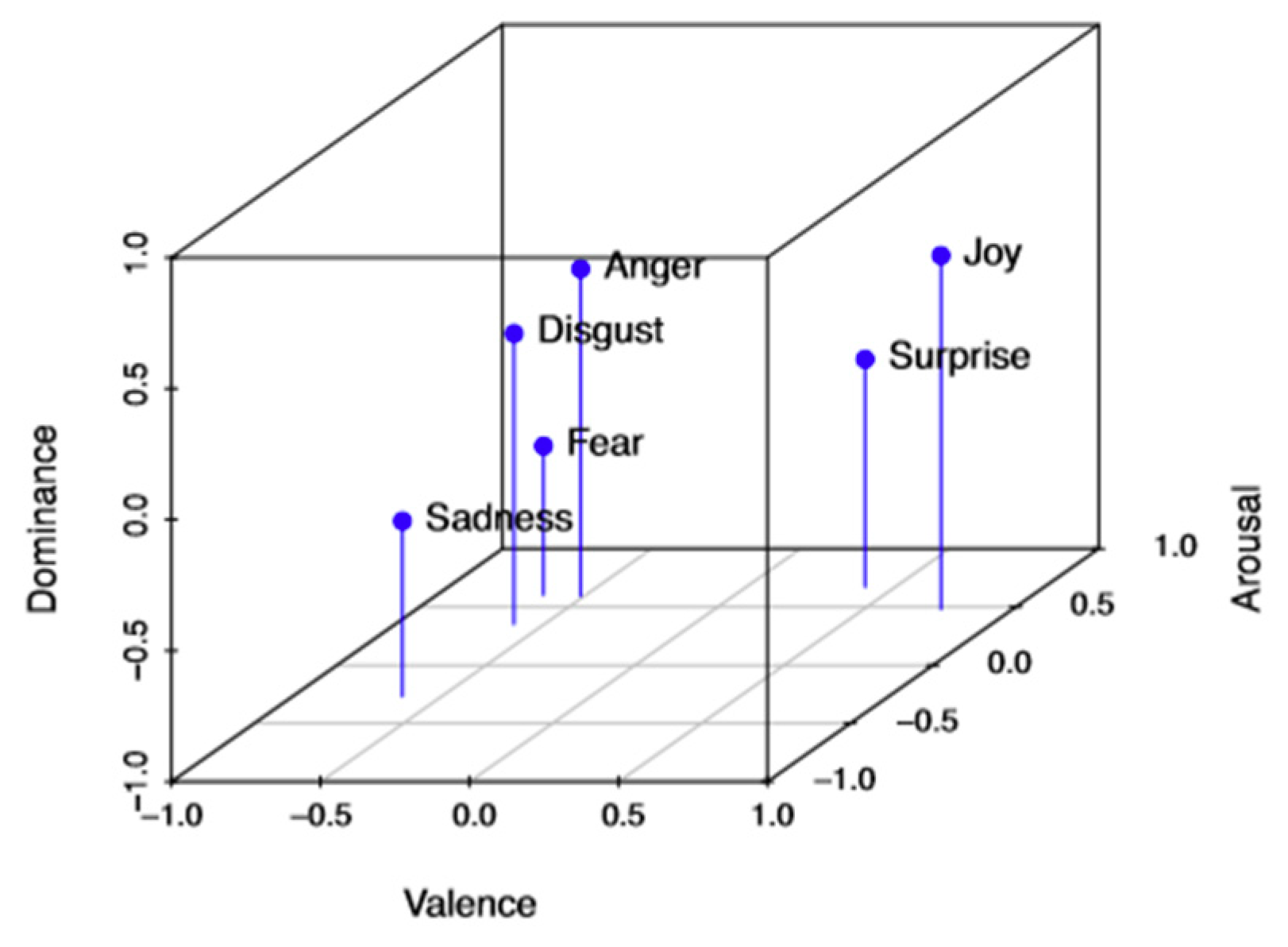

2.1. Emotion Models

2.2. Emotions and the Nervous System

2.3. The Six Basic Emotions and Their Corresponding Physiological Reactions

2.4. Biophysical Data

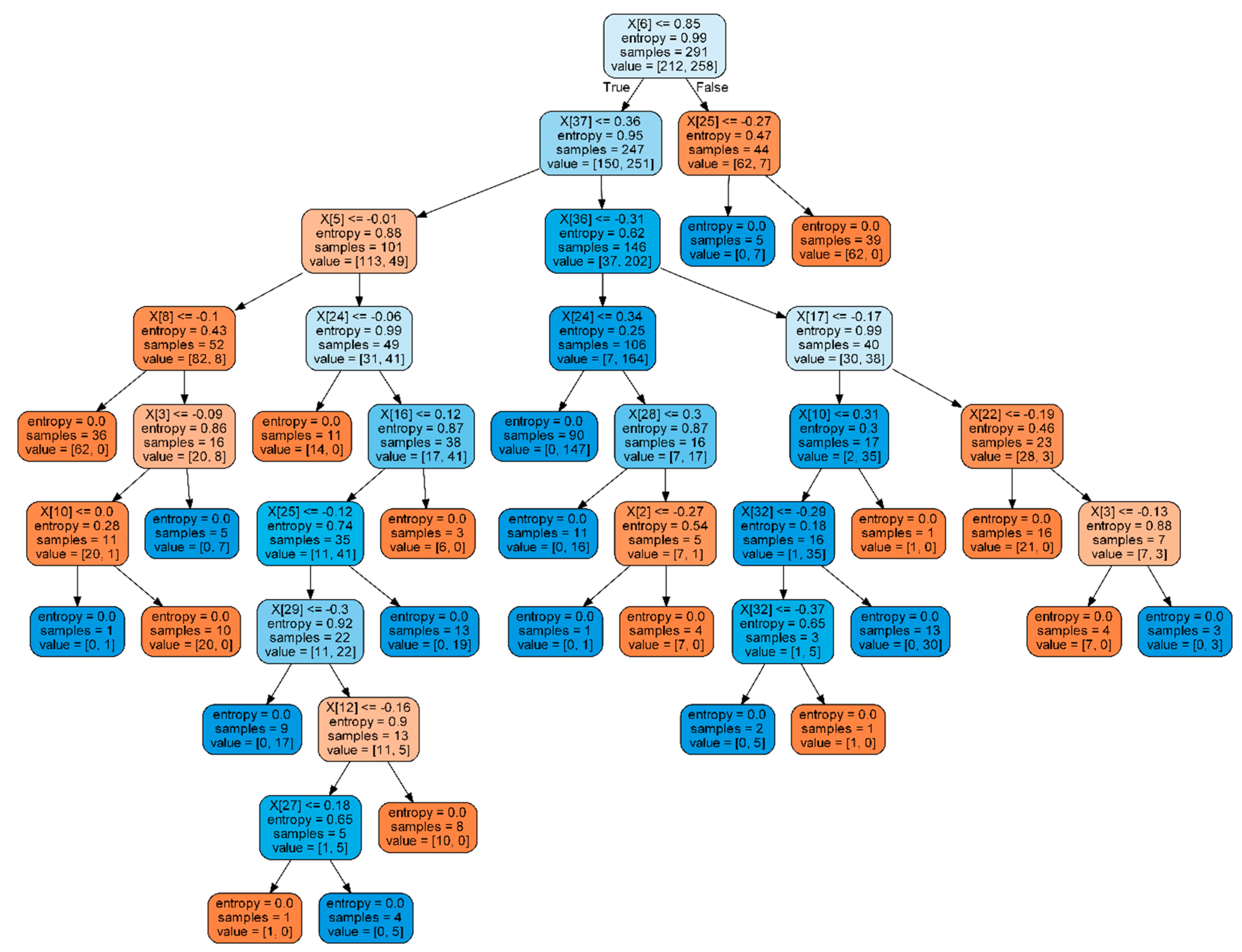

2.5. Machine Learning Techniques for Emotions Classification

2.6. Our Paradigm for Emotions Classification

3. Results

4. Discussion

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Scherer, K.R. What are emotions? And how can they be measured? Soc. Sci. Inf. 2005, 44, 693–727.

- Ekman, P.; Sorenson, E.R.; Friesen, W.V. Pan-cultural elements in facial displays of emotions. Science 1969, 164, 86–88.

- Russell, J.A.; Mehrabian, A. Evidence for a three-factor theory of emotions. J. Res. Personal. 1977, 11, 273–294.

- Koelstra, S.; Muehl, C.; Soleymani, M.; Lee, J.-S.; Yazdani, A.; Ebrahimi, T.; Pun, T.; Nijholt, A.; Patras, I. DEAP: A Database for Emotion Analysis using Physiological Signals. IEEE Trans. Affect. Comput. 2012, 3, 18–31.

- Soleymani, M.; Lichtenauer, J.; Pun, T.; Pantic, M. A multimodal database for affect recognition and implicit tagging. IEEE Trans. Affect. Comput. 2012, 3, 42–55.

- Chai, X.; Wang, Q.S.; Zhao, Y.P.; Liu, X.; Bai, O.; Li, Y.Q. Unsupervised domain adaptation techniques based on auto-encoder for non-stationary EEG-based emotion recognition. Comput. Biol. Med. 2016, 79, 205–214.

- Goshvarpour, A.; Abbasi, A. Dynamical analysis of emotional states from electroencephalogram signals. Biomed. Eng. Appl. Basis Commun. 2016, 28, 1650015.

- Bălan, O.; Moise, G.; Moldoveanu, A.; Leordeanu, M.; Moldoveanu, F. Fear Level Classification Based on Emotional Dimensions and Machine Learning Techniques. Sensors 2019, 19, 1738.

- Bălan, O.; Moise, G.; Moldoveanu, A.; Leordeanu, M.; Moldoveanu, F. Does automatic game difficulty level adjustment improve acrophobia therapy? Differences from baseline. In Proceedings of the 24th ACM Symposium on Virtual Reality Software and Technology, Tokyo, Japan, 28 November–1 December 2018.

- Bălan, O.; Moise, G.; Moldoveanu, A.; Leordeanu, M.; Moldoveanu, F. Automatic adaptation of exposure intensity in VR acrophobia therapy, based on deep neural networks. In Proceedings of the Twenty-Seventh European Conference on Information Systems (ECIS2019), Stockholm-Uppsala, Sweden, 8–14 June 2019.

- Ekman, P. Basic emotions. In Handbook of Cognition and Emotion; Dalgleish, T., Power, M., Eds.; John Wiley & Sons Ltd.: Hoboken, NJ, USA, 1999.

- Cohen, M.A. Against Basic Emotions, and Toward a Comprehensive Theory. J. Mind Behav. 2009, 26, 229–254.

- Plutchik, R. Emotion: A Psychoevolutionary Synthesis; Harper & Row: New York, NY, USA, 1980.

- Whissell, C. The dictionary of affect in language, Emotion: Theory, Research and Experience. In The Measurement of Emotions; Academic: New York, NY, USA, 1989; Volume 4.

- Russell, J.A. A circumplex model of Affect. J. Personal. Soc. Psychol. 1980, 39, 1161–1178.

- Whissell, C. Using the revised dictionary of affect in language to quantify the emotional undertones of samples of natural language. Psychol. Rep. 2009, 105, 509–521.

- Mehrabian, A.; Russell, J.A. An Approach to Environmental Psychology; The Massachusetts Institute of Technology: Cambridge, MA, USA, 1974.

- Mehrabian, A. Framework for a comprehensive description and measurement of emotional states. Genet. Soc. Gen. Psychol. Monogr. 1995, 121, 339–361.

- Mehrabian, A. Pleasure-Arousal-Dominance: A general framework for describing and measuring individual differences in temperament. Curr. Psychol. 1996, 14, 261–292.

- Buechel, S.; Hahn, U. Emotion analysis as a regression problem - Dimensional models and their implications on emotion representation and metrical evaluation. In Proceedings of the 22nd European Conference on Artificial Intelligence (ECAI), The Hague, The Netherlands, 29 August–2 September 2016.

- Schumann, C.M.; Bauman, M.D.; Amaral, D.G. Abnormal structure or function of the amygdala is a common component of neurodevelopmental disorders. Neuropsychologia 2011, 49, 745–759.

- Bos, D.O. EEG-based emotion recognition. Influ. Vis. Audit. Stimuli 2006, 56, 1–17.

- Suzuki, A. Emotional functions of the insula. Brain Nerve 2012, 64, 1103–1112.

- Craig, A.D. How do you feel now? The anterior insula and human awareness. Nat. Rev. Neurosci. 2009, 10, 59–70.

- Cha, J.; Greenberg, T.; Song, I.; Blair Simpson, H.; Posner, J.; Mujica-Parodi, L.R. Abnormal hippocampal structure and function in clinical anxiety and comorbid depression. Hippocampus 2016, 26, 545–553.

- Garcia-Rill, E.; Virmani, T.; Hyde, J.R.; D’Onofrio, S.; Mahaffey, S. Arousal and the control of perception and movement. Curr. Trends Neurol. 2016, 10, 53–64.

- Ekman, P.; Friesen, W.V. Unmasking the Face: A Guide to Recognizing Emotions from Facial Clues; Prentice-Hall: Englewood Cliffs, NJ, USA, 1975.

- Fusar-Poli, P.; Placentino, A.; Carletti, F.; Landi, P.; Allen, P.; Surguladze, S.; Benedetti, F.; Abbamonte, M.; Gasparotti, R.; Barale, F.; et al. Functional atlas of emotional faces processing: A voxel-based meta-analysis of 105 functional magnetic resonance imaging studies. J. Psychiatry Neurosci. 2009, 34, 418–432.

- Alkozei, A.; Killgore, W.D. Emotional intelligence is associated with reduced insula responses to masked angry faces. Neuroreport 2015, 26, 567–571.

- Chen, C.; Chou, C.; Wang, J. The personal characteristics of happiness: An EEG study. In Proceedings of the 2015 International Conference on Orange Technologies (ICOT), Hong Kong, China, 19–22 December 2015.

- Li, M.; Lu, B. Emotion classification based on gamma-band EEG. In Proceedings of the 2009 Annual International Conference of the IEEE Engineering in Medicine and Biology Society, Minneapolis, MN, USA, 3–6 September 2009.

- Abhang, P.A.; Gawali, B.W.; Mehrotra, S.C. Chapter 3 - Technical Aspects of Brain Rhythms and Speech Parameters. In Introduction to EEG and Speech-Based Emotion Recognition; Abhang, P.A., Gawali, B.W., Mehrotra, S.C., Eds.; Academic Press: Cambridge, MA, USA, 2016.

- Sequeira, H.; Hot, P.; Silvert, L.; Delplanque, S. Electrical autonomic correlates of emotion. Int. J. Psychophysiol. 2009, 71, 50–56.

- Shibasaki, M.; Crandall, C.G. Mechanisms and controllers of eccrine sweating in humans. Front. Biosci. (Sch. Ed.) 2010, 2, 685–696.

- Macefield, V.G.; Wallin, B.G. The discharge behaviour of single sympathetic neurones supplying human sweat glands. J. Auton. Nerv. Syst. 1996, 61, 277–286.

- Lidberg, L.; Wallin, B.G. Sympathetic skin nerve discharges in relation to amplitude of skin resistance responses. Psychophysiology 1981, 18, 268–270.

- Boucsein, W. Electrodermal Activity; Springer Science & Business Media: Berlin, Germany, 2012.

- Thayer, J.F.; Åhs, F.; Fredrikson, M.; Sollers, J.J., III; Wager, T.D. A meta-analysis of heart rate variability and neuroimaging studies: Implications for heart rate variability as a marker of stress and health. Neurosci. Biobehav. Rev. 2012, 36, 747–756.

- Mather, M.; Thayer, J. How heart rate variability affects emotion regulation brain networks. Curr. Opin. Behav. Sci. 2018, 19, 98–104.

- Homma, I.; Masaoka, Y. Breathing rhythms and emotions. Exp. Physiol. 2008, 93, 1011–1021.

- Marín-Morales, J.; Higuera-Trujillo, J.L.; Greco, A.; Guixeres, J.; Llinares, C.; Scilingo, E.P.; Alcañiz, M.; Valenza, G. Affective computing in virtual reality: Emotion recognition from brain and heartbeat dynamics using wearable sensors. Sci. Rep. 2018, 8, 13657.

- Atkinson, J.; Campos, D. Improving BCI-based emotion recognition by combining EEG feature selection and kernel classifiers. Expert Syst. Appl. 2016, 47, 35–41.

- Yoon, H.J.; Chung, S.Y. EEG-based emotion estimation using Bayesian weighted-log-posterior function and perceptron convergence algorithm. Comput. Biol. Med. 2013, 43, 2230–2237.

- Naser, D.S.; Saha, G. Recognition of emotions induced by music videos using dt-cwpt. In Proceedings of the 2013 Indian Conference on Medical Informatics and Telemedicine (ICMIT), Kharagpur, India, 28–30 March 2013.

- Daly, I.; Malik, A.; Weaver, J.; Hwang, F.; Nasuto, S.; Williams, D.; Kirke, A.; Miranda, E. Identifying music-induced emotions from EEG for use in brain computer music interfacing. In Proceedings of the 6th Affective Computing and Intelligent Interaction, Xi’an, China, 21–24 September 2015.

- Liu, Y.; Sourina, O. EEG databases for emotion recognition. In Proceedings of the 2013 International Conference on Cyberworlds (CW), Yokohama, Japan, 21–23 October 2013.

- Liu, C.; Rani, P.; Sarkar, N. An empirical study of machine learning techniques for affect recognition in human-robot interaction. In Proceedings of the International Conference on Intelligent Robots and Systems, IEEE, Edmonton, AB, Canada, 2–6 August 2005.

- Koelstra, S.; Yazdani, A.; Soleymani, M.; Muhl, C.; Lee, J.-S.; Nijholt, A.; Pun, T.; Ebrahimi, T.; Patras, I. Single trial classification of EEG and peripheral physiological signals for recognition of emotions induced by music videos. Brain Inform. Ser. Lect. Notes Comput. Sci. 2010, 6334, 89–100.

- Wiem, M.B.H.; Lachiri, Z. Emotion Classification in Arousal Valence Model using MAHNOB-HCI Database. Int. J. Adv. Comput. Sci. Appl. 2017, 8, 318–323.

- Alhagry, S.; Aly, A.; Reda, A. Emotion Recognition based on EEG using LSTM Recurrent Neural Network. Int. J. Adv. Comput. Sci. Appl. 2017, 8, 355–358.

- Jirayucharoensak, S.; Pan-Ngum, S.; Israsena, P. EEG-based emotion recognition using deep learning network with principal component-based covariate shift adaptation. Sci. World J. 2014, 2014, 627892.

- Salama, E.S.; El-Khoribi, R.A.; Shoman, M.E.; Shalaby, M.A.W. EEG-Based Emotion Recognition using 3D Convolutional Neural Networks. Int. J. Adv. Comput. Sci. Appl. 2018, 9, 329–337.

- Wen, W.; Liu, G.; Cheng, N.; Wei, J.; Shangguan, P.; Huang, W. Emotion Recognition Based on Multi-Variant Correlation of Physiological Signals. IEEE Trans. Affect. Comput. 2014, 5, 126–140.

- Higuchi, T. Approach to an irregular time series on the basis of the fractal theory. Physica D 1988, 31, 277–283.

- Katz, M. Fractals and the analysis of waveforms. Comput. Biol. Med. 1988, 18, 145–156.

- Petrosian, A. Kolmogorov complexity of finite sequences and recognition of different preictal EEG patterns. In Proceedings of the 8th IEEE Symposium on Computer-Based Medical Systems, Lubbock, TX, USA, 9–10 June 1995.

- Lee, G.M.; Fattinger, S.; Mouthon, A.L.; Noirhomme, Q.; Huber, R. Electroencephalogram approximate entropy influenced by both age and sleep. Front. Neuroinform. 2013, 7, 33.

- Burioka, N.; Miyata, M.; Cornélissen, G.; Halberg, F.; Takeshima, T.; Kaplan, D.T.; Suyama, H.; Endo, M.; Maegaki, Y.; Nomura, T.; et al. Approximate entropy in the electroencephalogram during wake and sleep. Clin. EEG Neurosci. 2005, 36, 21–24.

- PyEEG. Available online: http://pyeeg.sourceforge.net/ (accessed on 1 November 2019).

- Keras Deep Learning Library. Available online: https://keras.io/ (accessed on 1 November 2019).

- Scikit Learn Library. Available online: https://scikit-learn.org/stable/ (accessed on 1 November 2019).

- Soleymani, M.; Kierkels, J.; Chanel, G.; Pun, T. A Bayesian framework for video affective representation. In Proceedings of the 3rd International Conference on Affective Computing and Intelligent Interaction and Workshops, Amsterdam, The Netherlands, 10–12 September 2009; pp. 1–7.

- Stickel, C.; Ebner, M.; Steinbach-Nordmann, S.; Searle, G.; Holzinger, A. Emotion detection: Application of the valence arousal space for rapid biological usability testing to enhance universal access. In Universal Access in Human-Computer Interaction; Addressing Diversity, Lecture Notes in Computer Science, LNCS 5614; Stephanidis, C., Ed.; Springer: Berlin/Heidelberg, Germany, 2009; pp. 615–624.

- Picard, R. Affective Computing; MIT Press: Cambridge, MA, USA, 1997.

- Conn, K.; Liu, C.; Sarkar, N.; Stone, W.; Warren, Z. Towards Affect-sensitive Assistive Intervention Technologies for Children with Autism. In Affective Computing Focus on Emotion Expression, Synthesis and Recognition; Or, J., Ed.; I-Tech Education and Publishing: Vienna, Austria, 2008.

- Xu, T.; Zhou, Y.; Wang, Z.; Peng, Y. Learning Emotions EEG-based Recognition and Brain Activity: A Survey Study on BCI for Intelligent Tutoring System. Procedia Comput. Sci. 2018, 130, 376–382.

- Tkalčič, M.; Košir, A.; Tasič, J. Affective recommender systems: The role of emotions in recommender systems. In Proceedings of the RecSys Workshop Hum. Decision Making Recommender System, Chicago, IL, USA, 23–27 October 2011.

- Tkalčič, M. Emotions and personality in recommender systems: Tutorial. In Proceedings of the 12th ACM Conference on Recommender Systems (RecSys '18), Vancouver, BC, Canada, 2–7 October 2018; ACM: New York, NY, USA, 2018.

- Cambria, E. Affective Computing and Sentiment Analysis. IEEE Intell. Syst. 2016, 31, 102–107.

- Moldoveanu, A.; Ivascu, S.; Stanica, I.; Dascalu, M.; Lupu, R.; Ivanica, G.; Bălan, O.; Caraiman, S.; Ungureanu, F.; Moldoveanu, F.; et al. Mastering an Advanced Sensory Substitution Device for Visually Impaired through Innovative Virtual Training. In Proceedings of the 7th IEEE International Conference on Consumer Electronics, Berlin, Germany, 3–6 September 2017; ISBN 978-1-5090-4014-8. ISSN 2166-6822.

- Bălan, O.; Moldoveanu, A.; Nagy, H.; Wersenyi, G.; Stan, A.; Lupu, R. Haptic-Auditory Perceptual Feedback Based Training for Improving the Spatial Acoustic Resolution of the Visually Impaired People. In Proceedings of the 21st International Conference on Auditory Display, Graz, Austria, 6–10 July 2015.

- Holzinger, A.; Bruschi, M.; Eder, W. On interactive data visualization of physiological low-cost-sensor data with focus on mental stress. In Multidisciplinary Research and Practice for Information Systems; Alfredo Cuzzocrea, C.K., Dimitris, E.S., Weippl, E., Xu, L., Eds.; Springer Lecture Notes in Computer Science LNCS 8127; Springer: Heidelberg/Berlin, Germany, 2013; pp. 469–480.

- Holzinger, A.; Kieseberg, P.; Weippl, E.; Tjoa, A.M. Current advances, trends and challenges of machine learning and knowledge extraction: From machine learning to explainable AI. In Springer Lecture Notes in Computer Science LNCS 11015; Springer: Cham, Switzerland, 2018; pp. 1–8.

| Emotion | Valence | Arousal | Dominance |
|---|---|---|---|
| Anger | -0.43 | 0.67 | 0.34 |
| Joy | 0.76 | 0.48 | 0.35 |
| Surprise | 0.4 | 0.67 | -0.13 |
| Disgust | -0.6 | 0.35 | 0.11 |
| Fear | -0.64 | 0.6 | -0.43 |
| Sadness | -0.63 | 0.27 | -0.33 |

| Reference | Open Data Source | Classifiers | Classification | Feature Selection/Processing | Measure of Performance (%) |
|---|---|---|---|---|---|
| [4] 2012 | DEAP | Gaussian Naïve Bayes | Two-level class: arousal, valence, liking | Fisher's linear discriminant | F1 scores: 62.9 (arousal), 65.2 (valence), 64.2 (liking) |
| [42] 2016 | DEAP | SVM | Two-level class: arousal, valence | Minimum redundancy maximum relevance | Accuracy: 73.06 (arousal), 73.14 (valence) |
| [43] 2013 | DEAP | Probabilistic classifier based on Bayes' theorem | Two-level class: arousal, valence | Pearson correlation coefficient | F1 scores: 74.9 (high arousal), 62.8 (low arousal), 74.7 (high valence), 65.9 (low valence). Accuracy: 70.1 (high arousal), 70.9 (high valence) |
| [43] 2013 | DEAP | Probabilistic classifier based on Bayes' theorem | Three-level class: arousal, valence | Pearson correlation coefficient | F1 scores: 63.3 (high arousal), 43.3 (medium arousal), 53.9 (low arousal), 66.1 (high valence), 40.9 (medium valence), 51.8 (low valence). Accuracy: 55.2 (high arousal), 55.4 (high valence) |
| [44] 2013 | - | SVM | Two-level class | DT-CWPT | Accuracy: 66.20 (arousal), 64.30 (valence), 68.90 (dominance) |
| [45] 2015 | - | SVM | Two-level class | Stepwise linear regression | Accuracy: 62.4 (valence), 69.4 (arousal) |
| [45] 2015 | - | LDA | Two-level class | Stepwise linear regression | Accuracy: 65.6 (valence), 62.4 (arousal) |
| [46] 2013 | - | SVM | Discrete emotion (presence or not) | HOC + 6 statistical + FD | Accuracy. Audio database: 87.02 (2 emotions), 76.53 (2 emotions). Visual database: 61.67 (5 emotions), 56.6 (5 emotions) |
| [46] 2013 | DEAP | SVM | Discrete emotion (presence or not) | HOC + 6 statistical + FD | Accuracy: 83.73 (2 emotions), 53.7 (8 emotions) |
| [47] 2005 | - | kNN, RT, BNT, SVM | Three-level class | Entire feature set | Average accuracy: 75.12 (kNN), 83.50 (RT), 74.03 (BNT), 85.8 (SVM) |
| [48] 2010 | - | SVM | Two-level class | Fast correlation based filter (FCBF) | Average accuracy: 54.2 (valence), 58.9 (arousal), 57.9 (like/dislike) |
| [49] 2017 | MAHNOB-HCI | SVM Gaussian kernel | Two-level class | Feature fusion | Accuracy: 63.63 (arousal), 68.75 (valence) |
| [49] 2017 | MAHNOB-HCI | SVM Gaussian kernel | Three-level class | Feature fusion | Accuracy: 59.57 (arousal), 57.44 (valence) |
| [50] 2017 | DEAP | End-to-end deep learning neural networks | Two-level class | Raw EEG signals | Average accuracy: 85.65 (arousal), 85.45 (valence), 87.99 (liking) |
| [51] 2014 | DEAP | Deep learning network | Three-level class | PCA; CSA | Accuracy. PCA: 50.88 (valence), 48.64 (arousal). CSA: 53.42 (valence), 52.03 (arousal) |
| [52] 2018 | DEAP | 3D Convolutional Neural Networks | Two-level class | Spatiotemporal features obtained from EEG signals | F1 score: 86 (valence), 86 (arousal). Accuracy: 87.44 (valence), 88.49 (arousal) |
| [53] 2014 | - | RF | Quinary classification: amusement, anger, grief, fear, baseline | Correlation analysis and t-test | Correct rate: 25.6 (amusement), 36.4 (anger), 74.8 (grief), 80.1 (fear), 88.1 (baseline) |

| Emotion | Valence: Rating from [3] | Valence: Rating Adapted from the DEAP Database | Arousal: Rating from [3] | Arousal: Rating Adapted from the DEAP Database | Dominance: Rating from [3] | Dominance: Rating Adapted from the DEAP Database |
|---|---|---|---|---|---|---|
| Anger | -0.43 | Low [1; 5) | 0.67 | High [5; 9] | 0.34 | [6; 7] |
| Joy | 0.76 | High [5; 9] | 0.48 | High [5; 9] | 0.35 | [6; 7] |
| Surprise | 0.4 | High [5; 9] | 0.67 | High [5; 9] | -0.13 | [4; 5] |
| Disgust | -0.6 | Low [1; 5) | 0.35 | High [5; 9] | 0.11 | [5; 6] |
| Fear | -0.64 | Low [1; 5) | 0.6 | High [5; 9] | -0.43 | [3; 4] |
| Sadness | -0.63 | Low [1; 5) | 0.27 | Low [1; 5) | -0.33 | [3; 4] |
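The dominance intervals listed for the DEAP scale are consistent with a linear rescaling of the [-1, 1] ratings from [3] onto the 1 to 9 rating scale, followed by taking the enclosing unit interval. The surviving text gives no formula, so the sketch below is an assumption checked only against the six dominance values; `pad_to_deap` and `unit_interval` are hypothetical helper names. The arousal column does not follow the same rule for every emotion (sadness, 0.27, is listed as Low), so the sketch is restricted to dominance.

```python
import math

# Dominance ratings from [3], as listed in the table.
DOMINANCE = {
    "Anger": 0.34, "Joy": 0.35, "Surprise": -0.13,
    "Disgust": 0.11, "Fear": -0.43, "Sadness": -0.33,
}

def pad_to_deap(value):
    """Assumed linear map from [-1, 1] onto the 1-9 rating scale."""
    return 5.0 + 4.0 * value

def unit_interval(rating):
    """Return the (low, low + 1) interval that contains the rating."""
    low = math.floor(rating)
    return (low, low + 1)

for emotion, value in DOMINANCE.items():
    rating = pad_to_deap(value)
    print(emotion, round(rating, 2), unit_interval(rating))
```

Under this assumption anger (0.34) maps to a rating of 6.36, which falls in [6; 7], matching the table.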

| Emotion | Condition | Valence | Arousal | Dominance |
|---|---|---|---|---|
| Anger | No anger (0) | High [5; 9] | Low [1; 5) | [1; 6) or (7; 9] |
| | Anger (1) | Low [1; 5) | High [5; 9] | [6; 7] |
| Joy | No joy (0) | Low [1; 5) | Low [1; 5) | [1; 6) or (7; 9] |
| | Joy (1) | High [5; 9] | High [5; 9] | [6; 7] |
| Surprise | No surprise (0) | Low [1; 5) | Low [1; 5) | [1; 4) or (5; 9] |
| | Surprise (1) | High [5; 9] | High [5; 9] | [4; 5] |
| Disgust | No disgust (0) | High [5; 9] | Low [1; 5) | [1; 5) or (6; 9] |
| | Disgust (1) | Low [1; 5) | High [5; 9] | [5; 6] |
| Fear | No fear (0) | High [5; 9] | Low [1; 5) | [1; 3) or (4; 9] |
| | Fear (1) | Low [1; 5) | High [5; 9] | [3; 4] |
| Sadness | No sadness (0) | High [5; 9] | High [5; 9] | [1; 3) or (4; 9] |
| | Sadness (1) | Low [1; 5) | Low [1; 5) | [3; 4] |
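The condition table can be read as a labeling rule: a trial is labeled 1 when its valence, arousal and dominance ratings all fall in the emotion's intervals, 0 when all three fall in the opposite intervals, and is left out otherwise. The following is a minimal sketch of that reading; the `RULES` encoding and the `label` function are hypothetical names, and returning `None` for trials that match neither row is an assumption.

```python
# emotion: (valence is High for condition 1, arousal is High for condition 1,
#           dominance interval for condition 1), copied from the table.
RULES = {
    "anger":    (False, True,  (6, 7)),
    "joy":      (True,  True,  (6, 7)),
    "surprise": (True,  True,  (4, 5)),
    "disgust":  (False, True,  (5, 6)),
    "fear":     (False, True,  (3, 4)),
    "sadness":  (False, False, (3, 4)),
}

def is_high(rating):
    return 5 <= rating <= 9

def is_low(rating):
    return 1 <= rating < 5

def label(emotion, valence, arousal, dominance):
    """Return 1, 0, or None (trial matches neither condition)."""
    v_high, a_high, (d_lo, d_hi) = RULES[emotion]
    d_inside = d_lo <= dominance <= d_hi
    v_match = is_high(valence) if v_high else is_low(valence)
    a_match = is_high(arousal) if a_high else is_low(arousal)
    if v_match and a_match and d_inside:
        return 1
    # Condition 0 uses the opposite valence/arousal ranges and a
    # dominance rating outside the emotion's interval.
    v_opposite = is_low(valence) if v_high else is_high(valence)
    a_opposite = is_low(arousal) if a_high else is_high(arousal)
    if v_opposite and a_opposite and 1 <= dominance <= 9 and not d_inside:
        return 0
    return None

print(label("anger", valence=3.0, arousal=7.0, dominance=6.5))
print(label("anger", valence=7.0, arousal=3.0, dominance=2.0))
```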

| Emotion | Number of entries, Condition 1 | Number of entries, Condition 0 | Total number of entries (5 s long) |
|---|---|---|---|
| Anger | Anger: 28 | No anger: 239 | 672 |
| Joy | Joy: 117 | No joy: 249 | 2808 |
| Surprise | Surprise: 201 | No surprise: 233 | 4824 |
| Disgust | Disgust: 61 | No disgust: 186 | 1464 |
| Fear | Fear: 81 | No fear: 160 | 1944 |
| Sadness | Sadness: 89 | No sadness: 337 | 2136 |
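Every total in this table equals 24 times the condition 1 count. One reading that reproduces all six totals is that the two conditions are balanced to the size of the smaller one and each trial is cut into twelve 5 s windows. This is an inference from the numbers, not something the surviving text states; the 60 s trial length and the helper name `balanced_entries` are assumptions.

```python
TRIAL_SECONDS = 60   # assumed usable length of one trial
WINDOW_SECONDS = 5   # from the column header ("5 s long")

# emotion: (condition 1 trials, condition 0 trials, reported total)
COUNTS = {
    "Anger": (28, 239, 672),
    "Joy": (117, 249, 2808),
    "Surprise": (201, 233, 4824),
    "Disgust": (61, 186, 1464),
    "Fear": (81, 160, 1944),
    "Sadness": (89, 337, 2136),
}

def balanced_entries(n_cond1, n_cond0):
    """Entries after balancing both classes and windowing each trial."""
    windows_per_trial = TRIAL_SECONDS // WINDOW_SECONDS
    trials_per_class = min(n_cond1, n_cond0)
    return 2 * trials_per_class * windows_per_trial

for emotion, (n1, n0, reported) in COUNTS.items():
    print(emotion, balanced_entries(n1, n0), reported)
```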

Emotion: Anger

| Type of Feature Selection | Classifier | Raw: F1 Score (%) | Raw: Accuracy (%) | Petrosian: F1 Score (%) | Petrosian: Accuracy (%) | Higuchi Fractal Dimension: F1 Score (%) | Higuchi Fractal Dimension: Accuracy (%) | Approximate Entropy: F1 Score (%) | Approximate Entropy: Accuracy (%) |
|---|---|---|---|---|---|---|---|---|---|
| No feature selection | DNN1 | 91.22 | 91.22 | 90.03 | 90.03 | 90.03 | 90.03 | 74.46 | 74.55 |
| | DNN2 | 87.80 | 87.76 | 87.05 | 87.04 | 81.24 | 81.25 | 73.06 | 73.07 |
| | DNN3 | 93.30 | 93.30 | 87.50 | 87.50 | 89.73 | 89.73 | 75.15 | 75.15 |
| | DNN4 | 88.39 | 88.39 | 84.97 | 84.96 | 80.08 | 80.21 | 71.20 | 71.28 |
| | SVM | 92.57 | 92.58 | 98.02 | 98.02 | 98.02 | 98.02 | 68.28 | 68.32 |
| | RF | 96.04 | 96.04 | 95.05 | 95.04 | 97.52 | 97.52 | 92.55 | 92.57 |
| | LDA | 85.64 | 85.63 | 92.08 | 92.08 | 96.04 | 96.04 | 68.81 | 68.81 |
| | kNN | 93.56 | 93.53 | 95.05 | 95.04 | 97.03 | 97.03 | 85.15 | 85.15 |
| Fisher | SVM | 86.14 | 86.04 | 95.05 | 95.05 | 94.05 | 94.06 | 69.70 | 69.80 |
| | RF | 95.54 | 95.54 | 92.57 | 92.56 | 88.15 | 88.12 | 92.08 | 92.08 |
| | LDA | 80.69 | 80.64 | 93.07 | 93.07 | 87.12 | 87.13 | 61.74 | 62.38 |
| | kNN | 97.52 | 97.52 | 93.56 | 93.55 | 93.56 | 93.56 | 88.09 | 88.12 |
| PCA | SVM | 93.42 | 93.42 | 97.67 | 97.67 | 98.32 | 98.32 | 81.93 | 82.08 |
| | RF | 92.43 | 92.42 | 92.28 | 92.26 | 93.62 | 93.61 | 86.42 | 86.44 |
| | LDA | 81.24 | 81.15 | 91.73 | 91.73 | 94.75 | 94.75 | 65.93 | 66.09 |
| | kNN | 93.96 | 93.95 | 95.05 | 95.05 | 95.59 | 95.59 | 87.37 | 87.38 |
| SFS | SVM | 91 | 91 | 91 | 91 | 84 | 84 | 71 | 71 |
| | RF | 86 | 86 | 86 | 86 | 83 | 83 | 78 | 78 |
| | LDA | 91 | 91 | 91 | 91 | 85 | 85 | 66 | 66 |
| | kNN | 91 | 91 | 91 | 91 | 83 | 83 | 79 | 79 |
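The "Petrosian" columns refer to the Petrosian fractal dimension (Petrosian, 1995, in the reference list). As a minimal sketch, the commonly used definition is PFD = log10(n) / (log10(n) + log10(n / (n + 0.4 N_delta))), where n is the number of samples and N_delta is the number of sign changes in the first difference of the signal. The function name `petrosian_fd` is hypothetical, and this is not necessarily the exact routine used to produce the table.

```python
import math

def petrosian_fd(signal):
    """Petrosian fractal dimension of a 1-D sequence of numbers."""
    n = len(signal)
    # First difference of the signal.
    diff = [b - a for a, b in zip(signal, signal[1:])]
    # Number of sign changes between consecutive differences.
    n_delta = sum(1 for a, b in zip(diff, diff[1:]) if a * b < 0)
    return math.log10(n) / (
        math.log10(n) + math.log10(n / (n + 0.4 * n_delta))
    )

ramp = [float(i) for i in range(100)]   # no sign changes
zigzag = [0.0, 1.0] * 50                # a sign change at every step
print(petrosian_fd(ramp), petrosian_fd(zigzag))
```

A monotone ramp has no sign changes and yields exactly 1, while the zigzag yields a value above 1, which is the behaviour expected from a measure of waveform irregularity.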

Emotion: Joy

| Type of Feature Selection | Classifier | Raw: F1 Score (%) | Raw: Accuracy (%) | Petrosian: F1 Score (%) | Petrosian: Accuracy (%) | Higuchi Fractal Dimension: F1 Score (%) | Higuchi Fractal Dimension: Accuracy (%) | Approximate Entropy: F1 Score (%) | Approximate Entropy: Accuracy (%) |
|---|---|---|---|---|---|---|---|---|---|
| No feature selection | DNN1 | 82.29 | 82.30 | 80.62 | 80.63 | 79.46 | 79.49 | 69.96 | 70.16 |
| | DNN2 | 80.30 | 80.34 | 78.10 | 78.10 | 76.14 | 76.18 | 67.24 | 67.38 |
| | DNN3 | 83.62 | 83.65 | 80.91 | 80.91 | 80.51 | 80.52 | 72.14 | 72.15 |
| | DNN4 | 81.58 | 81.59 | 79.02 | 79.02 | 76.80 | 76.85 | 67.17 | 67.45 |
| | SVM | 83.60 | 83.63 | 86.47 | 86.48 | 84.45 | 84.46 | 65.15 | 65.95 |
| | RF | 90.25 | 90.27 | 86.31 | 86.36 | 87.57 | 87.66 | 86.40 | 86.48 |
| | LDA | 71.63 | 71.65 | 70.94 | 70.94 | 72.12 | 72.12 | 65.02 | 65.12 |
| | kNN | 91.22 | 91.22 | 87.90 | 87.90 | 87.60 | 87.60 | 83.35 | 83.39 |
| Fisher | SVM | 78.48 | 78.65 | 83.98 | 83.99 | 79.58 | 79.60 | 68.65 | 69.16 |
| | RF | 89.55 | 89.56 | 80.64 | 80.78 | 81.09 | 81.14 | 80.37 | 80.43 |
| | LDA | 64.03 | 64.29 | 68.82 | 68.92 | 67.03 | 67.02 | 64.44 | 64.53 |
| | kNN | 89.92 | 89.92 | 85.76 | 85.77 | 83.75 | 83.75 | 74.01 | 74.02 |
| PCA | SVM | 83.17 | 83.21 | 86.39 | 86.39 | 84.95 | 84.96 | 72.01 | 72.41 |
| | RF | 88.48 | 88.51 | 81.83 | 81.91 | 84.91 | 84.95 | 82.20 | 82.20 |
| | LDA | 70.62 | 70.77 | 71.71 | 71.71 | 72.32 | 72.33 | 63.54 | 63.74 |
| | kNN | 89.83 | 89.83 | 88.08 | 88.08 | 87.55 | 87.56 | 82.25 | 82.27 |
| SFS | SVM | 98 | 98 | 67 | 67 | 67 | 67 | 66 | 66 |
| | RF | 96 | 96 | 70 | 70 | 70 | 70 | 70 | 70 |
| | LDA | 100 | 100 | 65 | 65 | 65 | 65 | 61 | 61 |
| | kNN | 98 | 98 | 66 | 66 | 66 | 66 | 66 | 66 |
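The "Higuchi Fractal Dimension" columns refer to Higuchi's method (Higuchi, 1988, in the reference list): the curve length L(k) is measured on subsampled copies of the signal at lags k = 1..k_max, and the dimension is the slope of ln L(k) against ln(1/k). The sketch below is a plain-Python illustration of that procedure; the name `higuchi_fd` and the default `k_max=8` are assumptions, and the code assumes a non-constant signal that is much longer than `k_max`.

```python
import math

def higuchi_fd(signal, k_max=8):
    """Higuchi fractal dimension via a least-squares slope."""
    n = len(signal)
    xs, ys = [], []
    for k in range(1, k_max + 1):
        lengths = []
        for m in range(k):
            n_steps = (n - 1 - m) // k
            if n_steps < 1:
                continue
            dist = sum(
                abs(signal[m + i * k] - signal[m + (i - 1) * k])
                for i in range(1, n_steps + 1)
            )
            # Normalised curve length for start offset m and lag k.
            lengths.append(dist * (n - 1) / (n_steps * k) / k)
        mean_len = sum(lengths) / len(lengths)
        xs.append(math.log(1.0 / k))
        ys.append(math.log(mean_len))
    # Least-squares slope of ln L(k) against ln(1/k).
    x_bar = sum(xs) / len(xs)
    y_bar = sum(ys) / len(ys)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    den = sum((x - x_bar) ** 2 for x in xs)
    return num / den

line = [float(i) for i in range(100)]
print(higuchi_fd(line))
```

For a straight line L(k) is proportional to 1/k, so the estimate is 1; irregular, noise-like signals give values approaching 2.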

Emotion: Surprise

| Type of Feature Selection | Classifier | Raw: F1 Score (%) | Raw: Accuracy (%) | Petrosian: F1 Score (%) | Petrosian: Accuracy (%) | Higuchi Fractal Dimension: F1 Score (%) | Higuchi Fractal Dimension: Accuracy (%) | Approximate Entropy: F1 Score (%) | Approximate Entropy: Accuracy (%) |
|---|---|---|---|---|---|---|---|---|---|
| No feature selection | DNN1 | 70.89 | 70.94 | 78.04 | 78.05 | 76.41 | 76.43 | 71.50 | 71.54 |
| | DNN2 | 69.74 | 69.88 | 74.94 | 74.94 | 74.19 | 74.23 | 69.55 | 69.57 |
| | DNN3 | 71.41 | 71.41 | 77.67 | 77.67 | 78.05 | 78.11 | 70.47 | 71.02 |
| | DNN4 | 68.92 | 68.93 | 76.12 | 76.12 | 74.42 | 74.42 | 69.61 | 69.94 |
| | SVM | 71.52 | 71.75 | 80.92 | 80.94 | 81.84 | 81.84 | 63.25 | 65.06 |
| | RF | 83.91 | 83.98 | 82.12 | 82.25 | 80.51 | 80.80 | 81.22 | 81.49 |
| | LDA | 59.79 | 59.88 | 63.73 | 63.74 | 67.73 | 67.75 | 59.74 | 59.74 |
| | kNN | 85.01 | 85.01 | 84.74 | 84.74 | 83.64 | 83.63 | 81.30 | 81.30 |
| Fisher | SVM | 70.37 | 70.86 | 75.76 | 75.76 | 72.50 | 72.51 | 62.21 | 63.54 |
| | RF | 81.85 | 81.91 | 76.97 | 77.07 | 80.69 | 80.80 | 82.59 | 82.73 |
| | LDA | 62.52 | 62.71 | 58.48 | 58.49 | 60.43 | 60.43 | 59.58 | 59.60 |
| | kNN | 80.94 | 80.94 | 80.59 | 80.59 | 79.14 | 79.14 | 79.42 | 79.42 |
| PCA | SVM | 73.57 | 73.71 | 80.74 | 80.74 | 80.20 | 80.20 | 70.46 | 70.50 |
| | RF | 81.34 | 81.40 | 79.21 | 79.29 | 81.47 | 81.52 | 78.36 | 78.42 |
| | LDA | 60.65 | 60.71 | 65.60 | 65.62 | 66.81 | 66.84 | 60.02 | 60.12 |
| | kNN | 83.60 | 83.60 | 84.81 | 84.81 | 82.94 | 82.94 | 79.71 | 79.72 |
| SFS | SVM | 96 | 96 | 61 | 61 | 61 | 61 | 61 | 61 |
| | RF | 90 | 90 | 66 | 66 | 64 | 64 | 65 | 65 |
| | LDA | 93 | 93 | 58 | 58 | 58 | 58 | 61 | 61 |
| | kNN | 92 | 92 | 62 | 62 | 62 | 62 | 63 | 63 |
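The "Approximate Entropy" columns refer to ApEn, a regularity statistic: ApEn(m, r) = phi(m) - phi(m + 1), where phi(m) is the average log-fraction of length-m templates lying within tolerance r (Chebyshev distance) of each template. The sketch below is a direct quadratic-time illustration; the defaults m = 2 and r = 0.2 times the standard deviation are conventional choices assumed here, not values stated in the surviving text, and `approximate_entropy` is a hypothetical name.

```python
import math

def _phi(signal, m, r):
    """Average log-fraction of length-m templates within tolerance r."""
    templates = [signal[i:i + m] for i in range(len(signal) - m + 1)]
    total = 0.0
    for a in templates:
        # Self-matches are counted, so the fraction is never zero.
        matches = sum(
            1 for b in templates
            if max(abs(x - y) for x, y in zip(a, b)) <= r
        )
        total += math.log(matches / len(templates))
    return total / len(templates)

def approximate_entropy(signal, m=2, r=None):
    if r is None:
        mean = sum(signal) / len(signal)
        std = math.sqrt(sum((x - mean) ** 2 for x in signal) / len(signal))
        r = 0.2 * std  # assumed conventional tolerance
    return _phi(signal, m, r) - _phi(signal, m + 1, r)

print(approximate_entropy([1.0] * 50))        # constant signal
print(approximate_entropy([0.0, 1.0] * 50))   # strictly periodic signal
```

Both test signals are fully predictable, so their ApEn is zero or very close to zero; less regular signals give larger values.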

Emotion: Disgust

| Type of Feature Selection | Classifier | Raw: F1 Score (%) | Raw: Accuracy (%) | Petrosian: F1 Score (%) | Petrosian: Accuracy (%) | Higuchi Fractal Dimension: F1 Score (%) | Higuchi Fractal Dimension: Accuracy (%) | Approximate Entropy: F1 Score (%) | Approximate Entropy: Accuracy (%) |
|---|---|---|---|---|---|---|---|---|---|
| No feature selection | DNN1 | 84.65 | 84.70 | 85.04 | 85.04 | 87.08 | 87.09 | 68.90 | 68.99 |
| | DNN2 | 80.80 | 80.81 | 84.02 | 84.02 | 82.79 | 82.79 | 65.38 | 65.71 |
| | DNN3 | 87.07 | 87.09 | 85.92 | 85.93 | 87.70 | 87.70 | 67.84 | 68.44 |
| | DNN4 | 82.57 | 82.65 | 83.54 | 83.54 | 81.56 | 81.56 | 65.96 | 66.80 |
| | SVM | 86.82 | 86.82 | 91.13 | 91.14 | 91.59 | 91.59 | 64.27 | 65 |
| | RF | 93.63 | 93.64 | 91.36 | 91.36 | 90.19 | 90.23 | 83.14 | 83.18 |
| | LDA | 74.72 | 74.77 | 84.32 | 84.32 | 85.91 | 85.91 | 58.72 | 59.09 |
| | kNN | 92.03 | 92.05 | 95 | 95 | 91.36 | 91.36 | 82.25 | 82.27 |
| Fisher | SVM | 83.20 | 83.18 | 90 | 90 | 90.22 | 90.23 | 64.77 | 65.45 |
| | RF | 89.32 | 89.32 | 84.52 | 84.55 | 90.23 | 90.23 | 75.26 | 75.23 |
| | LDA | 72.27 | 72.27 | 80.45 | 80.45 | 83.39 | 83.41 | 61.97 | 62.73 |
| | kNN | 89.74 | 89.77 | 88.64 | 88.64 | 89.31 | 89.32 | 66.60 | 66.59 |
| PCA | SVM | 86.40 | 86.41 | 92.93 | 92.93 | 93.55 | 93.55 | 74.77 | 74.95 |
| | RF | 87.42 | 87.43 | 87.64 | 87.66 | 90.11 | 90.11 | 78.74 | 78.80 |
| | LDA | 72.37 | 72.43 | 85.28 | 85.30 | 87.52 | 87.52 | 61.61 | 62 |
| | kNN | 90.84 | 90.84 | 93.89 | 93.89 | 92.43 | 92.43 | 82.05 | 82.05 |
| SFS | SVM | 72 | 72 | 73 | 73 | 75 | 75 | 64 | 64 |
| | RF | 65 | 65 | 65 | 65 | 66 | 66 | 62 | 62 |
| | LDA | 76 | 76 | 77 | 77 | 74 | 74 | 62 | 62 |
| | kNN | 74 | 74 | 73 | 73 | 67 | 67 | 57 | 57 |

Emotion: Fear

| Type of Feature Selection | Classifier | Raw: F1 Score (%) | Raw: Accuracy (%) | Petrosian: F1 Score (%) | Petrosian: Accuracy (%) | Higuchi Fractal Dimension: F1 Score (%) | Higuchi Fractal Dimension: Accuracy (%) | Approximate Entropy: F1 Score (%) | Approximate Entropy: Accuracy (%) |
|---|---|---|---|---|---|---|---|---|---|
| No feature selection | DNN1 | 82.86 | 82.87 | 78.54 | 78.55 | 81.22 | 81.22 | 66.96 | 67.03 |
| | DNN2 | 79.45 | 79.53 | 75.31 | 75.31 | 79.83 | 79.84 | 63.69 | 63.84 |
| | DNN3 | 84.88 | 84.93 | 78.61 | 78.65 | 80.97 | 81.02 | 67.25 | 67.34 |
| | DNN4 | 82.33 | 82.46 | 75.87 | 75.87 | 78.65 | 78.65 | 63.26 | 63.32 |
| | SVM | 80.21 | 80.48 | 86.82 | 86.82 | 87.15 | 87.16 | 66.02 | 66.95 |
| | RF | 89.52 | 89.55 | 88.20 | 88.18 | 84.41 | 84.42 | 79.26 | 79.28 |
| | LDA | 68.64 | 68.66 | 70.93 | 71.23 | 77.86 | 77.91 | 57.15 | 57.36 |
| | kNN | 90.75 | 90.75 | 89.72 | 89.73 | 89.04 | 89.04 | 80.66 | 80.65 |
| Fisher | SVM | 74.37 | 74.49 | 78.50 | 78.60 | 82.36 | 82.36 | 67.72 | 68.84 |
| | RF | 88.85 | 88.87 | 78.18 | 78.25 | 80.39 | 80.48 | 79.27 | 79.28 |
| | LDA | 65.24 | 65.24 | 69.39 | 69.52 | 72.43 | 72.43 | 59.32 | 59.76 |
| | kNN | 86.98 | 86.99 | 80.82 | 80.82 | 83.39 | 83.39 | 79.42 | 79.45 |
| PCA | SVM | 80.53 | 80.77 | 87.25 | 87.26 | 89.77 | 89.78 | 72.73 | 73.39 |
| | RF | 86.98 | 87.02 | 82.71 | 82.77 | 86.74 | 86.76 | 76.75 | 76.78 |
| | LDA | 62.09 | 62.14 | 73.18 | 73.20 | 77.19 | 77.19 | 57.62 | 57.69 |
| | kNN | 89.21 | 89.23 | 89.95 | 89.95 | 89.38 | 89.38 | 82.25 | 82.26 |
| SFS | SVM | 65 | 65 | 66 | 66 | 65 | 65 | 60 | 60 |
| | RF | 61 | 61 | 61 | 61 | 62 | 62 | 61 | 61 |
| | LDA | 69 | 69 | 69 | 69 | 73 | 73 | 59 | 59 |
| | kNN | 61 | 61 | 61 | 61 | 65 | 65 | 59 | 59 |

Emotion: Sadness

| Type of Feature Selection | Classifier | Raw: F1 Score (%) | Raw: Accuracy (%) | Petrosian: F1 Score (%) | Petrosian: Accuracy (%) | Higuchi Fractal Dimension: F1 Score (%) | Higuchi Fractal Dimension: Accuracy (%) | Approximate Entropy: F1 Score (%) | Approximate Entropy: Accuracy (%) |
|---|---|---|---|---|---|---|---|---|---|
| No feature selection | DNN1 | 80.17 | 80.20 | 81.79 | 81.79 | 83.70 | 83.71 | 68.11 | 68.12 |
| | DNN2 | 78.52 | 78.56 | 79.07 | 79.07 | 82.49 | 82.49 | 67.39 | 67.46 |
| | DNN3 | 81.72 | 81.74 | 81.95 | 81.98 | 83.31 | 83.33 | 69.71 | 69.76 |
| | DNN4 | 79.56 | 79.59 | 79.19 | 79.21 | 83.13 | 83.15 | 65.90 | 66.10 |
| | SVM | 76.26 | 76.91 | 86.90 | 86.90 | 90.80 | 90.80 | 65.21 | 65.68 |
| | RF | 87.49 | 87.52 | 84.18 | 84.24 | 84.52 | 84.56 | 81.86 | 81.90 |
| | LDA | 69.37 | 69.42 | 75.82 | 75.82 | 82.37 | 82.37 | 47.12 | 51.17 |
| | kNN | 86.81 | 86.90 | 90.17 | 90.17 | 89.06 | 89.08 | 80.50 | 80.50 |
| Fisher | SVM | 73.96 | 74.26 | 80.97 | 80.97 | 86.43 | 86.43 | 59 | 60.22 |
| | RF | 84.24 | 84.24 | 81.37 | 81.44 | 84.83 | 84.87 | 64.43 | 64.43 |
| | LDA | 66.07 | 66.61 | 69.61 | 69.58 | 78.78 | 78.78 | 50.08 | 50.08 |
| | kNN | 85.76 | 85.80 | 83.29 | 83.31 | 84.71 | 84.71 | 76.45 | 76.44 |
| PCA | SVM | 79.63 | 79.92 | 87.21 | 87.21 | 89.31 | 89.31 | 74.56 | 74.85 |
| | RF | 85.55 | 85.62 | 82.38 | 82.45 | 86.49 | 86.52 | 80.11 | 80.14 |
| | LDA | 58.91 | 58.99 | 74.47 | 74.46 | 80.31 | 80.31 | 52.36 | 53.29 |
| | kNN | 88.14 | 88.17 | 88.53 | 88.53 | 88.12 | 88.13 | 82.56 | 82.56 |
| SFS | SVM | 63 | 63 | 68 | 68 | 69 | 69 | 63 | 63 |
| | RF | 65 | 65 | 66 | 66 | 66 | 66 | 58 | 58 |
| | LDA | 65 | 65 | 67 | 67 | 71 | 71 | 64 | 64 |
| | kNN | 61 | 61 | 63 | 63 | 61 | 61 | 58 | 58 |
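Across the per-emotion result tables the F1 score and the accuracy are almost always within a few hundredths of each other. That pattern is what one would expect from a class-weighted F1 computed on balanced binary data, although the averaging mode is not stated in the surviving text, so this is an assumption. The sketch below computes both measures from scratch on hypothetical labels; `accuracy`, `f1_for_class` and `weighted_f1` are illustrative names.

```python
def accuracy(y_true, y_pred):
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)

def f1_for_class(y_true, y_pred, cls):
    """F1 score treating `cls` as the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p == cls)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != cls and p == cls)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p != cls)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

def weighted_f1(y_true, y_pred):
    """Per-class F1 averaged with class frequencies as weights."""
    n = len(y_true)
    return sum(
        f1_for_class(y_true, y_pred, cls) * y_true.count(cls) / n
        for cls in sorted(set(y_true))
    )

# Hypothetical balanced example: four trials per condition.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 1]
print(accuracy(y_true, y_pred), weighted_f1(y_true, y_pred))
```

With one error in each class, both measures come out at 0.75, illustrating why the two columns track each other closely when the classes are balanced.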

| Emotion | Raw | Petrosian | Higuchi | Approximate Entropy |
|---|---|---|---|---|
| Anger | tEMG | F3 | FC1 | tEMG |
| | Respiration | AF3 | AF3 | Respiration |
| | O2 | tEMG | F3 | GSR |
| | P3 | Respiration | CP5 | PPG |
| | C3 | FC1 | tEMG | vEOG |
| Joy | GSR | GSR | Cz | GSR |
| | FC1 | Oz | GSR | Respiration |
| | PO3 | zEMG | P8 | zEMG |
| | C3 | O1 | P3 | hEOG |
| | Cz | PO3 | T7 | vEOG |
| Surprise | GSR | GSR | GSR | GSR |
| | Cz | FC1 | FC1 | PPG |
| | C3 | FC2 | Cz | vEOG |
| | Oz | Cz | P3 | Respiration |
| | C4 | CP2 | Pz | zEMG |
| Disgust | vEOG | FC2 | vEOG | vEOG |
| | FC5 | vEOG | T7 | hEOG |
| | C3 | Oz | AF3 | GSR |
| | P7 | PO3 | hEOG | CP5 |
| | Respiration | FP1 | CP5 | Oz |
| Fear | tEMG | FC1 | FC1 | vEOG |
| | hEOG | F4 | F4 | zEMG |
| | vEOG | T8 | FC2 | Respiration |
| | zEMG | Cz | AF4 | hEOG |
| | Cz | FC2 | Pz | GSR |
| Sadness | CP1 | FC1 | FC1 | PPG |
| | F8 | FP1 | P3 | Temperature |
| | P7 | AF3 | O1 | tEMG |
| | Cz | FC2 | FP1 | Oz |
| | Respiration | Oz | AF3 | zEMG |
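This table lists five channels per emotion and feature type. Assuming it is a ranking by a per-feature relevance score such as the Fisher score named in the result tables (the surviving text does not say which criterion produced it), a ranking of that kind can be sketched as follows. The Fisher score of a feature is the between-class scatter of its class means divided by the within-class variance; the channel values below are hypothetical toy data, and `fisher_score` and `top_features` are illustrative names.

```python
def fisher_score(values, labels):
    """Between-class scatter over within-class variance for one feature."""
    overall_mean = sum(values) / len(values)
    between = 0.0
    within = 0.0
    for cls in set(labels):
        group = [v for v, l in zip(values, labels) if l == cls]
        mean_c = sum(group) / len(group)
        var_c = sum((v - mean_c) ** 2 for v in group) / len(group)
        between += len(group) * (mean_c - overall_mean) ** 2
        within += len(group) * var_c
    return between / within if within > 0 else float("inf")

def top_features(columns, labels, k=5):
    """Names of the k features with the highest Fisher score."""
    scores = {name: fisher_score(col, labels) for name, col in columns.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]

# Hypothetical feature values for six trials (three per condition).
LABELS = [1, 1, 1, 0, 0, 0]
COLUMNS = {
    "GSR": [2.0, 2.1, 1.9, 0.9, 1.0, 1.1],
    "Cz":  [0.5, 0.6, 0.4, 0.5, 0.45, 0.55],
    "PPG": [1.0, 1.2, 0.8, 0.9, 1.1, 0.7],
}
print(top_features(COLUMNS, LABELS, k=2))
```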

| Emotion | Raw: No Feature Selection | Raw: With Feature Selection | Petrosian: No Feature Selection | Petrosian: With Feature Selection | Higuchi Fractal Dimension: No Feature Selection | Higuchi Fractal Dimension: With Feature Selection | Approximate Entropy: No Feature Selection | Approximate Entropy: With Feature Selection |
|---|---|---|---|---|---|---|---|---|
| Anger | Random Forest 96.04% | kNN (Fisher) 97.52% | SVM 98.02% | SVM (Fisher) 95.05% | SVM 98.02% | SVM (Fisher) 94.05% | Random Forest 92.55% | Random Forest (Fisher) 92.08% |
| Joy | kNN 91.22% | LDA (SFS) 100% | kNN 87.9% | kNN (Fisher) 85.76% | kNN 87.60% | kNN (Fisher) 83.75% | Random Forest 86.40% | Random Forest (Fisher) 80.37% |
| Surprise | kNN 85.01% | SVM (SFS) 96% | kNN 84.74% | kNN (Fisher) 80.59% | kNN 83.64% | Random Forest (Fisher) 80.69% | kNN 81.30% | Random Forest (Fisher) 82.59% |
| Disgust | Random Forest 93.63% | kNN (Fisher) 89.74% | kNN 95% | SVM (Fisher) 90% | SVM 91.59% | Random Forest (Fisher) 90.23% | Random Forest 83.14% | Random Forest (Fisher) 75.26% |
| Fear | kNN 90.75% | Random Forest (Fisher) 88.85% | kNN 89.72% | kNN (Fisher) 80.82% | kNN 89.04% | kNN (Fisher) 83.39% | kNN 80.66% | kNN (Fisher) 79.45% |
| Sadness | Random Forest 87.49% | kNN (Fisher) 85.76% | kNN 90.17% | kNN (Fisher) 83.29% | SVM 90.8% | SVM (Fisher) 86.43% | Random Forest 81.86% | kNN (Fisher) 76.45% |
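Each cell of this summary is the best-scoring classifier from the corresponding per-emotion result table. As a minimal sketch of that lookup, the dictionary below copies the raw-feature F1 scores for anger and picks the maximum with and without feature selection; the `best` helper and the dictionary layout are hypothetical.

```python
# Raw-feature F1 scores (%) for anger, copied from the anger result table.
ANGER_RAW_F1 = {
    "No feature selection": {
        "DNN1": 91.22, "DNN2": 87.80, "DNN3": 93.30, "DNN4": 88.39,
        "SVM": 92.57, "RF": 96.04, "LDA": 85.64, "kNN": 93.56,
    },
    "Fisher": {"SVM": 86.14, "RF": 95.54, "LDA": 80.69, "kNN": 97.52},
    "PCA": {"SVM": 93.42, "RF": 92.43, "LDA": 81.24, "kNN": 93.96},
    "SFS": {"SVM": 91, "RF": 86, "LDA": 91, "kNN": 91},
}

def best(results, selections):
    """Return (selection, classifier, score) with the highest score."""
    candidates = [
        (score, selection, clf)
        for selection in selections
        for clf, score in results[selection].items()
    ]
    score, selection, clf = max(candidates)
    return selection, clf, score

print(best(ANGER_RAW_F1, ["No feature selection"]))
print(best(ANGER_RAW_F1, ["Fisher", "PCA", "SFS"]))
```

The two results, Random Forest at 96.04% and kNN with Fisher selection at 97.52%, agree with the anger row of the summary.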

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Bălan, O.; Moise, G.; Petrescu, L.; Moldoveanu, A.; Leordeanu, M.; Moldoveanu, F. Emotion Classification Based on Biophysical Signals and Machine Learning Techniques. Symmetry 2020, 12, 21. https://doi.org/10.3390/sym12010021
