Some Novel Picture 2-Tuple Linguistic Maclaurin Symmetric Mean Operators and Their Application to Multiple Attribute Decision Making

Abstract

1. Introduction

2. Preliminaries

2.1. 2-Tuple Linguistic Term Sets

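- (1)

- The set is ordered: $s_i > s_j$ if and only if $i > j$.

- (2)

- $\operatorname{neg}(s_i) = s_{t-i}$ is the negation operator, where $S = \{s_i \mid i = 0, 1, \dots, t\}$ is the linguistic term set.

- (3)

- If $s_i \ge s_j$, then $\max(s_i, s_j) = s_i$.

- (4)

- If $s_i \le s_j$, then $\min(s_i, s_j) = s_i$.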
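The 2-tuple model of Herrera and Martínez [8] represents a value $\beta \in [0, t]$ on the index scale of $S$ as the closest term $s_i$ plus a symbolic translation $\alpha = \beta - i \in [-0.5, 0.5)$. The mapping is $\Delta(\beta) = (s_i, \alpha)$, with inverse $\Delta^{-1}(s_i, \alpha) = i + \alpha$. The sketch below implements $\Delta$, $\Delta^{-1}$, and properties (1)–(4) on term indices. The granularity $t = 6$ and all function and class names are assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass

# Hypothetical granularity t = 6, i.e. S = {s_0, s_1, ..., s_6}.
T_GRAN = 6


@dataclass(frozen=True)
class TwoTuple:
    """A 2-tuple (s_i, alpha): term index i plus symbolic translation alpha."""
    index: int
    alpha: float


def delta(beta: float, t: int = T_GRAN) -> TwoTuple:
    """Delta: map beta in [0, t] to (s_i, alpha) with alpha in [-0.5, 0.5)."""
    if not 0.0 <= beta <= t:
        raise ValueError("beta must lie in [0, t]")
    i = math.floor(beta + 0.5)  # round half up; built-in round() rounds half to even
    return TwoTuple(i, beta - i)


def delta_inv(x: TwoTuple) -> float:
    """Inverse of Delta: (s_i, alpha) -> i + alpha."""
    return x.index + x.alpha


# Properties (1)-(4) on plain terms s_i, represented by their indices i.
def greater(i: int, j: int) -> bool:
    return i > j  # (1) s_i > s_j iff i > j


def neg(i: int, t: int = T_GRAN) -> int:
    return t - i  # (2) neg(s_i) = s_{t-i}


def term_max(i: int, j: int) -> int:
    return i if i >= j else j  # (3) max(s_i, s_j) = s_i if s_i >= s_j


def term_min(i: int, j: int) -> int:
    return i if i <= j else j  # (4) min(s_i, s_j) = s_i if s_i <= s_j


if __name__ == "__main__":
    print(delta(2.5))              # TwoTuple(index=3, alpha=-0.5)
    print(neg(2), term_max(1, 5))  # 4 5
```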

2.2. Picture Fuzzy Set

- (1)

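- $A \subseteq B$ if $\mu_A(x) \le \mu_B(x)$, $\eta_A(x) \le \eta_B(x)$, $\nu_A(x) \ge \nu_B(x)$, $\forall x \in X$;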

- (2)

- ;

- (3)

- ;

- (4)

- .

- (1)

- ;

- (2)

- ;

- (3)

- ,;

- (4)

- ,.
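Picture fuzzy sets [15] assign each element a positive degree $\mu$, a neutral degree $\eta$, and a negative degree $\nu$, subject to $\mu + \eta + \nu \le 1$. The remainder $1 - (\mu + \eta + \nu)$ is the degree of refusal membership. The sketch below encodes one picture fuzzy number together with the inclusion, union, intersection, and complement operations as they are usually stated after Cuong [15]. Most formulas in this subsection were lost in extraction, so treat this as a sketch under that assumption rather than a transcription. The class `PFN` and the helper names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PFN:
    """Picture fuzzy number (mu, eta, nu): positive, neutral, negative degrees."""
    mu: float
    eta: float
    nu: float

    def __post_init__(self) -> None:
        for v in (self.mu, self.eta, self.nu):
            if not 0.0 <= v <= 1.0:
                raise ValueError("degrees must lie in [0, 1]")
        if self.mu + self.eta + self.nu > 1.0 + 1e-12:
            raise ValueError("mu + eta + nu must not exceed 1")

    @property
    def refusal(self) -> float:
        """Degree of refusal membership: 1 - (mu + eta + nu)."""
        return 1.0 - (self.mu + self.eta + self.nu)


def subset(a: PFN, b: PFN) -> bool:
    """a is included in b: mu_a <= mu_b, eta_a <= eta_b, nu_a >= nu_b."""
    return a.mu <= b.mu and a.eta <= b.eta and a.nu >= b.nu


def union(a: PFN, b: PFN) -> PFN:
    return PFN(max(a.mu, b.mu), min(a.eta, b.eta), min(a.nu, b.nu))


def intersection(a: PFN, b: PFN) -> PFN:
    return PFN(min(a.mu, b.mu), min(a.eta, b.eta), max(a.nu, b.nu))


def complement(a: PFN) -> PFN:
    """Swap the positive and negative degrees; keep the neutral degree."""
    return PFN(a.nu, a.eta, a.mu)
```

For example, `PFN(0.5, 0.2, 0.2).refusal` is 0.1 up to floating-point rounding.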

2.3. Archimedean T-Norm and T-Conorm

- (1)

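- $T(1, x) = x$ and $T(0, x) = 0$ for all $x$;

- (2)

- $T(x, y) = T(y, x)$ for all $x$ and $y$;

- (3)

- $T(x, y) \le T(x', y')$ if $x \le x'$ and $y \le y'$;

- (4)

- $T(x, T(y, z)) = T(T(x, y), z)$ for all $x$, $y$ and $z$.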

- (1)

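- $S(0, x) = x$ and $S(1, x) = 1$ for all $x$;

- (2)

- $S(x, y) = S(y, x)$ for all $x$ and $y$;

- (3)

- $S(x, y) \le S(x', y')$ if $x \le x'$ and $y \le y'$;

- (4)

- $S(x, S(y, z)) = S(S(x, y), z)$ for all $x$, $y$ and $z$.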
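For a concrete pair, the boundary, commutativity, monotonicity, and associativity conditions of a t-norm and a t-conorm can be spot-checked numerically. The sketch below uses a hypothetical helper `check_axioms` to test them on an 11-point grid for the product t-norm and its dual, the probabilistic sum. The boundary check assumes $T(1, x) = x$, $T(0, x) = 0$ and $S(0, x) = x$, $S(1, x) = 1$. A grid check can reveal a violation, but it does not prove the axioms.

```python
import itertools

GRID = [k / 10 for k in range(11)]  # sample points of [0, 1]
EPS = 1e-12


def product_t(x: float, y: float) -> float:
    return x * y


def prob_sum_s(x: float, y: float) -> float:
    return x + y - x * y


def dual(t):
    """Dual t-conorm of a t-norm: S(x, y) = 1 - T(1 - x, 1 - y)."""
    return lambda x, y: 1 - t(1 - x, 1 - y)


def check_axioms(f, neutral: float, absorbing: float) -> bool:
    """Check boundary, commutativity, monotonicity and associativity of f on GRID."""
    for x in GRID:  # boundary conditions
        if abs(f(neutral, x) - x) > EPS or abs(f(absorbing, x) - absorbing) > EPS:
            return False
    for x, y in itertools.product(GRID, repeat=2):  # commutativity
        if abs(f(x, y) - f(y, x)) > EPS:
            return False
    for x, y, x2, y2 in itertools.product(GRID, repeat=4):  # monotonicity
        if x <= x2 and y <= y2 and f(x, y) > f(x2, y2) + EPS:
            return False
    for x, y, z in itertools.product(GRID, repeat=3):  # associativity
        if abs(f(x, f(y, z)) - f(f(x, y), z)) > EPS:
            return False
    return True


if __name__ == "__main__":
    print(check_axioms(product_t, neutral=1.0, absorbing=0.0))   # True
    print(check_axioms(prob_sum_s, neutral=0.0, absorbing=1.0))  # True
```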

- (1)

- ;

- (2)

- ;

- (3)

- ;

- (4)

- .

- (1)

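- Let $g(t) = -\ln t$, $g^{-1}(t) = e^{-t}$, $h(t) = -\ln(1 - t)$, and $h^{-1}(t) = 1 - e^{-t}$. Then, the algebraic t-norm and t-conorm are obtained: $T^{A}(x, y) = xy$, $S^{A}(x, y) = x + y - xy$.

- (2)

- Let $g(t) = \ln\frac{2 - t}{t}$, $g^{-1}(t) = \frac{2}{e^{t} + 1}$, $h(t) = \ln\frac{2 - (1 - t)}{1 - t}$, and $h^{-1}(t) = 1 - \frac{2}{e^{t} + 1}$. Then, the Einstein t-norm and t-conorm are obtained: $T^{E}(x, y) = \frac{xy}{1 + (1 - x)(1 - y)}$, $S^{E}(x, y) = \frac{x + y}{1 + xy}$.

- (3)

- Let $g(t) = \ln\frac{\gamma + (1 - \gamma)t}{t}$, $g^{-1}(t) = \frac{\gamma}{e^{t} + \gamma - 1}$, $h(t) = \ln\frac{\gamma + (1 - \gamma)(1 - t)}{1 - t}$, $h^{-1}(t) = \frac{e^{t} - 1}{e^{t} + \gamma - 1}$, and $\gamma > 0$. Then, the Hamacher t-norm and t-conorm are obtained: $T^{H}_{\gamma}(x, y) = \frac{xy}{\gamma + (1 - \gamma)(x + y - xy)}$, $S^{H}_{\gamma}(x, y) = \frac{x + y - xy - (1 - \gamma)xy}{1 - (1 - \gamma)xy}$, $\gamma > 0$.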
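The algebraic, Einstein, and Hamacher pairs all follow the standard additive-generator representation of continuous Archimedean t-norms and their dual t-conorms: $T(x, y) = g^{-1}(g(x) + g(y))$ and $S(x, y) = h^{-1}(h(x) + h(y))$ with $h(t) = g(1 - t)$. The sketch below builds $T$ and $S$ from $g$ and $g^{-1}$ alone. It then compares the results with the closed forms on interior grid points only, because $g(0)$ is infinite for these generators. The helper names `att`, `algebraic`, `einstein`, `hamacher`, and `max_gap` are hypothetical.

```python
import math
from typing import Callable, Tuple

Unary = Callable[[float], float]
Binary = Callable[[float, float], float]


def att(g: Unary, g_inv: Unary) -> Tuple[Binary, Binary]:
    """Build T(x, y) = g^-1(g(x) + g(y)) and S(x, y) = h^-1(h(x) + h(y)), h(t) = g(1 - t)."""
    def t_norm(x: float, y: float) -> float:
        return g_inv(g(x) + g(y))

    def t_conorm(x: float, y: float) -> float:
        # h(t) = g(1 - t) and h^-1(u) = 1 - g^-1(u)
        return 1 - g_inv(g(1 - x) + g(1 - y))

    return t_norm, t_conorm


def algebraic() -> Tuple[Unary, Unary]:
    return (lambda t: -math.log(t)), (lambda u: math.exp(-u))


def einstein() -> Tuple[Unary, Unary]:
    return (lambda t: math.log((2 - t) / t)), (lambda u: 2 / (math.exp(u) + 1))


def hamacher(gamma: float) -> Tuple[Unary, Unary]:
    if gamma <= 0:
        raise ValueError("gamma must be positive")
    return (
        lambda t: math.log((gamma + (1 - gamma) * t) / t),
        lambda u: gamma / (math.exp(u) + gamma - 1),
    )


def max_gap(f: Binary, h: Binary) -> float:
    """Largest difference between two binary operations on interior grid points."""
    pts = [k / 10 for k in range(1, 10)]  # avoid 0 and 1, where the generators diverge
    return max(abs(f(x, y) - h(x, y)) for x in pts for y in pts)


if __name__ == "__main__":
    gamma = 3.0
    T, S = att(*hamacher(gamma))
    T_closed = lambda x, y: x * y / (gamma + (1 - gamma) * (x + y - x * y))
    S_closed = lambda x, y: (x + y - x * y - (1 - gamma) * x * y) / (1 - (1 - gamma) * x * y)
    print(max_gap(T, T_closed) < 1e-9, max_gap(S, S_closed) < 1e-9)  # True True
```

With $\gamma = 1$ and $\gamma = 2$, the Hamacher generator coincides with the algebraic and Einstein generators, respectively. This is why the Hamacher family is often used as the general case.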

2.4. MSM Operators

- (1)

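- Idempotency. If $x_i = x$ for each $i$, then $MSM^{(m)}(x, x, \dots, x) = x$;

- (2)

- Monotonicity. If $x_i \le y_i$ for all $i$, then $MSM^{(m)}(x_1, x_2, \dots, x_n) \le MSM^{(m)}(y_1, y_2, \dots, y_n)$;

- (3)

- Boundedness. $\min_i \{x_i\} \le MSM^{(m)}(x_1, x_2, \dots, x_n) \le \max_i \{x_i\}$.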

- (1)

- Idempotency. If for each , then ;

- (2)

- Monotonicity. If for all , ;

- (3)

- Boundedness. .

- (1)

- Idempotency. If and for each , then ;

- (2)

- Monotonicity. If for all , ;

- (3)

- Boundedness. .
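For non-negative reals $x_1, \dots, x_n$ and $1 \le m \le n$, the standard Maclaurin symmetric mean [29] is $MSM^{(m)}(x_1, \dots, x_n) = \left( \frac{\sum_{1 \le i_1 < \dots < i_m \le n} \prod_{j=1}^{m} x_{i_j}}{C_n^m} \right)^{1/m}$. The sketch below computes it by enumerating all $m$-element combinations and lets you spot-check idempotency, monotonicity, and boundedness on sample data. The function name `msm` and the sample vectors are illustrative assumptions. This is the crisp operator, not the picture 2-tuple linguistic version developed later in the paper.

```python
import itertools
import math
from typing import Sequence


def msm(xs: Sequence[float], m: int) -> float:
    """Maclaurin symmetric mean MSM^(m) of non-negative reals, 1 <= m <= n."""
    n = len(xs)
    if not 1 <= m <= n:
        raise ValueError("need 1 <= m <= n")
    if any(x < 0 for x in xs):
        raise ValueError("inputs must be non-negative")
    # Sum of x_{i_1} * ... * x_{i_m} over all index sets i_1 < ... < i_m.
    total = sum(math.prod(c) for c in itertools.combinations(xs, m))
    return (total / math.comb(n, m)) ** (1 / m)


if __name__ == "__main__":
    xs = [0.2, 0.5, 0.7, 0.9]
    for m in range(1, len(xs) + 1):
        print(m, round(msm(xs, m), 4))
```

Enumerating combinations costs $C_n^m$ products, which is fine for the handful of attributes typical of MADM examples.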

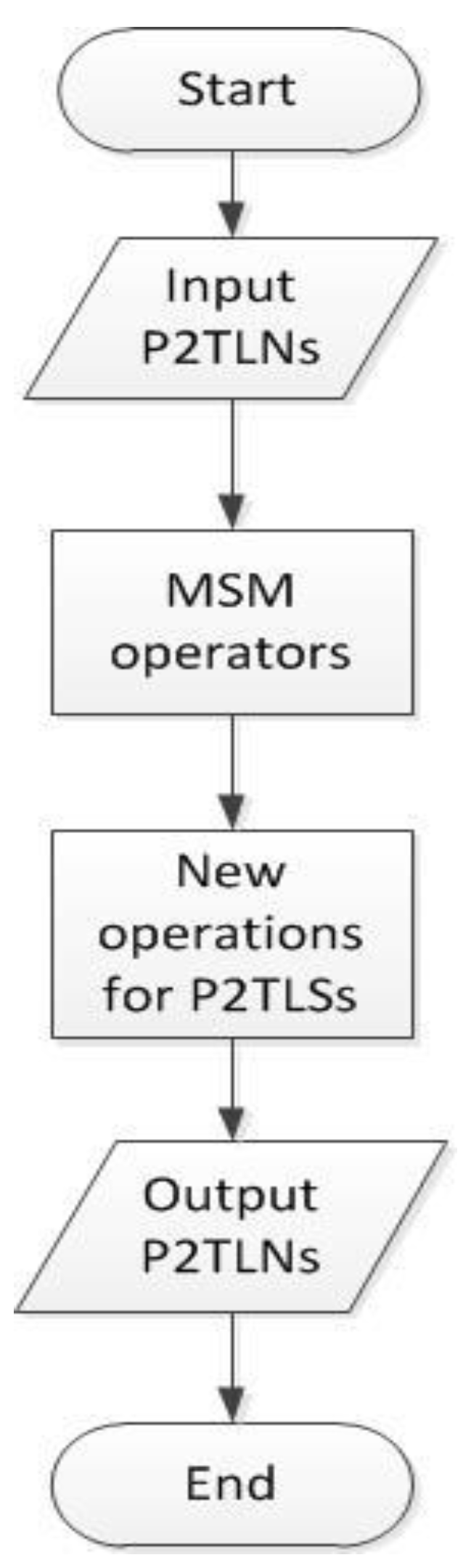

3. Picture 2-Tuple Linguistic Sets and a New Operation

3.1. Picture 2-Tuple Linguistic Sets

- (a)

- If , is smaller than , denoted by .

- (b)

- If , is the same as , denoted by .

- (c)

- If , is larger than , denoted by .
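A P2TLN couples a 2-tuple $(s_i, \alpha)$ with picture membership degrees $(\mu, \eta, \nu)$, as the comparative discussion in Section 6.3 describes. Rules (a)–(c) rank two P2TLNs by a quantity whose definition did not survive extraction, so the sketch below takes that quantity as a parameter. The names `P2TLN`, `compare`, and `toy_score` are hypothetical. `toy_score` is an illustrative placeholder, not the paper's score function. The constraint $\mu + \eta + \nu \le 1$ is assumed from picture fuzzy sets.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class P2TLN:
    """Picture 2-tuple linguistic number <(s_i, alpha), (mu, eta, nu)>."""
    index: int    # i of the linguistic term s_i
    alpha: float  # symbolic translation in [-0.5, 0.5)
    mu: float     # positive membership degree
    eta: float    # neutral membership degree
    nu: float     # negative membership degree

    def __post_init__(self) -> None:
        if not -0.5 <= self.alpha < 0.5:
            raise ValueError("alpha must lie in [-0.5, 0.5)")
        degrees = (self.mu, self.eta, self.nu)
        if any(not 0.0 <= d <= 1.0 for d in degrees) or sum(degrees) > 1.0 + 1e-12:
            raise ValueError("need mu, eta, nu in [0, 1] with mu + eta + nu <= 1")

    @property
    def refusal(self) -> float:
        """Degree of refusal membership: 1 - (mu + eta + nu)."""
        return 1.0 - (self.mu + self.eta + self.nu)


def compare(a: P2TLN, b: P2TLN, score: Callable[[P2TLN], float]) -> int:
    """Rules (a)-(c): -1 if a is smaller, 0 if the same, 1 if larger, by a caller-supplied score."""
    sa, sb = score(a), score(b)
    if abs(sa - sb) <= 1e-12:
        return 0
    return -1 if sa < sb else 1


def toy_score(x: P2TLN) -> float:
    """HYPOTHETICAL score for illustration only; not the paper's score function."""
    return (x.index + x.alpha) * (1 + x.mu - x.nu) / 2
```

Passing the score as a function keeps the three-way comparison independent of whichever score (and any tie-breaking accuracy function) the method actually prescribes.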

3.2. New Operations for Picture 2-Tuple Linguistic Sets Based on ATT

- (1)

- ;

- (2)

- ;

- (3)

- ;

- (4)

- .

- (1)

- ;

- (2)

- ;

- (3)

- ;

- (4)

- .

- (1)

- ;

- (2)

- ;

- (3)

- ;

- (4)

- .

- (1)

- ;

- (2)

- ;

- (3)

- ;

- (4)

- .

4. Picture 2-Tuple Linguistic MSM Operators Based on ATT

4.1. The ATT-P2TLMSM and ATT-P2TLGMSM Operators

- (1)

- Idempotency: If the P2TLNsfor all, then.

- (2)

- Commutativity: Assumeis a permutation offor all; then,.

- (3)

- Monotonicity: If,,andfor each, thenand.

- (4)

- Boundedness: Ifand, then.

- Since each , that is,

- This property is obvious, and we do not prove it here.

- If , , , and for each and ,, according to idempotency, and . Therefore, we have

- According to idempotency, let and . Based on the monotonicity, if and for each , then we have and . Therefore, the following conclusion can be obtained.

- (1)

- When m = 1, Equation (10) reduces to the following formula.

- (2)

- When m = 2, Equation (10) reduces to the following formula.

- (3)

- When m = n, Equation (10) reduces to the following formula.
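Equation (10) itself did not survive extraction. For plain non-negative reals, however, the MSM reduces in these three cases to familiar means. With $m = 1$ it is the arithmetic mean. With $m = 2$ it is the square root of the average pairwise product. With $m = n$ it is the geometric mean. The sketch below checks this crisp analogue only. As a swapped-in technique, it computes the MSM from elementary symmetric polynomials with an $O(n^2)$ recurrence instead of enumerating all $C_n^m$ combinations. The function names are hypothetical.

```python
import math
from typing import List, Sequence


def elementary_symmetric(xs: Sequence[float]) -> List[float]:
    """Return [e_0, e_1, ..., e_n]: e_k sums the products of all k-element subsets."""
    e = [1.0] + [0.0] * len(xs)
    for x in xs:
        # Walk k downwards so that x enters each product at most once.
        for k in range(len(e) - 1, 0, -1):
            e[k] += e[k - 1] * x
    return e


def msm(xs: Sequence[float], m: int) -> float:
    """Crisp MSM^(m) computed as (e_m / C(n, m)) ** (1 / m)."""
    n = len(xs)
    if not 1 <= m <= n or any(x < 0 for x in xs):
        raise ValueError("need non-negative inputs and 1 <= m <= n")
    return (elementary_symmetric(xs)[m] / math.comb(n, m)) ** (1 / m)


if __name__ == "__main__":
    xs = [0.2, 0.5, 0.7, 0.9]
    n = len(xs)
    arithmetic = sum(xs) / n
    geometric = math.prod(xs) ** (1 / n)
    pair_mean = sum(xs[i] * xs[j] for i in range(n) for j in range(i + 1, n)) / math.comb(n, 2)
    print(math.isclose(msm(xs, 1), arithmetic))            # True (m = 1: arithmetic mean)
    print(math.isclose(msm(xs, 2), math.sqrt(pair_mean)))  # True (m = 2)
    print(math.isclose(msm(xs, n), geometric))             # True (m = n: geometric mean)
```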

- (1)

- Idempotency: If the P2TLNsfor all, then.

- (2)

- Commutativity: Assumeis a permutation offor all; then,.

- (3)

- Monotonicity: If,,andfor each, thenand.

- (4)

- Boundedness: Ifand, then.

- (1)

- When m = 1, Equation (17) reduces to the following formula.

- (2)

- When m = 2, Equation (17) reduces to the following formula.

- (3)

- When m = n, Equation (17) reduces to the following formula.

4.2. The ATT-P2TLWMSM and ATT-P2TLWGMSM Operators

- (1)

- Monotonicity: If,,andfor each, thenand.

- (2)

- Boundedness: Ifand, then.

- (1)

- When m = 1, Equation (23) reduces to the following formula.

- (2)

- When m = 2, Equation (23) reduces to the following formula.

- (3)

- When m = n, Equation (23) reduces to the following formula.

- (1)

- Monotonicity: If,,andfor each, thenand.

- (2)

- Boundedness: Ifand, then.

- (1)

- When m = 1, Equation (29) reduces to the following formula.

- (2)

- When m = 2, Equation (29) reduces to the following formula.

- (3)

- When m = n, Equation (29) reduces to the following formula.

5. MADM Based on the ATT-P2TLMSM Operator

6. Illustrative Example

6.1. Data and Background

6.2. Method Based on the ATT-P2TLWMSM and ATT-P2TLGWMSM Operators

6.3. Comparative Analysis and Discussion

- (1)

- The operators proposed in this paper aggregate P2TLNs. A P2TLN contains 2-tuple linguistic information and, in addition, expresses the degrees of positive, neutral, negative, and refusal membership of an element with respect to that linguistic term. These features make the representation of linguistic information more precise.

- (2)

- The proposed methods produce the same ranking as the methods in [36,41], which indicates that they are valid and effective for MADM problems whose attribute values are expressed as picture 2-tuple linguistic information. Moreover, the operators developed in this paper can capture the interrelationships among multiple input arguments. By contrast, the P2TLWA-based method of Wei et al. [41] treats the arguments as independent, and the Bonferroni-mean-based P2TLWGBM method of Wei [36] captures only pairwise interrelationships. The proposed methods are therefore not only valid but also more flexible for solving MADM problems.

7. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Zadeh, L.A. Fuzzy sets. Inf. Control 1965, 8, 338–353.

- Atanassov, K.T. Intuitionistic fuzzy sets. Fuzzy Sets Syst. 1986, 20, 87–96.

- Zadeh, L.A. The Concept of a Linguistic Variable and its Application to Approximate Reasoning. Inf. Sci. 1974, 8, 199–249.

- Bordogna, G.; Fedrizzi, M.; Pasi, G. A linguistic modeling of consensus in group decision making based on OWA operators. IEEE Trans. Syst. Man Cybern. Part A Syst. Hum. 1997, 27, 126–133.

- Merigó, J.M.; Casanovas, M.; Palacios-Marqués, D. Linguistic group decision making with induced aggregation operators and probabilistic information. Appl. Soft Comput. 2014, 24, 669–678.

- Herrera, F.; Herrera-Viedma, E. Linguistic decision analysis: Steps for solving decision problems under linguistic information. Fuzzy Sets Syst. 2000, 115, 67–82.

- Deveci, M.; Özcan, E.; John, R.; Öner, S.C. Interval type-2 hesitant fuzzy set method for improving the service quality of domestic airlines in Turkey. J. Air Transp. Manag. 2018, 69, 83–98.

- Herrera, F.; Martínez, L. A 2-tuple fuzzy linguistic representation model for computing with words. IEEE Trans. Fuzzy Syst. 2000, 8, 746–752.

- Xu, Y.; Wang, H. Approaches based on 2-tuple linguistic power aggregation operators for multiple attribute group decision making under linguistic environment. Appl. Soft Comput. 2011, 11, 3988–3997.

- Wei, G.; Zhao, X. Some dependent aggregation operators with 2-tuple linguistic information and their application to multiple attribute group decision making. Expert Syst. Appl. 2012, 39, 5881–5886.

- Jiang, X.P.; Wei, G.W. Some Bonferroni mean operators with 2-tuple linguistic information and their application to multiple attribute decision making. J. Intell. Fuzzy Syst. 2014, 27, 2153–2162.

- Merigó, J.M.; Gil-Lafuente, A.M. Induced 2-tuple linguistic generalized aggregation operators and their application in decision-making. Inf. Sci. 2013, 236, 1–16.

- Wang, J.Q.; Wang, D.D.; Zhang, H.Y.; Chen, X.H. Multi-criteria group decision making method based on interval 2-tuple linguistic information and Choquet integral aggregation operators. Soft Comput. 2015, 19, 389–405.

- Qin, J.; Liu, X. 2-tuple linguistic Muirhead mean operators for multiple attribute group decision making and its application to supplier selection. Kybernetes 2016, 45, 2–29.

- Cuong, B.C. Picture fuzzy sets-first results (part 1). In Seminar on Neuro-Fuzzy Systems with Applications; Institute of Mathematics: Hanoi, Vietnam, March 2013.

- Singh, P. Correlation coefficients for picture fuzzy sets. J. Intell. Fuzzy Syst. 2015, 2, 591–604.

- Yang, Y.; Liang, C.; Ji, S.; Liu, T. Adjustable soft discernibility matrix based on picture fuzzy soft sets and its applications in decision making. J. Intell. Fuzzy Syst. 2015, 4, 1711–1722.

- Le, H.S. Generalized picture distance measure and applications to picture fuzzy clustering. Appl. Soft Comput. 2016, 46, 284–295.

- Wei, G. Picture fuzzy cross-entropy for multiple attribute decision making problems. J. Bus. Econ. Manag. 2016, 17, 491–502.

- Thong, N.T. HIFCF: An effective hybrid model between picture fuzzy clustering and intuitionistic fuzzy recommender systems for medical diagnosis. Expert Syst. Appl. 2015, 42, 3682–3701.

- Beliakov, G.; Bustince, H.; Goswami, D.P.; Mukherjee, U.K.; Pal, N.R. On averaging operators for Atanassov intuitionistic fuzzy sets. Inf. Sci. 2011, 181, 1116–1124.

- Liu, P. The Aggregation Operators Based on Archimedean t-Conorm and t-Norm for Single-Valued Neutrosophic Numbers and their Application to Decision Making. Int. J. Fuzzy Syst. 2016, 18, 849–863.

- Liu, P.; Zhang, X. A Novel Picture Fuzzy Linguistic Aggregation Operator and Its Application to Group Decision-making. Cogn. Comput. 2018, 10, 242–259.

- Yager, R.R. The power average operator. IEEE Trans. Syst. Man Cybern. Part A Syst. Hum. 2001, 31, 724–731.

- Tan, C.; Chen, X. Induced Choquet ordered averaging operator and its application to group decision making. Int. J. Intell. Syst. 2010, 25, 59–82.

- Bonferroni, C. Sulle medie multiple di potenze. Boll. Mat. Ital. 1950, 5, 267–270.

- Liu, P.; Chen, Y.; Chu, Y. Intuitionistic uncertain linguistic weighted Bonferroni OWA operator and its application to multiple attribute decision making. Sci. World J. 2014, 45, 418–438.

- Liu, P. Some Heronian mean operators with 2-tuple linguistic information and their application to multiple attribute group decision making. Technol. Econ. Dev. Econ. 2015, 21, 797–814.

- Maclaurin, C. A second letter to Martin Folkes, Esq.: Concerning the roots of equations, with the demonstration of other rules of algebra. Philos. Trans. R. Soc. Lond. Ser. A 1729, 36, 59–96.

- Qin, J.; Liu, X.; Pedrycz, W. Hesitant Fuzzy Maclaurin Symmetric Mean Operators and Its Application to Multiple-Attribute Decision Making. Int. J. Fuzzy Syst. 2015, 17, 509–520.

- Wang, J.Q.; Yang, Y.; Li, L. Multi-criteria decision-making method based on single-valued neutrosophic linguistic Maclaurin symmetric mean operators. Neural Comput. Appl. 2016, 4, 1529–1547.

- Wei, G.; Mao, L. Pythagorean Fuzzy Maclaurin Symmetric Mean Operators in Multiple Attribute Decision Making. Int. J. Intell. Syst. 2018, 33, 1043–1070.

- Liu, P.; Zhang, X. Some Maclaurin Symmetric Mean Operators for Single-Valued Trapezoidal Neutrosophic Numbers and Their Applications to Group Decision Making. Int. J. Fuzzy Syst. 2018, 20, 45–61.

- Herrera, F.; Herrera-Viedma, E. A model of consensus in group decision making under linguistic assessments. Fuzzy Sets Syst. 1996, 78, 73–87.

- Herrera, F.; Martínez, L.; Sánchez, P.J. Managing non-homogeneous information in group decision making. Eur. J. Oper. Res. 2005, 166, 115–132.

- Wei, G. Picture 2-Tuple Linguistic Bonferroni Mean Operators and Their Application to Multiple Attribute Decision Making. Int. J. Fuzzy Syst. 2017, 19, 997–1010.

- Schweizer, B.; Sklar, A. Associative functions and abstract semi-groups. Publ. Math. 1964, 10, 69–81.

- Simon, D. Fuzzy Sets and Fuzzy Logic: Theory and Applications. Control Eng. Pract. 1996, 4, 1332–1333.

- Tao, Z.; Chen, H.; Zhou, L.; Liu, J. On new operational laws of 2-tuple linguistic information using Archimedean t-norm and s-norm. Knowl.-Based Syst. 2014, 66, 156–165.

- Ling, C.H. Representation of associative functions. Publ. Math. Debrecen 1965, 12, 189–212.

- Wei, G.; Alsaadi, F.E.; Hayat, T.; Alsaedi, A. Picture 2-tuple linguistic aggregation operators in multiple attribute decision making. Soft Comput. 2018, 22, 989–1002.

| Options\Attributes | ||||

|---|---|---|---|---|

| Operators\Attributes | ATT-P2TLWMSM | ATT-P2TLGWMSM (p = 1, q = 2) |

|---|---|---|

| Operators\Attributes | ATT-P2TLWMSM | ATT-P2TLGWMSM (p = 1, q = 2) |

|---|---|---|

| Operator | Parameter | Ranking | ||

|---|---|---|---|---|

| m | ||||

| 2 | - | - | ||

| 2 | 1 | 2 | ||

| Method | Operator | Ranking |
|---|---|---|
| Method in [36] | (m = 2) | |
| Method in [41] | (p1 = p2 = 1) | |
| Proposed methods in this paper | (m = 2) | |
| Proposed methods in this paper | (p1 = p2 = 1) | |

| Operator | m | Ranking | |||

|---|---|---|---|---|---|

| 1 | - | - | - | ||

| 2 | - | - | - | ||

| 3 | - | - | - | ||

| 4 | - | - | - | ||

| 2 | 0 | 1 | - | ||

| 1 | 0 | - | |||

| 1 | 1 | - | |||

| 1 | 2 | - | |||

| 1 | 3 | - | |||

| 2 | 1 | - | |||

| 2 | 2 | - | |||

| 2 | 3 | - | |||

| 3 | 1 | - | |||

| 3 | 2 | - | |||

| 3 | 3 | - | |||

| 3 | 1 | 0 | 0 | ||

| 0 | 1 | 0 | |||

| 0 | 0 | 1 | |||

| 1 | 1 | 1 | |||

| 1 | 1 | 2 | |||

| 1 | 1 | 3 | |||

| 1 | 1 | 4 | |||

| 1 | 2 | 1 | |||

| 1 | 3 | 1 | |||

| 1 | 4 | 1 | |||

| 2 | 1 | 1 | |||

| 3 | 1 | 1 | |||

| 4 | 1 | 1 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Feng, M.; Geng, Y. Some Novel Picture 2-Tuple Linguistic Maclaurin Symmetric Mean Operators and Their Application to Multiple Attribute Decision Making. Symmetry 2019, 11, 943. https://doi.org/10.3390/sym11070943
