Feature Selection with Conditional Mutual Information Considering Feature Interaction

Abstract

1. Introduction

2. Basic Information-Theoretic Concepts

3. Related Work

4. Some Definitions about Feature Relationships

5. A New Feature Selection Method Considering Feature Interaction

| Algorithm 1 CMIFSI algorithm |
|---|
| Input: a training dataset D with a full feature set F = {f1, f2, …, fn} and class vector C; a predefined threshold K (the number of features to select) |
| Output: the selected feature sequence S |
| 1. Initialize parameters: the selected feature subset S = Ø, k = 0, and the deviated function DF(fi, S) = 0 for all candidate features |
| 2. for i = 1 to n do |
| 3. Calculate I(fi; C) |
| 4. end for |
| 5. while k < K do |
| 6. for each candidate feature fi ∈ F do |
| 7. Update DF(fi, S) according to Equation (36) |
| 8. Calculate the evaluation value JCMI(fi) = I(fi; C) + DF(fi, S) |
| 9. end for |
| 10. Select the feature fj with the largest JCMI value; S = S ∪ {fj}; F = F − {fj} |
| 11. k = k + 1 |
| 12. end while |
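
Algorithm 1 can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' implementation. It assumes discrete (already discretized) features and class labels, and it estimates mutual information from empirical frequencies. Equation (36) is not reproduced in this section, so the DF update uses a hypothetical stand-in, `standin_df_update`. For each newly selected fj, the stand-in adds I(fi;C|fj) − I(fi;C) to DF(fi, S). All function names are illustrative.

```python
from collections import Counter
from math import log2
from typing import Dict, List, Sequence


def entropy(*columns: Sequence[int]) -> float:
    """Joint Shannon entropy, in bits, of one or more discrete columns."""
    joint = list(zip(*columns))
    n = len(joint)
    return -sum((m / n) * log2(m / n) for m in Counter(joint).values())


def mutual_info(x: Sequence[int], c: Sequence[int]) -> float:
    """I(X;C) = H(X) + H(C) - H(X,C)."""
    return entropy(x) + entropy(c) - entropy(x, c)


def cond_mutual_info(x: Sequence[int], c: Sequence[int], z: Sequence[int]) -> float:
    """I(X;C|Z) = H(X,Z) + H(C,Z) - H(X,C,Z) - H(Z)."""
    return entropy(x, z) + entropy(c, z) - entropy(x, c, z) - entropy(z)


def standin_df_update(df_old: float, x, c, z, relevance_x: float) -> float:
    """HYPOTHETICAL stand-in for Equation (36), which is not reproduced here.
    Adds I(fi;C|fj) - I(fi;C) for the newly selected fj (given as z):
    positive if fj makes fi more informative, negative if fj is redundant."""
    return df_old + cond_mutual_info(x, c, z) - relevance_x


def cmifsi_sketch(X: Dict[str, List[int]], c: List[int], K: int) -> List[str]:
    F = list(X)                                        # candidate features
    relevance = {f: mutual_info(X[f], c) for f in F}   # steps 2-4: I(fi;C)
    df = {f: 0.0 for f in F}                           # step 1: DF(fi, S) = 0
    S: List[str] = []                                  # step 1: S is empty
    while len(S) < K and F:                            # step 5 (k == len(S))
        if S:                                          # steps 6-7: fold in latest pick
            fj = S[-1]
            for f in F:
                df[f] = standin_df_update(df[f], X[f], c, X[fj], relevance[f])
        # steps 8 and 10: JCMI(fi) = I(fi;C) + DF(fi,S); ties go to the first in F
        best = max(F, key=lambda f: relevance[f] + df[f])
        S.append(best)                                 # S = S ∪ {fj}
        F.remove(best)                                 # F = F − {fj}
    return S


if __name__ == "__main__":
    X = {"a": [0, 0, 1, 1], "b": [0, 1, 0, 1], "n": [0, 0, 0, 0]}
    c = [0, 1, 1, 0]  # c = a XOR b, so a and b only matter jointly
    print(cmifsi_sketch(X, c, K=2))  # ['a', 'b']
```

On this toy XOR data, neither a nor b is informative alone, so the first pick is a tie broken by order. Once a is selected, the stand-in DF term raises b by I(b;C|a) = 1 bit, while the constant feature n stays at 0. A relevance-only ranking would miss this interaction. For this additive stand-in, updating DF with only the latest selected feature gives the same result as re-summing over all of S.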

6. Experiments and Results

6.1. Experiment Setup

6.2. Experiment on Synthetic Datasets

6.2.1. Synthetic Datasets

6.2.2. Results on Synthetic Datasets

6.3. Experiment on Benchmark Datasets

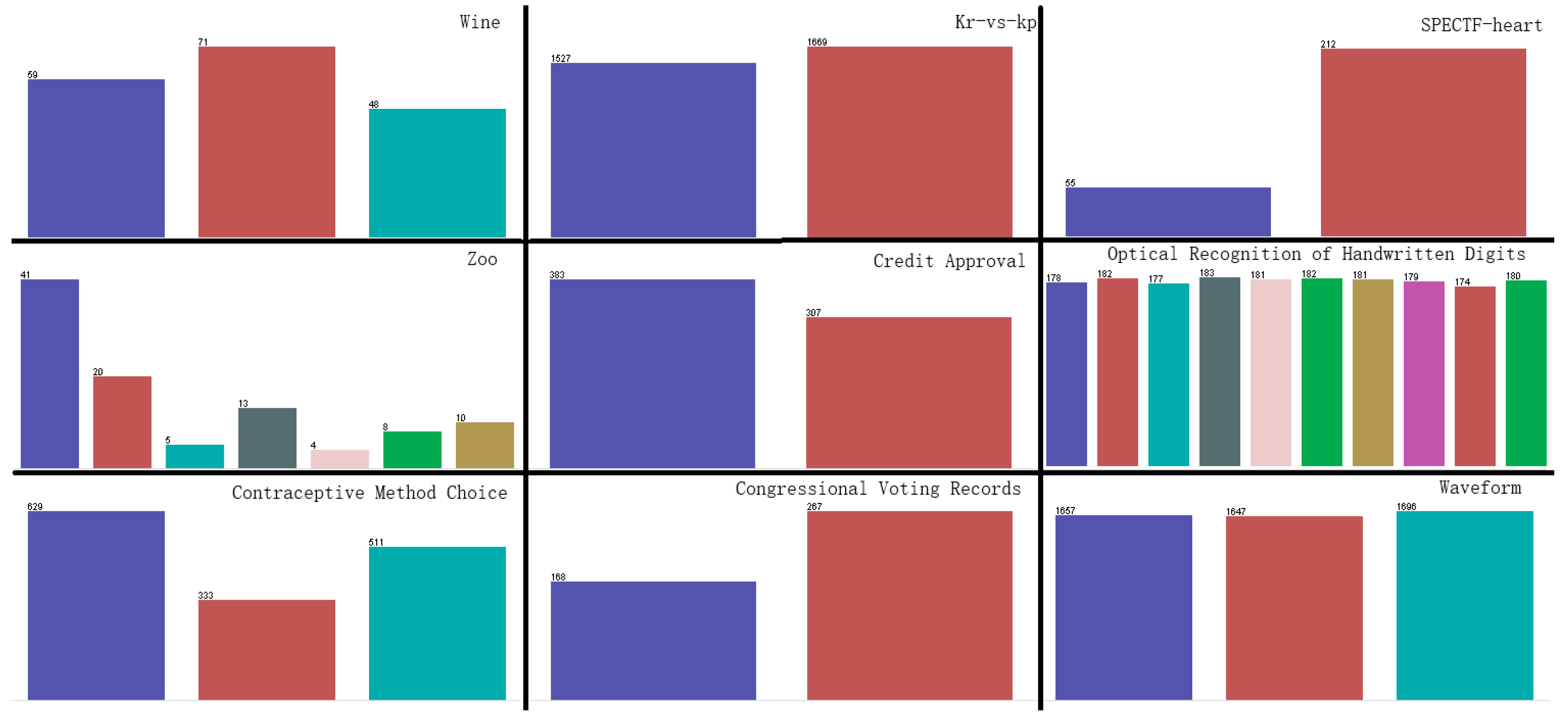

6.3.1. Benchmark Datasets

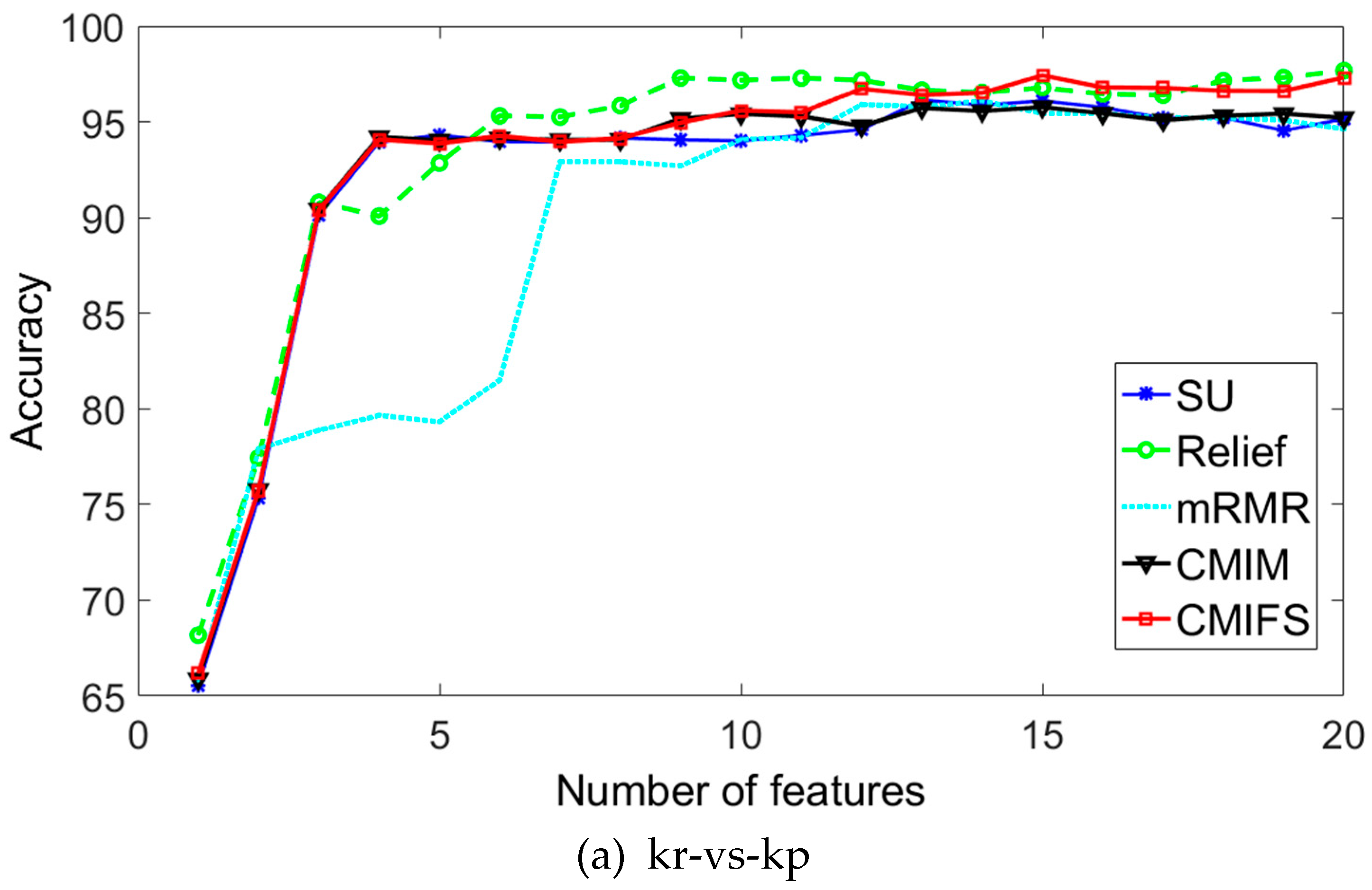

6.3.2. Results on Benchmark Datasets

7. Discussion and Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Guyon, I.; Elisseeff, A. An Introduction to Variable and Feature Selection. J. Mach. Learn. Res. 2002, 3, 1157–1182.
- Maldonado, S.; Weber, R. A wrapper method for feature selection using support vector machines. Inf. Sci. 2009, 179, 2208–2217.
- Bolón-Canedo, V.; Sánchez-Maroño, N.; Alonso-Betanzos, A. Recent advances and emerging challenges of feature selection in the context of big data. Knowl.-Based Syst. 2015, 86, 33–45.
- Dash, M.; Liu, H. Feature Selection for Classification. Intell. Data Anal. 1997, 1, 131–156.
- Jakulin, A.; Bratko, I. Testing the Significance of Attribute Interactions. In Proceedings of the Twenty-First International Conference on Machine Learning, Banff, AB, Canada, 4–8 July 2004.
- Zhao, Z.; Liu, H. Searching for interacting features in subset selection. Intell. Data Anal. 2009, 13, 207–228.
- Zeng, Z.; Zhang, H.; Zhang, R.; Yin, C. A novel feature selection method considering feature interaction. Pattern Recognit. 2015, 48, 2656–2666.
- Tao, H.; Hou, C.; Nie, F.; Jiao, Y.; Yi, D. Effective Discriminative Feature Selection with Nontrivial Solution. IEEE Trans. Neural Netw. Learn. Syst. 2016, 27, 3013–3017.
- Murthy, C.A.; Chanda, B. Generation of Compound Features Based on Feature Interaction for Classification. Expert Syst. Appl. 2018, 108, 61–73.
- Chang, X.; Ma, Z.; Lin, M.; Yang, Y.; Hauptmann, A.G. Feature Interaction Augmented Sparse Learning for Fast Kinect Motion Detection. IEEE Trans. Image Process. 2017, 26, 3911–3920.
- Yin, Y.; Zhao, Y.; Zhang, B.; Li, C.; Guo, S. Enhancing ELM by Markov Boundary Based Feature Selection. Neurocomputing 2017, 261, 57–69.
- Brown, G.; Pocock, A.; Zhao, M.J.; Luján, M. Conditional Likelihood Maximisation: A Unifying Framework for Information Theoretic Feature Selection. J. Mach. Learn. Res. 2012, 13, 27–66.
- Lewis, D.D. Feature Selection and Feature Extraction for Text Categorization. In Proceedings of the Workshop on Speech and Natural Language, New York, NY, USA, 23–26 February 1992; pp. 212–217.
- Press, W. Numerical Recipes in FORTRAN: The Art of Scientific Computing. Available online: https://doi.org/10.5860/choice.30-5638a (accessed on 29 May 2019).
- Battiti, R. Using mutual information for selecting features in supervised neural net learning. IEEE Trans. Neural Netw. 1994, 5, 537–550.
- Peng, H.; Long, F.; Ding, C. Feature selection based on mutual information: Criteria of max-dependency, max-relevance, and min-redundancy. IEEE Trans. Pattern Anal. Mach. Intell. 2005, 27, 1226–1238.
- Yang, H.H.; Moody, J. Data visualization and feature selection: New algorithms for non-Gaussian data. In Advances in Neural Information Processing Systems; 2000; pp. 687–693. Available online: https://www.researchgate.net/publication/2460722 (accessed on 29 May 2019).
- Fleuret, F. Fast Binary Feature Selection with Conditional Mutual Information. J. Mach. Learn. Res. 2004, 5, 1531–1555.
- Yu, L.; Liu, H. Efficient Feature Selection via Analysis of Relevance and Redundancy. J. Mach. Learn. Res. 2004, 5, 1205–1224.
- Tesmer, M.; Estevez, P.A. AMIFS: Adaptive feature selection by using mutual information. In Proceedings of the 2004 IEEE International Joint Conference on Neural Networks, Budapest, Hungary, 25–29 July 2004; pp. 303–308.
- Cheng, G.; Qin, Z.; Feng, C.; Wang, Y.; Li, F. Conditional Mutual Information-Based Feature Selection Analyzing for Synergy and Redundancy. ETRI J. 2011, 33, 210–218.
- John, G.H.; Kohavi, R.; Pfleger, K. Irrelevant Features and the Subset Selection Problem. In Machine Learning Proceedings 1994, New Brunswick, NJ, USA, 10–13 July 1994; pp. 121–129.
- Wang, G.; Lochovsky, F.H. Feature selection with conditional mutual information maximin in text categorization. In Proceedings of the 13th ACM International Conference on Information and Knowledge Management, Washington, DC, USA, 8–13 November 2004; pp. 342–349.
- Kira, K.; Rendell, L.A. A Practical Approach to Feature Selection. In Proceedings of the Ninth International Workshop on Machine Learning, Aberdeen, Scotland, 1–3 July 1992; pp. 249–256.
- Hall, M.A. Correlation-based Feature Selection for Discrete and Numeric Class Machine Learning. In Proceedings of the Seventeenth International Conference on Machine Learning, Stanford, CA, USA, 29 June–2 July 2000; pp. 359–366.
- UCI Repository of Machine Learning Databases. Available online: http://www.ics.uci.edu/~mlearn/MLRepository.html (accessed on 29 May 2019).
- Liu, H.; Sun, J.; Liu, L.; Zhang, H. Feature selection with dynamic mutual information. Pattern Recognit. 2009, 42, 1330–1339.

| Dataset | Relevant Features | Interactive Features |
|---|---|---|
| Data1 | a1,a5,a6 | (a1,a6) |
| Data2 | a0,a1,a5,a6,a8 | (a0,a1,a5),(a0,a6,a8) |
| Data3 | a1,a5,a6,a8 | (a1,a6,a8) |
| Data4 | a2,a8,a12,a13 | (a2,a8,a12) |
| Data5 | a0,a1,a5,a6 | (a1,a5),(a0,a6) |

| Algorithm | Data1 | Data2 |
|---|---|---|
| GA | a1,a2,a5,a0,a6,a9,a4,a7,a3,a8 | a8,a0,a6,a5,a7,a3,a2,a1,a9,a4 |
| SU | a1,a5,a2,a0,a7,a9,a8,a4,a3,a6 | a8,a0,a6,a7,a1,a5,a4,a3,a2,a9 |
| Relief | a1,a5,a6,a2,a7,a7,a4,a9,a0,a3* | a8,a0,a6,a1,a5,a7,a2,a3,a4,a9* |
| CFS | a1,a2,a5 | a0,a6,a7,a8 |
| MRMR | a1,a0,a7,a8,a6,a5,a2,a9,a4,a3 | a8,a6,a5,a3,a2,a0,a7,a1,a9,a4 |
| CMIM | a1,a6,a5,a2,a7,a9,a0,a8,a3,a4* | a8,a0,a6,a5,a7,a1,a4,a3,a9,a2 |
| CMIFSI | a1,a6,a5,a4,a2,a9,a7,a8,a3,a0* | a8,a0,a1,a6,a5,a7,a4,a2,a9,a3* |
| Optimal subset | a1,a5,a6 | a0,a1,a5,a6,a8 |

| Algorithm | Data3 | Data4 |
|---|---|---|
| GA | a5,a6,a9,a1,a3,a2,a0,a7,a8,a4 | a2,a13,a11,a8,a1,a14,a5,a12,a0,a6,a10,a7,a3,a4,a9 |
| SU | a5,a1,a6,a7,a0,a3,a4,a9,a8,a2 | a2,a8,a13,a10,a6,a11,a0,a1,a14,a4,a7,a5,a3,a9,a12 |
| Relief | a5,a1,a6,a2,a0,a9,a4,a8,a7,a3 | a2,a8,a13,a11,a14,a10,a7,a4,a12,a1,a6,a3,a5,a0,a9 |
| CFS | a0,a1,a5,a7 | a0,a2,a8,a10,a11,a13,a14 |
| MRMR | a5,a6,a3,a2,a9,a1,a0,a4,a7,a8 | a2,a13,a11,a1,a8,a7,a5,a12,a10,a6,a0,a14,a4,a3,a9 |
| CMIM | a5,a6,a1,a0,a7,a2,a4,a8,a9,a3 | a2,a8,a13,a4,a10,a1,a0,a14,a11,a6,a12,a3,a5,a7,a9 |
| CMIFSI | a5,a6,a1,a8,a2,a4,a0,a9,a7,a3* | a2,a8,a13,a4,a12,a11,a5,a1,a14,a10,a3,a0,a6,a9,a7 |
| Optimal subset | a1,a5,a6,a8 | a2,a8,a12,a13 |

| Algorithm | Data5 |
|---|---|
| GA | a1,a6,a9,a5,a3,a2,a8,a4,a0,a7 |
| SU | a1,a5,a6,a2,a9,a0,a7,a3,a4,a8 |
| Relief | a5,a1,a6,a2,a9,a4,a8,a0,a7,a3 |
| CFS | a6,a2,a1,a5 |
| MRMR | a1,a6,a2,a9,a5,a3,a0,a8,a7,a4 |
| CMIM | a5,a6,a1,a9,a0,a2,a4,a7,a8,a3 |
| CMIFSI | a5,a6,a1,a2,a0,a9,a4,a8,a7,a3 |
| Optimal subset | a0,a1,a5,a6 |
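
The source does not define the asterisk in the ranking tables. In every Data column, however, the starred rankings are exactly those whose first |optimal subset| positions coincide with the optimal subset, ignoring order. The short check below encodes that reading for the Data1 column. Both the interpretation and the helper names (`parse_ranking`, `recovers_optimal`) are assumptions for illustration. CFS is omitted because it returns a subset rather than a full ranking.

```python
from typing import List


def parse_ranking(cell: str) -> List[str]:
    """Split a ranking cell such as 'a1,a6,a5,...,a0*' into names, dropping the '*' marker."""
    return [tok.strip().rstrip("*") for tok in cell.split(",")]


def recovers_optimal(ranking: List[str], optimal: List[str]) -> bool:
    """True when the first len(optimal) ranked features are exactly the optimal subset (order ignored)."""
    k = len(optimal)
    return set(ranking[:k]) == set(optimal)


# Data1 column of the ranking table, copied verbatim (assumed reading of '*').
data1 = {
    "GA": "a1,a2,a5,a0,a6,a9,a4,a7,a3,a8",
    "SU": "a1,a5,a2,a0,a7,a9,a8,a4,a3,a6",
    "Relief": "a1,a5,a6,a2,a7,a7,a4,a9,a0,a3*",
    "MRMR": "a1,a0,a7,a8,a6,a5,a2,a9,a4,a3",
    "CMIM": "a1,a6,a5,a2,a7,a9,a0,a8,a3,a4*",
    "CMIFSI": "a1,a6,a5,a4,a2,a9,a7,a8,a3,a0*",
}
optimal = parse_ranking("a1,a5,a6")

if __name__ == "__main__":
    for name, cell in data1.items():
        # Prints the method, whether its top-3 equals the optimal subset, and whether it is starred.
        print(name, recovers_optimal(parse_ranking(cell), optimal), cell.endswith("*"))
```

The same check, applied with top-5 for Data2 and top-4 for Data3 to Data5, agrees with the placement of every asterisk in the tables.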

| No. | Dataset | Features | Instances | Classes |
|---|---|---|---|---|
| 1 | Wine | 13 | 178 | 3 |
| 2 | Kr-vs-kp | 36 | 3196 | 2 |
| 3 | SPECTF-heart | 44 | 267 | 2 |
| 4 | Zoo | 16 | 101 | 7 |
| 5 | Credit Approval | 15 | 690 | 2 |
| 6 | Optical Recognition of Handwritten Digits | 64 | 1797 | 10 |
| 7 | Contraceptive Method Choice | 9 | 1473 | 3 |
| 8 | Congressional Voting Records | 16 | 435 | 2 |
| 9 | Waveform | 21 | 5000 | 3 |
| 10 | Waveform+noise | 40 | 5000 | 3 |

C4.5: number of selected features

| No. | Total | GA | SU | Relief | CFS | MRMR | CMIM | CMIFSI | Ave. |
|---|---|---|---|---|---|---|---|---|---|
| 1 | 13 | 6 | 3 | 5 | 11 | 3 | 3 | 3 | 4.86 |
| 2 | 36 | 15 | 13 | 15 | 7 | 14 | 19 | 19 | 14.57 |
| 3 | 44 | 3 | 2 | 1 | 21 | 1 | 2 | 3 | 4.71 |
| 4 | 16 | 10 | 14 | 9 | 9 | 10 | 4 | 4 | 8.57 |
| 5 | 15 | 8 | 6 | 9 | 6 | 4 | 3 | 7 | 6.14 |
| 6 | 64 | 20 | 15 | 20 | 38 | 17 | 20 | 20 | 21.43 |
| 7 | 9 | 8 | 7 | 7 | 8 | 8 | 7 | 4 | 7.0 |
| 8 | 16 | 12 | 13 | 9 | 5 | 12 | 10 | 6 | 9.57 |
| 9 | 21 | 17 | 17 | 16 | 16 | 17 | 16 | 9 | 15.43 |
| 10 | 40 | 16 | 13 | 11 | 15 | 16 | 11 | 13 | 13.57 |
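
In the selected-feature tables, the Ave. column appears to be the mean over the seven selection methods, GA through CMIFSI, with the Total column excluded. The arithmetic below reproduces it for the first three C4.5 rows. The variable and function names are illustrative, not from the source.

```python
from statistics import mean
from typing import Dict, List

# Selected-feature counts for C4.5, in column order GA, SU, Relief, CFS, MRMR, CMIM, CMIFSI.
# The "Total" column (all features in the dataset) is deliberately left out.
c45_counts: Dict[int, List[int]] = {
    1: [6, 3, 5, 11, 3, 3, 3],
    2: [15, 13, 15, 7, 14, 19, 19],
    3: [3, 2, 1, 21, 1, 2, 3],
}


def ave(counts: List[int]) -> float:
    """Mean over the seven methods, rounded to two decimals as in the table."""
    return round(mean(counts), 2)


if __name__ == "__main__":
    for no, counts in c45_counts.items():
        print(no, ave(counts))  # 4.86, 14.57, 4.71 for rows 1-3
```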

SVM: number of selected features

| No. | Total | GA | SU | Relief | CFS | MRMR | CMIM | CMIFSI | Ave. |
|---|---|---|---|---|---|---|---|---|---|
| 1 | 13 | 2 | 5 | 8 | 11 | 9 | 6 | 8 | 7.0 |
| 2 | 36 | 13 | 13 | 20 | 7 | 12 | 15 | 15 | 13.57 |
| 3 | 44 | 15 | 18 | 5 | 21 | 14 | 8 | 19 | 14.29 |
| 4 | 16 | 9 | 9 | 8 | 9 | 7 | 9 | 5 | 8.0 |
| 5 | 15 | 6 | 5 | 1 | 6 | 6 | 4 | 3 | 4.43 |
| 6 | 64 | 20 | 19 | 13 | 38 | 17 | 11 | 11 | 18.43 |
| 7 | 9 | 8 | 5 | 5 | 8 | 9 | 3 | 7 | 6.43 |
| 8 | 16 | 6 | 2 | 1 | 5 | 3 | 5 | 4 | 3.71 |
| 9 | 21 | 19 | 19 | 18 | 16 | 18 | 20 | 19 | 18.43 |
| 10 | 40 | 20 | 20 | 19 | 15 | 18 | 17 | 16 | 18.29 |

C4.5: classification accuracy (%)

| No. | Unselected | GA | SU | Relief | CFS | MRMR | CMIM | CMIFSI |
|---|---|---|---|---|---|---|---|---|
| 1 | 93.80 | 92.67 | 96.07 | 94.38 | 93.80 | 97.19* | 97.19* | 97.19* |
| 2 | 99.31* | 95.48 | 96.62 | 97.70 | 94.09 | 96.65 | 96.53 | 97.74 |
| 3 | 74.90 | 79.40 | 79.78 | 79.40 | 77.90 | 79.40 | 79.78 | 80.15* |
| 4 | 92.08 | 95.05* | 95.05* | 95.05* | 93.07 | 95.05* | 94.06 | 94.06 |
| 5 | 84.93 | 86.24 | 86.09 | 86.81 | 86.81 | 86.96* | 85.65 | 85.94 |
| 6 | 87.42 | 86.16 | 87.48* | 86.94 | 87.42 | 87.20 | 86.92 | 87.26 |
| 7 | 53.22 | 55.53 | 55.53 | 55.53 | 54.58 | 45.96 | 55.53 | 56.21* |
| 8 | 96.32 | 95.86 | 96.32 | 96.32 | 94.94 | 96.78* | 96.32 | 96.32 |
| 9 | 75.94 | 76.82 | 77.04 | 76.92 | 76.76 | 77.04 | 77.16 | 77.22* |
| 10 | 75.08 | 77.40 | 77.82 | 77.92* | 77.30 | 76.58 | 77.82 | 77.82 |
| Ave. | 83.30 | 84.06 | 84.78 | 84.71 | 83.67 | 83.88 | 84.70 | 84.99 |
| W/T/L | 1/1/8 | 2/0/8 | 3/2/5 | 3/2/5 | 2/0/8 | 3/1/6 | 0/4/6 | |

SVM: classification accuracy (%)

| No. | Unselected | GA | SU | Relief | CFS | MRMR | CMIM | CMIFSI |
|---|---|---|---|---|---|---|---|---|
| 1 | 94.44 | 97.85 | 97.22 | 98.89 | 95.56 | 98.89 | 98.33 | 99.40* |
| 2 | 94.91 | 95.03 | 95.68 | 97.80* | 94.87 | 96.21 | 95.03 | 96.94 |
| 3 | 79.78 | 82.57 | 83.70 | 83.33 | 81.48 | 82.22 | 82.59 | 84.81* |
| 4 | 93.07 | 97.27 | 94.54 | 96.36 | 88.18 | 97.27 | 98.18* | 97.27 |
| 5 | 85.51 | 87.79 | 88.10 | 87.82 | 88.50 | 87.39 | 87.10 | 88.55* |
| 6 | 95.11* | 88.28 | 90.78 | 88.39 | 88.28 | 89.44 | 88.00 | 88.00 |
| 7 | 51.28 | 53.85 | 54.32 | 53.85 | 54.79 | 50.81 | 54.46 | 55.34* |
| 8 | 96.09 | 94.31 | 95.90 | 95.45 | 93.86 | 96.59 | 96.59 | 97.27* |
| 9 | 84.08 | 85.59 | 86.22 | 85.70 | 85.20 | 86.12 | 86.56* | 86.24 |
| 10 | 86.02 | 86.12 | 86.18 | 86.12 | 85.50 | 86.42* | 85.82 | 86.18 |
| Ave. | 86.03 | 86.87 | 87.26 | 87.37 | 85.62 | 87.14 | 87.27 | 88.00 |
| W/T/L | 1/0/9 | 1/1/8 | 1/1/8 | 1/0/9 | 1/0/9 | 2/1/7 | 2/2/6 | |

C4.5: accuracy intervals (%)

| No. | Unselected | GA | SU | Relief | CFS | MRMR | CMIM | CMIFSI |
|---|---|---|---|---|---|---|---|---|
| 1 | [93.41,94.35] | [92.04,92.96] | [95.73,96.70] | [93.50,94.46] | [93.20,94.16] | [96.77,97.70] | [96.53,97.70] | [96.84,97.86] |
| 2 | [98.62,99.58] | [94.92,95.81] | [95.95,97.00] | [97.55,98.50] | [93.49,94.36] | [95.59,96.62] | [95.81,96.76] | [97.68,98.66] |
| 3 | [74.45,75.44] | [78.76,79.71] | [79.84,80.90] | [79.10,80.08] | [77.59,78.66] | [78.78,79.80] | [77.96,79.80] | [79.68,80.65] |
| 4 | [91.92,92.86] | [94.48,95.46] | [94.55,95.55] | [94.68,95.59] | [92.53,93.52] | [94.19,95.23] | [93.58,94.61] | [93.56,94.51] |
| 5 | [84.03,85.05] | [85.80,86.73] | [85.58,86.40] | [86.50,87.55] | [86.04,87.17] | [86.84,87.72] | [85.13,86.10] | [85.14,86.00] |
| 6 | [86.87,87.73] | [85.57,86.60] | [87.20,88.09] | [86.40,87.49] | [87.05,87.92] | [86.71,87.74] | [86.53,87.44] | [86.36,87.40] |
| 7 | [52.47,53.93] | [54.29,55.75] | [55.06,56.35] | [55.13,56.60] | [53.82,55.66] | [45.45,46.71] | [53.85,55.38] | [55.34,56.33] |
| 8 | [96.32,96.74] | [95.56,96.37] | [95.81,96.72] | [95.56,96.38] | [94.08,94.87] | [96.16,96.95] | [96.06,96.87] | [96.16,97.05] |
| 9 | [75.19,76.16] | [76.63,77.52] | [76.67,77.56] | [76.58,77.51] | [76.33,77.30] | [76.51,77.47] | [76.45,77.31] | [77.09,78.09] |
| 10 | [74.58,75.58] | [77.01,77.97] | [76.92,77.70] | [77.86,78.77] | [76.97,77.86] | [75.96,76.91] | [77.10,78.06] | [77.27,78.15] |

SVM: accuracy intervals (%)

| No. | Unselected | GA | SU | Relief | CFS | MRMR | CMIM | CMIFSI |
|---|---|---|---|---|---|---|---|---|
| 1 | [93.95,94.87] | [97.71,98.52] | [96.80,97.64] | [98.59,99.35] | [95.14,95.95] | [98.48,99.27] | [97.61,98.36] | [98.47,99.52] |
| 2 | [94.40,95.27] | [94.81,95.60] | [95.14,95.92] | [97.50,98.32] | [94.22,94.96] | [95.35,96.23] | [94.46,95.31] | [96.83,97.60] |
| 3 | [78.68,79.49] | [82.39,83.31] | [83.05,83.84] | [82.94,83.73] | [80.81,81.55] | [81.98,82.88] | [82.50,83.39] | [84.32,85.13] |
| 4 | [92.38,93.33] | [96.71,97.42] | [94.31,95.20] | [95.56,96.35] | [87.56,88.47] | [96.77,97.61] | [96.78,98.55] | [96.88,97.77] |
| 5 | [85.26,86.08] | [87.52,88.27] | [87.58,88.46] | [87.01,87.85] | [88.22,89.14] | [86.77,87.52] | [86.49,87.38] | [88.56,89.40] |
| 6 | [94.68,95.60] | [87.69,88.44] | [90.39,91.22] | [87.87,88.77] | [87.88,88.65] | [89.07,89.98] | [87.66,88.46] | [87.20,88.03] |
| 7 | [51.24,52.02] | [53.41,54.22] | [53.74,54.59] | [53.63,54.46] | [54.34,55.17] | [50.53,51.38] | [54.02,54.79] | [55.18,55.98] |
| 8 | [95.82,96.60] | [93.64,94.47] | [95.34,96.21] | [95.30,96.18] | [93.60,94.38] | [96.13,96.97] | [96.37,97.23] | [96.53,97.28] |
| 9 | [83.79,84.59] | [85.13,86.00] | [85.43,86.40] | [85.06,85.80] | [84.89,85.80] | [85.77,86.63] | [86.18,86.98] | [86.10,86.90] |
| 10 | [85.69,86.58] | [86.33,87.13] | [86.68,87.60] | [87.45,88.21] | [84.86,85.69] | [86.94,87.77] | [86.80,87.62] | [87.71,88.56] |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Liang, J.; Hou, L.; Luan, Z.; Huang, W. Feature Selection with Conditional Mutual Information Considering Feature Interaction. Symmetry 2019, 11, 858. https://doi.org/10.3390/sym11070858
