Abstract

In this paper, a novel fractional-order fusion model (FFM) is presented for low-light image enhancement. Existing image enhancement methods do not adequately extract content from low-light areas, suppress noise, or preserve naturalness. To address these problems, the main contributions of this paper are the use of a fractional-order mask and a fusion framework to enhance low-light images. First, the fractional mask is used to extract the illumination from the input image. Second, the image exposure is adjusted to make the dark regions visible. Finally, the fusion approach is adopted to extract more hidden content from dim areas. According to the experimental results, the fractional-order differential preserves the visual appearance better than traditional integer-order methods. The FFM works well for images with both complex and normal low-light conditions. It also achieves a trade-off among contrast improvement, detail enhancement, and preservation of the natural feel of the image. Experimental results show that the proposed model achieves promising results and extracts more invisible content in dark areas. Qualitative and quantitative comparisons with several recent state-of-the-art algorithms show that the proposed model is robust and efficient.

1. Introduction

People receive most of their daily information in the form of audio and images, and the human mind interprets and processes visual information efficiently. Images captured with digital devices may be degraded by various conditions such as poor lighting, weather, and noise. Low-illumination images take different forms: for example, one side may be dark and the other bright, or a particular region may be affected by poor lighting. Hence, it is difficult for the human eye to extract hidden meaningful content from these images. Currently, enhancement techniques for images degraded by such conditions have a wide range of applications and a significant impact on the performance of high-precision computer vision systems, such as target tracking [1], object detection [2], and medical image analysis [3].

Numerous algorithms have been proposed to make hidden content visible. The simplest method, Histogram Equalization (HE) [4,5], addresses this problem by equalizing the histogram of the original image. Its disadvantage is that it only enhances contrast and does not take the real illumination into account, so the results are typically over-saturated or over-enhanced. It has been observed that inverted low-light images resemble hazy images. The techniques in [6,7] therefore invert the original image and apply a dehazing strategy to enhance it. However, these methods produce unnatural-looking results due to the lack of physical theory support. The most widely used theory for image enhancement is the Retinex theory (RT) [8], according to which an image can be viewed as the product of reflectance and illumination. Retinex-based methods use a variety of priors to estimate the illumination, and then adjust the illumination to enhance the low-light image [9,10,11,12]. Naturalness preserved enhancement (NPE) [12] uses a bright-pass filter to decompose the original image into illumination and reflectance. Cai et al. [9] introduced a model that constrains illumination and reflectance with shape, texture and illumination priors. The objective assessment of this method is good, but the results are still dark. Building on Retinex, Fu et al. [13] use an arc tangent transformation and histogram equalization to adjust the illumination, and then fuse the adjusted illuminations using two types of weights. However, the enhanced images risk over-exposure due to the inclusion of histogram equalization. Ying et al. [11] propose a Camera Response Model (CRM) to make the adjusted illumination more similar to camera exposure. More recently, various neural network methods [14,15,16,17] have emerged. Li et al. [17] use a Convolutional Neural Network to predict a well-exposed illumination map. However, the processed images have visible artifacts.

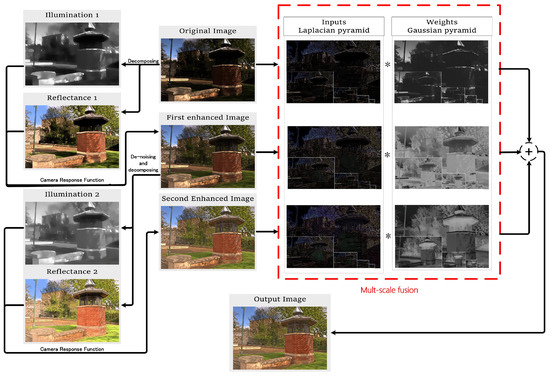

Although many algorithms process and extract hidden content in low-light images, several problems remain. Existing algorithms may not fully extract the dark content of the original image, may fail to produce good visual effects in low-illumination regions, and risk over-enhancement. We propose a fractional-order fusion model to address these problems. First, the fractional order is used to preserve the naturalness of the original image. Second, traditional methods use the gamma transformation for illumination adjustment, but the processed image is slightly distorted due to the lack of physical theory support [11]. Inspired by [11], an illumination adjustment strategy suited to this model is applied to get the desired results. Finally, the proposed model extracts more content in low-light regions by using the fusion framework. An intuitive way to extract more content is to treat the enhanced image as a new input and process it again. However, pixel-value errors in the low-light areas may be magnified and degrade the quality of the results. To address this issue, the proposed model applies a denoising strategy before the second image enhancement step, as shown in Figure 1. After the second enhancement step, the fusion process is applied to compensate for the loss of detail caused by the filtering while improving image brightness and avoiding over-enhancement.

Figure 1.

The flow chart of the fusion model.

Contribution: The main contributions of the proposed model are:

- In contrast to integer-order methods, we apply fractional calculus to process the original image directly, without a logarithmic transformation. This gives remarkable results in preserving the natural character of images.

- A novel fusion framework is introduced to extract more contents in the dark areas while preserving the visual appearance of images.

- The experimental results compared with other image enhancement algorithms show that the proposed model can reveal more hidden contents in dark regions of the images.

This paper is structured as follows: Section 2 is a brief introduction to fractional calculus and Retinex theory. The proposed model is elaborated in detail in Section 3. Section 4 describes the implementation of the algorithm. The qualitative and quantitative analysis is in Section 5, and Section 6 is related to the conclusions and future work.

2. Background

In this section, we introduce the fractional calculus and the Retinex theory briefly.

2.1. Fractional Calculus

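Fractional calculus was born in 1695, making it almost as old as classical calculus. Fractional calculus is widely used in signal processing and image processing because of strengths such as long-term memory, weak singularity, and non-locality [18,19,20]. The most commonly used fractional calculus definitions are Grünwald-Letnikov, Riemann–Liouville, and Caputo [21,22,23]. The Grünwald-Letnikov definition is used as follows:

$$
{}^{G\text{-}L}_{a}D^{v}_{x} f(x) = \lim_{N \to \infty} \left\{ \frac{\left(\frac{x-a}{N}\right)^{-v}}{\Gamma(-v)} \sum_{k=0}^{N-1} \frac{\Gamma(k-v)}{\Gamma(k+1)}\, f\!\left(x - k\,\frac{x-a}{N}\right) \right\}, \tag{1}
$$

where $[a, x]$ is the domain of $f(x)$, $v$ is an arbitrary real number that indicates the order of the derivative, and $\Gamma(\cdot)$ is the gamma function.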
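The Grünwald-Letnikov definition can be discretized on a uniform grid by truncating the sum to the available samples. The following is a minimal sketch of that discretization, not the implementation used in this paper; the function names `gl_coefficients` and `gl_derivative` and the unit grid spacing are assumptions made for illustration.

```python
import numpy as np

def gl_coefficients(v, n_terms):
    """Grünwald-Letnikov weights w_k = (-1)^k * binom(v, k).

    Computed with the recurrence w_0 = 1, w_k = w_{k-1} * (k - 1 - v) / k,
    which avoids evaluating gamma functions directly.
    """
    w = np.empty(n_terms)
    w[0] = 1.0
    for k in range(1, n_terms):
        w[k] = w[k - 1] * (k - 1 - v) / k
    return w

def gl_derivative(f, v, h=1.0):
    """Approximate the order-v derivative of a uniformly sampled signal f.

    out[i] = h^(-v) * sum_k w[k] * f[i - k], using only samples at or
    to the left of position i (samples before index 0 are treated as absent).
    """
    f = np.asarray(f, dtype=float)
    w = gl_coefficients(v, len(f))
    out = np.array([np.dot(w[: i + 1], f[i::-1]) for i in range(len(f))])
    return out / h**v
```

For v = 1 the weights reduce to (1, -1, 0, ...), i.e., the ordinary backward difference; for non-integer v every earlier sample contributes with a slowly decaying weight, which is the non-local, long-memory behavior mentioned above.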

2.2. Retinex Theory

The Retinex theory (RT) [8] was proposed by Edwin Land to explain the human color vision system. The basic formula of Retinex is as follows:

$$
S = R \cdot L, \tag{2}
$$

where S is the observed image, R is the reflectance, and L is the illumination. The reflectance represents the intrinsic characteristics of the object, and the illumination represents the extrinsic property. The operator · indicates element-wise multiplication. Furthermore, RT has been used with slight modifications to achieve good quality results [24].

The previously proposed fractional-order models [25] processed the log-transformed image, i.e., $s = r + l$, where s, r and l are $\log(S)$, $\log(R)$ and $\log(L)$, respectively. However, according to [26], it is not appropriate to process the log-transformed image. The important textures and edges in high-magnitude stimuli areas may be masked by noise and irrelevant details in low-illumination areas when taking the derivative. This is because $\nabla \log(x) = \frac{1}{x} \nabla x$: when x is very small, the weight $\frac{1}{x}$ becomes very large, so $\nabla \log(x)$ is dominated by $\frac{1}{x}$. This problem is solved in this paper by processing the original image directly.
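The effect of the logarithm on gradients can be checked numerically. The sketch below compares the same small intensity step in a dark and in a bright region; the helper name `log_gradient_gain` and the sample intensities are assumptions chosen only for illustration.

```python
import numpy as np

def log_gradient_gain(level, step):
    """Ratio between a finite difference in the log domain and the same
    finite difference in the linear domain, for an intensity step
    from `level` to `level + step`. For small steps this approaches 1 / level.
    """
    linear_diff = step
    log_diff = np.log(level + step) - np.log(level)
    return log_diff / linear_diff

# The same step of 0.01 is amplified far more in a dark region than in a bright one.
gain_dark = log_gradient_gain(0.02, 0.01)
gain_bright = log_gradient_gain(0.80, 0.01)
```

Because the amplification grows as the intensity shrinks, noise in dark areas can dominate a log-domain gradient term, which is the motivation for working on the original image.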

The Retinex-based algorithms get L and R from S through various priors, and then adjust L and R to get the final enhanced results.
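As a concrete illustration of the decomposition S = R · L, the sketch below uses a deliberately crude illumination estimate (the per-pixel maximum over the color channels) and recovers the reflectance by element-wise division. This is an assumption made for illustration only and is not the prior-based solver proposed in this paper; the names `decompose` and `eps` are hypothetical.

```python
import numpy as np

def decompose(S, eps=1e-6):
    """Split a float RGB image S in [0, 1], shape (H, W, 3), into R and L.

    L is a single-channel illumination map shared by the three color
    channels; here it is simply the per-pixel maximum over channels
    (an illustrative choice). R is then obtained from S = R * L.
    """
    L = S.max(axis=2, keepdims=True)   # shape (H, W, 1), so L >= S everywhere
    R = S / (L + eps)                  # eps avoids division by zero in black pixels
    return R, L
```

With this choice the reflectance stays in the unit interval and the illumination is never smaller than the observed image, which matches the constraints that Retinex-based priors usually impose.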

3. Fractional-Order Fusion Model Based On Retinex

In this section, we present the step by step explanation of the proposed model. The model steps are:

3.1. Reflectance and Illumination Based On Fractional-Order

3.1.1. Reflectance

According to [12], the reflectance is piece-wise continuous and contains fine textures. The traditional methods use integer-order differential to constrain reflectance, such as , . However, using fractional calculus gets better effects on preserving texture details and suppressing noise according to Section 5.1. The energy function is modeled as:

where designates norms, and , are fractional parameters.

3.1.2. Illumination

The illumination priors are as follows:

- For color images, the three color channels (R, G, B) share the same illumination map [27].

- Since R is restricted to the unit interval, Equation (2) implies that $L \geq S$.

- L and S should be close enough [10].

- L should contain the structural information of the image and remove the texture details [9,27].

To express the first three priors, we design the following formula:

where designates norms, and is the initial illumination map.

where x is the pixel of interest. donates the pixels’ neighboring x. c represents three color channels.

The 4th illumination prior indicates that we should simultaneously preserve the overall structure and smooth the textural details. The proposed illumination constraint is:

where is a small number to avoid division by zero. means Gaussian filtering. In the texture detail areas, is small compared to . This is because Gaussian filtering leads to loss of details in these areas, so . In the structural detail areas, the patch around L changes in accordance, and are very close, so .

3.1.3. The Energy Function

The total energy function can be obtained by combining the illumination priors, reflection priors, and the fidelity term. The optimization goal is as follows:

where , , and are the coefficients to balance the involved four terms. The fidelity term is to ensure that the image () and the original image are very close. By solving , we can get L and R that match our priors.

3.1.4. Adjust Illumination

Once the reflectance (R) and illumination (L) are solved, the next step is to adjust the illumination. Traditional methods use the gamma transformation to adjust the illumination. The equation is defined as:

$$
S' = R \cdot L', \qquad L' = L^{1/\gamma}, \tag{8}
$$

where $S'$ is the enhanced image, $L'$ is the adjusted illumination, and $\gamma$ is an empirical parameter; $\gamma = 2.2$ in general.
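A minimal sketch of the gamma-based adjustment is given below. It assumes the common form in which the illumination is raised to the power 1/gamma and then recombined with the reflectance; the default gamma = 2.2 is a widely used value and is an assumption here, as is the function name `gamma_adjust`.

```python
import numpy as np

def gamma_adjust(R, L, gamma=2.2):
    """Brighten an image by gamma-correcting its illumination.

    R and L are the reflectance and illumination (values in [0, 1]).
    Since 1 / gamma < 1, dark illumination values are lifted the most,
    while L = 1 is left unchanged.
    """
    L_adjusted = np.power(L, 1.0 / gamma)
    return R * L_adjusted
```

Because the exponent is fixed for the whole image, the curve has no link to how a camera responds to exposure, which is one reason the result can look dim or slightly distorted.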

Recently, Ying et al. [11] proposed a camera response function (CRF) to adjust the illumination, inspired by the response characteristics of cameras. The equation is defined as:

$$
g = e^{\,b\,(1 - k^{a})}\, f^{\,k^{a}}, \tag{9}
$$

where k is the exposure ratio, f is the input image, and g is the enhanced image; a and b are constants, with $a = -0.3293$ and $b = 1.1258$ in [11].
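The camera-response-based adjustment can be sketched as a brightness transform that maps an input image and an exposure ratio to a re-exposed image. The form below, g = exp(b(1 - k^a)) · f^(k^a), and the default constants are the ones commonly quoted for the model of [11]; they are assumptions here and are not the re-tuned parameters of the proposed model. The function name `brightness_transform` is hypothetical.

```python
import numpy as np

def brightness_transform(f, k, a=-0.3293, b=1.1258):
    """Re-expose image f (values in (0, 1]) by exposure ratio k.

    With a < 0, an exposure ratio k > 1 gives an exponent k**a < 1 and a
    gain exp(b * (1 - k**a)) > 1, so the image is brightened; k = 1
    returns the input unchanged. Outputs may exceed 1 and should be
    clipped by the caller before display.
    """
    exponent = np.power(k, a)
    gain = np.exp(b * (1.0 - exponent))
    return gain * np.power(f, exponent)
```

In a Retinex setting, k may also be a per-pixel map rather than a scalar, in which case NumPy broadcasting applies the transform element-wise.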

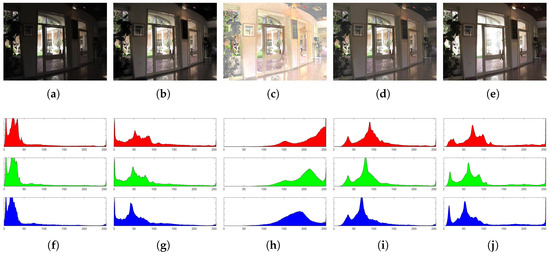

Since the prior conditions of the proposed model are different from those of [11], the parameters a and b of [11] should not be applied to the proposed model directly. As shown in Figure 2, (a) and (e) are a pair of images that differ only in exposure, and (b) and (c) are the results of the gamma transformation and the original CRF, respectively. The result of the gamma transformation is somewhat dim, and the original CRF produces an over-saturated effect.

Figure 2.

Comparison of different illumination adjustment strategies. (a) the under-exposed image; (b) the result of the gamma transformation according to Equation (8); (c) the result of the original camera response function (CRF) according to Equation (9); (d) the result of the modified CRF; (e) the well-exposed image. (f), (g), (h), (i) and (j) are the histograms of the three color channels (R, G, B) of (a), (b), (c), (d) and (e), respectively.

In order to set the enhancement process to present the images as close as possible to the well-exposure image, we adjust the parameters a and b, and then set the histogram of them as consistent as possible. Finally, the appropriate parameters are found, and . The final result is shown in Figure 2d.

3.2. Fusion Framework

In the general Retinex-based algorithms, the final step is to adjust the illumination and get an enhanced result. However, the contents of low-light images may not be fully revealed. Therefore, a fusion method is proposed to extract more hidden contents.

As shown in Figure 1, first, the input image is divided into R and L using Equation (7), and the CRF is applied to adjust the exposure. Second, in order to extract more content while preserving visual quality, BM3D [28] is used to filter the first enhanced image, and the filtered result is then enhanced again. Finally, the fusion process is applied to get the final enhanced result.

The fusion framework can be expressed as the following formula:

where is the weight of the i-th image, and . In the proposed model, , , and denote the original image, first enhanced image and second enhanced image, respectively. donates the final result.

As shown in Figure 3, (a), (b) and (c) are the original image, the first enhanced image and the second enhanced image, respectively. For the original image, it is ideal to enhance the areas with low illumination while retaining the areas with high illumination. In contrast, the second enhanced image suffers a serious loss of detail due to the filtering process but reveals more content than (a) and (b), so it is ideal to retain its areas with low illumination. This is similar to supervised machine learning. Therefore, the sigmoid function, which is widely used in machine learning, is used to set the weights of the original image and the second enhanced image. According to [13], the pixel distribution of well-exposed images approximates a Gaussian distribution with a mean of 0.5 and a variance of 0.25. The weight of the first enhanced image is therefore set to a Gaussian function. To balance the Gaussian and sigmoid functions, several changes are made to the standard formulas, which gives the following weight formulas:

where I is the corresponding illumination. The normalization process is performed after calculating the weights by illumination.
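The weighting scheme can be illustrated with a small sketch: one sigmoid that favors bright illumination (for the original image), a mirrored sigmoid that favors dark illumination (for the second enhanced image), and a Gaussian centered at 0.5 (for the first enhanced image), followed by normalization. The steepness and width values and the function name `fusion_weights` are hypothetical placeholders and are not the modified formulas of the proposed model.

```python
import numpy as np

def fusion_weights(I, steepness=10.0, sigma=0.25):
    """Per-pixel weights for (original, first enhanced, second enhanced).

    I is the illumination in [0, 1]. The returned array has shape
    (3,) + I.shape and sums to 1 along the first axis.
    """
    w_original = 1.0 / (1.0 + np.exp(-steepness * (I - 0.5)))  # large where bright
    w_second = 1.0 / (1.0 + np.exp(steepness * (I - 0.5)))     # large where dark
    w_first = np.exp(-((I - 0.5) ** 2) / (2.0 * sigma ** 2))   # large around 0.5
    W = np.stack([w_original, w_first, w_second])
    return W / W.sum(axis=0, keepdims=True)                    # normalization step
```

With these placeholder values the original image dominates at I = 1, the first enhanced image has the largest weight at I = 0.5, and the second enhanced image dominates at I = 0.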

Figure 3.

Multi-scale fusion process weights setting. (a) the original image; (b) the first enhanced image; (c) the second enhanced image; (d) the result of fusion; (e) the weight function.

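As can be seen from Figure 3e, the weight of the original image dominates where the illumination is strong, the weight of the first enhanced image is large around 0.5, and the weight of the second enhanced image dominates in dark areas. The result, Figure 3d, is brighter than the original image and the first enhanced image, and darker than the second enhanced image. The fusion framework achieves a good balance between enhancing brightness and avoiding over-exposure.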

4. Implementation of FFM

This section describes the implementation steps of the algorithm in detail.

4.1. Optimization of the Energy Function

As discussed in Section 3.1, L and R are obtained by minimizing the total energy function. According to [29], the block coordinate descent method is adopted to minimize the energy function in Equation (7). The solution is obtained by iteratively updating one variable at a time while the other variables are treated as constants. Once all the variables converge, we get the optimal solution.

In the n-th iteration, the problem is divided into R and L sub-problems.
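The alternating structure of block coordinate descent can be illustrated on a toy problem. The sketch below does not use the energy function of Equation (7); it minimizes a simplified, hypothetical energy (a data term plus quadratic pulls toward fixed priors `R0` and `L0`) for which each sub-problem has a closed-form element-wise solution. The relative-change stopping rule is also an assumption.

```python
import numpy as np

def energy(R, L, S, R0, L0, alpha, beta):
    """Toy energy: ||R*L - S||^2 + alpha*||R - R0||^2 + beta*||L - L0||^2."""
    return (np.sum((R * L - S) ** 2)
            + alpha * np.sum((R - R0) ** 2)
            + beta * np.sum((L - L0) ** 2))

def block_coordinate_descent(S, alpha=0.1, beta=0.1, max_iter=10, tol=0.01):
    """Alternate exact minimization over R (L fixed) and over L (R fixed)."""
    R0 = np.full_like(S, 0.5)   # hypothetical reflectance prior
    L0 = S.copy()               # hypothetical illumination prior
    R, L = R0.copy(), L0.copy()
    history = [energy(R, L, S, R0, L0, alpha, beta)]
    for _ in range(max_iter):
        L_prev = L
        # R sub-problem: set the derivative with respect to R to zero.
        R = (L * S + alpha * R0) / (L ** 2 + alpha)
        # L sub-problem: set the derivative with respect to L to zero.
        L = (R * S + beta * L0) / (R ** 2 + beta)
        history.append(energy(R, L, S, R0, L0, alpha, beta))
        # Stop when the relative change of L is small.
        if np.linalg.norm(L - L_prev) <= tol * (np.linalg.norm(L_prev) + 1e-12):
            break
    return R, L, history
```

Because each update exactly minimizes the energy over one block, the recorded energy values never increase from one iteration to the next, which is the property that makes the iteration well behaved.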

4.1.1. R Sub-Problem

According to [30,31], Equation (3) can be re-organized as:

By ignoring items that don’t contain the variable R, we can get the energy function of R sub-problem:

This is a typical problem that can be solved by weighted least squares [32], Equation (15) can be converted into the matrix form as:

where and are the diagonal matrices formed by the vectorized , , respectively. and are the Toeplitz matrices from the discrete fractional-order gradient operators which can be obtained by Equations (A1) and (A2) (Appendix A). Since the equation is decomposed into quadratic and nonlinear parts, a numerically stable approximation can be obtained. By deriving Equation (16) and making it equal to zero, we can get the optimal solution of the n-th R:

where is the diagonal matrix formed by , .

4.1.2. L Sub-Problem

The energy function of L sub-problem can be rewritten as:

Equation (20) is transformed into the matrix form:

, , and are vector forms of L, R, S and , respectively. and are the diagonal matrices formed by , . and are the Toeplitz matrices from the discrete gradient operators [31]. By deriving Equation (21) and making it equal to zero, we can get the optimal solution of the n-th L:

where is the diagonal matrix formed by , and is the identity matrix.

4.2. Implementation of the Fusion Process

To get the final fused result, a multi-scale fusion process is adopted. Following Ref. [33], each input image is represented as a sum of patterns computed at various scales. Each input is convolved with a Gaussian kernel to produce a low-pass filtered version. To extract image features, each derived input is decomposed using a Laplacian pyramid, and, to obtain smooth transitions, each normalized weight map is decomposed using a Gaussian pyramid. This fusion process is effective because it fuses image features rather than raw intensities. Furthermore, the Gaussian and Laplacian operators are well-known techniques that are easy to implement in practical applications. The number of levels is the same for the Laplacian and Gaussian pyramids.
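The multi-scale fusion described above can be sketched with NumPy alone for single-channel images. The 5-tap binomial kernel, the nearest-neighbor upsampling, the default of three levels, and all function names are assumptions made for illustration; they are not taken from Ref. [33] or from the implementation used in this paper.

```python
import numpy as np

KERNEL = np.array([1.0, 4.0, 6.0, 4.0, 1.0]) / 16.0  # binomial low-pass filter

def blur(img):
    """Separable 5-tap blur with edge replication (output has the input shape)."""
    h, w = img.shape
    padded = np.pad(img, 2, mode="edge")
    rows = sum(KERNEL[i] * padded[:, i:i + w] for i in range(5))
    return sum(KERNEL[i] * rows[i:i + h, :] for i in range(5))

def down(img):
    """Blur, then keep every second row and column."""
    return blur(img)[::2, ::2]

def up(img, shape):
    """Nearest-neighbor upsampling to `shape`, followed by a blur."""
    big = np.repeat(np.repeat(img, 2, axis=0), 2, axis=1)
    return blur(big[:shape[0], :shape[1]])

def gaussian_pyramid(img, levels):
    pyramid = [img]
    for _ in range(levels - 1):
        pyramid.append(down(pyramid[-1]))
    return pyramid

def laplacian_pyramid(img, levels):
    g = gaussian_pyramid(img, levels)
    lap = [g[i] - up(g[i + 1], g[i].shape) for i in range(levels - 1)]
    lap.append(g[-1])  # the coarsest level keeps the low-pass residual
    return lap

def fuse(images, weights, levels=3):
    """Blend Laplacian pyramids of the images with Gaussian pyramids of the weights."""
    total = np.sum(weights, axis=0) + 1e-12
    fused = None
    for img, w in zip(images, weights):
        lap = laplacian_pyramid(img, levels)
        gw = gaussian_pyramid(w / total, levels)  # normalized weight map
        contrib = [l * g for l, g in zip(lap, gw)]
        fused = contrib if fused is None else [f + c for f, c in zip(fused, contrib)]
    out = fused[-1]
    for level in reversed(fused[:-1]):  # collapse from coarse to fine
        out = up(out, level.shape) + level
    return out
```

A useful sanity check for this kind of scheme is that fusing identical inputs returns the input regardless of the weights, since the normalized weights sum to one at every pyramid level. For color images the same routine could be applied per channel.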

5. Experiments and Analysis

5.1. Fractional Order Impact

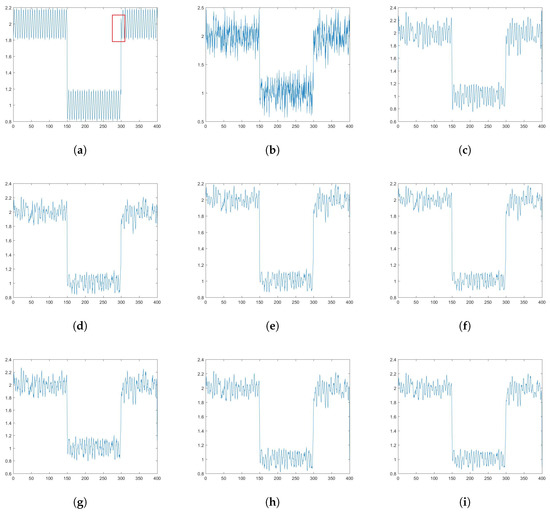

Since the fractional-order reflectance prior has not been adopted in previous non-logarithmic Retinex algorithms, its impact is analyzed in this experiment. To show the advantages of the fractional order more intuitively, we simplify the problem to a one-dimensional signal. Figure 4a shows the original signal, in which the square wave represents the structure of the image and the triangle wave represents texture details. Figure 4b shows the signal with added Gaussian noise, denoted by g. The goal is to denoise g while maintaining its texture details. Three models are considered for this problem, as given in Equations (23)–(25):

where the first term in Equations (23)–(25) is fidelity item, and the second item is the regularization term. , and are coefficients to balance the fidelity and regularization terms. Equations (23) and (24) are conventional methods. We use fractional differential denoise technique in Equation (25).

Figure 4.

One-dimensional signal processing. (a) the original signal; (b) the signal with Gaussian noise added; (c) the result from Equation (25), v is 2.4 and is ; (d–f) the results are from Equation (23), is 0.6, 0.8, 1 respectively; (g–i) the outputs are from Equation (24), is 1.6, 2.4, 3.2, respectively.

The experimental results are shown in Figure 4c–i. With Equation (23), the signal loses some details yet is still not smooth. The model of Equation (24) has better denoising ability; however, as its regularization coefficient increases, some important details are lost. Compared with Equations (23) and (24), fractional calculus has a clear advantage: the signal is smooth, and the details remain visible. Therefore, the fractional order is used in Equation (3). This experiment also shows that the optimization algorithm can obtain good results when solving fractional-order equations.
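A one-dimensional experiment of this kind can be sketched with a quadratic fractional-order regularizer, for which the minimizer is the solution of a linear system. This is a simplified stand-in under stated assumptions and not a reproduction of Equations (23)–(25): the quadratic penalty, the lower-triangular Toeplitz difference matrix, and the names `fractional_difference_matrix` and `denoise` are all hypothetical.

```python
import numpy as np

def fractional_difference_matrix(n, v):
    """Lower-triangular Toeplitz matrix D with D[i, i-k] = w_k, where
    w_k are the Grünwald-Letnikov weights of order v. For v = 1 this is
    the backward-difference matrix (the first row only sees f[0])."""
    w = np.empty(n)
    w[0] = 1.0
    for k in range(1, n):
        w[k] = w[k - 1] * (k - 1 - v) / k
    D = np.zeros((n, n))
    for k in range(n):
        D += w[k] * np.eye(n, k=-k)
    return D

def denoise(g, v, lam):
    """Minimize ||f - g||^2 + lam * ||D_v f||^2 over f.

    Setting the gradient to zero gives (I + lam * D^T D) f = g.
    """
    n = len(g)
    D = fractional_difference_matrix(n, v)
    return np.linalg.solve(np.eye(n) + lam * D.T @ D, g)

# Example: a noisy square wave, smoothed with a fractional order of 1.2.
rng = np.random.default_rng(0)
clean = np.where(np.arange(64) % 32 < 16, 1.0, 0.0)
noisy = clean + 0.1 * rng.standard_normal(64)
smooth = denoise(noisy, v=1.2, lam=2.0)
```

Varying `v` and `lam` in this sketch gives a quick way to see how the order of the derivative trades smoothness against detail, although the behavior of the non-quadratic models in the paper may differ.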

The impact of fractional-order differential for image enhancement is shown in Figure 5. It clearly shows that the fractional-order preserves more contents in the dark area.

Figure 5.

The impact of the fractional order. (a) the original image; (b) the reflectance obtained with the first-order differential; (c) the reflectance obtained with the fractional-order differential, in which the content of the dark area is more prominent.

5.2. Comparison with Other Algorithms

To validate the performance of the proposed model, three publicly available datasets (MF-data [13], NPE-data [12], and Middlebury [34]) are used. Middlebury is a multi-exposure dataset, from which the relatively dark images are selected for the experiments. NPE-data contains three databases (NPEpart1, NPEpart2, NPEpart3). The proposed model is compared with state-of-the-art methods: JIEP [9], NPE [12], CRM [11], MF [13], and LightenNet [17]. Since the first enhancement of the proposed model can be regarded as a Retinex-based method, the comparison includes both the first enhanced result (FFM(1)) and the final result (FFM(2)).

During the experiments, the parameters , and in Equation (7). Moreover, fractional order parameters and . The maximum iterations N = 10 and stopping parameters = 0.01. All of the experiments are conducted on Matlab R2016a with 4G RAM, and 3.2 GHz CPU.

5.2.1. Visual Contrast

In order to have an intuitive impression, the images having complex light are selected from various datasets. As shown in Figure 6, the proposed model can extract more contents from the dark regions.

Figure 6.

Comparison of visual contrast for low-light images whose contents are very dark. (a,i) are original images; (b,j) MF [13]; (c,k) LightenNet [17]; (d,l) CRM [11]; (e,m) NPE [12]; (f,n) JIEP [9]; (g,o) are enhanced images of FFM(1); (h,p) are final results of the proposed model.

The proposed model not only performs well for low-light images where the contents are very dark, but also has good performance for images slightly degraded from low light. As shown in Figure 7b, since the fusion process of MF involves histogram equalization, there is the risk of over-exposure. As can be seen from Figure 7c,e,m,s,u, the results of LightenNet and NPE have visible visual artifacts. The result of CRM is not vivid. The results of JIEP are dim; still, there are hidden contents that need to be revealed. FFM(2) only enhances the dark area of FFM(1), the contents of both are the same in the bright areas. Hence, FFM(2) avoids the risk of over-exposure. FFM(2) achieves a good balance in revealing the dark areas and avoids the risk of over-exposure. The proposed model is robust and improves the ability to enhance dark regions.

Figure 7.

Comparison of enhancement schemes for images which are slightly degraded from low-light conditions. (a,i,q) are original images; (b,j,r) MF [13]; (c,k,s) LightenNet [17]; (d,l,t) CRM [11]; (e,m,u) NPE [12]; (f,n,v) JIEP [9]; (g,o,w) Our enhanced images of FFM(1); (h,p,x) are final results.

5.2.2. Lightness Order Error

Lightness order error (LOE), proposed in [12], is an objective measure of how well naturalness is preserved between the original image and its enhanced version. It is defined as:

$$
LOE = \frac{1}{m} \sum_{x=1}^{m} RD(x), \tag{26}
$$

where m is the number of pixels in the image, and $RD(x)$ denotes the difference in relative lightness order between the original image and the enhanced result at the x-th pixel:

$$
RD(x) = \sum_{y=1}^{m} U\big(Q(x), Q(y)\big) \oplus U\big(Q'(x), Q'(y)\big), \tag{27}
$$

where $U(p, q)$ equals 1 if $p \geq q$ and 0 otherwise, ⊕ denotes the exclusive-or operator, and $Q(x)$ and $Q'(x)$ are the maximum values among the three color channels (R, G, B) of the original image and the enhanced result, respectively. According to [12], the smaller the LOE value, the more natural the enhancement effect. However, LOE does not fully reflect the enhancement effect: when an algorithm does not change the original image at all, the value of LOE is zero.
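The LOE measure can be computed directly from its definition: take the maximum over the color channels of each image, compare every pixel with every other pixel, and count the pairs whose relative order changes. The sketch below is a direct, quadratic-cost version intended for small or down-sampled images; the function name `lightness_order_error` and the convention U(p, q) = 1 when p >= q are assumptions.

```python
import numpy as np

def lightness_order_error(original, enhanced):
    """Lightness order error between two RGB images of equal shape (H, W, 3).

    Cost and memory grow with the square of the number of pixels, so the
    inputs should be small (for example, down-sampled) images.
    """
    q = original.max(axis=2).ravel()     # lightness of the original image
    q_e = enhanced.max(axis=2).ravel()   # lightness of the enhanced image
    order = q[:, None] >= q[None, :]           # U(Q(x), Q(y)) for all pixel pairs
    order_e = q_e[:, None] >= q_e[None, :]     # U(Q'(x), Q'(y)) for all pixel pairs
    rd = np.logical_xor(order, order_e).sum(axis=1)  # RD(x) for every pixel x
    return rd.mean()
```

Any enhancement that preserves the lightness ordering of all pixel pairs, such as a uniform gain, yields an LOE of zero, which illustrates why LOE alone does not indicate how much an image has actually been enhanced.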

As shown in Table 1, compared to other results, FFM(1) has the lowest LOE due to fractional-order. FFM(2) reveals the dark contents of FFM(1) further, inevitably increasing LOE. While extracting more contents, the LOE of FFM(2) is much lower than the other methods. It can be seen from the given data that the naturalness preservation of the proposed model is efficient.

Table 1.

Result comparison of Lightness Order Error.

5.2.3. Images Quality Assessment

A blind image quality assessment method, the no-reference image sharpness assessment in autoregressive parameter space (ARISM) [35], is used to evaluate the enhanced results. A lower ARISM value represents higher image quality. There are two ways to calculate ARISM: the first uses only the luminance component (ARISM(1)), and the second uses both the luminance and chromatic components (ARISM(2)).

As shown in Table 2, the results of FFM(2) score lowest in the image quality assessment. The score of FFM(2) is slightly lower than that of FFM(1), partly due to the filtering operations. FFM(1) also gets lower scores than the other models, which shows that the use of the fractional differential improves image quality.

Table 2.

Results of image sharpness quality assessment using ARISM [35].

5.2.4. Images Similarity Assessment

The goal of image enhancement is to preserve the other characteristics of the image while enhancing the illumination. To evaluate the similarity between the enhanced and original images, a fast reliable image quality predictor (PSIM) [36] and feature similarity (FSIM) [37] are used, both of which are based on the human visual system. The PSIM metric combines gradient magnitude similarities, color similarity, and reliable perceptual-based pooling [36]. There are two variants of FSIM: one considers only the luminance component (FSIM(1)), while the other uses both grayscale and color information (FSIM(2)).

As shown in Table 3, the results of FFM(1) have the highest similarity to the original image. The similarity of FFM(2) is slightly lower than that of FFM(1) but still much higher than that of the other methods. The score of FFM(2) is lower mainly because the filtering in the fusion framework removes some content. However, because the fusion process is built on the denoising technique and the denoised result only takes effect in the low-light areas, FFM(2) still has a high similarity to the original image. The data show that the fractional order is effective in preserving image features and that the distortion introduced by the proposed model is small.

Table 3.

Similarity quality assessment metric of input and enhanced images.

6. Conclusions

In this paper, we introduced a novel fractional-order fusion model for low-light image enhancement, which can extract more contents from dark regions. Firstly, we apply the fractional mask on the input image, and divide the input image into reflectance and illumination. The CRF suitable for the proposed model is used to adjust exposure. Furthermore, the BM3D technique is used to denoise the result, and then another CRF is used to adjust the exposure of the result. Finally, we fuse the original image, the first enhanced image and second enhanced image to get the final enhanced result. The subjective and objective assessments are conducted to check and compare the results. Compared with the state-of-the-art algorithms, the proposed model is efficient in preserving details and extracting contents. The proposed model can be applied in many vision-based applications such as object recognition, image classification, target tracking, facial attractiveness, surveillance, etc.

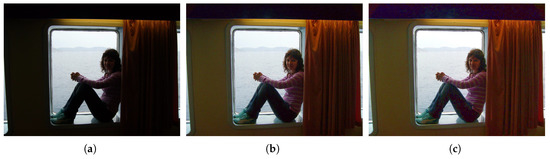

The proposed model has two limitations. First, as shown in Figure 8, when the performance of FFM(1) is already good enough, using the fusion model to further enhance the image (FFM(2)) fills some dark areas that contain no details with noise. Although more content is extracted, the visual quality of the image is degraded. Future work is to find a method that determines whether an area contains content and whether it needs to be enhanced. Second, as shown in Table 4, the proposed method has no speed advantage over the other methods. The fractional order requires more information than the integer order, so processing is slower. The proposed method also processes the enhanced image a second time, which takes more time while extracting more content, and the filtering and fusion operations further increase the running time. In the future, a deep learning strategy could be used to approximate the proposed model so that it runs faster.

Figure 8.

An example of a failure case. (a) the original image; (b) the effect of FFM(1); (c) the result of FFM(2).

Table 4.

Running time comparison of the various methods. These values represent the average time, in seconds.

Author Contributions

Q.D. performed the experiments and wrote the manuscript; Y.-F.P. determined the research direction of this paper and made major optimizations to the structure of the paper; Z.R. guided and revised the manuscript; M.A. performed the investigation and evidence collection. All authors read and approved the final manuscript.

Funding

The work was supported by the National Key Research and Development Program Foundation of China under Grants 2018YFC0830300, and the National Natural Science Foundation of China under Grants 61571312.

Acknowledgments

The reviewers and editor made useful suggestions for the paper, and the editor adjusted the layout to make the article clearer. The published code of JIEP [9], CRM [11] and MF [13] gave us a lot of inspiration.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Berclaz, J.; Fleuret, F.; Turetken, E.; Fua, P. Multiple object tracking using k-shortest paths optimization. IEEE Trans. Pattern Anal. Mach. Intell. 2011, 33, 1806–1819. [Google Scholar] [CrossRef] [PubMed]

- Redmon, J.; Divvala, S.; Girshick, R.; Farhadi, A. You only look once: Unified, real-time object detection. Comput. Vis. Pattern Recognit. 2016, 779–788. [Google Scholar]

- Wang, R.; Wang, G. Medical X-ray image enhancement method based on tv-homomorphic filter. In Proceedings of the International Conference on Image, Vision and Computing, Chengdu, China, 2–4 June 2017; pp. 315–318. [Google Scholar]

- Cheng, H.D.; Shi, X.J. A simple and effective histogram equalization approach to image enhancement. Digit. Signal Process. 2004, 14, 158–170. [Google Scholar] [CrossRef]

- Reza, A.M. Realization of the contrast limited adaptive histogram equalization for real-time image enhancement. J. VLSI Signal Process. Syst. Signal Image Video Technol. 2004, 38, 35–44. [Google Scholar] [CrossRef]

- Dong, X.; Wang, G.; Pang, Y.; Li, W.; Wen, J.; Meng, W.; Lu, Y. Fast efficient algorithm for enhancement of low lighting video. In Proceedings of the IEEE International Conference on Multimedia and Expo, Barcelona, Spain, 11–15 July 2011; pp. 1–6. [Google Scholar]

- Li, L.; Wang, R.; Wang, W.; Gao, W. A low-light image enhancement method for both denoising and contrast enlarging. In Proceedings of the IEEE International Conference on Image Processing, Quebec City, QC, Canada, 27–30 September 2015; pp. 3730–3734. [Google Scholar]

- Land, E.H.; Mccann, J.J. Lightness and retinex theory. J. Opt. Soc. Am. 1971, 61, 1–11. [Google Scholar] [CrossRef] [PubMed]

- Cai, B.; Xu, X.; Guo, K.; Jia, K.; Hu, B.; Tao, D. A joint intrinsic-extrinsic prior model for retinex. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017; pp. 4020–4029. [Google Scholar]

- Kimmel, R.; Elad, M.; Shaked, D.; Keshet, R.; Sobel, I. A variational framework for retinex. Int. J. Comput. Vis. 2003, 52, 7–23. [Google Scholar] [CrossRef]

- Ying, Z.; Li, G.; Ren, Y.; Wang, R.; Wang, W. A new low-light image enhancement algorithm using camera response model. In Proceedings of the IEEE International Conference on Computer Vision Workshop, Venice, Italy, 22–29 October 2017; pp. 3015–3022. [Google Scholar]

- Wang, S.; Zhang, J.; Hu, H.-M.; Li, B. Naturalness preserved enhancement algorithm for non-uniform illumination images. IEEE Trans. Image Process. 2013, 22, 3538–3548. [Google Scholar] [CrossRef] [PubMed]

- Fu, X.; Zeng, D.; Yue, H.; Liao, Y.; Ding, X.; Paisley, J. A fusion-based enhancing method for weakly illuminated images. Signal Process. 2016, 129, 82–96. [Google Scholar] [CrossRef]

- Lore, K.G.; Akintayo, A.; Sarkar, S. Llnet: A deep autoencoder approach to natural low-light image enhancement. Pattern Recognit. 2017, 61, 650–662. [Google Scholar] [CrossRef]

- Gharbi, M.; Chen, J.; Barron, J.T.; Hasinoff, S.W.; Durand, F. Deep bilateral learning for real-time image enhancement. ACM Trans. Graph. 2017, 36, 118.

- Cai, J.; Gu, S.; Zhang, L. Learning a deep single image contrast enhancer from multi-exposure images. IEEE Trans. Image Process. 2018, 27, 2049–2062.

- Li, C.; Guo, J.; Porikli, F.; Pang, Y. LightenNet: A convolutional neural network for weakly illuminated image enhancement. Pattern Recognit. Lett. 2018, 104, 15–22.

- Pu, Y.-F.; Zhang, N.; Zhang, Y.; Zhou, J.L. A texture image denoising approach based on fractional developmental mathematics. Pattern Anal. Appl. 2016, 19, 427–445.

- Pu, Y.-F. Fractional calculus approach to texture of digital image. In Proceedings of the International Conference on Signal Processing, Beijing, China, 16–20 November 2006; pp. 1002–1006.

- Aygören, A. Fractional Derivative and Integral; Gordon and Breach Science Publishers: Philadelphia, PA, USA, 1993.

- Koeller, R.C. Applications of fractional calculus to the theory of viscoelasticity. Trans. ASME J. Appl. Mech. 1984, 51, 299–307.

- Rossikhin, Y.A.; Shitikova, M.V. Applications of Fractional Calculus to Dynamic Problems of Linear and Nonlinear Hereditary Mechanics of Solids; McGraw-Hill: New York, NY, USA, 1997.

- Oldham, K.; Spanier, J. The Fractional Calculus: Theory and Applications of Differentiation and Integration to Arbitrary Order; Elsevier: Amsterdam, The Netherlands, 1974.

- Lee, S. An efficient content-based image enhancement in the compressed domain using retinex theory. IEEE Trans. Circuits Syst. Video Technol. 2007, 17, 199–213.

- Pu, Y.-F.; Siarry, P.; Chatterjee, A.; Wang, Z.N.; Zhang, Y.I.; Liu, Y.G.; Zhou, J.L.; Wang, Y. A fractional-order variational framework for retinex: Fractional-order partial differential equation based formulation for multi-scale nonlocal contrast enhancement with texture preserving. IEEE Trans. Image Process. 2018, 27, 1214–1229.

- Fu, X.; Zeng, D.; Huang, Y.; Zhang, X.P.; Ding, X. A weighted variational model for simultaneous reflectance and illumination estimation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 27–30 June 2016; pp. 2782–2790.

- Guo, X.; Li, Y.; Ling, H. LIME: Low-light image enhancement via illumination map estimation. IEEE Trans. Image Process. 2017, 26, 982–993.

- Dabov, K.; Foi, A.; Katkovnik, V.; Egiazarian, K. Image denoising by sparse 3-D transform-domain collaborative filtering. IEEE Trans. Image Process. 2007, 16, 2080–2095.

- Tseng, P. Convergence of a block coordinate descent method for nondifferentiable minimization. J. Optim. Theory Appl. 2001, 109, 475–494.

- Candès, E.J.; Wakin, M.B.; Boyd, S.P. Enhancing sparsity by reweighted ℓ1 minimization. J. Fourier Anal. Appl. 2007, 14, 877–905.

- Xu, L.; Yan, Q.; Xia, Y.; Jia, J. Structure extraction from texture via relative total variation. ACM Trans. Graph. 2012, 31, 139.

- Farbman, Z.; Fattal, R.; Lischinski, D.; Szeliski, R. Edge-preserving decompositions for multi-scale tone and detail manipulation. ACM Trans. Graph. 2008, 27, 1–10.

- Burt, P.J.; Adelson, E.H. The Laplacian pyramid as a compact image code. Read. Comput. Vis. 1987, 31, 671–679.

- Chakrabarti, A.; Scharstein, D.; Zickler, T.E. An empirical camera model for internet color vision. BMVC 2009, 1, 4.

- Gu, K.; Zhai, G.; Lin, W.; Yang, X.; Zhang, W. No-reference image sharpness assessment in autoregressive parameter space. IEEE Trans. Image Process. 2015, 24, 3218–3231.

- Gu, K.; Li, L.; Lu, H.; Min, X.; Lin, W. A fast reliable image quality predictor by fusing micro- and macro-structures. IEEE Trans. Ind. Electron. 2017, 64, 3903–3912.

- Zhang, L.; Zhang, L.; Mou, X.; Zhang, D. FSIM: A feature similarity index for image quality assessment. IEEE Trans. Image Process. 2011, 20, 2378.

- Pu, Y.-F.; Zhou, J.L.; Yuan, X. Fractional differential mask: A fractional differential-based approach for multiscale texture enhancement. IEEE Trans. Image Process. 2010, 19, 491–511.

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).